Abstract

Background

The Veterans Health Administration (VHA) developed the Stratification Tool for Opioid Risk Mitigation (STORM) dashboard to assist in identifying Veterans at risk for adverse opioid overdose or suicide-related events. In 2018, a policy was implemented requiring VHA facilities to complete case reviews of Veterans identified by STORM as very high risk for adverse events. Nationally, facilities were randomized in STORM implementation to four arms based on required oversight and by the timing of an increase in the number of required case reviews. To help evaluate this policy intervention, we aimed to (1) identify barriers and facilitators to implementing case reviews; (2) assess variation across the four arms; and (3) evaluate associations between facility characteristics and implementation barriers and facilitators.

Method

Using the Consolidated Framework for Implementation Research (CFIR), we developed a semi-structured interview guide to examine barriers to and facilitators of implementing the STORM policy. A total of 78 staff from 39 purposefully selected facilities were invited to participate in telephone interviews. Interview transcripts were coded and then organized into memos, which were rated using the −2 to +2 CFIR rating system. Descriptive statistics were used to evaluate the mean ratings on each CFIR construct, the associations between ratings and study arm, and three facility characteristics (size, rurality, and academic detailing) associated with CFIR ratings. We used the mean CFIR rating for each site to determine which constructs differed between the sites with highest and lowest overall CFIR scores, and these constructs were described in detail.

Results

Two important CFIR constructs emerged as barriers to implementation: Access to knowledge and information and Evaluating and reflecting. Little time to complete the case reviews was a pervasive barrier. Sites with higher overall CFIR scores showed three important facilitators: Leadership engagement, Engaging, and Implementation climate. CFIR ratings were not significantly different between the four study arms, nor associated with facility characteristics.

Keywords

Background

Many health systems, including the Veterans Health Administration (VHA), have taken proactive, multifaceted approaches to mitigate the harms of opioids in the United States. As part of these efforts, VHA developed the Stratification Tool for Opioid Risk Mitigation (STORM), a suite of provider-facing electronic reports which use a predictive model to identify patients at risk for opioid or suicide-related events (Oliva et al., 2017). Updated nightly, the STORM reports provide an estimated risk level for all Veteran patients, as well as additional information to assist clinicians to apply appropriate risk mitigation strategies tailored to individual patient risk factors and needs.

In March 2018, VHA released a national policy notice requiring that all facilities begin conducting “case reviews” of Veterans determined to be at highest risk based on the STORM model. Completing these case reviews entailed using a data tool, such as the STORM dashboard, to evaluate risk and determine the utility of providing risk mitigation strategies (e.g., referral to a pain specialist, providing naloxone). Completed case reviews and actions taken by the clinician(s) were documented using a standardized note in the VA electronic medical record.

The implementation of this policy notice was randomized to meet continuous improvement goals (Chinman et al., 2019; Oliva et al., 2017). Initially, all sites were required to review the top 1% of those at risk. Using a stepped-wedge design, VHA facilities were randomized to require an expanded number of case reviews (moving from top 1% of those at risk to top 5%) at either 9 or 15 months after the notice was released. In addition, VHA facilities were randomly assigned to receive a version of the policy notice requiring additional oversight if the site did not achieve a case review completion rate of 97% after 6 months. The rate was calculated as the proportion of Veterans identified by the STORM model as high risk who had a completed case review (Chinman et al., 2019). This created four study arms, varying by oversight/no oversight and length of time before increasing the number of case reviews. This design allowed for examination of the effect of requiring case reviews for the top 1% versus 5% of high-risk Veterans and the impact of requiring additional oversight for facilities that failed to meet target goals for case review completion.

The first phase of an evaluation of this policy notice identified specific strategies associated with improved case review completion and found that being randomized to receive additional oversight did not impact the number or type of implementation strategies used to complete case reviews (Rogal et al., 2020). In the second phase of evaluation, described here, we analyzed 78 qualitative interviews representing 39 sites to examine barriers and facilitators to implementing the case review policy. This phase of evaluation aimed to (1) identify the barriers and facilitators for implementing case reviews; (2) assess variation in barriers and facilitators across the four study arms, and (3) evaluate the associations between Consolidated Framework for Implementation Research (CFIR) ratings and three facility characteristics: facility size, rural or urban location, and level of support provided through academic detailing.

The implementation of change in large-scale systems is especially complicated and requires a high level of training, multi-disciplinary team building, and the ability to identify barriers to change as they occur (Mann & Lohrmann, 2019). VHA is one of few healthcare systems with the infrastructure to support an evaluation of these barriers and facilitators, with sites given substantial discretion as to how to set up their local implementation efforts. Thus, variability would be expected for both barriers and facilitators of the implementation across sites. This evaluation, with both qualitative insight and multi-site data, can inform future large-scale policy-driven implementation efforts.

Methods

This study was reviewed and approved by the VA Pittsburgh Health Care System's Institutional Review Board. All participants provided informed consent prior to participating in the phone interview.

Sample Design and Participant Recruitment

Within each of the four study arms, we purposefully sampled 10 sites, using performance on a national VA metric called the Opioid Therapy Guideline Adherence Metrics (OTG), which measures the extent a facility is following VHA national best practices for opioid treatment. Our aim with this sampling strategy was to interview sites with varying levels of baseline performance on opioid risk mitigation strategies. A statistician not associated with the interview process ranked sites in each arm based on their performance on the OTG metrics, and we selected 5 sites with the highest OTG scores and 5 with the lowest from each of the four study arms. If a site could not be reached or declined to participate, we moved to the next site on the list for that arm. Case completion rate was not available at the time of site selection, and was not considered in site selection.
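The sampling rule described above can be sketched in a few lines. This is an illustrative sketch only, not the study's actual procedure or code: the function name `select_sites`, the site identifiers, and the OTG scores are all hypothetical, and the fallback step (moving to the next site on the list when a site declined) is not modeled.

```python
from collections import defaultdict


def select_sites(sites, n_each=5):
    """Pick the n_each highest- and n_each lowest-scoring sites in each arm.

    `sites` is a list of (site_id, arm, otg_score) tuples. All values used
    below are hypothetical placeholders, not study data.
    """
    by_arm = defaultdict(list)
    for site_id, arm, otg_score in sites:
        by_arm[arm].append((otg_score, site_id))

    selected = {}
    for arm, scored in by_arm.items():
        ranked = sorted(scored)  # ascending by OTG score
        selected[arm] = {
            "lowest": [site for _, site in ranked[:n_each]],
            "highest": [site for _, site in ranked[-n_each:]],
        }
    return selected


# Hypothetical example: 4 arms, 12 sites per arm, made-up OTG scores.
sites = [(f"arm{a}-site{i}", a, 50 + i) for a in range(1, 5) for i in range(12)]
picked = select_sites(sites)
print(picked[1]["lowest"])
print(picked[1]["highest"])
```

With five high and five low sites per arm, the four arms yield the 40-site target. Note that an arm with fewer than ten sites would produce overlapping "lowest" and "highest" lists in this sketch.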

We contacted the individual who served as the STORM point of contact (POC) at the selected sites by email, with phone and direct-messaging follow-ups, and invited them to participate in one 45-min interview. A total of 63 sites were contacted to obtain the target sample of 40 sites. During the interview, the POC was asked to suggest one or two other individuals who could comment on the policy implementation at their site, and these individuals were then invited to participate. A total of 82 participants at 40 sites were interviewed, with 9 sites having 1 interview, 21 sites with 2 interviews, and 9 sites with 3 interviews. Two interviews could not be used due to poor audio quality and one site was dropped because it had not started implementation, resulting in a final total of 78 interviews from 39 sites for analysis.

Interview Development

The interview guide was developed using CFIR, a meta-theoretical framework for evaluating the factors that may influence implementation efforts (Damschroder & Lowery, 2013; Damschroder et al., 2009b, 2017). This framework was chosen because it is especially flexible and can be tailored to specific content. The CFIR has been used in other VA evaluations, including multi-site evaluations (Bokhour et al., 2018; Damschroder & Lowery, 2013). As such, it was especially appropriate for this complex implementation effort (Gale et al., 2019).

CFIR consists of 32 constructs organized into 5 domains: implementation process, characteristics of the clinical intervention being implemented (in this case, the case reviews), characteristics of individuals implementing the clinical intervention, characteristics of the implementing organization (called inner setting), and the impact of forces outside of the organization (called outer setting). CFIR has an existing interview guide that includes questions about all 32 constructs which can be tailored for individual evaluations.

Sixteen of the most relevant constructs within four CFIR domains were selected for the interview guide in this study (Table 3). Data from the individual characteristics domain was not collected because we believed that factors at larger ecological levels were more critical, and asking about all domains would make the interview burdensome. Two research assistants experienced in qualitative interviews were trained to administer the interview, and the interview was piloted with clinicians from two facilities outside the study sample. All interviews were conducted by phone, recorded, transcribed, and validated by trained research staff. Interviews lasted approximately 45 min and were conducted from March 2019 to August 2019, approximately 1 year after the policy was released. Participants provided demographic information, including gender, time in VHA, time in their current role, type of training and clinical practice, and involvement with completing case reviews.

Table 3. Joint display of quantitative and qualitative data about STORM barriers and facilitators.

Interview Coding and Memo Development

A qualitative content analysis approach was followed (Forman & Damschroder, 2007), using the CFIR constructs as the structure. The coding was approached both deductively using CFIR constructs and inductively as codes emerged from the data. Following transcription and transcript verification, a codebook for the interviews was developed by an experienced qualitative coder (GK). The codebook was reviewed by the research team for completeness, and the coder coded the first 35 interviews using Nvivo 12 software. A second coder (MS) was trained to apply the codes in the codebook to the transcripts and double-coded five interviews. Three authors experienced in qualitative research (SM, GK, and MS) reviewed the double-coded interviews and resolved any differences by consensus, adapting the codebook and reviewing previous interviews to maintain consistency. The main coder continued to code interviews, with the secondary coder double coding every fifth interview to prevent drift in coding. All discrepancies were addressed by the qualitative team and significant questions were raised to the entire research team for resolution (Campbell et al., 2013; Damschroder et al., 2009b, 2017).

Individual interviews from a single site were combined to create a “memo”—text from all interviews at a site organized by CFIR construct—an analysis technique used to provide a more complete view of each site. In the memo, each individual CFIR construct is rated on a scale of −2, −1, 0, +1, and +2, with negative valence indicating the construct represented a barrier to implementation, and positive valence indicating the construct was a facilitator (https://cfirguide.org/). Six team members were trained in the rating process following CFIR guidelines, and three teams of two reviewed site memos and rated the constructs using standard CFIR guidance and exemplars of each category. Rating pairs met to achieve consensus after independently rating constructs for a memo. The entire group of raters also met regularly to discuss any uncertainty in rating and to maintain consistency. As a further check for consistency, an experienced CFIR rater (SM) rated two memos from each team and a comparison showed a high degree of consistency and adherence to CFIR rating guidance. Over time, teams were varied such that coding was done by different partners, to assure continued consistency and prevent team drift in rating. In addition to the memo rating, the first author and one other author (GK) reviewed all memos and interviews qualitatively, as well as the coded data results (codes within the constructs), and the research team met regularly to discuss recurring themes, both within the constructs and across constructs.

Study Arms and Facility Characteristics

Study arm was operationalized using two dummy variables: one for the type of policy memo received (standard vs. increased oversight), and one for the timing of the increase in case review requests (early vs. late). Facility-level variables included the number of academic detailing visits, a measure of rurality, and a measure of facility complexity (an algorithm that considers patient risk, number and breadth of available specialists, intensive care unit availability, and teaching and research activities) (Chinman et al., 2019). Academic detailing is a defined support bundle provided by pharmacists within VHA who help clinicians improve prescribing using training, problem-solving, and data feedback, and was used to operationalize training and support for implementing case reviews.
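The two-dummy-variable coding of study arm can be illustrated as follows. This is a minimal sketch under stated assumptions: the function name `encode_arm`, the string labels, and the variable names `oversight` and `early_increase` are hypothetical, and "early" and "late" are assumed to correspond to the 9-month and 15-month increases described earlier.

```python
from itertools import product

MEMO_TYPES = ("standard", "oversight")   # type of policy memo received
INCREASE_TIMINGS = ("late", "early")     # assumed: late = 15 months, early = 9 months


def encode_arm(memo_type, increase_timing):
    """Return the two 0/1 dummy variables for one facility (hypothetical names)."""
    if memo_type not in MEMO_TYPES:
        raise ValueError(f"unknown memo type: {memo_type!r}")
    if increase_timing not in INCREASE_TIMINGS:
        raise ValueError(f"unknown timing: {increase_timing!r}")
    return {
        "oversight": int(memo_type == "oversight"),
        "early_increase": int(increase_timing == "early"),
    }


# Crossing the two factors gives the four study arms.
arms = [encode_arm(m, t) for m, t in product(MEMO_TYPES, INCREASE_TIMINGS)]
for arm in arms:
    print(arm)
```

Coding the arms as two crossed indicators, rather than one four-level label, lets each randomized factor (oversight and timing) be examined separately as well as jointly.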

Analysis

We used descriptive statistics to characterize the responding providers and sites and to compare the 39 participating sites to facilities where no interviews were conducted. Consistent with the qualitative content analysis, the full set of interview data was explored deductively using coded CFIR constructs, as well as inductively to explore whether any additional barriers or facilitators might emerge that did not fit into the CFIR constructs. Research team members who read and coded the site memos also met regularly to discuss important overall themes in the data.

For each facility, we computed a total CFIR score as the mean summary rating across all 16 constructs (the individual ratings from −2 to +2 for each construct) as well as the proportion of constructs with positive, negative, and zero ratings. We tested the associations between the 16 construct ratings and four study arms, baseline OTG level, and facility characteristics using Kruskal-Wallis tests to allow for non-normality of the ratings. We identified sites in the top and bottom quartile of CFIR ratings based on the 10 sites with the highest and the 10 sites with the lowest mean CFIR scores. Then, for each of the 16 CFIR constructs, we calculated difference scores by subtracting the mean of that construct in the bottom quartile from the mean of that construct in the top quartile. This allowed for an examination of which CFIR constructs contributed the most to the differences between high and low quartile sites.
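The quantitative steps in this paragraph can be sketched as below. This is an illustrative sketch, not the authors' analysis code: the function names, site labels, and ratings are hypothetical, and the toy example uses two constructs and groups of two sites rather than 16 constructs and groups of 10. The Kruskal-Wallis function returns only the tie-corrected H statistic; computing a p-value (chi-square with k − 1 degrees of freedom) is left to a statistics package.

```python
from collections import Counter
from statistics import mean


def site_summary(ratings):
    """Mean rating and proportions of positive/negative/zero ratings for one site."""
    values = list(ratings.values())
    n = len(values)
    return {
        "mean": mean(values),
        "prop_positive": sum(v > 0 for v in values) / n,
        "prop_negative": sum(v < 0 for v in values) / n,
        "prop_zero": sum(v == 0 for v in values) / n,
    }


def difference_scores(site_ratings, n_group=10):
    """Per construct: mean in the top group minus mean in the bottom group."""
    ranked = sorted(site_ratings, key=lambda s: mean(list(site_ratings[s].values())))
    bottom, top = ranked[:n_group], ranked[-n_group:]
    constructs = list(next(iter(site_ratings.values())))
    return {
        c: mean([site_ratings[s][c] for s in top])
        - mean([site_ratings[s][c] for s in bottom])
        for c in constructs
    }


def kruskal_wallis_h(groups):
    """Tie-corrected Kruskal-Wallis H (undefined if every value is identical)."""
    pooled = sorted(v for g in groups for v in g)
    n = len(pooled)
    rank_of, i = {}, 0
    while i < n:
        j = i
        while j < n and pooled[j] == pooled[i]:
            j += 1
        rank_of[pooled[i]] = (i + 1 + j) / 2  # average of ranks i+1 .. j
        i = j
    rank_term = sum(sum(rank_of[v] for v in g) ** 2 / len(g) for g in groups)
    h = 12 / (n * (n + 1)) * rank_term - 3 * (n + 1)
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    return h / (1 - ties / (n ** 3 - n))


# Hypothetical ratings on the -2..+2 scale for four sites and two constructs.
site_ratings = {
    "s1": {"Leadership engagement": 2, "Available resources": -1},
    "s2": {"Leadership engagement": -2, "Available resources": -2},
    "s3": {"Leadership engagement": 1, "Available resources": 1},
    "s4": {"Leadership engagement": 0, "Available resources": -1},
}
print(site_summary(site_ratings["s1"]))
print(difference_scores(site_ratings, n_group=2))
print(kruskal_wallis_h([[1, 2, 3], [4, 5, 6]]))
```

Because ratings take only five possible values, ties are common, which is why the sketch applies the tie correction rather than the uncorrected H.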

The qualitative and quantitative data were combined using convergent parallel mixed methods (Guetterman et al., 2015). These methods allow for the integration of quantitative data (the difference score for each CFIR construct by high and low quartile) and qualitative data (CFIR interview quotes) that are collected and analyzed separately and in parallel. We integrated those findings using a joint display, a technique that visually combines qualitative and quantitative results to draw out new insights (Guetterman et al., 2015).

Results

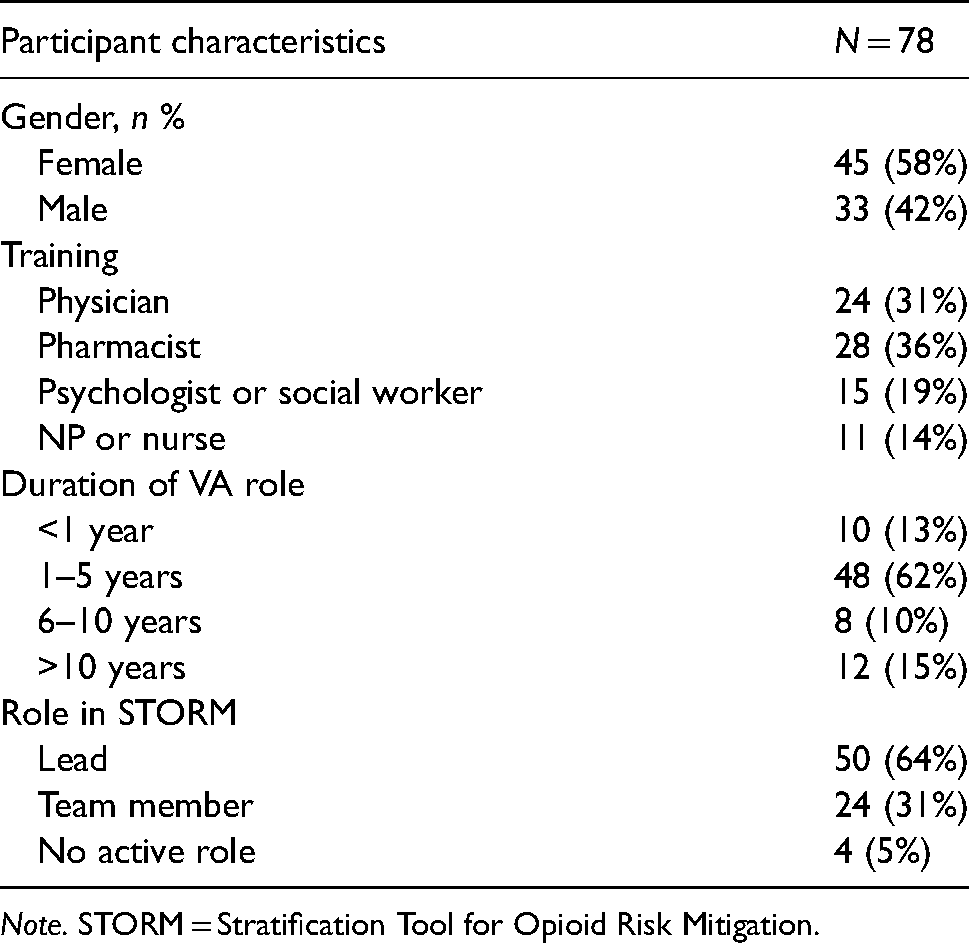

The 39 facilities targeted for interviews were similar in terms of facility characteristics to non-interview sites (Supplementary Table 1). The 78 interview participants from these 39 sites were majority women (58%) and covered a range of professional disciplines. Most participants had 1–5 years of experience in their current roles and were leaders in the STORM implementation efforts at their facility (Table 1). Six facilities were rural and 33 were urban, although many urban facilities have outpatient clinics in more rural locations. Geographically, 8 sites were Northeast, 9 were Midwest, 7 were West, and 15 were from the South.

Table 1. Interview participant characteristics.

Table 2. Consolidated Framework for Implementation Research (CFIR) ratings by randomization arm for 39 facilities.

The CFIR ratings for each of the 16 CFIR constructs, the total CFIR scores, and the proportion of positive scores were not significantly different between the four randomization arms (Table 2). For example, overall, the mean CFIR rating in the

There were also no significant differences based on baseline OTG score, oversight versus no oversight, and early versus late increase in case reviews (Appendix 1). There was no association between the 16 CFIR construct scores and three facility characteristics: medical center complexity, rural versus urban, and top quartile of academic detailing provided versus other quartiles (Appendix 2).

Mixed-Methods Result With Joint Display

Table 3 presents quotes that illustrate each construct as a barrier and as a facilitator, the distribution of ratings for 39 sites, and the difference score on each CFIR construct rating between facilities in the highest and lowest quartiles of overall CFIR scores.

While most constructs functioned as both barriers and facilitators across the sample, some had primarily negative or positive ratings. For example,

The three CFIR constructs with the largest difference scores between the top and bottom CFIR quartiles are

Leadership Engagement

The

In contrast, facilities with overall high mean CFIR scores described supportive leadership as facilitating their implementation efforts. One participant described having time protected to conduct case reviews and said that their supervisors were, “very supportive of this as well. …if I needed to go to them and say, ‘hey, I need more time blocked to do these STORM reviews’…they would do that.”

Engaging

The

The

For these sites, the process was incremental and involved changes to existing processes rather than the creation of new ones. One staff member said: “it was a little easier for us because we were already doing these things… so now we’re in good shape and …it was easy to just say…We have to change our note…”. Although pre-existing teams had to adjust to a new method of review, they had the existing structure and skill to respond to the notice, making the demands on these sites quite different from sites without existing teams.

In sites without existing teams, a single individual or very small group might attempt to do the case reviews, often with mixed results, depending on the number of case reviews they were asked to complete. Consistent with the

A related challenge was team development. The policy notice recommended an interdisciplinary team, and across interviews, 27 different roles were mentioned as participating in the case review process, with professional backgrounds varying from medical doctors and nurse practitioners to recreational therapists and podiatrists. The complexity of creating such an interdisciplinary team, in a large and sometimes siloed environment like the VA, was considerable. Participants described difficulty finding members to participate, challenges with identifying time to work on reviews, and communication struggles between disciplines. Forming new teams often created an implementation barrier, as evidenced by the frustration from this interviewee: “…we’ve tried to partner with the suicide prevention people and they’re overwhelmed too. They’re like … oh we can't take responsibility for that. Don't tag us onto the note. Don't tell us… Yeah, so it's like, well if not you, who?”

Another participant noted a lack of role clarity around who should take responsibility for establishing and maintaining the team, stating: “There is that push and pull, ‘This should be Mental Health's baby. No, this should be Primary Care's baby. No, this should be Pain Clinic's baby.’ …I think it should be an interdisciplinary team.”

Implementation Climate

The

In a high quartile site, with a more receptive climate, one participant noted: “I think at first, just like with any new notice, (they) sort of, groaned a little bit and…dragged their feet but I think we’re, we’re good now. I think our facility is really receptive.” Sites in the top quartile were more likely to have provided a positive context for implementing the STORM notice.

Available Resources

The

Interviews in the low quartile sites demonstrated this concern, with one staff member stating: “…the problem is that we get zero dedicated time. And we’ve asked leadership several times, and it just falls on deaf ears so, no, we get zero dedicated time to do this.” Many were adding this to an already full workload, as described by this individual: “I’m also a provider…I have a full patient load…And there's not really time to do all this.” In a few cases, clinic time slots were blocked so providers could complete case reviews, but more typically, and still infrequently, they were only given time to attend the team meeting to discuss the reviews. When providers had flexibility in their schedule to complete the reviews, they described this as a facilitator.

Other CFIR constructs were barriers or facilitators in some cases, as described in Table 3, but were less pervasive or intense than those identified above. This is evident from the qualitative review as well as the percentage of positive ratings provided for each construct.

Discussion

In this evaluation of the randomized rollout of a policy requiring case reviews at VHA facilities, we identified key implementation barriers and facilitators, although the differing policies for oversight and pacing did not seem to influence what sites needed for successful implementation. In addition, characteristics such as facility size, complexity, rurality, and implementation resources (i.e., academic detailing) were not associated either positively or negatively with barriers and facilitators. The predominant facilitators were strong and appropriate engagement, supportive leadership, and a positive implementation climate. Important barriers included lack of time to complete the case reviews and a perceived lack of evidence for the intervention. The mixed-methods analysis with joint displays is a novel approach that enabled the analysis of a large volume of qualitative data. The combination of ratings and coded data helped to develop a strong picture of the barriers and facilitators for this intervention.

The differing study arms appeared to have no effect on implementation barriers and facilitators. Having increased oversight and requiring sites to complete action planning did not seem to impact how facilities implemented the notice. This finding replicates earlier work indicating that oversight did not change the number or type of strategies used for the implementation (Rogal et al., 2020). The oversight could have been viewed as both positive and negative, since it included assistance from the national office, perhaps confounding the effect of this contingency. The other randomization condition, altering the timing of requiring more case reviews, also did not impact implementation barriers and facilitators, even though some facilities experienced a large increase in the number of required case reviews prior to their interviews. Because facilities were not told in advance about the increase, it is not surprising that this evaluation, which focused on the process for completing the case reviews, did not show differences between time frames. Because the interviewers were intentionally blinded to the condition of the interviewees, we did not ask specific questions about this increase, and it was seldom mentioned spontaneously.

We were surprised to find no association between implementation experience and the facility characteristics explored. It might be expected that facilities with higher levels of care (complexity) or more training (academic detailing) would develop different, perhaps better, approaches to this implementation. Such differences have previously been demonstrated, including in work on following clinical practice guidelines at the VA, where less complex, Western, and more rural sites had less implementation of practice guidelines (Buscaglia et al., 2015). It seems possible that the high level of interdisciplinary work required by this notice cut across those simple characteristics and favored a configuration of resources, leadership, climate, and engaging to maximize positive implementation. This need for synergy in the implementation of complex innovations is also described by Rapp et al. (2010).

We identified four CFIR constructs as facilitators

Implementing the STORM case reviews required sites across the country with varying capacities to respond to a very specific and complex task: completing interdisciplinary case reviews and successfully logging completion of reviews using a note in the electronic medical record. The task required team creation and management, pain expertise, and technical skills in managing the case review note and understanding the STORM tool. Further, the task was charged to a group already stretched by clinical demands, and each site was asked to develop an individualized approach to completing the task, rather than being provided with specific tools and task assignments. While sites developed many different approaches to the task, some had greater resources and capacity to accomplish this, and some were simply exhausted and frustrated. Although recent work by Kim et al. (2020) points to the importance of heterogeneity in implementation efforts, the complexity of this innovation points to a need for greater task clarity and specificity. In addition, although the task was complex, the evaluation of the task was extremely simple: a correctly titled note in the medical record. Fidelity to this task was not evaluated, and some participants commented that there was likely to be great variation in the depth and quality of this note. Finally, as Greenhalgh et al. (2004) stress, it is important to recognize the potential impact of the sociopolitical context in which this implementation was taking place, as this was a time of political stress and resource challenges for the VHA system, with calls for privatization and changes to allow outside providers to serve Veterans.

While this was a novel approach to evaluation with several notable findings, there were limitations. First, only a limited number of CFIR constructs can be included in an interview without becoming overwhelming, and it is possible that some important constructs were omitted. Second, 23 sites declined to participate in the qualitative interview, possibly leading to a selection bias. However, participating and non-participating facilities did not significantly differ on objective measures, and a 45-minute interview may simply have seemed too challenging for busy providers to accommodate. Third, the analytical strategy of comparing the sites with the top and bottom quartiles of overall CFIR scores may have masked important constructs that functioned in the moderately rated sites. However, we believe comparing the top and bottom quartile allows us to glean useful barriers and facilitators that clearly differentiated sites. Fourth, sites were characterized on the basis of only a few individuals, although every effort was made to ensure these individuals were those most knowledgeable about this implementation. Fifth, there was variability regarding the point in time that the interview was conducted relative to the implementation. This may have influenced the nature of barriers and facilitators reported. Finally, an objective implementation measure was not used in any analyses. Although the case review completion rate was the designated implementation measure for each site, mapping this measure onto the memo ratings proved unreliable due to the differing temporal relationship between the interviews and the completion rate, calculated quarterly. In addition, the measure did not quantify the actual number of case reviews completed but instead the proportion of reviews that were completed for a site over time.

The outcomes of this policy initiative are being evaluated, and early evidence shows positive clinical outcomes for Veterans who are identified as at risk by the STORM dashboard. These Veterans are more likely to have a case review completed and to have risk mitigation strategies put in place (Strombotne et al., 2021).

In conclusion, this evaluation of a national implementation identified key barriers and facilitators across multiple implementation sites. Although we found no difference in implementation barriers and facilitators across randomization arms, the evaluation demonstrated the value of strong, supportive leadership and climate, realistic expectations about time, and engaging the right people in creating a positive experience for implementation. Further, a perceived lack of training and accurate, well-explained feedback were barriers to the process. In future large-scale, nationwide implementations it would be constructive to consider the overall readiness for implementation, including the development of strong implementation leadership (Bonham et al., 2014). In addition, more proactivity (Birken et al., 2013) and comprehensive training might help with the improved adoption of an intervention of this complexity, as shown in much previous research (Damschroder et al., 2009b; Greenhalgh et al., 2004; Phillips & Allred, 2006). Finally, the findings suggest the limits to using official policies promising low-level consequences as a strategy to overcome implementation barriers.

Conclusion

In evaluating the randomized rollout of a policy requiring case reviews at VHA facilities, we did not find any association between study arms and implementation barriers and facilitators. Facilitators were strong engagement of appropriate individuals, engaged and supportive leadership, and a positive implementation climate. Lack of time to complete the case reviews and a perceived lack of evidence for the intervention were barriers. The evaluation used a mixed-methods approach and joint displays, an innovative approach that enabled the analysis of a large volume of data.

Supplemental Material

Supplemental material, sj-docx-1-irp-10.1177_26334895221114665 for Tracking the randomized rollout of a Veterans Affairs opioid risk management tool: A multi-method implementation evaluation using the Consolidated Framework for Implementation Research (CFIR) by Sharon A. McCarthy, Matthew Chinman, Shari S. Rogal, Gloria Klima, Leslie R. M. Hausmann, Maria K. Mor, Mala Shah, Jennifer A. Hale, Hongwei Zhang, Adam J. Gordon, and Walid F. Gellad in Implementation Research and Practice

Supplemental material, sj-docx-2-irp-10.1177_26334895221114665 for Tracking the randomized rollout of a Veterans Affairs opioid risk management tool: A multi-method implementation evaluation using the Consolidated Framework for Implementation Research (CFIR) by Sharon A. McCarthy, Matthew Chinman, Shari S. Rogal, Gloria Klima, Leslie R. M. Hausmann, Maria K. Mor, Mala Shah, Jennifer A. Hale, Hongwei Zhang, Adam J. Gordon, and Walid F. Gellad in Implementation Research and Practice

Footnotes

Acknowledgments

We acknowledge that this work would not be possible without the cooperation and support of our partners in the Office of Mental Health and Suicide Prevention and in the HSR&D-funded Partnered Evidence-Based Policy Resource Center. The contents of this paper are solely from the authors and do not represent the views of the Department of Veterans Affairs or the United States Government.

Authors’ contribution

SM, WG, GK, and MS designed and conducted the interviews. SM, MC, MS, GK, WG, and LH rated memos. HZ, MM, GK, SR, and SM analyzed the data. SM drafted the manuscript. JH provided exceptional administrative support and AG provided significant editing. All authors worked on study conception and design, interpretation of data, and critical review and approval of the manuscript.

Availability of Data and Materials

Please contact the corresponding author.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethics approval and consent to participate

The VA Pittsburgh Healthcare System approved this research study.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Health Services Research and Development (grant number SDR 16-193).

Supplemental material

Supplemental material for this article is available online.

Appendix

References
