Abstract

Background:

Systematic reviews of measures can facilitate advances in implementation research and practice by locating reliable and valid measures and highlighting measurement gaps. Our team completed a systematic review of implementation outcome measures published in 2015 that indicated a severe measurement gap in the field. Now, we offer an update with this enhanced systematic review to identify and evaluate the psychometric properties of measures of eight implementation outcomes used in behavioral health care.

Methods:

The systematic review methodology is described in detail in a previously published protocol paper and summarized here. The review proceeded in three phases. Phase I, data collection, involved search string generation, title and abstract screening, full text review, construct assignment, and measure forward searches. Phase II, data extraction, involved coding psychometric information. Phase III, data analysis, involved two trained specialists independently rating each measure using PAPERS (Psychometric And Pragmatic Evidence Rating Scale).

Results:

Searches identified 150 outcome measures, of which 48 were deemed unsuitable for rating and thus excluded, leaving 102 measures for review. We identified measures of acceptability (

Conclusion:

While measures of implementation outcomes used in behavioral health care (including mental health, substance use, and other addictive behaviors) are unevenly distributed and exhibit mostly unknown psychometric quality, the data reported in this article show an overall improvement in availability of psychometric information. This review identified a few promising measures, but targeted efforts are needed to systematically develop and test measures that are useful for both research and practice.

Plain language abstract:

When implementing an evidence-based treatment into practice, it is important to assess several outcomes to gauge how effectively it is being implemented. Outcomes such as acceptability, feasibility, and appropriateness may offer insight into why providers do not adopt a new treatment. Similarly, outcomes such as fidelity and penetration may provide important context for why a new treatment did not achieve desired effects. It is important that methods to measure these outcomes are accurate and consistent. Without accurate and consistent measurement, high-quality evaluations cannot be conducted. This systematic review of published studies sought to identify questionnaires (referred to as measures) that ask staff at various levels (e.g., providers, supervisors) questions related to implementation outcomes, and to evaluate the quality of these measures. We identified 150 measures and rated the quality of their evidence with the goal of recommending the best measures for future use. Our findings suggest that a great deal of work is needed to generate evidence for existing measures or build new measures to achieve confidence in our implementation evaluations.

Keywords

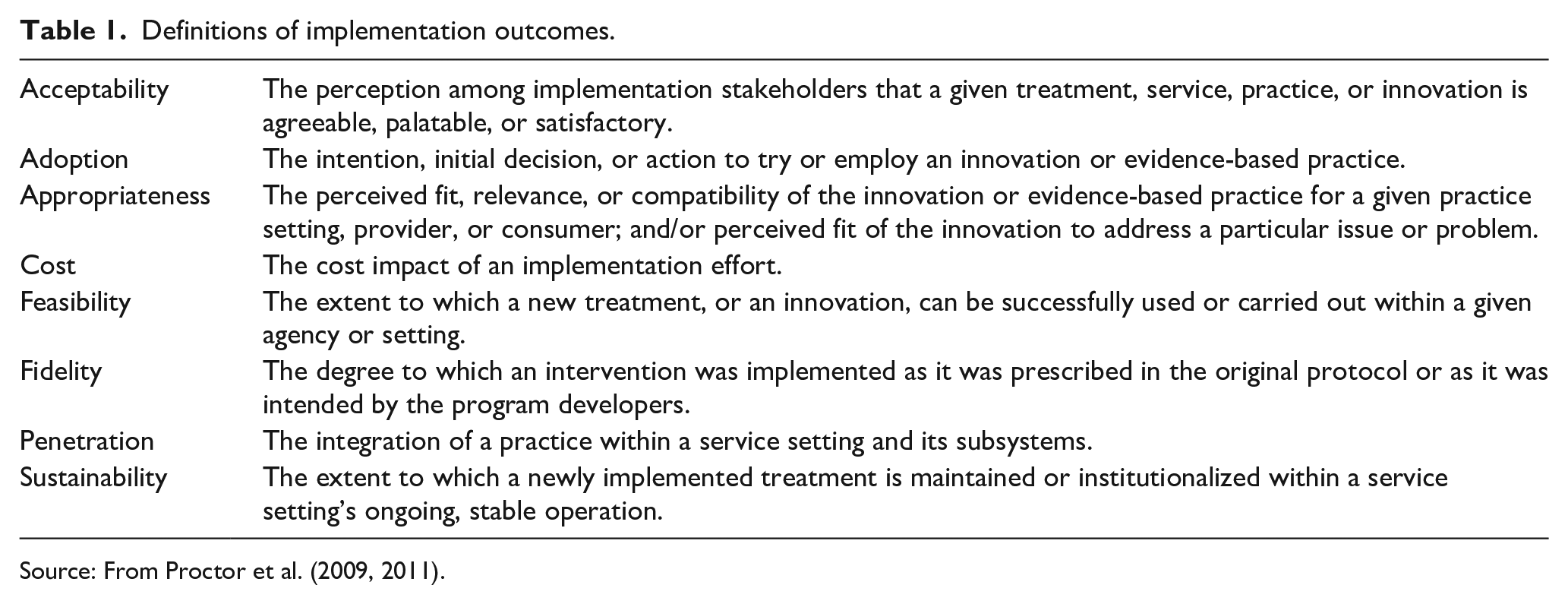

It is well established that evidence-based practices are slow to be implemented into routine care (Carnine, 1997). Implementation science seeks to narrow the research-to-practice gap by identifying barriers and facilitators to effective implementation and designing strategies to achieve desired implementation outcomes. Proctor and colleagues’ seminal work (Proctor et al., 2009, 2011) articulated at least eight implementation outcomes for the field: (1)

Since 2015, we have sought to update and expand these reviews. Full details about our updated approach are published in a protocol paper (Lewis et al., 2018). Three major differences are worth noting. One, we expanded our assessment of measures to their scales given that many measures purportedly assess numerous constructs delineated by scales. For example, the Texas Christian University Organizational Readiness for Change Scale contains 19 scales measuring constructs such as

Although it has been only 4 years since the publication of our initial systematic review (Lewis et al., 2015), the field of implementation science has evolved at a rapid pace. This progress, together with the enhancements made to our systematic review protocol (Lewis et al., 2018), calls for an update to our assessment of measures of implementation outcomes. Specifically, this article presents the findings from systematic reviews of the eight implementation outcomes, including a robust synthesis of psychometric evidence for all identified measures.

Method

Design overview

The systematic literature review and synthesis consisted of three phases. Phase I, measure identification, included the following five steps: (1) search string generation, (2) title and abstract screening, (3) full text review, (4) measure assignment to implementation outcome(s), and (5) measure forward (cited-by) searches. Phase II, data extraction, consisted of coding relevant psychometric information. Phase III consisted of data analysis.

Phase I: data collection

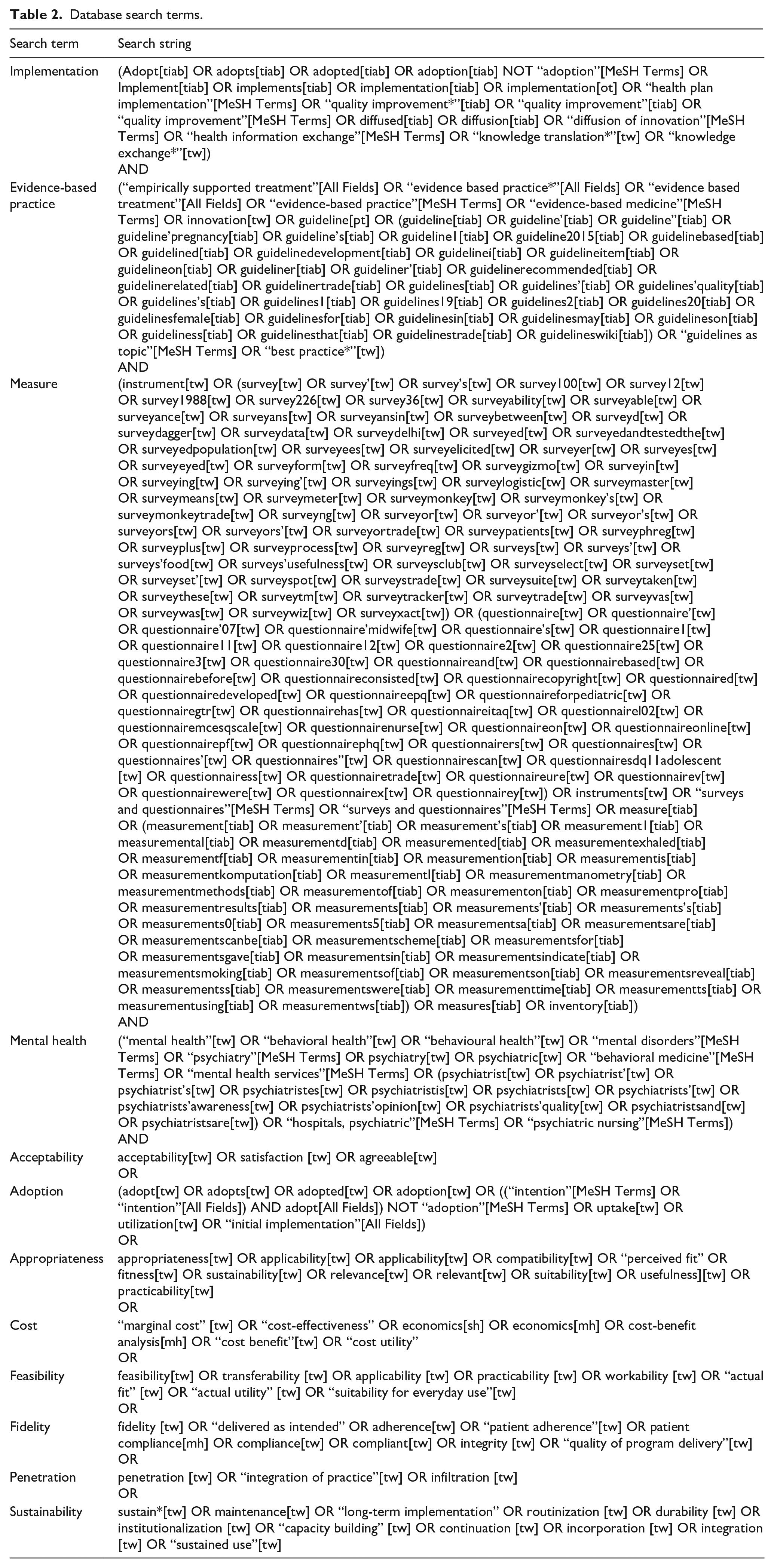

First, literature searches were conducted in the PubMed and Embase bibliographic databases using search strings curated in consultation with PubMed support specialists and a library scientist. Consistent with our funding source and aim to identify and assess implementation-related measures in mental and behavioral health, our search was built on four core levels: (1) terms for implementation (e.g., diffusion, knowledge translation, adoption); (2) terms for measurement (e.g., instrument, survey, questionnaire); (3) terms for evidence-based practice (e.g., innovation, guideline, empirically supported treatment); and (4) terms for behavioral health (e.g., behavioral medicine, mental disease, psychiatry) (Lewis et al., 2018). For the current study, we included a fifth level for each of the following

Database search terms.

Identified articles were vetted through a title and abstract screening followed by full text review to confirm relevance to the study parameters. In brief, we included empirical studies and measure development studies that contained one or more quantitative measures of any of the eight implementation outcomes if they were used in an evaluation of an implementation effort in a behavioral health context. Of note, we decided to retain only

Included articles then progressed to the fourth step, construct assignment. Trained research specialists (C.D., K.M.) mapped measures and/or their scales to one or more of the eight aforementioned implementation outcomes (Proctor et al., 2011). Assignment was based on the study author’s definition of what was being measured. Assignment was also based on content coding by the research team, who reviewed all items of the measure for evidence of content explicitly assessing one of the eight implementation outcomes when compared against the construct definition. Construct assignment was checked and confirmed by a content expert (C.L.), who reviewed the items within each measure and/or scale.

The final step subjected the included measures to “cited-by” searches in PubMed and Embase to identify all empirical articles that used the measure in behavioral health implementation research.

Phase II: data extraction

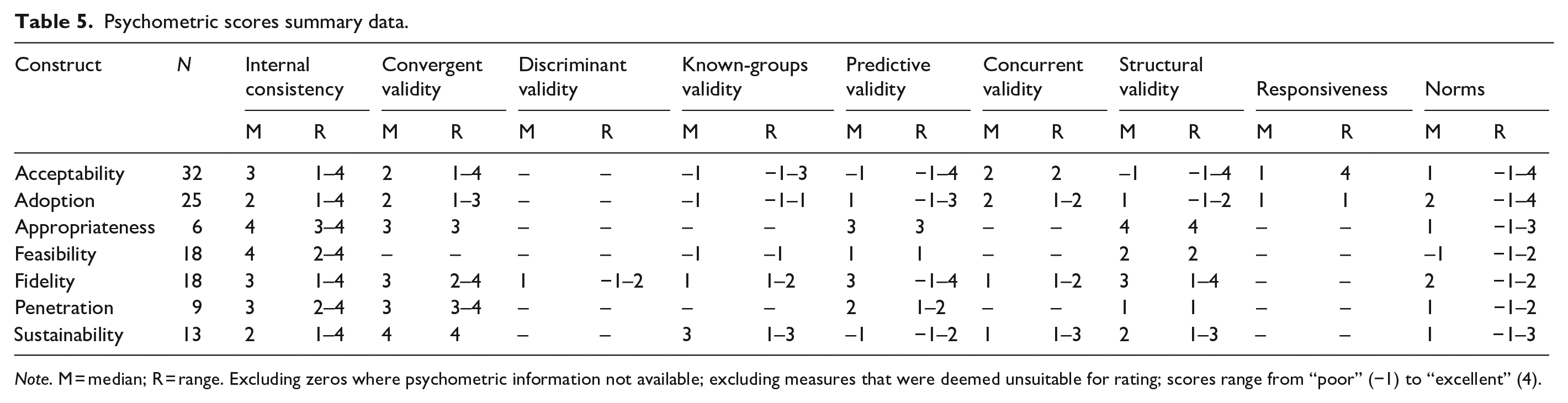

Once all relevant literature was retrieved, articles were compiled into “measure packets.” These measure packets included the measure itself (as available), the measure development article(s) (or the article with the first empirical use in a behavioral health context), and all additional empirical uses of the measure in behavioral health. To identify all relevant reports of psychometric information, the team of trained research specialists (C.D., K.M.) reviewed each article and electronically extracted information to assess the psychometric and pragmatic rating criteria, referred to hereafter as PAPERS (Psychometric And Pragmatic Evidence Rating Scale). The full rating system and criteria for the PAPERS are published elsewhere (Lewis et al., 2018; Stanick et al., 2019). The current study, which focuses on psychometric properties only, used nine relevant PAPERS criteria: (1) internal consistency, (2) convergent validity, (3) discriminant validity, (4) known-groups validity, (5) predictive validity, (6) concurrent validity, (7) structural validity, (8) responsiveness, and (9) norms. Data on each psychometric criterion were extracted for both the full measure and individual scales as appropriate. Measures were considered “unsuitable for rating” if the format of construct assessment did not produce psychometric information (e.g., qualitative nomination form) or the format of the measure did not conform to the rating scale (e.g., cost analysis formula, penetration formula).

Having extracted all data related to psychometric properties, the quality of information for each of the nine criteria was rated using the following scale: “poor” (−1), “none” (0), “minimal/emerging” (1), “adequate” (2), “good” (3), or “excellent” (4). Final ratings were determined from either a single score or a “rolled up median” approach. If a measure was unidimensional or the measure had only one rating for a criterion in an article packet, then this value was used as the final rating and no further calculations were conducted. If a measure had multiple ratings for a criterion across several articles in a packet, we calculated the median score across articles to generate the final rating for that measure on that criterion. For example, if a measure was used in four different studies, each of which rated internal consistency, we calculated the median score across all four articles to determine the final rating of internal consistency for that measure. This process was conducted for each criterion.
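The per-criterion rule described above can be illustrated with a minimal sketch. The function name `final_rating` and the input format are hypothetical and are not part of PAPERS. The sketch also assumes that, with an even number of ratings, the median is the mean of the two middle values; the text does not state how such cases were resolved.

```python
from statistics import median

# Rating labels taken from the scale described in the text.
RATING_LABELS = {
    -1: "poor",
    0: "none",
    1: "minimal/emerging",
    2: "adequate",
    3: "good",
    4: "excellent",
}


def final_rating(article_ratings):
    """Return the final rating for one criterion of one measure.

    article_ratings: list of integer ratings (-1 to 4), one per article
    in the measure packet that was rated on this criterion (hypothetical
    input format).
    """
    if not article_ratings:
        raise ValueError("at least one rating is required")
    for rating in article_ratings:
        if rating not in RATING_LABELS:
            raise ValueError(f"rating outside the -1 to 4 scale: {rating}")
    # A single rating is used as-is, with no further calculation.
    if len(article_ratings) == 1:
        return article_ratings[0]
    # Multiple ratings: take the median across articles.
    # Assumption: even-length lists use the mean of the two middle values.
    return median(article_ratings)


# Internal consistency rated in four different studies (made-up values).
print(final_rating([2, 3, 3, 4]))
```

The same function would be called once per criterion, so a measure ends up with nine final ratings.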

If a measure contained a subset of scales relevant to a construct, the ratings for those individual scales were “rolled up” by calculating the median which was then assigned as the final aggregate rating for the whole measure. For example, if a measure had four scales relevant to

In addition to psychometric data, descriptive data were also extracted on each measure. Characteristics included (1) country of origin, (2) concept defined by authors, (3) number of articles contained in each measure packet, (4) number of scales, (5) number of items, (6) setting in which measure had been used, (7) level of analysis, (8) target problem, and (9) stage of implementation as defined by the Exploration, Adoption/Preparation, Implementation, Sustainment (EPIS) model (Aarons et al., 2011).

Phase III: data analysis

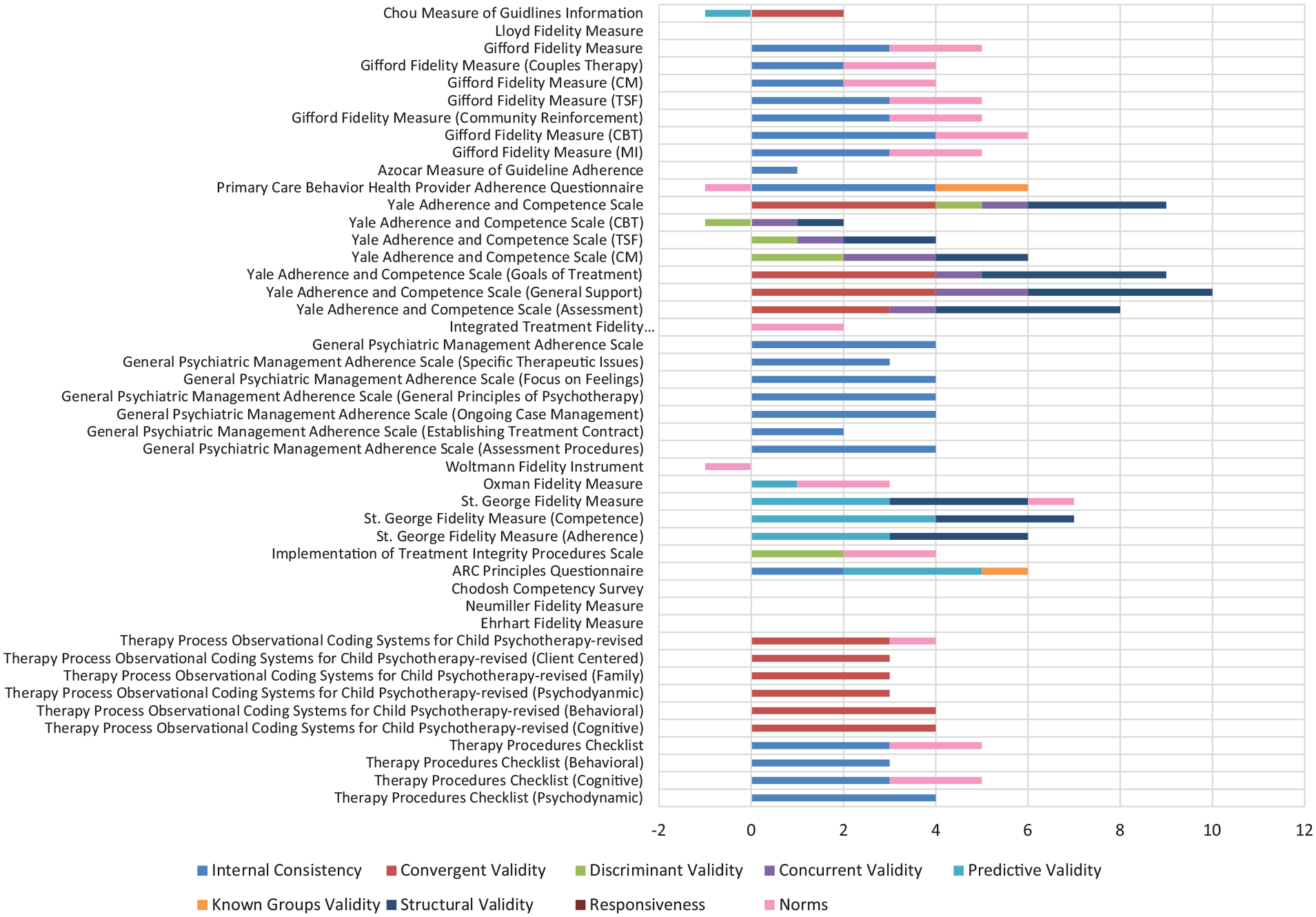

Simple statistics (i.e., frequencies) were calculated to report on measure characteristics and availability of psychometric-relevant data. A total score was calculated for each measure by summing the scores given to each of the nine psychometric criteria. The maximum possible rating for a measure was 36 (i.e., each criterion rated 4) and the minimum was −9 (i.e., each criterion rated −1). Bar charts were generated to display visual head-to-head comparisons across all measures within a given construct.
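As a rough illustration of the total score, the sketch below sums nine criterion ratings and confirms the stated bounds of 36 and −9. The criterion keys and the `total_score` function are hypothetical names chosen for this example, and the ratings shown are made up.

```python
# The nine psychometric criteria named in the text (key names are illustrative).
CRITERIA = (
    "internal_consistency",
    "convergent_validity",
    "discriminant_validity",
    "known_groups_validity",
    "predictive_validity",
    "concurrent_validity",
    "structural_validity",
    "responsiveness",
    "norms",
)


def total_score(ratings):
    """Sum the final ratings of all nine criteria for one measure.

    ratings: dict mapping each criterion name to its final rating (-1 to 4).
    """
    missing = [c for c in CRITERIA if c not in ratings]
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    return sum(ratings[c] for c in CRITERIA)


# Bounds implied by the scale: every criterion at 4, or every criterion at -1.
best = {c: 4 for c in CRITERIA}
worst = {c: -1 for c in CRITERIA}
print(total_score(best), total_score(worst))
```

A measure with no psychometric information on any criterion would score 0 under this scheme, because "none" is rated 0.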

Results

Following the rolled-up approach applied in this study, results are presented at the full measure level. Where appropriate, we indicate the number of scales relevant to a construct within that measure (see Figures A1–A8 in Appendix 1 for PRISMA flowcharts of included and excluded studies).

Overview of measures

Searches of electronic databases yielded 150 measures related to the eight implementation outcomes (

Characteristics of measures

Table 3 presents the descriptive characteristics of measures used to assess implementation outcomes. Most measures of implementation outcomes that were suitable for rating were used only once (

Description of measures and subscales.

EPIS: exploration, adoption/preparation, implementation, sustainment.

Availability of psychometric evidence

Of the 150 measures of implementation constructs, 48 were categorized as unsuitable for rating; unsurprisingly, the majority of these were cost measures (

Data availability.

Excluding measures that were deemed unsuitable for rating.

Psychometric evidence rating scale results

Table 5 describes the median ratings and range of ratings for psychometric properties for measures deemed suitable for rating (

Psychometric scores summary data.

Individual psychometric ratings.

Leadership and Organizational Change for Implementation (LOCI); San Francisco Treatment Research Center (SFTRC); Attitude, Social norm, Self-efficacy (ASE); Child Parent Psychotherapy (CPP); Bio-behavioral Intervention (BBI); Texas Christian University (TCU); Cognitive Behavioral Therapy (CBT); Twelve Step Facilitation (TSF); Clinical Management (CM); Motivational Interviewing (MI); Alternatives for Families - A Cognitive Behavioral Therapy (AF-CBT); Also known as (AKA)

Acceptability ratings.

Adoption ratings.

Appropriateness ratings.

Feasibility ratings.

Fidelity ratings.

Penetration ratings.

Sustainability ratings.

Acceptability

Thirty-two measures of

The Pre-referral Intervention Team Inventory had the highest psychometric rating score among measures of

Adoption

Twenty-six measures of

The Williams “Intention to Adopt” and Ruzek “Measure of Adoption” measures had the highest psychometric rating scores among measures of

Appropriateness

Six measures of

The Pre-referral Intervention Team Inventory had the highest psychometric rating score among measures of

Cost

Thirty-one measures of implementation

Feasibility

Eighteen measures of

The Children’s Usage Rating Profile had the highest psychometric rating score among measures of

Fidelity

Eighteen measures of

The Yale Adherence and Competence Scale had the highest psychometric rating score among measures of

Penetration

Twenty-three measures of

The Degree of Implementation Form had the highest psychometric rating score among measures of

Sustainability

Fourteen measures of

The School-wide Universal Behavior Sustainability Index-school Teams had the highest psychometric rating score among measures of

Discussion

Summary of study findings

This systematic review identified 150 measures of implementation outcomes used in mental and behavioral health, which were unevenly distributed across the eight outcomes (especially when suitability for rating was considered). We found 32 measures of

Measures were moderately generalizable across populations with the majority of empirical uses occurring in studies providing treatment for general mental health issues, substance use, depression, and other behavioral disorders (

Comparison with previous systematic review

The findings of this updated review suggest a proliferation of measure development for mental and behavioral health in just the past 2 years (66 new measures were identified), with a continued uneven distribution of measures across implementation outcomes. This demonstrated growth in the number of measures confirms that significant focus is being dedicated to measuring implementation outcomes. Importantly, measures of those outcomes that some may argue are relatively unique to implementation, such as feasibility, compared with those that are common to intervention, such as acceptability, increased 10-fold from 2015 to 2019. It is also worth noting that we found fewer measures of acceptability (

While more measures in this new review had psychometric information available (86; 70%) on at least one criterion compared with the measures in the previous review (56; 56%), psychometric information for some criteria, such as discriminant, convergent, and concurrent validity as well as responsiveness, remained limited despite their criticality for scientific evaluations of implementation efforts. We hope that future adoption of measurement reporting standards prompts more reporting and, perhaps, more psychometric testing. Overall, however, this finding illustrates that the field is continuing to grow in its testing and reporting of psychometric properties, with more attention to the production of valid and reliable measures. With continued focus on gathering information and evidence for these important psychometric properties, the field may move toward a consensus battery of implementation outcome measures that can be used across studies to accumulate evidence about what strategies work best for which interventions, for whom, and under what conditions.

The development of “in-house” measures used only once for a specific study contributes to the proliferation of measures that have limited evidence of reliability and validity (Martinez et al., 2014). These measures are typically designed to suit the immediate needs of a project and are not developed with supportive theory. Of the 150 measures identified, 126 (83%) were used only once in behavioral health care (this included all 48 of the measures deemed not suitable for rating). Of the 78 remaining measures that were suitable for rating, 26 (33%) had available information about internal consistency, and scores ranged from “minimal/emerging” (1) to “excellent” (4). For convergent validity, seven (9%) measures had information available, with scores ranging from “adequate” (2) to “excellent” (4). None of these measures had information available for discriminant validity. For concurrent validity, only one (1%) measure had information available, and it scored “minimal/emerging” (1). For predictive validity, 17 (22%) measures had information available, with scores ranging from “poor” (−1) to “excellent” (4). Only two (3%) measures had information available for known-groups validity, and scores ranged from “poor” (−1) to “minimal/emerging” (1). For structural validity, only 10 (13%) measures had information, and scores ranged from “minimal/emerging” (1) to “excellent” (4). For responsiveness, only one (1%) measure had information available, and it scored “minimal/emerging” (1). Finally, 29 (37%) measures had information for norms, with scores ranging from “poor” (−1) to “excellent” (4). Limiting the development of “in-house” measures will also likely increase the ability to accumulate knowledge across studies by deploying common measures.
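The availability percentages for the 78 single-use measures suitable for rating can be reproduced with simple arithmetic. The sketch below takes the counts from the paragraph above; the dictionary keys and the `percent` helper are hypothetical names, and rounding to the nearest whole percent is an assumption about how the figures were reported.

```python
# Single-use measures that were suitable for rating (126 - 48).
SUITABLE_SINGLE_USE = 126 - 48

# Number of those measures with information available, per criterion,
# as reported in the text.
AVAILABLE = {
    "internal_consistency": 26,
    "convergent_validity": 7,
    "discriminant_validity": 0,
    "concurrent_validity": 1,
    "predictive_validity": 17,
    "known_groups_validity": 2,
    "structural_validity": 10,
    "responsiveness": 1,
    "norms": 29,
}


def percent(count, total):
    """Return count as a percentage of total, rounded to a whole number."""
    return round(100 * count / total)


for criterion, count in AVAILABLE.items():
    print(f"{criterion}: {count}/{SUITABLE_SINGLE_USE} = "
          f"{percent(count, SUITABLE_SINGLE_USE)}%")
```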

Limitations

There are several noteworthy limitations to this systematic review. One limitation of the current study is the length of time that has transpired since the original literature searches were completed in 2017. Due to the immense undertaking of the full scope of this R01 project, it took the research team nearly 2 years to screen articles, extract data, apply our rating system, and complete this article. This systematic review is part of a larger project to identify measures of all implementation constructs associated with the Consolidated Framework for Implementation Research (CFIR) (Damschroder et al., 2009), which are included in this special section. In total, our team conducted 47 systematic reviews over the course of 4 years. Because of this gap in time between when we conducted our searches and when we finalized our data, it is possible that new measures of implementation outcomes were developed that we did not identify. Even so, our measure forward “cited-by” searches described above were conducted in the early months of 2019, which gives us confidence that we captured all recent uses of the measures we identified in 2017.

Another limitation is that this review focused only on implementation outcomes in mental and behavioral health care. It is possible that reliable and valid measures of these outcomes exist that have not yet been used in this context. It is also possible that some of the measures included in this review have been used outside of mental health or behavioral health care; in that case, the psychometric ratings described above could change, either positively or negatively, with additional evidence from such studies. A coinciding measurement review (Khadjesari et al., 2017) is underway to identify measures of implementation outcomes in physical health care settings. It will be illuminating to discover how their findings compare with the findings in this review.

Finally, poor reporting practices in published articles limited not only the information available about measures’ psychometric properties but also the completeness of that information for psychometric rating. For example, authors occasionally reported that structural validity was assessed through exploratory or confirmatory factor analysis but did not report the variance explained by the factors or neglected to report key model fit statistics. Likewise, authors sometimes stated that all scales exhibited internal consistency above a certain threshold (e.g., α > .70) rather than stating the exact values. As is the case in all systematic reviews, the consistency and quality of reporting of measurement properties in the included studies influenced the extent to which measures’ psychometric properties could be rated and the level of uncertainty of those ratings. Relatedly, although some measurement properties could have improved over time through adaptation and refinement, earlier evidence was also considered in our rating summary, which may have negatively skewed the rated quality of burgeoning measures.

Conclusion

This systematic measure review highlights significant progress with respect to the development of implementation outcome measures and assessment of their psychometric properties. Even so, our review makes clear the need for additional measure development and testing, both to correct the maldistribution of measures of implementation outcomes and to enhance the psychometric properties of existing measures. Although some of the measures included in this review are promising and merit further refinement and evaluation, most measures lacked information on critical psychometric properties, making it unclear whether they warrant further investment. High-quality measures of outcomes are especially critical for advancing implementation science. A concerted, coordinated effort to develop such measures is needed to gain confidence in the findings of future evaluation efforts.

Footnotes

Appendix 1

Declaration of conflicting interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Funding for this study came from the National Institute of Mental Health, awarded to Dr. Cara Lewis as principal investigator. Dr. Lewis is both an author of this article and an editor of the journal,

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Institute of Mental Health (NIMH) “Advancing implementation science through measure development and evaluation” (1R01MH106510), awarded to Dr. Cara Lewis as principal investigator.