Abstract

Background

Despite efforts to promote standardized taxonomies and reporting standards, documenting the implementation strategies used in community settings remains challenging. This case study demonstrates a practical approach to gathering data on the use of, and satisfaction with, implementation strategies within community-based sites in order to understand community providers’ perspectives on implementing an early intensive behavioral intervention (EIBI) for children on the autism spectrum across different settings.

Methods

Using a sequential explanatory mixed-methods design, survey and interview data were collected from directors/supervisors and direct providers (n = 26) across three sites (one university and two community-based replication sites). The Implementation Strategies and Satisfaction Survey (ISSS) was administered to identify staff-reported implementation strategy use and satisfaction. Informed by quantitative results, follow-up semi-structured interviews were conducted with a subsample (n = 13) to further understand staff experiences with endorsed implementation strategies and elicit recommendations for future efforts.

Results

Survey results showed the frequencies of implementation strategies endorsed, by site and role. Overall, staff were satisfied with the implementation strategies used within their agencies. Content analysis of the qualitative data revealed three salient themes related to implementation strategy use (context, communication, and successes and challenges), providing in-depth detail on how strategies were used and how effective they were, based on community providers’ experiences. Recommendations for improving strategy use within “broader” community settings were also elicited.

Conclusions

The project demonstrated a practical approach to identifying and evaluating implementation strategies used within sites delivering autism services. Reporting implementation strategies with the ISSS can provide insight into community providers’ perspectives on, and satisfaction with, agency implementation strategy use, which can inform more relevant and responsive strategies to address barriers in community settings.

Examining community providers’ preferences and experiences with implementation strategies used to facilitate evidence-based practice uptake can broaden our understanding of what implementation strategies work in community-based settings, and how and why they work (Chaudoir et al., 2013; Leeman et al., 2017; Proctor et al., 2013). Such efforts could help tailor implementation strategies to the barriers and facilitators typically found in community-based settings.

This case study demonstrates a practical, mixed-methods approach to gathering self-reported use of and satisfaction with implementation strategies in order to understand community providers’ perspectives on implementation strategy success. Using a new survey, the Implementation Strategies and Satisfaction Survey (ISSS), together with interviews, the study reports, in standardized language, the strategies used in one university-based site and two community-based replication sites that deliver an early intensive behavioral intervention (EIBI) for children on the autism spectrum. This paper contributes to one of five priorities for enhancing public health impact: improving the tracking and reporting of implementation strategies used when translating research into practice (Dingfelder & Mandell, 2011; Powell et al., 2019; Stahmer et al., 2019). This approach emphasizes the importance of understanding context (e.g., community organizations providing services to children on the autism spectrum) when developing strategies that work better for EIBI implementation and scale-up. Understanding community providers’ preferences and experiences with implementation strategies can support the use of strategies that better fit usual care contexts, with the ultimate goal of improving implementation practice in community-based settings.


Implementation science (IS) serves an important role in facilitating the successful uptake of evidence-based practices (EBPs)—programs, practices, and policies proven to be efficacious, effective and/or informed by research—across settings (APA, 2006). Critical to EBP uptake, implementation strategies refer to the actions (or “systematic processes”) utilized to facilitate the implementation and sustainment of practices in usual care settings, thereby promoting healthcare practice changes for broader impact (Bauer et al., 2015; Brownson et al., 2017; Proctor et al., 2013). These strategies influence the success of EBP use and have significant implications for the effectiveness and quality of implementation- and individual-level outcomes. However, ongoing challenges in implementation research and practice limit our understanding of what, how, and why implementation strategies work.

Two such challenges are a lack of clarity in conceptualizing implementation strategies and in reporting them. Many studies have either not labelled strategies with standardized language or have poorly described the implementation strategies they used (Huynh et al., 2018; McKibbon et al., 2010; Perry et al., 2019; Powell et al., 2012, 2015). These inconsistencies have limited understanding of the causal pathways through which implementation strategies affect EBP implementation within a given setting (Lewis et al., 2020; Powell et al., 2015). Current research recommends using standardized language for implementation strategies to develop a more comprehensive understanding of their impact on, and role in, EBP outcomes (Moullin et al., 2020; Powell et al., 2015, 2019).

A panel of IS experts used a modified Delphi process to provide conceptual clarity and definitions for implementation strategies (Powell et al., 2015). This Expert Recommendations for Implementing Change (ERIC) study resulted in a compilation of 73 implementation strategies, refining an earlier compilation of categorized discrete strategies (i.e., discrete strategies grouped into general categories; Powell et al., 2012). Other efforts have developed reporting standards that provide guidelines for researchers and practitioners to detail the implementation strategies used in studies and to further classify strategies into actions and targets (Leeman et al., 2017; Proctor et al., 2013).

To enhance the collective impact of implementation strategies, implementation researchers and practitioners have been called to focus on five priorities, including “improving tracking and reporting of implementation strategies” (Powell et al., 2019, p. 2). While standards and guidelines for implementation strategies are useful, tracking implementation strategies against these standards remains difficult, particularly in community settings (Bunger et al., 2017; Leeman et al., 2017; Lokker et al., 2015). More recent efforts have yielded measures and/or procedures to evaluate and track implementation strategies specifically in community-based agencies. For example, innovative, pragmatic approaches to tracking the implementation strategies used in community agencies have involved surveys, process mapping, coding systems, ethnographic procedures, observational methods, and retrospective mapping with archival records (Boyd et al., 2018; Bunger et al., 2017; Fernandez et al., 2019; Huynh et al., 2018; Lewis et al., 2018; Proctor et al., 2019; Rogal et al., 2020; Walsh-Bailey et al., 2021). These practical approaches have allowed for iterative refinement of implementation strategies reportedly used within community settings; yet the quality of strategy use and its associated effectiveness remain unclear. Further, little to no guidance has been provided on tailoring implementation strategies to address determinants typically found in community settings (Fernandez et al., 2019; Waltz et al., 2019).

Because it remains unclear what implementation strategies work in community settings, and how and why they work, there is a need to track and document strategies that are effective in practice and aligned with IS recommendations (Chaudoir et al., 2013; Leeman et al., 2017). In alignment with one IS priority area (Powell et al., 2019), this study demonstrates an approach for tracking and reporting the implementation strategies used to translate autism spectrum disorder (ASD) EBPs into practice among community-based agencies (Dingfelder & Mandell, 2011; Stahmer et al., 2019). Moreover, very little is known about community stakeholders’ preferences for implementation strategies used for autism-related services (Drahota et al., 2021; Proctor et al., 2013). Staff characteristics may affect perceptions of EBP adoption and use, as well as the overall implementation process (Aarons, 2004; Frambach & Schillewaert, 2002; Rogal et al., 2019). The current study conceptualizes implementation strategies using the ERIC compilation to demonstrate one practical, retrospective mixed-methods approach to (a) report use of and satisfaction with implementation strategies in a coordinated network of one university site and two community replication sites delivering an early intensive behavioral intervention (EIBI) for children on the autism spectrum and (b) explore the impact of staff characteristics on reported implementation strategy use, to obtain a greater understanding of the implementation process across settings.

Case study

To illustrate this approach, the paper describes a case study of the implementation of the Early Learning Institute (ELI) within one university-based and two community-based replication sites. ASD is a lifelong neurodevelopmental disorder affecting around 1.8% of 8-year-old children in the U.S. (Maenner et al., 2020). EBPs developed for this population, including EIBIs, can improve both short- and long-term outcomes for young autistic children (Estes et al., 2015; Landa, 2018). The ELI is an EIBI founded upon applied behavior analysis and designed to help young autistic children develop adaptive and functional skills at an early age by fostering social, communication, and independent living skills. Notably, ELI was developed to expand access to EIBIs for families receiving services through Medicaid (Plavnick et al., 2020). Because many children receive services from community-based agencies, effectively translating EIBIs to this context can ensure service consistency and quality, which is crucial for the successful development of young autistic children (Plavnick et al., 2020). ELI began in a university-based setting in August 2015 and was then replicated, in staggered fashion, at two community settings (August 2016 and August 2017). For more on the development of ELI, see Plavnick et al. (2020).

Methodology

This case study used a sequential explanatory mixed-methods design (Ivankova et al., 2006; Palinkas et al., 2011; Pluye et al., 2018) structured in two phases (QUAN→QUAL). In phase one, information on implementation strategy use was collected from staff at the three sites via a survey. In phase two, semi-structured interviews were conducted with a subsample of participants to capture experiences with endorsed implementation strategies and to elicit suggestions for improving future implementation efforts in community settings. The two data strands served the function of expansion: quantitative data identified which implementation strategies were used (breadth), and qualitative data elaborated on how strategies were used (depth) (Palinkas et al., 2011). This study was part of a larger project assessing implementation outcomes and determinants of the implementation of ELI (Bustos et al., 2021). The current paper focuses on data related to reported implementation strategy use and community provider experiences.

Qualitative data were quantitized and merged with quantitative results to create a joint display table—a useful method to identify points of convergence and divergence in participant responses (Aarons et al., 2014). The joint display provides ever-coded (total number of transcripts with assigned codes) and frequency (total number of times codes were assigned throughout transcripts) counts, supporting the salience of the emergent themes (Landrum & Garza, 2015).
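To make the two counts concrete, the sketch below computes ever-coded and frequency counts from a list of coded segments. It assumes, hypothetically, that each coded segment is available as a (transcript ID, code) pair; the function name and data layout are illustrative and are not claimed to match MAXQDA’s export format.

```python
from collections import Counter


def joint_display_counts(coded_segments):
    """Return {code: (ever_coded, frequency)} from (transcript_id, code) pairs.

    ever_coded: number of distinct transcripts in which the code was assigned.
    frequency:  total number of times the code was assigned across transcripts.
    """
    # Every segment counts toward frequency, including repeats within one transcript.
    frequency = Counter(code for _, code in coded_segments)
    # Deduplicating (transcript, code) pairs leaves one entry per transcript per code.
    ever_coded = Counter(code for _, code in set(coded_segments))
    return {code: (ever_coded[code], frequency[code]) for code in frequency}


# Hypothetical segments: "planning" is coded twice in T1 and once in T2.
segments = [("T1", "planning"), ("T1", "planning"), ("T2", "planning"), ("T2", "communication")]
print(joint_display_counts(segments))  # {'planning': (2, 3), 'communication': (1, 1)}
```

The gap between the two numbers is what the joint display exploits: a code with a high frequency but a low ever-coded count is salient for a few participants rather than across the sample.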

Quantitative phase

Measure

Implementation Strategies and Satisfaction Survey (ISSS)

The ISSS was developed in 2015 by the third author to obtain information about the use of, and satisfaction with, discrete and general implementation strategies. Strategies included in the ISSS were based on existing compilations and categorizations of strategies (Powell et al., 2012, 2015), as well as additional strategies thought to facilitate sustainment of innovations (cf. Hailemariam et al., 2019). First, participants indicated (yes/no) whether their site used each general implementation strategy category (i.e., Planning, Financial, Restructuring, Implementation Testing, Quality Management, Sustainment). If a general category was endorsed, participants were asked whether their site used each of the discrete strategies subsumed within it (e.g., “Revise professional roles” is a discrete implementation strategy within the “Restructuring” general category). Participants then rated their satisfaction with each endorsed strategy (1 = Extremely Dissatisfied; 6 = Extremely Satisfied) (see Appendix I). The ISSS was also adapted in consultation with the ELI director to remove strategies categorized as “Education Strategies” (n = 16 discrete strategies; Powell et al., 2012) and to include items categorized as “Implementation Testing Strategies” (n = 3; Drahota, 2015). In early summer, respondents were asked to select the implementation strategies used at their agency in the past academic year. Demographic information was collected on provider and organizational characteristics (Table 1).

Participant demographics.

ASD, autism spectrum disorder.
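The ISSS branching described above (a general category gates its discrete items, and only endorsed discrete strategies receive a 1 to 6 satisfaction rating) can be expressed as a small validation routine. This is a minimal sketch, not the ISSS itself: the response layout and the function name are hypothetical, and the only item names taken from the text are “Build coalition” (Planning) and “Revise professional roles” (Restructuring).

```python
# General categories as listed in the ISSS description.
GENERAL_CATEGORIES = (
    "Planning", "Financial", "Restructuring",
    "Implementation Testing", "Quality Management", "Sustainment",
)
SATISFACTION_SCALE = range(1, 7)  # 1 = Extremely Dissatisfied ... 6 = Extremely Satisfied


def validate_isss_response(response):
    """Check one respondent's answers against the branching rules (hypothetical layout).

    response: {general category: {"used": bool, "discrete": {strategy: rating or None}}}
    A rating of None means the discrete strategy was not endorsed.
    Returns a list of problems; an empty list means the response is consistent.
    """
    problems = []
    for category, answer in response.items():
        if category not in GENERAL_CATEGORIES:
            problems.append(f"unknown general category: {category}")
            continue
        discrete = answer.get("discrete", {})
        if not answer["used"] and discrete:
            # Discrete items are only shown when the general category is endorsed.
            problems.append(f"{category}: discrete items answered but category not endorsed")
        for strategy, rating in discrete.items():
            if rating is not None and rating not in SATISFACTION_SCALE:
                problems.append(f"{category}/{strategy}: rating {rating} outside 1-6")
    return problems


example = {
    "Planning": {"used": True, "discrete": {"Build coalition": 5}},
    "Restructuring": {"used": False, "discrete": {"Revise professional roles": 4}},
}
print(validate_isss_response(example))
# ['Restructuring: discrete items answered but category not endorsed']
```

Encoding non-endorsement as None keeps endorsement and satisfaction in one structure, so count scores and satisfaction averages can later be derived from the same record.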

Case study illustration: quantitative methods

Participants

Directors, supervisors, and providers knowledgeable about the EIBI implementation process at each site during the 2018–2019 academic year were recruited to participate (Palinkas et al., 2015). Eligibility criteria included: 18 years or older; employment at one of the sites involved; and proficiency in reading and speaking English. A total of 26 staff members participated in the quantitative phase. Across sites, the sample included 20 direct providers (behavior technicians [BTs]), three Board Certified Behavior Analysts (BCBAs), and three site directors. Participants were later categorized as “BTs” or “Directors/Supervisors” (including BCBAs; Table 1).

Procedures

Michigan State University IRB approved all study procedures (#STUDY00000234). Research personnel recruited site directors, providing information about the study and an opportunity to ask clarifying questions. Once site directors agreed to participate, site staff were recruited through flyers and presentations, and staff email addresses were collected. Staff were then emailed an online consent form (to obtain written consent), a screening questionnaire, and a link to the 10-min Qualtrics survey. Participants completing the survey received a $10 honorarium.

Data analysis

Implementation strategy endorsement and reported satisfaction were examined by role, then by setting (community vs. university), using descriptive and exploratory statistics. Endorsed strategies were transformed into count variables, and endorsement scores were calculated for each general and discrete category. For each general category, a satisfaction score was calculated by averaging the satisfaction scores of the discrete strategies that compose it (e.g., “Build coalition” is a discrete strategy within the general “Planning” category). Continuous data were normalized using the fractional rank method in SPSS (Solomon & Sawilowsky, 2009). Independent samples t-tests were used for mean comparisons by role and setting. Spearman's rho correlations evaluated associations among variables, including both continuous and discrete data (Prion & Haerling, 2014).
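A minimal sketch of these scoring steps follows, under stated assumptions. It assumes an endorsed discrete strategy always carries a satisfaction rating (None marks non-endorsement), computes each respondent’s count score and mean satisfaction per general category, and computes fractional ranks as rank divided by n, with tied values receiving their mean rank. The text does not say whether fractional ranks were further converted to normal scores, so the optional inverse-normal step using Blom’s proportion is included only as one common variant. All function names and the strategy “Hypothetical strategy B” are illustrative.

```python
from statistics import NormalDist, mean


def average_ranks(values):
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next sorted value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        tied_rank = (start + end) / 2 + 1  # mean of 1-based positions start+1 .. end+1
        for k in range(start, end + 1):
            ranks[order[k]] = tied_rank
        start = end + 1
    return ranks


def fractional_ranks(values):
    """Fractional rank = rank / n, so scores fall in (0, 1]."""
    n = len(values)
    return [r / n for r in average_ranks(values)]


def blom_normal_scores(values):
    """Optional inverse-normal step using Blom's proportion (r - 3/8) / (n + 1/4)."""
    n = len(values)
    return [NormalDist().inv_cdf((r - 3 / 8) / (n + 1 / 4)) for r in average_ranks(values)]


def score_respondent(responses):
    """Per general category: count of endorsed discrete strategies and mean satisfaction.

    responses: {general category: {discrete strategy: rating 1-6, or None if not endorsed}}
    """
    counts, satisfaction = {}, {}
    for category, items in responses.items():
        ratings = [rating for rating in items.values() if rating is not None]
        counts[category] = len(ratings)
        satisfaction[category] = mean(ratings) if ratings else None
    return counts, satisfaction


counts, satisfaction = score_respondent({
    "Planning": {"Build coalition": 5, "Hypothetical strategy B": None},
    "Restructuring": {"Revise professional roles": None},
})
print(counts, satisfaction)  # {'Planning': 1, 'Restructuring': 0} {'Planning': 5, 'Restructuring': None}
print(fractional_ranks([10, 20, 20, 30]))  # [0.25, 0.625, 0.625, 1.0]
```

Blom’s proportion is used for the optional step because a plain fractional rank of 1.0 has no finite inverse-normal value.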

Case study illustration: quantitative results

Implementation strategies by role

Results indicate that Directors/Supervisors and BTs most commonly reported use of quality management (100%; 65%), restructuring (100%; 60%) and sustainment (100%; 50%) general strategies (Table 2). Independent samples t-tests of count scores demonstrated significant mean differences by role for all endorsed general strategies (p's < .05), with the exception of implementation testing strategies. Thus, Directors/Supervisors endorsed higher rates of general implementation strategy use within the past year than BTs (Table 3).

Frequencies of endorsed general and discrete implementation strategies and average rate of perceived satisfaction by role.

Continuous data were normalized using the fractional rank method.

BT, behavior technician. Bolded entries indicate the “general strategy” that aggregates all discrete strategies listed below it.

T-test comparisons of count scores for implementation strategies by role.

*p < .05, **p < .01.
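For readers reproducing the role comparisons outside SPSS, the sketch below computes a Student’s independent-samples t statistic with pooled variance from two lists of count scores. The text does not state whether pooled or Welch-corrected results were reported, so the pooled form is an assumption, and the group values shown are invented for illustration, not study data. A p-value additionally requires the t distribution; for example, `scipy.stats.ttest_ind`, whose default `equal_var=True` gives this same pooled statistic.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t_test(group_a, group_b):
    """Student's independent-samples t with pooled variance; returns (t, df)."""
    n_a, n_b = len(group_a), len(group_b)
    df = n_a + n_b - 2
    # statistics.variance uses the n - 1 (sample) denominator.
    pooled_var = ((n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)) / df
    standard_error = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean(group_a) - mean(group_b)) / standard_error, df


# Hypothetical count scores for one general category (not the study's data).
directors_supervisors = [4, 5, 3, 5, 4, 4]
behavior_technicians = [2, 1, 3, 0, 2, 1, 2, 3]
t, df = pooled_t_test(directors_supervisors, behavior_technicians)
print(f"t({df}) = {t:.2f}")
```

A positive t here means the first group (Directors/Supervisors) endorsed more strategies on average, which is the direction the role comparisons report.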

Implementation strategies by setting

For both university- and community-based sites, the most commonly reported general implementation strategies were quality management (21%; 21%), sustainment (19%; 21%), and restructuring strategies (19%; 21%). Financial-based strategies were the least endorsed in community sites, while planning strategies were the least endorsed at the university site. See Table 4 for frequencies in each discrete category. Independent samples t-tests of general implementation strategy count scores indicated a significant mean difference only for financial-based strategies, with the university site endorsing more use than community sites (p = .024; Table 5).

Frequencies of endorsed general and discrete implementation strategies and average rate of perceived satisfaction by setting.

Continuous data were normalized using the fractional rank method. Bolded entries indicate the “general strategy” that aggregates all discrete strategies listed below it.

T-test comparisons of count scores for implementation strategies by setting.

*p < .05, **p < .01.

Satisfaction

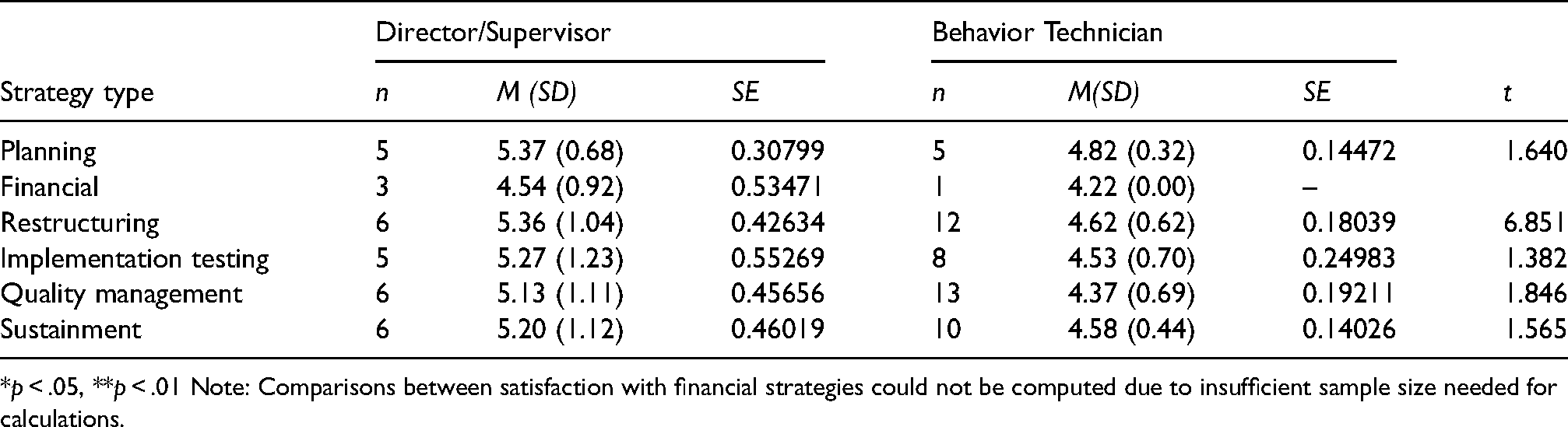

Overall, Directors/Supervisors were very satisfied with endorsed general implementation strategies, while BTs were somewhat satisfied. Both Directors/Supervisors and BTs were somewhat less satisfied with financial-based strategies than with other strategies. Both university and community settings reported high levels of satisfaction (ranging from somewhat satisfied to very satisfied) with endorsed implementation strategies. Independent samples t-tests indicated no significant differences in satisfaction with endorsed implementation strategies between roles or between settings (p's > .05; Tables 6 and 7).

T-test comparison of mean satisfaction with implementation strategies endorsed by role.

*p < .05, **p < .01.

Note: Comparisons between satisfaction with financial strategies could not be computed due to insufficient sample size needed for calculations.

T-test comparison of mean satisfaction with implementation strategies endorsed by setting.

*p < .05, **p < .01.

Note: Comparisons between satisfaction with financial strategies could not be computed due to insufficient sample size needed for calculations.

Spearman's rho indicated negative correlations between role and the total number of general strategies, as well as planning, financial-based, restructuring, quality management, and sustainment strategies (p's < .05), but not implementation testing strategies (p > .05). Employment status was positively correlated with implementation testing strategies (r = .415, p = .035). The total number of general implementation strategies endorsed was positively correlated with perceived satisfaction with financial-based, restructuring, quality management, and sustainment strategies (p's < .05; Table 8). Thus, endorsing more strategies was associated with greater satisfaction, depending on the general category.

Spearman's Rho correlations.

*Correlation is significant at the 0.05 level (two-tailed).

**Correlation is significant at the 0.01 level (two-tailed).

ASD, autism spectrum disorder.
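Spearman’s rho is the Pearson correlation of the ranked values, with tied values given their average rank. The sketch below implements that definition with the standard library. The 0/1 role coding in the example is hypothetical (the text does not report how role was coded), and it illustrates why the sign of a role correlation depends on the coding: with Directors/Supervisors coded 0 and BTs coded 1, higher endorsement among Directors/Supervisors appears as a negative rho.

```python
from math import sqrt


def average_ranks(values):
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        for k in range(start, end + 1):
            ranks[order[k]] = (start + end) / 2 + 1
        start = end + 1
    return ranks


def spearman_rho(x, y):
    """Pearson correlation computed on average ranks (tie-corrected Spearman's rho)."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sqrt(sum((a - mx) ** 2 for a in rx))
    sy = sqrt(sum((b - my) ** 2 for b in ry))
    if sx == 0 or sy == 0:
        raise ValueError("rho is undefined when one variable is constant")
    return cov / (sx * sy)


# Hypothetical data: role (0 = Director/Supervisor, 1 = BT) vs. strategies endorsed.
role = [0, 0, 1, 1, 1]
strategies_endorsed = [6, 5, 2, 3, 1]
print(f"{spearman_rho(role, strategies_endorsed):.3f}")  # -0.866
```

Reversing the coding (BTs = 0) flips the sign without changing the magnitude, so a reported negative correlation with role should be read together with the coding scheme.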

Qualitative phase

Measure

Semi-structured interview

The semi-structured interview protocol was designed to expand on quantitative findings to explain and contextualize participants’ perspectives on and experiences with implementation strategies used within the past year at their sites as well as elicit suggestions for improving implementation in community settings. Examples of prompts included: “What implementation strategies worked well?” and “Would other strategies work better?” To facilitate these discussions, the interviewer provided participants with a copy of the ERIC compilation to ensure common terms/definitions for implementation strategies.

Case study illustration: qualitative methods

Participants

Using purposive sampling, all participants who completed the survey were invited via email to participate in the qualitative phase. Thirteen participants (50%) agreed (nine BTs, four Directors/Supervisors). Data saturation was assessed during data collection using consensus procedures and was reached after 13 interviews: the first and second authors agreed that the interviews were no longer eliciting “new information,” such that any potential codes or themes had already been captured (Saunders et al., 2018).

Procedure

The first author, who had certified expertise in qualitative research, conducted individual, semi-structured interviews via Zoom. Participants took part from confidential locations at their convenience, and verbal consent was obtained. Interviews ranged from 21 min 45 s to 1 h 7 min 11 s (M = 46.87 min, SD = 15.48 min). All participants received a $15 honorarium for their participation.
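Interview lengths are recorded as clock times (mm:ss or h:mm:ss), while the summary statistics are in minutes. The sketch below shows the conversion and the summary computation; the function names are hypothetical, the use of the sample SD is an assumption, and only the two endpoint durations come from the text (the other interview lengths are not reported, so the sketch does not attempt to reproduce M or SD).

```python
from statistics import mean, stdev


def to_minutes(clock):
    """Convert 'mm:ss' or 'h:mm:ss' to decimal minutes."""
    seconds = 0
    for part in clock.split(":"):
        # Each field is base-60 relative to the next one.
        seconds = seconds * 60 + int(part)
    return seconds / 60


def summarize(durations):
    """Return (mean, sample SD) in minutes for a list of clock-time strings."""
    minutes = [to_minutes(d) for d in durations]
    return mean(minutes), stdev(minutes)


print(to_minutes("21:45"), round(to_minutes("1:07:11"), 2))  # 21.75 67.18
```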

Data analysis

Qualitative data were analyzed using inductive coding and content analysis to expand on quantitative findings by providing context for strategy use and satisfaction within each setting (Bernard, 2006; Hsieh & Shannon, 2005; Vaismoradi & Snelgrove, 2019). Interview data were transcribed, de-identified, and verified for accuracy. The first and second authors developed the coding schema by independently reviewing two transcripts for emergent codes. The coding schema included code definitions and exemplar text to guide the coding process. After the coding schema was finalized, two additional coders were trained to conduct the qualitative coding. All four coders were randomly assigned three transcripts to code independently for reliability purposes. Once consensus was reached and coding was considered reliable, two pairs of coders were each randomly assigned 6–7 interviews to code independently, so that all transcripts were double-coded. Coding discrepancies were resolved in consensus meetings. Codes and categories revealed underlying meaning and patterns within the data. Emergent themes were identified by assessing the frequency and salience of codes within and across transcripts. All data were analyzed using MAXQDA software. Additionally, the first and second authors reviewed the consolidated criteria for reporting qualitative research (COREQ) to ensure inclusion of key elements (Tong et al., 2007).
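The double-coding allocation (13 transcripts split between two coder pairs, 6 to 7 each) can be reproduced with a seeded shuffle, which keeps the random assignment auditable. This is a hypothetical sketch: the transcript IDs, pair labels, and seed are illustrative, and the text does not describe the randomization mechanism actually used.

```python
import random


def assign_to_pairs(transcript_ids, pairs=("Pair A", "Pair B"), seed=2019):
    """Shuffle transcripts reproducibly and deal them out to coder pairs in turn.

    With 13 transcripts and two pairs this yields a 7/6 split. Each transcript goes
    to exactly one pair, and both coders in that pair code it (double-coding).
    """
    shuffled = list(transcript_ids)
    random.Random(seed).shuffle(shuffled)  # a local generator leaves global state untouched
    assignment = {pair: [] for pair in pairs}
    for index, transcript in enumerate(shuffled):
        assignment[pairs[index % len(pairs)]].append(transcript)
    return assignment


transcripts = [f"T{n:02d}" for n in range(1, 14)]
for pair, assigned in assign_to_pairs(transcripts).items():
    print(pair, len(assigned), sorted(assigned))
```

Recording the seed alongside the assignment lets another team member regenerate exactly the same allocation.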

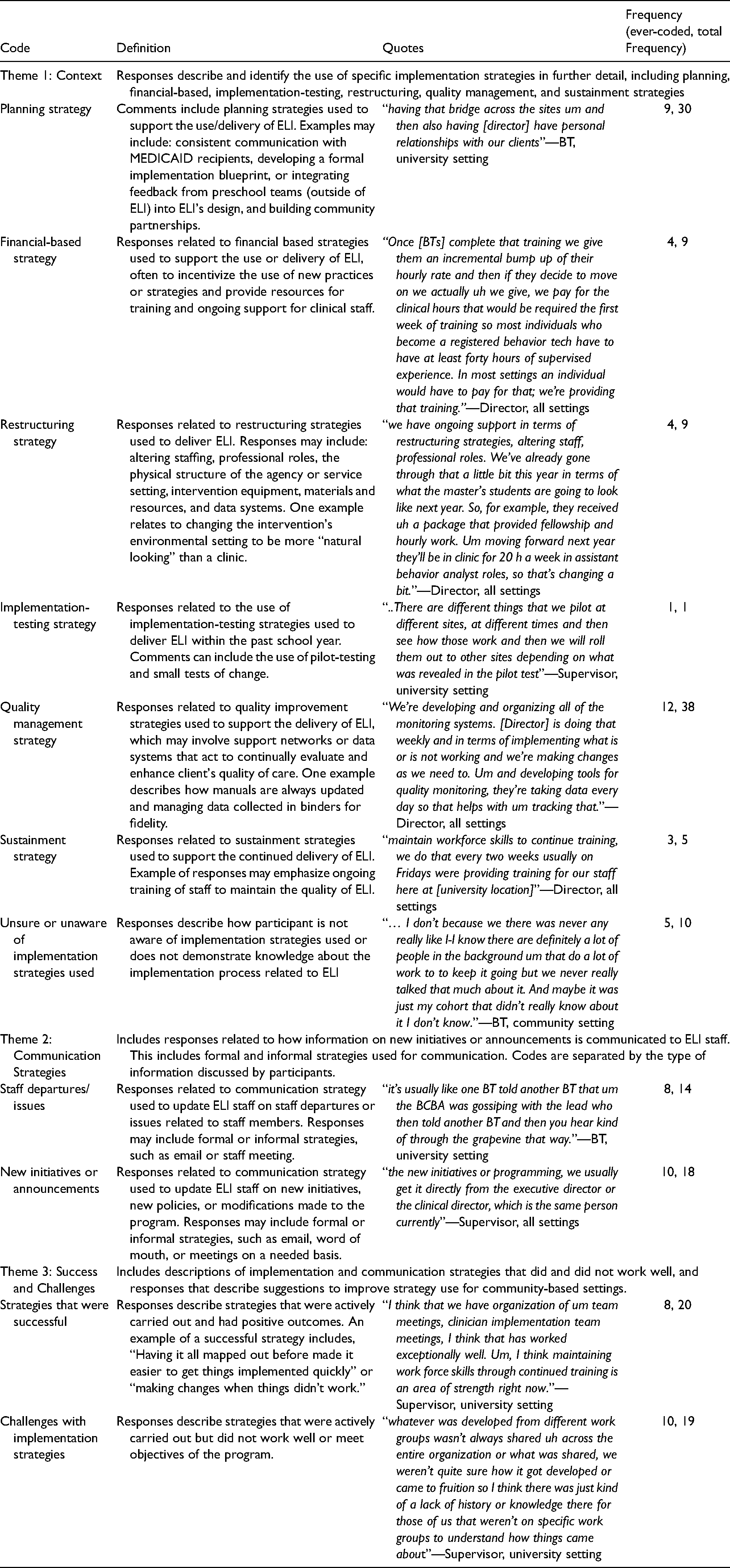

Case study: qualitative findings

Content analysis of semi-structured interviews revealed three emergent themes related to implementation strategy use: (1) Context, (2) Communication Strategies, and (3) Successes and Challenges. The research aims and questions guided the development of themes and categories. Themes, categories, and illustrative quotes are displayed in Table 9.

Qualitative codes, definitions, and frequency.

Context

This theme expanded on and contextualized the use of implementation strategies endorsed on the ISSS. Subcategories were developed based on ISSS general categories: planning, financial, implementation testing, restructuring, quality management, and sustainment strategies. Qualitative data illustrated how strategies were utilized in practice, providing context and insight into variations across roles and sites.

Planning and quality management strategies were most frequently discussed throughout the interviews. Activities described for planning strategies included maintaining relationships among staff through regular “team meetings,” preexisting relationships with implementation sites, and developing and maintaining relationships with clients and families through “family training.” BTs and Directors/Supervisors both discussed the role of preexisting relationships in identifying new implementation sites, and the importance of maintaining consistent communication with families and community partners throughout implementation to ensure buy-in. One Director/Supervisor shared that all sites are “very open to visitors, which is very difficult, but that really helps sell the program…it really is great for our community partnerships.”

Both BTs and Directors/Supervisors discussed the use of various quality management strategies, including collecting and auditing client-level data, receiving feedback from the executive director, accessing new and ongoing staff training, maintaining communication with and eliciting feedback from clients’ families, capturing and sharing local knowledge, and developing and using various monitoring systems. Among BTs, staff training was viewed as the most influential strategy for improving implementation skills across sites: “We would also have training…that were site specific that were more related to individual clients, to talk about what was happening at that specific site and I think that the group trainings were very beneficial because we were learning about the concepts and applying them and practicing them.” Conversely, Directors/Supervisors highlighted efforts to develop new monitoring systems for ongoing feedback: “We're developing and organizing all of the monitoring systems. [Executive director] is doing that weekly and in terms of implementing what is or is not working and we're making changes as we need to. And developing tools for quality monitoring, they're taking data every day so that helps with tracking.”

Financial-based and restructuring strategies were the least discussed throughout the interviews, and financial-based strategies were also among the least endorsed on the ISSS. Nonetheless, the interviews contextualized staff members’ limited experience with these strategies, which ranged from brief discussions about grants to maintaining a budget for professional development and using monetary incentives for BTs. Of note, staff appeared less aware that these strategies were being used in practice. For instance, one BT (university site) stated “I assume there's research funding given to ELI which allows us to do what we do and everything.” In terms of restructuring strategies, staff highlighted daily adaptations made to the physical structure in community sites and the transfer of files and data to an electronic server for better assessments. One BT shared, “Upon arriving at work we'd set up the room in a way that we think is helpful for children to learn and where children are able to have enough space to be by themselves to learn and not get distracted by anything else.”

Sustainment strategies were described in the context of ongoing staff training and professional development, incentivizing strategies to maintain staff buy-in, and continued adaptations to implementation sites. One Director/Supervisor highlighted the frequency and context of strategies used across sites: “Maintain workforce skills to continue training, we do that every two weeks… we try incentivize training for people… one thing that we're doing for all of our staff we received some gift funding and that with some of our professional development funds that we had we providing a [EBP] training for everybody and we're getting…both of the individuals who came up that curriculum are delivering the training for all of our staff…”

Communication strategies

This theme described how information (e.g., new initiatives, staff departures, announcements, feedback) was communicated to program staff through formal and informal communication strategies. Overall, BTs and Directors/Supervisors across sites described various strategies used for feedback, “Rich opportunities and feedback comes in a variety of ways: written, in person, in the moment, after the fact, you know formal trainings, casual conversations, all kinds of ways,” as well as general information being disseminated through different individuals, “It just really depends on the information, who that comes through.”

Formal communication strategies included monthly performance evaluations, live feedback/supervision, weekly meetings with the executive director, and morning and afternoon announcement meetings. Directors/Supervisors (all sites) also stated that specific information was typically communicated to staff by specific individuals. For example, one director shared, “New initiatives, programming changes, those usually come through our executive director,” while “staff issues usually come through…our HR person.”

Informal communication strategies included word of mouth, emails, phone calls or text messages, checking in with Directors/Supervisors, and informal feedback structures. Of note, informal feedback structures were viewed as particularly helpful for improving implementation skills. One BT shared, “Informal feedback was really helpful so…the BCBA would pop in and watch a few things and either give constructive, positive reinforcement…and… some constructive feedback.”

Successes/challenges

This theme described strategies that did and did not work well and provided suggestions to improve strategy use for community settings.

Strategies That Were Successful described successful experiences with implementation strategies within the past year. When asked about which implementation strategies worked well, two Directors/Supervisors (all sites) discussed strategies related to adapting the environment, establishing a positive and nurturing environment for staff, developing tools for quality monitoring, and offering continued training to staff. For example, a Supervisor (university site) stated, “I think maintaining workforce skills through continued training is an area of strength right now.” Six BTs (all sites) discussed staff training, regular communication with the executive director, workgroups, and consistent team meetings as strategies that worked well. One BT (university site) explained that “meetings at the beginning and end of day, and then we do longer meetings on Fridays when we don’t have [clients] and I think having those full team sessions…at the site is incredibly helpful and bouncing ideas off each other” were effective implementation strategies to increase knowledge and skills to deliver the EIBI by learning from one another.

Challenges with Implementation Strategies captured staff experiences with strategies that did not work well or meet intended objectives. Three Directors/Supervisors (all sites) discussed challenges in implementing workgroups, lack of structure for receiving client feedback, not having clearly defined staff roles, and difficulty maintaining communication across various staff levels. For example, a Director (university site) explained, “It's sometimes really hard to get feedback on things like patient and consumer kind of things…it's hard to get any feedback and then what feedback we do get is sometimes hard to decipher.” Related to communication among staff, one Director (all sites) stated, “administration…lost the consistent contact and communication with the clinical group.” Seven BTs (all sites) discussed challenges related to not having enough time or access to meet with supervisors, limited staff training, and communication challenges related to treatment changes or updates made for specific family cases. For instance, one BT (community site) described lack of communication leading to limited preparation: “It wasn't as clear about what was actually going on in the meetings or what the family's really interested in so we would know…about the meetings happening but then all the sudden the families would just come into the room and we weren't as prepared.”

Staff also identified opportunities for improvement and offered suggestions for improving strategy use in community settings in the future. Areas for improvement included (1) improving feedback/supervision and (2) improving communication among program staff, school staff, and parents. Eight BTs and three Directors/Supervisors (all sites) suggested providing feedback for those in leadership positions, increasing access to supervisors, and increasing the consistency and amount of feedback and supervision opportunities. All BTs indicated that feedback and supervision “definitely could happen more.” Five BTs and three Directors/Supervisors (all sites) suggested more staff training focused on the communication skills needed to work with community members outside the program staff, as well as clearer guidelines for communication shared via email and in meetings (e.g., content and/or frequency). Lastly, one BT (community site) emphasized the importance of communicating more about the overall purpose of the program: “…more meetings where they are laying out what the purpose is or what purposes some of the tasks are for the children.”

Discussion

The case study demonstrated the use of sequential mixed methods (QUAN→QUAL) to highlight frequency of and experiences with implementation strategies utilized in one university- and two community-based settings. A quantitative survey of implementation strategy use (ISSS) provided information on the range of strategies reportedly used to implement ELI, along with perceived satisfaction with endorsed strategies within the preceding year. Qualitative interviews then provided context underlying implementation strategy use, expanding on how these strategies were used, what did or did not work well, and suggestions to improve future implementation strategy use in community settings.

Notably, findings illustrated that all three sites delivering the ELI program used multiple implementation strategies rather than a single discrete strategy. For instance, regardless of setting, sites most often endorsed three general categories of strategies: quality management, sustainment, and restructuring strategies. Further, many discrete strategies were reportedly used within each general category, and these varied by setting. While some studies have shown advantages of multifaceted strategies over a single discrete strategy for more effective implementation, the evidence is mixed (Powell et al., 2019; Squires et al., 2014). These findings therefore emphasize the need to consider context, as well as the barriers expected in a given setting, when determining the appropriate number of strategies. Other studies have suggested that implementation strategies may be reported as endorsed but not implemented systematically, particularly in community settings (Drahota et al., 2021). Future studies are encouraged to explore the fit of implementation strategies to agency characteristics, as well as the fidelity of implementation strategy use, to broaden our understanding of whether the effectiveness of strategies used in community sites depends on the number of strategies used simultaneously, their fit, and/or the fidelity of their use (Powell et al., 2017; Slaughter et al., 2015).

Interestingly, financial strategies were the least endorsed across settings, with significant mean differences between settings. Although these strategies were reportedly used less frequently than other implementation strategies, the university site tended to report more use of financial strategies than the community sites. This is worth noting given prior evidence that financial strategies may facilitate EBP implementation, particularly in behavioral health care systems providing disability services (Dopp et al., 2020).

Implementation strategies and role

Findings highlighted how role is related to the endorsement of implementation strategy use. In the case study, Directors/Supervisors consistently endorsed significantly more implementation strategies than BTs, regardless of category. Role also demonstrated a significant negative correlation with the number of general, planning, financial, restructuring, quality, and sustainment strategies endorsed. Overall, these findings suggest an association between role and endorsed implementation strategy use that runs in the same direction across every category. Prior literature on staff roles consistently highlights the direct influence of supervisors on implementation climate (Bunger et al., 2019; Guerrero et al., 2020; Sterba et al., 2020). Given this, it is possible that Directors/Supervisors influenced implementation climate and BTs’ perspectives on implementation strategy use, leading to distinct patterns of strategy reporting. Further, significant relationships were found between the total number of general implementation strategies endorsed and perceived satisfaction with their use. That is, staff who endorsed a higher number of general implementation strategies also tended to report higher satisfaction. These relationships are consistent with the literature, which demonstrates that staff characteristics (role, perceptions, and/or experiences with prior implementation practices) and implementation processes are related and can potentially affect implementation quality and processes (Bunger et al., 2019; Guerrero et al., 2020).

Consequently, it is important to understand the extent to which community stakeholders are actively involved in, or aware of, the implementation strategies used in their setting (Slaughter et al., 2015). For instance, qualitative findings indicated that some staff were unaware of the strategies being used. The modest endorsement of implementation strategies by BTs compared with Directors/Supervisors in the survey data also reflects this uncertainty. These findings suggest that staff across levels may lack awareness of the implementation strategies being used to integrate ELI into their organizations. They also warrant discussion about whether implementation data should be collected from all stakeholders or only from the most appropriate stakeholder, particularly when supervisors are the primary decision-makers. However, limiting the scope to the most appropriate stakeholder (e.g., those in higher managerial roles) may limit our understanding of team dynamics in implementation strategy use, as well as of perceived satisfaction with that use in practice. Moreover, the dynamics of organizational influence are driven by social exchange among staff in various roles and with various responsibilities (Gottfredson & Aguinis, 2017), underscoring the value of capturing implementation strategy use among the different staff involved with the EBP. Thus, increasing staff awareness of the implementation strategies used to facilitate EBP implementation and sustainment may be an important component to address in future implementation efforts. Work on other tools for tracking implementation strategy use has also called for more inclusive stakeholder input, to align preferences with improved outcomes, and for engaging all stakeholder groups earlier in the process (Walsh-Bailey et al., 2021).

Communication strategies

Qualitative findings also highlighted important communication strategies used throughout the implementation process. Findings indicated that communication about new initiatives within the organization, staff changes, and other announcements was disseminated to staff in both formal and informal ways. Previous research illustrates the importance and value of timely and effective communication (e.g., providing all relevant staff with information regarding plans or the impact of change) in fostering favorable attitudes toward EBPs (Drahota et al., 2021; Grau, 1994). Without communication strategies, staff may resist organizational changes such as the implementation of new EBPs. Given the importance of communication strategies in EBP implementation (Drahota et al., 2021; Lanham et al., 2009; Manojlovich et al., 2015), future efforts in community contexts should consider using more systematic and formal communication strategies, as these may facilitate staff buy-in and support.

Taken together, the methodological approach applied in this case study illustrates one method for tracking implementation strategy use and eliciting stakeholder perspectives on implementation strategies within both community- and university-based settings providing services to youth on the autism spectrum. These findings can inform next steps to improve the tracking and reporting of implementation strategies for EIBI delivered in community autism services. For example, findings can be used to generate a list of strategies actively used in practice, to assess variations across roles and sites, and to identify areas needing support. Site directors may also use the list of strategies to create a quality improvement checklist to determine whether the number of implementation strategies in use is likely to be effective and whether strategies are being used with comparable quality across sites. Additionally, findings highlight how strategies, as currently named, are often misunderstood, particularly by community agencies providing ASD treatment. Although we attempted to clarify strategies by providing standardized definitions, community providers remained uncertain about how implementation strategies were conceptualized. This points future research and practice toward tailoring implementation strategies to fit specific community settings. Incorporating community-based participatory principles or human-centered design, so that implementation strategies are co-created with providers or other participants, may be most beneficial for improving the implementation of ASD treatments delivered in community settings.

Limitations

First, the study included a relatively small sample, which may have produced inflated statistical findings, and findings may therefore not generalize to other ASD-CBOs or broader populations. Future studies with larger samples (above 30) are needed to confirm whether the statistical results can be replicated with different parameters. However, considerable effort was taken to normalize the data and limit analytic assumptions. Second, the study was exploratory and attempted to capture naturally occurring processes of reported implementation strategy use; therefore, results cannot establish causality. Third, during interviews, community providers interpreted the standardized definitions from the ERIC compilation of implementation strategies differently than expected (e.g., many BTs discussed buy-in strategies within the Planning category but were uncertain whether this was appropriate for the discussion). Together with recall bias related to the timing of the surveys, this may have limited participants’ ability to report on strategy use. Importantly, this study did not use all strategies in the updated glossary of the ERIC compilation or fully expand on the details specified in Proctor's guidelines for reporting. While these findings may direct next steps to enhance the clarity and relevance of definitions in the context of autism services (Cook et al., 2019; Perry et al., 2019; Proctor et al., 2013), future studies should use the updated compilation to explore implementation strategy use and satisfaction in this context more accurately, and should follow the reporting guidelines to increase the potential for replication in both research and practice.

Contributions

This paper contributes to one of the five priorities for enhancing the impact of implementation strategies by describing a practical approach to “improve tracking and reporting” of implementation strategy use within university- and community-based agencies serving young children on the autism spectrum. Tying implementation strategies to the ERIC compilation helped synthesize and communicate information about reported implementation strategy use in a shared language, a practice recommended to enhance the application of implementation efforts across settings (Moullin et al., 2020). The study's approach emphasizes the importance of understanding community context in developing successful strategies for EIBI implementation and scale-up (Brookman-Frazee & Stahmer, 2018; Nahmias et al., 2019). By using mixed methods, the study yielded a feasible, useful, and quantifiable measure of reported strategy use and then contextualized it with qualitative details from ASD providers, illuminating the what and how of implementation strategy use (Palinkas et al., 2011). Directors in agencies serving children on the autism spectrum can adopt this practice through ongoing discussions about the strategies being used (e.g., monthly or bimonthly meetings to discuss successful use of strategies, or ongoing process evaluations that integrate community providers’ voices). As reflected in our approach, understanding community providers’ preferences by eliciting their experiences and input directly can help develop strategies that fit readily into implementation-as-usual processes, with the ultimate goal of facilitating effective implementation in community settings (Leeman et al., 2017; Powell et al., 2015).

Footnotes

Funding

This work was supported by the Michigan Department of Health and Human Services under Grant M1299M.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.