Abstract

Objective

To synthesize the self-reported prevalence of scientific misconduct in health-related research through a systematic review and meta-analysis, including a broader range of unethical practices than previous investigations.

Methods

A systematic search was conducted across six electronic databases and three sources of gray literature. Observational studies reporting the prevalence of scientific misconduct among researchers, students, or health professionals were included. The methodological quality was assessed using the Joanna Briggs Institute checklist, and a random-effects meta-analysis was performed using the Freeman-Tukey double arcsine transformation.

Results

Twenty-nine studies were included, totaling 17,919 analyzed responses. The most prevalent form of misconduct was honorary authorship (32%; 95% CI: 21%–44%), followed by plagiarism (28%; 95% CI: 12%–47%), data falsification (12%; 95% CI: 6%–19%), data fabrication (11%; 95% CI: 6%–19%), and methodological manipulation (4%; 95% CI: 2%–7%). High heterogeneity was observed for most outcomes (I² ≥ 95%), except for methodological manipulation (I² = 62%). No evidence of publication bias was found (p > 0.05).

Conclusion

Scientific misconduct remains a widespread issue in health research. The findings underscore the need for institutional policies that promote research integrity, transparent authorship criteria, and ethical training. Despite the potential for underreporting inherent to self-reported data, the results provide an important overview of the extent and types of misconduct present in current scientific practice.

Introduction

Scientific misconduct refers to the manipulation of collected and analyzed data, which may compromise the results of the research, reducing or nullifying the veracity of the information presented. 1 This practice compromises the reliability of the science and researchers involved, as well as posing risks to the population, especially in areas such as health, in which decisions are largely evidence-based. 2

Data manipulation can occur intentionally or accidentally, the latter resulting from methodological failures or errors in data analysis. Among the forms of scientific misconduct are the falsification of data, the manipulation of methods and results, and plagiarism. Plagiarism comprises the unreferenced use of parts or all of third-party studies, while self-plagiarism refers to the reuse of one's own previously published studies without proper citation. 3

Another factor that may compromise data integrity is the conflict of interest, which occurs when personal, professional, or institutional interests influence the researcher’s judgment. In such cases, there may be intentional distortion of the results in order to meet certain expectations or objectives. Given the relevance of the theme, different initiatives have been proposed by scientific and editorial institutions to curb these practices and preserve the integrity of research. 4

A systematic review with meta-analysis investigated the prevalence of scientific misconduct, both self-reported and non-self-reported, in the biomedical area. The analysis focused on practices such as falsification, data fabrication and plagiarism, revealing high rates, with a significantly higher prevalence among non-self-reported cases. 5 However, the search strategy adopted was not comprehensive, being limited to a single indexed database, which may have compromised the representativeness of the results. In addition, scientific misconduct remains a highly relevant issue, as it undermines the reliability of research, weakens public trust in science, and can negatively influence clinical decisions and public policies. Although widely discussed, its true extent is difficult to determine because many cases are not detected or formally reported. Growing academic pressure to publish and evaluation systems focused on productivity rather than research integrity may contribute to the persistence of such practices. Therefore, expanding the current evidence with broader and more rigorous approaches is essential to better understand the phenomenon and support actions that promote research integrity.6,7

Therefore, this systematic review aimed to synthesize the evidence on the prevalence of scientific misconduct in health research and to expand the scope of analysis by including a broader range of inadequate practices in scientific work. In addition, it sought to answer the following research question: What is the self-reported prevalence of scientific misconduct among researchers, students and health professionals involved in health-related research?

Methods

The protocol of this systematic review was prepared according to the guidelines of the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA). 8 The protocol was registered in the International Prospective Register of Systematic Reviews (PROSPERO) database under number CRD42021245251.

Eligibility criteria

The eligibility criteria were defined according to the PEOS framework. We included observational cross-sectional studies that investigated the prevalence of scientific misconduct among researchers, professionals, and undergraduate students in the health field, regardless of their specific training. Eligible studies had to involve participants actively engaged in health research and report the self-reported prevalence or frequency of practices such as data fabrication, data falsification, plagiarism, and honorary authorship, whether committed or witnessed by the respondents. No restrictions were applied regarding gender, ethnicity, or age group. Studies were excluded if they did not involve health researchers, did not provide prevalence data, focused exclusively on knowledge, perceptions, or understanding of scientific misconduct without reporting the prevalence of committed or witnessed practices, or belonged to other publication types, such as reviews, letters, books, conference abstracts, case reports, case series, opinion articles, or technical reports.

Sources of information and research strategy

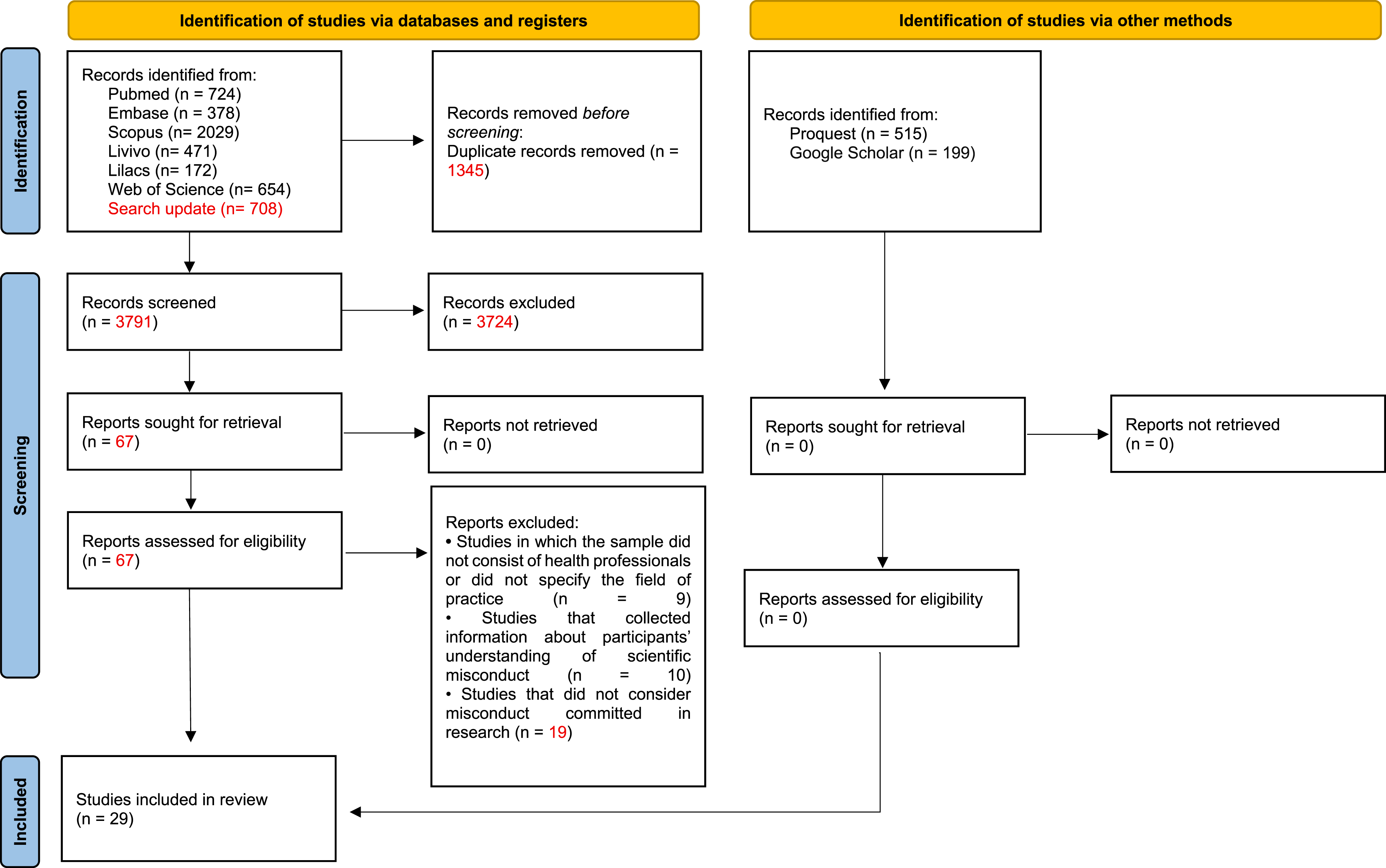

For the identification of the studies, structured searches were performed using controlled descriptors and free terms in the following databases: Latin American and Caribbean Literature in Health Sciences (LILACS), PubMed/MEDLINE, Scopus, LIVIVO, Embase and Web of Science. Gray literature was explored through sources such as Google Scholar, OpenGrey and ProQuest Dissertation & Thesis. Additionally, manual searches were performed in the reference lists of the included studies (Figure 1), with the help of the Citation Chaser software. The EndNote X20 reference manager (Thomson Reuters, Philadelphia, PA) was used to import references and eliminate duplicates. Database searches were conducted in December 2023 and updated in March 2025. The complete search strategies for all databases are detailed in Appendix 1. Flowchart of literature search and selection criteria. *Consider, if feasible to do so, reporting the number of records identified from each database or register searched (rather than the total number across all databases/registers). **If automation tools were used, indicate how many records were excluded by a human and how many were excluded by automation tools. Source: Page MJ, et al. BMJ 2021;372:n71. doi: 10.1136/bmj.n71.

In addition, the term ‘epidemiology’ was included to increase search sensitivity and identify observational surveys reporting prevalence or frequency of scientific misconduct, even when such studies were not explicitly labeled as surveys or questionnaires.

Selection process

The selection of the studies occurred in two phases. In phase 1, two independent reviewers blindly examined the titles and abstracts of all references identified in the electronic databases, based on the previously defined inclusion criteria. Studies that did not meet the criteria were excluded. In phase 2, the same reviewers evaluated, also independently and blindly, the full texts of potentially eligible articles. Both reviewers analyzed the reference lists of the included studies manually. To enable independent screening in both stages, the Rayyan Intelligent Systematic Review (https://www.rayyan.ai) website was used. Although the Rayyan platform includes optional artificial intelligence tools to suggest study inclusion or exclusion, these functionalities were not used. The software was employed exclusively to blind decisions between reviewers and to organize the screening process; all eligibility judgments were performed manually by the reviewers.

In all evaluations, reviewers remained blind to each other’s decisions, and any disagreements were resolved by consensus with the participation of a third reviewer. To ensure adequate calibration among the evaluators, the Kappa coefficient of agreement was previously calculated, and the systematic reading was started only after obtaining a value over 0.7, indicating satisfactory agreement.
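The calibration step can be illustrated with a minimal sketch of the agreement calculation. It assumes the two-rater Cohen's kappa (the text says only "Kappa coefficient") and uses invented include/exclude decisions; the function name and the data are hypothetical and are not taken from the review.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labelled the same records."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of records")
    n = len(rater_a)
    # Observed agreement: share of records with identical decisions.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    labels = freq_a.keys() | freq_b.keys()
    expected = sum(freq_a[c] * freq_b[c] for c in labels) / n**2
    if expected == 1.0:
        return 1.0  # both raters used one and the same label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical calibration round with ten records (not data from the review).
reviewer_1 = ["include", "exclude", "exclude", "include", "exclude",
              "exclude", "include", "exclude", "exclude", "exclude"]
reviewer_2 = ["include", "exclude", "exclude", "include", "exclude",
              "include", "include", "exclude", "exclude", "exclude"]

kappa = cohen_kappa(reviewer_1, reviewer_2)
ready_to_screen = kappa > 0.7  # threshold stated in the Methods
```

In this invented example the reviewers agree on nine of ten records, so the 0.7 threshold is met and screening could begin.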

Data collection process

Two reviewers independently extracted data from the manuscripts using a pre-defined data extraction form. Any discrepancies were resolved by discussion or, where necessary, by consulting a third reviewer. The following information was collected: authors, year of publication, country of origin, title, abstract, typical characteristics of the sample (including size and origin), qualifications of the interviewees, most prevalent behaviors observed, and the criteria used for their evaluation.

Data items

Data concerning the frequency (absolute or relative) of scientific misconduct practices committed or witnessed were extracted from the included studies, as well as the corresponding sample size of each study and of each outcome evaluated, according to the availability of the information in the respective articles.

Assessment of the methodological quality

The methodological quality of the included studies was evaluated by two independent reviewers using the Joanna Briggs Institute Critical Appraisal Checklist for cross-sectional studies. 9 The methodological quality was classified into four categories: low, moderate, high and not applicable. The classification was determined according to the proportion of “yes” responses in the evaluated domains: high quality when ≥70% of responses were “yes,” moderate between 50% and 69%, and low when ≤49%. After the evaluation, the results were compared between the reviewers, and potential differences were resolved by consensus, with the participation of a third reviewer, when necessary. The graphical visualizations were generated with the help of the Robvis website (https://mcguinlu.shinyapps.io/robvis/).
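The classification rule above can be written as a short function. This is only a sketch: the thresholds come from the text, while the handling of "not applicable" items (dropped from the denominator) and of fractional percentages between the stated bands (for example 69.5%, treated here as moderate) are assumptions, and the function name is hypothetical.

```python
def classify_quality(answers):
    """Classify a study from its checklist answers.

    answers: list of strings such as "yes", "no", "unclear", "not applicable".
    """
    # Assumption: items marked "not applicable" do not count toward the denominator.
    applicable = [a for a in answers if a != "not applicable"]
    if not applicable:
        return "not applicable"
    pct_yes = 100 * sum(a == "yes" for a in applicable) / len(applicable)
    if pct_yes >= 70:
        return "high"
    if pct_yes >= 50:
        return "moderate"
    return "low"


# Hypothetical appraisal of one study on an eight-item checklist.
example = ["yes", "yes", "yes", "yes", "yes", "yes", "no", "unclear"]
rating = classify_quality(example)
```

With six "yes" answers out of eight items (75%), the hypothetical study falls in the high-quality band.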

Effect measures and synthesis method

A random-effects meta-analysis was conducted to estimate the proportion of scientific misconduct practices in health research, weighted by the inverse of the variance of each included study. The choice of the random-effects model was based on the expectation of methodological and population variability among studies, implying that the true effect may differ across them. This rationale is consistent with the recommendations of the JBI Manual for Evidence Synthesis, 10 which advocates for the use of this model when clinical, methodological or statistical heterogeneity is present and when more generalizable estimates are desired. Between-study variance (τ²) was estimated using the DerSimonian and Laird method, and heterogeneity was assessed using Higgins’s inconsistency index (I²), which quantifies the proportion of variability across studies attributable to real differences rather than chance. To ensure the approximation of normality in the distribution of proportions, particularly in studies with values close to 0 or 1, the Freeman–Tukey double arcsine transformation was applied, as recommended for meta-analyses of proportions with substantial variability. The 95% confidence intervals were calculated using the exact Clopper–Pearson method, which provides greater precision for binary estimates, especially in small samples or when events are rare.
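The synthesis steps described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the authors' R analysis: the function names and the example counts are hypothetical, the pooled estimate is back-transformed with Miller's inverse using the harmonic mean sample size (one common choice), and the pooled interval is a normal-approximation interval on the transformed scale rather than a Clopper–Pearson interval.

```python
import math


def freeman_tukey(events, n):
    """Double arcsine transform of events/n and its approximate variance."""
    t = (math.asin(math.sqrt(events / (n + 1)))
         + math.asin(math.sqrt((events + 1) / (n + 1))))
    return t, 1.0 / (n + 0.5)


def inverse_freeman_tukey(t, n):
    """Miller's back-transformation to a proportion (n: harmonic mean size)."""
    # Values outside the range the transform can produce map to 0 or 1.
    if t <= math.asin(math.sqrt(1 / (n + 1))):
        return 0.0
    if t >= math.asin(math.sqrt(n / (n + 1))) + math.pi / 2:
        return 1.0
    s = math.sin(t)
    inner = 1.0 - (s + (s - 1.0 / s) / n) ** 2
    sign = math.copysign(1.0, math.cos(t))
    return 0.5 * (1.0 - sign * math.sqrt(max(inner, 0.0)))


def pool_proportions(events, sizes):
    """DerSimonian-Laird random-effects pooling on the transformed scale."""
    k = len(events)
    pairs = [freeman_tukey(x, n) for x, n in zip(events, sizes)]
    y = [t for t, _ in pairs]
    v = [var for _, var in pairs]
    w = [1.0 / vi for vi in v]
    fixed = sum(wi * yi for wi, yi in zip(w, y)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, y))  # Cochran's Q
    df = k - 1
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    w_re = [1.0 / (vi + tau2) for vi in v]
    pooled = sum(wi * yi for wi, yi in zip(w_re, y)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    n_harm = k / sum(1.0 / n for n in sizes)
    return {
        "prevalence": inverse_freeman_tukey(pooled, n_harm),
        "ci_low": inverse_freeman_tukey(pooled - 1.96 * se, n_harm),
        "ci_high": inverse_freeman_tukey(pooled + 1.96 * se, n_harm),
        "tau2": tau2,
        "i2": i2,
    }


# Hypothetical survey counts (respondents reporting a practice, sample size).
result = pool_proportions(events=[10, 40, 70], sizes=[100, 100, 100])
```

Because the three invented studies report very different proportions, the sketch returns a large τ² and a high I², the pattern the review describes for most outcomes.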

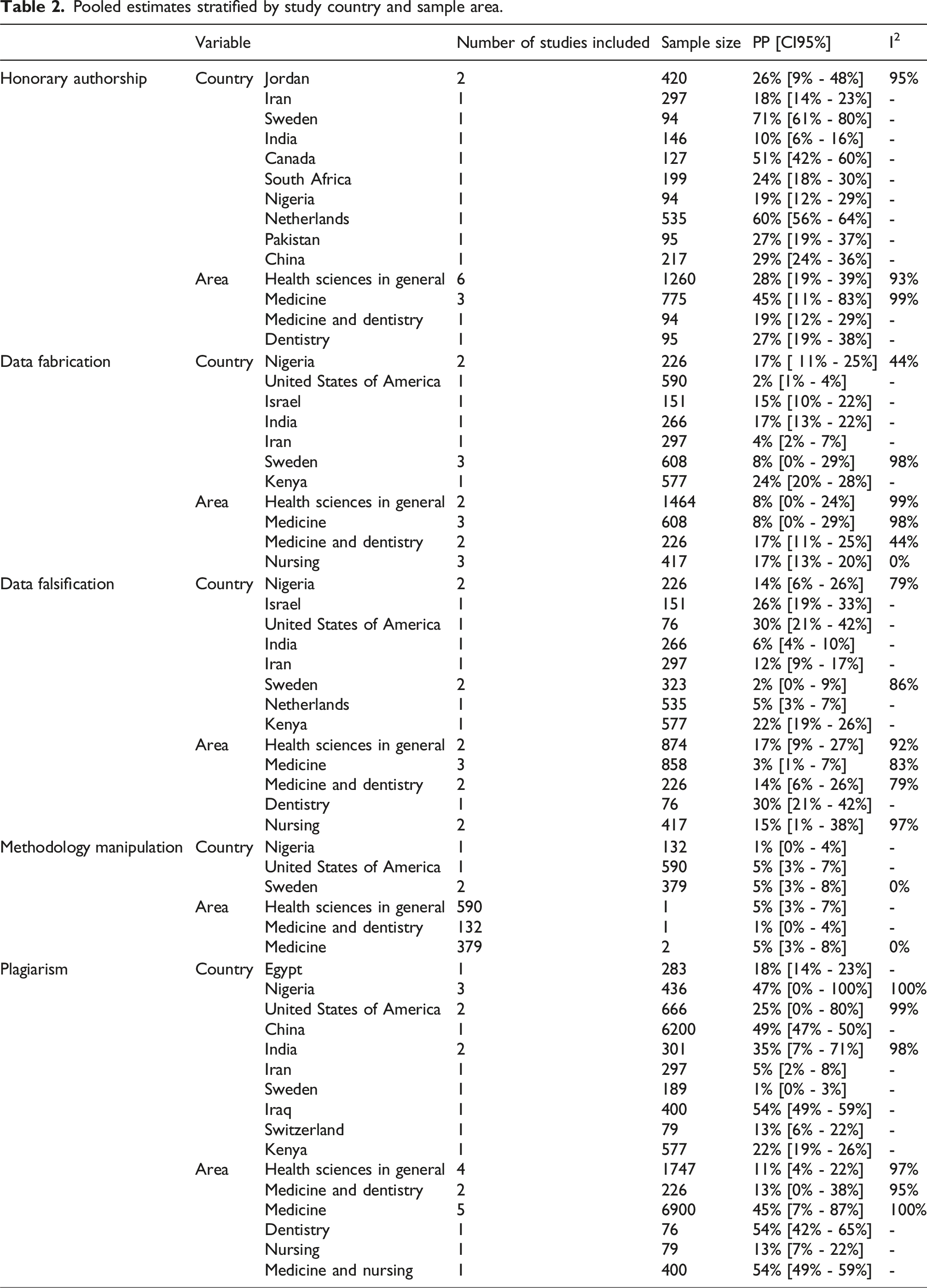

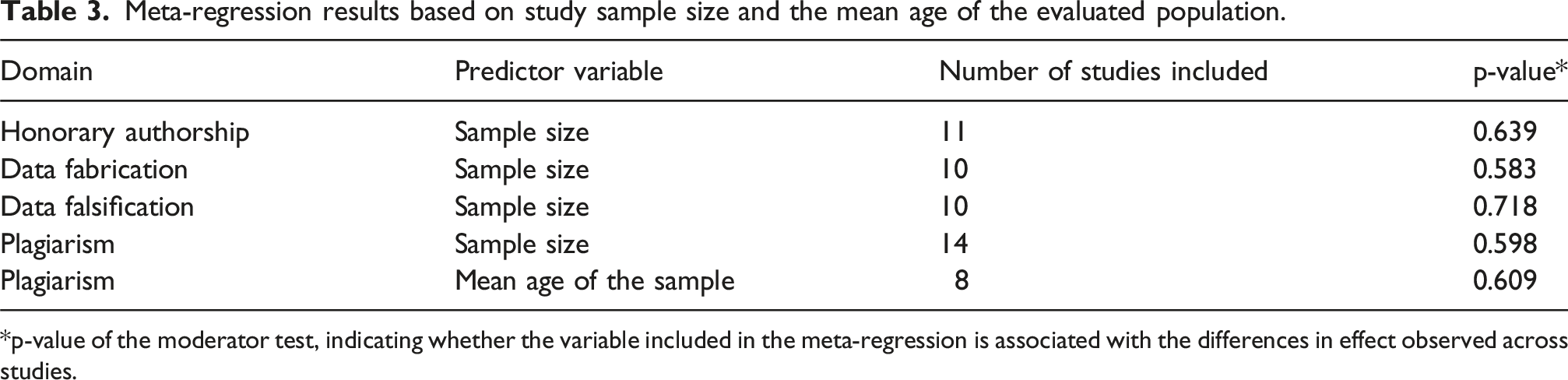

Potential sources of heterogeneity were explored through subgroup analyses and meta-regression using random-effects models to assess whether variability in the pooled prevalence estimates could be explained by the available study-level covariates. Subgroup analyses were performed when the relevant categorical variables were available across studies, while meta-regression was applied to continuous covariates when sufficient data were reported. A significance level of 5% was adopted. All statistical analyses were performed using RStudio software (Boston, USA).

Reporting bias

Publication bias was evaluated through visual inspection of the funnel plot and the application of Egger's test, with p values below 0.05 taken to indicate asymmetry.
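For readers unfamiliar with Egger's test, the regression behind it can be sketched as follows. This is a hedged illustration: it assumes the classical formulation (standardized effect regressed on precision, with the intercept tested against zero), the source does not state the scale on which the test was run, and the function name and data are hypothetical. The sketch stops at the t statistic; the p value would come from a t distribution with k − 2 degrees of freedom.

```python
import math


def egger_regression(effects, std_errors):
    """Ordinary least squares of effect/SE on 1/SE.

    Returns the intercept (the asymmetry term), its standard error,
    the t statistic and the degrees of freedom.
    """
    k = len(effects)
    if k < 3 or k != len(std_errors):
        raise ValueError("need at least three studies with matching inputs")
    x = [1.0 / se for se in std_errors]                 # precision
    y = [e / se for e, se in zip(effects, std_errors)]  # standardized effect
    mean_x, mean_y = sum(x) / k, sum(y) / k
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    df = k - 2
    residual_ss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    se_intercept = math.sqrt(residual_ss / df * (1.0 / k + mean_x ** 2 / sxx))
    # A perfect fit gives a zero standard error; report no t statistic then.
    t_stat = intercept / se_intercept if se_intercept > 0 else float("nan")
    return {"intercept": intercept, "se": se_intercept, "t": t_stat, "df": df}


# Hypothetical transformed effects and standard errors for five studies.
fit = egger_regression(
    effects=[0.95, 1.10, 0.88, 1.02, 1.20],
    std_errors=[0.05, 0.10, 0.07, 0.12, 0.15],
)
```

An intercept far from zero relative to its standard error would point to funnel plot asymmetry; the review reports p > 0.05, that is, no such signal.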

Results

Selection of studies

Five thousand one hundred and thirty-six articles were initially identified in the databases. After the removal of duplicates, 3791 references were left for the screening of titles and abstracts. Of these, 67 were selected for full reading (phase 2), resulting in the exclusion of 38 studies, as detailed in Appendix 2, and leaving 29 studies for inclusion. None of the studies from the gray literature and the list of references met the inclusion criteria (Figure 1).

Characteristics of the studies

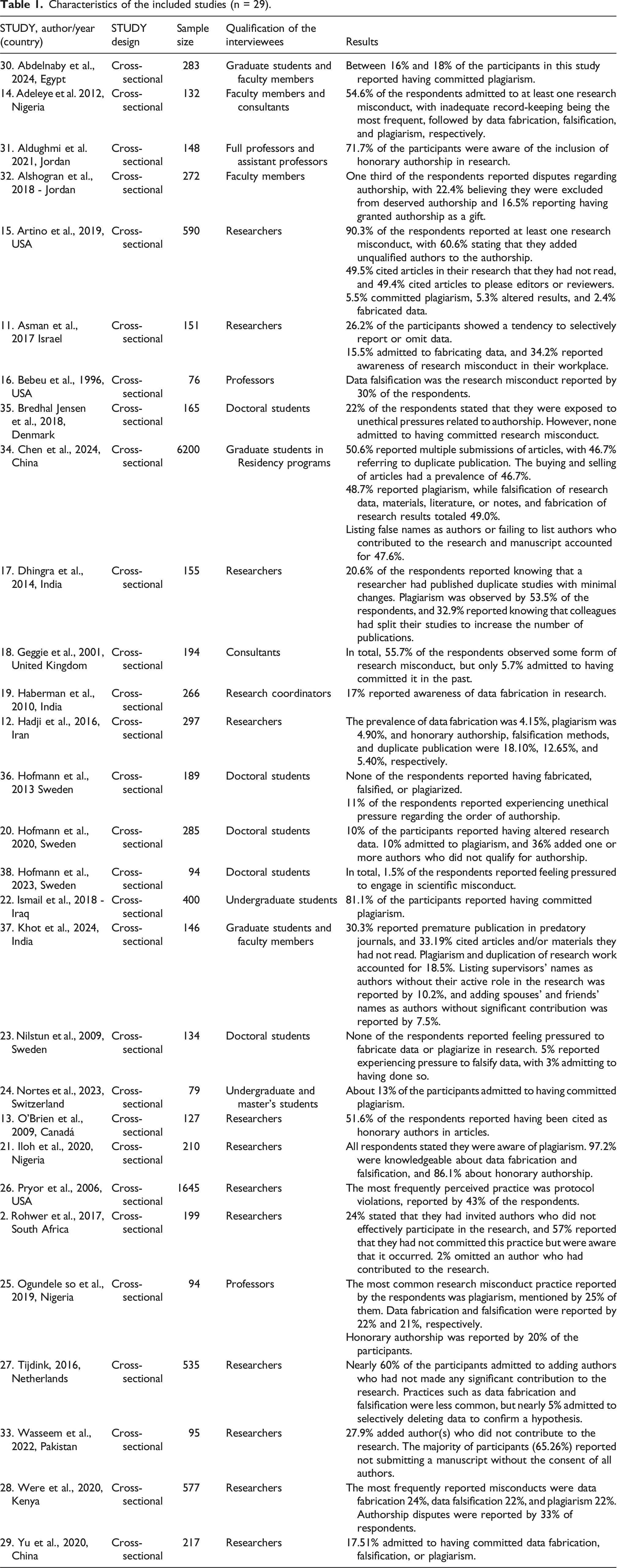

The included studies were published in English and conducted in several countries, including South Africa, Germany, Canada, China, Denmark, Egypt, the United States, the Netherlands, India, Iran, Iraq, Israel, Jordan, Nigeria, Pakistan, Kenya, the United Kingdom, Serbia, Sweden and Switzerland. The outcomes analyzed referred to different forms of scientific dishonesty, such as data fabrication and falsification, methodological manipulation, plagiarism and honorary authorship.

The data collection was performed anonymously, through questionnaires applied to health professionals, students and researchers. In the studies that reported the gender of the participants, there was a predominance of females. The age group of the respondents varied between 19 and 77 years.

The included studies showed substantial variability in terms of professional field (students, researchers, and health professionals), institutional context, and survey focus, as reported by the original studies.

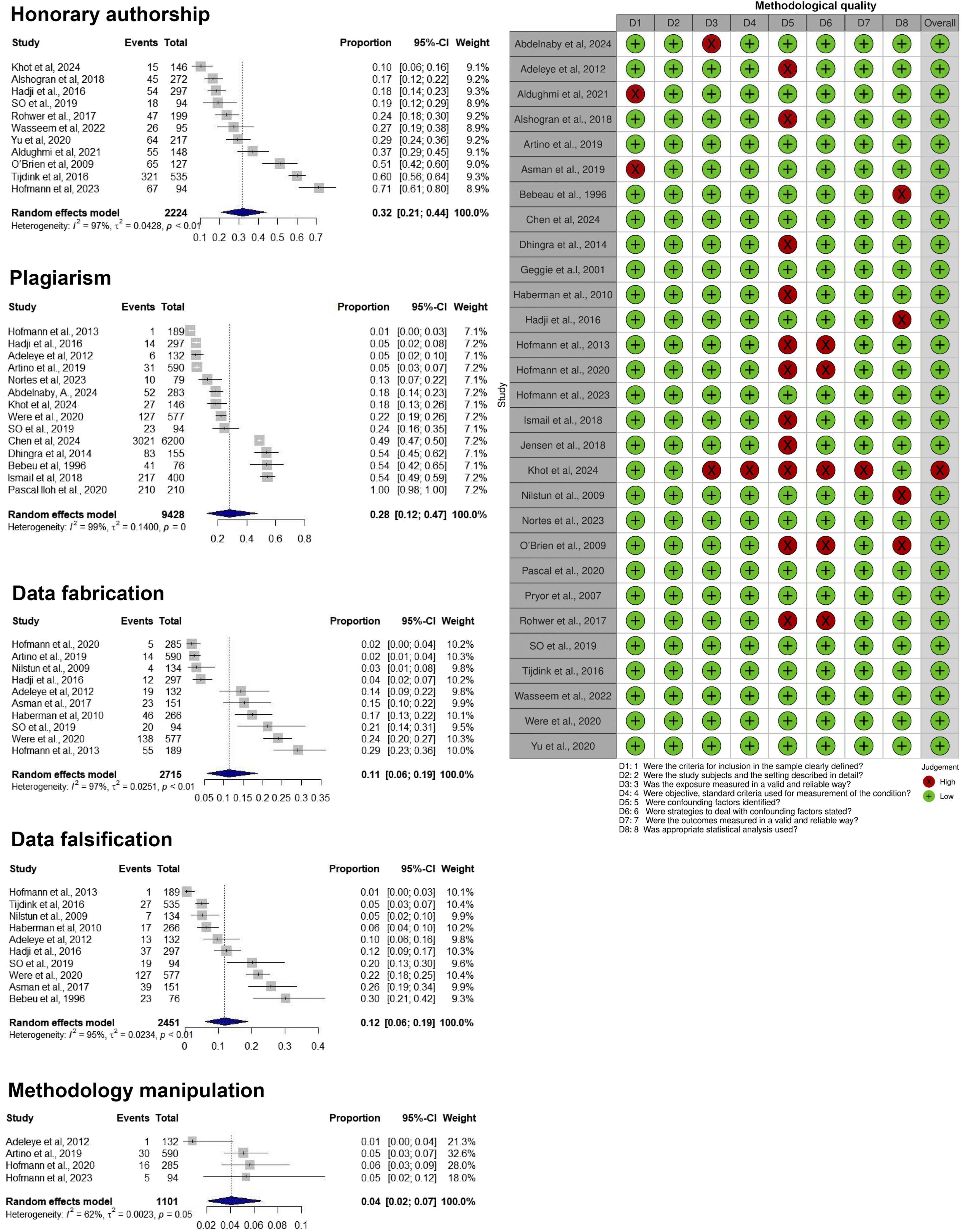

Methodological quality of the studies

Only one study was classified as having low methodological quality, while another was rated as moderate; the remaining studies were considered to have high methodological quality (Figure 2). The main methodological weaknesses identified included: absence of a detailed description of the statistical techniques used; lack of specific information on the validity and reliability of exposure variable measures; unclear inclusion criteria; lack of description of limitations of the studies; lack of identification of potential confounding factors; and lack of strategies for their control.

Figure 2. Forest plot of the prevalence of research misconduct and the methodological quality of each included study.

Individual results of the studies

Most participants in the included studies had higher-level degrees, except for a study that mentioned the participation of individuals without an academic background. 11 Two articles did not provide information about the level of education of respondents.12,13 Among the most frequently reported behaviors in the studies were data fabrication, falsification and plagiarism. In total, 19 articles described at least one of these practices of scientific misconduct.11,12,14–30 Plagiarism was pointed out as the most common infraction in seven studies, with notably high percentages compared to the other behaviors evaluated.12,16,17,20,21,24,25 In five other studies, data fabrication was identified as the most prevalent practice,11,14,19,23,28 often associated with data falsification.12,16,21,23,25,28

Questions related to authorship were also highlighted, as the main focus of nine studies.2,12,13,15,20,27,28,31–33 Among the most frequently reported situations were the inclusion of unqualified authors15,21 and the omission of collaborators who effectively contributed to the research.2,16 On the other hand, three articles mentioned the presence of authors who did not participate significantly in the development of the study or in the writing of the manuscript.27,33,34 In addition, disputes and disagreements about authorship were reported in different contexts,2,26,28 pointed out as one of the main sources of unethical pressures in the academic environment.23,35,36 One study indicated that the order of authorship was defined by the first author based on the level of contribution of each participant. 31

Honorary authorship — characterized by attribution of authorship to individuals who did not substantially contribute to the study — was described as a recurrent practice in several articles.2,12,13,31–34,37

Only two studies reported that the participants denied having committed scientific misconduct or knowing colleagues who had done so.35,36 However, even in these cases, the interviewees reported having been subjected to unethical pressures related to the order of authorship, to the methodological design or to the presentation of the results, without clarifying what attitudes they had taken in these situations. A third study also addressed similar reports. 38

Summary of the results

Twenty-three studies were included in the present quantitative synthesis. A total of 17,919 responses were analyzed, corresponding to the estimates extracted from studies evaluating distinct types of scientific misconduct. Among the scientific misconduct behaviors evaluated, honorary authorship presented the highest estimated prevalence (32%; 95% CI: 21%–44%), followed by plagiarism (28%; 95% CI: 12%–47%), data falsification (12%; 95% CI: 6%–19%) and data fabrication (11%; 95% CI: 6%–19%). Methodological manipulation was the least frequent behavior, with a prevalence of 4% (95% CI: 2%–7%).

Characteristics of the included studies (n = 29).

Pooled estimates stratified by study country and sample area.

Meta-regression results based on study sample size and the mean age of the evaluated population.

*p-value of the moderator test, indicating whether the variable included in the meta-regression is associated with the differences in effect observed across studies.

Reporting bias

Asymmetry was not observed in the funnel plot, and Egger's test was not significant (p > 0.05), so there was no evidence of publication bias among the studies included in the meta-analysis. This absence of asymmetry suggests that the results were not influenced by the non-disclosure of studies with smaller or non-significant estimates (Appendix 3).

Discussion

Scientific misconduct refers to unethical behaviors or practices in conducting research, which compromise the integrity and credibility of the results obtained. Such practices undermine confidence in science by disseminating conclusions, often mistaken, that negatively impact the advancement of knowledge and its practical application in health care.39,40 In addition, they result in the waste of financial resources for scientific production. 41 The present study aimed to evaluate the prevalence of different forms of scientific misconduct in the health area through a systematic review with meta-analysis. The results indicated that practices related to improper authorship and plagiarism are more frequent among the studies analyzed, while behaviors such as falsification, data fabrication and methodological manipulation were less frequently reported. These findings show the persistence of unethical conduct in the scientific environment and the need for institutional actions aimed at promoting integrity in research.

It is the responsibility of researchers and institutions to ensure reliability and ethics in conducting research. 42 However, the pressure for academic productivity and the appreciation of the number of publications have led to the prioritization of institutional partnerships and strategic co-authorships, even when lacking effective contribution. 43 This scenario favors the dissemination of inappropriate practices, such as honorary authorship — the most prevalent misconduct identified in this review. The absence of objective criteria for attribution of authorship represents a concrete threat to scientific integrity, 44 being reinforced by institutional policies that use publication rankings as the main criterion for hiring and promoting teachers, without effectively considering their individual contributions in works with multiple authors. 45

Institutional pressure to publish is one of the most cited factors as a cause of questionable conduct in research45–47 affecting mainly those researchers who feel the highest demand, who tend to admit greater involvement in these practices.27,48 On the other hand, studies show that, even under the guarantee of anonymity, many researchers omit the practice of scientific infractions, 15 which suggests a significant underreporting of the actual prevalence of these behaviors. 49

With the increase in scientific production in the health area, there was also a significant growth in the number of retractions of articles, most of them resulting from misconduct. 50 A study conducted in China — a country with a high volume of scientific publications and an estimated prevalence of misconduct at 40% 51 — revealed, through semi-structured interviews, that participants had limited understanding of the subject, recognizing mainly the fabrication, falsification of data and plagiarism as synonyms of scientific misconduct. 52 The findings of this review coincide with those of this study, indicating that such practices, although frequent, are often not recognized as ethically unacceptable by the authors themselves.

The increasingly frequent use of technology as a tool in the academic environment has contributed to the emergence of new forms of ethical infractions, called by some authors “variants of cheating” in research.39,53 Tools based on artificial intelligence, such as chatbots (among them ChatGPT), although useful to assist in academic tasks, do not replace the guidance of specialized professionals, whose work confers credibility on research through judgments based on technical knowledge and consolidated experience. Using artificial intelligence to rewrite excerpts from other authors in similar words should not be considered an acceptable practice in scientific production because, regardless of the means employed, it is a disguised form of plagiarism.54,55

Among the misconducts analyzed in this review, plagiarism is the only practice subject to legal sanctions. However, there is consensus in the literature that other offenses should also be subject to equivalent punitive criteria. 45 Faced with the increasing spread of unethical behaviors, scientific journals began to demand, at the time of submission of articles, a detailed statement of the contribution of each author involved. 15

Limitations and future research

Some limitations should be considered when interpreting the results of this review. First, the pooled estimates were based on self-reported data, which introduces a risk of response bias. Scientific misconduct is a sensitive topic and, even under conditions of anonymity, participants may omit personal or colleagues’ behaviors, leading to underreporting and consequently to underestimation of the true prevalence. Future studies could combine self-reports with objective indicators, such as plagiarism detection tools, retraction databases or analysis of publication metadata.

Second, the included studies differed substantially in terms of sample profiles (students, researchers and health professionals), institutional contexts and methods used for data collection and reporting. This variability likely contributed to the heterogeneity observed in the pooled estimates. We applied a random-effects model, which is recommended when true effects are expected to vary across studies, and explored heterogeneity through subgroup analyses and meta-regression using the available study-level variables. However, only study area, country, sample size, and mean age of the sample could be analyzed, and these variables were insufficient to explain the observed heterogeneity. Future studies using standardized instruments and uniform reporting would allow for more refined comparative analyses.

Third, although no asymmetry was detected in the funnel plot, suggesting absence of publication bias among the included studies, this result should be interpreted cautiously. Funnel plot–based tests only assess bias among studies with available quantitative data and do not fully capture other forms of reporting bias, such as selective non-publication of surveys, theses, or studies that did not report prevalence estimates in sufficient detail. This is a limitation inherent to the available evidence rather than to the review process itself. Future research would benefit from standardized reporting, protocol registration, and greater accessibility of primary data.

Another limitation is the substantial heterogeneity observed across the pooled estimates. Variability in professional field, institutional setting, and survey focus may have contributed to this between-study heterogeneity. High I² values are common in meta-analyses of prevalence due to the sensitivity of proportional data and the intrinsic variability between study contexts. 56 Although subgroup analyses and meta-regression were conducted using the available study-level variables, including professional field, country, sample size, and mean age, these analyses were insufficient to explain the observed heterogeneity. This limitation reflects the restricted availability, inconsistency, and limited variability of reported covariates across studies. Therefore, although a random-effects model was applied to account for between-study variability, the influence of potential effect modifiers could not be further examined.

Despite these limitations, the findings provide an important overview of the problem and can support the development of educational, institutional and regulatory strategies to strengthen research integrity.

Conclusion

This systematic review and meta-analysis confirmed that scientific misconduct remains a persistent issue in health research, particularly in practices related to authorship and intellectual originality. Honorary authorship was reported by about one in three participants, followed by plagiarism, while data falsification and fabrication, though less frequent, still affected roughly one in eight to nine researchers. Methodological manipulation was less common but not absent. Despite heterogeneity among studies and potential underreporting, the findings consistently revealed structural ethical weaknesses within the scientific environment. The evidence highlights the need for continuous institutional efforts to strengthen research integrity through transparent authorship criteria, mandatory ethics education, and effective mechanisms for accountability and transparency. By including diverse professional profiles and a broader range of inappropriate practices, this study provides updated estimates that can inform policies to promote integrity, prevent misconduct, and foster a culture of responsibility in health research.

Supplemental material

Supplemental Material - Scientific misconduct in health research: A systematic review and meta-analysis

Supplemental Material for Scientific misconduct in health research: A systematic review and meta-analysis by Paloma Alves Miquilussi, Rayane Délcia da Silva, Glória Maria Nogueira Cortz Ravazzi, Karinna Verissimo Meira Taveira, Samara Fernandes da Silva Souza, Bianca Simone Zeigelboim, Diulia Pereira Bubna, Bianca Marques de Mattos de Araujo, Rosane Sampaio Santos, Cristiano Miranda de Araújo in Research Methods in Medicine & Health Sciences

Footnotes

Consent to participate

This article does not involve human participants, and therefore informed consent was not required.

Authors’ contributions

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

References
