Abstract

Background

Research waste is a costly problem for scientific research, with poor design and conduct of the research being key elements which contribute to wastage. Interventions to address poor design and conduct may save time and money. The objective of the study was to map the interventions that have been evaluated for improving the design or conduct of scientific research to identify any gaps in the evidence.

Methods

We undertook a systematic scoping review. We searched MEDLINE, EMBASE, EconLit, ERIC, Social Policy and Practice, HMIC, ProQuest Dissertations and Theses Global and MetaArXiv from 1st January 2012 to 13th June 2022. Evaluated interventions that aimed to improve the design or conduct of scientific research by targeting researchers or research teams were included. Screening was completed by two reviewers and data charting by a single reviewer with another reviewer checking.

Results

A total of 81 evaluated interventions were included. Most of the interventions targeted research conduct, primarily focussed on registration, publishing, and reporting. Most included studies used observational evaluation methods. Categorising the interventions by the behaviour change wheel framework, we found that most studies utilised restriction, coercion, and persuasion, and fewer used enablement, training, or incentivisation, to achieve their aims.

Conclusions

More evaluations of interventions aimed at how researchers design their research are needed. These interventions should be developed appropriately and evaluated for effectiveness using experimental methods.

Introduction

Estimates suggest that around 85% of research is “waste”, meaning that the research addresses unimportant questions, does not address important questions properly, or its results are not made available. 1 Chalmers and Glaziou suggested four areas where this waste could be addressed: question and priority setting to identify research questions important to patients, the public, and clinicians; appropriate study design and methods; full reporting of results; and unbiased and usable reports. 1 Some steps have been taken to improve practice across these four areas. Examples include awarding online badges to reward data sharing 2 ; legal requirements for publication 3 ; funder recommendations for design choices 4 ; email reminders for increasing publication 5 ; and providing training and tools to researchers to allow them to design better studies. 6 However, research waste persists across all areas.7,8

Hardwicke et al. 9 proposed a four-stage framework for improving the efficiency, quality and credibility of research practice: 1) identify problems; 2) investigate problems; 3) develop solutions; 4) evaluate solutions. They note that many solutions are developed without any consideration for evaluation, resulting in mainly retrospective observational evaluations, in which unintended consequences or a lack of anticipated benefits may go undetected.

In advance of undertaking primary research to improve research practice in the development of appropriate design and conduct, we wanted to establish what interventions had previously been evaluated. There has been no overarching systematic review of interventions to improve the design and conduct of research or how these interventions are evaluated. Therefore, the aim of this research was to undertake a systematic scoping review to map existing evaluations of interventions which aim to improve the design or conduct of research.

We will use this review to identify knowledge gaps and to assess how this type of research could be improved.

Objectives

The aim of this scoping review is to address the following questions.
• What interventions, aimed at researchers or research teams, to improve the design and conduct of research have been evaluated?
  o Where in the research pathway are these interventions implemented? (e.g. protocol development, registration, publishing)
  o What specific aspects of research are being targeted? (e.g. research knowledge, improved methods used, compliance with a standard)
  o Who is implementing these interventions? (e.g. funders, ethics committees, research institutions, publishers)

Methods

The protocol of this scoping review has been registered with the Open Science Framework registry 10 and has also been published. 11 The PRISMA reporting guidance extension for scoping reviews 12 has been used; a completed checklist is available in the supplementary materials. 13 All supplementary materials are available on the Figshare repository, with individual documents cited with links where relevant.

Data and analysis code for this study are available in the supplementary materials.14,15

Eligibility criteria

Any interventions that aimed to improve the design or conduct of scientific research by targeting researchers or research teams were included.

Interventions aimed at any aspect of design and conduct were included, from initial design (e.g. question setting, protocol development), through undertaking the research (e.g. registration, changes to research design), and publishing (e.g. timely publication, reporting standards, data sharing).

Searches

MEDLINE, EMBASE, EconLit, ERIC, and Social Policy and Practice were searched along with the following grey literature sources: HMIC, ProQuest Dissertations and Theses Global and articles submitted to the MetaArXiv preprint server. These were searched from 1st January 2012 up to 13th June 2022.

The full search strategy is available in the supplementary materials. 17 No limits were applied relating to language or source of information. References within included articles were screened by a single reviewer to identify any further relevant articles.

Selection of sources of evidence

All records identified were de-duplicated using EndNote 18 and then imported into Covidence systematic review software. 19

A two-stage screening process was used, first screening the titles and abstracts of all identified studies and then screening the full-texts of any that appeared eligible at the first stage. Both stages were completed independently by two researchers with any disagreements resolved by discussion.

This process was piloted initially to ensure agreement in decisions.
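The dual independent screening step described above can be illustrated with a minimal sketch. This is not how Covidence works internally; the function name `reconcile`, the record identifiers, and the decision labels are all hypothetical and are used only to show how agreed decisions are separated from those that need discussion.

```python
def reconcile(reviewer_a, reviewer_b):
    """Split screening decisions into agreed ones and conflicts for discussion.

    Both arguments map a record identifier to a decision string
    (hypothetical labels: "include" or "exclude").
    """
    if reviewer_a.keys() != reviewer_b.keys():
        raise ValueError("Both reviewers must screen the same set of records")

    agreed, conflicts = {}, []
    for record_id, decision in reviewer_a.items():
        if decision == reviewer_b[record_id]:
            agreed[record_id] = decision
        else:
            # Disagreements are not resolved automatically; they go to discussion.
            conflicts.append(record_id)
    return agreed, conflicts


# Hypothetical decisions, NOT data from the review.
decisions_a = {"rec1": "include", "rec2": "exclude", "rec3": "include"}
decisions_b = {"rec1": "include", "rec2": "include", "rec3": "include"}

agreed, conflicts = reconcile(decisions_a, decisions_b)
print("Agreed:", agreed)
print("To discuss:", conflicts)
```

The same comparison applies at both the title/abstract and full-text stages, since each stage was screened independently by two researchers.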

Data charting process

Data charting was completed using a data extraction template within Covidence. Charting was done by one researcher and independently checked by a second, with any disagreement resolved through discussion. The process was piloted on a small number of initial studies to ensure consistency and that all pertinent data were captured by the data extraction template.

Data were collected on the details of the authors and report, interventions, methods of evaluation, and outcomes measured.

The intervention functions, as assessed using the Behaviour Change Wheel Framework, 16 were classified by two reviewers based on the following criteria:
• Education - providing knowledge of practices.
• Persuasion - communication for improving actions.
• Incentivisation - providing expectation of reward.
• Coercion - providing expectation of punishment.
• Training - providing skills.
• Restriction - rules or regulation.
• Environmental restructuring - changing physical or social context.
• Modelling - showing examples of good practice.
• Enablement - reducing barriers/increasing means to practice.
• Other - anything not captured by the framework.

By using this framework, the types of intervention used can be assessed more broadly even if the research areas are not necessarily similar. It also allows identification of intervention types that are potentially less commonly used and that could be tested in future research.
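As an illustration of how such a classification might be tallied, the sketch below encodes the ten categories listed above and counts them by intervention aim. It is not the authors' analysis code; the names `InterventionFunction` and `tally_functions` and the example records are hypothetical. Each intervention carries a list of functions because a single intervention can use more than one.

```python
from collections import Counter
from enum import Enum


class InterventionFunction(Enum):
    """The categories used for classification in this review."""
    EDUCATION = "Education"
    PERSUASION = "Persuasion"
    INCENTIVISATION = "Incentivisation"
    COERCION = "Coercion"
    TRAINING = "Training"
    RESTRICTION = "Restriction"
    ENVIRONMENTAL_RESTRUCTURING = "Environmental restructuring"
    MODELLING = "Modelling"
    ENABLEMENT = "Enablement"
    OTHER = "Other"


def tally_functions(interventions):
    """Count intervention functions separately for each aim.

    `interventions` is an iterable of (aim, functions) pairs, where aim is
    "design", "conduct" or "both" and functions is a list of enum members.
    """
    counts = {}
    for aim, functions in interventions:
        # set() ensures a function coded twice for one intervention counts once.
        counts.setdefault(aim, Counter()).update(set(functions))
    return counts


# Hypothetical coded records, NOT data from the review.
F = InterventionFunction
example = [
    ("conduct", [F.RESTRICTION, F.COERCION]),
    ("conduct", [F.PERSUASION]),
    ("design", [F.ENABLEMENT, F.TRAINING]),
]

result = tally_functions(example)
for aim, counter in result.items():
    print(aim, {f.value: n for f, n in counter.items()})
```

Because interventions can carry several functions, the totals per aim can exceed the number of interventions, which is worth keeping in mind when reading frequency charts of this kind.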

Synthesis

A narrative synthesis is presented for each of the review aims. Relevant data are presented in tables and graphs. These are stratified by whether the intervention focussed on aspects of design, conduct, or both.

There was no planned critical appraisal as this was not one of the aims of this review; however, the study design of all included studies was extracted in order to map which methods are used to evaluate these interventions.

Results

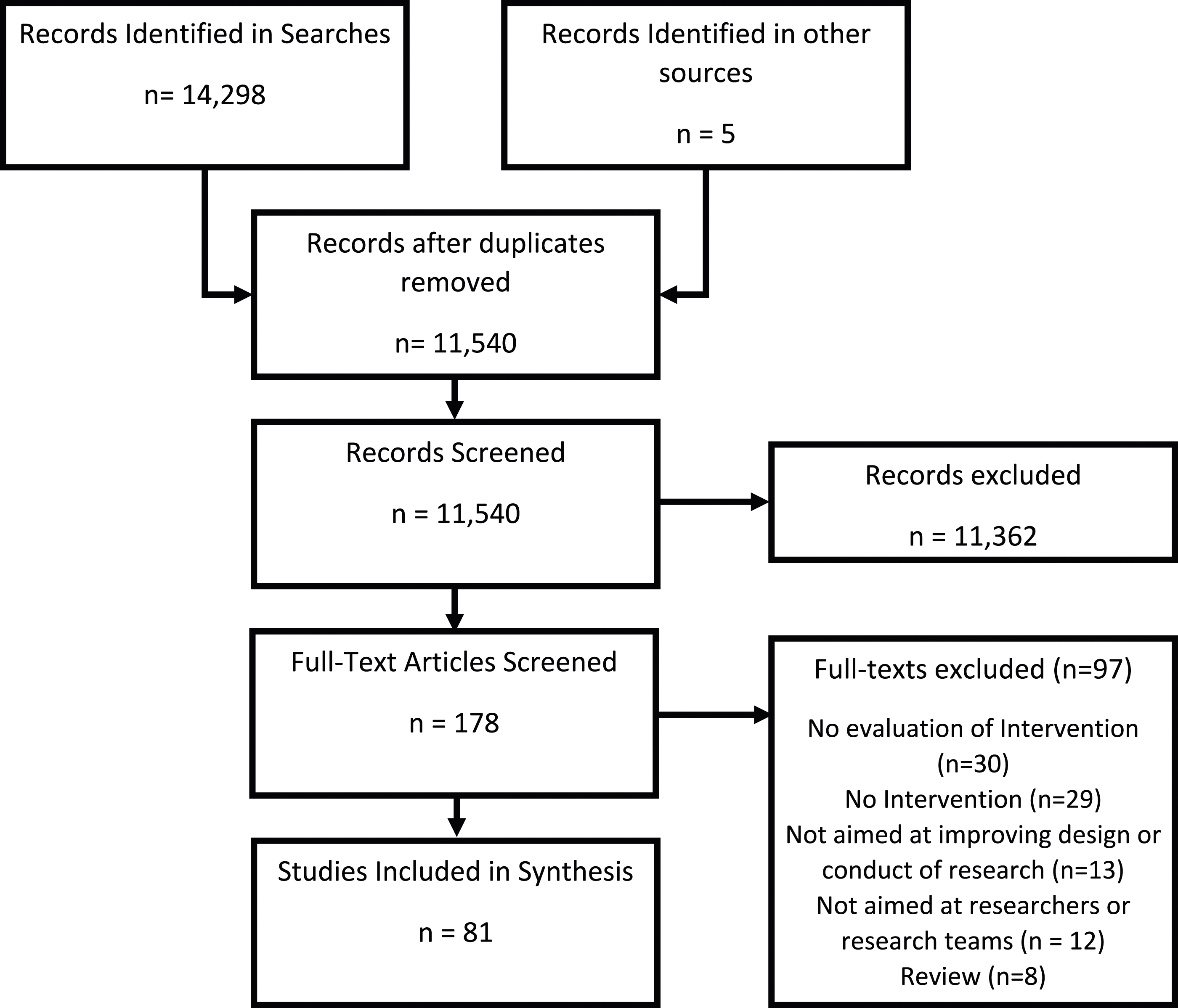

The titles and abstracts of 11,540 records and 178 full texts were screened. Eighty-one eligible studies were included in the review (Figure 1). A table of the included studies is provided in the supplementary materials. 20

Flowchart of Study Selection process.

Details of the studies excluded at full-text review and the reasons for exclusion can be found in the supplementary materials. 21 There were 30 that described an intervention but did not evaluate it; 29 described or investigated design or conduct problems with no reference to an intervention; 12 were not aimed at researchers or research teams (e.g. an intervention aimed at clinical teams or patients); and eight were review articles.

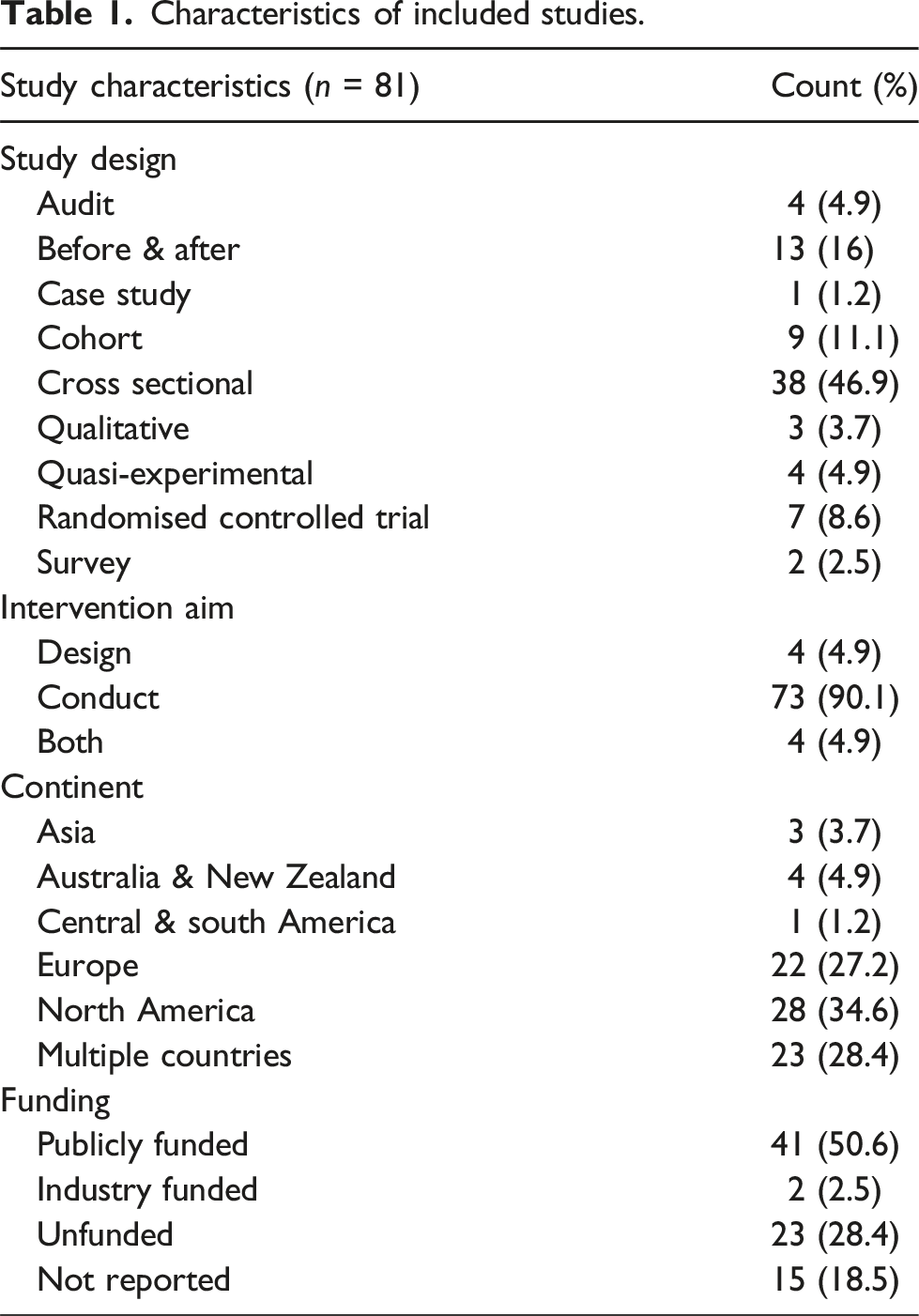

Characteristics

Characteristics of included studies.

The majority of included studies (n = 67, 82.7%) used an observational study design, seven used a randomised controlled trial, and four used a quasi-experimental design. Most of the studies were conducted in Europe, North America, or across multiple countries. The source of funding was reported in 66 (81.5%) of the studies, with 43 (53.1%) being funded and 23 (28.4%) being unfunded.
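The percentages above can be checked against the stated counts with a short calculation. This is only an arithmetic check using the figures reported in the text; the helper name `pct` is hypothetical, and all percentages are assumed to use the 81 included studies as the denominator.

```python
TOTAL_STUDIES = 81  # number of included studies reported in the review


def pct(count, total=TOTAL_STUDIES):
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


# Counts taken from the text above.
reported = {
    "observational design": 67,
    "funding source reported": 66,
    "funded": 43,
    "unfunded": 23,
}

percentages = {label: pct(n) for label, n in reported.items()}
for label, value in percentages.items():
    print(f"{label}: {value}%")
```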

The 81 included studies assessed 41 different interventions. The most commonly assessed was journal reporting guidelines or endorsement of specific guidelines (e.g. CONSORT, PRISMA), which was assessed in 13 evaluations.

Synthesis

What Interventions have been evaluated?

Design or conduct

Seventy-three of the included studies assessed interventions focussed solely on the conduct of the research, four were focussed solely on the design, and four covered both design and conduct.

Types of intervention

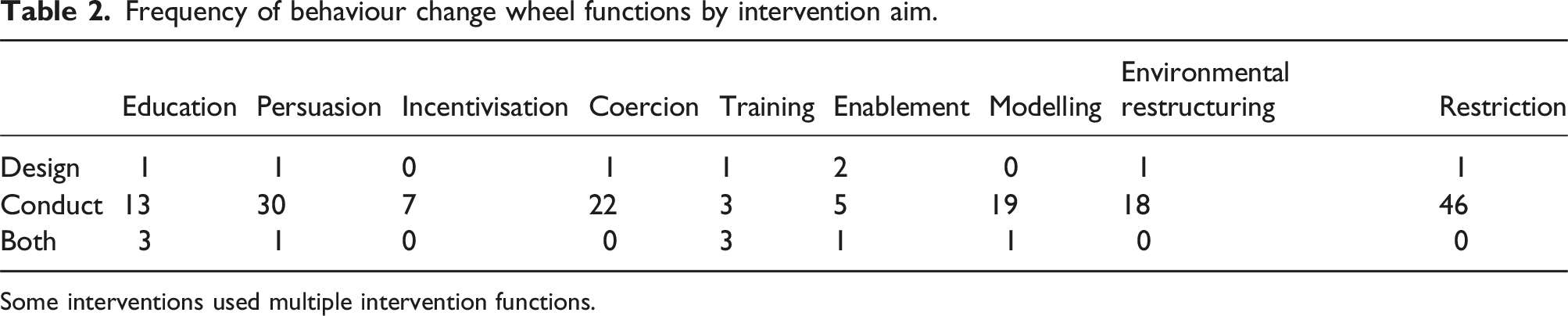

Each of the interventions was characterised as per the behaviour change wheel intervention function framework.

Persuasion, restriction, and coercion were the most used intervention functions overall. Fewer interventions used training, enablement, or incentivisation. The most common intervention function was enablement for design-focussed interventions and restriction for conduct-focussed interventions.

Some examples of the intervention functions used in the included studies were:
• Education: Expert consultation to advise and educate researchers. 22
• Persuasion: Policies endorsing best practices. 23
• Incentivisation: The offer of badges on publications to indicate use of good practices. 2
• Coercion: The threat of fines for not publishing results. 3
• Training: Training courses or standard operating procedures to follow. 24
• Enablement: Provision of software or tools to enable good practices. 6
• Modelling: Provision of reporting checklists with best practice examples (e.g. CONSORT). 25
• Environmental restructuring: Prompting emails to remind researchers of requirements with time limits. 5

Frequency of behaviour change wheel functions by intervention aim.

Some interventions used multiple intervention functions.

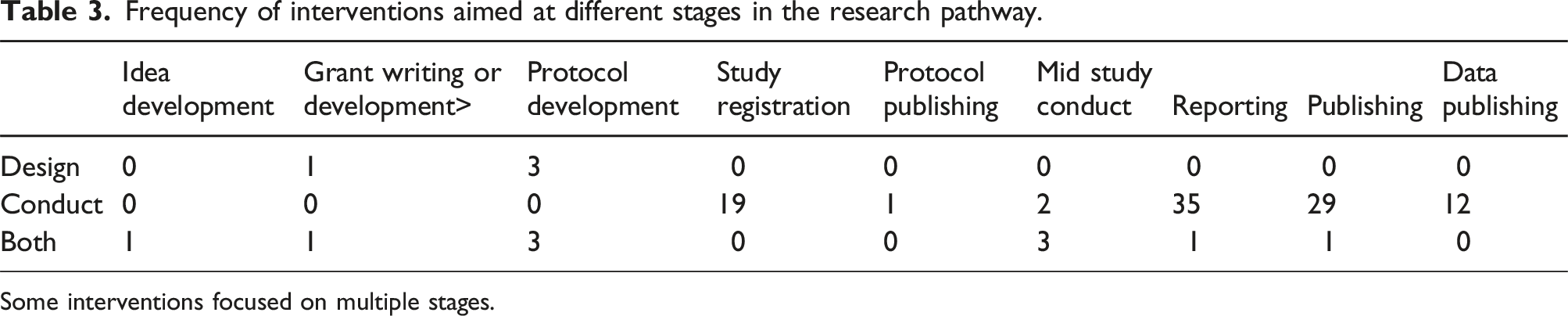

Where in the research pathway have these interventions been evaluated?

Frequency of interventions aimed at different stages in the research pathway.

Some interventions focused on multiple stages.

What aspects of research have been targeted?

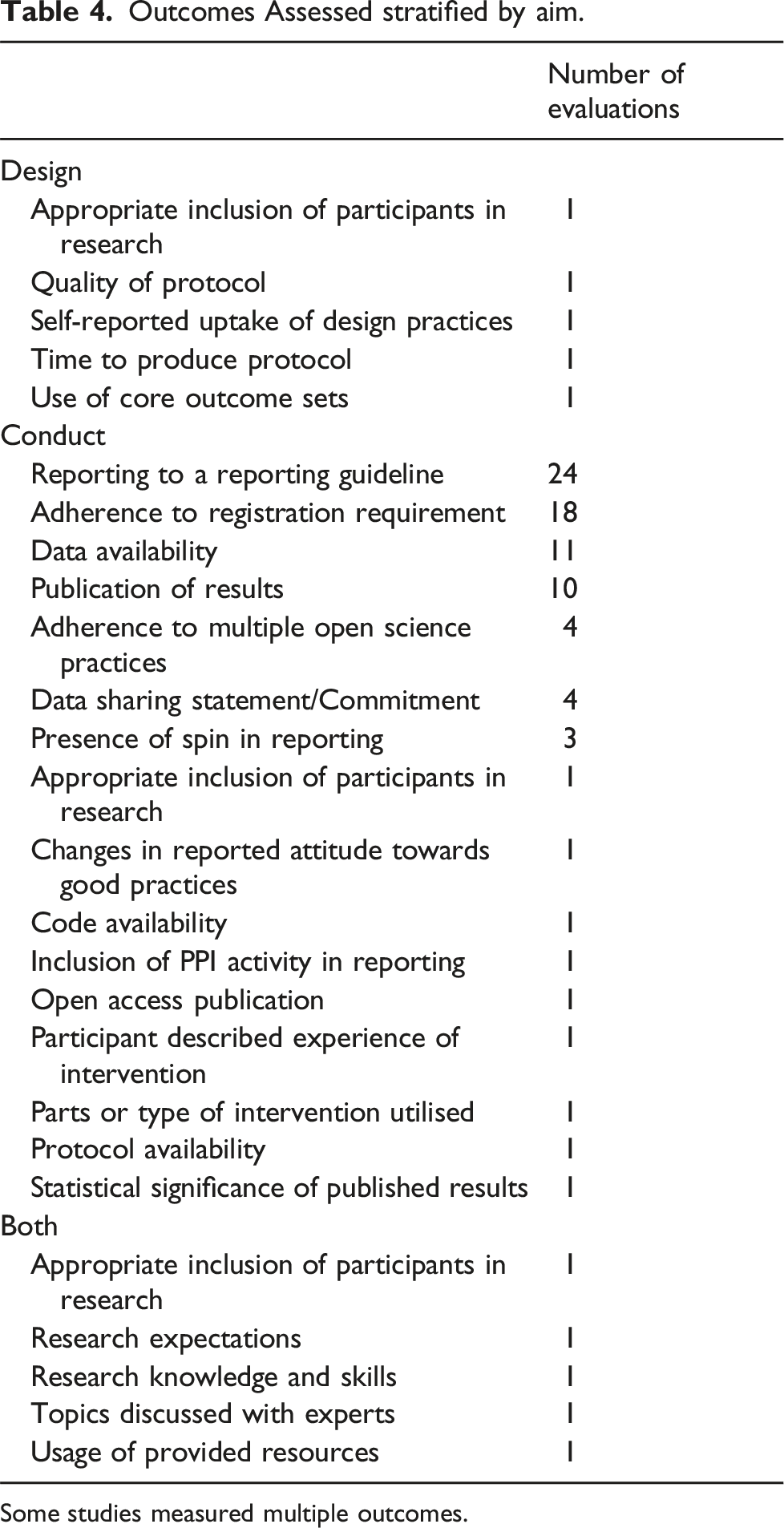

Outcomes Assessed stratified by aim.

Some studies measured multiple outcomes.

Most of the studies measured exactly the intended effect of the intervention, for example reporting to a particular standard for a reporting checklist/guidance 26 or meeting the study registration requirements of a policy. 27 Five studies assessed researchers’ experience of the intervention, in terms of how much it was used, 24 what aspects of the intervention were most utilised, 28 or participants’ described experiences of the intervention. 29

For studies focussed on design, the uptake of the target research design practice was assessed in several different ways: self-reported usage of design practices, collected by survey 6 ; usage of the practice at the funding stage, assessed from funding applications 4 ; uptake of design practices assessed retrospectively in publications 30 ; and a generic assessment of protocol quality and time to produce the protocol. 31

One study used validated measures to assess changes in research knowledge and skills and research expectations following the intervention. 32 The authors used the Research Outcome Expectations Questionnaire and the Research Knowledge and Skills Self-Assessment measure to assess a virtual laboratory intervention.

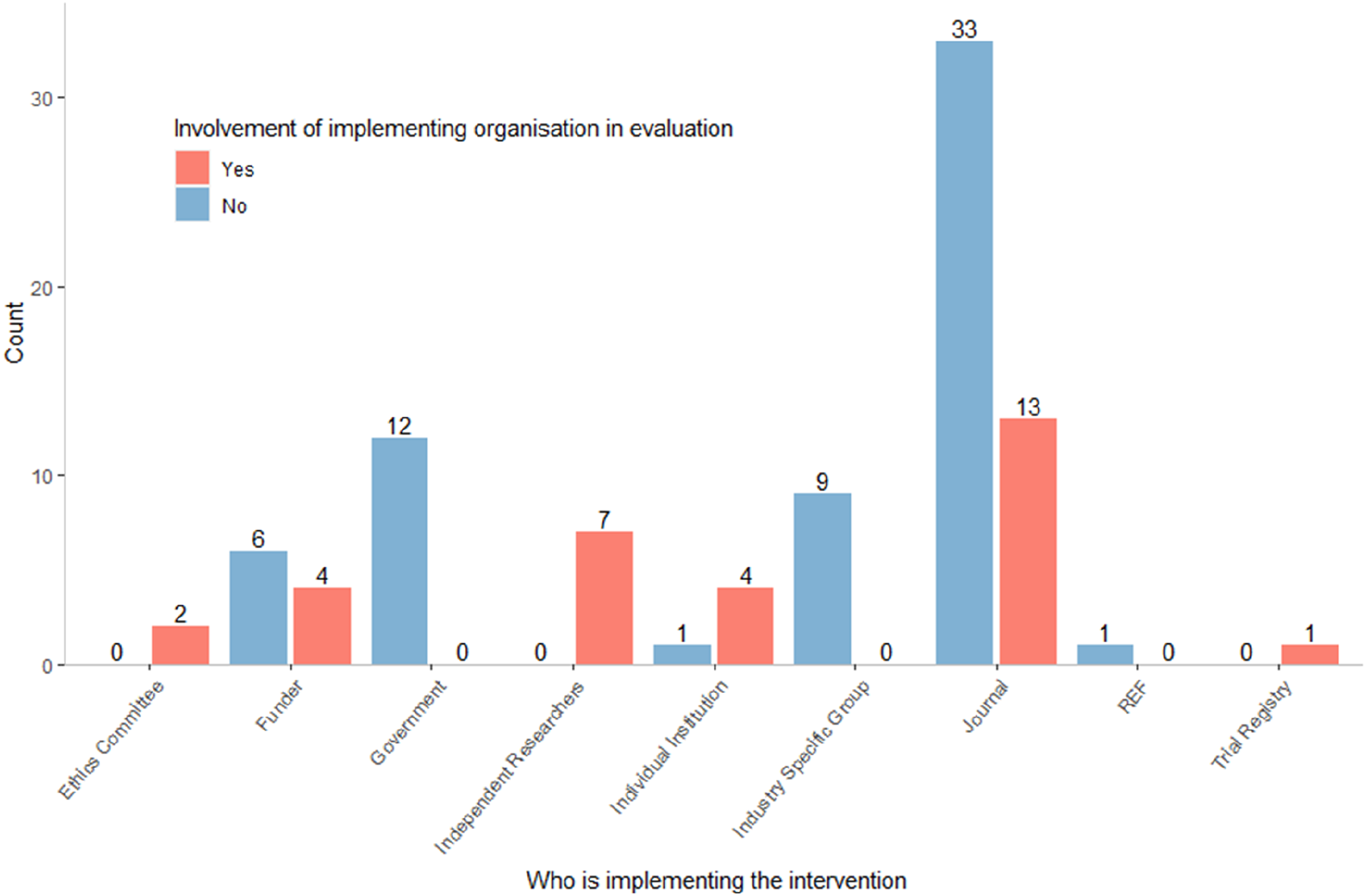

Who is implementing these interventions?

Most interventions were implemented by journals (n = 49); the different organisations and groups are summarised in Figure 2. Sixty-nine of the interventions were implemented by a single organisation and 12 by two organisations. Examples of the latter include a university initiative that aligns with government legislation 33 and government legislation and funder requirements aligning at the same time.

Summary of implementing organisations.

Most of the evaluations were not completed by the organisation that implemented the intervention (n = 52, 64.2%). When the evaluations of interventions implemented by independent researchers are removed (n = 7), 70% of the remaining evaluations were not completed by the implementing organisation.
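One reading that reproduces both reported figures is sketched below. It assumes, and this is an assumption rather than something stated in the text, that the seven removed evaluations all belonged to the group evaluated by their implementers, so the count of 52 is unchanged and only the denominator shrinks from 81 to 74. The variable names are hypothetical.

```python
total_evaluations = 81          # included evaluations
not_by_implementer = 52         # evaluated by someone other than the implementer
independent_researchers = 7     # removed in the second calculation

# Share of all evaluations not completed by the implementing organisation.
overall_share = round(100 * not_by_implementer / total_evaluations, 1)

# ASSUMPTION: the seven removed evaluations are all outside the 52,
# so only the denominator changes.
remaining = total_evaluations - independent_researchers
adjusted_share = round(100 * not_by_implementer / remaining, 1)

print(f"Overall: {overall_share}%")
print(f"After removal ({remaining} evaluations): {adjusted_share}%")
```

Under this assumption the adjusted share is about 70%, which matches the figure reported above.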

Discussion

Summary of evidence

This review identified 81 evaluated interventions, which were primarily aimed at aspects of research conduct rather than research design. This is a key gap in the evidence: whilst errors in publishing and reporting can be rectified after the fact, it is often too late to fix errors in design once research is complete. Future research should have a stronger focus on how the design of research can be improved. This could be done by expanding upon the existing evidence in this review or by developing new interventions. For example, Weeks et al. 6 tested the effect of providing resources and training regarding research design within one institution. This could be replicated in other institutions, as the resources used were freely available and provided by the authors.

The ways in which improvement in design is measured may also need to be considered, as the studies identified in this review primarily relied upon targeting one specific design aspect or on self-reported usage of best practices in design. Most of the research focussed on conduct used an outcome based on adherence to a set guideline or standard which covered multiple important aspects of the research (e.g. the CONSORT reporting standard). Utilisation of similar standards for assessing study design would allow assessment of broader impact than is possible when measuring individual design aspects.

The most common intervention functions utilised were restriction, persuasion, and coercion. One possible explanation for this is that it may be easier to suggest or mandate a particular standard than to provide resources, education, or training to help meet it. Development of any future interventions could draw on the guidance for development of complex health and care interventions by first developing a theory of the problem. This would help to assess whether restrictive or persuasive techniques are appropriate, or whether researchers lack the skills or tools to meet new standards and other intervention functions should therefore be utilised. 34

It is also possible that some of the interventions already tested need to be developed further. The most common incentive intervention, a digital badge accompanying article publication, has had mixed results and has received criticism regarding its implementation.35,36 Further research into what has caused the mixed results or how the intervention could be improved is needed.

One notable finding is that there were 30 records describing interventions which were not evaluated and were therefore excluded from the review as they did not meet the eligibility criteria. Some of these interventions have been evaluated in articles which were included, such as the use of trial registration guidance 37 and the ICMJE data sharing requirements. 38 However, there are many that appear to have no evaluation at all, such as new publishing models, 39 laws aimed at reducing conflicts of interest in research, 40 and the use of specified staff for the oversight of animal research. 41

Hardwicke et al. 9 suggested that most evaluations aimed at researchers would be limited to retrospective observational designs due to the lack of planning for evaluation. Our findings align with this, as most of the included evaluations used observational methods. We also identified 29 records which investigated a problem without reference to an intervention, which would likely be categorised under “investigating problems” in Hardwicke et al.’s framework. As with the interventions that did not have evaluations, many of the problems identified have not had evaluations of interventions published to address them. Some of these include: solutions for reproducibility of results, 42 increasing public involvement in research, 43 and implementation of Good Laboratory Practices. 44 This suggests that whilst there is work to identify and characterise problems and develop solutions, the final steps of appropriate evaluation and implementation are not being addressed for a large portion of potential solutions.

Furthermore, the implementing organisations were rarely involved in the evaluations, suggesting that not only had they not planned for an evaluation, but there was also no planned framework to ensure that any issues identified during evaluation were dealt with to improve the implementation. One example of this is identified by DeVito et al., 3 who assessed compliance with the Food and Drug Administration Amendments Act (FDAAA) of 2007. In their study they note that, despite poor compliance, the implementing organisation (FDA) had yet to use any of the enforcement actions available to it that were key aspects of the intervention, such as fines for not complying.

Most of the interventions evaluated were implemented by journals. The cause for this may be that the data for assessment is often publicly available so independent researchers can evaluate the effect of journal policies and practices more easily than that of funders, ethics committees, or governments who may not have such easily accessible data. However, this results in far more research being done on reporting and data sharing than on other aspects of research which may be more fundamental to the reliability of the results, such as design choices and data management.

Finally, there were some areas, such as reporting or publishing, where there was substantially more research than in other areas, often with unnecessary duplication of very similar work. This, along with the lack of testing of some interventions, suggests that a resource may be needed to help researchers identify which problems need further research and which areas have sufficient research either completed or ongoing. There has been some success with platforms for addressing this in other areas, such as the PROSPERO platform for pre-registration of systematic reviews. 45

Limitations

The searches in this review were limited to records published from 1st January 2012 onwards. It is possible that some interventions were evaluated before this date and are therefore not included.

Whilst this review has identified many interventions that have not been evaluated, there are potentially further interventions that have been implemented and not evaluated. These interventions may differ in the type of intervention, the organisations implementing them, and the area of research they target. It is also possible that many organisations internally evaluate their interventions but do not publish the results.

Conclusions

Very few interventions have been evaluated for improving how researchers do research, with only a small proportion focussing on the design of research. There are many suggested interventions that remain untested and identified problems with no developed solutions. The evaluations that are available are often insufficient to determine the effectiveness of the intervention. Future research in this area should focus on interventions aimed at the design of research.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Institute for Health Research (NIHR) under its Pre-Doctoral Fellowship (NIHR301991) to Andrew Mott. The views expressed in this publication are those of the author(s) and not necessarily those of the NIHR, NHS or the UK Department of Health and Social Care.