Abstract

Simultaneous stochastic optimisation frameworks provide a method for optimising long-term production schedules in mining complexes that aim to maximise net present value and manage risk related to supply uncertainty. The uncertainty and local variability related to the quality and quantity of material in the mineral deposits are modelled with a set of stochastic orebody simulations, an input into the simultaneous stochastic optimisation framework. Infill drilling provides opportunities to collect additional information associated with the mineral deposits, which can inform future production scheduling decisions. A framework is developed for optimising infill drilling locations with a criterion that seeks areas that directly affect long-term planning decisions and requires the use of geostatistical simulations. Actor-critic reinforcement learning is applied to identify infill drilling locations in a copper mining complex using this criterion. The case study demonstrates that adapting production scheduling decisions given additional information has the potential to improve the associated production and financial forecasts and identifies a stable area for infill drilling.

Introduction

A mining complex is an integrated supply chain designed to transform extracted materials from several mines into a set of valuable products for delivery to customers and the market (Dimitrakopoulos and Lamghari, 2022; Pimentel et al., 2010). Production scheduling decisions are optimised to maximise net present value (NPV) and, in cases where uncertainty is considered, minimise technical risk related to the uncertain material supply. Recent advancements in stochastic mathematical programming have demonstrated the benefits of simultaneously optimising long-term production scheduling decisions within a mining complex while considering supply uncertainty (Goodfellow and Dimitrakopoulos, 2016, 2017; Montiel and Dimitrakopoulos, 2015, 2018). The production scheduling decisions are optimised simultaneously given a model of the mining complex and the behaviour of its various components. Furthermore, the uncertainty and local variability of the material supply are accounted for and managed in the optimisation framework by using stochastic orebody simulations to represent the quantity and quality of materials in the ground (Goovaerts, 1997; Remy et al., 2009).

Infill drilling is important for capturing additional information about the mineral deposit that can potentially reduce sources of error by identifying boundaries and discrete ore zones to more accurately classify the mineral resources and reserves (Parker, 2012). Planning which areas to invest in infill drilling remains a major challenge in the mining industry because the outcomes of drilling are uncertain and the information collected may not necessarily cause a change in the long-term planning decisions. However, infill drilling in areas that provide valuable information for orebody modelling including geostatistical simulations can directly impact the outcome of the resulting long-term production schedule, potentially leading to improved production forecasts. To assess the influence of infill drilling on production scheduling decisions, a new framework is developed herein that quantifies improvements to the long-term production schedule based on infill drilling data. This provides an improved criterion for infill drillhole selection. Future infill drilling data is sampled from a stochastic realisation and used to update a set of stochastic orebody simulations (Jewbali and Dimitrakopoulos, 2011; Peattie and Dimitrakopoulos, 2013). The production schedule is then reoptimised considering new information to account for the forecasted improvements to the long-term production schedule.

Past work aiming to optimise infill drilling considers minimising estimation variance (Diehl and David, 1982; Gershon et al., 1988; Soltani and Safa, 2015), which is influenced by the geometry of the drillhole configuration, sample density, number of samples and covariance structure (Cressie, 1991). This ignores the critical impacts related to uncertainty and local grade variability of the attributes of interest (Delmelle and Goovaerts, 2009; Ravenscroft, 1992). Furthermore, additional drilling is often assumed to reduce grade uncertainty; however, Goria et al. (2001) and Dimitrakopoulos and Jewbali (2013) document cases where collecting additional information can lead to greater grade variability and uncertainty. This supports the suggestion to consider the potential impacts of infill drilling on the long-term mine plan.

Several approaches for optimising infill drilling decisions are founded upon geostatistical simulation techniques and have been introduced to overcome these limitations by accounting for the effects of different drilling patterns. Dirkx and Dimitrakopoulos (2018) apply a multi-armed bandit approach for selecting infill drilling patterns in a stockpile using a criterion that accounts for changes to material classification. Infill drilling patterns that cause the largest changes to the material classification of stockpiled material are selected as this is expected to reduce misclassification of these materials. To assess the value of infill drilling, additional data is retrieved from a stochastic realisation of future infill drilling data and used to re-simulate a set of stochastic orebody simulations. Boucher et al. (2005) use geostatistical simulations of correlated variables to observe the impact of drilling on the forecasted profits obtained while evaluating different stockpiling options. By virtually drilling a simulated realisation of the future infill drilling data (assumed to be the true deposit) and using the information to re-simulate the deposit with denser drilling patterns, an optimised configuration is determined by comparing block classifications and economic indicators. Kumral and Ozer (2013) propose an approach that aims to optimise the drilling configuration with a genetic algorithm that minimises the maximum interpolation variance by sampling the drilling information using future simulated drillhole data and available exploration data. Verly and Parker (2021) apply conditional simulations and confidence intervals to classify minerals as measured or indicated. This could be used as another criterion for infill drillhole planning. A limitation of these simulation-based methods is that they do not consider the relationship between the drilling information collected and potential effects on long-term mine planning and production scheduling, which may lead to additional value.

The approach presented herein introduces a new framework for optimising infill drilling decisions that considers long-term production scheduling decisions in the context of the simultaneous stochastic optimisation of mining complexes by evaluating potential improvements to the long-term production schedule and associated forecasts. Actor-critic reinforcement learning with deep neural networks (Lillicrap et al., 2015; Sutton and Barto, 2018) is applied to learn a policy for selecting infill drilling locations. The reinforcement learning policy guides the optimisation process to relevant drilling locations with a stochastic search, learning the areas that lead to the largest improvements in the production schedule through continuous trial-and-error. Stochastic orebody simulations are updated with an ensemble Kalman filter (EnKF), which overcomes the requirement to re-simulate the entire deposit each time additional information is retrieved (Evensen, 2003). Then, the long-term production schedule is simultaneously optimised considering the additional drilling information collected. Several recent works have demonstrated the successful performance of deep neural networks for a variety of optimisation problems (Kumar and Dimitrakopoulos, 2021; Kumar et al., 2020; Lillicrap et al., 2015; Mnih et al., 2015; Silver et al., 2017). Applying reinforcement learning for selecting infill drilling locations is advantageous as the stochastic search utilises contextual information from the mining complex to guide the optimisation process. Additionally, actor-critic methods work with continuous actions, allowing the agent to select coordinates for drilling. The value of infill drilling in a mining complex is strongly related to the value of information, a frequent topic in decision theory (Bratvold et al., 2009; Yokota and Thompson, 2004). Froyland et al. (2018) discuss how additional infill drilling relates to these topics and highlight that the value gained from infill drilling can only be realised if it results in changes to production scheduling decisions. However, a method was not employed to address these challenges.

In the following sections, the proposed method based on actor-critic reinforcement learning and simultaneous stochastic optimisation is introduced for optimising infill drilling that provides potential to improve production and financial forecasting in mining complexes. Next, a case study at a copper mining complex demonstrates the key aspects of the proposed approach and the impact of selecting a set of infill drilling locations. Conclusions and recommendations for future work follow.

Planning infill drilling with reinforcement learning and simultaneous stochastic optimisation

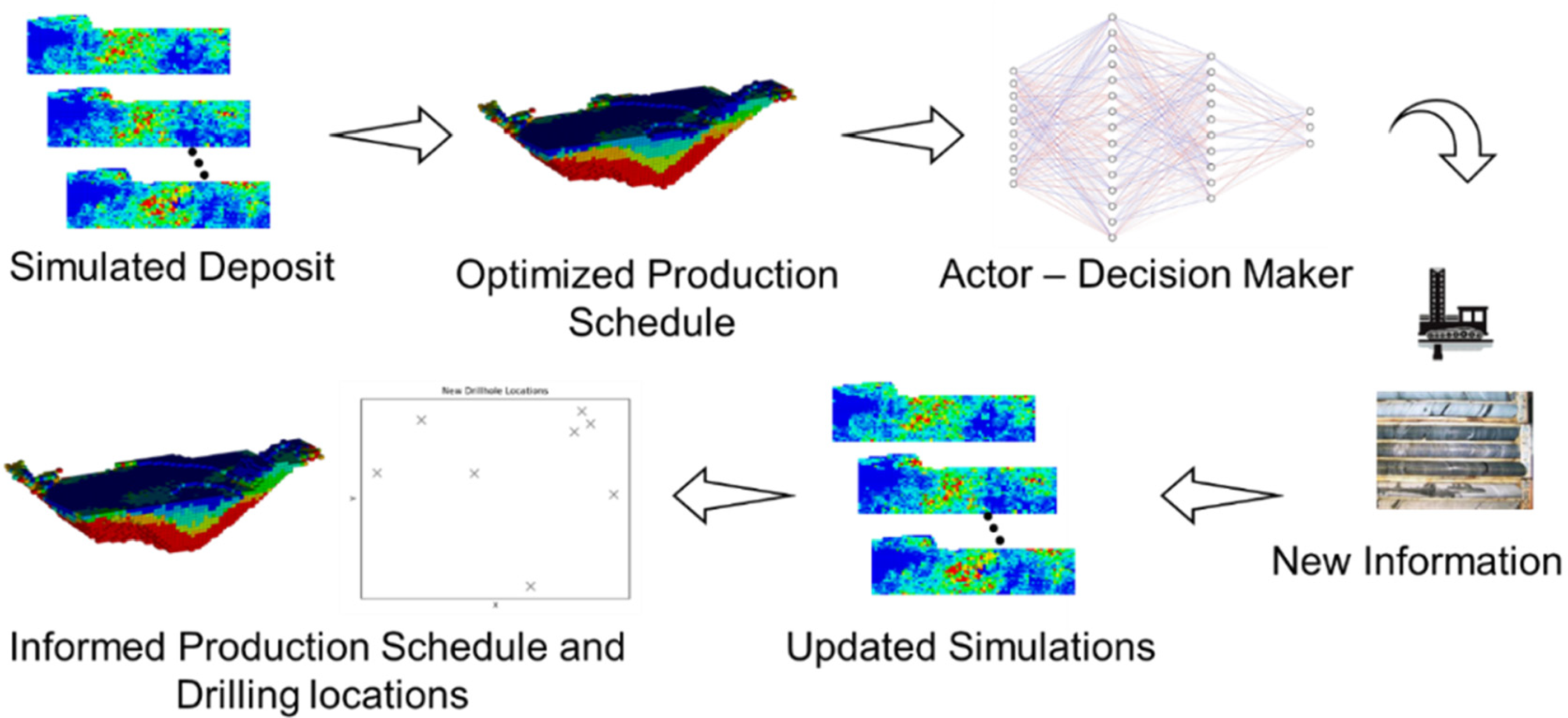

The framework developed herein for planning infill drilling in mining complexes is described in this section. Figure 1 gives an overview of the workflow developed for infill drillhole selection.

Primary workflow: (a) generate a set of equiprobable stochastic orebody simulations; (b) simultaneous stochastic optimisation of a mining complex; (c) sample drillhole from stochastic simulation; (d) EnKF update; (e) retrieve drilling locations and adapted long-term production schedule.

The process begins by inputting a set of stochastic orebody simulations into the simultaneous stochastic optimisation framework. These stochastic orebody models represent the uncertain supply of material within the mineral deposit and are simulated using geostatistical techniques (Boucher and Dimitrakopoulos, 2009; Godoy, 2003). Then, the long-term production schedule is simultaneously optimised by jointly considering the extraction sequence, destination policy and processing stream decisions to maximise NPV and manage technical risk. The optimisation considers current information available for orebody modelling which accounts for the uncertainty and local variability of the material grades with a set of stochastic orebody simulations. The formulation for the simultaneous stochastic optimisation of mining complexes is detailed in Goodfellow and Dimitrakopoulos (2016, 2017). After obtaining the optimised production schedule given the information prior to infill drilling, a set of features is processed from the long-term production schedule forecasts and the stochastic orebody simulations to define the state of the mining complex. The state is observed by a reinforcement learning agent (or decision maker), which chooses an infill drilling location based on the current neural network policy denoted

Reinforcement learning background

The reinforcement learning framework applied is an actor-critic reinforcement learning approach (Lillicrap et al., 2015; Sutton and Barto, 2018). The agent or decision maker interacts with a mining complex environment E over discrete timesteps to maximise the cumulative reward or return. The infill drilling approach is modelled as a Markov decision process consisting of a state space S, action space A, initial probability distribution with density

Actor-critic reinforcement learning is applied largely due to its success with large continuous action spaces (Lillicrap et al., 2015), which works well for selecting infill drilling locations. In actor-critic reinforcement learning the policy is learned by adjusting the neural network parameters

Additionally, when neural networks or other nonlinear function approximators are used to approximate a value function, learning can be unstable or even diverge. There are two primary causes: correlations in the sequence of observations, and correlations between the action values and the target values. These challenges are addressed using a replay buffer and a target network that is periodically updated (Lin, 1992; Mnih et al., 2013; Mnih et al., 2015).
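The two stabilising devices mentioned above can be illustrated with a minimal sketch. It is not the implementation used in this work: the class `ReplayBuffer`, the function `soft_update`, the mixing rate `tau` and the toy transitions are all hypothetical, and the soft update rule is assumed to follow the form described by Lillicrap et al. (2015).

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size store of (state, action, reward, next_state, done) transitions."""

    def __init__(self, capacity, seed=0):
        self.buffer = deque(maxlen=capacity)  # oldest transitions are dropped first
        self.rng = random.Random(seed)

    def add(self, state, action, reward, next_state, done):
        self.buffer.append((state, action, reward, next_state, done))

    def sample(self, batch_size):
        # Uniform sampling without replacement breaks the temporal
        # correlation between consecutive observations.
        return self.rng.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)


def soft_update(target_params, online_params, tau):
    """Move each target parameter a fraction tau towards its online counterpart."""
    return [tau * w + (1.0 - tau) * w_t
            for w, w_t in zip(online_params, target_params)]


# Toy usage with hypothetical one-dimensional states and (x, y) actions.
buffer = ReplayBuffer(capacity=3)
for t in range(5):
    buffer.add([float(t)], [0.0, 0.0], float(t), [float(t + 1)], False)
batch = buffer.sample(2)
new_target = soft_update(target_params=[0.0, 1.0], online_params=[1.0, 1.0], tau=0.1)
```

A small `tau` makes the target values change slowly, which is the property that reduces the correlation between action values and target values.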

Defining the state space

The state of the mining complex environment that is used for selecting infill drilling locations is based on features derived directly from the optimised production schedule, orebody simulations and available information on site. These provide a numerical representation of the factors that were considered important in this work for drillhole selection. This information can include previously drilled locations, the scheduled period of extraction a drillhole will intersect and areas of higher or lower grade variability. Each feature is calculated based on the values obtained from the production schedule at timestep t and a linear or non-linear transformation of the input parameters denoted by the function

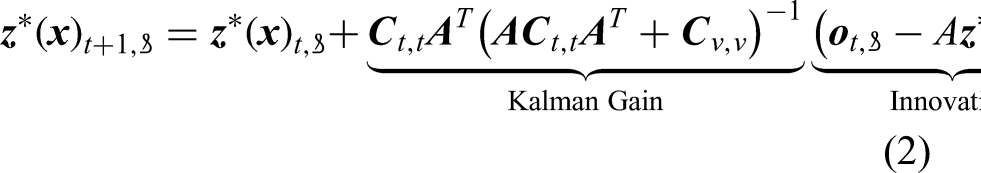

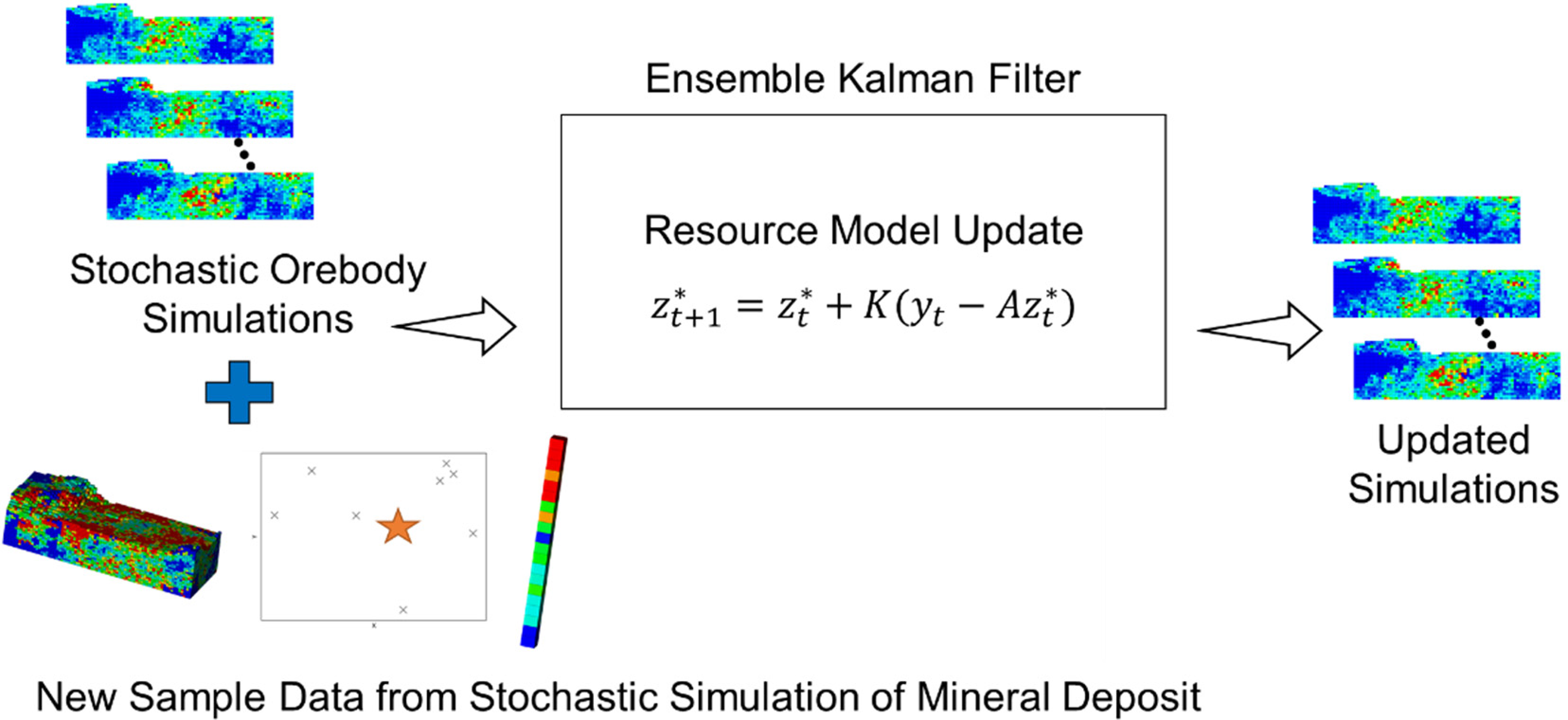

Updating simulations with ensemble Kalman filter (EnKF)

An EnKF framework is applied where the stochastic orebody simulations are updated using new information collected from future infill drilling (Figure 2). The magnitude of the update is based on the error between the simulated realisations and the observed drillhole data, which is retrieved from a stochastic simulation of the drillhole data at the location selected. The sampled data represents the drilling data in a real deposit. Different randomly selected simulations of the drillhole data are used over several runs to verify that the drillholes selected in each run of the optimisation fall within similar areas. Benndorf (2020) describes the EnKF updating framework for mineral resource applications in further detail.

EnKF approach for updating stochastic simulations with additional drilling data.
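The updating step described above can be sketched with a generic stochastic EnKF using perturbed observations. This is an illustrative assumption rather than the exact formulation used here: the function `enkf_update`, the observation error variance `obs_var` and the toy ensemble are hypothetical, and drillhole samples are assumed to observe block values directly.

```python
import numpy as np


def enkf_update(ensemble, obs_idx, obs_values, obs_var, rng):
    """Update an ensemble of simulations with direct observations.

    ensemble   : (n_blocks, n_realisations) simulated block values
    obs_idx    : indices of the blocks intersected by the new drillhole
    obs_values : sampled values at those blocks
    obs_var    : assumed observation error variance
    """
    n_real = ensemble.shape[1]
    anomalies = ensemble - ensemble.mean(axis=1, keepdims=True)
    predicted = ensemble[obs_idx, :]          # simulated values at the drillhole
    pred_anom = anomalies[obs_idx, :]

    # Sample covariances estimated from the ensemble itself.
    cross_cov = anomalies @ pred_anom.T / (n_real - 1)
    innovation_cov = pred_anom @ pred_anom.T / (n_real - 1)
    innovation_cov = innovation_cov + obs_var * np.eye(len(obs_idx))

    # Kalman gain K = C_xy (C_yy + R)^-1, computed with a linear solve.
    gain = np.linalg.solve(innovation_cov, cross_cov.T).T

    # Each realisation is compared with its own perturbed copy of the data.
    noise = rng.normal(0.0, np.sqrt(obs_var), size=predicted.shape)
    perturbed = obs_values[:, None] + noise
    return ensemble + gain @ (perturbed - predicted)


rng = np.random.default_rng(42)
prior = rng.normal(0.5, 0.2, size=(6, 10))   # 6 blocks, 10 realisations
obs_idx = np.array([1, 4])
obs_values = np.array([0.9, 0.3])
posterior = enkf_update(prior, obs_idx, obs_values, obs_var=1e-6, rng=rng)
```

Because the gain is built from ensemble covariances, blocks that are correlated with the drilled blocks are also adjusted, without re-simulating the whole deposit.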

The EnKF framework provides an efficient process for updating a set of simulations

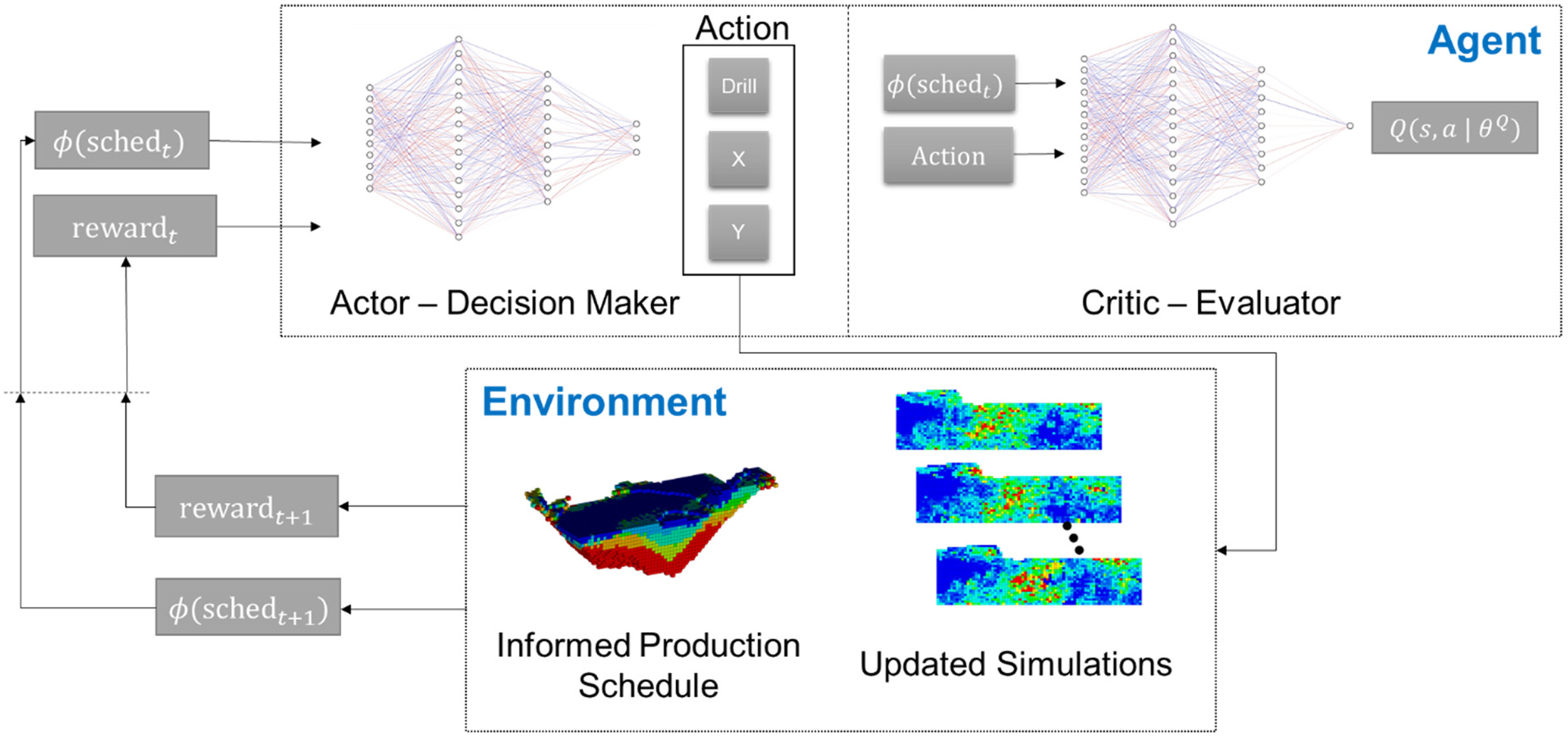

Actor-critic reinforcement learning

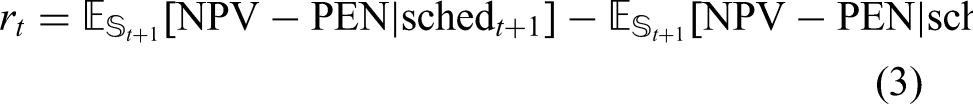

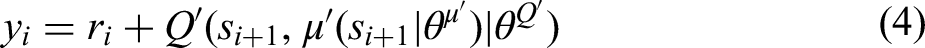

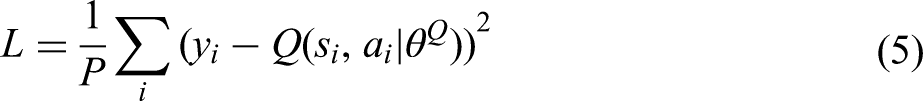

An off-policy actor-critic reinforcement learning agent is applied to optimise infill drilling. The actor-critic architecture is based on the policy gradient theorem (Peters et al., 2005; Sutton et al., 1999). During each timestep t the agent receives an observation from the mining complex

Actor-critic reinforcement learning framework for infill drillhole selection.

The actor-critic reinforcement learning algorithm uses an agent composed of two components. The actor learns a parametrised policy that maps the current state of the mining complex

Each episode of learning is a sequence of states, actions and rewards that end in a terminal state. In the mining complex, the actor decides which locations to infill drill based on the input features and proceeds until the terminal state is reached. The terminal state can occur when the agent decides to no longer continue drilling or a budgetary constraint is exceeded. A random process

1. An action
2. The agent selects the nearest drillhole location to the output coordinates given by the agent's action
3. After drilling, a reward

Note that equation (3) accounts for the most up-to-date information available (

4. Each transition in the environment is stored in a replay buffer
5. The critic and policy networks are updated by minimising the loss L and using the sampled policy gradient
6. Lastly, the target networks are then updated using the updated policy and critic network parameters:

The process is repeated until the agent converges or the maximum number of trials is reached. PyTorch is used for training the neural network (Paszke et al., 2019). A flowchart for the method can be found in Appendix B.
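The step in which a continuous action is mapped to the nearest available drillhole location can be sketched as follows. The function names, the Gaussian exploration noise, the coordinate bounds and the candidate collar coordinates are hypothetical choices for illustration; the random process used for exploration in this work is not specified here.

```python
import numpy as np


def noisy_action(actor_output, noise_std, low, high, rng):
    """Add exploration noise to the actor's coordinates and keep them in bounds."""
    noise = rng.normal(0.0, noise_std, size=actor_output.shape)
    return np.clip(actor_output + noise, low, high)


def nearest_drillhole(action_xy, candidate_xy):
    """Return the index and coordinates of the candidate collar closest to the action."""
    distances = np.linalg.norm(candidate_xy - action_xy, axis=1)
    idx = int(np.argmin(distances))
    return idx, candidate_xy[idx]


# Hypothetical candidate collar locations (easting, northing) in metres.
candidates = np.array([[0.0, 0.0], [100.0, 0.0], [100.0, 100.0]])
rng = np.random.default_rng(0)
action = noisy_action(np.array([90.0, 20.0]), noise_std=5.0, low=0.0, high=100.0, rng=rng)
index, collar = nearest_drillhole(action, candidates)
```

Snapping to the nearest candidate lets the actor work in a continuous coordinate space while the environment only ever drills at feasible locations.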

Testing the agent

After learning is completed, the trained actor (neural network policy) is used to select additional infill drilling locations. The stochastic orebody simulations of the deposit prior to additional information are provided as input along with the stochastically optimised production schedule prior to additional information. The random process noise used for action exploration is eliminated and drillholes are selected until the budget constraint is reached or the trained actor decides it is no longer valuable to continue drilling. The resulting output is the infill drilling configuration that leads to the largest improvement in NPV and/or reduction of technical risk based on the current policy. The learning process is repeated several times with different stochastic simulations of the mineral deposit for sampling drillholes to ensure stability of the results and that similar locations are found independent of the stochastic realisation that is sampled.

Case study in a copper mining complex

The framework developed for selecting additional infill drilling locations is tested in an operating copper mining complex with an open pit mine, several processing streams, stockpiles and a waste dump facility. The objective of the proposed framework is to determine a set of infill drilling locations that maximise NPV and minimise the risk of deviating from production targets given a fixed drilling budget.

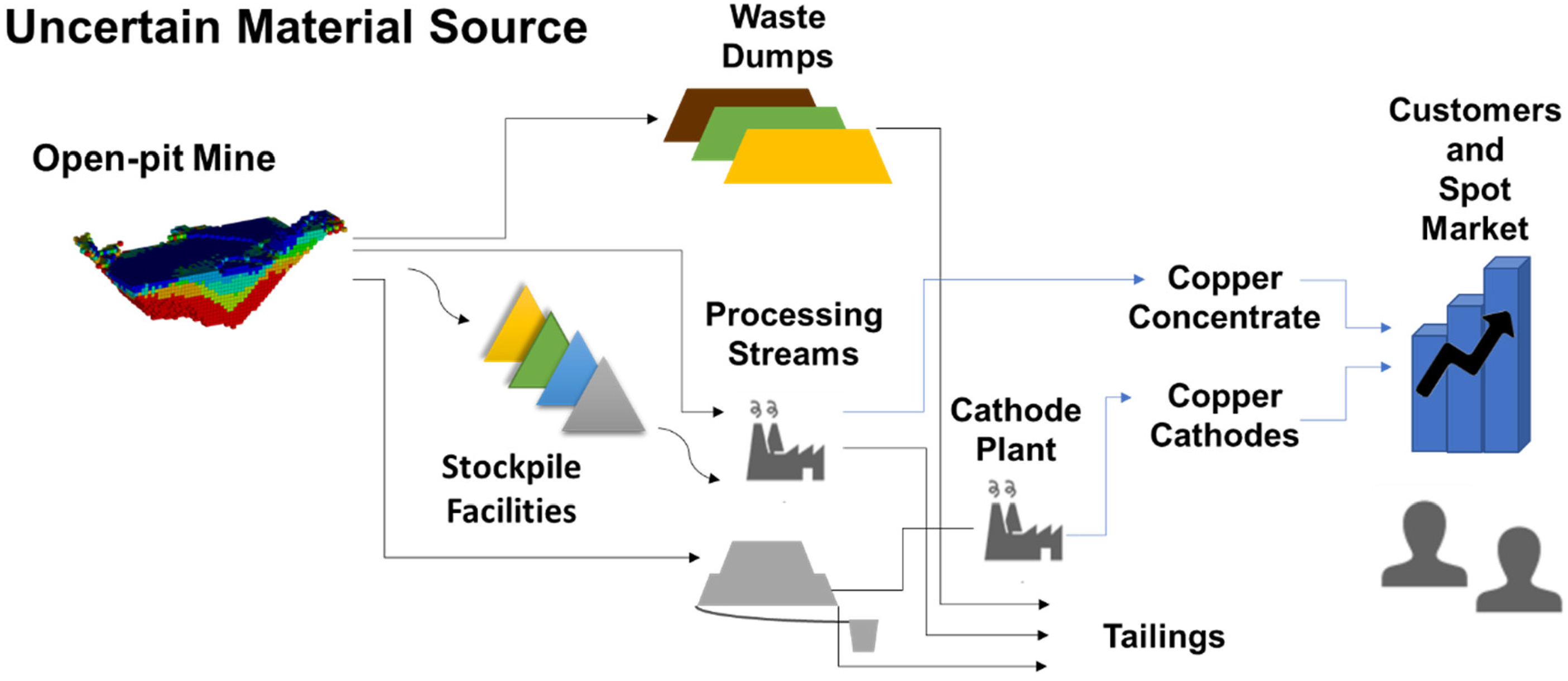

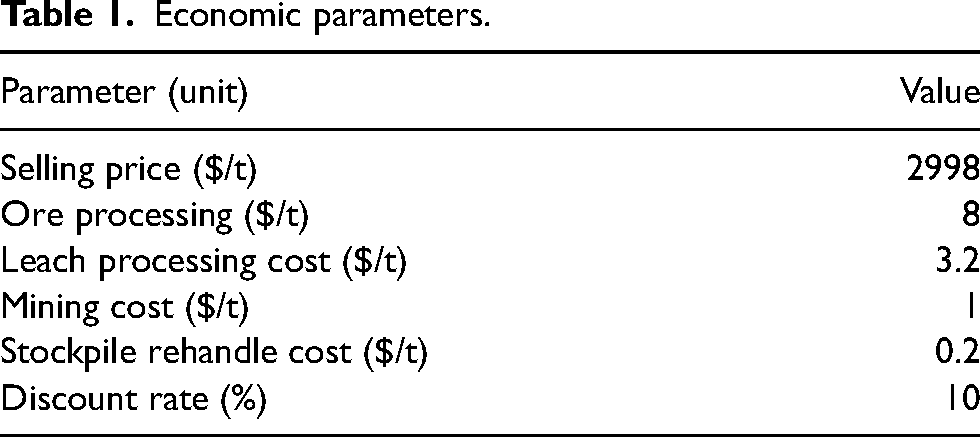

The mining complex considered is shown in Figure 4, which includes the allowable material flows from the open pit mine to the customers and the market. A set of stochastic orebody simulations is used to represent the uncertain material supply. The simulated attributes considered include total copper and soluble copper. There are two processing streams with different recoveries. The process plant produces copper concentrate and can recover sulphide and oxide materials. The heap leach destination is used to recover copper from oxide materials and produces a copper cathode product after treatment at a cathode plant. In addition, a stockpile facility is included in the mining complex to allow material to be stored and processed in later periods. The extraction sequence, destination policy and process stream decisions are optimised with the simultaneous stochastic optimisation framework and the resulting production schedule is the initial input to the infill drilling optimisation framework. Lastly, the economic parameters for optimising the mining complex are shown in Table 1 (scaled for confidentiality reasons).

The copper mining complex.

Economic parameters.

The case study considers a set of 10 stochastically simulated orebody realisations with a block size of 20 × 20 × 15

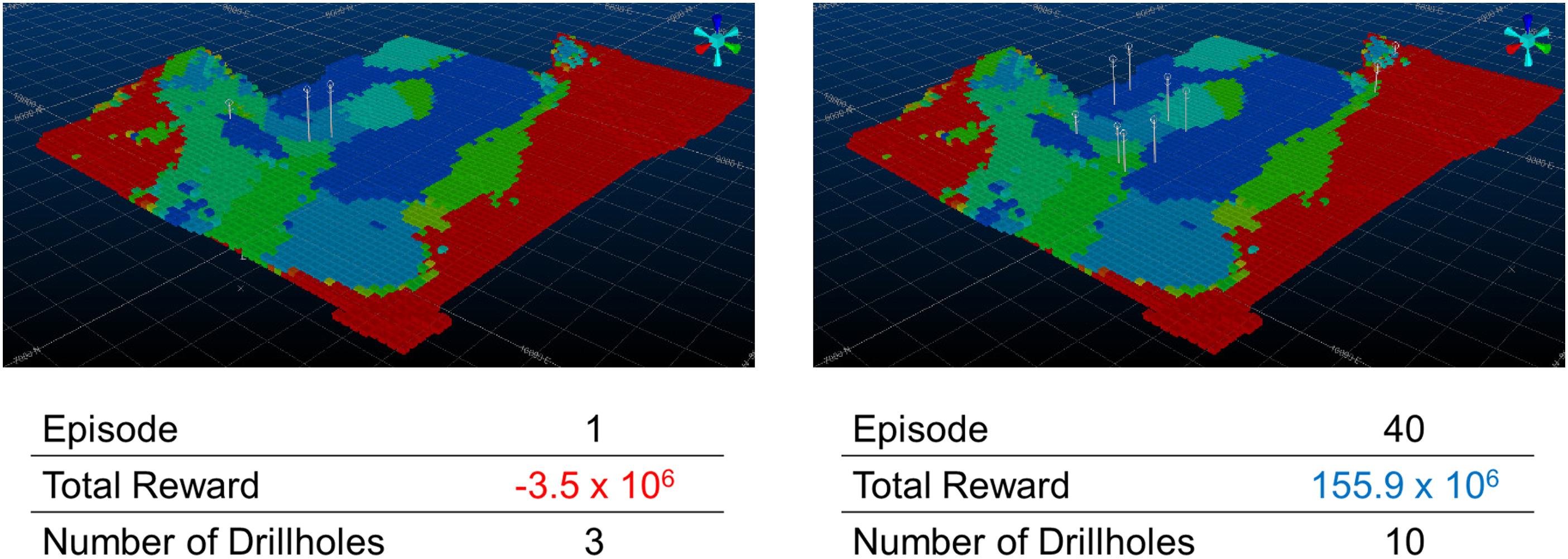

During training, the actor-critic reinforcement learning agent is left to interact with its environment to learn a set of infill drilling locations that provide the largest improvement in the long-term production schedule. Two example episodes of training the reinforcement learning approach are illustrated in Figure 5. The image shows the initial production schedule generated given the information prior to additional infill drilling, with the periods of extraction indicated by the colours of each block. The drilling locations determined by the actor during each episode of training are marked with a circular survey collar symbol. The total reward quantifies the improvement in the objective function obtained through collecting additional information. Additionally, the number of holes drilled in each episode is listed. The number of drillholes selected changes between episodes as the reinforcement learning agent also decides when to stop drilling. The cumulative reward obtained varies significantly depending on where drilling commences and the potential value added by adapting the production schedule to new information. Finally, the trained actor is used to select the number of drillholes and appropriate locations. The stochastic orebody simulations are updated using EnKF and a new production schedule is created using the simultaneous stochastic optimisation framework.

Training examples for different episodes including the number of drillholes drilled and the total cumulative reward obtained.

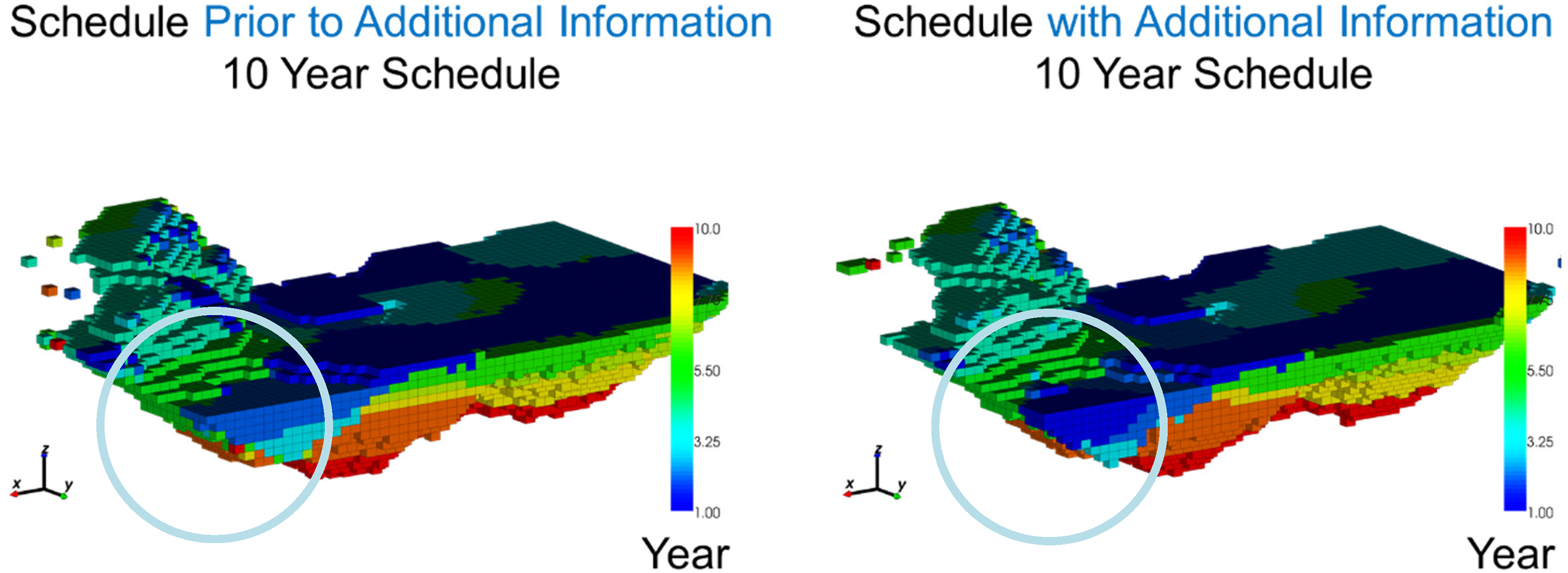

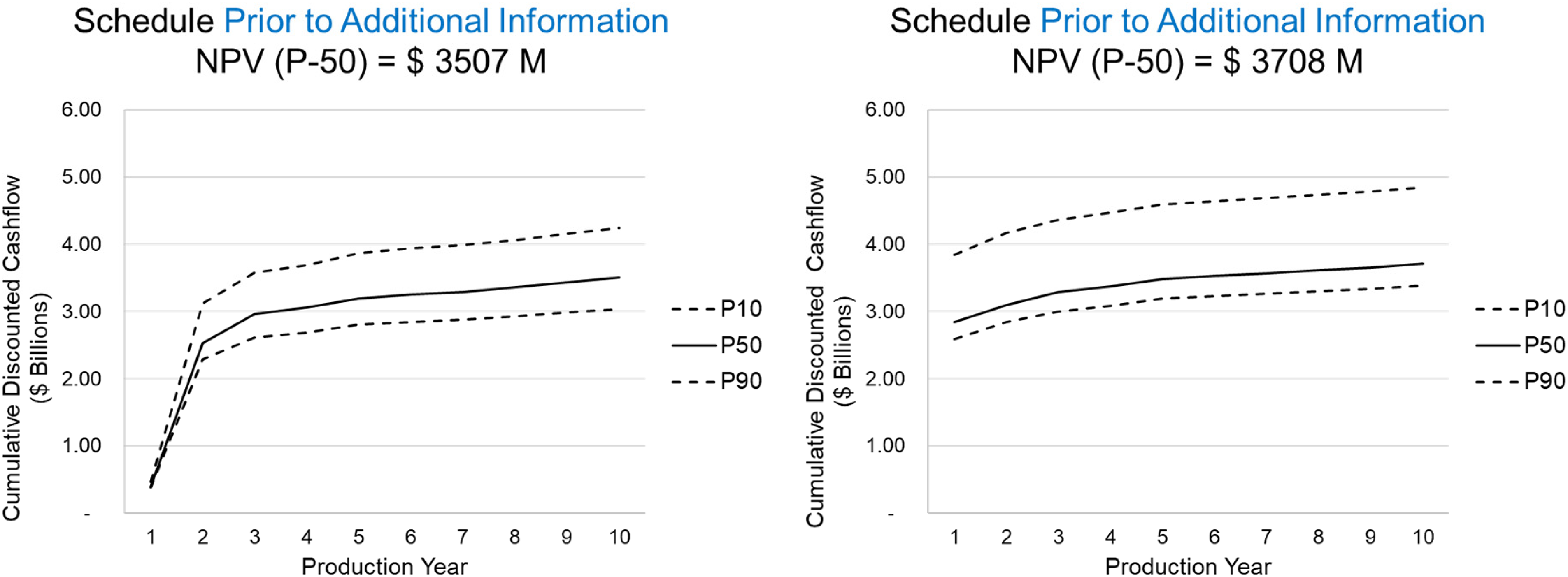

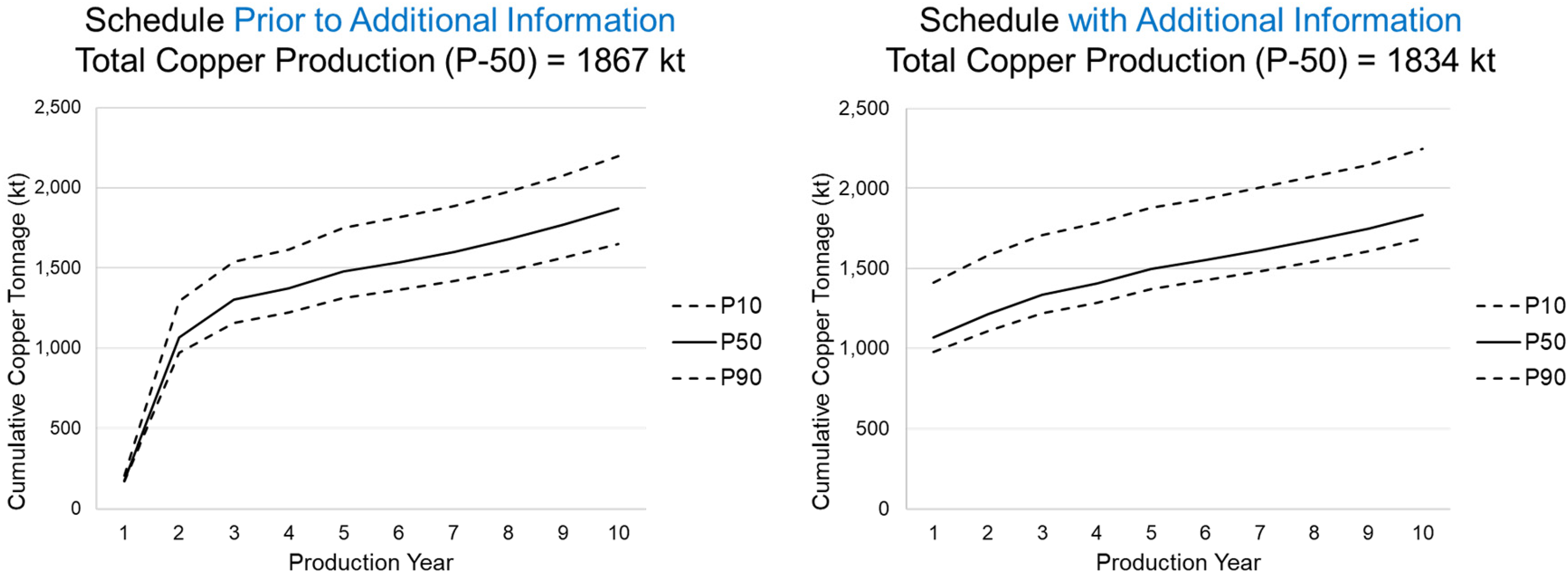

In Figure 6, the resulting production schedule prior to new information (left) is compared to the production schedule with additional information (right). The extents of the final pit are similar between the two production schedules; however, the extraction sequence over the 10-year schedule changes significantly. In the main areas of drilling, marked with a circle, the early extraction sequence decisions change due to additional drilling information by adapting to an area of lower risk to generate further value for the mining complex. As a result of extracting this area earlier in the mine life, other areas are pushed back to later periods. This is noticeable in the central part of the deposit as the simultaneous stochastic optimisation approach adapts the schedule to manage production targets and increase NPV. Figure 7 shows that the schedule that adapts to additional infill drilling information results in a 5.7% increase in the forecasted NPV. Furthermore, the additional information changes the resulting production forecasts, leading to a 33 kt decrease in recovered copper at the process plant over 10 production years, which is shown in Figure 8. This leads to a significant improvement to the long-term production schedule forecasts, which is only obtained by adapting the production schedule to potential new information that is collected via infill drilling. Mining higher grade materials earlier and with higher confidence during the first two production years helps improve the overall production cashflows, as drilling provides further confidence for mining these areas and takes advantage of the time value of money.

Production schedule: (left) prior to additional information; (right) after additional drilling.

Cumulative discounted cashflows given the schedule: (left) prior to additional information; (right) after additional drilling.

Total copper production given the schedule: (left) prior to additional information; (right) after additional drilling.
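The time value of money argument made above can be illustrated with a small worked example. The cash flows and the 10% discount rate are hypothetical and are not taken from the case study; the sketch only shows that bringing the same undiscounted cash flows forward increases NPV.

```python
def npv(cashflows, rate):
    """Discount end-of-year cash flows (years 1, 2, ...) to a present value."""
    return sum(cf / (1.0 + rate) ** year
               for year, cf in enumerate(cashflows, start=1))


rate = 0.10  # hypothetical annual discount rate

# Two hypothetical schedules with the same undiscounted total cash flow.
late_schedule = [50.0, 50.0, 100.0, 100.0]    # higher grade material mined later
early_schedule = [100.0, 100.0, 50.0, 50.0]   # higher grade material mined earlier

npv_late = npv(late_schedule, rate)
npv_early = npv(early_schedule, rate)
gain_pct = 100.0 * (npv_early - npv_late) / npv_late
```

Both schedules sum to the same undiscounted total, so the positive `gain_pct` comes entirely from the earlier timing of the larger cash flows.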

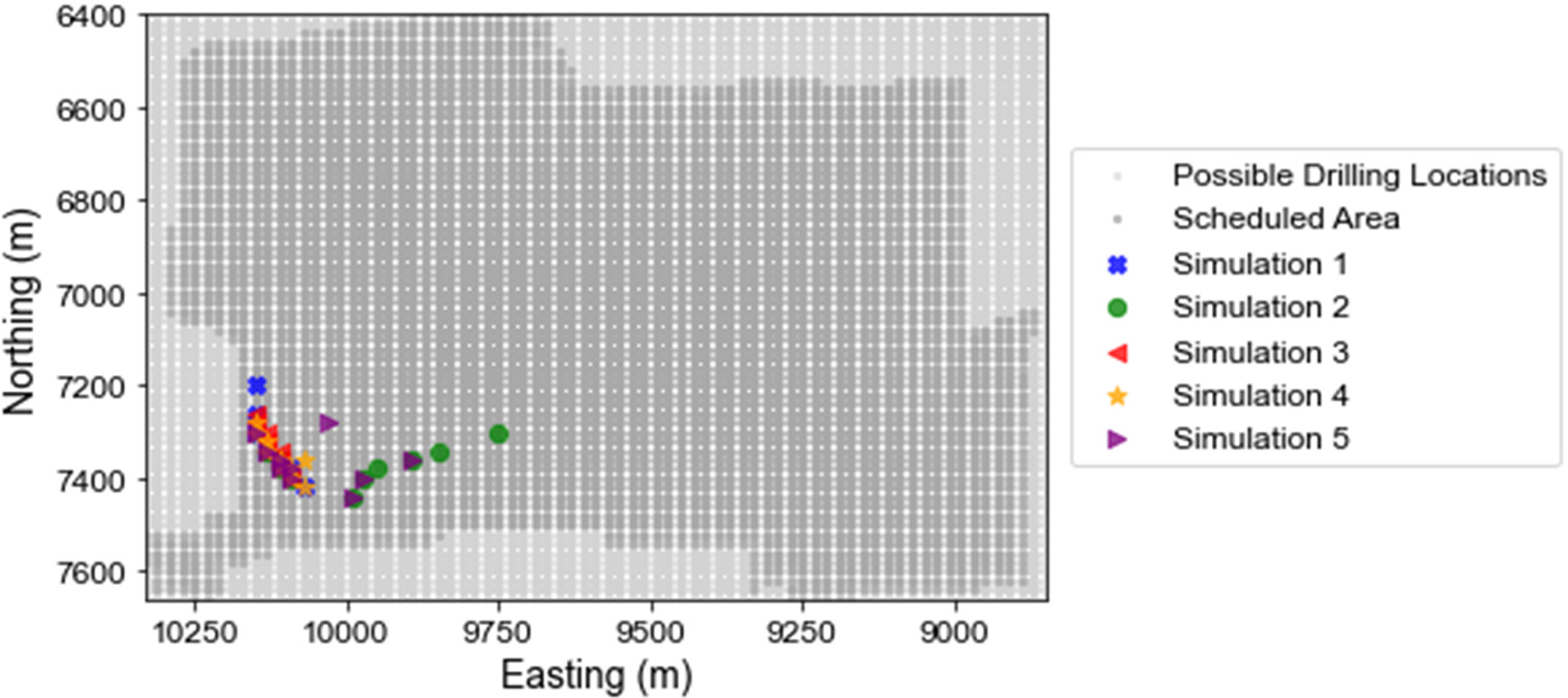

Five different randomly sampled geostatistical simulations of future infill drilling data are used to represent the potential mineral deposit. The proposed framework is run five times, with the infill drilling data retrieved from the respective simulation in each run. The repeated testing of the proposed process using different simulations to represent the mineral deposit shows that similar areas are drilled and identifies a stable area for infill drilling. The drillholes selected in each run are shown in Figure 9, where light grey points show all possible drilling locations in the mining complex and the dark grey region shows the extent of the scheduled areas in the long-term production schedule. The drillholes are highly localised in the northeast portion of the open pit mine and there is considerable overlap in the drillhole locations when using different simulations to represent the sampled infill drilling data. Furthermore, the drilling area is contained within a 250 m × 500 m area. Therefore, the drillhole selection framework identifies a drilling area in a stable way that is not affected by using different or additional realisations.

The infill drilling locations selected are shown for each run of the algorithm that use a different stochastic simulation to represent the true drillhole data. The light grey shows the possible drilling locations and the dark grey shows the scheduled areas.
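One simple way to quantify the stability described above is to measure the extent that contains all selected drillholes and the overlap of locations between runs. The sketch below is an assumption about how such a check could be done, not the analysis performed in this work; the functions, the overlap measure (Jaccard index) and the collar coordinates are hypothetical.

```python
import numpy as np


def drilling_extent(runs):
    """Width and height of the bounding box containing all selected collars."""
    all_xy = np.vstack(runs)
    return all_xy.max(axis=0) - all_xy.min(axis=0)


def shared_fraction(run_a, run_b):
    """Jaccard index of the collar locations selected in two runs."""
    set_a = {tuple(map(float, p)) for p in run_a}
    set_b = {tuple(map(float, p)) for p in run_b}
    return len(set_a & set_b) / len(set_a | set_b)


# Hypothetical collar coordinates (easting, northing) in metres for two runs.
run_1 = np.array([[0.0, 0.0], [50.0, 100.0]])
run_2 = np.array([[50.0, 100.0], [200.0, 400.0]])

extent = drilling_extent([run_1, run_2])
overlap = shared_fraction(run_1, run_2)
```

A small bounding box together with a high overlap between runs would indicate that the selected area does not depend strongly on which realisation is sampled.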

Conclusions

A new approach has been proposed to determine infill drilling locations in mining complexes that uses actor-critic reinforcement learning. Reinforcement learning guides the framework to valuable drilling locations with a new criterion that links infill drilling to its impact on long-term mine production scheduling decisions. Simultaneous stochastic optimisation is applied to quantify the expected improvements to the long-term production schedule based on the new information collected. This provides insight on where drilling should occur to understand the complexity of the mineral deposits by updating the stochastic orebody simulations that are used as inputs for long-term production planning. The proposed approach was tested in a copper mining complex, where the results demonstrate significant improvements to the forecasted NPV of the project by adapting the schedule to potential future infill drilling data. This method eliminates the need to use conversion indicators and other proxies for the production schedule value and directly considers the influence of drilling on production scheduling decisions. Actor-critic reinforcement learning is applied to optimise the infill drilling locations and takes advantage of key criteria from the mining complex to select infill drilling locations, including considerations related to the uncertainty and local variability of the mineral deposits. Future work should consider allowing the proposed approach to select drillholes with different azimuths and dips to increase the flexibility in the drilling direction. In addition, supplementary information related to the deposit and production schedule could be explored to provide additional context for the drillhole selection process and improve the reinforcement learning agent's ability to distinguish valuable locations to drill.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is funded by the Natural Sciences and Engineering Research Council of Canada (NSERC) CRD Grant 500414-16, NSERC Discovery Grant 239019, the COSMO mining industry consortium (AngloGold Ashanti, Anglo American, BHP, De Beers, IAMGOLD, Kinross Gold, Newmont, and Vale), and the Canada Research Chairs Program.

Appendix A: Simultaneous stochastic optimisation

The simultaneous stochastic optimisation framework outlined in Goodfellow and Dimitrakopoulos (2016) uses the following objective function: