Abstract

Construal-level theory (CLT) proposes that psychological distance influences the level of abstraction at which something is mentally construed: Things perceived as less probable (likelihood) or further away from the here (spatial distance), now (temporal distance), or self (social distance) are thought about more abstractly. In this international multilab study, we tested four basic hypotheses derived from core assumptions of CLT and explored potential moderators and boundary conditions of the effects. Participants (

Keywords

The mind’s ability to represent the world in concrete terms or as abstract concepts is a fundamental aspect of human cognition. This ability is central to understanding processes underlying, for instance, prejudice, judgment and decision-making, and problem-solving (Burgoon et al., 2013). Being able to predict how objects, people, and events are mentally represented is therefore essential to understanding how people interact with the world around them. Construal-level theory (CLT) is a framework developed to explain when and why the mind construes objects and events in more concrete (low-level) or abstract (high-level) terms (Trope & Liberman, 2010).

According to CLT, how an object is construed depends on how psychologically distant it is perceived to be. CLT suggests that as perceived psychological distance increases, objects and events should be represented at a higher construal level. That is, they should be represented in more abstract, simple, and decontextualized terms compared with objects and events perceived as psychologically close (Trope & Liberman, 2003). CLT proposes four types of psychological distance: temporal, spatial, social, and likelihood (or hypothetical) distance. Specifically, objects and events can be perceived as close or distant in time or space, people can be perceived as close (e.g., similar) or distant (e.g., dissimilar) to the self, and events can be perceived as close (likely/actual) or distant (unlikely/hypothetical) from the real world. Increased distance on any of these dimensions should lead to higher-level, more abstract construals (for a review, see Trope & Liberman, 2010). We refer to this as the “direct” effect of psychological distance on construal level (as opposed to indirect, downstream effects on other variables).

As an example, consider how temporal distance may influence construal level. Imagine that you are to attend a friend’s wedding in a year’s time. According to CLT, at the current point in time, you are likely to think about the event in abstract and decontextualized terms. Your representation of the wedding should be schematic, focusing on typical and core aspects, such as well-dressed people and the value of celebrating a couple’s love for each other. However, as the day approaches, your representation of the wedding should become more contextualized and specific. The day before the wedding, your thoughts may be more on details such as what to wear and how to get from your hotel to the wedding ceremony.

The above example describes a few ways in which a difference in construal level may manifest itself; specifically, that greater distance increases people’s tendency to think of actions in terms of their purpose instead of their concrete steps of implementation. The CLT literature proposes several other manifestations of higher-level construal—for example, an increased breadth with which people categorize objects and an increased tendency to focus on the whole rather than the parts. A large number of dependent measures have been developed to assess construal level and mental abstraction (for a list of commonly used measures of abstraction, see Burgoon et al., 2013).

Apart from the direct effect of psychological distance on construal level, CLT also proposes downstream consequences. These are secondary effects of psychological distance on behavior. More specifically, the theory proposes an indirect path such that level of mental construal mediates the effect of psychological distance on behavior. For example, previous studies have found that psychological distance influences performance predictions (e.g., of one’s ability to perform a task in the near or distant future; Nussbaum et al., 2006), evaluations (e.g., of an essay written by someone similar or dissimilar to oneself; Liviatan et al., 2008), and behavioral intentions (e.g., the number of hours one is willing to volunteer in the near or distant future; Eyal et al., 2009). These findings have been interpreted as the result of varying levels of mental construal. Although research on downstream consequences constitutes a large part of the CLT literature, the topic lies outside the scope of the current research. Here, we focus specifically on direct effects of psychological distance on construal level.

By now, hundreds of experiments that test predictions from CLT have been published (for a bibliometric overview, see Adler & Sarstedt, 2021). In the most comprehensive meta-analysis to date, Soderberg et al. (2015) concluded that the existing literature provided support for a medium-sized effect of psychological distance on both mental abstraction and downstream consequences. In addition to this large body of work, converging evidence for the theory has been reported in other areas of inquiry. This includes effects of social distance on person-perception, temporal effects on memory, and the relationship between power differences and social distance (Trope & Liberman, 2010). Consider, for example, the correspondence bias in person-perception research, which is the increased tendency to ignore situational information and draw inferences about an actor’s stable traits when judging others’ (vs. one’s own) behavior (Gilbert & Malone, 1995). From a CLT perspective, this is because others are construed more abstractly than the self, resulting in a greater focus on abstract, decontextualized dispositions (Trope & Liberman, 2010).

Despite the vast body of work on CLT, replication studies are rare. Independent replications are crucial to obtain accurate estimates of effect sizes, uncover potential moderators, and determine whether the effects are replicable outside the original labs (Nosek & Errington, 2020; Simons, 2014). The few extant replication attempts provide a mixed picture of the replicability of CLT findings. These studies have produced either (a) mixed results that both replicated and contradicted the original findings (Luke et al., 2021; Žeželj & Jokić, 2014), (b) nonsignificant results or results in the opposite direction of the original findings (Calderon et al., 2020; Gong & Medin, 2012; McCarthy et al., 2018), or (c) estimates of effect sizes in the expected direction but substantially smaller than the originally reported effects (Sánchez et al., 2021). Furthermore, with the exception of Calderon et al. (2020) and Sánchez et al. (2021), these replication studies have focused on downstream consequences of construal level, that is, the effect of psychological distance on behavior. Studies on direct effects are central to CLT’s assumption that construal level is the mechanism driving the influence of psychological distance on behavior.

In a recent unpublished preprint, Maier et al. (2024) reanalyzed the meta-analytic data of Soderberg et al. (2015) using novel, robust Bayesian meta-analytic techniques (Bartoš et al., 2021). This reanalysis estimated a bias-corrected effect size near zero for direct effects of psychological distance on construal level. In addition, Maier et al. found that the rate of positive results in the CLT literature far exceeds that which would be expected given the average statistical power of studies in the literature. These signs of bias in conjunction with the limited number of independent replication attempts highlight the need for powerful tests of the robustness and boundary conditions of CLT’s hypotheses.

The Present Research

In the present research, we add to the CLT literature by conducting direct and paradigmatic replications of the direct effects of four psychological distances on construal level. Using an international multilab approach, we directly replicated the following two studies: Liberman and Trope (1998, Study 1; temporal distance) and Fujita et al. (2006, Study 1; spatial distance). In addition, the experimental paradigms used in the above studies were extended to test the two remaining distances—social distance and likelihood. All four studies used the Behavior Identification Form (BIF; Vallacher & Wegner, 1989)—which is the most widely used measure of abstraction in the literature (Burgoon et al., 2013)—as the main dependent variable.

Our primary analyses examined whether the effects in the four studies were in the direction predicted by CLT. In addition, for the two direct replications, we examined whether the replication effects were consistent with the original results in terms of direction and size of the effect. Furthermore, the large scale of the project allowed us to examine potential moderators, thereby addressing a critical research gap noted by others in the field (Soderberg et al., 2015). Among other things, we examined whether the effects are contingent on the mode of data collection (online vs. in lab) and regional variations. The moderator analyses were aimed at identifying potential boundary conditions of the tested effects, which may prompt further specification of the theory and/or revision of its hypotheses.

Method

Identification of suitable studies

To be a candidate for replication for the current project, a study should have (a) experimentally manipulated one and only one form of psychological distance and (b) used a direct measure of construal level as the dependent variable. To identify suitable studies, we screened experiments included in Soderberg et al.’s (2015) meta-analysis on direct effects of psychological distance on construal level. Out of an original 134 experiments, 47 were excluded because in our view, they did not examine a direct effect of psychological distance on construal level (e.g., we did not consider ratings of the feasibility vs. desirability of an outcome as a direct effect, in line with Liberman et al., 2002). The remaining 87 experiments were screened further. For details on the screening procedure, see https://osf.io/tpf6v/. To identify additional studies, we screened all articles in a recent bibliometric article on CLT research (Adler & Sarstedt, 2021). After excluding duplicates with Soderberg et al., of the 844 articles identified in Adler and Sarstedt (2021), only six contained studies that experimentally examined the influence of psychological distance on a direct measure of construal. One of these, Calderon et al. (2020), was excluded from further screening because it was a direct replication of a prior CLT study. The remaining five articles contained 12 potentially eligible studies. We uncovered an additional seven potentially eligible experiments from three documents not included in either Soderberg et al. or Adler and Sarstedt: Danziger et al. (2012), Grinfeld et al. (2021), and Liviatan et al. (2008).

The potentially eligible experiments (

Failed validation of measure

Based on advice from researchers in the field, we pretested several direct measures of mental construal (Mac Giolla et al., 2024). These measures were developed for paper-and-pencil data collections. To validate their use in computerized contexts, we examined how the measures responded to direct manipulations of mental abstraction in which we directly asked participants to imagine events in more concrete or abstract terms. Out of the five dependent variables we attempted to validate, only one—the BIF (Vallacher & Wegner, 1989)—worked as intended. Studies using any of the other four dependent measures were excluded.

Perceptual measure

Perceptual measure refers to dependent variables such as the Navon Letters task (Navon, 1977) and the Gestalt Completion Test (Ekstrom, 1976). Studies using such measures were excluded on advice from an expert in the field (N. Liberman, personal communication, March 11, 2020).

Previous unsuccessful replication

Studies were excluded if previous attempts to replicate them had been unsuccessful.

Design issues or retracted

Studies were excluded if there were serious design issues (e.g., the experimental manipulation was confounded with other variables) or the article was retracted.

Logistical issues

Studies were excluded if they were methodologically inappropriate for a multisite project (e.g., were highly culturally specific, required extensive prescreening).

Study unpublished or unavailable

Studies were excluded if they were unpublished or unavailable.

Original effect inconsistent with theory

Studies were excluded if not all of the hypothesized effects were statistically significant (

Figure 1 shows a breakdown of the screening procedure for each of the four types of psychological distance (for the full data set of coded studies, see https://osf.io/x9w4v). For temporal distance, there were several potentially suitable studies. We opted for Liberman and Trope (1998, Study 1) because it is a seminal study in the field that is highly influential (more than 2,900 citations on Google Scholar as of December 2022). For spatial distance, we identified only one suitable study, Fujita et al. (2006, Study 1). This is also a highly influential seminal study in the field (more than 900 citations on Google Scholar as of December 2022). However, we could not identify any suitable studies for either social distance or likelihood. For this reason, we conducted paradigmatic replications for these two distances. In brief, we extended the basic design of Liberman and Trope and Fujita et al., but we replaced the temporal and spatial manipulations with social and likelihood manipulations. For details, see the study-specific protocols below.

Overview of study-selection procedure. Light-gray bars represent excluded studies.

Labs and study participants

Labs were recruited both through the efforts of the project coordinators (e.g., via a project website, Twitter, Facebook, online forums, and email lists of social-psychology networks) and through a call for labs announced by the Association for Psychological Science. The project had no financial resources to pay study participants, and participating labs were therefore free to choose the means of compensation best suited for their local sample (e.g., monetary reimbursement, course credits, voluntary participation). Type of compensation was recorded by each lab (52 labs used course credit, nine labs used monetary reimbursement, four labs used both course credits and monetary reimbursement, six labs offered other forms of compensation, and six labs gave no compensation to participants). Only individuals 18 years or older were eligible for participation. We set a deadline (January 31, 2024) for labs to confirm that they were willing and able to collect data within a designated period of time.

By the recruitment deadline, 95 labs had signed up to participate. Of these, 78 labs provided data for the analyses. A total of 12,514 participant responses were recorded. We excluded data for three broad reasons: (a) Some labs identified cases of participants completing the study more than once, and the additional responses (beyond the first) were removed (

Labs from 27 countries and regions contributed: United States (

Statistical power

In most situations, statistical power in multilab designs is more quickly accrued by increasing the number of labs rather than increasing the number of participants per lab (Westfall, 2016). To increase the number of contributing labs, we therefore required each lab to collect only a modest number of participants (

Sample sizes of the four studies ranged from

Design and procedure

Each lab received a unique link to the study, which was administered via Qualtrics survey software. For the sake of experimental control, labs were strongly encouraged to collect data in the lab and were asked to consider online data collection only if data collection in the lab proved impossible. In such cases, however, a local sample had to be used (e.g., a university participant pool, local community members). It was not permissible to use crowdsourcing platforms, such as Amazon Mechanical Turk or Prolific. In total, 53 labs (67.9% of all labs) collected data in the lab only, 22 labs (28.2% of all labs) collected data online only, and three labs (3.8% of all labs) used a combination of lab and online data collection. This resulted in the collection of data from 7,871 participants in the lab (66.8% of all participants) and 3,715 participants online (31.5%). For one lab that collected data both in lab and online, a procedural error made it impossible to reliably determine which cases were collected in which modality (

In each lab, participants were randomly assigned to one of four study protocols: temporal distance, spatial distance, social distance, or likelihood. They were then randomly assigned to one of the two experimental conditions in each study (close vs. distant). For a flowchart of the full procedure, see Figure 2. For ethical or practical reasons, some labs required minor procedural modifications (e.g., omission of recording ethnicity data), and these modifications are noted in the supplemental material (https://osf.io/ptczq).

Flowchart of the study procedure. Sample and group sizes are reported after the removal of participants with incomplete data.

Durations for the experiments were automatically recorded by the survey platform. Some durations recorded were clearly incorrect (e.g., multiple days), likely because of technical issues (e.g., the platform treating a completed survey as though it had not been submitted). Examining the raw data from responses that took more than 60 min (

General instructions

Before providing informed consent, all participants were informed about approximately how long the study would take, roughly what it would consist of, and what compensation they would receive for participating, if any. Participants were also informed that participation was voluntary; they could withdraw at any stage without any explanation needed; their responses were anonymous, insofar as answers could not be traced to any individual; and the anonymous data would be made openly available to other researchers. The exact formulation of labs’ consent forms varied because of differences in local institutional-review-board requirements. For labs’ verbatim consent forms, see OSF (https://osf.io/zywms/). Participants were required to actively check a box on the computer screen to indicate that they had understood the information and provided their consent. If participants consented, they were taken to the next page, which inquired about demographic information.

For demographics, participants were asked the following questions (answer options are provided in parentheses): age (numeric entry in years), gender (male, female, nonbinary, other), nationality (list of countries), ethnicity (free text), occupation (employed, student, other), and highest education level achieved (primary school, secondary level [high school], college/university, postgraduate). If college/university or postgraduate was selected, an additional question about primary subject area was asked. Inquiring about demographics before the experiment was required because of the experimental manipulation in the social-distance study (see study protocols below).

Participants were then randomly assigned to one of the four study protocols. The “evenly present elements” option in Qualtrics was applied to ensure that the randomization process produced approximately equal group sizes in each lab.
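The balancing idea behind this assignment step can be illustrated with a minimal sketch. It is not Qualtrics' actual algorithm, which is not described here; it assumes a simple rule in which each new participant is sent to one of the protocols that has so far received the fewest participants, with ties broken at random. The function names, the seed, and the unbalanced draw of the close versus distant condition are illustrative assumptions.

```python
import random
from collections import Counter

PROTOCOLS = ["temporal", "spatial", "social", "likelihood"]
CONDITIONS = ["close", "distant"]


def balanced_choice(options, counts, rng):
    """Pick one of the least-used options, breaking ties at random."""
    fewest = min(counts[o] for o in options)
    candidates = [o for o in options if counts[o] == fewest]
    choice = rng.choice(candidates)
    counts[choice] += 1
    return choice


def assign_participants(n, seed=0):
    """Assign n participants of one lab to a protocol and a condition.

    Assumption: protocols are balanced with the least-filled rule above,
    whereas the condition is a plain random draw, because the text does
    not state whether conditions were balanced as well.
    """
    rng = random.Random(seed)
    protocol_counts = Counter()
    assignments = []
    for _ in range(n):
        protocol = balanced_choice(PROTOCOLS, protocol_counts, rng)
        condition = rng.choice(CONDITIONS)
        assignments.append((protocol, condition))
    return assignments
```

Under this rule, the protocol group sizes within a lab can never differ by more than one participant, which matches the "approximately equal" outcome described above.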

Protocols

We kept the two direct-replication protocols—temporal distance (Liberman & Trope, 1998) and spatial distance (Fujita et al., 2006)—as similar as possible to the original studies but made some necessary adjustments. Primarily, changes concerned making the protocols appropriate for an international data collection instead of a region-specific one and switching from paper-and-pencil data collection to collecting data via an electronic questionnaire. Below, we present a brief description of the four study protocols. For the full study protocols for all four studies and a detailed description of the differences and similarities between the original and replication experiments, see OSF (https://osf.io/zywms/).

The translation of the study materials followed the procedure used by Jones et al. (2021) in a recent multilab-replication project (for the original translation procedure, see Brislin, 1970). Labs conducting the study in a language other than English were asked to coordinate the translation of the protocols to their own language, and translations were then independently back-translated to ensure accuracy (for full details on the translation procedure, see OSF https://osf.io/awzfc).

To maximize transparency, each lab was asked to make a video recording of their procedure for administering the study in the lab using a mock participant. The videos are available on OSF (https://osf.io/r89ks/).

The effect of temporal distance on the BIF

Original study

In the original study (Liberman & Trope, 1998, Study 1), participants (

Replication

We received the original study materials from the authors and followed them as closely as possible. Participants were first presented with standard instructions for filling out the BIF. As with the original study, this was closely based on the instructions for Vallacher and Wegner’s (1989) original scale. To manipulate temporal distance, participants were asked to imagine engaging in the 19 behaviors of the abridged BIF either “next year” (temporally distant) or “tomorrow” (temporally close). In line with the original instructions, each BIF item was also phrased in accordance with the experimental condition. For example, participants were asked to “Think about yourself painting a room [next year]/[tomorrow]”.

In addition, for exploratory purposes, the replication study included the six BIF items that were excluded in the original study. The original authors excluded the items because they were deemed irrelevant for their sample. Our replication study, however, used a more heterogeneous sample than the original study, and these items could provide interesting information about effects on all 25 activities. The six added items were presented separately on a subsequent page so they could not affect the responses on the 19 items included in the original study (for the 19 original items used in the primary analyses, see Appendix A).

The effect of spatial distance on the BIF

Original study

Fujita et al. (2006, Study 1) used the same basic approach as Liberman and Trope (1998, Study 1) except that spatial distance was manipulated instead of temporal distance. We obtained a copy of the original materials from the authors. In the study, participants (

Replication

Except for two necessary adjustments, the instructions in the replication were identical to the original instructions. First, adjustments were made to account for each lab’s specific location. Labs were instructed to use their home city for the close condition and a distant city in their own country for the distant condition. Furthermore, for labs in countries and regions using the metric system, the distances were expressed in kilometers rather than miles. For example, when data collection took place in the city of Gothenburg, Sweden, the instructions for the close/far conditions were “just outside of Gothenburg, which is under 5 km away/just outside of Kiruna, which is over 1,200 km away.” Second, participants were asked to “choose” rather than “circle” their preferred items on the BIF. This was because the replication was conducted on a computer rather than using paper and pencil. All other instructions were identical.

The original authors excluded 12 items from the BIF because they were deemed irrelevant to the scenario. For exploratory purposes, these 12 items were presented separately on a subsequent page so they could not affect the responses on the 13 original items that were included in the primary analyses.

The effect of social distance on the BIF

There was no suitable original study examining the effect of social distance on the BIF. Instead, we conducted a paradigmatic replication in which we adapted the design of Liberman and Trope (1998, Study 1; used in the temporal-distance replication above) to test the effect of social distance. Participants were given the same basic instructions on how to fill out the BIF as in Liberman and Trope’s (1998) study. However, instead of manipulating temporal distance, we administered a social-distance manipulation inspired by Yan et al. (2016, Experiment 3). Participants were asked to imagine a target person that was either similar (close target) or dissimilar (distant target) to themselves in terms of age, gender, educational background, and personal interests.

The description of the target was modeled on the demographic information that participants provided at the start of the study. In the socially close condition, the target’s age and gender matched that of the participant. Age was calculated by adding 2 years to the participant’s own reported age. The target’s name was drawn randomly from a list of six common first names, specific to the country of data collection, of men (for male participants) or women (for female participants) born in the 1960s (for older participants) and 1990s (for younger participants). For participants who reported being nonbinary or of other gender, the name for socially close targets was selected randomly from a list of six common gender-neutral names specific to the country of data collection.

For participants in the socially distant condition, the target’s age and gender did not match that of the participant. For participants below 40 years, 20 years were added to the reported age. For participants 40 years or older, 20 years were subtracted from the reported age. The target’s name was again drawn randomly from a list of six common first names specific to the country of data collection. However, for male participants, the target name was a common name of women born in the 1960s (for younger participants) and 1990s (for older participants). For female participants, the target name was a common name of men born in the 1960s (for younger participants) and 1990s (for older participants). For participants who reported being nonbinary or of other gender, the name was randomly chosen from all 12 male or female names for the cohort born in the 1960s (for younger participants) or 1990s (for older participants). In a pretest (

[Socially close] Hannah is a woman age 26. Hannah has an educational background that is similar to yours, and she shares several of your personal interests. In other words, Hannah is a person with whom you have a lot in common.

[Socially distant] Paul is a man age 44. Paul has an educational background that is very different from yours, and he does not share any of your personal interests. In other words, Paul is a person with whom you have little in common.
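The rules for constructing the target can be summarized in a short sketch. It is an illustration only, not the survey logic actually used: the function and field names are hypothetical, and because the text does not state the age that separates "older" from "younger" participants for the name cohorts, the sketch assumes the same 40-year cutoff that is given for the age shift in the distant condition. The sketch returns which name list a name would be drawn from rather than an actual name, because the country-specific lists are not reproduced here.

```python
from dataclasses import dataclass
from typing import Optional

# Stated in the text for the distant-condition age shift;
# reused for the name cohorts as an assumption.
AGE_CUTOFF = 40


@dataclass
class Target:
    age: int
    name_pool: str              # "female", "male", "neutral", or "male_or_female"
    name_cohort: Optional[str]  # "1960s", "1990s", or None for the neutral list


def build_target(age, gender, condition):
    """Return the target's age and the name list to draw from."""
    older = age >= AGE_CUTOFF
    binary = gender in ("male", "female")

    if condition == "close":
        target_age = age + 2
        if binary:
            # Same gender, names typical of the participant's own generation.
            return Target(target_age, gender, "1960s" if older else "1990s")
        # Nonbinary/other: list of gender-neutral names (no cohort stated).
        return Target(target_age, "neutral", None)

    if condition == "distant":
        target_age = age - 20 if older else age + 20
        cohort = "1990s" if older else "1960s"
        if binary:
            # Other gender, names typical of the other generation.
            pool = "female" if gender == "male" else "male"
        else:
            # Nonbinary/other: all 12 male or female names of that cohort.
            pool = "male_or_female"
        return Target(target_age, pool, cohort)

    raise ValueError("condition must be 'close' or 'distant'")
```

For a hypothetical 24-year-old female participant, the sketch yields a 26-year-old female target in the close condition and a 44-year-old male target with a 1960s name in the distant condition, which would be consistent with the Hannah and Paul descriptions.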

When filling out the BIF, participants were asked to imagine that the target was performing the activities. Using the socially close example above, the instructions would read, “For each behavior in the list, you will be asked to imagine Hannah performing them.” Again, each BIF item was phrased in accordance with the experimental condition. For example, participants were asked to “Think about Hannah locking a door.” For this study, participants filled out the full 25-item BIF.

The effect of likelihood on the BIF

There was no suitable original study examining the effect of likelihood on the BIF. Instead, we conducted a paradigmatic replication in which we adapted the design of Fujita et al. (2006, Study 1) using an experimental manipulation of likelihood developed by Wakslak et al. (2006, Study 1). Participants were asked to imagine that they had been asked to help a friend move. Participants were then told that the friend would be moving only if they were offered a job they had applied for. In the high-likelihood condition, participants were told the friend thought there was a 95% chance they would get the job. In the low-likelihood condition, they were told that the friend thought there was a 5% chance they would get the job. Participants were then asked to fill out an abridged nine-item BIF and to imagine performing the activities in relation to the scenario of helping a friend move. The nine BIF items were selected based on pretesting. In the pretest, an online sample (

Follow-up questions

In addition to the primary outcome measures of the four studies, we also included several follow-up questions. These were presented to all participants and included a comprehension check, an assessment of participants’ mood, a dispositional measure of analytic thinking versus holistic thinking, and a manipulation check. The follow-up questions were included for exploratory analyses and robustness checks.

Comprehension check

Participants were asked a multiple-choice question about the distance-manipulation instruction that they had received at the beginning of the study. Specifically, they were asked how they had been asked to imagine the activities that they had previously rated. They were then given six response options, presented in random order, one of which was correct. The exact formulation of the question and the six response options were tailored to the specific study. For example, the question for the temporal study read, You were previously asked to imagine engaging in a series of activities (e.g., making a list, painting a room, brushing teeth). When were these events supposed to take place? (1 =

All comprehension checks are presented in Appendix B.

Self-rated mood

Several previous CLT studies have examined mood as a potential confound to the effect of psychological distance on construal level. For example, Wakslak et al. (2006, Study 1) measured participants’ mood to check that the experimental groups did not differ on this variable. This is a legitimate concern given that positive mood has been shown to positively correlate with mental abstraction (Fredrickson & Branigan, 2005). To examine mood as a potential confound, self-ratings of participants’ mood were collected in all four study protocols using the Positive and Negative Affect Schedule (PANAS; Watson et al., 1988). The scale consists of 20 items measuring different affective states (e.g., interest, stress, excitement), which are rated using a 5-point scale (1 =

Tendency for analytic thinking versus holistic thinking

Participants completed the 12-item Analysis-Holism Scale (AHS; Martín-Fernández et al., 2022). The AHS measures analytic-holistic thinking style on four subdomains: causality, attitude toward contradictions, perception of change, and locus of attention. To measure participants’ locus of attention, for example, participants are asked to rate the degree to which they agree with statements such as “The whole, rather than its parts, should be considered in order to understand a phenomenon” and “It is more important to pay attention to the whole context rather than the details.” Ratings were made on a 7-point scale (1 =

Manipulation check

At the end of the survey, participants were randomly assigned to validate the experimental manipulation from one of the three studies in which they had not taken part. Thus, participants were presented with a manipulation check for a different psychological distance than that to which they had already been exposed. This allowed us to gauge the strength of each manipulation in a way that did not rely on participants’ retrospective memory for the manipulation and was minimally affected by their previous responses.

Participants received a brief description of the type of psychological distance examined in that study and were then presented with the experimental manipulation from the close or distant condition, phrased as similarly as possible to the manipulation in the actual study. For example, the manipulation check for the close condition in the temporal study read as follows: “Events can feel closer or more distant in time from the present moment. When events feel like they will happen soon, they are said to be temporally close. In contrast, when events feel like they will not happen for a very long time, they are said to be temporally distant. To what extent does something taking place tomorrow feel temporally close or distant?”

Participants then provided their response on a 7-point scale ranging from 1 (

Assessment of statistical power

We conducted three sets of power analyses to provide an evaluation of the current replications in relation to the existing literature examining the effects of psychological distance on construal level. Previous experiments examining these effects constitute the body of evidence substantiating the conceptual hypotheses we aimed to test with the present replications. Thus, this literature provides a useful point of reference for interpreting the present results.

First, we calculated the sample sizes needed to detect the replication effects across different levels of power (1%–99%). This analysis provides information that can be applied when planning future studies. In addition, it provides an intuitive measure of how “visible” the estimated effect is (i.e., how many people would need to be observed to reliably detect the effect).
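As a rough illustration of this first analysis, the sketch below approximates the per-group sample size needed to detect a standardized mean difference in a two-group design. It is not the registered R code: it uses a normal approximation instead of the exact t-test calculation, and the function name `n_per_group` and the example effect sizes are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(d, power=0.80, alpha=0.05):
    """Approximate per-group n for a two-sided, two-group comparison.

    Normal approximation (an assumption of this sketch); exact
    t-based calculations give slightly larger values.
    """
    if d == 0:
        raise ValueError("an effect of exactly zero cannot be detected")
    if not alpha / 2 < power < 1:
        raise ValueError("power must lie between alpha/2 and 1")
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value of the two-sided test
    z_power = z.inv_cdf(power)          # quantile for the desired power
    return ceil(2 * ((z_alpha + z_power) / abs(d)) ** 2)


# Hypothetical effect sizes, not estimates from the replications.
for d in (0.5, 0.2, 0.05):
    print(d, n_per_group(d, power=0.80), n_per_group(d, power=0.95))
```

Because the required sample size scales with 1/d², halving the effect size roughly quadruples the number of participants needed, which is why very small effects are hard to “see.”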

Second, we calculated the effect sizes that the extant literature (i.e., experiments examining direct effects of psychological distance on construal level) was sensitive enough to detect at 80% power. To calculate these effect sizes, we extracted the sample sizes and number of groups in the design from each of the experiments we screened for replication (see Fig. 1). We then plotted a frequency distribution of these effect sizes with the replication summary effects, and for each replication, we calculated the percentage of experiments in the past literature that had 80% power to detect an effect at least as large as the summary effect size for the replication. This comparison provides information about how the effects estimated by the replications compare with the sensitivity of previous experiments. The median effect size for which previous experiments (

Finally, we calculated the power that previous experiments had to detect the summary effect sizes from the four present replications. These power estimates were based on the group sizes extracted from the previous studies. Assuming that the replications provide reasonable estimates of direct effects of psychological distance on mental abstraction, examining these values provides information about how well powered prior experiments have been to detect effects of interest.
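The second and third analyses are two views of the same relationship between group sizes, effect size, and power. The sketch below shows both directions under a normal approximation for a two-group comparison. The function names and the group sizes of 20 are hypothetical, and the actual analyses (conducted in R) may have used exact noncentral-t calculations.

```python
from math import sqrt
from statistics import NormalDist

_Z = NormalDist()


def detectable_d(n1, n2, power=0.80, alpha=0.05):
    """Smallest standardized mean difference detectable at the given power."""
    se = sqrt(1 / n1 + 1 / n2)  # approximate standard error of d
    return (_Z.inv_cdf(1 - alpha / 2) + _Z.inv_cdf(power)) * se


def power_for_d(d, n1, n2, alpha=0.05):
    """Approximate power of a two-sided test to detect effect size d.

    The negligible probability of rejecting in the wrong tail is ignored.
    """
    se = sqrt(1 / n1 + 1 / n2)
    return _Z.cdf(abs(d) / se - _Z.inv_cdf(1 - alpha / 2))


# Hypothetical earlier experiment with 20 participants per group.
print(round(detectable_d(20, 20), 3))       # sensitivity at 80% power
print(round(power_for_d(0.10, 20, 20), 3))  # power for a small effect
```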

Valence differences between response options for the BIF items

A reviewer of the Stage 1 Report commented that the response options for the items on the BIF may be systematically biased such that the abstract options tend to be more positively valenced than the concrete options. The reviewer pointed out that this bias might be particularly problematic for the social-distance replication, in which participants might be motivated to provide more positive descriptions for socially closer targets. To address this issue, we recruited participants on Prolific (prolific.com) to rate the valence of the response options independently (i.e., each option was rated on its own;

To assess the plausibility of these valence differences as a threat to validity, we joined our pretest data with data from a previous social-distance experiment with a design similar to ours. We requested the data for Yan et al. (2016, Experiment 3), which the authors graciously provided. Analyses with these data suggested that the valence differences (rated comparatively, measured as a standardized mean difference for each item) predicted responses on BIF items. However, controlling for the valence differences did not change the effect of social distance, and there was no significant interaction between valence and the distance manipulation. Thus, before conducting the present studies, we believed the valence differences posed little or no threat to the validity of the replications. However, as a robustness check, we conducted analyses for each replication testing whether participants’ responses to the BIF were influenced by the valence differences and whether the valence differences interacted with the distance manipulations. These analyses used the measurements from the comparative pretest ratings. For details on the pretests and the analyses of previous data, see https://osf.io/g6d5v.

Results

Project compendium

Materials for this project are available in a compendium comprising two digital repositories located on OSF (https://osf.io/ra3dp/) and GitHub (https://github.com/RabbitSnore/CLIMR). Raw data, which include all variables to reproduce the analyses and additional exploratory variables, are available on OSF. Detailed analysis reports and supplemental information are archived on OSF. These reports are available with embedded graphics on GitHub. The R code (R Core Team, 2022) for performing the main analyses was written and registered before data collection. The code was altered only to correct errors, facilitate importation and formatting of raw data, and/or troubleshoot technical issues. The most up-to-date version of the code is available on GitHub, and the version of the code repository as it existed before data collection is archived on OSF. Instructions for reproducing the analyses and data visualizations are provided in the readme file on GitHub.

Analytic strategy

For each experiment from each lab, we calculated an effect size for the primary comparison of interest. Three labs contributed both in-lab and online samples. For one of these labs, effect sizes were calculated for the in-lab and online samples separately. For another one of these labs, there were too few cases in the online data set, so one effect size was calculated for both data sources (and this lab’s data were excluded from the analysis of modality as a moderator). For the third lab, an error prevented identification of each participant’s modality, so effect sizes were calculated for the whole sample. Thus, with 78 contributing labs, we had 79 effect sizes for each experiment except for the likelihood replication, for which we had 78 effect sizes because a technical issue with one lab rendered the data from this experiment unusable. In all four experiments, the critical comparison was between psychologically close and psychologically distant conditions. Because our dependent variable (the BIF) uses sum scores, we calculated standardized mean differences (

For each experiment, we conducted a random-effects meta-analysis of the effect sizes from each contributing lab. We used the

In addition, we report the number of participants that would be required to achieve 80% and 95% power to detect the estimated effect for each replication, assuming a two-group experimental design. We report the percentage of previous experiments that had the sensitivity to detect the estimated effect of each replication at 80% power or higher. We also report the median statistical power for each replication effect size for sample sizes from previous experiments.

For each replication experiment, we also provide an estimate of the average effect of the manipulation on the manipulation check. This estimate and its corresponding 95% CI are derived from a random-effects meta-analysis.
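To make the pooling step concrete, the sketch below computes a standardized mean difference and its approximate sampling variance for one lab, then combines lab-level estimates in a random-effects model. The DerSimonian-Laird estimator of between-lab variance is used here purely because it has a simple closed form. That choice is an assumption of this sketch and is not necessarily the estimator used in the registered R code, and all names and numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def cohens_d(m1, s1, n1, m2, s2, n2):
    """Standardized mean difference and its approximate sampling variance."""
    pooled_sd = sqrt(((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / (n1 + n2 - 2))
    d = (m1 - m2) / pooled_sd
    var = (n1 + n2) / (n1 * n2) + d**2 / (2 * (n1 + n2))
    return d, var


def random_effects(effects, variances, level=0.95):
    """Random-effects pooling with the DerSimonian-Laird estimator (assumed)."""
    k = len(effects)
    w = [1 / v for v in variances]
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    c = sum(w) - sum(wi**2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (k - 1)) / c)  # between-lab variance, floored at 0
    w_star = [1 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = sqrt(1 / sum(w_star))
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return pooled, (pooled - z * se, pooled + z * se), tau2


# Hypothetical lab-level estimates, not data from the project.
labs = [cohens_d(13.1, 4.0, 60, 12.8, 4.2, 58),
        cohens_d(12.5, 3.8, 45, 12.6, 3.9, 47),
        cohens_d(13.4, 4.1, 70, 13.0, 4.0, 72)]
pooled, ci, tau2 = random_effects([d for d, _ in labs], [v for _, v in labs])
print(round(pooled, 3), tuple(round(b, 3) for b in ci), round(tau2, 4))
```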

Replication effects

Figure 3 displays the results for each of the four experiments. Forest plots for the four individual study protocols can be found at https://osf.io/c37dw. Figure 4 displays the power analyses conducted to contextualize the replication results in relation to the existing literature.

Replication and original effect sizes for each experiment. Individual points represent replication effect-size estimates from contributing labs. Symbols with error bars represent the meta-analytic effect size from the replications (dots) and the original effect sizes (squares), with 95% confidence intervals as error bars. For social distance and likelihood, “original” effect sizes are the meta-analytic estimates for those distances from Soderberg et al. (2015).

Evaluation of the statistical power of the existing literature. In the top left panel, each curve represents the relationship between sample size (total

Temporal distance

The temporal-distance studies—direct replications of Liberman and Trope (1998, Study 1)—yielded a meta-analytic effect of

In a two-group experiment, the meta-analytic effect size for the temporal studies would require

Across labs, the meta-analytic estimate for the effect on the manipulation check for temporal distance was

Spatial distance

The spatial-distance studies—direct replications of Fujita et al. (2006, Study 1)—yielded a meta-analytic effect of

In a two-group experiment, the meta-analytic effect size for the spatial studies would require

Across labs, the meta-analytic estimate for the effect on the manipulation check for spatial distance was

Social distance

The social-distance studies yielded a meta-analytic effect of

In a two-group experiment, the meta-analytic effect size for the social studies would require

Across labs, the meta-analytic estimate for the effect on the manipulation check for social distance was

Likelihood

The likelihood studies yielded a meta-analytic effect of

In a two-group experiment, the meta-analytic effect size for the likelihood studies would require

Across labs, the meta-analytic estimate for the effect on the manipulation check for likelihood was

Robustness check: comprehension-check failures

For each experiment, we asked questions to check whether participants understood the stimuli. As a robustness check, we excluded observations for which participants failed to respond correctly. Across all studies, 2,044 (17.4%) people out of 11,775 failed the comprehension check. For in-lab data collections, 1,276 (16.2%) out of 7,886 people failed the comprehension check. For online data collections, 768 (19.7%) out of 3,889 people failed the comprehension check.

In the temporal replications, 632 (21.5%) out of 2,941 participants failed the comprehension check. Excluding comprehension-check failures, the temporal-distance studies yielded a meta-analytic effect of similar magnitude, but the CIs now included zero,

Excluding comprehension-check failures had little influence on the effect-size estimates for the spatial, social, and likelihood replications. Analyses with these data excluded are available in the supplemental materials (https://osf.io/z5axm).

Robustness check: valence differences in response options for BIF items

To examine the potential influence on the results of the valence differences in the response options for the items on the BIF, we fit a series of mixed-effects logistic-regression models for each experiment. The first model predicted responses on BIF items (0 = concrete, 1 = abstract) using the distance manipulation as a fixed effect, with random intercepts for each participant nested in each lab and random intercepts for each item. The second model added the standardized mean difference (

In a model including the valence differences, the coefficient for the distance manipulation is interpretable as the estimated effect (in log odds scale) at the average value of the response-option valence differences. In a model including the interaction term, the coefficient for the distance manipulation is interpretable as the estimated effect when the valence difference is zero. The valence-difference coefficient is interpretable as the extent to which participants preferred to select the abstract option because that option was more positively valenced. The interaction term is interpretable as the extent to which the manipulation’s effect is amplified (if positive) or mitigated (if negative) as the valence difference increases.
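The interpretation of these coefficients can be illustrated with a small sketch of the fixed-effects part of the interaction model. Random intercepts are omitted, and the coefficient values below are hypothetical placeholders, not estimates from the replications.

```python
from math import exp


def prob_abstract(distant, valence_diff, b0, b_dist, b_val, b_int):
    """Predicted probability of choosing the abstract option (fixed part only)."""
    log_odds = (b0 + b_dist * distant + b_val * valence_diff
                + b_int * distant * valence_diff)
    return 1 / (1 + exp(-log_odds))


def distance_effect(valence_diff, b_dist, b_int):
    """Effect of the manipulation (log odds) at a given valence difference."""
    return b_dist + b_int * valence_diff


# Hypothetical coefficients for illustration only.
b0, b_dist, b_val, b_int = 0.20, 0.05, 0.30, -0.10

print(distance_effect(0.0, b_dist, b_int))  # equals b_dist when valence difference is 0
print(distance_effect(0.5, b_dist, b_int))  # shrinks because b_int is negative
print(prob_abstract(1, 0.5, b0, b_dist, b_val, b_int))
```

With a negative interaction coefficient, the effect of the manipulation shrinks as the abstract option becomes relatively more positive, which corresponds to the “mitigated” case described above.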

Figure 5 displays the predicted probability of selecting the more abstract BIF response option, as a function of valence differences, for each experiment. These predicted probabilities were calculated from the retained models (see below).

Predicted probability of selecting the abstract option as a function of valence differences, by experiment. Predicted probabilities at observed valence-difference values (marked with points) are connected with interpolation lines.

Temporal distance

For the temporal-distance replications, likelihood-ratio tests indicated that adding valence differences to the model offered significant improvement to the model, χ2(1) = 16.79,

To summarize, participants tended to select the more abstract option more frequently when it was more positive, and when the abstract option was more positive, the effect of the temporal-distance manipulation was mitigated. When there was no difference in valence between the response options, the lower bound of the 95% CI for the estimated effect of the temporal-distance manipulation excluded zero. Converting from log odds to

Spatial distance

For the spatial-distance replications, likelihood-ratio tests indicated that adding valence differences to the model offered significant improvement to the model, χ2(1) = 9.21,

To summarize, participants tended to select the more abstract option more frequently when it was more positive. At the average level of difference in valence between the response options (

Social distance

For the social-distance replications, likelihood-ratio tests indicated that adding valence differences to the model offered significant improvement to the model, χ2(1) = 16.16,

To summarize, participants tended to select the more abstract option more frequently when it was more positive, and when the abstract option was more positive, the effect of the social-distance manipulation was more negative. In other words, when the target person was socially close, participants selected the abstract option more frequently when that option was more positive. When there was no difference in valence between the response options, the lower bound of the 95% CI for the estimated effect of the social-distance manipulation did not exclude zero. Converting from log odds to

Likelihood

For the likelihood replications, likelihood-ratio tests indicated that adding valence differences to the model offered significant improvement to the model, χ2(1) = 14.26,

To summarize, participants tended to select the more abstract option more frequently when it was more positive. At the average level of difference in valence between the response options (

Exploratory and post hoc analyses

The following analyses were not preregistered. Unless otherwise specified, exploratory analyses used the full sample, including data from participants who failed the comprehension check.

Country and language differences

The contributing labs originated from 27 countries and regions and used 15 languages, so it is worthwhile to investigate whether the effect sizes varied across these factors. We conducted exploratory analyses using country and language as random effects. However, we found no evidence that country or language influenced the effect size of any of the studies. In the interest of space, these results are presented in the supplemental material (https://osf.io/8djym). Given that there was no evidence of significant heterogeneity for any of the effects, this lack of influence by country and language is unsurprising.

Differences across modality (in lab vs. online)

It is possible that participating in a physical-laboratory setting produces different effect sizes compared with participating online. We compared the effect sizes from in-lab and online data collections with a moderation analysis. Effect sizes from two labs were excluded because they switched from in lab to online during data collection. There was no evidence that the effects significantly differed across modalities for any of the four experiments. These results are presented in more detail in the supplemental material (https://osf.io/7rd8b).

Robustness check: location check for spatial-distance replication

The spatial-distance study materials assumed that participants were in a specific city, but online data collections do not stop participation from other locations. In the materials for online data collections, we included a question asking if the participant was in the correct city. Of the 903 participants for whom we had data for this question, 101 (11.2%) reported being in the incorrect location. To assess whether the results differ when excluding data from people reporting being in the incorrect location, we repeated the main analysis, the comprehension-check robustness check, and the modality moderation with these participants removed. Three labs that collected data at least partially online were missing the location-check question because of technical or procedural errors. The effect sizes of these labs were excluded from this analysis. These additional analyses produced results that were nearly identical to the main results. These results are reported in detail in the supplemental material (https://osf.io/bc8pd).

Cause size and effect size

There was evidence of significant heterogeneity in the manipulation checks across labs for all four studies (see https://osf.io/x2bh7). If the strength of the manipulation (i.e., the “cause size”; Abelson, 1995; Ejelöv & Luke, 2020) varies, it is plausible that the effect size would positively correlate with manipulation strength such that the effect is present or stronger only when the manipulation is stronger. To test this possibility, we fit metaregression models predicting the effect sizes from the cause sizes, and we found no evidence of a significant relationship between cause size and effect size. The results of these analyses are presented in the supplemental material (https://osf.io/ugvtp).
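The logic of this analysis can be sketched as an inverse-variance-weighted regression of lab-level effect sizes on lab-level cause sizes. The version below is a simplified fixed-effect metaregression with no between-lab variance component, which is an assumption made for brevity. The models reported in the supplemental material may be specified differently, and the data shown are hypothetical.

```python
from math import sqrt


def weighted_metaregression(cause_sizes, effect_sizes, variances):
    """Inverse-variance-weighted regression of effect size on cause size.

    Fixed-effect simplification (assumed): sampling variances are treated
    as known and no residual heterogeneity is estimated.
    """
    w = [1 / v for v in variances]
    sw = sum(w)
    x_bar = sum(wi * x for wi, x in zip(w, cause_sizes)) / sw
    y_bar = sum(wi * y for wi, y in zip(w, effect_sizes)) / sw
    sxx = sum(wi * (x - x_bar) ** 2 for wi, x in zip(w, cause_sizes))
    sxy = sum(wi * (x - x_bar) * (y - y_bar)
              for wi, x, y in zip(w, cause_sizes, effect_sizes))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    se_slope = sqrt(1 / sxx)
    return intercept, slope, se_slope


# Hypothetical lab-level values, not data from the project.
cause = [1.2, 1.8, 2.4, 0.9]
effect = [0.02, 0.05, 0.01, 0.04]
var = [0.020, 0.030, 0.025, 0.040]
intercept, slope, se = weighted_metaregression(cause, effect, var)
print(round(slope, 4), round(slope - 1.96 * se, 4), round(slope + 1.96 * se, 4))
```

A slope whose interval comfortably includes zero, as in this made-up example, would correspond to finding no evidence that stronger manipulations produce larger effects.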

Item-level effects

It is possible that effect sizes vary across the different items of the BIF. To investigate this possibility, for each study, we calculated effect sizes for each BIF item for each lab and synthesized them in a mixed-effects meta-analytic model, accounting for each lab as a random effect and treating the items as a categorical moderator. The effect sizes for each item estimated by these models are presented in Figure 6, and the results are reported in detail in the supplemental materials (https://osf.io/tuc7e). All the CIs for the item-level effects for the spatial-distance and likelihood studies included zero. In the social-distance study, the CIs for 15 of the 25 items fell entirely below zero, consistent with the overall (negative) effect, and one item’s CI excluded zero in the positive direction. Note that these effect sizes do not account for the valence differences in the response options. In the temporal-distance study, the CIs for five of the 19 items excluded zero in a direction consistent with the overall effect, and the CIs for five items excluded zero in the opposite direction.

Item-level effects on the Behavior Identification Form. Error bars represent 95% confidence intervals.

Additional exploratory analyses

We conducted several exploratory analyses in addition to those described above. These include (but are not limited to) an examination of the potential moderating effects of positive and negative affect (measured by the PANAS), scores on the AHS, the physical distance between the cities used in the materials for the spatial-distance experiment, the passage of time across the data-collection period, and the amount of time taken by participants to complete the study. These analyses are documented in the supplemental material (https://github.com/RabbitSnore/CLIMR/).

Discussion

In the current multilab study, we tested the central tenet of CLT: that psychologically distant events are mentally construed more abstractly than psychologically near events. We tested this hypothesis by varying temporal, spatial, and social distance and the likelihood of events. Temporal distance and spatial distance were examined by direct replications of previously published studies, and social distance and likelihood were examined by paradigmatic replications. The present studies were selected and designed largely for their relevance to the fundamental hypotheses of CLT (e.g., using direct manipulations of psychological distance) so that their results would be theoretically informative (see e.g., Nosek & Errington, 2020).

Overall, results showed limited support for the predictions. According to our preregistered criteria, the replication effect for temporal distance was consistent in direction but inconsistent in magnitude with the original effect reported by Liberman and Trope (1998, Study 1): The main analysis revealed an effect in the predicted direction with a CI that excluded zero, but the observed effect (

Our planned power analyses showed that the studies in the previous literature overall had virtually no statistical power to detect the effects implied by the replication studies except for the social-distance effect size (which was in the opposite direction to the prediction). In addition, the median effect size that previous CLT experiments had 80% power to detect was 8.5 times larger than the effect we observed for temporal distance (

Limitations

The current replication studies relied solely on the BIF (Vallacher & Wegner, 1989) as the outcome measure. The interpretation of the replication results is thus contingent on the suitability of the BIF as a measure of mental abstraction. In the preparation of the current replication studies, the lead authors (S. Calderon, E. Mac Giolla, K. Ask, & T. J. Luke) conducted extensive pretesting showing that the BIF indeed is highly sensitive to direct manipulations of mental abstraction (

We also note that a confound is built into several of the BIF items such that people perceive the abstract (vs. concrete) action descriptions more positively (https://osf.io/g6d5v/). Our robustness analyses showed that although the effects for temporal distance, spatial distance, and likelihood remained relatively stable, the observed effect for social distance (opposite to the predicted direction) was eliminated entirely when controlling for response-option valence. Thus, this effect was completely accounted for by the fact that when presented with a socially close (vs. distant) target, participants tended to identify that person’s actions using more positive descriptions. This finding highlights the need to develop valence-neutral measures of mental abstraction. In addition, our exploratory analyses revealed that the replication effect for temporal distance was present in only five of the 19 BIF items included in the study, which calls for further examination of the generality of the effect. Newer measures may address some of the concerns with the BIF. For instance, recent research suggests that using a modified version of the BIF as the dependent variable may produce larger and more reliable effects of temporal distance on abstraction (Nguyen et al., 2023).

A second potential limitation is that approximately 17% of our participants failed the comprehension check. This rate varied little between online and in-lab administrations of the studies. Because CLT research has not typically reported comprehension checks, we cannot know whether a 17% failure rate is representative of the field. Mitigating this issue, we found that results were largely unchanged when excluding participants who failed the check. One exception is that the CI for the temporal-distance effect size no longer excluded zero when excluding participants who failed the comprehension check. In absolute terms, however, the point estimate and confidence bounds changed only a small amount.

Constraints on generality

The contributing labs represent a diverse set of geographical locations, languages, and cultures. Thus, we do not consider a lack of diversity to pose a serious threat to the generality of our conclusions, although representation from Africa and South America is regrettably lacking. In addition, the low amount of heterogeneity associated with the replication effects, despite the large variability in many lab-specific parameters, increases our confidence in the generalizability of the reported findings. That being said, the sample of participants consisted of mostly women, a limitation shared with much of CLT research (Soderberg et al., 2015).

Conclusion

The current research presents strong evidence of weak or nonexistent relationships between the four forms of psychological distance and mental abstraction as operationalized in the current studies. CLT holds that the relationship between psychological distance and mental abstraction constitutes a fundamental and universal mechanism of human cognition (see e.g., Liberman & Trope, 2014; Trope & Liberman, 2010). Given sensitive measures, strong manipulations, and high statistical precision, one would expect a fundamental process of human cognition, previously observed in sample sizes a fraction as large as the current ones, to produce effects greater than

The current findings also raise a question with important applied implications: How can the direct effects of psychological distance on mental abstraction, estimated here to be very small at best, account for the large downstream consequences that have been documented in the existing literature (Soderberg et al., 2015)? We encourage researchers to consider whether alternative theoretical or methodological explanations that do not require CLT as an explanatory framework can provide plausible accounts of such findings. Finally, systematic validation is necessary to rule out the possibility that the current findings are simply due to a lack of adequate methods for manipulating and measuring the constructs of interest. We anticipate and hope that the current research will inspire theory development, methodological refinement, and a renewed focus on the practical applicability of CLT.

Supplemental Material

sj-docx-1-amp-10.1177_25152459251401177 – Supplemental material for Effects of Psychological Distance on Mental Abstraction: A Registered Report of Four Tests of Construal-Level Theory

Supplemental material, sj-docx-1-amp-10.1177_25152459251401177 for Effects of Psychological Distance on Mental Abstraction: A Registered Report of Four Tests of Construal-Level Theory by Sofia Calderon, Erik Mac Giolla, Karl Ask, Susanne Jana Adler, Jens Agerström, Burcu Akpınar, Nihan Albayrak, Francesca Romana Alparone, Shahrazad Amin, Antonio Aquino, Melissa Bachet, Baisile Baisile, Karin M. Bausenhart, Magali Beylat, Olga Bialobrzeska, Eliana C. Bloomfield, Lea Boecker, Matteo Bonora, Shannon T. Brady, Jared G. Branch, Nicole E. Brandy, Kelley T. Bui, Mariela Bustos-Ortega, Amparo Caballero, Andi Cai, Katarzyna Cantarero, Stephanie A. Cárdenas, Pilar Carrera, Jung-Tzu Chang, Hsuan-Fu Chao, Andrew G. Christy, Jennifer A. Cook, Junhua Dang, Scott Danielson, William E. Davis, Cara de Boer, Elise de Groot, Jaye L. Derrick, Sarah Dittmar, Tim Döring, Céline Douilliez, Martin Egger, Yannik A. Escher, Thomas Rhys Evans, Sofia Fabiani, Gilad Feldman, Nicole Fernandez, Julia Fischer, Magdalena Formanowicz, Malte Friese, Paul T. Fuglestad, Aurore Gaboriaud, Jessica Gale, Richard Gamrát, Oliver Genschow, Omid Ghasemi, Mauro Giacomantonio, Karolin Gieseler, Hedy Greijdanus, Siobhán Mary Griffin, Doğa Gül, Gul Gunaydin, Simona Haasova, Georgios Halkias, Christopher E. Hawk, Anna Helfers, Cindy L. Hernandez, Yanine D. Hess, Petr J. Horgos, Yehor Hrymchak, Markus Huff, Ezgi Ildırım, Biljana Jokić, Yoann Julliard, Pavol Kačmár, Barbara Kaup, Hyunji Kim, Kyungmi Kim, Alan Kingstone, Kenan Koç, Lina Koppel, Anita Körner, Bibiána Kováčová Holevová, Paul Danielle Labor, Bronwyn D. Laforet, Fanny Lalot, Leonie Lamm, Sean M. Laurent, Sean T. H. Lee, Yi-Chen Lee, Edward P. Lemay, Zhicheng Lin, Yun-Kai Lin, Jia-Xin Long, David D. Loschelder, Katerina Makri, Harry Manley, Nicolò Maugeri, Randy J. McCarthy, Cillian McHugh, Katarzyna Miazek, Marina Milyavskaya, Coby Morvinski, Michaela Muchová, Sümeyye Muftareviç, Dominique Muller, Gideon Nave, Ben R. Newell, Cécile Nurra, Marc Ouellet, Asil Ali Özdoğru, Mia Pagnani, Daniele Paolini, Frank Papenmeier, Hannes M. Petrowsky, Stefan Pfattheicher, Jean C. Picado, Ryan M. Pickering, Danka Purić, Alain Quiamzade, Jonathan E. Ramsay, Tristan Nicholas Renaud, Mónica Romero-Sánchez, Robert M. Ross, Ángel Sánchez-Rodríguez, Julio Santiago, Marko Sarstedt, Luke Scally, Michele Scandola, Judith P. M. Schachtner, Simon Schindler, Andreas Segerberg, Emre Selcuk, Verónica Sevillano, Edith Shalev, Xiaoyi Shao, Steven D. Shaw, Keyi Shi, Birte Siem, Pablo Solana, Meikel Soliman, Gaye Solmazer, Fatih Sonmez, Samantha K. Stanley, Janina Steinmetz, Adam W. Stivers, Aleksandra Szymkow, Maude Tagand, Yan Zhen Tan, Hilal Terzi, Miaomiao Tian, Gustav Tinghög, Ulrich S. Tran, David F. Urschler, Daniel R. VanHorn, Daniel Västfjäll, Bruno Verschuere, Amelie Verschueren, Anna Laura Vlad, Martin Voracek, Xiaotian Wang, Deming Wang, Lara Warmelink, Adam Kah Jjin Wee, Aaron Lee Wichman, Sera Wiechert, Karl-Andrew Woltin, Hoo Keat Wong, Jiawen Xu, Zai-Fu Yao, Siu Kit Yeung, Kumar Yogeeswaran, Iris Žeželj, Qing Zhang, Rene Ziegler and Timothy J. Luke in Advances in Methods and Practices in Psychological Science

Footnotes

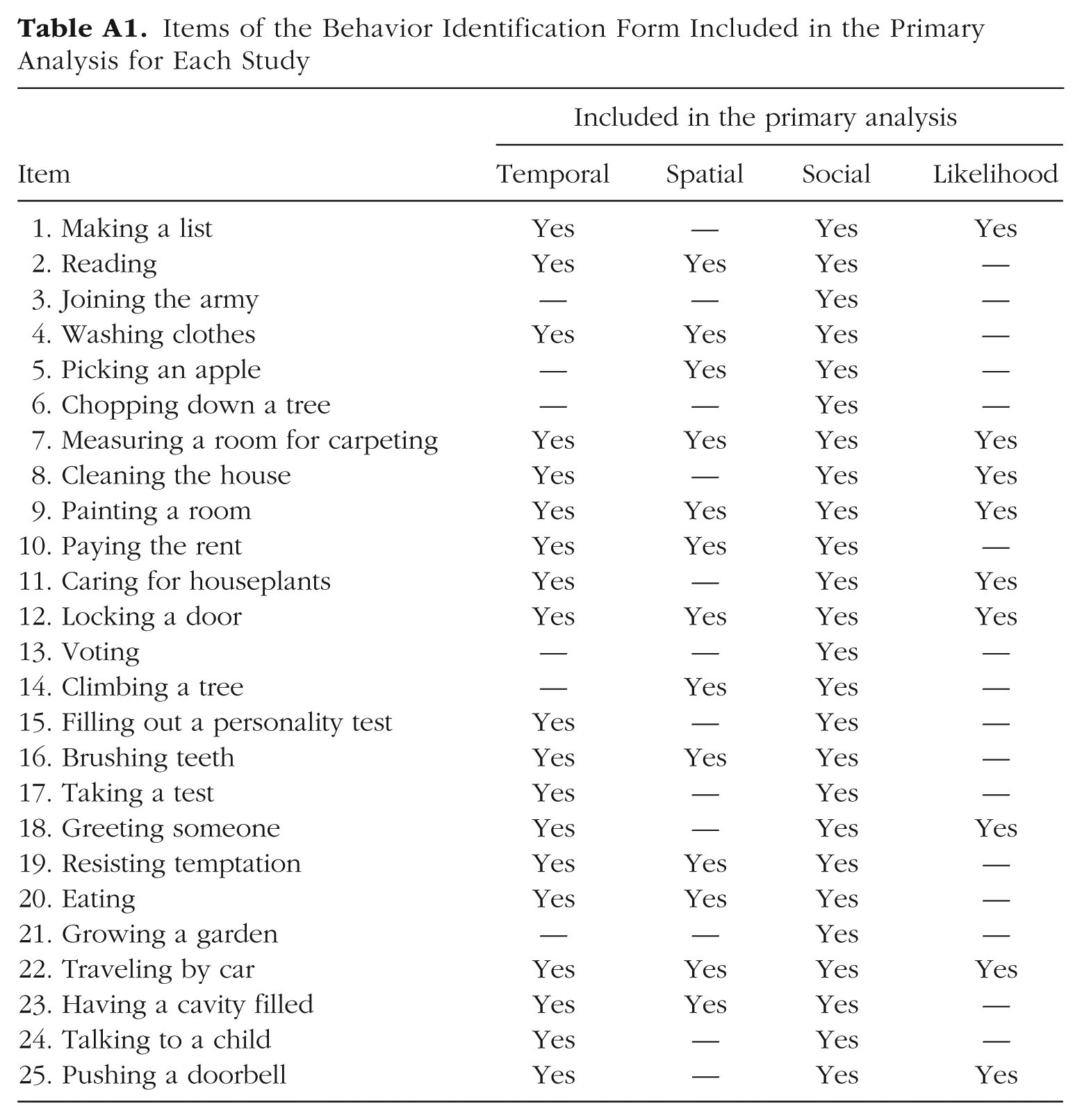

Appendix A: Items of the Behavior Identification Form

Items of the Behavior Identification Form Included in the Primary Analysis for Each Study

| Item | Temporal | Spatial | Social | Likelihood |
|---|---|---|---|---|
| 1. Making a list | Yes | — | Yes | Yes |
| 2. Reading | Yes | Yes | Yes | — |
| 3. Joining the army | — | — | Yes | — |
| 4. Washing clothes | Yes | Yes | Yes | — |
| 5. Picking an apple | — | Yes | Yes | — |
| 6. Chopping down a tree | — | — | Yes | — |
| 7. Measuring a room for carpeting | Yes | Yes | Yes | Yes |
| 8. Cleaning the house | Yes | — | Yes | Yes |
| 9. Painting a room | Yes | Yes | Yes | Yes |
| 10. Paying the rent | Yes | Yes | Yes | — |
| 11. Caring for houseplants | Yes | — | Yes | Yes |
| 12. Locking a door | Yes | Yes | Yes | Yes |
| 13. Voting | — | — | Yes | — |
| 14. Climbing a tree | — | Yes | Yes | — |
| 15. Filling out a personality test | Yes | — | Yes | — |
| 16. Brushing teeth | Yes | Yes | Yes | — |
| 17. Taking a test | Yes | — | Yes | — |
| 18. Greeting someone | Yes | — | Yes | Yes |
| 19. Resisting temptation | Yes | Yes | Yes | — |
| 20. Eating | Yes | Yes | Yes | — |
| 21. Growing a garden | — | — | Yes | — |
| 22. Traveling by car | Yes | Yes | Yes | Yes |
| 23. Having a cavity filled | Yes | Yes | Yes | — |
| 24. Talking to a child | Yes | — | Yes | — |
| 25. Pushing a doorbell | Yes | — | Yes | Yes |

Appendix B: Comprehension Checks

Response options for all comprehension checks were presented to participants in random order.

Appendix C: Manipulation Checks

Acknowledgements

We thank all original authors who have provided materials for this project.

Transparency