Abstract

Past research on cross-cultural equivalence has focused on statistical procedures and techniques for ensuring measurement equivalence in tests and surveys. With the rise of big data and machine learning (ML), particularly natural language processing, researchers have powerful tools to study culture using large-scale, organic language data from social media. However, the lack of methodological guidance on how to establish cross-language equivalence in cross-cultural studies, especially with multilingual or culturally diverse text data, poses a major challenge. To address this gap, in this article, we propose a framework to raise awareness of key equivalence challenges and offer practical guidance for reducing measurement biases when applying ML techniques to social media language data. The framework outlines five types of equivalence following the ML pipeline from data collection to evaluation: source equivalence, sample equivalence, input equivalence, psychological-ground-truth equivalence, and model-performance equivalence. We also draw parallels to survey-based research to highlight shared conceptual challenges and identify future directions to advance cross-cultural research with big data and computational-linguistic methods.

Keywords

In an era in which technological innovations are reshaping the understanding of the human mind, the emergence of big data and machine learning (ML; e.g., the use of large language models [LLMs]) stands as a pivotal milestone, with the capacity to “transform social science research” (Abdurahman et al., 2023, p. 3; Grossmann et al., 2023), especially in the field of cross-cultural psychology. The digital revolution, marked by the proliferation of online social media platforms, has unleashed an unprecedented volume of linguistic data that people post online every day. This vast, digitally mediated pool of linguistic data gives researchers an unparalleled opportunity to examine the nuances of cross-cultural psychology on a global scale.

Through the lens of social media, researchers can now explore individual characteristics and social behaviors across diverse cultures with a breadth and depth that were not previously feasible. These new possibilities also raise novel issues that must be considered when comparing or generalizing across cultures with linguistic data drawn from social media platforms. Drawing parallels and contrasts between diverse cultural contexts is not straightforward, especially with language data, which are inherently nuanced and context-dependent. This brings the field to a crucial consideration when conducting cross-cultural studies with ML approaches, and the focus of the current article: equivalence.

Key Concepts and Approaches in Cross-Cultural Psychology

To contextualize and interpret cross-cultural comparisons, researchers often anchor their analyses in established theoretical frameworks that explain meaningful cultural variation across countries. In this section, we outline foundational concepts in cross-cultural psychology that can guide the application of computational methods. One of the most widely used models is Hofstede’s cultural dimensions, originally proposed with four basic dimensions (power distance, uncertainty avoidance, individualism versus collectivism, and masculinity versus femininity) and later expanded to include long-term orientation and indulgence versus restraint (Hofstede, 1983; Hofstede et al., 2010). These dimensions have provided a foundational structure for generating hypotheses and interpreting behavioral, cognitive, and organizational differences across nations. Building on and extending Hofstede’s work, Gelfand and colleagues (2011) introduced the concept of tightness-looseness, which reflects the strength of social norms and the tolerance for deviant behavior across cultures. Tight cultures enforce strict norms and punish deviations harshly, whereas loose cultures are more permissive and open to variation (Gelfand et al., 2011).

Other notable contributions include the Schwartz theory of basic human values, which identifies universal values that differ in their prioritization across cultures (e.g., openness to change vs. conservation, self-enhancement vs. self-transcendence; S. H. Schwartz, 1992), and the Inglehart-Welzel cultural map based on the World Values Survey, which distinguishes between traditional versus secular-rational values and survival versus self-expression values, reflecting sociocultural shifts over time (Inglehart & Welzel, 2005). Finally, researchers have explored cultural differences in cognition, such as analytic versus holistic thinking and attention to context versus focal objects (e.g., Masuda & Nisbett, 2001). These dimensions are often linked to deeper sociocultural influences, such as ecological pressures, educational systems, and social practices. For a summary of key cultural dimensions across these major theoretical frameworks, see Table 1.

Summary of Key Cultural Dimensions Across Theoretical Frameworks

Note: VSM = Value Survey Module; WVS = World Values Survey.

Past empirical research has relied heavily on survey-based comparative studies to quantify how cultures differ on the dimensions listed above and to identify cross-cultural differences and similarities (e.g., Gelfand et al., 2011; Guo et al., 2012; Masuda & Nisbett, 2001). These studies typically collect parallel self-report data from participants across cultural groups, with an emphasis on ensuring measurement equivalence between scales developed in different languages (Davidov et al., 2014; Lakens et al., 2018; Van de Vijver & Leung, 2021; Van de Vijver & Tanzer, 2004). To create scales in the languages of different cultures, researchers have typically advocated the translation-back-translation technique to preserve item meaning and reduce translation errors: bilingual individuals translate scale items into the target language, and the items are then translated back into the original language for comparison and adjustment. Measurement equivalence (or measurement invariance) is evaluated to determine whether scales measure the same constructs across groups in comparable and meaningful ways (Putnick & Bornstein, 2016; Vandenberg & Lance, 2000), typically through techniques such as multigroup confirmatory factor analysis (Lakens et al., 2018; Van de Vijver & Leung, 2021). It is usually tested at increasingly restrictive levels: configural invariance (the same factor structure), metric invariance (equivalent factor loadings), scalar invariance (equivalent item intercepts, required for meaningful comparisons of latent means), and residual invariance (the most restrictive form, equivalent residual variances). Without established equivalence, comparisons across cultures risk being confounded by measurement artifacts rather than reflecting true differences.
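Because each invariance level adds constraints to the previous one, adjacent models are often compared with a chi-square difference test. The sketch below illustrates that comparison from reported fit statistics for a less constrained (e.g., configural) and a more constrained (e.g., metric) model. It is a minimal illustration under stated assumptions: the fit values are hypothetical, the helper names are our own, and the simple subtraction applies to maximum-likelihood chi-square values only (robust estimators call for a scaled difference test, such as the Satorra-Bentler correction). Chi-square differences are also sensitive to sample size, so they are usually interpreted alongside changes in approximate fit indices.

```python
import math


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability P(X > x) for a chi-square variable with integer df >= 1."""
    if df < 1:
        raise ValueError("df must be a positive integer")
    if x <= 0:
        return 1.0
    half = x / 2
    # Closed-form base cases: df = 1 uses the complementary error function, df = 2 is exponential.
    if df % 2 == 1:
        q, k = math.erfc(math.sqrt(half)), 1
    else:
        q, k = math.exp(-half), 2
    # Recurrence Q(x; k + 2) = Q(x; k) + (x/2)^(k/2) * e^(-x/2) / Gamma(k/2 + 1),
    # evaluated on the log scale to avoid overflow for large x or df.
    while k < df:
        q += math.exp((k / 2) * math.log(half) - half - math.lgamma(k / 2 + 1))
        k += 2
    return q


def chi_square_difference(chisq_constrained, df_constrained, chisq_free, df_free):
    """Compare a constrained model (e.g., metric) with a nested, freer model (e.g., configural)."""
    delta_chisq = chisq_constrained - chisq_free
    delta_df = df_constrained - df_free
    return delta_chisq, delta_df, chi2_sf(delta_chisq, delta_df)


# Hypothetical fit statistics from a two-group multigroup CFA.
configural = {"chisq": 210.4, "df": 96}
metric = {"chisq": 225.9, "df": 106}

d_chisq, d_df, p = chi_square_difference(
    metric["chisq"], metric["df"], configural["chisq"], configural["df"]
)
print(f"delta chi2 = {d_chisq:.1f}, delta df = {d_df}, p = {p:.3f}")
```

With these invented values, the difference of 15.5 on 10 degrees of freedom is not significant at .05, which would be read as no evidence against metric invariance.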

Recent Advances in Computational Text Analysis

Researchers now have new opportunities to go beyond surveys and collect organic data to examine cross-cultural similarities and differences. Specifically, social media language combined with natural language processing (NLP) offers unprecedented ways to study cultures with much larger samples, drawn from more naturalistic environments, than were previously available. Computational-linguistic techniques and ML make it possible to efficiently process gigabytes to petabytes of language data for descriptive or predictive studies (Kern et al., 2016).

Recent advances in computational methods have significantly enhanced the ability to analyze cultural data using text. Early methods relied on word-count approaches, such as the Linguistic Inquiry and Word Count (LIWC) tool, which provided insights into text-based phenomena. Although such dictionary-based approaches are useful for detecting overall patterns and yield interpretable results, they rely on predefined word lists that cannot capture word meaning in its actual context, posing threats to external validity (Atari & Henrich, 2023). The next major advance came with static word embeddings (e.g., Word2Vec and GloVe [global vectors for word representation]), which represent words as fixed vectors derived from co-occurrence patterns in large corpora, allowing researchers to capture semantic similarity (e.g., “king” and “queen” lie closer together than “king” and “flower”). However, because each word receives a single vector, these embeddings lack contextual understanding and overlook the different meanings a word can take in different situations.
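The contrast between these two approaches can be sketched with toy inputs. The dictionary below is a hypothetical stand-in, not the LIWC lexicon, and the three-dimensional vectors are invented for illustration rather than taken from Word2Vec or GloVe. The sketch shows that a word-count approach scores “sick” as negative even in a slang sentence in which it signals enthusiasm, and that static embeddings express semantic similarity as the cosine between fixed vectors, one per word regardless of context.

```python
import math
import re
from collections import Counter

# Hypothetical toy dictionary (NOT the LIWC lexicon): category -> set of words.
TOY_DICTIONARY = {
    "posemo": {"happy", "love", "great"},
    "negemo": {"sad", "hate", "awful", "sick"},
}


def category_rates(text: str, dictionary: dict) -> dict:
    """Percentage of tokens in `text` that fall into each dictionary category."""
    tokens = re.findall(r"[a-z']+", text.lower())
    counts = Counter(tokens)
    total = len(tokens) or 1  # avoid division by zero on empty text
    return {
        category: 100 * sum(counts[word] for word in words) / total
        for category, words in dictionary.items()
    }


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


# Slang use of "sick" (meaning impressive) is still counted as negative emotion.
rates = category_rates("That concert was sick!", TOY_DICTIONARY)

# Invented 3-d "static" vectors: each word has one fixed vector regardless of context.
vectors = {
    "king": [0.80, 0.60, 0.10],
    "queen": [0.75, 0.65, 0.12],
    "flower": [0.10, 0.20, 0.90],
}
sim_royal = cosine(vectors["king"], vectors["queen"])
sim_other = cosine(vectors["king"], vectors["flower"])
print(rates, round(sim_royal, 3), round(sim_other, 3))
```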

More recently, contextualized models such as BERT (bidirectional encoder representations from transformers) and GPT (generative pretrained transformer) have transformed NLP by representing word meanings within their surrounding context. These models can be fine-tuned or adapted for tasks such as sentiment analysis, topic modeling, and semantic similarity, offering considerable flexibility for cross-cultural research. Researchers now have access to repositories such as Hugging Face, which provides pretrained models and tools for customization, and to application programming interfaces, such as OpenAI’s API for its GPT models, which enable scalable deployment of language models for analysis. With these computational advances, researchers are better equipped to address long-standing challenges in cross-cultural equivalence, such as linguistic nuances, polysemy (i.e., a word having multiple meanings), and semantic shifts across languages, regions, and time (e.g., Atari & Henrich, 2023). These methods are particularly useful when applied to naturally occurring text data, allowing researchers to go beyond traditional survey frameworks.

In contrast to self-report data, social media language reflects organic, behaviorally grounded communication situated alongside other online behaviors, such as web browsing, liking/hearting, and reposting (Rozenfeld, 2014). Behavior-based measures of psychological constructs offer several advantages that self-report scales do not. For example, social media language enables scholars to study individual characteristics, thoughts, and emotions directly and unobtrusively (Kern et al., 2016; Park et al., 2017; H. A. Schwartz et al., 2013). It minimizes the influence of respondents’ evaluative styles (Park et al., 2017) and helps overcome the limits of self-awareness (Thapa et al., 2021) and social desirability (Holtgraves, 2004), both of which pose threats to the validity of self-report measures. In addition, social media data offer the potential to move beyond the traditional limitations of WEIRD (Western, educated, industrialized, rich, and democratic) samples and English-centric research (Muthukrishna et al., 2021). Unlike conventional surveys, which are often administered in English-speaking contexts, social media platforms host billions of users across linguistic, cultural, and geographic boundaries. Platforms such as Sina Weibo, Reddit, and Facebook provide access to naturalistic language use across diverse regions, allowing for more inclusive and ecologically valid investigations of human behavior (Atari & Dehghani, 2022; Atari & Henrich, 2023).

Social media platforms provide rich, organic text data generated from people’s everyday interactions, making them a unique and valuable resource for cross-cultural comparisons. However, social media data also present distinctive methodological challenges, and the lack of methodological guidance on ensuring equivalence in cross-cultural and cross-language studies makes such research substantially harder. Current studies have focused on applications or methodological concerns of social media use in a single language context, predominantly English (e.g., Kern et al., 2016; Park et al., 2017; Youyou et al., 2015). For example, Kern et al. (2016) provided an excellent overview of methods and challenges in working with social media language data, focusing on the U.S. context. Park et al. (2017) proposed and evaluated an ML approach to study time orientation using Twitter (now X) and Facebook posts written in English. Studies that make cross-cultural comparisons on social media platforms outside the United States remain scarce, largely because of the lack of methodological guidance on conducting equivalent comparisons with ML. Language use, as a behavioral cue to underlying latent constructs, is intricate, and understanding it is critical when following ML approaches. Each language’s grammatical structure, syntax, semantic nuances, and writing system can affect how online text should be processed and incorporated into ML models. Direct translation may also work less well for social media text, which arises naturally in conversations heavily influenced by cultural norms and implicit contextual meanings.

The Current Framework on Cross-Language Equivalence

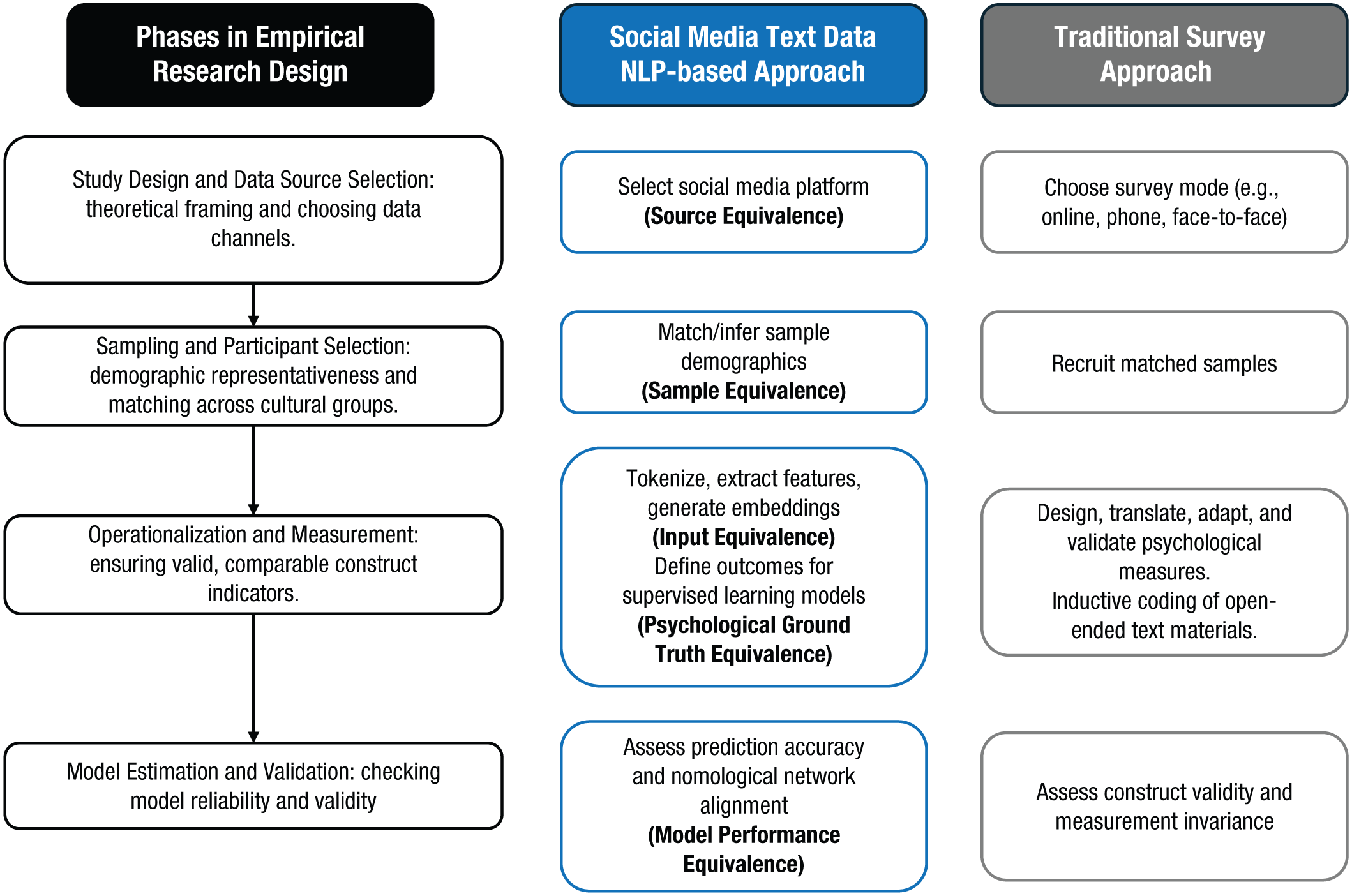

In the current article, we aim to draw attention to the unique linguistic and cultural differences that arise when following ML processes to conduct cross-cultural studies with large-scale social media text data. By developing a methodological framework that researchers and practitioners can draw on, we hope to encourage more studies that apply ML approaches to cross-cultural questions. We propose a set of key methodological issues and guidelines for equivalence when adopting ML techniques in cross-language and/or cross-cultural research: source equivalence, sample equivalence, input equivalence, psychological-ground-truth equivalence, and model-performance equivalence, summarized in Table 2. We structure the five types of equivalence along the steps of empirical research: study design, data collection, analysis, and evaluation. Figure 1 highlights parallels with survey research along this process, grounding our framework in familiar methodological practices.

Key Issues for Considerations in Ensuring Cross-Language Equivalence

Note: AUC = area under curve; DIF = differential item functioning; F1 = harmonic mean of precision and recall; ML = machine learning; SHAP = SHapley Additive exPlanations.

Parallels between natural-language-processing-based social media text research and traditional survey-based cross-cultural research.

In this article, we use the term “equivalence” to refer to the goal of enhancing cross-group comparability throughout the ML methodological approach, from data source and sample selection to building and evaluating ML models in different languages. Because full measurement equivalence may be unattainable across culturally distinct platforms, our aim is to encourage reflection on potential sources of bias and to reduce distortions that could compromise meaningful comparisons rather than to offer rigid thresholds and specific guidelines for evaluating equivalence. Achieving strict statistical equivalence across cultural and linguistic groups is inherently challenging, and statistical tests for bias do not always guarantee equivalence. However, equivalence, whether conceptual or statistical, is often implicitly assumed when researchers use quantitative comparisons to examine cultural similarities and differences; without this assumption, researchers are left with either purely qualitative descriptions or fragmented interpretations of cultural patterns. Some of these challenges are not unique to social media NLP research. For example, sample equivalence reflects broader concerns shared across all forms of cross-cultural psychological research. Other equivalence challenges, such as platform norms, spontaneous expression, and country-level censorship, are specific to social media data, whereas still others, such as algorithm selection, preprocessing decisions, and model interpretability, are specific to the use of ML techniques.

In this article, we focus specifically on text-based social media data, referring to platforms in which user-generated textual content is central and produced in a spontaneous, informal manner, such as X (formerly Twitter), Reddit, Facebook, and Weibo. These platforms provide rich opportunities for NLP methods in cross-cultural research by offering large-scale, real-time data that reflect individuals’ everyday thoughts, behaviors, and linguistic patterns. Although other digital-data sources—such as forums, content-creation platforms (e.g., YouTube, TikTok), news articles, or structured survey responses (e.g., World Values Survey)—are also important for cultural analysis, they differ in terms of data characteristics and user intent. As outlined in Table 3, these sources often involve curated, performative, generic, or institutionally produced content and thus pose different methodological considerations. By narrowing our scope to social media text data, we aim to provide a focused yet adaptable framework that tackles the unique challenges of working with informal, user-driven textual data while offering insights that may inform future research on other digital-data sources.

Overview of Digital-Data Sources

The current framework contributes to the literature by offering a systematic framework for evaluating cross-cultural and cross-linguistic equivalence in ML applications using social media language data. Although prior work, such as Tay et al. (2022), has provided a broad discussion of traditional measurement bias and its extension to ML, our work specifically addresses the complexities of equivalence in cross-national and multilingual contexts. We move beyond general ML bias mitigation to systematically examine how cultural and linguistic variations influence the comparability and interpretability of ML-based assessments. Our framework organizes these challenges across five key stages of the ML pipeline, offering a structured approach tailored to cross-cultural research rather than generic bias reduction. Furthermore, we provide practical guidance on leveraging social media language data for cross-cultural analysis to support rigor in naturalistic text-based research. By bridging insights from measurement equivalence, ML, and cultural psychology, we lay the foundation for more rigorous methodological applications of NLP and ML in diverse linguistic and national contexts.

Source Equivalence

Multilanguage data can be extracted from one platform operating in different countries and languages or from distinct social media platforms that each operate in a specific country or language. Source equivalence focuses on these original sources, the social media platforms from which online data are extracted. Such data, often referred to as “organic data” (Groves, 2011), are collected by following the natural digital footprints of people’s online activities. Unlike in survey approaches, in which researchers design questionnaires with established scales, platform designs can implicitly shape the organic data that emerge from natural online behaviors (Xu et al., 2020). For a summary of selected global social media platforms commonly used in cross-cultural research, see Table 4. Source equivalence refers to the extent to which the data sources used in a study are comparable in their fundamental characteristics, such that differences in the data reflect the phenomena of interest rather than systematic differences in where or how the data were obtained.

Summary of Selected Global Social Media Platforms

Abbreviation: API = application programming interface; DACH = Germany, Austria, and Switzerland.

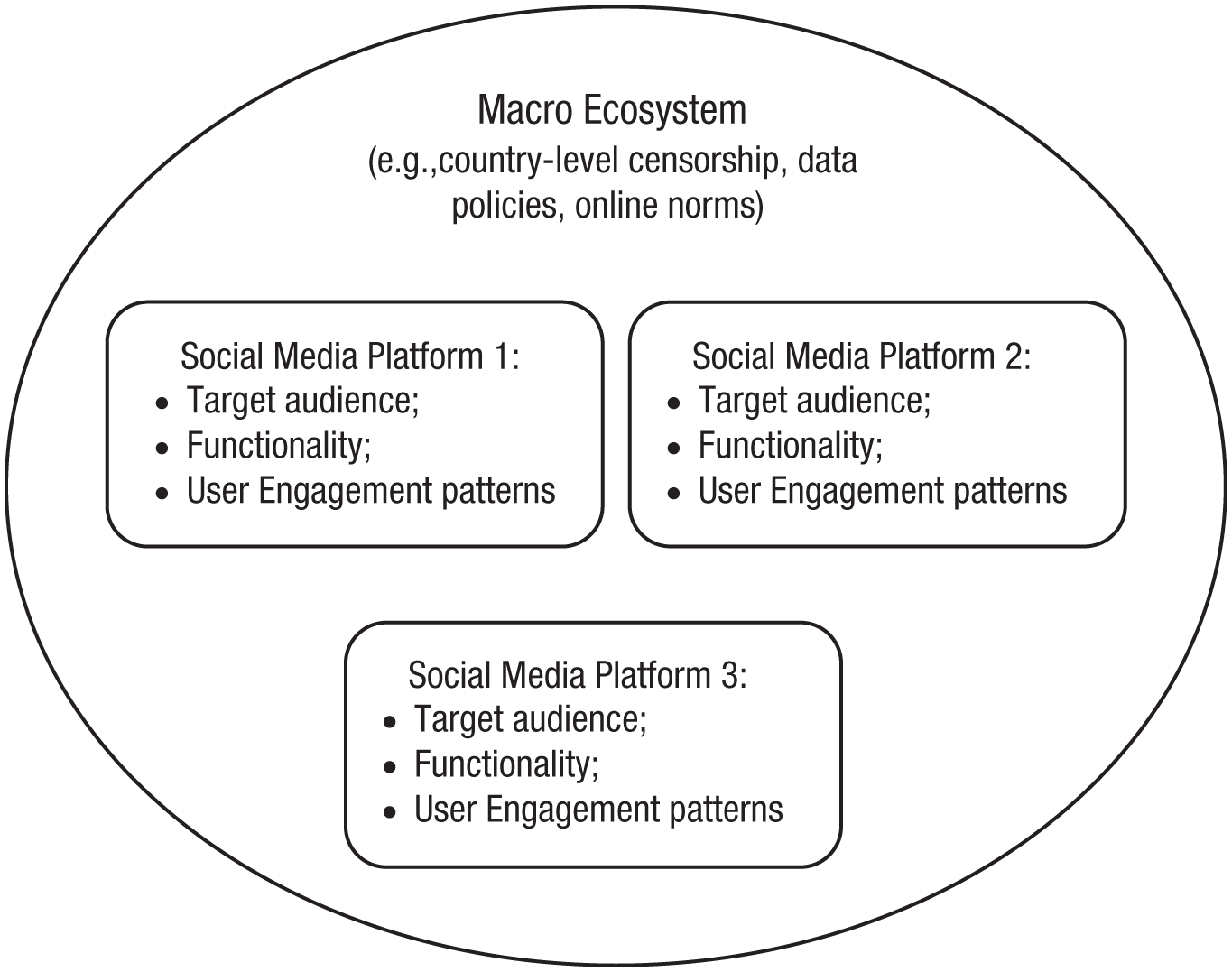

In the context of social media research, source equivalence means that different platforms, or the same platform across contexts, provide comparable opportunities for users to express behaviors relevant to the constructs being studied. This involves four key categories of considerations: policy on data extraction and censorship, target audience, functionality, and user-engagement patterns (Pew Research Center, 2022; Tay et al., 2020), summarized in Figure 2. Researchers should carefully consider these differences when formulating research questions and planning data collection because they have significant implications for sampling strategies, data preprocessing, and the validity of subsequent interpretations.

Source-equivalence/bias considerations.

At the macro level, one important category to consider is the broader ecosystem in which social media platforms operate, such as country-level policies on data regulation and censorship. According to the Lumen database run by the Harvard Berkman Klein Center for Internet & Society, X (formerly Twitter) has complied with more than 80% of U.S. government and court requests to remove or alter content (Hamilton, 2023). However, this level of censorship is relatively mild compared with that on Sina Weibo, a major social media platform in China, where government-imposed regulations enforce much more stringent content guidelines and monitoring. This disparity in censorship practices highlights the need to understand the sociopolitical context in which a platform operates. For instance, Sina Weibo’s rigorous content-control policies significantly shape discourse, restricting the topics and opinions that can be expressed on the platform. This creates a unique digital ecosystem in which certain themes may be underrepresented or skewed. Thus, it is crucial for researchers to know specifically what content is censored on Weibo, ranging from political dissent to social activism, especially when formulating research questions and deciding whether social media language data can help address them. Without an understanding of censorship differences, researchers risk misinterpreting the silence or absence of certain discussions as cultural disinterest or acceptance rather than as a result of censorship.

Two other important categories to consider are the intended audience and the functionality of social media platforms. In survey research, numerous studies have called attention to population differences and characteristics when sampling from third-party agencies, such as Amazon MTurk, Prolific, Qualtrics, and SONA (e.g., Douglas et al., 2023; Kimball, 2019; Peer et al., 2017), because such characteristics can confound study results. Likewise, it is essential to understand the overall demographics and characteristics of a platform’s users before sampling a subpopulation. Marcus and Krishnamurthi (2009) found that Japanese and South Korean social-network sites (e.g., Mixi in Japan) target a general audience regardless of age, whereas social-network sites in Western contexts (e.g., the United States) show a distinct age divide, such as Disney XS for preteens, MySpace for teenagers and youths, and Facebook for young adults. Therefore, the intended target audience is another key feature to consider when selecting social media platforms in different countries; if researchers are not careful, choosing sources that target different populations can lead to downstream sampling bias, which we address in the next section.

A fourth important category is user-engagement patterns: the ways people use a single social media platform can vary significantly across countries or cultures, reflecting diverse user behaviors and preferences (Malhotra et al., 2012). In investigating how X is used worldwide, Poblete et al. (2011) found that Indonesia and South Korea had the highest percentage of “mentions,” whereas Japan had the lowest rates of “mentions” and “retweets” among the 10 countries studied, suggesting that X is used more for its conversational functions in some countries than in others and likely generates more conversational/relational data in those countries. The United States, by contrast, had the most URLs shared per user, suggesting that U.S. users rely on X more for sharing news, publicizing events, or pointing to external sources. Therefore, considering source equivalence is crucial even when drawing from a single social media platform because differences in how a platform is used across contexts can shape the type of data generated and its relevance for research.
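One way to make such usage differences visible before sampling is to profile, per country, the share of posts that contain mentions, retweets, or URLs. The sketch below assumes each post is a dict with hypothetical `country` and `text` fields and uses simple surface heuristics (an `@handle` for mentions, a leading `RT @` for retweets, an `http(s)://` prefix for URLs). These heuristics are our own assumptions; platform exports often carry explicit metadata for these features, which should be preferred when available.

```python
import re
from collections import defaultdict

# Surface heuristics (assumptions); platform metadata such as retweet flags is more reliable.
MENTION = re.compile(r"(?<!\w)@\w+")
RETWEET = re.compile(r"^RT @\w+")
URL = re.compile(r"https?://\S+")


def usage_profile(posts):
    """Return, per country, the proportion of posts containing mentions, retweets, and URLs."""
    totals = defaultdict(int)
    hits = defaultdict(lambda: {"mention": 0, "retweet": 0, "url": 0})
    for post in posts:
        country, text = post["country"], post["text"]
        totals[country] += 1
        hits[country]["mention"] += bool(MENTION.search(text))
        hits[country]["retweet"] += bool(RETWEET.match(text))
        hits[country]["url"] += bool(URL.search(text))
    return {
        country: {feature: count / totals[country] for feature, count in features.items()}
        for country, features in hits.items()
    }


# Hypothetical posts for illustration only.
posts = [
    {"country": "A", "text": "@mina see you tonight?"},
    {"country": "A", "text": "RT @friend: lunch was great"},
    {"country": "B", "text": "New report out: https://example.org/report"},
    {"country": "B", "text": "Event this Friday https://example.org/event"},
]
profile = usage_profile(posts)
print(profile)
```

Large gaps between countries in such a profile would suggest that the same platform yields different kinds of text (conversational vs. link sharing) in each context.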

For each of the above-mentioned categories, behavioral differences observed across platforms and countries may arise from a combination of platform-design features and culturally rooted norms, posing a deeper challenge to cross-cultural research, especially in the research-design phase. Distinguishing between cultural influences and platform-design effects is inherently complex in cross-cultural research using social media data. Social media platforms are not neutral data sources; they are embedded in cultural systems and shaped by user behaviors, norms, and technological affordances that evolve within specific cultural contexts. These complexities highlight the need for researchers to carefully evaluate whether observed differences reflect platform-specific features or underlying cultural characteristics.

Whether such behavioral patterns should be treated as methodological artifacts or as culturally meaningful expressions depends on how researchers conceptualize the role of social media platforms in their studies. Specifically, researchers should consider whether they view the platform as an essential part of culture, meaning that the features described above are culturally embedded practices worth examining in their own right, or as a medium that carries culture-relevant information and can dampen, enhance, or filter underlying cultural patterns. This decision is driven mainly by the research questions and goals, similar to the first step in survey research of deciding where and how to recruit participants. Treating the platform as culture means incorporating its affordances, norms, and user behaviors into construct definitions, whereas treating it as a medium requires adjustments to account for downstream sample biases. Methodologically, this choice influences decisions about data preprocessing (e.g., filtering hashtags, advertisements, or retweets), analytic strategies (e.g., partitioning variance attributable to platform vs. culture), and the interpretation of behavioral indicators (e.g., treating posting frequency as an expression of cultural expressiveness vs. as a product of platform affordances). Rather than prescribing a one-size-fits-all solution, we recommend that researchers clarify whether their goal is to examine platform-influenced user behaviors or culturally driven online behavioral patterns. This decision guides whether platform-design effects should be treated as part of the cultural phenomenon or managed (e.g., statistically controlled) to enhance comparability. In this article, we adopt the latter view, treating platforms as a medium through which cultural expressions are conveyed; this framing informs our subsequent discussion of the other types of equivalence.
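When the platform is treated as a medium, preprocessing often removes platform-specific artifacts before analysis. The sketch below shows one possible set of rules, assuming that retweets begin with `RT @` and that URLs, user mentions, and hashtag symbols are platform artifacts. Which elements to drop (e.g., whether hashtag words carry cultural content) is a research decision, and the regular expressions are illustrative rather than a standard; Weibo, for example, marks topics as `#topic#` with a closing symbol, so the hashtag rule would need adapting there.

```python
import re

RETWEET = re.compile(r"^RT @\w+")
URL = re.compile(r"https?://\S+")
MENTION = re.compile(r"(?<!\w)@\w+")
HASHTAG_SYMBOL = re.compile(r"#(\w+)")


def preprocess(posts, drop_retweets=True, keep_hashtag_words=True):
    """Drop retweets, strip URLs and mentions, and optionally keep hashtag words without '#'."""
    cleaned = []
    for text in posts:
        if drop_retweets and RETWEET.match(text):
            continue  # reposted content is not the user's own language production
        text = URL.sub(" ", text)
        text = MENTION.sub(" ", text)
        text = HASHTAG_SYMBOL.sub(r"\1" if keep_hashtag_words else " ", text)
        text = " ".join(text.split())  # collapse repeated whitespace
        if text:
            cleaned.append(text)
    return cleaned


# Hypothetical raw posts.
raw = [
    "RT @news: big announcement",
    "Loving this weather #sunny https://example.org/pic",
    "@li thanks for the help!",
]
print(preprocess(raw))
```

Exposing the choices as parameters makes it easier to report them and to rerun analyses under alternative rules as a robustness check.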

Given these complexities, selecting social media platforms for cross-cultural research demands a meticulous, theory-informed approach. Landers et al. (2016) suggested that, before extracting organic data online, researchers identify the source of the data and develop “a data source theory” explaining why such data exist and what information they theoretically provide. Building on this recommendation, we call for researchers conducting cross-cultural or bilingual/multilingual studies with organic data to first investigate the sources they intend to draw from, examining their key features, the social contexts in which they operate, and the national policies that may shape content availability. We emphasize that the appropriateness of a platform is closely tied to the research topic and question. General social-behavioral phenomena, such as general emotional expression, population-level linguistic sentiment, or user-engagement patterns, may be less vulnerable to censorship or platform-level variability than politically sensitive or culturally contested issues. Thus, researchers should consider not only the characteristics of the platform but also the nature of their inquiry when evaluating whether a given source can yield comparable and interpretable data across cultural contexts. We also recommend collaborating with local researchers or consultants who can provide insights into cultural nuances and platform-specific dynamics that may not be apparent to someone outside the culture. Finally, when publishing findings, researchers should transparently discuss the limitations and ethical considerations of their data collection, especially where censorship or other platform differences might influence the data. Such transparency is crucial for the research community to evaluate and build on the work.
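A data source theory can also be recorded in a structured, shareable form. The sketch below is one hypothetical way to do so: a record with one field per source-equivalence category (data access and censorship, target audience, functionality, engagement pattern) and a helper that lists the categories on which two sources are documented differently. The class, field names, and example values are our own illustrative assumptions, and the string comparison is only a prompt for qualitative review, not a test of equivalence.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class SourceProfile:
    """Hypothetical record of the four source-equivalence categories for one platform-country pair."""

    platform: str
    country: str
    data_access_and_censorship: str
    target_audience: str
    functionality: str
    engagement_pattern: str


def nonequivalent_fields(a: SourceProfile, b: SourceProfile) -> list:
    """List the source-equivalence categories on which two sources are documented differently."""
    identifiers = {"platform", "country"}
    return [
        f.name
        for f in fields(SourceProfile)
        if f.name not in identifiers and getattr(a, f.name) != getattr(b, f.name)
    ]


# Invented descriptions for two unnamed sources.
source_a = SourceProfile("Platform 1", "Country A", "open API; light moderation",
                         "general adult", "microblog", "conversational")
source_b = SourceProfile("Platform 2", "Country B", "restricted API; strict moderation",
                         "general adult", "microblog", "news sharing")
print(nonequivalent_fields(source_a, source_b))
```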

To help disentangle platform-specific effects from culturally embedded behaviors, future research could consider incorporating generalizability studies as a methodological strategy. For instance, researchers could examine user behavior across multiple platform types within the same cultural context to assess how platform features influence users’ online expressions. Alternatively, they could hold the platform constant and compare user behaviors across different cultural or linguistic groups, thereby isolating cultural influences while minimizing technological confounds. These research designs would allow for a more systematic examination of the sources of behavioral variability and help clarify whether observed differences should be attributed to platform architecture, cultural communication norms, or their interaction. Incorporating such approaches can strengthen the validity of cross-cultural inferences in social-media-based research and inform decisions about treating certain behavioral patterns as artifacts to control or meaningful signals to interpret.
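In the spirit of such generalizability designs, the sketch below partitions the variability of a user-level language score into culture, platform, their interaction, and residual components. It assumes a balanced, fully crossed design in which every culture is observed on every platform with the same number of users, which is often unavailable in practice (e.g., when a platform operates mainly in one country); unbalanced or nested data would call for mixed-effects models or formal variance-component estimation instead. The scores and group labels are hypothetical.

```python
from statistics import mean


def two_way_decomposition(cells):
    """Share of total sum of squares by source in a balanced culture x platform design.

    `cells` maps (culture, platform) -> list of user-level scores; every cell must
    contain the same number of observations.
    """
    cultures = sorted({c for c, _ in cells})
    platforms = sorted({p for _, p in cells})
    n = len(next(iter(cells.values())))
    if len(cells) != len(cultures) * len(platforms) or any(len(v) != n for v in cells.values()):
        raise ValueError("design must be balanced and fully crossed")

    grand = mean(y for v in cells.values() for y in v)
    cell_mean = {k: mean(v) for k, v in cells.items()}
    culture_mean = {c: mean(cell_mean[(c, p)] for p in platforms) for c in cultures}
    platform_mean = {p: mean(cell_mean[(c, p)] for c in cultures) for p in platforms}

    ss = {
        "culture": len(platforms) * n * sum((m - grand) ** 2 for m in culture_mean.values()),
        "platform": len(cultures) * n * sum((m - grand) ** 2 for m in platform_mean.values()),
        "interaction": n * sum(
            (cell_mean[(c, p)] - culture_mean[c] - platform_mean[p] + grand) ** 2
            for c in cultures
            for p in platforms
        ),
        "within": sum((y - cell_mean[k]) ** 2 for k, v in cells.items() for y in v),
    }
    total = sum(ss.values())  # equals the total sum of squares in a balanced design
    return {source: value / total for source, value in ss.items()}


# Hypothetical positive-emotion scores for two cultures on two platforms (3 users per cell).
cells = {
    ("A", "P1"): [2.0, 2.2, 1.8],
    ("A", "P2"): [3.0, 3.1, 2.9],
    ("B", "P1"): [2.5, 2.4, 2.6],
    ("B", "P2"): [3.6, 3.4, 3.5],
}
shares = two_way_decomposition(cells)
print({source: round(share, 3) for source, share in shares.items()})
```

In these invented data, the platform accounts for far more variance than culture, which is the kind of pattern that would argue for treating platform effects as something to control rather than as cultural signal.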

Sample Equivalence

Once researchers decide on an appropriate platform, ensuring sample equivalence becomes critical for building and testing ML algorithms in cross-cultural studies. Sample equivalence refers to the extent to which samples drawn from different groups or contexts are comparable both (a) in their characteristics (e.g., age, gender, education, socioeconomic status [SES]) and (b) in how well they represent their respective broader populations. Although it is appealing to dive into large amounts of organic social media data, doing so risks overlooking who the actual users behind the screen are and introduces selection bias that threatens the validity of the studies (Landers et al., 2016). Such concerns are not unique to social media data; sampling bias and demographic confounding are long-standing challenges in traditional cross-cultural research using surveys and experiments, particularly when convenience samples are used. In this section, we examine these two aspects of sample equivalence, comparability and representativeness, and discuss their methodological implications with practical examples and potential mitigation strategies.

The first part of sample equivalence involves matching demographics so that differences between samples can be attributed to cultural factors rather than to other demographic characteristics. User demographics on the same platform can vary across countries. For example, X operates in more than 10 countries with more than 69 language representations worldwide (Poblete et al., 2011). The United States, the top country by users, accounts for 27.6% of all users. U.S.-based X users are 71.8% male (Mislove et al., 2021), and 38.5% are between 25 and 34 years old (The WeChat Agency, 2022). X also reflects a racially diverse user base, representing a much more heterogeneous population than Weibo. Sina Weibo, on the other hand, represents a more homogeneous population given that 93.7% of its traffic comes from mainland China (Chen et al., 2011). Sixty-four percent of Weibo users are women; 40% are between 14 and 25 years old, and most are between the ages of 15 and 40 (Qian et al., 2023). These population differences can lead researchers to select unmatched samples from different cultures or countries. Even when samples are matched on surface demographics, such as age and gender, underlying differences in education level and SES may still skew comparisons.
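A simple form of demographic matching is exact matching on coarse strata: within each stratum present in both samples, keep the same number of randomly chosen users from each. The sketch below assumes hypothetical `gender` and `age` fields (on social media these are usually missing or must be inferred, which adds its own error), and the age bands are illustrative. Strata missing from either sample are dropped, which changes the population the matched comparison describes, and matching on these surface variables does not address education or SES.

```python
import random
from collections import defaultdict


def stratum(user):
    """Coarse demographic stratum; fields and age bands are illustrative assumptions."""
    band = "under 25" if user["age"] < 25 else "25-34" if user["age"] < 35 else "35+"
    return (user["gender"], band)


def match_on_strata(group_a, group_b, seed=0):
    """Exact matching on strata: keep min(n_a, n_b) randomly sampled users per shared stratum."""
    rng = random.Random(seed)
    by_a, by_b = defaultdict(list), defaultdict(list)
    for user in group_a:
        by_a[stratum(user)].append(user)
    for user in group_b:
        by_b[stratum(user)].append(user)
    matched_a, matched_b = [], []
    # Sorted for reproducibility; strata absent from either group are dropped.
    for s in sorted(by_a.keys() & by_b.keys()):
        k = min(len(by_a[s]), len(by_b[s]))
        matched_a += rng.sample(by_a[s], k)
        matched_b += rng.sample(by_b[s], k)
    return matched_a, matched_b


# Hypothetical users with (inferred) gender and age from two national samples.
sample_a = [{"id": f"a{i}", "gender": g, "age": age} for i, (g, age) in
            enumerate([("m", 22), ("m", 30), ("m", 31), ("f", 28), ("m", 40)])]
sample_b = [{"id": f"b{i}", "gender": g, "age": age} for i, (g, age) in
            enumerate([("f", 20), ("f", 23), ("m", 21), ("f", 29), ("m", 33)])]

matched_a, matched_b = match_on_strata(sample_a, sample_b)
print(len(matched_a), len(matched_b))
```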

The second part of sample equivalence concerns representativeness of the broader populations: selection bias in who uses or has access to specific social media platforms can limit the generalizability of findings to the wider country or cultural group. For online social-network data, selection bias occurs when researchers overlook systematic differences between internet users and nonusers (Bethlehem, 2010) and generalize inferences drawn from the internet-user population to all people when comparing countries or cultures (D. Boyd & Crawford, 2012). In cross-cultural research, this issue is further compounded by global disparities in internet access, which are closely tied to economic development. For example, internet usage in developing countries is concentrated in big cities, whereas in developed countries it is spread more evenly across regions (Han et al., 2012).

Technological access itself varies substantially across cultures and countries, so populations or regions with limited digital infrastructure may be systematically underrepresented. This bias reflects broader concerns raised by Atari and Dehghani (2022), who caution that digital corpora, including social media data, tend to disproportionately capture the voices of those with greater technological access, literacy, and social privilege. As a result, such data risk reproducing the WEIRD bias in new forms. Acknowledging these sampling limitations is crucial for responsible interpretation, especially when findings are used to draw cross-cultural conclusions. We therefore call on researchers to recognize how social media users as a whole differ from nonusers when drawing samples for cross-cultural studies.

Although fully overcoming selection bias remains challenging, strategies such as poststratification weighting, in which researchers adjust sample weights after data collection to match known population distributions from census or large-scale survey data (Bethlehem, 2010), can improve sample representativeness. This approach is particularly valuable when direct matching is infeasible because of privacy constraints or missing individual-level data, and it is well documented in demographic surveys, such as those conducted by Pew Research Center, and in national census surveys, where it corrects for oversampled or undersampled groups. Other strategies, such as triangulating with other data sources (e.g., surveys), clearly defining the population of inference, and conducting sensitivity analyses (e.g., testing how robust findings are to possible biases in sample composition), can further help mitigate these limitations and support more responsible interpretation of cross-cultural findings.
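As a minimal sketch of poststratification (cell) weighting, the Python below assumes that each user in one country's sample has already been assigned to a demographic cell (e.g., a gender or gender-by-age group) and that population shares for those cells are available from census or survey data. The function names and toy numbers are illustrative and are not drawn from any study cited here.

```python
from collections import Counter


def poststratification_weights(sample_cells, population_shares):
    """Return one weight per user so that weighted cell shares match the population.

    sample_cells: one cell label per user, e.g. "female_18-34".
    population_shares: cell label -> population proportion (should sum to 1).
    """
    n = len(sample_cells)
    counts = Counter(sample_cells)
    empty = set(population_shares) - set(counts)
    unknown = set(counts) - set(population_shares)
    if empty or unknown:
        # Empty cells cannot be up-weighted, and unknown cells have no target share;
        # collapse cells or collect more data before weighting.
        raise ValueError(f"empty cells: {sorted(empty)}; unknown cells: {sorted(unknown)}")
    # Weight for a cell = population share / sample share.
    cell_weight = {cell: population_shares[cell] / (counts[cell] / n) for cell in counts}
    return [cell_weight[cell] for cell in sample_cells]


def weighted_mean(values, weights):
    """Weighted average of an outcome, e.g. a model-predicted construct score."""
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)


# Toy example: men are oversampled (6 of 8 users) relative to a 50/50 population.
cells = ["male"] * 6 + ["female"] * 2
scores = [1.0] * 6 + [3.0] * 2
weights = poststratification_weights(cells, {"male": 0.5, "female": 0.5})
print(sum(scores) / len(scores))       # unweighted mean
print(weighted_mean(scores, weights))  # pulled toward the underrepresented group
```

In this toy case, men receive a weight of 2/3 and women a weight of 2, so the mean moves from 1.5 to 2.0 (up to floating-point rounding). Cells should be defined by variables that are known in both the sample and the population source; with very sparse cells, weights become extreme, and raking or cell collapsing is usually preferred.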

Identifying demographic information on social media

One of the key challenges in sampling comparable profiles for cross-cultural studies using social media data is that demographic variables for social media users are not always available to researchers. To address this gap, several works have developed methods to infer demographic characteristics from social media data by combining metadata, profile content, linguistic patterns, and ML models (Cesare et al., 2017; Coppersmith et al., 2015; Volkova et al., 2015; Wang et al., 2019).

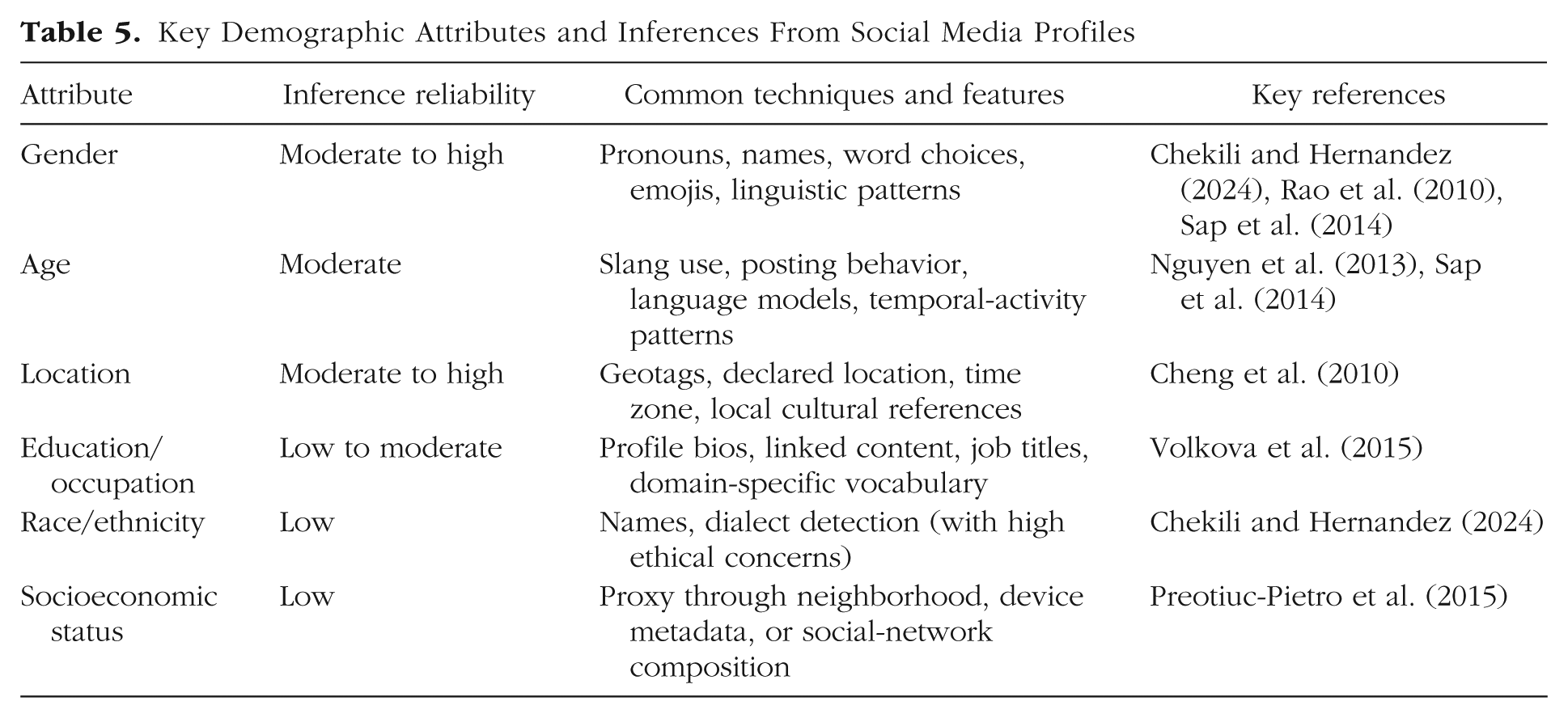

As summarized in Table 5, demographic attributes, such as gender and age, are among the most reliably inferred, with models achieving reasonable accuracy using features such as pronoun use, emojis, lexical choices, posting times, and user bios (e.g., Chekili & Hernandez, 2024; Rao et al., 2010; Sap et al., 2014; Volkova et al., 2015). Location can often be estimated using geotagged posts (when available), declared location fields, time zones, and language cues (e.g., Cheng et al., 2010). In contrast, education level and occupation are more challenging to infer directly, but some studies have attempted to estimate such information using user bios, network connections, and language modeling (e.g., Volkova et al., 2015). However, inference for attributes such as race, ethnicity, or SES remains highly uncertain and often context-dependent, requiring careful validation and ethical consideration. Overall, demographic inference methods should be treated as probabilistic rather than deterministic. Researchers should report uncertainty, validate with ground truth when possible, and avoid overinterpreting inferred attributes in their analyses and conclusions.

Table 5. Key Demographic Attributes and Inferences From Social Media Profiles
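To make the advice about treating inferred demographics probabilistically concrete, the sketch below assumes that some demographic classifier (hypothetical and not specified here) has produced, for each user, a probability of being female. Averaging the probabilities rather than counting thresholded labels, and repeatedly drawing labels to obtain an interval, keeps classifier uncertainty visible in the reported sample composition. The sketch assumes the probabilities are calibrated and that errors are independent across users; both assumptions should be checked against ground truth where possible.

```python
import random
import statistics


def composition_from_probabilities(p_female, n_draws=2000, seed=0):
    """Summarize inferred gender composition without forcing hard labels.

    p_female: per-user probabilities from a (hypothetical) demographic classifier.
    Returns (expected_share, (lower, upper)), where the interval is the central 95%
    of shares obtained by repeatedly drawing one label per user.
    """
    rng = random.Random(seed)
    expected = statistics.fmean(p_female)
    shares = []
    for _ in range(n_draws):
        draws = [rng.random() < p for p in p_female]  # one simulated label per user
        shares.append(sum(draws) / len(draws))
    shares.sort()
    lower = shares[int(0.025 * n_draws)]
    upper = shares[int(0.975 * n_draws) - 1]
    return expected, (lower, upper)


def hard_label_share(p_female, threshold=0.5):
    """The deterministic alternative: threshold each probability, then count."""
    return sum(p >= threshold for p in p_female) / len(p_female)


# Every user is only slightly more likely to be female than male.
p_female = [0.55] * 10
print(hard_label_share(p_female))                # 1.0: thresholding reports an all-female sample
print(composition_from_probabilities(p_female))  # expected share 0.55 with a wide interval
```

Reporting the expected share with its interval, rather than the thresholded count, is one simple way to follow the recommendation to report uncertainty before using inferred attributes for matching or weighting.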

Matching demographic profiles

Achieving sample equivalence in cross-cultural social media research requires effective matching of user profiles across platforms or cultural contexts. Existing research demonstrates the feasibility and validity of these approaches (Goga et al., 2015). When demographic data are available or can be inferred, researchers can build comparable samples by aligning key user characteristics, such as age, gender, or education level. One widely used method is propensity score matching, which involves estimating the probability that a user belongs to a particular group based on observed characteristics and then matching users across groups who have similar scores (Austin, 2011). Prior research provides valuable guidance on this matter. For instance, matching each participant with two counterparts (a 1:2 ratio) can improve precision without adding substantial bias, whereas adding more matches beyond four (e.g., 1:5 or higher) offers little additional benefit (Austin, 2010). This approach helps reduce selection bias by creating demographically balanced samples, especially when the two comparison groups differ in size and baseline characteristics, allowing for a more accurate estimate of group differences without demographic confounding. For example, Y. M. Cho et al. (2024) conducted a cross-cultural comparison of Twitter and Weibo users. To build equivalent samples, they surveyed their Twitter participants using Qualtrics to collect age and gender information and leveraged publicly available reports to gather similar data for Weibo users. Using the age and gender distributions from both platforms, they applied a propensity-score-based matching approach to construct balanced samples. This ensured that the Twitter and Weibo user groups were comparable in terms of key demographic characteristics, enabling meaningful cross-cultural comparisons without confounding effects from sample imbalances.
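A minimal Python sketch of the propensity-score matching workflow described above follows, assuming a numeric covariate matrix (e.g., standardized age and a gender indicator) and a 0/1 NumPy array marking group membership (e.g., platform). The logistic regression is hand-written with NumPy, with a tiny ridge penalty for numerical stability, only to keep the example self-contained. The 1:2 ratio follows the guidance cited above, and the caliper of 0.2 standard deviations of the logit propensity score is a commonly recommended default rather than a requirement. None of this reproduces the exact procedure of Y. M. Cho et al. (2024).

```python
import numpy as np


def fit_propensity(X, group, n_iter=25, ridge=1e-3):
    """Estimate P(group == 1 | X) with logistic regression fitted by Newton-Raphson.

    A small ridge penalty keeps the Hessian invertible if groups are nearly separable.
    """
    X1 = np.column_stack([np.ones(len(X)), X])  # prepend an intercept column
    beta = np.zeros(X1.shape[1])
    penalty = ridge * np.eye(X1.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-X1 @ beta))
        grad = X1.T @ (group - p) - penalty @ beta
        hess = X1.T @ (X1 * (p * (1 - p))[:, None]) + penalty
        beta = beta + np.linalg.solve(hess, grad)
    return 1.0 / (1.0 + np.exp(-X1 @ beta))


def match_nearest(ps, group, ratio=2, caliper_sd=0.2):
    """Greedy 1:ratio nearest-neighbor matching, without replacement, on the logit score.

    Returns {treated_index: [control_indices]} for group == 1 units that found
    at least one group == 0 unit within the caliper.
    """
    ps = np.clip(ps, 1e-6, 1 - 1e-6)
    logit = np.log(ps / (1 - ps))
    caliper = caliper_sd * logit.std()
    available = set(np.flatnonzero(group == 0).tolist())
    matches = {}
    for t in np.flatnonzero(group == 1).tolist():
        chosen = []
        for _ in range(ratio):
            if not available:
                break
            c = min(available, key=lambda j: abs(logit[j] - logit[t]))
            if abs(logit[c] - logit[t]) > caliper:
                break  # the nearest remaining control is too far away
            chosen.append(c)
            available.remove(c)
        if chosen:
            matches[t] = chosen
    return matches
```

Greedy matching processes units in index order, so results can depend on that order; optimal matching or dedicated packages are alternatives. In either case, covariate balance (e.g., standardized mean differences) should be checked in the matched sample before groups are compared.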

When self-reported or inferred demographic information is unavailable or incomplete, researchers can match users based on public attributes and behavioral features. For instance, Halimi and Ayday (2020) proposed a four-step framework drawing on public attributes, such as username, profile photo, description, location, and friendship networks. J. Liu et al. (2013) developed a technique for matching users across several communities using the rarity and commonality of usernames, and Shu et al. (2017) provided a comprehensive overview of profile-matching algorithms. Together, these methodological frameworks and approaches offer practical solutions for achieving sample equivalence when direct demographic data are limited or unavailable.
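For intuition only, the sketch below scores candidate profile pairs on two public attributes, username similarity (via the standard-library `difflib.SequenceMatcher`) and an exact location match, then accepts the best-scoring pairs one-to-one. It is not the Halimi and Ayday (2020) framework or the J. Liu et al. (2013) rarity-based technique; the weights, the threshold, and the profile dictionaries are hypothetical.

```python
import re
from difflib import SequenceMatcher


def normalize_username(name):
    """Lowercase and drop separators such as '_' and '.' before comparing."""
    return re.sub(r"[^0-9a-z]", "", name.lower())


def same_location(a, b):
    loc_a = a.get("location", "").strip().lower()
    loc_b = b.get("location", "").strip().lower()
    return bool(loc_a) and loc_a == loc_b


def profile_score(a, b, w_name=0.8, w_loc=0.2):
    """Hypothetical weighted similarity of two public profiles (dicts with 'username', 'location')."""
    name_sim = SequenceMatcher(
        None, normalize_username(a["username"]), normalize_username(b["username"])
    ).ratio()
    return w_name * name_sim + w_loc * float(same_location(a, b))


def match_profiles(left, right, threshold=0.7):
    """Greedy one-to-one matching that accepts the highest-scoring pairs first."""
    scored = sorted(
        ((profile_score(a, b), i, j) for i, a in enumerate(left) for j, b in enumerate(right)),
        reverse=True,
    )
    used_left, used_right, matches = set(), set(), []
    for score, i, j in scored:
        if score < threshold:
            break
        if i in used_left or j in used_right:
            continue
        used_left.add(i)
        used_right.add(j)
        matches.append((i, j, score))
    return matches
```

Note that this normalization keeps only Latin letters and digits, so it would need to be adapted before it is applied to usernames written in Chinese characters or other scripts, a small example of how profile-matching choices themselves can differ in validity across languages.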

Input Equivalence

Next, researchers must decide which linguistic features to include in their ML algorithms. Input equivalence refers to the extent to which the raw and/or preprocessed input data are comparable (i.e., in data type and amount) across language contexts, as well as to the consistency of data-preparation procedures, including the preprocessing and feature-extraction approaches used to build ML models. Researchers should thoughtfully select the input data that best suit the research question, ensuring that the data used are relevant and obtainable (Kern et al., 2016).

There are two major decisions to make regarding raw data. First, researchers should ensure that raw data from different language sources are of comparable types. This framework focuses on textual data; however, raw data carry further nuances given the various formats of data available online. For example, will emojis in text posts or in comments under videos be included for both language contexts? Should advertisements be included, and would they add noise or culturally relevant information to the study? How can researchers best identify and remove posts generated by bots in each language context?

Second, researchers need to consider the amount of data needed for meaningful comparisons, which varies across languages. To develop a language model effectively, it is essential to have adequate data for each observational unit (Kern et al., 2016). Just as multiple items are required in a self-report measure to enhance reliability, a sufficient quantity of words is necessary to mitigate the noise of limited responses. For example, about 1,000 written English words are required to reduce the absolute errors when predicting age and extraversion (Kern et al., 2016). Under the same character limits, however, Chinese and Japanese texts may not need as many characters to convey comparable content. In a cross-lingual study, researchers found that expressing the same content takes nearly 4 times as many characters in English and 1.6 times as many in Japanese as it does in Chinese (Liao et al., 2015).

Although perfect raw-data equivalence is difficult to achieve, researchers can improve comparability by setting minimum text-length thresholds (e.g., Kern et al., 2016) or applying proportional adjustments across languages (e.g., Liao et al., 2015). Several recent works also offer practical insights into other alignment strategies. For instance, CrossSum (Hasan et al., 2021) provides a multilingual summarization data set with prealigned, semantically similar content across 1,500+ language pairs, useful for analyzing topic-relevant content across cultures. XSemPLR (Sun et al., 2023) offers a benchmark for cross-lingual semantic parsing, covering 22 languages with consistent tokenization and representation schemes to support comparable semantic analyses. Such prealigned data sets and standardized benchmarks offer practical pathways to reduce inconsistency in cross-lingual research.
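One way to implement a proportional adjustment is to set a minimum length in one language and convert it into content-equivalent lengths for the others. The sketch below uses the character ratios reported by Liao et al. (2015), as summarized above, treated as rough averages. The 4,000-character English threshold is hypothetical and is not derived from Kern et al.'s (2016) 1,000-word figure.

```python
# Characters needed to express the same content, relative to Chinese (= 1.0).
# Ratios follow Liao et al. (2015) as summarized in the text; treat them as rough averages.
CHARS_RELATIVE_TO_ZH = {"zh": 1.0, "ja": 1.6, "en": 4.0}


def equivalent_thresholds(reference_lang, reference_chars, ratios=CHARS_RELATIVE_TO_ZH):
    """Convert a minimum length set in one language into content-equivalent lengths."""
    zh_equivalent = reference_chars / ratios[reference_lang]
    return {lang: round(zh_equivalent * r) for lang, r in ratios.items()}


def users_meeting_threshold(posts_by_user, lang, thresholds):
    """Keep users whose pooled posts reach the language-specific character minimum."""
    return {
        user: posts
        for user, posts in posts_by_user.items()
        if sum(len(p) for p in posts) >= thresholds[lang]
    }


thresholds = equivalent_thresholds("en", 4000)  # hypothetical English minimum
print(thresholds)  # {'zh': 1000, 'ja': 1600, 'en': 4000}
```

`len` counts Unicode code points, so URLs, whitespace, and emojis inflate counts unless posts are preprocessed first; the ratios themselves may vary by genre and platform and could be re-estimated on a parallel sample from the target data.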

The other half of input equivalence is ensuring consistent and comparable input-data preparation, or preprocessing. At this step, the unique linguistic features of each language are crucial in making preprocessing decisions before building ML models. Hickman et al. (2022) summarized a list of preprocessing decisions, including handling negation and spelling correction. However, some of the most discussed considerations (e.g., nonalphabetic character removal, lemmatization, and stemming) apply only to alphabetically written languages (e.g., English, Croatian, French). In contrast, for logographic and syllabic written languages (e.g., Chinese Hanzi, Japanese Kanji), in which glyphs represent meaningful components of words rather than phonetic elements, researchers would probably need to consider removing alphabetic characters during data preparation. Detecting spelling errors is also more challenging in logographic texts because it depends more heavily on context.
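The sketch below illustrates the idea of keeping some preprocessing steps identical across languages while letting script-specific steps differ. Every rule here is an illustrative choice, not a recommended standard: Unicode NFKC normalization, URL removal, and dropping punctuation and digits are shared; English is lowercased and split on whitespace; and, for Chinese, Latin-letter runs are dropped and spaces removed because they do not mark word boundaries (segmentation would come later).

```python
import re
import unicodedata

URL_RE = re.compile(r"https?://\S+")
LATIN_RE = re.compile(r"[A-Za-z]+")


def _shared_steps(text):
    """Steps applied identically to every language."""
    text = unicodedata.normalize("NFKC", text)  # e.g., full-width "！" becomes "!"
    text = URL_RE.sub(" ", text)
    # Keep letters in any script, "other symbols" (Unicode category So, which covers
    # most emoji), and whitespace; drop punctuation and digits.
    return "".join(
        ch
        for ch in text
        if unicodedata.category(ch).startswith("L")
        or unicodedata.category(ch) == "So"
        or ch.isspace()
    )


def preprocess(text, lang):
    text = _shared_steps(text)
    if lang == "en":
        # Alphabetic script: case folding applies, and whitespace marks word boundaries.
        return " ".join(text.lower().split())
    if lang == "zh":
        # Logographic script: drop Latin-letter runs and remove spaces.
        return "".join(LATIN_RE.sub(" ", text).split())
    raise ValueError(f"no preprocessing rules defined for {lang!r}")


print(preprocess("This is GREAT!!! 😀 https://t.co/x", "en"))  # this is great 😀
print(preprocess("今天天气很好！OK 😀", "zh"))                  # 今天天气很好😀
```

Because emoji handling lives in the shared step, both languages retain emojis; had that decision been placed inside only one branch, the resulting features would differ for methodological rather than cultural reasons.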

Moreover, linguistic features such as polysemy (single words with multiple meanings) and the absence of word boundaries in certain languages can further complicate text preprocessing. Languages such as Chinese, Japanese, and Thai rely heavily on context to derive meaning, so interpreting polysemes accurately requires a deeper understanding of context to segment the text properly. In Chinese, for example, the phrase “这很难吃” can have two very different interpretations: (a) “This tastes awful” or (b) “This is hard to eat.” The meaning depends on the context, particularly on how the polysemous character “难” (meaning “difficult” or “awful”) is interpreted and whether “难吃” is segmented as a single unit (“awful taste”) or treated as separate characters (“hard to eat”). Although Arabic uses spaces, its morphological complexity adds another layer of difficulty: Arabic words can combine conjunctions, prepositions, and nouns into a single string, requiring special tokenization to separate these components.
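Forward maximum matching is one of the simplest dictionary-based segmentation strategies; tools such as Jieba and the Stanford Segmenter instead rely on statistical models. The toy sketch below, with hypothetical dictionaries, shows how the same string is split into different units depending on whether “难吃” is listed as a word, which is exactly the ambiguity described above.

```python
def fmm_segment(text, dictionary, max_len=4):
    """Forward maximum matching: at each position, take the longest dictionary word.

    Single characters are always accepted, so unknown characters fall out as
    one-character tokens.
    """
    tokens, i = [], 0
    while i < len(text):
        for size in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if size == 1 or piece in dictionary:
                tokens.append(piece)
                i += size
                break
    return tokens


print(fmm_segment("这很难吃", {"这", "很", "难吃"}))  # ['这', '很', '难吃']
print(fmm_segment("这很难吃", {"这", "很"}))          # ['这', '很', '难', '吃']
```

Even a correct segmentation does not resolve meaning on its own; the segmented units still need context (or a contextual model) to be interpreted, which is why dictionary and tool choices deserve review by native speakers.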

Therefore, rather than applying identical preprocessing procedures across languages, researchers should account for the unique linguistic features and structures of each language to achieve more nuanced equivalence. Consulting native speakers or language experts, much as back-translation is used in survey research, can help preserve culturally embedded meanings and norms. Although ML approaches may bypass some translation-related issues (e.g., the loss of cultural nuance, variation in response styles; Robert et al., 2006; Trimble & Vaughn, 2013) by analyzing naturally occurring language, linguistic expertise remains essential for ensuring valid and culturally sensitive input representations for model development.

After preprocessing the raw input data, researchers need to extract features from the texts to serve as predictors in their algorithms. This often involves tokenization to identify units of analysis and embedding methods to represent tokens in context (Hickman et al., 2022). There are two primary approaches to feature extraction: closed-vocabulary and open-vocabulary methods. Closed-vocabulary approaches, such as LIWC (R. L. Boyd et al., 2022; Pennebaker et al., 2022), rely on predefined dictionaries to categorize words into psychological or linguistic categories. Applying these methods cross-linguistically requires equivalent dictionaries in each language to maintain conceptual and measurement equivalence. For example, LIWC has been adapted into various languages over the years, including Mandarin and Hindi versions developed to parallel English LIWC categories, enabling researchers to conduct sentiment analyses consistently across languages (e.g., Gupta et al., 2021; Lee et al., 2022).
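A closed-vocabulary analysis ultimately reduces to counting how many tokens fall into each dictionary category. The sketch below uses tiny parallel dictionaries that are hypothetical and are not actual LIWC categories or word lists. It assumes the Chinese text has already been segmented into words; feeding it character-level tokens changes both the matches and the denominator.

```python
# Toy parallel dictionaries (hypothetical; NOT actual LIWC categories or word lists).
DICTIONARIES = {
    "en": {"posemo": {"happy", "love", "great"}, "we": {"we", "us", "our"}},
    "zh": {"posemo": {"开心", "喜欢", "棒"}, "we": {"我们", "咱们"}},
}


def category_rates(tokens, lang):
    """Percentage of tokens falling into each category (LIWC-style output)."""
    dictionary = DICTIONARIES[lang]
    n = len(tokens)
    if n == 0:
        return {cat: 0.0 for cat in dictionary}
    return {cat: 100.0 * sum(tok in words for tok in tokens) / n for cat, words in dictionary.items()}


print(category_rates(["we", "love", "this", "place"], "en"))  # {'posemo': 25.0, 'we': 25.0}
print(category_rates(["我们", "喜欢", "这个", "地方"], "zh"))  # {'posemo': 25.0, 'we': 25.0}
print(category_rates(list("我们喜欢这个地方"), "zh"))         # {'posemo': 0.0, 'we': 0.0}
```

The last call shows that the same Chinese sentence, tokenized character by character, matches no multi-character entries, so comparable rates depend on both equivalent dictionaries and equivalent units of analysis.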

Open-vocabulary approaches, such as word embeddings, allow the model to capture diverse and evolving linguistic expressions and to adapt to new words, phrases, and slang. Although tokenization in LLMs typically refers to splitting input into subword or word-level units for model processing, it is crucial to segment texts into linguistically meaningful units so that cross-language comparisons are aligned at the appropriate level of analysis. English, for instance, is typically tokenized at the word level, whereas Chinese is often tokenized at the character level because there are no spaces between words, resulting in misaligned units of analysis. The English word “we” corresponds to the Chinese “我们,” which comprises two characters, whereas “I” and its Chinese equivalent “我” each consist of a single word or character. Therefore, for languages such as Chinese, applying word-segmentation tools (e.g., Jieba, Stanford Segmenter) can help ensure comparable levels of embeddings.

Tokenization and embedding are central to achieving input equivalence in cross-cultural NLP. Although static word embeddings capture general semantic relationships, they often struggle with language-specific features, such as polysemy, morphological complexity, or culturally embedded expressions. This is especially problematic when embeddings are trained on large general-purpose corpora (e.g., Wikipedia or Common Crawl, as in FastText) that may not reflect informal, user-generated social media text or capture the cultural nuances embedded in people’s everyday language use.

Contextual embeddings, such as BERT, particularly its multilingual variants (e.g., mBERT, XLM-R), better capture semantic variation and disambiguate meaning across languages. However, these models also inherit biases from their pretraining data, limiting generalizability to culturally distinct contexts. For low-resource languages, methods such as cross-lingual transfer learning and subword tokenization (e.g., byte-pair encoding) offer partial solutions; yet challenges remain in ensuring that input features are both linguistically valid and culturally representative. Table 6 summarizes commonly used embedding models, their training sources, and potential limitations when applied to social media text in multilingual settings.

Table 6. Examples of Major Pretrained Embedding Models

Note: In both word-embedding and context-embedding categories, models are listed in order of historical progression and increasing relevance to current research.
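To give intuition for the byte-pair encoding mentioned above, the sketch below implements the classic merge-learning loop on a toy English word-frequency table. Pretrained multilingual models ship their own tokenizers (mBERT uses WordPiece, and XLM-R uses a SentencePiece model), so this is illustrative only. Because merges are driven by corpus frequency, languages and registers that are rare in the training corpus tend to be split into more, shorter fragments, which is one way subword tokenization can undermine input equivalence.

```python
from collections import Counter


def learn_bpe(word_counts, num_merges):
    """Learn byte-pair-encoding merges from a word -> frequency mapping.

    Each word starts as a sequence of characters plus an end-of-word marker;
    the most frequent adjacent symbol pair is merged repeatedly.
    """
    vocab = {tuple(word) + ("</w>",): freq for word, freq in word_counts.items()}
    merges = []
    for _ in range(num_merges):
        pairs = Counter()
        for symbols, freq in vocab.items():
            for pair in zip(symbols, symbols[1:]):
                pairs[pair] += freq
        if not pairs:
            break
        best = max(pairs, key=pairs.get)  # ties go to the pair counted first
        merges.append(best)
        new_vocab = {}
        for symbols, freq in vocab.items():
            merged, i = [], 0
            while i < len(symbols):
                if i < len(symbols) - 1 and (symbols[i], symbols[i + 1]) == best:
                    merged.append(symbols[i] + symbols[i + 1])
                    i += 2
                else:
                    merged.append(symbols[i])
                    i += 1
            new_vocab[tuple(merged)] = new_vocab.get(tuple(merged), 0) + freq
        vocab = new_vocab
    return merges, vocab


merges, vocab = learn_bpe({"low": 5, "lower": 2, "newest": 6, "widest": 3}, 3)
print(merges)  # [('e', 's'), ('es', 't'), ('est', '</w>')]
```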

Most recently, LLMs, such as GPT and Llama, have transformed computational analyses with powerful capabilities for sentiment analysis, thematic categorization, and text-based prediction. However, their reliance on massive and heterogeneous training data sets introduces additional linguistic, social, and cultural biases. These embedded biases may systematically affect how the models interpret and classify text across cultural contexts. For example, sarcasm or indirect expressions common in one culture may be misinterpreted by a model trained predominantly on another culture’s discourse. These challenges require careful consideration and validation when adopting these models so that they do not undermine cross-cultural comparisons.

Future research should aim to disentangle data-based variance, which reflects genuine cultural differences, from model-based variance introduced by algorithmic biases. Both closed-vocabulary tools (e.g., LIWC), which rely on expert-curated dictionaries, and transformer-based models (e.g., BERT or GPT) can distort interpretations if such variance is overlooked. Figure 3 illustrates how inferences may be shaped not just by the cultural nature of the source data but also by the biases embedded in the analytic tools themselves. Advancing cross-cultural equivalence requires rigorous validation, triangulation across analytical approaches, benchmarking against culturally grounded references, and incorporation of local expertise. Although native fluency enhances interpretation, technical decisions, including tokenization and embedding strategy, necessitate interdisciplinary collaboration.

Figure 3. Potential sources of bias in deriving inferences from social media data.

Psychological-Ground-Truth Equivalence

Beyond input equivalence, another key challenge in supervised learning is ensuring that the output or psychological ground truths are valid and equivalent because the supervised approach requires both inputs and outputs (i.e., psychological ground truths) to train or supervise the computer’s learning (Ayodele, 2010; Tay et al., 2022). Psychological ground truths are psychological constructs of interest or other outcomes that ML models seek to predict. These ground truths may reflect biases embedded in the training data, leading to systematically biased predictions and conclusions (Tay et al., 2022). Therefore, psychological-ground-truth equivalence focuses on ensuring that outputs provided to the ML models have minimal bias and are measured equivalently across language and cultural groups. In this section, we discuss two different forms for deriving psychological ground truths, self-reports and other-reports, and strategies to achieve equivalence in this process.

Using self- or other-reports from currently available scales or tests is one common way to establish ground truths for the training data set. For example, many ML personality-assessment studies have asked participants to complete self-report personality measures (e.g., International Personality Item Pool, Goldberg et al., 2006; NEO Personality Inventory–Revised, Costa & McCrae, 1992) and used their scores as the outputs for building regression models with the training sample (e.g., Park et al., 2015; Youyou et al., 2015). In multilingual or multicultural studies, ensuring the measurement equivalence and invariance of these scales or tests across language groups is crucial. Although self-reports have been widely used in English contexts, such data can be harder to obtain from non-U.S. populations, which poses challenges for using self-reports as cross-cultural psychological ground truth. Another caveat of using self-reports to establish ground truth is the potential discrepancy between public and private expressions of behavior. Hickman et al. (2022), for instance, found that automated-interview models built on other-rated personality assessments yielded more valid and reliable results than models based on self-reports, suggesting that self-reports may not always accurately portray how people are perceived by others. This is particularly relevant for social media data because posts are public and perceived by others. Recruiting other-raters in non-U.S. populations adds another layer of challenge, but other-reports deserve more consideration in cultures with tight social norms, which could exacerbate the discrepancies between public and private expressions of self (Triandis, 1989).

Researchers can also rely on trained human annotations to establish ground truth with labeled data. This approach has been more commonly adopted in cross-cultural comparison studies. For example, Li et al. (2020) recruited two native-language annotators who independently rated social media posts from X and Sina Weibo for perceived politeness on a scale from least to most polite. In another study focusing on temporal orientations, researchers recruited three bilingual annotators who rated text posts from X and Sina Weibo at the sentence level into four categories of temporal orientations and used their annotation results to build predictive models on temporal orientations (Hou et al., 2024). These practices are well established in cultural-psychology research, in which inductive coding is frequently employed to identify culturally relevant meanings from open-ended materials, such as narratives and self-descriptions (e.g., Kitayama et al., 1997; Markus et al., 2006; Morling & Lamoreaux, 2008; Uchida & Kitayama, 2009). Building on this tradition, we note that cross-cultural annotation efforts in an ML context similarly benefit from careful consideration of who the annotators are.

In general, native speakers of the target languages make the best human annotators because they can pick up language nuances and understand the societal and cultural contexts of the posts. Frame-of-reference training, a process that helps annotators develop a shared understanding of the target construct and rating criteria, is highly encouraged before annotation begins. During training, annotators should independently annotate a small practice sample and then debrief together. Last, checking and reporting interannotator agreement (e.g., the intraclass correlation coefficient; Shrout & Fleiss, 1979) is critical before the validation phase. Some studies have also used MTurk participants to label online text when speakers of the relevant languages are available (Kern et al., 2016; Li et al., 2020); one caveat is that rating quality can suffer when the target construct is not clearly defined.
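The sketch below computes one common agreement index, ICC(2,1) in Shrout and Fleiss's (1979) notation (two-way random effects, single rater, absolute agreement), from the ANOVA mean squares, assuming every annotator rates every post and no ratings are missing. Which ICC form fits depends on the design; for categorical labels, such as discrete temporal-orientation categories, a kappa statistic may be more appropriate. Agreement would typically be computed separately for each language's annotation team.

```python
import numpy as np


def icc_2_1(ratings):
    """ICC(2,1): two-way random effects, single rater, absolute agreement.

    ratings: shape (n_posts, k_annotators); every annotator rates every post.
    """
    x = np.asarray(ratings, dtype=float)
    n, k = x.shape
    grand = x.mean()
    ss_rows = k * ((x.mean(axis=1) - grand) ** 2).sum()  # between-post variation
    ss_cols = n * ((x.mean(axis=0) - grand) ** 2).sum()  # between-annotator variation
    ss_error = ((x - grand) ** 2).sum() - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Worked example data from Shrout and Fleiss (1979): 6 targets rated by 4 judges.
ratings = [
    [9, 2, 5, 8],
    [6, 1, 3, 2],
    [8, 4, 6, 8],
    [7, 1, 2, 6],
    [10, 5, 6, 9],
    [6, 2, 4, 7],
]
print(round(icc_2_1(ratings), 2))  # 0.29
```

The low value here reflects large systematic differences between judges (judge 2 rates everything low), which absolute-agreement ICCs penalize; frame-of-reference training is aimed at reducing exactly this kind of rater effect.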

Model-Performance Equivalence

Finally, model-performance equivalence focuses on determining whether ML models trained in different languages or cultural contexts function comparably at the level of their outputs and inferences. Unlike earlier forms of equivalence that emphasize the design of the study and models, this type of equivalence mostly focuses on the evaluation phase, specifically, whether models trained separately produce comparable predictive accuracy and demonstrate similar structural relationships with theoretically related variables. Establishing model-performance equivalence helps ensure that observed differences across cultural groups reflect genuine psychological or cultural variations rather than methodological artifacts. In this section, we outline two approaches for evaluating model-performance equivalence: (a) comparing predictive-accuracy metrics and (b) assessing the consistency of predictive constructs in nomological networks.

After following comparable approaches to building ML models for each cultural or language group, researchers need to carefully evaluate performance differences to determine whether the models function equivalently and if not, whether the difference results from a genuine psychological difference or a method artifact. For supervised models, this may include comparing predictive-accuracy metrics, such as precision (i.e., the proportion of correctly identified positive cases out of all predicted positives), recall (i.e., the proportion of correctly identified positive cases out of all actual positives), and accuracy (i.e., the proportion of correctly classified cases out of all cases). For unsupervised models, relevant evaluation indices may include reconstruction error (i.e., how well the model reproduces the original input, indicating representation fidelity), silhouette scores (i.e., how well data points form distinct clusters, reflecting latent structure quality across cultures; Messner, 2022), or contrastive loss (i.e., how well semantically similar items are grouped together), depending on the modeling approach.
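A minimal sketch of comparing the supervised metrics defined above across language groups follows. The labels are toy data, and a real comparison would add uncertainty estimates (e.g., bootstrap intervals) and account for differing base rates, which affect precision.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Precision, recall, and accuracy for one group's binary predictions."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    correct = sum(t == p for t, p in pairs)
    return {
        "precision": tp / (tp + fp) if tp + fp else float("nan"),
        "recall": tp / (tp + fn) if tp + fn else float("nan"),
        "accuracy": correct / len(pairs),
    }


def compare_groups(results_by_group):
    """Metrics per group plus the largest between-group gap for each metric."""
    per_group = {g: classification_metrics(t, p) for g, (t, p) in results_by_group.items()}
    gaps = {
        m: max(r[m] for r in per_group.values()) - min(r[m] for r in per_group.values())
        for m in ("precision", "recall", "accuracy")
    }
    return per_group, gaps


truth = [1, 1, 0, 0, 1, 0, 1, 0]
per_group, gaps = compare_groups({
    "en": (truth, [1, 0, 0, 0, 1, 1, 1, 0]),
    "zh": (truth, [1, 0, 0, 0, 0, 0, 1, 0]),
})
print(per_group)
print(gaps)
```

In this toy case, both models reach the same accuracy (0.75), yet the Chinese model is more conservative (precision 1.0, recall 0.5) than the English model (both 0.75). A single headline metric would hide that difference, which is why reporting several metrics per group is useful.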

Another way to evaluate model-performance equivalence is to examine whether the predicted psychological construct behaves similarly across cultures in its associations with other theoretically related variables. Rather than interpreting cultural differences in model outputs or feature weights at face value, researchers should assess whether the relationships between the predicted construct and its nomological network are consistent across groups. This approach offers a more theoretically grounded and interpretable basis for evaluating whether models function equivalently across cultural contexts. For example, Li et al. (2020) found that although different lexical features predicted politeness on Weibo and X (e.g., gratitude vs. swearing), positive emotions correlated positively with politeness, and taboo language correlated negatively across both platforms, aligning with previous research. Conducting comparative correlational analyses between the predicted construct and its nomological network provides an initial evaluation of whether the two or more ML models built in different languages or cultures function equivalently. Researchers should draw on prior theory to specify expected correlational patterns and conduct such analyses to evaluate the performance equivalence across models as a starting point.
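One simple comparative correlational analysis tests whether a predicted construct correlates equally strongly with a theoretically related variable in two independent samples, using Fisher's r-to-z transformation. The sketch below assumes Pearson correlations from independent groups (e.g., Weibo users vs. X users); the values used are hypothetical and do not reproduce Li et al. (2020).

```python
import math


def compare_correlations(r1, n1, r2, n2):
    """Two-sided test of H0: rho1 == rho2 for two independent samples (Fisher r-to-z)."""
    if n1 <= 3 or n2 <= 3 or not (-1 < r1 < 1 and -1 < r2 < 1):
        raise ValueError("need n > 3 and |r| < 1 in both groups")
    z1, z2 = math.atanh(r1), math.atanh(r2)
    se = math.sqrt(1 / (n1 - 3) + 1 / (n2 - 3))
    z = (z1 - z2) / se
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value from the standard normal
    return z, p


# Hypothetical: predicted politeness vs. positive emotion in two language samples.
z, p = compare_correlations(0.50, 103, 0.20, 103)
print(round(z, 2), round(p, 3))
```

A nonsignificant difference is not, by itself, evidence that the correlations are equivalent; confidence intervals on the difference, or a formal equivalence test, say more. Checking that the expected signs hold in every group is a useful first step alongside this test.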

A robust evaluation strategy involves triangulating traditional psychometric analyses with model-interpretability tools. First, researchers can test whether the predicted construct exhibits consistent associations with relevant external variables across groups using techniques such as correlation comparisons, moderated regression, or multigroup structural equation modeling. Consistent structural relationships provide evidence of nomological equivalence, suggesting a shared psychological function despite differences in surface features.
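Moderated regression expresses the same question as a single model: regress the external variable on the predicted construct, a group indicator, and their product, and then inspect the interaction term. The sketch below uses ordinary least squares via `numpy.linalg.lstsq` with classical (homoskedastic) standard errors; the function name and the simulated data in the usage lines are hypothetical.

```python
import numpy as np


def moderated_regression(x, y, group):
    """OLS of y on x, group (0/1), and x * group.

    The interaction coefficient estimates how much the x -> y slope differs between
    the two groups; a value near zero is consistent with, but does not prove, a
    shared structural relationship.
    """
    x, y, g = (np.asarray(a, dtype=float) for a in (x, y, group))
    X = np.column_stack([np.ones_like(x), x, g, x * g])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    residuals = y - X @ coef
    sigma2 = residuals @ residuals / (len(y) - X.shape[1])
    se = np.sqrt(np.diag(sigma2 * np.linalg.inv(X.T @ X)))
    names = ["intercept", "slope_group0", "group_shift", "slope_difference"]
    return {name: (c, s) for name, c, s in zip(names, coef, se)}


# Hypothetical simulation: the slope is 0.5 in group 0 and 0.8 in group 1.
rng = np.random.default_rng(0)
x = rng.normal(size=200)
g = np.repeat([0, 1], 100)
y = 1 + 0.5 * x + 0.3 * x * g + 0.05 * rng.normal(size=200)
print(moderated_regression(x, y, g)["slope_difference"])  # (estimate, standard error)
```

Multigroup structural equation modeling generalizes this idea to latent variables and multiple paths but requires dedicated SEM software.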

Second, researchers could examine whether the ML models rely on similar or distinct linguistic features across groups by employing model-interpretability tools, such as SHapley Additive exPlanations (SHAP) values, attention weights, or feature-importance scores. These techniques help uncover the specific input features that drive predictions for each cultural group. Recent work (e.g., C. Liu et al., 2021; Rheault & Cochrane, 2020) has shown that multilingual models often rely on culturally distinct linguistic cues even when producing similar predictive outcomes. However, the differences in feature reliance raise an important question: Do these differences reflect legitimate cultural variation in construct expression, or do they indicate a lack of equivalence in model functioning? To resolve this ambiguity, interpretability results should be considered together with nomological network validation. If the model draws on culturally specific features but still maintains the expected structural relationships with related variables, then the model may reflect valid expressions of a shared construct. Conversely, if both feature importance and predictive relationships diverge across groups, this may suggest a lack of measurement or functional equivalence. Integrating these approaches allows researchers to distinguish construct-equivalent models that adapt to cultural expression from models whose divergent functioning compromises comparability. A combined strategy helps ensure that cross-cultural ML models capture true psychological similarities and differences rather than methodological artifacts.
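SHAP values and attention weights require model-specific libraries, so the sketch below swaps in permutation importance, a simpler, model-agnostic technique. For each feature, it shuffles that feature's column and records how much prediction error increases. It then compares the top-ranked features between two groups. The linear models, the simulated data, and the three hypothetical features (imagine rates of gratitude words, swear words, and emojis) are illustrative only, and importance is computed on the training data for brevity, although held-out data would be preferable.

```python
import numpy as np


def fit_linear(X, y):
    """Least-squares linear model; returns a predict function."""
    X1 = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(X1, y, rcond=None)
    return lambda Z: np.column_stack([np.ones(len(Z)), Z]) @ coef


def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Mean increase in squared error when each feature column is shuffled."""
    rng = np.random.default_rng(seed)
    base = np.mean((predict(X) - y) ** 2)
    importances = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        for _ in range(n_repeats):
            Xp = X.copy()
            Xp[:, j] = rng.permutation(Xp[:, j])
            importances[j] += np.mean((predict(Xp) - y) ** 2) - base
    return importances / n_repeats


def top_k_overlap(imp_a, imp_b, k=2):
    """Jaccard overlap between the k most important features in two groups."""
    top_a = set(np.argsort(imp_a)[::-1][:k].tolist())
    top_b = set(np.argsort(imp_b)[::-1][:k].tolist())
    return len(top_a & top_b) / len(top_a | top_b)


# Simulated groups: the outcome depends on feature 0 in group A and feature 1 in group B.
rng = np.random.default_rng(1)
X_a, X_b = rng.normal(size=(300, 3)), rng.normal(size=(300, 3))
y_a = 2 * X_a[:, 0] + 0.1 * rng.normal(size=300)
y_b = 2 * X_b[:, 1] + 0.1 * rng.normal(size=300)
imp_a = permutation_importance(fit_linear(X_a, y_a), X_a, y_a)
imp_b = permutation_importance(fit_linear(X_b, y_b), X_b, y_b)
print(top_k_overlap(imp_a, imp_b, k=1))  # 0.0: the groups rely on different cues
```

A low overlap alone does not establish nonequivalence; as argued above, it should be read together with the nomological-network checks before concluding that the models function differently.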

Conclusion

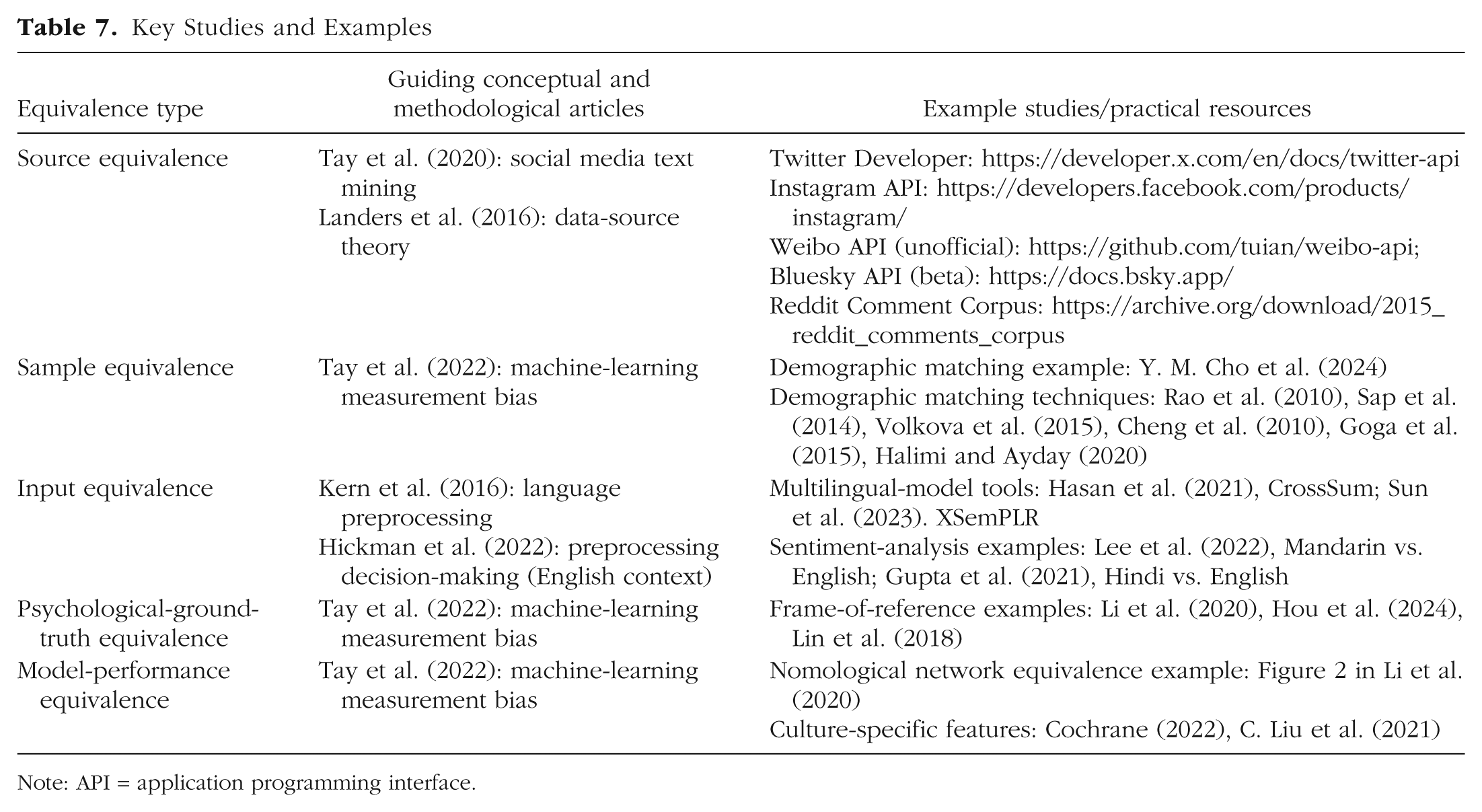

The advent of big data, particularly online social media data, and ML techniques, notably NLP, presents unprecedented opportunities to explore the nuances of cultural similarities and differences. These contemporary methods facilitate the analysis of large-scale, organic data, offering a more authentic and comprehensive view of cultural expressions and interactions. In this article, we explored methodological equivalence in the application of ML techniques to cross-cultural psychological research, focusing on cross-language equivalence in social media text. Specifically, we proposed an equivalence framework that offers a new lens on equivalence in the context of big data and ML, encompassing source equivalence, sample equivalence, input equivalence, psychological-ground-truth equivalence, and model-performance equivalence. To complement the conceptual descriptions provided earlier (see Table 2), Table 7 summarizes key studies and practical resources discussed throughout the article for each type of equivalence.

Table 7. Key Studies and Examples

Note: API = application programming interface.

Although our framework builds on long-standing concerns of bias and equivalence in cross-cultural psychology (e.g., construct, sample, and measurement equivalence; Van de Vijver & Tanzer, 2004), it extends these ideas to meet the unique demands of naturally occurring, unstructured data generated on social media platforms and analyzed using ML approaches. Compared with classical approaches that assume discrete measurement instruments and low-dimensional feature spaces, our framework addresses the complexities of modeling constructs across cultures when working with high-dimensional, algorithmically processed behavioral data.

We hope this framework serves as an adaptable tool to assist researchers, reviewers, and practitioners in navigating the intricate landscape of cross-cultural studies in big data; facilitates more cross-cultural studies beyond the traditional WEIRD samples; and enables a richer and more diverse representation of global cultures. We also hope this work will inspire further research on the methodological issues of equivalence in cross-cultural studies and more cross-cultural research using social media text posts and advanced computational techniques.

Footnotes

Transparency

Action Editor: Kongmeng Liew

Editor: David A. Sbarra

Author Contributions