Abstract

Research-data availability contributes to the transparency of the research process and the credibility of educational-psychology research and of science in general. Recently, there have been many initiatives to increase the availability and quality of research data, and many research institutions have adopted research-data policies. This growing awareness might have raised the rate at which research data are shared in empirical articles. To test this idea, we coded 1,242 publications from six educational-psychology journals and the psychological journal

Keywords

Research-data availability is one of the keys to research transparency and to the credibility of scientific findings (Asendorpf et al., 2013; Bond-Lamberty, 2016; Hardwicke et al., 2018). From a societal perspective, reliable and transparent scientific findings are particularly critical when they form the basis for political and practical recommendations, as in educational research (including educational psychology; Fleming et al., 2021; Patall, 2021). Available research data not only make the results based on them easier to comprehend but also serve as a valuable source that can be further processed into secondary data and used for secondary data analysis (Weston et al., 2019). Thus, data sharing as part of open science acts as a scientific accelerator and contributes significantly to scientific progress in the tradition of the scientific paradigms formulated by Popper (1959, 1963) and Merton (1973). Yet there is still considerable room for growth in the sharing of research data (Bond-Lamberty, 2016). An earlier study showed that 73% of authors did not share research data from their published studies (Wicherts et al., 2006). This is remarkable because, by publishing their articles, these authors had agreed to share research data for reanalysis as specified by the American Psychological Association’s (APA) Certification of Compliance With APA Ethical Principles (APA, 2001). Furthermore, research-data sharing is related to the quality of reported statistical results (Wicherts et al., 2011). Because the availability of research data declines rapidly with article age (Tedersoo et al., 2021; Vines et al., 2014), both scientific journals and research institutions have in recent years adopted policies on handling research data, with the goal of archiving research data in a sustainable way. Boccali et al. (2021) understood sustainability of research data to mean long-term preservation, accessibility, and interoperability.
In the present study, we ask whether research-data policies (at both the journal level and the research-institution level) affect research-data availability in educational psychology.

Since the 2014 series of articles published in the journal

Several initiatives, such as the GO FAIR initiative (Mons et al., 2020; Velterop & Schultes, 2020), aim to overcome low research-data availability. Scientific journals have gradually adapted their author notes to recommendations for sharing research data and now specify, more or less concretely, whether and how research data should or must be shared (“Time to Recognize Authorship of Open Data,” 2022). One of many measures to address data-transparency deficits is to require high levels of data transparency in line with the Guidelines for Promoting Transparency and Openness (TOP; Center for Open Science, 2020; Haroz, 2018; Nosek et al., 2015). The TOP guidelines provide a template for improving transparency in research published in scientific journals. Their data-transparency levels classify journals’ data policies into categories of ascending strictness: Level 0 = nonimplementation (i.e., the journal merely recommends data sharing or has not yet implemented a data policy); Level 1 = an article must include a link to the research data; Level 2 = data must be posted to a trusted repository, and exceptions must be explicitly stated; and Level 3 = data must be posted to a trusted repository, and reported analyses will be reproduced independently before publication (see also Table 1). When the journal

Data-Transparency Levels

On a systemic level, research institutions (e.g., universities or nonuniversity research institutions) have implemented research-data policies, and funding agencies have formulated requirements for handling research data (European Research Council, 2019; German Research Foundation, 2022).

The Costs and Benefits of Available Research Data

Note that making research data available incurs costs (Perry & Netscher, 2022). The costs of data curation and of implementing transparency standards vary depending on, among other things, the discipline, study design, data complexity, and the personal information included in the data (Hensel, 2021). These costs should therefore be estimated as accurately as possible in advance, when a research project is being planned. Measuring costs in research-data management is complex, and research has so far produced only initial approaches. Measuring opportunity costs, in the sense of forgone alternatives (e.g., investing the same effort in making the argumentation more comprehensible instead), is much more difficult because the alternatives are not well known and are rarely considered. Furthermore, determining the optimum in research-data availability, that is, the level of research-data-related measures that maximizes the difference between their benefits and their costs (from a microeconomic perspective, the point at which marginal benefits equal marginal costs), would be promising but is still pending.
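The optimum mentioned above can be written in standard microeconomic form. As a sketch only, let x denote a hypothetical measure of the effort invested in making research data available, and let B(x) and C(x) be the corresponding benefit and cost functions; both functions are illustrative assumptions, because, as noted, neither has yet been estimated for research data.

```latex
x^{*} = \arg\max_{x \ge 0} \bigl[\, B(x) - C(x) \,\bigr]
\qquad\Longrightarrow\qquad
B'(x^{*}) = C'(x^{*}) \quad \text{(interior optimum)}
```

The first-order condition is necessary at an interior optimum and is also sufficient when B is concave and C is convex: at x*, one additional unit of data-related effort yields exactly as much benefit as it costs.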

Despite all this, the benefits of making research data available are manifold and range from replicability of scientific findings (Camerer et al., 2018) to increased citation rates (Piwowar et al., 2007; Piwowar & Vision, 2013). Articles that include statements that link to data in a repository have an up to 25.36% (±1.07%) higher citation impact on average (Colavizza et al., 2020).

Study Overview and Research Questions

We report a study in which we analyzed the availability of research data in articles published in six empirical journals covering topics in education research (i.e., educational psychology, learning, and education) in the years 2018 and 2020. As a baseline, we analyzed the research-data availability in

The general awareness of the importance of research data has increased substantially (Kidwell et al., 2016). The FAIR (findable, accessible, interoperable, reusable) data concept was published in 2016 (Wilkinson et al., 2016). Because it takes time for new concepts to be adopted in research, we chose 2018 as the starting point. We thus ask as Research Question 1 whether the availability of research data increased from articles published in 2018 to articles published in 2020.

The journal’s policy regarding the handling of research data is the basis for preparing and handling the submission and, thus, should be instructive for both the authors and the editor.

Likewise, the fact that a university or research institution has officially adopted a research-data policy should increase researchers’ awareness. Therefore, we assume that the availability of research data is higher for articles whose corresponding author is from an institution that has implemented a research-data policy. Because awareness is the assumed mechanism, we counted any research-data policy, even one that only recommends research-data sharing. Research Question 3 thus asks whether the research-data policies of the corresponding author’s institution affect the availability of research data.

Work published in the selected educational-psychology journals might differ from the work published in

Method

We report how we determined our sample, all data exclusions, all measures, research questions, and analytic plans in the study.

Sample

The initial screening began with analyzing the publication lists (e.g., annual reports) of German Leibniz institutes doing research in this field—the Leibniz Institute for Research and Information in Education (DIPF), the German Institute for Adult Education - Leibniz Centre for Lifelong Learning (DIE), the Leibniz Institute for Science and Mathematics Education (IPN), and the Leibniz-Institut für Wissensmedien (IWM). This resulted in a list of 15 journals. We analyzed the data-transparency levels of the following journals (see below and Table 2):

Detailed Overview of the 1,242 Analyzed Articles

Note: We removed one retracted article before data analysis.

We initially intended to include one journal per data-transparency level in the analysis sample. However, because there was no journal with Data Transparency Level 3, we changed the sampling strategy to consider both the 2018 Social Sciences Citation Index (SSCI) and the data-transparency level. We assumed that both the importance of a journal (i.e., journal rank) and the free availability of research (i.e., open access) might stimulate data sharing. We thus first included the highly influential journals

Next, we selected the only journals with Data Transparency Level 1:

Finally, we cross-checked the final list against information from the Web of Science (i.e., Journal Citation Reports) to ensure that we had included all relevant journals with a data-transparency level higher than 0. For this purpose, we analyzed the author guidelines of all 59 journals in the Psychology, Educational category of the 2018 Journal Citation Reports. No journals beyond those already selected had a data-transparency level higher than 0. Thus, our sample contained all relevant journals of this category.

Measures

Data-transparency level

We analyzed the journals’ editorial policies (i.e., author notes or instructions for authors). In particular, we applied the data-transparency levels as suggested by Nosek et al. (2015) and Haroz (2018). Data Transparency Level 0 was assigned if the policy merely encourages data sharing or says nothing about it. Regarding the journals in our sample,

After research results are published, psychologists do not withhold the data on which their conclusions are based from other competent professionals who seek to verify the substantive claims through reanalysis and who intend to use such data only for that purpose, provided that the confidentiality of the participants can be protected and unless legal rights concerning proprietary data preclude their release. . . . APA expects authors to have their data available throughout the editorial review process and for at least 5 years after the date of publication. (“Guide for Authors—Journal of Educational Psychology,” 2021)

Finally, the

Data Transparency Level 1 was assigned if the policy requires articles to state whether data are available and, if so, where to access them. The

And The journal strongly encourages that all datasets on which the conclusions of the paper rely should be available to readers. We encourage authors to ensure that their datasets are either deposited in publicly available repositories (where available and appropriate) or presented in the main manuscript or additional supporting files whenever possible. (“Guide for Authors—Instructional Science,” 2021)

Data Transparency Level 2 was assigned if the policy states that the data must be posted to a trusted repository and that exceptions must be identified.

The journal expects that when possible all data supporting the results in papers published are archived in an appropriate public archive offering open access and guaranteed preservation. . . . All papers need to be supported by a data archiving statement and the data set must be cited in the Methods section. The paper must include a link to the repository in order that the statement can be published. . . . In some cases, despite the authors’ best efforts, some or all data or materials cannot be shared for legal or ethical reasons, including issues of author consent, third party rights, institutional or national regulations or laws, or the nature of data gathered. In such cases, authors must inform the editors at the time of submission. It is understood that in some cases access will be provided under restrictions to protect confidential or proprietary information. Editors may grant exceptions to data access requirements provided authors explain the restrictions on the data set and how they preclude public access, and, if possible, describe the steps others should follow to gain access to the data. (“Guide for Authors—British Journal of Educational Psychology,” 2021)

Finally, Data Transparency Level 3 would have been assigned if a journal required the data to be posted to a trusted repository and the reported analyses to be reproduced independently before publication. We did not identify a journal with this data-transparency level. We discussed the contents of each journal’s research-data policy and assigned its data-transparency level accordingly; we did not calculate interrater reliability.

Research-data policies

After identifying the website URL of the corresponding author’s institution (e.g., www.die-bonn.de), we used the following search string on Google: “research data policy site:institution” (e.g., “research data policy site:www.die-bonn.de”) and downloaded the research-data policy if available. Note that we considered only official policies or guidelines. In particular, we did not consider statements concerning best practices or tips on research-data management published on the institutions’ websites. An example of a strict research-data policy is that of the University of New South Wales [UNSW] Sydney (2019, April 18), which describes data accessibility as follows:

Researchers must make available any research data and materials related to publications for discussion with other researchers. Where confidentiality provisions apply (e.g., the researchers or the institution have given undertakings to third parties, such as the subjects of the research), it is desirable for researchers to keep data in a way that allows necessary third parties to reference the information without breaching such confidentiality. (Data Accessibility section)

One quality feature of this policy is that it states the date by which UNSW Sydney will review it. An example of a less strict research-data policy is that of University College London (2013, August 2), which states that “Researchers should . . . develop and record appropriate procedures and processes for the collection, storage, use, re-use, access, and retention of the research data associated with their research program” (Researchers section).
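The search procedure described above can be sketched in Python as follows. The helper names build_policy_query and google_search_url are hypothetical; the sketch only builds the query string and a standard Google search URL from an institution’s website address and does not retrieve or assess any search results.

```python
from urllib.parse import urlencode, urlsplit


def build_policy_query(institution_url: str) -> str:
    """Return 'research data policy site:<host>' (hypothetical helper).

    Accepts a bare host name (e.g., "www.die-bonn.de") or a full URL.
    """
    # urlsplit() puts a bare host name into .path, not .netloc,
    # so prepend a scheme before extracting the host.
    if "://" not in institution_url:
        institution_url = "https://" + institution_url
    host = urlsplit(institution_url).hostname
    if not host:
        raise ValueError(f"no host name found in {institution_url!r}")
    # 'site:' is written directly before the host name, without a space.
    return f"research data policy site:{host}"


def google_search_url(query: str) -> str:
    """Return a Google web-search URL that passes the query in the 'q' parameter."""
    return "https://www.google.com/search?" + urlencode({"q": query})


query = build_policy_query("www.die-bonn.de")
print(query)  # research data policy site:www.die-bonn.de
print(google_search_url(query))
```

Normalizing the input to a host name first means that a deep link to an institutional page and the bare domain yield the same query.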

To ensure that a policy was relevant for a specific article, we checked the release date of each policy. If an article was published before the release date of the corresponding institution’s research-data policy, it was coded as published without a policy. Because the analysis considered only the presence (vs. absence) of an official research-data policy, we took into account only the release date of the initial policy, even if updated versions were published later. For five institutions, we could not retrieve the publication dates of their research-data policies and therefore inquired with the relevant offices. After 5 weeks, only two institutions had responded, and we coded the availability of their research-data policies accordingly. For the remaining three institutions, availability was coded as “NA” (six articles in total).
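The date-based coding rule above can be expressed as a small function. This is a sketch under our own naming (code_policy_status and its parameters are hypothetical), in which "yes" means that the article was published on or after the release date of the institution’s first research-data policy.

```python
from datetime import date
from typing import Sequence


def code_policy_status(article_published: date,
                       policy_releases: Sequence[date],
                       release_date_unknown: bool = False) -> str:
    """Code an article as 'yes', 'no', or 'NA' (hypothetical helper).

    policy_releases: release dates of all known versions of the institution's
        official research-data policy (empty if there is no such policy).
    release_date_unknown: True if a policy exists but its date could not be
        retrieved, even after inquiring with the institution.
    """
    if release_date_unknown:
        return "NA"
    if not policy_releases:
        return "no"
    # Only the initial release counts; later updates are ignored.
    first_release = min(policy_releases)
    # Articles published before the first release count as "without policy".
    return "no" if article_published < first_release else "yes"


print(code_policy_status(date(2018, 6, 1), [date(2019, 4, 18)]))  # no
print(code_policy_status(date(2020, 6, 1), [date(2021, 3, 1), date(2019, 4, 18)]))  # yes
```

Taking the minimum over all versions implements the rule that an updated policy does not move the relevant date forward.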

Note that changes to Google’s search algorithm or to the institutional web pages might affect whether the policies can be found. This is beyond our control.

Prop data available is the number of articles with shared data relative to the number of empirical articles (
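A minimal Python sketch of this proportion follows; the function name prop_data_available is hypothetical. The counts are those reported in the Results for Research Question 1 (2018 and 2020), plus their pooled total.

```python
def prop_data_available(n_shared: int, n_empirical: int) -> float:
    """Share of empirical articles with available research data (0 to 1)."""
    if n_empirical <= 0:
        raise ValueError("n_empirical must be positive")
    if not 0 <= n_shared <= n_empirical:
        raise ValueError("n_shared must lie between 0 and n_empirical")
    return n_shared / n_empirical


# (label, articles with shared data, empirical articles)
for label, shared, empirical in [("2018", 1, 314), ("2020", 24, 335), ("pooled", 25, 649)]:
    print(label, f"{100 * prop_data_available(shared, empirical):.2f}%")
# 2018 0.32%
# 2020 7.16%
# pooled 3.85%
```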

Research questions and analytic plans

Research Questions 1 to 3 and their related analytic plans were determined before data collection and data analysis. The fourth research question and its analytic plan were developed during data analysis.

Data analysis

Overview

We coded 1,242 scientific articles (including one retracted article, which we excluded before statistical analysis), of which 1,167 (

Flowchart depicting the different stages of article selection. Of the initial 1,242 articles, only 350 included a valid link to the corresponding research data. “All articles” includes one retracted article. See also Table 2.

Statistical tests

The analysis of the research questions is based on the articles published in the educational psychology journals (

Results

Research Question 1: Is the availability of research data increasing between articles published in 2018 and 2020?

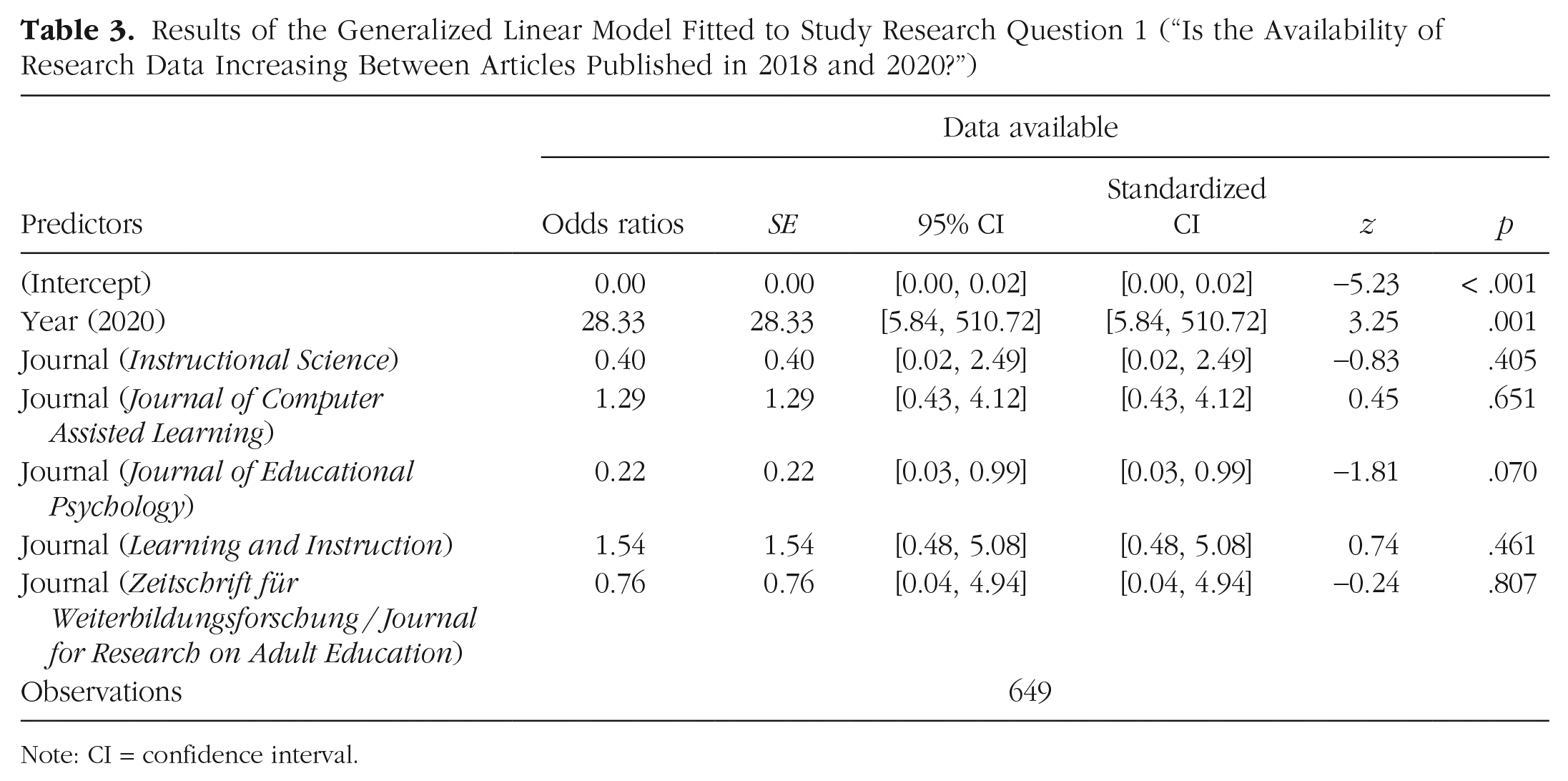

The fitted GLM included year (2018, 2020) as a fixed effect while controlling for journal (for results, see Table 3). In line with Research Question 1, data availability increased significantly from 0.32% (one of 314 articles) in 2018 to 7.16% (24 of 335 articles) in 2020, odds ratio [

Results of the Generalized Linear Model Fitted to Study Research Question 1 (“Is the Availability of Research Data Increasing Between Articles Published in 2018 and 2020?”)

Note: CI = confidence interval.
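The odds ratio for Research Question 1 comes from a GLM that also controls for journal. To illustrate what an odds ratio expresses, the following Python sketch computes a crude (unadjusted) odds ratio with a Wald 95% confidence interval directly from the reported counts. This is a simplification and not the analysis reported in Table 3: it ignores the journal covariate, so its estimate need not match the model-based one, and its interval is wide because only one 2018 article shared data. The function name crude_odds_ratio is hypothetical.

```python
import math
from statistics import NormalDist


def crude_odds_ratio(a: int, b: int, c: int, d: int, level: float = 0.95):
    """Unadjusted odds ratio and Wald confidence interval for a 2x2 table.

    a = data shared, group 1    b = data not shared, group 1
    c = data shared, group 0    d = data not shared, group 0
    """
    if 0 in (a, b, c, d):
        raise ValueError("zero cell: the Wald interval is undefined")
    odds_ratio = (a * d) / (b * c)
    # Standard error of log(OR) (Woolf's method).
    se_log = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    z = NormalDist().inv_cdf(0.5 + level / 2)
    lower = math.exp(math.log(odds_ratio) - z * se_log)
    upper = math.exp(math.log(odds_ratio) + z * se_log)
    return odds_ratio, lower, upper


# Group 1 = 2020 (24 of 335 shared); group 0 = 2018 (1 of 314 shared).
or_, lo, hi = crude_odds_ratio(24, 335 - 24, 1, 314 - 1)
print(f"crude OR = {or_:.2f}, 95% CI [{lo:.2f}, {hi:.2f}]")
```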

Research Question 2: Do the data-transparency levels of the scientific journals affect the availability of research data?

The fitted GLMER included data-transparency level (0, 1, 2) as a continuous fixed effect, year as a control variable, and journal as a random intercept (for results, see Table 4). Data sharing was 3.08% (10 of 325 articles) at Data Transparency Level 0, 4.09% (9 of 220 articles) at Data Transparency Level 1, and 5.77% (6 of 104 articles) at Data Transparency Level 2. Contrary to the expectation underlying Research Question 2, data-transparency levels at the journal level were not associated with research-data availability,

Results of the Generalized Linear Mixed-Effect Model Fitted to Study Research Question 2 (“Do the Data-Transparency Levels of the Scientific Journals Affect the Availability of Research Data?”)

Note: CI = confidence interval.
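Research Question 2 treats the data-transparency levels (0, 1, 2) as a continuous predictor, that is, it tests a linear trend across levels. As a rough illustration of that idea only, the following sketch swaps in a Cochran-Armitage trend test on the pooled counts reported for Research Question 2. It is not the GLMER in Table 4: it ignores year, has no random intercept for journal, and relies on a normal approximation despite few data-sharing articles per level. The function name cochran_armitage is our own.

```python
import math


def cochran_armitage(successes, totals, scores):
    """Cochran-Armitage test for a linear trend in proportions.

    Returns (z, two-sided p) based on the normal approximation.
    """
    n_total = sum(totals)
    p_bar = sum(successes) / n_total  # pooled proportion under H0
    # Score-weighted sum of observed minus expected successes.
    t_stat = sum(s * (x - n * p_bar) for s, x, n in zip(scores, successes, totals))
    s_n = sum(s * n for s, n in zip(scores, totals))
    s2_n = sum(s * s * n for s, n in zip(scores, totals))
    variance = p_bar * (1 - p_bar) * (s2_n - s_n ** 2 / n_total)
    z = t_stat / math.sqrt(variance)
    p_two_sided = math.erfc(abs(z) / math.sqrt(2))
    return z, p_two_sided


# Levels 0, 1, 2: articles with shared data / all empirical articles.
z, p = cochran_armitage(successes=[10, 9, 6], totals=[325, 220, 104], scores=[0, 1, 2])
print(f"z = {z:.2f}, p = {p:.3f}")
```

Using the level numbers themselves as scores mirrors the continuous coding of the predictor: each step from one level to the next is assumed to have the same effect.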

Research Question 3: Do the research-data policies of the corresponding author’s institution affect the availability of research data?

The fitted GLM included whether the corresponding author’s institution had adopted a research-data policy (yes, no) as a fixed effect while controlling for year. Data sharing at institutions without an implemented research-data policy (16 of 394 articles; 4.06%) did not differ from data sharing at institutions with an implemented research-data policy (9 of 255 articles; 3.53%),

Results of the Generalized Linear Model Fitted to Study Research Question 3 (“Do the Research-Data Policies of the Corresponding Author’s Institution Affect the Availability of Research Data?”)

Note: CI = confidence interval.

Flowchart depicting the availability of research data as a function of whether the corresponding author’s institution has adopted an official research-data policy or not for the years (left) 2018 and (right) 2020.

Research Question 4: Is the work reported in the educational-psychology journals substantially different from the work reported in Cognition in terms of secondary data analysis?

As a control, we explored the differences in data availability between the work published in

Flowchart depicting the distribution of primary and secondary data analyses in

Discussion

In this study, we examined how the availability of research data in educational psychology has changed over time as a function of institutional context. Research-data availability was generally low (3.85% compared with 62.74% in

We did not observe an influence of either the editorial policy (as operationalized via the journals’ data-transparency levels) or the availability of a research-data policy at the level of the corresponding author’s institution. We controlled for type of analysis (primary vs. secondary data analysis), which might differ between the journal types (

The role of the author guidelines and the editorial actions

We analyzed the guides for authors of the evaluated journals and found that the data-transparency level is not predictive of actual data availability. Whereas both the author guidelines of

The role of institutional research-data-management policies

A surprising finding of our analysis is that research-data availability does not differ between universities and research institutes that have implemented a research-data policy and those that have not. We can only speculate about the reasons. First, authors might not be aware of such a policy and, hence, might not know how research data should be handled. Second, although researchers might know how to manage research data according to the institutional research-data policy, they might balk at the effort of storing the data in a repository because data curation incurs significant costs (Perry & Netscher, 2022). This is a rational strategy if nonadherence to such guidelines is not sanctioned, for example, through funding restrictions. However, we do not believe that sanctions are constructive or practicable. Instead, we think that it is more purposeful to incentivize researchers (Mellor, 2021). For that, it is essential to get an overview of research-data practices. Thus, research institutions should systematically monitor and document research-data output and outcomes (e.g., usage, citations), as they do for scientific publications. Researchers could add this information to their academic CV or web page. Alternatively, awards for useful and FAIR data sets granted by universities, research institutes, or research foundations could offer a way to boost data sharing (van der Zee & Reich, 2018).

Toward an integrated data-management system

In the following, we identify four dimensions describing different but interrelated aspects to increase research-data availability: (1) the researchers, (2) the research institutions, (3) the scientific journals (including editors and reviewers), and (4) how technical solutions could support increasing research-data availability.

First, we assume that the greatest potential for increasing the availability of research data lies with the researchers. Therefore, we consider it essential to improve data literacy (Ridsdale et al., 2015) and to educate researchers about the legal regulations and the benefits of shared research data. Such teaching units should cover the requirements of (national and international) funders, institutional research-data policies, and the guidelines for safeguarding good research practice (German Research Foundation, 2019; Science Europe, 2021). They should also convey the benefits of sharing research data. For example, scientific articles including statements linking to data in a repository have an up to 25.36% higher citation impact on average (Colavizza et al., 2020). More research is needed to study the reasons and motives for (not) sharing research data at the level of individual researchers (Linek et al., 2017), possible interventions, and their potential effectiveness. The results of such studies are highly relevant to further developing the academic incentive system.

Second, we believe that professionalizing institutional data management is key to optimal data curation and increased data availability (Hardwicke et al., 2018; Vines et al., 2013). As data-management requirements have increased over the years (e.g., FAIR criteria; Wilkinson et al., 2016), professional research-data managers or data stewards should be an integral part of every research project. Their tasks should include supporting the preparation of a research-data-management plan (including data descriptors). Recently, on the basis of an idea from Science Europe (2018, 2021), discipline-specific, standardized data-management plans have been developed (Bongartz & Kaluza, 2022; “Domain Data Protocols for Educational Research,” 2022), and, at least in Germany, a transfer to other disciplines is planned via the National Research Data Infrastructure Germany (NFDI), which is organized by discipline (e.g., Consortium for the Social, Behavioural, Educational, and Economic Sciences [KonsortSWD]). Note that the NFDI is the German counterpart to the European Open Science Cloud (EOSC). Furthermore, research-data managers should also assist in applying data-privacy policies (when human participants are involved) and in the final deposition of the research data in a trusted repository. This last step should consider the FAIR-data criteria (Wilkinson et al., 2016). In particular, FAIR data must include metadata (i.e., data descriptors) that are complete, of high quality, and machine-actionable.

The FAIR-data criteria advance the Open Data Concept, which traces back to 2006. According to the Open Knowledge Foundation’s (2022)

The FAIR principles, although inspired by Open Science, explicitly and deliberately do not address moral and ethical issues pertaining to the openness of data. In the envisioned Internet of FAIR Data and Services, the degree to which any piece of data is available, or even advertised as being available (via its metadata) is entirely at the discretion of the data owner. FAIR only speaks to the need to describe a process – mechanised or manual – for accessing discovered data. . . . None of these principles necessitate data being “open” or “free.”

In a nutshell, compared with open data, the FAIR-data concept is better adapted to the specific needs of the research cycle. We suggest that the FAIR-data criteria guide institutional research-data management and policies, including data handling and final deposition in a trusted repository (Wilkinson et al., 2016).

We further suggest expanding institutional support for research-data management. Professionalization in research-data management could also address the problem of suboptimal data curation (Hardwicke et al., 2018). Over the past decade, a plethora of new job titles has emerged (Tammaro et al., 2019) whose job profiles, roles, and required competencies differ nationally and internationally. Given the long-standing lack of common terminology, these positions and skills still need to be more clearly defined and more highly valued at the institutional level. Research-data managers, data scientists, data librarians, data curators, and data stewards at the institutional level, for example, can support researchers in data handling along the research-data cycle (e.g., creating metadata, dealing with legal issues such as licensing, and data ingest).

Third, we believe that scientific journals play an essential role in increasing the availability of research data. This role can be broadly divided into editorial tasks and duties and infrastructural components (e.g., labeling scientific articles with published data). Most importantly, editors-in-chief should adopt a research-data policy. We suggest at least Data Transparency Level 2 (data must be posted to a trusted repository; exceptions must be identified at article submission). In the editorial process, all persons involved (i.e., editors, reviewers) must be aware of this policy and incorporate it into the overall decision-making process. During review and revision, attention should be paid to the availability of research data, and if the data are not available, appropriate advice should be given to the authors. This could also reduce the proportion of articles reporting invalid links to research data, which was much higher for the educational-psychology journals than for

Fourth, we believe that low-threshold technical solutions for research-data management should support the whole research process. Currently, there are some promising approaches, such as the Research Data Management Organiser (RDMO; RDMO Research Data Management Organiser, 2022) or ZPID’s DataWiz (2022). Whereas RDMO can be customized to discipline-specific and institutional needs (“Domain Data Protocols for Educational Research,” 2022), DataWiz is already tailored to the needs of psychology. Both support researchers during a research project, which helps ensure that data-related concerns are addressed from the start. Such technical solutions should be part of researchers’ training and incorporated into research projects.

Limitations

The present study is based on publicly available data published in scientific articles. Yet research-data availability might also be influenced by background factors such as the structure of the journals’ submission processes, reviewer initiatives such as the Peer Reviewers’ Openness Initiative (Morey et al., 2016), or individual editorial actions. All these factors are beyond the scope of our analysis. In particular, we did not analyze the structure and affordances of the journals’ submission processes. The underlying technical systems and the implemented processes can influence research-data availability. For example, including a question on whether research data have been made available in accordance with the guide for authors might be a first step.

A further limitation is the selection of the analyzed journals. The prescreening criterion was relevance to internationally renowned research institutes of the Leibniz Association in educational sciences and educational psychology. The final selection criterion was data-transparency level (at least one journal from each level; note that we did not find a journal with Data Transparency Level 3). Although, in our opinion, the prescreening and the selection reflect the field and the results are thus highly relevant, we cannot exclude the possibility that the results might differ for other journals in the field. We thus believe that the results must be interpreted cautiously.

In this study, we analyzed data availability as a function of research-data policies at the level of the journals and of the corresponding author’s affiliation. We did not analyze data-sharing recommendations from other stakeholders, such as funding agencies and professional societies. Because data sharing is at a very low level, we consider the influence of recommendations and regulations from those stakeholders to be minimal at best. Further research is needed to study those influences on data-sharing activities.

We identified the data-transparency levels as a group while reading the journals’ guides for authors. Although we believe that the categories are clear, note that we did not obtain independent ratings of the data-transparency levels.

We searched for the institutional research-data policies using a standardized search string on Google. This assumes that the policies are indexed by Google. If policies are stored in areas of institutional networks that are not publicly accessible, we could not find them, which might have affected our results.

Finally, this analysis covered only 2 years: 2018 and 2020. Although we observed more data sharing in 2020 than in 2018, it would be interesting to monitor the impact of research-data policies on data-sharing behavior over a longer period. We consider the present analysis a starting point. The research data (including analysis scripts for the statistical programming language R) are freely accessible (on OSF at https://doi.org/10.17605/OSF.IO/6MW7A) and can easily be updated in the future. The rising awareness of the importance of data sharing (“Time to Recognize Authorship of Open Data,” 2022) and the calls for upgrading data publication (Deutsche Forschungsgemeinschaft | AG Publikationswesen, 2022) might also change the way research data are handled by both authors and journals.

Conclusion and outlook

As part of transparent and open science, data sharing serves as a scientific accelerator and contributes significantly to scientific progress. It is thus in the tradition of scientific paradigms as formulated by Popper (1959, 1963) and Merton (1973) as leading representatives of the philosophy and sociology of science. Yet data sharing in educational research is low and—as the present study shows—influenced by neither the institutional research-data policies nor the journals’ guidelines. We outlined an idea for developing an integrated and comprehensive data-management system that not only focuses on the individual researchers but also involves the various stakeholders (e.g., research infrastructure institutions). In summary, our approach complements the idea of open-education science (van der Zee & Reich, 2018; van Dijk et al., 2021) by adding an infrastructural emphasis. Because education research is particularly characterized by its diversity of research methods, high sensitivity of data, and high variety of data types, the sharing principles are highly important and, thus, must be based on a solid foundation. In addition to providing mere data storage, research infrastructure institutions, such as research-data centers and research libraries, play an essential role in assisting, guiding, and teaching the researchers in the complex processes that finally lead to the successful sharing of FAIR data.

In line with van der Zee and Reich (2018) and Mellor (2021), we consider incentives for data sharing key to boosting sharing rates. Behavioral and metascientific research is needed to find the best and most promising ways. The possibilities include making research-data sharing a condition for receiving a full peer review from reviewers who follow the Peer Reviewers’ Openness Initiative (Morey et al., 2016) and compulsory uploading of the research data in the submission system. In our opinion, however, researchers themselves should recognize the benefits of data sharing and act on them of their own accord. Thus, we propose teaching researchers about legal aspects (e.g., licensing, funder requirements), technical solutions (e.g., RDMO), improved efficiency of individual researchers and of scientific discovery, protection against data loss, funding opportunities (Klein et al., 2018), and potential citation benefits (Colavizza et al., 2020). Finally, making shared data sets an official part of the academic CV would enable hiring committees to consider data-sharing activities as part of transparent and open science.

We started this research expecting to observe an effect of institutional policies on research-data sharing. Although the requirements are met (at least from the perspective of the research infrastructure), data sharing in educational psychology is low and not affected by policies and guidelines. In our view, only a change within the scientific system that recognizes research data as a crucial part of the scientific process can lead to a substantial increase in the proportion of research data that are shared. Societally relevant fields such as educational psychology should have a particular interest in such a change.

Footnotes

Acknowledgements

We thank Chiara Grimm and Lara Kläffling for their help in coding the scientific publications.

Transparency