Abstract

Effect sizes are underappreciated and often misinterpreted—the most common mistakes being to describe them in ways that are uninformative (e.g., using arbitrary standards) or misleading (e.g., squaring effect-size

Nonsense: Words or language having no meaning or conveying no intelligible ideas

Psychological research has a long tradition of evaluating findings according to whether they are statistically “significant” or not, but more recently, increasing attention has been paid to the size as opposed to the significance of effects (e.g., Cumming, 2012). Effect size refers to the magnitude of the relation between the independent and dependent variables, and it is separable from statistical significance, as a highly significant finding could correspond to a small effect, and vice versa, depending on the study’s sample size. Students are routinely taught how to calculate and interpret significance levels; they are less often taught how to calculate effect sizes, and even more rarely are they taught how to evaluate them. This neglect of effect size persists into the research careers of many psychologists.
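
A minimal sketch can make the separation between effect size and significance concrete. It uses the standard t statistic for testing a Pearson correlation against zero. The two cases are hypothetical values chosen for illustration, not results from any study discussed here, and the function name `t_for_r` is our own.

```python
import math

def t_for_r(r: float, n: int) -> float:
    """t statistic (df = n - 2) for testing a Pearson correlation r against zero."""
    return r * math.sqrt(n - 2) / math.sqrt(1.0 - r * r)

# Hypothetical case 1: a small effect observed in a very large sample.
t_small_effect = t_for_r(0.05, 10_000)

# Hypothetical case 2: a much larger effect observed in a small sample.
t_large_effect = t_for_r(0.40, 20)

print(f"r = .05, n = 10,000: t = {t_small_effect:.2f}")
print(f"r = .40, n = 20:     t = {t_large_effect:.2f}")
```

The two-tailed .05 critical value is about 1.96 with 9,998 degrees of freedom and about 2.10 with 18, so in this illustration the small effect is significant and the larger one is not.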

Much of the published literature reflects this continued neglect. Although many journals now require that effect sizes be reported, and researchers (usually) dutifully follow this requirement, they often ignore effect sizes otherwise. When researchers do draw implications from effect sizes, the interpretations they offer are, more often than not, superficial, uninformative, misleading, or completely wrong. In sum, effect sizes are widely unappreciated and often misunderstood, even by professional researchers.

Current research on psychological methods (e.g., as published in

Effect Size

The two most commonly used measures of effect size are Cohen’s

Although for a considerable period of psychology’s history it was common practice to report the key to our research [is not] to accurately estimate effect size. . . . When I am testing a theory about whether, say, positive mood reduces information processing in comparison with negative mood, I am worried about the direction of the effect, not the size. But if the results of such studies consistently produce a direction of effect where positive mood reduces processing in comparison with negative mood, I would not at all worry about whether the effect sizes are the same across studies or not, and I would not worry about the sheer size of the effects across studies. This is true in virtually all research settings in which I am engaged. I am not at all concerned about the effect size. (quoted in Funder, 2013, para. 4)

This is not an unusual opinion; similar comments can be found in a number of articles and blog posts. However, there are two problems with this line of thinking. First, and most obviously, this researcher routinely uses

The Two Most Common Ways to Interpret Effect Size

Interpretation of effect sizes traditionally proceeds in one of two ways. The first is literally nonsensical (in the meaning expressed in the definition opening this article), and the other is seriously misleading.

Cohen’s standards

The nonsensical but widely used interpretation of effect size is the famous standard set by Jacob Cohen (1977, 1988), who set

Squaring the correlation

As bad as these decontextualized criteria are, the other widely used way to evaluate effect size is arguably even worse. This method is to take the reported

We suggest that this calculation has become widespread for three reasons. First, it is easy arithmetic that gives the illusion of adding information to a statistic. Second, the common terminology of

The computation of variance involves squaring the deviations of a variable from its mean. However, squared deviations produce squared units that are less interpretable than raw units (e.g., squared conscientiousness units). As a consequence,

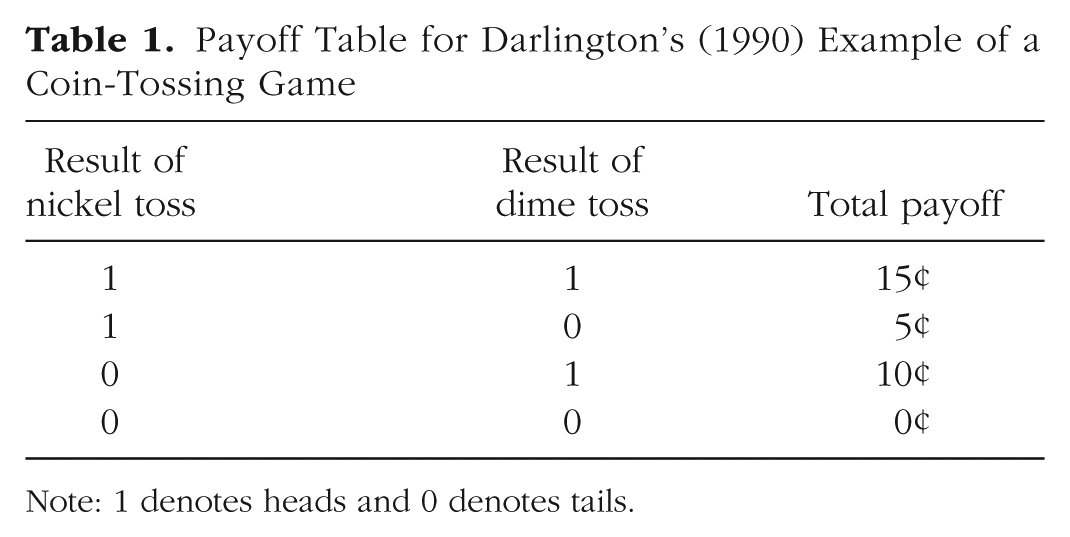

Consider the difference in value between nickels and dimes. An example introduced by Darlington (1990) shows how this difference can be distorted by traditional analyses. Imagine a coin-tossing game in which one flips a nickel and then a dime, and receives a 5¢ or 10¢ payoff (respectively) if the coin comes up heads. From the payoff matrix in Table 1, correlations can be calculated between the nickel column and the payoff column (

Payoff Table for Darlington’s (1990) Example of a Coin-Tossing Game

Note: 1 denotes heads and 0 denotes tails.
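
The coin-tossing example can be checked with a short sketch. The payoff table is not reproduced in this text, so the code rebuilds it from the description of the game, assuming the four equally likely head/tail combinations. The helper `pearson_r` is our own.

```python
import math
from itertools import product

def pearson_r(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Assumed payoff table: every combination of tails (0) and heads (1).
outcomes = list(product([0, 1], repeat=2))
nickel = [n for n, _ in outcomes]
dime = [d for _, d in outcomes]
payoff = [5 * n + 10 * d for n, d in outcomes]  # payoff in cents

r_nickel = pearson_r(nickel, payoff)
r_dime = pearson_r(dime, payoff)

print(f"nickel: r = {r_nickel:.4f}, r squared = {r_nickel ** 2:.2f}")
print(f"dime:   r = {r_dime:.4f}, r squared = {r_dime ** 2:.2f}")
print(f"ratio of r: {r_dime / r_nickel:.1f}")
print(f"ratio of r squared: {r_dime ** 2 / r_nickel ** 2:.1f}")
```

Under these assumptions, the dime's correlation with the payoff is twice the nickel's, matching the 2:1 ratio of the coins' values, whereas the squared correlations (.20 and .80) stand in a 4:1 ratio.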

Toward Useful Interpretations of Effect Size

How can effect sizes be interpreted in a way that adds or provides meaning? We suggest two ways. The first is to use a benchmark, and the second is to estimate consequences.

Benchmarks

The idea behind using benchmarks to evaluate effect size is that the magnitude of a finding can be illuminated by comparing it with some other finding that is already well understood (or that at least is widely believed to be well understood). All of the benchmarking strategies we summarize in this section have the same aim: to help readers attain an intuitive “feel” for the meaning of an effect size. In the same way that people immediately gauge whether somebody is tall or short by comparing him or her with other people, researchers can approach a realistic appreciation of the meaning of a particular research result by using their knowledge of the sizes of classic findings, average findings, or other effects that are understood through everyday experience. J. Cohen (1988) used this strategy to justify his labeling numerical effect-size values as small, medium, and large. He likened a small effect to several specific effects, such as the mean height difference between 16- and 17-year-old girls. Medium effects were characterized as those “visible to the naked eye” (p. 26), though it seems he may have grossly overestimated the sensitivity of observers to at least some characteristics (Ozer, 1993). Large effects were described as similar in magnitude to the difference in mean IQ between college graduates and people with just a 50-50 chance of graduating from high school. One might well quibble with Cohen’s choices of examples, but given that this was early work when few researchers were talking about effect size, it would seem more fruitful to consider other benchmarking approaches.

Classic studies

One example of the benchmarking approach is provided by an analysis we reported some years ago (Funder & Ozer, 1983). We performed a simple reanalysis of three classic findings in the psychological literature: Festinger and Carlsmith’s (1959) finding of a reverse effect of incentives on attitude change, Darley and Latané’s (1968) and Darley and Batson’s (1967) studies of bystander intervention, and Milgram’s (1975) demonstrations of experimentally induced obedience. In each case, from the reported findings we simply computed an effect-size

This result should not have been surprising, but it was, in a zeitgeist in which a common complaint was that personality traits were not meaningfully related to behavioral outcomes because the correlations between them seldom exceeded .40 (e.g., Nisbett, 1980). And some writers at the time misinterpreted the implication of our calculations, in our view, by concluding that the calculations implied that these studies also “only” found “small” effects after all (or more disastrously, that “situations aren’t important either”). Our own view was that these studies were and remain classics of the social psychological literature, and nobody, certainly nobody at the time, doubted that the effects reported were foundation stones of social psychology that should be taught to every student in that field. We simply thought it was worth knowing that the reported effect sizes were in roughly the same range as the purported ceiling for effects of personality.

Other well-established psychological findings

In a later, similar, but much broader set of reanalyses, Richard, Bond, and Stokes-Zoota (2003) also calculated the effect-size

A similar analysis was performed by Roberts, Kuncel, Shiner, Caspi, and Goldberg (2007), who compared the validity of personality traits for predicting mortality, divorce, and occupational success with the well-established validity of socioeconomic status and intelligence as predictors of these same outcomes. The result was that the “magnitude of the effects . . . was indistinguishable” (p. 313). Even more striking, perhaps, was that for the prediction of mortality, the estimated

Comparisons with “all” studies

In even broader efforts, researchers have provided potential effect-size benchmarks by computing averages based on comprehensive reviews of the social and personality psychology literatures. In their ambitious effort, Richard et al. (2003) also calculated an average effect size for all the published effects in the social psychological literature that they were able to survey, and the resulting value was .21. A parallel but less extensive project surveyed the personality literature and came up with precisely the same average effect size:

A more recent and very large project reviewed 708 meta-analytically derived correlations from the literatures of both social and personality psychology, and found that the average effect-size

Comparisons with intuitively understood nonpsychological relations

Ordinary life experience or broader reading can lead to a sense of the strength of the relationship between variables, and this understanding can also be used as an aid to the intuitive appreciation of a research finding. For example, do you take antihistamines to combat a runny nose and sneezing? If so, how well do they work? According to one estimate, the effect size of the relationship between antihistamine use and relief from these symptoms is equivalent to an

Consequences

The binomial effect-size display

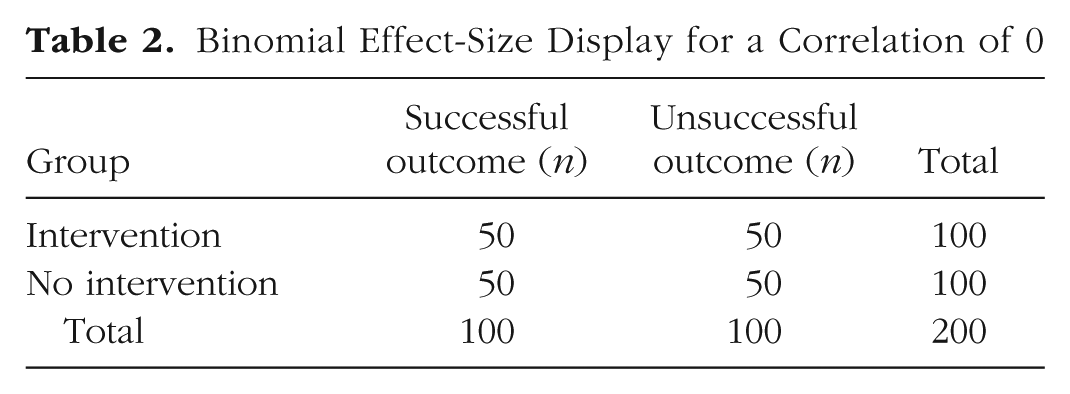

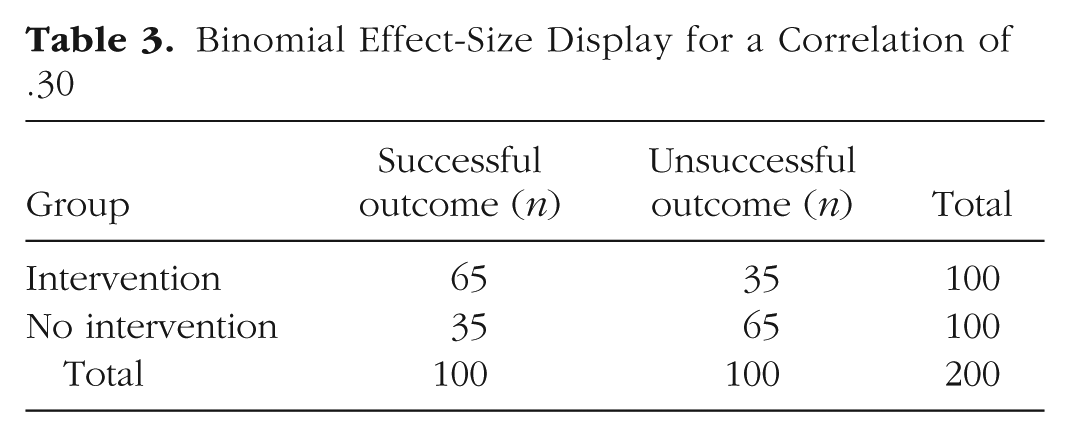

A more direct way to evaluate an effect size is to consider consequences, which in some cases can be numerically calculated. Perhaps the best known and easiest to use of these methods is the binomial effect-size display (BESD), introduced by Rosenthal and Rubin (1982). The BESD illustrates the size of an effect, reported in terms of

Binomial Effect-Size Display for a Correlation of 0

Binomial Effect-Size Display for a Correlation of .30

Some readers, traditionally trained to think of .30 correlations as “explaining only 9% of the variance,” might be surprised to learn that an effect of this size will yield almost twice as many correct predictions as incorrect ones. More specifically, a table such as this, when combined with cost data for interventions and outcomes, could be used to calculate the utility of an intervention or of a predictive instrument in concrete, monetary terms. It could also be used, as in Rosenthal and Rubin’s (1982) own example, to assess the number of lives that could be saved by a health intervention. In a later analysis, Rosenthal (1990) calculated that the correlation of .03 between taking aspirin after a heart attack and prevention of future heart attacks implied the prevention of 85 attacks in a sample of 10,845 individuals. Less dramatically, a BESD could be used to calculate the payoff from using an ability or personality test to select employees. In a similar manner, the Taylor-Russell tables (Taylor & Russell, 1939) have long been used by industrial psychologists to combine the validity of a selection instrument with the selection ratio (the proportion of applicants hired) to predict the percentage of hired employees who will be successful on the job.
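
The construction of a BESD can be sketched in a few lines. The code follows the usual form of the display, in which both the predictor and the outcome are split 50/50 and the cell counts per 100 cases are 50 plus or minus 50 times r. The function name `besd` and the row and column labels are our own, hypothetical choices.

```python
def besd(r: float):
    """Return a 2 x 2 binomial effect-size display as counts per 100 cases per row.

    Row 1 is the 'high predictor' (or treated) group and row 2 the
    'low predictor' (or control) group. Columns are (good outcome,
    poor outcome). Both margins are fixed at 50/50 by construction.
    """
    high = round(100 * (0.5 + r / 2))
    low = round(100 * (0.5 - r / 2))
    return ((high, low), (low, high))

for r in (0.0, 0.30):
    (a, b), (c, d) = besd(r)
    print(f"r = {r:.2f}")
    print(f"  high group: {a} good outcomes, {b} poor outcomes")
    print(f"  low group:  {c} good outcomes, {d} poor outcomes")
```

For r = .30 the display gives 65 versus 35 cases per 100, which is the sense in which correct predictions are almost twice as frequent as incorrect ones.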

Consequences in the long run

In a classic analysis (which is nonetheless not as widely known as it should be) subtitled “When a Little Is a Lot,” the well-known cognitive psychologist Robert Abelson (1985) calculated the correlation between a Major League baseball player’s outcome in a single at bat and his overall batting average. Abelson’s calculation yielded an

However, the resolution to what Abelson characterized as a “paradox” (p. 131) turned out to be rather simple. The typical Major League baseball player has about 550 at bats in a season, and the consequences cumulate. This cumulation is enough, it seems, to drive the outcome that a team staffed with players who have .300 batting averages is likely on the way to the playoffs, and one staffed with players who have .200 batting averages is at risk of coming in last place. The salary difference between a .200 batter and a .300 batter is in the millions of dollars for good reason.
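
The cumulation argument can be illustrated with a rough sketch. It treats each at bat as an independent trial with a fixed hit probability, which is a simplifying assumption of ours rather than part of Abelson's analysis, and compares the expected difference in hits between a .300 and a .200 batter with the chance variation in hits.

```python
import math

def expected_hits(average: float, at_bats: int) -> int:
    """Expected number of hits, rounded to the nearest whole hit."""
    return round(average * at_bats)

def signal_to_noise(avg_high: float, avg_low: float, at_bats: int) -> float:
    """Expected difference in hits divided by the binomial SD of hits.

    The SD is evaluated at the midpoint of the two averages (an assumption
    made only to keep the sketch simple).
    """
    p_mid = (avg_high + avg_low) / 2
    sd = math.sqrt(at_bats * p_mid * (1 - p_mid))
    return (avg_high - avg_low) * at_bats / sd

print(expected_hits(0.300, 550), expected_hits(0.200, 550))
print(f"single at bat: {signal_to_noise(0.300, 0.200, 1):.2f}")
print(f"550 at bats:   {signal_to_noise(0.300, 0.200, 550):.2f}")
```

Under these assumptions, the difference between the two batters is a small fraction of the chance variation in a single at bat but more than five times the chance variation over a 550-at-bat season, because the expected difference grows with the number of at bats whereas the standard deviation grows only with its square root.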

Another example comes from a large study that tracked 2 million financial transactions across more than 2,000 people. The correlation between an individual’s extraversion score and the amount he or she spent on holiday shopping was .09 (Weston, Gladstone, Graham, Mroczek, & Condon, 2018). Although this fact might not be very consequential for a single individual, multiply the effect by the number of people in a department store the week before Christmas, and it becomes obvious why merchandisers should care deeply about the personalities of their customers.

The overall implication, as Abelson (1985) noted, is that seemingly small effects can matter “in the long run, albeit not very consequentially in the single episode” (p. 133). In particular, a psychological process that affects the behavior of a single individual repeatedly over time, or, analogously, the behavior of many individuals simultaneously on a single occasion, can have hugely important implications.

Relevance for Psychological Research

Abelson’s (1985) illustration of how seemingly small effects can cumulate has important implications for psychology. Every social encounter, behavior, reaction, and feeling a person has could be considered a psychological “at bat.” And imagine how many of those occur in a day, a week, a year, or a lifetime, certainly many more than the 550 or so a ball player gets in a year. Any psychological variable that affects any of these, every time it happens, will have an effect that could cumulate over time, with important consequences for numerous life outcomes, including (to name just a few examples) popularity and social success, physical health, financial success, personal relationships, and overall quality of life.

Individual differences research

The relevance of the cumulation of small effects over time is particularly obvious for research on individual differences, such as abilities or personality traits. If a stable trait—such as extraversion, agreeableness, or conscientiousness—affects much of what you do even in a small way, its consequences can add up very, and perhaps surprisingly, quickly. Analyses of the effects of personality on life outcomes have focused on long-term consequences such as health, relationship success, quality of life, and—that ultimate long-term consequence—longevity (Friedman et al., 1993; Ozer & Benet-Martínez, 2006; Roberts et al., 2007). But Abelson’s (1985) analysis suggests that one might need much less than a lifetime for noticeable consequences of stable personality traits to appear. A correlation of about .05 translates to large consequences with 550 at bats. How long does it take for a person to experience, for example, 550 interpersonal encounters?

Consider a student moving away from home to college and meeting the fellow residents of his dormitory for the first time. Assume that he is highly agreeable. How long will it take before he finds himself enjoying the enhanced popularity that is the reliable long-term result of this trait (Ozer & Benet-Martínez, 2006)? A back-of-the-envelope calculation suggests that if the correlation between agreeableness and an individually successful social interaction is .05 (which is a hypothetical, conservative estimate), and if the student has 20 social interactions a day, then the consequences for his popularity in less than a month (550 interactions/20 interactions per day = 27.5 days) will be as noticeable as the consequences of batting ability for a baseball player’s success at the end of the season.

Even more remarkably, Epstein (1979) demonstrated that broad outcome criteria could be predicted with surprising precision from broad, aggregated predictor variables. For example, he showed that a person’s average behavior over a period of 14 days could be predicted by the person’s average behavior over a preceding period of 14 days with a correlation equivalent to .80 to .90 (p. 1123). The moral of his demonstration is that an appropriate and realistic target for behavioral prediction is not what a person does on one day or in one situation, but what he or she does in the not-very-long run.
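
One standard psychometric way to see why aggregation raises predictability is the Spearman-Brown formula, which the passage above does not itself invoke. We use it here only as an illustration. The single-day correlation of .30 is a hypothetical value, not a figure reported by Epstein, and the function name is our own.

```python
def spearman_brown(r_single: float, k: int) -> float:
    """Predicted correlation between two k-occasion averages,
    given the correlation r_single between two single occasions."""
    return k * r_single / (1 + (k - 1) * r_single)

# Hypothetical day-to-day correlation of .30, aggregated over 1, 7, and 14 days.
for days in (1, 7, 14):
    print(f"{days:2d} days: r = {spearman_brown(0.30, days):.2f}")
```

With these assumed inputs, a modest single-day correlation rises to about .86 for 14-day averages. This is offered as an intuition for why aggregated criteria are more predictable, not as a reconstruction of Epstein's analysis.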

Experimental research

The relevance of the way effects can cumulate over time is perhaps less obvious for experimental research in which independent variables are manipulated, but it is fundamentally no different. If a psychological process is experimentally demonstrated, and this process is found to appear reliably, then its influence could in many cases be expected to accumulate into important implications over time or across people even if its effect size is seemingly small in any particular instance.

For example, a process that has a small influence on the degree to which a person can accomplish self-control every time he or she experiences fatigue—which is perhaps not every day, but certainly not rare—will be psychologically important for understanding what goes on when people are tired.

Or, for another example, consider the recent conclusion that a meta-analytic

A long-standing tradition in experimental social psychology has been to try to re-create real-world situations in the laboratory (Aronson & Carlsmith, 1968). Influential studies have simulated circumstances in which a person appears to be in distress, in order to assess the conditions under which a bystander might intervene; in which a person is given dire orders to harm another person, in order to assess the conditions under which obedience or disobedience becomes more likely; and in which a person is given an initial (false) impression of someone he or she is about to meet, in order to assess the conditions under which this impression becomes self-fulfilling. Such research has become increasingly rare in recent years, perhaps because it is difficult to conduct for operational and ethical reasons, and also because easier methods of research, such as gathering responses to computer-presented stimuli, have become widely available (Baumeister, Vohs, & Funder, 2007).

Indeed, to capture a meaningful aspect of social experience in a psychological laboratory, for even a few minutes, is a remarkably ambitious and even daunting goal. Some experimental findings from research of this sort turn out not to be replicable and thus are not reliable after all. But when some aspect of a situation does turn out to affect behavior, and the finding is reliable across experimental attempts and different laboratories, then lightning has been caught in a bottle, and it is not wise or even realistic to demand a “large” effect size (Gelman, 2018). Under the circumstances, to find anything at all can be impressive (Prentice & Miller, 1992).

When effects do (and do not) cumulate

The foregoing discussion applies to circumstances in which the effects measured in a research study can be expected to cumulate over time, situations, or individuals. Small effects accumulate into large ones in at least some, and probably many, but certainly not all circumstances. This cumulation can occur across time and occasions for a given individual, and across individuals at a single time or occasion.

The batting average in baseball provides an unambiguous example of an effect that cumulates across time and situations for an individual. Hits add up (in the not-very-long run) into runs, and runs add up (also in the not-very-long run) into won games. Another example of cumulation that seems almost as clear to us is the way the (even slightly) larger probability of a friendly act by a more agreeable person can lead, before too long, to an enhanced social reputation. More generally, precisely because they are consistent over time and across situations, the influences of personality on behavior can confidently be expected to affect consequential social, occupational, and health outcomes, and in fact they do (Ozer & Benet-Martínez, 2006).

Not all cases are as clear-cut as these, however. It is not difficult to think of examples in which the consequences of repeated effects fail to increase, increase nonlinearly, or even reverse over time and occasions. The well-known Weber-Fechner and Yerkes-Dodson principles describe how responses to increases in the level of a stimulus or motivation tend to level off or even reverse; the principle of habituation posits that responses to a repeated stimulus will eventually cease altogether. Cognitive systems of emotion regulation and physiological systems that support homeostasis, similarly, can reduce or eliminate the effect of repeated stimuli. Another potential complication in interpreting cumulation is the Matthew effect, which suggests that the accumulation of advantages (or other consequences) from a psychological process can actually accelerate, perhaps differently over time for different individuals.

Even in cases in which the strength of an effect itself does not build steadily over time, however, the consequences of the underlying process still might. In the case of ego depletion, for example, imagine a person who dislikes her job so much that she comes home every evening in a state of psychological fatigue that makes her more likely (

As one thinks through examples such as these—and whether or not one agrees with any particular interpretation—a common gap in psychological theorizing becomes evident: Theorizing typically does not extend to considerations of when and in what ways individual differences, situational variables, and their underlying processes—which may have small effects on single occasions—can be expected to cumulate in their strength or consequences. Nor does theorizing commonly consider which sorts of processes will

Reliable Estimation of Effect Sizes

Our analysis is based on a presumption that the effect size in question is, in fact, reliably estimated. This is a big presumption, and a critical concern when the effect size is in the range traditionally regarded as small. Although the difference between

Other, nonstatistical considerations can be of concern as well. Smaller effects are more at risk of being the product of an artifact rather than the process under investigation. For example, experimenter expectancy effects (Rosenthal, 1996), even if less powerful and more subtle than initially reported (Jussim, 2017), might be enough to account for effects in the range of, for example, the .08 effect of growth mind-set interventions we mentioned earlier. This example illustrates another facet of precise estimation when effects are small: Not only are larger sample sizes and more studies desirable, but also care in eliminating potential confounding variables becomes critically important. Other practices to reduce bias in analysis and reporting of research findings, such as preregistration of studies and the Registered Report process, can also be helpful, because the importance of potential bias becomes larger when effects or sample sizes are smaller.

Implications for Interpreting Research Findings

Our analysis of the evaluation of effect sizes has three important implications for how research findings should be interpreted.

Researchers should not automatically dismiss “small” effects

One reason why experimental social psychologists, in particular, have seemed reluctant to report or to emphasize effect sizes might be that, because of their traditional training (which often includes squaring correlations to yield the percentage of variance explained), they are taken aback by how small they seem. If readers of the psychological literature better understood the implications of effect size, apologies for reported effect sizes may no longer be necessary. An incentive structure that rewards performing selective analyses (

Indeed, researchers sometimes object to recommendations to gather data from large samples because they are concerned that small, unimportant effects will become significant. We believe this objection is mistaken, because it is smaller effect sizes that (realistically) will turn out to be the ones that are more likely to have been correctly estimated, and other things being equal, larger sample sizes are likely to provide more precise estimates regardless of the size of the effect.

Effect sizes will become more prominently and less reluctantly reported in experimental research, we believe, when researchers stop feeling (or being made to feel) defensive about them, and when explicit (rather than ritualized) discussions of the theoretical and practical implications of obtained effect sizes, of any magnitude, become more common. As publications with effect sizes reported in abstracts and perhaps even titles begin to accumulate in the literature, readers will begin to develop their own experientially based and more realistic intuitions about what

Researchers should be more skeptical about “large” effects

On the flip side, the traditional neglect of effect-size reporting has also allowed some implausibly large effects to sneak in under the radar. One famous example is the reported effect of unscrambling words referring to stereotypes of the elderly on walking speed. In two studies (each with an

Researchers have often reported anomalously large effect sizes in small-

Researchers should be more realistic about the aim of their programs of psychological research

Looking across a room full of research psychologists at a professional meeting, it is possible to be struck by the thought that everyone there believes, usually with some justification, that what he or she is studying is important. As a result, every psychologist is prone to expect that the variable he or she is studying should have a large effect on cognition, emotion, or behavior. This is perhaps sometimes true, but every researcher should also be aware that the psychologist in the next chair may be studying a very different topic with the same expectation. We all must face the fact: Human psychology is inherently complex, and there is only so much variation—in cognition, emotion, or behavior—to go around (Ahadi & Diener, 1989; De Boeck & Jeon, 2018).

How realistic is it to expect that any one research program, on any one topic or psychological process, determines more than a small piece of what is really going on in the psychological world at large? Perhaps all researchers should lower their expectations a little (or a lot). Psychologists are in the business of predicting the results of experiential or behavioral at bats and should be neither surprised nor resentful that the variables they are studying must share their predictive validity with other correlates and causes.

Recommendations for Research Practice

Report effect sizes, always and prominently

The effect sizes for every study should be reported prominently. This is routine in individual differences articles, in which Pearson’s

A recent example illustrating these recommendations is an article reporting a meta-analysis of 761 effect sizes, calculated with data gathered on a total sample of 420,595 (Allen & Walter, 2018). The article reported—in its abstract—several relationships between personality traits and sexual behavior, including (among others) correlations between extraversion and frequency of sexual activity (

Conduct studies with large samples (when possible)

As we have noted, an often-neglected complication in interpreting effect sizes is that the confidence interval of

We believe that the effect size is information that should be reported and evaluated regardless of a study’s sample size. But the confidence interval should be reported as well, so that evaluation can be informed by the necessary degree of uncertainty when the sample size is small. The ideal solution is to run studies with large samples. This is not always feasible with certain kinds of research or subject populations (Finkel, Eastwick, & Reis, 2017). But an important priority should be to make samples as large as resources allow, and perhaps it would be wise to reallocate resources from numerous smaller studies to fewer larger ones. A few studies with larger samples are likely to produce more accurate and less confusing findings than will many studies with smaller samples. In particular, the recent history of social psychology illustrates the bewildering welter of seemingly contradictory results that can emerge from a literature dominated by small-
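
The dependence of the confidence interval on sample size can be sketched with the Fisher z transformation, a standard approximation for the confidence interval of a Pearson correlation. The correlation of .30 and the sample sizes below are hypothetical values chosen for illustration, and the function name is our own.

```python
import math

def r_confidence_interval(r: float, n: int, z_crit: float = 1.96):
    """Approximate 95% confidence interval for a Pearson correlation
    using the Fisher z transformation (standard error 1 / sqrt(n - 3))."""
    z = math.atanh(r)
    se = 1.0 / math.sqrt(n - 3)
    return math.tanh(z - z_crit * se), math.tanh(z + z_crit * se)

# The same hypothetical correlation observed at several sample sizes.
for n in (50, 200, 1000, 5000):
    lo, hi = r_confidence_interval(0.30, n)
    print(f"n = {n:5d}: 95% CI [{lo:.2f}, {hi:.2f}]")
```

In this illustration, an observed correlation of .30 with 50 participants is compatible with population values ranging from near zero to above .50, whereas with several thousand participants the interval narrows to a few hundredths on either side.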

Report effect sizes in terms that are meaningful in context

Pearson’s

We are not the first to make the point that more meaningful measures of variables would lead to more meaningful measures of their effects (see, e.g., P. Cohen, Cohen, Aiken, & West, 1999). The need to employ standardized measures of effect size arises from the use of arbitrary and intrinsically meaningless measurement units. Researchers would be well served to be explicit about their measurement units and to utilize raw effect-size measures, such as mean differences or raw regression coefficients, alongside standardized measures of effect size, when possible. This would be a reminder of the ambiguities inherent to the standardized effect measures and would contribute toward the development of an interpretive framework for the most frequently used measurement units (Pek & Flora, 2018). With experience, even the meaning of a unit on a 7-point Likert scale might eventually become clear.

Moreover, in some cases, especially in applied research, the unit of measurement does have an intrinsic meaning. For example, mean differences in a countable health outcome, such as heart attacks, are meaningful in their own right and should be reported in preference to standardized measures, such as correlations or relative risks. The Harding Center for Risk Literacy (2018b), for instance, uses “fact boxes” to describe costs and benefits of health interventions in terms of concrete numbers, such as the number of people who would benefit from or be harmed by a screening or a drug. One of their fact boxes translates medical effect-size statistics in the following manner: Consider a sample of 200 people with acute bronchitis. If 100 of these people are given no treatment or a placebo, after 14 days 51 of them will still have a cough and 19 will feel ill in other ways (e.g., nausea). If the other 100 are given an antibiotic, 14 days later only 32 of them will still have a cough but 23 will feel ill otherwise (Harding Center for Risk Literacy, 2018a). This kind of format for presenting research results translates effect sizes into consequences people care about and use to make decisions.
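
The arithmetic behind such a fact box is simple enough to sketch directly. The counts below are the ones quoted above for acute bronchitis. The dictionary layout and variable names are our own.

```python
# Counts per 100 people after 14 days, as quoted in the fact box above.
placebo = {"still coughing": 51, "feeling ill otherwise": 19}
antibiotic = {"still coughing": 32, "feeling ill otherwise": 23}

# Raw (unstandardized) effect: difference in the number of people per 100.
differences = {
    outcome: antibiotic[outcome] - placebo[outcome] for outcome in placebo
}

for outcome, diff in differences.items():
    direction = "fewer" if diff < 0 else "more"
    print(f"{abs(diff)} {direction} people per 100 {outcome} with antibiotics")
```

Expressed this way, the effect reads as 19 fewer people per 100 with a cough and 4 more per 100 feeling ill in other ways, without any standardized statistic.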

Stop using empty terminology

It is far past time for psychologists to stop squaring

Revise the Cohen guidelines

This is our most presumptuous recommendation, and we offer it somewhat tongue in cheek, but not entirely. It is abundantly clear that the traditional Cohen guidelines (J. Cohen, 1977, 1988) are much too stringent. And as did Cohen, we think decontextualized guidelines are appropriate only for the most approximate of uses. But new guidelines can be proffered in the light of (a) Abelson’s (1985) demonstration of the not-so-long-term consequences of an effect-size

We offer, therefore, the following New Guidelines: Assuming that estimates are reliable (a critical concern, as already discussed), an effect-size

Summary and Conclusion

We began by describing problems with the traditional evaluation of effect sizes, including common ways in which they are misinterpreted—the most common mistake being to describe them in ways that either convey no useful information or are actively misleading. Next, we outlined several ways (building on proposals by prior writers) to imbue effect-size numbers with meaning. We concluded by offering some recommendations for the most useful ways to evaluate effect size and even, daringly, suggested a new set of standards. Our hope is that this article might play a small role in helping to advance the treatment of effect sizes so that rather than being numbers that are reported without interpretation, or interpreted superficially or incorrectly, they become aspects of research reports that will inform the application and theoretical development of psychological research.

Acknowledgements

We thank Ulrich Schimmack for identifying a calculation error in an earlier version of this manuscript.

Action Editor

Alexa Tullett served as action editor for this article.

Author Contributions

D. C. Funder and D. J. Ozer jointly generated the ideas for this article and jointly wrote the manuscript.

Declaration of Conflicting Interests

The author(s) declared that there were no conflicts of interest with respect to the authorship or the publication of this article.

Funding

Preparation of this article was aided by National Science Foundation Grant BCS-1528131 to D. C. Funder, principal investigator. Any opinions, findings, and conclusions or recommendations expressed in this article are those of the individual researchers and do not necessarily reflect the views of the National Science Foundation.

Open Practices

Open Data: not applicable

Open Materials: not applicable

Preregistration: not applicable