Abstract

Professional advocacy associations such as the American Psychological Association (APA) and American Academy of Pediatrics commonly release policy statements regarding science and behavior. Policymakers and the general public may assume that such statements reflect objective conclusions, but their actual fidelity in representing science remains largely untested. For example, in recent decades, policy statements related to media effects have been released with increasing regularity. However, they have often provoked criticisms that they do not adequately reflect the state of the science on media effects. The News Media, Public Education and Public Policy Committee (a standing committee of APA’s Division 46, the Media Psychology and Technology division) reviewed all publicly available policy statements on media effects produced by professional organizations and evaluated them using a standardized rubric. It was found that current policy statements tend to be more definitive than is warranted by the underlying science, and often ignore conflicting research results. These findings have broad implications for policy statements more generally, outside the field of media effects. In general, the committee suggests that professional organizations run the risk of misinforming the public when they release policy statements that do not acknowledge debates and inconsistencies in a field, or limitations of methodology. In formulating policy statements, advocacy organizations may wish to focus less on claiming consensus and more on acknowledging areas of agreement, areas of disagreement, and limitations.

Professional scientific groups such as the American Psychological Association (APA), American Academy of Pediatrics (AAP), and American Medical Association often release public statements (collectively called policy statements here) that speak to the current nature of science. Such statements may be intended to provide information for policymakers, inform the general public, inform clinical practice, or simply set the agenda for the group itself. Given that such statements speak to science, they may be assumed to form objective summaries of current science. But given that most such organizations represent particular professions, and that the organizations exist to promote those professions, these organizations may not necessarily be objective, neutral arbiters of scientific fact. Thus, policy statements that exist to promote an organization’s agenda may be mistakenly viewed as scientifically objective by the public and policymakers. Understanding the fidelity of scientific policy statements in representing the science to which they refer can help place these statements in their proper context.

It is possible to assess policy statements critically and come to an understanding of their overall accuracy. Questions that may be asked include the following: Do policy statements provide balanced accounts of controversies in research fields and appropriately note limitations of current methodologies? Is the magnitude of effects faithfully represented, or are effects presented as binary (either existing or not)?

In this article, the News Media, Public Education and Public Policy Committee of the American Psychological Association’s Division for Media Psychology and Technology provides an example of how such a critical analysis can be conducted. In this case, we examine numerous professional organizations’ policy statements related to media effects. However, the approach taken here could easily be applied to other realms of science, providing much-needed checks and balances on professional organizations’ or guilds’ policy statements.

For decades, the potential impact of media on a variety of developmental issues, ranging from aggression and violence to sexual behaviors to body dissatisfaction, has been fretted about and debated. In response, numerous scientific professional advocacy organizations have released policy statements on various effects of media (e.g., effects of media violence, screen time, and sexual media). Some scholars have expressed concern that policy statements exaggerate effects and present the scientific evidence as more consistent than warranted, rather than faithfully discussing inconsistencies or methodological shortcomings (e.g., Consortium of Scholars, 2013; Farady, 2010; Linebarger & Vaala, 2010; Steinberg & Monahan, 2011). Others have suggested that policy statements are produced in light of political rather than scientific goals (Copenhaver, 2015). In light of these controversies, the News Media, Public Education and Public Policy Committee has conducted a review of publicly available policy statements on media effects by advocacy organizations for scientific professionals, such as APA and the AAP.

The Burgeoning Debate Over Policy Statements

The release of media-effects policy statements by professional advocacy groups dates back to at least the 1990s (e.g., APA, 1994). The ostensible intent of such statements is to provide clear, concise, and unequivocal statements of fact regarding media effects of interest to policymakers, clinicians, and parents. It is difficult to fully estimate the impact of such policy statements either on parenting practices or on media policy and regulation. However, such policy statements have been commonly cited in court cases involving various aspects of media regulation (e.g., Brown v. Entertainment Merchants Association, 2011; Forsyth v. Motion Picture Association of America, 2016).

A common criticism of these policy statements among dissenting scholars is that they have not fully represented inconsistencies in the data, in order to make claims more definitive (for examples of criticisms, see Davila, 2011; Ferguson, 2013; Hall, Day, & Hall, 2011; Linebarger & Vaala, 2010; O’Donohue & Dyslin, 1996). For example, critics have argued that some statements cited only studies supporting a particular adopted narrative and failed to consider those that yielded conflicting evidence (see Babor & McGovern, 2008). Other critics (e.g., Ferguson, 2013; Quintero-Johnson, Banks, Bowman, Carveth, & Lachlan, 2014) have argued that some statements were prepared by groups of professionals with homogeneous professional opinions on media effects that do not represent the spectrum of scholarly beliefs. This is not to suggest that policy statements are either all good or all bad; rather, our point is merely that they often generate as much controversy as clarity regarding the topics they are meant to illuminate. Although such policy statements can be helpful for audiences and sometimes afford political prestige to the organizations that provide them, there is also the concern that such statements may sometimes inadvertently damage the scientific reputation of their fields by taking positions that appear to be partisan rather than objective, and thus missing a crucial hallmark of science (O’Donohue & Dyslin, 1996).

Rigor, Replicability, and Policy Statements

One concern regarding policy statements generally is that they may be based on research studies that lack rigor or have not been replicated (Open Science Collaboration, 2015). Within the field of media effects, long-standing concerns about rigor (e.g., Freedman, 1984) focus on issues such as (a) the tendency to ignore null results and to report inconsistent findings as supporting the theory being tested, (b) researcher degrees of freedom (Savage, 2004), (c) poor measurement standardization and validity (Tedeschi & Quigley, 2000), and (d) failure of multivariate models to properly control for theoretically relevant variables. This last point is a particular concern with meta-analyses, which may produce misleading results by focusing exclusively on upwardly biased bivariate effects (Furuya-Kanamori & Doi, 2016).

In response to these concerns, some scholars have attempted to improve rigor in their fields by (a) increasing measurement standardization (see McCarthy & Elson, 2018, for a review), (b) preregistering their studies (Ferguson et al., 2017; Holz Ivory, Ivory, & Lanier, 2017; McCarthy, Coley, Wagner, Zengel, & Basham, 2016), (c) conducting rigorous multivariate analyses (DeCamp, 2015; Steinberg & Monahan, 2011), and (d) conducting direct (Przybylski, Deci, Rigby, & Ryan, 2014) and conceptual (Tear & Nielsen, 2013) replications of older studies. However, policy statements have often not commented on these well-known structural issues. For instance, the APA task force on video games made little reference to issues such as measurement standardization, proper controls, preregistration, or researcher degrees of freedom in their report (APA, 2015). Concerns along these lines are certainly not limited to behavioral research, and examples can be found in other fields, such as nutrition (Singal, 2017) and cancer (Begley & Ellis, 2016) research. Although some organizations have begun to encourage open-science principles (e.g., APA, 2017), extant policy statements may still be misinformed by previous research plagued by various methodological concerns. Thus, policy statements may miscommunicate to the general public the current state of the literature of the target field, particularly its validity and replicability. Therefore, the News Media, Public Education and Public Policy Committee of APA’s Division 46 (Media Psychology and Technology) sought to examine the scientific veracity of publicly available policy statements on media effects. This article represents the committee’s report, which was approved by the Division 46 Board of Directors.

Disclosures

In this article, we report our results in the aggregate. The Supplemental Material available online contains detailed results for each of the four categories of policy statements (see the next section for an explanation of the categories). The registered documents at the Open Science Framework (https://osf.io/4vk5x/) include the rubric used to evaluate the statements as well as the outcomes for each of the individual statements.

Method

Selection of policy statements

To be included in the current review, policy statements had to be produced by professional advocacy organizations (e.g., AAP, APA) representing organized bodies of scholars or clinicians with subject-matter expertise. We excluded statements or reviews by other bodies, because they have missions and purposes other than informing and influencing the public, because they have different influences than such advocacy organizations, and because they are perceived differently than influencers of policy. By constraining our assessment to policy statements by nongovernmental bodies of scientists or clinicians, however, we do not mean to imply that these other sources are without value or are unimportant to consider.

To secure these publicly available policy statements, we conducted a search on Google Scholar for articles including all three of the following search terms: “policy statement,” “task force,” and “media.” The initial search was made in January of 2016. We augmented this search by examining the Web sites of leading scholarly organizations, specifically, the AAP, the American Academy of Family Physicians, the American Academy of Child and Adolescent Psychiatry, the American Medical Association, the American Psychiatric Association, APA, the International Society for Research on Aggression, and the National Association for the Education of Young Children. We included a search of organizational archives when available. We also examined the reference sections of leading review articles in these fields for citations of additional policy statements released by professional organizations.

This search produced a total of 24 policy statements across a variety of topics, beginning in the early 1990s. These policy statements were grouped into four basic categories. The largest was the group of statements on media violence, and there were smaller groups for screen time and sexual media. The fourth category, labeled “general effects,” included policy statements on narrow areas that did not fit into any of the other three categories.

Evaluation

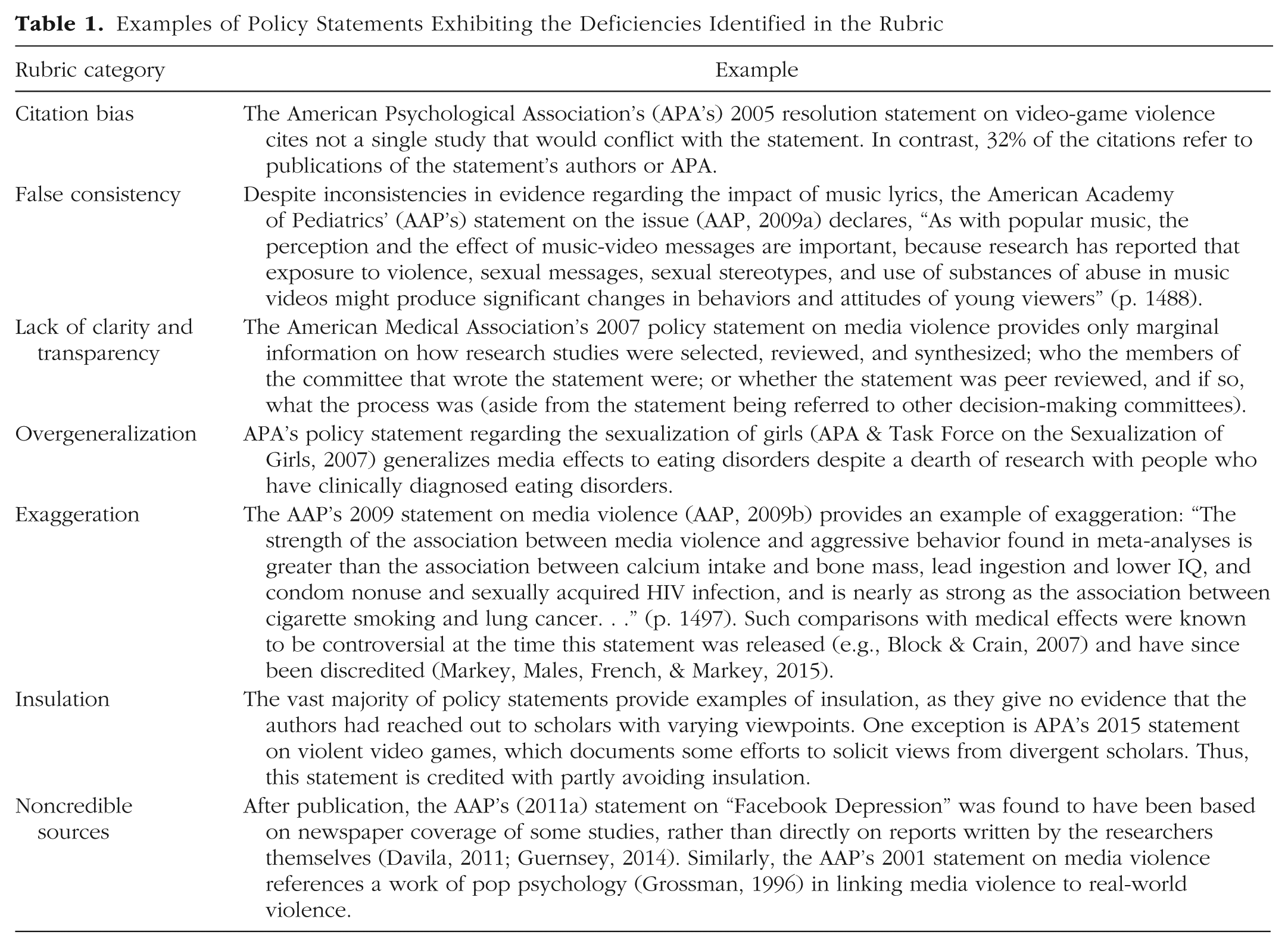

A subcommittee was then established for each of these four areas, and each subcommittee evaluated the policy statements in its area independently of the others. All members of the News Media, Public Education and Public Policy Committee are doctoral-level researchers or clinicians involved with media-related research, consulting, or clinical work. The committee includes members with a wide range of views on media effects. Membership in the committee is voluntary. The members of the media-violence subcommittee were M. Elson, J. Ivory, and D. Klisanin. The members of the screen-time subcommittee were D. Nichols and J. Wilson. The members of the sexual-media subcommittee were M. Gregerson, J. L. Hogg, and P. M. Markey. Finally, the members of the general-effects subcommittee were C. J. Ferguson and S. Siddiqui. A standardized rubric was developed for the evaluation of the policy statements. This rubric identified seven issues (see Table 1 for examples of statements illustrating these issues):

Citation bias: A policy statement was scored as exhibiting citation bias if there was evidence both supporting and not supporting the effect in question, but only the supporting evidence was cited. A statement was credited with avoiding citation bias if it cited and highlighted any evidence disconfirming a media effect. Our review of the evidence revealed that there was considerable disconfirmatory evidence relevant to all the selected policy statements.

False consistency: A policy statement was considered to exhibit false consistency if it presented research as consistent despite inconsistencies in the evidence base. The issue of false consistency is similar to that of citation bias. A policy statement with citation bias is likely also characterized by false consistency. However, in some cases, a statement may consider some disconfirmatory evidence only to proclaim, in the overall review, abstract, or conclusion, that there is consistent evidence for effects (e.g., APA, 2015). In other cases, research conflicting with the overall policy review may be cited, but the policy statement may fail to adequately note that the evidence is disconfirmatory (e.g., the citation of Schmidt, Rich, Rifas-Shiman, Oken, & Taveras, 2009, in AAP, 2016a). False consistency includes misconstruing evidence as supportive when it is disconfirmatory and discounting disconfirmatory evidence in conclusions, to imply consistency of evidence.

Lack of clarity and transparency: A policy statement was scored as lacking clarity and transparency if it did not clearly indicate the process by which it was developed, including how the task-force members and data were selected, or if it used new data (i.e., meta-analyses) that were not made openly available.

Overgeneralization: A statement was considered to overgeneralize if it extended research results to behaviors beyond what was appropriate (e.g., if laboratory measures of aggression were extended to violence in the real world). In some cases, generalization to equivalent behaviors or equivalently severe effects may be acceptable (e.g., laboratory tests of mild aggression might be generalizable to mild aggression, such as sarcastic comments in the real world, but not to criminal violence). However, we note that any generalization outside the context in which evidence is gathered (e.g., laboratory paradigms or self-report data) carries the risk of miscommunication.

Exaggeration: A policy statement implying larger public-health risks than could be supported by within-study and across-studies effect-size estimates received a nonpassing mark for this issue. Exaggeration in some of the reviewed statements was due to failure to report effect sizes or failure to evaluate their magnitude. For example, r values typically below .10 (Cohen, 1992), or perhaps even .20 (Lykken, 1968), may reflect what Meehl (1991) has called the “crud factor” and Lykken (1968) has called the “ambient noise” of artifactual results that can mislead scholars into thinking they have found meaningful relationships (see Standing, Sproule, & Khouzam, 1991). Other policy statements were scored as exaggerating the evidence because they made comparisons equating the impact of media effects with the impact of medical effects such as smoking and lung cancer, despite the fact that such comparisons have been discredited (e.g., Block & Crain, 2007; Markey, Males, French, & Markey, 2015).

Insulation: A policy statement was considered to exhibit insulation if it failed to provide evidence that the authors had reached out to scholars with opinions divergent from their own. In some cases, a statement noted that the task force sought input from diverse scholars. APA’s 2015 statement on video games, for example, mentioned such outreach, but this effort was ultimately viewed as partial and nontransparent. If a policy statement made no note of an effort to reach out to scholars with divergent views, it was assumed that no such effort occurred.

Noncredible sources: A policy statement received a nonpassing mark for this issue if it relied on noncredible sources, such as books of popular, or “pop,” psychology or newspaper reports, as primary data sources.
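As an illustration only, the seven rubric issues above could be recorded in a small data structure such as the following. This is a minimal sketch, not the committee's actual scoring instrument (which is in the registered documents); the names `Mark`, `RUBRIC_ISSUES`, and `StatementEvaluation`, and the example marks, are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Mark(Enum):
    """Possible marks; the Results also mention partially passing marks."""
    PASS = "pass"
    PARTIAL = "partial"
    NONPASS = "nonpass"


# The seven issues named in the rubric (identifier spellings are assumptions).
RUBRIC_ISSUES = (
    "citation_bias",
    "false_consistency",
    "lack_of_clarity_and_transparency",
    "overgeneralization",
    "exaggeration",
    "insulation",
    "noncredible_sources",
)


@dataclass
class StatementEvaluation:
    label: str   # hypothetical identifier for a policy statement
    marks: dict  # maps each issue name to a Mark

    def __post_init__(self):
        # Every issue in the rubric must be scored.
        missing = set(RUBRIC_ISSUES) - set(self.marks)
        if missing:
            raise ValueError(f"unscored issues: {sorted(missing)}")

    def nonpassing_issues(self):
        """Return the issues scored as nonpassing, in rubric order."""
        return [i for i in RUBRIC_ISSUES if self.marks[i] is Mark.NONPASS]


# Hypothetical example, not one of the reviewed statements.
example_marks = {issue: Mark.NONPASS for issue in RUBRIC_ISSUES}
example_marks["insulation"] = Mark.PARTIAL
example_marks["noncredible_sources"] = Mark.PASS
example = StatementEvaluation("Hypothetical statement", example_marks)
print(example.nonpassing_issues())
```

Requiring a mark for every issue mirrors the rubric's intent that each statement be evaluated on all seven issues.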

Table 1. Examples of Policy Statements Exhibiting the Deficiencies Identified in the Rubric

Each policy statement was primarily reviewed by two subcommittee members, and in the case of subcommittees with three members, different members evaluated different policy statements. The subcommittee members were content experts in the fields they considered and read both original research reports and research reviews in the relevant fields to fully understand the current data. Results were discussed at the subcommittee level, and any discrepancies were resolved via debate and consensus. Results were then shared with the full committee for final evaluation.

Results

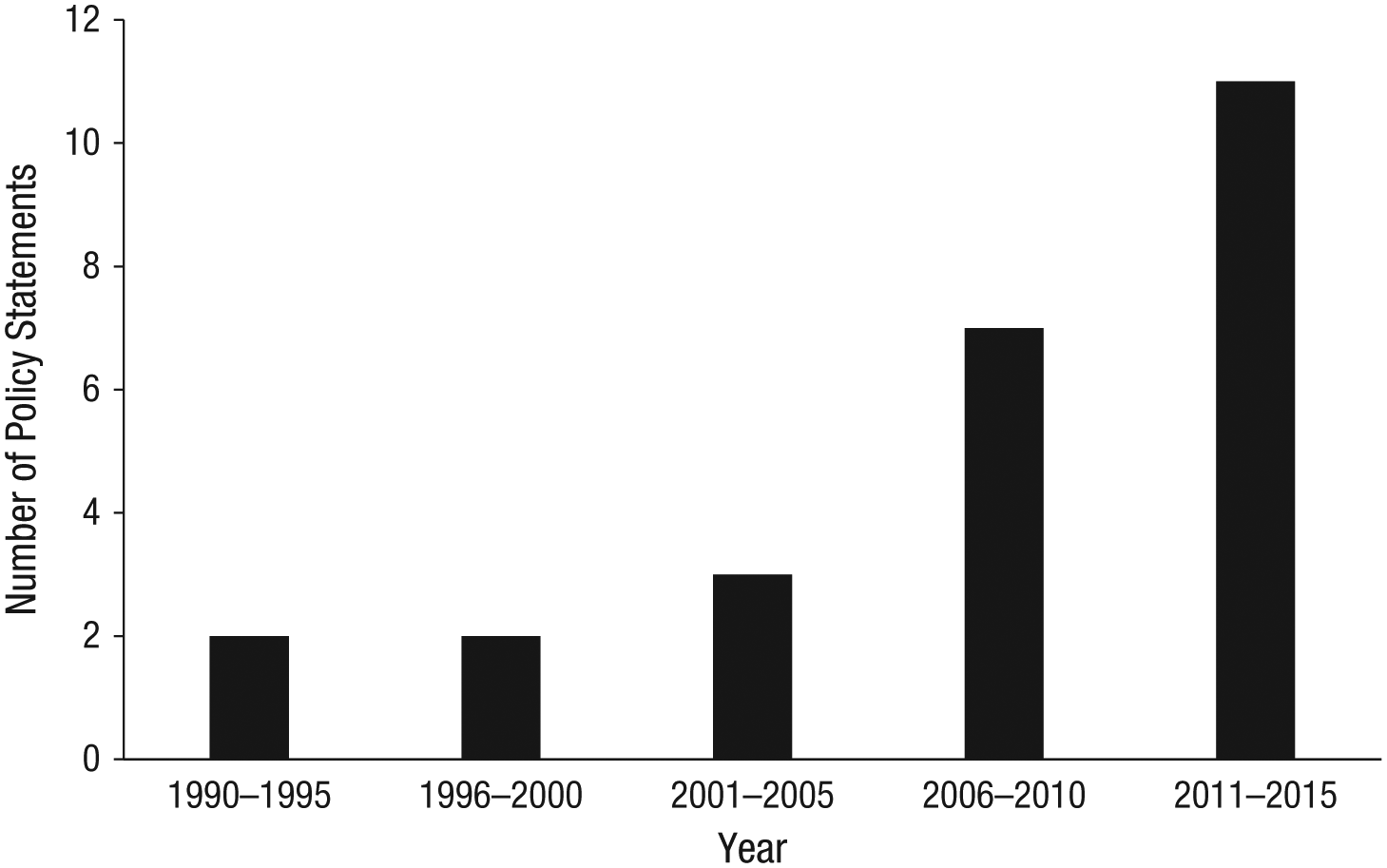

An examination of the temporal frequency of media-related policy statements revealed a remarkable increase in the frequency of such statements in recent years. From 1991 through 2000, only 4 policy statements concerning media effects were released by professional advocacy organizations (these statements include a 1999 AAP policy statement on screen time that is no longer active). The frequency increased to 3 during the period from 2001 through 2005, to 7 from 2006 through 2010, and then to 11 from 2011 through 2015 (see Fig. 1). Thus, there appears to be a trend toward a rapidly increasing frequency of media-related policy statements (2 more were released in 2016). It is possible that these data reflect the greater availability of policy statements released during the Internet era, but an examination of the archives made available by the most prolific organizations (i.e., AAP, APA) suggests that this is not a likely explanation for this trend.

Fig. 1. Frequency of media-related policy statements. This figure includes the American Academy of Pediatrics’ (1999) retired statement on screen time, which was not included in the final set of 24 publicly available policy statements reviewed by the committee.
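Because the first reporting period spans 10 years and the later periods span 5 years each, the counts are easier to compare as annual rates. The following sketch uses only the counts reported in the text; the function name `statements_per_year` is hypothetical, and the per-year figures are derived here for illustration rather than reported by the committee.

```python
# Periods and counts as reported in the text (start year, end year, count).
periods = [
    ("1991-2000", 1991, 2000, 4),
    ("2001-2005", 2001, 2005, 3),
    ("2006-2010", 2006, 2010, 7),
    ("2011-2015", 2011, 2015, 11),
]


def statements_per_year(start, end, count):
    """Average number of statements per year over an inclusive year range."""
    years = end - start + 1
    return count / years


rates = {label: statements_per_year(s, e, n) for label, s, e, n in periods}
for label, rate in rates.items():
    print(f"{label}: {rate:.1f} statements per year")
```

On this basis, the rate rises in every successive period, even though the raw count for 2001 through 2005 is lower than that for the preceding decade.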

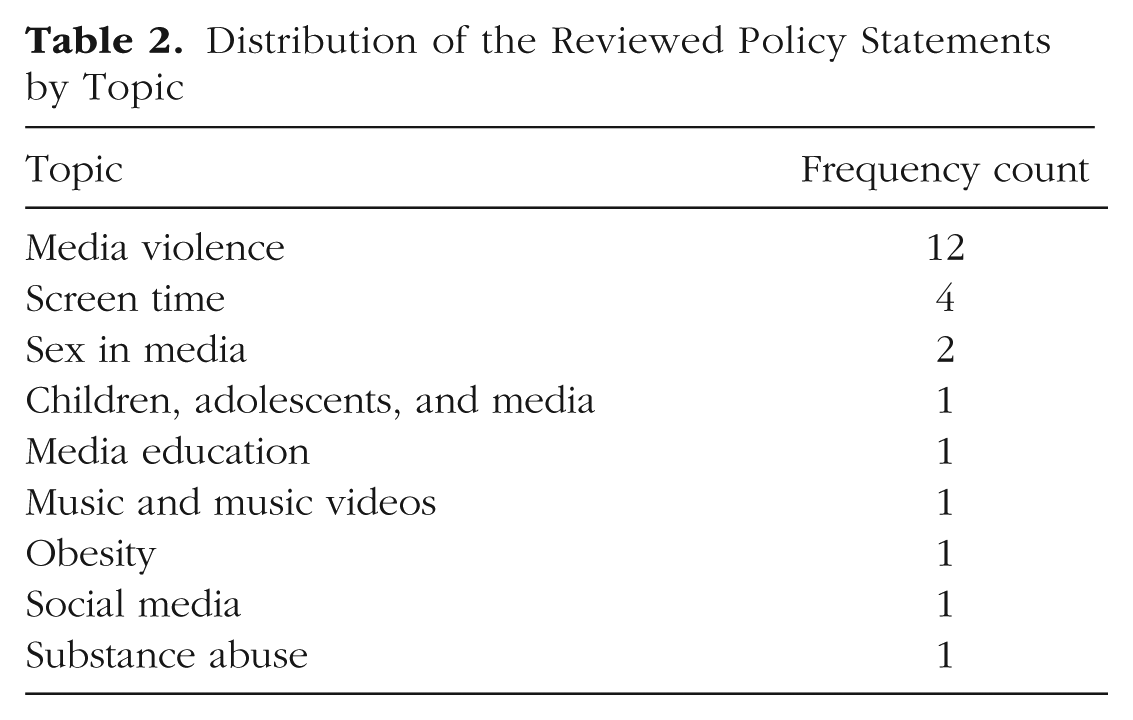

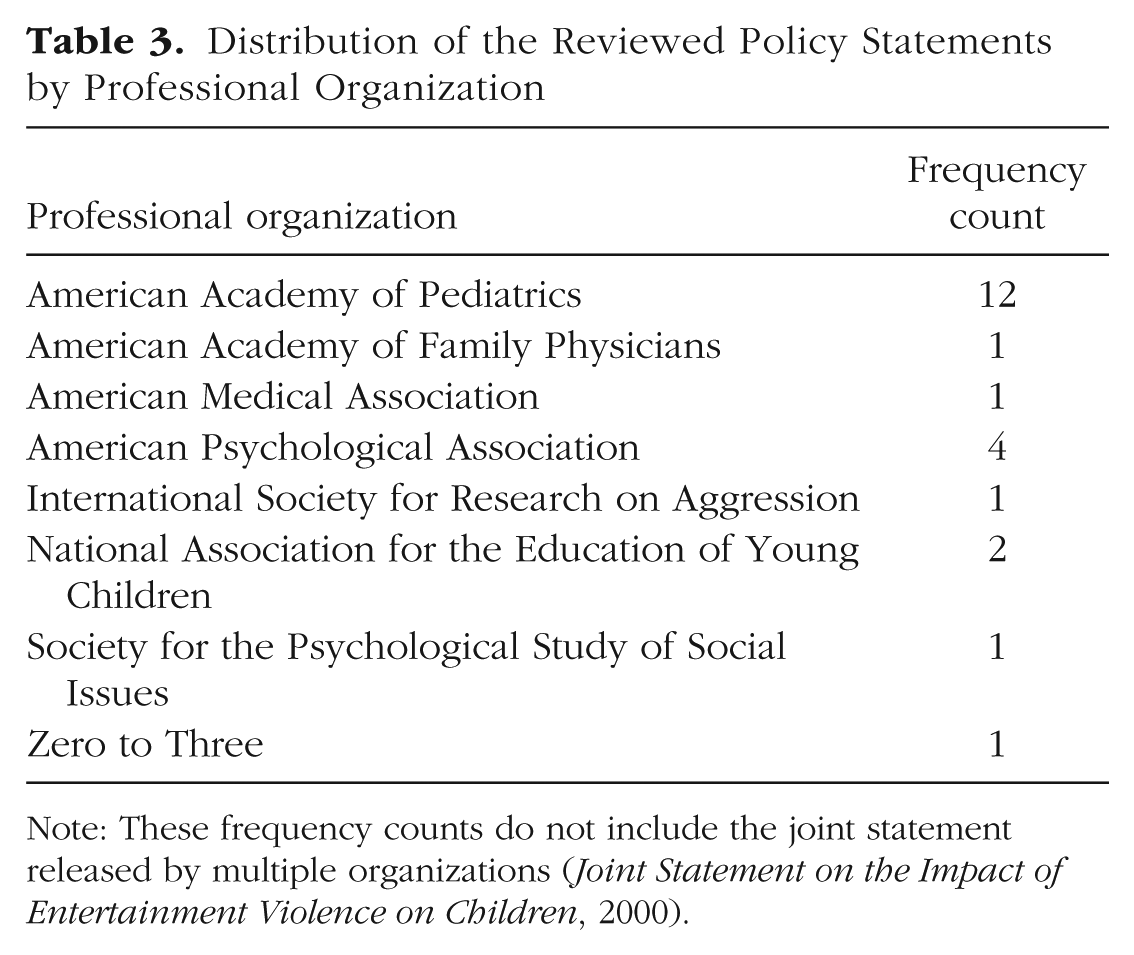

Table 2 lists the nine topics that were the focus of the selected policy statements and shows how many of the statements focused on each of these topics. Table 3 lists the professional advocacy organizations that produced the policy statements and indicates how many of the statements each organization produced. Most of the policy statements were produced via committees developed by these organizations and were written by scholars who themselves were invested in the fields being covered (e.g., AAP, 2016b; APA, 2005; Anderson, Bushman, Donnerstein, Hummer, & Warburton, 2015).

Table 2. Distribution of the Reviewed Policy Statements by Topic

Table 3. Distribution of the Reviewed Policy Statements by Professional Organization

Note: These frequency counts do not include the joint statement released by multiple organizations (Joint Statement on the Impact of Entertainment Violence on Children, 2000).

We found that when the membership of the committees that produced the statements was clearly reported, the committees were always composed heavily of individuals with strong prior stances on media effects (15 out of 15 cases). These positions were expressed in public, retrievable publications or press releases. In all but 1 of those cases, the individuals identified had supported the existence of strong media effects, and the committees were not balanced by including individuals identifiably skeptical of media effects. APA’s 2015 statement on media violence was unique in that ostensibly APA had sought to create a neutral task force, but ultimately APA was criticized for including a majority of members with identifiable antigame views, and no members with pro-game views (Ferguson & Colwell, 2017).

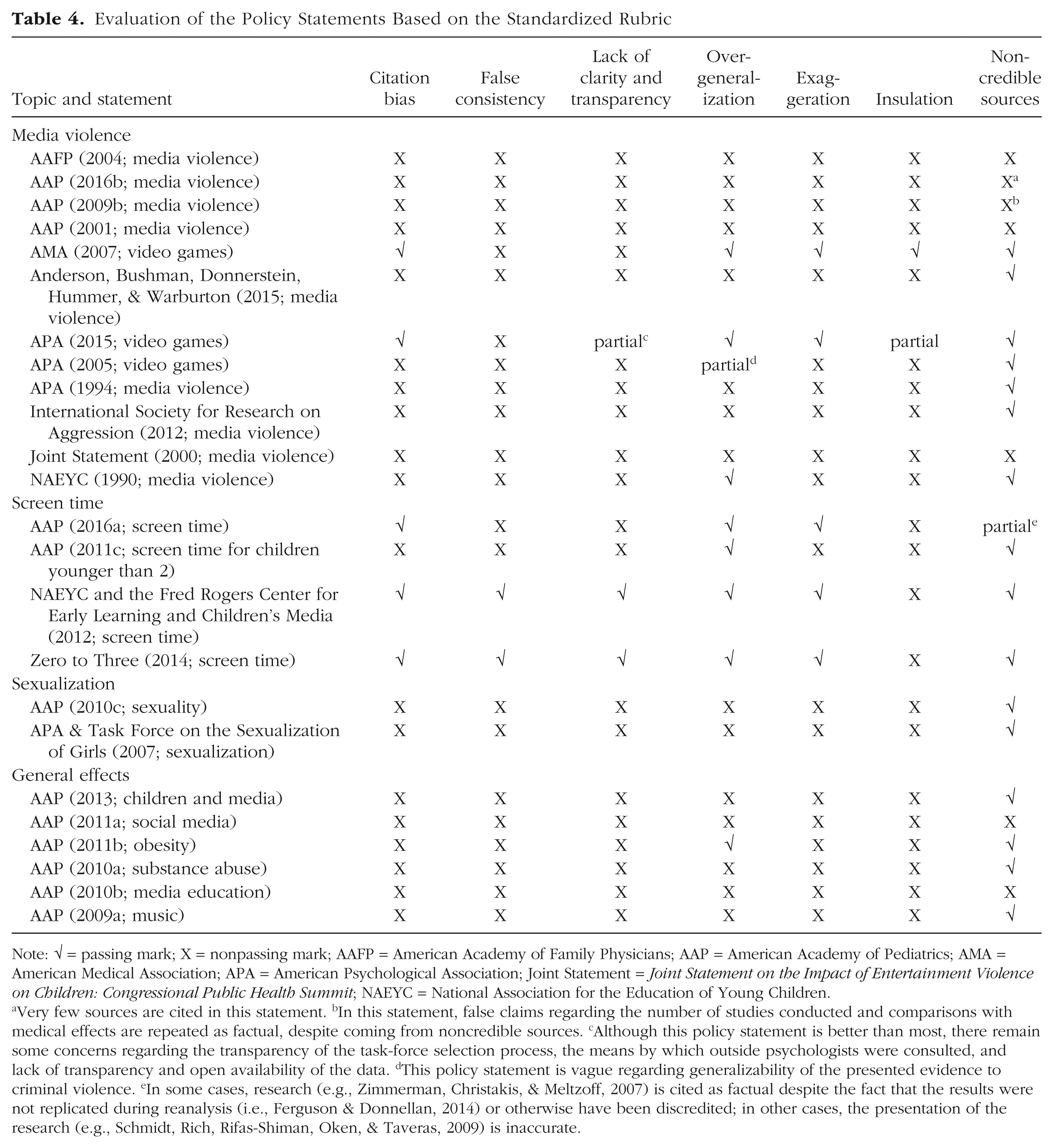

Results based on the rubric are presented in Table 4. In the following sections, we consider overall trends in the policy statements. We have provided a more detailed breakdown of the results in the Supplemental Material.

Table 4. Evaluation of the Policy Statements Based on the Standardized Rubric

Note: √ = passing mark; X = nonpassing mark; AAFP = American Academy of Family Physicians; AAP = American Academy of Pediatrics; AMA = American Medical Association; APA = American Psychological Association; Joint Statement = Joint Statement on the Impact of Entertainment Violence on Children: Congressional Public Health Summit; NAEYC = National Association for the Education of Young Children.

a. Very few sources are cited in this statement.
b. In this statement, false claims regarding the number of studies conducted and comparisons with medical effects are repeated as factual, despite coming from noncredible sources.
c. Although this policy statement is better than most, there remain some concerns regarding the transparency of the task-force selection process, the means by which outside psychologists were consulted, and lack of transparency and open availability of the data.
d. This policy statement is vague regarding generalizability of the presented evidence to criminal violence.
e. In some cases, research (e.g., Zimmerman, Christakis, & Meltzoff, 2007) is cited as factual despite the fact that the results were not replicated during reanalysis (i.e., Ferguson & Donnellan, 2014) or otherwise have been discredited; in other cases, the presentation of the research (e.g., Schmidt, Rich, Rifas-Shiman, Oken, & Taveras, 2009) is inaccurate.

Citation bias

Citation bias was common across the policy statements, being identified in 19 of the 24 (79.2%). Citation bias was far less common among the screen-time policy statements (25%) than among the other categories of statements. In all cases in which citation bias occurred, research that disconfirmed the presence of media effects was neglected.

False consistency

Issues of false consistency were identified in 22 of the 24 policy statements (91.7%). Three of the policy statements cited at least one disconfirmatory study (thus avoiding citation bias), but either misinterpreted those results as confirming media effects (e.g., AAP, 2016a) or ignored those results in the concluding or resolution statements (e.g., APA, 2015). As we found for citation bias, false consistency was less common (50%) among the screen-time policy statements than among the statements in other areas.

Lack of clarity and transparency

Issues of clarity and transparency were very common among the policy statements. Most did not provide sufficient information about who the task-force members were, how they were selected, and how the data were selected. In addition, in most cases, the data were not made publicly available. Only 2 of the 24 statements received passing marks in this area, and a third received a partially passing mark.

Overgeneralization

Issues of overgeneralization were found in 15 of the 24 policy statements (62.5%), and 1 received a partially passing mark. Thus, though still widespread, overgeneralization issues were less common than citation bias or false consistency. Overgeneralization issues were absent from the screen-time policy statements but more common in the other categories.

Exaggeration

Issues of exaggeration were also common; 19 of the 24 statements (79.2%) were scored as exhibiting exaggeration. Most often, the exaggeration took the form of claiming a public-health-related effect without considering the fact that the effect sizes were small and potentially trivial. However, specific comparisons with important medical effects were also not uncommon. As was the case for the other issues, the screen-time policy statements had fewer nonpassing marks than did the statements in the other categories.
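One conventional way to gauge how small such effects are is to square the correlation coefficient, which gives the proportion of variance the two variables share. The sketch below applies this to the r values of .10 and .20 mentioned in the rubric's description of exaggeration. It is an illustration only: the committee does not state that it used this conversion, and the function name `shared_variance` is hypothetical.

```python
def shared_variance(r):
    """Proportion of variance shared by two variables with correlation r."""
    return r ** 2


# Thresholds taken from the rubric's description of exaggeration.
for r in (0.10, 0.20):
    print(f"r = {r:.2f} -> r^2 = {shared_variance(r):.2%} of variance")
```

Under this reading, a correlation of .10 corresponds to about 1% of shared variance and a correlation of .20 to about 4%.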

Insulation

Only one of the policy statements made any reference to diverse viewpoints and thus avoided the criticism of insulation; a second received a partially passing score. Most of the policy statements did not speak at all to the importance of ensuring the inclusion of diverse views, and some provided little transparency regarding the composition of the task force and how its members were selected. This issue was one of the most common across all the categories of statements, and it was the most common issue in the statements on screen time.

Noncredible sources

Use of noncredible sources was the least common problem in the 24 policy statements. Only 7 (29.2%) either relied on noncredible sources or failed to provide adequate sources; an 8th statement received a partially passing score. In most cases, the noncredible sources included newspaper articles, popular science or psychology books, or previously discredited research studies. One policy statement (AAP, 2016b) provided very few sources at all.

Results summary

With the sole exception of source credibility, the problems identified in our rubric were quite common overall among the policy statements. These problems were significant enough in most cases, in aggregate, to potentially result in misrepresentation of the research fields in question to policymakers and the public. Compared with the statements in the other categories, the screen-time statements less often exhibited these problems, with the exception of insulation.
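The percentages reported in the preceding sections can be recomputed from the counts, as in the following sketch. It covers only the five issues for which the text gives an explicit nonpassing count and percentage; clarity and transparency and insulation are omitted because they are reported in terms of passing and partially passing marks. The variable and function names are hypothetical.

```python
TOTAL_STATEMENTS = 24

# Nonpassing counts as reported in the Results text.
nonpassing_counts = {
    "citation bias": 19,
    "false consistency": 22,
    "overgeneralization": 15,
    "exaggeration": 19,
    "noncredible sources": 7,
}


def percent_of_total(count, total=TOTAL_STATEMENTS):
    """Percentage of all reviewed statements, rounded to one decimal place."""
    return round(100 * count / total, 1)


percentages = {
    issue: percent_of_total(n) for issue, n in nonpassing_counts.items()
}
for issue, pct in percentages.items():
    print(f"{issue}: {nonpassing_counts[issue]} of {TOTAL_STATEMENTS} ({pct}%)")
```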

Discussion

In the past three decades, professional advocacy organizations have released a growing number of policy and resolution statements concerning the effects of media on youth. Several of these statements appeared to be fairly well-grounded reviews of the literature. However, the majority overstated the evidence for harmful effects and ignored or downplayed controversies in the various research fields. Certain subfields, such as media violence and sexualization issues, were particularly prone to problematic policy statements. By contrast, particularly given the AAP’s more recent (though still imperfect) 2016 policy statement on screen time, policy statements related to screen time appear to be undergoing a necessary, if still uncompleted, course correction compared with those from past decades (e.g., AAP, 1999), and this shift should be commended.

There is an evident risk that policy statements taking strong stances may ultimately require reversal, with potential loss of face and credibility, at a later time point. For example, the Department of Health and Human Services’ strongly worded 1972 statement (Surgeon General’s Scientific Advisory Committee on Television and Social Behavior, 1972) was largely reversed by a statement in 2001 (U.S. Department of Health and Human Services, 2001), which relegated media violence to a very minor role in contributing to youth violence. Furthermore, some more recent statements by professional organizations have retreated from prior claims and recommendations. For instance, the AAP’s 2016 statement on screen time did away with the much-promoted recommendation of a 2-hr screen-time maximum limit. More recently, Division 46’s statement on video-game violence (News Media, Public Education and Public Policy Committee, 2017) went far to correct prior overstatements from the parent organization, APA. However, concerns remain considering the failure of most policy statements to correctly identify conflicts and inconsistencies in the relevant research fields.

Broader concerns

We note with concern the proliferation of policy statements, not just related to media but extending to numerous other areas, including, but not limited to, other science issues, clinical practice, and political and social issues. This steadily rising stream of policy statements shows little sign of abating. We are concerned that if our examination of media-related policy statements is any indication, this increased frequency has not been met with increased quality. As to why the prevalence of policy statements has increased, we can only speculate. One possibility is that this proliferation reflects structural changes among certain guild organizations. Two organizations, specifically, the AAP and APA, account for the majority of policy statements in the area of media effects, and appear to be prolific producers of policy statements in other areas as well. It is possible that their profligate production of policy statements reflects both structural corporatization in these organizations and the perceived marketing value of policy statements broadly. These influences may be combined with an increased appetite among policymakers to use policy statements by guild organizations as often quasiscientific cover for political or regulatory efforts (Copenhaver, 2015).

The inclusion of expert reviewers on task forces and committees that develop policy statements is an issue worth evaluating. Our analyses indicated that it was very common for policy statements to rely on content experts in the field. However, these experts also often had unacknowledged bias, that is, very strong views about the content in question; in fact, they were often reviewing and essentially promoting their own work. In most cases, there appeared to be little effort to balance these views with those of more skeptical scholars. Thus, the concern appears to be less about the inclusion of experts and more about the appearance of unacknowledged bias, that is, selection of experts so as to promote a particular view as a consensus view, with consistent support, rather than to address relevant controversies fully and in a more balanced fashion. Obviously, we cannot say whether this common lack of balance in the task forces and committees whose statements we examined was inadvertent or purposeful on the part of the organizations in question.

Conflicts of interest are a related issue. Traditionally, researchers have been considered to have a conflict of interest if a stakeholder vested in a particular research outcome has given them a financial inducement (broadly defined to include inducements received by family members or indirect inducements, such as stocks). For the current review, such stakeholders could include not only media companies, but also antimedia activist groups, such as the National Institute of Media and Family, which has funded some media studies (e.g., Anderson et al., 2008). However, it is possible to consider a wider range of activities as posing lesser conflicts of interest for researchers who are tasked with producing objective reviews. For example, having produced multiple research articles or books on a topic, or having received grants on the topic, may make scholars biased stakeholders. This slanting, of course, is a facet of human nature and need not imply any bad faith. Further, our committee cannot claim immunity from this broader conceptualization of conflict of interest, as many of the committee members have produced research articles, written books (e.g., Markey & Ferguson, 2017), and received grants on topics related to media effects. The example of APA’s 2015 task force suggests that seeking ostensibly neutral reviewers, although desirable in principle, may be akin to a unicorn hunt. Despite taking this ostensibly valuable—whether or not realistic—approach, APA still defaulted to bias, that is, selecting task-force members who, in the majority, had clear prior antimedia views. Thus, it may be more valuable, particularly when significant debate or controversy exists in a field, to specifically seek out a balance of views on an expert panel rather than to look for neutral reviewers. If such a balanced panel cannot reach consensus, it may be best to avoid a policy statement.

Some scholars might argue that the evidence in some fields (e.g., the link between smoking and lung cancer) is so incontrovertible that the inclusion of skeptical scholars is unnecessary. Although we are somewhat sympathetic to such arguments, we suggest that the bar for such a strategy should be very high and has not been reached in media studies. Further, we are concerned that scholars heavily invested in one side of a debate may prematurely claim consensus in order to marginalize scholars on the opposing side. Perceptions of consensus can also reflect cultural norms, publishing practices, publication bias, and other problems within a field rather than true consistency in the data. Even if skeptical views are in the minority, including skeptics in policy-statement committees can help reveal flaws in thinking and weaknesses in data, and reduce groupthink. Put simply, “kicking the tires” to ensure the fullest presentation possible is always preferable to merely hoping that the points being made are free of flaws.

We do acknowledge that our own review is not without limitations. For instance, our scoring of the policy statements was handled mainly through committee discussion and consensus, after each statement was rated by at least two individuals. Our procedure precluded a specific assessment of interrater reliability, however. Further, all task forces and committees see an issue through their own particular lens, and our group brought to bear its own biases and particular backgrounds that could have influenced our decisions.

Best practices for policy statements

Policymakers continue to turn to professional advocacy organizations for information. Pressures and concerns related to media appear unlikely to abate in the foreseeable future. We offer the following suggestions for a “best practices” media policy statement. Naturally, these best practices could apply to policy statements in other areas as well.

Acknowledge disconfirmatory data

One of the most common problems we saw was citation bias, the failure to cite disconfirmatory data. These failures included cases in which statements did not cite sources reporting that original results were not replicated or that reanalyses of the data led to different results. Failure to acknowledge disconfirming evidence leaves policy statements very open to accusations of bias and propaganda and can result in the discrediting of entire research fields (Hall et al., 2011). Arguably, this practice should be considered unethical. It certainly sets a bad example for young scholars, who may adopt it. Thus, it is important that policy statements note the existence of inconsistent data and significant debates or controversies.

Focus on effect size

For two decades, APA has espoused the standard that interpretation of effect size is a critical element of scientific communication (Wilkinson & Task Force on Statistical Inference, 1999). However, very few policy statements discuss effect sizes, and some arguably exaggerate or distort the magnitude of effects. When effect sizes are small, weak, or trivial, they should be acknowledged as such. Attempts to rescue trivial effects with “small is big” arguments, such as by translating proportions of explained variance into the number of people affected (e.g., r² = .01 means 1% of a population of millions will be adversely affected), or by comparing the targeted effect with medical effects, should be avoided.

Acknowledge methodological limitations

Most fields have significant, even systemic, methodological limitations that could influence the interpretation of results. For accurate scientific communication, including communication to the general public, it is incumbent upon professional guilds and advocacy groups to acknowledge these limitations honestly.

Solicit balanced views

We advocate that professional organizations intending to work on a policy statement do the hard work of making sure to include balanced (and not merely token) representative views from all sides of an active debate. There is reasonable uncertainty as to when discrepant views reach critical mass such that they deserve a full hearing. However, we struggle to think of many social issues that are immune to reasonable, discrepant views. And it behooves professional organizations to err on the side of caution in such matters.

Avoid secondary sources

We argue that even primary sources can prove unreliable, but secondary sources tend to compound the problem of unreliability. Reliance on secondary sources is an invitation to problematic, unverifiable claims, and such sources should be avoided when possible.

Distinguish scientific statements from advocacy statements

Undoubtedly, professional guilds are going to advocate for policy positions that benefit themselves or their membership, or that are simply popular among dues-paying members. However, such advocacy statements should be clearly identified with a disclaimer that they are meant to advocate for a particular goal, not summarize a scientific field in a balanced, objective manner. By contrast, scientific policy statements should not advocate for specific policy goals or promote resolutions from professional bodies, such as APA. Further, though this recommendation is less critical and perhaps up for debate, we argue that those statements that are advocacy based ought to avoid advocating policies that are unconstitutional, illegal, or realistically improbable for individuals to follow.

Release fewer statements

Again, we note that the release of policy statements on media effects appears to be on the rise, and this may be true more broadly as well. Policy statements clearly provide an opportunity for professional organizations to garner considerable press. Beyond that, they may gradually do science more harm than good, not only by hurting the reputation of the fields they represent, but also by causing a kind of fatigue and indifference in the general public as the number of warnings increases. We suspect that numerous warnings across fields result in divided attention, or warning fatigue, so that attention is drawn away from some health matters that may have real impact on mortality and morbidity. Thus, fewer statements may both have more impact and avoid unintended harms.

Be mindful of unintended harms

Some policy statements may lead to consequences that were unforeseen by the organizations that released them. For instance, in the United States, some politicians may refer to policy statements’ (false) claims of consensus on harmful effects of video-game violence in order to distract the public from discussions on gun control. We do not mean to disparage any political point of view, but only wish to make the point that when a policy statement does inform policy, it may do so in a way that the organization did not intend. In some cases, these unforeseen consequences may contribute to real harms (e.g., delay movement on gun control and result in more gun deaths).

Prioritize and encourage open science

Scientific studies that are preregistered and conducted under conditions of transparency should be considered the current gold standard and given particular weight when evaluating evidence produced by research fields. Should open and nonopen research disagree, greater weight should be given to the open-science research. Naturally, however, even open-science research should be evaluated for other methodological limitations. Policy statements also provide an excellent opportunity to encourage further open-science research.

Concluding comments

We acknowledge that without a qualitative assessment of the individuals involved in the production of media policy statements, particularly at the administrative levels, it is difficult to know why these statements have been so consistently (with a few exceptions) problematic. On an anecdotal level, in personal conversations with administrators, members of this committee commonly hear that professional organizations feel under pressure from government agencies to provide the answer that would support public policy on a social issue. More in-depth analysis exploring whether this perception contributes to the problems in policy statements would be welcome, and we call upon professional organizations to open their administrative staff to such qualitative study. Another possible explanation is that different organizations may look at data in different ways. For instance, medical organizations may be more concerned with epidemiological data than with data from experiments, the latter of which may be more convincing to psychologists. However, our own reading of the epidemiological data regarding media effects does not support the argument that policy statements being driven by epidemiological data is a primary issue. For example, longitudinal studies have failed to find convincing evidence that playing violent video games has long-term effects (e.g., Breuer, Vogelgesang, Quandt, & Festl, 2015; Etchells, Gage, Rutherford, & Munafò, 2016; von Salisch, Vogelgesang, Kristen, & Oppl, 2011). At the societal level, playing violent video games has been consistently found to be associated with reduced criminal violence (Cunningham, Engelstatter, & Ward, 2016). Similarly, problematic teen sexual behaviors, particularly pregnancy rates, have decreased to historic lows despite the increased availability of sexual media (Centers for Disease Control and Prevention, 2017).

Although our review of media-related policy statements has implications for policy reviews in other areas, we caution that the limited number of policy statements in any one area limits the degree to which observations can be generalized to other areas. We have sought to examine issues related to outcomes of professional organizations when crafting policy statements and have noted that the (unintentional) biases of such organizations’ past statements may have led to reduced credibility and more harm than good. In general, we suggest that decisions to make omnibus claims about entire research fields should be approached with greater caution than has been typical. When fields are split, including balanced perspectives and even “minority” views is likely to increase the quality of policy statements and reduce criticisms that they are biased. Such statements may be less certain in tone, but we suspect that, in most cases, an easy (and often documentable) charge of bias renders statements ineffective other than in rallying a base of preexisting supporters. There are probably no set metrics on what level of evidence needs to be achieved in order to argue for the release of a policy statement. Our greater concern is that, in the case of media effects at least, policy statements too often seem to ignore inconvenient evidence to reach omnibus claims that are fairly easy to fact-check as false.

It is worth asking whether it is possible to produce effective policy statements. Arguably, a central purpose of a policy statement is to provide a succinct and simple review of the research that promotes policy outcomes the organization in question prefers. However, research data are seldom simple and clear. In the majority of policy statements we reviewed, the approach of the organization appeared to be reductive in that complexities and inconsistencies in research were ignored in favor of a narrative supportive of the organization’s policy agenda. There were some exceptions to this (e.g., American Medical Association, 2007; Zero to Three, 2014), although they were in the clear minority. Thus, if the intent of the policy statements was to provide straightforward and objective research summaries, it appears that the majority failed, doing more to misinform than to inform. However, the intent may never have been to provide objective research reviews, but rather may have been to provide reductive and one-sided arguments in favor of distinct policy goals. But such a purpose is necessarily at odds with the reputation of organizations as objective scientific bodies.

Supplemental Material

FergusonOpenPracticesDisclosure – Supplemental material for Do Policy Statements on Media Effects Faithfully Represent the Science?

Supplemental material, FergusonOpenPracticesDisclosure for Do Policy Statements on Media Effects Faithfully Represent the Science? by Malte Elson, Christopher J. Ferguson, Mary Gregerson, Jerri Lynn Hogg, James Ivory, Dana Klisanin, Patrick M. Markey, Deborah Nichols, Shahbaz Siddiqui and June Wilson in Advances in Methods and Practices in Psychological Science

FergusonSupplementaryResults – Supplemental material for Do Policy Statements on Media Effects Faithfully Represent the Science?

Supplemental material, FergusonSupplementaryResults for Do Policy Statements on Media Effects Faithfully Represent the Science? by Malte Elson, Christopher J. Ferguson, Mary Gregerson, Jerri Lynn Hogg, James Ivory, Dana Klisanin, Patrick M. Markey, Deborah Nichols, Shahbaz Siddiqui and June Wilson in Advances in Methods and Practices in Psychological Science

Footnotes

Action Editor

Pamela Davis-Kean served as action editor for this article.

Author Contributions

The authors constitute the News Media, Public Education and Public Policy Committee. They all contributed equally to conceptualizing this project and writing the manuscript. All the authors approved the final version of the manuscript for submission. This report reflects only the views of the members of the News Media, Public Education and Public Policy Committee. It does not necessarily reflect the views of the American Psychological Association or of Division 46, the Society for Media Psychology and Technology.

Declaration of Conflicting Interests

The authors declared that there were no conflicts of interest with respect to the authorship or the publication of this article. None of the authors are employees of technology companies, and none have received financial inducements from technology or media companies to produce research that is favorable to media industries. No media companies participated in drafting or developing this report or played a role in approving any draft. Although this is not a financial conflict of interest, for the sake of transparency we note that many of the authors have published research articles on media effects, including both articles reporting evidence for media effects (e.g., Giumetti & Markey, 2007; Holz Ivory et al., 2017) and articles reporting null results (e.g., Kneer, Elson, & Knapp, 2016). Some of the authors have published books (e.g., Barr & Nichols Linebarger, 2016; Markey & Ferguson, 2017) related to media and media effects. The research of some of the authors (e.g., M. Elson, C. J. Ferguson) has contradicted some of the policy statements under review, which could create some personal biases.

Open Practices

All data and materials have been made publicly available via the Open Science Framework and can be accessed at https://osf.io/4vk5x/. The complete Open Practices Disclosure for this article can be found at http://journals.sagepub.com/doi/suppl/10.1177/2515245918811301. This article has received badges for Open Data and Open Materials.
