Abstract

Introduction

Generative artificial intelligence (AI) has transformative potential in medical training, and its role in medicine holds drastic implications for patients, healthcare providers, and society; however, its current use by medical students is unknown. The study aims to characterize the use, frequency of use, and perceptions of generative AI by Canadian medical students.

Methods

A cross-sectional survey was distributed to 6 medical schools in Ontario, Canada, to investigate how medical students use generative AI in education, clinical settings, and for communication, and to assess the perceived barriers and enablers that influence their use.

Results

A total of 167 respondents completed the survey (60.8% female, 69.3% in first and second year), and 78.9% of respondents reported using generative AI, with ChatGPT being the most popular model; 53.0% of respondents were frequent users who reported using generative AI tools at least once a week. In clinical settings, students reported using generative AI for learning and reviewing medical content, summarizing clinical guidelines, and generating differential diagnoses; 92.8% of students were willing to learn how to use generative AI to integrate it into their future clinical practice. At the same time, most medical students appreciated the limitations of generative AI in terms of its risk for inaccuracy (91.6%) and bias (78.9%); 75.9% of participants agreed that generative AI should be implemented as a resource or formal teaching topic in medical training.

Discussion

The findings of this study may help guide medical education institutions in adapting curricula and developing policies to promote the ethical and appropriate use of generative AI in medicine.

Introduction

The introduction of generative artificial intelligence (AI) built on large language models (LLMs) is forging a paradigm shift in human craftsmanship and intelligence. Large language models have demonstrated a remarkable capacity to process unstructured human language commands and respond with novel, contextually relevant text outputs.1 OpenAI's ChatGPT has been a major driving force behind the prominence of and discourse surrounding LLMs, as this publicly accessible dialogue interface has an estimated 200 million active users.2 ChatGPT is one of many dialogue interfaces in this flourishing space, alongside Google Gemini, Perplexity AI, Glass AI, and others. The potential and ubiquity of generative AI have profound implications for future physicians and healthcare.3,4 Numerous studies have explored the potential utility of generative AI in medical education, highlighting its ability to accelerate complex, time-intensive tasks such as summarizing medical literature, creating personalized multiple-choice questions, and constructing clinical vignettes.5–7 In clinical settings, generative AI can facilitate clinical decision-making through the generation of differential diagnoses, investigation algorithms, and management plans. Medical trainees may benefit greatly from generative AI when navigating the rigorous demands and abundance of information involved in medical training. However, the use of generative AI in medicine has raised concerns. The outputs can be misleading, inaccurate, or biased, posing risks to patient safety.7 Overreliance on generative AI by learners may lead to plagiarism and declining critical thinking skills.8 Owing to these concerns, generative AI in medicine must be used with caution and implemented in a manner that helps rather than harms clinical decision-making and improves patient safety.

Despite numerous studies highlighting the potential of generative AI in medicine, the understanding of how medical students use generative AI in their education is limited. While previous research has reported the perceptions and beliefs of medical students toward generative AI,9–12 this does not provide sufficient guidance for medical institutions to develop policies and curricular changes to responsibly address the real-world use of generative AI. The aim of this study is to address this gap in the literature and survey medical students on their exact use, frequency, and perceptions of generative AI in medical training across different centers in Ontario, Canada. The findings of this study are intended to support further consideration into how medical schools may embrace generative AI.

Methods

A systematic approach was used to develop the questionnaire, which aimed to investigate the use of generative AI and the attitudes and perceptions surrounding its application in medical education, communication, and clinical workflows. The survey items were generated through a literature review of studies exploring medical students’ perceptions and utilization of AI,10–15 as well as the broader applications of AI in medicine.1,6,7,16 The questionnaire was first pretested through a process of inclusion and exclusion based on binary and general feedback provided by 2 staff physicians (BNH, AJES). Based on this feedback, the questionnaire was revised to improve clarity, minimize confusion, and ensure alignment with the study objectives. It was then pilot tested with 5 medical students, 1 resident, and 2 staff physicians. Participants in the pilot testing agreed that the questionnaire was clear and easy to complete and that the response time was appropriate.

This survey focuses on the text-to-text and text-to-image models of generative AI, as these models are the most prominent and frequently cited in the literature. The survey consists of 27 items divided into 4 sections. The “Introduction” section outlines the implied consent form. The “Methods” section explores the utilization of generative AI and details the model type, version (free or premium), frequency of use, and the language in which it is used. The participants are asked to indicate how they use generative AI in clinical and educational settings and for communication. The “Statistical Analysis” section investigates the perceptions and attitudes medical students have toward generative AI. The questions in this section explore student interest in integrating generative AI into medical education curricula and how students anticipate integrating generative AI into their future practices. This section also examines the enabling factors and barriers to the use of this technology in medicine. The multiple-choice questions allowed a single response unless explicitly marked “select all,” which indicates that multiple responses were allowed. The “Results” section collects demographic information from participants, including age, gender, level of medical education, institution, and race. The “Discussion” section presents an open forum that allows students to provide their recommendations on how institutions should respond to the emergence of generative AI. The complete survey is available in Supplemental File 1: Survey. The open survey was administered digitally on Microsoft Forms.

The cross-sectional observational study was conducted at the Ottawa Hospital Research Institute, Canada. This study conforms to the Strengthening the Reporting of Observational Studies in Epidemiology guidelines 17 (Supplemental File 2). The questionnaire was distributed to the 6 medical schools in Ontario operating at the time of the survey period: McMaster University, the Northern Ontario School of Medicine University, Queen's University, the University of Toronto, the University of Ottawa, and Western University. The inclusion criteria were any medical students enrolled in an Ontario medical school at the time of survey dissemination. There were no exclusion criteria. The Ottawa Hospital Research Institute approved this study on May 2, 2024. This study adhered to the Declaration of Helsinki. The survey was emailed for dissemination to the Vice Deans of undergraduate medical education at each medical school in Ontario. The 6 medical institutions operating during the study period participated in the study, with 3839 medical students available as eligible study participants. For 4 out of the 6 schools, 2 emails were sent to recruit medical students of all years to participate in the survey. The first email was sent when the survey period began, and the second reminder email was sent 2 weeks before the survey was closed. For the other 2 schools, the survey was embedded in an electronic newsletter or posted on an online research forum. The survey was open for a total of 4 weeks at each institution. Participation was voluntary and anonymous. Implied consent was obtained at the start of the survey. We collected survey data from May 2024 to October 2024.

Statistical Analysis

All survey data collected were included in the statistical analysis, which was performed using Microsoft Excel (Redmond, United States). Descriptive statistics, including total counts and percentages, were calculated for survey responses. Free-text responses were analyzed according to Kiger and Varpio's 6-step framework for thematic analysis.18
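As a rough illustration of the descriptive statistics described above, the sketch below tallies counts and percentages for a single-choice item and a “select all” item, using the number of respondents as the denominator in both cases. The analysis in this study was performed in Microsoft Excel; the Python code, the function name `summarize`, and the response data shown here are hypothetical and serve only to make the calculation concrete.

```python
from collections import Counter


def summarize(responses):
    """Return {option: (count, percent)} using respondents as the denominator.

    Each element of `responses` is the list of options one respondent chose:
    a single option for a single-choice item, several for a "select all" item.
    """
    n_respondents = len(responses)
    # set() guards against the same option being counted twice for one respondent.
    counts = Counter(option for chosen in responses for option in set(chosen))
    return {
        option: (count, round(100 * count / n_respondents, 1))
        for option, count in counts.most_common()
    }


# Hypothetical responses, NOT taken from the survey data.
single_choice = [["weekly"], ["daily"], ["weekly"], ["never"]]
select_all = [["review", "learn"], ["review"], ["learn", "summarize"], ["review"]]

frequency = summarize(single_choice)
uses = summarize(select_all)
```

Because each respondent can select several options on a “select all” item, the percentages for such items can sum to more than 100%, which is why the reasons and uses reported in the Results are not expected to add up to 100%.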

Results

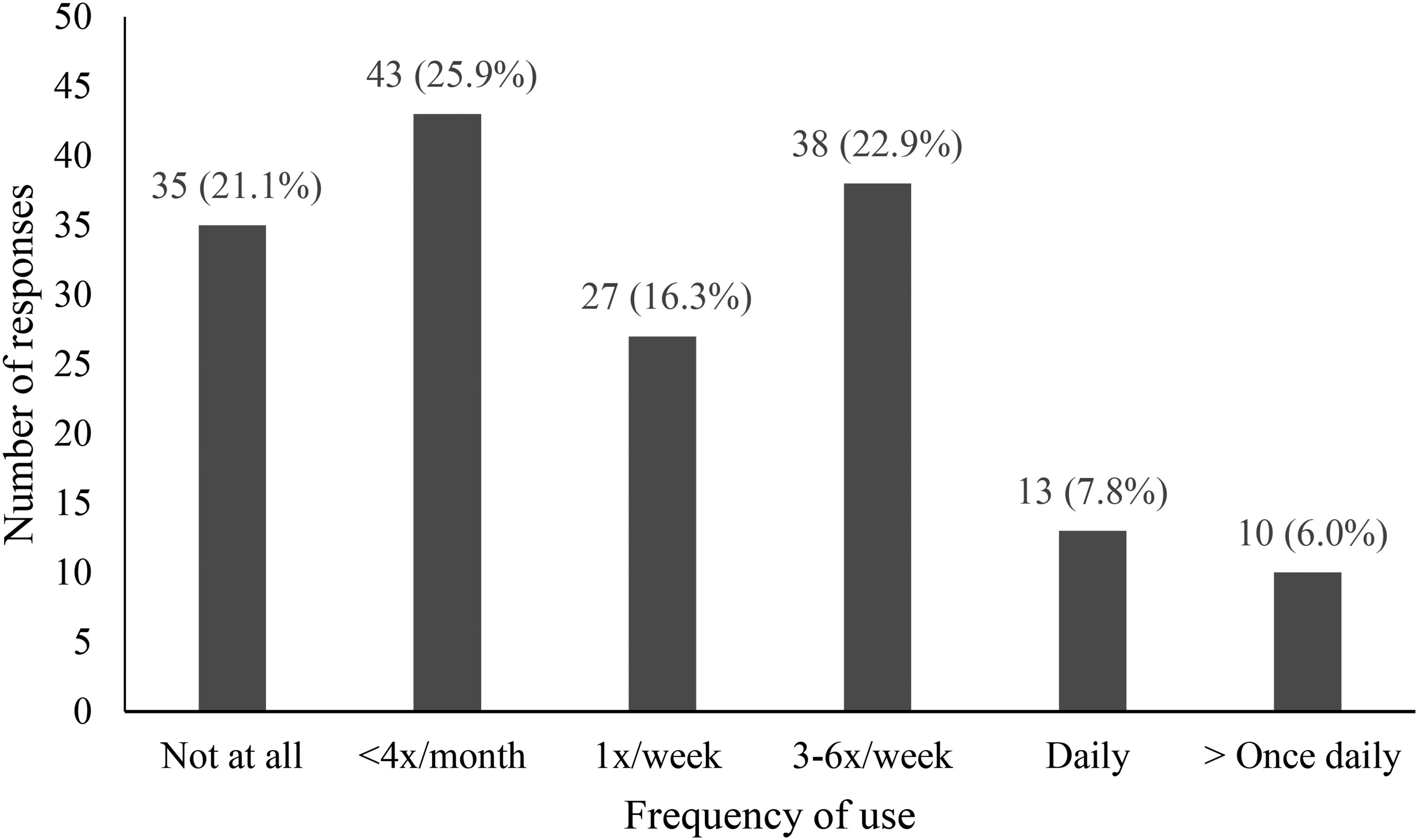

A total of 167 medical students completed the survey (overall response rate = 4.3%), and the completeness rate was 99.4% (n = 166). Over half of the participants were women (n = 101, 60.8%), and 69.3% of the respondents were in preclerkship years (the first or second year of medical school). The participant demographic characteristics are outlined in Table 1. Most participants (n = 131, 78.9%) were active users of generative AI, whereas 21.1% (n = 35) were not. The free version of ChatGPT, which was ChatGPT-4o at the time of survey release, was the most popular LLM used (n = 119, 90.8%; Supplemental File 3). Figure 1 describes the frequency of generative AI use. Over half of the respondents (53.0%) reported using generative AI at least once a week for both medical school and nonmedical school-related purposes. English was overwhelmingly the main language in which generative AI tools were used (n = 129, 95.4%), with a small subset using generative AI in French (n = 5, 3.8%) and in Spanish (n = 1, 0.8%). In total, 11.4% of participants paid for a subscription to use the premium version(s) of generative AI. Those who did not pay for a subscription cited the following reasons: they did not use generative AI enough (n = 70, 63.6%), the subscription was too expensive (n = 48, 43.6%), and the paid versions offered little added value (n = 35, 31.8%).
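The headline proportions in this paragraph can be checked with simple arithmetic. The sketch below is illustrative only: the helper `percent` and the variable names are assumptions, while the counts come from the text (3839 eligible students, 167 respondents, 166 complete responses). Note that 167/3839 rounds to 4.4%, whereas 166/3839 rounds to 4.3%, so the reported response rate appears to be based on the 166 complete responses; that reading is an inference from the numbers, not something the text states.

```python
def percent(numerator, denominator):
    """Percentage rounded to one decimal place."""
    return round(100 * numerator / denominator, 1)


ELIGIBLE = 3839     # eligible medical students across the 6 schools (Methods)
RESPONDENTS = 167   # students who completed the survey
COMPLETE = 166      # complete responses, used as n in the Results

completeness = percent(COMPLETE, RESPONDENTS)    # completeness rate
rate_from_complete = percent(COMPLETE, ELIGIBLE)  # matches the reported 4.3%
rate_from_all = percent(RESPONDENTS, ELIGIBLE)    # rounds up to 4.4%
users = percent(131, COMPLETE)                    # active users of generative AI
non_users = percent(35, COMPLETE)                 # non-users
women = percent(101, COMPLETE)                    # participants who were women
```

The remaining values reproduce the reported figures when 166 is used as the denominator: 99.4% completeness, 78.9% users, 21.1% non-users, and 60.8% women.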

Figure 1. Frequency of Generative AI Use by Medical Students (n = 166) for Both Medical School and Nonmedical School-Related Purposes.

Table 1. Demographic Characteristics of Respondents (n = 166).

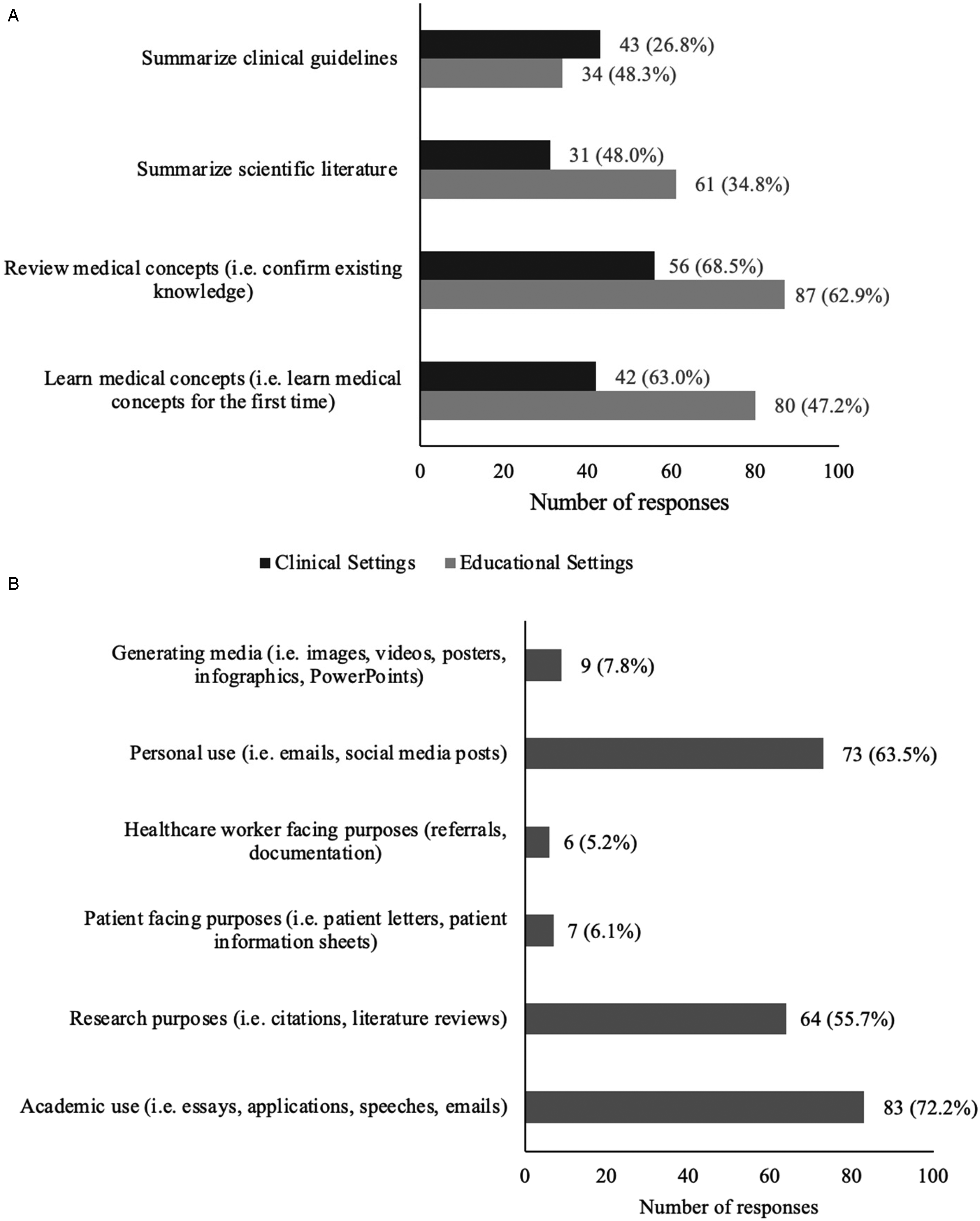

The most common use of generative AI in medical education was to review (n = 87, 68.5%) or learn (n = 80, 63.0%) medical content. Beyond knowledge consolidation, many students used generative AI as a study tool for examinations: to create study guides and summaries (n = 53, 41.7%), to self-test by using it as a question-bank generator (n = 44, 34.6%), and to generate diagrams (n = 6, 4.7%). The use of generative AI as a learning tool in clinical settings was lower than that in educational settings, as shown in Figure 2. Other uses of generative AI in clinical contexts were to generate differential diagnoses (n = 41, 46.1%) and to support clinical decision-making (n = 19, 21.3%). Figure 2 describes the applications of generative AI across 3 areas: education, clinical settings, and communication.

Figure 2. Medical Student Use of Generative AI: Applications in (A) Clinical (n = 90) and Educational Settings (n = 127), and for (B) Communication Purposes (n = 115).

Most respondents (n = 126, 75.9%) believed that AI should be implemented as a resource or be taught in medical school or residency. An even greater number of participants (n = 154, 92.8%) were willing to learn how to use generative AI and integrate it into their future practice. Table 2 describes the medical applications for which respondents anticipated using generative AI in the future. Despite the enthusiasm for generative AI, many medical students were still able to identify the limitations associated with its use. Many reported concerns about accuracy and reliability (n = 152, 91.6%) and inherent biases (n = 131, 78.9%), and many acknowledged the negative stigma associated with its use (n = 114, 68.7%). The enablers and barriers that participants perceived as influencing their use of generative AI in the future are described in Figure 3.

Figure 3. (A) Enablers Medical Students Perceived to Encourage Their Use of Generative AI in the Future (n = 165), (B) Barriers Medical Students Perceived to Discourage Their Use of Generative AI in the Future (n = 164).

Table 2. Medical Student Perceptions Toward the Use of Generative AI in Medicine.

In the free-text responses, the following themes were identified as topics on which medical students wanted formal education (Table 3): validated and appropriate applications of generative AI in clinical settings (n = 29) and the use of generative AI as a study tool (ie, for objective structured clinical examination [OSCE] preparation, as a question bank generator, and as a study guide) (n = 12). The themes identified as areas of concern regarding the use of generative AI in medicine are described in Table 4. Many students expressed concern that the use of generative AI may negatively impact learning and critical thinking. Respondents also shared recommendations for how they believe generative AI teaching can be delivered and implemented in the medical curriculum (Table 5).

Table 3. Medical Student Free-Text Recommendations on Desired Educational Topics for Generative AI in Medical Training (n = 54).

Table 4. Medical Student Free-Text Responses to Concerns Over the Use of Generative AI in Medical Training (n = 89).

Table 5. Medical Student Free-Text Responses of Recommendations for How to Implement AI in Undergraduate Medical Education (n = 43).

Discussion

Our survey collected data on how medical students used and perceived generative AI for medical education. Due to the low response rate, the findings of the survey likely represent the behaviors and perceptions of a subgroup of early technology adopter medical students in Ontario. Over half of the surveyed medical students used generative AI regularly (≥once weekly), with ChatGPT being the predominant model across all purposes, not limited to medical education. Generative AI is used primarily for medical knowledge acquisition, educational coursework, and personal communication. In the clinical setting, generative AI is utilized for medical knowledge acquisition and generating differential diagnoses. It is infrequently used to assist in documentation for healthcare provider and patient-facing purposes. Many respondents shared concerns over the accuracy and inherent biases of LLMs. Most students agreed that institutional access and formal education on generative AI are important factors for the safe use of generative AI. Nearly all the respondents demonstrated a willingness to use generative AI in their future medical practice.

The findings of this survey suggest greater enthusiasm for and propensity to use generative AI among a highly engaged medical student subgroup than previously reported in the literature. A 2023 study by Tangadulrat et al reported that 76% of medical students had positive perceptions of the use of ChatGPT for clinical practice,14 while our study revealed that 92.8% of participants were willing to learn how to use generative AI and integrate it into their future practice. This buy-in suggests that this cohort of early-adopter medical students may perceive generative AI to have transformative utility and value for medical practice. It may also imply that the incoming generation of physicians is increasingly trusting of and enthusiastic about the integration of novel technology in medicine. In 2023, Tangadulrat et al found that 62% of respondents had never used AI and that only 8% used it regularly,14 whereas a year later, we found that only 21.1% of respondents did not use generative AI and that 53.0% used it regularly. This suggests that over time, as LLMs become more refined, more students may be engaging with LLMs, and those who had engaged previously are perhaps using these tools more regularly. In educational settings, many students reported using generative AI to review (68.5%) and learn (63.0%) medical concepts. In clinical settings, it was used to a lesser extent to generate differential diagnoses (46.1%) and very rarely to assist in documentation (5.2%). This lower use may reflect concerns about breaching patient confidentiality, as documentation and diagnostic support require inputs with more detailed patient information. Further, this may indicate that while early adopters are enthusiastic about generative AI, they remain hesitant to use it in contexts that more directly impact patient care.
A 2024 study by Mizuta et al found that ChatGPT-4 generated a differential diagnosis with 95.9% accuracy when given complex case reports,19 suggesting that its use as a supportive tool for generating differential diagnoses may be suitable. Interestingly, 89.6% of participants reported a willingness to use generative AI in future practice to assist in documentation for healthcare worker-facing purposes. This discrepancy from current use may reflect medical students’ discomfort, as trainees, with employing AI to assist in medicolegal documentation, and greater anticipated comfort as attending physicians. It is also a notable increase from the findings of Alkhaadi et al in 2023, where only 29.8% of medical students anticipated using ChatGPT to write patient notes,12 which perhaps again signifies increasing comfort with the adoption of this technology. However, proofreading and human verification remain essential. Hayden et al reported that although ChatGPT can generate longer, higher quality notes than dictation and typing, 36% of documents contained erroneous information.20 Therefore, it is important to reinforce the necessity for human oversight by users, as they are ultimately responsible for any clinical decision and documentation put forward.21

Our survey also identified various barriers and concerns that may discourage the use of generative AI in medicine. Most respondents were concerned with the accuracy and reliability (91.6%) and the inherent biases (78.9%) of generative AI. These concerns align with the existing literature. A recent meta-analysis demonstrated that ChatGPT had an integrated accuracy of 56% in addressing medical queries, which consisted of a combination of United States Medical Licensing Examination questions, clinical cases, and online examination banks.22 While generative AI has demonstrated immense promise as a medical resource, it is currently unable to fully capture the nuances and complexities of modern medicine. It should supplement and facilitate critical thinking rather than replace it. This highlights the importance of educating medical students on the safe use of generative AI, which was one of the topics respondents most commonly wished to learn formally in medical education. Techniques such as “prompt engineering” to optimize LLM responses, retrieving sources, and evaluating responses are integral methods to teach medical students to ensure appropriate, optimal use of generative AI for patient care.23

Various studies have explored how LLMs may be integrated into the undergraduate medical education (UGME) curriculum to support test performance, clinical decision-making, and knowledge acquisition. A 2024 study evaluated the utility of OSCEai, an LLM designed to simulate clinical encounters.24 In this platform, users take a history via speech or text communication with the LLM and receive a feedback report based on the Calgary–Cambridge model for medical interviews. The study found that first-year medical students preferred OSCEai over traditional lecture-based methods, citing its value in providing on-demand feedback and supporting self-paced learning. A 2023 study by Meaney et al compared the performance of undergraduate medical students at the University of Toronto with that of ChatGPT-4 on a multiple-choice progress test designed to assess medical knowledge and clinical decision-making.25 ChatGPT-4 outperformed all years of medical students, achieving a score of 79%. The highest-performing cohorts were third- and fourth-year students, with mean scores of 52.2% and 58.5%, respectively. A 2023 study compared the performance of ChatGPT-3.5 with that of first-year medical students on one of McMaster University's medical school examinations, which included clinical vignettes and short-answer questions. First-year students achieved a slightly higher average score (3.67 out of 5) than ChatGPT-3.5 (3.29 out of 5), although the difference was not statistically significant. Notably, several instructors grading the examinations frequently mistook ChatGPT-3.5 responses for those written by students. Large language models have thus demonstrated promise in supporting learners in medical education across Ontario.

All universities included in this study have implemented institution-wide policies to address the emergence of generative AI in education. Overall, these policies adopt a cautious stance, emphasizing that generative AI should not be used unless explicitly permitted by course instructors with specific guidance on acceptable versus prohibited applications.26–31 A consistent expectation across institutions is that students must both cite and describe the nature of generative AI use. To our current knowledge, no institution has released policies tailored specifically to undergraduate medical education. While our findings show that medical students are using generative AI in clinical settings for medical knowledge acquisition and to support differential diagnosis, the lack of clear policy guidance may make it difficult for learners to navigate these technologies safely and effectively. As outlined in Tables 3 and 5, many students expressed a desire for concrete examples of appropriate generative AI use, as well as training on how to apply these tools effectively in clinical settings. Notably, the postgraduate Family Medicine program at the University of Ottawa has permitted the use of AI scribes for second-year residents. 32 This policy requires compliance with the College of Physicians and Surgeons of Ontario guidelines, which allow the use of AI scribes but place ultimate responsibility on the physician to ensure the accuracy and completeness of medical documentation, as well as to obtain patient consent. 33

Our study is cross-sectional; however, generative AI models continually evolve with frequent releases of new features and capabilities, which can alter one's usage and perception of these tools. Future research should explore the temporal variation in the use and perceptions of generative AI throughout medical training, accounting for differences in clinical experience and the rapidly changing AI landscape. Our target population consisted of medical students, so the use and perspectives of residents and attending physicians should be explored in future studies. There is a notable difference in the reported use of generative AI in clinical settings compared with education, which might be attributed to the reduced accessibility and the risk of patient privacy breaches in the clinical setting. This remains a frontier, and the responsible adoption of generative AI in clinical settings may enhance elements of documentation and patient care. Our hope is that institutions across Ontario recognize the value and growing usage of generative AI in undergraduate medical education and develop robust policies and implement curricular adaptations to ensure optimal, responsible use of generative AI.

This study has several limitations. First, the response rate of 4.3% is low, and the findings of this survey are not necessarily generalizable to all medical students across Ontario. The low response rate may be partly attributable to institutional policies that restricted the modes of survey distribution to platforms infrequently accessed by medical learners. In some cases, survey distribution also coincided with the summer break, further contributing to the lower response rate. One of the institutions, Queen's University, had only one participant, which limits the applicability of this study's findings to that institution. Second, the participants in this study may represent a cohort of early technology adopters, thereby introducing a selection bias that might skew the data toward increased usage of and enthusiasm for generative AI. Third, due to the rapidly evolving nature of generative AI, any cross-sectional findings quickly become outdated, reflecting only a snapshot of an ever-changing present. This may be particularly true of ChatGPT, as both the premium and free versions receive frequent updates and new releases, so its use may be especially prone to change. Finally, most participants were in their preclerkship years, during which exposure to clinical activities is limited; the data may therefore underrepresent the usage of generative AI in clinical settings.

Conclusion

Medical students reported regular use of generative AI, primarily ChatGPT, to support their learning in medical education and to facilitate written communication. Medical students largely favor the adoption of generative AI in practice and wish to learn how to use these tools appropriately. Moreover, many students shared concerns about the inherent biases in training data and the accuracy and reliability of outputs. This suggests that the integration of generative AI into the medical curriculum may enrich medical education and equip the physicians of tomorrow with the skills to navigate these technological advancements responsibly.

Supplemental Material

sj-docx-1-mde-10.1177_23821205251391969 - Supplemental material for Perceptions and Use of Generative Artificial Intelligence in Medical Students: A Multicenter Survey

Supplemental material, sj-docx-1-mde-10.1177_23821205251391969 for Perceptions and Use of Generative Artificial Intelligence in Medical Students: A Multicenter Survey by Cecilia Tran, Brett N. Hryciw, Sean William Moore, Alan Chaput and Andrew John Ervine Seely in Journal of Medical Education and Curricular Development


Footnotes

Acknowledgments

The authors would like to thank Edita Delic for input with the research ethics board approval application.

Ethical Considerations

This study was granted Ottawa Hospital Research Institute approval on May 2, 2024 (Protocol ID#: 20240208-01H).

Consent to Participate

Implied written informed consent was obtained from all participants in the study. This study adhered to the Declaration of Helsinki.

Consent for Publication

Implied written informed consent was obtained from all participants in the study for consent to publish.

Authors’ Contributions

CT made substantial contributions to conception and design, acquisition of data, and analysis and interpretation of data, and drafted the work. BNH made substantial contributions to the design of the work and to the analysis and interpretation of data, and substantially revised it. SWM made substantial contributions to the design of the work. AC made substantial contributions to the acquisition and analysis of data for the work. AJES made substantial contributions to the conception and design of the work and to the analysis and interpretation of data, and substantially revised it. All authors read and approved the final manuscript and have agreed both to be personally accountable for their own contributions and to ensure that questions related to the accuracy or integrity of any part of the work, even ones in which the author was not personally involved, are appropriately investigated, resolved, and the resolution documented in the literature.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the University of Ottawa's 2024 Studentship Research Program, Faculty of Medicine, University of Ottawa.

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Dr Hryciw is a co-founder of Osler AI Inc., a medical training and evaluation company. Dr Seely is founder and CEO of Therapeutic Monitoring Systems (TMS) Inc., a company that manages several point-of-care decision support software products. Dr Seely manages this active conflict of interest (COI) through agreements with the Ottawa Hospital Research Institute (OHRI) and disclosures on grants, presentations, and publications.

Data Availability

Available upon request.

Supplemental Material

Supplemental material for this article is available online.

References
