Abstract

OBJECTIVES

Generative artificial intelligence (AI) models such as OpenAI's ChatGPT and Google's Bard have forced educators to consider how these tools can be used efficiently to improve medical education. This article reviews the current literature on how generative AI is being, and could be, used and implemented in undergraduate medical education (UME).

METHODS

A rapid review of the literature was performed utilizing a librarian-generated search strategy to identify articles published before June 30, 2023, in 6 databases (PubMed, EMBASE.com, Scopus, ERIC via EBSCO, Computer Science Database via EBSCO, and CINAHL via EBSCO). Inclusion criteria were (1) a focus on osteopathic and/or allopathic UME and (2) a defined use or implementation strategy for generative AI. Two reviewers screened all articles, and data extraction was performed by 1 reviewer and confirmed by the other reviewer.

RESULTS

A total of 521 relevant articles were screened during this review. Forty-one articles underwent full-text review and data extraction. The majority of the articles were opinion pieces (9), case reports (8), letters to the editor (5), editorials (5), and commentaries (3) about the use of generative AI while 7 articles used qualitative and/or quantitative methods. The literature is best divided into 5 categories of uses for generative AI in UME: nonclinical learning assistant, content developer, virtual patient interaction, clinical decision-making tutor, and medical writing. The literature indicates generative AI tools’ greatest potential is for use as a virtual patient and clinical decision-making tutor.

CONCLUSIONS

While possibilities for generative AI in UME proliferate, there remains a dearth of quantitative evidence of its use for improving learner outcomes. Most of the literature opines on its potential uses, but only 7 studies formally evaluated the results of using generative AI. Future research should focus on the effectiveness of incorporating generative AI into preclinical and clinical curricula in UME.

Introduction

When OpenAI released the first widely available large language model (LLM) ChatGPT in November 2022, there was a shift in the world's perception of artificial intelligence (AI). Immediately, companies such as Microsoft and Google began to release their LLMs into the market to compete. Since that time, there have been calls to increase our understanding of generative AI models in all fields to better harness the power of these tools.1–3 As opposed to other AI models, the ease of access and user-friendly interface have made these tools popular with the general public, but the question remains about how these tools could be harnessed in academic settings such as medical education. 4 ChatGPT's ability to pass USMLE (United States Medical Licensing Examination) Step 1, 2, and 3 examinations has provoked medical educators to grapple with how to best approach deploying this technology in their learning context. 5 Academia, including medical education, is polarized regarding the use of generative AI with ethical concerns arising over misuse, cheating, and privacy. There are even calls to develop detectors that can discern human-written content from content written by LLMs although many believe this will spark an unwinnable arms race. 6 Educators across the educational spectrum are beginning to study how best to teach and implement such a tool in a classroom setting.

Previous technological shifts have proven to alter the way in which physicians practice. As one example, the electronic health record (EHR) changed how clinicians document and maintain a patient's health record, but medical education continues to struggle in preparing students for this part of medical practice. Since its rapid growth in the medical field, there have been calls to increase training on these systems earlier in medical education. 7 However, primary care physicians regularly cite the burden of documentation, data entry, and poor interoperability as increasing their level of burnout. 8 Additionally, the recognition of the technology's importance has not universally translated into increased training or educational experiences in EHR documentation in undergraduate medical education (UME). 9 The EHR provides a cautionary tale to medical education that with the advent of generative AI, high-quality educational curricula that build the technological skills to engage with these tools will become essential to prepare future physicians for medical practice.

The potential to improve the efficiency of learners and educators derives, in part, from the ability to understand the idiosyncrasies of natural language and produce readable outputs. 10 As the technology and algorithms behind generative AI improve, so will its ability to aid medical students throughout medical school and into medical practice. Learning from the introduction of electronic medical records, educators would benefit from understanding how best to incorporate these technologies into UME to harness the potential increase in efficiency to help students become good stewards of their resources during training and in practice. To better understand the current evidence and best practices behind how to implement generative AIs in teaching, this article performs a rapid review of the available literature about the potential uses, implementation strategies, and recommendations for this technology in UME. A rapid review methodology was chosen due to the emerging and changing nature of generative AI technology. Rapid reviews have been established as a method to investigate broad research questions in emerging fields to provide rapid guidance to stakeholders. 11 Through this review, we aim to provide clarity for educators and students about best practices for implementation of these tools in UME as well as identify gaps in the literature to inform future investigation into the inclusion of these tools into classrooms and curricula.

Methods

Search Strategy

A trained clinical health sciences librarian (Wright) performed our comprehensive electronic search of publications using the following databases: PubMed, EMBASE.com, Scopus, ERIC via EBSCO, Computer Science Database via EBSCO, and CINAHL via EBSCO. All database results were collected from the inception of the database through June 30, 2023. Search terms were used to retrieve articles addressing the 2 main concepts of the search strategy: (1) medical education and (2) artificial intelligence. Search strategies included a combination of both text words and controlled vocabulary, when available. (PubMed example search query: ("generative ai" OR chatbot OR chatgpt OR "chat gpt" OR "chat generative" OR "large language model*" OR llm[tiab] OR llms[tiab] OR bard[tiab]) AND ("medical education"[tiab] OR "medical school"[tiab] OR "medical Schools"[tiab] OR "medical student"[tiab] OR "medical students"[tiab] OR education, medical[mesh] OR schools, medical[mesh] OR students, medical[mesh] OR "medical education"[Title/Abstract:~4] OR (medical[ti] AND (student*[ti] OR educati*[ti] OR school*[ti]))).)
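The two-concept structure of the search (synonyms joined with OR inside each concept, concepts joined with AND) can be sketched in a few lines of Python. This is a minimal illustration only: the function names `or_group` and `build_query` are hypothetical, and the term lists are abbreviated from the PubMed example above rather than reproducing the librarian's full strategy.

```python
# Abbreviated term lists taken from the PubMed example query above.
# The helper names below are hypothetical and exist only for illustration.
AI_TERMS = ['"generative ai"', "chatbot", "chatgpt", '"large language model*"', "llm[tiab]"]
EDUCATION_TERMS = ['"medical education"[tiab]', '"medical school"[tiab]', "education, medical[mesh]"]


def or_group(terms):
    """Join the synonyms for one concept with OR and wrap them in parentheses."""
    return "(" + " OR ".join(terms) + ")"


def build_query(*concepts):
    """AND together one parenthesized OR-group per concept."""
    return " AND ".join(or_group(terms) for terms in concepts)


query = build_query(AI_TERMS, EDUCATION_TERMS)
print(query)
```

Keeping each concept as its own list makes it straightforward to add a synonym or a controlled-vocabulary term to one concept without disturbing the other.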

Results were downloaded to EndNote, and duplicates were removed. All references were uploaded to Covidence systematic review software, a web-based tool designed to facilitate and track each step of the abstraction and review process. 12

Selection of Articles

Inclusion criteria were (1) a focus on osteopathic and/or allopathic UME and (2) a defined use or implementation strategy for generative AI tools. Exclusion criteria were (1) no focus on osteopathic or allopathic UME, (2) no specific mention of generative AI, and (3) no mention of potential uses or implementation strategies for generative AI. No article or study types were excluded. Generative AI was defined as a model that utilizes natural language processing (NLP) to analyze user inputs and generate novel outputs/responses without relying upon rule-based systems or retrieval-based models that select from preexisting responses or categories.

All articles were reviewed by 2 reviewers (Hale, Alexander) on the study team to ensure they met the inclusion criteria. Each member reviewed the abstract for each article independently, and any conflicts between the 2 reviewers were resolved by consensus. Any article that was included then underwent full-text screening by both reviewers, and conflicts were resolved via consensus.
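The dual-reviewer screening step can be illustrated with a short Python sketch that flags the articles on which two independent include/exclude decisions differ, so that they can be sent to consensus discussion. The function name `find_conflicts` and the article IDs and votes are made up for illustration; in this review the process was tracked in Covidence, not with custom code.

```python
def find_conflicts(reviewer_a, reviewer_b):
    """Return the article IDs on which two reviewers' include/exclude votes differ."""
    shared_ids = reviewer_a.keys() & reviewer_b.keys()
    return sorted(aid for aid in shared_ids if reviewer_a[aid] != reviewer_b[aid])


# Made-up votes (True = include, False = exclude); not data from this review.
votes_a = {"A1": True, "A2": False, "A3": True}
votes_b = {"A1": True, "A2": True, "A3": True}

conflicts = find_conflicts(votes_a, votes_b)
print(conflicts)  # ['A2']
```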

Data Extraction

Data extraction was performed by 1 reviewer (Hale) with confirmation of data by the other reviewer (Alexander). Data were extracted between August 10 and September 18, 2023. Data extracted included article identifiers such as title, author(s), journal of publishing, date of publishing, university, and country of the primary author. In addition, the specified aims of the article, study type, educational setting (eg, clinical or nonclinical), the language used to describe generative AI, potential uses of generative AI in UME, study-specific outcomes, and recommendations for the implementation of generative AI into UME were extracted. Results were then reported narratively per Cochrane guidelines. 11

Results

Search Results

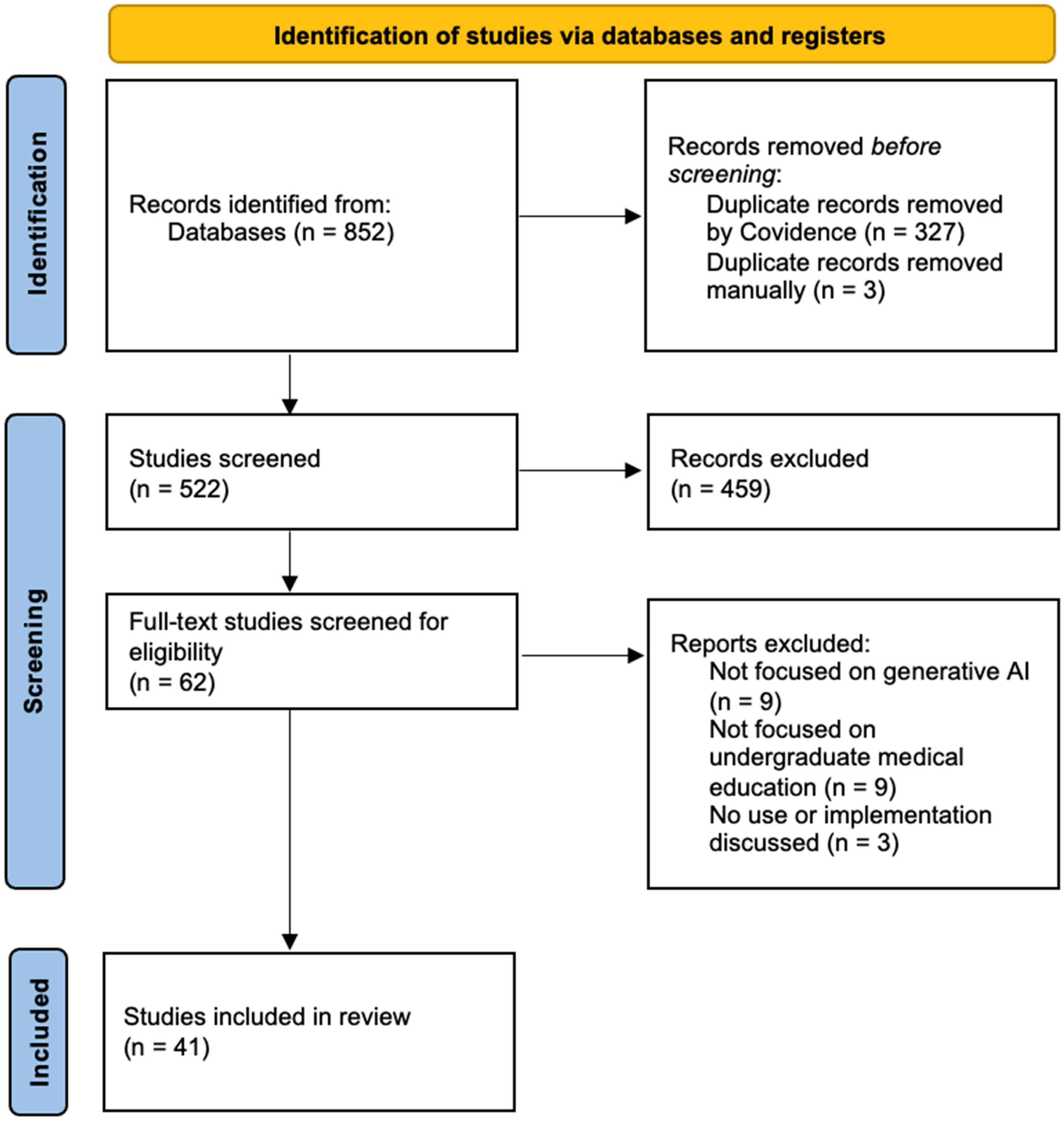

A total of 852 articles were identified, and 521 articles remained after duplicates were removed. Of these articles, 459 were deemed irrelevant based on abstract screening, and the remaining 62 underwent full-text screening. Twenty-one articles were excluded, leaving 41 articles for data extraction (Figure 1). The 41 articles were written in 19 different countries: 12 in the United States; 5 in Germany; 3 in India; 2 each in Canada, Qatar, Pakistan, the United Kingdom, and China; 1 was a multinational project; and the rest were written in Jordan, Iran, Mexico, Oman, Republic of Korea, Afghanistan, France, Singapore, Japan, and Greece (Table 1). Nine articles were opinion pieces, 8 case reports, 5 editorials, 5 letters to the editor, 3 commentaries, 3 quantitative studies, 2 mixed methods studies, and 2 comparison studies, as well as a technical evaluation, scoping review, book chapter, and cross-sectional study. A total of 13 different identifiers of generative AI were used, with ChatGPT being the most commonly used (32 articles), followed by large language model (18 articles) and chatbot (8 articles). The educational settings for the articles were primarily nonclinically focused (18 articles) or both clinically and nonclinically focused (14 articles).

Figure 1. PRISMA diagram.

Table 1. Characteristics of articles included.
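The screening counts reported in the search results can be checked with simple arithmetic. The sketch below uses only the figures stated in the text; the variable names are illustrative, and the number of duplicates removed is derived here rather than quoted from the article.

```python
# Counts as reported in the search results.
identified = 852
after_deduplication = 521
excluded_at_abstract = 459
excluded_at_full_text = 21

# Derived values for each stage of the flow.
duplicates_removed = identified - after_deduplication
full_text_screened = after_deduplication - excluded_at_abstract
included = full_text_screened - excluded_at_full_text

# Article types as listed in the text; these should add up to the included total.
article_types = {
    "opinion piece": 9, "case report": 8, "editorial": 5, "letter to the editor": 5,
    "commentary": 3, "quantitative study": 3, "mixed methods study": 2,
    "comparison study": 2, "technical evaluation": 1, "scoping review": 1,
    "book chapter": 1, "cross-sectional study": 1,
}

print(duplicates_removed, full_text_screened, included)  # 331 62 41
print(sum(article_types.values()) == included)  # True
```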

Potential Uses of Generative AI in UME

Articles varied significantly in their aims and focus with a plurality written in 2023 focusing on the ways that generative AI, and ChatGPT more specifically (29 articles), can be utilized for UME learning (Table 2). The most commonly cited uses for generative AI were personalized learning assistant/tutor/information source (25 articles), aid in content development for educators (12 articles), medical writing (10 articles), clinical decision-making aid/tutor (8 articles), and virtual patient interaction (8 articles).

Table 2. Articles associated with the 5 categories of uses for generative AI.

Nonclinical Learning Assistant

Five articles focused on the performance of ChatGPT on medical examination questions.5,13–16 Three articles displayed the ability of ChatGPT to pass standardized multiple-choice examinations.5,13,15 Takagi et al showed an improvement in the performance of ChatGPT-4.0 compared to ChatGPT-3.5 on the Japanese Medical Licensing Examination 15 while Strong et al showed that ChatGPT 3.5 could answer clinical free-response questions above a passing threshold 43% of the time. 16

Many articles suggested the use of generative AI as a learning assistant or personalized tutor for nonclinical content.4,17–33 Koga et al suggested that generative AI can provide an additional resource for feedback on multiple-choice questions rapidly. 26 Li et al demonstrated that learners who engage with conversational models can improve their comfort with anatomy concepts. 31 Other articles also suggested that generative AI can be a reference for students to search for and find medical information.10,14,34–36

Content Developer

Articles discussing content development focused on the creation of multiple-choice questions.4,26,37–39 These articles primarily discussed the ability of ChatGPT to write multiple-choice questions and provide explanations. Cheung et al showed the ability of ChatGPT to write multiple-choice questions at a similar level of proficiency to that of human examiners in significantly less time. They went on to suggest that in the development of multiple-choice questions, examiners should provide generative AI with reliable training material to generate questions with fewer errors. 39

Other articles suggested utilizing generative AI for curriculum content development.4,19,21,24,26,32,37–42 Karabacak et al acknowledged the ability of generative AI to develop cases and simulations and suggested the implementation of guidelines and detectors to prevent misuse by students. Additionally, they recommended shifting assessments toward more diverse methodologies. 40 Han et al also suggested that generative AI can be utilized as a source of content for educators, especially in a small-group learning session, but they suggested educating both educators and students on the accuracy and detail that ChatGPT provides. 38 Arora & Arora discussed the possibility of utilizing generative AI models to create synthetic patient data for training purposes. 42

Virtual Patient and Clinical Decision-Making Tutor

In 2020, Miller and Wood wrote a chapter about the emerging ability of generative AI to impact medical education. In their chapter, they provided insight into how the power of AI can be utilized as a personalized tutor alongside medical students to help them work through clinical cases. 32 Four articles successfully created generative AI models for this type of virtual patient interaction and tutoring.33,43–45 Afzal et al suggested that this is a model that is functional for students (0.65 on a Likert scale normalized from 0–1 for usability and experience), but there were limitations in the ability of the model at the time to provide personalized feedback. 43 Seetharaman, Cooper and Rashid, and Busch et al all indicated the ability of ChatGPT to act as a virtual patient and provide feedback to students on their clinical decision-making.23,29,37 Three articles suggested the power of ChatGPT to act as a clinical reasoning tutor,18,20,22 and another suggested that it could improve comfort with patient interactions. 27
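To make the normalized usability score easier to interpret, the sketch below shows one common way a Likert rating can be mapped to and from a 0–1 range (min-max scaling). This is an assumption for illustration only: the review does not state how Afzal et al normalized their scores or how many points their scale had, so the 5-point scale used here is hypothetical.

```python
def normalize(score, low, high):
    """Min-max scale a raw Likert score to the 0-1 range."""
    return (score - low) / (high - low)


def denormalize(value, low, high):
    """Map a 0-1 normalized value back onto the raw Likert scale."""
    return low + value * (high - low)


# ASSUMPTION: a 1-5 scale, chosen only to illustrate the conversion.
raw_equivalent = denormalize(0.65, low=1, high=5)
print(round(raw_equivalent, 2))  # 3.6
```

Under that assumed scale, a normalized score of 0.65 would sit a little above the midpoint, which is consistent with the description of the model as functional but limited.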

Medical Writing

Medical writing was frequently cited as an area of use and an area of concern. Uses of the technology in this domain included creative writing assistance, research manuscripts, and grant writing as well as support in writing medical notes.10,22–24,28,30,41,46–48 Breeding et al and Ho et al displayed the value of utilizing ChatGPT to perform medical writing.10,48 Masters clearly defined the ways that ChatGPT-3.5 can hallucinate in providing references. 47 Periaysamy et al recommended requiring references for all written materials in UME to utilize this drawback of ChatGPT to prevent misuse 28 while Arif et al recommended the implementation of surveillance systems for generative AI use. 30 Abd-alrazaq et al focused on providing education to students and educators on the use of generative AI as a tool in medical writing and learning. 41

Discussion

The creation, utilization, and ethical dilemmas surrounding the implementation of generative AI in UME are areas of growing interest as AI tools continue to improve efficiency in other fields and expand their footprint in medical practice. 49 We identified 5 domains in the existing literature for using generative AI in UME: nonclinical learning assistant, content developer, clinical decision-making tutor, virtual patient, and medical writing. Much of the literature was an immediate response to the wide availability of generative AI tools; it focused on 1 or more of these domains and lacked specific, quantitative outcomes of the implementation of these tools.21,23,27 However, the ideas put forth in these articles can provide a starting point for educators looking to innovate in the classroom and prepare students for a medical field that increasingly embraces AI tools. 50

The strongest evidence at this time points toward utilizing LLMs customized specifically for UME,31,33,43–45 rather than adapting models developed for general public use. However, developing customized models is resource-intensive, and such efforts are often outpaced by privately developed tools from OpenAI, Microsoft, and Google. This suggests that educators and learners need effective ways to teach about and utilize these more general tools for their purposes. Establishing curricula based on evidence-based teaching practices for improving student engagement and understanding of these tools and their limitations in a medical context will become essential.

For students, descriptions of generative AI tools have shown that the tools can provide immediate feedback and insight into students' learning as they create a complete differential diagnosis, identify knowledge gaps, or simulate different clinical situations.31,33,43–45 Many authors make the case that using generative AI can provide personalized insight into cognitive or practical mistakes that the learner makes during an activity and provide immediate feedback.16,22,23,32,33,36,43 This has particular advantages as the tools can potentially operate independently of educators, making this form of tutoring feasible at scale without significantly increasing personnel requirements. However, it is unclear how eager learners are to engage with new AI-based technologies. 51 These findings indicate that clear, evidence-based curricula are needed to provide a scaffolded means for students to interact with LLMs and accomplish these goals.33,44,45

Utilizing generative AI has potential benefits for educators as well as students. Breeding et al provided an example of how case reports can be clearly and effectively written for medical students and laypeople, which educators can use to increase resources for classroom or independent learning. 10 Additionally, Cheung et al showed that multiple-choice questions can be generated quickly and accurately, decreasing the time educators need to spend on creating examinations, which could allow them more time to focus on students and innovation. 39 Educators looking to incorporate generative AI into their curriculum also need to consider future uses of generative AI in the healthcare field. Having students engage with these tools could enable them to understand patient experiences as well as help them recognize the tools' limitations.29,52 This basic understanding of generative AI, and AI in general, will allow future physicians to engage with patients and regulators about effective ways to use these tools to improve the healthcare system and keep patients safe.50,53

For medical educators, the use of generative AI does not come without risk of misuse. These risks led Van de Ridder et al to suggest the creation of consensus guidelines on the use of generative AI in medical education. 54 Other suggestions for mitigating misuse include the shift toward more diverse assessment styles16,40,41 or tailoring assignments toward the known limitations of LLMs so that students are forced to address, and understand, the shortfalls of these tools.28,41 As with the evolution of the EHR, these tools are likely to become ubiquitous in clinical practice so improving learner understanding of generative AI and how to utilize its capabilities will better prepare them for the physician workforce.

This review was limited by the evidence available in the literature and the rapid pace at which literature on this topic is being published. Inclusion of opinion articles, letters to the editor, and commentaries allowed for only a limited discussion of the effectiveness of adopting generative AI tools. Only 7 articles investigated generative AI using quantitative and/or qualitative methods, and no articles at the point of data extraction assessed the implementation of these tools in a classroom setting using randomized or prospective designs. At present, this article serves as an initial review of the work addressing this topic, and further reviews will be necessary as the literature evolves to better characterize and refine the themes described here.

Conclusion

As generative AI evolves and proliferates, it is likely that users and/or institutions will have to pay to use these tools, exacerbating disparities in learning environments. Ensuring equitable access to these tools will become imperative for medical schools to provide adequate learning opportunities. Despite the widespread availability of these tools, there remains a dearth of evidence assessing the effectiveness of generative AI for use in UME. Future investigations should utilize quantitative methods to assess learners’ understanding of the material and identify areas of improvement using generative AI tools. Researchers and educators should further evaluate different teaching approaches to improve learner utilization and implementation of generative AI tools for nonclinical and clinical medical training and practice.

Supplemental Material

sj-docx-1-mde-10.1177_23821205241266697 - Supplemental material for Generative AI in Undergraduate Medical Education: A Rapid Review

Supplemental material, sj-docx-1-mde-10.1177_23821205241266697 for Generative AI in Undergraduate Medical Education: A Rapid Review by Joshua Hale, Seth Alexander, Sarah Towner Wright and Kurt Gilliland in Journal of Medical Education and Curricular Development

Footnotes

Acknowledgments

We would like to thank the University of North Carolina School of Medicine for its support of this project.

Author Contributions

Josh Hale was involved in the conceptualization, methodology, investigation, formal analysis, software, visualization, writing – original draft, and writing – review and editing of this manuscript based on CRediT taxonomy.

Seth Alexander was involved in the conceptualization, methodology, investigation, formal analysis, writing – original draft, and writing – review and editing of this manuscript based on CRediT taxonomy.

Sarah Towner Wright was involved in the methodology, investigation, resources, software, writing – original draft, and writing – review and editing of this manuscript based on CRediT taxonomy.

Kurt Gilliland was involved in the conceptualization, methodology, and writing – review and editing of this manuscript based on CRediT taxonomy.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
