Abstract

Background and Purpose:

Therapeutic reasoning—the mental process of making judgments and decisions about treatment—is developed through acquisition of knowledge and application in actual or simulated experiences. Health professions education frequently uses collaborative small group work to practice therapeutic reasoning. This pilot study compared the impact of a web-based/mobile tool for collaborative case work and discussion to usual practice on student perceptions and performance on questions designed to test therapeutic knowledge and reasoning.

Methods:

In a therapeutics course that includes case-based workshops, workshop sections made up of student teams of 3 to 4 were randomly assigned to usual workshop preparation (group SOAP sheet) or to preparation using the Practice Improvement using Virtual Online Training (PIVOT) platform. PIVOT was also used in the workshop to review the case and student responses. The next week, sections crossed over to the other condition. Students rated their favorability toward the preparatory and in-workshop experiences and provided comments about the PIVOT platform via a survey. Student performance on examination items related to the 2 workshop topics was compared.

Results:

One hundred and eleven students (94%) completed post-workshop surveys after both workshops. The majority of students (57%) preferred using the PIVOT platform for workshop collaboration. Favorability ratings for the in-workshop experience did not change significantly from first to second study week, regardless of sequence of exposure. There was no relationship between examination item scores and the workshop platform the students were exposed to for that content (P = .29). Student responses highlighted the efficiency of working independently before collaborating as a group and the ability to see other students’ thought processes as valuable aspects of PIVOT. Students expressed frustration with the PIVOT user interface and the lack of anonymity when discussing their answers in the workshop.

Conclusion:

A web-based/mobile platform for student team collaboration on therapeutic reasoning cases discussed in small group settings yielded favorable ratings and examination performance comparable to standard approaches, and it was preferred by a majority of students. During the rapid shift to substantial online learning prompted by the COVID-19 pandemic, virtual collaboration tools like PIVOT may help health professions teachers better support groups working virtually on scaffolded therapeutic reasoning tasks.


Background and Purpose

Pharmacy students must be able to critically assess, select, and manage medication therapy.1-5 This process, called therapeutic reasoning, is influenced by one’s knowledge and experience.6-8 For novice students, knowledge primarily drives therapeutic reasoning because they lack relevant experiences to draw upon.9 Inexperienced pharmacy students can recall this explicit knowledge, but they often struggle to apply it to new clinical situations.10,11 For example, when managing a patient, a novice student will attempt to recall all therapeutic management strategies learned in the classroom, whereas an advanced student with prior experience can streamline their reasoning by focusing on relevant patient-specific factors. Students gain this necessary, practical experience during clinical encounters. These experiences can address gaps in knowledge application and strengthen a student’s therapeutic reasoning process, but there may be a benefit to introducing these skills earlier, in the classroom.12

Context-learning is one way to teach therapeutic reasoning.9 During context-learning, students practice problem-solving tasks similar to, or within, a clinical practice environment and receive instructor feedback. Students then respond to and act on instructor feedback to improve their performance. Small group, case-based learning is a commonly used example of context-learning in the classroom. Although patient cases provide some context, the clinical practice environment is largely missing from traditional case-based learning in the classroom.

Technology platforms may enhance context-learning by simulating clinical tasks and environments. Platforms that allow for learner interaction may be desirable for programs designed for distance learning and are of particular interest given the physical distancing measures enacted during the COVID-19 pandemic.13,14 One such platform is Practice Improvement using Virtual Online Training (PIVOT), a web-based and mobile application that provides case information in a simulated clinical learning environment. This program allows students to first gather, assess, and respond to patient information independently. After completing this step, students can see and comment on other students’ responses using the PIVOT discussion feature. A previous study demonstrated that PIVOT was an effective class preparation tool that increased accountability and thought transparency in pharmacy student groups.15 When studied as a collaborative learning tool among medical and pharmacy student pairs, PIVOT improved students’ self-reported knowledge, clinical reasoning, and communication skills.16

To date, the value of PIVOT has been determined primarily from student perceptions, and its effect, if any, on student performance is unknown. Therefore, this study sought to determine whether using PIVOT both to prepare for and during small group workshops improves student performance on examination questions designed to test therapeutic reasoning.

Methods

This study used a mixed methods approach to explore the student experience and the role of PIVOT within pharmacy education. This study was certified as exempt from review by the University of California, San Francisco (UCSF) Institutional Review Board (IRB # 16-21171).

Intervention: the PIVOT program

The PIVOT program provides patient case information in a manner similar to an electronic medical record, so students must gather some of the data themselves. After reviewing the information presented in the case, students respond to questions on the web portal or in the mobile application. Once students have submitted their individual responses to the questions, they can view the responses of their groupmates. Similar to common social media platforms, PIVOT allows students to “like” the responses of other students to facilitate group consensus.

Setting

This pilot study was conducted at the UCSF School of Pharmacy during the 2016 to 2017 academic year. The general demographic characteristics of students at our pharmacy school can be found at https://pharmd.ucsf.edu/about/facts-figures. Third-year pharmacy students, naïve to the intervention, participated during their required infectious diseases therapeutics course. Weekly 90-minute small group (10-12 students) clinical case-based therapeutic reasoning workshops were a required component of the course. Before each workshop, student sub-groups of 3 to 4 individuals were assigned a practice case to complete together. The expected output was a collaboratively designed therapeutic plan in the standard Subjective, Objective, Assessment, Plan (SOAP) format. The logistics of this process were left to the sub-groups, who could hold in-person meetings and/or use electronic systems such as Google Docs (Google, Inc, Mountain View, California) to collaborate. The sub-group plans were submitted at the start of each workshop and then discussed in the wider group.

Study design and implementation

This pilot study took place during the workshops held in course weeks 2 and 3 (study weeks 1 and 2). The topics of these workshops were therapeutic approaches to pneumonia and gastrointestinal tract (GI) infections, respectively. One investigator (CM) randomly assigned each of the 10 workshop sections to either typical workshop preparation (SOAP arm) or PIVOT-based workshop preparation (PIVOT arm) for the first week of the study (Figure 1). The following week, the sections crossed over to the alternative preparation method. During the first 45 minutes of each studied workshop, the facilitator led a discussion of the sub-groups’ prepared case responses via SOAP or PIVOT. In the SOAP arm, the facilitator selected 1 team to lead the group in a discussion of the preparatory plans, scribing answers on a whiteboard. In the PIVOT arm, the facilitator projected a webpage that displayed the different responses each team had submitted to the questions embedded in the PIVOT program, along with the number of likes each response received. In this way, PIVOT was used to facilitate both preparation and in-workshop discussion. In both conditions, the facilitator helped guide the group in discussing key points for the case. After the pilot study activities concluded, students were introduced to and collaborated on another case during the second half of the workshop, without using technology tools.

Figure 1. Study design.
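The section-level randomization and crossover described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the procedure the investigators used: the equal 5/5 split, the seeded `random.Random` generator, the `assign_crossover` helper, and the `Section n` labels are all hypothetical.

```python
import random

ARMS = ("SOAP", "PIVOT")


def assign_crossover(sections, seed=None):
    """Randomly allocate sections to a week-1 arm; each section switches arms in week 2.

    Assumption: half of the sections (rounded down) start with PIVOT and the rest
    start with SOAP; the study does not report the split.
    """
    rng = random.Random(seed)
    shuffled = list(sections)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    schedule = {}
    for position, section in enumerate(shuffled):
        week1 = "PIVOT" if position < half else "SOAP"
        week2 = ARMS[1 - ARMS.index(week1)]  # cross over to the other arm
        schedule[section] = {"week1": week1, "week2": week2}
    return schedule


if __name__ == "__main__":
    # Hypothetical labels for the 10 workshop sections
    sections = [f"Section {n}" for n in range(1, 11)]
    for section, arms in assign_crossover(sections, seed=2016).items():
        print(f"{section}: {arms['week1']} -> {arms['week2']}")
```

Randomizing whole sections rather than individual students keeps every team within a section on the same preparation method in a given week, which matches how the workshops were run.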

Study variables and outcomes

Student perspectives and performance were investigated to evaluate the impact of PIVOT. To collect perspectives, in the last 5 minutes of each of the 2 studied workshops, students completed an online survey that explored their favorability toward that week’s preparatory method and in-workshop experience using a 5-point Likert-type scale: very unfavorable (1), unfavorable (2), neither unfavorable nor favorable (3), favorable (4), very favorable (5). At the conclusion of study week 2, students were asked to rate which platform they preferred using a 5-point Likert-type scale: strongly prefer PIVOT, somewhat prefer PIVOT, no preference, somewhat prefer SOAP, and strongly prefer SOAP. Students also indicated how they accessed PIVOT, choosing from 3 options (mostly or exclusively mobile app, both about the same, or mostly or exclusively web portal), and how they collaborated using the SOAP approach, choosing from 3 options (exclusively online, both online and in person, or exclusively in person). Students could also provide comments in a free-response question that asked for their thoughts on the program for case-based learning in small groups.
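The coding of survey responses described above can be sketched as a lookup from each response label to its numeric code, followed by simple summaries. The label strings mirror the survey wording, but the three-way grouping of the preference item and the function names `mean_favorability` and `preference_shares` are illustrative assumptions, and any data passed to them here is hypothetical.

```python
from statistics import mean

# Favorability scale as worded in the survey (1 = very unfavorable ... 5 = very favorable)
FAVORABILITY = {
    "very unfavorable": 1,
    "unfavorable": 2,
    "neither unfavorable nor favorable": 3,
    "favorable": 4,
    "very favorable": 5,
}

# Assumed collapse of the 5-option preference item into 3 summary categories
PREFERENCE_GROUP = {
    "strongly prefer PIVOT": "prefer PIVOT",
    "somewhat prefer PIVOT": "prefer PIVOT",
    "no preference": "no preference",
    "somewhat prefer SOAP": "prefer SOAP",
    "strongly prefer SOAP": "prefer SOAP",
}


def mean_favorability(responses):
    """Mean numeric favorability for a list of response labels."""
    return mean(FAVORABILITY[r] for r in responses)


def preference_shares(responses):
    """Proportion of respondents in each collapsed preference category."""
    counts = {"prefer PIVOT": 0, "no preference": 0, "prefer SOAP": 0}
    for r in responses:
        counts[PREFERENCE_GROUP[r]] += 1
    n = len(responses)
    return {category: count / n for category, count in counts.items()}
```

For example, three hypothetical responses of "favorable", "very favorable", and "neither unfavorable nor favorable" give a mean favorability of 4. Treating Likert codes as numbers for a mean is a common descriptive convenience, but the codes are ordinal, which is one reason a rank-based test is a natural choice for comparing them.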

To characterize performance, students completed a 2-hour examination during the fourth week of the course using a secure computer-based platform (ExamSoft). Five items on the exam were related to the workshop content: 1 multiple-choice and 2 short-answer questions on pneumonia, and 2 multiple-choice questions on GI infections. Two of the authors (KG and JG), blinded to student identity, graded the short-answer questions using a standard rubric provided by the course director (CM). Scores for these items were summed by content area (pneumonia and GI infections) and analyzed to determine whether there was an association between workshop format (SOAP arm or PIVOT arm) and score on the corresponding content.
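The per-content-area scoring can be sketched as a simple aggregation. The item identifiers, point values, and helper names below are hypothetical, and the unadjusted difference in means ignores the clustering by section, subgroup, and student that the study’s mixed-effects models account for, so the sketch illustrates the arithmetic only.

```python
from statistics import mean

# Hypothetical identifiers for the five workshop-related exam items
ITEM_AREA = {
    "pna_mc1": "pneumonia",
    "pna_sa1": "pneumonia",
    "pna_sa2": "pneumonia",
    "gi_mc1": "GI infections",
    "gi_mc2": "GI infections",
}


def area_totals(item_scores):
    """Sum one student's item scores within each content area."""
    totals = {}
    for item, score in item_scores.items():
        area = ITEM_AREA[item]
        totals[area] = totals.get(area, 0) + score
    return totals


def unadjusted_mean_difference(students, area):
    """Mean area total of SOAP-exposed minus PIVOT-exposed students (no clustering)."""
    scores = {"SOAP": [], "PIVOT": []}
    for student in students:
        # Under the crossover, a student's arm depends on the content area
        arm = student["arm_by_area"][area]
        scores[arm].append(area_totals(student["items"])[area])
    return mean(scores["SOAP"]) - mean(scores["PIVOT"])
```

Because each student saw SOAP for one topic and PIVOT for the other, `arm_by_area` records the arm per content area rather than a single arm per student.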

Analysis and statistical methods

Descriptive statistics were used to summarize survey responses. Stata SE version 15 (StataCorp, College Station, TX) was used for quantitative analysis. Mixed-effects regression models, clustered by workshop section, student subgroup within workshop section, and student, were used to estimate the effects of workshop format (SOAP or PIVOT) on student favorability for that week and on examination performance for the items related to the workshop content. The Wilcoxon rank-sum test was used to analyze student ratings of teaching methods by platform. A significance level of .05 was established a priori for all statistical tests.
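The Wilcoxon rank-sum test mentioned above can be sketched in the standard library for readers who want to see the computation. This is a minimal sketch, not the routine Stata uses: it assumes the normal approximation with a tie-corrected variance and no continuity correction, and `rank_with_ties` and `wilcoxon_rank_sum` are illustrative names.

```python
import math


def rank_with_ties(values):
    """Return 1-based ranks, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1  # mean of ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    return ranks


def wilcoxon_rank_sum(x, y):
    """Two-sided rank-sum test via the normal approximation with tie correction.

    Returns (W, z, p), where W is the rank sum of sample x.
    """
    n1, n2 = len(x), len(y)
    n = n1 + n2
    combined = list(x) + list(y)
    ranks = rank_with_ties(combined)
    w = sum(ranks[:n1])
    expected = n1 * (n + 1) / 2
    # Tie correction: sum of (t^3 - t) over each group of t tied values
    counts = {}
    for v in combined:
        counts[v] = counts.get(v, 0) + 1
    tie_term = sum(t ** 3 - t for t in counts.values())
    variance = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if variance == 0:
        raise ValueError("all observations are tied; the test is undefined")
    z = (w - expected) / math.sqrt(variance)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p from the standard normal
    return w, z, p
```

For Likert-type ratings, `x` and `y` would be the 1 to 5 codes for the two platforms; such data contain many ties, which is why the tie correction matters.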

Free-response comments were analyzed in Dedoose version 7.0.23 (SocioCultural Research Consultants, Los Angeles, CA). Two of the investigators (KG and TB) used grounded theory as a framework to develop an understanding of student preferences for case-based, small group learning using technology.17 One investigator (KG) served as a workshop facilitator in this course. The second investigator (TB) did not teach in the course but understood its goals and activities through her administrative role at the UCSF School of Pharmacy. Both were familiar with the PIVOT platform and drew on their personal experiences as pharmacy educators to guide interpretation of the data. They first coded all the data independently and then met to discuss codes and identify overarching themes.

Results

All 119 students enrolled in the course participated in the learning activities. Of these, 111 (94%) completed post-workshop surveys for study weeks 1 and 2. Mean scores for favorability of the preparatory and in-workshop experiences ranged from 3.29 to 3.98 (Table 1). For both SOAP and PIVOT arms, ratings numerically decreased from the first to the second study week. The mixed-effects regression models for student perception of the preparatory experience found that the decrease in favorability ratings between study weeks 1 and 2 was statistically significant for both the PIVOT-first group (−0.68, 95% CI −1.05 to −0.32, P = .003) and the SOAP-first group (−0.41, 95% CI −0.83 to −0.01, P = .04), with a greater decrease in the PIVOT-first group (P = .024 for interaction). This was not seen for ratings of the in-workshop experience, where the change in favorability ratings from the first to the second study week was not statistically significant (PIVOT to SOAP: −0.09, 95% CI −0.44 to 0.27, P = .61; SOAP to PIVOT: −0.11, 95% CI −0.48 to 0.26, P = .67) and was similar regardless of sequence of exposure (P = .54 for interaction).

Table 1. Student favorability ratings of preparation and workshop experience by preparatory method.a

Students rated favorability on a 5-point scale: 1 = very unfavorable; 5 = very favorable.

Most students (95%) reported mostly or exclusively using PIVOT via the web portal, and most (78%) reported collaborating exclusively online (eg, through Google Docs) when preparing their SOAP note. When asked to rate their preference for PIVOT versus SOAP, a majority (57%) of students indicated a preference for using PIVOT to collaborate on cases in a small group (Table 2). Preference did not differ significantly by sequence of exposure to PIVOT or SOAP (P = .43).

Table 2. Student preference for and approaches to different preparatory methods.

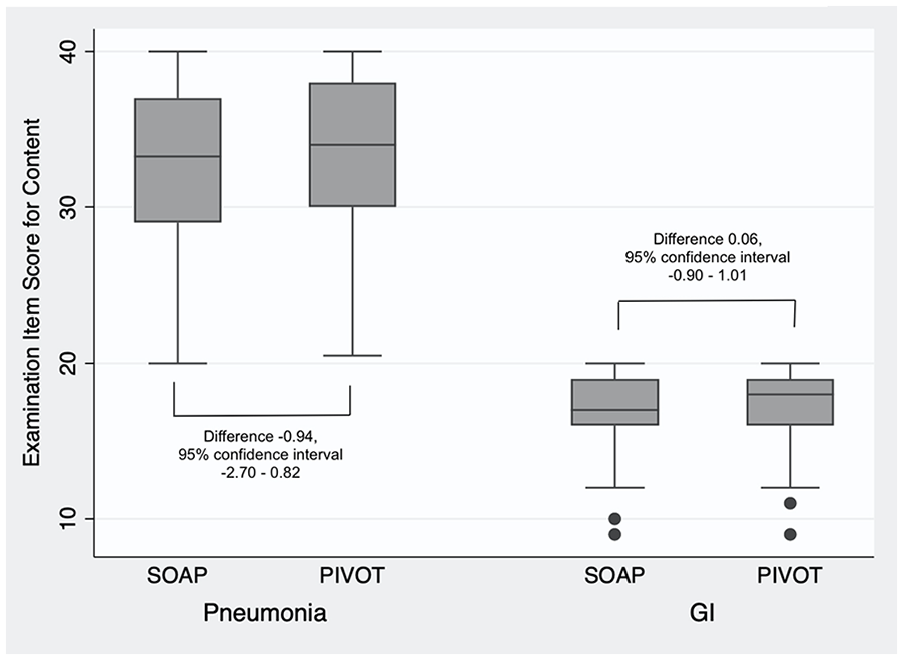

With regard to performance, mean total examination scores on questions related to the study workshop topics were similar between students exposed to SOAP and those exposed to PIVOT for pneumonia (32.6 vs 33.6, mean difference −0.94, 95% confidence interval −2.70 to 0.82, P = .18) and for GI infections (17.1 vs 17.2, mean difference 0.06, 95% confidence interval −0.90 to 1.01, P = .93) (Figure 2).

Figure 2. Examination item scores for related content by instructional type.

Four themes were identified from the student comments: thought transparency, ability to work independently before collaborating as a group, frustration with the user interface, and lack of anonymity (Table 3). These themes represented both positive and negative aspects of PIVOT compared to the SOAP preparation method.

Table 3. Representative quotes for each theme.

Discussion

While this study did not detect a significant difference in student performance with PIVOT as a preparatory tool, a majority of students preferred using PIVOT to collaborate on cases in small groups. This pilot study adds a large-scale evaluation (N = 119 pharmacy students) of student perspectives on using PIVOT both to prepare for and during small group therapeutics workshops. These findings augment previous results describing student perspectives on using PIVOT to prepare for large group discussions of cardiovascular cases (N = 110 pharmacy students) and interprofessional student experiences with high- and low-guidance case prompts delivered via PIVOT for an immunology topic (N = 16 medical/pharmacy student pairs).15,16

With regard to student perspectives, our results align with those of a prior study,15 in which students generally described positive experiences using PIVOT. Students highlighted the benefit of working independently before collaborating as a group, which promoted both independent learning and accountability. Students also described seeing the therapeutic reasoning processes of their group members through the PIVOT program as valuable. Because inexperienced students often rely on instructors to cue their learning, this thought transparency may serve as an important scaffold.18 Enabling visualization of the thought processes of peers and instructors may be especially important during distance learning, when remote interactions may be less rich than in-person ones.

One aspect of PIVOT that students viewed negatively was its perceived lack of efficiency. For example, some students commented that obtaining information through PIVOT took more time than when the information was provided to them directly in the SOAP format. Previous studies of pharmacy students report similar frustrations with health simulation technologies.15,19-21 For example, one study reported that fewer than 30% of pharmacy students felt a computer simulation program was helpful for applying knowledge in a timely manner or developing a therapy plan, despite an improvement in student performance with more frequent use of the program.20 Because information seeking is a realistic feature of using electronic health records, and because using electronic health records optimally is increasingly a skill needed by pharmacy graduates,4,22 this frustration should likely be discussed openly during case preparation and debriefing. Students should also be made aware that learning therapeutic reasoning in a realistic information-gathering setting can promote stronger context-learning and therefore may better prepare them for pharmacy practice.9,23

Several students also expressed displeasure with the non-anonymous postings in PIVOT, where students could see and “upvote” the responses of their colleagues. This is consistent with a systematic review of peer feedback in medical education, which suggested that many students feel uncomfortable providing identifiable feedback to their peers.24 This discomfort may be a common human reaction, but it can be addressed by purposefully teaching feedback techniques before using the program.25 Because feedback is a key component of context-learning,9 discomfort with the peer feedback structure may have limited the extent of learning with PIVOT.

Finally, this paper adds an evaluation of performance on short-term assessments of knowledge and problem solving with the PIVOT platform. This study did not detect a significant difference between PIVOT and SOAP on examination items measuring therapeutic reasoning. Context-learning posits that repetition is a key factor influencing knowledge organization and retrieval,9 but students were exposed to the PIVOT platform only once before the examination. With more opportunities to practice therapeutic reasoning within PIVOT, student performance might improve. Context-learning also requires students to acknowledge and respond to feedback.9 Although students in this course were responsible for completing preparatory assignments via PIVOT, their responses to feedback in the workshop were largely self-directed and occurred outside of PIVOT. To address this, future investigations of PIVOT could have students revise their initial responses within the PIVOT platform following instructor feedback, to reinforce student responsibility in the learning process.

During the rapid shift to substantial online learning related, for example, to the COVID-19 pandemic, virtual collaboration tools like PIVOT may help health professions teachers better support groups working virtually on scaffolded therapeutic reasoning tasks. Still, these results have some limitations. Although the students in this project had not used PIVOT previously, they had completed courses in other therapeutic areas, such as cardiovascular and respiratory, that also incorporated small group case-based workshops. The composition of these small groups was not consistent across courses, so it is unlikely, but possible, that previous group norms influenced behaviors. For example, introducing PIVOT later in the curriculum may have led to more frustration because students had already established their workshop preparation approach using the SOAP format. This study did not collect specific demographic data from the cohort, so other factors not analyzed in this study may also have influenced perceptions and performance. Finally, several students commented that the PIVOT user interface needed to be improved. Specifically, the way information was displayed made it difficult for students to read their classmates’ responses, and the platform occasionally malfunctioned. The investigators plan to address these user interface issues with the PIVOT developer to improve the student experience.

Conclusion

A majority of students preferred the PIVOT platform over typical workshop preparation, and students demonstrated similar performance with either method on exam items covering the respective therapeutic content areas, the treatment of pneumonia and GI infections. The thought transparency, accountability, and authenticity encouraged by this platform may provide value for instructors and students beyond short-term assessments.

Footnotes

Acknowledgements

The authors acknowledge the contributions of Mr. Sebastian Andreatta from Kiana Analytics for development of the PIVOT program.

Funding:

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The study was supported through an internal UCSF School of Pharmacy grant (Troy C. Daniels Curricular Innovation Award). Publication made possible in part by support from the UCSF Open Access Publishing Fund.

Declaration of Conflicting Interests:

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Author Contributions

KG—conceptualization, formal analysis, investigation, project administration, original draft, review and editing. TB—conceptualization, funding acquisition, software, methodology, formal analysis, review and editing. JG—investigation, project administration, review and editing. CM—conceptualization, funding acquisition, methodology, software, formal analysis, visualization, review and editing.