Abstract

Objectives:

Fellowship program directors (FPDs) and Clinical Competency Committees (CCCs) both assess fellow performance. We examined the association of entrustment levels determined by the FPD with those of the CCC for 6 common pediatric subspecialty entrustable professional activities (EPAs), hypothesizing there would be strong correlation and minimal bias between these raters.

Methods:

The FPDs and CCCs separately assigned a level of supervision to each of their fellows for 6 common pediatric subspecialty EPAs. For each EPA, we determined the correlation between FPD and CCC assessments and calculated bias as the CCC rating minus the FPD rating, separately for programs in which the FPD was and was not a member of the CCC. We also examined the effect of program size, FPD understanding of EPAs, and subspecialty on the correlations. Data were obtained in fall 2014 and spring 2015.

Results:

A total of 1040 fellows were assessed in the fall and 1048 in the spring. In both periods and for each EPA, there was a strong correlation between FPD and CCC supervision levels (P < .001). The correlation was somewhat lower when the FPD was not a CCC member (P < .001). Overall bias in both periods was small.

Conclusions:

The correlation between FPD and CCC assignment of EPA supervision levels is strong. Although the correlation is slightly weaker when the FPD is not a CCC member, bias is small, so this difference is likely unimportant in determining fellow entrustment level. The similar performance ratings of FPDs and CCCs support the validity argument for EPAs as competency-based assessment tools.

Introduction

Pediatric fellowship program directors (FPDs) are responsible for directing the clinical and scholarly training of subspecialty fellows and ultimately verifying that fellows are competent to practice without supervision. This requires that they use assessment tools that appropriately measure trainee clinical performance, professionalism, and scholarly activity. 1

The creation of the pediatric milestones promoted more consistent competency-based assessments. 2 The milestones are narrative descriptions of behaviors that focus on specific competencies and follow a developmental continuum, beginning with the novice learner and progressing through advanced beginner, competent, and proficient to, finally, the expert learner.3,4

Entrustable professional activities (EPAs) complement the milestones in assessing trainees.5-7 They provide a context for the competencies and milestones by representing tasks that a practicing physician would be expected to perform without supervision. Whereas the milestones are based on competencies or abilities and describe behaviors, EPAs focus on integrating competencies to perform tasks. In addition, a trainee's ability to perform an EPA is expressed as the amount of supervision needed, ranging from direct to indirect to none. 8

In pediatrics, EPAs have been developed for trainees in both general and subspecialty pediatrics.9-11 Seven EPAs are common to all pediatric subspecialists; 5 of these also apply to all general pediatricians, and 2 are specific to pediatric subspecialists. These EPAs (and their abbreviations) are listed in Table 1. A level of supervision scale has also been created for each EPA (Table 1). 12

Table 1. The 7 common pediatric subspecialty EPAs, their abbreviations, and their level of supervision scales.

Abbreviation: EPA, entrustable professional activity.

Not evaluated in this study.

The Accreditation Council for Graduate Medical Education (ACGME) requires that all ACGME-accredited training programs in the United States have Clinical Competency Committees (CCCs) to evaluate trainee performance, assign milestones, and ensure that milestone ratings are reported to the ACGME twice annually.13,14 Clinical Competency Committees must include at least 3 faculty members, and the FPD may or may not be a member. In residency programs, CCCs assimilate assessments from multiple sources to evaluate the performance of their trainees. However, most fellowships have fewer trainees and rotations than residency programs, giving faculty more longitudinal experience with each trainee. As a result, CCC members may need less supplemental information to assign milestones and the required level of supervision leading to entrustment for their trainees. In addition, it is unknown whether FPDs and CCCs agree about the level of supervision their fellows require and whether FPD membership on the CCC introduces meaningful bias.

We examined the association of trainee level of supervision determined by the FPD with that of the CCC for 6 of the 7 EPAs common to all pediatric subspecialties. We hypothesized that there would be a strong correlation and minimal bias between their judgments of the level of supervision required for their fellows.

Methods

The study was conducted by the Subspecialty Pediatrics Investigator Network (SPIN), a medical education research network. 15 Briefly, SPIN is a collaboration between the Council of Pediatric Subspecialties, the American Board of Pediatrics (ABP), the Association of Pediatric Program Directors Fellowship Executive Committee, and the Association of Pediatric Program Directors Longitudinal Educational Assessment Research Network (APPD LEARN). 16 It also includes up to 2 representatives from each of the 14 pediatric subspecialties that have ABP certification. The subspecialty representatives are responsible for recruiting programs within their subspecialty and, in this study, the goal was to recruit at least 20% of programs in each subspecialty. Institutional review board approval was obtained from each participating institution, as well as from the University of Utah, the lead site.

One week before the CCC meeting, each FPD was asked to assign a level of supervision to each fellow in the program for 6 of the 7 common pediatric subspecialty EPAs (Table 1). The Scholarship EPA was not included because it had not been fully developed at the time the study was conducted. 17 Then, at the fellowship’s CCC meeting, members assigned a level of supervision to each fellow for each of the EPAs. Neither the FPD nor the CCC was given specific instructions about how to determine fellow ratings. In addition, the FPD's previously completed assessments were not available to the other CCC members. Validity evidence for the level of supervision scales has been previously published. 12 These 5-point scales are based on direct, indirect, and no supervision of the fellow, with case complexity a variable in determining the need for supervision in some of the scales (Table 1).

Anonymity of trainees was ensured by creating a unique participant identifier number using an algorithm previously developed by APPD LEARN. 16 Once this ID was created, specific links to the data collection instruments were provided. There was no option to select a value between 2 levels of supervision and no centralized faculty development was provided to either the FPD or members of the CCC. Details about the data collection tools have been previously described. 12 Data were obtained in fall 2014 and spring 2015.

Additional data collected included the subspecialty, the dates when the FPD and CCC assigned their ratings, whether the FPD was a member of the CCC, number of years as an FPD, the number of fellows in the program, and the trainee’s year in fellowship. FPDs were also asked to self-rate their understanding of EPAs on a 4-point scale (unfamiliar, basic, in-depth, expert).

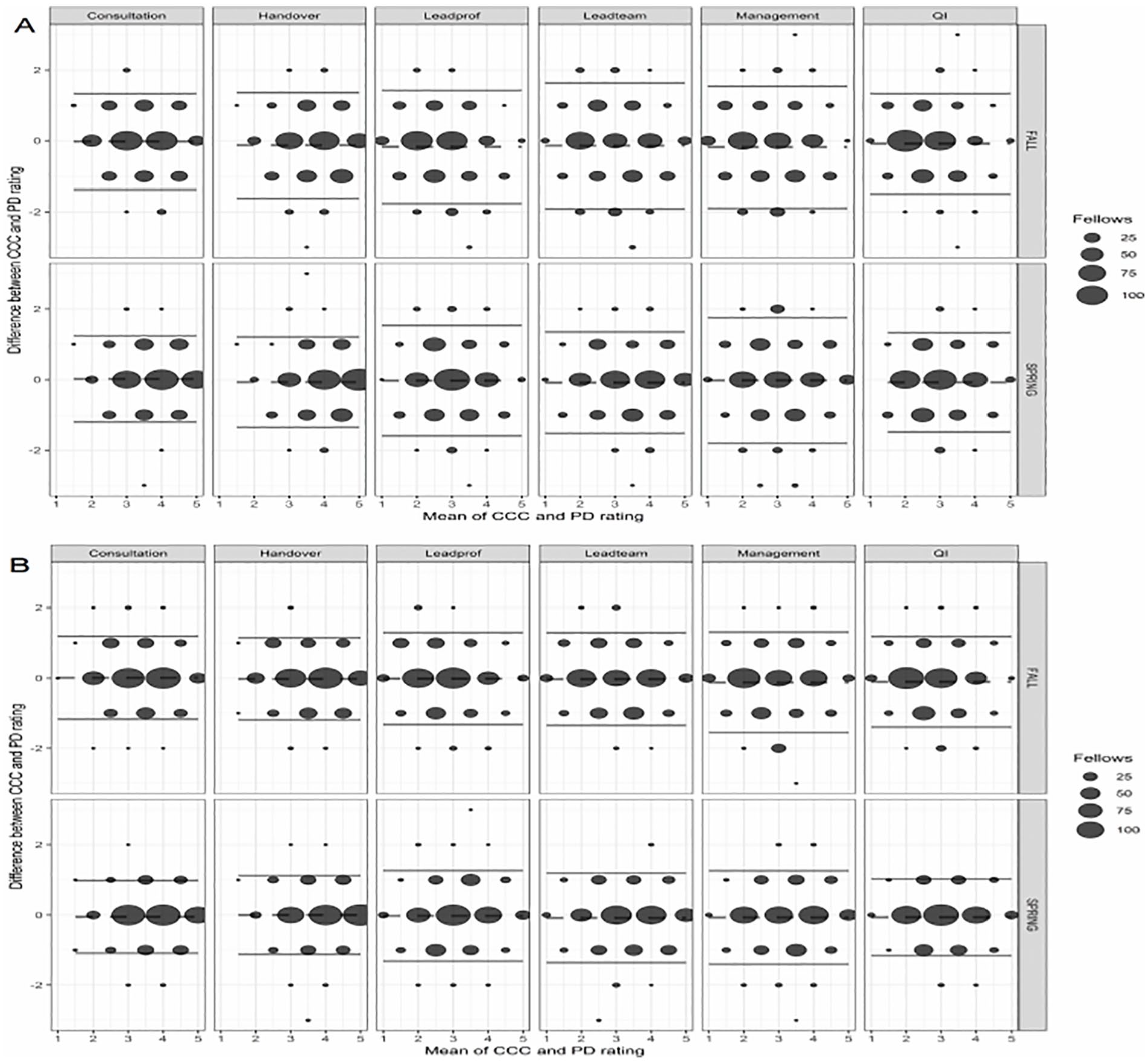

For each EPA, correlations between FPD and CCC assessments were analyzed with Spearman rho, and bias was calculated as the difference between CCC and FPD ratings. Small programs were defined as those having 9 or fewer fellows. The contribution of program size, FPD membership on the CCC, and FPD understanding of EPAs to correlation and bias was examined using a series of linear mixed models controlling for clustering within programs. To investigate whether bias differed by program size category or data collection round, we fitted a linear mixed model for each EPA with random intercepts for learner and program and fixed effects of rater (FPD versus CCC), program size, data collection round, and all 2- and 3-way interactions among the fixed effects. The main effect of rater represents overall bias; interactions with rater represent differences in bias by program size, data collection round, or both jointly. We developed Bland-Altman plots to represent bias (CCC minus FPD ratings) visually.
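The two unadjusted per-EPA statistics named above can be sketched in plain Python as follows, assuming each EPA's data arrive as two aligned lists of integer supervision levels (1 to 5) for the same fellows. The function names are hypothetical and the study does not state what software it used; this sketch computes only Spearman rho and mean bias (CCC minus FPD), and does not reproduce the confidence intervals or the linear mixed models that account for clustering within programs.

```python
from statistics import mean


def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of the ranks they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend `end` across a run of tied values.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1  # mean of ranks start+1 .. end+1
        for pos in range(start, end + 1):
            ranks[order[pos]] = shared_rank
        start = end + 1
    return ranks


def pearson(x, y):
    """Pearson correlation; raises ZeroDivisionError if either input is constant."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def spearman_rho(fpd, ccc):
    """Spearman rho: Pearson correlation of the tie-averaged ranks."""
    return pearson(average_ranks(fpd), average_ranks(ccc))


def mean_bias(ccc, fpd):
    """Bias as defined in the text: mean of (CCC rating - FPD rating)."""
    return mean(c - f for c, f in zip(ccc, fpd))
```

Averaging ranks over ties matters here because a 5-point scale produces many tied ratings. Where SciPy is available, `scipy.stats.spearmanr`, which also assigns average ranks to ties, would be the usual choice.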

To investigate whether the association between FPD and CCC ratings varied by CCC composition (including FPD or not), years of FPD experience, or FPD self-rated understanding of EPAs, we fitted linear mixed models to the FPD scores for each EPA with random intercepts for programs, and fixed effects of the CCC score for the same learner on the same EPA, a single predictor of interest (eg, CCC composition), the interaction between CCC score and the predictor of interest, and data collection round. Data are reported as mean (95% confidence interval [CI]) except as noted.
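One way to write the association model described above, in our own notation rather than the authors', is shown below for rating $i$ (one fellow in one round) in program $j$, with a single predictor of interest $X$ (for example, an indicator that the CCC includes the FPD). The normal distributions for the random program intercept and the residual are the usual linear mixed model assumptions and are not stated in the original text.

```latex
\mathrm{FPD}_{ij} = \beta_0 + \beta_1\,\mathrm{CCC}_{ij} + \beta_2\,X_{ij}
  + \beta_3\,(\mathrm{CCC}_{ij} \times X_{ij}) + \beta_4\,\mathrm{Round}_{ij}
  + u_j + \varepsilon_{ij},
\qquad u_j \sim \mathcal{N}(0, \sigma_u^2), \quad
\varepsilon_{ij} \sim \mathcal{N}(0, \sigma^2)
```

For a binary $X$, the slope relating FPD to CCC ratings is $\beta_1$ when $X = 0$ and $\beta_1 + \beta_3$ when $X = 1$, so the interaction coefficient $\beta_3$ is the term that tests whether the FPD/CCC association varies with the predictor.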

Results

Study participation

A total of 1040 fellows were assessed in the fall and 1048 in the spring. Data were submitted from 78 and 82 institutions and 209 and 212 programs in the fall and spring, respectively. In both periods, 79% (11/14) of subspecialties met the prescribed goal of having 20% of their programs contribute data. Fellowship program directors were members of the CCC for 57.5% (598/1040) of ratings in the first data collection period and 55.7% (584/1048) in the second. Overall, FPDs completed their assessments a median of 7 [interquartile range: 1-10] days before the CCC meeting. The interval was somewhat shorter in the fall (6 [1-9] days) than in the spring (7 [1-11] days; P < .001).

Correlation between FPD and CCC

The average correlation between FPD and CCC ratings was 0.76 (95% CI: 0.72-0.79) in the fall and 0.79 (0.76-0.82) in the spring. Correlations ranged from a low of 0.70 (0.66-0.74) for the QI EPA when the FPD was on the CCC in the fall to a high of 0.82 (0.79-0.84) for the Consultation EPA when the FPD was on the CCC in the spring (Table 2). In both rounds and for all 6 EPAs, there was a significant association between FPD and CCC assessments (P < .001). However, in both periods and for all EPAs, the associations were stronger when the FPD was a member of the CCC than when the FPD was not (P < .02 for each EPA). Nonetheless, the effect of FPD membership on the CCC on fellow ratings was small: for the 6 EPAs, assessments were on average 0.07 to 0.15 points higher when the CCC included the FPD.

Table 2. Correlation and bias (mean [95% CI]) for when the FPD is or is not a member of the CCC.

Abbreviations: CCC, Clinical Competency Committees; CI, confidence interval; EPA, entrustable professional activity; FPD, fellowship program directors.

P < .05 versus the null hypothesis of no difference in means between CCC and FPD. All correlations were significantly different from 0 (P < .05).

Bias

Overall bias (CCC assessments minus those of the FPD) across all EPAs in the fall and spring was −0.08 (−0.11 to −0.05) and −0.04 (−0.07 to 0.02), respectively. Fellowship program directors had higher ratings than CCCs on average in the fall but similar ratings in the spring. Table 2 shows bias for each EPA by round and by whether the FPD was a member of the CCC. Biases for 2 EPAs (Management and Leadprof) differed significantly between the spring and the fall, reflecting higher fall ratings by FPDs than by CCCs (P < .05). In 3 of the 6 EPAs (Handover, Leadteam, and Leadprof), bias differed significantly depending on CCC membership, reflecting lower ratings by FPDs than by CCCs when the FPD was not on the CCC (P < .05); for these EPAs, bias differed by 0.11, 0.11, and 0.16 points, respectively, between CCCs that did and did not include the FPD. As shown in the Bland-Altman plots (Figure 1), bias was similar across low and high ratings and symmetric around the mean.

Figure 1. Bland-Altman plots showing bias (CCC minus FPD ratings) for the 6 EPAs in fall 2014 and spring 2015. The mean difference is indicated by the dashed line and the mean difference ± 2 standard deviations by the solid lines. (A) Ratings when the FPD is not a member of the CCC and (B) ratings when the FPD is a member of the CCC. Larger dots indicate a greater number of observations. CCC indicates Clinical Competency Committees; FPD, fellowship program directors.
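The quantities plotted in the Bland-Altman figure can be sketched in Python as follows, assuming paired integer ratings for the same fellows on one EPA. The function name and the `k = 2.0` default (matching the caption's 2 standard deviations; 1.96 is also common) are our choices, the sketch treats rating pairs as independent rather than clustered within programs, and plotting itself is left out.

```python
from collections import Counter
from statistics import mean, stdev


def bland_altman(ccc, fpd, k=2.0):
    """Summarize agreement between paired CCC and FPD ratings.

    Returns the mean difference (CCC minus FPD), the limits
    mean difference +/- k standard deviations, and a Counter of how many
    fellows fall on each (average, difference) point. Integer ratings make
    many points coincide, so these counts can set the dot size.
    """
    if len(ccc) != len(fpd) or len(ccc) < 2:
        raise ValueError("need at least 2 paired ratings of equal length")
    diffs = [c - f for c, f in zip(ccc, fpd)]       # y-axis: CCC minus FPD
    avgs = [(c + f) / 2 for c, f in zip(ccc, fpd)]  # x-axis: mean of the two raters
    mean_diff = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    limits = (mean_diff - k * sd, mean_diff + k * sd)
    point_counts = Counter(zip(avgs, diffs))
    return mean_diff, limits, point_counts
```

With hypothetical ratings for four fellows, `bland_altman([3, 3, 4, 2], [3, 3, 3, 2])` returns a mean difference of 0.25 and limits of −0.75 and 1.25, and counts the point (3.0, 0) twice.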

Small versus large fellowship programs

In the fall, data were submitted for 742 fellows in small programs (⩽9 fellows) and 298 fellows in large programs. The categorization of “small” programs as those with 9 or fewer fellows is based on ACGME’s required program administrative support, where the greatest support is required for programs with 10 or more fellows (ACGME Program Requirements for Graduate Medical Education). The numbers of ratings were similar in the spring (741 and 302, respectively). In both periods, the association between FPD and CCC assessments of fellow level of supervision was stronger in smaller fellowships than in larger ones (P < .001). This effect of program size on correlation was observed regardless of whether the FPD was a member of the CCC. Program size was a small but significant factor in bias for only 2 of the EPAs (P < .05): for the Consultation and Handover EPAs, bias in larger programs was slightly greater than in smaller ones.

FPD understanding of EPAs and years in position

Fellowship program director understanding of EPAs had little effect on the association between FPD and CCC ratings, except for 1 EPA. In the Consultation EPA, FPD ratings were lower than those of the CCC, but only among FPDs who reported an in-depth understanding of EPAs (P < .05). Fellowship program director understanding of EPAs did not vary by subspecialty (P > .05). Similarly, years as FPD had little effect on the FPD/CCC associations. The only difference observed was in the Handover EPA, in which FPDs who had been in their position for 4 to 7 years rated their fellows slightly higher relative to the CCC than did those who had been in the position for fewer than 2 years (P < .05).

Specialty-specific results

Figure 2 shows the correlations between the FPD and CCC ratings by subspecialty for the 6 EPAs. Most of the correlations were 0.70 or greater. There were significant differences in the FPD/CCC agreement by subspecialty for each EPA (P < .001); these were observed when the FPD was and was not a member of the CCC. Correlations ranged from a low of 0.56 for gastroenterology (n = 40) in the Leadprof EPA to a high of 0.91 for nephrology (n = 34) in the Management EPA, both with the FPD on the CCC.

Figure 2. Correlation of level of supervision ratings (mean and 95% CI) between fellowship program directors (FPDs) and Clinical Competency Committees (CCCs) by subspecialty for when the FPD is a member of the CCC. CI indicates confidence interval.

Discussion

In this study, we examined how well FPDs and CCCs agree when assessing fellow performance on 6 of the common pediatric subspecialty EPAs, using scales with substantial validity evidence. As hypothesized, there was a strong correlation between CCC and FPD ratings of the level of supervision required for fellows. With few exceptions, strong associations were observed across all 14 pediatric subspecialties.

The FPD has ultimate responsibility for reporting fellow performance to the accreditation and certification regulatory bodies. However, the ACGME mandates that CCCs assess fellow performance during training using the Pediatric Milestones and advise the FPD about advancing a trainee to the next level (ACGME Program Requirements for Graduate Medical Education). 18 At the same time, the ABP requires the FPD to verify the ability of fellows to function without supervision at the completion of their training. 19 The strong correlation between CCC and FPD ratings of fellow level of supervision suggests that current reporting of trainee performance to the ACGME and the ABP is congruent. In contrast to residency programs, most pediatric fellowship programs are relatively small. This smaller size, together with the opportunity for longitudinal observation over 3 years, likely contributes to the high correlation. Indeed, the stronger associations between FPD and CCC assessments of fellow level of supervision in smaller fellowship programs support this reasoning. Interestingly, lack of familiarity with EPAs and shorter tenure as FPD did not significantly diminish the association. Perhaps this is because identifying the appropriate level of supervision for a fellow is inherent to what supervisors do in practice and therefore requires less experience or formal training.

Fellowship program directors were members of the CCC for just more than half of the ratings (57.5% in the fall and 55.7% in the spring). Given the strong correlation between FPD and CCC ratings of level of supervision, it was particularly important to ensure that including the FPD on the CCC did not bias ratings. When CCCs that included the FPD were compared with those that did not, bias differed significantly in only 3 of the 6 EPAs. The difference, which was less than 0.2 rating points, is inconsequential given that the supervision scales require faculty to assign whole numbers between 1 and 5. Precision did not differ significantly between the lower and higher ends of the supervision scale. Including FPDs in CCCs, therefore, does not appear to unduly influence entrustment decisions. This lack of meaningful bias also indicates that, when the CCC does not include the FPD, the assigned entrustment level is still reliable.

In a previous study in which level of supervision scales were created for each EPA, we presented validity evidence using the framework advocated by Messick, Downing, and Cook and Beckman. 12,20-22 This study provides additional validity evidence for the internal structure of these scales. The correlation between FPDs and CCCs when the FPD was not a member of the CCC was high, indicating good inter-rater reliability. Although correlations differed somewhat among the subspecialties, the differences were generally small.

The strengths of this investigation include its large sample size and good representation from all pediatric subspecialties. In addition, data were collected at 2 different time periods. Nonetheless, there are some limitations. This study did not include the Scholarship EPA or the subspecialty-specific EPAs, which may limit generalizability. An investigation evaluating these EPAs is currently underway. 23 Aside from the presence or absence of the FPD on the CCC, we did not have data on the specific composition of the CCC at participating programs. We also did not have information about the factors the FPD and CCC considered in making their level of supervision ratings or about the role of the FPD within the CCC, which may have varied across institutions. These questions may warrant future study.

In pediatric fellowships, which are characterized by close, longitudinal interaction between faculty and fellows, there is a strong correlation between FPDs and CCCs in determining the fellow’s required level of supervision for 6 of the pediatric subspecialty common EPAs. Although FPDs and CCCs have different functions, they each reach similar judgments of fellow performance, providing support for the argument that EPAs have an important role in competency-based assessment, an essential element of competency-based training.

Footnotes

Acknowledgements

The authors sincerely thank Alma Ramirez, BS and Beth King, MPP for their assistance with this project.

Funding:

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The American Board of Pediatrics Foundation provided financial support for this project. It had no role in study design, data analysis and interpretation, or writing of the report.

Declaration of conflicting interests:

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Author Contributions

RM contributed to the conception and design of the study, acquisition of data, analysis and interpretation of the data and writing and editing of the manuscript.

BH contributed to the conception and design of the study, interpretation of the data and review/editing of the manuscript.

CC contributed to the conception and design of the study, interpretation of the data and review/editing of the manuscript.

TA contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

JB contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

PC contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

JF contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

CS contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

DS contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

PW contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

MC contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

CD contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

PH contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

DH contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

JK contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

JM contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

KMG contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

AM contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

SP contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

DT contributed to the conception and design of the study, acquisition and interpretation of the data and review/editing of the manuscript.

AS contributed to the conception and design of the study, acquisition of data, analysis and interpretation of the data and reviewing/editing of the manuscript.

All authors contributed to the drafting and critical revision of the paper and approved the final manuscript for submission.

Collaborators

Advocate Lutheran General Hospital: Vinod Havalad; Albany Medical Center: Joaquim Pinheiro; Albert Einstein College of Medicine/Montefiore Medical Center: Elizabeth Alderman, Mamta Fuloria, Megan E. McCabe, Jay Mehta, Yolanda Rivas, Maris Rosenberg; Baylor College of Medicine: Cara Doughty, Albert Hergenroeder, Arundhati Kale, YoungNa Lee-Kim, Jennifer A. Rama, Phil Steuber, Bob Voigt; Benioff Children’s Hospital Oakland/UCSF: Karen Hardy, Samantha Johnston; Boston Children’s Hospital/Children’s Hospital: Debra Boyer, Carrie Mauras, Alison Schonwald, Tanvi Sharma; Brown University/Rhode Island Hospital-Lifespan: Christine Barron, Penny Dennehy, Elizabeth S Jacobs, Jennifer Welch; Case Western Reserve University/Metro Health: Deepak Kumar; Case Western Reserve University/Rainbow Babies and Children’s Hospital: Katherine Mason, Nancy Roizen, Jerri A. Rose; Children’s National Medical Center/George Washington University: Brooke Bokor, Jennifer I Chapman, Lowell Frank, Iman Sami, Jennifer Schuette; Children’s Hospital Medical Center of Akron: Ramona E Lutes, Stephanie Savelli; Children’s Hospital of Los Angeles: Rambod Amirnovin, Rula Harb, Roberta Kato, Karen Marzan, Roshanak Monzavi, Doug Vanderbilt; Cincinnati Children’s Hospital Medical Center: Lesley Doughty, Constance McAneney, Ward Rice, Lea Widdice; Cleveland Clinic Children’s Hospital: Fran Erenberg, Blanca E Gonzalez; Duke University Medical Center: Deanna Adkins, Deanna Green, Aditee Narayan, Kyle Rehder; Eastern Virginia Medical School: Joel Clingenpeel, Suzanne Starling; Emory University School of Medicine: Heidi Eigenrauch Karpen, Kelly Rouster-Stevens; Georgia Regents University/Medical College of Georgia: Jatinder Bhatia; Indiana University Medical Center-Riley Hospital for Children: John Fuqua; Johns Hopkins University: Jennifer Anders, Maria Trent; LAC+USC Medical Center: Rangasamy Ramanathan; Loma Linda University Children’s Hospital: Yona Nicolau; Maria Fareri Children’s Hospital Westchester Medical Center/New York Medical College: Allen J. Dozor; Massachusetts General Hospital: Thomas Bernard Kinane, Takara Stanley; Mayo Clinic College of Medicine (Rochester): Amulya Nageswara Rao; McGaw Medical Center of Northwestern University/Lurie Children’s Hospital of Chicago: Meredith Bone, Lauren Camarda; Medical College of Wisconsin, Children’s Hospital of WI: Viday Heffner, Olivia Kim, Jay Nocton, Angela L Rabbitt, Richard Tower; Medical University of South Carolina: Michelle Amaya, Jennifer Jaroscak, James Kiger, Michelle Macias, Olivia Titus; Michigan State University: Modupe Awonuga; National Capital Consortium (Walter Reed): Karen Vogt, Anne Warwick; Nationwide Children’s Hospital/Ohio State University: Dan Coury, Mark Hall, Megan Letson, Melissa Rose; New York Presbyterian Hospital-Columbia campus/Morgan Stanley Children’s Hospital: Julie Glickstein, Sarah Lusman, Cindy Roskind, Karen Soren; Nicklaus Children’s Hospital-Miami Children’s Hospital: Jason Katz, Lorena Siqueira; North Shore-LIJ/Cohen Children’s Medical Center of New York: Mark Atlas, Andrew Blaufox, Beth Gottleib, David Meryash, Patricia Vuguin, Toba Weinstein; Oregon Health and Science University Hospital: Laurie Armsby, Lisa Madison, Brian Scottoline, Evan Shereck; Phoenix Children’s Hospital: Michael Henry, Patricia A. Teaford; St. Christopher’s Hospital for Children: Sarah Long, Laurie Varlotta, Alan Zubrow; Stanford University/Lucile Packard Children’s Hospital: Courtenay Barlow, Heidi Feldman, Hayley Ganz, Paul Grimm, Tzielan Lee; SUNY Upstate Medical University: Leonard B. Weiner; Thomas Jefferson University Hospital/duPont Hospital for Children Program: Zarela Molle-Rios, Nicholas Slamon; Thomas Jefferson University Hospital/duPont Hospital for Children Program/Christiana: Ursula Guillen; Tufts Medical Center: Karen Miller; UCLA Medical Center/Mattel Children’s Hospital: Myke Federman; University of Alabama Medical Center at Birmingham: Randy Cron, Wyn Hoover, Tina Simpson, Margaret Winkler; University of Arkansas for Medical Sciences: Nada Harik, Ashley Ross; University of Buffalo-Women and Children’s Hospital of Buffalo: Omar Al-Ibrahim, Frank P. Carnevale, Wayne Waz; University of California Irvine/Miller Children’s Hospital: Fayez Bany-Mohammed; University of California San Diego Medical Center, Rady Children’s Hospital: Jae H. Kim, Beth Printz; University of California San Francisco: Mike Brook, Michelle Hermiston, Erica Lawson, Sandrijn van Schaik; University of Chicago Medical Center: Alisa McQueen, Karin Vander Ploeg Booth, Melissa Tesher; University of Colorado School of Medicine/Children’s Hospital Colorado: Jennifer Barker, Sandra Friedman, Ricky Mohon, Andrew Sirotnak; University of Connecticut/Connecticut Children’s Medical Center: John Brancato, Wael N. Sayej; University of Florida College of Medicine at Jacksonville: Nizar Maraqa; University of Florida College of Medicine-J Hillis Miller Health Center: Michael Haller; University of Hawaii/Tripler Army Medical Center: Brenda Stryjewski; University of Iowa Hospitals and Clinics: Pat Brophy, Riad Rahhal, Ben Reinking, Paige Volk; University of Louisville: Kristina Bryant, Melissa Currie, Katherine Potter; University of Maryland School of Medicine: Alison Falck; University of Massachusetts Memorial Medical Center: Joel Weiner; University of Michigan Medical Center: Michele M. Carney, Barbara Felt; University of Minnesota Medical Center: Andy Barnes, Catherine M Bendel, Bryce Binstadt; University of Missouri at Kansas City/Children’s Mercy Hospital: Karina Carlson, Carol Garrison, Mary Moffatt, John Rosen, Jotishna Sharma, Kelly S. Tieves; University of Nebraska Medical Center: Hao Hsu, John Kugler, Kari Simonsen; University of New Mexico School of Medicine: Rebecca K. Fastle; University of Oklahoma Health Sciences Center: Doug Dannaway, Sowmya Krishnan, Laura McGuinn; University of Pittsburgh Medical Center/Children’s Hospital of Pittsburgh: Mark Lowe, Selma Feldman Witchel, Loreta Matheo; University of Rochester School of Medicine, Golisano Children’s Hospital: Rebecca Abell, Mary Caserta, Emily Nazarian, Susan Yussman; University of Tennessee College of Medicine/Le Bonheur Children’s Hospital: Alicia Diaz Thomas, David S Hains, Ajay J. Talati; University of Tennessee College of Medicine/St. Jude Children’s Research Hospital: Elisabeth Adderson; University of Texas Health Science Center School of Medicine at San Antonio: Nancy Kellogg, Margarita Vasquez; University of Texas Austin Dell Children’s Medical School: Coburn Allen; University of Texas Southwestern Medical School: Luc P Brion, Michael Green, Janna Journeycake, Kenneth Yen, Ray Quigley; University of Utah Medical Center: Anne Blaschke, Susan L Bratton, Christian Con Yost, Susan P Etheridge, Toni Laskey, John Pohl, Joyce Soprano; University of Virginia Medical Center: Karen Fairchild, Vicky Norwood; University of Washington/Seattle Children’s Hospital: Troy Alan Johnston, Eileen Klein, Matthew Kronman, Kabita Nanda, Lincoln Smith; University of Wisconsin School of Medicine and Public Health: David Allen, John G. Frohna, Neha Patel; Vanderbilt University Medical Center: Cristina Estrada, Geoffrey M. Fleming, Maria Gillam-Krakauer, Paul Moore; Virginia Commonwealth University Health System: Joseph Chaker El Khoury; Wake Forest University School of Medicine: Jennifer Helderman; West Virginia University: Greg Barretto; William Beaumont Hospital: Kelly Levasseur; Yale University School of Medicine: Lindsay Johnston.