Abstract

INTRODUCTION

Competency-based medical education (CBME) has transformed postgraduate medical training, prioritizing competency acquisition over traditional time-based curricula. Integral to CBME are Entrustable Professional Activities (EPAs), which aim to provide high-quality feedback for trainee development. Despite its importance, the quality of feedback within EPAs remains underexplored.

METHODS

We employed a cross-sectional study to explore feedback quality within EPAs, and to examine factors influencing length of written comments and their relationship to quality. We collected and analyzed 1163 written feedback comments using the Quality of Assessment for Learning (QuAL) score. The QuAL aims to evaluate written feedback from low-stakes workplace assessments, based on 3 quality criteria (evidence, suggestion, connection). Afterwards, we performed correlation and regression analyses to examine factors influencing feedback length and quality.

RESULTS

EPAs facilitated high-quality written feedback, with a significant proportion of comments meeting quality criteria. Task-oriented and actionable feedback was prevalent, enhancing the value of low-stakes workplace assessments. The statistical analyses showed that the type of assessment tool significantly influenced feedback length and quality, implying that direct and video observations can yield superior feedback in comparison to case-based discussions. However, no correlation between entrustment scores and feedback quality was found, suggesting potential discrepancies between the feedback and the score on the entrustability scale.

CONCLUSION

This study indicates the role of EPAs in fostering high-quality feedback within CBME. It also highlights the multifaceted dynamics of feedback, suggesting the influence of factors such as feedback length and assessment tool on feedback quality. Future research should further explore contextual factors to enhance medical education practices.

Introduction

Competency-based medical education (CBME) has brought a profound transformation in postgraduate medical education.1,2 Departing from traditional time-based curriculum approaches, CBME emphasizes trainees’ progression based on attaining competencies.3,4 The successful implementation of CBME requires incorporating new methods of assessment that emphasize assessment continuity, enabling the connection between assessment and learning activities and ensuring competency of new physicians. 5 These new assessment methods should also provide enough meaningful information stimulating trainees’ further development, and empower trainees to actively engage in their assessment process.4,6,7

To operationalize CBME, the concept of Entrustable Professional Activities (EPAs) has been introduced as a novel assessment method. 8 EPAs are concrete tasks or responsibilities at the heart of a medical profession, where trainees should demonstrate competence in order to be entrusted.9,10 Unlike competencies, EPAs reflect real-world clinical activities and offer a more intuitive framework to assessors and trainees. By delineating concrete tasks and allowing observation of performance in clinical settings, EPAs could facilitate meaningful feedback.11,12 Typically, EPAs consist of numerical entrustment scales and written feedback to support further development. 13 Through written feedback, trainees receive necessary information about their performance, identifying strengths and areas for improvement, not only within the scope of EPAs but across their broader development. 14 This iterative process is meant to enhance quality of feedback and facilitate its documentation.9,11

To promote further learning, written feedback of high-quality should be aligned with the EPA task, contain enough information on performance, and indicate areas for improvement.11,14 High-quality written feedback comments can provide valuable context to entrustment scales and performance that numerical scores alone cannot capture.15,16 Despite the indisputable significance of written feedback in learning, there is little evidence regarding feedback within EPAs. 17 Therefore, there is a need for examining whether EPAs influence the quality of feedback. This study aims to investigate the characteristics and factors that influence the quality of feedback provided within EPAs.

Methods

Organizational Setting

To establish a standardized curriculum across Flanders, 4 universities (KU Leuven, Ghent University, University of Antwerp, and Free University Brussels) have collaboratively designed a General Practitioner's (GP) Training. The GP Training lasts for 3 years and is structured into 3 phases. The first phase entails a 12-month traineeship in a GP practice, followed by a 6-month hospital traineeship in the second phase, and concluding with an 18-month traineeship in a GP practice for the third phase. Complementing clinical traineeships, trainees are required to attend classes at their respective home universities and actively participate in peer-learning groups facilitated by university tutors.

The practical coordination and decision-making responsibilities regarding this curriculum were entrusted to the Interuniversity Centre for GP Training (ICGPT). Among its responsibilities, the ICGPT is accountable for tasks such as allocating clinical traineeships, administering examinations, preparing trainers for their role, safeguarding quality of training in clinical practice, and managing trainees’ learning e-portfolios, where their competencies are assessed and recorded.

Educational Setting and Participants

In 2018, the ICGPT initiated a transition to CBME by incorporating the Canadian Medical Education Directives for Specialists (CanMEDS) roles into the curriculum. This integration prompted the stipulation of assessment guidelines for the clinical traineeships. Trainees were required to have 3 high-stakes evaluations based on the CanMEDS roles on a yearly basis, with their workplace trainer and their university-tutor, respectively. Additionally, to facilitate low-stakes workplace-based assessments, trainees were expected to document 5 video-consultations, subsequently evaluated by their trainers. An institutional web-based portfolio was developed to streamline the assessment process.

In 2022, in collaboration with the ICGPT, we introduced an EPA-based framework to enhance workplace-based assessment. 18 In total, we developed 60 EPAs covering different care contexts specific to Primary Care (ie, short-term care, chronic care, emergency care, palliative care, elderly care, care for children, mental healthcare, prevention, gender-related care, and practice management). For more complicated EPAs, we defined behavior anchors that trainees should demonstrate to be entrusted with a given EPA. An example of such an EPA under short-term care is “Clarify the need for assistance (Ideas, Concerns, Expectations), take a medical history (focused on the diagnostic landscape), conduct a targeted physical examination, and arrive at a diagnosis or a ‘working hypothesis’.” The accompanying behavior anchors of this EPA are (1) Take a medical history, focused on the tract or part thereof related to the need for assistance or complaint. (2) Conduct a physical examination. (3) Based on the above: make a diagnosis or arrive at a refined ‘working hypothesis’. (4) Decide whether additional diagnostics are needed or if treatment and policy should be continued.

Concretely, EPAs were operationalized through a form available in the e-portfolio. 18 EPAs could be assessed through direct observation, video-observation, and/or case-based discussions. Both trainees and trainers could initiate an EPA assessment by filling in the form and choosing which EPAs needed to be assessed. Then, they had to choose an entrustability level based on the Ottawa Surgical Competency Operating Room Evaluation (O-SCORE), ranging from “I had to intervene” to “Supervising others during this EPA.” 19 Moreover, 2 feedback fields were available, encouraging positive (“What goes well”) and negative feedback (“What needs improvement”). Once trainees completed an EPA form, trainers were notified in their e-portfolio accounts that an EPA assessment needed approval. We used simple random sampling to recruit our participants. 20 We recruited, on a voluntary basis, dyads of trainees who were in the first phase of the GP Training, along with their trainers. Trainees and trainers were asked through a pop-up window in their e-portfolio whether they wanted to participate in the study.

Study Design

We employed a cross-sectional design, using quantitative content analysis to assess the quality of feedback from EPA assessments, followed by a correlation analysis and a multiple regression analysis.21,22 We used the Quality of Assessment for Learning (QuAL) score to code and analyze the feedback comments, along with the number of characters as a quality proxy. 23 Prior studies have established validity evidence of the QuAL score for evaluating short feedback comments produced during workplace-based assessments.23,24 The QuAL score comprises 3 key criteria, as illustrated in Figure 1. The first component pertains to whether and to what extent the feedback provides evidence about the trainees’ performance. The second component focuses on the presence of suggestions for improvement, while the third component asks whether these suggestions are linked to the described behavior. 23

Figure 1. Structure of the Quality of Assessment for Learning (QuAL) score. 23

Data Collection and Analysis

In collaboration with the developers of the e-portfolio, we collected feedback comments registered in trainees’ e-portfolios after completion of EPA assessments. The EPAs were assessed by workplace trainers between December 2022 and September 2023. All feedback comments were anonymous to limit potential bias. Initially, we examined all feedback comments and familiarized ourselves with the content and the QuAL scoring scale. We analyzed the data per EPA assessment for each QuAL component. Two raters independently scored a pilot of 120 comments. Throughout the pilot scoring process, we maintained documentation of decisions to enhance methodological rigor. Afterwards, we compared the results and discussed and reviewed ambiguities. 25 After completion of the coding, we aggregated the scores of each QuAL component to determine overall quality. Overall quality ranged from 0 (lowest quality) to 5 (highest quality). We also calculated inter-rater reliability based on the intraclass correlation coefficient (ICC) to ensure consistency between the 2 raters. 26 We used Microsoft Excel to code and analyze the feedback comments.
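The aggregation step can be illustrated with a minimal Python sketch. The class and field names are hypothetical, and the component ranges (evidence 0 to 3, suggestion 0 or 1, connection 0 or 1) are inferred from the score combinations reported in the Results rather than taken from the coding sheet itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualScore:
    """One coded feedback comment (names are illustrative, not from the study)."""
    evidence: int    # 0-3: how much evidence about performance is given
    suggestion: int  # 0-1: is a suggestion for improvement present?
    connection: int  # 0-1: is the suggestion linked to the described behavior?

    def __post_init__(self) -> None:
        # Reject values outside the assumed component ranges.
        if not 0 <= self.evidence <= 3:
            raise ValueError("evidence must be between 0 and 3")
        if self.suggestion not in (0, 1):
            raise ValueError("suggestion must be 0 or 1")
        if self.connection not in (0, 1):
            raise ValueError("connection must be 0 or 1")

    @property
    def total(self) -> int:
        """Overall quality: sum of the three components (0 to 5)."""
        return self.evidence + self.suggestion + self.connection
```

Under these assumptions, a comment coded 3 on evidence and 1 on both suggestion and connection reaches the maximum overall quality of 5.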

Furthermore, we used non-parametric Spearman's correlation coefficients (rs), since our data violated the assumption of normality. We investigated the relationship between the total quality score on QuAL, the number of characters that each feedback comment contained, the entrustment level, and the type of assessment tool used.27,28 By doing so, we could discover possible relationships and patterns in the data. We recoded the type of assessment tool into a dummy variable (1: Video observation, 2: Direct observation, 3: Case-based discussion). Based on the results of the Spearman's correlations, we performed a hierarchical multiple regression analysis to investigate which parameters influence the number of characters in feedback comments. The number of characters served as the outcome variable. Variables demonstrating stronger correlations with this outcome, specifically total quality score and type of assessment tool, were prioritized as predictors. We opted not to include entrustment level on EPAs because of its weak correlation with the outcome variable. All statistical analyses were performed in IBM SPSS Statistics (Version 29). The level of significance was set at P < .05. The reporting of this study conforms to the Strengthening the Reporting of Observational Studies in Epidemiology (STROBE) Statement 29 (Supplemental File 1).
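For readers unfamiliar with the statistic, Spearman's rs is the Pearson correlation computed on ranks, with tied values sharing their average rank. The sketch below is a plain-Python illustration on made-up numbers; it is not the SPSS procedure used in the study, and the function and variable names are hypothetical.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # positions are 0-based, ranks 1-based
        for pos in range(start, end + 1):
            ranks[order[pos]] = mean_rank
        start = end + 1
    return ranks


def spearman_rs(x, y):
    """Spearman's r_s: Pearson correlation computed on the ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("r_s is undefined when one variable is constant")
    return cov / sqrt(var_x * var_y)


# Toy data (NOT the study data): comment length and total QuAL score.
n_characters = [120, 450, 300, 800, 60]
quality_total = [1, 4, 3, 5, 0]
print(spearman_rs(n_characters, quality_total))
```

Because only the ordering of the values matters, the coefficient is robust to the skewed distributions that are typical of comment lengths, which is the usual reason for preferring it when normality is violated.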

Results

Descriptive Statistics

In total, 199 trainees and trainers agreed to participate. We collected 1163 feedback comments from 1163 EPA assessment forms. Case-based discussions were used 419 times as an assessment tool, observation of performance via video was used 410 times, while 334 direct observations took place. The average number of characters was 400 characters per comment, the average total quality score was 3.63, and the average entrustment score was 3.62 out of 5 (Table 1).

Table 1. Descriptive statistics.

Quality of Feedback Comments

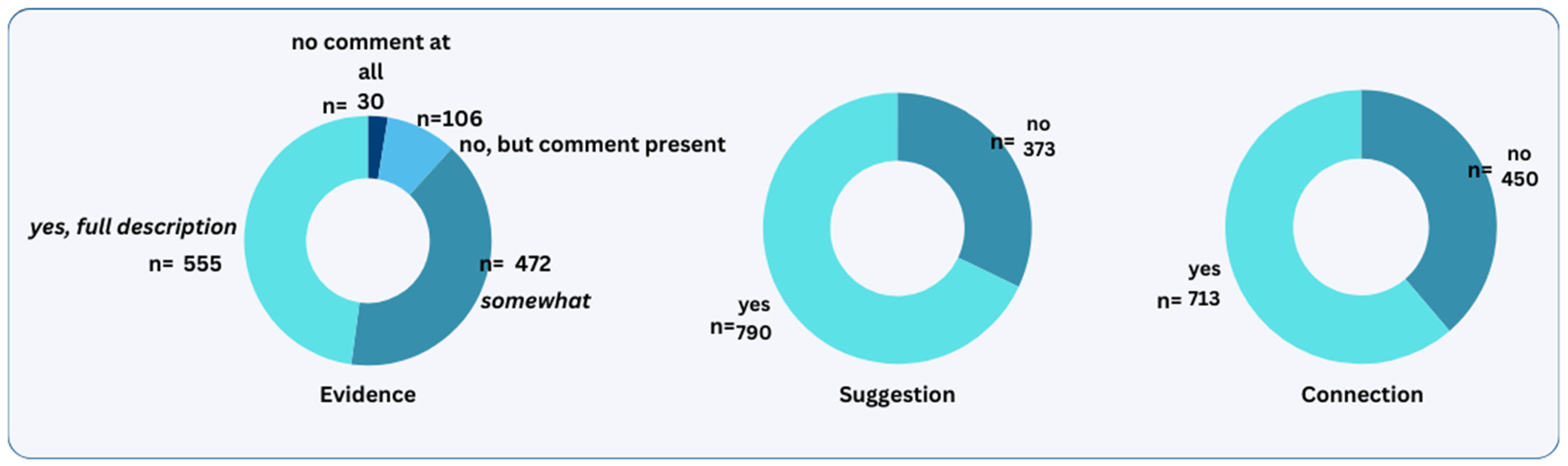

During the coding stage, the feedback comments were coded and assessed according to the QuAL criteria: evidence, suggestion, and connection. Intraclass correlation coefficients fell within an acceptable range (0.5-0.8), indicating consistency and agreement between the 2 raters (Table 2). 26 Because the ICC for connection was close to the lower bound of the acceptable range, we discussed it afterwards to resolve discrepancies. Most feedback comments scored high on evidence about trainees’ performance (n = 555), included suggestions for improvement (n = 790), and linked these suggestions to the behavior described (n = 713) (Figure 2).

Figure 2. Distribution of quality ratings of feedback comments across the QuAL scale.

Table 2. Intraclass correlation coefficients (ICC) measuring inter-rater agreement.
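To make the agreement measure concrete, the following Python sketch computes one common ICC variant from a two-way ANOVA decomposition. The text does not state which ICC form was used, so the choice of ICC(2,1) (two-way random effects, absolute agreement, single rater) is an assumption, and the function name is hypothetical.

```python
def icc_2_1(ratings):
    """ICC(2,1): two-way random effects, absolute agreement, single rater.

    ratings: list of rows, one row per comment, one column per rater.
    """
    n = len(ratings)       # number of rated comments
    k = len(ratings[0])    # number of raters
    if n < 2 or k < 2 or any(len(row) != k for row in ratings):
        raise ValueError("need at least 2 comments and 2 raters, no missing scores")

    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    # Sums of squares from a two-way ANOVA without replication.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    denominator = ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    if denominator == 0:
        raise ValueError("ICC is undefined when all ratings are identical")
    return (ms_rows - ms_error) / denominator
```

With this variant, two raters who always give identical scores obtain an ICC of 1, whereas a rater who is systematically one point stricter lowers the coefficient even when the ranking of comments is the same.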

The feedback comments were categorized into 3 distinct quality levels based on their total quality scores: low, moderate, and high. Low-quality comments received scores ranging from 0 (n = 21) to 1 (n = 96), moderate-quality comments scored between 2 (n = 163) and 3 (n = 155), while high-quality comments scored between 4 (n = 308) and 5 (n = 420).
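The band sizes and their shares follow directly from the counts above. The short Python sketch below reproduces that arithmetic; the variable names are illustrative, and the counts are the only values taken from the study.

```python
# Number of comments per total QuAL score, as reported in the text above.
counts_by_score = {0: 21, 1: 96, 2: 163, 3: 155, 4: 308, 5: 420}

# Quality bands used in the text: 0-1 low, 2-3 moderate, 4-5 high.
bands = {"low": (0, 1), "moderate": (2, 3), "high": (4, 5)}

total_comments = sum(counts_by_score.values())
band_counts = {
    name: sum(counts_by_score[s] for s in range(lo, hi + 1))
    for name, (lo, hi) in bands.items()
}
band_shares = {name: count / total_comments for name, count in band_counts.items()}
mean_score = sum(s * c for s, c in counts_by_score.items()) / total_comments

for name in bands:
    print(f"{name}: {band_counts[name]} comments ({band_shares[name]:.1%})")
print(f"mean total quality score: {mean_score:.2f}")
```

The counts sum to the 1163 collected comments, with 117 low-quality, 318 moderate-quality, and 728 high-quality comments; the high-quality share is about 62.6%, and the mean recovered from the counts is 3.63, matching the reported average total quality score.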

Feedback comments of low quality received a score of either 0 or 1 in evidence, 0 in suggestion, and 0 in connection. They either lacked any comments or contained evidence that was insufficiently clear or relevant to the assessed EPA. The following example illustrates a low-quality feedback comment given on the EPA “Clarifies the help request (Ideas, Concerns, Expectations), takes medical history (focused on diagnostic landscape), performs a targeted physical examination, arrives at a diagnosis or ‘working hypothesis’”: “communication, structure of medical history” (comment 29).

Moderate quality feedback comments received a score of 2 in evidence, or a combined score of 1 to 2 in evidence and 1 in suggestion. However, they did not link the provided suggestion to the behavior being assessed. This is an example of a feedback comment given for the same EPA as above: “[Anonymized] conducts a comprehensive medical history taking and fills in correctly (patient's) Electronic Medical Record. [Anonymized] sometimes still doubts when it comes to musculoskeletal pathology. However, [anonymized] has looked up and studied more in the meanwhile. I already notice an improvement.” (comment 65)

High-quality feedback comments received a combined score of 2 to 3 in evidence, 1 in suggestion and 1 in connection. They included evidence on trainees’ performance, had suggestions for improvements, and linked those to the assessed EPA behavior. The following feedback comment had a score of 5 on the same EPA as above: “Clear and comprehensive medical history taking with open and closed questions. Understanding of the complaint. Clear explanation about the clinical findings. Clear communication of the diagnosis. Correct and well-communicated treatment plan. Presentation of alarm symptoms and discussion of further course. Overall, very good and thorough consultation with a lot of understanding, empathy, and patience for the patient. (Improvement) Mixing open and closed questions at times. Asking useless questions with no added value for diagnosis or differential diagnosis (possibly influenced by filming oneself and thus appearing somewhat artificial?). Information (given to the patient) sometimes overly detailed, leading to the loss of the main message by the end of the consultation.” (comment 7)

Correlations

Number of characters was significantly and positively related to total quality score (rs = .344). The correlation between number of characters and entrustment score given for an EPA was also significant, but weak (rs = .079). There was also a significant correlation between number of characters and type of assessment tool used for the EPA (rs = −.237). In Table 3, the findings related to Spearman's correlations are displayed and the most important findings are discussed. Moreover, entrustment score was significantly related to the type of assessment tool used for the EPAs (rs = −.082). There was no significant correlation between total quality score and given entrustment score, rs = −.02, P = .503.

Table 3. Nonparametric correlations (Spearman's correlation coefficients). a

BCa bootstrap 95% confidence intervals reported in brackets.

P < .01.

Multiple Regression Model

The regression analysis yielded an R2 value of .155, suggesting that the predictors included in the model collectively explained approximately 15.5% of the variability observed in the length of written feedback (Table 4). Moreover, the overall regression model was statistically significant, F = 106.369, P < .001, indicating that the predictors significantly contributed to the prediction of the number of characters in feedback comments. For every one-unit increase in quality score, the number of characters increased by 90.278 (P < .001), suggesting that feedback quality has a positive effect on feedback length. Additionally, the regression coefficient for the type of assessment tool was −90.523 (P < .001), indicating a significant effect of the assessment tool used on the number of characters.
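Written out, the fitted model described above has the following form. This is a sketch under stated assumptions: the intercept $b_0$ is not reported in the text and is left symbolic, and the assessment tool is assumed to enter as a single predictor with the coding given in the Methods (1: video observation, 2: direct observation, 3: case-based discussion).

```latex
\[
\widehat{\text{characters}}
  = b_0
  + 90.278 \cdot \text{QuAL}_{\text{total}}
  - 90.523 \cdot \text{tool},
\qquad R^2 = .155
\]
```

Read this way, two comments produced with the same assessment tool that differ by 1 point on the total QuAL score are predicted to differ by about 90 characters, and each step up in the tool coding, with quality held constant, lowers the predicted length by about 91 characters.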

Table 4. Regression Results Using Total Score on QuAL Score as the Criterion. a

b represents unstandardized regression weights, beta indicates the standardized regression weights, LL and UL indicate the lower and upper limits of a confidence interval, respectively, P represents the P value.

P < .01.

P < .05.

Discussion

In this study, we analyzed a set of feedback comments derived from EPAs, with the dual aim of evaluating and examining their quality, and investigating factors influencing the length of the feedback provided. Our findings suggest that EPAs can generate high-quality formative feedback, contributing to the literature about EPA assessments and providing evidence on feedback quality. Remarkably, a substantial proportion (approximately 63%) of the feedback comments had high-quality scores, fulfilling all 3 quality criteria (evidence, suggestion, and connection).

Consistent with prior research, our results indicated that most feedback comments were task-oriented and actionable.30,31 Notably, around 62% of these comments included concrete suggestions for improving the assessed behavior. By enhancing actionable feedback, EPAs can play a pivotal role in promoting learning growth, particularly in the context of low-stakes assessments in the workplace. 32 Furthermore, our findings suggest that EPAs can serve as valuable tools in supporting implementation of CBME, by linking learning activities to assessment.6,7

Nevertheless, we did not find a significant correlation between entrustment score and feedback quality. A potential explanation is that quality of feedback does not directly reflect trainees’ performance. Registered feedback might be limited due to other competing needs and increased demands of clinical work. 33 Another explanation for this finding is the impact of using numerical scales for entrustment. Trainers might have chosen a higher entrustment level than they should have, in order not to disappoint their trainees. 34 In the context of low-stakes assessment, using numbers might hamper the learning purpose of assessment, and blur the stakes for the stakeholders involved. 35 This finding suggests that the use of numbers might not be beneficial for low-stakes assessments and aligns with rising concerns about the sustainability of entrustment scales.34,36

Our findings also illustrate the multifaceted nature of feedback quality, indicating an interplay of various influential factors. Although our regression model explained only 15.5% of variability, it shed light on crucial feedback dynamics. First, the length of feedback comments is related to their quality, suggesting that longer and more extensive comments tend to be of higher quality. Interestingly, our results indicate that the tool used in EPAs is also relevant for both the length and quality of feedback. Specifically, video or direct observations offer higher quality of feedback in comparison to case-based discussions. This echoes the importance of observing trainees during clinical training and strengthens observations as a key strategy in CBME implementation.37–39

Finally, future research should focus on comparing the quality of feedback provided by EPAs with feedback provided by other forms of workplace-based assessments. Such a comparison could help to further contextualize the strengths and weaknesses of EPAs compared to other assessment methods, providing a more comprehensive understanding of how different assessment tools impact feedback quality within a workplace context. Additionally, this could identify best practices and areas for improvement across various assessment methodologies.

Limitations

While our study had significant strengths in terms of sampling adequacy and rigorous analysis, certain limitations need to be acknowledged. Because we relied on a third party for data collection, we might have missed some variables relevant to feedback quality. For instance, other contextual factors, such as frequency of EPAs or trainers’ experience, could also play a significant role in feedback quality. Future research should seek to explore how these variables relate to feedback quality. Another potential limitation is the voluntary basis for recruitment, which may have introduced selection bias. Given that participation was voluntary, it is possible that the dyads that chose to participate were more motivated to use the EPA framework, potentially leading to a higher proportion of high-quality feedback comments. Furthermore, although the QuAL scale comprises 3 key criteria, it might not capture all dimensions of feedback quality comprehensively. Nonetheless, we selected this scale due to its established validity and structured criteria.40,41 Also, the QuAL scale is constructed to evaluate feedback in workplace-based assessments, aligning with our study setting. 23

Conclusion

Given the importance of EPAs within CBME, this study provides evidence on the potential of EPAs to foster high-quality feedback. In this study, EPA feedback comments contained evidence of performance and suggestions for improvement linked to the assessed behavior, highlighting the potential of EPAs to foster formative feedback leading to learning growth. Furthermore, this study sheds light on the multifaceted dynamics of feedback, with findings indicating the influence of factors such as feedback length and type of assessment tool. Consequently, this study contributes evidence to the literature on EPAs and their role in low-stakes assessments.

To further investigate feedback challenges, future research should explore the impact of contextual factors on feedback quality, building upon the results of this study to enhance medical education practices. Future research should also seek to replicate these findings in another context and, eventually, include a wider scope of influential factors beyond those explored in this study. Such factors could be frequency or complexity of EPAs, trainers’ experiences, and the relationship between trainee and trainer. In addition, further studies could explore the discrepancy between entrustment score and feedback quality. It is necessary to understand how the use of numerical scales for entrustment can affect feedback quality and to explore whether there are alternative methods. Finally, the use of video observations in EPAs is worth exploring further, as technological advancements could offer new solutions for medical education.

Supplemental Material

Supplemental material, sj-docx-1-mde-10.1177_23821205241275810 for Evaluating Feedback Comments in Entrustable Professional Activities: A Cross-Sectional Study by Vasiliki Andreou, Sanne Peters, Jan Eggermont and Birgitte Schoenmakers in Journal of Medical Education and Curricular Development

Footnotes

Acknowledgments

The authors would like to thank Mr Guy Gielis, Mrs An Stockmans, Mrs Fran Timmers, and Mrs Karolina Bystram from the Interuniversity Center for GP Training, who facilitated this study. The authors would also like to thank Mr Karel Verbert and Mrs Cindy Rossi (Imengine-Medbook) for facilitating data collection through the Medbook e-portfolio. Finally, the authors would like to thank and acknowledge Prof dr Martin Valcke and Dr Mieke Embo for facilitating this study through the SBO SCAFFOLD project.

Author Contributions

All authors contributed to designing the study. VA wrote the protocol, led the data analysis, and wrote this manuscript. BS participated in the data analysis as a second coder to achieve investigator triangulation. SP, BS, and JE contributed to the critical revision of the paper. All authors have read and approved the manuscript.

Data Availability

The datasets used and/or analyzed during the current study are available from the corresponding author on reasonable request.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Approval

This study was approved by the KU Leuven Social and Societal Ethics Committee G-2022-5615-R2(MIN), and all participants signed an informed consent prior to participation.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Research Foundation Flanders (FWO) under Grant [S003219N]-SBO SCAFFOLD.

Supplemental Material

Supplemental material for this article is available online.
