Abstract

Online media shapes public opinion, with messages from opposing information sources deepening polarization. How content is framed and presented determines its meaning and impact on readers. To date, studies on framing have focused on finding certain phrases, topics, or ideas characterizing messages from particular sources, a strategy providing only limited results. In this study, we used a broader, agnostic approach that involves extracting the narrative from text, including its characters, plot, setting, and moral of the story, and the relationships between these elements. We translated text from tens of thousands of health documents in the language of conspiracy corpus (LOCO) into representations called semantic graphs. We then compared these graphs across documents from conspiracy and mainstream sources. We found that conspiracy media framed health through belief, emphasizing immediacy and individual impact, whereas mainstream media used scientific framing with a long-term, institutional focus. Shared words carried divergent narratives. As one example, in documents related to COVID-19, conspiracy media emphasized preventing violence rather than preventing infection (the mainstream narrative). Understanding conspiracy narratives is crucial for policymakers and media platforms seeking to curb their spread. This understanding can inform efforts to boost media literacy and reduce the number of posts peddling misinformation. We suggest using automated tools and AI as an aid in both efforts.

How messages shape people’s perceptions of reality, and their subsequent behavior, relies heavily on framing, or how the information is presented. In their groundbreaking study, Tversky and Kahneman 1 showed that framing affects people’s perception of a situation and therefore their decision-making. They likened a frame to a lens offering a particular view of a visual scene or scenario. For example, when asked to decide on a treatment for a fictitious disease expected to kill 600 people, respondents favored by a huge margin the solution framed as saving 200 people over the one framed as letting 400 people die, even though the two were essentially identical.

Text framing focuses on some aspects of a scenario, making these aspects more salient than others and more likely to drive decisions. In 1993, Entman defined framing from this perspective: “To frame is to select some aspects of a perceived reality and make them more salient in a communicating text, in such a way as to promote a particular problem definition, causal interpretation, moral evaluation, and/or treatment recommendation for the item described.” 2

Whereas Tversky and Kahneman analyzed the cognitive effects of various frames on readers, we study frames from a mechanistic point of view. That is, we aim to delineate the linguistic attributes that sway readers, but our work does not extend to determining exactly how these attributes influence thought. As such, the frames we study are communicative as opposed to cognitive. See Reference 3 for an explanation of these frames.

Framing is an important factor in societal polarization and the spread of conspiracy theories. In recent years, researchers have used computational tools to identify framing patterns associated with different sides of political debates. Many of these studies focused on particular types of frames—for instance, using war terminology 4 or a “blame frame” 5 in social media discourse related to the COVID-19 pandemic. Other investigations isolated topics (solidarity and policy versus ideology and investigation) used predominantly by Democrats or Republicans to frame discussions about mass shootings. 6 In a previous work, 7 we highlighted moral frames behind particular political viewpoints.

These topic- or feature-based approaches unearth specific linguistic patterns that conform to a category. However, they do not reveal patterns outside this category or information that emerges from a passage as a whole, such as a potential rationale for the framing. These approaches often cannot adequately capture framing differences because texts with conflicting narratives may use similar words at similar frequencies. For instance, an analysis of tweets from conspiracy and science influencers showed an overlap in word usage. 8 Both groups used causal words such as how, why, and because, and outgroup language (they, them). Study findings also highlighted some linguistic patterns specific to conspiracy influencers, such as words that convey negative emotions.

In the present study, we used a more holistic approach to compare text framing in conspiracy websites to that in mainstream websites. Instead of looking for any particular feature or pattern in text, such as war metaphors or blame verbiage, we analyzed the text as a narrative, identifying its setting, characters, plot, and moral. These narrative frames are abstract constructs that refer to entire messages rather than individual content features. 9 They are also agnostic about the types of linguistic attributes that may differentiate the two types of media.

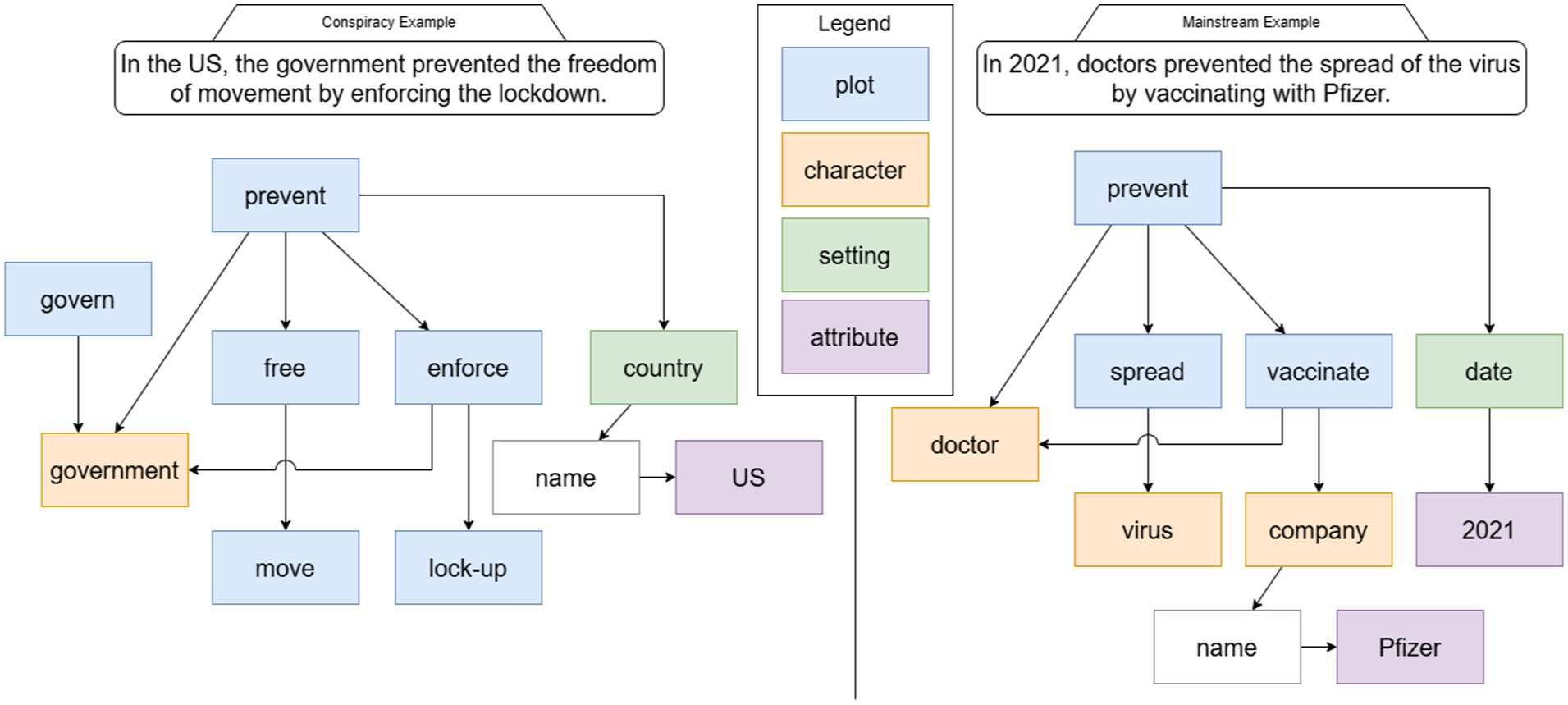

To identify narrative frames, we used a computational tool that translates text into semantic graphs (see Figure 1). The process strips the text of grammar so only narrative elements and their relationships remain. We applied this method to health-related documents from mainstream and conspiracy websites mined from the language of conspiracy corpus (LOCO). 10 We then compared graphs extracted from conspiracy and mainstream content to reveal framing differences.

Figure 1. Translation of two sentences into semantic graphs with narrative elements

We found that conspiracy content tends to be based on beliefs, using words such as claim and God, whereas mainstream media typically invokes scientific arguments, advanced by terms such as treat and University. Our approach also revealed how certain terms, such as prevent, appear in divergent narratives with different meanings. This nuance underscores the need for prompt action to identify and curb the use of common terms in promoting conspiracies before reconciling their separate meanings becomes nearly impossible. See the sidebar “Recommendations for Policymakers” for policy solutions.

Highlights

This article presents a novel method for extracting framing information from conspiracy and mainstream media through translating text into graphical representations that connect narrative elements such as plot, character, and setting.

Certain words and themes distinguish conspiracy from mainstream documents. Furthermore, mainstream and conspiracy media use some of the same terms to tell dramatically different narratives.

Policymakers and others can leverage this understanding of conspiracy narratives to reduce the amount of misleading content and educate the public on how to evaluate such content, reducing its impact on public health.

Data Source & Selection

We extracted narrative frames from documents pertaining to three health-related topics in LOCO, a database of nearly 97,000 documents from 150 English-language websites (92 mainstream and 58 conspiracy). 10 These documents, collected in 2020 and dating back to 2004, were labeled as conspiracy if they originated from a website known to publish unverifiable information not always supported by evidence, as determined by the Media Bias/Fact Check list (https://mediabiasfactcheck.com/conspiracy/). They were labeled as mainstream if they originated from other sites. See Reference 10 for details on data collection and processing.

We defined three health-related groupings using the following keywords: COVID-19 (vaccine.covid, covid.19, and coronavirus), diseases (aids, cancer, zika.virus, and ebola), and pharmacology (vaccine, pharma, and drug). This resulted in a batch of 8,722 documents related to COVID-19, 11,173 documents pertaining to other diseases, and 13,753 pharmacology documents. From these documents, our algorithm generated hundreds of thousands of semantic graphs representing narrative frames and elements, as described in the next section. Please see Table S1 in the Supplemental Material for a breakdown of the number of conspiracy and mainstream documents, graphs, and narrative elements for each topic.
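The grouping step can be pictured with a minimal sketch. The keyword lists come from the text above; the document records, the `keywords` field, and the function name `assign_topics` are hypothetical, and the sketch assumes each document already carries a set of keywords, which may not match how LOCO documents were actually matched.

```python
# Keyword lists are taken from the article; everything else is illustrative.
TOPIC_KEYWORDS = {
    "covid19": {"vaccine.covid", "covid.19", "coronavirus"},
    "diseases": {"aids", "cancer", "zika.virus", "ebola"},
    "pharmacology": {"vaccine", "pharma", "drug"},
}


def assign_topics(doc_keywords):
    """Return every topic whose keyword list overlaps the document's keywords."""
    found = set(doc_keywords)
    return sorted(topic for topic, words in TOPIC_KEYWORDS.items() if words & found)


# Hypothetical document records (not real LOCO entries).
docs = [
    {"id": "doc-a", "label": "mainstream", "keywords": ["coronavirus", "drug"]},
    {"id": "doc-b", "label": "conspiracy", "keywords": ["ebola"]},
    {"id": "doc-c", "label": "conspiracy", "keywords": ["moon.landing"]},
]

for doc in docs:
    print(doc["id"], doc["label"], assign_topics(doc["keywords"]))
```

Under this assumption a document can fall into more than one grouping, and documents matching none of the keywords are left out of the analysis.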

Translation of Text Into Semantic Graphs

We use a graph-based class of tools called abstract meaning representations 11 to extract narrative frames from documents in LOCO. Our tool transforms text into semantic graphs, a format that a machine can understand. A semantic graph describes a sentence’s core meaning by homing in on who does what to whom, stripping away grammar such as verb tenses (syntax) and articles or prepositions (the, by). Similar graphs would, for instance, describe “Girl pets cat” and “The cat was petted by the girl.” In the graphs, nodes represent concepts (such as girl, cat, pet), and edges depict the semantic relationships between the concepts (for instance, subject–object: the petter and the receiver of pets).
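As a rough illustration of why the active and passive sentences share a graph, the sketch below encodes each sentence by hand as a set of (plot, role, concept) triples. The role names `doer` and `receiver` and the helper `semantic_graph` are assumptions made for readability (abstract meaning representations use numbered argument roles), and no parser is involved.

```python
def semantic_graph(*triples):
    """Store a graph as an unordered set of (plot, role, concept) triples."""
    return frozenset(triples)


# Hand-encoded graphs; tense, articles, and voice are simply not stored.
active = semantic_graph(("pet", "doer", "girl"), ("pet", "receiver", "cat"))
passive = semantic_graph(("pet", "receiver", "cat"), ("pet", "doer", "girl"))

# Swapping who does what to whom yields a different graph.
reversed_roles = semantic_graph(("pet", "doer", "cat"), ("pet", "receiver", "girl"))

print(active == passive)         # same meaning, same graph
print(active == reversed_roles)  # different meaning, different graph
```

Because only concepts and roles are kept, word order and grammatical voice have no effect on the comparison, while a change in roles does.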

Figure 1 shows slightly simplified semantic graphs for two sentences, both referring to COVID-19, representing mainstream and conspiracy media. The graphs depict characters in orange (doctor, government), plot in blue (vaccinate, govern), setting in green (date, country), and attributes of setting (2021, U.S.) and of character (Pfizer) in purple.

In this case, the algorithm pulled out prevent as the dominant plot point for both sentences, so it appears at the top of the graphs. The arrows indicate relationships between the concepts. In the mainstream example, doctor is connected to two plot points—vaccinate and prevent—because the doctor is the actor for both. No line directly connects doctor to spread because doctors are neither spreading anything nor being spread. But doctor is tied to spread through prevent, as the doctor is preventing some kind of spread.

Virus is tied to spread because it is the virus being spread (as the object). A line links company to vaccinate because a company’s vaccine is used, but company is a step removed from prevent, at least semantically in this case. In addition, the prevention happened on a certain date, with the specific date indicated in purple. Similarly, the name of the company—Pfizer—is indicated as an operator in purple connected to company.
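The connections just described for the mainstream example can be written down as a small undirected graph. This is a sketch under stated assumptions: only the links named in the text are included, edge directions and labels are omitted, and the helper names `neighbors` and `shortest_path` are hypothetical.

```python
from collections import deque

# Links named in the description of the mainstream graph (direction omitted).
EDGES = [
    ("doctor", "vaccinate"),
    ("doctor", "prevent"),
    ("prevent", "spread"),
    ("spread", "virus"),
    ("company", "vaccinate"),
    ("company", "Pfizer"),
    ("prevent", "date"),
]


def neighbors(edges):
    """Build an undirected adjacency map from a list of node pairs."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj


def shortest_path(adj, start, goal):
    """Breadth-first search; returns the first shortest path found, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for nxt in sorted(adj.get(path[-1], ())):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


ADJ = neighbors(EDGES)
print("spread" in ADJ["doctor"])               # False: no direct link
print(shortest_path(ADJ, "doctor", "spread"))  # ['doctor', 'prevent', 'spread']
```

The search confirms what the figure shows: doctor reaches spread only through prevent.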

In the conspiracy example, by contrast, prevent is part of a different narrative with a different character (government) and a different effect (freedom of movement). In the example, the noun phrase “freedom of movement” refers to the unrestricted action of moving and thus requires a hierarchical plot structure involving the plot points move (at the bottom) and free (on top). The referenced government (and its implicit plot element govern) is linked to prevent as it is responsible for this form of prevention. Finally, the lock-down plot element is swapped with lock-up, which better captures the strongly suggested meaning of being imprisoned in this particular case.

This approach puts similar concepts in the same relative position on a semantic graph even when the instances of these concepts differ slightly. For instance, the same subgraph would represent a sentence in which a doctor who vaccinates is referred to as a “family doctor” and a sentence in which the same character is described as a “recently graduated doctor.” Similarly, we do not distinguish between different kinds of actors such as heroes and villains. This normalization of semantic information contrasts with previous approaches that extract actors’ specific roles, such as “family doctor” or “the recently graduated doctor.” 12 Our approach avoids the challenges posed by such role extraction, enabling concepts to be easily combined and allowing for flexible and interpretable frame extraction. 13 For more details on our methods, see the Supplemental Material and the open FrameFinder tool, described in Reference 14.
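The effect of this normalization can be imitated with a deliberately crude sketch. Keeping only the last word of a character mention is a stand-in heuristic chosen for illustration, not the method used by FrameFinder, and the names `head_noun` and `frame` are hypothetical.

```python
def head_noun(mention):
    """Crude stand-in for normalization: keep only the last word of a mention."""
    return mention.lower().split()[-1]


def frame(plot, doer_mention):
    """Build a (plot, role, character) triple from a normalized mention."""
    return (plot, "doer", head_noun(doer_mention))


family = frame("vaccinate", "family doctor")
graduate = frame("vaccinate", "recently graduated doctor")

print(family)              # ('vaccinate', 'doer', 'doctor')
print(family == graduate)  # True: both mentions collapse to the same character
```

Collapsing mentions in this way is what lets frames from many documents be counted and compared as the same narrative element.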

Recommendations for Policymakers

Monitor media content and, in some cases, impose penalties for the deliberate spreading of false health-related claims.

Look for patterns characterizing conspiracy media. Automated tools such as FrameFinder could aid in finding these patterns. Once identified, misleading or false content could be flagged, deleted, or reframed.

Require social media companies to specify the features of conspiracy narratives in their posting guidelines. Company officials or the online community could add warning labels, edit, or delete offending posts.

Require makers of AI chatbots to implement guardrails that prevent the chatbots from promoting conspiracy messaging and other misinformation to users.

Incentivize creating online fake news games or quizzes that test people’s ability to identify manipulation techniques commonly used in conspiracy theories.

Incentivize developing community media literacy classes that incorporate knowledge of conspiracy narratives.

Mandate media literacy units in school curricula.

Build knowledge of conspiracy narratives into public service campaigns modeled after the United Kingdom’s Check Before You Share Toolkit.

Target educational efforts to individuals and communities most likely to lack this information, such as older individuals and people with minimal education.

Results: Comparison of Health Narratives in Conspiracy & Mainstream Media

Similarities in Document Length

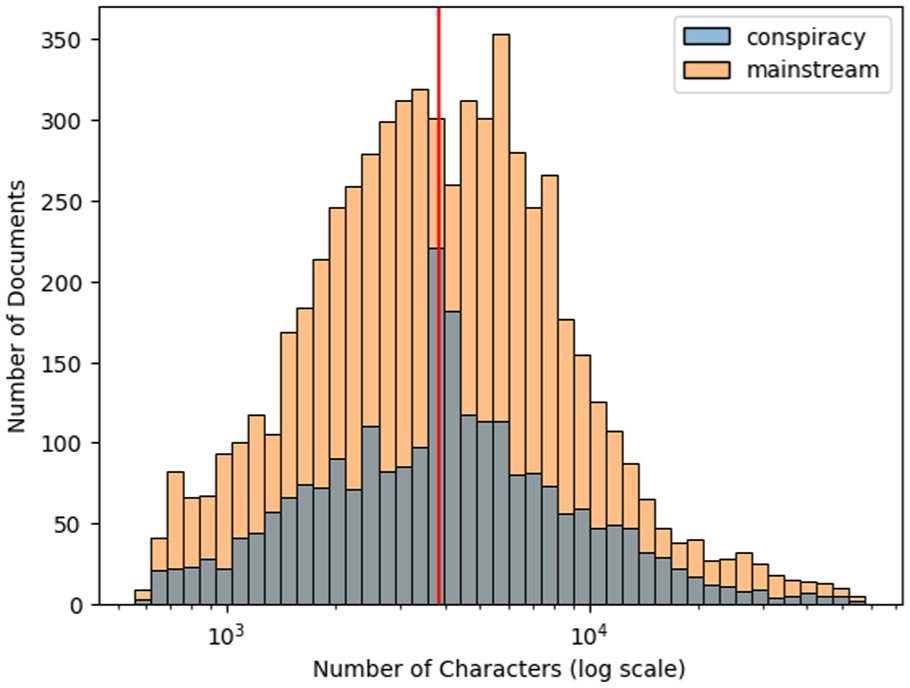

Documents from conspiracy media are difficult to differentiate from mainstream documents using straightforward measures such as document length or word usage. Mainstream and conspiracy media show similar distributions of document length, as measured by the number of characters (see Figure 2) or the number of words or sentences. In this dataset, however, we noted that conspiracy documents were more concentrated near the median document length (the red line in Figure 2).

Figure 2. Distribution of mainstream & conspiracy documents in dataset

Differences in Narrative Elements

Figure 3 depicts the semantic similarity of words in two-dimensional space. As shown in this figure, mainstream and conspiracy media differed in their predominant narrative elements. Words that are semantically similar (they would be used in a similar way in a sentence) are shown close to each other, whereas semantically dissimilar words are placed far apart. (See the Supplemental Material for tabular data on the predominant narrative elements.) Plot words appear in blue, characters in orange, setting in green, and the moral of the story in red. The most common plots, characters, settings, and morals for the two types of media in each health-related area appear as words in similar spaces for each media type.

Figure 3. Comparing narrative elements across conspiracy & mainstream media in three health-related subject areas

Across all three topics, conspiracy media tended toward war-focused framing, such as using destroy and war as the plot of the story and defend, battle, and counter as the moral of the story. By contrast, mainstream media tended to rely on health-oriented framing, such as the use of infect and treat as plot and disease, drug, virus, and patient as characters.

We also observed that conspiracy media tended to use argumentation frames such as believe, claim, and lie as plot. Conversely, mainstream media focused on action-oriented frames such as develop, spread, and reopen. In addition, the science-related characters (vaccine, virus) of mainstream media contrasted with conspiracy media’s use of characters that suggested large-scale conspiracies (world, truth). When considering setting, conspiracy media focused more on the present (today, here, now) than on a specific week, month, or year, as was the case for mainstream media. For the moral of the story, conspiracy media tended to underscore alarm, whereas mainstream media centered on information in its narratives.

Although not shown in Figure 3, we also briefly investigated attributes such as names of characters or specific places for setting. See Table S3 in the Supplemental Material for the list of attributes. We observed that narratives in conspiracy media revolved around individuals, such as Gates and Trump, and had a focus on the United States and China. By comparison, mainstream media focused on institutions, such as universities and the National Health Service in the United Kingdom.

In the COVID dataset, we also found a focus on religion in conspiracy media, as denoted by the attributes Bible or Christians, that was not present in mainstream sources. In the disease dataset, we observed an emphasis in mainstream media on Global South countries (such as the two Congos, Uganda, or Africa in general) where the diseases discussed were most prevalent. These countries are mostly absent in conspiracy media. The pharmacology dataset illustrated mainstream media’s greater use of drug company names as entities. For more on this, see Table S3 in the Supplemental Material.

Predominant Narratives

In this section, we illustrate how using similar terms can result in divergent narratives by examining the semantic structure of three plot elements in our dataset: prevent, spread, and vaccinate. (See the Supplemental Material for the computational details.) Here, we highlight overrepresented narratives that involved these terms in their simplified subject-verb-object form, although subject and object were not always present.
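One simple way to score which subject-verb-object structures are overrepresented in one media type is a smoothed log-ratio of relative frequencies. The sketch below is an assumption for illustration only: the article defers its computational details to the Supplemental Material, the function name `log_ratio` is hypothetical, and the counts are invented toy values rather than results from LOCO.

```python
import math
from collections import Counter


def log_ratio(counts_a, counts_b, smoothing=1.0):
    """Smoothed log-ratio of relative frequencies; positive means more typical of A."""
    vocab = set(counts_a) | set(counts_b)
    total_a = sum(counts_a.values()) + smoothing * len(vocab)
    total_b = sum(counts_b.values()) + smoothing * len(vocab)
    return {
        item: math.log((counts_a.get(item, 0) + smoothing) / total_a)
        - math.log((counts_b.get(item, 0) + smoothing) / total_b)
        for item in vocab
    }


# Invented toy counts of (subject, verb, object); None marks a missing element.
conspiracy = Counter({
    (None, "prevent", "violence"): 8,
    ("government", "prevent", "individual"): 6,
    (None, "prevent", "infect"): 2,
})
mainstream = Counter({
    (None, "prevent", "infect"): 12,
    ("person", "spread", "virus"): 5,
    (None, "prevent", "violence"): 1,
})

scores = log_ratio(conspiracy, mainstream)
for triple, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{score:+.2f}", triple)
```

With these toy counts, structures that appear mostly in the first corpus receive positive scores and those that appear mostly in the second receive negative scores; the smoothing term keeps structures absent from one corpus from producing infinite values.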

In the COVID-19 documents, prevent violence was a prevalent connection in conspiracy media narratives, which also often invoked government prevent individual (from doing something) narratives. By comparison, mainstream media focused on stopping the spread of the infection with prevent infect.

The use of the term spread also differed between conspiracy and mainstream media. Conspiracy theories often revolve around rumors; that is, their use of vaccine spread suggests that the vaccine spreads the disease. In contrast, mainstream media uses spread to mean viral spread, with a common use of the structure person spread virus. Similarly, the term vaccinate has divergent uses in the two media types. Military vaccinate is a common structure in conspiracy media, whereas vaccinate person is more common in mainstream media.

We observed similar patterns for the diseases and pharmacology topics. These topic areas also showed divergent narratives involving spread—vaccine spread versus spread virus—for the two media types. Similarly, in the pharmacology documents, person prevent and prevent infect were the predominant narratives in conspiracy and mainstream media, respectively.

Discussion

Our work highlights the contrasts between how mainstream and conspiracy media frame health topics. While mainstream sources typically rely on science- and action-based framing, conspiracy media promote belief-oriented narratives. In addition, our analyses of the two narratives highlight that the conspiracy sites emphasize the urgency and immediacy of the social problem, using words such as today and now or you and I. The morals of conspiracy stories similarly revolve around alarm rather than information, the message of mainstream texts.

These characterizations are in line with the conspiracy theory mindset, which results in a reluctance to vaccinate and the rejection of science in general. 15 Interestingly, conspiracy sites also position the mainstream media as characters and part of the game in mentioning their mistrust of mainstream narratives. On the other hand, mainstream media does not refer to conspiracists, which points to a nonreciprocity in which conspiracy theorists are more focused on discrediting mainstream arguments than vice versa.

Similarly, we found that conspiracy media emphasizes its own correctness in its use of the words truth, true, and actual, to an extent that mainstream media does not. Moreover, while war framing is often present in health-related discourse, 4 we observed a one-sided tendency toward war framing in conspiracy media, suggesting that war-like terms are beacons of conspiracy narratives. Overall, our findings reaffirm existing characterizations of conspiracy content by rediscovering them in a frame-agnostic way rather than searching for particular features based on prior conceptualizations of established frames. As such, our findings present a valuable compendium of the framing discrepancies between conspiracy and mainstream media.

Impact on Society & Policy Recommendations

Conspiracy and mainstream media have profound effects on society, as they shape the public’s perception of health risks and institutional trust, which in turn affects compliance with public health guidelines. During the COVID-19 pandemic, the divergent narratives from these media sources contributed to societal polarization over the severity of the crisis and the legitimacy of public health measures. 16 Furthermore, the flood of misinformation that accompanied the pandemic 17 undermined evidence-based health governance and posed significant public health risks, including increased vaccination hesitancy and the spread of harmful health behaviors. 18–20

A deeper scientific understanding of the frames and strategies used in conspiracy narratives is crucial to spotting false or misleading health information and reducing its impact on public health. 21,22 We advocate a two-pronged approach in which policymakers would enact measures that would reduce dangerously misleading content on the one hand and educate the public about how to evaluate content on the other. Improving health literacy in addition to media literacy would further inoculate the public against belief in false claims, reducing harm to individuals and national health care systems. We recommend technology to aid both solution types.

Policymakers should monitor media content, and in some cases impose penalties for the deliberate spreading of false health-related claims. 19 To spot problematic posts, regulators could look for patterns characterizing conspiracy media we and others have identified. Automated tools such as FrameFinder 14 could aid in detecting these patterns. Once identified, such content could be flagged, deleted, or reframed, either manually or by using AI.

Social media companies could play a role in moderating content by specifying features of conspiracy narratives in their posting guidelines. Posts including these features should be given warning labels or be edited or deleted, as previously noted, either by the company or the online community through systems such as Community Notes on X and Meta platforms. In the long term, we hope content access systems such as search engines, automated recommendations, and social media feeds will contain code that detects framing and blocks posts parroting conspiracy theories or spreading misinformation. 23

In addition to aiding the tracking and elimination of conspiracy content, our findings could underpin efforts to educate the public on identifying and evaluating such content. During the COVID-19 pandemic, efforts designed to help individuals identify misinformation were generally effective, 20 underscoring their value. Misinformation inoculation also tends to increase overall suspicion of news headlines and reduce reposts of all content. 24

Individuals could enhance their media literacy by playing online fake news games or taking quizzes that test their ability to identify manipulation techniques commonly used in conspiracy theories. 25 Policymakers should offer grants to individuals or groups for creating such content and for developing community media literacy classes that incorporate results like ours. Policymakers should also mandate media literacy units in school curricula.

Knowledge of conspiracy narratives could further inform public service campaigns such as the United Kingdom’s Check Before You Share Toolkit, which is designed to help citizens spot misinformation about COVID-19 vaccines. 26 Policymakers should launch similar campaigns that alert the public to telltale signs of conspiracy messaging. Further, policymakers should target their educational efforts to individuals and communities most likely to lack this information, such as older individuals and people with minimal education. 27

Policymakers should also require that the makers of AI chatbots implement guardrails to prevent promoting conspiracy messaging and other misinformation to users. The chatbots could learn to identify false messaging through the narrative elements identified in this study. Our approach for separating the two types of narratives could reduce the time and resources required for training the chatbots. In previous research, AI chatbots have increased skepticism of conspiracy theories and other misinformation through personalized dialogue and adaptive communication. 28 But educational chatbots like these might be difficult to deploy in real-world settings.

Limitations & Ethical Considerations

Our analysis has several limitations. First, the COVID-19-related documents we examined mostly dated to the beginning of the pandemic (see Figure S2 in the Supplemental Material) and did not cover anything posted after 2020 due to LOCO’s date range. As a result, our conclusions may not extend to dialog in later pandemic years. Second, how we sorted documents into conspiracy or mainstream categories was based on the website the document came from rather than the document itself, so we might have assigned some documents to the wrong category. Third, because we leveraged pretrained language models, we were subject to their inherent biases. When text is ambiguous, for example, these models must make assumptions about context and meaning and those assumptions could be incorrect.

Because conspiracy theories are a sensitive societal topic, we note two ethical considerations. First, we protected people’s privacy by using a publicly available dataset and presenting highly aggregated results so as not to identify any individual or website. Second, our aim was to better understand conspiracy narratives. While we hope our findings will be used to better spot and defuse these narratives, we have little control over other uses of this knowledge. In theory, conspiracy promoters could exploit it for crafting messages that defy detection.

Summary

Our results show how conspiracy and mainstream media frame health-related issues in different ways that are embedded in their broader narratives. We found that conspiracy-oriented media often frames health topics in terms of personal beliefs and government control, while mainstream media emphasizes scientific evidence and institutional responses. This distinction is important for policymakers and public health organizations when identifying messages that promote conspiracies, as simply fact-checking false claims may not be enough to counteract their influence. Additionally, individual media literacy requires equipping individuals with the tools to recognize how framing influences their understanding of issues. Mapping these narrative patterns can help policymakers and educators develop more effective strategies for countering misinformation and improving public trust in health communication.

Supplemental Material

Supplemental material, sj-pdf-1-bsx-10.1177_23794607261431053 for Framing of health-related narratives in conspiracy versus mainstream media by Markus Reiter-Haas, Beate Klösch, Markus Hadler and Elisabeth Lex in Behavioral Science & Policy

Footnotes

Author Note

We thank the rectorates of Graz University of Technology and University of Graz for their support of this research. We also thank our reviewers, who wish to remain anonymous, for their helpful comments in revising this paper.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the TU Graz Open Access Publishing Fund and was funded in whole or in part by the Austrian Science Fund (FWF) 10.55776/COE12.