Abstract

Employers increasingly use artificial intelligence (AI) to help screen job applicants. But the way algorithms are designed can make them highly susceptible to systematic biases against women in male-dominated fields, including the women who are best qualified for the jobs. Various methods for debiasing hiring algorithms are available to help ensure that the best-qualified men and women are all given fair consideration. However, little is known about which approach is most likely to appeal to applicants. To address this question, the author conducted a mock job application experiment that examined how decisions to apply for a competitive job differ depending on the debiasing approach used. The study showed that awareness of gender bias in an algorithm significantly deterred women from applying, including those who were most qualified for the job. It also demonstrated that all the debiasing approaches studied significantly increased the number of women applying without compromising the quality of the final applicant pool. An approach designed to give men and women an equal chance of being selected attracted the most female applicants and should be considered by employers who primarily wish to increase diversity in hiring. However, men and women in this study also perceived an algorithm that ignored gender as the fairest. Such gender-blind algorithms should be considered by employers who want to both improve numbers of female applicants and reinforce workers’ perceptions that hiring practices are fair.

Artificial intelligence (AI) has transformed the landscape of hiring in recent years. A 2021 report from the consulting firm Mercer found that 92% of human resource leaders were using or planning to use AI algorithms to screen or assess applicants. 1 Similarly, a 2021 Harvard Business School report found that 75% of large U.S. enterprises, including 99% of Fortune 500 companies, automate their applicant screening process. 2

However, the use of hiring algorithms is controversial. On the positive side, they can increase efficiency, reduce costs, and avoid human fatigue. But they can also reproduce and amplify past and present human biases, especially concerning disadvantaged or minority groups. 3 For example, algorithmic bias may unfairly penalize qualified women in male-dominated fields and cause employers to mistakenly reject them simply because of their gender.

The debiasing of algorithms can help reduce such inefficiencies for employers and increase fairness in the hiring process. Furthermore, recent research has shown that qualified women who learn that debiasing is used become more inclined to submit applications, resulting in more diversity of qualified candidates in the applicant pool. 4 Therefore, even in the absence of formal diversity, equity, and inclusion (DEI) programs, debiasing hiring algorithms can help organizations sustain and advance diversity efforts and achievements while adhering to fair and merit-based hiring principles.

Debiasing techniques can differ based on the concepts of fairness they apply. 5 Research on fairness conducted in the machine learning field (a branch of study in AI) has shown that it is generally mathematically impossible for any one algorithm to satisfy more than one class of fairness criteria,6,7 raising the question of which fairness criterion employers should prioritize.

I have conducted a study addressing this question. I first examined the degree to which knowing that AI hiring tools can be biased toward male applicants may affect women’s decisions to compete for competitive, high-paying jobs in male-dominated fields. I then examined how these decisions differ depending on how hiring processes are debiased.

In the following, I describe the different concepts of fairness that have been studied in the machine learning field. I next discuss different ways to debias hiring algorithms according to specific concepts of fairness as well as the trade-offs involved with each approach. I then describe my experimental design and present the results and their policy implications. (See Part 1 of the Supplemental Material for an expanded version of this article, providing fuller discussions of fairness concepts, the experiment’s design, and the analysis results.)

Fairness Criteria & Approaches to Debiasing Algorithms

Many people would agree that applicants should be selected by merit alone. Hiring algorithms that maximize overall accuracy in their predictions (that, on average, do best at predicting applicant ability) could therefore be expected to be most effective at recommending candidates on the basis of merit. However, for statistical reasons, maximizing the average accuracy can often lead to an algorithm undervaluing certain groups of applicants, resulting in qualified applicants from these groups being systematically overlooked.3,8

All algorithms make mistakes, but an algorithm is considered biased if it systematically makes erroneous predictions about one group or another. This bias may be caused by the design of the algorithm itself or by biased data on which the algorithm is trained. During training to make predictions relating to a task, algorithms learn how past decisions were made by examining the information in an existing data set. Once trained, they can make predictions for a new data set. However, if the training data are biased against a group (for example, if the past data included many discriminatory decisions against women), the algorithm would learn to discriminate against that group in its predictions.

Insofar as biases can be considered unfair, the use of debiasing methods would be expected to produce fairer outcomes than the use of nondebiased methods. But exactly what fair means in practice is not always clear.

Two main types of fairness criteria have been applied by researchers who study machine learning. One focuses on the equality of accuracy, the other on the equality of predictions—terms I define below. Two of the three debiasing methods I used in my study specifically seek equality of accuracy or equality of predictions. A third method takes a different tack for reducing bias.

Equality of Accuracy

When an algorithm makes more accurate predictions for men than for women, it could result in women being more likely to miss out on jobs they are qualified for. Equalized odds and predictive parity, two distinct fairness concepts in machine learning, use different criteria to ensure that algorithmic predictions are equally accurate for different demographic groups. When implementing equality of accuracy in this study, I used a less stringent form of equalized odds called equality of opportunity.9,10 In the context of this study, this fairness approach gave qualified men and women an equal chance of being selected. Say that 60 men and 40 women were applying for 20 positions and that 20 men (a third of the men) and 10 women (a quarter of the women) met the job criteria. A perfectly accurate algorithm that satisfied the equality of opportunity criterion would select 13 qualified men and seven qualified women (about 65% of qualified people of each gender). More realistically, a focus on equality of opportunity would result in 10 qualified men being selected (half of the qualified men) and five qualified women being selected (half of the qualified women) for the open positions. The five remaining spots would be filled by unqualified applicants of either gender but most likely male in this context.
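The arithmetic in this example can be checked with a short Python sketch. It is only an illustration of the equality of opportunity criterion as described above, not the algorithm used in the study; the function names and the `tolerance` parameter are assumptions introduced here.

```python
def true_positive_rate(qualified_selected, qualified_total):
    """Share of a group's qualified applicants who are selected."""
    if qualified_total == 0:
        raise ValueError("group has no qualified applicants")
    return qualified_selected / qualified_total


def satisfies_equal_opportunity(rates, tolerance=0.0):
    """True if the groups' selection rates among the qualified differ
    by no more than `tolerance` (a hypothetical slack parameter)."""
    return max(rates.values()) - min(rates.values()) <= tolerance


# "Realistic" case from the text: half of the qualified in each group.
realistic = {
    "men": true_positive_rate(10, 20),
    "women": true_positive_rate(5, 10),
}

# "Perfectly accurate" case from the text: 13 of 20 men, 7 of 10 women.
perfect = {
    "men": true_positive_rate(13, 20),
    "women": true_positive_rate(7, 10),
}
```

Because people are selected in whole numbers, the perfectly accurate case yields 65% for men and 70% for women, so the two rates match only approximately; the realistic case matches exactly at 50%.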

Regarding tradeoffs, algorithms guided by the equality of accuracy concept are useful for limiting bias against a given group of applicants if the historical data used for training or the definition of “qualified” are not biased against that group. However, as I have noted, real-life data often contain biases. 11 For instance, if a certain labor market has long had more qualified men than qualified women, or if the existing definition of qualified inadvertently favors men, algorithms designed for equality of accuracy will likely result in very few women being selected. This outcome, in turn, would perpetuate gender inequalities in the field by continuing to deter women from getting qualified in the area. Thus, although this algorithm would likely recommend hiring more female applicants than a nondebiased algorithm would, it may not fully rectify historic biases against women now and in the long term.

Equality of Predictions

The equality of predictions criterion is based on the concept of statistical parity (also known as demographic parity) in the machine learning field. In essence, this view of fairness calls for algorithms to ensure that participants from two demographic groups have an equal chance of being selected. For instance, if the same 60 men and 40 women above applied for 20 positions (enough slots for 20% of the applicant pool), the algorithm should propose selecting 20% of the men and 20% of the women, on average, or 12 men and eight women. This standard has often been applied in response to laws requiring that the selection rate for any disadvantaged group should not be less than 80% of the selection rate for an advantaged group (a demand known as the four-fifths rule).
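The selection rates and the four-fifths check in this example can be written out as a brief Python sketch. The function names are illustrative assumptions, and the contrasting 15/5 split is a hypothetical case added for comparison, not a result from the study.

```python
def selection_rate(selected, applicants):
    """Fraction of a group's applicants who are selected."""
    return selected / applicants


def impact_ratio(rate_disadvantaged, rate_advantaged):
    """Selection rate of the disadvantaged group relative to the
    advantaged group; the four-fifths rule asks for at least 0.8."""
    return rate_disadvantaged / rate_advantaged


# Statistical parity example from the text: 12 of 60 men, 8 of 40 women.
men_rate = selection_rate(12, 60)
women_rate = selection_rate(8, 40)
ratio = impact_ratio(women_rate, men_rate)
passes_four_fifths = ratio >= 0.8

# Hypothetical contrast: the same 20 slots split 15 men / 5 women.
skewed_ratio = impact_ratio(selection_rate(5, 40), selection_rate(15, 60))
skewed_passes = skewed_ratio >= 0.8
```

Under statistical parity both rates are 20%, so the ratio is 1 and the rule is met. In the hypothetical 15/5 split, women are selected at half the rate of men, which falls short of the 80% threshold.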

Attending to gender equality in predictions is especially useful for reducing bias if the data used in an algorithm’s training contained biases against women, such as those grounded in unfair stereotypes. Relative to using a typical hiring algorithm, this approach would ensure that more female applicants are chosen by the algorithm and that qualified women may be less likely to be overlooked. 12 It also increases the chance that women will be well represented among successful applicants, which in turn may encourage more women to enter the field in the future and thus reduce gender disparities in the long term. 13

However, this approach also has drawbacks. The stringent demand to have proportionally equal representation will likely decrease the overall accuracy of the algorithm’s predictions. Such an algorithm is also highly unlikely to provide equality of accuracy (giving qualified men and qualified women an equal chance of being selected), which could potentially lead to a higher number of qualified male applicants being rejected and more unqualified women being selected when compared to the nondebiased and equality of accuracy approaches.

Gender Blindness

Beyond programming algorithms to apply the concepts of fairness described above, a third approach to fairness entails using gender-blind algorithms. Such algorithms exclude gender and gender-related information (including any details, such as certain hobbies, that tend to be indicative of gender) from the training data and application materials. 14 These exclusions should therefore reduce gender biases in predictions, even if the training data contain gender biases, without having to impose stringent fairness criteria such as equality of accuracy or equality of predictions. Such an approach has also been found to enhance perceptions of fairness in hiring.15,16

Yet, outcomes are unpredictable because gender-blind algorithms do not necessarily ensure that the best-qualified men and women have an equal chance of being recommended for open positions or that equal percentages of men and women in general will be selected. Moreover, gender blinding can lead to less accurate predictions than standard nondebiased hiring algorithms produce if the blinding inadvertently causes the algorithm to ignore potentially job-relevant information, such as leisure activities typically favored by one gender or the other. With the gender-blind approach, either gender could potentially be disadvantaged.
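A minimal Python sketch of the gender-blind idea follows. The field names and the set of excluded fields are hypothetical; deciding which fields count as gender related is itself a judgment call that the sketch does not resolve.

```python
# Hypothetical set of fields treated as gender or gender-related information.
GENDER_RELATED_FIELDS = frozenset({"gender", "first_name", "hobbies"})


def blind(record, excluded=GENDER_RELATED_FIELDS):
    """Return a copy of an applicant record without the excluded fields."""
    return {key: value for key, value in record.items() if key not in excluded}


# Hypothetical applicant record, for illustration only.
applicant = {
    "gender": "F",
    "first_name": "Ana",
    "hobbies": ["netball"],
    "degree": "BSc",
    "years_experience": 7,
}

blinded = blind(applicant)
```

Dropping `hobbies` here illustrates the trade-off noted above: a field removed because it may signal gender might also have carried job-relevant information.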

Experimental Design

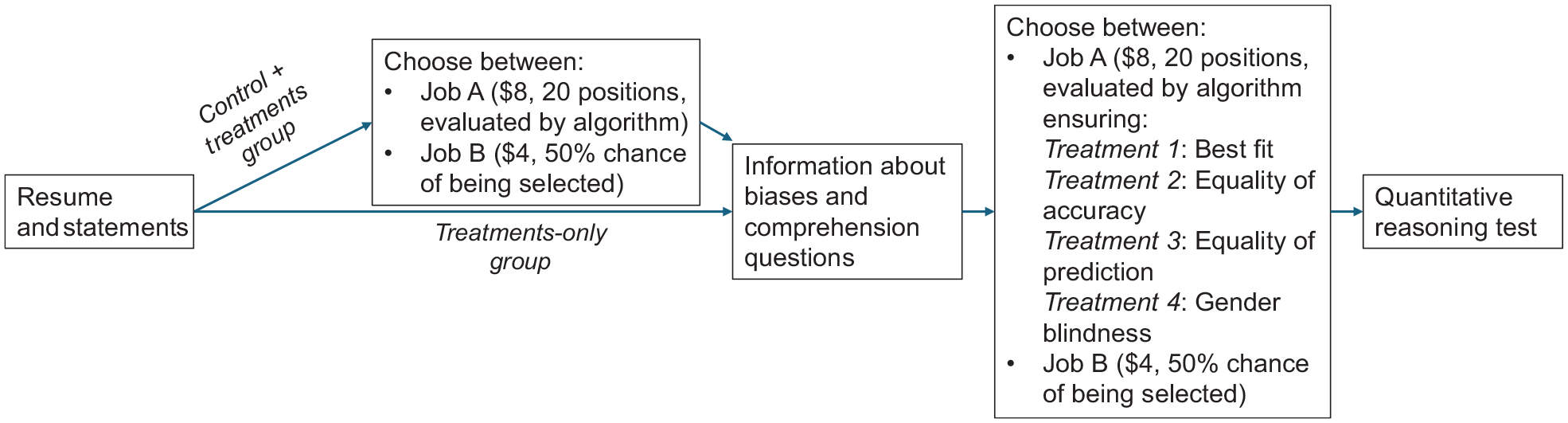

My goal in this study was to determine which debiasing approaches would appeal most to female applicants in male-dominated fields and thus potentially increase the number of female applicants overall and qualified female applicants in particular. To do so, I conducted an online economic experiment in which participants chose whether to apply for a competitive job when they learned about the hiring algorithm that was being used. See Figure 1 for an overview of the experimental design. As is standard for economic experiments, I applied a payment protocol that incentivized participants to make decisions truthfully. (See Part 1 of the Supplemental Material for details of the payment protocols.) The study was preregistered and received ethics approval.

Figure 1. Experimental procedure (main tasks only)

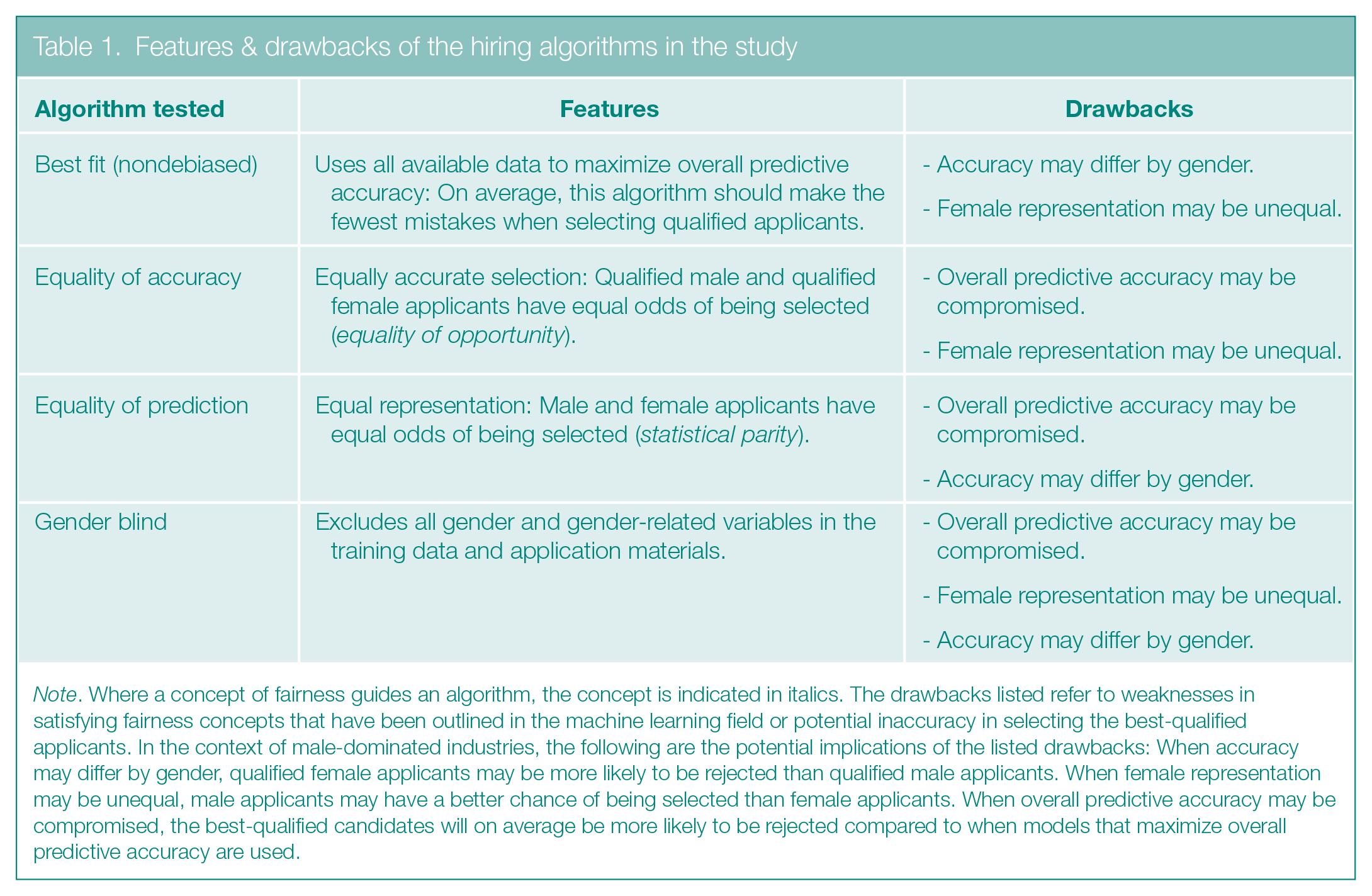

Table 1. Features & drawbacks of the hiring algorithms in the study

Note. Where a concept of fairness guides an algorithm, the concept is indicated in italics. The drawbacks listed refer to weaknesses in satisfying fairness concepts that have been outlined in the machine learning field or potential inaccuracy in selecting the best-qualified applicants. In the context of male-dominated industries, the following are the potential implications of the listed drawbacks: When accuracy may differ by gender, qualified female applicants may be more likely to be rejected than qualified male applicants. When female representation may be unequal, male applicants may have a better chance of being selected than female applicants. When overall predictive accuracy may be compromised, the best-qualified candidates will on average be more likely to be rejected compared to when models that maximize overall predictive accuracy are used.

The study took place on March 22 and 23, 2024, using a U.S.-based sample of working adults recruited through Prolific. The sample was gender balanced, consisting of 372 men and 364 women. Participants were fluent in English and representative of the nation’s ethnic composition. The average age was 38.5 years; 26.1% of participants identified as not White only; 60.7% held a degree; and the average work experience was 16.8 years. Before the participants were told anything about the tasks to be performed, they provided details about themselves, including educational history and work experience. I later used this information to produce a basic resume for each person. I also had participants list their three greatest strengths and skills and provide a statement on their mathematical reasoning skills. The resume and the statement on their skills later constituted the data fed into the hiring algorithms.

Next, participants carried out the main tasks (applying for jobs), which allowed me to study how different algorithms affected whether they chose to apply for a competitive job. Participants carried out up to five versions of a job application task, which varied in the information provided to them about the hiring algorithm being applied for a competitive job. In all versions of the job application task, participants were asked to apply for one of two job options as follows:

These options allowed me to simulate the kind of choices that men and women face when applying for traditionally high-paying positions in male-dominated environments where quantitative skills are often required, such as in the financial or technology realms. This job-choice protocol was modeled on a mock-labor market experiment I had previously conducted. 4 That study examined applicants’ preferences for four screening approaches: standard hiring algorithms, algorithms that were debiased, review by human assessors, or review by human assessors who were trained on reducing bias in making hiring decisions. The approach to debiasing the debiased algorithm was not specified.

In the current study, I wanted to answer two questions: (a) how biased algorithms could affect applicant behaviors—that is, which job they applied for—and (b) how three debiasing algorithms could change these behaviors. To answer the first question, I compared participants’ application decisions when they were not made aware of possible gender biases in a hiring algorithm with the decision taken when participants were made aware that an algorithm could be biased. To answer the second question, I compared the decisions job applicants made when they knew they would be evaluated by a standard (potentially biased) algorithm to decisions they made when they were informed an algorithm had been debiased in some way.

To implement the job application tasks, I randomly divided participants into two groups: control + treatments and treatments only. The control + treatments group had 369 participants, of whom 187 were men and 182 were women. The treatments-only group had 367 participants, of whom 185 were men and 182 were women.

Both groups ultimately received information about possible gender bias in hiring algorithms, specifically about the possibility of gender inequality in accuracy (algorithms may be more accurate for men than for women) and gender inequality in predictions (algorithms may choose more men than women) and about how these biases could arise if training data consisted of more men than women. The only difference between the control + treatments group and the treatments-only group was that, to help me answer the first question above, the control + treatments group alone was first asked to choose between Job A and Job B without being informed about any gender biases in the algorithm. This control condition allowed me to study applicant behaviors when gender biases were not salient. After that, the rest of the experimental procedure was identical for both the control + treatments group and the treatments-only group.

Once both groups received information about gender bias and passed comprehension checks, they completed four job application tasks that varied in the algorithms used to evaluate applicants for Job A. All participants in these treatment conditions made the choice between Jobs A and B for all four algorithms, which were presented one at a time in random order. For each of the job application tasks, participants were given a description of that particular algorithm’s features and tradeoffs. (See Part 2 of the Supplemental Material for the exact wording.)

The algorithms I used are described in Table 1, which also lists the drawbacks of each in the context of male-dominated fields where more men than women typically apply and are hired for competitive jobs. In short, the best-fit algorithm maximized overall accuracy without doing any debiasing. The equality of accuracy algorithm was designed to ensure that predictions about who was qualified would be equally accurate for men and women (in other words, that qualified men and qualified women had equal odds of being selected). The equality of prediction algorithm ensured that men and women had equal odds of being selected (in this case, 20% of men and 20% of women). The gender-blind algorithm removed all gender and gender-related information from training data and application materials.
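The difference between the best-fit and equality of prediction selection rules can be illustrated with a simplified Python sketch. This is not the study's implementation: it assumes each applicant already has a predicted score, uses a synthetic pool of 60 men and 40 women in which men's scores run higher (standing in for biased training data), and treats equality of prediction as picking the top 20% within each gender.

```python
from collections import Counter


def select_best_fit(applicants, rate):
    """Pick the top `rate` share of applicants by score, ignoring gender."""
    k = round(len(applicants) * rate)
    ranked = sorted(applicants, key=lambda a: a["score"], reverse=True)
    return ranked[:k]


def select_equal_prediction(applicants, rate):
    """Pick the top `rate` share within each gender separately."""
    chosen = []
    for group in sorted({a["gender"] for a in applicants}):
        members = [a for a in applicants if a["gender"] == group]
        chosen.extend(select_best_fit(members, rate))
    return chosen


def count_by_gender(selected):
    return dict(Counter(a["gender"] for a in selected))


# Synthetic, hypothetical scores: men 100 down to 41, women 90 down to 51.
men = [{"gender": "M", "score": 100 - i} for i in range(60)]
women = [{"gender": "F", "score": 90 - j} for j in range(40)]
pool = men + women

best_fit_counts = count_by_gender(select_best_fit(pool, 0.20))
equal_prediction_counts = count_by_gender(select_equal_prediction(pool, 0.20))
```

In this synthetic pool, the best-fit rule fills the 20 slots with 15 men and 5 women, whereas the per-gender rule selects 12 men and 8 women, that is, 20% of each group.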

In the context of most male-dominated industries, the equality of prediction algorithm should select the highest number of women, and the best-fit algorithm should select the fewest women. Both the equality of accuracy and gender-blind algorithms should select more women than the best-fit algorithm would, but which of these would select more women would depend on the specific context.

This study design enabled me to simulate the differences in the percentages of male and female applicants who would, on the basis of understanding each algorithm, apply for traditionally high-paying positions in male-dominated environments.

After completing the job application tasks, participants were asked their beliefs about their chances of success for the competitive job. Participants then completed a quantitative reasoning test to directly assess their mathematical ability. I used the test results for analytic purposes to identify the applicants who were most qualified for the competitive job. The results were not provided to the hiring algorithms, which only analyzed each participant’s resume and statements. The test, in common use by employers, included a set of multiple-choice questions, such as one asking what the next number would be in the series 30|28|25|21|16|. (The answer is 10.) Participants answered as many of these questions as possible in 4 minutes and were paid $0.20 per correct answer for this task. Participants were then asked their beliefs about their performance in this task.

Finally, participants completed survey questions about their attitudes and experiences, such as their attitude to risk taking, perception of fairness, and preference for each evaluation method. During the course of the experiment, I also included three attention-check questions. And, after each job application was made, I asked participants to briefly explain their reasons for choosing Job A or Job B, to ensure they had thought about the features of the algorithms being used before making a selection.

Results

Effects of Algorithmic Gender Bias

To gain a baseline sense of how consideration of gender biases in hiring affects men’s and women’s decisions to apply for competitive jobs, I compared the control + treatments group’s initial decision to apply for Job A or Job B (before participants were informed about biases and debiasing methods) with the treatments-only group’s decisions when participants knew the best-fit algorithm was being used. (As a reminder, the best-fit algorithm was not debiased and focused on overall accuracy according to existing data. Its use thus resembled standard practice, and it could, in theory, select no women.)

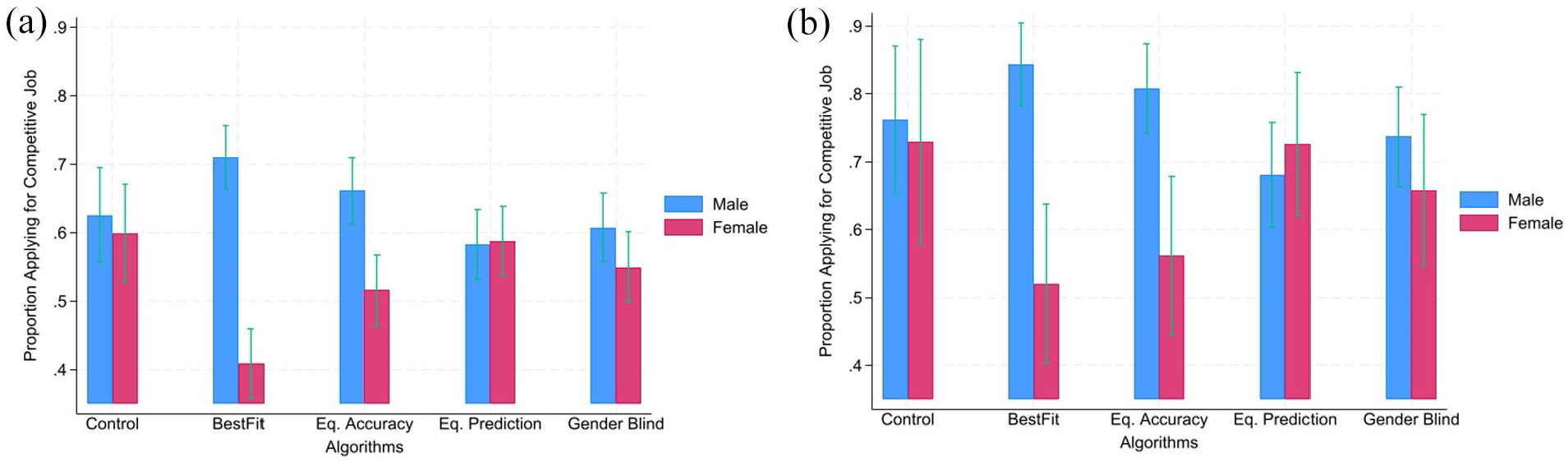

When the 369 people in the control + treatments group made their initial application decision before any discussion of gender biases, 62.6% of male participants and 59.9% of female participants applied for the competitive job. This gender difference was not statistically significant (p = .599). (See Note A for a discussion of the statistical terms used in this article, and see Note B for a comment on this finding.) In contrast, when the 367 people in the treatments-only group knew they were being evaluated by the best-fit algorithm, 73.5% of males applied for the competitive job compared to just 41.8% of females. This gender difference was statistically significant (p < .001). In other words, the findings indicated that men were 10.9 percentage points more likely to apply for the competitive job when aware of an algorithmic bias that favored them (p = .024), whereas women were 18.1 percentage points less likely to apply when aware of algorithmic bias against them (p < .001). This finding suggests that awareness of existing algorithmic gender bias leads to an increase in men competing and a much bigger decrease in women competing than when gender bias is not salient.
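The percentage-point differences quoted above follow directly from the reported application rates, as this short Python sketch shows. The variable and function names are illustrative, and the gender-gap figures are simple subtractions from the reported percentages rather than numbers stated in the article.

```python
# Reported shares (in %) applying for the competitive job.
applied_pct = {
    "control": {"men": 62.6, "women": 59.9},   # bias not mentioned
    "best_fit": {"men": 73.5, "women": 41.8},  # aware of possible bias
}


def percentage_point_change(before, after):
    """Difference between two percentages, rounded to one decimal place."""
    return round(after - before, 1)


men_change = percentage_point_change(
    applied_pct["control"]["men"], applied_pct["best_fit"]["men"]
)
women_change = percentage_point_change(
    applied_pct["control"]["women"], applied_pct["best_fit"]["women"]
)

# Gap between men and women within each condition (derived, not reported).
gap_control = percentage_point_change(
    applied_pct["control"]["women"], applied_pct["control"]["men"]
)
gap_best_fit = percentage_point_change(
    applied_pct["best_fit"]["women"], applied_pct["best_fit"]["men"]
)
```

The sketch reproduces the 10.9-point rise for men and the 18.1-point drop for women, and it shows the gender gap widening from 2.7 to 31.7 percentage points between the two conditions.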

Similar results emerged when the analyses focused on the highest skilled participants, namely those whom employers want to attract. I defined the highest skilled participants as those scoring in the top quartile (scores of 8 or higher) on the quantitative reasoning test. These participants totaled 141 men and 73 women. When deciding between Job A and Job B before any discussion of bias, similar percentages of the top male and top female participants in the control + treatments group (76.2% and 73.0%, respectively) applied for the competitive job (p = .723). In contrast, these numbers were 85.9% and 55.6%, respectively, for the best-fit algorithm in the treatments-only group (p < .001).

These results, which are summarized in the control and best-fit bars in Figures 2a and 2b, were confirmed by more formal regression analyses and are robust to the inclusion of such independent variables as age and education level (see Table S2 in Part 1 of the Supplemental Material).

Figure 2. Summary statistics for job application decisions

Responses to Different Debiasing Methods

I assessed how different debiasing methods affect the gender diversity and quality of applications submitted for the competitive job by using a within-subject analysis, specifically, by comparing how each of the 736 participants (that is, those in the control + treatments group plus those in the treatments-only group) responded to the four different hiring algorithms. (Note that a comparison of the job choices made by the control + treatments group and the treatments-only group revealed that the selections by the two groups were statistically indistinguishable; hence, I combined the two groups for analysis.) Figures 2a and 2b show summary statistics of the results for all four algorithms.

Overall, the likelihood of men or women applying for a competitive job largely corresponded with how likely it was that an algorithm would select someone of their gender in general. Men were most likely to apply when males were most advantaged (when the nondebiased best-fit algorithm was used) and least likely to apply when they had the least advantage (when the equality of prediction algorithm was used). Women were most likely to apply when females were least disadvantaged (when the equality of prediction algorithm was used), and least likely to apply when females were most disadvantaged (when the best-fit algorithm was used). The same pattern appeared when I focused on the participants who were the best qualified according to their scores on the quantitative reasoning test (see Figure 2b). More generally, regression analyses showed that women were significantly more likely to compete if algorithms were debiased by any means than when the best-fit algorithm was used. The pattern largely held true for the top-quartile participants as well.

The regression analysis comparing the debiased algorithms showed that men were significantly less likely to compete when algorithms were debiased with a focus on gender blindness or prioritizing equality of predictions (equal odds for men and women in general) than when an algorithm sought equality of accuracy (equal percentages of qualified men and women). In contrast, women were significantly more likely to compete when an algorithm prioritized equality of predictions or gender blindness over equality of accuracy.

As for the best-qualified participants, the regression analyses suggested that men may be less likely to apply (p = .074), and women may be more likely to apply (p = .093), when an algorithm prioritizes equality of predictions over gender blinding. Relative to the best-fit algorithm, the algorithm that prioritized equality of accuracy had only a limited effect on decisions to compete among the best male and female participants. The results remained largely unchanged when I controlled for individual characteristics. (See Table S3 in Part 1 of the Supplemental Material for the full regression results.)

Notably, none of the algorithms in this study changed the number of top-quartile participants who applied for the competitive job. This finding implies that debiasing of algorithms should not reduce the quality of applicants. The only thing likely to vary by algorithm is the gender composition of the top applicants.

Overall, the results showed that being aware of gender biases significantly decreased the likelihood of women applying for competitive jobs. Women were more likely to compete when algorithms were debiased than when they were not, and algorithms that ensured an equal chance of success for men and women led the most women to apply for competitive jobs, indicating that such algorithms could close the gender gap in applications. These results also held when only the most highly skilled applicants were considered. More specifically, although awareness of bias dramatically decreased the likelihood of women applying for the competitive job, this gap was eliminated or at least diminished when either the equality of predictions or a gender-blind algorithm was used. And, crucially for employers, it is worth repeating that using debiased algorithms did not change the total number of qualified participants in this study who applied for the competitive job.

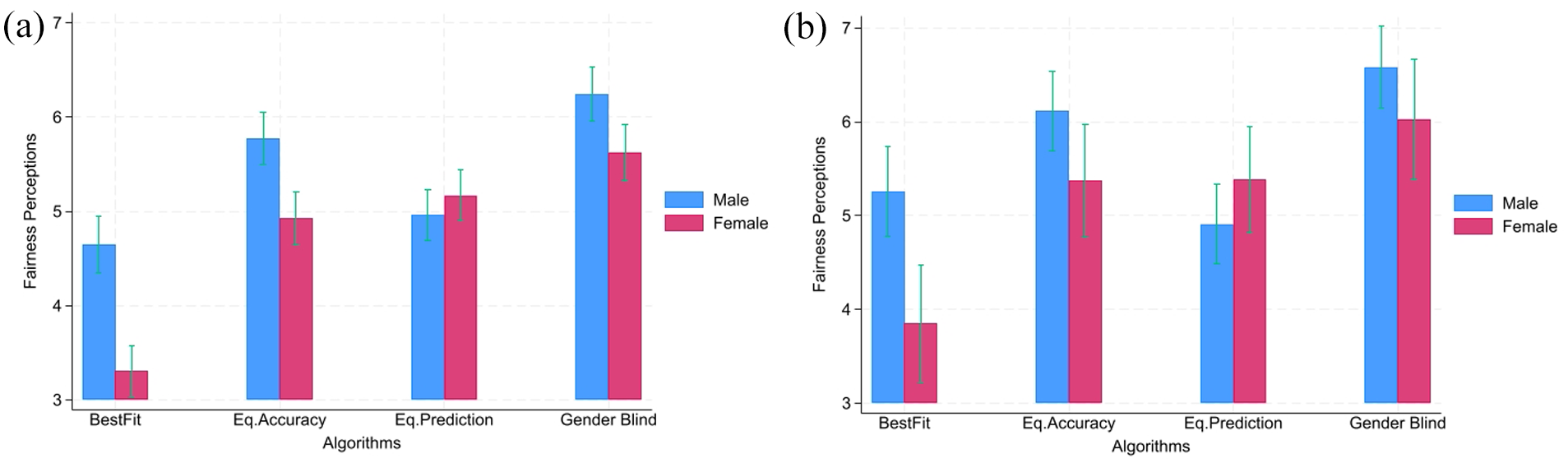

Perceptions of Fairness in Debiasing Methods

After completing the application tasks, participants rated how fair they thought each of the four hiring algorithms was on a scale of 0 to 10, with 10 being the fairest. Figure 3a provides summary statistics of fairness perceptions for the full sample, and Figure 3b summarizes the perceptions of the top-quartile participants. Fair decision-making processes, or procedural fairness, are an important aspect of inclusion in the workplace.17–20 Past research has shown that people consider gender-blind algorithms to be the fairest. 15

Figure 3. Summary statistics of fairness ratings

The men and women in this study also considered the gender-blind algorithm the fairest and the nondebiased (best-fit) algorithm the least fair. Attitude does not necessarily translate into behavior, however, as is evinced in the finding that men were significantly more likely to apply for a competitive job when the algorithm was not debiased (was least fair) than when it was gender blind. It appears that people make decisions based on which algorithm advantages their gender the most.

Nevertheless, perceptions of fairness did play some role in the decision to compete. When I included the participants’ perceptions of algorithmic fairness in the main regression analysis, I found that men and women were both significantly more likely to apply for the competitive job when they considered an algorithm being used as fairer than another algorithm, although women were more influenced by fairness perceptions than men were. The same pattern held when I focused only on the top-quartile participants and when I included individual characteristics of the participants in the analyses. (See Table S4 in Part 1 of the Supplemental Material for the full regression results.)

Discussion

Hiring algorithms are widely used today and, if trained on past data in traditionally male-dominated fields, are likely to be inherently biased against women. This study provides multiple findings indicative of how applicants’ knowledge of the kind of algorithm used affects the numbers of women and men who apply for competitive jobs in or enter such fields.

First, the results show that gender bias against women in hiring algorithms can be detrimental to efforts to recruit more women in traditionally high-paid, male-dominated environments. Second, debiasing such algorithms can vastly increase the number of women competing for a high-paid position in environments where women are disadvantaged. Third, focusing on perceptions of algorithmic fairness, as past studies of algorithmic fairness have tended to do,15, 21–25 does not necessarily reveal how job hunters will act in response to the algorithms. Although the perception of fairness is an important consideration when deciding whether to compete, especially for women, I found that it does not always translate to behavior—that is, to applying for the job that uses the fairest hiring algorithm. What ultimately matters, it seems, is how the different fairness criteria used in algorithms affect the applicants’ chances of being hired.

This study contributes to both the literature on how the use of AI affects gender diversity and the literature on fairness in AI. Past work on debiasing algorithms has shown that knowing algorithms are debiased can increase women’s willingness to apply for competitive jobs 3 and that job hunters may prefer algorithmic hiring to human hiring if they know the algorithm is gender blind. 26 However, research had not compared the effects of different debiasing algorithms on the jobs people ultimately apply to. As for adding to the literature on AI fairness, the finding that perceptions of fairness do not always lead to favoring the fairest algorithm when people apply for a job highlights the importance of examining behaviors in addition to attitudes when studying the effects of algorithmic fairness.

Several of these findings have important policy implications. For one, when possible gender bias in algorithms was not known, men and women in this study were equally willing to compete for a competitive job. However, in the real world, gender biases in the standard models used by hiring algorithms are practically inevitable when the models are trained on data in male-dominated industries. Hence, debiasing of hiring algorithms is crucial to both merit-based hiring and fairness, ensuring that hiring practices do not unfairly deter qualified women.

Also relevant is the good news that the quality of the applicants did not differ depending on the fairness concept prioritized in an algorithm. All the algorithms tested attracted almost the same number of the most highly qualified applicants. The only difference was in the applicants’ gender. Although nondebiased best-fit algorithms may select the best-qualified applicants overall (at least according to historical data), they favor men and can thus be very harmful to diversity. In this study, the algorithm that ensured gender equality of predictions tended to attract the most female candidates of all algorithms tested. The gender-blind algorithm did not attract as many women, but it still attracted significantly more female candidates than the nondebiased algorithm did and was perceived by both genders to be the fairest algorithm. Although equality of accuracy is an important fairness concept in machine learning, these findings suggest that it is neither perceived as the fairest nor able to attract the most female applicants.
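To make the distinction between these fairness concepts concrete, the following minimal Python sketch computes, for each gender, the share of applicants selected (the quantity equalized under equality of predictions) and the share of correct decisions (the quantity equalized under equality of accuracy). It is an illustration only and not the implementation used in this study: the function name group_rates, the record layout, and the toy data are all hypothetical assumptions introduced for this example.

```python
from collections import defaultdict


def group_rates(records):
    """Return the selection rate and accuracy for each gender group.

    `records` is an iterable of (gender, qualified, selected) tuples.
    This layout is a hypothetical one chosen for illustration only.
    """
    counts = defaultdict(lambda: {"n": 0, "selected": 0, "correct": 0})
    for gender, qualified, selected in records:
        c = counts[gender]
        c["n"] += 1
        c["selected"] += int(selected)
        # A decision counts as correct when it matches the qualification label.
        c["correct"] += int(selected == qualified)
    return {
        g: {
            "selection_rate": c["selected"] / c["n"],
            "accuracy": c["correct"] / c["n"],
        }
        for g, c in counts.items()
    }


# Hypothetical toy data, not taken from the study.
toy = [
    ("F", True, True), ("F", True, False), ("F", False, False), ("F", False, False),
    ("M", True, True), ("M", True, True), ("M", False, True), ("M", False, False),
]

rates = group_rates(toy)
# Equality of predictions compares selection rates across groups.
selection_gap = abs(rates["M"]["selection_rate"] - rates["F"]["selection_rate"])
# Equality of accuracy compares the share of correct decisions across groups.
accuracy_gap = abs(rates["M"]["accuracy"] - rates["F"]["accuracy"])
print(rates, selection_gap, accuracy_gap)
```

In this toy data set, the decisions are equally accurate for men and women, yet men are selected three times as often. This shows how an algorithm can satisfy equality of accuracy while still giving the two groups unequal chances of selection.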

For policymakers and employers striving to close gender gaps in male-dominated industries such as technology and finance, such results suggest the following recommendations. (Also see the sidebar Key Points for Employers & Policymakers.) Those who wish to maximize the number of women applying for competitive jobs should employ an algorithm that ensures equal chances of selection for men and women (that is, an equality of prediction algorithm). In this study, this algorithm was also most effective at maximizing the number of female applicants who are qualified for a competitive job. On the other hand, if the objective is to choose an algorithm that both men and women consider fairest—as is important for building an inclusive culture that truly values diversity—then a gender-blind algorithm (which may be almost as effective at attracting female applicants) should be selected.

Key Points for Employers & Policymakers

Hiring algorithms are widely used. In male-dominated industries, standard algorithms are likely to be erroneously biased against women. In consequence, employers could miss out on female applicants who would suit their needs best, thus undermining the goals of fair hiring and selection based on merit.

Using debiased hiring algorithms can help uphold fairness and meritocracy in the job application process and help employers hire the best applicants regardless of gender. Their use can also potentially help organizations avoid undoing decades of diversity progress and sustain diversity goals even in the absence of formal DEI programs.

If focused on diversity alone, employers could opt for a debiasing algorithm that selects an equal proportion of male and female applicants.

If focused on diversity and inclusion (employees’ sense that everyone is treated equally and fairly), employers could opt for a debiasing algorithm that is gender blind.

Although diversity and inclusion efforts remain important around the world, it is impossible to ignore that, as this article went to press in 2025, many such efforts in the United States have been eliminated. Nonetheless, my findings remain relevant in the United States as well as elsewhere. Applying inclusive hiring technologies can still prevent disadvantaged yet qualified individuals from falling through the cracks and thus ultimately help employers attract the best people for the jobs at hand. Moreover, using effective debiased technologies that are favored by prospective employees can potentially help organizations achieve and maintain the goals of DEI even in the absence of formal DEI programs.

Supplemental Material

Supplemental material, sj-pdf-1-bsx-10.1177_23794607251353585 for Fair AI in hiring: Experimental evidence on how biased hiring algorithms and different debiasing methods affect the quality and diversity of applicants by Edwin Ip in Behavioral Science & Policy

Supplemental material, sj-pdf-2-bsx-10.1177_23794607251353585 for Fair AI in hiring: Experimental evidence on how biased hiring algorithms and different debiasing methods affect the quality and diversity of applicants by Edwin Ip in Behavioral Science & Policy

Footnotes

Author note

The author acknowledges valuable feedback from Miguel Fonseca, Tamaryn Meek, Joseph Vecci, and three anonymous reviewers.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by a grant from the Google Award for Inclusion Research Program.
