Abstract

AI has the potential to help governments become more efficient and transparent and better able to serve their citizens. However, using AI in designing and implementing policies comes with challenges and risks. As in other arenas, it can lead to job loss and privacy infringements. But this article concerns another, more insidious risk: Using AI can amplify the tendency of people who apply the tools to rely on intuitive rather than deliberative reasoning, which, in the realm of governance, can result in flawed policies that can harm the public. This article introduces a framework, informed by cognitive science research, for helping government policymakers understand the risks of human–AI collaborations and mitigate those risks through inserting more deliberative reasoning into each aspect of these collaborations. By applying the framework to case studies, the article also demonstrates its potential for preventing disastrous outcomes from AI’s use in policy. Overall, the framework is meant to help policy professionals navigate AI’s complexities and produce effective, efficient policies that include procedural safeguards for protecting citizens from harm.


Artificial intelligence (AI) is reshaping society in profound ways.1,2 In health care, its role in diagnosis and personalized medicine is improving patient outcomes and operational efficiency.3 The financial sector benefits from AI for fraud detection, risk assessment, and trading.4 In transportation, the integration of AI in autonomous vehicles and traffic management systems aims to enhance safety, reduce congestion, and optimize logistics.5

AI is also transforming how public services are delivered and how governments interact with citizens.6 It is increasingly used to enhance public safety,7 for instance by applying predictive policing algorithms that help allocate police resources based on patterns of criminal activity.7,8 In the delivery of government benefits, chatbots and virtual assistants provide citizens with 24/7 access to information and services.9 (For more discussion of ways AI can improve governance, see the Supplemental Material.)

But AI also presents challenges and risks. Although data privacy breaches and job loss are known potential downsides, a more insidious challenge arises from human cognitive biases (such as overconfidence in one’s intuitions) that can lead to flawed decision-making when policymakers incorporate AI tools into designing and implementing policies. The flawed decisions, in turn, can result in policies that do more harm than good. Such outcomes are most likely when automated systems lack transparency, accountability, or ethical safeguards.1,10 In one example of such risks, known as the Robodebt scandal, a flawed data analysis by an automated system incorrectly accused hundreds of thousands of welfare recipients in Australia of owing money.11 The fallout was devastating. Many of the falsely accused faced financial ruin, and the stress contributed to divorce and mental health problems. Some people reportedly died by suicide after receiving the erroneous debt notices.

I discuss Robodebt and two similar cases later in this article. Although neither Robodebt nor the Post Office Horizon system (another of those cases) involved AI, the examples nonetheless lay bare the perils of unchecked automation. They also serve as cautionary tales about the complexities of integrating automation into governance.12,13 And they underscore the importance of governments having robust frameworks to ensure the responsible development and use of AI.14 Recent initiatives, such as a White House executive order on AI safety and security, the European Union AI Act, and the Safe and Responsible AI Consultation in Australia, illustrate the growing recognition of the need for government regulation.10,15

Regulation alone will not ensure the effective use of AI in policy, however, because policymakers also need to understand how to meet the required standards. Most of the available guidelines for doing so focus on institutional and technological methods, such as requiring policymakers to be transparent about how AI systems draw conclusions or requiring AI system designers to include algorithms that compensate for certain limitations of users (for example, fatigue in people charged with monitoring the systems), and they address only one part of the policymaking process.16–19 (See the Supplemental Material for more on these frameworks.) Although these frameworks offer valuable solutions to the challenges posed by incorporating AI into policymaking, they do not provide an overarching solution to the decision-making problems that can arise when policymakers interact with AI systems.

Developing such a solution requires viewing the interaction between humans and AI systems as a synergistic collaboration that, when it works well, maximizes the benefits each has to offer while reducing the risks.20,21 Humans excel at complex problem-solving, ethical reasoning, creativity, emotional intelligence, and having an intuitive grasp of intricate issues. Their limitations include a susceptibility to cognitive biases and an inability to process and analyze large volumes of data rapidly. Machines, on the other hand, excel at handling vast datasets, identifying patterns, and scaling up solutions,22 but their operations can be opaque, and software can miss ethical nuances and contextual factors. If the relationship between humans and machines is managed well, the interaction can leverage their respective strengths and compensate for their respective weaknesses.20,21 Yet, without such management, human–machine interactions can exacerbate human cognitive biases, which can lead to serious errors in decision-making.

This article offers a framework for identifying when cognitive biases are likely to come into play at various stages of human–AI collaborations on policymaking and how to prevent these interactions from leading to undesirable outcomes.

The Effects of Cognitive Biases on Human–AI Collaborations

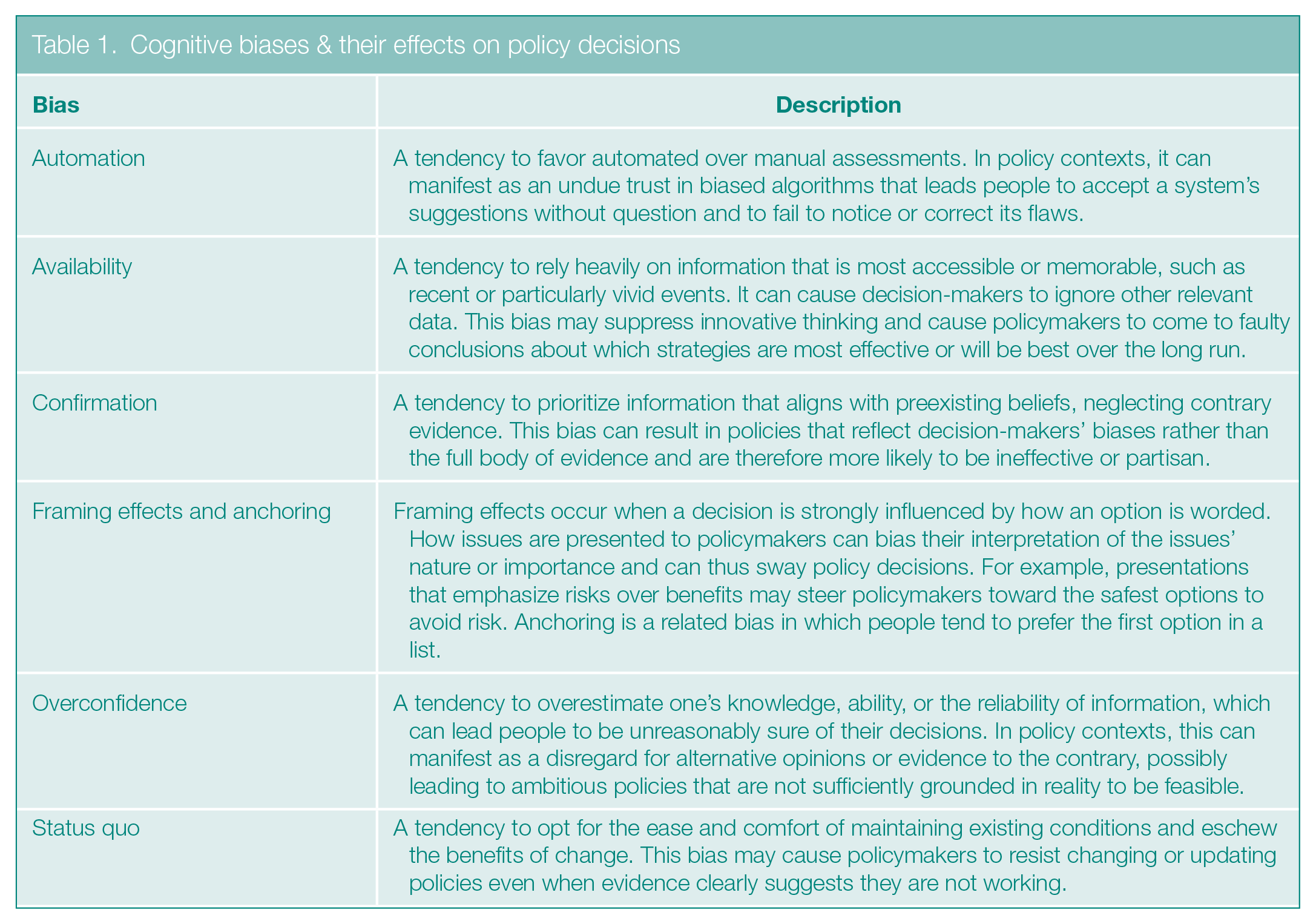

My framework builds on a large body of research that addresses the psychological processes contributing to decision-making mistakes. According to what is called the dual process model, the cognitive processes that shape decision-making involve two types of thinking: fast (Type I) and slow (Type II).23 Type I thinking is not only rapid but also instinctive and often subconscious. It is the mode people employ when making quick decisions, such as those needed for routine tasks or snap judgments. Central to Type I thinking are heuristics, mental shortcuts that expedite decision-making. (Judging someone as kind because they look like a kind person you know is a heuristic, as is having a favorable view of something because it is familiar.) While efficient, these shortcuts give rise to a number of cognitive biases, such as those described in Table 1. By contrast, Type II thinking involves a more deliberate and conscious process. People may engage in this type of thinking when faced with complex problems, such as formulating policy frameworks or evaluating regulatory approaches. Type II thinking involves careful consideration of available information, options, and the potential consequences of different choices.

Table 1. Cognitive biases & their effects on policy decisions

Unfortunately, people often rely on Type I heuristics to deal with uncertain situations or ambiguous information, scenarios that abound in the policy arena.23 As a result, policy decisions are particularly susceptible to the effects of these biases.24–26 The use of AI can then exacerbate these effects.

For instance, when AI systems are developed to carry out policies, they codify any cognitive biases that shaped the policies. And once the programs are written, they are often simply left to run, with little or no human input or oversight, perpetuating biases and the mistakes they engender. The lack of scrutiny comes in part from automation bias: People tend to favor automated over manual assessments and place undue faith in algorithms. In addition, policymakers may be motivated to trust algorithms to avoid accountability. If the computer makes a bad decision, they can blame the algorithm. In this way, AI tends to trigger some of the negative tendencies often found in large bureaucracies.

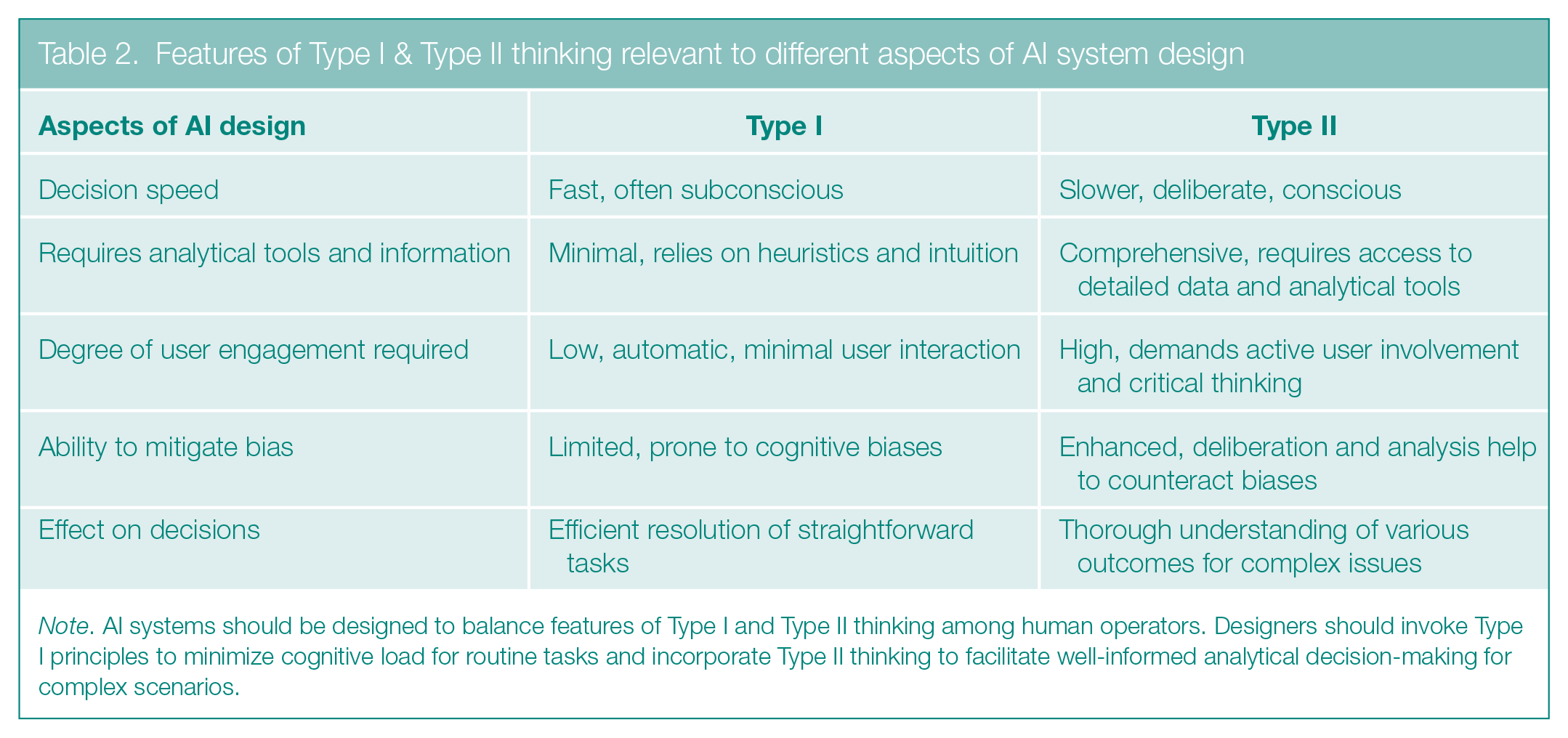

The Type I–Type II dichotomy may oversimplify the mental processes involved in decision-making. (See the Supplemental Material for a discussion of the reasons.) However, it remains a valuable tool for understanding decision-making at both the individual and organizational levels, and for identifying what can go wrong when decisions involve AI.21,27,28 See Table 2 for more details on how Type I and Type II thinking differ in ways relevant to the human–AI collaboration.

Table 2. Features of Type I & Type II thinking relevant to different aspects of AI system design

Note. AI systems should be designed to balance features of Type I and Type II thinking among human operators. Designers should invoke Type I principles to minimize cognitive load for routine tasks and incorporate Type II thinking to facilitate well-informed analytical decision-making for complex scenarios.

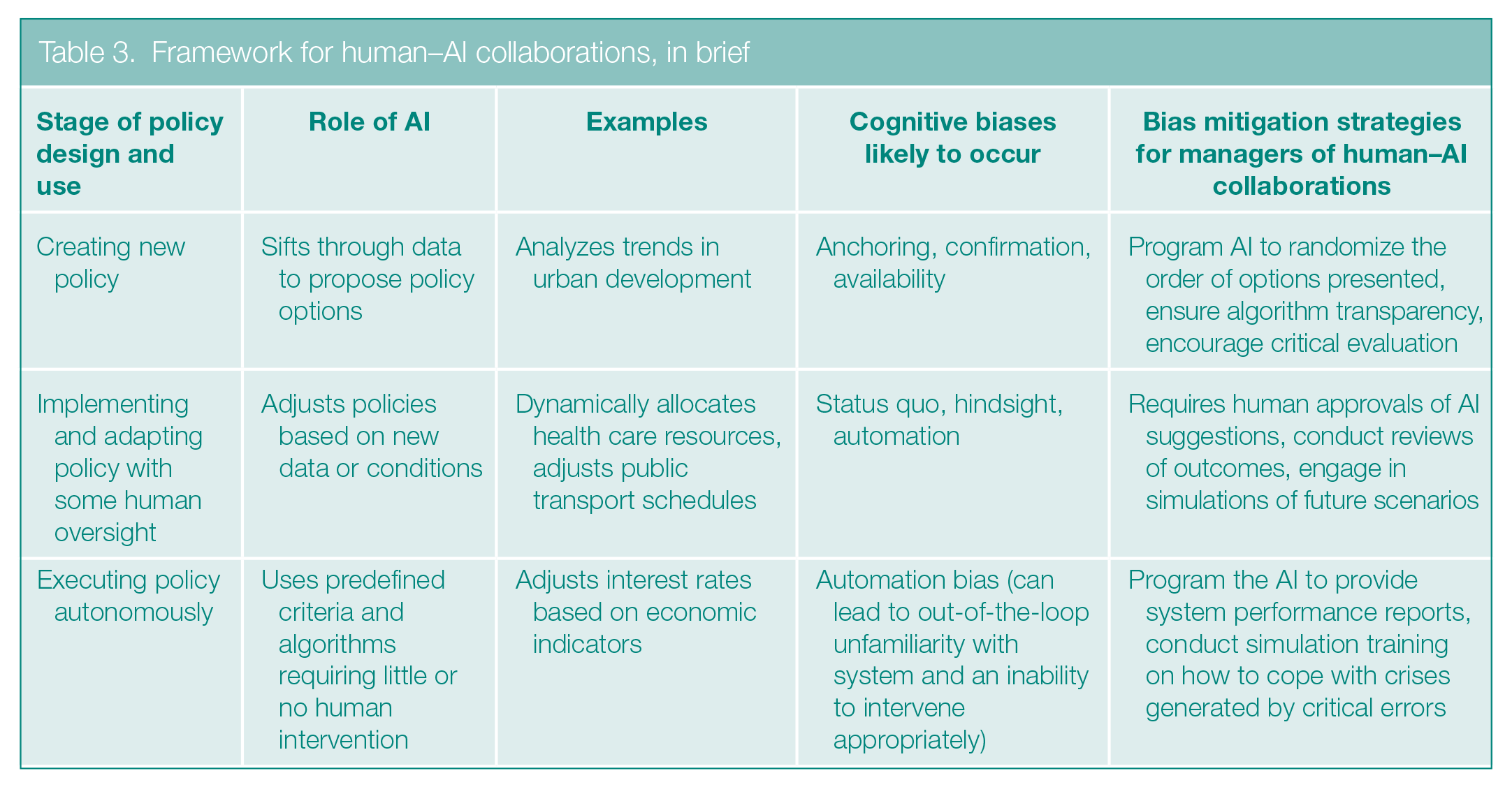

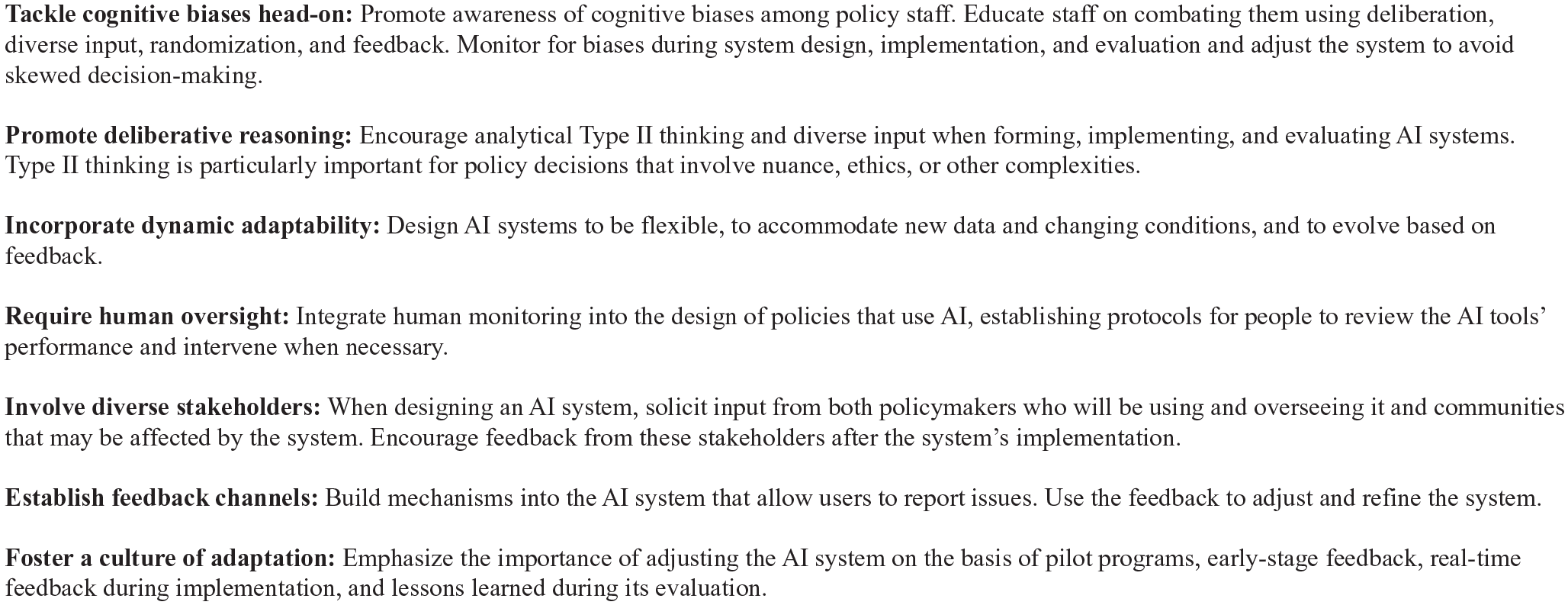

A New Framework for Human–Machine Collaboration

My proposed framework for managing the human–AI collaboration in public policymaking divides the policymaking process into three stages: policy creation; implementation and revision; and, for many policies, eventual autonomous execution. For each stage, it identifies cognitive biases that frequently come into play when AI is involved and suggests ways to reduce reliance on Type I thinking29–31 and elicit more Type II thinking (that is, a more analytical mindset) when necessary.32–34 Table 3 summarizes the framework, including stages of policy design and use, common cognitive biases, and potential mitigation approaches. The sidebar Principles Policymakers Should Follow in Applying the Framework (Figure 1) lists several principles, drawn from cognitive science research into human–machine interactions, for guiding mitigation efforts.

Table 3. Framework for human–AI collaborations, in brief

Figure 1. Principles policymakers should follow in applying the framework

In general, I argue that policymakers who interact with AI can safely make quick, instinctive decisions for routine tasks but should engage in more deliberate decision-making in complex situations.35 Unlike the original conception of these two types of thought, which centered on individuals, the framework described here acknowledges the influence that organizational dynamics and systems can have on decision-making. For instance, in the Robodebt case, the failure stemmed not only from individual decision-making but also from an organizational culture resistant to change and reliant on flawed automated systems.

Next, I describe in detail the cognitive biases and mitigation possibilities relevant to each stage of the policymaking process noted in the framework, beginning with policy creation.

How to Make Unbiased Policy

The initial steps of policy creation involve generating options for addressing a policy problem, assessing those options for feasibility and efficacy, and deciding which policies to pursue. At each step, AI can be used to sift through vast datasets to identify possibilities and predict outcomes, aiding in both idea generation and assessment. For instance, an AI system might analyze demographic trends, environmental impacts, and economic data to propose options for developing an urban area by constructing parks, commercial centers, or residential zones.

Once options are presented to policymakers, cognitive biases may influence which one is chosen. One such bias is anchoring, in which decision-makers tend to prefer the first option presented. Another is confirmation bias, in which people prefer options that align with their preexisting beliefs. Decision-makers may also show availability bias: the undue influence of recent events or of information that is easy to obtain.

By being aware of these biases, AI system designers and policymakers can counter them before they influence policy. To counteract anchoring, designers can make the AI tools part of the solution by programming them to present options in random order, so that the order differs for each decision-maker. Mitigating the other forms of bias requires increasing Type II deliberation. One way designers can encourage this type of thinking is by programming the AI to be transparent, spelling out how it generated the options and its level of confidence in them. Decision-makers can then consider whether the reasoning was sound and backed by relevant data rather than relying on their gut, a type of thinking that is prone to confirmation and availability biases. Thoughtful simulations of each policy’s rollout and consideration of what could go wrong may also go a long way toward combating the effects of bias.
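The order-randomization and transparency measures described above could look something like the following minimal Python sketch. It assumes a hypothetical `PolicyOption` record that carries the system's rationale and a confidence score; the names, the per-person seeding scheme, and the rationales and confidence values attached to the urban-development options are illustrative placeholders, not features of any particular AI tool.

```python
import random
from dataclasses import dataclass


@dataclass
class PolicyOption:
    # Hypothetical record; field names are illustrative assumptions.
    name: str
    rationale: str     # how the system generated the option (transparency)
    confidence: float  # system's stated confidence, 0.0 to 1.0


def order_for(decision_maker_id: str, options: list[PolicyOption],
              session: str = "session-1") -> list[PolicyOption]:
    """Return the options in a per-decision-maker random order.

    Seeding the generator with the session and the decision-maker's ID keeps
    each person's order reproducible for later audit, while different people
    will usually see the options in different orders.
    """
    rng = random.Random(f"{session}:{decision_maker_id}")
    shuffled = list(options)  # copy, so the shared list is never reordered
    rng.shuffle(shuffled)
    return shuffled


def render(options: list[PolicyOption]) -> str:
    # Show the reasoning and confidence next to every option, not just the first.
    return "\n".join(
        f"{i}. {o.name} (confidence {o.confidence:.0%}): {o.rationale}"
        for i, o in enumerate(options, start=1)
    )


# Placeholder options echoing the urban-development example; values are made up.
options = [
    PolicyOption("Parks", "Low green-space coverage nearby", 0.62),
    PolicyOption("Commercial center", "Projected employment growth", 0.55),
    PolicyOption("Residential zone", "Rising housing demand", 0.71),
]
print(render(order_for("analyst-A", options)))
```

Seeding by decision-maker keeps each person's ordering reproducible for audits while still varying it across people; drawing a fresh random order on every viewing would be an equally reasonable design choice.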

Policymaking leaders should also create a decision-making environment that encourages disagreement and perspectives that deviate from the norm. In such a setting, people are more likely to question assumptions or beliefs and to bring nonobvious information to bear on the issue. AI can be an ally in this process if it is built to generate nonintuitive options or ideas that challenge institutional norms, which can shock users out of their complacency. It is also worthwhile for leaders to remind decision-makers that real people will be affected by these policies, as such reminders should encourage them to consider what might go wrong and take steps to prevent harmful outcomes.

How to Implement AI-Informed Policy

Once a policy has been adopted, the focus shifts to implementing it and adapting it as conditions change or new information comes to light. AI systems are ideally suited to monitoring policy outcomes and providing feedback to policymakers. By suggesting ways to adjust policies in light of this new data, AI can help ensure that policies remain effective and aligned with objectives. For instance, AI can assist in dynamically allocating health care resources during a pandemic based on real-time data on case numbers and hospital capacity. It might similarly adjust public transport schedules to match fluctuating passenger demand.

Systems vary in the degree of human oversight they require, ranging from needing explicit approval for every change to implementing updates unless a human actively vetoes them. In this second stage, however, humans continue to monitor the systems to some degree.

The actions the system takes may both reflect and reinforce cognitive biases in human operators. Norms in large bureaucracies tend to be conservative, and policymakers tend to show status quo bias, in which the ease and comfort of maintaining existing conditions outweigh appreciation of the benefits of change. As a result, policymakers often build or choose AI systems that tend to reinforce the status quo. Once the AI system draws its conservative conclusions, automation bias comes into play, leading policymakers to accept the system’s suggestions without question. If these suggestions also support preexisting beliefs, confirmation bias may further bolster a decision-maker’s confidence in the algorithm.36–38 This positive feedback loop may cause policymakers to ignore warning signs that a policy may need an update or, in the worst cases, serious repair.

Inserting Type II thinking into this cycle can prevent or mitigate the potential fallout. For example, requiring active confirmations, in which a decision-maker needs to approve the system’s suggestions for implementing a policy, is likely to motivate careful consideration of an AI’s output, because the decision-maker can be held accountable for its effects. Structured postimplementation reviews, in which policymakers reflect on the results of a completed operation, can also lead to useful reflection and lessons to be applied to current and future policies. At this stage, too, considering future scenarios downstream from a policy’s implementation can help policymakers anticipate outcomes and reconsider their assumptions, fostering a more dynamic and responsive approach.
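To make the difference between veto-based oversight and active confirmation concrete, here is a hypothetical Python sketch. The `Oversight` modes and the rule that an approval needs a named reviewer and a written justification are assumptions made for illustration; they show how active confirmation leaves an accountable record, whereas a veto default lets changes through when no one looks.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Oversight(Enum):
    EXPLICIT_APPROVAL = "explicit approval"  # nothing changes until a person approves
    VETO = "veto"                            # changes go ahead unless a person objects


@dataclass
class Decision:
    suggestion: str
    applied: bool
    reviewer: Optional[str]
    justification: Optional[str]


def decide(suggestion: str, mode: Oversight, reviewer: Optional[str] = None,
           approved: Optional[bool] = None,
           justification: Optional[str] = None) -> Decision:
    if mode is Oversight.EXPLICIT_APPROVAL:
        # Active confirmation: the change applies only with a named reviewer,
        # an explicit approval, and a written reason, so someone is accountable.
        ok = bool(approved) and bool(reviewer) and bool(justification)
        return Decision(suggestion, ok, reviewer, justification)
    # Veto mode: silence counts as consent; only an explicit "no" blocks it.
    return Decision(suggestion, approved is not False, reviewer, justification)


# Hypothetical transport-schedule adjustment suggested by the system.
silent = decide("Add two evening buses on route 12", Oversight.VETO)
print(silent.applied, silent.reviewer)  # True None
```

In veto mode the change goes through with no reviewer on record; in explicit-approval mode the same call, without a reviewer and a reason, leaves the policy unchanged.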

Adding Human Monitoring to Fully Automated Systems

In the most advanced applications of AI in policymaking, systems eventually enter a third stage in which they operate autonomously. In this stage, they execute policies based on predefined criteria and algorithms with minimal to no human intervention. This level of automation is often seen in the financial sector, in which algorithms can adjust interest rates in response to economic indicators without direct human oversight. However, putting total trust in the AI (automation bias) and therefore leaving policymakers completely out of the loop may mean that no one is familiar with the automated decision-making process, something called out-of-the-loop unfamiliarity. As a result, when someone needs to intervene, say, in a crisis, the response can be slow or inappropriate.

It is important for managers overseeing human–AI collaboration to ensure that autonomous systems provide policymakers with regular, detailed reports on the systems’ performance and decision-making logic to engender the thoughtfulness characteristic of Type II thinking. Another way to ensure that human operators maintain the ability to intervene when necessary is to train them on simulated crises in which a system makes a critical error. Emphasizing Type II thinking in this context ensures that policymakers can critically evaluate the system’s operations and outcomes and therefore respond adeptly to unforeseen challenges, safeguarding the public interest.
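One way to operationalize regular reports on a system's performance and decision logic, together with simulated crises, is a decision log that is summarized periodically. The Python sketch below is hypothetical: the field names, the outcome and rule labels (echoing the interest-rate example above), and the idea of counting how many deliberately planted drill errors operators caught are illustrative assumptions.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class LoggedDecision:
    outcome: str                     # what the system did
    rule: str                        # which predefined criterion triggered it
    is_drill: bool = False           # a deliberately planted error, used for training
    caught_by_operator: bool = False


def periodic_report(log: list[LoggedDecision]) -> dict:
    real = [d for d in log if not d.is_drill]
    drills = [d for d in log if d.is_drill]
    catch_rate: Optional[float] = (
        sum(d.caught_by_operator for d in drills) / len(drills) if drills else None
    )
    return {
        "decisions": len(real),
        "by_outcome": dict(Counter(d.outcome for d in real)),
        "by_rule": dict(Counter(d.rule for d in real)),  # exposes the decision logic
        "drills": len(drills),
        "drill_catch_rate": catch_rate,
    }


# Illustrative log entries only.
log = [
    LoggedDecision("rate_raised", "inflation_above_target"),
    LoggedDecision("rate_held", "inflation_in_band"),
    LoggedDecision("rate_held", "inflation_in_band"),
    LoggedDecision("rate_cut", "planted_error", is_drill=True, caught_by_operator=True),
    LoggedDecision("rate_raised", "planted_error", is_drill=True),
]
print(periodic_report(log))
```

A falling drill catch rate could be one early signal of out-of-the-loop unfamiliarity, although any such metric would need validation in practice.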

Applying the Framework to Robodebt

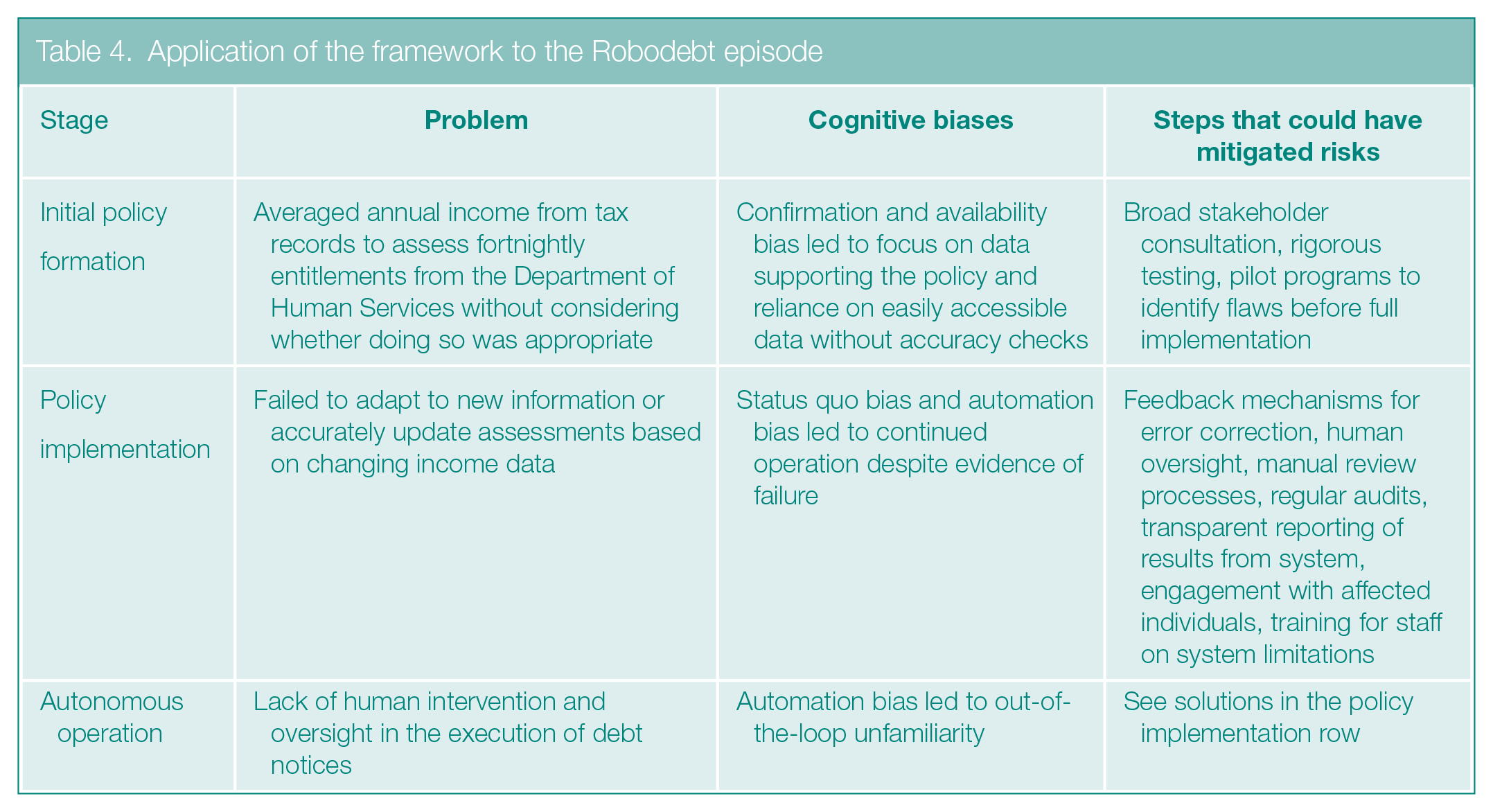

To illustrate how policy professionals can use these techniques, this section applies them to the Robodebt scandal mentioned earlier.11 In 2016, the Australian government embarked on an ambitious project to recover welfare overpayments using an automated system known informally as Robodebt.11 What was intended to be a showcase of precision and efficiency ultimately unraveled into a national scandal. The system, using a flawed analysis of income data, erroneously accused more than 470,000 welfare recipients of owing the government substantial sums of money. The fallout was immediate and, as previously mentioned, severe, with affected individuals facing financial ruin, mental anguish, and public stigma. After the program was shut down in 2020, the government ended up paying A$1.8 billion to the people harmed. Next, I trace the missteps that led to the system’s failure, identify the cognitive biases at play, and suggest strategies that could have prevented the negative outcomes. (See Table 4 for a summary.)

Table 4. Application of the framework to the Robodebt episode

Robodebt’s Overlooked Design Flaws

Policymakers conceived of Robodebt while developing a plan for identifying and recovering welfare overpayments they believed were occurring. Robodebt was designed to automate this process with little human input, supposedly saving time and resources. It was to match income reports from the Australian tax office with records from Centrelink, the agency that delivers social security payments, to identify discrepancies.39 Specifically, Robodebt was designed to average annual income across fortnights to assess fortnightly entitlements. However, welfare recipients were required to report income every two weeks only while they received support. Their average income for the year could be vastly higher than their income during the weeks they needed support, leading the system to conclude mistakenly that they had been overpaid even though they genuinely needed the support when they made their income reports.40 What is more, people’s financial situations are often more complex than their income statements suggest, yet the system was not built to take any of this context into account.
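The averaging flaw is easiest to see with numbers. The following Python sketch uses an entirely hypothetical payment rule (a maximum fortnightly payment of $600, reduced by 50 cents for each dollar of income above $300) and a hypothetical person who earns nothing during 10 fortnights on support and then works full time for the remaining 16 fortnights of the year. These figures are not Centrelink's actual rates; they only illustrate how spreading annual income evenly across 26 fortnights can manufacture a debt.

```python
FORTNIGHTS_PER_YEAR = 26


def entitlement(income: float, max_rate: float = 600.0,
                free_area: float = 300.0, taper: float = 0.5) -> float:
    """Hypothetical fortnightly entitlement: the payment shrinks by `taper`
    dollars per dollar of income above `free_area`, but never below zero."""
    reduction = taper * max(0.0, income - free_area)
    return max(0.0, max_rate - reduction)


# Hypothetical person: no income for 10 fortnights on support, then 16
# fortnights of full-time work at $2,600 per fortnight (no support).
actual_income = [0.0] * 10 + [2600.0] * 16
on_support = [True] * 10 + [False] * 16
paid = [entitlement(inc) if sup else 0.0
        for inc, sup in zip(actual_income, on_support)]


def overpayment(assumed_income: list[float]) -> float:
    """Sum of (amount paid - amount owed) over the fortnights on support,
    given whatever income the calculation assumes for each fortnight."""
    return sum(p - entitlement(inc)
               for p, inc, sup in zip(paid, assumed_income, on_support) if sup)


true_debt = overpayment(actual_income)

# Averaging: spread the annual total evenly over every fortnight.
averaged_income = sum(actual_income) / FORTNIGHTS_PER_YEAR  # 41,600 / 26
averaged_debt = overpayment([averaged_income] * FORTNIGHTS_PER_YEAR)

print(f"Debt using reported fortnightly income: ${true_debt:,.2f}")      # $0.00
print(f"Debt using averaged income: ${averaged_debt:,.2f}")             # $6,000.00
```

Under these assumptions the person was paid exactly what they were entitled to, yet averaging attributes $1,600 of income to every fortnight, including the ones spent on support, and produces a $6,000 "debt".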

These shortcomings were either ignored or overlooked, as policymakers were heavily invested in affirming both their beliefs about the overpayments and Robodebt’s perceived efficiency, a classic case of confirmation and automation biases.36 These biases led to overconfidence in the system’s design and a disregard of dissenting data and of early warnings that the approach could result in significant errors.40 Compounding this overconfidence was a reliance on the most accessible and salient data, which, despite being incomplete and inconclusive, seemed to promise “mountains of gold” in potential debt recovery, according to a Royal Commission report.41 This instance of availability bias led to a failure to consider the full context and costs of pursuing those debts.

Designers of the Robodebt scheme could have mitigated these issues by actively looking for inherent flaws, for example by engaging in a broader consultation process during the design phase with stakeholders, including legal experts, the Australian tax office, and welfare recipients themselves. Furthermore, rigorous testing and pilot programs would likely have exposed Robodebt’s inaccuracies before its full-scale implementation.38 Both solutions are examples of inserting more deliberation (Type II thinking) into the development of the system.

Robodebt’s Unchecked Performance

After Robodebt began operating, it had few, if any, checks on its methods. No mechanisms were put in place for updating policymakers on its output and spurring them to make any necessary corrections. Status quo bias led to a reluctance among decision-makers and operators to seek changes, despite increasingly apparent system inadequacies.40 There even seemed to be little interest in ensuring that Robodebt’s methods were legal, despite indications that they were not. Overall, the culture in Australia’s Department of Human Services was resistant to change, prioritizing maintaining the status quo over addressing its flaws.41

Confirmation bias also played a role in this lack of oversight and scrutiny in the implementation phase. Sociologist Robert van Krieken38 has noted that Australian politics had a long-standing tradition of “constructing welfare recipients as the ‘undeserving poor,’” conflating fraud with inadvertent noncompliance. Decision-makers thus sought out information that confirmed this belief rather than looking carefully at the program and finding ways to fix it. They also placed undue trust in automated processes, making them overconfident about Robodebt’s capabilities.42 Specifically, policymakers failed to attend to the fact that the algorithm did not fully account for the nuanced and dynamic nature of human financial situations.39 This overconfidence, in turn, effectively sidelined human oversight and limited direct human interaction with the system, perpetuating its unchecked use.

Recall that once a system is allowed to run unchecked, out-of-the-loop unfamiliarity makes it increasingly difficult to rein it in.42 This detachment represented a significant operational vulnerability for Robodebt, leading to reduced awareness of the system’s shortcomings and an underestimation of its negative impacts on affected individuals.40

Incorporating human oversight mechanisms from the start could have significantly mitigated the risks associated with excessive autonomy and the accompanying biases. One such mechanism could have enabled feedback from welfare recipients, allowing them to report discrepancies between their actual income and the income attributed to them by the automated system. This feedback would have prompted policymakers to make necessary adjustments, either in the system’s algorithms or in specific cases. Policymakers also could have established regular reviews of the system’s decisions and protocols for human intervention in ambiguous cases. These measures would have helped ensure that the system’s operations reflected the real-life situations of beneficiaries, enhancing accuracy and fairness.43 Cultivating a culture of continuous monitoring and evaluation would also have kept decision-makers informed about and engaged with the system’s performance and its societal impacts. Additionally, training Centrelink staff on the system’s functionality and limitations would have enabled a more accurate and humane approach to resolving disputed debts, balancing efficiency with empathy.
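A hypothetical triage rule along these lines might route contested or weakly evidenced debts to a person before any notice is sent. In the Python sketch below, the `DebtCase` fields, the three review triggers, and the $1,000 threshold are illustrative assumptions, not a description of how Centrelink operated or should operate.

```python
from dataclasses import dataclass


@dataclass
class DebtCase:
    case_id: str
    amount: float
    based_on_averaged_income: bool       # inferred, not verified fortnight by fortnight
    disputed_by_recipient: bool = False  # recipient reported a discrepancy


def triage(case: DebtCase, review_threshold: float = 1000.0) -> tuple[str, list[str]]:
    """Return a routing decision plus the reasons behind it.

    Any trigger sends the case to a human reviewer; only cases with no
    trigger go out as automatic notices.
    """
    reasons = []
    if case.disputed_by_recipient:
        reasons.append("recipient disputes the income figures")
    if case.based_on_averaged_income:
        reasons.append("debt inferred from averaged income")
    if case.amount >= review_threshold:
        reasons.append("amount at or above review threshold")
    return ("human_review" if reasons else "auto_notice"), reasons


print(triage(DebtCase("A-17", 6000.0, based_on_averaged_income=True)))
print(triage(DebtCase("B-02", 250.0, based_on_averaged_income=False)))
```

Returning the reasons alongside the routing decision gives the human reviewer, and any later audit, a record of why the system did not act on its own.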

Other Disastrous Cases

A Dutch childcare benefits scandal uncovered in 2019 is another instance of automation gone awry because of too little human oversight and a resulting lack of Type II thinking. In this case, tens of thousands of families were wrongly accused of fraudulently claiming benefits and asked to repay the government. The culprit was a discriminatory AI algorithm that flagged families with dual nationalities or foreign-sounding names as being a high risk for fraud.44 As with Robodebt, automation bias among Dutch policymakers led to undue trust in the algorithm’s decisions, which went unchecked because of insufficient human oversight. In addition, officials favored outputs from the system that aligned with their preexisting beliefs about who was likely to commit fraud.36 The algorithm’s mistakes were tragic: Tens of thousands of families became impoverished, more than 1,000 children were placed in foster care, and some people committed suicide.44

In what is known as the Post Office Horizon scandal, an automated accounting system called Horizon, installed in 1999 and 2000 in about 14,000 post office branches in the United Kingdom, led to a surge in the number of subpostmasters (people who run those branches) purportedly experiencing accounting shortfalls. The system falsely reported such shortfalls, suggesting that many branches had financial discrepancies when, in reality, no funds were missing. The subpostmasters had no way to interrogate the system, so they had no means to dispute its results.

But instead of investigating the system, the Post Office blamed the branch operators, leading to the wrongful prosecution of more than 700 subpostmasters over 15 years for theft, fraud, and false accounting.45 Many subpostmasters went to prison and were left with criminal records. Many were financially ruined. A number lost spouses and suffered severe psychological stress.

Subsequent investigations showed that management selectively accepted system outputs that confirmed their suspicions of subpostmaster fraud, ignoring contrary evidence (confirmation bias).45,46 They also became detached from the operational realities of the Horizon system, reducing their ability to effectively address and intervene in its shortcomings. This detachment exacerbated their undeserved trust in the system and sidelined the critical role of human judgment.47,48 The assumption that the system was infallible meant that errors went unchallenged and uncorrected for years.49

A mix of situational factors and inherent human cognitive biases in public governance makes disastrous outcomes like these more likely.50 The complexity and nuance of administrative decisions often require human judgments on whether automated systems are designed and operated in ways that best serve the public interest. There is thus a dire need for new government policies and procedures that ensure such judgment is applied to human–AI collaborations and that are informed by an understanding of the cognitive factors that influence how the presence of AI systems affects human decision-making.

Conclusion

This article presents an integrative framework for enhancing human–machine collaboration in public governance, delving into the cognitive processes that underpin such collaborations. The framework, which could inform the development of future regulatory frameworks, is grounded in a dual-process model of cognition. It optimizes the interplay between intuitive (Type I) and analytical (Type II) thinking in human–machine collaboration—in large part by balancing AI autonomy with human input—and thus mitigates the effects of cognitive biases to improve outcomes. A framework like this one is crucial for ensuring that AI deployment in governance aligns with ethical standards for policies, advances societal values, improves public policy, and importantly, does no harm.

For policy professionals, this article offers a guide to navigating the challenges presented by the use of AI. It frames automation as one component of the policymaking process and suggests strategies for identifying potential missteps and applying corrective measures. The suggested framework emphasizes the need to increase the amount of deliberative reasoning, human oversight, and feedback in the development and implementation of AI-driven policies and to reevaluate the appropriateness and effectiveness of AI-driven systems on an ongoing basis so they do not lead to undesirable outcomes. A culture of adaptation and learning along with software designs that encourage human input are critical to reducing the risks of AI in the policy arena. The arguments and examples presented here also underscore the need for more interdisciplinary research into ways to ensure that implementations of AI align with such essential societal values as fairness and the protection of individual rights.

Supplemental Material

sj-docx-1-bsx-10.1177_23794607251336099 – Supplemental material for A cognitive science framework could prevent harmful public policy decisions involving AI

Supplemental material, sj-docx-1-bsx-10.1177_23794607251336099 for A cognitive science framework could prevent harmful public policy decisions involving AI by Dirk Van Rooy in Behavioral Science & Policy

Footnotes

Disclaimer of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Part of this research was supported by grants from the BELSPO, the Belgian Defense research agency. The funder had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript.

References
