Abstract

The U.S. government administers many public programs and services. Creating programs that work requires an understanding of the psychological processes that influence behavior. To this end, policymakers may collaborate with academics who have expertise in behavioral science to generate ideas for improving existing programs, procedures, or policies; to test existing programs; or to design wholly new programs that address societal problems. Such collaborations also enable academics to test new or established theories in real-world settings. In this article, we draw on our collective experience in the U.S. Office of Evaluation Sciences, where we have worked on studies that evaluate various federal programs, to outline some of the core issues that make research collaborations between academics and government agents challenging. We also offer tips for making these partnerships productive and mutually beneficial.

The U.S. federal government oversees a wide array of programs that serve state and local governments, organizations, and individuals. For instance, it provides financial aid to make college possible for many students, social insurance programs and tax incentives to promote retirement security, and initiatives that ensure access to health care and shore up financial security for families. The effective design and delivery of these and other public programs often hinge on an understanding of human behavior. They may depend, for example, on knowing that the arrangement or wording of choices can influence people’s decisions or that people often stick to a preset option instead of making an active choice even when the given default is not the best option for them. (A project example may be seen in reference 1.) Understanding these and other principles of human decision

Academic collaborators can support governmental efforts to bring behavioral insights to bear on programs and policies. For academics, collaborating on government projects may enable real-world checks of academic theories about how people making decisions are influenced by various stimuli. Academics can help policymakers study the efficacy of policies and programs by generating data that can inform program enhancements, expansion, or cuts. In other situations, they might aid in the design of new policies by envisioning and testing ways of solving a problem. In the past 10 years, evidence-building collaborations applying behavioral science to policy questions have proliferated, spanning studies with a cumulative sample size of over 23 million individuals. 2

A number of collaborations between behavioral science academics and policymakers have occurred under the auspices of the U.S. General Services Administration’s Office of Evaluation Sciences (OES). Since its inception in 2015, OES has partnered with dozens of federal agencies to design behaviorally informed program changes and conduct evaluations. The results from all OES projects—more than 100 to date—are published on the OES website, and many have been shared at academic conferences and in peer-reviewed journals (selected publications are showcased on the webpage https://oes.gsa.gov/publications/).

Several of us have worked as full-time fellows for OES, on loan from other institutions for a year or more. Others have been academic affiliates of OES who were assigned to specific projects as needed. Our collective experience working on OES projects with partners in federal, state, or local government has shown us the value of doing rigorous research that has both practical and theoretical implications. These partnerships have also underscored some of the challenges that can come up when academics and policymakers collaborate. Many of these challenges stem from misaligned incentives and cultural differences. In particular, academics are trained and incentivized to develop theories and uncover truths about human nature that are generalizable and to disseminate those insights in peer-reviewed academic journals. By contrast, policymakers and program administrators tend to focus on implementing specific programs successfully, such as by maximizing benefits for program recipients.

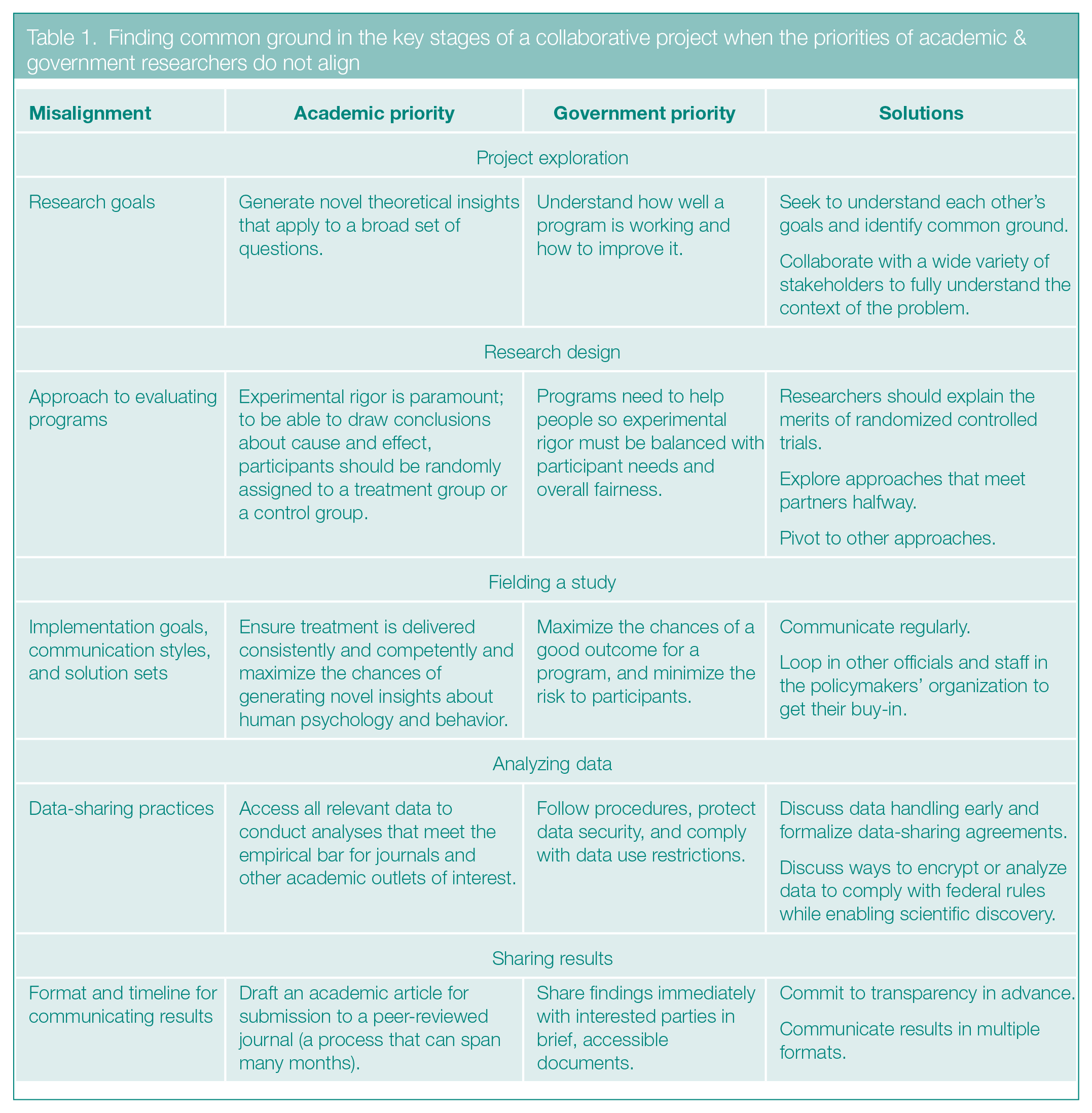

In this article, we provide a guide to the five key stages in the life cycle of a collaborative project: project exploration, research design, fielding a study, analyzing data, and sharing results. These are also the first five stages in the project process that OES uses in its evaluations. 3 For each stage, we identify the central challenges in academic–government partnerships and offer strategies for overcoming these difficulties. Table 1 summarizes the challenges and our recommendations.

Finding common ground in the key stages of a collaborative project when the priorities of academic & government researchers do not align

Project Exploration

For a collaborative research project to inform policy and contribute to academic literature, academics and policymakers need to come to an understanding about the outcomes they are seeking. The biggest early roadblocks to a productive collaboration usually involve a misalignment of the two parties’ research or practical goals.

On the one hand, academics are typically motivated by theoretical inquiries about what is known in a scientific field, 4 and they are rewarded primarily for publishing work that advances theory.5,6 As a result, they often focus on learning as much as possible about a particular theory or process and look for problems that offer opportunities to apply and advance knowledge in that area. Federal policymakers, on the other hand, are driven by empirical questions about what works 4 and tend to use a project to inform practical decisions about program implementation. This misalignment can stall projects if collaborators are not able to align their goals.

Solution 1: Seek to Understand Each Other’s Goals & Identify Common Ground

Academics interested in pursuing policy-based research can look up strategic

For their part, policymakers can look for lines of inquiry in academic research that dovetail with their programs and policies. For example, the American Political Science Association’s Public Scholarship Program creates public-facing summaries of political science research that make academic work more accessible to those with limited time or who lack access to journals that are behind paywalls. Other resources for policymakers who want to learn about relevant behavioral science include translational outlets, such as this journal and its partner blog, the

During early conversations, potential collaborators should be prepared to clearly communicate how the proposed project might meet shared goals and priorities and to be curious about the other’s needs. Academics should focus on learning as much as possible about the problem policymakers want to solve, in part by asking questions about its importance and its specific context, such as the constraints the policymakers face. After that, academics can begin developing ideas for solutions that align with and test current theories in their fields.

For their part, policymakers can explore what might make a collaboration appealing to academics, such as the possibility of publishing generalizable knowledge. Many academics may be willing to devote time to a project for free if they see an opportunity to test a certain idea, gain access to relevant data, and publish the findings. In line with this motivation, policymakers should expect to commit up front to transparency and sharing their findings, regardless of the result. (See the Sharing Results section later in this article for more on this topic.)

At times, these exploratory conversations may indicate that the policy needs of a given project do not mesh with the research goals of the academics. In such cases, academics should be frank about the conflict and attempt to connect their government partners with researchers who may be a better fit for the project.

Solution 2: Collaborate With a Wide Variety of Stakeholders to Fully Understand the Context of the Problem

To gain a deep understanding of the problem a policy is targeting, academics will want to talk to those who confront it on a daily basis. Grounding research in the experience of those affected by the problem can make studies and the programmatic decisions they inform more effective.

For example, the goal of one OES study was to develop an intervention to increase flu vaccination rates among veterans. 9 In the intervention, a health promotion staff member at a Veterans Affairs (VA) hospital provided feedback via emails to VA health care staff about their and their peers’ monthly vaccination performance metrics. This information was designed to motivate staff to boost their vaccination numbers, because many of them were not meeting goals set by the VA. Leaflets attached to the emails spelled out strategies for persuading the public to get vaccinated.

But the intervention produced no statistically significant differences in flu vaccination rates compared with a control condition in which no feedback or advice was given. In retrospect, providing performance metrics may have been insufficient to motivate health care staff who were working in an organizational culture that did not emphasize learning from performance metrics. Deeper knowledge of organizational practices and behavior through more extensive up-front engagement with people directly affected by the program could have helped the research team to shape the intervention in a more productive way.

By contrast, another OES study that involved a collaboration with several state governments produced promising results. In 2017, OES worked with the Department of Education (DOE) on a project designed to identify students who were eligible for a federal program that provided families experiencing housing insecurity with free meals, transportation, and a variety of additional educational supports. At the outset of the project, the research team, which included DOE staff, joined a call with state representatives in which the team explained the goals and rationale of the potential study. Later, the team met with representatives from states that were interested in participating in the study. During these conversations, the team learned that specific details about the homeless student program had been embedded in a long document containing lots of other information. This format led to information overload among the staff responsible for screening students for eligibility. These staff, called

To fix this problem, the team created separate, shorter emails just about the homeless student program to send to the homeless student liaisons. 10 The emails contained action items related to identifying homeless students and were sent every two weeks to remind the liaisons of the goal. The refashioned communications led the liaisons to identify more than 3,000 additional homeless students eligible for support through federal programs, an average of 12% more than the control group did.

One approach to improving a team’s overall understanding of a process or system is to create a journey map 11 or a behavioral map. The former outlines the steps of the process or system and helps partners understand people’s experiences when interacting with services. A behavioral map has a similar goal but emphasizes behavioral barriers and intervention opportunities—for instance, pain points that might prevent someone from applying for a benefit. Creating a journey map or behavioral map helps to clarify academic collaborators’ understanding of a process or system, highlights opportunities for improvement, and allows academic and policymaker collaborators to align on proposed interventions that address relevant barriers. Once all collaborators have jointly developed and agreed on a map, they can share it with stakeholders, such as program recipients, for feedback, which can help them refine the map and improve its accuracy. One example of such a map, seen in reference 12, outlines the steps for booking travel within the federal government and identifies questions and sources of confusion that discourage people from completing the process. It suggests that an intervention that reduces these psychological barriers could encourage people to book earlier and thereby reduce the cost of government travel.

Research Design

After the exploration stage, policymakers and academics need to decide on the design of a study. In academia, a randomized controlled trial is often seen as the gold standard for testing the efficacy of a treatment or intervention. In this design, one group of people receives a treatment or intervention (or different versions of a treatment), and a comparison or control group receives either no treatment or a standard, “business-as-usual” treatment. Individuals are randomly assigned to one of the groups. Random assignment makes it unlikely that systematic differences between the groups, other than the treatment itself, could explain the results.

The design can detect causal relationships between programs or program changes and outcomes and is therefore the best way to evaluate the efficacy of a new process or policy. However, such a design might seem impractical or unethical to government partners who would like to provide services or resources to all eligible individuals, particularly when the needs of the target population are urgent.

Solution 1: Researchers Should Explain the Merits of Randomized Controlled Trials

Academics can play a key role in explaining that randomized evaluations are aimed at building evidence around what works, which is necessary for helping the most people over the long run. They should also point out that if the treatment’s effectiveness in a particular context is still being evaluated, the treatment may be just as likely to harm as to help recipients, or it may be no more effective—yet more expensive—than the status quo. So before a program is adopted and scaled up, it can be important to test its effects in the most rigorous way.

If limited resources constrain the number of people who can receive a treatment, randomization may also be the fairest way to determine who gets it. For example, an OES study with the Department of Defense tested whether email alerts from pharmacists to providers could reduce concurrent prescribing of opioids and benzodiazepines for patients in the military health system. 13 Pharmacists did not have time to send alerts to every provider whose patients were being prescribed both medications. Randomly selecting which patients’ providers received the emails each week was arguably the fairest way to administer the program, in addition to being the most robust way to determine whether the strategy worked.

Solution 2: Explore Approaches That Meet Partners Halfway

In some situations, research partners might consider a wait-list control design—in which control participants get the treatment after the trial is complete. In this way, everyone ultimately receives the potential benefits of the intervention, and the researchers are still able to conduct a randomized evaluation. Another option is a “holdout” design in which half of the participants are randomly assigned to receive the intervention first, with the other half receiving it at a later time.

OES used a wait-list control design in a collaboration with the Idaho Housing and Finance Association (IHFA) to boost application rates to their Homeowner Assistance Fund. 14 In this program, homeowners facing mortgage delinquency can receive up to $50,000 in aid. IHFA wanted to see if direct mailers could induce more homeowners to apply. However, they also wanted to ensure that all Idaho homeowners with properties in preforeclosure or foreclosure received mailers. So the team sent half of these homeowners a mailer early in the study, and the other half received a mailer one month later. This enabled the team to compare outcomes for the treatment and control groups during the month between the two mailings, while ensuring that all homeowners received mailers in a timely manner.
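The comparison behind a wait-list design like this one can be sketched in a few lines of Python. This is only an illustration, not the analysis OES or IHFA actually ran: the function name `interim_application_rates`, the record layout, the wave labels, and the dates are all hypothetical, and a real evaluation would add uncertainty estimates around the difference.

```python
from datetime import date


def interim_application_rates(records, first_mailing, second_mailing):
    """Compare application rates in the window between the two mailings.

    records: iterable of (wave, applied_on) pairs, where wave is "early"
    (mailed first) or "late" (mailed second, so untreated during the window)
    and applied_on is a date, or None if the homeowner never applied.
    """
    totals = {"early": 0, "late": 0}
    applied = {"early": 0, "late": 0}
    for wave, applied_on in records:
        totals[wave] += 1
        # Count only applications filed after the first mailing and
        # before the second, when the late wave still acts as the control.
        if applied_on is not None and first_mailing <= applied_on < second_mailing:
            applied[wave] += 1
    if 0 in totals.values():
        raise ValueError("both waves need at least one homeowner")
    rates = {wave: applied[wave] / totals[wave] for wave in totals}
    return rates, rates["early"] - rates["late"]


# Made-up records for illustration only.
records = [
    ("early", date(2024, 1, 10)), ("early", None),
    ("early", date(2024, 2, 20)), ("early", None),
    ("late", None), ("late", date(2024, 2, 15)),
    ("late", None), ("late", None),
]
rates, difference = interim_application_rates(
    records, first_mailing=date(2024, 1, 1), second_mailing=date(2024, 2, 1)
)
print(rates, difference)
```

Applications filed after the second mailing are deliberately excluded, because by then both groups have been treated and the comparison no longer isolates the effect of the mailer.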

Solution 3: Pivot to Other Approaches

Randomization may not be feasible for other reasons. For instance, the design may violate contractual obligations or introduce complications that cannot be accommodated within a project’s time constraints. In these cases, academic collaborators can consider using nonrandomized, or

In some cases, the team might want to consider a descriptive study. These projects generally focus on uncovering key aspects of a certain process or element of a program and pointing out possible barriers or pain points. For example, in one OES descriptive study, researchers used Department of the Interior data to quantify the use of three methods of evaluating job candidates and show how each method affected the length of the hiring process and the type of person who was recruited or selected at each stage. 16

In another descriptive study, OES worked with the Small Business Administration (SBA) to examine how local emergency funds were distributed to businesses in the initial stages of the COVID-19 pandemic. 17 SBA was interested in the extent to which these supports were allocated equitably to historically underserved business owners, such as racial and ethnic minorities and women. By examining publicly available information about these programs and conducting interviews with local government officials charged with implementing them, the research team confirmed that there was a significant need for relief funding and also revealed that evidence was lacking concerning how to best address potential barriers to distributing aid equitably. This research brought important issues to light and gave SBA some specific questions to add to its future research agenda.

Descriptive studies like these generate helpful insights and can lay the groundwork for future impact evaluations by generating hypotheses and helping to build relationships between collaborators. By boosting trust, goodwill, and an understanding of the collaboration process among members of the project team, a descriptive study can also set the stage for fruitful future collaborations.

Fielding a Study

Implementing a study involves navigating potentially divergent priorities as well. As we noted earlier, academics are primarily concerned with preserving treatment fidelity—that is, ensuring that the intervention is delivered consistently and competently—and advancing scientific ideas, whereas policymakers tend to focus on implementing their program effectively and in a way that avoids undue risk (for example, to program recipients) while staying within the project’s scope. Over the course of these projects, challenges can arise that put these goals in tension. Navigating those challenges requires clear and regular communication, often with individuals beyond the immediate project team.

Solution 1: Communicate Regularly

We recommend scheduling regular check-ins with partners. Regular meetings and ad hoc calls can be helpful tools for addressing issues as they arise, particularly during stages that involve a lot of work or tricky barriers to navigate. For example, implementing random assignment to an intervention or control group can be difficult, especially for those attempting it for the first time. Academics can help by meeting with partners to clearly explain how randomization works in practice, conducting the randomization on the partner’s behalf where possible, and providing written instructions to help address potential difficulties.
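Written instructions for randomization can be accompanied by a short script that partners can rerun and audit. The following is a minimal sketch, assuming that each participant has a unique identifier and that two equally sized arms are wanted. The function name `assign_groups`, the arm labels, and the seed value are illustrative choices and do not describe an OES procedure.

```python
import random
from collections import Counter


def assign_groups(unit_ids, seed, arms=("control", "treatment")):
    """Randomly assign each unit to one arm, keeping arm sizes as equal as possible."""
    # Sort first so the result depends only on the seed, not on input order.
    ids = sorted(set(unit_ids))
    # A local generator leaves the global random state untouched.
    rng = random.Random(seed)
    rng.shuffle(ids)
    # Deal the shuffled units into the arms in turn, like dealing cards.
    return {unit: arms[i % len(arms)] for i, unit in enumerate(ids)}


participants = [f"P{n:03d}" for n in range(1, 11)]  # hypothetical IDs
assignment = assign_groups(participants, seed=20240501)
print(Counter(assignment.values()))  # five units in each arm
```

Recording the seed alongside the participant list lets either party reproduce the assignment later, which can help when questions arise about who was supposed to receive the intervention.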

Solution 2: Loop in Other Officials & Staff in the Policymakers’ Organization to Get Their Buy-In

Policy-related collaborations often involve numerous interested parties beyond those directly involved in the study. For example, a team that administers a program and a team that handles data from a study may be separate from teams making policy or funding decisions. It is important to communicate with all of these parties to ensure that they fully understand and support the study.

Policymakers are best positioned to know who the organizational stakeholders are and what type of communication will be most effective with them, particularly when it comes to navigating complex organizational dynamics. As such, policymakers can stress the importance of considering the needs of a range of interested parties at each stage of a study. For their part, academics can create tools such as slide decks, summary memos, or email drafts to help their partners share details about the collaboration with others in their agency. These conversations can build enthusiasm for ongoing research collaborations and generate new ideas for potential partnerships and learning opportunities.

It is also important to make sure that the agency employees charged with administering the program are given adequate support and information to carry out the experiment, which may have requirements that go beyond their standard operating procedures. For instance, if agency employees are to deliver a randomized treatment involving text messaging, they may by default record the responses to the texts in a binary way, such as by categorizing them as either “answered” or “declined.” However, for text messages that went unanswered, academics may want to distinguish between cases in which the recipient actively declined the text (say, by replying “STOP”), those in which a message was received but apparently ignored, and those in which a message was not received because of an error in delivery. Academics should be sure to communicate requests like these to their collaborators.
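One way to make a request like this concrete is to hand partners an explicit coding scheme. The sketch below is hypothetical: the category names, the `classify_outcome` function, and the assumption that the messaging system reports a delivery flag and the reply text are ours, not part of any agency system.

```python
from enum import Enum


class TextOutcome(Enum):
    ANSWERED = "answered"
    OPTED_OUT = "opted_out"          # recipient actively declined, e.g. replied STOP
    NO_RESPONSE = "no_response"      # delivered but apparently ignored
    NOT_DELIVERED = "not_delivered"  # delivery error, message never arrived


def classify_outcome(delivered, reply):
    """Map one message record to a single outcome category.

    delivered: bool reported by the messaging system (assumed available).
    reply: the recipient's reply text, or None if there was no reply.
    """
    if not delivered:
        return TextOutcome.NOT_DELIVERED
    if reply is None or not reply.strip():
        return TextOutcome.NO_RESPONSE
    if reply.strip().upper() == "STOP":
        return TextOutcome.OPTED_OUT
    return TextOutcome.ANSWERED


print(classify_outcome(True, " stop "))   # TextOutcome.OPTED_OUT
print(classify_outcome(False, None))      # TextOutcome.NOT_DELIVERED
```

Agreeing on categories like these before the study launches is easier than trying to reconstruct them afterward from a two-category record.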

Analyzing Data

Academics and policymakers often have disparate relationships with data. To academics, data can represent an opportunity to find answers and solve problems. To policymakers, using data also involves responsibilities to maintain the privacy and trust of the public beyond those imposed on academics by institutional review boards and professional ethics codes. Complex logistics and rules often surround the distribution of government data, particularly when it contains personally identifiable information. Academics may need to be patient when it comes to the restrictions and time-consuming complexities of federal data sharing. Next, we outline practical strategies for overcoming or mitigating limitations on data sharing.

Solution 1: Discuss Data Handling Early & Formalize Data-Sharing Agreements

Early on, academics and policymakers should create data use agreements and memorandums of understanding that outline the types of data needed for the project, what format the data will be in, how the data will be transferred to academic partners and other agencies, and who will be responsible for data transfer and security. Drafting these agreements and sharing them among the project partners as early as possible can help collaborators understand the process and figure out how to ensure data access for members of the academic team. Academic partners should also find out who is responsible for handling the federal data relevant to their project, because this team may be separate from the primary collaborators. The academic partners should then meet with the data team to understand how they work and how to interpret and use the data they plan to share.

Solution 2: Discuss Ways to Encrypt or Analyze Data to Comply With Federal Rules While Enabling Scientific Discovery

More often than not, federal agencies are required to comply with rigorous data security standards, particularly when it comes to sharing information that identifies individuals. These restrictions can make data sharing between government and academic institutions challenging. For example, it is sometimes important to match a given individual’s data in one data set with their data in another data set, but it can be difficult to do that without relying on personally identifiable information, such as a name or social security number. Academics can show their government partners how to strip data of personal information and replace it with a common set of codes that allow individuals to be uniquely—but not personally—identified and tracked across data sets. Being open and careful about these processes can build goodwill while also protecting people’s data privacy. These conversations are important to have early in a collaboration to avoid any misunderstandings later on.
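The article does not name a specific technique for replacing personal information with common codes. One common option is keyed hashing, sketched below with Python's standard `hmac` module. This is an illustration under stated assumptions: the function names, field names, and sample values are hypothetical, the secret key is assumed to stay with the agency, and any real procedure would need to follow the agency's own security requirements.

```python
import hashlib
import hmac


def pseudonymize(identifier, secret_key):
    """Turn an identifier into a stable code that cannot be read back without the key."""
    # Normalize formatting so "123-45-6789" and "123456789" yield the same code.
    normalized = "".join(ch for ch in identifier if ch.isalnum()).upper()
    return hmac.new(secret_key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


def strip_identifiers(rows, id_field, secret_key, drop_fields=()):
    """Replace the identifying field with a study ID and drop other personal fields."""
    cleaned = []
    for row in rows:
        out = {k: v for k, v in row.items() if k != id_field and k not in drop_fields}
        out["study_id"] = pseudonymize(row[id_field], secret_key)
        cleaned.append(out)
    return cleaned


key = b"held-by-the-agency-only"  # placeholder; a real key would be long and random
benefits = [{"ssn": "123-45-6789", "name": "Pat Doe", "enrolled": True}]  # fake data
outcomes = [{"ssn": "123456789", "visits": 3}]                            # fake data

benefits_clean = strip_identifiers(benefits, "ssn", key, drop_fields=("name",))
outcomes_clean = strip_identifiers(outcomes, "ssn", key)
# The same person receives the same code in both files, so records can be linked.
print(benefits_clean[0]["study_id"] == outcomes_clean[0]["study_id"])  # True
```

Because both data sets are coded with the same key, the academic team can join them on `study_id` without ever seeing the underlying identifier. Using a keyed hash rather than a plain hash matters here: identifiers such as social security numbers have a small enough range that unkeyed hashes could be reversed by trying every value.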

In some circumstances, data transfer from a federal agency to an academic partner may not be easy or even possible. To overcome this difficulty, academics can supply software that enables the government researchers to analyze the raw data themselves and provide the academics with the results of that analysis. For instance, perhaps what matters to the academics is the difference in an average between two groups, and that result can be shared even when the individual data points cannot be.
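As a rough sketch of what such software might look like, the function below would be run by agency staff on data that never leave the agency, and it returns only group-level summaries. The function name, the group labels, and the minimum group size check are assumptions added for illustration. The size check reflects a common disclosure precaution rather than anything specified in this article.

```python
from statistics import mean, stdev


def summarize_difference(outcomes_by_group, treatment="treatment",
                         control="control", min_group_size=10):
    """Return group sizes, means, standard deviations, and the difference in means.

    outcomes_by_group maps a group label to a list of numeric outcomes.
    Individual values never appear in the returned summary.
    """
    summary = {}
    for name in (treatment, control):
        values = outcomes_by_group[name]
        # Refuse to report on very small groups, where a summary could
        # reveal information about individuals.
        if len(values) < max(min_group_size, 2):
            raise ValueError(f"group {name!r} is too small to report")
        summary[name] = {"n": len(values), "mean": mean(values), "sd": stdev(values)}
    summary["difference_in_means"] = summary[treatment]["mean"] - summary[control]["mean"]
    return summary


# Made-up outcomes (1 = applied, 0 = did not apply), with a small
# minimum group size so the toy example runs.
result = summarize_difference(
    {"treatment": [1, 0, 1, 1], "control": [0, 0, 1, 0]}, min_group_size=2
)
print(result["difference_in_means"])  # 0.5
```

Sharing the script ahead of time also lets the agency's data team review exactly what will be computed and what will be sent back.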

Sharing Results

Policymakers and academics may have different incentives for communicating their results. In academia, peer-reviewed publication is a key motivator for doing research, whereas federal staff aim primarily to make a case for a new program or process or to continue, end, modify, or scale up an existing process or program. These goals require very different communication formats on divergent timelines. Policymakers want readable summaries of evidence produced quickly, in alignment with various deadlines, budget cycles, and other internal schedules, whereas academics generally want to pen a detailed description of the study for submission to a journal and then are willing to wait months for the journal’s extended peer review process before eventual publication.

Solution 1: Commit to Transparency in Advance

It can be helpful to discuss at the beginning of the project how and when both academics and policymakers aim to use the eventual findings. Will any publication deadlines or scheduling constraints influence project timelines?

It is also helpful to agree early on to share all results in some format, even if results are null or unexpected. Null results can lead policymakers to change an ineffective program or help researchers see flaws in a study’s design.

For example, the OES project with IHFA that studied their Homeowner Assistance Fund found that direct mailers had no effect on application rates among at-risk homeowners (those with homes in preforeclosure or foreclosure). 14 Because of this null result, IHFA canceled plans to roll out the mailers more widely and reallocated the funding to other forms of outreach that targeted rural homeowners. This example illustrates one way in which null findings can inform program decision-making. In other cases, null results have motivated research to estimate the effects of a program or program change more precisely or to better understand how to boost a program’s efficacy. Because null results can inform policy decisions and future studies, OES commits to sharing all findings publicly, regardless of the results. 3

Solution 2: Communicate Results in Multiple Formats

For policymakers, generating brief, accessible documents to share with other interested parties can be useful. Policymakers can emphasize the value of creating and sharing plain-language descriptions of evidence that supports better program delivery. These documents can often be produced alongside academic articles aimed at specialized audiences with marginal additional effort. Scientists can also distribute preprint versions of the academic articles, enabling the release of results in advance of the final version’s publication in a peer-reviewed journal. OES project findings are disseminated in digestible and accessible ways, such as project abstracts, presentations to relevant agencies, and intervention packs, which lay out an intervention’s components and methods in an easy-to-use format so that others can reproduce it.

Academic collaborators should also discuss coauthorship on publications with policymakers. Coauthorship can be a way to formally recognize policymakers’ expertise and contributions to the scientific process and to support a culture of evidence building that is inclusive of valuable, rigorous work outside of university contexts. Coauthorship may, however, require policy partners to check with their agencies about whether any agency disclaimers or clearances are needed for publication. Presentations—such as panel discussions at conferences involving both academics and their policy partners—can also be an exciting way to share findings and encourage future collaborations.

Conclusion

Academics and federal policymakers often have vastly different incentives, priorities, and goals. Here, we have outlined some of these misalignments along with ways to address them. Although unexpected challenges are likely to arise, if the two parties are adaptable and keep the solutions we have outlined in mind, the collaboration is likely to be successful. The rewards of that success are rigorous, evidence-based insights that can lead to more effective programs and policies as well as significant contributions to scientists’ understanding of human behavior. The benefits are worth the effort.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.