Abstract

The COVID-19 pandemic has added new urgency to the question of how best to motivate people to get needed vaccines. In this article, we present lessons gleaned from government evaluations of eight large randomized controlled trials of interventions that used direct communications to increase the uptake of routine vaccines. These evaluations, conducted by the U.S. General Services Administration’s Office of Evaluation Sciences (OES) before the start of the pandemic, had a median sample size of 55,000. Participating organizations deployed a variety of behaviorally informed direct communications and used administrative data to measure whether people who received the communications got vaccinated or took steps toward vaccination. The results of six of the eight evaluations were not statistically significant, and a meta-analysis yielded a 95% confidence interval for the change in vaccination rates of -0.004 to 0.394 percentage points. The remaining two evaluations yielded increases in vaccination rates that were statistically significant, albeit modest: 0.59 and 0.16 percentage points. Agencies looking for cost-effective ways to use communications to boost vaccine uptake in the field, whether for COVID-19 or for other diseases, may want to evaluate program effectiveness early on so that messages and methods can be adjusted as needed, and they should expect effects to be smaller than those seen in academic studies.

Ever since vaccines for COVID-19 became available, public health officials have tried many strategies to induce as many people as possible to roll up their sleeves. 1 Yet, at the time of this writing, participation in vaccine programs has been disappointing. Rates of uptake for many vaccines fall well below public health recommendations, both in the United States2,3 and in other countries.4,5 In the United States, uptake of COVID-19 vaccinations has also been lower than expected.

Direct communication to individuals is a commonly used, relatively inexpensive tool for trying to increase vaccination rates, and communication “to enhance informed vaccine decision-making” is one of the five goals of the U.S. National Vaccine Plan. 2 The approach makes sense: Communications have the potential to address a number of behavioral barriers to vaccination. Individuals may be unaware that a vaccine is available and recommended for them, may not believe that a particular vaccination is safe or effective, may not form an intention to get vaccinated, or may not remember or be able to act on an intention to vaccinate. Research in behavioral science provides insight on how to design letters, emails, and other direct communications to overcome such barriers.6-8 For example, research suggests that particular kinds of messages have the potential to influence behavior, such as those that tap into people’s natural aversion to risk, provide the perspective of a hypothetical individual facing a decision, or reinforce good decision-making by emphasizing that a desired action is the norm.

Nevertheless, just how large a difference government communications can make has been unclear. In this article, we discuss a set of studies that presented an unusual opportunity to evaluate such interventions in a large-scale, real-world context. An analysis of this work offers lessons that might guide the use and evaluation of communications designed to improve uptake of vaccines against COVID-19 and other infectious diseases.

The Evaluations in Detail

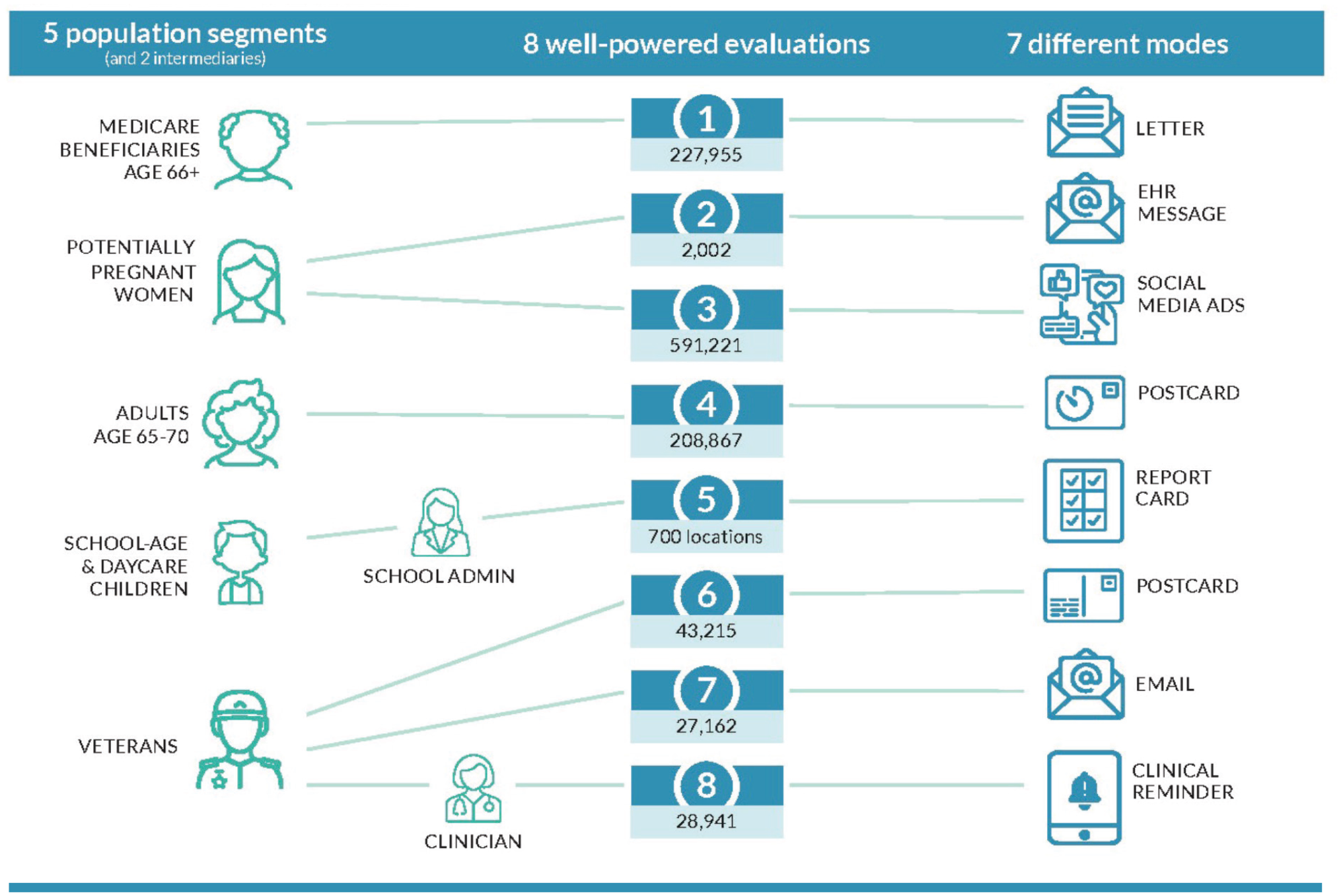

The research we review in this article was conducted by the U.S. General Services Administration’s Office of Evaluation Sciences (OES), a team of interdisciplinary experts who work across the U.S. government to help agencies build and use evidence, including behavioral insights, for the public good. Between 2015 and 2019, OES designed and tested an array of direct communications about vaccination in eight large-scale, randomized controlled trials—gold-standard experiments in which participants are assigned randomly to treatment and control groups to limit bias and enable researchers to explore cause-and-effect relationships. OES conducted the evaluations (known as the OES vaccination portfolio) in collaboration with a private health facility, a city department of health, a state department of health, three Veterans Health Administration health care systems, and one division of the U.S. Department of Health and Human Services.

The evaluations had a median sample size of 55,000, which is considerably larger than that reported in most behavioral science studies. They also had other appealing features. The interventions aimed to increase vaccination rates in populations that public health experts had strongly recommended be vaccinated, such as young children, pregnant women, and older adults. Several samples had high proportions of individuals from groups that have historically had lower vaccination rates. More than half the patients in the sample for one evaluation at a Veterans Affairs facility, for example, were African American. The interventions were wide-ranging. Some experiments used email, postcard, letter, or social media notifications to convey messages to potential vaccine recipients. Others used very different strategies: In one, school administrators received a formal report card of a school’s vaccination compliance rate, and in another, clinicians received reminders through a hospital’s electronic health record (EHR) system. The behavioral insights that informed the interventions also varied. Behavioral studies have tested strategies such as reminders, prompts that encourage recipients to make a plan to get vaccinated, messages that emphasize social norms, communications designed to be persuasive, and variations in the source and timing of messages. All these strategies were used in one or more of the interventions OES evaluated.

Although the OES evaluations focused on routine vaccinations, the findings are relevant to addressing the ongoing challenge of COVID-19 in part because, as is true for routine vaccinations, the challenge of achieving and maintaining widespread immunization is expected to continue. Many of the OES evaluations were implemented in the midst of broader vaccination campaigns, which will also be necessary to continue to fight COVID-19.

We selected the OES vaccination portfolio for analysis for another reason as well: These evaluations overcome some drawbacks of many other investigations into the effects of communications designed to influence behavior. Although the amount of research on using communications to alter behavior has increased rapidly and some published experiments show measurable impacts, some of these effects have been hard to replicate in the real world.

A recent analysis of the literature on the use of nudges helps to explain why. Nudges, which often take the form of communications to influence behavior, are light-touch interventions that aim to alter people’s behavior without constraining choice or providing significant economic incentives. Journal articles reporting on academic studies of nudges show effects that are 7.3 percentage points higher, on average, than those seen in evaluations conducted by government units. The analysis suggests that a combination of publication bias and low statistical power can account for the gap. 9 Publication bias is the tendency to publish only statistically significant results. Such selective publication of results has been found to inflate expectations of actual effects and boost the likelihood of false positive findings. 10 Statistical power is a study’s ability to detect an effect if there is one. In general, published studies on communication interventions have had small sample sizes, which limit their power and the strength of the conclusions that can be drawn from them.

Overview of Office of Evaluation Sciences vaccination uptake evaluations showing population segments that were sampled, intermediaries in the communication chain, sample sizes, and the modes of communication

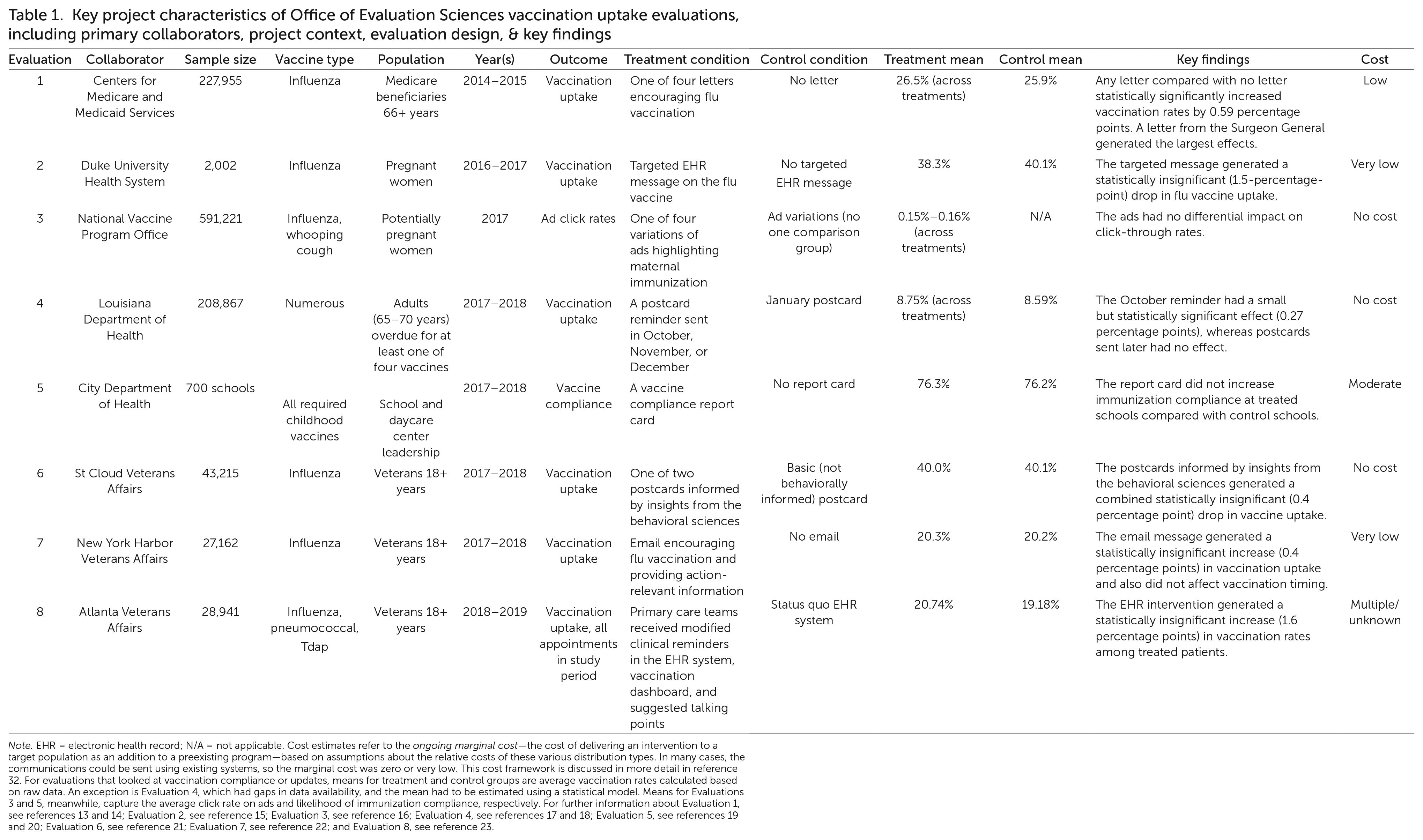

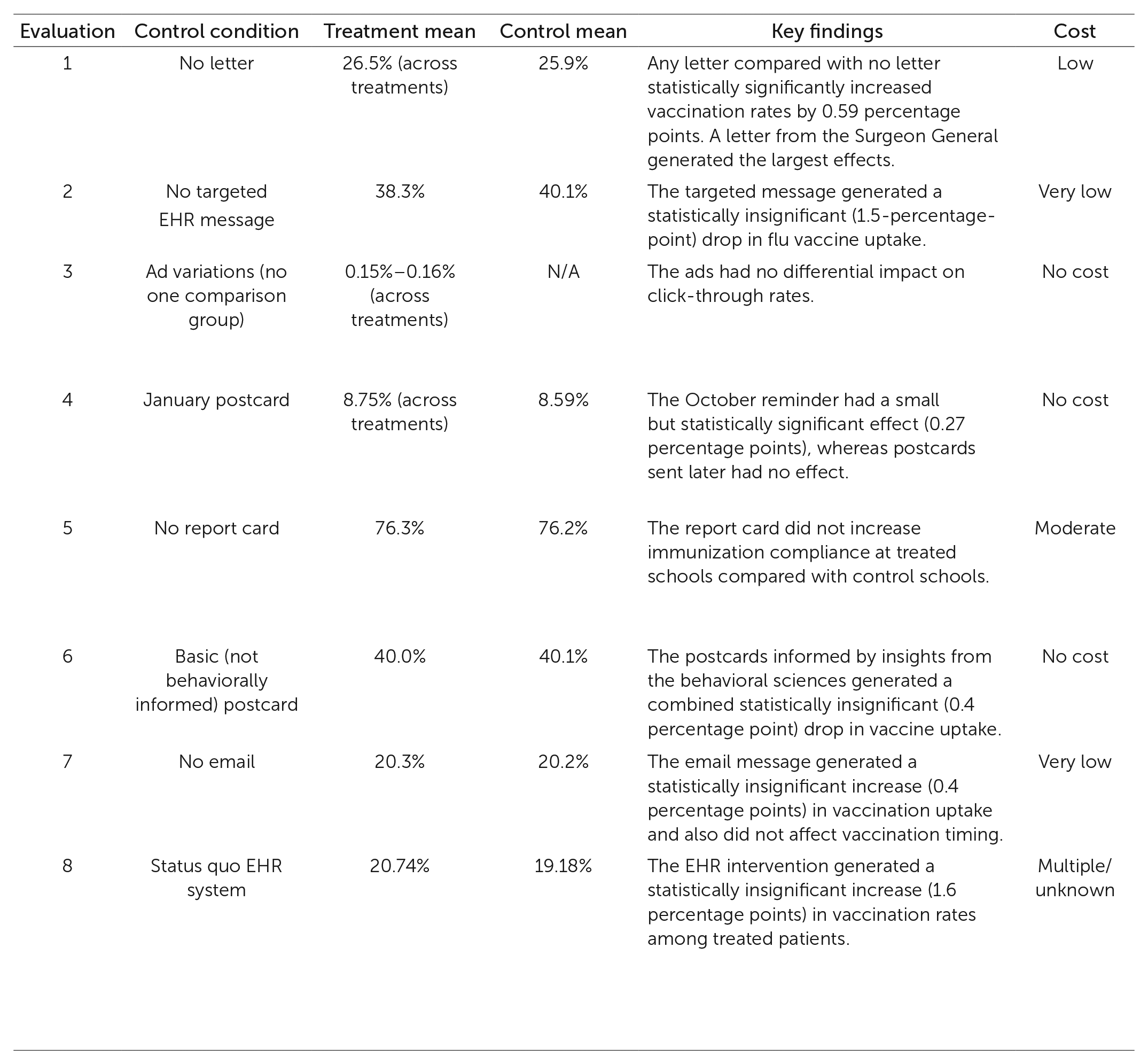

Key project characteristics of Office of Evaluation Sciences vaccination uptake evaluations, including primary collaborators, project context, evaluation design, and key findings

Note. EHR = electronic health record; N/A = not applicable. Cost estimates refer to the ongoing marginal cost (the cost of delivering an intervention to a target population as an addition to a preexisting program) and are based on assumptions about the relative costs of these various distribution types. In many cases, the communications could be sent using existing systems, so the marginal cost was zero or very low. This cost framework is discussed in more detail in reference 32. For evaluations that looked at vaccination compliance or uptake, means for treatment and control groups are average vaccination rates calculated based on raw data. An exception is Evaluation 4, which had gaps in data availability, so the mean had to be estimated using a statistical model. Means for Evaluations 3 and 5, meanwhile, capture the average click rate on ads and likelihood of immunization compliance, respectively. For further information about Evaluation 1, see references 13 and 14; Evaluation 2, see reference 15; Evaluation 3, see reference 16; Evaluation 4, see references 17 and 18; Evaluation 5, see references 19 and 20; Evaluation 6, see reference 21; Evaluation 7, see reference 22; and Evaluation 8, see reference 23.

In the case of the vaccination portfolio, OES provided detailed preanalysis plans and committed to sharing the results of all evaluations; it has no “file drawer” where results are stashed away if they are not significant. The results of every evaluation of communications encouraging vaccination uptake conducted by OES from 2015 through 2019 have been reported on the OES website, and all evaluations are summarized here to avoid publication bias.

These evaluations were carefully designed to have high statistical power so as to detect even tiny effects. Minimum detectable effect, or MDE, is a measure of the sensitivity of a study; it is the smallest effect that, if it exists, would have an 80% chance of being detected. The OES evaluations had MDEs as small as 0.04 percentage points, and all but one had an MDE smaller than 1.7 percentage points. This made the evaluations powerful enough to detect the effects in the range of 2 to 4 percentage points that had been reported by two similar, related studies.11,12
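As a rough illustration of how sample size drives the MDE, the sketch below uses the standard normal approximation for a two-arm comparison of proportions with equal arm sizes. The function name, the 50% baseline vaccination rate, and the even split of a 55,000-person sample are assumptions chosen for illustration; they are not taken from the OES preanalysis plans.

```python
from math import sqrt
from statistics import NormalDist


def minimum_detectable_effect(n_per_arm, baseline_rate, alpha=0.05, power=0.80):
    """Approximate MDE (in percentage points) for a two-arm trial.

    Normal approximation for a two-sided test of a difference in proportions,
    assuming equal arm sizes and the same variance in both arms.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value of the test
    z_power = NormalDist().inv_cdf(power)          # quantile for the target power
    std_error = sqrt(2 * baseline_rate * (1 - baseline_rate) / n_per_arm)
    return 100 * (z_alpha + z_power) * std_error


# Hypothetical inputs: 55,000 people split evenly, 50% baseline vaccination rate.
mde_pp = minimum_detectable_effect(n_per_arm=27_500, baseline_rate=0.50)
print(f"MDE: {mde_pp:.2f} percentage points")
```

Under this approximation, quadrupling the sample size halves the MDE, which is one reason very large samples are needed to detect effects well below one percentage point.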

Results of the OES Evaluations

Table 1 contains a summary of the results of the eight evaluations. The communications used in each study can be seen by visiting https://oes.gsa.gov/vaccines/ and clicking on the View Vaccination Portfolio Intervention Pack (PDF) button. Briefly, the interventions and results were as follows, listed roughly in the order in which they were done.

Evaluation 1

Letters encouraging flu vaccination were sent to Medicare beneficiaries in the experimental groups of this study, which was conducted in 2014-2015. A total of 227,955 beneficiaries received either no letter (the control group) or one of four versions of a letter encouraging vaccination (the experimental groups). The versions incorporated language that drew on past behavioral research. Study participants who received a letter were more likely to get their shot, although the version received made no difference.13,14

Evaluation 2

Messages encouraging flu vaccination were sent to a randomly selected subset of 2,002 pregnant women through a Duke University Health System EHR messaging system in this study, conducted in 2016-2017. The messages noted that pregnant women are at greater risk of contracting the flu and that the vaccine provides protection for both mother and infant. The messages reminded patients that they could receive the vaccine at their next scheduled obstetric appointment. The rates of vaccination did not differ significantly between women who received the messages and women who did not. 15

Evaluation 3

Varied social media advertisements promoted influenza and whooping cough vaccination for potentially pregnant women in this study, conducted in 2017. This campaign reached 591,221 women ages 20-34 years. It did not measure vaccination rates but instead analyzed click-through rates for four different messages to determine which messages motivated viewers to seek more information. The study found no statistically significant differences in the responses to the ads. 16

Evaluation 4

In this study, conducted in 2017-2018, the Louisiana Department of Health sent postcard reminders to 208,867 residents ages 65-70 years who were overdue for any of four vaccines. Postcards were sent on a staggered schedule over a season, enabling timing to be used to create treatment and control groups. A reminder sent in October had a small but statistically significant effect on vaccine uptake. Two rounds of postcards sent to different groups in November and December had no effect.17,18

Evaluation 5

In a study conducted in 2017-2018, the health department of a midsized city with 700 schools and daycare centers sent to randomly selected school leaders an immunization report card highlighting their school’s immunization compliance in comparison with that of similar schools. The report cards had no effect on the immunization rates for the schools that were sent the report cards, compared with the schools that were not sent report cards.19,20

Evaluation 6

Postcards promoting influenza vaccination were mailed to 43,215 patients in the St. Cloud Veterans Affairs Health Care System in Minnesota in a study conducted in 2017-2018. Three different postcards were designed using evidence from behavioral science: a basic postcard providing information about how to get a flu shot, a peer-group-influence postcard noting how many St. Cloud veterans get the shot, and an implementation postcard that prompted veterans to write a concrete plan for getting a shot at a specific time and place. There were no statistically significant differences in the uptake or timing of flu shots among the groups receiving the three postcards. 21

Evaluation 7

The New York Harbor Veterans Affairs Health Care System sent emails reminding patients to get their flu shots in a study conducted in 2017–2018. A total of 27,162 patients were assigned to either a treatment group or a control group. Using evidence from behavioral studies, messages sent to the treatment group framed getting a flu shot as a default course of action (requiring the patient to take action to opt out); gave an implementation prompt; and presented the benefits of a shot as being concrete and realized in the near term, providing protection within two weeks. The control group did not receive any emails. The emails had no significant effect on the uptake or timing of flu shots. 22

Evaluation 8

After a redesign, the Atlanta Veterans Affairs Health Care System’s EHR system bundled together three vaccination reminders to clinicians, provided an immunization information dashboard for each patient, and shared talking points that providers could use to address patient refusal or vaccine hesitancy. The evaluation, conducted in 2018-2019, enrolled 84 primary care team clusters that saw 28,941 unique patients during the test period. The difference in vaccination rates between the patients seen by providers exposed to the redesign and those seen by providers not exposed to the redesign was statistically insignificant.23,24

In summary, two of the eight individual evaluations yielded statistically significant effects. In Evaluation 1, letter reminders about influenza vaccination sent to older Medicare beneficiaries increased the probability that they would get an influenza vaccination by 0.4 to 0.7 percentage points, depending on the version of the letter (a mean of 0.59 percentage points), relative to a group who received no reminder letter. 13 In Evaluation 4, postcard reminders sent to Louisiana residents ages 65-70 years in October increased the number of influenza, tetanus, pneumococcal, and shingles vaccinations they received (analyzed together) by a statistically significant 0.27 percentage points. However, later postcards mailed to different groups did not have a detectable effect. The overall difference in vaccination rates between postcard and no-postcard groups was smaller than 0.27 but still statistically significant: 0.16 percentage points. 17

To gain insights for COVID-19 vaccination campaigns from the OES studies, we performed a meta-analysis (a statistical analysis aggregating data from a group of related studies) of the six evaluations that measured vaccination rates at the individual level. We conducted the meta-analysis using a single number representing the effect size from each of those six evaluations (for Evaluations 1 and 4, for example, the 0.59 and 0.16 percentage points corresponding to the average effects across treatment conditions). See the Supplemental Material for technical details.

The meta-analysis indicated that the effect from the communications was small and not statistically significant. We based this conclusion on the confidence interval we calculated. A confidence interval is determined using a procedure that gives a range of values that contains the true effect size some proportion of the time. For instance, if this meta-analysis were repeated 100 times with different data, 95 of those times the 95% confidence interval that we calculated would contain the true size of the effect in the sampled population. For the OES vaccination uptake evaluations, the 95% confidence interval for the difference in the vaccination rate in the treatment versus control conditions ranged from -0.004 (virtually no effect) to 0.394 percentage points. In other words, interventions like those in the OES evaluations are likely to reliably generate effects of no more than about half a percentage point.
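To make the idea of pooling concrete, here is a minimal sketch of one common approach, inverse-variance (fixed-effect) weighting, with a normal-approximation confidence interval. It is not necessarily the model used for the OES meta-analysis, whose details are in the Supplemental Material, and the effect sizes and standard errors below are hypothetical placeholders rather than OES data.

```python
from math import sqrt
from statistics import NormalDist


def pooled_effect(effects, std_errors, level=0.95):
    """Inverse-variance (fixed-effect) pooled estimate with a confidence interval.

    Returns (estimate, lower bound, upper bound) in the units of `effects`.
    """
    weights = [1 / se**2 for se in std_errors]  # more precise studies weigh more
    estimate = sum(w * e for w, e in zip(weights, effects)) / sum(weights)
    pooled_se = sqrt(1 / sum(weights))
    z = NormalDist().inv_cdf(0.5 + level / 2)   # about 1.96 for a 95% interval
    return estimate, estimate - z * pooled_se, estimate + z * pooled_se


# Hypothetical effect sizes and standard errors, in percentage points.
effects = [0.6, 0.15, 0.0]
std_errors = [0.2, 0.1, 0.3]
estimate, lower, upper = pooled_effect(effects, std_errors)
print(f"pooled effect {estimate:.3f} pp, 95% CI [{lower:.3f}, {upper:.3f}]")
```

An interval whose lower bound falls below zero, as in the OES result, means the pooled effect cannot be statistically distinguished from no effect at the chosen confidence level.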

Lessons for the COVID-19 Era

We draw four main lessons from our review of the OES evaluations.

Lesson 1

The first lesson is that behaviorally informed direct communications can increase vaccination rates at scale but may have smaller, less reliable effects than much of the published literature suggests.

The OES evaluations provide ballpark estimates for the effects that behaviorally informed direct communications might have at scale. Although the mailed reminders that yielded statistically significant effects in two studies produced small increases in the percentages of people who got vaccinated, those small differences translated into thousands of additional vaccinations, which may be considered meaningful by program managers.
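The arithmetic behind this point is simple: the number of additional vaccinations is the number of people reached multiplied by the effect expressed as a proportion. The sketch below assumes a hypothetical campaign reaching one million people and borrows the 0.59-percentage-point effect only as an example input.

```python
def additional_vaccinations(recipients, effect_pp):
    """Expected extra vaccinations for an effect given in percentage points."""
    return recipients * effect_pp / 100


# Hypothetical campaign size; the effect size is used purely as an example input.
extra = additional_vaccinations(recipients=1_000_000, effect_pp=0.59)
print(f"{extra:,.0f} additional vaccinations")
```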

Still, a public health official planning a vaccination campaign to combat COVID-19 or another disease would want to be mindful of the small sizes of the effects. A review of published studies gives the impression that direct messaging to individuals is more effective than the OES’s large-scale, real-world evaluations indicate.

We have several reasons for putting more stock in the OES evaluations’ findings of small effects than in results from the studies described in the wider literature, including the studies that motivated OES and its collaborators to undertake the scaled-up interventions. For one thing, in contrast to the six OES evaluations that measured actual vaccination uptake, much of the literature applying behavioral science to vaccination focuses on individuals’ thoughts and feelings about vaccinations rather than actual vaccination uptake. 6 It is common for published studies to measure the likelihood of vaccination in a hypothetical scenario or an individual’s intention to be vaccinated rather than actual vaccination uptake. (See the 2011 study by Punam Anand Keller and her colleagues for an example of using a hypothetical scenario.) 25 But people often fail to follow through on their intentions to act. 26

Two non-OES studies that randomly assigned communications and measured actual vaccination rates, albeit with sample sizes under 10,000 participants, found effects in the 2- to 4-percentage-point range.11,12 A systematic review of studies exploring the efficacy of emailed reminders to vaccinate found increases in vaccine uptake ranging from 2 to 11 percentage points for people sent an email compared with people who were sent no reminder. 27

Another reason to trust the OES evaluation findings is that, as we noted earlier, the median sample size of 55,000 across the eight OES evaluations is considerably larger than that reported in most published studies. Finally, OES reported on the results of every evaluation it conducted.

A closer look at the OES results suggests that the context in which communications are used may explain why some effects seen in studies are not easy to replicate in government evaluations. For example, the OES evaluations did not find the effect seen in one recent field experiment done in an urban health clinic system. In that experiment, researchers testing 19 different text-message vaccination reminders in a sample of roughly 47,000 patients found an average increase of 2.1 percentage points in vaccination uptake. 28 The text messages were sent by primary care providers to a sample of patients who had upcoming appointments. The difference between that context and the government sending letters to older adults on Medicare (for instance) may provide a partial explanation for the smaller effects observed in the OES evaluations. The health clinic sample, consisting of people who had appointments scheduled with a familiar health care provider, may have been more responsive to messaging than was the OES evaluation’s sample of older adults on Medicare. In addition, working at large scale and in a government context sometimes affects which elements of a messaging campaign can be included. We discuss this point in more detail in Lesson 3.

The finding that behaviorally informed direct communications are likely to have only small effects at scale highlights the importance of sample size in a randomized controlled trial to evaluate the efficacy of such interventions. In many cases, a randomized controlled trial needs quite a large sample (several thousand people or more) to achieve sufficient power to detect effects in a real-world context, where many additional influences on behavior can reduce the salience and effect of an intervention.

Lesson 2

The second lesson is that additional evidence is needed to evaluate how the cost-effectiveness of behaviorally informed direct communications compares with the cost-effectiveness of other interventions.

Arguments in favor of using communication strategies to influence behavior tend to emphasize that these are inexpensive to implement when calculating costs on a per-recipient basis. Light-touch approaches like direct communications are generally seen as having a low cost per participant and being easy to implement relative to heavier-handed approaches like redesigning forms, prescheduling appointments, or offering material incentives. Also, direct communications can be aimed more precisely at particular individuals or subgroups than is possible with some alternatives, such as commercial advertising campaigns.

Only a few researchers have compared the cost-effectiveness of behaviorally informed communication interventions with the cost-effectiveness of approaches such as financial incentives or policy mandates.29-31 These studies generally find that communications compare favorably to other approaches. Similarly, a published report on one of the OES vaccination uptake evaluations 13 extrapolated from the cost of printing and sending letters to argue that the cost per additional vaccination in the most effective treatment condition was approximately $90, in line with costs of other approaches. The small effect sizes in the OES evaluations highlight the importance of determining whether the costs of various approaches are justified by the likely outcomes.
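A cost-per-additional-vaccination figure of this kind can be approximated by dividing the cost per recipient by the effect expressed as a proportion. The sketch below uses hypothetical inputs (a cost of $0.50 per letter and a 0.5-percentage-point effect); it does not reproduce the calculation in the published report, which may account for costs differently.

```python
def cost_per_additional_vaccination(cost_per_recipient, effect_pp):
    """Cost per extra vaccination for an effect given in percentage points."""
    if effect_pp <= 0:
        raise ValueError("effect must be positive to define a cost per additional vaccination")
    return cost_per_recipient / (effect_pp / 100)


# Hypothetical inputs: $0.50 per letter and a 0.5-percentage-point effect.
cost = cost_per_additional_vaccination(cost_per_recipient=0.50, effect_pp=0.5)
print(f"${cost:,.2f} per additional vaccination")
```

Because the effect sits in the denominator, small effects make this figure highly sensitive to per-recipient cost, which is why very low-cost delivery channels matter so much for this kind of intervention.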

To date, OES vaccination uptake evaluations have not collected comprehensive cost information, including hours and salary costs for those involved in delivering an intervention. However, OES recently developed a framework to roughly categorize interventions based on their approximate ongoing marginal cost, that is, the added cost of delivering the intervention along with other communications. 32 Using this framework, the eight vaccination uptake interventions evaluated by OES include three with no cost (defined as involving no new change to a delivery medium already in use), two at very low cost (from added email), one at low cost (from added printing, printing and mailing, or phone messages), one at moderate cost (from added staffing costs as part of intervention delivery), and one with costs labeled “multiple or unknown” (from the use of more than one of the changes listed above or from other interventions, such as redesigned EHR messaging). The small effect sizes observed for behaviorally informed direct communications suggest that it may be most sensible to deploy these interventions when they can be delivered at very low or no cost, such as by using an existing communication pipeline.

To build stronger evidence about cost-effectiveness, future research needs to record more comprehensive cost data. 33 Ideally, researchers would go beyond printing and mailing costs, capturing both the administrative costs to design and deliver such interventions and the burdens the interventions impose on recipients.34,35 For example, one possible comparison is between behaviorally informed direct communications and material incentives. 36 Several studies have found that monetary payments increased vaccination rates,37,38 although, as Tom Chang and his coauthors have reported, that is not always the case. 39 If payments have orders-of-magnitude-larger effects on vaccination, they may actually be more cost-effective than are direct communications that cost less per target. Additionally, if these strategies have different effects on the behavior of nonidentical groups of people, it may be cost-effective to use both approaches in parallel.

Lesson 3

The third takeaway is that rapid evaluations of vaccination uptake interventions in real-world contexts are essential for learning what works in specific contexts for populations of interest.

The OES vaccination portfolio testifies to the importance of evaluating interventions as they are deployed in the field. As might be expected, both implementation details and effect sizes appear to depend highly on context, so engaging in testing during study implementation and designing studies to have high statistical power are both essential. If vaccination campaigns are staged to incorporate rapid evaluations of different approaches rather than deployed as a systemwide rollout of a single strategy, investigators will be able to quickly (and relatively cheaply) discard approaches that are not working and tweak their efforts based on observed results,40,41 enabling vaccination efforts to become increasingly effective over time. Widespread and rapid randomized controlled trials of vaccination uptake interventions could enable the COVID-19 vaccination campaign to build evidence about how much (if at all) interventions work to increase vaccination rates.

An important contribution of the OES vaccination portfolio is its demonstration that when scaling up the best practices outlined in the research literature, investigators may find that practical constraints dilute the expected effects of an intervention. For example, OES drew on a study in which about 3,200 utility company employees were sent a letter listing the days, times, and location of a workplace vaccination clinic. 12 The letters sent to employees in the treatment groups included a planning prompt that encouraged them to write in the date or date and time on which they planned to get their shot. OES added similar planning prompts to some of the letters sent to approximately 228,000 Medicare beneficiaries in Evaluation 1, which was described earlier in this article, 13 but it was not feasible to include information about the locations and hours of operation of local vaccination clinics. The OES study produced a smaller increase in vaccination uptake, which suggests that including a clinic’s location and hours might be necessary to reap the full benefit of a planning prompt. Issues of this sort may only become evident when a strategy is evaluated in the context in which it will be applied.

A second example of the practical constraints that can be revealed by real-world tests comes from Evaluation 8, which issued reminders to clinicians in Atlanta through a revamped EHR system at the Atlanta Veterans Affairs Health Care System, bundled patients’ needed vaccinations together, and provided talking points for clinicians to use to encourage vaccination. In an earlier study, Amanda F. Dempsey and her colleagues had tested an intervention that included providing 2.5 hours of training to providers in how to use language that presumes patients have a plan to receive the human papillomavirus vaccination rather than initiating a discussion about options. 42 That study found a 9.5-percentage-point increase in the initiation of a human papillomavirus vaccine series (see also a study that involved a one-hour training session 43). Building on that approach, OES modified an EHR system to encourage providers to use language that presumed the patient would vaccinate (for example, “It is time for your X shot today”). The change was part of a suite of modifications to the EHR system designed to make it easier for providers to recommend and order vaccines. Subsequent conversations with providers in the OES evaluation indicated that many did not actually use the presumptive language that was suggested. 24 This implementation information is invaluable for informing the design of future interventions, which might try alternative communication strategies or use intensive training.

Lesson 4

The final takeaway is that leveraging existing vaccination administration systems to support randomized evaluations can make evidence building easier and enable practitioners to tweak vaccine programs for maximum effectiveness.

The OES vaccination portfolio demonstrates the value of working within vaccination administration systems that can support randomized evaluations. 44 These studies were conducted quickly (often within a single influenza season) and at low cost by making behaviorally informed design changes to the content or delivery schedule of existing communication programs, which then delivered variants to randomly selected recipients through the existing systems. OES projects show that randomized evaluation can be embedded in a variety of systems with differing data capabilities, even within complex administrative systems ranging from a city department of health to a regional Veterans Affairs health care system. A system need not be specially designed for randomized controlled trials to enable randomized evaluations. It would be particularly easy to evaluate vaccination strategies on a national scale if there were a single federal immunization registry that recorded the vaccination status of every individual or if existing local immunization registries were standardized, which would enable the identification and random assignment of potential vaccination recipients to interventions.
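The core step that an existing system needs to support is random assignment of recipients to message variants. The sketch below shows one simple way this could be done with a seeded shuffle; the function name, the recipient identifiers, and the arm labels are hypothetical, and this is not a description of how OES or its collaborators implemented assignment.

```python
import random
from collections import Counter


def assign_arms(recipient_ids, arms, seed):
    """Randomly assign each recipient to one arm, keeping arm sizes balanced.

    A fixed seed makes the assignment reproducible for later auditing.
    """
    rng = random.Random(seed)
    shuffled = list(recipient_ids)
    rng.shuffle(shuffled)
    # Deal shuffled recipients to the arms in turn, like dealing cards.
    return {rid: arms[i % len(arms)] for i, rid in enumerate(shuffled)}


# Hypothetical mailing list and arm labels.
recipients = [f"patient-{n}" for n in range(9)]
assignment = assign_arms(recipients, ["control", "basic", "planning_prompt"], seed=2024)
print(Counter(assignment.values()))
```

The assignment table can then be joined to administrative outcome data, such as immunization registry records, to compare vaccination rates across arms.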

The OES evaluations measured outcomes at low cost by using existing administrative data, such as that captured by state immunization registries, EHRs, and medical claims databases. The more comprehensive and up-to-date the databases, the more useful they are for measuring outcomes in evaluations. For instance, the availability of real-time data about pediatric vaccinations was crucial for the success of the OES collaboration with the city health department in Evaluation 5 because it facilitated the introduction of up-to-date immunization compliance report cards for schools. In contrast, the Louisiana Department of Health postcard collaboration, Evaluation 4, was complicated by the fact that health care providers are not required to report adult vaccinations.

Conclusion

The success of efforts to combat COVID-19 will depend critically on whether people get vaccinated. Communications are a key tool that governments can use to encourage vaccination. Together, eight randomized evaluations of efforts to increase routine vaccinations show that direct communications may increase vaccination uptake, but effect sizes are small. The small effects imply that such communications are a complement to but not a substitute for vaccination policies and programs that maximize convenience and access—for example, the widespread availability of free vaccinations, perhaps with incentives or mandates.

It is worth considering how the context of COVID-19 vaccinations may differ from the context for influenza and other routine vaccinations. Communications that increase the uptake of influenza and other common vaccines typically do so by reminding people who might otherwise forget to get vaccinated and by making it easier for them to follow through on existing intentions. 6 One review described this as “leveraging, but not trying to change, what people think and feel.” 6 These interventions are typically deployed in situations where vaccine supply exceeds demand.

Reported increases in vaccine hesitancy and resistance in recent years will likely create new challenges. Initial demand for COVID-19 vaccinations in the United States exceeded supply, but by the beginning of 2022, the situation had reversed, and hesitancy and resistance to vaccination were reported at home and abroad. 45 Whatever the specific challenges facing continuing COVID-19 vaccination campaigns, the OES evaluations show how vaccination uptake interventions can be rapidly and rigorously evaluated at a large scale. Planning for these evaluations now and deploying them soon will allow for the collection of much-needed evidence about how best to apply communications and other interventions as part of current and future vaccination efforts.

Supplemental Material

Supplemental material, sj-pdf-1-bsx-10.1177_23794607231192690 for Using communication to boost vaccination: Lessons for COVID-19 from evaluations of eight large-scale programs to promote routine vaccinations by Heather Barry Kappes, Mattie Toma, Rekha Balu, Russ Burnett, Nuole Chen, Rebecca Johnson, Jessica Leight, Saad B. Omer, Elana Safran, Mary Steffel, Kris-Stella Trump, David Yokum and Pompa Debroy in Behavioral Science & Policy

Footnotes

author affiliation

Kappes: U.S. General Services Administration’s Office of Evaluation Sciences and London School of Economics and Political Science. Toma: U.S. General Services Administration’s Office of Evaluation Sciences and Harvard University. Balu: U.S. General Services Administration’s Office of Evaluation Sciences and MDRC. Burnett: U.S. General Services Administration’s Office of Evaluation Sciences. Chen: U.S. General Services Administration’s Office of Evaluation Sciences and University of Illinois at Urbana-Champaign. Johnson: U.S. General Services Administration’s Office of Evaluation Sciences and Dartmouth College. Leight: U.S. General Services Administration’s Office of Evaluation Sciences and International Food Policy Research Institute. Omer: U.S. General Services Administration’s Office of Evaluation Sciences and Yale Institute for Global Health. Safran: U.S. General Services Administration’s Office of Evaluation Sciences. Steffel: U.S. General Services Administration’s Office of Evaluation Sciences and Northeastern University. Trump: U.S. General Services Administration’s Office of Evaluation Sciences and University of Memphis. Yokum: U.S. General Services Administration’s Office of Evaluation Sciences and Brown University. Debroy: U.S. General Services Administration’s Office of Evaluation Sciences. Corresponding author’s email: pompa.debroy@gsa.gov.

author note

This work was funded in part by the National Vaccine Program Office and the Laura and John Arnold Foundation. We thank the many federal agencies, collaborators, academic affiliates, and the Office of Evaluation Sciences (OES) team members involved in developing and implementing the OES vaccination portfolio and the eight randomized evaluations referenced. A list of some of these collaborators is in the Supplemental Material. We granted unlimited and unrestricted rights to the General Services Administration to use and reproduce all materials in connection with the authorship.

References
