Abstract

In October 2022, Elon Musk took over Twitter. Although conservatives cheered the takeover, progressives decried it as dangerous for democracy. Despite scholarly interest in Twitter, little is known about the impact of “old” Twitter’s policies on the information environment, making it difficult to speculate about Musk’s effects. The authors begin to address this gap through an analysis of 245,020 tweets collected before and after Twitter suspended eight accounts calling for state audits of the 2020 presidential election results. In this analysis of message amplifiers, or accounts receiving 200 or more retweets, and message drivers, or top-ranked accounts, no evidence is found that the Twitter ban improved the ideas or the quality of information shared about the election, nor did it dramatically change who posted about the audit. The authors conclude with a discussion of the implications of these findings for future research on Twitter under Musk’s control.

After purchasing Twitter on October 27, 2022, Elon Musk tweeted “the bird is freed.” His tweet, and purchase of Twitter, was welcomed by Republicans, who had long criticized the platform for censoring conservative viewpoints. Senator Ted Cruz (R-TX) called Musk’s Twitter takeover “one of the most significant developments for free speech in modern times.” Marjorie Taylor Greene (R-GA) tweeted “FREEDOM OF SPEECH!!!” later adding “We are winning” (Popl 2022). Plenty of other conservatives agreed, flooding Twitter with pro-Musk memes (Miller 2022). Democrats were less thrilled with the takeover. Senator Elizabeth Warren (D-MA) called the deal “dangerous for our democracy.” She added, “Billionaires like Elon Musk play by a different set of rules than everyone else, accumulating power for their own gain” (Halaschak 2022). Journalists and pundits alike point to the recent, sometimes temporary, suspension of several mainstream journalists’ Twitter accounts as evidence of Musk’s desire to curtail speech that does not align with his brand of conservative politics (Peters 2022).

All the attention given to Twitter, its owners, and its policies is not surprising. Politicians, governmental offices, and public agencies have relied on Twitter for nearly a decade to quickly communicate everything from perspectives on policy proposals to information regarding road closures and local emergencies (Crudele 2022; Pattison-Gordon 2022). This is in large part because the platform reaches a healthy swath of the American public (23 percent of U.S. adults), many of whom use Twitter to quickly access news and increase their understanding of current events (Aslam 2022; Odabas 2022). Although Musk’s Twitter moves are likely to continue to make headlines, scholars should be careful in assessing the effects of his actions on the platform’s information environment. Specifically, before waxing nostalgic about the Twitter of yore, it is worthwhile to assess the relative effectiveness of policies, such as those involving deplatforming, under the old regime on the information environment, which, despite broad scholarly interest in Twitter and the quality of information on it, we know little about.

To examine whether deplatforming before the Musk takeover appears to influence the information environment around an issue, we analyzed tweets before and after Twitter banned eight accounts calling for the audit of the 2020 presidential election results in Maricopa County, Arizona, as well as in other states Trump lost (referred to hereafter as the Arizona audit accounts). The permanent suspension of the accounts occurred on July 27, 2021, and made national news as politicians on both sides of the aisle responded. Although Democrats praised Twitter for striking another blow against misinformation, Republicans decried the move as more evidence of censorship perpetrated by Democrats in collusion with big tech (Schwenk 2021). Here, we analyze the relationship between deplatforming and who broadcasts information and the quality of information they share. We assume that accounts engaged in discussions about the Arizona audit were unaware of the impending ban. That is, the account bans function as a quasi-exogenous shock to individuals participating in the conversation, which allows us to examine the impact of the ban on the Twitter information environment surrounding the Arizona audit. Although we are not able to infer causality, we are able to paint a more complete picture of how deplatforming shaped an issue information environment before Musk took over Twitter, providing critical contextual information for assessing the impact of current or future suspensions.

We address three research questions: Does banning accounts on Twitter change who drives the conversation around the Arizona audit? Does banning accounts on Twitter change the kinds of arguments accounts make about the Arizona audit? Does banning accounts on Twitter affect the type and quality of information shared about the audit? We answer these three questions through an examination of two different kinds of accounts: political message amplifiers, or accounts that receive 200 or more retweets in the sample, and political message drivers, or accounts that rank among the 10 most frequent posters on each day in the sample. We find that the Twitter ban does little to improve the information environment around the Arizona audit. The ban does not appear to effectively change the kinds of ideas that are amplified on Twitter about the Arizona audit, nor does it appear to affect who posts about the Arizona audit. In fact, two accounts not only sent the majority of the tweets on each of the days in the sample but also remained steadfast in their support of the lie that the election was stolen from Donald Trump and that the fraud was being covered up by Democrats. We conclude the paper with a discussion of the implications of our findings for future research on Twitter under Musk’s control.

Political Influencers and the Information They Share before and after Bans

Social scientists have long been interested in who shapes political discourse in virtual forums. Much attention has focused on political influencers, which are also known as “opinion leaders,” “hashtag entrepreneurs,” “influentials,” “initiators,” “opinion brokers,” “networked gatekeepers,” and “crowd-sourced elites,” or the accounts that potentially alter how users think and talk about issues on platforms such as Reddit, Twitter, and Facebook (Dubois and Gaffney 2014; Kermani and Adham 2021; Sunstein 2017; Valente and Davis 1999). Not surprisingly, social scientists emphasize how political influencers amplify ideas and the relative reach of these accounts. Using metrics such as retweets, mentions, and follows as well as centrality measures such as PageRank and betweenness centrality, the extant research assesses how these accounts, which we call message amplifiers, influence the course and content of a conversation (e.g., Dubois and Gaffney 2014; Jackson and Foucault Welles 2016). For example, Stewart et al. (2017) found that message amplifiers use hashtags to influence framing contests and to channel how information flows on Twitter. Likewise, Meraz and Papacharissi (2013) found that message amplifiers, measured as accounts with high betweenness centrality scores, are well positioned to cross over between network clusters, share information, and influence the framing in both.

We know far less, however, about message drivers, or accounts that frequently post on a digital forum relative to a political issue or event but do not necessarily have large numbers of followers. There is reason to believe that these influencers also are important to understanding the information environment around political issues, at least on Twitter. First, scholars find that there are different ways in which an account, and its content, may become visible (Meraz and Papacharissi 2013). Rohlinger, Williams, and Teek (2020), for example, found that, after school shootings, accounts with local expertise (e.g., local journalists and students) and seemingly credible information (e.g., trolls posing as eyewitnesses) have more standing on Twitter and, subsequently, get far more attention than those of well-known activists, celebrities, or gun organizations. Likewise, and more relevant here, scholars find that accounts which send large numbers of tweets occasionally send posts that get a lot of attention. In analyses of message drivers, research reveals that accounts with the highest number of original outgoing tweets sometimes have a small overlap with accounts that also received the highest number of retweets (Boyraz, Krishnan, and Catona 2015; LeFebvre and Armstrong 2018). In short, high-tweeting accounts may be able to tap into visibility, regardless of their follower numbers, and shape political discourse around an issue.

Similarly, we do not know if there are substantive differences in the quality of information message amplifiers and message drivers share. To be sure, there are numerous studies that assess the general quality of information in digital environments as well as how it spreads. Social scientists studying mis- and disinformation routinely find that low-quality information is pervasive on platforms such as Facebook and Twitter (Allcott, Gentzkow, and Yu 2019; Bessi et al. 2015; Ferrara 2017; Ross and Rivers 2018), that mis- and disinformation spread faster and further than factually accurate information (Del Vicario et al. 2016), and that efforts to correct bad information do not work as well as we would like (Sangalang, Ophir, and Cappella 2019; Scheufele and Krause 2019; Thorson 2016; Yang, Torres-Lugo, and Menczer 2020). We know, for example, that conspiracy stories thrive in some social media communities because platforms make group homogenization easy (Del Vicario et al. 2016). What is missing in the research is whether message amplifiers and message drivers play similar (or different) roles in the spreading of low-quality information that undergirds some conversations.

We would expect message amplifiers and message drivers to play different roles in Twitter conversations because they are often responding to different incentives. In his research, Meyer (1995) found that “cultural elites,” which included celebrities, used mass media to build a following and reputation with an audience, which they leveraged into other opportunities such as additional jobs and appearances. Consequently, when speaking on behalf of political causes, cultural elites often “watered down” their positions and opinions in an effort not to offend audience members, as this could negatively affect their future revenue flows (Meyer 1995). It is reasonable to assume that this dynamic is similar on social media platforms such as Twitter. Message amplifiers, here often visibly verified cultural elites, use social media to grow their online audiences and potential influence while maintaining reputations that will further their professional aspirations. To this end, message amplifiers, particularly ones with national profiles, may signal support for individuals, issues, and events, but avoid the firebrand rhetoric that could come back to haunt them when doing so. Similarly, message amplifiers may also be reticent to share news stories with their original posts. News outlets often use dramatic or inflammatory language in their headlines to entice audiences to click over to their stories (Scacco and Muddiman 2016); the exact kind of language message amplifiers may seek to avoid.

This is probably not true of message drivers, who are less interested in how they are perceived by a broader audience and more concerned about the value of engaging on digital forums and directly affecting the course and content of the conversation. Schradie (2019), for example, found that conservatives in particular spent hours posting content to Facebook and Twitter because they saw it as their responsibility to get the “truth” about politics out into the world. Similarly, Rohlinger (2022) found that some users posted more than 100 tweets a day in response to the school shooting in Parkland, Florida, most of which used hashtags related to the shooting to share other, conspiracy-minded content. Given the extant research, which indicates that the most widely circulated falsehoods also tend to promote conservative points of view (Garrett and Bond 2021), conservative message drivers are more likely to share low-quality news stories with their posts.

Finally, we know very little about how account suspensions affect the course and content of a conversation. To our knowledge, there is only one study exploring the influence of Twitter bans on political discourse. Jhaver et al. (2021) examined the effect of Twitter bans on the number of conversations about the deplatformed account, the spread of offensive ideas held by the deplatformed account, and the overall activity of supporters of the deplatformed account. They found that deplatforming can be very fruitful in terms of removing an account from a larger conversation and reducing its influence on discourse. Although this is a critically important first step, it offers little insight into how account suspensions might influence the kinds of political influencers weighing in on an issue, the kinds of arguments they make, or the quality of information they share with others.

Again, we would expect message amplifiers to behave differently from message drivers in the wake of account bans. Message amplifiers, who might be anxious about being suspended themselves, may be more cautious in terms of what and how they tweet after a Twitter suspension. For example, accounts that signaled support for misinformation or conspiracy theories may adjust their arguments and the quality of the information they share after a suspension to help make sure their accounts stay on the right side of Twitter’s policies. Message drivers, however, may be much less concerned with the prospects of a suspension, and they may even see it as an inevitable outcome of their online work (Rohlinger 2022; Schradie 2019). These accounts are more likely to maintain their tweeting behavior, which may include sharing misinformation and conspiracy theories.

Data and Methods

To explore whether a Twitter ban changed the information circulated about the 2020 presidential election and the Arizona audit, we used DiscoverText software and an academic developer’s license to scrape 245,020 tweets between July 17, 2021, and August 5, 2021. On July 27, 2021, Twitter suspended eight accounts related to the election audits, citing platform manipulation and spam. The suspension included the official account associated with the audit (@ArizonaAudit), an account known for spreading misinformation (@AuditWarRoom), and six AuditWarRoom spinoff accounts targeting additional states where Republicans contested the election (@AuditArizona, @AuditMichigan, @AuditWisconsin, @AuditNevada, @AuditGeorgia, and @AuditPennsylvania). The ban was sudden and unexpected, meaning that it can be understood as a quasi-exogenous “treatment” that may or may not influence whether and how individuals engage with these topics on Twitter. We used the keywords “Arizona audit” for our search parameters to collect tweets. As two of the suspended accounts directly concerned the Arizona audit and the remaining accounts were spinoffs inspired by the Arizona audit, the keywords “Arizona audit” were selected to capture discourse likely to be about the audit and reactions to the account suspensions.

We used DiscoverText to retroactively scrape and organize the data through Twitter’s application programming interface version 2, which stores all data and metadata older than 14 days. DiscoverText is in-browser software developed for researchers to help query Twitter’s application programming interfaces and then organize and visualize data for analysis. The program collects and stores data in “archives,” which can be organized into smaller samples called “buckets.” The use of DiscoverText has clear benefits. DiscoverText ensures research compliance with Twitter’s terms of service. To this end, it automatically removes data that has been suspended or removed from Twitter and reposts content when those accounts are no longer suspended. Additionally, it does not allow researchers to export a data set from DiscoverText, only Twitter identification numbers, to ensure that scholars remain in compliance with Twitter’s developer agreement. 1 The downside of Twitter’s developer agreement is that researchers cannot include the content of suspended or deplatformed accounts in their analyses, which means that our data set does not include content posted by the eight suspended accounts. That said, we are confident that our sampling strategy adequately captures discourse, and potential discourse changes, resulting from the ban. In a separate DiscoverText data collection of 199,946 tweets in advance of the ban using the same keywords, only one AuditWarRoom post received more than 200 retweets in the sample. This suggests that the accounts were a touchstone for debate and helped shape discourse but were not the sole drivers of content about the Arizona audit.

To analyze message amplifiers, we used DiscoverText’s “exact duplicate” function to sort tweets into “groups,” allowing us to identify posts that were retweeted by at least one other account during the 20-day period of interest. We operationalized message amplifiers as accounts that had at least 200 retweets. As there is not a standard metric by which researchers define message amplifiers (Dubois and Blank 2018; Jackson and Foucault Welles 2015; LeFebvre and Armstrong 2018), we selected 200 retweets as the cutoff point. This, we reasoned, allowed us to identify a broad range of accounts that potentially influenced discourse surrounding the Arizona audit and maintain an operable sample size for subsequent coding. A total of 148 accounts met this criterion, and their retweeted posts constitute 55.4 percent of the total sample. Relative to message amplifiers, we explore three research questions: Does banning accounts on Twitter change who shapes the conversation around the 2020 presidential election and Arizona audit? Does banning accounts on Twitter change the kinds of arguments message amplifiers make about the 2020 presidential election and Arizona audit? Does banning accounts on Twitter affect the type and quality of information shared by message amplifiers?
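As an illustration only, the 200-retweet operationalization can be sketched in Python. This is not DiscoverText's "exact duplicate" function; it is a minimal stand-in that assumes each retweet is available as a record with hypothetical `original_author` and `text` fields, and that the cutoff is applied to individual posts.

```python
from collections import Counter

RETWEET_THRESHOLD = 200  # cutoff reported in the text


def message_amplifiers(retweets, threshold=RETWEET_THRESHOLD):
    """Map each amplifier account to the retweet count of its most retweeted post.

    `retweets` is an iterable of dicts with the hypothetical keys
    "original_author" and "text"; identical (author, text) pairs are treated
    as retweets of the same post, mimicking exact-duplicate grouping.
    """
    per_post = Counter((r["original_author"], r["text"]) for r in retweets)
    amplifiers = {}
    for (author, _text), count in per_post.items():
        if count >= threshold:
            amplifiers[author] = max(count, amplifiers.get(author, 0))
    return amplifiers


# Toy data: three invented accounts with 250, 199, and 200 retweets.
sample = (
    [{"original_author": "acct_a", "text": "post one"}] * 250
    + [{"original_author": "acct_b", "text": "post two"}] * 199
    + [{"original_author": "acct_c", "text": "post three"}] * 200
)
result = message_amplifiers(sample)
```

In this toy data, only `acct_a` and `acct_c` meet the threshold; `acct_b` falls one retweet short.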

To assess whether the ban changed who shapes discourse, we analyzed the biographical descriptions, profile pictures, and emojis associated with the 148 accounts. In addition to coding whether the account was verified or not, we analyzed how account holders identified themselves relative to the causes and issues they referenced. So doing generated 108 separate codes, which were inductively coded by the lead researcher, that captured each account’s stated current or former occupation (e.g., elected officials, academics, data analysts, teachers, media professionals, authors, engineers); political and partisan alignment (e.g., Democrat, Republican, independent, Libertarian, Trump supporter); references to political engagement (e.g., engaged citizen, democracy savage, citizen legislator); references to America, democracy, and patriotism; other affinities (e.g., dog lover, cat lover, music lover, bourbon lover); geographic mentions (local, state, region, and country, as well as mentions of rural and urban attachments); mentions of conservative, progressive, and QAnon causes; and support for particular individuals and groups, among others. Additionally, given the increased importance of visual indicators to signaling political orientations and allegiances (Gerbaudo 2015; Kariryaa et al. 2022), the lead researcher inductively coded 69 types of emojis included in the biography of each account as well as political, social, and religious symbols included in the account profile picture and banner (another 24 categories). 
These inductive categories were collapsed, when possible, into more meaningful categories (e.g., particular offices and types of political candidates were collapsed into a single political officeholder and candidate category) so that chi-square (>30 cases) and Fisher’s exact (<30 cases) tests could be used to assess whether there was a nonrandom relationship between the dependent variable (e.g., timing of the post) and independent variables (e.g., political candidate or politicians).
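The choice between the two tests can be sketched for the simplest case, a 2 × 2 table (e.g., a profile characteristic present or absent, before or after the ban). The sketch below uses only the Python standard library and rests on stated assumptions: the chi-square statistic is computed without a continuity correction, all row and column totals are nonzero, and a total of exactly 30 cases, which the text does not address, is routed to chi-square. The function names are illustrative and do not describe the software used in the study.

```python
from math import comb, erfc, sqrt


def chi_square_2x2(a, b, c, d):
    """Pearson chi-square for the table [[a, b], [c, d]]; returns (statistic, p)."""
    n = a + b + c + d
    row1, row2, col1, col2 = a + b, c + d, a + c, b + d
    stat = n * (a * d - b * c) ** 2 / (row1 * row2 * col1 * col2)
    # With 1 degree of freedom the upper-tail probability is erfc(sqrt(x / 2)).
    return stat, erfc(sqrt(stat / 2))


def fisher_exact_2x2(a, b, c, d):
    """Two-sided Fisher's exact p-value for the table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    n = row1 + row2

    def prob(x):
        # Hypergeometric probability of x in the top-left cell, margins fixed.
        return comb(row1, x) * comb(row2, col1 - x) / comb(n, col1)

    observed = prob(a)
    support = range(max(0, col1 - row2), min(row1, col1) + 1)
    # Sum every table that is no more likely than the observed one.
    return sum(p for p in map(prob, support) if p <= observed * (1 + 1e-9))


def association_test(a, b, c, d, small_sample=30):
    """Pick Fisher's exact test for small tables and chi-square otherwise."""
    if a + b + c + d < small_sample:
        return "fisher", fisher_exact_2x2(a, b, c, d)
    return "chi-square", chi_square_2x2(a, b, c, d)[1]


small = association_test(3, 1, 1, 3)      # 8 cases
large = association_test(10, 20, 20, 10)  # 60 cases
```

The counts in the two example calls are invented for illustration and are not drawn from the study's tables.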

To assess whether and how the Twitter suspension changed the kinds of claims message amplifiers made about the Arizona audit and the presidential election more generally, the lead researcher and an undergraduate research assistant analyzed whether the tweet supported or opposed the audit as well as the general argument(s) referenced in the tweet. The lead researcher read all of the tweets, inductively coded the content of each in NVivo and then created a codebook that captured the most prominent arguments in the sample supporting and opposing the audit and the election results. This process generated 19 mutually exclusive codes. An “unclear” category was included to capture tweets that were neutral or (1) reported an action or event related to the audit but did not express supportive or oppositional sentiment regarding the audit or (2) shared a neutral headline related to the audit but offered no comment on the headline or audit. An example of the former would be an account remarking that they thought the audit might not be complete by the promised July deadline. An example of the latter would be an account sharing a news story with a headline to that effect with no original content in the tweet. All coding was dichotomous so that we could capture the presence of more than one type of argument, if applicable.

Finally, we coded what information message amplifiers shared and, if it was a news source, the quality of this information. The kinds of information account holders shared included legal documents; materials for political parties and candidates; interest group reports; government documents; screenshots of fund-raising materials; their own and others’ tweets; pictures of different aspects of the audit, Trump supporters, and rallies; Biden and other well-known Democrats; and blogs. Some individuals did not include additional material with their tweets, which we coded as “nothing shared.” If the account holder shared a news source, we categorized it according to Ad Fontes Media’s (2022) media bias chart. This media bias chart, which is updated frequently, categorizes outlets into one of seven bias types: most extreme left, hyperpartisan left, skews left, middle, skews right, hyperpartisan right, and most extreme right. Ad Fontes Media employs 40 analysts to categorize outlet content. Each article or episode is rated by three analysts representing the political spectrum on the basis of self-reported political views (left, right, and center). Reliability scores for coding between the lead researcher and undergraduate research assistant relative to the claims and information shared were consistently high (κ = .91).
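For readers unfamiliar with the reliability statistic, a minimal sketch follows. It assumes the reported κ is Cohen's kappa for two coders assigning one label per tweet, which the text does not state explicitly, and the example codes are invented.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two equal-length sequences of categorical codes."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must rate the same non-empty set of items")
    n = len(coder_a)
    # Share of items on which the two coders agree.
    observed = sum(x == y for x, y in zip(coder_a, coder_b)) / n
    # Agreement expected by chance, from each coder's marginal frequencies.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if expected == 1:
        return 1.0  # both coders used a single identical code throughout
    return (observed - expected) / (1 - expected)


# Invented dichotomous codes (1 = argument present, 0 = absent) for 10 tweets.
lead_researcher = [1, 1, 0, 0, 1, 0, 1, 0, 1, 1]
research_assistant = [1, 1, 0, 0, 1, 0, 1, 1, 1, 1]
kappa = cohens_kappa(lead_researcher, research_assistant)
```

Here the coders agree on 9 of 10 items (0.90) against a chance agreement of 0.54, so κ = 0.36 / 0.46, or roughly .78.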

We also explore whether the Twitter ban changed the accounts posting the most tweets (message drivers) or the kinds of content they posted. Here, we used DiscoverText to isolate tweets by day and exported the tweets into a “bucket.” Then, using the “Top Meta Explorer” option, we identified the top 10 posters for each of the 20 days and analyzed the content of their tweets. Because, to our knowledge, message driver is a novel concept, we included the top 10 posters from each day (on one day the top 11 because of a tie for 10th place) so that we could better assess the range of posting behavior during the time period of interest and identify what it means to be a highly active poster in the sample. The lead researcher coded each account according to (1) whether it supported or opposed the audit, (2) the types of arguments made in opposition or support of the audit, and (3) whether additional material was shared with their post. In terms of the latter, the lead researcher coded tweets supporting the audit or big lie as supportive of Trump, supportive of Republicans, opposed to Democrats, or supporting or sharing a conspiracy theory. Tweets opposing the audit were coded as opposed to Trump, opposed to Republicans, supportive of Democrats, or supporting or sharing a conspiracy. We conducted a reliability check of the coding using 20 percent of the sample and reliability scores were high (κ = .94).
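The daily ranking, including the tie at 10th place, can be sketched as follows. This is an illustrative Python approximation rather than DiscoverText's "Top Meta Explorer"; the `date` and `author` field names are hypothetical.

```python
from collections import Counter, defaultdict


def top_posters_by_day(tweets, k=10):
    """Return {day: [(account, tweet_count), ...]} for the top-k posters per day.

    Accounts tied with the k-th ranked account are kept, so a day can yield
    more than k accounts (e.g., 11 when two accounts tie for 10th place).
    """
    by_day = defaultdict(Counter)
    for tweet in tweets:
        by_day[tweet["date"]][tweet["author"]] += 1

    result = {}
    for day, counts in by_day.items():
        ranked = counts.most_common()
        if len(ranked) > k:
            cutoff = ranked[k - 1][1]  # tweet count of the k-th ranked account
            ranked = [(acct, n) for acct, n in ranked if n >= cutoff]
        result[day] = ranked
    return result


# Toy day with k=2: "a" posts 3 times, "b" and "c" tie at 2, "d" posts once.
toy = [{"date": "2021-07-27", "author": a} for a in "aaabbccd"]
top = top_posters_by_day(toy, k=2)
```

With `k=2`, the toy day returns three accounts because `b` and `c` tie for second place.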

We supplemented this analysis with information from Bot Sentinel, a free, nonpartisan platform that uses artificial intelligence and machine learning to score accounts from 0 percent to 100 percent. The higher the score on Bot Sentinel, the more likely the account engages in bad behavior such as “harassment, toxic trolling, or uses deceptive tactics engineered to cause division and chaos” (https://botsentinel.com/info/about). Account rating categories, which are based on an analysis of several hundred tweets associated with the account, include normal (a score from 0 percent to 24 percent), satisfactory (a score from 25 percent to 49 percent), disruptive (a score from 50 percent to 74 percent), and problematic (a score from 75 percent to 100 percent). The Bot Sentinel scores provide additional context regarding an account’s Twitter behavior and, specifically, allow us to assess whether tweets about the audit are consistent with a larger corpus of posts shared.
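The rating bands described above amount to a simple lookup, sketched here in Python. The function is a hypothetical helper, not part of any Bot Sentinel interface, and it assumes scores are whole-number percentages.

```python
def bot_sentinel_category(score):
    """Map a 0-100 score to the rating bands described in the text."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score < 25:
        return "normal"        # 0 to 24 percent
    if score < 50:
        return "satisfactory"  # 25 to 49 percent
    if score < 75:
        return "disruptive"    # 50 to 74 percent
    return "problematic"       # 75 to 100 percent


labels = [bot_sentinel_category(s) for s in (0, 24, 25, 49, 50, 74, 75, 100)]
```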

Political Influencers and the Information Environment

Message Amplifiers

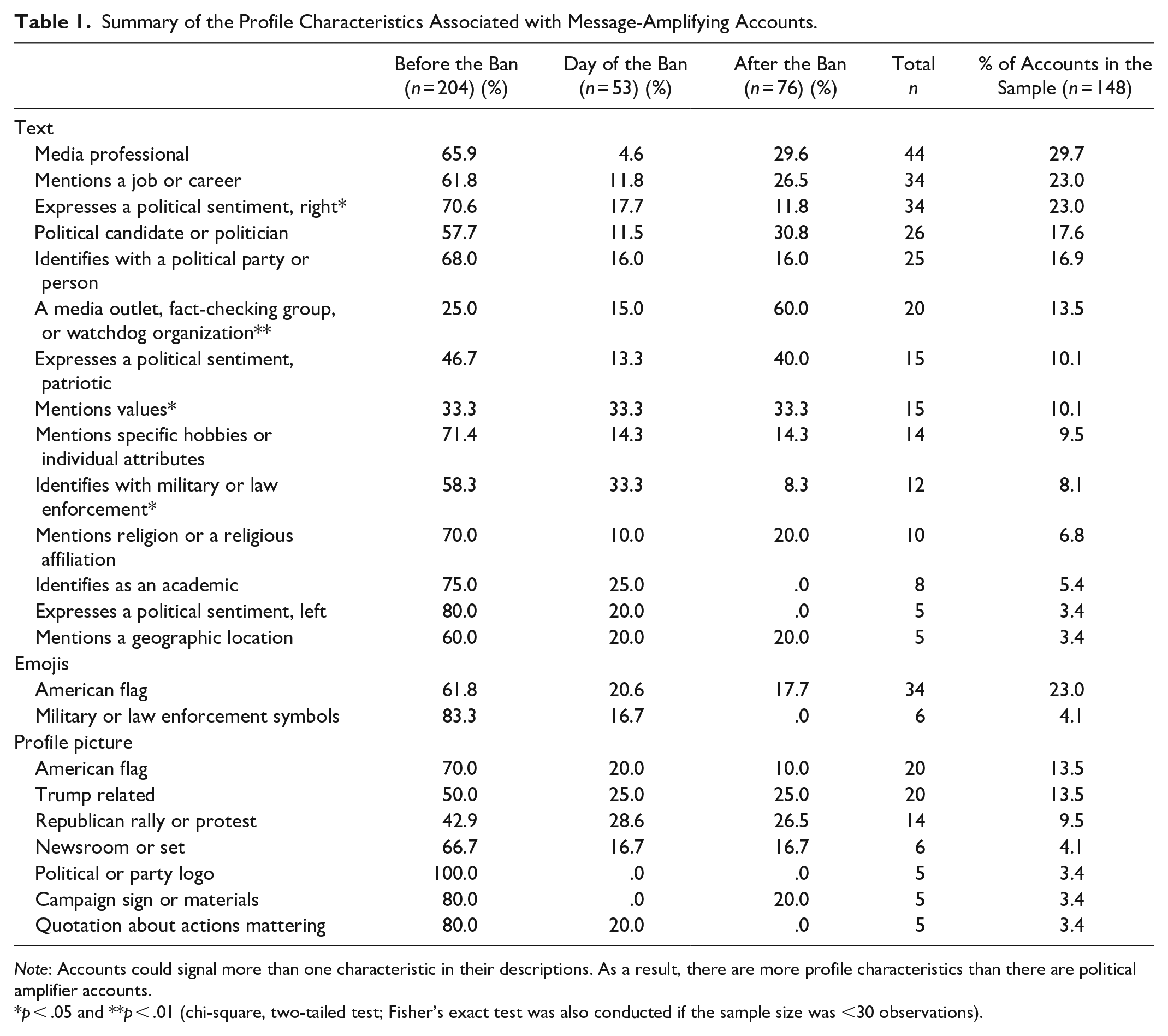

Table 1 summarizes the profile characteristics mentioned at least five times in message amplifiers’ accounts. The table provides the frequency of relevant information, including emojis, provided in the biography and profile picture before the ban, the day of the ban, and after the ban, and notes whether there is a significant difference in the distribution of each profile characteristic. Table 1 indicates that overall, the kinds of accounts that get the most retweets are largely stable before and after the ban. However, there are two significant differences worth noting. First, accounts expressing at least one conservative political sentiment were retweeted more often before the ban. Second, media outlets, fact-checking groups, and watchdog organizations were retweeted more often after the ban. At first glance, this suggests that the ban influenced who shaped the conversation about the audit, and, given the significant increase in media outlets, watchdog groups, and fact-checking organizations, potentially for the better.

Summary of the Profile Characteristics Associated with Message-Amplifying Accounts.

Note: Accounts could signal more than one characteristic in their descriptions. As a result, there are more profile characteristics than there are political amplifier accounts.

*p < .05 and **p < .01 (chi-square, two-tailed test; Fisher’s exact test was also conducted if the sample size was <30 observations).

Of course, not all the accounts are retweeted at similar rates. Table 2 shows the frequency of retweets by quartiles for each of the profile characteristics. Here, we can see whether there are significant differences in the distribution of the profile characteristics among posts retweeted 201 to 278 times, 279 to 447 times, 448 to 950 times, and 951 to 23,831 times. Table 2 indicates that there is only one significant difference. Accounts with 448 to 950 retweets expressed a right-leaning political sentiment more often in the text description than accounts in the other three quartiles. In short, there are almost no differences in the profile characteristics of accounts receiving the most and the fewest retweets. We were surprised by the lack of variation in profile pictures and emoji use, particularly regarding the use of the American flag, which is a mainstay of conservative politics (Kariryaa et al. 2022). This may just be a reflection of our sample, but it may also reflect the efforts of Democrats to “reclaim” the flag and its symbolism from Republicans (Harrison 2020).
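The quartile ranges reported above can be expressed as a small binning helper. The sketch below is illustrative Python; the cut points are copied from the text rather than recomputed from data, and the function name is hypothetical.

```python
from bisect import bisect_left

# Upper edges of quartiles 1-3 as reported in the text; quartile 4 runs to 23,831.
UPPER_BOUNDS = [278, 447, 950]


def retweet_quartile(retweets):
    """Return the quartile (1-4) for a post's retweet count, using the reported ranges."""
    if retweets < 201:
        raise ValueError("the reported quartiles begin at 201 retweets")
    return bisect_left(UPPER_BOUNDS, retweets) + 1


quartiles = [retweet_quartile(n) for n in (201, 278, 279, 447, 448, 950, 951, 23831)]
```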

Summary of the Profile Characteristics Associated with Message Amplifiers by Quartile.

Note: Accounts could signal more than one characteristic in their descriptions. As a result, there are more profile characteristics than there are accounts. Q = quartile.

*p < .05 (chi-square, two-tailed test; Fisher’s exact test was also conducted if the sample size was <30 observations).

Table 3 takes a closer look at the accounts to assess whose ideas were amplified and how this changed before and after the ban. Table 3 shows the frequency of the type (e.g., a media outlet, a media professional, an officeholder) and orientation (e.g., liberal/left, moderate/middle, or conservative/right on the basis of the Ad Fontes media bias chart, which was collapsed here for parsimony) of the account as well as the number of retweets each account type received before, the day of, and after the ban. Pursuant to our agreement with Twitter, we report only the data of public figures, entities, and organizations, which is why the total number of tweets is slightly less than the number reported above. 2 Table 3 reveals that the ban seems to be a mixed bag in terms of its ability to improve information about the Arizona audit. On the one hand, more moderate, or what Ad Fontes labels “middle bias” outlets, media professionals, and a watchdog organization are retweeted relatively more often after the ban. Arguably, this signals there may be improvement in the quality of discourse, as the quality of information is more reliable. On the other hand, it is clear that partisans drive the discussion over the audit. Before the ban, liberal/left and conservative/right accounts were retweeted at similar rates overall. Liberal/left account retweets make up 49.8 percent of the sample and conservative/right accounts make up 42.9 percent of the sample before the ban. This changes the day of and after the ban. Conservative/right accounts dominate discourse the day of the ban and constitute the majority of retweets after the ban. More important, there is a distinct shift in the types of conservative/right accounts that are amplified. Before the ban, Republican officeholders, candidates, and the party as well as media professionals are the most retweeted in this category. 
Although they maintain a presence, the day of and after the ban, there is a sharp increase in the “other” category, which consists of Trump loyalists, a Trump spokesperson, and an activist advocating for “election integrity” in Wisconsin (see the Supplement). In short, the Twitter ban does not appear to improve the kinds of accounts that amplify information about the Arizona audit.

Summary of Public-Oriented, Message-Amplifying Accounts.

Note: Because of rounding, percentages may total more than 100 percent. Pursuant to our agreement with Twitter, this table reflects only public accounts. The liberal/left, middle/moderate, and conservative/right categories are based on the Ad Fontes media bias chart. RT = retweet.

The ban, however, does seem to have shaped the kinds of arguments made before and after the ban. Table 4 indicates whether message amplifiers generally supported or opposed the audit, and Table 5 summarizes the general argument(s) they made in their tweets. First, Table 4 shows that only tweets expressing opposition to the audit significantly decreased after the ban. The frequency of tweets supporting the Arizona audit remained relatively stable. Second, there is a clear shift in the frequency of the arguments made before and after the ban, particularly among audit supporters (Table 5). Conspiracy theory–based arguments supporting the audit and negative comments about public figures who opposed the audit were significantly more likely to appear in posts before the ban than after, as were tweets supporting Donald Trump. These differences, however, disappear when we look at the frequency of retweets by quartile, suggesting that conspiracy theories and negative comments still are relatively prominent after the ban (Table 6).

Summary of Audit Support and Opposition.

Note: Accounts could make more than one argument in their posts. As a result, there are more argument types than there are political amplifier accounts.

**p < .01 (chi-square, two-tailed test; Fisher’s exact test was also conducted if the sample size was <30 observations).

Audit Support and Opposition before, the Day of, and after the Ban.

Note: Accounts could make more than one argument in their posts. As a result, there are more argument types than there are political amplifier accounts.

*p < .05 and ***p < .001 (chi-square, two-tailed test; Fisher’s exact test was also conducted if the sample size was <30 observations).

Audit Support and Opposition by Quartile.

Note: Accounts could make more than one argument in their posts. As a result, there are more argument types than there are accounts. RT = retweet.

*p < .05 (chi-square, two-tailed test; Fisher’s exact test was also conducted if the sample size was <30 observations).
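The table notes above describe a chi-square test supplemented by Fisher’s exact test when a comparison involves fewer than 30 observations. The following is a minimal sketch of that testing rule for a 2 × 2 table, using only the Python standard library. It is an illustration under stated assumptions, not the analysis code used for this article: the function names, the 2 × 2 layout, the absence of a continuity correction, and the reading of “sample size” as the table total are all assumptions, and the cell counts in the usage lines are made up.

```python
import math

def chi_square_2x2(a, b, c, d):
    """Pearson chi-square (no continuity correction) for the table [[a, b], [c, d]].

    Returns (statistic, two-tailed p value). Assumes every row and column
    total is greater than zero.
    """
    n = a + b + c + d
    row1, row2 = a + b, c + d
    col1, col2 = a + c, b + d
    stat = n * (a * d - b * c) ** 2 / (row1 * row2 * col1 * col2)
    # With 1 degree of freedom, the chi-square survival function
    # reduces to erfc(sqrt(x / 2)).
    return stat, math.erfc(math.sqrt(stat / 2))

def fisher_exact_2x2(a, b, c, d):
    """Two-sided Fisher's exact p value for the table [[a, b], [c, d]].

    Sums the hypergeometric probabilities of all tables with the same
    margins that are no more likely than the observed table.
    """
    row1, row2, col1 = a + b, c + d, a + c
    n = row1 + row2

    def prob(x):
        return math.comb(row1, x) * math.comb(row2, col1 - x) / math.comb(n, col1)

    p_obs = prob(a)
    lo, hi = max(0, col1 - row2), min(row1, col1)
    total = sum(prob(x) for x in range(lo, hi + 1) if prob(x) <= p_obs * (1 + 1e-9))
    return min(1.0, total)

def association_tests(a, b, c, d, small_n=30):
    """Run chi-square always; add Fisher's exact test when the total is below small_n."""
    n = a + b + c + d
    result = {"n": n, "chi2_p": chi_square_2x2(a, b, c, d)[1]}
    if n < small_n:  # assumed reading of "sample size was <30 observations"
        result["fisher_p"] = fisher_exact_2x2(a, b, c, d)
    return result

# Hypothetical counts: rows = before/after the ban, columns = argument present/absent.
print(association_tests(3, 1, 1, 3))      # small table: both tests reported
print(association_tests(30, 10, 10, 30))  # larger table: chi-square only
```

With small cell counts, the chi-square approximation can be unreliable, which is the usual reason for reporting the exact test alongside it.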

A closer analysis of the tweets in the top quartile before, the day of, and after the ban reveals that what changes is how amplifiers talk about the audit. Of the 16 tweets in the top quartile before the ban, six tweets are supportive of the audit, and four of these tweets were from Republican officeholders and candidates who either called for audit expansion or identified co-conspirators in the “stolen” election. For example, Elise Stefanik (1,598 retweets), who is in the top quartile, alerted her followers, “Now that the Biden admin admitted to colluding with Big Tech to censor Americans. Was it the Biden Admin, who asked Big Tech to remove all videos of the duly elected Arizona State Senate’s forensic Audit hearing off their platforms? Patriots see what’s happening!” The other two posts suggest that the Democratic National Committee and RINOs (Republicans in name only) are complicit in the election “stolen” from Trump (2,440 retweets and 1,175 retweets, respectively). Compare this with the top quartile the day of and after the ban. Of the 21 tweets in the top quartile, 12 are supportive of the audit, and four announced that Twitter had banned the audit accounts. The post with the most retweets urged conservatives not to be distracted by the ban and to “follow the Arizona audit results” (George Papadopoulos, 5,529 retweets), but the rest circulated claims that were rooted in or supported conspiracy theories about the 2020 election being stolen. Trump spokesperson Liz Harrington (2,476 retweets), for example, tweeted that Arizona “found 270,000 potential fraudulent ballots . . . in ONE county.” Similarly, a Newsmax reporter whose account was suspended, but who also accounted for three of the 12 most retweeted conservative posts, celebrated the work of the auditors and reminded his followers that Karen Fann (a state senator in Arizona) outlined a plan to decertify the 2020 election (1,095 retweets and 1,046 retweets, respectively). In other words, although the ban changed how political amplifiers talked about the stolen election, it did not strictly reduce the circulation of misleading and incorrect information.

The analysis also reveals that the account bans may cause some public figures, particularly those with seemingly clear professional aspirations, to either move their public focus to less risky topics or to double down on their support for the big lie. To illustrate this, we observe the activity of two public figures who appear in the top quartile of the sample, Elise Stefanik and Wendy Rogers. Elise Stefanik was a vocal supporter of the audit and conspiracy theories about the election before Twitter suspended the accounts. However, Stefanik, the Republican conference chairwoman who has built a powerful fund-raising machine and is looking to run for higher office (Zanona and Orr 2022a), did not comment again on the audit in the wake of the account bans. Instead, she attacked Biden from another angle, tweeting about the “Biden border crisis,” which, she claims, is an “absolute catastrophe” involving more than 19,000 children at the southern U.S. border. As a politician who carefully monitors the shifting winds in Washington, D.C. (Zanona and Orr 2022b), Stefanik arguably has incentives to make sure she keeps her access to mainstream platforms such as Twitter. Compare Stefanik with Arizona state senator Wendy Rogers, who has built a national profile by appealing to white nationalists and calling for violence against her political opponents (Reinhard and Helderman 2022). The day of and after the ban, Rogers helped publicize the account ban, warned followers that Twitter would ban her next, amplified her own activities furthering the audit, and expanded her attacks on Joe Biden. In other words, Rogers did not really alter her tweeting behavior. Arguably, Rogers has little incentive to do so. As she has embraced the extremes of the Republican Party, she has little to lose, and perhaps something to gain in terms of credibility with her supporters, from getting suspended from Twitter.

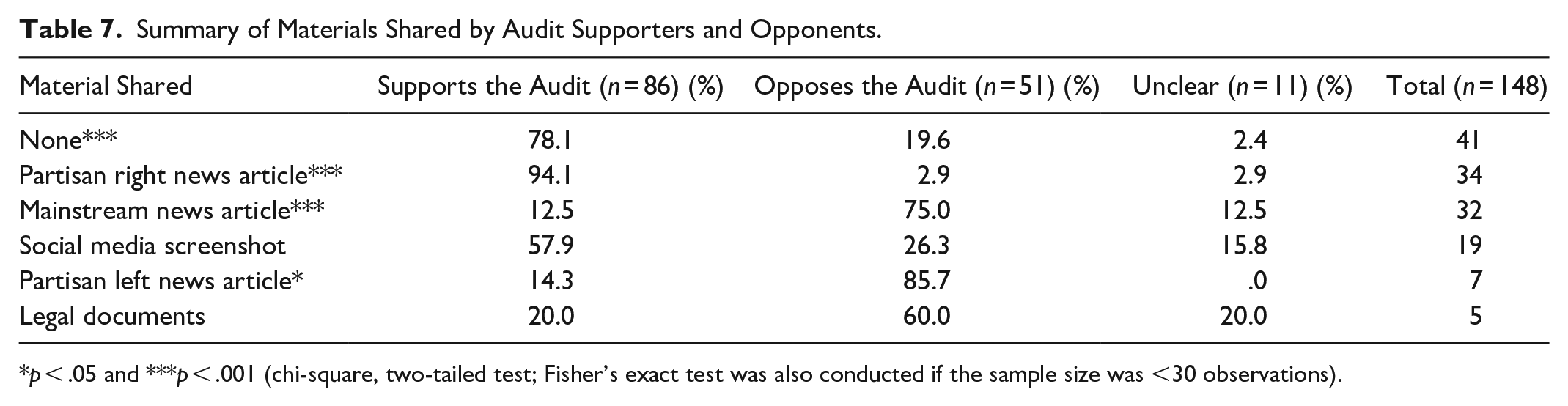

Table 7, which shows the frequency of the type of material shared by support or opposition to the Arizona audit, provides further support for the relative ineffectiveness of the ban. Opponents of the audit are significantly more likely to share mainstream and partisan left news articles, and supporters of the audit are more likely to circulate partisan right news content or not to share content at all. Although this is not particularly surprising, Table 8 indicates that the quality of information shared changes very little before and after the ban. For example, there are no significant differences in the rate at which (largely) supporters shared partisan right news coverage before, the day of, or after the ban. The same is true of audit opponents, who share mainstream and partisan left news sources at similar rates before and after the ban. That said, the accounts sharing partisan right information do not have the most retweeted posts. Table 9, which shows the frequency of the type of material shared by quartile, indicates that nearly half of the accounts sharing partisan right content are only retweeted between 279 and 447 times. The accounts with the most retweets share partisan right news content significantly less often. This suggests that the most influential political amplifiers seem to either imply in their posts that the Arizona audit is necessary because the election was stolen from Trump or share content to that effect, but not both. A close analysis of the tweets indicates that this is the case. For example, the Elise Stefanik quotation mentioned above, which suggests that Biden is implicated in a conspiracy with big tech, does not share additional information. This is also true of the George Papadopoulos tweet warning audit supporters not to be distracted by the account bans.

Summary of Materials Shared by Audit Supporters and Opponents.

*p < .05 and ***p < .001 (chi-square, two-tailed test; Fisher’s exact test was also conducted if the sample size was <30 observations).

Frequency of Materials Shared before, the Day of, and after the Ban.

*p < .05 and **p < .01 (chi-square, two-tailed test; Fisher’s exact test was also conducted if the sample size was <30 observations).

Frequency of Materials Shared by RT Quartile.

Note: RT = retweet.

**p < .01 (chi-square, two-tailed test; Fisher’s exact test was also conducted if the sample size was <30 observations).

Message Drivers

Table 10 summarizes the distribution of the top 10 tweeting accounts supporting and opposing the audit before, the day of, and after Twitter suspended the audit accounts. Recall that a total of 201 accounts are included in the message driver sample. Here, we counted the number of accounts that posted (or reposted) clear arguments supporting the audit, the number of accounts opposing the audit, and the number of accounts in which the argument was unclear because it was a news story, a watchdog post, or the account was private or suspended. Table 10 makes clear that, other than the day of the account suspension, there are consistently more accounts tweeting their support for the Arizona audit. This trend is particularly pronounced among accounts that sent at least 50 tweets on a given day. In fact, only one account opposing the audit sent at least 50 tweets on a given day during the 20-day sample. This is far fewer than the 25 audit-supporting accounts during the same time frame. Accounts supportive of the audit also post more tweets than those opposing the audit. Audit-supporting accounts sent 65.8 percent of the tweets before the suspension, 73.4 percent of the tweets the day of the suspension, and 75.9 percent of the tweets after the suspension. In short, the suspension does not seem to deter audit-supporting accounts from posting.

Distribution of Account Types among Message Drivers.

Note: The total is 201 accounts rather than 200 because one of the days had a tie for the 10th most sent tweets. As one account was supportive of the audit and the other was not supportive of the audit, we left both in the sample.

*p < .05 (chi-square, two-tailed test; Fisher’s exact test was also conducted if the sample size was <30 observations).
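The note above explains why the message driver sample contains 201 rather than 200 accounts: on one day, two accounts tied for the 10th most tweets sent, and both were retained. A minimal sketch of that selection rule follows. It is an illustration only, not the procedure used to build the sample; the function name `top_drivers`, the input format (one author handle per tweet for a single day), and the example handles are hypothetical.

```python
from collections import Counter

def top_drivers(tweet_authors, k=10):
    """Return the k most active accounts for one day, keeping any ties at rank k.

    tweet_authors: iterable with one account handle per tweet sent that day.
    Returns a list of (account, tweet_count) pairs, most active first.
    """
    counts = Counter(tweet_authors)
    # Sort by descending count, then by handle so the order is deterministic.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    if len(ranked) <= k:
        return ranked
    cutoff = ranked[k - 1][1]  # tweet count of the k-th ranked account
    # Keep every account at or above the cutoff, so ties at rank k are retained.
    return [(account, n) for account, n in ranked if n >= cutoff]

# Hypothetical day with a tie for second place when k = 2.
day = ["a", "a", "a", "b", "b", "c", "c", "d"]
print(top_drivers(day, k=2))
```

Under this rule, 20 days with 10 accounts each yield 200 account-days, and a single tie at the cutoff on one day adds one more, which is consistent with the total of 201 reported in the note.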

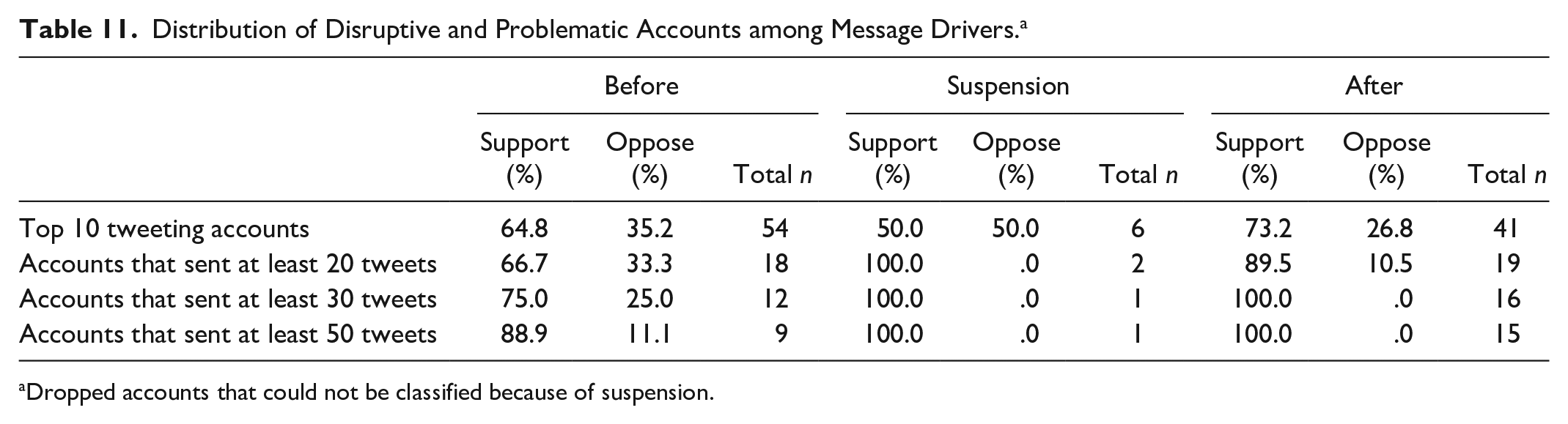

Importantly, Table 11, which shows the distribution of disruptive and problematic accounts by audit support and opposition before, during, and after the account suspension, indicates that there are more audit-supporting disruptive (a Bot Sentinel score of 50 percent to 74 percent) or problematic (a Bot Sentinel score of 75 percent to 100 percent) accounts in the sample. To assess what kind of content message drivers share, we conducted a qualitative content analysis of both their tweets during the time frame of analysis and the first 50 to 100 (re)tweets of the top 10 message drivers on each of the 20 days included in the sample. This analysis revealed three important insights. First, the analysis indicates that 71 of the 101 accounts supported the audit and shared conspiracy theories about the election, coronavirus disease 2019, and globalism. Additionally, 54 of the 71 accounts were categorized as disruptive or problematic by Bot Sentinel (and two of these accounts were subsequently suspended by Twitter). Second, the ban did not affect the number of accounts actively spreading conspiracy theories. When we counted the accounts spreading conspiracy theories on each of the days, we found that a total of 34 accounts spread misinformation about the election before the suspension, 3 accounts spread misinformation the day of the ban, and 34 accounts spread misinformation after the ban.

Distribution of Disruptive and Problematic Accounts among Message Drivers. a

a. Dropped accounts that could not be classified because of suspension.
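The two Bot Sentinel bands used in this section (50 percent to 74 percent for “disruptive” and 75 percent to 100 percent for “problematic”) can be expressed as a simple threshold rule. The sketch below is illustrative only: the function name is hypothetical, and the label for scores under 50 percent is a placeholder, because the text does not name a category for those accounts.

```python
def bot_sentinel_category(score_percent):
    """Map a Bot Sentinel score (0 to 100) to the bands described in the text."""
    if not 0 <= score_percent <= 100:
        raise ValueError("score must be between 0 and 100")
    if score_percent >= 75:
        return "problematic"   # 75 percent to 100 percent
    if score_percent >= 50:
        return "disruptive"    # 50 percent to 74 percent
    return "below threshold"   # placeholder label; not a category named in the text

# Scores reported in the text for the two accounts discussed in this section.
print(bot_sentinel_category(97))  # problematic
print(bot_sentinel_category(55))  # disruptive
```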

Third, two accounts are almost completely responsible for spreading conspiracy theories during this time frame, although they engage in different tweeting behavior. The first account, which we call Bunny (because this “problematic” account with a 97 percent Bot Sentinel score claims to represent a former Playboy bunny who sells jewelry; see footnote 3), posted 859 tweets after the account suspension. Although the account is never among the most retweeted, Bunny is the top poster in the sample six of the nine days after the account suspension and largely works to amplify the work of Overstock founder and election conspiracy theorist Patrick Byrne. When we analyzed the corpus of tweets in the months before Twitter suspended the audit accounts, we found that Bunny, in her capacity as the page admin for the Byrne account, largely worked to connect other Twitter users with Byrne on locals.com. Although the account continued to push the Byrne community after the suspension, her efforts were more direct (e.g., they are done in replies to tweets) and specifically amplified claims of election fraud, which are outlined in a “FREE EXCLUSIVE VIDEO” by the “Top funder of the AZ audit: Patrick Byrne,” and Byrne’s book The Deep Rig, which outlines the “election fraud and what happened on January 6th with pictures of Antifa changing into MAGA gear” (from July 28).

The second account, which we call Arizona Sam (because the “disruptive” account with a Bot Sentinel score of 55 percent has no description, and the predominant image is of an American flag and the Arizona state flag; see footnote 4), is more active, posting 1,544 conspiracy tweets during the 20-day period. Arizona Sam is the top tweeter 17 of the 20 days included in the sample and posted the most tweets of anyone in the sample on 12 different days, most of which occur before the audit accounts were suspended. However, the account seems influential after the ban as well. Arizona Sam was among the most retweeted accounts in the sample on three different days, two of which fell after the suspension of the audit accounts. His content across the 20 days is also remarkably consistent. Although the account retweets content from Arizona politicians, particularly Wendy Rogers, and election conspiracy theorists (e.g., Jovan Pulitzer), he mostly chastises politicians and officials who “embarrass” Arizona with their “sham audit” that, he argues, is covering up fraud committed by Democrats and Dominion, a voting system company. The account’s most frequently sent tweet, which was sent hundreds of times as a reply to other accounts questioning the election results, asked the Maricopa County Board of Supervisors, “Can you tell us why you and Dominion refuse to provide routers, passwords and election materials? WHAT HAVE YOU DONE? [American flag emoji].” In short, the Twitter ban seems to have little influence on who was tweeting about the Arizona audit or what they were tweeting about.

Discussion and Conclusion

We do not find an association between the Arizona audit account bans and an improved information environment. First, we find that the ban does not seem to change the kinds of ideas that are amplified on Twitter about the Arizona audit. Although the popularity of accounts opposing the audit declined, conservative/right accounts, which consistently supported conspiracy theories, became more prominent after the ban. Second, we find that the ban does not seem to affect who posts about the Arizona audit. Two accounts create the majority of tweets. Arizona Sam sent the most tweets 17 out of the 20 days sampled and remained steadfast in his claims that the election was stolen and that the fraud was being covered up in Maricopa County.

There are three interesting findings that warrant additional investigation. First, we find that the ban may have changed whether and how some accounts talk about the audit. We find that politicians respond differently to the account bans. Although this may be a function of who has the most standing at a given moment with an audience (Rohlinger, Williams, and Teek 2020), it may also reflect the incentives associated with tweeting. Politicians, who often aspire to higher office, are likely to tweet in ways that will further their professional goals. Viewed this way, there are few incentives for Wendy Rogers to change her tweeting behavior. Rogers, who has aligned herself with the extremes of the Republican Party, has little to lose, and perhaps credibility to gain with her right-wing base, if Twitter boots her from the platform. This is not true of Elise Stefanik, who carefully monitors the political winds in her quest for higher office. Stefanik likely sees professional downsides to losing access to a large, politically diverse audience. As long as Twitter is able to attract left-leaning and independent-minded users, the Musk takeover is unlikely to change the tweeting behavior of politicians seeking higher office. Although politicians such as Wendy Rogers have little to lose by becoming more extreme in their posts, there are still incentives for most politicians to moderate their tweets and position themselves so they might appeal to a broader swath of the voting public down the road.

Second, we find that the quality of information accounts share after a ban changes very little. Partisans predominantly share partisan sources, and this does not change after Twitter intervenes. Moreover, we find that posts with the most retweets do not typically share any materials, whereas those with relatively few retweets do. These findings lend some support to our argument that some message amplifiers may find it professionally expedient to signal support or opposition to an issue without attaching their opinions to clickbait news headlines that could create problems for them later (Scacco and Muddiman 2016) but also suggest that sharing materials is an important, and underanalyzed, aspect of Twitter behavior that warrants additional investigation. Although users may be cautious regarding what they share involving Musk personally, sharing behavior more broadly is unlikely to change.

Finally, we find that Twitter bans seem particularly ineffective on message drivers, who continued their open support of the audit and denied the election results after the ban. This finding buttresses our argument regarding the differential incentives driving the Twitter behavior of message amplifiers and message drivers. In this case, the largely conservative message drivers are more concerned with spreading the “truth” than with getting booted from Twitter (Rohlinger 2022; Schradie 2019). In fact, because the accounts do not have huge numbers of followers, the owners may feel that their “disruptive” and “problematic” tweeting behavior will go unreported. Even if they were reported, it may be difficult to justify a suspension given their posting behavior. Arizona Sam, for example, replied to hundreds of other tweets, pressing his conspiracy claim. Although it may have been an annoyance, his replies did not attack posters and were not offensive, meaning that they probably went unreported. Future research should continue to explore individual tweeting behavior and assess whether (and how) it has changed under Musk.

Ostensibly, Musk bought Twitter to create a “digital public square, where a wide range of beliefs can be debated in a healthy manner” (Katsuyama 2022). Although Musk’s critics have thus far been skeptical of his motives and practices, it is important for scholars to contextualize the realities of the information environment on Twitter of yore. Twitter was not a paragon of quality discourse in the past and is unlikely to become so in the future. However, Twitter remains an important platform for journalists, public officials, government offices, movement activists, and citizens globally. As such, scholars should continue to study Twitter and the effects of Musk’s practices and policies on this important platform.

Supplemental Material

Supplemental material, sj-docx-1-srd-10.1177_23780231231152193 for Does the Musk Twitter Takeover Matter? Political Influencers, Their Arguments, and the Quality of Information They Share by Deana A. Rohlinger, Kyle Rose, Sarah Warren and Stuart Shulman in Socius

Footnotes

Acknowledgements

We would like to thank Daniel Kreiss, the PolCom group at Vrije Universiteit Amsterdam, Elizabeth Mazzolini, Dale Winling, and the reviewers and editors of Socius for their feedback, as well as Charles Phillips for his research assistance. An earlier draft of this article was presented at the 2022 International Communication Association Meeting in Paris and the Media Sociology Postconference.

Supplemental Material

Supplemental material for this article is available online.

2. A list of public actors and the number of retweets they received is available in the Supplement.

3. The account holder joined in December 2012, has 366 followers, and is following 24 accounts.

4. This account holder joined in February 2021, has 2,710 followers, and is following 716 accounts.
