Abstract

In late March of 2020, a new hashtag, #FilmYourHospital, made its first appearance on social media. The hashtag encouraged people to visit local hospitals to take pictures and videos of empty hospitals to help “prove” that the COVID-19 pandemic is an elaborate hoax. Using techniques from Social Network Analysis, this case study examines how this conspiracy theory propagated on Twitter and whether the hashtag virality was aided by the use of automation or coordination among Twitter users. We found that while much of the content came from users with limited reach, the oxygen that fueled this conspiracy in its early days came from a handful of prominent conservative politicians and far right political activists on Twitter. These power users used this hashtag to build awareness about the campaign and to encourage their followers to break quarantine and film what is happening at their local hospitals. After the initial boost by a few prominent accounts, the campaign was mostly sustained by pro-Trump accounts, followed by a secondary wave of propagation outside the U.S. The rise of the #FilmYourHospital conspiracy from a single tweet demonstrates the ongoing challenge of addressing false, viral information during the COVID-19 pandemic. While the spread of misinformation can be potentially mitigated by fact-checking and directing people to credible sources of information from public health agencies, false and misleading claims that are driven by politics and supported by strong convictions and not science are much harder to root out.

This article is a part of special theme on Viral Data. To see a full list of all articles in this special theme, please click here: https://journals.sagepub.com/page/bds/collections/viraldata

Introduction

In late March of 2020, a few Twitter users started using the hashtag #FilmYourHospital on social media to encourage people to visit local hospitals to take pictures and videos to “prove” that the COVID-19 pandemic was an elaborate hoax (Little and Garcha, 2020; Zadrozny and Collin, 2020). The premise for this conspiracy theory rests on the assumption that if hospital parking lots and waiting rooms are empty, then the pandemic must not be real or at least not as severe as reported by health authorities and the media. Of course, there is a simple explanation for why some hospital parking lots and waiting rooms might have been empty. As part of pandemic planning, many hospitals banned visitors and postponed or canceled elective and non-urgent procedures to free up medical staff and resources. This is in keeping with expert advice from the Centers for Disease Control and Prevention (CDC) in the U.S. and other health authorities (Golden, 2020; Rinaldo and Jones, 2020). The CDC also recommended that healthcare facilities create separate intake and waiting areas for coronavirus patients (to slow cross-infection of non-COVID-19 patients) and reserve emergency areas for acute medical events such as heart attacks and broken arms (CDC, 2020: 19). Furthermore, because of the lockdown, fewer people were exerting or injuring themselves, resulting in fewer emergency department visits for heart attacks and strokes (Morse, 2020). This empty-hospital conspiracy theory joins a parade of false, unproven, and misleading claims about the virus making the rounds on social media, including allegations that 5G wireless technology somehow plays a role in the spread of the COVID-19 virus or that drinking methanol, ethanol, or bleach prevents or cures infection (Gruzd and Mai, 2020; Mai and Gruzd, 2020; Temperton, 2020; WHO, n.d.).

To study the propagation of conspiracy theories on social media, we examine the case of #FilmYourHospital. Specifically, we examine two common forms of social manipulation in the context of this case: the use of “social bots” (Gruzd, 2016b)—social media accounts designed to act on Twitter and other platforms with some level of autonomy—and “coordinated inauthentic behaviour” (Twitter, 2019), which may include coordinated activities that attempt to artificially manipulate conversations to make them appear more popular than they are. These two forms of social manipulation, if left unchecked, could skew the conversation, manufacture anger where there is none, suppress opposition, or dampen debate. These tactics may also undermine citizens’ abilities to make decisions and reach consensus as a society (Giglietto et al., 2019; Starbird, 2019). For this case study, we focus on the use of Twitter in this campaign, which was launched on 28 March with a simple tweet posing a question: “#FilmYourHospital Can this become a thing?”.

Method

Research tools and dataset

We used Netlytic (Gruzd, 2016a) to collect and analyze data, Gephi (Bastian et al., 2009) to visualize the resulting communication network over time, the Python library Twarc (Ruest and Milligan, 2016) to check if accounts had been deleted or suspended by Twitter, and the Botometer API (Yang et al., 2019) to assess whether an account was automated (exhibiting bot-like behavior).

In total, we collected 99,039 posts contributed by 43,461 unique users during the study period from 28 March to 9 April. At the time of the analysis, the hashtag had received the most attention on 31 March, and engagement declined gradually thereafter (see Figure 1). As with most Twitter-driven, clicktivism-style campaigns (Gruzd et al., 2016a, 2020), most interactions (83%) were retweets.

Figure 1. Number of tweets with #FilmYourHospital over time.

Social network analysis

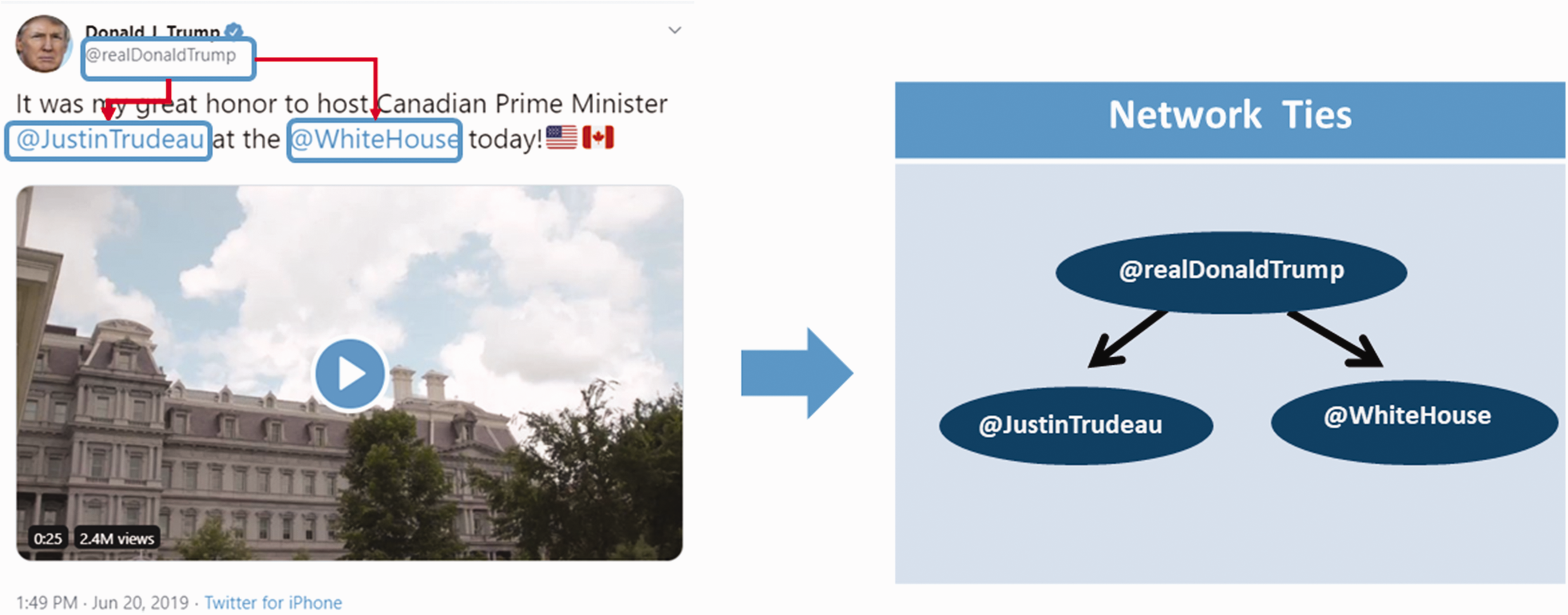

To study how information and misinformation propagate through different online networks, we use Social Network Analysis to visualize and examine user interactions at scale (Gruzd et al., 2016b). In this analysis, we represent the Twitter data as a network, where nodes represent Twitter users (individuals or organizations) and connections between nodes (called ties or edges) represent interactions among users (reply, retweet, or mention). For instance, a single tweet posted by Donald Trump to the @JustinTrudeau and @WhiteHouse accounts can be visualized as a simple network with three nodes and two ties going from Trump’s account to the two accounts, because both are mentioned in the tweet (see Figure 2).

Figure 2. Discovering a network structure, one tweet at a time.
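To make the node-and-tie representation concrete, the following is a minimal sketch of how one tweet could be turned into directed ties. The `Tweet` record and the `tweet_to_ties` helper are hypothetical names introduced only for illustration; they are not Netlytic's or Twitter's data schema, and the node label `Trump` stands in for the actual account handle.

```python
from typing import List, NamedTuple, Optional, Tuple


class Tweet(NamedTuple):
    """Hypothetical, simplified tweet record (not a Netlytic or Twitter schema)."""
    author: str
    mentions: Tuple[str, ...] = ()
    reply_to: Optional[str] = None
    retweet_of: Optional[str] = None


def tweet_to_ties(tweet: Tweet) -> List[Tuple[str, str, str]]:
    """Return the directed ties (source, target, kind) implied by one tweet."""
    ties = []
    if tweet.retweet_of:
        ties.append((tweet.author, tweet.retweet_of, "retweet"))
    if tweet.reply_to:
        ties.append((tweet.author, tweet.reply_to, "reply"))
    for handle in tweet.mentions:
        # Skip targets already covered by a reply or retweet tie.
        if handle not in (tweet.reply_to, tweet.retweet_of):
            ties.append((tweet.author, handle, "mention"))
    return ties


# The example from the text: one tweet mentioning two accounts.
example = Tweet(author="Trump", mentions=("JustinTrudeau", "WhiteHouse"))
ties = tweet_to_ties(example)
nodes = {example.author} | {target for _, target, _ in ties}
print(len(nodes), "nodes,", len(ties), "ties")  # 3 nodes, 2 ties
```

Applying the same function to every tweet in a dataset and pooling the results would yield an edge list that a tool such as Gephi can visualize.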

Figure 3 visualizes, as a network, 79,736 interactions among 41,903 Twitter users who posted or retweeted using #FilmYourHospital. To focus on interactions that directly lead to information diffusion, we excluded “isolated” accounts (those without interactions with any other node) from this network. Colors were assigned automatically to highlight densely connected groups of nodes (clusters) that are more likely to interact with each other than with other accounts, based on the “Fast Unfolding” community detection algorithm (Blondel et al., 2008).

Figure 3. #FilmYourHospital Communication Network of 41,903 users (ties represent reply, retweet, or mention). Usernames are shown for accounts with 100k or more followers.
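The two preprocessing ideas described above, dropping isolates and grouping nodes into densely connected clusters, can be sketched on a toy network. The code below does not implement the Fast Unfolding algorithm itself. It only computes modularity, the quality score that method tries to maximize, for a given grouping. The user names, ties, and groupings are hypothetical, and ties are treated as undirected for simplicity.

```python
from collections import defaultdict

# Hypothetical toy data: seven users, one of whom has no ties at all.
users = {"a", "b", "c", "d", "e", "f", "lonely"}
ties = [("a", "b"), ("b", "c"), ("a", "c"),
        ("d", "e"), ("e", "f"), ("d", "f"),
        ("c", "d")]  # a single bridge between the two triangles

# Step 1: isolates are users that appear in no tie; they are dropped.
connected = {user for tie in ties for user in tie}
isolates = users - connected


def modularity(ties, community_of):
    """Modularity Q of a grouping: the share of ties that fall inside groups
    minus the share expected if ties were placed at random while keeping
    every node's degree fixed."""
    m = len(ties)
    inside = sum(1 for u, v in ties if community_of[u] == community_of[v]) / m
    degree_total = defaultdict(int)
    for u, v in ties:
        degree_total[community_of[u]] += 1
        degree_total[community_of[v]] += 1
    expected = sum((total / (2 * m)) ** 2 for total in degree_total.values())
    return inside - expected


# Step 2: compare two candidate groupings of the connected users.
two_groups = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}
one_group = {user: 0 for user in connected}
print(sorted(isolates))
print(modularity(ties, two_groups) > modularity(ties, one_group))  # True
```

Splitting the toy network into its two triangles scores higher than lumping everything together, which is the kind of grouping the colors in the visualization are meant to reveal.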

Results

The evolution of #FilmYourHospital network over time

To understand how this campaign manifested on Twitter, we examined the formation of this network over time. Figure 4(a) displays #FilmYourHospital interactions posted during the first three days of the campaign. Notably, one of the most influential users who triggered the viral spread of this misinformation campaign was @DeAnna4Congress, a verified account for DeAnna Lorraine, a former Republican Congressional candidate who recently ran against Nancy Pelosi for the U.S. House California District 12. Unlike the anonymous poster who started the hashtag (attracting only around 30 retweets), Ms. Lorraine added legitimacy to this campaign and directly asked her 150k+ followers to “get #FilmYourHospital trending” and “[p]ost pics of ur hospital here!”. 1

Figure 4. (a) #FilmYourHospital Communication Network: Day 1–3, (b) #FilmYourHospital Communication Network: Day 1–4, and (c) #FilmYourHospital Communication Network: Day 1–5.

During the subsequent two days (Figure 4(b) and (c)), the network “lit up” with mostly retweets of tweets posted by prominent conservative commentators and political activists like @realcandaceo (with 2M followers) and @DonnaWR8 (with 100k followers), a pro-Trump account (Britzky, 2017).
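The cumulative snapshots for Days 1–3, 1–4, and 1–5 amount to filtering ties by posting date. The sketch below assumes that Day 1 is 28 March, the launch date given earlier. The dated ties and the `ties_through_day` helper are hypothetical and introduced only for illustration.

```python
from datetime import date

CAMPAIGN_START = date(2020, 3, 28)  # assumed to be Day 1

# Hypothetical dated ties: (date posted, source account, target account).
dated_ties = [
    (date(2020, 3, 28), "u1", "origin"),
    (date(2020, 3, 30), "u2", "hub"),
    (date(2020, 3, 31), "u3", "hub"),
    (date(2020, 4, 1), "u4", "hub2"),
]


def ties_through_day(dated_ties, last_day, start=CAMPAIGN_START):
    """Return (source, target) ties posted on campaign days 1..last_day."""
    return [
        (source, target)
        for posted, source, target in dated_ties
        if 1 <= (posted - start).days + 1 <= last_day
    ]


# One cumulative snapshot per panel: Day 1-3, Day 1-4, Day 1-5.
snapshots = {day: ties_through_day(dated_ties, day) for day in (3, 4, 5)}
for day, ties in snapshots.items():
    print(f"Day 1-{day}: {len(ties)} ties")
```

Because each snapshot is cumulative, every later panel contains all ties from the earlier ones, so growth between panels shows which accounts attracted new interactions on each added day.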

Accounts behind the campaign

While influential conservative politicians and activists were behind some of the most shared and early content in this network, the question remains about who was behind the retweets that formed most interactions and sustained the network past the initiation stage. To answer this question, we examined the most active accounts in this network and the most frequently used keywords in the account bios (see Figure 5). Based on the manual examination of public profiles, the majority of users who posted a tweet using this hashtag described themselves as Trump supporters and used words and hashtags such as #MAGA (Make America Great Again), #KAG (Keep America Great), and #trump2020.

Figure 5. 100 most frequently used profile words by 100 or more users (visualized with wordclouds.com).
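One way to read the profile-word analysis is as a count of how many distinct users include each word in their bio, keeping only words that pass a minimum-user cutoff. The sketch below follows that reading, which is an assumption. The bios, the tokenization rule, and the `words_by_user_count` helper are hypothetical, and the cutoff is lowered from 100 users to 2 so the toy data produces a result.

```python
import re
from collections import Counter

# Hypothetical account bios keyed by user.
bios = {
    "user1": "Proud patriot #MAGA #KAG",
    "user2": "MAGA maga Christian, wife, mom",
    "user3": "#Trump2020 #MAGA",
}


def words_by_user_count(bios):
    """Count, for each lowercase word, how many users have it in their bio."""
    counts = Counter()
    for text in bios.values():
        # set() ensures a word repeated within one bio counts once per user.
        counts.update(set(re.findall(r"[a-z0-9]+", text.lower())))
    return counts


counts = words_by_user_count(bios)
min_users = 2  # the figure uses a cutoff of 100 users
frequent = {word: n for word, n in counts.items() if n >= min_users}
print(frequent)  # {'maga': 3}
```

Counting users rather than raw occurrences keeps a single account that repeats a slogan from inflating that word's rank.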

International reach

While the majority of users appear to be Trump supporters, seven days after the initial #FilmYourHospital tweet, on 3 April, the hashtag went international when a new cluster of users from Brazil emerged. It is shown as a green grouping of nodes in the top corner of the network visualization (Figure 6). Most of the tweets in this cluster centered on popular conservative commentators and activists from Brazil such as @allantercalivre (Allan dos Santos), a self-described businessman, journalist, and blogger, and a prominent Bolsonaro supporter. While the international expansion of this hashtag is notable, the spread of this campaign in Brazil is not surprising. U.S.-style politics has been in ascendance in Brazilian society over the past few years, culminating in the election of Jair Bolsonaro, a far-right candidate, in the 2018 presidential election (Recuero et al., 2019, 2020; Soares et al., 2019).

Figure 6. #FilmYourHospital Communication Network showing users tweeting in different languages. Top four languages: English (purple nodes), Portuguese (green), Arabic (orange), and Japanese (red).

As identified by Twitter, aside from 15,699 tweets in Portuguese (presumably from Brazil) and 73,010 tweets in English, there were tweets in 33 other languages, suggesting that the campaign received some international attention. The second and third largest non-English clusters were users tweeting in Arabic (1616 tweets) and Japanese (515 tweets), but both of these clusters were much smaller and less interconnected than the Brazilian one.

Suspended accounts and “inauthentic” behavior

Considering that this campaign was based on a conspiracy theory, it is useful to examine whether it was an organic campaign or driven by coordinated action (what Twitter calls “inauthentic” behavior). To examine this further, we automatically checked each account and tweet in our dataset to identify which had been deleted or protected by a user or suspended by the platform.

Since Twitter has much more information about its users (such as users’ phone numbers and IP addresses) than what is available via its public API, knowing what accounts were suspended by Twitter a few days after they joined the campaign gives us an estimate of how many suspicious accounts were amplifying the campaign. An account can be suspended or temporarily restricted if it is in violation of the Twitter community rules and standards (Twitter, n.d.). Some of the reasons for suspension include:

artificially amplifying or suppressing information, interfering in elections, sharing synthetic/manipulated media which may cause harm, or promoting violence against, threatening, or harassing an individual or a group of people.

In total, as of 17 April, we found that 4779 (5%) of tweets and retweets were either deleted by users or disappeared because they were flagged by the platform (or other users). While there are a number of reasons why individuals may choose to delete their own “regrettable” tweets such as the presence of negative sentiment, cursing, or content related to sex, alcohol, drugs, violence, race, or religion (Zhou et al., 2016), we were more interested in those tweets that disappeared because their posters were suspended by Twitter, which would be an indication of an account violating the community norms as established by the platform.

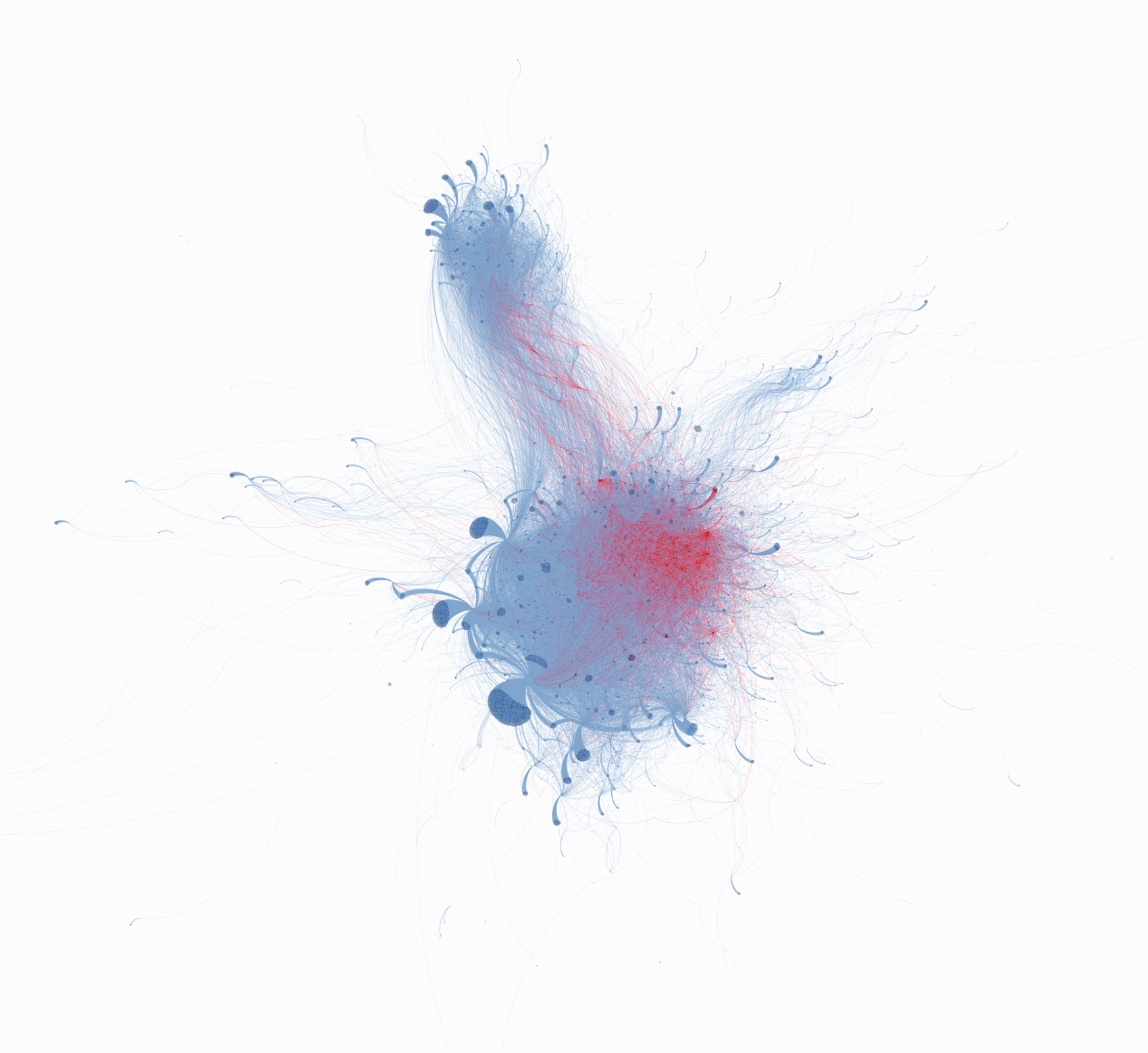

From our analysis, we identified 1059 accounts in the network that were either suspended or temporarily restricted by Twitter. We then used this information to highlight the corresponding nodes and interactions in the network visualization in red (see Figure 7). Based on the visualization, while temporarily or permanently suspended accounts appear to be spread out across the network, they are especially concentrated in the top right corner. This is particularly intriguing because this part of the network was especially active at a later stage of the campaign, after 31 March.

Figure 7. #FilmYourHospital Communication Network (full dataset). Red nodes/ties = accounts suspended or temporarily restricted by Twitter (as of 17 April 2020). Indigo nodes/ties = all other accounts.

Bot or not?

The identification of suspended accounts helped us understand the prevalence of potentially inauthentic behavior or coordinated effort to amplify the campaign. To check whether the accounts that contributed to this hashtag campaign were automated (i.e., bots), we used a machine learning tool to classify accounts as likely bots or not. There are multiple approaches to detecting social bots on Twitter, and none of them is perfect (Davis et al., 2016; Gurajala et al., 2016; Paavola et al., 2016; Rauchfleisch and Kaiser, 2020). Moreover, bot detection is a game of cat and mouse, as bot makers are constantly evolving, becoming more sophisticated, and finding new ways to bypass detection. For this exploratory analysis, we relied on a third-party tool called Botometer (Yang et al., 2019) to check a sample of 1213 accounts (∼2.79%) that shared 10 or more tweets in the dataset, including 28 accounts that posted over 100 tweets. We focused on this sample because these accounts were the most active in the dataset.
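The sampling rule described above, keeping accounts with 10 or more tweets, can be sketched as a simple count per author. The author list and the `active_accounts` helper below are hypothetical. The last lines recompute the sample's share of all users from the two totals reported in the text.

```python
from collections import Counter


def active_accounts(authors, min_tweets=10):
    """Return {account: tweet count} for accounts with at least min_tweets."""
    per_user = Counter(authors)
    return {user: n for user, n in per_user.items() if n >= min_tweets}


# Hypothetical list with one entry per collected tweet (the posting account).
authors = ["u1"] * 12 + ["u2"] * 3 + ["u3"] * 10 + ["u4"]
sample = active_accounts(authors)
print(sample)  # {'u1': 12, 'u3': 10}

# Share of the full user base covered by the sample, from the reported totals.
sampled_accounts, unique_users = 1213, 43461
print(round(100 * sampled_accounts / unique_users, 2))  # 2.79
```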

Botometer assigns each account a Complete Automation Probability (CAP) score between 0 and 1 indicating how likely it is that the account is automated (0 = not likely, 1 = highly likely). We used the language-independent version of the CAP score since some accounts did not tweet in English. These scores are based on a number of factors, such as when the account was created, how often it posts, and who else it is connected to on Twitter. Figure 8 shows the overall distribution of Botometer scores. Out of 1213 accounts tested, we could not retrieve scores for 26 accounts because 10 were suspended by Twitter, 10 were deleted by the user, and 6 became “protected” accounts. Following Zhang et al. (2019), we consider any account with a CAP score of 0.25 or above as potentially automated.

Figure 8. The distribution of Botometer CAP scores using the logarithmic scale (n = 1187). Cenglish = includes content analysis; and cuniversal = language-independent model.

In total, most accounts (1147 of 1187, about 97%) had a Botometer score below 0.25. Only 40 accounts had a score equal to or above 0.25. This suggests that the majority of the most active accounts involved in this campaign were human-like in their behavior, with only a small number of accounts exhibiting bot-like behavior.
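The thresholding step can be sketched as follows, assuming CAP scores have already been retrieved and stored per account. The scores and the `split_by_cap` helper are hypothetical, and no Botometer API call is made here. The second half reproduces the bookkeeping from the counts reported in the text.

```python
def split_by_cap(scores, threshold=0.25):
    """Split accounts into potentially automated (score >= threshold) and the rest."""
    flagged = {account for account, score in scores.items() if score >= threshold}
    return flagged, set(scores) - flagged


# Hypothetical CAP scores for four accounts.
scores = {"acct_a": 0.02, "acct_b": 0.25, "acct_c": 0.61, "acct_d": 0.10}
flagged, human_like = split_by_cap(scores)
print(sorted(flagged))  # ['acct_b', 'acct_c']

# Bookkeeping with the counts reported in the text.
tested = 1213
unscored = 10 + 10 + 6           # suspended, deleted, protected
scored = tested - unscored       # accounts with a retrievable score
flagged_count = 40               # accounts at or above the 0.25 threshold
below_count = scored - flagged_count
share_below = 100 * below_count / scored  # percentage of scored accounts below the threshold
print(scored, below_count)  # 1187 1147
```

The comparison is inclusive (`>=`), matching the "0.25 or above" rule, so an account scoring exactly 0.25 is treated as potentially automated.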

Conclusions

Our analysis suggests that most of the active and influential accounts behind the #FilmYourHospital campaign on Twitter were not automated. However, we did find signs of ad hoc coordination among conservative internet personalities and far right groups attempting to take this conspiracy theory and weaponize it against their political opponents.

Importantly, we found that while much of the content came from users with limited reach, the oxygen that fueled and expanded this conspiracy in its early days came from just a handful of prominent conservative politicians and far right political activists. These power users used this hashtag to build awareness about the campaign and to encourage their followers to film what is happening in their local hospitals. After the initial boost by a few prominent accounts, the campaign was mostly sustained by pro-Trump supporters, followed by a secondary wave of propagation outside the U.S.

In normal times, conspiracies like this might make people shake their heads at the gullibility of humanity. However, as COVID-19 cases continue to stalk the hallways of nursing homes and fill beds in hospitals around the globe, it is harder to ignore such conspiracies. The rise of the #FilmYourHospital conspiracy from a single tweet demonstrates that while the spread of misinformation can potentially be mitigated by fact-checking and directing people to credible sources of information from public health agencies (Tambuscio et al., 2018; Vraga and Bode, 2017), false and misleading claims that are driven by politics are much harder to root out (Recuero et al., 2019; Vargo et al., 2018). As long as opportunistic political activists find it useful to exploit conspiracy theories during COVID-19, we will continue to see the spread of these damaging ideas.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is funded by the Canadian Institutes of Health Research (PIs: Veletsianos, Hodson, Gruzd), and is also supported by the Canada Research Chairs program (PI: Gruzd) and a Compute Canada computing grant (PI: Gruzd).