Abstract

Half of U.S. employers consider credit history when deciding whom to hire. The practice has become a contentious policy issue, with multiple jurisdictions limiting the use of credit reports in employment. Yet to date, there has been no test of how the introduction of credit history influences the way employers make decisions. Recent qualitative research finds that employers evaluate credit reports in contingent and person-specific ways, which opens the door to bias according to applicant characteristics, such as race and sex. To test for potential disparate impact in employment outcomes from the use of credit reports, we conduct a survey experiment with 1,050 hiring professionals. We find that including a bad credit report in an applicant’s file reduces respondents’ likelihood of hiring female (vs. male) applicants and reduces the recommended starting salary offered to black (vs. white) applicants. We discuss the implications of this study for research and public policy.

Today, about half of U.S. employers consult job candidates’ credit reports when making hiring decisions (Society for Human Resource Management 2012). Employers across industry and job type have adopted the practice, thanks to easier computer access to credit reports, marketing efforts by credit bureaus and third-party background-check outfits, and a desire to certify workers as low risk, even though there is little evidence that credit history predicts workplace outcomes (Martin 2010; Bernerth et al. 2012; Bryan and Palmer 2012; Weaver 2015; cf. Oppler et al. 2008). In recent years, employment credit checks have become a contentious policy issue, partly out of concern that credit checks may disparately affect minorities, women, and other categories of individuals protected under U.S. employment law (Equal Employment Opportunity Commission 2010). Since 2007, 11 states and multiple cities have passed laws restricting employers’ use of credit history (National Conference of State Legislatures 2015), yet to date there has been no systematic study of how race and sex factor into hiring professionals’ decision making as they evaluate credit reports.

Drawing on an original survey experiment with 1,050 real-world hiring decision makers, we begin to fill this gap by examining how the introduction of credit reports to the hiring process shapes employment outcomes as a function of an applicant’s race and sex. By revealing what happens at the moment of decision making in a setting where only sex, race, and credit history vary, we are able to test for interactive effects between an applicant’s credit report and his or her ascriptive characteristics. In doing so, we aim to shed light on whether the introduction of good and bad credit reports to the hiring process leads to different employment outcomes for female versus male and black versus white job candidates.

This article builds on recent qualitative work that suggests credit history may factor into employment decisions in a way shaped by the social backgrounds of both job candidates and hiring professionals (Kiviat forthcoming). Credit reports provide detailed information about delinquent loans, accounts in debt collection, money-related court proceedings, and other aspects of candidates’ personal financial lives (Avery, Calem, and Canner 2003). Hiring professionals generally have discretion in how they integrate this information into employment decisions—in one survey, 80 percent reported sometimes hiring a candidate despite bad credit (Society for Human Resource Management 2012)—and they often make sense of the contents of credit reports in personalized ways, selectively drawing on narratives of responsibility, blame, and forgiveness (Kiviat forthcoming). While credit reports may at first blush seem to be purveyors of objective financial data, they are, in practice, fodder for a highly interpretive process. Nearly all employers check credit at the end of the hiring process, after interviewing candidates (Society for Human Resource Management 2012), which means employers already have substantial amounts of other information about candidates, including visible traits like race and sex.

Researchers have recently begun to explore the effects of using credit reports in hiring on the employment outcomes of racial minorities and other groups of workers. Blacks tend to have worse credit on average (Avery, Brevoort, and Canner 2009), which suggests that restricting the use of credit reports would increase the rate at which blacks are hired. But leveraging the passage of laws that restrict the use of credit reports, Bartik and Nelson (2017) find the opposite effect: In the wake of such restrictions, job-finding rates for blacks overall drop by 7 to 16 log points. (The researchers observe no conclusive effects for whites or Hispanics.) Ballance, Clifford, and Shoag (2017) also find that employment for blacks overall drops in the wake of credit check restrictions, and at the same time, employers begin to rely more heavily on other credentials, like college degrees, on which blacks also fare worse. Both sets of researchers interpret their findings through the lens of statistical discrimination, in which employers assume that group-level averages of traits apply to individuals (Phelps 1972; Arrow 1973). Under this theoretical framework, credit reports are taken to be a way of telling apart desirable from undesirable employees, and absent that signal, employers revert to racial stereotypes and alternate credentials. One potential conclusion from such findings is that employers should be allowed to use credit history in employment decisions.

This interpretation, however, fails to account for the fact that a credit report may not be a static signal. In a world where employers have access to credit reports, inequalities may persist if how hiring professionals understand the meaning of a credit report depends on whose credit report it is. Work in status characteristics theory shows that distinctions like race and gender evoke cultural beliefs about competence and worth and lead employers to expect better performance from some groups than from others (Wagner and Berger 1993; Correll, Benard, and Paik 2007), and research in double-standards theory demonstrates that an actor’s social group membership can determine how critically a judge evaluates that person’s actions (Foschi 1996, 2000; Goldin and Rouse 2000). For example, men are penalized more than women are for a history of part-time work (Pedulla 2016), women’s mistakes on math skills tests are taken to be more serious indicators of inability than are men’s (Rivera 2015), and black applicants with criminal records receive fewer job callbacks than do whites with the same criminal records (Pager, Western, and Sugie 2009; see also Pager 2003, 2007). Employer access to additional “objective” information can change how hiring professionals evaluate candidates, not always in a more equitable direction.

Differences in theoretical predictions arise because, as Correll and Benard (2006) explicate, the source of bias in statistical discrimination theory is informational, while the source of bias in the more sociological theories, which take heed of culturally informed beliefs and status relationships, is cognitive (see also Rissing and Castilla 2014). Given additional information about individual job applicants, bias fades away in a world of statistical discrimination as employers dispassionately understand more and more about particular people, but bias may move in any direction in a world where the meaning of information changes based on who the information is about. Correll and Benard write that when discrimination is the product of a cognitive process, rather than an information deficit, it is often the case that “women and ethnic minorities in the workplace will be evaluated according to a more stringent standard than white men. … Thus, members of advantaged groups will be more likely to meet employers’ expectations for productivity than members of disadvantaged groups, given equivalent signals of productivity” (p. 100, emphasis added). That is to say, even when given the exact same information about job candidates, employers may draw different conclusions from that information based on whether the candidate is white or black, male or female. In the realm of employment credit checks, Kiviat (forthcoming) suggests such differences may be particularly pronounced when job candidates and hiring professionals differ in social background.

The possibility that credit reports could carry different weight depending on who the applicant is motivates the current study. Many Americans have negative marks on their credit reports: About one fifth of credit reports include a serious delinquency (Avery et al. 2009), nearly one third show at least one debt in collections (Ratcliffe et al. 2014), and one in eight include a public records item such as bankruptcy, foreclosure, or a lien for unpaid taxes (Avery et al. 2003). When hiring professionals consider credit reports, they largely pay attention to these and other indications of “bad credit” (Society for Human Resource Management 2012). Credit checks are one final way of sifting out potentially problematic candidates at the end of the hiring process; having good credit does not typically bring much added benefit (Kiviat forthcoming).

Given this asymmetry in how credit reports are used, it is important to examine not only how a subpopulation benefits or suffers on average from the use of credit reports but also how particular types of members within that subpopulation fare differently. It is plausible that black or female candidates with good credit benefit from the use of credit reports in hiring while black and/or female candidates with bad credit are disproportionately disadvantaged relative to white or male candidates with the same bad credit. Normative conclusions about the use of employment credit checks would then hang on which group is seen as more important to protect. Considering policy makers’ concern that the use of credit reports in hiring exacerbates existing economic disadvantage, a notion for which there is empirical evidence (Thorne 2007; Maroto 2012; Herkenhoff, Phillips, and Cohen-Cole 2017; Bos, Breza, and Liberman forthcoming; cf. Dobbie et al. 2017), the answer may be candidates who have bad credit.

To test whether and to what extent the introduction of credit report information affects hiring decisions differentially as a function of an applicant’s race and/or sex, we conducted a survey experiment with 1,050 real-world hiring professionals. Our sample of hiring decision makers allows us to examine, for the first time, how the introduction of good and bad credit reports shapes employment decision making relative to a situation in which hiring professionals have no credit report information. We present evidence that the introduction of credit history to an applicant’s file has a differential effect on employment outcomes depending on job candidates’ ascriptive traits. Specifically, we find that including a bad credit report in an applicant’s file reduces respondents’ likelihood of hiring female (vs. male) applicants and reduces the recommended starting salary offered to black (vs. white) applicants. We then discuss the implications of our study for public policy and future research.

The Survey Experiment

To examine how credit reports influence employment decisions, we conducted an original survey experiment with a sample of 1,050 hiring professionals. Unlike information that is often available or inferable from an initial job application or résumé (e.g., a person’s race, sex, or felony conviction), credit reports rarely enter the picture until employers have narrowed the field of applicants down to a single finalist or a short list of final candidates, almost always after conducting interviews, at which point they purchase credit reports and other background-check materials (Society for Human Resource Management 2012; Kiviat forthcoming). Compared to audit study designs that permit examination of how race, sex, and applicant information, such as a criminal record (Pager 2003) or employment history (Pedulla 2016), interact to shape first-round callback rates from employers, our survey experiment permits us to better simulate how the introduction of credit information may affect hiring considerations at this later stage in the process.

Respondents were recruited in July and August 2016 from an online business panel managed by the research firm Qualtrics and were asked to review the application materials of an individual seeking a position as an assistant store manager. Each respondent was randomly assigned to one of three credit report conditions, receiving either (1) a credit report showing all accounts as current with no late payments (“Good Credit Report” condition), (2) a credit report showing a history of late payments and accounts currently in collection (“Bad Credit Report” condition), or (3) no credit report (“No Credit Report” control condition). In addition to the three credit conditions, we manipulated the race and sex of the applicant. This yielded a 3 (Good Credit, Bad Credit, No Credit) by 2 (Male, Female) by 2 (Black, White) between-subjects research design that enabled us to test whether and to what extent credit reports influence employment recommendations differently as a function of the applicant’s race or sex (see Tables A1–A3 in the appendix for covariate balance across conditions). In the rest of this section, we provide details about our sample, experimental design, independent and dependent variables, and analytic approach.
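The factorial structure described above can be sketched in a few lines of Python. This is an illustration only: the condition labels, the `assign` helper, and the use of simple (unblocked) uniform randomization are assumptions of the sketch, not details reported for the actual survey platform.

```python
import itertools
import random

# Factor levels taken from the design described above.
CREDIT = ("no_report", "good_report", "bad_report")
SEX = ("male", "female")
RACE = ("black", "white")

# The 3 x 2 x 2 between-subjects design has 12 cells.
CELLS = list(itertools.product(CREDIT, SEX, RACE))


def assign(n_respondents, seed=0):
    """Assign each respondent independently and uniformly to one cell.

    Simple (unblocked) randomization is an assumption of this sketch.
    """
    rng = random.Random(seed)
    return [rng.choice(CELLS) for _ in range(n_respondents)]


assignments = assign(1050)
```

Because each respondent sees exactly one cell, every comparison in this design is between respondents rather than within them.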

The Sample

Respondents were selected through a multistage process. First, from Qualtrics’s panel of business professionals we drew a subset of individuals who either had indicated to Qualtrics that hiring was a job responsibility or who had a title suggesting this might be the case (human resources manager/director, manager, supervisor, team lead, small business owner). Next, these individuals were sent an email from Qualtrics asking them to update their current job responsibilities. Specifically, these business professionals were shown a list of several job responsibilities including hiring. Those who indicated hiring was a job responsibility were then invited to take our survey. Finally, as a last filter, respondents were asked how many times they had reviewed job applications over the course of their career. Those with an answer greater than zero were invited to complete the survey.

This process yielded a sample of 1,050 business professionals with real-world hiring experience and responsibilities. Descriptive statistics on the respondents and their organizational context are presented in Table 1. Our sample of hiring professionals includes respondents with a range of experience in evaluating applicants. Almost 30 percent have reviewed applications more than 100 times, while about one fifth have reviewed applications fewer than 10 times. Just under half work in a formal human resources capacity (as opposed to, say, management positions that include hiring), and more than three quarters report being in their current position for at least four years. Respondents hail from a range of industries, with no single industry accounting for more than 15 percent of the sample. The sample is also geographically diverse: respondents are located in 47 states and Washington, D.C., and no state or region dominates. Notably, 57 percent of respondents reported that their organizations use credit reports at least sometimes in evaluating job applicants, which is consistent with the figures found in Society for Human Resource Management surveys (47 to 61 percent). This gives us confidence that our sampling captured a meaningful cross-section of hiring professionals.

Table 1. Key Characteristics of Survey Respondents and Their Organizational Context (n = 1,050).

Experimental Design

Respondents deemed eligible to complete the survey were shown the following prompt (text in brackets varied by whether the respondent was in the control condition):

Please imagine that your company is in the process of hiring someone for an Assistant Store Manager position. The current Assistant Store Manager has screened the applicants and selected a handful of qualified candidates for additional review. You have been asked to review the file for one of the candidates. The file includes a candidate profile and summary of the initial screening interview, [and] a resumé, [and a copy of the candidate’s credit report]. At a managers’ meeting next week you will discuss this candidate and others in order to make a final decision. We realize that it is difficult to evaluate job candidates based on the limited information provided. Please do your best to answer each question, even if you do not feel like you have all of the information that you would usually have when evaluating a job candidate.

We told respondents that the applicant they would be reviewing had already been interviewed by the current assistant manager in order to simulate the type of information employers typically have at the point in hiring when they consult credit reports. According to the Society for Human Resource Management (2012), about 9 in 10 employers that conduct credit checks do so after job interviews. This means that when a hiring professional evaluates a credit report, he or she may very well have the sort of interactional information gleaned from an interview but unavailable from a résumé or other written document. By giving respondents knowledge of a colleague’s interview experiences, we replicate the real-world context in which credit reports are considered. In the prompt to respondents we also made clear that there were still other applicants in contention for the job so that respondents did not feel pressure to hire their applicant lest the position go unfilled. Additionally, we stated that all candidates would be discussed at a managers’ meeting, so that respondents felt invested in making a thoughtful decision.

After reading the prompt, respondents were shown the materials for the applicant they were randomly assigned to review. The packet of materials contained a one-page candidate profile, including notes from an interview conducted by the current assistant store manager; a résumé; and for certain experimental conditions, a credit report. We include examples of these application materials in the appendix. The materials show the applicant earned a bachelor’s degree in business from Penn State University and since graduation has worked in two retail positions for about three years each with increasing levels of responsibility. We purposefully constructed the applicant’s education and past work experience to signal they are qualified for the position because by the time an employer considers a credit report, unqualified candidates have typically been screened out.

The candidate’s résumé—which was identical across conditions except for the applicant’s name—showed the applicant has a bachelor’s degree and several years of relevant work experience. The candidate profile included the candidate’s name, basic demographic information and employment history, and a written summary of the interview that had already taken place. The interview summary—which was identical across applicants—was positive, with the interviewer noting that he or she believes the applicant is qualified for the position. At the bottom of the candidate profile, respondents who were assigned to the bad credit report condition were told, “Following our interview, I pulled [candidate name’s] credit report per company policy and have attached it here for your review. It shows [he or she] has two accounts in collection, two accounts more than 90 days past due and one court judgement.” Respondents in the good report condition were told a credit report has been pulled that shows the applicant is current on all accounts.

Respondents in either the good credit report condition or the bad credit report condition also saw a copy of the applicant’s credit report, which we describe in detail below. Respondents in the credit report conditions therefore received more overall information about the applicant than those in the no credit report condition. We chose not to balance the amount of information across conditions by adding superfluous information to the no credit report condition, since doing so would contaminate the reference group and make it impossible to isolate the effect of adding a credit report to the hiring process. Including all three conditions (no credit report, good credit report, bad credit report) permits us to estimate the effect on employment decisions of including the applicant’s credit report as an additional piece of information.

To make sure respondents were able to fully digest the information we provided, we allowed them to move back and forth among screens as much as they wanted before moving on to the next part of the experiment.

After reviewing the applicant information, respondents were asked how likely they would be to recommend hiring the applicant and what starting salary they would recommend (details below). Respondents were then asked to assess the applicant’s potential job performance, personality traits, and other background and behavioral characteristics. The survey concluded with questions about the respondent and his or her employer.

Signaling Applicant Race and Sex

We signaled applicant race and sex in two ways. First, in line with previous studies, we used names with a high probability of belonging to blacks, whites, males, and females, respectively (Bertrand and Mullainathan 2004; Census Bureau 2014; Gaddis 2014; Bureau of Labor Statistics 2016; Pedulla 2016). We chose two names for each of our race-sex categories to help ensure our results captured race/sex effects and not idiosyncratic responses to a particular name:

Black male: Tyrone S. Washington, Jamal S. Washington

Black female: Ebony S. Washington, Keisha S. Washington

White male: Greg S. Baker, Neil S. Baker

White female: Allison S. Baker, Emily S. Baker

One potential problem with using names to signal race is that each name may be perceived differently, with some racially coded names more likely to be perceived as black than others (Gaddis 2017). To address this possibility, we also signaled applicant race and sex directly on the sheet with the candidate’s profile and interview summary. Since race and sex are subject to legal protections enforced by the federal Equal Employment Opportunity Commission, this information is often collected directly from the applicant at some point in the hiring process. Moreover, given that the applicant has already been interviewed by someone at the firm, the race and sex of the applicant would likely be known (or inferred) from that interaction. At the end of the survey we asked respondents to identify the race and sex of the applicant they reviewed. We find no statistically significant difference across the black-coded names in the likelihood a respondent correctly identifies the applicant’s race or across the female-coded names in the likelihood a respondent correctly identifies the applicant’s sex.
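A manipulation check of this kind can be illustrated with a two-proportion z-test. The sketch below is one plausible way to compare identification rates across two names; the text does not say which test was used, and the counts are hypothetical placeholders rather than study data.

```python
import math


def two_proportion_z(correct_a, n_a, correct_b, n_b):
    """Return (z, two-sided p) for H0: equal identification rates."""
    p_a = correct_a / n_a
    p_b = correct_b / n_b
    pooled = (correct_a + correct_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Two-sided tail probability under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts of respondents who correctly identified the
# applicant's race under each of two black-coded names.
z, p = two_proportion_z(160, 175, 158, 175)
```

A large p-value here would be read as no detectable difference between the two names in how reliably they signal race.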

Signaling Applicant Credit Report Quality

To the best of our knowledge, we are the first researchers to use a credit report in an experimental setting. To design a mock credit report, we began with real-world examples of reports third-party vendors sell to employers. We designed our report to mimic both the formatting and content of such documents. These reports contain detailed fields of financial and other data; they do not include credit scores. To operationalize bad credit, we included two unpaid credit-card bills and two debts in collection (meaning that a firm has “written off” the chances of recouping money), as well as a money-related civil judgment under the header “public records.” These items capture common problems visible on credit reports (Avery et al. 2003; Avery et al. 2009; Ratcliffe et al. 2014). Employers’ accounts of what they pay attention to on credit reports and what counts as “bad credit” in their eyes heavily guided our selection of these particular negative items (Society for Human Resource Management 2012; Kiviat forthcoming).

The good credit report includes a summary box that notes all accounts paid on time. Below this summary box are specific credit accounts, with a status of “paid on time,” which is highlighted in green. The bad credit report is different. The summary box reads “1 court judgment; 2 accounts in collection; 2 accounts 90 days+ past due.” Below, each of those negative items is reported, with the status “unpaid” and “90 days late” highlighted in red. In addition to the credit report itself, the last line of the candidate profile notes that the applicant’s credit report had been pulled and summarizes its contents.

Key Outcomes

After reviewing the application materials, respondents were asked, “How likely would you be to recommend that your company

Analytic Approach

Ordinary least squares models are used to analyze the effect of the good and bad credit report conditions—relative to the control condition, which includes no credit information—on recommendations to hire and recommended starting salary. We first present models estimating the effect of the credit report conditions and applicant race and sex on hiring outcomes. We then interact the two credit report conditions with a binary indicator for whether the applicant is female and then with a binary indicator for whether the applicant is black. These models explore whether the addition of credit history differentially affects hiring outcomes as a function of the applicant’s race or sex.
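As a sketch of the specification described above, the sex-interaction model can be written as follows. The symbol names are ours, and whether the applicant race indicator is retained in the sex-interaction models is an assumption of this sketch.

```latex
Y_i = \beta_0 + \beta_1 \mathrm{Good}_i + \beta_2 \mathrm{Bad}_i
    + \beta_3 \mathrm{Female}_i + \beta_4 \mathrm{Black}_i
    + \beta_5 (\mathrm{Good}_i \times \mathrm{Female}_i)
    + \beta_6 (\mathrm{Bad}_i \times \mathrm{Female}_i)
    + \varepsilon_i
```

Here $Y_i$ is respondent $i$'s recommendation to hire or recommended starting salary, and the omitted credit category is the no credit report control. Under this parameterization, $\beta_2$ is the effect of a bad credit report for male applicants, $\beta_2 + \beta_6$ is the effect for female applicants, and $\beta_6$ is the differential effect by sex. The race-interaction model is analogous, with $\mathrm{Black}_i$ replacing $\mathrm{Female}_i$ in the two product terms.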

For the models that include interaction terms, we first present unadjusted estimates (model 1). Given the relatively small sample size, we then introduce a series of theoretically motivated respondent covariates to increase the precision of the estimated treatment effects (Gelman and Hill 2006). Model 2 adds controls for respondent demographics (race/ethnicity, sex, age, income, education). Model 3 adds controls for the size of the respondent’s organization and the number of job applications the respondent reports having evaluated over the course of his or her career. Model 4 adds a binary indicator for whether the respondent lives in a state with a law restricting the use of credit reports in hiring. Finally, to examine whether treatment effects vary as a function of respondent characteristics, model 5 presents results estimated on a subset of the sample—male respondents for the sex-specific analysis and white respondents for the race-specific analysis.

Results

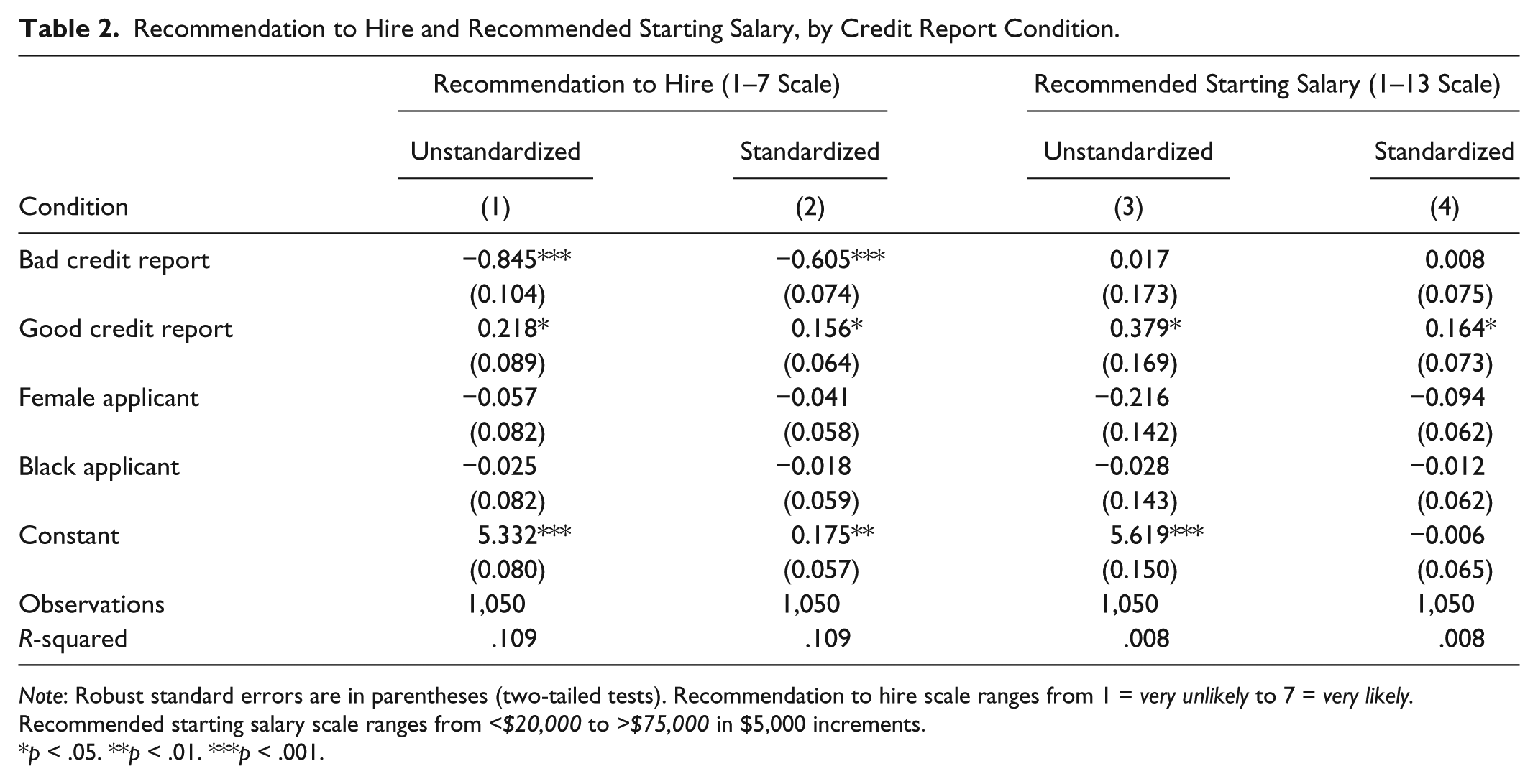

Table 2 presents the effects of various applicant profiles—including having a good credit report or a bad credit report relative to no credit information—on respondent recommendation to hire and recommended starting salary. These baseline models also include dummy indicators for applicant race and sex. The coefficient on the bad credit report condition is large, negative, and statistically significant (p < .001), indicating that having a bad credit report hurts an applicant’s prospects of being hired. The point estimate indicates having a bad credit report reduces the likelihood that a respondent will recommend the applicant be hired by .84 points on the 7-point Likert-type scale (column 1), equivalent to 60.5 percent of a standard deviation (column 2). The estimated coefficient on the good credit report condition is positive but smaller in magnitude, with the point estimate indicating that a good credit report increases the likelihood the respondent recommends to hire by 15.6 percent of a standard deviation (about .22 points on the 7-point Likert-type scale). Relative to no information about the applicant’s credit quality, a bad credit report appears to have a large negative effect on an applicant’s likelihood of being hired whereas having a good credit report confers only a small boost to the applicant’s chances of being hired.
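The relationship between the raw and standardized estimates can be checked with simple arithmetic. The sketch below uses only the figures quoted in the text; the implied standard deviation is derived here and is not a reported statistic.

```python
# Figures reported in the text for the recommendation-to-hire outcome
# (magnitudes only; the bad-report effect is negative).
bad_raw = 0.84      # change in Likert points (column 1)
bad_std = 0.605     # same effect in standard-deviation units (column 2)
good_std = 0.156    # good-report effect in standard-deviation units

# Implied standard deviation of the 7-point outcome (derived, not reported).
implied_sd = bad_raw / bad_std

# Back out the good-report effect in raw Likert points.
good_raw = good_std * implied_sd

print(round(implied_sd, 2), round(good_raw, 2))  # prints: 1.39 0.22
```

The recovered value of about .22 points matches the figure in the text, and the ratio of the two standardized effects (.605 to .156) indicates that the bad-report penalty is nearly four times the size of the good-report boost.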

Table 2. Recommendation to Hire and Recommended Starting Salary, by Credit Report Condition.

Note: Robust standard errors are in parentheses (two-tailed tests). Recommendation to hire scale ranges from 1 = very unlikely to 7 = very likely. Recommended starting salary scale ranges from <$20,000 to >$75,000 in $5,000 increments.

*p < .05. **p < .01. ***p < .001.

Turning to recommended starting salary, we see no effect of the bad credit report condition, but we do see a small, positive effect of the good credit report condition. We find no main effect of applicant race or sex on respondent recommendation to hire or recommended starting salary. The lack of a baseline discriminatory effect is consistent with other survey experiments and is expected in this stylized scenario where the applicant has already been deemed qualified and has successfully interviewed for the position.

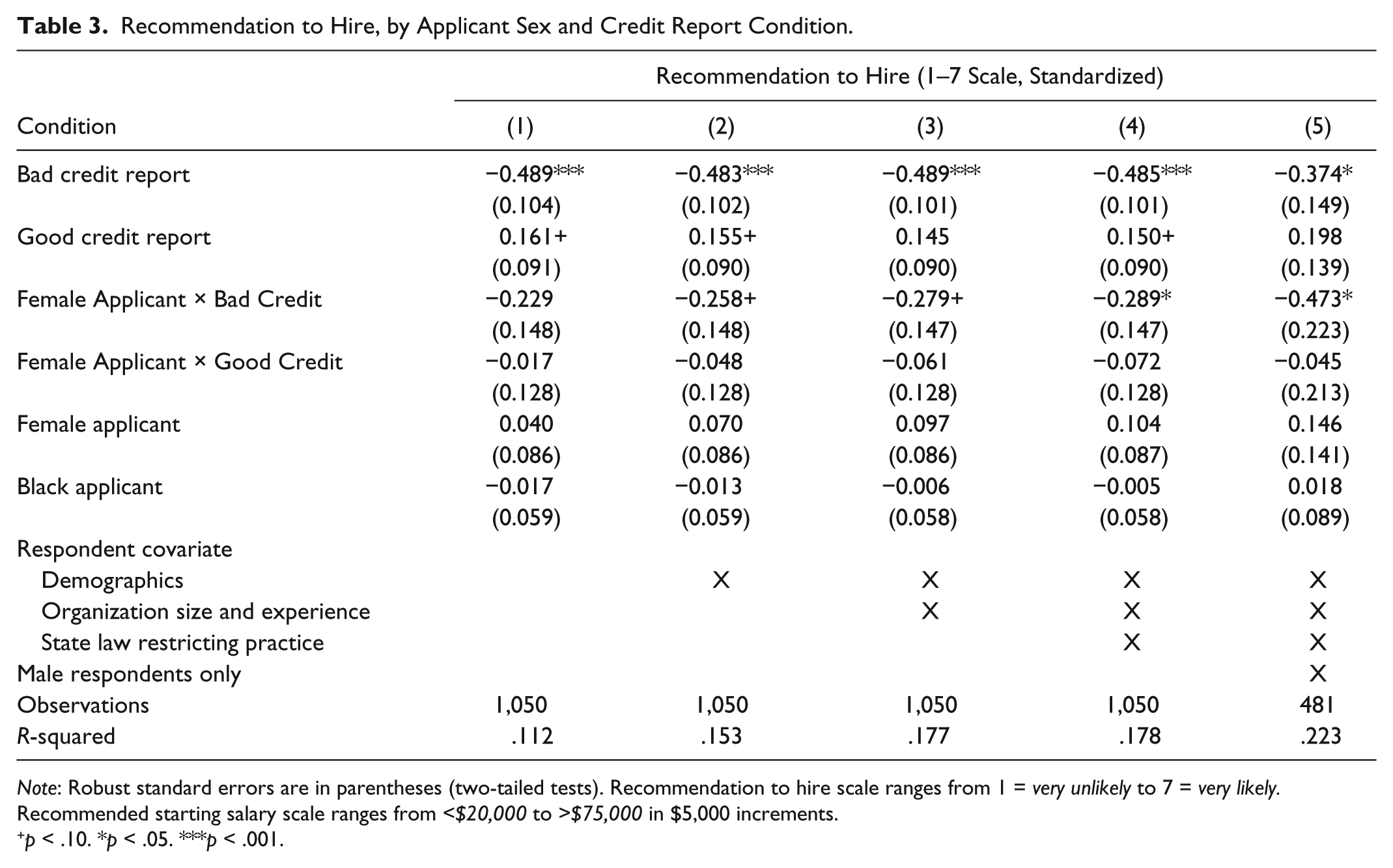

To examine whether female applicants are differentially affected relative to male applicants by the introduction of credit report information at this stage in the hiring process, we reestimated our models and included two interaction terms: one between the female applicant condition and the bad credit condition and one between the female applicant condition and the good credit condition. The reference category for the credit report conditions is no credit report; therefore, the interaction term indicates whether female applicants are differentially affected relative to male applicants by the use of credit reports in hiring. Put another way, the interaction term tells us whether the presence of credit reports in hiring evaluations has a different effect on hiring outcomes for female applicants relative to male applicants.
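In a model containing only applicant sex, the credit conditions, and their interactions (no other covariates), the interaction coefficient equals a difference in differences of cell means. The sketch below illustrates that logic with hypothetical ratings; the numbers are invented for illustration and are not study data.

```python
from statistics import mean

# Hypothetical 7-point hire ratings, keyed by (applicant sex, credit condition).
ratings = {
    ("male", "none"): [6, 5, 6, 5],
    ("male", "bad"): [5, 5, 4, 4],
    ("female", "none"): [6, 6, 5, 5],
    ("female", "bad"): [4, 3, 4, 3],
}

cell = {key: mean(values) for key, values in ratings.items()}

# Effect of a bad report relative to no report, separately by applicant sex.
bad_effect_male = cell[("male", "bad")] - cell[("male", "none")]
bad_effect_female = cell[("female", "bad")] - cell[("female", "none")]

# The interaction is how much larger (more negative) the penalty is for women.
interaction = bad_effect_female - bad_effect_male
```

In this toy example the bad-report penalty is 1 point for men and 2 points for women, so the interaction is -1: the same report costs the female applicant one additional point.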

Estimates from models predicting respondent recommendation to hire (standardized) including these interaction terms are presented in Table 3. Column 1 presents estimates from the baseline model without respondent covariates. Here we see a relatively large and negative coefficient on the interaction term between female applicant condition and bad credit condition, although the effect is not statistically significant. To increase precision of our estimated treatment effects, columns 2 through 4 add in respondent covariates for demographics, organization size and experience, and working in a state with a law restricting the use of credit reports in employment. Inclusion of these covariates increases the absolute value of the estimated coefficient on the interaction term between female applicant and bad credit report condition, with the coefficient in the fully adjusted model large, negative, and statistically significant at the p < .05 level. This indicates that female applicants with bad credit are differentially affected by the inclusion of credit reports in the applicant profile relative to male applicants with bad credit.

Table 3. Recommendation to Hire, by Applicant Sex and Credit Report Condition.

Note: Robust standard errors are in parentheses (two-tailed tests). Recommendation to hire scale ranges from 1 = very unlikely to 7 = very likely. Recommended starting salary scale ranges from <$20,000 to >$75,000 in $5,000 increments.

p < .10. *p < .05. ***p < .001.

Notably, the estimated coefficient on the interaction between female applicant and the good report condition is relatively small and not significant in any model specification, suggesting that female applicants may not be differentially affected relative to men by the introduction of a good credit report.

Column 5 reestimates the fully adjusted model on a subset of the sample restricted to male respondents only. Here the estimated coefficient increases in absolute magnitude. This suggests that male hiring professionals may be more likely to differentially evaluate credit information in the hiring process as a function of the applicant’s sex. This is consistent with qualitative evidence of how employers interpret credit reports in real-life hiring situations, where Kiviat (forthcoming) finds the characteristics of both the applicant and the hiring professional shape how credit information is understood and evaluated.

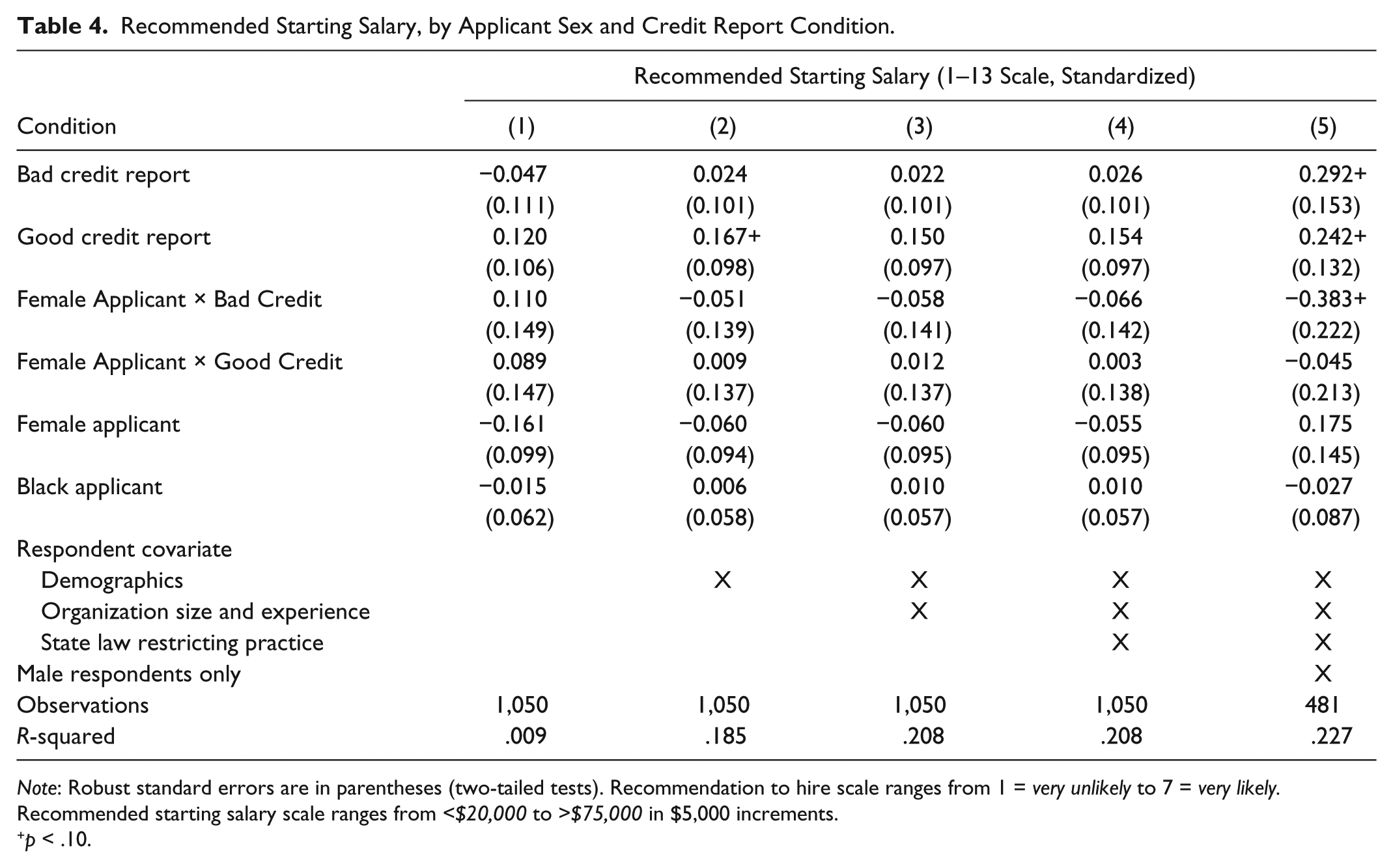

Table 4 presents estimates from models predicting our second hiring outcome—recommended starting salary—as a function of applicant sex and credit report quality. Here we see no significant interaction term in any of the models estimated on the full sample (columns 1–4). When we limit the sample to male respondents, we do see a relatively large and negative coefficient on the interaction term between female applicant and bad credit condition that is marginally significant at the p < .10 level. Again, we see no significant interaction between the female applicant and good credit condition in any model specification.

Table 4. Recommended Starting Salary, by Applicant Sex and Credit Report Condition.

Note: Robust standard errors are in parentheses (two-tailed tests). Recommendation to hire scale ranges from 1 = very unlikely to 7 = very likely. Recommended starting salary scale ranges from <$20,000 to >$75,000 in $5,000 increments.

p < .10.

Next we examine whether the use of credit reports in hiring operates differently as a function of the applicant’s race. As before, to test for potential disparate impact by race in the use of credit reports in hiring, we include in our model two interaction terms: one between the black applicant condition and the good credit report condition and one between the black applicant condition and the bad credit report condition. Models predicting recommendation to hire and recommended starting salary are presented in Tables 5 and 6, respectively. The interaction term between black applicant and bad credit report condition is small and not statistically significant in predicting recommendation to hire across all model specifications in Table 5. The coefficient on the interaction term between black applicant and good credit report condition is larger in magnitude and positive, although it is only marginally significant in one of the five model specifications.

Table 5. Recommendation to Hire, by Applicant Race and Credit Report Condition.

Note: Robust standard errors are in parentheses (two-tailed tests). Recommendation to hire scale ranges from 1 = very unlikely to 7 = very likely. Recommended starting salary scale ranges from <$20,000 to >$75,000 in $5,000 increments.

p < .10. ***p < .001.

Table 6. Recommended Starting Salary, by Applicant Race and Credit Report Condition.

Note: Robust standard errors are in parentheses (two-tailed tests). Recommendation to hire scale ranges from 1 = very unlikely to 7 = very likely. Recommended starting salary scale ranges from <$20,000 to >$75,000 in $5,000 increments.

p < .10. *p < .05.

Turning to models predicting recommended starting salary in Table 6, we see a large, negative coefficient on the interaction between black applicant and the bad credit condition that increases in absolute magnitude with the inclusion of respondent covariates; the estimated coefficient across models with any respondent covariates is significant at the p < .10 level. The estimated coefficient on the interaction term is even greater in magnitude and significant at the p < .05 level in the fully adjusted model restricted to white respondents only. This again indicates that the characteristics of the hiring professional may influence how credit information is understood and evaluated in the context of an applicant’s race or sex.

In sum, we find that relative to having no credit report information in the applicant file, a bad credit report hurts an applicant’s prospects of being hired. Consistent with the idea that credit reports are used most often to try to avoid hiring risky workers, having a good credit report appears to only marginally improve one’s chances of being hired. We further find evidence that introducing credit reports to the applicant profile has differential impacts on employment outcomes as a function of the applicant’s race and sex. Specifically, we find that including a bad credit report in an applicant’s file reduces respondents’ likelihood of recommending female (vs. male) applicants be hired and reduces the recommended starting salary offered to black (vs. white) applicants. Moreover, we find evidence that the absolute magnitude of this differential impact is greater for female applicants when we restrict the respondent sample to males and greater for black applicants when we restrict the respondent sample to whites, underscoring that the social categories of both the applicant and the hiring professional may affect how credit information informs employment decisions.

Discussion and Conclusion

Part of the promise of employers’ using seemingly objective information, such as that contained in credit reports, is that basing hiring decisions on person-specific information will minimize the influence of sex, race, and other social categories. Our survey experiment shows how the inclusion of such information can actually accentuate social differences, not eliminate them. When we included credit reports among other job-application materials, the hiring outcomes of female and black applicants changed relative to those of men and whites, with bad credit doing more to hurt the prospects of women in terms of recommendation to hire and of blacks in terms of recommended starting salary.

At first glance, these findings may seem to conflict with observational studies that find that when states allow employers to use credit checks, employers are more likely, on average, to hire blacks and that legal limitations on employment credit checks therefore hurt some of the people they are meant to help (Ballance et al. 2017; Bartik and Nelson 2017). In fact, our results show that even if these findings and their interpretation are correct, there is still a channel for disparate impact to occur. Drawing from sociological theories that detail the cognitive, rather than the informational, basis of bias (Correll and Benard 2006), we hypothesized that hiring professionals would be more influenced by a bad credit report when it belonged to a female or black job candidate. Drawing from qualitative research on how employers use credit reports in real-life settings (Kiviat forthcoming), we surmised that this influence would be most pronounced when hiring professionals and candidates differed in social background. Using an experimental design and a sample of hiring professionals, this is indeed what we found. Notably, we found evidence that hiring professionals use credit reports in a way that is inflected by sex—a form of bias little explored in existing research on employment credit checks—as well as by race. While a stylized survey experiment such as the one presented here cannot offer direct proof of what happens in actual labor markets, this study does provide evidence of a previously unconsidered mechanism by which employment credit reports might produce disparate impact.

What we would like policy makers to take from this study is an understanding of employment credit checks as a nuanced practice that calls for, at the very least, regulatory oversight. The Equal Employment Opportunity Commission or another government entity should collect data on the use of credit reports in hiring as a first step in evaluating whether and to what extent their use negatively affects the employment outcomes of members of protected classes, including, specifically, those with bad credit. Most employers currently leave room for discretion in the use of credit history (Society for Human Resource Management 2012), and one response to this article’s findings might be to impose strict standards on how credit reports can count against a person applying for a job since discretion is a common avenue for bias to emerge. Yet other research shows that making personalized exceptions for people with bad credit through no fault of their own can help deserving individuals secure employment (Kiviat forthcoming), so we hesitate to say that simply making hiring rules more rigid is the best approach. Rather, the goal should be granting exceptions for people with bad credit consistently across job candidates, regardless of race, sex, or other ascriptive trait.

Future research should address what exact benefits employers reap from using credit checks in hiring, since the concept of disparate impact essentially becomes moot if a screening device can be justified by “business necessity.” Extant research provides little evidence that credit history predicts workplace performance (Bernerth et al. 2012; Bryan and Palmer 2012; Weaver 2015; cf. Oppler et al. 2008), so employers, for their part, could contribute to the policy discussion by demonstrating that the use of credit checks helps drive down rates of fraud, absenteeism, or other issues they believe credit checks help address. Such findings would be particularly enlightening since employers outside of the United States rarely find it worthwhile to pull credit reports on large numbers of prospective employees (Mayer Brown 2015). If the U.S. practice of employment credit checks is more a matter of habit than it is empirically sound worker evaluation, then employers and legislators might have reason to reconsider the practice full stop, especially since otherwise qualified candidates with bad credit might be deterred from applying for jobs in the first place.

Looking beyond credit reports, our study motivates questions about whether other sorts of seemingly objective information may yield disparate impact in labor markets. As advances in technology enable employers to incorporate new, highly personalized pieces of information about individuals from the Internet, public records repositories, third-party consumer databases, and elsewhere, it is important to consider how this information may serve to reproduce or exacerbate inequalities between social groups. For example, many employers now screen job applicants by searching for information about them online and looking at their social media profiles and posts (Society for Human Resource Management 2016). While scholars have considered how such information may reveal otherwise occluded personal traits, such as religious belief and sexual orientation (Acquisti and Fong 2016), no one has yet investigated whether patterns of behavior revealed on such sites—like drinking, drug use, frequent dating, and “partying”—might be interpreted differently based on an applicant’s race, gender, class, and other social characteristics. Although we might anticipate, even hope, that providing more and more highly personalized information about individuals will lessen the impact of ascriptive or social characteristics on employment outcomes, inequalities may persist if how that information is assessed is contingent on whom it is associated with, as we find to be the case in this article.

Acknowledgements

The authors thank David Pedulla, Curtis Chan, Peter Marsden, Letian Zhang, members of the Dobbin Research Group, seminar participants at the University of Wisconsin–Madison, and panel participants at the annual meetings of the Population Association of America and the American Sociological Association for their comments and suggestions. Kiviat gratefully acknowledges support from the Edmond J. Safra Center for Ethics, the Washington Center for Equitable Growth, and Harvard’s Multidisciplinary Program in Inequality and Social Policy.