Abstract

Introduction

No brief questionnaire comprising knowledge, skills, and attitudes is available to measure digital competence among clinical practice nurses.

Objective

The aim was to evaluate the structural validity and internal consistency of the Digital Competence Questionnaire (DCQ) for Clinical Practice Nurses.

Methods

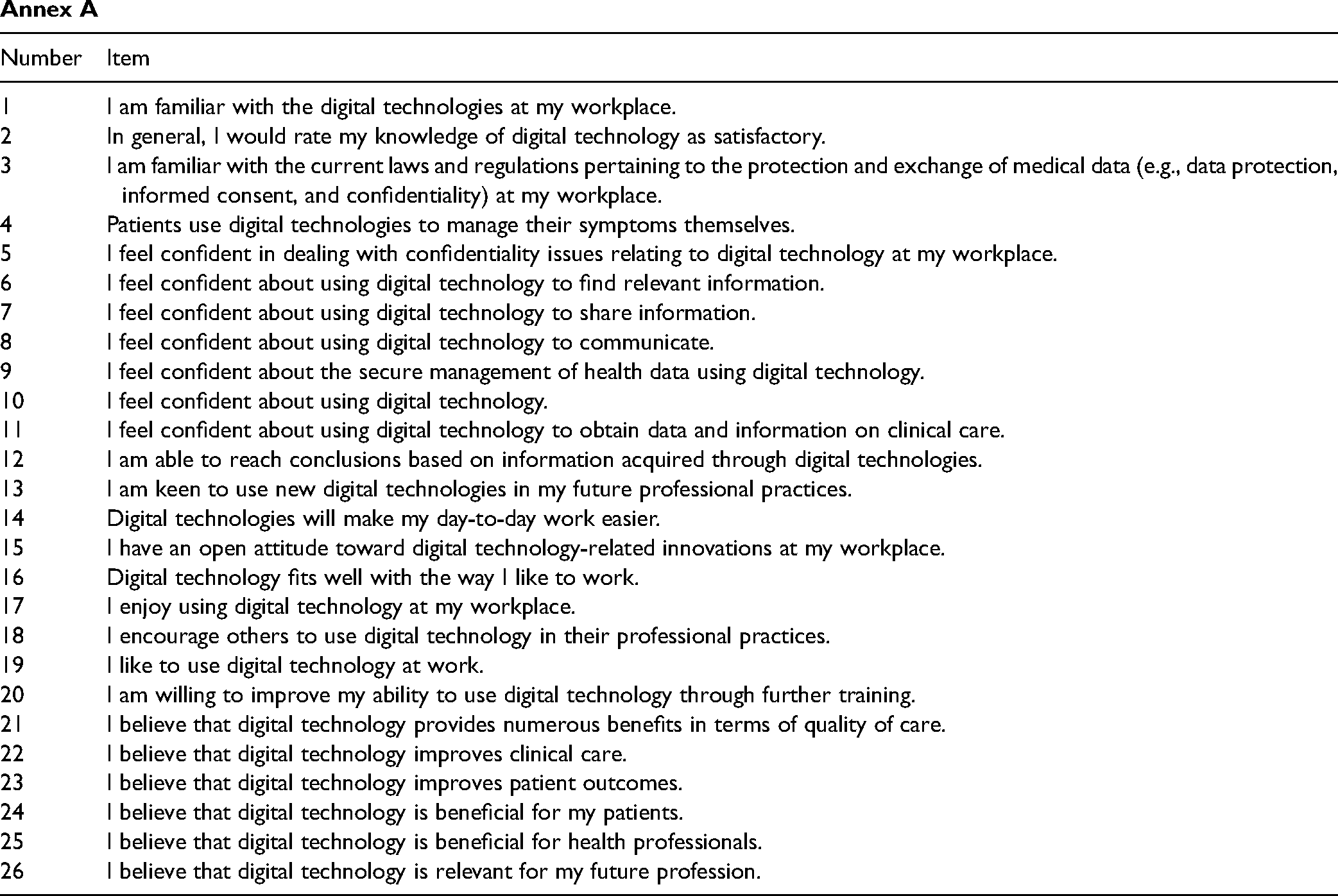

A cross-sectional study was conducted with English-speaking clinical practice nurses. Twenty-six items from an initial item pool, developed in a previously conducted Delphi study, were included. Structural validity was assessed by exploratory factor analysis with “oblimin” rotation and a two-factor solution, and internal consistency was tested using Cronbach's alpha.

Results

Data from 185 nurses were obtained. The final questionnaire comprised 12 items allocated to two factors: knowledge & skills and attitude. Factor “attitude” explained 33% of the variance and factor “knowledge & skills” 24%, resulting in a cumulative explanation of the variance of 57% by both factors. Internal consistency per factor was satisfactory, with 0.81 and 0.91, respectively.

Conclusion

The DCQ for clinical practice nurses is valid and has satisfactory internal consistency. Researchers and nurse managers can use it to assess the level of digital competence among clinical practice nurses. Future psychometric validation of the DCQ for clinical practice nurses is required to allow a conclusion on the goodness of fit and reliability.

Introduction

Digital competence is the degree to which an individual thinks he or she has the ability to interact with technology (Venkatesh & Bala, 2008). It comprises the theoretical understanding of how a technology can be used (knowledge), the ability to use the technology (skills), and the feelings toward technology or the way of behaving when interacting with technology (attitudes) (Kleib et al., 2021; Konttila et al., 2019) (Figure 1).

Figure 1. Framework of digital competence by Golz et al. (2023).

In times of digital progress in the health sector, it is becoming increasingly important for clinical practice nurses to have sufficient digital competence, since it has been found to be an inhibitor of technology-related stress at work (La Torre et al., 2019). This so-called technostress can lead to higher burnout symptoms or lower job satisfaction among nurses (Golz et al., 2021), which in turn are associated with increased intention to leave the organization (Peter et al., 2020). Clinical practice nurses spend most of their workday providing direct care to patients through face-to-face interactions in all settings within the health sector. They interact with technology, for example, by entering information in the electronic health record, and should be able to securely manage and transfer these data (Hübner et al., 2018).

Review of Literature

Digital competence can be measured by using self-reported questionnaires. A scoping review from 2021 summarized fourteen questionnaires to measure nurses’ digital competence (Kleib et al., 2021). Most of these questionnaires focus on undergraduate or graduate students, nurse leaders, or nurse informaticists, and six of them were developed for nurses in clinical practice (Kleib et al., 2021). One of those is the Technology Informatics Guiding Educational Reform (TIGER)-Based Assessment of Nursing Informatics Competencies (TANIC), with 85 items comprising computer skills, information knowledge, and clinical information management (Hunter et al., 2015). Shortcomings of the available questionnaires measuring digital competence of clinical practice nurses include their sole focus on knowledge and skills (Kleib et al., 2021) and neglect of individuals’ attitudes as part of the digital competence definition (Konttila et al., 2019). A positive attitude toward technology at work in healthcare is associated with successful implementation and usage at work (Konttila et al., 2019). For example, it is known that technical issues and low reliability of implemented electronic health records lead to negative experiences among nurses with technology and consequently worsen their attitude towards using technology at work (Chang et al., 2016).

Most of the questionnaires summarized in the review of Kleib et al. (2021), such as the TANIC, are lengthy, with more than 50 items, and thus require much of the participants’ time to fill out. The survey response rate has fallen sharply in recent years, and the forecast indicates a further decline (Stedman et al., 2019). Whereas in the 1970s the response rate in surveys was high at approximately 75%, it is now usual to reach approximately 30%. The projected response rate for 2035 is expected to be near 20% (Stedman et al., 2019). There are different approaches to increase the response rate, such as the combination of different survey methods (e.g., phone, e-mail, and mail), but also the inclusion of only the minimum questions needed to cover the topics in question (Patten, 2016). Thus, to avoid burdening nurses beyond their existing workload and still obtain adequate responses, research should aim to minimize the time needed to fill out questionnaires while retaining their ability to measure the construct (Kleib et al., 2021).

Since no suitable questionnaire covers knowledge, skills, and attitudes, developing a short version based on existing questionnaires is impossible. Hence, a new brief questionnaire is needed to measure digital competence among nurses in clinical practice, paying attention to knowledge, skills, and attitudes. For this purpose, a Delphi study was conducted previously, resulting in an initial item pool with 26 items with high content validity (average Content Validity Index = 0.95) (Golz et al., 2023). The aim of this study was, therefore, to evaluate the structural validity and internal consistency of the Digital Competence Questionnaire (DCQ) for clinical practice nurses.

Methods

Design

The development of the DCQ for clinical practice nurses was based on the Guidelines in Scale Development by DeVellis (2016). This includes the following eight steps: (a) Determine clearly what is to be measured, (b) generate an item pool, (c) determine the format of measurement, (d) have initial item pool reviewed by professionals with knowledge in the field, (e) consider inclusion of validation items, (f) distribute the survey, (g) perform item reduction, and (h) perform psychometric analysis of the reduced questionnaire. As preparation for the psychometric validation, an initial item pool with 26 items (knowledge

What is the factor structure of the Digital Competence Questionnaire (DCQ) for clinical practice nurses, and does it demonstrate adequate structural validity? Does the Digital Competence Questionnaire (DCQ) for clinical practice nurses exhibit sufficient internal consistency across its subscales?

Sample

To test the construct validity and internal consistency of a questionnaire, 5–10 participants per item (question) of a scale are recommended (DeVellis, 2016). Therefore, the authors aimed for a sample size between 130 and 260 participants based on the initial item pool with 26 items. A combination of convenience and snowball sampling with English-speaking clinical practice nurses was conducted internationally to complete an online survey.
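The sample size target follows directly from the participants-per-item rule. A minimal sketch of that arithmetic, where the function name `sample_size_range` is a hypothetical helper and not part of the study's analysis code:

```python
def sample_size_range(n_items, min_per_item=5, max_per_item=10):
    """Return the (lower, upper) sample size implied by a participants-per-item rule."""
    if n_items < 1:
        raise ValueError("n_items must be a positive integer")
    return n_items * min_per_item, n_items * max_per_item


# 26 items in the initial pool, 5 to 10 participants per item
lower, upper = sample_size_range(26)
print(lower, upper)  # 130 260
```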

Inclusion/Exclusion criteria

The criteria for entering the study were sufficient English language skills to understand the items and working as a clinical practice nurse. Participants did not have to be native English speakers. Study participants self-assessed their English proficiency based on the introductory text and confirmed this by completing the survey. Regarding the profession, they were informed about the inclusion criteria in the preceding text, and the survey asked for their profession. Persons who assigned themselves to other professions were excluded.

Data Collection

A cross-sectional study was conducted using the online survey tool SurveyMonkey® between January and March 2022. Emails with information about the study's aim, inclusion criteria, data protection, and the survey link were sent directly to clinical practice nurses, and they were asked to forward the invitation to their colleagues. Private social media groups for clinical practice nurses on Facebook and Reddit were contacted and asked to forward the invitation for participation to their members. The study information and survey link were posted in private social media groups. Participation was voluntary.

Instrument

The instrument included questions on individual characteristics (age, country, and profession) and items related to digital competence. Only the question on profession was mandatory and designed to exclude participants from the analysis who did not belong to the sample. The 26 initial items on the DCQ for clinical practice nurses from the Delphi Study (Golz et al., 2023) were scored on a five-point Likert-Scale from 1 (“fully disagree”) to 5 (“fully agree”), with a high score indicating high self-perceived digital competence. The five-point Likert-Scale format was chosen because it is the most common item format for measuring opinions, beliefs, and attitudes (DeVellis, 2016).

Statistical Analysis

Data was analyzed using the statistical software R (R Core Team, 2021) and the package “psych.” Missing data was handled by listwise deletion if at least one item was missing from the 26 items on digital competence. The analysis comprised a descriptive analysis (mean, median and standard deviation, minimum, maximum, skewness, kurtosis) and exploratory factor analysis (EFA) for structural validity and an internal consistency test using Cronbach's alpha with satisfactory values >0.7 (Watkins, 2018). Skew and kurtosis were computed and evaluated for their potential impact on the EFA results with skewness ≥ ±2 and kurtosis ≥ ±7 (Watkins, 2018). The assumptions for an EFA were item correlations above 0.3, a significant Bartlett's test of sphericity, and Kaiser–Meyer–Olkin values ≥0.7 for the included items and all items combined (Watkins, 2018). If an item did not meet one of the criteria, it was excluded from the following steps, and all assumptions were re-evaluated. The rotation method “oblimin” was used for the EFA, as the included items were correlated (Watkins, 2018). The number of factors was chosen based on a scree plot and parallel analysis. Using the parallel analysis, the authors compared the random eigenvalues with the eigenvalues from the dataset. The number of factors was defined as the number of eigenvalues from the dataset exceeding the random eigenvalues. A factor was expected to comprise at least three items (Watkins, 2018). Cases with missing values for one item of the scale were excluded.
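The listwise deletion, the Cronbach's alpha check, and the parallel-analysis retention rule described above can be sketched in a few lines. The authors ran their analysis in R with the “psych” package; the Python below is only an illustrative sketch under stated assumptions. The function names, the toy responses, and the eigenvalues are hypothetical and are not data from this study, and the retention rule is assumed to stop at the first observed eigenvalue that does not exceed its random counterpart.

```python
from statistics import variance


def listwise_delete(rows):
    """Keep only respondents without any missing item (missing coded as None)."""
    return [row for row in rows if all(value is not None for value in row)]


def cronbach_alpha(rows):
    """Cronbach's alpha for complete responses (one row per respondent, one column per item)."""
    if len(rows) < 2 or len(rows[0]) < 2:
        raise ValueError("need at least two respondents and two items")
    k = len(rows[0])
    # Sample variance of each item across respondents
    item_variances = [variance([row[i] for row in rows]) for i in range(k)]
    # Sample variance of the respondents' total scores
    total_variance = variance([sum(row) for row in rows])
    if total_variance == 0:
        raise ValueError("total scores have zero variance")
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


def count_factors(observed_eigenvalues, random_eigenvalues):
    """Count leading observed eigenvalues that exceed the random ones (assumed stopping rule)."""
    n_factors = 0
    for observed, random_value in zip(observed_eigenvalues, random_eigenvalues):
        if observed <= random_value:
            break
        n_factors += 1
    return n_factors


# Hypothetical five-point Likert responses for three items; None marks a missing answer
responses = [
    [4, 5, 4],
    [3, 3, 3],
    [5, 5, 4],
    [2, 3, 2],
    [4, None, 4],
    [4, 4, 5],
]

complete = listwise_delete(responses)
alpha = cronbach_alpha(complete)
print(len(complete), round(alpha, 2))

# Hypothetical eigenvalues: observed data versus random data of the same size
n_factors = count_factors([5.1, 2.3, 0.9, 0.7], [1.5, 1.3, 1.2, 1.1])
print(n_factors)
```

With the study's threshold, a factor would be judged satisfactory when its alpha exceeds 0.7. Computing the eigenvalues themselves, the “oblimin” rotation, and the Kaiser–Meyer–Olkin and Bartlett checks are left to dedicated statistical software.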

Results

Of the 197 individuals who responded to the online survey in March to April 2022, 6 reported having another function in nursing, such as nurse managers (

Flowchart detailing the number of included participants in the analysis.

Sample Characteristics

The mean age was 38.40 years (SD = 9.42). The majority was from the United States of America (

Research Question Results

Table 1 summarizes the descriptive results of the 26 items. The median of the items ranged between 2 and 5, with high scores indicating a ceiling effect. Skew and kurtosis were not found to be above the cut-offs with <±2 for skewness and <±7 for kurtosis. On average, the participants took 6 min to complete the 26-item questionnaire.

Table 1. Descriptions of the Items.

Structural Validity

Item 4 “Patients use digital technologies to manage their symptoms themselves” was excluded from subsequent steps due to an overall low correlation below the threshold of 0.3 (

EFA Loadings.

Internal Consistency

All included items reached the conventional threshold of 0.7 for Cronbach's alpha, indicating sufficient internal consistency for the factor “Knowledge & Skills” with 0.81 (CI95% 0.79–0.82,

Discussion

This article demonstrates the structural validity and internal consistency of the 12-item DCQ for clinical practice nurses. The two factors explain a sufficient proportion of the variance, since it meets the average percentage of explained variance in behavioral science of approximately 57% (Peterson, 2000). The DCQ for clinical practice nurses can be completed in less than six minutes. The included items in the questionnaire were found to be relevant and sufficient by international panelists. They had a total of 37 items to rate and the possibility to add further essential items but ended with a 26-item pool (Golz et al., 2023). The reduction from the initial 26 items with a high content validity index to a 12-item questionnaire indicates that not all 26 items are needed to explain a satisfactory variation of the latent variables (DeVellis, 2016). This is confirmed by the high internal consistency of both factors included. Additional items would not lead to a higher explanation of variation and therefore can be considered redundant. The internal consistency of the factor knowledge and skills was above 0.9, which may be undesirable. Such a high value may indicate that the items within a factor measure the same phenomenon and are therefore unlikely to be a valid measure of the construct. As the values of the factors cluster around this threshold of 0.9 and are below 0.95, the authors consider the value acceptable (Hair et al., 2017).

Both factors fit the underlying theory of digital competence. Factor attitude (

Regarding the number of items in questionnaires measuring the digital competence of nurses, the DCQ is shorter (Kleib et al., 2021) without losing validity and reliability. For example, the reliability of other scales such as TANIC or the Canadian Nurse Informatics Competency Assessment Scale for measuring nursing informatics competence ranges between 0.81 and 0.99 (Kleib et al., 2021). Regarding validity, for some scales, only content validity was elaborated, or no validation was reported (Kleib et al., 2021). An exploratory factor analysis was also conducted for the Canadian Nurse Informatics Competency Assessment Scale, resulting in a scale with 21 items allocated into four factors, where a distinction could be made between knowledge and skills but without attitude (Kleib & Nagle, 2018a).

Strengths and Limitations

The development of this digital competence questionnaire, the DCQ, is based on the eight steps by DeVellis (2016), laying the basis for developing a theoretically sound and applicable questionnaire. Furthermore, it facilitates traceability of the process. The authors adhered to clear guidance regarding decisions based on the respective cut-off values, which has been mentioned as often missing in publications on factor analyses for scale development (DeVellis, 2016). Factor analysis is a robust method for identifying items that are performing better than others (DeVellis, 2016). To increase the method's robustness, the authors applied “oblimin” for rotation and used Spearman correlation due to the non-normal distribution of the data (Watkins, 2018). The planned minimum sample size was reached. However, the sampling method could have led to a sampling bias since technology-savvy clinical practice nurses might be more active on social media platforms. This could be one reason for the high ratings of self-perceived digital competence. Nevertheless, other studies also found high self-reported digital competence among nurses (Golz et al., 2021; Kleib & Nagle, 2018b; Kuek & Hakkennes, 2020), which might indicate that the sample is adequate for nursing. Furthermore, the mean age of 38 years for the participants does not suggest that only the digital natives of Generation Z have filled out the survey. Compared with the mean age of 43 years for the nursing population in the United Kingdom, for example, the age difference seems small (Skills for Care, 2022). Other studies show that older age is associated with lower digital competence among nurses (Golz et al., 2021; Kuek & Hakkennes, 2020). In this respect, the scale needs to be validated to see if it can differentiate between age groups. 
Despite the potential limitation of recruiting through social media platforms, social media as a recruitment platform is gaining increasing interest and is a cost-effective solution to reaching a suitable sample of the target population (Welch, 2020). In this case, the use of social media recruitment expanded the reach of English-speaking participants.

Another reason for the high ratings of digital competence could be the self-perceived overestimation of incompetent individuals (Schlösser et al., 2013). The phenomenon is known as the Dunning-Kruger effect and describes individuals’ unawareness of their levels of competence. In particular, lower performing individuals were shown to overestimate their knowledge and skills (Maderick et al., 2016), which may apply to clinical practice nurses from countries with less digitalized health sectors. To avoid the problem of measurability, adjuvant objective tests are recommended, such as multiple-choice tests on, e.g., word processing (Maderick et al., 2016).

Implications for Practice

The DCQ for clinical practice nurses still needs further psychometric testing. The current study lacks, for example, information about reliability aspects such as intra- and interrater reliability or test-retest reliability (DeVellis, 2016) and about sensitivity to change. Sensitivity to change is the ability of the questionnaire to identify actual differences between two measurements because of an intervention. This is especially informative when monitoring an intervention to improve digital competence among nursing staff. Furthermore, other aspects of validity, such as discriminative validity, are needed to evaluate the questionnaire’s ability to discriminate between groups, such as technology-savvy vs. less technology-savvy clinical practice nurses. Further psychometric testing is also required using confirmatory factor analysis to confirm the underlying factor structure.

Conclusions

The authors developed a short digital competence questionnaire for clinical practice nurses and added the dimension of attitude to the underlying competence construct. Researchers and practitioners can use the short questionnaire to elaborate on clinical practice nurses’ digital competence. Researchers could use the mean score as a primary outcome for intervention studies. Nurse managers may assess the level of digital competence at the entry of new clinical practice nurses or those already employed to identify needs. Clinical practice nurses can self-assess their digital competence to raise awareness of their competence and encourage reflection on further training in the use of technology at work. If needs are identified, an in-depth evaluation of the need for action is needed. Future psychometric validation of the DCQ for clinical practice nurses is required to allow a conclusion on the goodness of fit.

Supplemental Material

Supplemental material, sj-docx-1-son-10.1177_23779608241272641 for Psychometric Validation of the Digital Competence Questionnaire for Nurses by Christoph Golz, PhD, Sabine Hahn, PhD and Sandra M.G. Zwakhalen, PhD in SAGE Open Nursing

Footnotes

Acknowledgements

The authors want to thank all the participants for contributing by completing the survey and forwarding the invitation to their colleagues.

Author Contributions

CG, SH, and SZ conceptualized the study and its design. CG conducted the formal analysis and SH and SZ validated the results. CG, SH, and SZ contributed to the writing of the first draft and the revision of the article. All authors read and approved the final manuscript.

Availability of Data and Materials

The dataset used during the study is available from the corresponding author on reasonable request.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Approval and Consent to Participate

The president of the Swiss ethical review board in Bern deemed ethics approval unnecessary according to the Swiss Federal Act on Research Involving Human Beings (see 810.30 HRA Art. 2) since no health-related data were collected and data collection was anonymous. The decision was certified and archived under the following reference: Req-2020-00179. The study was conducted in accordance with the Declaration of Helsinki. It was performed on a voluntary basis for all participants. All participants were free to stop filling out the questionnaire at any time. Participants received written information before the start of the study about the contents, aim and voluntary nature of their participation and gave their informed consent by completing the first survey page.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Bern University of Applied Sciences (Berner Fachhochschule).

Supplemental material

Supplemental material for this article is available online.

| Number | Item |
|---|---|
| 1 | I am familiar with the digital technologies at my workplace. |
| 2 | In general, I would rate my knowledge of digital technology as satisfactory. |
| 3 | I am familiar with the current laws and regulations pertaining to the protection and exchange of medical data (e.g., data protection, informed consent, and confidentiality) at my workplace. |
| 4 | Patients use digital technologies to manage their symptoms themselves. |
| 5 | I feel confident in dealing with confidentiality issues relating to digital technology at my workplace. |
| 6 | I feel confident about using digital technology to find relevant information. |
| 7 | I feel confident about using digital technology to share information. |
| 8 | I feel confident about using digital technology to communicate. |
| 9 | I feel confident about the secure management of health data using digital technology. |
| 10 | I feel confident about using digital technology. |
| 11 | I feel confident about using digital technology to obtain data and information on clinical care. |
| 12 | I am able to reach conclusions based on information acquired through digital technologies. |
| 13 | I am keen to use new digital technologies in my future professional practices. |
| 14 | Digital technologies will make my day-to-day work easier. |
| 15 | I have an open attitude toward digital technology-related innovations at my workplace. |
| 16 | Digital technology fits well with the way I like to work. |
| 17 | I enjoy using digital technology at my workplace. |
| 18 | I encourage others to use digital technology in their professional practices. |
| 19 | I like to use digital technology at work. |
| 20 | I am willing to improve my ability to use digital technology through further training. |
| 21 | I believe that digital technology provides numerous benefits in terms of quality of care. |
| 22 | I believe that digital technology improves clinical care. |
| 23 | I believe that digital technology improves patient outcomes. |
| 24 | I believe that digital technology is beneficial for my patients. |
| 25 | I believe that digital technology is beneficial for health professionals. |
| 26 | I believe that digital technology is relevant for my future profession. |
