Abstract

With artificial intelligence (AI) on the rise in healthcare, we investigated the acceptance and adoption of AI among cancer survivors seeking information. We searched 3 databases (PubMed, Scopus, and CINAHL) for original research studies examining cancer survivors’ acceptance and adoption of AI while seeking information. Our search returned 858 articles, of which 12 studies were included. Cancer survivors’ perceived benefits of AI included diagnostic accuracy, improved healthcare access, and reduced healthcare costs. Concerns included the loss of human interaction, data loss, and privacy issues. Cancer survivors also demonstrated acceptance and adoption of AI-based chatbots and cancer trial search tools. Regarding the ethical use of AI, cancer survivors expressed a desire for data de-identification, disclosure, and transparency. Cancer survivors accepted AI as an adjunct to physicians. Patients demonstrated acceptance and adoption of both AI-based educational tools and search engines. Developing AI models with better explainability, together with education about AI, can improve cancer survivors’ acceptance and adoption of AI.

Keywords

Introduction

Though artificial intelligence (AI) has been explored in healthcare since the 1970s, its use has increased rapidly over the past decade. 1 AI offers opportunities to improve prognosis, reduce costs, and enhance healthcare for clinicians, allied health personnel, and patients. AI is a multidisciplinary field comprising subfields such as machine learning (ML), deep learning, and natural language processing. 2 As healthcare moves toward patient-centric care, the perspectives and involvement of patients, including cancer survivors, become more critical to the development and integration of AI tools in healthcare.3,4

According to the National Cancer Institute’s definition, “an individual is considered a cancer survivor from the time of diagnosis through the balance of life.” 5 Cancer survivorship brings multiple challenges, including a lack of ongoing, personalized care and living with constant fear and uncertainty. These fears are heightened among members of linguistic, ethnic, and racial minority groups.6,7

AI-driven digital health technologies have shown positive outcomes in mitigating these challenges for cancer survivors, including increased motivation as measured by patient-reported outcomes, reduced fatigue and pain levels, improved quality of life (QOL), and improved physical function. 8 Further, the AI-based platform ASCAPE (Artificial Intelligence Supporting Cancer Patients across Europe) has used big data and ML to predict and support the health status of patients with breast and prostate cancer. 9

Other key challenges for cancer survivors include difficulty contacting healthcare teams, delays in receiving replies, and information overload from online search engines. 10 Because it can analyze and concisely summarize large amounts of information, generative AI could become an important tool for bridging the knowledge gap, providing support, and answering cancer survivors’ healthcare-related questions. AI may also improve cancer survivors’ clinical care, including screening for and detecting cancer recurrence after initial treatment.

The degree of AI acceptance and adoption among cancer survivors is unknown. As with any emerging technology, AI’s impact on cancer survivors’ care and experience will depend on their acceptance and adoption of AI tools, including those used by their healthcare team. Technology acceptance reflects users’ attitudes toward a technology and is the first step toward its adoption. 11 Research examining cancer survivors’ perceptions and adoption of AI tools is limited. While the technology acceptance model suggests that attitudes predict intentions, which in turn influence actual adoption, this study focused on attitudes and intentions rather than the explicit behavioral changes of later adoption stages. 12

The objective of this study was to evaluate the acceptance and adoption of AI among cancer survivors seeking information. We hypothesized that acceptance and adoption of AI would vary among cancer survivors and would be influenced by factors such as age and digital literacy. We also hypothesized that cancer survivors’ acceptance and adoption of AI tools would improve patient-provider communication and QOL factors.

Methods

We developed search terms to capture the existing literature on cancer survivors’ acceptance and adoption of AI. Search terms were derived from extant literature on each topic and are included in the appendix. On June 17, 2024, we systematically searched 3 databases (PubMed, Scopus, and CINAHL Plus with Full Text [EBSCOhost]) for original literature on this topic, without language or geographic limitations. These databases were chosen because they index research relevant to cancer survivorship and to AI acceptability and usability. The complete search strategy for all databases is included in Figure 1. Because AI is a relatively new tool for the public, we limited the search to articles published within the past 10 years. A single reviewer performed title and abstract screening, followed by a detailed full-text review of articles against predefined eligibility criteria.

Inclusion criteria were studies investigating individuals between 18 and 99 years of age; studies investigating cancer survivors; studies investigating cancer survivors’ acceptance of AI or adoption of AI for seeking information; and original research studies.

Patient acceptance of artificial intelligence (AI)/adoption of AI for seeking information—cancer survivors.

Our exclusion criteria were as follows: wrong patient population (children under the age of 18, pregnant women, nonviable neonates or neonates of uncertain viability, prisoners, and others involuntarily detained or incarcerated, including those held in treatment centers as a condition of their parole); wrong setting (studies assessing only providers’ acceptance and adoption of AI); wrong intervention and wrong patient population (studies that did not focus on patients with cancer); wrong study design (conference proceedings, systematic reviews, and scoping reviews); and wrong outcomes (studies that assessed AI tools but did not assess acceptance, adoption, usability, perceptions, and/or engagement).

Because literature on cancer survivors’ perspectives alone is limited, we included a few studies with both provider and cancer survivor participants.

Following the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines, we conducted a review that culminated in the selection of the 12 articles most relevant to our study objectives. 13 The PRISMA framework is widely recognized as a rigorous and reliable standard for reporting systematic literature reviews.

For the analysis, we used a hybrid approach combining inductive and deductive reasoning. Inductive reasoning allowed us to identify recurring patterns and themes that emerged from the data, such as trust in AI, perceived usability of AI tools, and the role of personalized AI in enhancing patient engagement. Deductive reasoning was applied simultaneously to test pre-established concepts and frameworks, situating our findings within broader theoretical paradigms and providing a structured lens for interpreting the data. Together, these approaches enabled a comprehensive synthesis that revealed both commonalities across studies and unique perspectives shaped by demographic and digital differences among participants. By bridging empirical observations with theoretical constructs, this analysis captured the complexity of how cancer survivors perceive and engage with AI in their healthcare experiences.

Results

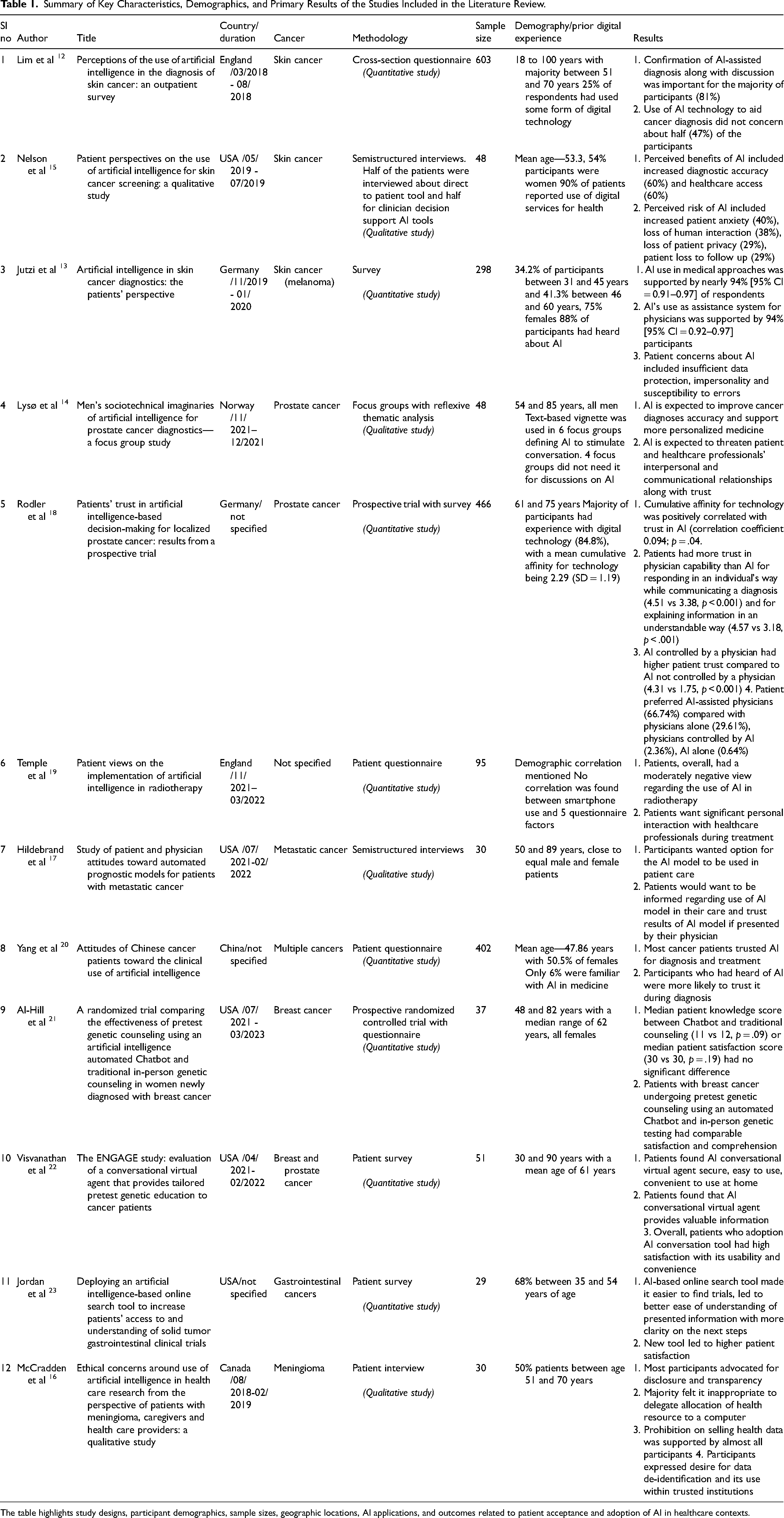

Our search returned 858 references; after removing duplicates, we screened 581 references and identified 12 studies for inclusion in this literature review. Screening followed a strict list of inclusion and exclusion criteria agreed upon by the authors. The first author applied inductive and deductive reasoning to exclude article abstracts according to these criteria. Screening was conducted in the systematic review tool Covidence, using its built-in automation tools to aid abstract and article screening. 14 A PRISMA diagram of the selection process can be found in Figure 1. 15 Key information about the studies is included in Table 1. Of the 12 included studies, 5 were from the United States, 2 from England, 2 from Germany, and 1 each from Canada, Norway, and China. All studies were published from 2018 onward and included survivors of skin cancer, meningioma, prostate cancer, breast cancer, gastrointestinal cancer, and metastatic cancer. Six studies used patient questionnaires or surveys, 3 used interviews, 2 were prospective trials, and 1 used focus groups. Four studies included patients without cancer16–19 and 2 included providers and caregivers.19,20

Summary of Key Characteristics, Demographics, and Primary Results of the Studies Included in the Literature Review.

The table highlights study designs, participant demographics, sample sizes, geographic locations, AI applications, and outcomes related to patient acceptance and adoption of AI in healthcare contexts.

Although a patient’s age can influence their views on AI, several included studies found no such effect. One study found no association between age and preference for AI involvement at different stages of diagnosis. 16 Another found that patients’ age and level of education were not positively correlated with trust in AI. 22 Similarly, no significant correlation was found between age or gender and the strength of views on AI use in radiotherapy. 23

Prior digital experience can also affect cancer survivors’ acceptance and adoption of AI tools. Participants’ prior knowledge of, or experience with, digital technology or AI varied across the included studies (Table 1), and cumulative technology use was correlated with trust in AI. 22 Additionally, patients who had heard of AI were more likely to trust it to make a diagnosis. 24 We grouped the identified studies into 3 major themes.

Theme 1: Cancer Survivors’ Acceptance of AI

Cancer Diagnosis and Trust in Decision Making

We identified 5 studies addressing cancer survivors’ perspectives on using AI in cancer diagnosis. Three used surveys, 1 used semistructured interviews, and 1 used focus groups. Most participants in these studies were older than 46 years. Close to half of respondents were not concerned about using AI technology to aid specialists in skin cancer diagnosis. 16 Perceived benefits of AI included increased diagnostic accuracy, improved healthcare access, and reduced healthcare costs. 19 The same study noted patient education as one of AI’s strengths. Further, patients expected AI to improve the accuracy of cancer diagnosis and facilitate more personalized medicine. 18

Patients’ concerns included data protection, data misuse, error susceptibility, and lack of transparency. 17 Perceived risks of AI included increased patient anxiety, loss of human interaction, loss of patient privacy, patient loss to follow-up, nefarious use of AI, and human deskilling. 19 While many respondents supported AI use and were willing to learn more, they wanted to retain the human element that physicians bring to their care. 16 Patients also expressed concern about the disruption of interpersonal relationships with physicians and the loss of physician skills through dependence on AI systems.17,18

Another theme was the importance of collaboration between humans and AI. 19 Most respondents supported AI as an adjunct to physicians. 17 Cancer survivors with melanoma were more supportive of using AI applications for early skin cancer detection and preferred that physicians and AI classify lesions independently. Most patients considered it essential for a dermatologist to confirm and discuss the cancer diagnosis. 16 These findings are consistent with a prospective trial assessing patients’ trust in AI. 22 In that trial, patients had more confidence in physicians than in AI for responding to diagnoses, communicating individually, and explaining information in understandable ways. Patients also placed more trust in diagnoses made by AI under physician control than in diagnoses made by AI without physician control. Moreover, patients overwhelmingly preferred AI-assisted physicians to physicians alone for treatment in the current clinical scenario. Thus, most cancer survivors support using AI as an adjunct to increase diagnostic accuracy. Together, these studies reflect patients’ desire for AI tools to support, rather than replace, physicians.

AI in Therapy, Prognosis, and Clinical Care

We reviewed 3 studies that examined cancer survivors’ perspectives on this theme. A validated questionnaire measuring cancer survivors’ positivity or negativity toward AI implementation in radiotherapy found moderately negative patient views of AI use. 23 Responses to questions about “personal interaction” indicated a strong patient preference for some level of personal interaction. Patients reported more trust in AI model results when they were presented by their physicians and wanted to be informed when AI models were used in their care. 24 Predictive models can also elicit negative responses, as seen in some patients after they viewed that study’s median survival prediction. A study of Chinese cancer patients’ attitudes toward the clinical use of AI found that most trusted AI in diagnosis and treatment, and that patients who were familiar with AI were more likely to trust its use in diagnosis. 24 When the opinions of the oncologist and AI diverged, patients trusted the oncologist more.

Theme 2: Cancer Survivors’ Adoption of AI for Seeking Information

AI-Based Automated Chatbots

A study comparing AI-powered chatbots for pretest genetic counseling with traditional genetic counseling methods also found significant acceptance among patients newly diagnosed with breast cancer. 25 Another study evaluated AI-based conversational virtual agents that provide tailored pretest genetic education, assessing patients’ adoption, use, and experience with these applications. 26 All participants in this study found the agents secure and easy to use. Patients found them convenient for home use and were very satisfied with their usability.

AI-Based Online Search Tools

Another study assessed a novel AI-based online search tool designed to improve patients’ access to, and understanding of, gastrointestinal solid tumor clinical trials. 27 The tool used natural language processing and restructured trial information to improve search efficiency and to automatically identify relevant trials for an individual patient. Patients found it easier and faster to find relevant trials, found trial information easier to understand, and reported clearer next steps. Overall satisfaction was higher with the novel tool than with currently available tools for finding cancer trial information.

Theme 3: Cancer Survivors’ Perspective Regarding the Ethical Use of AI

One study assessed meningioma patients’ ethical concerns about using AI in healthcare research by presenting hypothetical issues. 20 Most participants favored disclosure and transparency while supporting nonconsented healthcare data use. Most participants did not favor the commercial sale of healthcare data or delegating decisions about healthcare resource utilization to a computer. This study also identified a desire for data de-identification and for data use within trusted institutions. Providers were more skeptical about the use of AI in healthcare research. These findings echo the concerns about data misuse reported in earlier studies.

Discussion

The clinical care of cancer survivors involves diagnosis, clinical decision making, treatment, prognosis, and education. AI is expected to play an increasingly significant role in improving these aspects of clinical care. The studies included in this literature review provide a better understanding of cancer survivors’ perspectives on accepting and adopting AI, including for seeking information.

Patient acceptance is crucial for the successful integration of technology in healthcare. Cancer survivors are open to using AI to enhance diagnostic accuracy; however, they prefer it as a support tool alongside physicians, valuing the patient-physician relationship. This preference has important design implications for AI healthcare solutions aimed at cancer survivors. Future research should explore the meaningful human interactions necessary for effectively integrating AI into their care, as well as the observed lack of correlation of patient age, gender, and education level with AI trust and adoption.22,23 Prior studies show that AI has significant potential to speed up early diagnosis and improve cancer survivors’ outcomes. 28 AI image-based screening tools, such as Google’s automated retinal disease assessment, perform comparably to or better than physician evaluation.29,30

Cancer survivors showed interest in AI prognostic models but wanted the results shared by their physicians, again highlighting their greater trust in physicians and healthcare teams.21,23 Some patients reacted negatively to the predictive model, showing that technology output can provoke emotional responses. 21 Patients with a poor prognosis may respond negatively to AI-generated prognostic predictions. This highlights the importance of human guidance in presenting AI output, especially when it is emotionally challenging. Further research is needed to understand how human interaction can enhance trust and acceptance among cancer survivors during the development and implementation of these models. AI and ML algorithms have also begun to play a role in cancer treatment and predictive modeling, and their use is expected to increase over time. 31

Cancer survivors’ education and knowledge about their health condition significantly affect their treatment, prognosis, and satisfaction. 32 With limited resources and time, healthcare professionals often struggle to spend adequate time with patients and caregivers on effective patient education. 33 AI technologies such as chatbots have significant potential to fill this gap. Chatbots are AI-based interactive tools that communicate through speech and text. 25 Chatbot use in healthcare, including oncology, is steadily growing.34,35 Uses of chatbots in medicine have included family history intake, risk assessment, pretest education, and sharing average results. 25 Cancer survivors found chatbots easy to use, convenient, and secure. 26 Cancer survivors’ knowledge and comprehension were also comparable between automated chatbot-based counseling and in-person genetic counseling, supporting chatbots’ role as an adjunct to the current system for improving access to these services. 25 Studies of chatbots among patients without cancer, such as the digital genetic conversation chatbot “ROSA,” also reported positive patient feedback.34,36 These studies further demonstrate cancer survivors’ willingness to adopt AI-based chatbots to seek information. One promising option that could be implemented at scale is generative AI chatbots built on large language models, such as ChatGPT and Gemini.37,38 However, these tools were not designed to provide patient education, and the validity of their output remains a concern. 39 Further development focused on outputs that address patients’ needs, including cancer survivors’ questions, together with robust validation, could improve adoption and help realize the full potential of these tools.

AI can improve cancer survivors’ access to clinical trials by simplifying trial information. Survivors in rural areas or at smaller hospitals may struggle to obtain guidance from oncologists because of limited access to in-person and virtual care. By rapidly analyzing data, AI can help survivors find and understand relevant clinical trials. One AI-based cancer trial search tool provided more relevant information and greater patient satisfaction than current tools. 27 AI-based cancer trial search tools can improve clinical trial accessibility for disadvantaged populations. 40 They can also help clinical trial organizers recruit faster, shorten trial completion time, and share results with the broader community. 40 Further studies should evaluate AI-based search tools across various cancer types and survivor populations to better understand the factors influencing wider adoption of these tools.

As AI use grows in healthcare, it is important to understand cancer survivors’ perspectives on its ethical use. One potential ethical issue is a lack of transparency: some AI systems operate as black boxes whose workings beyond input and output are poorly understood. 41 When AI is used in clinical care, obtaining informed consent may become more complicated. Improving AI model explainability and educating patients and healthcare personnel can enhance the acceptance of AI tools. For example, Mirada Medical Ltd in Oxford, UK, has implemented targeted educational efforts to improve clinician and patient understanding of AI. 23

Cancer survivors also have valid concerns regarding data protection, misuse, and a lack of transparency.17,19,20 This perspective is shared by providers and the public as well. 20

Concerns about AI misuse highlight the importance of data protection and transparency in AI tools for cancer survivors. Trust between survivors and healthcare teams is vital; without it, survivors may not share their data, limiting the data available for training AI models. De-identifying data and using it only within trusted institutions may help. Future studies should explore innovative methods to enhance data protection as AI becomes more integrated into our lives.

Limitations

A key limitation of this study is that abstract review, article inclusion, and subsequent data abstraction from included studies were completed by a single reviewer because of resource constraints. To mitigate bias, however, a checklist of inclusion and exclusion criteria was developed by 4 authors under the guidance of the Principal Investigator. Uncertainties about study inclusion were resolved through group discussion among these authors. Future reviews should use 2 independent reviewers, in line with PRISMA guidelines, to systematically reduce bias in article selection. Other limitations include heterogeneity in patient populations, intervention types, settings, and outcomes across the small number of included articles.

We found that one area that can significantly impact cancer survivors’ care is education using AI-driven technologies such as chatbots. Given the limited number of studies incorporating patients’ perspectives in this area, more studies are needed to ensure that cancer survivors’ concerns and expectations are included in chatbot tool development.

These studies offer insights into the views of survivors of various common cancers, suggesting that experiences and expectations of AI may differ among them. More research is needed to understand the perspectives of diverse stakeholders. Early consideration of explainability and patient education about AI in clinical care is crucial, particularly for surgical procedures, where informed consent is vital. 42

A key limitation of many included studies is the lack of diversity in patient demographics. Most participants had prior digital experience, which may not reflect the broader population, particularly socially disadvantaged groups and older cancer survivors. Such experience can shape views on AI. Including more demographically diverse participants is crucial for understanding cancer survivors’ perspectives and reducing bias in AI development.

Moreover, many of these studies were conducted before the introduction of next-generation AI-based tools such as “ChatGPT” and “Gemini.”37,38 Cancer survivors’ perspectives may have evolved since these tools became available. Future studies should seek to understand cancer survivors’ acceptance and adoption of these newer AI-based tools.

Recommendations for Improved Cancer Survivor Adoption and Acceptance of AI

Focus on preserving human interaction and patient-physician relationships while developing AI tools to augment diagnosis and decision making.

Ensure adequate data protection and safeguards against misuse throughout AI tool development and deployment.

Develop AI tools with clear explainability of their design, functionality, and output.

Develop clear plans for communicating and educating clinical care teams and patients about the deployed AI tool's role in diagnosis, decision making, and information seeking, as well as its limitations.

To reduce bias and increase usability, include training data from diverse patient demographics, educational levels, and digital technology adoption levels.

Conclusion

As AI becomes further integrated into the clinical care of cancer survivors, their perspectives should be further explored and incorporated into the development of AI tools. Cancer survivors in the studies examined in this review accepted AI use in clinical care but also had valid concerns, especially about data misuse and the disruption of patient-physician relationships. Cancer survivors have shown interest in adopting AI-based information tools such as chatbots and trial search tools. These tools can also raise awareness and decrease patient concerns about AI integration in clinical care. More studies with diverse patient populations are needed to reduce bias and ensure equitable development of AI-based clinical care tools for cancer survivors.

Supplemental Material

sj-docx-1-jpx-10.1177_23743735251380949 - Supplemental material for Acceptance and Adoption of Artificial Intelligence Among Cancer Survivors Seeking Information: A Literature Review

Supplemental material, sj-docx-1-jpx-10.1177_23743735251380949 for Acceptance and Adoption of Artificial Intelligence Among Cancer Survivors Seeking Information: A Literature Review by Prabal Chourasia, Melissa Coffel, Jamie Lynn Conklin, William A. Wood and Saif Khairat in Journal of Patient Experience

Footnotes

Authors’ Contributions

PC was involved in methodology, formal analysis, investigation, writing original draft, and writing—review & editing; MC in methodology, formal analysis, resources, writing—review & editing, and project administration; JLC in methodology, data curation, writing—review & editing; WAW in writing—review & editing; and SK in conceptualization, methodology, resources, writing—review & editing, supervision, and funding acquisition.

Data Availability

Data sharing is not applicable to this article as no datasets were generated or analyzed during the current study.

Declaration of Conflicting Interest

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Statement of Informed Consent

There are no human subjects in this article and informed consent is not applicable.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Research reported in this publication was supported by the National Center for Advancing Translational Sciences of the National Institutes of Health under Award Number RC2TR004380. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health.

Ethical Approval

Ethical approval is not applicable for this article.

Statement of Human and Animal Rights

All procedures in this study were conducted in accordance with the University of North Carolina at Chapel Hill's Institutional Review Board (IRB # 23-1451) approved protocols.


References
