Abstract

Shared decision-making (SDM) between clinicians and patients is a key component of patient experience, but measurement efforts have been hampered by a lack of valid and reliable measures that are feasible for routine use. In this study, we aim to investigate

Keywords

Background

Given increasing reliance on patient experience measures in US performance-based compensation systems, as well as evolving public expectations with regard to patient-centered health care, a focus on patient experience is critical (1). Patient experience measurement has been described as “reports from patients on what they did or did not experience in their interactions with providers and the healthcare system” (2). In addition to the importance of positive patient experience per se, there is increasing evidence of positive correlations between experience, patient safety, and clinical outcomes (3). Improving the measurement and transparency of patients’ experience could therefore contribute broadly to improving the quality of healthcare delivery.

As a key component of patient experience, shared decision-making (SDM) between clinicians and patients has garnered increased attention in recent years, having been promoted in the US Patient Protection and Affordable Care Act (4,5) and championed as “the pinnacle of patient-centered care” (6). However, efforts to assess patients’ experience of SDM have been hampered by a lack of measures that both have proven psychometric properties, such as validity, responsiveness, and (critical for provider group performance assessment) reliability, and are practical enough for use in routine clinical settings. This measurement gap also hinders efforts to improve SDM performance.

To help meet this need, Elwyn and colleagues developed

Another approach to SDM measurement was undertaken by the US Agency for Healthcare Research and Quality as a supplement to the widely used Consumer Assessment of Healthcare Providers and Systems (CAHPS) Clinician and Group Survey (CG-CAHPS version 3.0). The CAHPS survey is used by providers and payers of US healthcare services to “evaluate and compare healthcare providers and to improve the quality of healthcare services” (11). The supplemental CAHPS SDM section focuses on medication-related decisions: discussing reasons to take a medication, discussing reasons not to take a medication, and discussing which medication is best. The CAHPS communication items evaluate clinician performance in explaining things in a way that is easy to understand, listening carefully, showing respect, and spending enough time with the patient.

Although CAHPS scores have been evaluated in various healthcare settings, no prior studies have compared CAHPS communication and SDM measures to

Methods

Data Sources

We conducted a secondary analysis of the full cross-sectional 2017 California Patient Assessment Survey data set. The Patient Assessment Survey is administered annually by the Pacific Business Group on Health to adult patients of 153 California provider groups taking commercial capitation risk, and results are incorporated into California’s pay-for-performance program. Participating provider groups are geographically diverse practices representing all of California’s major metropolitan regions as well as rural areas within the state. Each provider group includes both primary and specialty care clinicians.

Our use of the deidentified 2017 Patient Assessment Survey data set was approved by the Pacific Business Group on Health. This project was considered exempt from further review by Dartmouth College’s Committee for the Protection of Human Subjects (study #31002).

Participants

Patient Assessment Survey participants include privately insured adult (aged 18 years and older) patients who received ambulatory healthcare services at one of the 153 participating California provider groups between January and October 2016. The Patient Assessment Survey’s standard sampling procedure excludes patients younger than 18 years of age, uninsured patients, and patients with public or other noncommercial health insurance. Therefore, our secondary analysis of the Patient Assessment Survey data set also excludes these populations.

Questionnaire administration

In accordance with the standard Patient Assessment Survey administration procedure, a random sample of eligible patients was invited to complete the survey. The recruitment protocol used sequential administration modes, beginning with e-mail invitations, followed by mailed questionnaires, and finally telephone administration for remaining nonrespondents. All questionnaires were administered between December 2016 and March 2017 and were available in English, Spanish, Chinese, Korean, and Vietnamese.

Measures

The 2017 Patient Assessment Survey consisted of 35 total items derived from (1) the CAHPS Clinician & Group version 3.0 survey and supplement (12) and (2) the

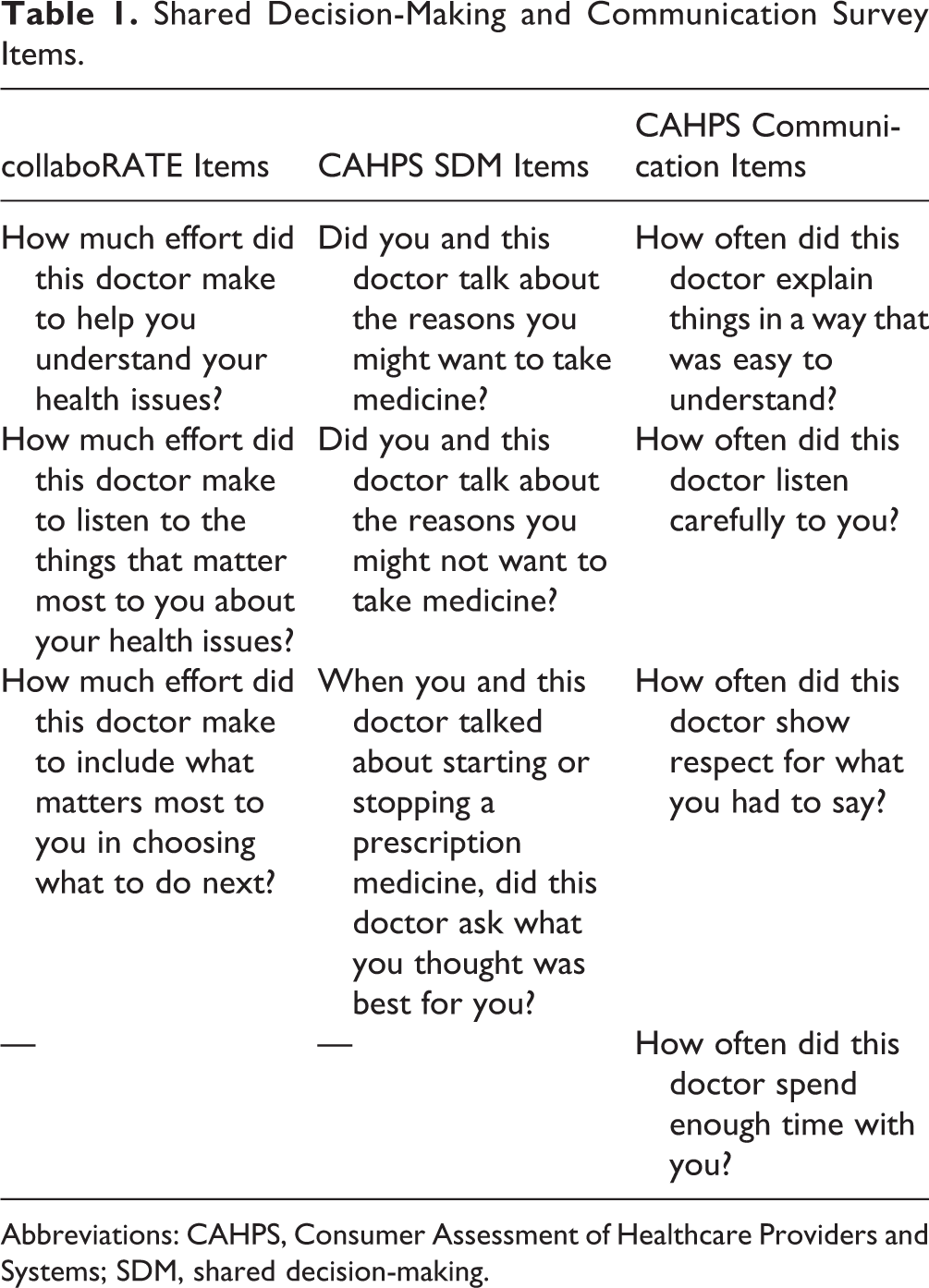

Shared Decision-Making and Communication Survey Items.

Abbreviations: CAHPS, Consumer Assessment of Healthcare Providers and Systems; SDM, shared decision-making.

CAHPS SDM items were answered on a yes/no response scale, whereas CAHPS communication items were rated on a never/sometimes/usually/always response scale.
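The Results report collaboRATE as top-box scores. As an illustration only, the sketch below assumes the same top-box convention is applied to these response scales. Under that convention, a group's score is the proportion of non-missing responses that select the most favorable option ("always" or "yes"). The helper name and the sample responses are hypothetical. The scoring rules actually applied to each measure are those given under Scoring methods.

```python
from typing import Optional, Sequence


def top_box_score(responses: Sequence[Optional[str]], top: str) -> float:
    """Proportion of non-missing responses equal to the most favorable option.

    Hypothetical helper: missing responses (None) are dropped before scoring,
    mirroring a complete-case approach to missing data.
    """
    answered = [r for r in responses if r is not None]
    if not answered:
        raise ValueError("no non-missing responses to score")
    return sum(r == top for r in answered) / len(answered)


# Hypothetical responses from a single provider group
communication = ["always", "usually", "always", None, "sometimes", "always"]
medication_sdm = ["yes", "no", "yes", "yes"]

print(top_box_score(communication, top="always"))  # 3 of 5 answered -> 0.6
print(top_box_score(medication_sdm, top="yes"))    # 3 of 4 -> 0.75
```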

Statistical Analysis

In all analyses, survey responses with missing data on the outcome measure of interest were excluded. Because we aimed to evaluate reliability under a worst-case scenario that emphasizes the effect of missing data on reliability, we did not use multiple imputation. Data were unweighted, and provider group scores were case-mix adjusted for patient age, education, general and mental health status, race/ethnicity, mode and language of survey administration, and provider specialty. Analyses were conducted using Stata 13 statistical software.
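Case-mix adjustment can be implemented in several ways. The study's exact Stata procedure is not described here. A minimal sketch of one common regression-based approach, assumed here for illustration, works in three steps:

1. Regress patient-level scores on patient covariates plus provider group indicators (fixed effects), so that the covariate coefficients reflect within-group associations.
2. Compare each group's mean covariate profile with the overall sample mean.
3. Shift each group's raw mean by the coefficient-weighted difference.

The function name is hypothetical, and covariates are assumed to be numerically coded already (for example, categorical variables dummy-coded).

```python
import numpy as np


def case_mix_adjust(y, X, groups):
    """Hypothetical sketch: return {group: case-mix-adjusted mean score}.

    y: (n,) patient-level scores; X: (n, k) numerically coded patient covariates;
    groups: (n,) provider group labels.
    """
    y = np.asarray(y, dtype=float)
    X = np.asarray(X, dtype=float)
    groups = np.asarray(groups)
    labels = np.unique(groups)
    # One indicator column per group (no global intercept) keeps the design full rank
    G = (groups[:, None] == labels[None, :]).astype(float)
    coef, *_ = np.linalg.lstsq(np.hstack([G, X]), y, rcond=None)
    beta = coef[len(labels):]  # within-group covariate coefficients
    overall_x = X.mean(axis=0)
    adjusted = {}
    for g in labels:
        mask = groups == g
        # Move the group's raw mean to what it would be with the overall patient mix
        shift = (X[mask].mean(axis=0) - overall_x) @ beta
        adjusted[str(g)] = float(y[mask].mean() - shift)
    return adjusted


# Hypothetical data: one covariate; group A's patients have higher covariate values
print(case_mix_adjust([2.0, 6.0, 1.0, 5.0], [[1.0], [3.0], [0.0], [2.0]], ["A", "A", "B", "B"]))
```

In this toy example, group A's raw mean (4.0) is adjusted down to approximately 3.0, and group B's raw mean (3.0) is adjusted up to approximately 4.0.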

Scoring methods

For each of the 3 measures (

Provider group-level reliability analysis

Score reliability is a statistical measure of “how well one can confidently distinguish the performance of one physician [or provider group] from another” (13). The reliability of provider group performance scores depends on 2 factors: “(1) a sufficient number of patients eligible for a given quality measure ([eg, SDM] per provider group) and (2) performance variation across [provider groups] on that quality measure” (14). To evaluate provider group-level score reliability, we adopted the approach used by Scholle and detailed by Adams (13,14). For each measure (CAHPS communication, CAHPS SDM, and

Snijders and Bosker (1999) Reliability Formula.
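For reference, in Snijders and Bosker's formulation the reliability of provider group $j$'s mean score depends on three quantities:

- the between-group variance $\tau^2$,
- the within-group (patient-level) variance $\sigma^2$,
- the number of patient reports $n_j$.

It can equivalently be written in terms of the ICC $\rho_I$. The notation below is assumed for illustration:

$$
\lambda_j = \frac{\tau^2}{\tau^2 + \sigma^2 / n_j} = \frac{n_j\,\rho_I}{1 + (n_j - 1)\,\rho_I},
\qquad
\rho_I = \frac{\tau^2}{\tau^2 + \sigma^2}
$$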

We report ICCs and median reliability estimates for each measure and across all provider groups (14). As reliability depends heavily on sample sizes, we also report response rates for each measure. Finally, we conducted a sensitivity analysis to determine the impact of the patient case-mix adjustment on provider group-level score reliability.
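The Adams approach rests on estimates of between-group and within-group variance. As a simpler stand-in, which is not necessarily the estimator used in this study, the sketch below proceeds in two steps:

1. Estimate the ICC with the classic one-way ANOVA estimator for unbalanced groups.
2. Apply the group-mean reliability formula to each group's number of respondents and take the median across groups.

The function names and the input format (a mapping from provider group to patient-level scores) are hypothetical.

```python
from statistics import median


def anova_icc(scores: dict[str, list[float]]) -> float:
    """One-way ANOVA estimate of the ICC from patient-level scores grouped by provider group."""
    sizes = [len(v) for v in scores.values()]
    n_total, n_groups = sum(sizes), len(scores)
    grand_mean = sum(sum(v) for v in scores.values()) / n_total
    means = {g: sum(v) / len(v) for g, v in scores.items()}
    ss_between = sum(len(v) * (means[g] - grand_mean) ** 2 for g, v in scores.items())
    ss_within = sum((x - means[g]) ** 2 for g, v in scores.items() for x in v)
    ms_between = ss_between / (n_groups - 1)
    ms_within = ss_within / (n_total - n_groups)
    # Effective group size n0 for unbalanced group sizes
    n0 = (n_total - sum(n * n for n in sizes) / n_total) / (n_groups - 1)
    icc = (ms_between - ms_within) / (ms_between + (n0 - 1) * ms_within)
    return max(icc, 0.0)  # negative estimates are commonly truncated at zero


def group_reliability(n: int, icc: float) -> float:
    """Reliability of a group mean based on n patient reports."""
    return n * icc / (1 + (n - 1) * icc)


def median_reliability(scores: dict[str, list[float]]) -> float:
    """Median of per-group reliabilities, using one pooled ICC and each group's own n."""
    icc = anova_icc(scores)
    return median(group_reliability(len(v), icc) for v in scores.values())


# Hypothetical 0/1 top-box indicators for three provider groups
example = {
    "group_1": [1, 1, 0, 1, 1],
    "group_2": [0, 1, 0, 0],
    "group_3": [1, 1, 1, 0, 1, 1],
}
print(anova_icc(example), median_reliability(example))
```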

A reliability of 0.70 has been established as the minimum acceptable threshold for performance profiling at the provider group level (13,14). We therefore report, for each included measure, the minimum number of patient reports per provider group required to reach this 0.70 threshold.
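Solving the group-mean reliability formula for $n$ gives the number of patient reports a provider group needs to reach a target reliability $R$: $n = R(1 - \rho) / (\rho(1 - R))$, rounded up. The sketch below is a minimal illustration under that formula. The function names are hypothetical, and the ICC values in the example are illustrative rather than a reproduction of this study's estimates.

```python
import math


def reliability(n: int, icc: float) -> float:
    """Reliability of a provider group's mean score based on n patient reports."""
    return n * icc / (1 + (n - 1) * icc)


def min_respondents(icc: float, target: float = 0.70) -> int:
    """Smallest n whose group-mean reliability reaches `target`."""
    if not (0 < icc < 1 and 0 < target < 1):
        raise ValueError("icc and target must lie strictly between 0 and 1")
    # Closed-form solution of target = n*icc / (1 + (n - 1)*icc) for n
    n_exact = target * (1 - icc) / (icc * (1 - target))
    # A tiny tolerance keeps float round-off (e.g. 231.00000000000003) from adding a patient
    return max(1, math.ceil(n_exact - 1e-9))


for icc in (0.01, 0.05):  # illustrative ICCs; these yield 231 and 45 reports
    n = min_respondents(icc)
    print(f"ICC={icc}: {n} reports per group (reliability {reliability(n, icc):.3f})")
```

A small ICC drives the required sample size up sharply. Reaching 0.70 with an ICC of 0.01 takes roughly five times as many reports as with an ICC of 0.05.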

Concurrent validity analysis

To examine concurrent validity between CAHPS communication, CAHPS SDM, and

Limitations

Our study design had several limitations. First, per standard Patient Assessment Survey procedures, questionnaires were administered to patients more than 2 months after their clinic visits, raising the possibility of recall bias. Second, the 10 survey items analyzed in this study were among 28 total items contained within the 2017 Patient Assessment Survey; the effect of the additional 18 items on possible selection and response biases is unknown. Finally, we had access to demographic data only for Patient Assessment Survey respondents, not to detailed data on their healthcare providers or provider groups. We were therefore unable to contextualize our results further by provider or group characteristics.

Results

A demographic profile of patient respondents from 153 participating provider groups is available in Online Appendix 1.

CollaboRATE and CAHPS Scores

The 2017 Patient Assessment Survey included 31 265 patient responses across 153 California provider groups.

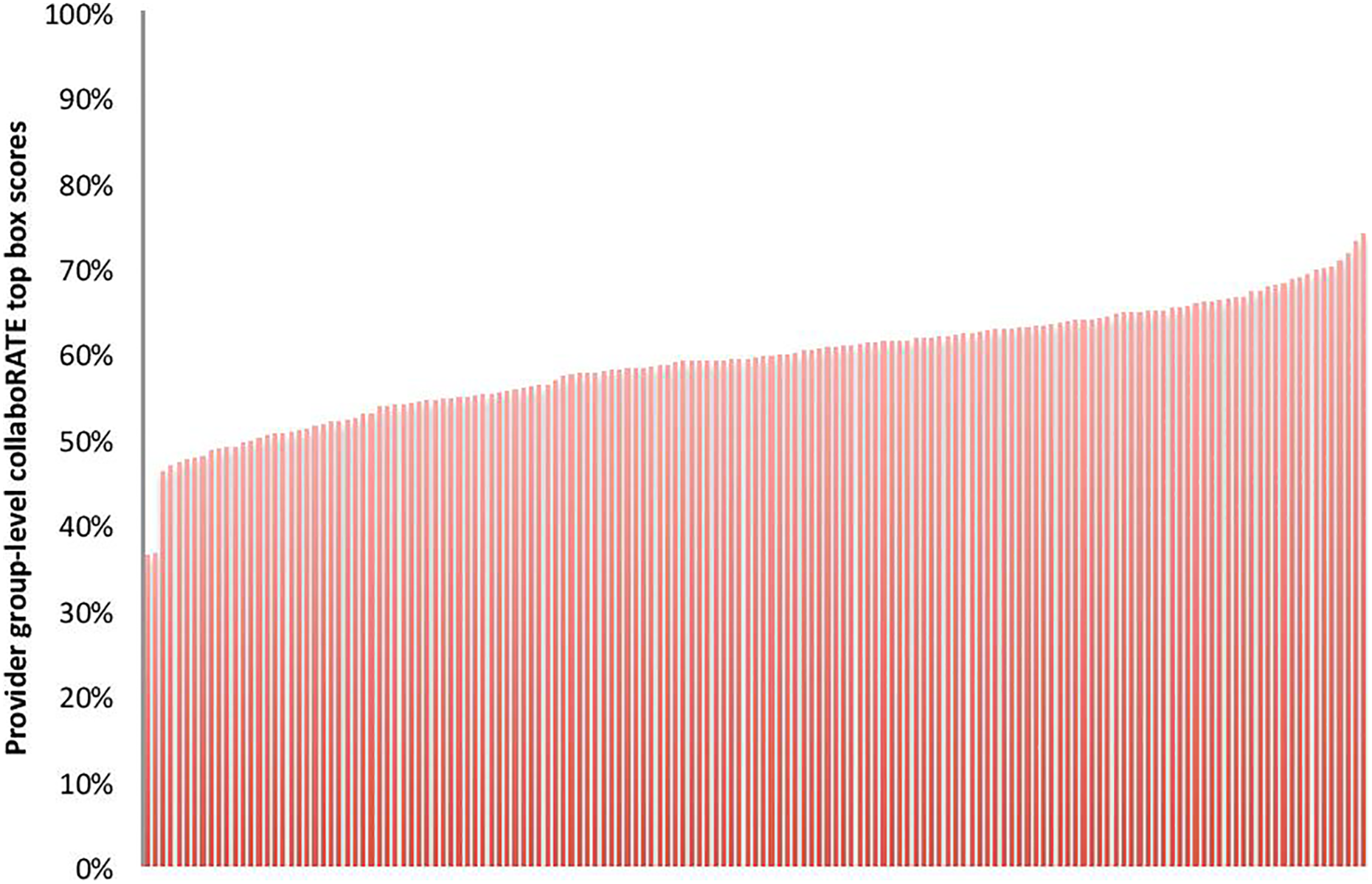

Case-mix adjusted collaboRATE top-box scores by provider group (n = 153 groups).

CAHPS Communication and Shared Decision-Making Provider Group Scores.

Abbreviations: CAHPS, Consumer Assessment of Healthcare Providers and Systems; SDM, shared decision-making.

Provider Group-Level Score Reliability

Based on case-mix adjusted scores,

Provider Group-Level Reliability.

Abbreviations: CAHPS, Consumer Assessment of Healthcare Providers and Systems; ICC, intracluster correlation coefficient; SDM, shared decision-making.

Given its ICC of 0.01,

In the sensitivity analysis comparing scores with and without patient case-mix adjustment, the CAHPS communication and SDM group-level composite scores shifted by an average of 11.5% and 16.1%, respectively, following case-mix adjustment;

Concurrent Validity

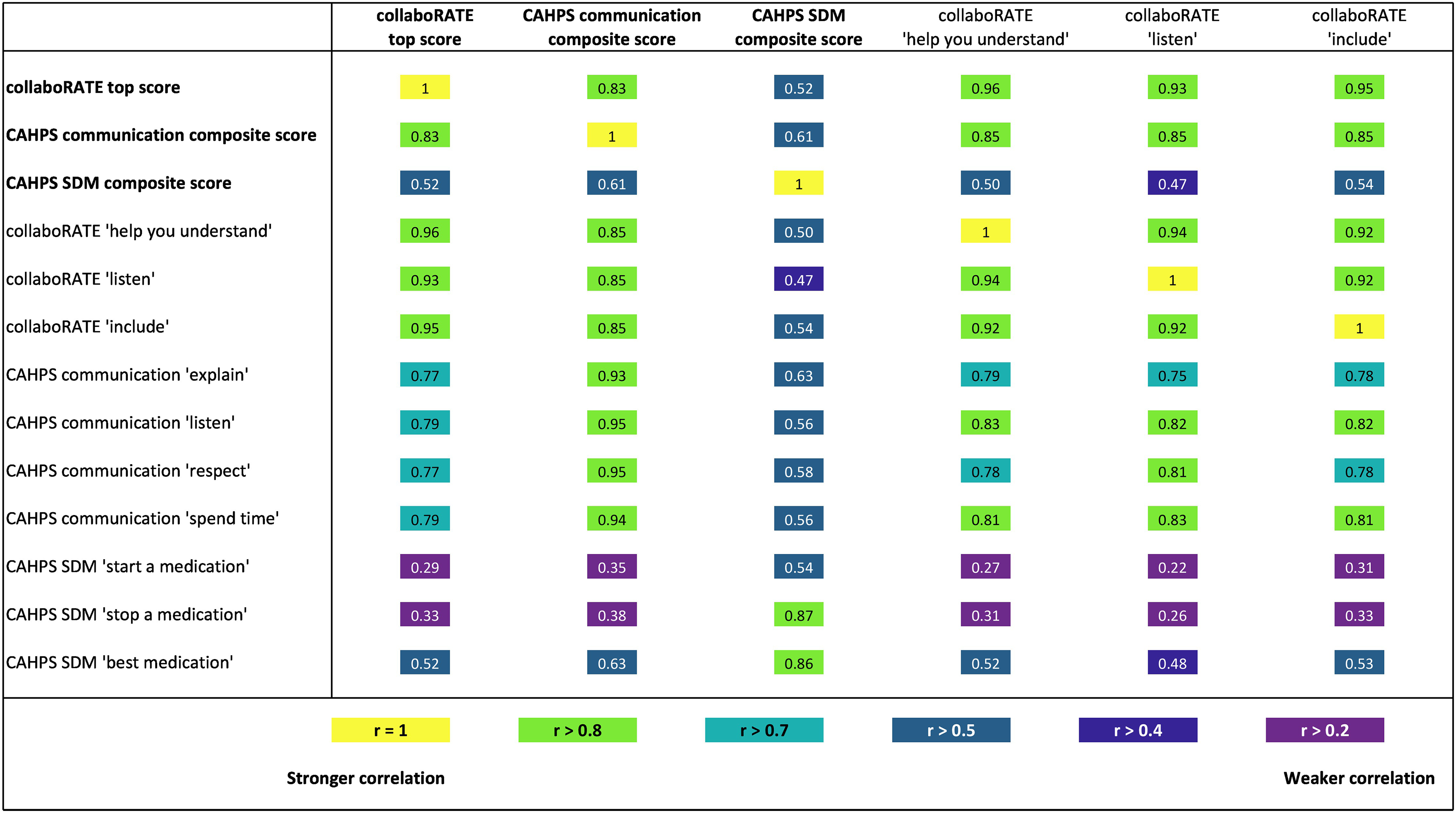

At the measure level, the strongest association was observed between

CollaboRATE correlations with Consumer Assessment of Healthcare Providers and Systems (CAHPS) communication and shared decision-making measures.

Between individual items, strong associations were observed among the

Discussion

Key Findings

Although

Correlation analysis demonstrates limited association between the

Because the CAHPS SDM measure is specific to medication decisions, it is relevant only to the fraction of clinical encounters in which patients and providers make explicit decisions about starting or stopping a medication (no more than 52.6% of respondents in this sample). Sample sizes for the CAHPS SDM measure are therefore consistently low; these smaller sample sizes (median

Context in Existing Literature

While a strict reliability standard of 0.70 ensures fair comparison across provider groups for national or regional performance management and incentivization purposes, we recognize that obtaining the required sample sizes to meet this reliability standard may be too resource-intensive to be attainable in local quality improvement and research projects. For these local projects, we therefore recommend 20 to 30 observations per provider group in analyses designed to assess differences between groups and approximately 50 observations per provider group for inferential analyses (18).

Our findings support Hays’ evaluation of CAHPS measure reliability, which found larger required sample sizes for the CAHPS SDM composite (n = 396) than for the CAHPS communication composite (n = 295) (19). However, that study’s use of the Spearman-Brown reliability formula (20) resulted in larger recommended sample sizes than those we report in the current study (CAHPS communication:

We observed consistent mean

Case-mix adjustment is often advocated in healthcare quality measurement (21). SDM scores are likely to vary with patient characteristics; language barriers, literacy levels, and other sociodemographic factors may limit perceived (or actual) SDM. Case-mix adjustment separates the variance attributable to provider performance from the variance associated with patient characteristics. Because case-mix adjustment reduces overall variation between measured entities (22), reliability estimates based on case-mix adjusted scores may represent lower bounds, while those based on unadjusted scores can be considered upper bounds.

Conclusion

Valid measurement relies on clarity about the constructs being measured, as well as meaningful interpretation of variation in scores. In this study, we demonstrate that

Supplemental Material

Appendix_1_(1) for Measuring Patient-Reported Shared Decision-Making to Promote Performance Transparency and Value-Based Payment: Assessment of collaboRATE’s Group-Level Reliability by Rachel C Forcino, Marcus Thygeson, A James O’Malley, Marjan J Meinders, Gert P Westert and Glyn Elwyn in Journal of Patient Experience

Footnotes

Acknowledgments

The authors gratefully acknowledge Grace Lin, MD, for facilitating Patient Assessment Survey (PAS) data access.

Author Contributions

Rachel C Forcino, Marcus Thygeson, Glyn Elwyn contributed to conception or design of the work. Rachel C Forcino, Marcus Thygeson, A James O’Malley, and Glyn Elwyn contributed to the acquisition, analysis, or interpretation of data. Rachel C Forcino drafted the manuscript; Marcus Thygeson, A James O’Malley, Marjan J Meinders, Gert P Westert, and Glyn Elwyn critically revised the manuscript.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Glyn Elwyn is a developer of the

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

References
