Abstract

Patient experience is a critical measure for ambulatory primary care, although it is unclear how best to improve patient experience scores. This cluster-randomized trial aimed to determine whether use of a real-time feedback (RTF) device improved patient experience scores. The primary outcomes, analyzed in a linear mixed effects model, were change from baseline in the 9 Clinician and Group Consumer Assessment of Healthcare Providers and Systems (CG-CAHPS) question and domain scores most closely related to the RTF questions asked. There were no observed statistically significant intervention-related differences in CG-CAHPS scores in any of the 9 CG-CAHPS questions or domains (

Introduction

Measures of quality are increasingly tied to payments from insurance companies, including Medicare (1). Quality is defined in many ways, with patient experience being a key feature (2,3). Patient experience, while difficult to measure (4,5), has been shown to be correlated with improved patient adherence, potentially health outcomes (5–10), and financial performance (11,12). Despite the benefits of maximizing patient experience, it is unclear how best to improve this measure in a primary care setting, which can qualitatively differ from other settings (13).

Studies suggest that primary care patient experience can be improved by transformation into patient-centered medical homes (14), clear data feedback to staff (15), publicly reported data (16), and potentially financial incentives (17). Measurement and feedback of patient experience data are key to improved patient experience (18); however, practitioners express concern about low response rates, lag time for data, and representativeness of samples (4,19–21). Therefore, improving patient experience must include increasing the number of respondents and reducing the time until feedback is received. Health care settings have begun trialing technology adjuncts that provide real-time feedback (RTF) on patient experience (19,22–26). Real-time feedback is typically collected via electronic hand-held devices or kiosks that allow the customer to provide instant feedback after the health care experience. These data are collected, analyzed, and used by the health care team. Many RTF device studies employ mixed methods and feasibility designs (19,22–26). To our knowledge, no study has investigated whether RTF devices improve patient experience scores in a randomized study design.

This cluster randomized trial aimed to determine whether use of RTF devices improves patient experience scores in primary care clinics. We hypothesized that clinics randomized to use RTF devices have more timely access to patient experience data, allowing them to optimize ongoing interventions related to improving patient experience. As use of RTF devices is new in health care, we also sought to analyze the correlation between RTF device scores and patient experience scores and investigate patient usage.

Methods

Setting

This is a cluster randomized controlled trial in an urban pediatric, adult, and family medicine primary care network affiliated with a tertiary care center. The network had 36 total clinics. Two clinics were excluded because they were not on the same electronic health record (EHR), and 1 was excluded because it had only been open for 1 month. Of the 33 eligible clinics, 9 clinics (27%) were considered “teaching sites” because they included medical learners; the others were considered “non-teaching sites” operating with a private practice model. The primary care network uses the Press Ganey Associates’ Clinician and Group Consumer Assessment of Healthcare Providers and Systems (CG-CAHPS) survey to measure patient experience (27). After a clinic visit, a survey in English or Spanish, based on preferred language in the EHR, is mailed to patients. The CG-CAHPS scores are presented as “top box” scores, which calculate the percentage of respondents who report the best possible answer (eg, “very good,” “always,” or “yes, definitely”). During this study, the network’s leadership directed all clinics to focus on initiatives to improve patient experience. This included 3 meetings over 1 year where representatives from all clinics gathered to learn quality improvement (QI) tools, apply them to patient experience initiatives, and share best practices. Additionally, CG-CAHPS scores in physician-related domains were tied to physician bonuses and all CG-CAHPS scores were displayed on widely distributed quarterly performance dashboards. Six QI coaches helped lead these patient experience QI projects. Each coach had some clinics in the intervention and control groups.
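As an illustration of the "top box" calculation described above, the following is a minimal sketch, not the vendor's scoring procedure. The function name, the set of top answers (taken from the examples in the text), and the sample responses are assumptions made for illustration.

```python
from typing import Iterable

# Assumed set of "best possible" answers, taken from the examples in the text.
TOP_BOX_ANSWERS = {"very good", "always", "yes, definitely"}


def top_box_score(responses: Iterable[str], top_answers=TOP_BOX_ANSWERS) -> float:
    """Return the percentage of responses that give the best possible answer."""
    cleaned = [r.strip().lower() for r in responses]
    if not cleaned:
        raise ValueError("at least one response is required")
    top = sum(1 for r in cleaned if r in top_answers)
    return 100.0 * top / len(cleaned)


# Hypothetical responses to one survey question at one clinic.
example_responses = ["Always", "Usually", "Always", "Never", "Always"]
print(f"{top_box_score(example_responses):.1f}")  # 3 of 5 are top box: 60.0
```

Because only the best answer counts, a shift from "usually" to "always" moves the score while a shift from "never" to "usually" does not.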

Intervention

Clinics in the intervention group received the HappyOrNot RTF device (28). HappyOrNot is used to assess customer and employee satisfaction in real time. The RTF device is placed in high-traffic areas and gathers service responses that are relayed to leadership. The RTF data potentially allow sites to know immediately whether experience interventions are working, increase the representativeness of responses, and ultimately may lead to improved CG-CAHPS scores through more effective improvement initiatives.

The device features a large written question and 4 response buttons approximately 3 feet off the ground. The buttons contain pictures that range from sad to smiling faces and are pushed voluntarily by the user (Figure 1). The device has a 30-second refractory period where it will not record another response to avoid repeated responses. Data are transmitted via a cellular network and accessible on a web-based dashboard the day after entry. Responses are segmented by day of the week and hour of the day and compare an individual site’s responses to all other sites (Figure 1).
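The 30-second refractory period can be pictured as a simple filter over button-press times. The sketch below is an assumption about how such a lockout might behave, not the device's actual firmware: it assumes the lockout is measured from the last recorded press and that a press exactly 30 seconds later is accepted.

```python
REFRACTORY_SECONDS = 30  # length of the lockout stated in the text


def apply_refractory(press_times, refractory=REFRACTORY_SECONDS):
    """Keep only presses at least `refractory` seconds after the last recorded one."""
    recorded = []
    for t in sorted(press_times):
        # Assumption: the lockout is timed from the last *recorded* press.
        if not recorded or t - recorded[-1] >= refractory:
            recorded.append(t)
    return recorded


# Hypothetical press times, in seconds since the clinic opened.
presses = [0, 5, 12, 31, 40, 95]
print(apply_refractory(presses))  # [0, 31, 95]
```

Under these assumptions, a person pressing several buttons in quick succession contributes a single response.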

Example clinic report and picture of real-time feedback device used. Blue boxes obscure clinic name for confidentiality. Question on real-time feedback device is example from company website and not a question used in this study (28). While devices recorded button pushes at any time and displayed them on clinic reports (eg, after 5:30

In this study, RTF devices actively collected data from 8:30

To optimize response rates, a 1-month pilot period occurred prior to the start of data collection. During this time, RTF devices were trialed in various locations throughout each clinic to garner the most patient responses each day.

Randomization

Multivariable matching before randomization (29) was employed based on type of clinic (pediatric, internal medicine or family medicine, and teaching or nonteaching site), the prior year’s CG-CAHPS scores, and total annual visits. Clinics were grouped by type of clinic, then ordered by total annual visits and by prior year’s CG-CAHPS scores, and then matched in descending fashion. Each matched clinic pair was randomized to intervention or control group via a computer-generated random number. As 15 devices were provided for this study, 15 clinics each were randomized to the intervention or control groups, and 3 were not randomized.
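The matching and randomization steps can be sketched as follows. This is a simplified illustration under stated assumptions, not the study's actual procedure: the dictionary keys, the clinic names, and the seed are hypothetical, the ordering is read as "visits first, then prior score," and a clinic left without a partner within its type is simply reported as unpaired.

```python
import random
from collections import defaultdict


def matched_pair_randomize(clinics, seed=0):
    """Pair similar clinics within each type, then randomize within each pair.

    `clinics` is a list of dicts with hypothetical keys
    "name", "type", "visits", and "prior_score".
    Returns (assignments, unpaired).
    """
    rng = random.Random(seed)
    by_type = defaultdict(list)
    for clinic in clinics:
        by_type[clinic["type"]].append(clinic)

    assignments = {}
    unpaired = []
    for clinic_type in sorted(by_type):
        # Order within type by annual visits, then prior-year score, descending.
        group = sorted(
            by_type[clinic_type],
            key=lambda c: (c["visits"], c["prior_score"]),
            reverse=True,
        )
        # Walk down the ordered list two clinics at a time.
        for i in range(0, len(group) - 1, 2):
            first, second = group[i], group[i + 1]
            if rng.random() < 0.5:
                first, second = second, first
            assignments[first["name"]] = "intervention"
            assignments[second["name"]] = "control"
        if len(group) % 2 == 1:
            unpaired.append(group[-1]["name"])
    return assignments, unpaired


# Hypothetical clinics; names and numbers are illustrative only.
example_clinics = [
    {"name": "Peds A", "type": "pediatric-teaching", "visits": 30000, "prior_score": 85.0},
    {"name": "Peds B", "type": "pediatric-teaching", "visits": 28000, "prior_score": 83.0},
    {"name": "Peds C", "type": "pediatric-teaching", "visits": 12000, "prior_score": 90.0},
    {"name": "Family A", "type": "family-nonteaching", "visits": 20000, "prior_score": 88.0},
    {"name": "Family B", "type": "family-nonteaching", "visits": 19000, "prior_score": 86.0},
]
assignments, unpaired = matched_pair_randomize(example_clinics, seed=1)
print(assignments)
print(unpaired)  # ['Peds C']
```

Randomizing within matched pairs guarantees equal group sizes and keeps the two arms balanced on the matching variables.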

Data Collection

From July 2017 through mid-March 2018, RTF devices were present in clinics and alternated between one of 2 questions: (1) “Was our entire clinic team attentive and respectful today?” and (2) “Did our entire clinic team explain things clearly today?” Questions were displayed in English and Spanish. Questions rotated on the 1st and 15th of every month to capture the inherent daily, weekly, and monthly clinic variability. The former question was presented 9 times during the study period while the latter question was presented 8 times. Questions were similar, although not identical to questions and domains asked on the CG-CAHPS survey. Data collected included question, response on a 4-point Likert scale, and date and time of the response.

During the study period, the primary care network generated CG-CAHPS data for all clinics. Surveys were analyzed according to the patient’s clinic visit date, so that CG-CAHPS and the RTF device data were over the same period. Clinics could access their CG-CAHPS data electronically at any time and clinic leadership received monthly CG-CAHPS dashboards.

To determine how individuals were using the RTF devices, an inconspicuous research assistant approached patients immediately after they used the device. From July 2017 through August 2017, a convenience sample of individuals was verbally asked the following: (1) “What behaviors would the care team have to exhibit to get a smiley face?” (2) “What do you think the practice hopes to learn from asking this question?” (3) “Any other comments?” This survey was pilot tested on inpatient units. Initially, this survey was pursued to provide clinics with ideas on how to improve patient experience. However, it also allowed comparison of individuals’ responses to the 3 survey questions with the question currently displayed on the RTF device. A determination was made by the research team (MLR and MD) regarding whether the respondent was (1) responding to the specific question posed by the device, (2) responding to a different question or experience, or (3) if it was unclear. For example, a person who responded to the survey question “What behaviors would the care team have to exhibit to get a smiley face?” with “Call me in quickly, lower wait, good service” was judged to not be responding to “Did our entire clinic team explain things clearly today?” Other representative responses that were deemed to not be responding to the question being asked included “The doctor in general,” “Just pushed a button,” and “short wait time.” Each intervention clinic was surveyed at least once, but due to variable patient volume, respondents came from only 14 clinics.

Analysis

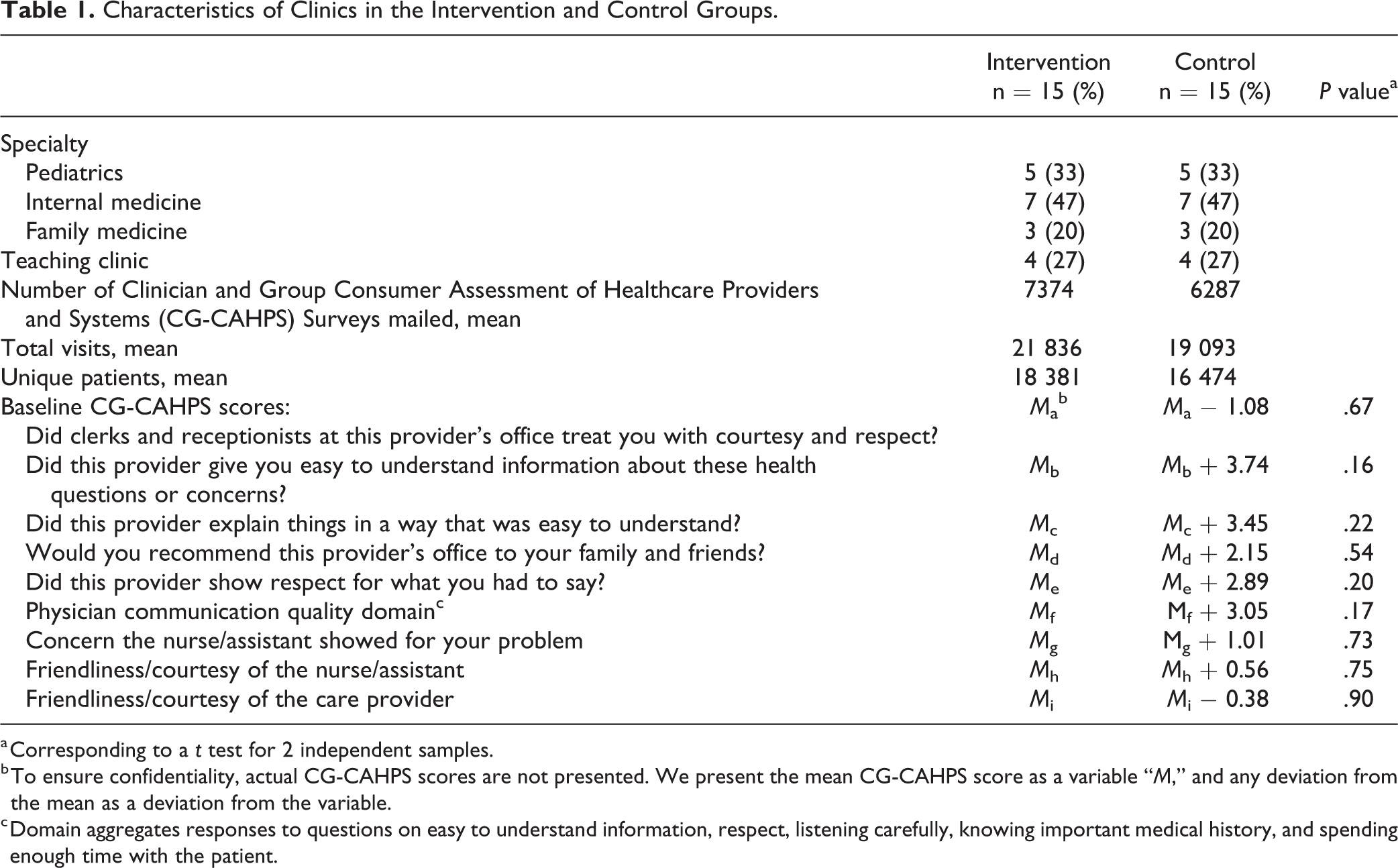

Characteristics of clinics in the intervention and control groups were compared using descriptive statistics. To ensure confidentiality, mean CG-CAHPS scores are presented as a variable (“

To test whether receiving an RTF device was correlated with increased CG-CAHPS scores, the primary analysis compared the change from baseline in CG-CAHPS top box scores between intervention and control groups for questions and domains related to (1) courtesy and respect and (2) patient communication. One year of baseline CG-CAHPS scores (May 2016-April 2017) was used for matching prior to randomization, but to ensure comparable changes, CG-CAHPS scores from June 2017 were considered the baseline month for all clinics. The following 9 CG-CAHPS questions and domains were hypothesized to relate to the questions displayed on the RTF devices: (1) “Did clerks and receptionists at this provider’s office treat you with courtesy and respect?”; (2) “Did this provider give you easy to understand information about these health questions or concerns?”; (3) “Did this provider explain things in a way that was easy to understand?”; (4) “Would you recommend this provider’s office to your family and friends?”; (5) “Did this provider show respect for what you had to say?”; the domain of (6) Physician communication quality, which aggregates responses to questions 2, 3, and 5 above as well as questions on providers listening carefully, knowing important medical history, and spending enough time with the patient; (7) “Concern nurse/assistant showed for your problem”; (8) “Friendliness/courtesy of the nurse/assistant”; and (9) “Friendliness/courtesy of the care provider.”

Change scores were computed as CG-CAHPS top box score in each month minus CG-CAHPS top box score at baseline for each CG-CAHPS question or domain and each clinic across all months and used as the outcome vector in a linear mixed effects model with random intercept for clinic and adjusted for treatment group, time as a linear effect, and a group times time interaction. Statistical contrasts tested the joint effect of the group main effect and the interaction between group and time.
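The change-score step can be sketched for a single clinic and a single CG-CAHPS question as follows. The function name, month labels, and scores are hypothetical. The resulting values would form part of the outcome vector for the mixed model, which is not fitted here.

```python
def change_scores(monthly_scores, baseline_month):
    """Return {month: score minus baseline score} for every non-baseline month."""
    baseline = monthly_scores[baseline_month]
    return {
        month: score - baseline
        for month, score in monthly_scores.items()
        if month != baseline_month
    }


# Hypothetical top box scores (percent) for one clinic and one question.
clinic_scores = {"2017-06": 80.0, "2017-07": 82.5, "2017-08": 78.0}
print(change_scores(clinic_scores, baseline_month="2017-06"))
# {'2017-07': 2.5, '2017-08': -2.0}
```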

Power was based on the probability of finding a statistically significant difference in specific CG-CAHPS change top box scores between the intervention and control arms. Assuming N(0,1) distributed errors with a clinic-specific autoregressive correlation structure to account for the likely positive correlation of CG-CAHPS scores over time reported from the same clinic (Intraclass correlation coefficient [ICC] = 0.37), we simulated scores based on a linear hierarchical model with expected baseline CG-CAHPS scores of 2.0 for both groups and containing a time × intervention effect of 0.20 (scores in the intervention group are expected to increase over time from a baseline of 2.0 to 3.0 at the end of the study period). Based on a sample size of 15 clinics per treatment arm (30 clinics total), we computed power of 88% at the α = 0.05 level of significance, adjusted for testing of our 2 primary CG-CAHPS top box scores of interest.

A secondary analysis of only clinics in the intervention group examined the correlation between CG-CAHPS top box scores with RTF device scores to understand whether data from RTF devices approximated data from CG-CAHPS, whether or not RTF devices led to improvement. Standardized CG-CAHPS top box scores for all months were used as the outcome vector in linear mixed effects models for each question or domain with random intercept for site and adjusted for time and specific standardized RTF device question scores.

Finally, as the research team was concerned that individuals responding to the RTF devices may not have been responding to the RTF device questions, descriptive statistics present data from the convenience sample survey described above.

Ethical

Use of RTF devices in all settings was voluntary and responses were anonymous. This study was approved by the Albert Einstein College of Medicine [Blinded] Institutional Review Board and the network’s leadership provided consent for all clinics to participate. No one from the HappyOrNot company participated in the study design, data analysis, manuscript writing, or decision to publish or not publish the results.

Results

All 30 clinics enrolled in the intervention and control groups completed the 9 months of the study. Characteristics for the 15 intervention and 15 control clinics are presented in Table 1. There were no significant differences in mean CG-CAHPS scores at baseline.

Characteristics of Clinics in the Intervention and Control Groups.

a Corresponding to a

b To ensure confidentiality, actual CG-CAHPS scores are not presented. We present the mean CG-CAHPS score as a variable “

c Domain aggregates responses to questions on easy to understand information, respect, listening carefully, knowing important medical history, and spending enough time with the patient.

Over the course of the 9-month study, 69 608 responses were recorded on the RTF devices. Clinics had a mean of 515 (standard deviation 353) responses per clinic per month.

For our primary outcome comparing change from baseline in CG-CAHPS top box scores between intervention and control groups for questions and domain related to (1) courtesy and respect and (2) patient communication, there were no observed intervention-related differences in CG-CAHPS scores over time (Table 2). Monthly changes in CG-CAHPS scores were observed to vary randomly and the intervention did not impact CG-CAHPS scores.

Estimated Changes in Clinician and Group Consumer Assessment of Health Care Providers and Systems Top Box Scores.a

Abbreviation: CG-CAHPS, Clinician and Group Consumer Assessment of Healthcare Providers and Systems; PG, Press Ganey.

a Based on the fixed effects estimates in the linear mixed model of changes in top box scores. Model contains fixed effects of group, time, and group × time.

For the secondary outcome, investigating whether the CG-CAHPS questions and domain analyzed were correlated with responses on the RTF devices, there was no statistically significant correlation between CG-CAHPS top box scores and RTF device scores (

Estimates of Correlation Between Clinician and Group Consumer Assessment of Health Care Providers and Systems (CG-CAHPS) Top Box Scores and Feedback Device Scores From the Same Month.a

a Based on a linear mixed effects model for standardized outcome and covariates with random intercept for within-site correlation and adjusting for fixed effects of time and specified HON main question. Results are presented as model estimate (

Finally, 106 individuals at 14 clinics were approached for the convenience sample survey and 96 patients (91%) responded. Of note, 6 clinics had 1 respondent, 1 clinic had 28 respondents (29%), and another clinic had 21 respondents (22%). Twenty-one patients (22%) were answering the specific question posed by the RTF device, 65 (68%) were not, and it was unclear if 10 individuals (10%) were answering the specific question.
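The percentages reported above follow directly from the counts in the text, as this short check shows. The counts and the number approached come from the text; the variable names and category labels are illustrative.

```python
# Counts reported in the text for the convenience sample survey.
approached = 106
counts = {
    "answering the device question": 21,
    "not answering the device question": 65,
    "unclear": 10,
}

respondents = sum(counts.values())
response_rate = round(100 * respondents / approached)
percentages = {label: round(100 * n / respondents) for label, n in counts.items()}

print(respondents, response_rate)  # 96 91
print(percentages)
# {'answering the device question': 22, 'not answering the device question': 68, 'unclear': 10}
```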

Discussion

In one of the first cluster-randomized trials investigating the effect of an RTF device on CG-CAHPS patient experience scores in a large ambulatory primary care network, clinics that received the device did not have significantly improved CG-CAHPS scores compared to control clinics. Intervention clinic CG-CAHPS question and domain scores were not correlated with RTF device responses. This discrepancy could be due to many factors including patients not reading the question on the devices or different types of patients responding to CG-CAHPS surveys compared to those responding to the devices. A convenience sample survey suggested at least 68% of individuals responding to the RTF devices were not responding to the specific question on the device, despite large font, scripts used by clinic staff to encourage appropriate responses, and questions presented in English and Spanish.

When discussing patient experience, many clinicians and health system leaders lament delays in receiving data and low response rates (4,19–21). While RTF devices may alleviate some of these concerns, it is unclear if they improve patient experience, as current RTF device literature focuses on facilitators and feasibility of successful RTF implementation, not effectiveness or accuracy (19,22–26). With approximately 17 responses per clinic per day available in real time, these devices provided increased responses and quicker data to facilitate QI.

Unfortunately, despite the increased and timely response data, there was no significant improvement in CG-CAHPS scores comparing intervention to control clinics. This finding is striking given that this primary care network met many of the facilitators cited in the literature as key to improved patient experience (18,30): leadership focus, measurement strategies, accountability, and experience with QI. Given that each site had a QI coach, monthly transformation meetings, and 3 network-wide meetings to discuss improving patient experience, it is clear that patient experience was a top priority in this network. Nevertheless, the addition of RTF devices did not significantly increase patient experience scores in this network of clinics.

This failure to reject the null hypothesis may be because most patients who utilized RTF devices were not responding to the specific questions asked on the devices. This suggests that the responses they provided may not have been valid to drive targeted improvement efforts. Wofford et al describe the feasibility of administering patient experience surveys in real time using technology-based platforms to enhance QI efforts in a primary care clinic, and Wright et al investigate using an RTF device in a practice setting (24,25). Neither study, however, assessed the validity of patient responses and comprehension of the tool. We acknowledge that it is possible that patients who complete paper or electronic CG-CAHPS surveys also may not comprehend all questions (31). The extent of patient comprehension of both RTF devices and CG-CAHPS surveys is an important area for future research in order to drive improvement in patient experience.

This study has several limitations. There were only 30 clinics in total across the intervention and control groups, which may leave this study open to type II error. Additionally, improvement on ambulatory primary care CG-CAHPS scores may require more than 9 months to be evident and/or require more than just RTF devices. The RTF devices may be an additional tool to improve patient experience scores as opposed to a solution by themselves. Similarly, other QI activities in the primary care network may have taken precedence in the minds of the clinic leadership and therefore reduced focus on patient experience improvement. The questions posed on the RTF devices were not rigorously pilot tested as are CG-CAHPS questions, and we were unable to utilize exact word-for-word CG-CAHPS questions on the RTF devices, both of which may have reduced the potential for correlation between responses on the RTF devices and CG-CAHPS domains. In addition, the convenience sample survey, which suggested most individuals were not responding to the question posed by the device, was not a validated survey and used a convenience sampling strategy, both of which may bias results. The survey was conducted to generate new change strategies for improving patient experience, and so we are limited to the data collected. Finally, all of these results were generated from an urban primary care network and may not be applicable to inpatient settings, subspecialty settings (13), or nonurban settings.

In conclusion, clinics randomized to receive RTF devices were not significantly more likely to improve CG-CAHPS scores as compared to control clinics. Additionally, responses recorded on the devices were not correlated with CG-CAHPS questions or domain, and it is unclear if individuals were responding to the question asked by the RTF device. Further research is needed to determine how to best improve patient experience scores across ambulatory care networks.

Footnotes

Authors’ Note

All procedures in this study were conducted in accordance with the Albert Einstein College of Medicine Institutional Review Board, Bronx, New York, USA (Approval Number: 2016-6657) approved protocols. This article does not contain any studies with animal subjects. Informed consent was waived by Albert Einstein College of Medicine Institutional Review Board, Bronx, New York, USA (Approval Number: 2016-6657) due to the anonymity of patient responses and lack of patient health information.

Acknowledgments

The authors gratefully acknowledge the HappyOrNot company that donated 15 real-time feedback devices for this study.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: HappyOrNot® company provided 15 real-time feedback devices for this study. No one from the HappyOrNot® company participated in the study design, data analysis, manuscript writing, or decision to publish or not publish the results.