Abstract

Management scholarship is uniquely positioned to develop relevant scientific knowledge because business and management organizations are deeply involved in most global challenges. However, critical voices in recent essays, editorials, and reports have questioned research in the management field, identifying multiple deficiencies that can limit the growth of a relatively young field. Based on an analysis of published criticisms of management research, we shed light on the current state of management research and identify limitations that should be considered and should guide the growth of this field of knowledge. This work offers guidance on the main problems of the discipline that should be addressed to encourage the transformation of management research to meet both scientific rigor and social relevance. The article ends with a discussion and a call to action for directing research toward the possibility and necessity of reinforcing “responsible research” in the management field.

Keywords

Introduction

The social sciences comprise a branch of human knowledge made up of several fields with long academic histories. Some academic disciplines, such as economics, psychology, and sociology, have their origins in the late 18th and early 19th centuries. By contrast, the management discipline emerged from a practical need for skilled business managers at the end of the 19th century (Agarwal & Hoetker, 2007), and since then the field has grown significantly (Birkinshaw et al., 2014; Honig et al., 2017). Judging by the number of academics involved and the volume of research output, management research has clearly enjoyed impressive success. However, it is worth considering whether it is growing in the correct direction and whether it is providing substantive, relevant knowledge for understanding management processes.

Emerging criticisms in the literature question the trustworthiness of research in the management field (Bedeian et al., 2010; Davis, 2015; Honig et al., 2017, 2018; Tsui, 2016). The knowledge generated by management academics is often criticized for its limited relevance to practitioners (Bansal et al., 2012; Bartunek & Rynes, 2014; Bullinger et al., 2015; Kieser & Leiner, 2012). As Davis (2015) argues, it is necessary to reflect on why, and for what purpose, scientific knowledge is generated in the management field. Management research should always aim to understand and explain the empirical puzzles of the business world and organizations; relevance to practice is therefore central to management research. However, management academics often focus on issues that are irrelevant to professionals, and most research results are neither documented in terms of their applications nor accessible to practitioners. Over the years, this problem has generated a sizable body of literature on bridging the relevance gap (Bansal et al., 2012; Bartunek & Rynes, 2014; Bullinger et al., 2015; De Frutos-Belizón et al., 2019; Kieser & Leiner, 2012). Nevertheless, after several decades of debate, and despite important efforts to bridge the research–practice gap, no solution seems to have been found, and the discussion continues. Likewise, according to the evidence reported in several papers, the management field shows important deficiencies in its compliance with the scientific standards of falsifiability, replicability, and data transparency, all of which are essential to generating scientific knowledge (Barley, 2016; Bedeian et al., 2010; Bettis et al., 2016; Goldfarb & King, 2016; Honig et al., 2017; Lewin et al., 2016; Starbuck, 2016; Tsui, 2016).
Recently, critical voices in essays, editorials, and position papers have questioned research in the management field, criticizing it for pursuing novelty over truth, lacking a connection with management practice, and relying on poor methodological practices, while exposing the vulnerability of its scientific claims (Barley, 2016; Bettis, Ethiraj, et al., 2016; Davis, 2015; Honig et al., 2018; Lewin et al., 2016; Starbuck, 2016; Tsui, 2016).

However, the relevance of management research extends beyond the managerial profession, as it also has social implications. Indeed, management scholars have a great opportunity to use their research to steer business toward practices that support a prosperous and sustainable socioeconomic context. As Barley (2010) suggests, management academics should adopt a more pragmatic and reflective stance and consider how organizations and their management are transforming our society. Contemporary societies rely on organizations as their primary social actors, and profit-making organizations are the most influential of all, in many cases surpassing governments. At other points in history, and in certain contexts, other organizations, such as the church, have played this dominant role, but Western economies can currently be described as firm-driven systems. This development has greatly increased the social relevance of management research and the social responsibility of academics in this field. Aware of this, even some of the pioneers of management came to regard the discipline as a science-based professional activity with the opportunity to contribute to the “greater good” (Drucker, 1974; Simon, 1967; Taylor, 1914). Surprisingly, however, this social commitment of the discipline has traditionally been overlooked. J. P. Walsh et al. (2003) analyzed 1,738 works published in the Academy of Management Journal from 1958 to 2000 and concluded that “scholarship in our field has pursued society’s economic objectives much more than it has its social ones” (J. P. Walsh et al., 2003, p. 859). They found that over 70% of the articles focused on performance-oriented outcomes, while the remainder had a social orientation.
Tsui and Jia (2013) replicated this study for papers drawing on Chinese samples published between 1981 and 2012. Their findings suggested that although China follows a more socialist doctrine, its economy operates as an ultra-capitalist system that does not give equal weight to the social and economic outcomes of firms: over 80% of the works in English-language journals and over 90% in Chinese-language journals were clearly oriented toward performance or economic outcomes.

In response to these problems, a new vision has recently emerged that calls for transforming management research into a more responsible science (Community for Responsible Research in Business and Management [CRRBM], 2017, revised 2020; Tsui, 2013, 2016). This view assumes that the central problems of the management field are connected, given that the relevance of research is debatable when its quality is in doubt. To inspire change, the CRRBM (2017) proposes a set of principles to guide management research toward a solid body of knowledge (epistemic values) with social implications (social values). Because research is evaluated primarily by publication in top journals, the social implications of the knowledge generated are frequently underestimated. Currently, academic careers reward scientific publication over other research outputs. Since research quality is difficult to judge, evaluation systems increasingly rely on “impact” measures that are easy to compute. As a result, the academic reward system has made publication in a top journal a high priority for most researchers’ career development, propagating the so-called publish-or-perish culture (Barley, 2016; Lewin et al., 2016; Starbuck, 2016).

Therefore, in view of the relevance and reliability problems facing this field, this article invites discussion and debate on the need to substantively rethink management research from a responsible science perspective. However, the criticisms in the literature come mostly from opinion statements and lack apparent connection to one another. Because these published criticisms do not form a structured field, we review the literature to provide a complete picture of the main concerns about management research that could hinder the development of a responsible science. This work makes two main contributions. First, (a) based on an analysis of published criticisms of management research, we shed further light on previous discussions about the state of management research by identifying shortcomings that challenge the growth of a relatively young field. To our knowledge, this distinguishes the article from previous studies, since it offers guidance on the main problems of the discipline that should be addressed to encourage the transformation of management research to meet both scientific rigor and social relevance. Second, (b) drawing on the ideals of socially responsible science, we encourage the transformation of management research by considering both epistemic and social values, recognizing the mutual dependency between science and society, and fulfilling the criteria of rigor and relevance.

The article is structured as follows. First, we develop a background section summarizing the main problems raised in past syntheses. Next, we explain the methodology used to review the literature: the criteria for selecting articles, coding, and content analysis. After a rigorous selection process, 70 papers were retained as primary sources in our data sample. In the following section, we describe the main flaws that need to be addressed in the management field and offer guidance on possible solutions. Finally, the article concludes with a discussion and a call to action for directing research toward the possibility and necessity of reinforcing “responsible research” in the management field.

The crisis of the management field

The management discipline emerged from a practical need for skilled business managers at the end of the 19th century (Agarwal & Hoetker, 2007), and since then, the field has grown significantly (Birkinshaw et al., 2014; Honig et al., 2017). In the years after the Second World War, mainly in the United States, the number and quality of business schools increased substantially, and their academic programs contributed to the rigor of the discipline. However, the systematic, empirical research that supports the management discipline is frequently considered to have emerged in the mid-1950s (Agarwal & Hoetker, 2007). The management field is generally considered to have gained legitimacy as a scientific discipline when business schools adopted the natural sciences model in response to the challenges raised in two seminal reports published in 1959 (Gordon & Howell, 1959; Lewin et al., 2016; Pierson, 1959). The first, published by the Ford Foundation and written by Robert Aaron Gordon and James Edwin Howell (1959), was titled “Higher Education for Business.” The second, issued by the Carnegie Foundation and written by Frank C. Pierson, was titled “The Education of American Businessmen: A Study of University-College Programs in Business Administration” (Pierson, 1959). Both reports criticized business schools for the impracticality of their outputs and their lack of scientific content. Specifically, Gordon and Howell (1959) assessed management research as follows: “the business literature is not, in general, characterized by challenging hypotheses, well-developed conceptual frameworks, the use of sophisticated research techniques, penetrating analysis, the use of evidence drawn from relevant underlying disciplines [. . .], or significant conclusions” (1959, p. 379).
At that time, business school education was largely characterized by a trade school orientation without deep scholarly content. Both reports criticized the lack of scientific foundations in business schools and called for greater attention to scientific rigor and for moving business research into the realm of the social sciences (Gordon & Howell, 1959; Pierson, 1959). These critical reports triggered a “scientification” of the management field, and scholars developed more rigorous research based on sophisticated empirical analyses. This scientification process assumed that management research must adopt the positivist model of the natural sciences. The two reports also encouraged business schools to give faculty more time for research and to draw on other academic disciplines that could contribute to the growth of the discipline (Porter & McKibbin, 1988). According to these reports, establishing links with neighboring disciplines would be a way to gain greater legitimacy and a significant resource base, given the limited stock of management knowledge and research in the early years (Gordon & Howell, 1959; Pierson, 1959). Because the management field lacked maturity as a discipline, it is understandable that its growth and evolutionary path were significantly influenced by ideas from other fields such as economics, sociology, psychology, anthropology, and mathematics (Birkinshaw et al., 2014). As Porter and McKibbin (1988) noted, over the 25 years following these reports, the “research agenda for most schools looked rather different than it had in the 1950s, both in amount and character” (Porter & McKibbin, 1988, p. 166). Since the 1960s, the discipline has grown considerably, with a remarkable increase in the number of publications in management (Honig et al., 2017; Porter & McKibbin, 1988; Van Baalen & Karsten, 2012).
However, it is worth considering whether it is growing in the correct direction and whether it is providing substantive relevant knowledge to understand management processes.

Recently, several critical works have questioned research in the management field, criticizing it for pursuing novelty over truth, lacking a connection with management practice, relying on poor methodological practices, and making vulnerable scientific claims (Barley, 2016; Bettis et al., 2016; Davis, 2015; Honig et al., 2017, 2018; Lewin et al., 2016; Starbuck, 2016; Tsui, 2016). Currently, academic careers reward publication over other research outputs. Since research quality is difficult to judge, evaluation systems rely on “impact” measures that are easy to compute. Hence, as in many other academic disciplines, management research is driven by the standards established by journals and academic reward systems. As a result, academics seek novelty and impact in their research outputs to publish more easily and advance their careers (Davis, 2015). As several works argue, this tendency is intensified and reinforced by the way PhD students are trained, the criteria journal reviewers and editors use to select manuscripts, and the reward policies that determine positions and promotions (Barley, 2016; Lewin et al., 2016; Starbuck, 2016). As Honig et al. (2018) note in a dialogue with editors, the academic reward system has made publication in a top journal a high priority for most researchers’ career development, entrenching the so-called publish-or-perish culture. Similarly, a few years earlier, Birkinshaw et al. (2014), in an open discussion on the state of the management field and its future prospects, expressed concern about the field’s distance from practice and the generation of “fetishist” theory, regardless of its instrumental value for the professional world and society.
Likewise, as Davis (2015) argues, it is necessary to reflect on why, and for what purpose, scientific knowledge is generated in the management field. Management research should always aim to understand and explain the empirical puzzles of the business world and organizations; relevance to practice is therefore central to management research (Bansal et al., 2012; Bartunek & Rynes, 2014). However, management academics often focus on issues that are irrelevant to professionals, and most research results are neither documented in terms of their applications nor accessible to practitioners (Bansal et al., 2012; Bullinger et al., 2015; Kelemen & Bansal, 2002). In fact, a considerable body of work on bridging the relevance gap in the management field has accumulated over the years, and this gap remains an ongoing concern for many management scholars (Bansal et al., 2012; Bartunek & Rynes, 2014; Bullinger et al., 2015; Cohen, 2007; Kieser & Leiner, 2012). As some more reflective works propose, the problem originates in the earlier adoption of a positivist paradigm modeled on the natural sciences, which led researchers to prioritize rigor over relevance (De Frutos-Belizón et al., 2019; Lewin et al., 2016; I. Walsh et al., 2015). According to these works, a more pragmatic and interpretive reconsideration of the paradigm underlying management science is therefore needed (De Frutos-Belizón et al., 2019), for example, by giving more weight to interpretive research approaches such as grounded theory (I. Walsh et al., 2015).

Paradoxically, however, this past emphasis on empiricism and positivism as a route to disciplinary legitimacy has not prevented multiple criticisms of poor methodological practices in the management field (Bettis, Ethiraj, et al., 2016; Sartal et al., 2021). According to the evidence reported in several papers, the field shows important deficiencies in its compliance with the scientific standards of falsifiability, replicability, and data transparency, which are essential to generating scientific knowledge (Barley, 2016; Bedeian et al., 2010; Bettis, Ethiraj, et al., 2016; Goldfarb & King, 2016; Honig et al., 2017; Lewin et al., 2016; Sartal et al., 2021; Starbuck, 2016; Tsui, 2016). All this comes little more than six decades after the publication of the Ford Foundation and Carnegie Foundation reports. For example, Bedeian et al. (2010) surveyed management professors about their perceptions of research in the discipline, and the results confirmed that they harbor skepticism about the reliability of published research. With a similar objective, Goldfarb and King (2016) assessed a sample of 300 studies published in top management outlets and estimated that 24%–40% of the reported results would probably not be confirmed if the studies were repeated. Several works have expressed concern about methodological problems, prompting debates and offering recommendations to editors and journals to change this situation. However, this literature remains varied and addresses issues of different kinds. For example, some studies have warned about misinterpretations of p-values, the formulation of hypotheses after the results are known, and the selective choice of variables in a model (Aguinis et al., 2017; Bedeian et al., 2010; Bettis, Ethiraj, et al., 2016; Orlitzky, 2012; Starbuck, 2016). Others, such as Aguinis et al. (2013), criticized the erroneous methodological treatment of outliers in management research. Cho and Kim (2015) refuted some of the erroneous properties attributed to the alpha coefficient, criticizing its misinterpretation and its extensive use as an indicator of absolute reliability in the management field. In addition, works such as Roloff and Zyphur (2019) and Sartal et al. (2021) argue that replicability problems also often stem from insufficient information about the methodology used, identifying lack of transparency as one of the fundamental problems. Underlying many of these practices is a publication incentive: as Aguinis et al. (2017) argue, a manuscript is more likely to be considered for publication if it contains significant results or supported hypotheses.
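To illustrate why the selective choice of variables and post hoc hypotheses undermine p-values, consider a researcher who tests k independent candidate variables, none of which has a real effect, and reports whichever reaches p < α. The following Python sketch is our own illustration, not an analysis from the reviewed studies; the function names are hypothetical. It computes the chance of at least one spurious "significant" finding, 1 - (1 - α)^k, and checks it by simulation, relying on the standard fact that p-values are uniformly distributed when the null hypothesis is true.

```python
import random


def familywise_error(alpha: float, k: int) -> float:
    """Probability that at least one of k independent tests has p < alpha
    when every null hypothesis is true."""
    return 1 - (1 - alpha) ** k


def simulate(alpha: float, k: int, trials: int, seed: int = 0) -> float:
    """Monte Carlo check: under a true null, each p-value is Uniform(0, 1),
    so a 'study' finds an effect if its smallest p-value falls below alpha."""
    rng = random.Random(seed)
    hits = sum(
        min(rng.random() for _ in range(k)) < alpha
        for _ in range(trials)
    )
    return hits / trials


# Twenty candidate variables, none with a true effect, tested at alpha = .05.
print(round(familywise_error(0.05, 20), 3))  # 0.642
print(simulate(0.05, 20, trials=20_000))     # close to 0.64
```

Under these assumptions, roughly two out of three such "studies" would report at least one supported hypothesis, even though every effect is null.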

Recently, building on these criticisms, the Community for Responsible Research in Business and Management (CRRBM) published an important position paper that inspires, encourages, and supports credible and useful research in the business and management disciplines. The document proposes seven principles to support the development of responsible research. These principles appear capable of guiding a substantive rethinking of our field toward a responsible science that generates useful knowledge for the business world and society. However, compliance with these principles can be threatened by various problems that dominate the management field.

Davis (2015) offered an interesting critical metaphor comparing the architecture of management research with the famous Winchester Mystery House (San José, California). According to legend, the widow of the heir to the Winchester rifle company believed she would be pursued by the ghosts of those killed by Winchester rifles unless she built a mansion to house their spirits. The Winchester Mystery House therefore had no master building plan; its construction was its sole purpose. Davis (2015) wonders whether, after several decades of building the management knowledge field, the discipline has produced an expensive ornamental building with no practical purpose, like the Winchester Mystery House. According to the latest Web of Science records (2019), over 9,000 works are published every year in more than 226 journals in the management field, and the number grows every year. We should therefore ask ourselves whether we are clear about where our construction is heading, and identify the burdens that could turn the field into such a mystery house, where construction becomes an end in itself.

As we have seen, critical evidence in the literature has identified various deficiencies in management research. However, these criticisms form an unstructured body of work: they come from various reflections and position papers and lack apparent connection. Therefore, in the following section, we review the literature to provide a complete background of the main concerns about management research that could hinder the growth of this field of knowledge.

Method

As indicated, the objective of the literature review was to identify, from the published criticisms of management research, the main concerns that could hinder the development of a responsible science. Specifically, through this systematic review, we aim to consolidate conceptually a fragmented body of criticisms of research in the management field. Systematic reviews add rigor to the review process and its results by following transparent and reproducible procedures (Sartal et al., 2021; Tranfield et al., 2003). Following Tranfield et al. (2003), we used systematic data search and analysis procedures organized in three stages: data collection, data coding, and data analysis.

Step 1: data collection

At this stage, we planned the literature review and defined the scope and range of the literature under analysis (Tranfield et al., 2003). To identify the relevant publications, we searched two international electronic databases, “ISI Web of Science (WoS; Thomson Reuters)” and “ABI / INFORM Complete.” Given the impact of the research they index, these databases provide what some authors call “certified knowledge” (Ramos-Rodríguez & Ruíz-Navarro, 2004). These data sources are reliable and commonly used in bibliometric studies in the management field (Thunnissen & Gallardo-Gallardo, 2019), since they contain sufficiently broad information for any type of study. The objective of the search was to identify peer-reviewed published criticisms of management research in multiple formats, such as regular papers, position papers, editorials, or opinion statements.

Five criteria guided our article selection process. (a) We restricted the time frame of our search to 2009–2020, inclusive. To capture the most recent relevant concerns in our field, we restricted sources to those published from 2009 onward, as we considered this an appropriate period to analyze the criticisms that remain latent in the field. In addition, most of the relevant work criticizing management research emerged only in this period, which saw a boom in works reflecting on the development of research and its limitations. Nevertheless, the reference lists of all articles initially included in the review were examined using the snowball method, which allowed us to include additional relevant papers. (b) We excluded publications that were not peer reviewed, lacked available authorship, or had content unrelated to research in the field of management. (c) We excluded conference articles, books, book chapters, theses, and monographs. (d) To ensure the scientific quality of the primary sources in our data sample, we selected papers from journals indexed in the Journal Citation Reports (JCR) and the SCOPUS database with at least Q2 in the SCImago Journal Rank (SJR). (e) Finally, because international academic debate is conducted predominantly in English, only papers published in English were considered.
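The formal criteria (a)–(e) can be read as a single screening predicate that a candidate record must pass in full. The Python sketch below expresses them that way. It assumes a hypothetical `Record` structure whose field names are ours, not the authors’ spreadsheet columns, and it covers only the mechanical checks; topical relevance was judged manually by the reviewers and appears here only as a pre-set flag.

```python
from dataclasses import dataclass, replace


# Hypothetical screening record; field names are illustrative only.
@dataclass
class Record:
    year: int
    doc_type: str             # e.g. "article", "editorial", "book chapter"
    peer_reviewed: bool
    has_authors: bool
    on_management_research: bool  # set by a human reviewer, not computed
    in_jcr_and_scopus: bool
    sjr_quartile: str         # "Q1" to "Q4"
    language: str


EXCLUDED_TYPES = {"conference paper", "book", "book chapter", "thesis", "monograph"}
ACCEPTED_QUARTILES = {"Q1", "Q2"}  # criterion (d): at least Q2 in the SJR


def meets_criteria(r: Record) -> bool:
    """Return True only if the record passes every criterion (a)-(e)."""
    return (
        2009 <= r.year <= 2020                            # (a) time frame, inclusive
        and r.peer_reviewed and r.has_authors             # (b) peer review, authorship
        and r.on_management_research                      # (b) topical relevance
        and r.doc_type.lower() not in EXCLUDED_TYPES      # (c) document type
        and r.in_jcr_and_scopus                           # (d) indexing
        and r.sjr_quartile.upper() in ACCEPTED_QUARTILES  # (d) quartile
        and r.language.lower() == "english"               # (e) language
    )


ok = Record(2016, "article", True, True, True, True, "Q1", "English")
print(meets_criteria(ok))                          # True
print(meets_criteria(replace(ok, year=2008)))      # False: outside (a)
print(meets_criteria(replace(ok, sjr_quartile="Q3")))  # False: fails (d)
```

Writing the criteria as one conjunction makes each exclusion traceable to a specific letter, which is one way to keep the screening transparent and reproducible.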

During the search process, the search terms included keyword combinations with Boolean operators (“and,” “or”), for example, “research–practice gap,” “publication practices ‘replication,’” “management research/field ‘rigor–relevance gap,’” and “science–practice gap ‘ethical problems.’” The different search string combinations yielded 169 valid results in WoS and 223 valid results in “ABI / INFORM Complete.” Because the results of the two databases overlapped considerably, duplicates were eliminated. After this first filter, 123 preliminary results were considered relevant for our review.
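Merging the exports of two databases and removing their overlap is the mechanical part of this first filter. The Python sketch below shows one common way to do it, under stated assumptions: each exported record is a dictionary with a `title` and an optional `doi`, and two records are treated as duplicates if they share a DOI or a normalized title. The record format and function names are hypothetical, and the sketch does not reproduce the relevance judgment that produced the 123 preliminary results.

```python
import re


def normalize_title(title: str) -> str:
    """Lowercase and collapse punctuation/whitespace so that formatting
    differences between databases do not block a match."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def merge_unique(*sources):
    """Keep the first occurrence of each record across all sources.

    A record counts as a duplicate if its DOI or its normalized title has
    already been seen, so a record missing a DOI in one database can still
    match its counterpart in the other.
    """
    kept, seen_dois, seen_titles = [], set(), set()
    for source in sources:
        for rec in source:
            doi = (rec.get("doi") or "").strip().lower()
            title = normalize_title(rec["title"])
            if (doi and doi in seen_dois) or title in seen_titles:
                continue  # duplicate of a record already kept
            kept.append(rec)
            if doi:
                seen_dois.add(doi)
            seen_titles.add(title)
    return kept


# Invented example records, not actual search results.
wos = [{"title": "Rigor and Relevance", "doi": "10.1/abc"},
       {"title": "Another Paper", "doi": ""}]
abi = [{"title": "Rigor and relevance.", "doi": None},
       {"title": "Another paper", "doi": "10.1/xyz"},
       {"title": "Publish or Perish", "doi": "10.1/pp"}]
print(len(merge_unique(wos, abi)))  # 3
```

Matching on titles can occasionally merge distinct items with generic titles (for example, untitled editorials), so borderline cases still benefit from the manual check described next.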

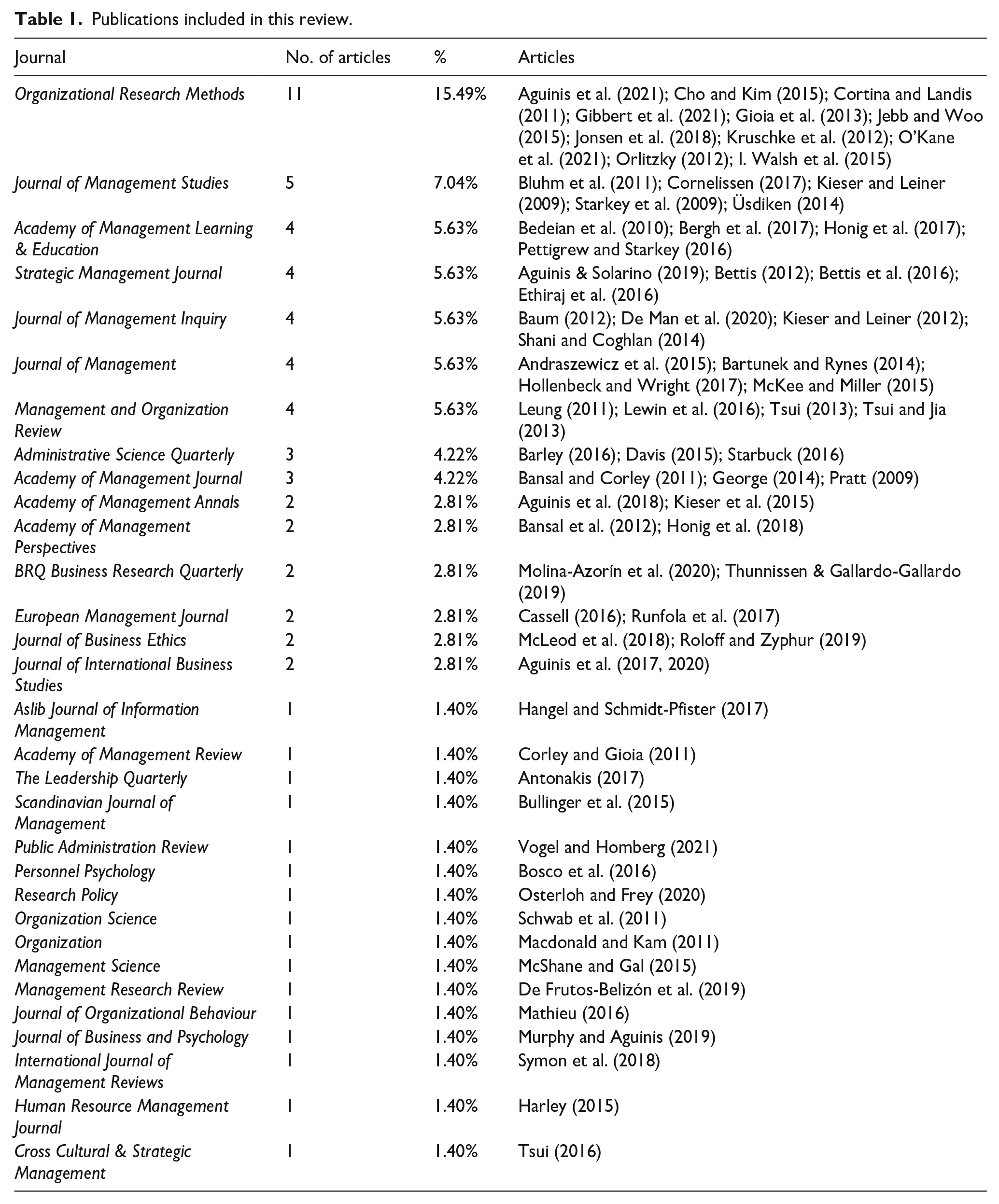

Next, we obtained the full-text articles through the databases or other open-access channels (ResearchGate or Google Scholar). We performed a second, manual filtering of the results to determine qualitatively which of the publications identified in the search met the criteria and could therefore be included in the literature review. To mitigate potential bias, the evaluation was based on the independent judgment of two members of the research team, who reviewed the titles and abstracts or read the full texts when necessary. This second refinement led to further exclusions for additional reasons. For example, some papers initially classified as scientific publications turned out to be conference papers or publications in non-indexed journals. Some editorials were also excluded because, although they initially met the inclusion criteria, they were introductions to a special issue and did not address any specific topic in depth. Likewise, some full texts were unobtainable and were therefore excluded. After this selection process, 70 papers comprised the primary sources for our data sample (Table 1). Because two authors were involved in this process, we computed Cohen’s Kappa to assess the agreement between their decisions. Cohen’s Kappa is an index of interrater agreement between two raters. Unlike a simple percentage of agreement, it corrects for the agreement expected by chance (Cohen, 1960) and is therefore considered a robust measure. A value higher than .40 normally indicates satisfactory interrater agreement (Fleiss, 1981); in this first step, a value greater than .80 was found for all articles evaluated by the two authors. These Cohen’s Kappa values were also statistically significant at an alpha level of .001 or .01.
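To make the chance correction concrete, Cohen’s Kappa is defined as κ = (p_o - p_e) / (1 - p_e), where p_o is the observed proportion of agreement and p_e is the agreement expected by chance, computed from each rater’s marginal proportions. The Python sketch below implements this definition. The include/exclude decisions are invented for illustration and are not taken from our screening data, and the function name is our own.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's Kappa for two raters who labeled the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same, non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same label by both raters.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions, summed
    # over every category either rater used.
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    categories = set(count_a) | set(count_b)
    p_e = sum((count_a[c] / n) * (count_b[c] / n) for c in categories)
    if p_e == 1:
        raise ValueError("kappa is undefined when both raters use one identical category")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical include/exclude decisions for ten screened articles.
first_rater  = ["in", "in", "in", "out", "out", "in", "out", "in", "in", "out"]
second_rater = ["in", "in", "out", "out", "out", "in", "out", "in", "in", "in"]
print(round(cohens_kappa(first_rater, second_rater), 3))  # 0.583
```

In this example, the raters agree on 8 of 10 articles (80%), yet κ ≈ .58, because their identical 60/40 include/exclude splits would already produce 52% agreement by chance. This gap is why Kappa, rather than raw agreement, is reported. The significance tests mentioned above additionally require a standard error for κ, which this sketch does not compute.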

Table 1. Publications included in this review.

This final sample of 70 articles was organized in an Excel spreadsheet containing descriptive information for each article (author, year of publication, title, journal, number, volume, keywords, and abstract) (Appendix 1). These descriptive data were checked for transcription errors to ensure the accuracy and consistency of the information.

Step 2: information coding

The coding stage makes it possible to classify and divide large amounts of accumulated data into smaller, coherent units whose relationships can later be analyzed. Once a set of articles had been selected as primary sources, we developed a coding scheme and analysis method according to the objective of our study (Potter & Levine-Donnerstein, 1999; Sartal et al., 2021; Zupic & Čater, 2015). As previously noted, the objective of the literature review is to identify, in a particularly fragmented field, the main concerns about management research that could hinder the development of a responsible science. Accordingly, we reviewed the content of the articles in our data sample. Specifically, we performed a content analysis of the titles and author-provided keywords, the abstracts, and even the full texts. This analysis made it possible to discover links between subject areas, trace their development, and establish a coded structure for the emerging literature on limitations in management research. The content analysis was carried out through exhaustive, independent parallel coding by two members of the research team, which allowed us to verify the reliability of the analysis. Specifically, we created coding categories and subcategories to structure the literature around, for example, the limitations analyzed, methodological concerns, more general criticisms, and proposals for improving research in the management field.

The coding process involved three stages. In the first stage, two authors independently coded a few randomly selected articles and then compared and discussed the proposed categories and subcategories. This step helped establish specific rules and definitions for the coding categories, which made the procedure uniform. In the second stage, we randomly divided the remaining manuscripts between two members of the research team, so that each could code the assigned articles according to the established coding guidelines and list of codes. Finally, in the third stage, we discussed the shared codings and the disagreements that arose in specific categories. This detailed and rigorous process enabled us to reduce possible bias in the qualitative interpretation of each manuscript, which we verified by confirming that agreement between the coders fell within a satisfactory range of Cohen’s Kappa values. For example, in the second step, two authors were involved in the coding process, and for the 70 articles evaluated, we obtained Cohen’s Kappa values greater than .50 that were statistically significant at an alpha level of either .001 or .01. Furthermore, values greater than .80 were obtained for most articles, indicating very good interrater agreement in the coding process. Only five articles had moderate Cohen’s Kappa values between .50 and .80, and the disagreements were easily discussed and resolved by the coders.

Step 3: analysis and dissemination of information

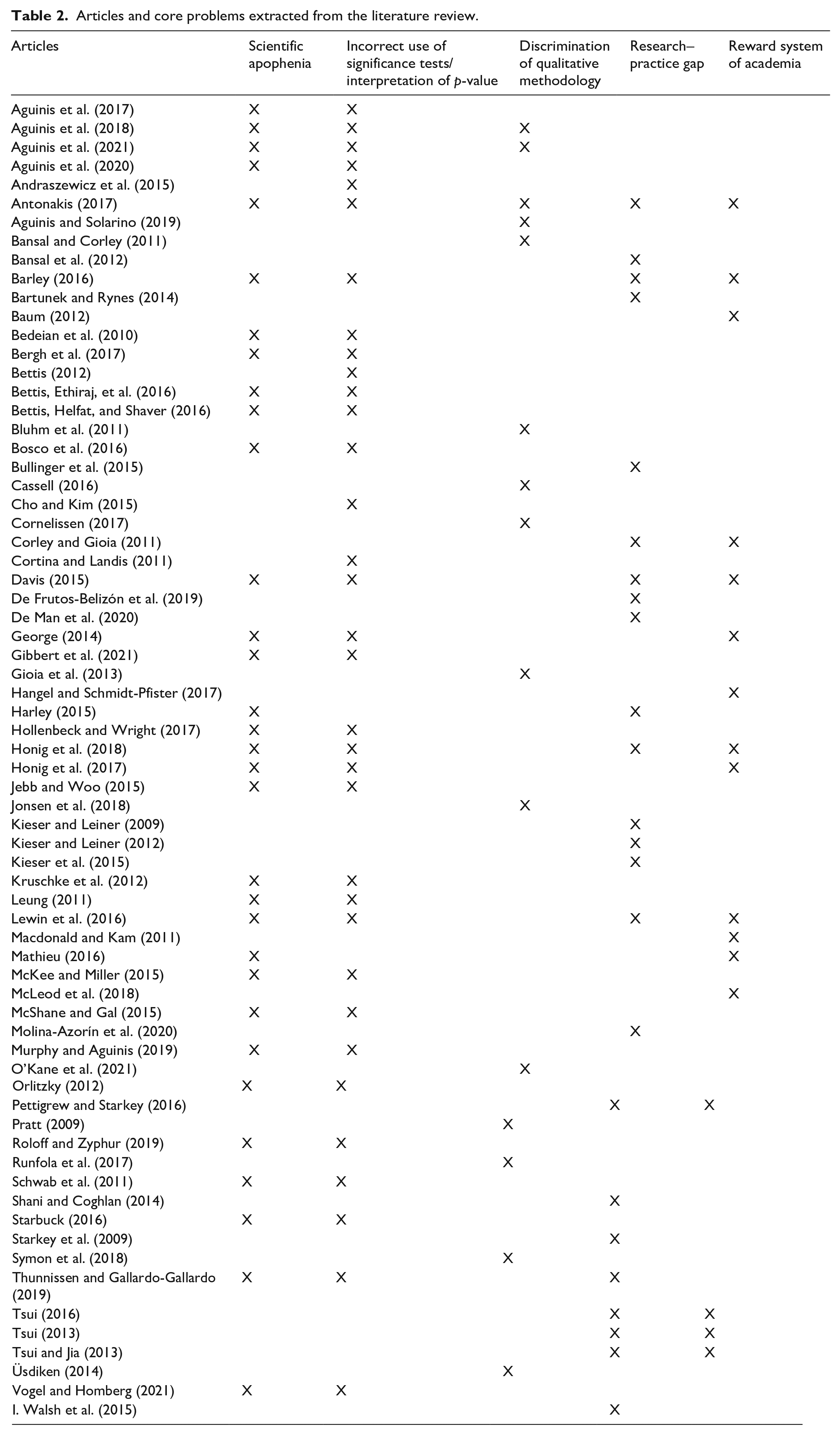

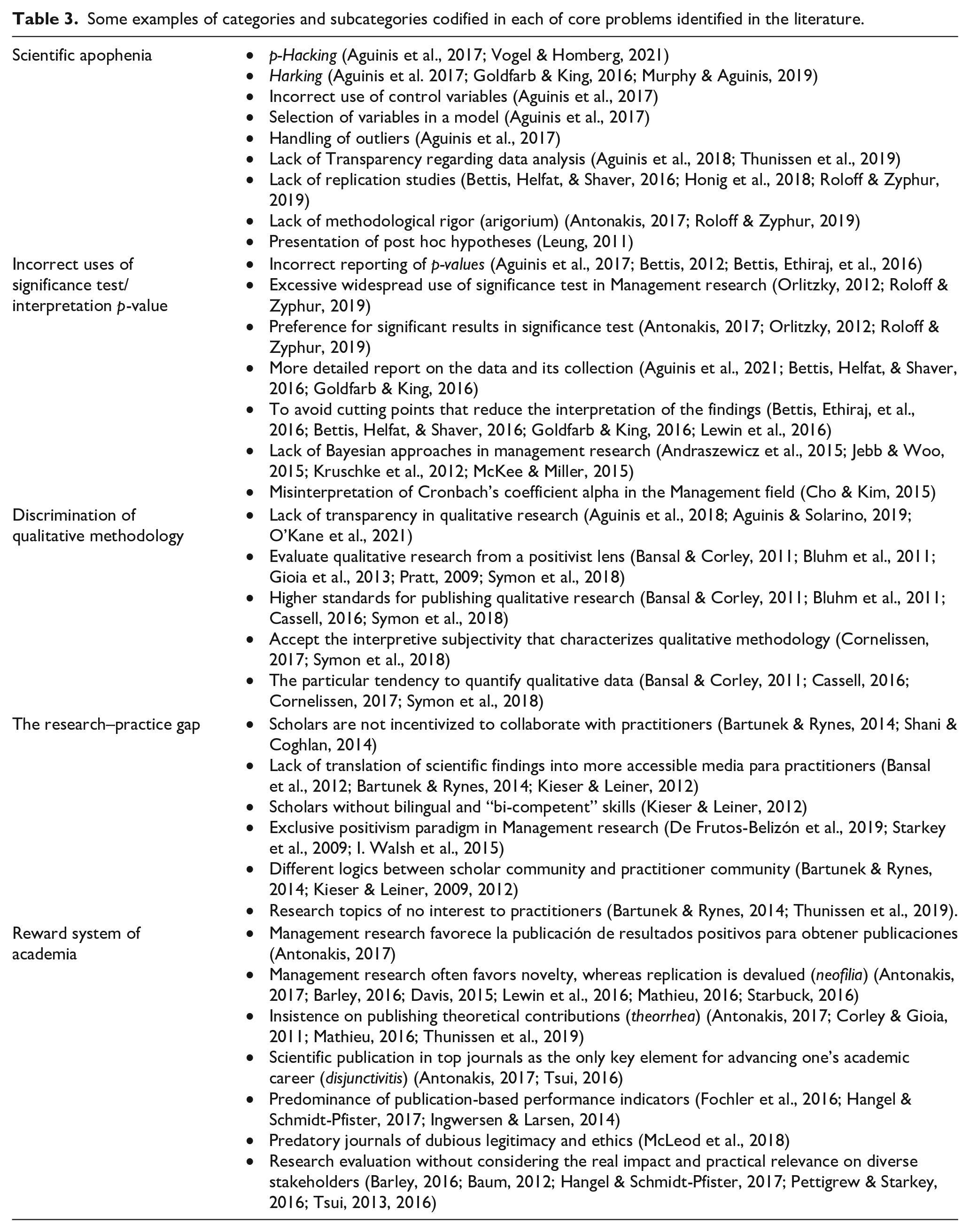

Once the content of the manuscripts was coded, the main findings of the literature review were analyzed (Tranfield et al., 2003). The content data collected were analyzed in a separate Excel file, in which all the information on each article, both descriptive data and specific coded content, was gathered according to the coding template. In a second Excel analysis sheet, we used tables to structure this information in terms of the frequency of each category and subcategory of coded content, together with the qualitative interpretation of the full text. The research team’s final analysis of these tables allowed us to identify the types of criticism raised in the recent literature about research in the management field. Specifically, we grouped the problems identified in the literature and coded them around five core concerns (Table 2). For example, within one of these core issues, scientific apophenia, we grouped related categories and subcategories of problems, such as the handling of outliers or the incorrect use of control variables (Table 3). These five critical areas are addressed in the following section.
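As an illustration of the frequency tables described above, the following sketch tallies a coding sheet represented as rows of (article, core problem, subcategory). The row layout, article identifiers, and labels are hypothetical, and the sketch assumes the sheet has already been loaded into Python; it is not a reproduction of our Excel analysis sheets.

```python
from collections import Counter

# Hypothetical coding sheet: one row per coded segment (article, core problem, subcategory).
coded_rows = [
    ("A01", "scientific apophenia", "handling of outliers"),
    ("A01", "scientific apophenia", "control variables"),
    ("A02", "p-value misuse", "dichotomous thresholds"),
    ("A03", "research-practice gap", "practitioner access"),
    ("A03", "scientific apophenia", "handling of outliers"),
]


def category_frequencies(rows):
    """Count how many distinct articles raise each core problem."""
    articles_per_category = {}
    for article, category, _subcategory in rows:
        articles_per_category.setdefault(category, set()).add(article)
    return Counter({cat: len(arts) for cat, arts in articles_per_category.items()})


def subcategory_frequencies(rows):
    """Count coded segments per (core problem, subcategory) pair."""
    return Counter((category, sub) for _article, category, sub in rows)


for category, count in category_frequencies(coded_rows).most_common():
    print(f"{category}: {count} article(s)")
```

Counting distinct articles per core problem, rather than raw segments, keeps one heavily coded article from dominating the table; the subcategory counts give the finer-grained view.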

Articles and core problems extracted from the literature review.

Some examples of categories and subcategories coded in each of the core problems identified in the literature.

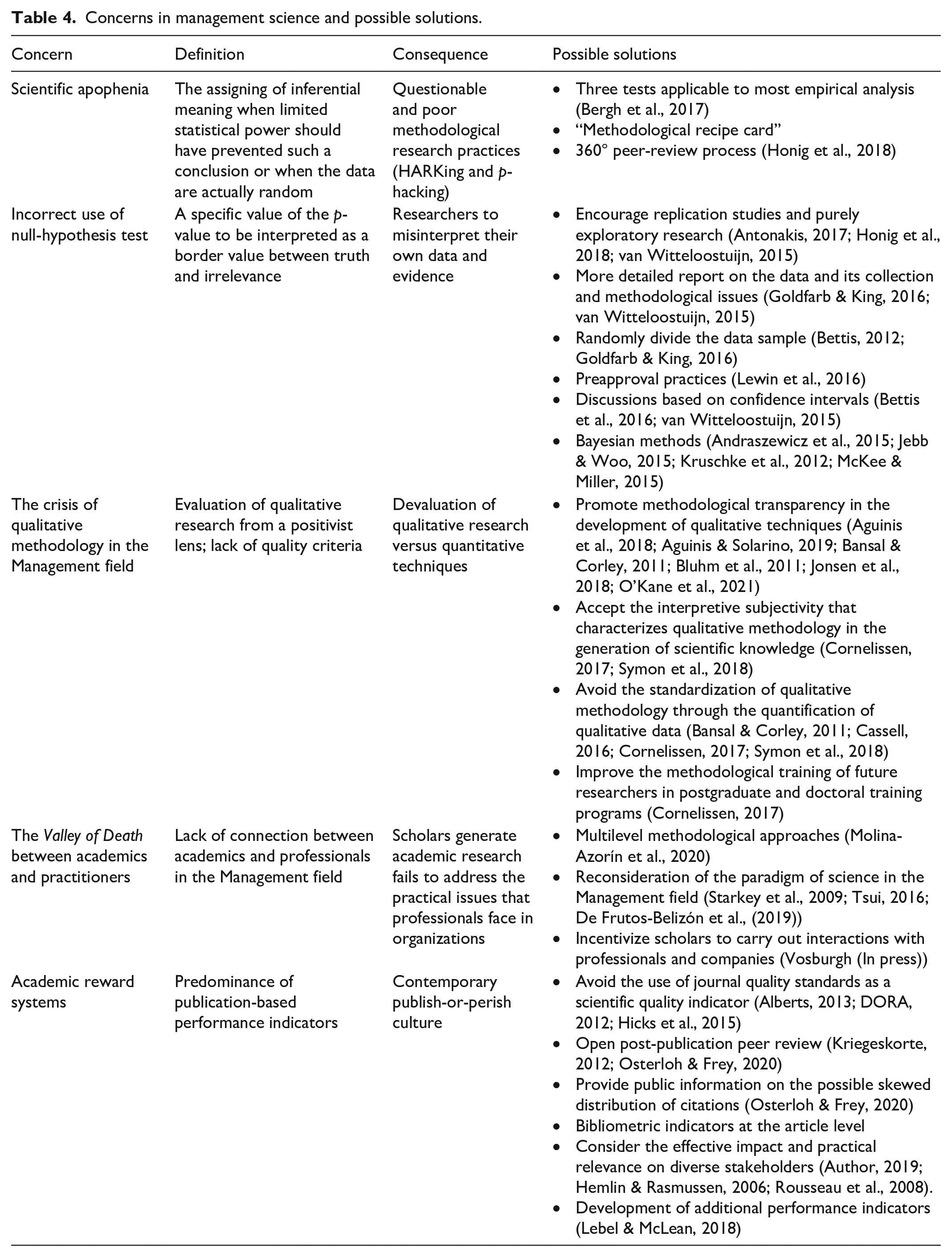

Results: the main flaws of management research

In this section, we describe five basic problems in management research identified through the systematic review of the literature. The first three are methodological in nature: (a) scientific apophenia, (b) the incorrect use of significance tests and the interpretation of p-values, and (c) the “discrimination” of qualitative methodology. The other two are more general problems of the discipline: (d) the research–practice gap and (e) the reward system of academia. These limitations were compiled to synthesize prior findings in the literature and to identify the main causes that may hinder the development of responsible science in the management discipline and the growth of this field of knowledge.

Scientific apophenia in the field of management

Our review of the literature on criticism in the field of management identified the quality of research as one of the main problems of the discipline, and even as a threat to the scientific integrity of the field. Clearly, questionable research practices in the management field jeopardize the replicability of findings, the falsifiability of theory, and the reproducibility of the scientific knowledge generated. This, in turn, indirectly undermines the credibility and impact of research among stakeholders and, ultimately, society. However, this is not a recent issue, as the quality of research in the management field has been under attack for more than two decades (Hambrick, 1994). Recent critical voices agree that management research often falls short of the necessary scientific standards of falsifiability, replicability, and data transparency (Barley, 2016; Bettis, Ethiraj, et al., 2016; Cho & Kim, 2015; Davis, 2015; Gibbert et al., 2021; Honig et al., 2017; Lewin et al., 2016; Starbuck, 2016; Tsui, 2016; Vogel & Homberg, 2021). Similarly, recent works have identified a large number of non-replicable findings, including some that have received numerous citations (Ethiraj et al., 2016; Goldfarb & King, 2016; Vogel & Homberg, 2021). Replications evaluate the robustness and reliability of a given study by using a different data sample from the original study in a similar setting. Therefore, publishing only statistically significant results, without publishing replications of the original studies, undermines the consistent generation of repeatable scientific knowledge (Bettis, 2012; Bettis et al., 2016; Cho & Kim, 2015; Goldfarb & King, 2016; Honig et al., 2018). Goldfarb and King (2016) referred to these practices, which undermine the quality of the research generated, as the “scientific apophenia” of management research.
The term “apophenia” comes from clinical psychology and refers to perceiving connections between unrelated or independent elements. Specifically, Goldfarb and King (2016, p. 168) define scientific “apophenia” as the “assigning of inferential meaning when limited statistical power should have prevented such a conclusion or when the data are actually random.”

Scientific apophenia and the replicability deficit of management research have been linked in the literature to different types of questionable research practices, such as hypothesizing after the results are known (HARKing) and data manipulation or dredging (p-hacking). HARKing and p-hacking are illegitimate practices that create a false appearance of successful prediction (Aguinis et al., 2018, 2020; Bedeian et al., 2010; Bettis et al., 2016; Goldfarb & King, 2016; Hollenbeck & Wright, 2017; Murphy & Aguinis, 2019; Starbuck, 2016). With practices such as HARKing, unexpected results are reported as if they had been hypothesized in advance (Aguinis et al., 2020; Bosco et al., 2016; Murphy & Aguinis, 2019; Starbuck, 2016). HARKing consists of gathering data first, then performing statistical analyses, and only then formulating hypotheses. Only at the end of the process does the researcher attempt to identify theoretical foundations or previous works that support or reject the hypotheses just formulated (Hollenbeck & Wright, 2017; Starbuck, 2016). “HARKers” attempt to create the false impression that pre-existing theory supported correct a priori predictions. Clearly, it is entirely acceptable to follow an inductive approach and analyze data to identify systematic patterns and their implications. Indeed, findings that the researcher had not anticipated should not be disregarded. However, misrepresenting the research procedure by portraying inferences drawn from the data as propositions hypothesized before data analysis is a completely illegitimate practice. Similarly, p-hacking, or data manipulation, involves subjecting the data matrix to multiple calculations or alterations until some specification yields a finding that appears to reflect a robust pattern (Bedeian et al., 2010; Starbuck, 2016). These practices can generate false findings that are not replicable.
In this way, the researcher can lend false support to a theory, making it seem more determinative than it actually is (Lewin et al., 2016).
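To illustrate why such practices produce non-replicable findings, the simulation below generates studies in which no true effect exists and compares testing a single pre-specified outcome with testing ten outcomes and reporting whichever reaches p < .05, one common form of p-hacking. It is a sketch under stated assumptions: normally distributed data with known unit variance, a two-sided z-test, independent outcomes, and arbitrarily chosen sample sizes.

```python
import math
import random


def two_group_p(x, y):
    """Two-sided z-test p-value for a mean difference, assuming known unit variance."""
    z = (sum(x) / len(x) - sum(y) / len(y)) / math.sqrt(1 / len(x) + 1 / len(y))
    return math.erfc(abs(z) / math.sqrt(2))


def false_positive_rates(n_trials=2000, n_per_group=30, n_outcomes=10, alpha=0.05, seed=1):
    """Simulate studies in which every outcome is pure noise (no true effect exists).

    'honest' tests only the one outcome specified in advance;
    'hacked' tests every outcome and counts the study as a success if any p < alpha.
    """
    rng = random.Random(seed)
    honest = hacked = 0
    for _ in range(n_trials):
        p_values = []
        for _ in range(n_outcomes):
            treated = [rng.gauss(0, 1) for _ in range(n_per_group)]
            control = [rng.gauss(0, 1) for _ in range(n_per_group)]
            p_values.append(two_group_p(treated, control))
        honest += p_values[0] < alpha
        hacked += min(p_values) < alpha
    return honest / n_trials, hacked / n_trials


honest_rate, hacked_rate = false_positive_rates()
print(f"pre-specified outcome: {honest_rate:.3f}; best of 10 outcomes: {hacked_rate:.3f}")
```

With ten independent outcomes, the probability that at least one reaches p < .05 by chance alone is 1 - .95^10, or about .40, even though every true effect is zero; a finding selected this way is exactly the kind of random pattern that Goldfarb and King (2016) describe as apophenia.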

These practices are clearly encouraged by academic journals’ greater willingness to publish novel studies that generate impact. The research culture and incentive system that prevail in academia have increased the pressure on scholars (Bedeian et al., 2010; Bettis et al., 2016; Starbuck, 2016). According to different studies, the academic incentive system encourages publication in a small number of top journals and often favors novelty over truth, which has, in many cases, fueled unreliable research findings (Barley, 2016; Baum, 2012; Davis, 2015; Lewin et al., 2016; Macdonald & Kam, 2011; Starbuck, 2016). As a result, some researchers find themselves choosing between publishing at all costs to advance their academic careers and upholding the ideals and values that drew them to research. Some works suggest that one of the main reasons for this problem has been management journals’ preference for “cutting-edge” and “ground-breaking” findings: they focus mainly on positive empirical results, do not demand the reporting or discussion of null findings or the study of outlier observations, and rarely consider replication studies for publication (Aguinis et al., 2018; Bettis, Helfat, & Shaver, 2016; Honig et al., 2018). This confirmation bias is omnipresent in management research (Davis, 2015; Leung, 2011). On the basis of an in-depth analysis, van Witteloostuijn (2015) concludes that the social sciences, and management research in particular, have been systematically ignoring the basic principles of falsifiability advocated by Popper (1959). Through these practices, scientific journals support a cynical academic culture of false communication, mediocre scientific standards that sustain poor and ambiguous theories, and ritualistic personnel evaluations.

However, as Starbuck (2016) argues, journals are not solely responsible for these unprofessional and questionable practices. In general, the current academic culture has a certain cynical and careerist tinge, partly because universities rely on specific attributes of research publications when evaluating research merit or advertising faculty achievements. For researchers, achieving publication in top journals is a high priority. The reliance on indicators and statistical procedures generates a cynical ethos in which research is seen merely as a way to advance one’s academic career. That is, publishing a paper is treated as a means to an end, regardless of the paper’s usefulness, practical implications, or social impact. In turn, universities compete with one another on the basis of the “significant” contributions of their faculty members. Clearly, the selective reporting of hypotheses and, in short, the use of inappropriate statistical procedures contradict the scientific principles of falsification, reliability, and replication, as well as the social and ethical value of integrity (Popper, 1959). The socially responsible approach to science requires the joint consideration of epistemic and social values in the development of research. Irresponsible behavior is incompatible with important epistemic values and with society’s expectation of honesty in science based on research integrity. Furthermore, misconduct is often associated with non-epistemic values closer to the self-interest of furthering a professional career.

To counter research misconduct, some works have recently proposed simple and feasible tools that could uncover questionable research practices. Bergh et al. (2017) propose three tests applicable to most empirical analyses and demonstrate their effectiveness: (a) the congruence of statistical tests, (b) the simulation of significance, and (c) verification based on matrices of descriptive statistics. Other authors advocate actions during the peer-review process to deter academic misconduct. Specifically, Honig et al. (2018) propose that peer-review processes require a “methodological recipe card” that gives reviewers detailed information about the empirical analysis and increases transparency. In addition, Honig et al. (2018) propose that journals implement codes of conduct for reviewers and encourage authors to evaluate the review process. Together, these proposals create a 360-degree peer-review process that improves the perception of impartiality (Honig et al., 2018).
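Two of these checks can be sketched in a few lines. Under simplifying assumptions (a large-sample normal approximation for test statistics and an ordinary least squares model with two predictors), the functions below test whether a reported significance claim is congruent with the reported coefficient and standard error, and recompute standardized coefficients and R² from a published correlation matrix and standard deviations. The function names are hypothetical, and this is an illustration in the spirit of Bergh et al. (2017), not their exact procedure.

```python
import math


def implied_p(b, se):
    """Two-sided p-value implied by a coefficient and its SE (large-sample normal approximation)."""
    return math.erfc(abs(b / se) / math.sqrt(2))


def supports_claim(b, se, claimed_alpha):
    """Congruence check: does the reported b/SE actually reach the claimed significance level?"""
    return implied_p(b, se) < claimed_alpha


def betas_from_correlations(r_y1, r_y2, r_12):
    """Standardized OLS coefficients and R^2 for y on two predictors, from their correlations."""
    denom = 1 - r_12 ** 2
    beta1 = (r_y1 - r_y2 * r_12) / denom
    beta2 = (r_y2 - r_y1 * r_12) / denom
    r_squared = beta1 * r_y1 + beta2 * r_y2
    return beta1, beta2, r_squared


def to_unstandardized(beta, sd_y, sd_x):
    """Convert a standardized coefficient to the metric of the reported variables."""
    return beta * sd_y / sd_x


b1, b2, r2 = betas_from_correlations(r_y1=0.50, r_y2=0.30, r_12=0.20)
print(f"beta1 = {b1:.3f}, beta2 = {b2:.3f}, R^2 = {r2:.3f}")
print("p < .01 claim congruent?", supports_claim(b=0.20, se=0.10, claimed_alpha=0.01))
```

For example, a coefficient of .20 with a standard error of .10 implies p of about .046, so a table marking it as significant at p < .01 would be flagged as incongruent; likewise, coefficients recomputed from a reported correlation matrix that diverge sharply from the reported regression deserve a closer look.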

The incorrect use of significance tests and the interpretation of p-values

As in many other disciplines, null-hypothesis significance tests are the basis of quantitative empirical research in the management field (Starbuck, 2016). Nevertheless, for approximately 80 years, methodologists have warned against the use of null-hypothesis significance tests, apparently without success. Researchers have ignored these warnings and continue to use the tests even though their interpretations are often erroneous and show that they do not understand what the tests indicate (Cortina & Landis, 2011; Hubbard, 2016; McShane & Gal, 2015; Orlitzky, 2012; Starbuck, 2016). The p-values obtained in these tests do not, by themselves, convey the rigor or reliability of the findings (Aguinis et al., 2020; Bettis, 2012; Bettis, Ethiraj, et al., 2016; Hubbard, 2016; Schwab et al., 2011; Vogel & Homberg, 2021).

This was another finding of our review of the most-published criticisms of management research. Significance tests are broadly misunderstood, which leads researchers to misinterpret their own data and evidence; their incorrect use, along with other dubious research methods, calls into question the standards of falsifiability, replicability, and data transparency in management research. Weak methodology in management research produces unreliable information for the professional world and is of little use to society.

The magnitude of a p-value is often interpreted as an indicator of the robustness of the results, leading to the mistaken belief that small p-values indicate stronger or more reproducible findings. In the same way, certain cutoff values of the p-value (.05, .01, and .001) are treated as critical values in testing a hypothesis (Bettis et al., 2016; Goldfarb & King, 2016; Schwab et al., 2011). As a result, a specific p-value is interpreted as the boundary between truth and irrelevance. Null-hypothesis significance tests thus facilitate the empirical demonstration of a concrete fact but can also erroneously label unimportant observations as significant, or vice versa. The dichotomous treatment that researchers give to statistical tests distorts the meaning of p-values because there is little inferential difference between a coefficient with a p-value of .04 and another with a p-value of .06, for example. Such illusory interpretations only hinder progress toward a better understanding of the relevant social and behavioral processes that take place in organizations. Knowing more about the origin of the conventional p-value cutoffs could contribute to a better understanding of these values and encourage a more prudent and judicious interpretation. The p < .05 cutoff arose as a competitive response to disagreements and disputes over book royalties between two renowned statisticians (Goldfarb & King, 2016). In the 1920s, Karl Pearson, who sold extensive statistical tables, was unwilling to allow Ronald A. Fisher to use the tables in his new book. In response, Fisher circumvented this barrier by creating a method of inference based on only two values, p-values of .05 and .01 (Goldfarb & King, 2016).
Despite this development, Fisher himself later recognized that Pearson’s more continuous method of inference was more appropriate than his own binary perspective, and he acknowledged that it was more correct to report p-values without emphasizing the thresholds. This allows the evidence to be interpreted more easily on a continuum and in the context of previous findings. Therefore, one could say that the conventional p-value thresholds are used, to some extent, because in the 1920s a statistician believed that sharing his work could affect his income (Goldfarb & King, 2016).
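The .04 versus .06 comparison can be made concrete by converting two-sided p-values back into the test statistics that produce them. The sketch below assumes a standard normal reference distribution (a large-sample z-test); with t-tests in small samples the numbers would shift slightly, but the point is the same.

```python
from statistics import NormalDist


def z_from_two_sided_p(p):
    """Absolute z-statistic that yields a given two-sided p-value under a standard normal."""
    return NormalDist().inv_cdf(1 - p / 2)


for p in (0.06, 0.05, 0.04):
    print(f"p = {p:.2f}  ->  |z| = {z_from_two_sided_p(p):.3f}")
```

The three p-values correspond to |z| values of roughly 1.88, 1.96, and 2.05, so results falling on opposite sides of the .05 line can differ in their test statistics by less than 0.2.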

In consideration of these practices, journals have proposed new policies specifically aimed at increasing the reliability of empirical tests in our discipline (Aguinis et al., 2017; Bettis et al., 2016; George, 2014; Lewin et al., 2016; Roloff & Zyphur, 2019; Starbuck, 2016; Tsui, 2016). In doing so, they implicitly encourage researchers to publish replication studies and null results, signaling that such outcomes can be accepted. With this shift in approach, journals also encourage purely exploratory research aimed at identifying and describing phenomena. Statistical analyses could then be presented with all findings discussed, whether positive, negative, or null. As Honig et al. (2018) suggest, journals should also dedicate more publication space to replication studies to recognize their value. According to these authors, more emphasis should be placed on requiring replication “road maps” that facilitate future replication work. Similarly, to avoid false positives in empirical tests, different scholars suggest that authors should report in more detail on the data and their collection, the methodological models used, the experimental conditions tested, and the observations eliminated (Aguinis et al., 2021; Bettis, Helfat, & Shaver, 2016; Goldfarb & King, 2016). Such reporting allows readers and reviewers to evaluate the authenticity and robustness of the reported findings more rigorously. Mechanisms that reduce the likelihood of false positives could also be strengthened, such as randomly dividing the data into two parts before beginning the analysis (Bettis, 2012; Bettis, Helfat, & Shaver, 2016; Goldfarb & King, 2016), since spurious effects found in one part are unlikely to re-emerge in the other. Meanwhile, Lewin et al. (2016) propose the implementation of preapproval practices.
Under these practices, authors submit a study proposal in which the literature is reviewed and the theoretical basis is explained. A research question is posed and a data source is proposed, but no analyses, results, or conclusions are presented. Only after the proposal is reviewed and accepted do the authors commit to completing the study as proposed. In return, the journal guarantees publication, regardless of the findings finally obtained.
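The split-sample safeguard can be illustrated by simulation. In the sketch below, each hypothetical study has ten outcomes that are pure noise; the data are randomly split in half before any analysis, the outcome with the smallest p-value in the exploratory half is selected, and only that outcome is then tested in the held-out half. The group indicator, sample sizes, and the z-test with known unit variance are assumptions chosen for brevity.

```python
import math
import random


def z_test_p(values, labels):
    """Two-sided z-test p-value for a group mean difference, assuming known unit variance."""
    g1 = [v for v, g in zip(values, labels) if g == 1]
    g0 = [v for v, g in zip(values, labels) if g == 0]
    z = (sum(g1) / len(g1) - sum(g0) / len(g0)) / math.sqrt(1 / len(g1) + 1 / len(g0))
    return math.erfc(abs(z) / math.sqrt(2))


def split_sample_study(rng, n=200, n_outcomes=10, alpha=0.05):
    """Simulate one study on pure noise: explore on a random half, confirm on the other.

    Returns (discovered, confirmed): whether the best exploratory outcome had p < alpha
    and, if so, whether that same outcome also had p < alpha in the held-out half.
    """
    labels = [i % 2 for i in range(n)]  # hypothetical balanced group indicator
    outcomes = [[rng.gauss(0, 1) for _ in range(n)] for _ in range(n_outcomes)]  # no true effects
    rows = list(range(n))
    rng.shuffle(rows)  # the split is fixed before any analysis is run
    explore, confirm = rows[: n // 2], rows[n // 2:]

    def p_value(subset, k):
        return z_test_p([outcomes[k][i] for i in subset], [labels[i] for i in subset])

    p_explore = [p_value(explore, k) for k in range(n_outcomes)]
    best = min(range(n_outcomes), key=lambda k: p_explore[k])
    if p_explore[best] >= alpha:
        return False, False
    return True, p_value(confirm, best) < alpha


rng = random.Random(7)
results = [split_sample_study(rng) for _ in range(800)]
confirmations = [confirmed for discovered, confirmed in results if discovered]
discovery_rate = len(confirmations) / len(results)
confirmation_rate = sum(confirmations) / len(confirmations)
print(f"exploratory 'discoveries': {discovery_rate:.2f}; confirmed in holdout: {confirmation_rate:.2f}")
```

In expectation, roughly 40% of the simulated studies produce an exploratory “discovery”, but only about 5% of those discoveries are confirmed in the held-out half, which is the false-positive rate expected when no effect exists.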

It is likewise advisable to avoid cutoff points that reduce the analysis and interpretation of findings to mechanical rules separating true from false in order to justify scientific conclusions (Bettis, Ethiraj, et al., 2016; Bettis, Helfat, & Shaver, 2016; Goldfarb & King, 2016; Lewin et al., 2016). More thoughtful interpretations of test statistics need to be encouraged (Goldfarb & King, 2016, p. 175). For example, instead of basing the interpretation of scientific findings on specific cutoff points, discussions could be based on confidence intervals, reporting the standard errors or the probability of obtaining certain results in a dataset, and then analyzing the implications for the research propositions or hypotheses (Bettis et al., 2016).
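As a sketch of interval-based reporting, the function below computes a normal-approximation confidence interval from a coefficient and its standard error. The two estimates are hypothetical, chosen so that their p-values fall on either side of .05 (about .04 and .06).

```python
from statistics import NormalDist


def confidence_interval(b, se, level=0.95):
    """Normal-approximation confidence interval for a coefficient b with standard error se."""
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)
    return b - z * se, b + z * se


# Two hypothetical estimates whose p-values fall just below and just above .05.
for label, b, se in [("study A", 0.210, 0.102), ("study B", 0.190, 0.101)]:
    low, high = confidence_interval(b, se)
    print(f"{label}: b = {b:.3f}, 95% CI [{low:.3f}, {high:.3f}]")
```

Study A’s interval barely excludes zero and study B’s barely includes it, yet the two intervals overlap almost entirely; this is the information that a dichotomous significant/non-significant label hides.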

Some works go even further and propose deinstitutionalizing statistical significance tests, which implies an epistemological reform of the research paradigm (McKee & Miller, 2015; Orlitzky, 2012). This paradigm shift should be based on abandoning the idea that the objectivity of a finding depends on the result of a statistical significance test (Andraszewicz et al., 2015; Kruschke et al., 2012; McKee & Miller, 2015). Instead, the new paradigm should approach the social construction of knowledge in any social science, characterized by more subjectivist norms and values (Orlitzky, 2012). Once again, the excessive or incorrect use of statistical significance tests, and the preference for publishing mainly positive results because they are considered more interesting, reflect values contrary to the ideas of responsible science. These practices reflect instrumental values, opposed to epistemic and social values, that turn “potentially good science into junk science” (Tsui, 2016, p. 14).

As some works have proposed, this implies adopting Bayesian approaches to probability, which are characterized by a subjective and interpretivist view of reality and an explicit recognition of probability in the validation of a theory (Andraszewicz et al., 2015; Jebb & Woo, 2015; Kruschke et al., 2012; McKee & Miller, 2015). Bayesian estimation is a type of statistical inference that explicitly quantifies the probability that a hypothesis is true, given the data and prior beliefs. That is, it recognizes the variability or uncertainty in researchers’ perceptions of the hypothesis to be tested, possible measurement inaccuracies, and, ultimately, the imprecision of the scientific reasoning process. Bayesian analysis yields a complete (posterior) distribution over the joint parameter space, often approximated by simulation, which reveals the range of variation and the relative probability of different combinations of parameter values. In other words, these methods analyze the probability of hypotheses conditional on the observed evidence, rather than the probability of the evidence conditional on a null hypothesis. Therefore, in response to the known limitations of significance tests (effects of small samples, erroneous interpretations, and “null effects”) (Andraszewicz et al., 2015; Cortina & Landis, 2011), Bayesian statistical inference can be proposed as a relevant alternative. However, the known advantages of Bayesian methods still contrast with their scarce use in the management field (Kruschke et al., 2012).
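A minimal example of Bayesian updating, assuming a conjugate normal model: a normal prior on an effect size (mean 0, standard deviation 0.5, an arbitrary choice for illustration) is combined with an estimated coefficient of 0.20 whose standard error of 0.10 is treated as known. The posterior is available in closed form here, whereas realistic Bayesian regression models usually approximate it by simulation (e.g., Markov chain Monte Carlo). The output is a direct probability statement about the effect given the data, which a p-value does not provide.

```python
from statistics import NormalDist


def normal_posterior(b, se, prior_mean=0.0, prior_sd=0.5):
    """Conjugate update: normal prior on the effect, normal likelihood for the estimate b."""
    prior_prec = 1 / prior_sd ** 2  # precision = 1 / variance
    data_prec = 1 / se ** 2
    post_var = 1 / (prior_prec + data_prec)
    # Posterior mean is the precision-weighted average of prior mean and estimate.
    post_mean = post_var * (prior_prec * prior_mean + data_prec * b)
    return post_mean, post_var ** 0.5


post_mean, post_sd = normal_posterior(b=0.20, se=0.10)
posterior = NormalDist(post_mean, post_sd)
print(f"posterior mean {post_mean:.3f}, sd {post_sd:.3f}")
print(f"P(effect > 0 | data) = {1 - posterior.cdf(0):.3f}")
print(f"P(effect > 0.1 | data) = {1 - posterior.cdf(0.1):.3f}")
```

Here the posterior mean (about 0.19) is pulled slightly toward the prior mean of zero, and the posterior probability that the effect is positive is about .975; changing `prior_sd` shows how strongly such conclusions depend on the prior, which is why Bayesian reports should state and justify their priors.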

“Discrimination” of qualitative methodology in the management field

Recent literature reviews indicate a consolidated growth trend in qualitative research in the field of management (Bluhm et al., 2011; Cornelissen, 2017; Jonsen et al., 2018; Symon et al., 2018; Üsdiken, 2014). In recent decades, relevant management journals, such as the Academy of Management Journal, have published editorials that encourage management researchers to develop qualitative techniques that broaden the sources of knowledge about organizational phenomena, and that provide guidelines for evaluating these techniques in the discipline (e.g., Bansal & Corley, 2011; Pratt, 2009). This growth has been notable, although it has proceeded very differently in the European context, the American context, and the rest of the world (Bluhm et al., 2011; Üsdiken, 2014). This uneven pace is explained fundamentally by differences in the epistemological and ontological traditions that have dominated these research contexts (Bluhm et al., 2011; Cassell, 2016). These differences highlight the importance of developing sound scientific methods and processes and of valuing a plurality of methodological techniques without devaluing qualitative methodology. Indeed, as Üsdiken (2014) found in an extensive review, qualitative research represented around 20% of management research in the last period analyzed. Despite this relatively high presence, we should not be overly optimistic, as the recent literature has also highlighted certain barriers faced by researchers conducting qualitative research in the field of management (Bluhm et al., 2011; Cassell, 2016; Cornelissen, 2017; Symon et al., 2018). Furthermore, while this growth is positive and appears to be strengthening, qualitative studies may still represent a low proportion of the field compared with quantitative research.

Although qualitative techniques have strengths that can provide knowledge in the research field of management, our findings show that the research community perceives certain problems related to their development, evaluation, and consequent reliability. Qualitative methodology and its interpretive approach are uniquely able to describe, interpret, and explain organizational issues. Later in the research process, quantitative methods make it possible to describe the prevalence of the phenomena analyzed and to generalize them (Bluhm et al., 2011). Qualitative techniques are therefore important for understanding business phenomena involving workers, teams, and organizations and for describing their development over time, especially in management research. In fact, multiple well-established theories in the field of management derive from purely qualitative research (Runfola et al., 2017). However, the research community’s major concern about qualitative research in this field stems from the logical positivist tradition that prevails in research. This discipline-dominating paradigm sets much higher standards for publishing qualitative research than for quantitative approaches (Bansal & Corley, 2011; Bluhm et al., 2011; Cassell, 2016; Symon et al., 2018), and these standards evaluate qualitative research through a positivist lens. For this reason, the research community raises concerns about the reliability and validity of qualitative studies judged by these standards, which causes such studies to be marginalized and devalued relative to quantitative research (Pratt, 2009). This also makes qualitative research a less attractive strategy because, in addition to demanding extensive fieldwork, such studies are more difficult to publish. Faced with this difficulty, management scholars opt for the easy route of methodological marginalization, which lacks the epistemic values of responsible science.
Once again, rewarding academics on the basis of publication-based performance indicators fosters values of convenience and instrumentality associated with career development. These values cannot be considered epistemic, social, or even ethical and should never interfere with the researcher’s methodological decisions, since they contradict the scientific principles listed above (Popper, 1959).

There have been attempts in the literature to establish quality criteria that support the evaluation and guide the development of qualitative research (Bansal & Corley, 2011; Bluhm et al., 2011; Gioia et al., 2013; Symon et al., 2018), but these have not prevented the imitation of certain purely positivist conventions. The difficulty is that, in contrast to quantitative methodology, qualitative techniques rest on a research paradigm characterized by a wide range of philosophical positions (Symon et al., 2018). Thus, unlike the positivist philosophical consensus that prevails in the discipline regarding quantitative methodology, a standardized template for developing qualitative research is difficult to establish, regardless of whether it would be desirable. The methodological pluralism of qualitative methods offers a wide range of options for investigating different research questions, enriching the opportunities to understand organizational phenomena in greater depth.

Transparency is possibly the most recommended quality criterion in the development of qualitative research (Aguinis et al., 2018; Aguinis & Solarino, 2019; Bansal & Corley, 2011; Bluhm et al., 2011; Jonsen et al., 2018; O’Kane et al., 2021). Promoting a detailed and transparent description of the entire qualitative research process, as suggested in some works, aims to improve the validity, replicability, and standardization of these techniques. For example, Aguinis and Solarino (2019) offer recommendations to improve transparency and replicability in qualitative research. According to these authors, it is necessary to provide specific and detailed information on aspects such as the type of qualitative technique used, the research setting, the researcher’s position with respect to the participants, the sampling procedures, and the relative importance of each case. It is also important to report how the qualitative evidence was handled and analyzed, for example, the saturation point and the judgments that defined it, the data coding process, and the complete anonymized disclosure of the raw materials (e.g., interviews, recordings, and transcripts) that supported the study (Aguinis & Solarino, 2019).

However, one could also argue that quantitative research is not entirely transparent unless one is an expert in statistical techniques (Symon et al., 2018). From this perspective, the emphasis on transparency in qualitative methodology stems from some researchers’ or reviewers’ limited familiarity with these methods and from attempts to equate them with quantitative methods to gain legitimacy. Other works have indicated that it is necessary to accept the interpretive subjectivity that characterizes qualitative methodology in the generation of scientific knowledge (Cornelissen, 2017; Symon et al., 2018) without applying purely positivist criteria, such as replication, precision, standardization, or elimination of investigator bias. In fact, the criterion of transparency can be interpreted differently depending on the epistemological perspective from which the research is approached. From the perspective of critical theory, transparency would relate not to detailed methodological description but to a high level of democratic involvement of the participants in the qualitative study (Symon et al., 2018). Although transparency is understood as a criterion of the positivist tradition, a higher standard of methodological description will not lack benefits if it gives this methodology greater credibility in our discipline. Therefore, improving transparency standards in qualitative studies is still beneficial and recommended, because it improves the reliability and possible replicability of the study (Aguinis et al., 2018; Aguinis & Solarino, 2019; O’Kane et al., 2021).

By contrast, we consider it necessary to be critical of the particular tendency to quantify qualitative data. The so-called factor-analytic approach, following the principles of grounded theory, reduces large amounts of qualitative data, collected through observations and testimonies among other sources, into significant factors that explain certain phenomena. This analytical approach establishes an implicit analogy between qualitative and quantitative methodology that reflects the predominance of quantitative techniques and positivist pragmatism in our discipline (Cornelissen, 2017). However, even acknowledging the possible methodological strengths of this trend, this close analogy reduces the range of methods and restricts the theorizing styles in our field that are based on descriptive and interpretive approaches. Theorizing from qualitative methods would then be reduced to extracting constructs and organizing them into hypothesized linear relationships. From our point of view, this standardization of qualitative research only impoverishes our field. This concern is shared by different works in recent years that see the diversity of methodological approaches as endangered (Bansal & Corley, 2011; Cassell, 2016; Cornelissen, 2017; Symon et al., 2018). We also consider it detrimental to the richness that the diversity of qualitative methods, with their distinctive stamp, brings to the generation of detailed explanatory descriptions of organizational phenomena. Paradoxically, greater transparency or quantification of qualitative methodology can provide greater legitimacy, but this could be negative if it comes at the cost of losing integrity and epistemological diversity in our field of knowledge.

On the basis of these arguments, we consider that there is still time to make incremental changes that spare us from having to make radical changes in the way knowledge is generated in our field. Cornelissen (2017) stated that postgraduate and doctoral training programs could be an appropriate stage at which to address these problems. These programs should provide future researchers in our field with methodological knowledge that addresses, in addition to technical issues, the epistemological roots and the different ways of theorizing that each type of methodology offers. However, we believe the responsibility for preserving this methodological pluralism lies not only with qualitative researchers but also with epistemological guardians such as editors and reviewers. If editors and reviewers support the trends toward standardizing qualitative methodology, preferences for these trends are implicitly established and could end up becoming the norm in our discipline. Similarly, journals in our field could appoint qualitative researchers as associate editors more often (Symon et al., 2018). In this way, more editorials could be published that open discussions on criteria for qualitative methodology instead of offering only prescriptive recommendations for making qualitative research more easily publishable. These editors should also encourage novel and diverse methodological approaches that avoid the standardization of qualitative research in the field of management. This argument could also be extended to qualitative researchers themselves, who must resist movements that impose harmful standardization. Studies that follow less conventional qualitative research designs, such as focus groups, case studies, or critical reviews, help broaden the horizons of qualitative methodology in our field and tend to have a greater impact on the body of knowledge in our discipline (Bluhm et al., 2011; Runfola et al., 2017).
Likewise, it would be important to act on the review process by promoting reviewers’ reflexivity about the epistemological paradigm they apply in their evaluations and their honesty about the methodological training they possess. For example, training for reviewers and discussion or mutual learning between reviewers and authors could be encouraged (Symon et al., 2018).

Practical relevance in the field of management: “The Valley of Death” between management academics and management practitioners

The widening research–practice gap, with management research increasingly divorced from the practitioner world, is another key problem of the management field widely recognized by the literature (Bansal et al., 2012; Bartunek & Rynes, 2014; Bullinger et al., 2015; De Frutos-Belizón et al., 2019; De Man et al., 2020; Kieser et al., 2015; Kieser & Leiner, 2012; Thunnissen & Gallardo-Gallardo, 2019). Academics are generally uninterested in the problems of practitioners when framing research questions, and practitioners do not resort to academic research to solve their problems (Bartunek & Rynes, 2014; Kieser & Leiner, 2009).

As management was born as an applied field, research was initially focused on solving specific applied problems (Agarwal & Hoetker, 2007). However, as the literature widely recognizes, at some point the two communities started to diverge. As noted above, most studies trace this divergence to the publication of reports by the Carnegie Foundation and the Ford Foundation (Gordon & Howell, 1959; Pierson, 1959), which criticized business schools for the poor quality of their research outputs and their lack of scientific content. These critical reports triggered a “scientification” of the management field and led scholars to develop more rigorous research based on sophisticated empirical analyses (Bartunek & Rynes, 2014). This “scientification,” pursued at the expense of applicability and practical relevance for the professional world, could be the origin of the problem that persists today.

Although there is prima facie agreement that the gap exists, there is intense debate about its nature and the reasons behind it. Shapiro et al. (2007) suggest two fundamental reasons that hinder the transfer of scientific research to professionals: lost before translation and lost in translation. Lost before translation refers to research outputs that cannot be translated into practice because, relying on different logics, they are disconnected from practice from the outset. Lost in translation refers to the problems that arise specifically in transferring scientific knowledge to the professional community. The interlocutors do not understand one another because they speak different languages. Practitioners do not read academic journals, as the research published there is written by researchers for researchers. Research results are not normally documented in a way that facilitates application and accessibility for practitioners (Bansal et al., 2012; Kieser & Leiner, 2009). Bullinger et al. (2015) suggested that researchers prefer producing knowledge to translating it and disseminating it to the professional community. Similarly, scholars have stronger incentives to publish than to collaborate and engage with professionals (Bartunek & Rynes, 2014; Shani & Coghlan, 2014).

The literature has devoted considerable effort to explaining how the disconnections between academics and professionals should be managed to bring research closer to the needs of professionals (De Frutos-Belizón et al., 2019; Shani & Coghlan, 2014). Some studies have described the differences and particularities of both communities, arguing that they should relate to one another without crossing their own borders (Bansal et al., 2012; Bartunek & Rynes, 2014; Kieser & Leiner, 2012). Management practitioners and academics must develop independently, but they need to form closer alliances to realize the full potential that management research can offer to organizations. For this to happen, it would be particularly important for each community to inform the other about its topics of interest and specific concerns. A key element of this relational perspective would be active listening. Management professionals need to be aware of innovations in management research, while academics in this field should listen to professionals, especially in the initial stages of the research process, when they are framing research questions. Translating scientific findings into media more accessible to the professional community could be a good solution. For management practitioners, research translated into informative books may be considerably more influential than journal articles. Publications aimed at practitioners should be steeped in scientific evidence but written for a more general audience, combining insights of interest to practitioners with scientific findings. However, to establish effective relationships between both communities, scholars must develop bilingual and “bi-competent” skills. In this way, academics can generate knowledge relevant to solving professional problems and, in turn, contribute to the advancement of scientific knowledge in the management field. Academics should, therefore, be able not only to speak the languages of practice and science but also to transfer interpretative schemas between the two communities (Kieser & Leiner, 2012).

In addition, the growing diversity and specialization of the management field across various areas sometimes lead to an oversimplification of the complexity of the real problems facing companies (Pettigrew & Starkey, 2016). In fact, the problems that arise in companies typically involve phenomena spanning multiple areas of knowledge and levels of analysis, whereas research mainly focuses on a single level. Accordingly, recent works also suggest that the management field has paid insufficient attention to multilevel methodological approaches (Molina-Azorín et al., 2020), which could offer opportunities to advance research closer to the reality of business practice.

Other works have also proposed reconsidering, in a more pragmatic and interpretive direction, the paradigm from which management research is approached (De Frutos-Belizón et al., 2019; Starkey et al., 2009; Tsui, 2016; I. Walsh et al., 2015). According to these works, the adoption in the mid-20th century of a positivist paradigm close to that of the natural sciences may have produced management research that gives greater importance to rigor than to relevance. This overlooks the fact that the social realities studied by management science are fully shaped by their context. Adopting a more pragmatic paradigm would emphasize understanding, meaning, and action rather than the description and prediction characteristic of classical positivism. From the same angle, entrepreneurial universities are characterized as institutions that play a dual role: generating new knowledge and disseminating that knowledge to society. For some years now, the literature has proposed this idea as a further step in the evolution of the role that universities play in the current knowledge society (Audretsch, 2014; Guerrero & Urbano, 2012). This new role makes these institutions responsible not only for generating research but also for creating mechanisms and structures dedicated to disseminating newly gained knowledge in society. According to this idea, entrepreneurial universities adopt an approach to knowledge generation oriented entirely toward solving real problems, in which relevance and applicability are two indispensable guiding values (Audretsch, 2014; Guerrero & Urbano, 2012). These values are much closer to the social values associated with responsible science.

Obviously, all these aims would be much easier to achieve if the academic incentive system were not based solely on impact within academia. This would allow academics to look beyond simply publishing, fostering a greater predisposition to establish relationships with professionals or to develop skills that would help transfer research to the professional world.

The reward system of academia

The academic reward system and the current “publish or perish” culture can be considered two of the fundamental problems in the field of management because, as we have observed, they influence the problems discussed above. This concern also directly and indirectly affects the development of practically all the principles of responsible science. Recent literature has criticized scholars’ current emphasis on publishing, explaining how it shapes researchers’ behavior and can underlie other problems in our field (Barley, 2016; Baum, 2012; Davis, 2015; Hangel & Schmidt-Pfister, 2017; Lewin et al., 2016; Macdonald & Kam, 2011; Starbuck, 2016; Tsui, 2016).

Taking scientific publication as the unit of recognition, Robert K. Merton (1957, 1973) developed, beginning in the late 1950s, the notion of a reward system of science based on peer reputation. As Merton (1957, p. 642) suggested, “the institution of science has developed an elaborate system for allocating rewards to those who variously live up to its norms.” For Merton, recognition can be understood in general terms as “the giving of symbolic and material rewards” by scientific peers (Merton, 1973, p. 429).

There is no doubt that scientific publications must provide an accessible knowledge base that allows for a constructive exchange of knowledge among researchers. However, in the current academic culture of intense competition for measurable success, academics have become inexorably focused on publishing as they adapt to the contemporary publish-or-perish culture. Academics are evaluated on the basis of their publications in top journals, judged almost exclusively by the journal impact factor (JIF). Therefore, those who want to be rewarded orient their work toward the standards and research topics defined by the agenda of these journals (Corley & Gioia, 2011; Davis, 2015; Starbuck, 2016; Tsui, 2016). Recent literature concurs with the criticism that management research often favors novelty while devaluing replication (Barley, 2016; Davis, 2015; Lewin et al., 2016; Mathieu, 2016; Starbuck, 2016). Most management journals expect the articles they receive to make a theoretical contribution, which has led many scholars to become obsessed with developing and publishing theory at all costs, a condition known as “theorrhea” (Antonakis, 2017; Corley & Gioia, 2011; Thunnissen et al., 2019). Davis (2015) criticized management theory for valuing novel knowledge, rare and curious findings, and questionable and counterintuitive results over “truth” and the accumulation of rigorous knowledge. Barley (2016) also suggested that this tendency is intensified by the way PhD students are trained, the criteria journal editors and reviewers use to select manuscripts, and the reward policies that govern appointments and promotions. In the academic world, career incentives have distorted the ultimate purpose of publications, which are perceived as a key element for advancing one’s academic career, something that is not always compatible with scientific rigor and ethical behavior (Tsui, 2016). As Tsang (2016) points out, Einstein’s theory of relativity superseded Newtonian mechanics because it better explained observed reality, not because it represented novel, counterintuitive, or provocative knowledge.

Different scholars have suggested that the predominance of publication-based performance indicators, such as JIFs or the Hirsch (h) index, determines the conduct of academics and fosters behavior aimed at obtaining numerous publications of measurable value (Fochler et al., 2016; Hangel & Schmidt-Pfister, 2017; Ingwersen & Larsen, 2014). This phenomenon is known as reactivity, which refers to how actors respond to the fact that their actions are being evaluated by altering their behavior to improve their evaluation results (Fochler et al., 2016, p. 178). If academics are valued fundamentally for their publication output, then much of their behavior will be directed toward that output. Hangel and Schmidt-Pfister (2017) recently analyzed the motivations for research throughout the different stages of the academic career. The authors concluded that “the tension between wanting and having to publish reveals a shift from publishing as a consequence of getting interesting research results, to doing research in order to publish” (Hangel & Schmidt-Pfister, 2017, p. 541). These findings suggest a general perception that obtaining publications is the conditio sine qua non, regardless of the stage of one’s academic career. The decoupling between career incentives or rewards and the development and progress of our field can be the source of multiple disruptions. The quantitative indicators used to evaluate academic performance may displace deeper considerations of quality and scientific rigor, as well as attention to the possible practical and social implications of the knowledge generated. This culture has come to call into question the knowledge base of science, that is, the reliability of its findings (Fanelli, 2012). As Nosek et al. (2012, p. 616) observed, “to the extent that publishing itself is rewarded, then it is in scientists’ personal interests to publish, regardless of whether the published findings are true.” A reward system that guides academic careers on the basis of “impact” (citation counts) and “productivity” (article counts) is actually a perverse incentive system that fosters many questionable research practices (Bettis et al., 2016; Goldfarb & King, 2016). This context has even fostered the proliferation of predatory journals of dubious legitimacy and ethics that can distort measures of scientific productivity (McLeod et al., 2018).