Abstract

We examined the competitive effects of the largest statewide K–12 private school voucher program in the United States. We relied on network proximity data to generate a drive-time measure that accounts for road lengths, intersection turn times, speed limits, and traffic patterns. This allowed us to calculate travel time between two points in 1-minute intervals. We used this precise measure in a difference-in-differences framework to examine impacts on student math and English language arts test scores and graduation rates. Using a student-level dataset that covers the 2006–07 through 2015–16 school years, we found little evidence that the average student in an Indiana traditional public school had been affected, either positively or negatively, by the enactment and growth of the Indiana Choice Scholarship Program. We discuss policy implications of these findings.

Introduction

Passed as part of House Enrolled Act 1003-2011, the Indiana Choice Scholarship Program (ICSP) is a means-tested private school choice program currently supporting ~69,000 students with state funds to offset the cost of private school tuition. It is the largest voucher program in the country, and enrollment has grown more than 10-fold since the program’s inception (Table 1). During the first year of operation, ICSP scholarships were limited to a maximum of 7,500 students, but legal uncertainty about the program meant that student participation fell short of the cap that year, and just 3,911 students participated. The program participation cap doubled in the program’s second year, and for the 2013–14 school year, the enrollment cap was removed altogether so that ICSP scholarships could be awarded to all eligible applicants. By 2015–16, the final year of this study, enrollment in Indiana’s voucher program had reached 32,686 students, representing 19.62% of all private school voucher recipients in the United States that year. Given its size and scope, Indiana presents a rich opportunity to examine the systemic effects of the nation’s largest voucher program.

Table 1. Growth in the Indiana Choice Scholarship Program, 2011–12 through 2023–24

Source. Indiana Department of Education, Office of School Finance.

Over the period we studied, eligibility for the program was initially targeted to low-income public school students but gradually expanded to include more categories of students. At the outset, enrollment in this program was limited to students with two semesters’ experience in a public school. Another requirement was that applicants came from households where the family income did not exceed 150% of the maximum qualifying income for free and reduced-price lunch (this equated to ~$61,000 for a family of four that year). Also eligible were students who previously received an award from a scholarship-granting organization. In 2013–14, four categories of students were added to the eligibility list: kindergarten students, those with special educational needs from families earning up to 200% of the free and reduced-price lunch cutoff ($87,136 for a family of four), siblings of students who previously received an award from a scholarship-granting organization, and students coming from failing public schools. Furthermore, after the program’s first 2 years of operation, there was no enrollment cap on the total number of possible participants.

Although a matching analysis of participating students in grades 5–8 concluded that the program had generally no effect on participants’ English language arts scores and a negative impact of −0.15 SDs on participants’ math scores (Waddington & Berends, 2018), there has never been a large-scale student-level analysis of the program’s impact on nonparticipants. This is unfortunate because some program observers may worry that students who do not choose a private school risk being left behind academically. The central research question we aimed to answer, therefore, was whether a competitive system built on the principle of choice could serve as a rising tide that lifts all boats or instead exacerbates existing inequities. Specifically, did private school competition lead to changes in student achievement and graduation at traditional public schools?

Various arguments have been proposed in support of the idea that the competitive pressures induced by an education marketplace can serve as a tide that lifts all boats (Friedman, 1955; Hoxby, 2003). In response to the fiscal pressure to attract and retain students, schools may seek innovative approaches to improve student learning. In contrast, a potential drawback is the possibility that nonchoosers will be left behind in public schools with lower peer quality, furthering inequitable schooling experiences (Bifulco et al., 2009). For example, the executive director of the Indiana Association of Public School Superintendents, J. T. Coopman, has said about the program, “There’s a price that’s going to be paid down the road because we’re going to end up with the haves and the have-nots” (Wang, 2014, p. 4). To test these theories, we conducted a systemic effects analysis of Indiana’s private school voucher program, which is the largest voucher program in the nation. We analyzed the ICSP’s competitive effect on the achievement and attainment outcomes of 837,000 students. We relied on geocoded competition measures in a difference-in-differences framework, focusing on district-operated public schools as the population of interest. The intuition behind the research design, which has previously been applied in Florida (Figlio et al., 2023), rests on the knowledge that while the establishment of the ICSP may have represented a shock for all public schools in Indiana, those facing a more competitive landscape to begin with likely felt this shock more acutely.
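The intuition behind this design can be illustrated with a deliberately simplified two-group, two-period comparison. The sketch below is not the specification estimated in this study: it assumes, for illustration only, that schools are split into hypothetical "near" and "far" groups by baseline proximity to a private school, and every score is invented.

```python
from statistics import mean

# Hypothetical standardized test scores by competition group and period.
# Group labels and values are illustrative assumptions, not study data.
scores = {
    ("near", "pre"): [0.10, 0.00, -0.10],
    ("near", "post"): [0.15, 0.05, -0.05],
    ("far", "pre"): [0.20, 0.10, 0.00],
    ("far", "post"): [0.24, 0.14, 0.04],
}


def did_estimate(scores):
    """Pre-to-post change for 'near' schools minus the same change for 'far' schools."""
    change_near = mean(scores[("near", "post")]) - mean(scores[("near", "pre")])
    change_far = mean(scores[("far", "post")]) - mean(scores[("far", "pre")])
    return change_near - change_far


estimate = did_estimate(scores)
print(round(estimate, 4))
```

Subtracting the change in the "far" group removes any statewide shock common to all public schools, so what remains is the differential change for schools that faced more competition at baseline.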

Our contributions to the competitive-effects literature are fourfold. First, we focus on Indiana, where the voucher program was much larger than programs in any other state at that time, thus generating meaningful competition for the public schools. The ICSP is large in two ways—it serves a nontrivial portion of the school-aged population, and these students attend a large, diverse, and growing set of participating private schools. Approximately 35,000 students had participated by the final year of our study, representing roughly 3% of the school-aged population in the state that year, and they attended 316 unique private schools. Due in part to its size but also the scope of eligible future participants, what we observed from this bellwether state has implications for the rest of the country. In recent years in particular, private school choice legislation across the country has followed Indiana’s lead in designing large-scale programs with expansive eligibility criteria (EdChoice, 2023). Thus, Indiana’s already established program offers unique insights into what other states might soon experience.

Second, we designed the study to capture different dimensions of competition by leveraging variation across geography and time. Although the previous literature is limited by its (often singular) choice of competition measure (e.g., Hoxby, 2003), we can rule out this measurement choice as an explanation for the results we observed in Indiana. Within any given year, we coded two overarching measures of competitive threat (the travel time in minutes to the nearest private school competitor and the average count of private school competitors within x minutes) to assess distance between schools. Furthermore, we measured distance via drive time instead of crow’s flight. We calculated drive time before the policy was enacted to avoid the endogeneity bias that could occur if new private schools were established in response to the voucher program and systematically located near low-performing public schools, for example, where they might find it easier to recruit applicants. Because we took this precaution, any observed changes in student achievement associated with the distance measure are likely to reflect a public school response to the competitive pressure associated with passage of the voucher law.
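As a rough illustration of the two measures, the sketch below assumes a hypothetical lookup of prepolicy drive times (in minutes) from each public school to each private school. The school identifiers, the values, and the function names are invented for illustration and are not the study's data or code.

```python
# Hypothetical prepolicy drive times in minutes: public school -> {private school: minutes}.
# All identifiers and values are illustrative assumptions, not study data.
drive_times = {
    "public_A": {"private_1": 4, "private_2": 12, "private_3": 27},
    "public_B": {"private_1": 18, "private_2": 9, "private_3": 31},
}


def nearest_competitor_minutes(times):
    """Drive time in minutes to the closest private school."""
    return min(times.values())


def competitors_within(times, cap_minutes):
    """Count of private schools reachable within cap_minutes of driving."""
    return sum(1 for minutes in times.values() if minutes <= cap_minutes)


for school, times in drive_times.items():
    print(school, nearest_competitor_minutes(times), competitors_within(times, 15))
```

Because the inputs are fixed at their prepolicy values, neither measure can shift in response to private schools that open after the voucher law passed.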

Related to this point about the different ways to measure a competitive threat, we also considered the dynamic impacts of competition by allowing the effects to trend up or down as the program matured. We initially looked at the competitive impact of the ICSP on student test scores in the program’s first year of operation (2011–12), when enrollment numbers were still small. This helped us to cleanly isolate the competitive impact of the private school choice option from school compositional changes resulting from student transfers and resource changes associated with the loss of per-pupil funds, which can occur when there is an enrollment decline in the public school sector. We then repeated the analysis incorporating 5 years of outcome data to maximize statistical power and to take advantage of higher dosage in later years when greater numbers of students exercised this choice option. These data included school years ending in 2011 (the prepolicy year), 2012 (the first postpolicy year), 2013, 2014, 2015, and 2016. We also used this model to test for changes in public school students’ likelihood of graduation that could be attributed to the program.

Third, it may be the case that public schools responded to the program only once the competitive pressure they faced passed a certain threshold or they lost a meaningful number of students. We therefore conducted a dosage analysis of test-score changes to search for a potential tipping point in the school choice marketplace. That is, we were interested in estimating whether there is a point at which public school test scores change as a significant mass of students transfers from their assigned traditional public school to a private school of choice by way of the ICSP. Before this hypothetical point is reached, public schools might more easily dismiss the private school transfer option, failing to view it as a legitimate threat to their anticipated student enrollment and consequently quashing any efforts to enact meaningful changes in response. To explore this possibility, we calculated the competitive response associated with the drive time to the nearest private school with a minimum of 10, 20, 30, 40, or 50 voucher students in the program’s first year. Ours is the first study to test the tipping-point theory in this context.
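The threshold-based measure can be sketched as follows, assuming hypothetical drive times from a single public school and hypothetical first-year voucher enrollment counts for each private school. The names and numbers are illustrative assumptions only.

```python
# Hypothetical inputs for one public school. Values are illustrative, not study data.
drive_minutes = {"private_1": 4, "private_2": 12, "private_3": 27}
voucher_students = {"private_1": 8, "private_2": 25, "private_3": 60}


def nearest_with_min_vouchers(drive_minutes, voucher_students, threshold):
    """Drive time to the closest private school enrolling at least `threshold`
    voucher students, or None if no school meets the threshold."""
    eligible = [
        minutes
        for school, minutes in drive_minutes.items()
        if voucher_students.get(school, 0) >= threshold
    ]
    return min(eligible) if eligible else None


# One measure per dosage threshold named in the text.
by_threshold = {
    k: nearest_with_min_vouchers(drive_minutes, voucher_students, k)
    for k in (10, 20, 30, 40, 50)
}
print(by_threshold)
```

In this toy example the closest private school enrolls too few voucher students to count at any threshold, so the relevant competitor sits farther away as the required dosage rises.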

Fourth, many of the prior studies of competitive threat focused on a single outcome, such as student test scores (Figlio & Karbownik, 2016; Hoxby, 2003), but competition effects may show up in longer-term measures, which is why we included graduation as an additional, longer-term outcome to examine.

In summary, our comprehensive competitive analysis of the nation’s largest voucher program employed multiple measures of competition, including drive time to the nearest private school and a count of private school competitors within a given drive-time radius, and tested for potential impacts at multiple time points (i.e., the first program year and then the first 5 years). We found null overall effects on public school achievement and null effects on a student’s likelihood of graduation.

The remainder of this study proceeds as follows. First, we review the theoretical framework underlying the competitive-effects literature. We then place the ICSP in the context of the broader private school choice landscape. Next, we review prior studies and identify our contribution. The methods section describes our data and empirical methods and is followed by a presentation of the results. The article concludes with a discussion of the main findings.

Theoretical Framework

Theoretically, the competitive impact of the ICSP on public school students’ test scores and likelihood of graduation could be positive, negative, or neutral. On the one hand, we may observe efficiency gains if public school leaders respond in creative and productive ways to the increased market pressure to achieve better academic outcomes at the same cost (Friedman, 1962). The theory of action by which schools would accomplish this might consist of some combination of the following reforms: a renewed focus on effective instructional leadership practices (Grissom et al., 2013), better use of technology and strategic staffing (Jacob, 2011), efforts to boost organizational capacity and efficiency (Chubb & Moe, 1990), the development of human capital by way of teacher coaching (Kraft et al., 2018), and revisions to school start schedules to better match teenagers’ sleep habits (Heissel & Norris, 2018). Prior research by Rouse et al. (2013) identified specific mechanisms by which we might observe positive effects. These include increasing instructional time, organizing the school day differently, making changes to the learning environment, increasing the resources to which teachers have access (e.g., time for collaborative planning and individual professional development), and decreasing principal control over things such as curriculum decisions, personnel, budget spending, and teacher evaluation. Notably, even nonaction on the part of public schools could result in the perception of achievement gains if the hardest-to-serve and lowest-performing students depart with a voucher, a scenario for which our research design must account.

Focusing on student attainment in isolation for a moment, a competitive effect on this outcome may take several years to materialize because of the large-scale operational changes required to influence such a long-term outcome. Potential impacts on the likelihood of student graduation might therefore be lagged. It also may be challenging to observe impacts on an outcome that has a high baseline rate due to ceiling effects.

On the other hand, we may observe lower achievement outcomes in traditional public schools in response to competition from private schools if, for example, public schools pursue incoherent curricular or pedagogic reforms or misuse scarce resources. It is worthwhile spending some time to understand how school funding formulas contribute to potential outcomes in a competitive environment. In the absence of hold-harmless provisions in public school funding formulas, such as those enacted in response to the expansion of the charter school sector in Massachusetts (Ridley & Terrier, 2018), student transfers through Indiana’s voucher program lower the amount of state funding received at the school level because it is based on enrollment counts, even if the per-pupil funding amount remains unchanged. Although recent research described a causal relationship between school spending and student achievement, on average (Jackson, 2018), context, policy design, and use all play a role in determining whether and how school spending matters for students (McGee, 2023). Thus, although we might observe a decline in traditional public school student achievement if public school budgets are seriously impacted by the voucher program, this is not assured. If, for example, school budgets are only marginally impacted by a small number of student transfers or public schools can absorb reductions by cutting fixed costs to adapt to their new funding reality, students may be unaffected by the reduction in revenue.

Another avenue by which we could observe negative impacts on public schools is if private schools “cream skim” the brightest and most motivated students and families (Altonji et al., 2015) or push out the most challenging students based on academic ability, motivation, disciplinary records, or cost to educate. This type of compositional churn would result in the perception of lower overall achievement scores in the public schools, even in the face of nonresponse to the competitive threat by public schools. Disproportionate student mobility also could reduce overall peer quality, a resource that contributes to general educational quality in a school. Prior research has largely investigated such claims in the realm of charter schools (e.g., Gilmour et al., 2023; Zimmer & Guarino, 2013), but recent research by Waddington et al. (2022) tested for compositional churn in the context of the same voucher program we examine here, the ICSP. Waddington et al. (2022) found no evidence consistent with the claim of cream skimming, operationalized as private schools attracting high-ability students or students who are less costly to educate because they do not fall in the categories of English language learner or special education student. On the question of push-out, they noted that low-achieving students using vouchers exit their private schools at a slightly higher rate than low-achieving students in public schools.

Alternatively, we may observe neutral impacts on student outcomes. This might occur because of investment in cosmetic reforms that are not strongly associated with improvements to the instructional climate, such as creating brochures or applying fresh paint to the walls of the school facility.

As a final theoretical note, it is worth observing that any initial changes to achievement or attainment outcomes may level off. This latter point about nonlinearity is important and the motivation for why we examined the tipping-point theory in addition to overall impacts. That is, it is unclear a priori whether any achievement or attainment changes—either positive or negative—will be linearly related to competitive pressures because schools likely will respond to the program dynamically, adjusting their response to the voucher program in real time with changes to school practices, staffing, curriculum, in-service opportunities, and so on.

Ultimately, the direction of effects is unclear. Therefore, our null hypothesis is that traditional public schools will demonstrate no change in academic outcomes in response to student transfers through Indiana’s private school voucher program. The alternative hypothesis is that traditional public school achievement will either increase or decrease in response to competition from this targeted private school voucher program.

ICSP

With 53,262 students participating in 2022–23, the ICSP is the largest statewide private school voucher program in the United States. Since the program launched in 2011, student participation has increased more than 10-fold as eligibility criteria expanded to add kindergarten students, siblings of voucher recipients, special education students, and those zoned to failing traditional public schools (Austin et al., 2019). Waddington and Berends (2018) reported that the lowest-income students in grades 5 through 8 who switched to an Indiana private school with a voucher between 2011–12 and 2014–15 saw a decrease in their math scores. Qualitative research by Austin (2019) suggested that, for older students, this may be related to difficulties they experience in adjusting to private school expectations.

The ICSP serves a broad and diverse population and has grown from serving just 0.40% of total students (that is, traditional public, public charter, private, and ICSP scholarship students) in 2011–12 to 2.89% by 2015–16 (Indiana Department of Education [INDOE], 2016). In the first 5 years of the program, most participants lived in metropolitan areas. Over this period, however, a growing percentage of students came from suburban areas, climbing from 16% in 2011–12 to 22% in 2015–16. The percentage of students coming from a rural area or a town held steady at about 8% each. Of the 32,686 students participating in the ICSP in 2015–16, 22,790 were renewing a previous choice scholarship. Of the remaining new choice students, 18% were special education students.

School participation in the ICSP remained high, climbing from 241 schools in 2011–12 to 316 by 2015–16. A survey of private school leaders revealed three common motivations for participating in the ICSP: an opportunity to expand the school mission to the larger community, a way to help existing private school families, and a way to help needy children in the community (Austin, 2015). In addition to common private school regulations such as the school accreditation requirement, nondiscrimination rules, employee background checks, and compliance with health and safety codes, voucher-accepting private schools in Indiana are required to administer the battery of state tests that is administered annually in public schools and report those data to the state, which then reports an A–F grade for all public schools and voucher-participating private schools based on combined test scores and graduation-rate data. Participating private schools are subject to continuing eligibility regulations, including rules that they must allow the state to review their curriculum, observe classroom instruction, and review instructional materials and the stipulation that they cannot receive a D or F grade in 2 consecutive years.

Numerous features of program design may generate meaningful competitive effects in Indiana. First, the barriers to entry into the competitive landscape are lower for private schools in Indiana than in other states simply because so many private schools already administered the state test before the voucher program became law. Thus, participation in the voucher program does not bring the burden of switching testing regimes for a private school that is considering accepting students through the program. The high baseline rate of voluntary participation in state testing is likely motivated by a rule stating that private schools wishing to participate in the Indiana High School Athletic Association must be accredited, which requires private schools to administer the state test.

Second, private schools receive accountability grades from the state, and those grades are made available to the general public. Unlike choice programs in other states, this practice makes it easy for parents, legislators, advocates, and members of the general public to draw comparisons across the public and private sectors and make assessments about which particular schools are performing well. However, compelling private schools to teach and assess the same standards as public schools may limit differentiation, stifling innovation, and thus may attenuate any potential effects of competition from the program.

Third, the program serves a broad and diverse population. For example, Berends et al. (2023) documented a shift in participation from mostly low- and modest-income families to those with higher incomes between 2011–12 and 2014–15. They also noted a decrease in the percentage of voucher users who were Black and an increase in those who were White or Hispanic owing to policy changes starting in 2013. This means that a greater segment of the general population is incentivized to pay attention to school performance and to consider switching sectors in search of better outcomes for their children. For children aged between 5 and 22 years in 2015–16, there were eight eligibility pathways, any one of which could qualify a student for a voucher. These various pathways covered students from low- and middle-income households (family income could not exceed $67,295 for a family of four), students with special educational needs, students zoned to failing traditional public schools (defined as those receiving an F grade from the state), siblings of voucher recipients, and students who previously received a voucher through the ICSP or an early education grant to attend prekindergarten at a participating private school.

Prior Literature

In this section we review the prior literature on this Indiana program specifically and the prior literature on the indirect impacts of private school choice nationally.

Prior Literature on the ICSP

Prior research on the direct impacts of the ICSP sheds light on program participants by examining changes in their test scores and parental satisfaction rates. Research on the achievement impacts for students who use vouchers in Indiana reported a modest negative impact in math and null effects in English language arts. Waddington and Berends (2018) exact matched on the following variables: race, sex, and baseline year, grade, and school. This matching strategy still resulted in meaningful differences between the two groups at baseline, such as differences in prior test scores, so they included prior achievement as a covariate in the regression model. They reported an average achievement loss of −0.15 SDs in mathematics during students’ first year of attending a private school compared with similar students in traditional public schools. This negative finding in math was consistent across all 4 years of outcome data examined and across most student subgroups. One important point of clarification is that this study focused on students in the upper elementary and middle school grades, whereas nearly 50% of students receiving vouchers were in the lower elementary grades of kindergarten through fourth grade. Although the exclusion of students in nontested grades was unavoidable for their participant-effects analysis, in our study, we paid attention to all voucher users when judging the competitive effects of the program.

There has been one prior analysis of the competitive effects of the ICSP (Canbolat, 2021). The study did not use student-level data to measure academic gains and losses but relied on school-level proficiency data to estimate effects. Canbolat (2021) concluded that voucher participation was associated with a reduction in the percentage of students in that district scoring at the level of proficient or higher on the state math and English language arts exams. One way we were able to build on this important prior work in this study was by widening the focus beyond students who scored around the proficiency cut score to consider possible test score changes for students at all points along the distribution of prior achievement.

This study broadens our understanding of the specific effects of Indiana’s voucher program by focusing on student-level test scores—as opposed to broad proficiency categories—and graduation outcomes so that we can see at a more fine-grained level whether and how traditional public school students are affected by the program.

Prior Literature on the Indirect Impacts of Private School Choice

A 2013 systematic review summarized 21 evaluations of the effect of increased competition resulting from private school vouchers in the United States (Egalite, 2013). Since then, there have been two studies of the competitive effects of Florida’s tax-credit scholarship program (Figlio & Hart, 2014; Figlio et al., 2023), which is also a private school choice program but with a funding stream that is structured somewhat differently. There has also been a 2019 meta-analysis on the competitive effects of charter school, voucher school, and public school choice policies, which concluded that the overall effect of competition on student achievement is positive (Jabbar et al., 2019). For the 17 studies with schools as the unit of analysis, the coefficient on private school competition from voucher or other forms of private school choice programs was .22 SDs, significant at p < .05. For studies with students (k = 17) or districts (k = 1) as the unit of analysis, the researchers reported null effects. This meta-analysis found no overall negative impact of school choice on the test scores of students who were “left behind.”

Prior research on the competitive effects of private school choice has focused on test-score outcomes. Table 2 organizes this literature by methodologic approach: difference in differences (seven studies), fixed effects (seven studies), regression discontinuity design (four studies), ordinary-least-squares regression (five studies), and hierarchical linear modeling (one study), with some studies employing multiple methods. The difference-in-differences designs mirror the approach we pursued.

Table 2. Prior Studies of the Competitive Effects of Vouchers

Notes. Full references for each study cited in this table are provided in the References section.

In addition to the variation in identification strategy just described, these studies also differed in how they operationalized the measure of a competitive threat. For example, Greene and Winters (2004), Greene (2001), and Chakrabarti (2013) used a binary measure indicating the receipt of an F grade to identify which Florida public schools experienced a competitive threat because students in those traditional public schools suddenly became eligible to transfer to a private school at state expense. Other studies measured competitive pressures by proximity. These included the distance to the nearest private school, for example, and the density and diversity of private school competitors within a given radius (Carnoy et al., 2007; Egalite & Mills, 2021; Figlio & Hart, 2014; Greene & Marsh, 2009; Greene & Winters, 2007; Winters & Greene, 2011; Jacob, 2014).

Table 2 summarizes the overarching takeaways from this literature. Most studies (n = 14) found the overall academic impact of competition on outcomes for students who remained in public schools to be positive; a further seven studies found the impact to be neutral to positive, meaning that competitive pressure resulted in zero losses overall and gains for at least some students in some subject areas; one study found the impact of increased competitive pressure from voucher programs on traditional public school performance to be neutral, and one study reported negative effects on proficiency rates. Interestingly, three studies reported that traditional public school performance was strongest when there was a dramatic increase in the intensity of competition from voucher programs (Carnoy et al., 2007; Forster, 2008a; Gray et al., 2014). This suggests that increased competitive pressure may result in higher test scores in traditional public schools up to some unknown point, but the specific nature of this relationship and the tipping point at which positive effects start to level off or change direction is unknown.

Research Methodology

Research Questions

We address the following three research questions:

1. Does private school competition lead to changes in student outcomes at traditional public schools?

• For achievement outcomes?
• For attainment outcomes?

2. Are there heterogeneous impacts by student and school subgroups?

• For achievement outcomes?
• For attainment outcomes?

3. Is there a tipping point at which increases in student transfers by way of the ICSP predict changes in student outcomes at nearby traditional public schools?

• For achievement outcomes?
• For attainment outcomes?

Data

The data for this project came from two sources. First, student-level data on traditional public school students’ graduation status and math and English language arts (ELA) performance on the state test, the ISTEP+, were provided by the Indiana Department of Education and cover school years 2006–07 to 2015–16. The ISTEP+ is administered annually in grades 3 through 8, and the results are used for school and student accountability purposes. Test scores are standardized within grade and year to have a mean of zero and SD of one. Second, physical addresses for all Indiana public and ICSP schools also were provided by the Indiana Department of Education. Using the Maptitude software package, we calculated the expected drive time from each public school to every voucher program–participating private school. These data allowed us to calculate the drive time to the closest private school and the count of private schools within a given drive-time cap. The drive-time measure incorporates road lengths, intersection turn times, speed limits, and anticipated traffic patterns to calculate precise travel time in minutes.
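The within-grade-and-year standardization can be sketched as below. The records are hypothetical, and the use of the population standard deviation (rather than the sample standard deviation) is an assumption made for illustration, since the text does not specify which was used.

```python
from collections import defaultdict
from statistics import mean, pstdev

# Hypothetical records: (grade, year, scale_score). Values are illustrative only.
records = [
    (3, 2012, 400.0), (3, 2012, 450.0), (3, 2012, 500.0),
    (4, 2012, 480.0), (4, 2012, 520.0),
]


def standardize_within_cells(records):
    """Return z-scores computed separately for each (grade, year) cell."""
    cells = defaultdict(list)
    for grade, year, score in records:
        cells[(grade, year)].append(score)
    # Assumption: population SD; the source does not say which SD was used.
    stats = {cell: (mean(s), pstdev(s)) for cell, s in cells.items()}
    z_scores = []
    for grade, year, score in records:
        mu, sigma = stats[(grade, year)]
        # Guard against a degenerate cell where every score is identical.
        z_scores.append((score - mu) / sigma if sigma > 0 else 0.0)
    return z_scores


z = standardize_within_cells(records)
print([round(value, 3) for value in z])
```

Standardizing within each grade-by-year cell places scores from different tests and cohorts on a common scale, so that estimated effects can be read in SD units.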

Drive-Time Measure

Distance to school is consistently shown to play an important role in families’ school decisions (Harris & Larsen, 2017). Most previous school choice competitive-effect studies used some measure of physical distance to school to account for this fact, but distance is typically operationalized in a simplistic way as the Euclidean distance between two points (e.g., Egalite & Mills, 2021; Figlio & Hart, 2014; Misra et al., 2012), which ignores the psychological aspect of the distance between two points and implicitly treats distances in urban areas as equivalent to distances in rural areas despite the vast difference in commuters’ experiences. Therefore, we used a measure of competitive pressure based on actual drive time between schools. This measure presents a number of advantages over the crow’s-flight measure of distance. Because it is calculated from millions of anonymized vehicle sensors, it accounts for busy intersections that frequently experience congestion, stop signs and traffic lights along the route, speed limits, and so on. This is particularly important in rural areas where the terrain is hilly because the drive-time measure follows the driving route instead of an aerial distance from point A to point B, which is not a realistic representation of true distance. Our improved distance measure therefore reduces statistical noise by accounting for the psychic barrier of a long commute to school more accurately than the inferior crow’s-flight measures widely used in the prior literature.

Sample Description

The analysis sample contained 2,420,574 observations of 837,797 unique students from 2006–07 through 2015–16 (Table 3). Approximately three quarters of students (74%) were White, 10% were Black, and 10% were Hispanic. The remaining students included Asian, Native Hawaiian or other Pacific Islander, American Indian or Alaska Native, and multiracial students. Almost half the students (47%) were low income, 16% were designated as having special educational needs, and 6% had limited English proficiency.

Descriptive Characteristics of Analysis Sample, Math and English Language Arts Outcomes

Notes. n = 2,420,574 observations, 837,797 unique students, 1,403 unique schools; Competitor school count refers to the number of participating private schools within a 90-minute drive of a given public school.

There were 1,403 unique traditional public schools in our sample, with an average enrollment of 503 students. Over half the schools (55%) had a male principal, and 91% of all principals were White compared to just 7% Black and 1% Hispanic. Teacher demographic characteristics largely mirrored those of principals, with the average value of the Teacher percent White variable at 94%, Teacher percent Black at just 4%, and Teacher percent Hispanic at 1%. The remaining 1% of teachers identified as Asian, Native Hawaiian or other Pacific Islander, American Indian or Alaska Native, or multiracial. On average, 5% of teachers were in their first year of teaching.

Table 3 also presents descriptive statistics of the competition measures on which we relied. The average drive time to the nearest private school competitor was 8.54 minutes, the average count of private school competitors within a 90-minute drive was 104 schools, and the average count of private school competitors within a 30-minute drive was 23 schools. Finally, the average competitor counts within 20- and 10-minute drives were 11 and 3 schools, respectively. Histograms of the drive-time and competitor-count measures are presented in Figures 1 and 2.

Histogram of the drive-time measure.

Histograms of the competitor count measures.

Empirical Approach

We adopted a two-part empirical approach to address our three research questions, first testing for a competitive impact in the program’s initial implementation year and then testing in all postpolicy years. The difference-in-differences approach is powerful in this setting because it does not require random assignment for causal inference, just the implementation of a school choice policy that differentially impacts different groups of schools. Finally, readers should note that students in charter schools were excluded from the sample to allow us to more cleanly estimate the competitive pressure experienced by district-operated public schools.

Testing for a Competitive Impact in the First Year of the ICSP

In the first part of our analysis, a fixed-effects model was employed in a difference-in-differences framework to estimate the effect of private school competition on public school performance in the program’s first year of operation. The specific fixed effects employed included a student fixed effect—which held constant individual student characteristics, such as motivation and drive—and a grade-by-year fixed effect—which held constant features of the grade-level environment in a given year, such as the academic or behavioral characteristics of a particular cohort of grade-level peers. The difference-in-differences component of the model relied on two differences for identification. The first difference was between schools facing differing levels of competitive pressure, and the second difference was time. The intuition behind the model was that schools with low levels of competitive pressure from private schools form a useful counterfactual for schools with high levels of competition, after accounting for fixed differences between students and grade-level cohorts.

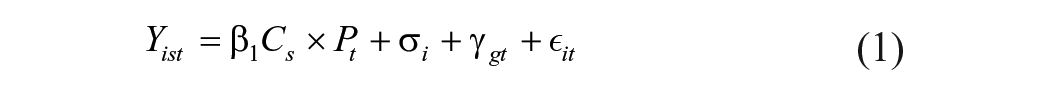

The formal empirical model is

$$Y_{ist} = \beta_1 (C_s \times P_t) + \sigma_i + \gamma_{gt} + \epsilon_{it} \tag{1}$$

where $Y_{ist}$ is the average standardized math or reading score for student $i$ in school $s$ in year $t$; $C_s$ is one of five possible measures of prepolicy competitive pressure facing school $s$ (i.e., the drive time to the nearest private school or the count of ICSP-participating private schools within a 90-, 30-, 20-, or 10-minute drive-time radius); $P_t$ is an indicator variable identifying the first postpolicy year, 2011–12, although we also can alter this to allow all postpolicy years to enter the model and restrict the effects to be constant in the postpolicy period; $\sigma_i$ is a student fixed effect; $\gamma_{gt}$ is a grade-by-year fixed effect; and $\epsilon_{it}$ is an idiosyncratic disturbance term, clustered at the district level. The $\beta_1$ coefficient on the two-way interaction of the competition measure and the postpolicy indicator is the parameter of interest. The key identifying assumption underlying this research design is that public schools with greater or lesser competitive pressure would have trended similarly in the absence of state-funded tuition vouchers. Evidence to support the assumption of parallel trends is presented in the online Appendix.
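To make the identification logic concrete, the following minimal sketch recovers the interaction coefficient from a toy panel using a two-way within transformation. It is a simplified illustration, not the study's estimation code: the data are hypothetical and noise-free, the panel is balanced, each student stays in one school, and a plain year effect stands in for the grade-by-year fixed effect.

```python
from statistics import mean

BETA_TRUE = 0.002            # hypothetical effect per unit of competition
YEARS = [2010, 2011, 2012]   # school years ending; 2012 is the first postpolicy year

# Hypothetical students: (student fixed effect, competition measure of their school)
STUDENTS = {"s1": (0.30, 5.0), "s2": (-0.10, 20.0), "s3": (0.05, 12.0), "s4": (-0.40, 1.0)}
YEAR_EFFECT = {2010: 0.00, 2011: 0.03, 2012: -0.02}


def post(year):
    """Postpolicy indicator P_t."""
    return 1.0 if year >= 2012 else 0.0


def build_panel():
    """Balanced, noise-free panel: y = beta * (C_s * P_t) + student effect + year effect."""
    rows = []
    for sid, (sigma, c) in STUDENTS.items():
        for yr in YEARS:
            x = c * post(yr)
            rows.append({"student": sid, "year": yr, "x": x,
                         "y": BETA_TRUE * x + sigma + YEAR_EFFECT[yr]})
    return rows


def group_means(rows, key, var):
    """Mean of `var` within each level of `key`."""
    groups = {}
    for r in rows:
        groups.setdefault(r[key], []).append(r[var])
    return {k: mean(v) for k, v in groups.items()}


def two_way_demean(rows, var):
    """Subtract student and year means and add back the grand mean."""
    grand = mean(r[var] for r in rows)
    by_student = group_means(rows, "student", var)
    by_year = group_means(rows, "year", var)
    return [r[var] - by_student[r["student"]] - by_year[r["year"]] + grand for r in rows]


panel = build_panel()
x_tilde = two_way_demean(panel, "x")
y_tilde = two_way_demean(panel, "y")

# OLS slope of demeaned outcome on demeaned interaction term
beta_hat = sum(x * y for x, y in zip(x_tilde, y_tilde)) / sum(x * x for x in x_tilde)
print(round(beta_hat, 6))
```

Because the student and year effects are removed exactly in a balanced panel, the slope equals the hypothetical coefficient. With real data, noise, unbalanced panels, and grade-by-year effects, a dedicated fixed-effects routine with district-clustered standard errors would be needed.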

As a follow-up to this analysis, we also generated a version of the drive-time variable that only counted drive time to a private school that meets an enrollment threshold (e.g., drive time to the nearest private school that has accepted 10+ choice students). Those results are presented separately.
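A threshold-restricted drive-time variable of this kind can be sketched as a simple filter followed by a minimum. The drive times and voucher enrollments below are hypothetical, and the function name is an illustrative choice rather than anything from the study's code.

```python
from typing import List, Optional, Tuple

# Hypothetical private schools near one public school:
# (drive time in minutes, number of voucher students enrolled)
private_schools: List[Tuple[float, int]] = [(6.0, 4), (11.0, 18), (19.0, 42), (33.0, 75)]


def nearest_with_min_vouchers(schools: List[Tuple[float, int]],
                              min_students: int) -> Optional[float]:
    """Drive time to the nearest private school enrolling at least
    `min_students` voucher students, or None if no school qualifies."""
    eligible = [minutes for minutes, n_vouchers in schools if n_vouchers >= min_students]
    return min(eligible) if eligible else None


by_threshold = {k: nearest_with_min_vouchers(private_schools, k) for k in (10, 20, 30, 40, 50)}
print(by_threshold)
```

In this toy example the closest school overall (6 minutes) never counts as a competitor because it enrolls fewer than 10 voucher students, so the restricted measure is always at least as large as the unrestricted one.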

Testing for a Competitive Impact in All Postpolicy Years

It may be the case that first-year impacts were muted because of the program’s small size at that time. It also might be the case that competitive effects looked different as more time passed after the program became law, as more and different types of private schools opted to participate in the maturing program. Fortunately, because we had data from 2006–07 through 2015–16, we were not restricted to looking for impacts in the first year alone. Multiyear results are presented separately. Using this larger sample also allowed us to estimate a dynamic model that accommodates the possibility of time-varying treatment effects. To accomplish this, we changed the model specification in the following way:

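$$Y_{ist} = \sum_{\tau} \beta_{\tau} (C_s \times P_{\tau}) + \sigma_i + \gamma_{gt} + \epsilon_{it} \tag{2}$$

where $\tau$ indexes the postpolicy periods and $P_{\tau}$ is an indicator for period $\tau$, which allows the policy to have separate effects in the immediate postpolicy year (2011–12), 1 year after initial adoption (2012–13), and 2 or more years after adoption (2013–14, 2014–15, or 2015–16).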

We argue that our empirical approach avoids endogeneity bias in two primary ways. First, we ensured that we were focusing on private schools that made location decisions many years ago by mapping private school location from the prepolicy period. Second, our analysis focused on a period of dramatic and sudden expansion in student participation in the voucher program. This meant it was unlikely that private schools had time to respond to the program by establishing or moving to a new location in such a short timeframe.

Equation (3) presents our strategy for estimating graduation effects:

$$Y_{ist} = \beta_1 (C_s \times P_t) + \beta_2 X_{\text{student}} + \delta_s + \tau_t + \mu_{ist} \tag{3}$$

where $Y$ is a binary indicator for on-time graduation, the competition measures are as previously defined, $X_{\text{student}}$ is a vector of student characteristics (e.g., gender, race/ethnicity, and indicators for free/reduced-price lunch eligibility, limited English proficiency, and special education), $\delta$ represents a school fixed effect, $\tau$ represents a year fixed effect, and $\mu$ is a heterogeneous error term, clustered by district. The rationale for switching to a school fixed-effect approach here was driven by the nature of the outcome variable. Whereas student fixed effects are useful for leveraging within-student variation in test scores, which fluctuated from year to year for each student in the sample, graduation is a one-time binary outcome that does not vary within students (either they graduate or they do not). Because there is no variation within graduation status for an individual student, school fixed effects offer a more intuitive approach, allowing us to control for school-level unobserved heterogeneity when measuring the effect of competition.

Mapping Procedure

We used precise drive-time measures to plot the distance between a traditional public school and the nearest private school competitor enrolling ICSP students, accounting for variables such as traffic patterns, road types, and intersection turn times. We successfully geolocated 100% of the 1,697 traditional public schools and the 342 ICSP schools in our sample. We then created distance and travel time matrices between all traditional public schools and ICSP schools for each school year under study.

For visualization purposes, we started by creating drive-time rings in five increments of 10 minutes from each ICSP school for 2011–12 and 2012–13 (Figure 3). Although the rings in Figure 3 are not used in the analysis—which instead relies on the continuous drive-time measure for more precision—they permit identification of geographic areas in Indiana where there are private schools that do not have any traditional public schools located within 30 minutes of their location. When comparing the two figures, it is also easy to spot those geographic areas experiencing change over time. For example, there are areas where the traditional public schools did not have any close ICSP schools in 2011–12 but then did have at least one of the 58 additional ICSP schools in 2012–13.

Drive-time rings from Indiana voucher schools to traditional public schools, 2011–12 and 2012–13.

Establishing Parallel Trends

The key identifying assumption for this analysis was that, in the absence of the ICSP legislation, public schools exposed to relatively higher levels of private school competition would have experienced the same trends as public schools exposed to relatively lower levels of competition. To test this assumption, we calculated year-by-year estimates of the effects of competition from the voucher program, including five leads of the policy-implementation year: 2007, 2008, 2009, 2010, and 2011. Online Appendix Table A1 presents estimates of the coefficient of interest (the interaction between the competition measure and year indicators) from separate regression models that included controls for student and grade-by-year fixed effects. We found little evidence to suggest a violation of the parallel-trends assumption.

Results

We start by presenting the results for the first research question, which asks whether private school competition leads to changes in student achievement and attainment outcomes at traditional public schools.

Findings from Analysis of Achievement Impacts

We first present the findings of the impact of competition on test scores of students in traditional public schools. Table 4 presents estimates of the effects of private school competition on public school students’ math and ELA achievement in the first year (columns 1 and 3) and in all postpolicy years (columns 2 and 4) for the full sample of students. For the drive-time model results presented in the first row, we report a small positive effect in ELA, significant at p < .10, and null effects in math. Across multiple model specifications, we never uncovered a negative effect on math or ELA achievement.

Effects of Private School Competition on Public School Achievement, Math and English Language Arts

Notes. These coefficients represent the interaction between the measure of competition and the postpolicy years: school years ending in 2012 (the first postpolicy year), 2013, 2014, 2015, and 2016. Drive time is reverse coded for ease of interpretation. Competitor school count refers to the number of participating private schools within a 90-, 30-, 20-, and 10-minute drive of a given public school. Models included fixed effects at the student and grade-by-year levels. Standard errors are clustered by district in parentheses (n = 4,188,932).

*p < .10.

In what follows, we discuss the findings associated with each of the competition measures employed.

Drive Time to Nearest Private School as a Measure of Competition

A decrease in the drive time to the nearest private school competitor was associated with no change in public school math achievement in the years after the policy went into effect. Looking at math achievement, a 10-minute decrease in the drive time to the nearest private school competitor was associated with a 0.0018 SD increase in public school student achievement in the postpolicy period. A 10-minute decrease in drive time was associated with an increase in math achievement of about 1% of an SD. To make sure that this finding was not being driven by our choice of competition measure, we also used the drive-time data to generate alternative measures of competition, including a private school density measure we call the competitor school count. This represents the count of private schools within a given drive-time radius of a public school. Findings from these models are consistent with the drive-time results already discussed.

Competitor School Count as a Measure of Competition

Examining public school responses to the competitor school count in 90-, 30-, 20-, and 10-minute radii, we found essentially null effects. That is, although some specifications revealed a marginally significant (p < .10) positive effect, it was too small to rule out a spurious relationship. For example, an increase of one private school competitor within a 90-minute radius of a given public school was associated with a 0.0002 SD increase in ELA achievement in the first year after the policy went into effect. In sum, increased private school competition was associated with an increase in student test scores of zero to ~1% of an SD depending on the particular measure and level of statistical significance used.

Findings from Analysis of Attainment Impacts

We turn next to the findings of the impact analysis examining traditional public school graduation rates as the outcome variable. Graduation rates were calculated using the standard 4-year adjusted cohort method. The student sample for this analysis consisted of public school students who were expected to graduate in 2011 (i.e., 1 year before the voucher policy became law), 2012 (the first outcome year), or later. This included 450,421 students. Descriptive statistics (see online Appendix Table A6) reveal a very similar distribution of student characteristics as in the test-score impact sample. Most students (77%) were White, 10% were Black, and 7% were Hispanic. The remaining students included Asian, Native Hawaiian or other Pacific Islander, American Indian or Alaska Native, and multiracial students. Thirty-nine percent were low income, 10% were labeled as having special educational needs, and 4% were of limited English proficiency. School-level characteristics were also similar to those reported before, although school enrollment sizes were much larger now, with a mean enrollment value of 1,519 students, which is unsurprising for a sample of high school students.

Student graduation status is a binary variable coded one to indicate a student graduated on time (83% of students in our overall sample) and zero otherwise. Reasons offered for nongraduation included removal by parents (23% of nongraduates), disinterest in the curriculum (5% of nongraduates), and expulsion (1%). Foreign exchange students and those who moved out of state were removed from the sample altogether.

Table 5 displays the effects of private school competition on traditional public school students’ probability of graduating on time. To ease interpretation, we used a linear probability model. Effects can be interpreted in the following manner: An increase in private school competition operationalized as a 1-minute reduction in drive time to the nearest competitor is associated with a β percentage point change in a student’s probability of graduating on time from high school, holding all other variables in the model constant. We present results in three categories: first-year impacts, to gauge the initial response; all postpolicy years, to maximize statistical power; and fifth-year impacts, to give the program time to mature so that schools have more time to calibrate their response to the new competitive threat. We observed null overall effects in all three variations.

Effects of Private School Competition on Traditional Public School Students’ Probability of Graduating on Time

Notes. n = 450,421. These coefficients represent the interaction between the measure of competition and the postpolicy years: school years ending in 2012 (the first postpolicy year), 2013, 2014, 2015, and 2016. Drive time is reverse coded for ease of interpretation. Models include controls for prepolicy competitive pressure, school and year fixed effects, and a vector of student-level control variables (e.g., gender, race/ethnicity, and indicators for special education, limited English proficiency, and low-income status). Robust standard errors are clustered by district in parentheses.

*p < .10; **p < .05.

Subgroup Findings

Thus far we have identified neutral to small positive overall achievement responses and neutral overall attainment responses to private school competition after Indiana’s voucher bill became law. The second research question asks whether there are heterogeneous impacts by student and school subgroups, so we now move to this set of results.

To test subgroup effects for the achievement outcomes, the public school student subgroups examined were female, White, Black, Hispanic, low-income, special education, limited English proficiency, and elementary-aged students (Table 6). Interpreting the model that incorporated all available years of data, which was our preferred specification, we observed positive math effects associated with the drive-time measure for female students. Specifically, a 1-minute decrease in drive time to the nearest private school competitor was associated with an achievement increase of 0.0020 SD. Still looking at math outcomes, we also observed marginally statistically significant (p < .10) positive effects for White, low-income, and special education students. The count of private school competitors within a given radius was not associated with any changes in public school students’ math achievement.

Effects of Private School Competition on Public School Achievement, Student Subgroups

Notes. These coefficients represent the interaction between the measure of competition and the postpolicy year(s). Drive time is reverse coded for ease of interpretation. Models included fixed effects at the student and grade-by-year level. Standard errors are clustered by district in parentheses.

*p < .10; **p < .05.

Turning next to the student subgroup findings for ELA achievement under our preferred specification, we observed a positive effect for students with limited English proficiency of 0.0047 SD under the drive-time measure and null effects for other student subgroups. The count of private school competitors within a given radius was not associated with any changes in public school students’ ELA achievement.

Given the large number of hypotheses tested in Table 6, we applied a Bonferroni correction, adjusting the significance level to control the familywise error rate. Because we ran five models (drive time and competitor count in 90, 30, 20, and 10 minutes) over eight subgroups in two subjects (math and ELA) over two time periods (first postpolicy year and all postpolicy years), there were a total of 160 regressions. From an original significance level (α) of 0.05, we divided by the number of hypotheses to determine the Bonferroni-adjusted significance level (.05/160 = .0003). With this correction, the results we previously highlighted as statistically significant no longer held. We also considered a Benjamini and Hochberg (1995) adjustment, which controls for the false discovery rate in lieu of the familywise error rate. This alternative approach to multiple hypothesis testing led us to the same conclusion of null effects (see online Appendix Table A2).
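The two multiple-testing adjustments can be illustrated with a short sketch. The count of 160 tests and the 0.05 significance level come from the text; the five p-values are hypothetical and chosen only to show how the two procedures can differ, and the function names are illustrative.

```python
from typing import List


def bonferroni_alpha(alpha: float, n_tests: int) -> float:
    """Per-test significance level that controls the familywise error rate."""
    return alpha / n_tests


def benjamini_hochberg(p_values: List[float], q: float = 0.05) -> List[bool]:
    """Benjamini-Hochberg step-up procedure.

    Returns, in the original order, whether each hypothesis is rejected
    while controlling the false discovery rate at level q.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Largest rank k such that p_(k) <= (k / m) * q
    max_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            max_rank = rank
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= max_rank:
            rejected[idx] = True
    return rejected


# 5 competition measures x 8 subgroups x 2 subjects x 2 time periods
n_tests = 5 * 8 * 2 * 2
adjusted_alpha = bonferroni_alpha(0.05, n_tests)
print(n_tests, adjusted_alpha)

# Hypothetical p-values, for illustration only
p_example = [0.001, 0.012, 0.025, 0.041, 0.60]
bonf = [p <= bonferroni_alpha(0.05, len(p_example)) for p in p_example]
bh = benjamini_hochberg(p_example)
print(bonf, bh)
```

In the toy example the Benjamini-Hochberg procedure rejects more hypotheses than Bonferroni, which reflects its less conservative target (the false discovery rate rather than the familywise error rate).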

We also checked for dosage effects among these student subgroups (see online Appendix Table A3). As before, no clear patterns emerged, indicating that the overall perceived threat of private school proximity was not differentiated by the number of voucher students attending nearby private schools in the postpolicy years.

Thus far we have examined the achievement outcomes for specific student subgroups within the public school sample. It may be the case, however, that the more relevant subgroup is at the school level, not the student level. That is, the voucher program may be more likely to elicit a competitive response from certain types of public schools defined by the populations they serve, such as schools serving large proportions of low-income students or students of color, many of whom qualify for the voucher program. Online Appendix Table A5 presents the competitive-effects impacts, broken out this time by the following school-level subgroups: high-poverty schools (defined as public schools with student poverty levels above the 75th percentile for the state of Indiana), low-poverty schools (defined as public schools with student poverty levels below the 25th percentile), high-minority schools (defined as schools in which the percentage of students who are White is below the 25th percentile), low-minority schools (defined as schools in which the percentage of students who are White is above the 75th percentile), and urban/suburban schools (defined as schools located in a locale coded as a large, midsized, or small city or suburb compared with schools located in locales coded as a town or rural area). No clear patterns emerged from these data, leading us to conclude that there was a null effect. We also checked for dosage effects among these school-level subgroups (see online Appendix Table A5), but there was little evidence of a pattern of impacts that corresponds with the dosage hypothesis.

We turn next to the subgroup findings for the attainment outcome, graduation, which were reported in Table 5. We found largely null effects, although students in high-poverty traditional public schools experienced a 1% increase in their probability of graduating in the first year after the voucher policy went into effect. This effect faded away in subsequent years. It was also helpful to consider the graduation impact by individual outcome year, in case the overall effect masks heterogeneity over time, but this did not appear to be the case in Indiana in this period. Graduation effects were insignificant in all 5 years examined.

Tipping-Point Analysis

We turn next to the results addressing the third research question, which asks whether there is a tipping point at which increases in student transfers by way of the ICSP predict changes in student outcomes at nearby traditional public schools. To motivate this question, we posited that it might be the case that only private schools receiving a critical mass of voucher students actually represent a tangible competitive threat to the traditional public schools in that area. To test this dosage theory, we checked whether there was a change in public school students’ achievement in the first postpolicy year that was associated with a reduction in the drive-time distance to the nearest private school that had accepted a critical mass of voucher students: a minimum of 10, 20, 30, 40, or 50 voucher students (Table 7). At the lowest threshold of 10 voucher students, we observed a marginally statistically significant (p < .10) positive effect on ELA achievement. Specifically, a 1-minute decrease in the drive time to a private school with at least 10 voucher students was associated with an increase in public school achievement of 0.0005 SD in ELA. We did not see statistically significant effects at higher concentrations of voucher students, suggesting that a strong dosage effect was not at play. Thus, it appeared that the private school threat elicited a small positive change in public school achievement after the voucher program was enacted but that the public school response was not mediated by the perceived size of the competitive threat, at least in terms of voucher student count.

Dosage Effects of Private School Competition on Traditional Public School Achievement, Math and English Language Arts (ELA)

Notes. These coefficients represent the interaction between the measure of competition and the first postpolicy year: school year ending in 2012. Drive time is reverse coded for ease of interpretation. Models included fixed effects at the student and grade-by-year levels. Standard errors are clustered by district in parentheses (n = 4,157,559).

*p < .10.

When interpreting these findings, it is helpful to note the jump in student participation in year 3, which may have represented a sea change for traditional public schools that had been casually observing up to that point how eligible families were responding to the voucher option. Specifically, student enrollment more than doubled from year 1 to year 2, increasing from 3,911 to 9,139 students, and then jumped to 19,809 students by year 3. Given the dramatic increase in student participation over time due to the expansion and then removal of participation caps, we might expect to observe different effects for each of the outcome years examined in this analysis. In particular, year 3 may be an inflection point at which it became clear to traditional public schools that the voucher program was a legitimate threat that was now beginning to grow at a substantially faster rate. Finally, it also may be the case that traditional public schools needed multiple years to respond to the voucher threat and that the initial improvements that had taken root in earlier years took until year 3 to bear fruit.

Figure 4 documents impact estimates for each outcome year separately to see whether there was an increase in achievement scores around year 3. It is interesting to note the positive and statistically significant increase in math scores that occurred in year 3, which is consistent with the hypothesis that the competitive effect became most salient in this year. In contrast, the effects for ELA are statistically equivalent to zero in all outcome years.

Competitive effects by five individual outcome years, math and English language arts (ELA) using the drive-time measure.

Figure 5 documents a similar pattern using the dosage analysis, which examined the effect of drive time to the nearest private school with 10, 20, 30, 40, or 50 voucher students.

Competitive effects by five individual outcome years, math and English language arts (ELA) using drive time to the nearest private school with at least 10 voucher students.

Discussion

We used five specifications of a new and innovative competition measure to estimate the traditional public school response to competitive pressure from the ICSP. Our first research question asked whether private school competition leads to changes in student outcomes at traditional public schools. We uncovered largely null effects on math and ELA achievements and null effects on students’ probability of graduating from high school. Another way of interpreting these findings is that we found no evidence that increased private school competition was associated with decreases in student achievement in Indiana’s traditional public schools in the program’s first year of operation, when 3,911 students transferred to the private sector by way of this school choice program, or in later years, when as many as 32,000 students participated. These findings may help assuage the concerns of some choice opponents, who expressed fears of negative effects for nonchoosers at the program’s inception.

Our second research question asked whether there were heterogeneous impacts by student and school subgroups. Although we found positive math effects associated with the drive-time measure for female students and positive ELA effects for students with limited English proficiency, these findings were no longer statistically significant after we made adjustments for multiple hypothesis testing. Subgroup analyses of the graduation impact revealed a benefit for students in high-poverty traditional public schools, but this effect disappeared after the first year of policy implementation.

Our third research question asked whether there was a tipping point at which increases in student transfers by way of the ICSP predicted changes in student outcomes at nearby traditional public schools. We found little evidence to support this tipping-point theory. That is, we did not observe a larger response by traditional public schools that were close to private schools accepting a greater number of voucher students. This finding was consistent with findings from Milwaukee, WI, where Carnoy et al. (2007) reported that “this effect seems to have been a one-time response of all public schools to a change in contextual conditions rather than a continuous and differentiated improvement based on the degree of competition in the Milwaukee school market” (p. 6).

The findings reported in this study differ from Canbolat (2021), who also studied the competitive effects of the ICSP and reported negative effects on the school-level percentage of English language–proficient students on the state test. There are several possible reasons for this, but one stands out as empirically testable. Canbolat focused on school-level proficiency rates, searching for changes in student achievement around the middle of the achievement distribution. It may be the case that competition affects students in the tails of the achievement distribution differently. Thus, we divided students’ baseline math performance into thirds and created indicators for low-achieving (bottom third), average (middle third), and high-achieving (top third) subgroups to see how they subsequently fared once the voucher program was enacted. Results are presented in online Appendix Table A7, but the overall takeaway is unchanged from our primary finding of largely null effects across all three groups.

The implications of this study for policy and practice are as follows: First, policymakers, parents, and other community stakeholders may find it reassuring that there was no evidence of unintended impacts on nonchoosing students left behind in traditional public schools. This should assuage concerns about negative spillover effects as more private school voucher programs are established and existing programs expand. Second, if it is the case that private school choice and traditional public education can coexist without one sector harming the other, attention can now switch to thinking about ways to maximize equity in this broader educational context. Indiana has taken some steps in this direction, focusing on students with special needs. Legislation passed during the 2013 session of the Indiana General Assembly determined that either the private school or the traditional public school district could serve as the provider of special educational services for eligible students. Prior to this change, only traditional public schools could access the state funding that supports special education students. In 2015–16, 3,204 voucher students were eligible for these funds. Of these, 19% selected their private school of choice as the special education service provider, whereas the remaining 81% opted to receive services from the public school system. Moving forward, policymakers may determine that other categories of exceptional students participating in the voucher program (e.g., English language learners and gifted and talented students) also could be eligible for compensatory funding to support their learning regardless of which school sector they have opted into. The third implication of this work stems from the follow-on research questions it raises: Do new private schools locate close to underperforming traditional public schools? Or do they locate in areas where there is little competition from other providers? What are the effects of the ICSP on racial stratification in the public and private school sectors? There is clearly a great need for better data collection on private schools generally. Researchers could design more informative studies about publicly funded programs such as the ICSP if there were detailed, reliable, comprehensive, and accessible data on private schools available. This requires state and even federal investment in better data-collection practices and systems to match efforts already in place on the public school side.

Four limitations to this analysis merit further discussion. First, the empirical approach we employed compares outcomes before and after the policy change in public schools experiencing more and less exposure to competition; it is not a comparison of no competition to some competition. Therefore, readers should bear in mind that estimates derived from this model are conservative estimates of the competitive effect of choice and that the true effect may be larger than what was reported here. Second, the drive-time measure calculated the driving time between a public and private school to quantify the degree of competitive threat experienced by school A relative to school B. Another way to quantify the threat would be to aggregate the school-level average of the distance from students’ residences to a private school. This would capture the parents’ realized commute but would require access to students’ home addresses, which were not available to us. Third, Indiana is unique in terms of private school regulation and accountability for participating private schools. Thus, the accountability provisions built into the ICSP limit the external validity of our findings. That is, our findings may not hold for voucher programs in states with looser regulations for participating private schools.
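The first limitation can be made concrete with a stripped-down two-group, two-period difference-in-differences calculation. This is only a sketch of the general technique, not the model estimated in this study: the function name `did_estimate` and all numbers are hypothetical, and it omits covariates and any other controls.

```python
from statistics import mean


def did_estimate(rows):
    """Two-by-two difference-in-differences on cell means.

    rows: iterable of (high_exposure, post_policy, score) tuples, where the
    first two entries are booleans. Returns the change in the high-exposure
    group minus the change in the low-exposure group.
    """
    cells = {}
    for high_exposure, post_policy, score in rows:
        cells.setdefault((high_exposure, post_policy), []).append(score)
    m = {key: mean(values) for key, values in cells.items()}
    change_high = m[(True, True)] - m[(True, False)]
    change_low = m[(False, True)] - m[(False, False)]
    return change_high - change_low


# Hypothetical standardized scores, for illustration only.
toy_rows = [
    (True, False, 0.0), (True, True, 0.3),    # high exposure: rises by 0.3
    (False, False, 0.1), (False, True, 0.2),  # low exposure: rises by 0.1
]
toy_effect = did_estimate(toy_rows)  # 0.3 - 0.1, approximately 0.2
```

In this toy example the low-exposure group also improves by 0.1. If part of that improvement were itself a response to competition, the difference of 0.2 would understate the total competitive effect, which is why estimates from a more-versus-less comparison are conservative.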

Finally, competition from charter schools could complicate our analysis if steps are not taken to account for this. The first way we addressed this was by removing charter school students from the sample of students whose outcomes we examined so that we only estimated effects for students in traditional public schools. Another way that charter schools could complicate our analysis is by muddying our estimates of competitive threat. If areas with high levels of competition from private schools simultaneously experience high levels of competition from charter schools, our estimates could be biased. The second way we addressed the charter school issue, therefore, was by only measuring competitive threat at one time point—our baseline. This is not a dynamic measure because doing so would expose us to the possible bias caused by growth in private school competition in areas where charter schools also expanded during the same period. The only remaining concern is whether charter school growth is uneven within the categories we have defined as having high and low private school competition. Our model does not require that the charter sector remains consistent over time. It only requires that there is consistency in charter school growth within categories. That is, we assume that the public schools we identified as experiencing relatively high levels of private school competition at baseline experienced similar levels of growth in competition from charter schools—both brick-and-mortar charter schools and virtual charter schools—as other public schools categorized as experiencing high levels of private school competition. Similarly, we assume that the public schools we identify as experiencing relatively low levels of private school competition at baseline experience similar levels of growth in competition from charter schools.

Conclusion

Our goal with this study was to measure the competitive impact of the nation’s largest private school voucher program, which was serving ~32,000 students in the final year of our study. We measured whether increased private school competition predicts changes in student achievement and graduation rates at traditional public schools. We also tested for a tipping point at which increases in student transfers by way of the ICSP might predict changes in student achievement at nearby traditional public schools. We accomplished these goals by using a drive-time measure of competition that is intuitively better than the straight-line distance measures used in most prior research in this area and a statewide dataset featuring >837,000 unique students. This is the first study of its kind to examine graduation rates as an outcome in this context.

One of this study’s primary contributions to the broader competitive-effects literature is that competition is measured five different ways, each building on the basic drive-time measure. No prior study in the competitive-effects literature has used this drive-time metric to quantify the competitive threat from a private school voucher program.

Overall, we found no evidence that students in traditional public schools have been negatively affected by the enactment and growth of the ICSP, as measured by their test-score outcomes or probability of graduating from high school. Furthermore, we found evidence of positive math effects for female students and positive ELA effects for students with limited English proficiency. These findings may offer some degree of reassurance to those who expressed fears of negative effects for nonchoosers at the program’s inception.

The primary implication of this study, therefore, is the reassurance it offers to policymakers that an income-targeted private school voucher program does not negatively affect academic outcomes for nonchoosing students in competition-affected traditional public schools. Furthermore, it may simply be the case that there was already a high level of competition between public and private schools in Indiana before the voucher program became law. This would put downward pressure on our estimates of the competitive effects of private school choice.

Our findings are largely consistent with prior literature in this area, which found that competitive effects are approximately zero or positive. In states where stronger positive competitive effects have been observed, it may be the case that those programs have design features that are more likely to yield competitive effects than the ICSP. Further comparative research is necessary to learn more about the potential importance of such policy design differences.

A number of outstanding questions could be addressed by future research on the competitive effects of private school choice. First, what explains the neutral to positive achievement impacts that have been observed to date across various studies? Are these differences related to specific features of program design, methodologic differences in how competition is operationalized, or local context? Over the period we studied, the income eligibility threshold remained at 150% of free and reduced-price lunch eligibility status. Is it the case that these eligibility categories are simply too narrow to exert much of a competitive threat to public schools weighing potential future enrollment losses?

Second, what other methodologies are available to study this phenomenon? We note that the bulk of prior studies on this subject have operationalized competition by using a geocoded measure such as the crow’s-flight distance between a public school and a voucher-accepting private school. We used the actual drive time between two points, which accounts for all manner of topographic, traffic, and social differences that can make the perceived distance between two points feel larger or smaller than the straight-line distance expressed in miles might otherwise communicate. Alternative measures might directly ask public school principals to name and rank their perceived competitors.
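For contrast with the drive-time measure, the crow’s-flight distance used in much of the prior literature can be computed from coordinates alone. The sketch below uses the standard haversine formula; the function name, the Earth-radius constant, and the sample coordinates are illustrative assumptions, not values taken from the study. Drive time itself cannot be reproduced this way because it depends on road-network data.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_MILES = 3958.8  # approximate mean Earth radius (assumption)


def crow_flight_miles(lat1, lon1, lat2, lon2):
    """Great-circle (straight-line) distance in miles between two points.

    Inputs are decimal degrees. Uses the haversine formula, which ignores
    roads, speed limits, and traffic entirely.
    """
    phi1, phi2 = radians(lat1), radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = radians(lon2 - lon1)
    a = sin(d_phi / 2) ** 2 + cos(phi1) * cos(phi2) * sin(d_lambda / 2) ** 2
    return 2 * EARTH_RADIUS_MILES * asin(sqrt(a))


# Hypothetical coordinates for a public school and a private school.
toy_distance = crow_flight_miles(39.77, -86.16, 39.80, -86.10)
```

Two school pairs with the same straight-line distance can differ substantially in drive time, for example when a river or a limited-access highway lies between them, which is the gap the drive-time measure is intended to close.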

Third, what influences the location decisions of new private schools in states with private school choice programs like the ICSP? For example, do they establish near underperforming traditional public schools under the assumption that it will be easier to attract students to exit the low-performing school? In contrast, private school entrepreneurs might view such a market as overly saturated, in which case they might instead seek areas where there has not previously been a wide range of school choices. Descriptive cross-state research of this nature would be valuable to policymakers seeking to predict how the market might respond to the expansion of choice, particularly by way of universal programs.

Finally, does variation in local education policies play a role in influencing competitive impacts? It could be the case that competitive effects are strongest when choice is less constrained, such as when charter school caps are lifted or limits on the size of a voucher program are removed. Only by drawing on data from multiple states can researchers begin to identify patterns in the variations in findings.

Supplemental Material

Supplemental material, sj-docx-1-ero-10.1177_23328584251326927 for Effects of the Indiana Choice Scholarship Program on Public School Students’ Achievement and Graduation Rates by Anna J. Egalite and Andrew D. Catt in AERA Open

Footnotes

Acknowledgements

We are grateful to the Indiana Department of Education for providing access to the state administrative records necessary to conduct these analyses. Jonathan Mills, Ben Scafidi, and R. Joe Waddington provided helpful comments on early drafts of the manuscript. We are also grateful to those who provided feedback at the annual research conferences of the Association for Public Policy Analysis and Management and the Association for Education Finance and Policy. All opinions expressed in this paper represent those of the authors and not necessarily the institutions with which they are affiliated. All errors in this paper are solely the responsibility of the authors.

Declaration of Conflicting Interests

The authors declare no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This work was supported by EdChoice, a 501(c)(3) nonprofit, nonpartisan organization.

Authors

ANNA J. EGALITE is a professor in the Department of Educational Leadership, Policy, and Human Development at North Carolina State University and a visiting fellow at the Hoover Institution at Stanford University. Her research focuses on the evaluation of education policies and programs intended to close achievement gaps.

ANDREW D. CATT is vice president of training at EdChoice. His research focuses on understanding a K-12 education system that empowers every family to choose the educational environment that is the best fit for each student.

References
