Abstract

Canadian middle and high school students (N = 2,278) completed a “CTRL-F” curriculum teaching them how to evaluate online information by reading laterally to investigate sources, check claims, and trace information to original contexts. A subset of CTRL-F students (N = 316) were in classes with teacher-matched control groups (N = 287). Some CTRL-F students (N = 994) completed a delayed posttest. At pretest, students indicated preference for some lateral reading strategies, but preference rarely translated into use. Following instruction, CTRL-F students showed greater preference for and use of lateral reading than controls and greater alignment between preference and use. The curriculum’s impact varied by demographic factors but not by differences in implementation. Gains were maintained from posttest to delayed posttest. Direct instruction and practice in lateral reading appear to strengthen connections between students’ preferences and utilization of these strategies to evaluate online content relevant to academic and personal life.

With smartphones and other Internet-enabled devices providing nearly continuous access to the Internet, today’s youth faces an unprecedented barrage of online information. In 2020, 98.0% of Canadian 15- to 24-year-olds used the Internet, with 42.5% online for more than 20 hours per week (Statistics Canada, 2021a). Canadian adolescents and young adults use the Internet for a variety of purposes, with more than three-quarters (76.9%) accessing news online and more than half using the Internet to find goods and services (72.4%) or health information (68.9%; Statistics Canada, 2021b). Because information of varying quality spreads widely and rapidly online, it is often difficult to discern accurate information from misinformation or deliberately false disinformation. This proliferation of misinformation and disinformation can have negative consequences for trust and engagement in a variety of domains. For example, both The National Academies of Sciences, Engineering, and Medicine and The Nobel Prize have convened meetings to understand and address misinformation and disinformation in science (The National Academies of Sciences, Engineering, and Medicine, n.d.; The Nobel Prize, n.d.).

Providing students with strategies for evaluating online information may help them navigate this complex information environment. A number of initiatives are underway internationally to improve fact-checking skills, such as NOVA Misinformation Nation (https://ny.pbslearningmedia.org/collection/nova-misinformation-nation/), Media Literacy Now in the U.S. (https://medialiteracynow.org/), and Faktabaari in Finland (https://faktabaari.fi/). We report initial findings from a large-scale study conducted in Canada that aimed to teach middle and high school students how to fact-check information by reading laterally. Lateral reading is a practice commonly used by expert fact-checkers to contextualize unfamiliar online content by researching what others have to say about it. The process entails leaving the original content (e.g., website, news article, social media post) to find out more about the person or organization promoting the information (i.e., the source) and what other sources have to say about the author’s claims (Wineburg & McGrew, 2019). In contrast to expert fact-checkers, middle school, high school, and college students in the United States rarely question the motives of online sources and are unlikely to leave the original content to see what others have to say about it (McGrew et al., 2018). For example, when asked to evaluate the reliability of a website about climate change, 96.8% of approximately 3,500 U.S. high school students stayed on the website (i.e., did not read laterally; Breakstone et al., 2021b). It is unclear whether students were unaware that they needed to consult external sources or, despite being aware, simply chose not to do so.

The lateral reading approach to evaluating online content aligns with online information problem-solving, that is, the process of addressing “problem[s] that can only be solved by gathering information” (Brand-Gruwel & van Strien, 2018, p. 401). The Information-based Problem Solving on the Internet (IPS-I) model describes the steps of this iterative process as defining the problem, searching for information, selecting relevant results from searches, processing and evaluating the information, and synthesizing information to draw conclusions (Brand-Gruwel et al., 2009). Students’ success in completing these steps may depend on their reading, evaluation, and computer skills as well as self-regulation. Similar steps are outlined in the New Literacies of Online Research and Comprehension framework (Kiili et al., 2018; Leu et al., 2013) and the Multiple-Document Task-based Relevance Assessment and Content Extraction (MD-TRACE) model of multiple text comprehension (Rouet & Britt, 2011). However, in the context of evaluating information found on the Internet, studies of multiple text comprehension have been criticized for reducing their ecological validity by using a predetermined set of sources, as opposed to allowing students to conduct online searches for themselves (Wineburg et al., 2022).

At the same time, lateral reading contradicts checklist-based approaches for assessing credibility, such as the popular CRAAP test (Currency, Relevance, Authority, Accuracy, Purpose), that encourage students to scrutinize the content and layout of information on a given website using cues such as professional appearance and domain name (Blakeslee, 2004). This strategy involving close examination of the original content has been described as vertical reading, which contrasts with the lateral reading exhibited by expert fact-checkers (Wineburg & McGrew, 2019). The CRAAP test and other checklists are poorly suited to students’ information literacy and source evaluation needs in the digital age (Meola, 2004; Scholz-Crane, 1998). However, these approaches have been adapted for the Internet, with detrimental consequences for students’ digital literacy skills (Caulfield, 2018). For example, teaching students to rely on a checklist may make students more vulnerable to misinformation and manipulation because checklists tend to focus on superficial aspects of websites that can be easily manipulated to make information appear credible (Breakstone et al., 2018).

Lateral Reading Instruction

Lateral reading is taught as part of the Stanford History Education Group’s civic online reasoning curriculum, which teaches strategies for answering: “Who is behind this information?” “What is the evidence?” “What do other sources say?” (McGrew et al., 2018; Stanford History Education Group, n.d.). For example, after six lessons in civic online reasoning, U.S.-based high school students (N = 289) performed better than controls (N = 256) in judging the credibility of online content (Wineburg et al., 2022). Other intervention studies teaching civic online reasoning to high school students have produced small, but significant, gains in students’ use of lateral reading to investigate the agendas of organizations funding websites (McGrew, 2020; McGrew & Byrne, 2021). Kohnen et al. (2020) used a think-aloud methodology to evaluate the impact of a one-day intervention on eighth grade students’ credibility evaluation strategies (N = 16). Students produced more accurate, well-supported evaluations and mentioned reading laterally more often (e.g., opening a new tab and googling a website) at posttest than at pretest. However, their credibility evaluation strategies were still dominated by vertical reading strategies and checklist-based techniques at posttest.

At the college level, the American Association of State Colleges and Universities’ Digital Polarization Initiative (American Democracy Project, n.d.) taught students how to fact-check online content using strategies that relied heavily on lateral reading. Students were initially taught four fact-checking “moves” (look for trusted work, find the original, investigate the source, and circle back; Caulfield, 2017). These strategies were later adapted to fit the SIFT mnemonic: Stop; Investigate the source; Find better coverage; and Trace claims, quotes, and media to the original context (Caulfield, n.d., 2019). Studies evaluating the efficacy of the Digital Polarization Initiative’s curriculum, as well as other college-focused lateral reading interventions, found that instruction increased use of lateral reading, though gains tended to be modest (Breakstone et al., 2021a; Brodsky et al., 2021a, 2021b; McGrew et al., 2019).

A number of factors may explain students’ modest gains in response to lateral reading instruction. Fact-checkers’ use of lateral reading is complemented by their prior knowledge of how to navigate the online information environment (Wineburg & McGrew, 2019). For example, having recognized the need for additional context, they may consult Wikipedia as a first stop for learning more about a specific organization or person making a claim. When using search engines, fact-checkers may also leverage their prior knowledge of how search results are organized to decide which links to click. In contrast, students at all levels may lack sufficient prior knowledge of online sources and structures to select appropriate additional sources and make accurate conclusions about credibility (Kohnen et al., 2020; McGrew, 2020; Walsh-Moorman & Pytash, 2021). This limited technical understanding is evident in both middle-school and college students’ functionality-oriented mental models of the Internet (Brodsky et al., 2021c).

Students’ success may be further hindered by misconceptions and stigma around use of Wikipedia for learning about unfamiliar topics (Becker, 2015). For example, 77.8% of college students (N = 221) reported that their teachers had discouraged them from using Wikipedia as an information source (Brodsky et al., 2021a). This is in keeping with mixed perceptions of Wikipedia expressed by secondary school teachers, college instructors, and librarians in the United States (Konieczny, 2016; Polk et al., 2015). Explicit instruction in Wikipedia’s standards for ensuring credibility and in reading laterally using Wikipedia may help to improve attitudes and correct misconceptions about it (McGrew & Byrne, 2020).

The current study extends previous investigations of lateral reading instruction at the K–12 level by also examining the impact of instruction on students’ attitudes toward lateral reading, including use of Wikipedia to investigate sources. Increasing an individual’s skill in performing a behavior may improve their attitudes toward that behavior (Steinmetz et al., 2016). That is, students may have greater preference for lateral reading as the “best” approach for evaluating the credibility of online information after receiving lateral reading instruction. Additionally, the direct instruction and practice in lateral reading offered via a curriculum may help to align students’ preferences with their observed use of lateral reading to evaluate online content (Glasman & Albarracín, 2006; Sheeran et al., 2017). For example, Brodsky et al. (2022) found that college students in the United States showed increased preference for lateral reading as the “best” approach for evaluating credibility after receiving direct instruction and practice in using lateral reading strategies, with students who completed more assignments showing greater preference at posttest. Notably, students’ preference for lateral reading predicted their use of lateral reading only after they completed the lateral reading curriculum.

Digital Literacy Instruction in Canadian K–12 Education

Evaluating online information falls within a broader digital literacy skill set that includes some combination of “technological capacities, intellectual competencies, and ethical/behavioural comportment” (Hoechsmann & DeWaard, 2015, p. 4). Canadian provincial and territorial governments all mandate that digital literacy be integrated across the curriculum, but there is no consensus on how this should be done. While K–12 digital literacy instruction varies across provinces and territories, most Canadian students receive some explicit training in evaluating online information. According to the 2018 Programme for International Student Assessment (PISA), 79% of Canadian 15-year-olds reported that they had been taught “how to decide whether to trust information from the Internet” (Barber et al., 2020).

As part of evaluating online information, Canadian students are aware of the importance of verifying the accuracy of information. In a 2014 survey of 5,436 Canadian students in grades 4 to 11, 89% said they try to make sure that what they find online is correct when doing schoolwork, 60% said they verify information when reading online, and 56% said they verify information found through social media (Steeves, 2014). The most commonly cited verification strategies were the vertical reading strategy of “search[ing] inside a site [they] think is reliable” (75%) and the lateral reading strategy of “look[ing] at other sources to see if they say the same thing” (69%).

Canadian students are also likely to receive some form of instruction in evaluating online sources. In some provinces and territories, source evaluation is embedded in a broader cross-curricular digital literacy framework; in others, it is taught as part of language arts or social studies. As an example, Ontario’s secondary English curriculum lists the ability to “evaluate the credibility of sources” as an expectation (Ontario Ministry of Education, 2007). Teachers often rely on a combination of resources provided by Ministries and Departments of Education, teachers’ associations, and/or third-party organizations for guidance on how to teach source evaluation skills. The Ontario Ministry of Education offers an Adolescent Literacy Guide instructing students to assess sources based on “authority,” “currency,” “integrity,” “relevance,” and “validity” (Ontario Ministry of Education, 2016). The government of Alberta (2014) offers similar checklist-style guides, which also instruct students to evaluate online sources in terms of presentational style.

The Current Study

Students all over the world need to learn how to determine the credibility of online information. The current study reports findings from a large-scale effort to provide lateral reading instruction to Canadian middle school and high school students in fall 2020. Teachers in participating schools adopted a curriculum called “CTRL-F” that taught students to read laterally by leaving the original content to investigate sources (often using Wikipedia), check claims with other sources, and trace information back to its original context. The CTRL-F curriculum was created by adapting the Digital Polarization Initiative’s SIFT methodology for the Canadian K–12 context (Brodsky et al., 2021a, 2021b; Caulfield, n.d., 2019).

The overarching goal of this study was to evaluate whether the curriculum improved students’ performance on two outcomes: (1) students’ use of lateral reading when evaluating online content and (2) students’ preference for lateral reading as the “best” strategy for evaluating online content. For each outcome, we first asked whether changes in performance from pretest to posttest could be attributed to the CTRL-F curriculum. To do this, we examined pre/posttest differences for “matched” classes in which teachers taught at least one class using the CTRL-F curriculum and at least one other class serving as a “business-as-usual” control. We predicted that conditions would perform comparably at pretest, but that CTRL-F students would outperform controls on both outcomes at posttest. Having established the effects of the curriculum using the “matched” classes, we next investigated which factors may have impacted students’ responsiveness to the curriculum. To do this, we used the full sample of students who received CTRL-F instruction to examine how posttest performance for each outcome varied by student demographics and factors related to implementation of the curriculum.

The current study also extended previous studies of the effects of lateral reading interventions by addressing two gaps in the literature. First, studies have not typically included a delayed posttest to assess effects of the intervention over time. Therefore, for each outcome, we asked whether gains were maintained using a subsample of CTRL-F students who completed a delayed lateral reading assessment, with the hope that gains would be maintained over time. Second, previous studies have not determined whether students’ preferred strategies align with their use of lateral reading to evaluate online content. Therefore, we asked whether the association between lateral reading preference and use varied by condition and time. We expected that the alignment between preference and use would be similar for both conditions at pretest but that CTRL-F students would have greater alignment than controls at posttest due to the curriculum providing increased opportunities for students to practice lateral reading skills.

Method

Participants

Recruitment

In summer 2020, CIVIX invited 350 middle and high school teachers (grades 7–12) from all Canadian provinces to participate in the CTRL-F research project. Teachers were recruited based on their prior participation in professional development events and use of information literacy resources produced by CIVIX. Teachers with multiple classes were invited to teach at least one class using the CTRL-F curriculum and one or more additional classes as business-as-usual controls. All data were fully deidentified prior to being shared with a team of educational researchers at the City University of New York. The researchers’ involvement in the project was approved by their institution’s IRB.

The full sample consisted of 2,278 middle and high school students in classes that received the CTRL-F curriculum and 287 students in control classes that only completed the pretest and posttest. Of the CTRL-F students, 316 were in classes that had a teacher-matched control class. Additionally, 994 CTRL-F students (from 67 classes) completed a delayed posttest. While the CTRL-F curriculum was designed to be administered during a single term, term lengths in schools varied due to accommodations made for the COVID-19 pandemic. Therefore, not all CTRL-F classes were able to complete the delayed posttest due to time constraints. No effort was made to administer the delayed posttest after the term ended.

Class-level Characteristics

The full sample consisted of 147 middle school and high school classes taught by 80 teachers in nine Canadian provinces; 131 classes received the CTRL-F curriculum and 16 classes served as business-as-usual controls. The 16 control classes were matched with 16 CTRL-F classes taught by the same teachers. Two additional control classes were excluded due to differences in ages of students in the control and CTRL-F classes. The students in the CTRL-F classes taught by these teachers are part of the full sample of CTRL-F students.

The top panel of Table 1 presents summary statistics for factors related to the implementation of the curriculum. The number of students per class was calculated prior to reducing the sample to include only the students who completed both the pretest and the posttest. Class size did not differ for the matched CTRL-F and control classes: t(30) = −0.06, p = .955, Cohen’s d = −0.02.

Table 1. Class-level and Student-level Characteristics for Matched Classes, Full Sample, and Subsample that Received the Delayed Posttest

Note: k indicates the number of classes. Three CTRL-F students did not provide demographic information at pretest or posttest. For 92 CTRL-F students, information about their age, gender, and languages most often spoken at home was taken from the posttest because it was not provided on the pretest.

These descriptives were calculated at the student level.

Based on a scale of 4 = 4 or fewer hours to 11 = 11 or more hours.

Participant-level Characteristics

The bottom panel of Table 1 presents summary statistics for students’ self-reported age, gender, and languages spoken most often at home. CTRL-F students and controls did not differ by age, Welch’s t(591.17) = 0.88, p = .380, d = 0.07, or percentage of male students, χ²(1) = 0.13, p = .718. Compared to controls, CTRL-F students were less likely to speak English as a primary language at home, χ²(1) = 7.97, p = .005, and more likely to report speaking a language other than English or French, χ²(1) = 14.83, p < .001. Students were not asked about their race/ethnicity or socioeconomic background due to space and time constraints in administering assessments.

The CTRL-F Curriculum

The CTRL-F curriculum was developed for middle and high school students. It consisted of four lessons requiring an estimated seven hours of instruction to complete in full. An introductory lesson provided an overview of the problem of “information pollution” and introduced students to the concept of lateral reading. Three subsequent lessons covered key lateral reading strategies: Investigate the Source, Check the Claim, and Trace the Information. For each lesson, teachers were provided with a complete lesson plan, slide deck, videos led by disinformation reporter Jane Lytvynenko and digital literacy expert Michael Caulfield, sets of hands-on practice activities, and suggested assessments. Activities and resources were free and available in English or French. Due to the COVID-19 pandemic, the curriculum was designed to readily adapt to in-class, remote/online, or blended learning scenarios. Below, we describe the four lessons that made up the curriculum. The activities, resources, and lesson plans for each module are available as supplemental materials in an openICPSR repository (Brodsky et al., 2023).

Lessons followed a consistent structure: starter activities, slide decks, and introductory videos explained the key concepts and provided a grounding for the lesson. Additional instructional videos modeled how to use lateral reading skills to evaluate the credibility of online information. Students then completed a series of checking activities (built in Google and Microsoft Forms) asking them to apply lateral reading skills to real-world examples. For each activity, feedback illustrated how to apply the skills to the examples, allowing students to check their work. Consolidation activities encouraged students to reflect on their learning and apply the skills to new online content they found on their own.

In the first lesson—“Why Verify?”—students watched an animated video that reviewed the problem of information pollution; the difference between misinformation and disinformation; and factors that contribute to low-quality, false, and misleading online information. Students also completed an activity to demonstrate the limits of their gut instincts before being introduced to the concept of lateral reading. A final video introduced the CTRL-F program and prepared students for learning lateral reading skills.

The second lesson introduced the first lateral reading strategy: Investigate the Source. With instruction supported by a slide deck, worksheet, class discussion, and video, CTRL-F classes explored different people and groups that publish and share information and their motives for doing so. Students then watched two instructional videos demonstrating how to use Wikipedia to evaluate the reliability of a source and the distinction between bias and agenda. Students then used Wikipedia to investigate a series of real-world examples that included junk sources, reputable news organizations, and agenda-driven groups. They consolidated their learning by finding and investigating new sources using Wikipedia.

The third lesson introduced the second lateral reading strategy: Check the Claim. This lesson taught students how to verify different types of claims, from headlines and potentially dubious “facts” to statements heard in online videos or from friends and family. Students began this lesson by playing “Three Claims” (a game similar to “Two Truths and a Lie”) to consider the ways in which claims are formed and how false claims can be framed as true. Students then used a slide deck to review differences between facts and opinions and between factual and value claims and applied this knowledge in a worksheet activity. Students then watched an introductory video explaining the role of fact-checking organizations in verifying information, followed by two instructional videos demonstrating how to use Google and fact-checking websites to verify claims of increasing complexity. Students then applied these skills by investigating real-world examples and also engaged in finding and verifying new claims. In practice problems that included claims encountered in visual information, such as when watching a YouTube or TikTok video, students were tasked with extracting a meaningful set of keywords to search to verify the claim.

The final lesson introduced the third lateral reading strategy: Trace the Information. This lesson taught students how to trace claims, quotes, and other media back to their original contexts. As a starter activity, students played “Reporting on Reporting,” a modified version of “Broken Telephone” that aimed to model how information can get distorted or reconfigured as it passes from one source to another. Students then watched an introductory video explaining how online information can become unmoored from its original context. Students then watched instructional videos demonstrating simple information-tracing skills, which included clicking through attribution links to locate an original source, using the “CTRL-F” command to locate relevant text on a webpage quickly, and checking the date to ensure that old information is not circulating as though it were recent. A final instructional video demonstrated how to use a reverse-image search to place online images in their original context. Students then applied these skills by tracing real-world examples to their original contexts and also engaged in finding and tracing new images.

Pretest, Posttest, and Delayed Posttest Assessments

Open-response Problems Assessing Lateral Reading Use

Four open-response problems were used to assess students’ use of lateral reading strategies to evaluate online information (see Table 2). Each problem featured a different type of online content (news source, advocacy group website, article with a claim, photograph). Different online content was used for pretest, posttest, and delayed posttest assessments to reduce the potential risks of “linkrot” (i.e., broken links) and “content drift” (i.e., unexpected changes in link destinations) (Zittrain et al., 2021).

Table 2. Lateral Reading Problems and Accuracy Scoring for Trust Ratings on Pretest, Posttest, and Delayed Posttest Problem Sets

For the news source and the advocacy group website, students were asked to rate the trustworthiness of the sources on a scale of 1 = Not trustworthy to 5 = Trustworthy (e.g., “How trustworthy do you find The Daily Star as a source of news?” and “How trustworthy do you find this site as a source of information about kids’ health?”). For the article with a claim, students were asked to indicate whether the claim was accurate on a scale of 1 = Definitely not to 5 = Definitely yes (e.g., “Did a U.S. school district arm students with rocks?”). Lastly, for the photograph, students were asked to indicate whether it depicted what was claimed on a scale of 1 = Definitely not to 5 = Definitely yes (e.g., “Is this a photo of a letter from the University of Bern to Albert Einstein?”).

For each problem, students were then asked to explain how they came to their trust assessment in an open-response textbox. For the news source and advocacy group website, students were asked, “How did you decide if this source is trustworthy or not? Please be specific.” For the article with a claim, students were asked, “What are the reasons you chose the answer that you did? Please be specific.” For the photograph, students were asked, “What did you do to determine your answer? Please be specific.”

For each open-response lateral reading problem, students received a lateral reading score indicating whether they did (1) or did not (0) use lateral reading to determine their assessment. This score was determined by searching the text response for keywords associated with lateral reading (see Table 3 for keywords and sample responses). Keywords were drawn from those used for automated scoring of lateral reading problems in other studies (Brodsky et al., 2021a, 2021b) and were supplemented bottom-up from students’ responses. Keywords (e.g., “bias”) that were not reliably associated with lateral reading were removed; see the appendix in supplemental materials (Brodsky et al., 2023) for details on assessing the reliability of the automated scoring procedure. To provide a more conservative measure of information evaluation skills, for each problem, students also received a score of 1 if they read laterally and correctly assessed the online content, as indicated by their trust ratings on the Likert-type scale (see Table 2, far right column). Students who did not read laterally and those who read laterally but provided an inaccurate rating were assigned a score of 0.
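The two scores described above can be illustrated with a minimal Python sketch. This is not the study's scoring code: the keyword list, the set of ratings counted as accurate, and the sample responses are hypothetical stand-ins (the actual keywords and accuracy key appear in Tables 3 and 2), and the function names are introduced here only for illustration.

```python
import re

# Hypothetical keywords for illustration only; the study's actual
# keywords are listed in its Table 3 and are not reproduced here.
LATERAL_KEYWORDS = ["googled", "searched", "looked up", "wikipedia", "other sources"]

# Assumed set of trust ratings counted as accurate for one hypothetical
# problem (the study's accuracy key is given in its Table 2).
ACCURATE_RATINGS = {1, 2}


def lateral_score(response: str) -> int:
    """Return 1 if the response contains any lateral reading keyword, else 0."""
    text = response.lower()
    # \b anchors the match at the start of a word so a keyword is not
    # matched in the middle of an unrelated word.
    return int(any(re.search(r"\b" + re.escape(k), text) for k in LATERAL_KEYWORDS))


def conservative_score(response: str, rating: int) -> int:
    """Return 1 only if the student read laterally AND rated accurately."""
    return int(lateral_score(response) == 1 and rating in ACCURATE_RATINGS)


# Hypothetical (response, trust rating) pairs.
responses = [
    ("I googled the site and read its Wikipedia page.", 1),
    ("The website looks professional and has no ads.", 4),
    ("I looked up the group on other sources.", 5),
]
scores = [(lateral_score(r), conservative_score(r, t)) for r, t in responses]
print(scores)
```

In this sketch the third response counts as lateral reading but receives a conservative score of 0 because its rating falls outside the assumed accurate range, mirroring the rule that students who read laterally but rated inaccurately were scored 0.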

Table 3. Keywords for Scoring Lateral Reading Problems and Sample Responses

Note. All responses were searched for keywords in the row labeled “All” for set and problem.

Multiple-choice Problems Assessing Lateral Reading Preference

Three multiple-choice problems were used to assess students’ preference for lateral reading—that is, whether they would select a lateral reading strategy as the “best” strategy for evaluating sources, verifying claims, and tracing photographs to their source. Each question had four response options, one of which was a lateral reading strategy. Each question was scored as a 1 if the student selected the lateral reading strategy and a 0 if they selected any other option. The problems and response options are presented in the results section.

Each multiple-choice problem used the same type of online content (i.e., a claim, a news source, and a photograph) as one of the lateral reading problems. To assess alignment between students’ preferred strategies and their use of lateral reading, students were assigned a score of 1 if they selected the lateral reading strategy on the multiple-choice problem and read laterally on the open-response problem involving the same type of online content. Otherwise, students received a score of 0.
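As a rough illustration of the preference and alignment scoring just described, the following Python sketch scores one hypothetical student. The option letters, the dictionary of lateral reading options, and the student's answers are assumptions made for the example and do not reflect the actual assessment items.

```python
# Hypothetical answer key: for each content type, the letter of the one
# response option (out of four) that is a lateral reading strategy.
LATERAL_OPTION = {"source": "C", "claim": "A", "photo": "D"}


def preference_score(content_type: str, selected: str) -> int:
    """Return 1 if the selected option is the lateral reading strategy, else 0."""
    return int(selected == LATERAL_OPTION[content_type])


def alignment_score(preference: int, use: int) -> int:
    """Return 1 only when preference and use of lateral reading are both 1."""
    return int(preference == 1 and use == 1)


# One hypothetical student: multiple-choice selections and open-response
# lateral reading scores (1 = read laterally) for matching content types.
selected = {"source": "C", "claim": "B", "photo": "D"}
use = {"source": 0, "claim": 1, "photo": 1}

preference = {k: preference_score(k, v) for k, v in selected.items()}
alignment = {k: alignment_score(preference[k], use[k]) for k in selected}
print(preference)
print(alignment)
```

Here the student prefers lateral reading for the source problem without using it and uses it on the claim problem without preferring it, so only the photograph problem is scored as aligned.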

Procedure

Classroom Instruction

All participating teachers attended a two-hour workshop before beginning instruction. The workshop introduced the CTRL-F materials and rationale and guided teachers through the lateral reading strategies at the core of the program. All participating teachers agreed to teach the program (i.e., four modules) in its entirety (~7 hours of instruction). Due to the COVID-19 pandemic, instruction occurred in person (i.e., classroom), online (i.e., remote), or partially online (i.e., blended; see Table 1).

CTRL-F instruction started in September 2020, though some teachers started instruction later in the term due to the COVID-19 pandemic. Teachers were asked to deliver instruction in CTRL-F classes over multiple class periods. They were instructed to administer the pretest assessment to both CTRL-F and control classes (where applicable) at least one day prior to beginning instruction in their CTRL-F class and administer posttests to both CTRL-F and control classes one week following completion of the CTRL-F curriculum, ideally with the posttest delivered no sooner than 21 days after the pretest. Students in the matched CTRL-F classes had more days between the pretest and posttest than controls, M = 27.6 (SD = 16.8; range 13–71) vs. M = 22.5 (SD = 7.5; range 13–44); Welch’s t(443.19) = −4.94, p < .001, d = −0.40. The full sample of CTRL-F students had an average of 32.9 days (SD = 17.4; range 8–100) between pretest and posttest administration. For the subsample of CTRL-F students who completed the delayed posttest, an average of 34.7 days (SD = 9.1; range 8–57) passed between the posttest and delayed posttest. As mentioned previously, variation in the amount of time between assessments may have been due to differences in term lengths across schools and staggered start dates for instruction.
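For readers who want to see how a Welch's t statistic, its degrees of freedom, and Cohen's d relate to the summary statistics reported above, the following Python sketch recomputes them from the rounded means and standard deviations. It is a worked illustration under stated assumptions, not the authors' analysis: the group sizes (287 controls, 316 matched CTRL-F students) are assumed to equal the sample sizes given earlier, Cohen's d is assumed to use a pooled standard deviation, and the function name is introduced here. Because the inputs are rounded, the results only approximate the reported values.

```python
import math


def welch_from_summary(m1, s1, n1, m2, s2, n2):
    """Welch's t, Welch-Satterthwaite df, and pooled-SD Cohen's d from summary stats."""
    v1, v2 = s1 ** 2 / n1, s2 ** 2 / n2  # squared standard errors per group
    t = (m1 - m2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    pooled_sd = math.sqrt(((n1 - 1) * s1 ** 2 + (n2 - 1) * s2 ** 2) / (n1 + n2 - 2))
    d = (m1 - m2) / pooled_sd
    return t, df, d


# Controls entered first so the sign matches the reported negative statistic.
# Group sizes are an assumption; the text does not state them for this test.
t, df, d = welch_from_summary(22.5, 7.5, 287, 27.6, 16.8, 316)
print(round(t, 2), round(df, 1), round(d, 2))
```

The recomputed values come out close to, but not identical with, the reported t(443.19) = −4.94 and d = −0.40, which is what one would expect when starting from rounded means and standard deviations and assumed group sizes.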

Aside from completing the pretest and posttest, control classes were conducted in a “business-as-usual” manner. Following delivery of the posttest, teachers were provided with a CTRL-F mini-lesson to teach lateral reading skills to control classes. As a consequence, delayed posttest data were not collected from the control classes.

Pretest, Posttest, and Delayed Posttest Administration

Assessments were administered via Qualtrics survey software, with links distributed to teachers by CIVIX. Assessments were intended to take about 20 minutes to complete. Measures were presented in the following order: open-response lateral reading problems, multiple-choice lateral reading problems, and demographic items. The assessments are available as supplemental materials in the openICPSR repository (Brodsky et al., 2023).

Results

Results are organized by outcome. First, we present results for students’ use of lateral reading, followed by results for students’ preference for lateral reading strategies as the “best” way to evaluate online information. Lastly, we present analyses examining alignment between students’ preference and use. Descriptive and simple inferential analyses were conducted in R (version 4.0.3). We ran mixed-effects logistic regression models using the melogit command in Stata (version 17). Follow-up analyses (margins command) were run to interpret significant interactions. Analysis code, output, and supplemental tables are available as supplemental materials in the openICPSR repository (Brodsky et al., 2023).

Use of Lateral Reading Strategies

Changes in Use After Instruction in the Matched Sample

We first examined students’ use of lateral reading when answering the open-response problems shown in Table 2. Table 4, panel 1 presents the percentage of students in the matched classes who read laterally on each problem; panel 2 presents the more conservative percentages of students who both read laterally on the problem and accurately rated the trustworthiness of the associated online content.

Percentage of Students in Matched Classes Who Read Laterally on Each Problem (Panel 1) and Who Read Laterally and Accurately Rated Each Problem (Panel 2) on the Pre/Posttest Problem Sets

Panel 1 of Table 5 presents the mixed-effects logistic regression model predicting whether students read laterally (0 vs. 1) on each of the four open-response problems for the matched classes. Panel 2 presents the same model predicting whether students read laterally and accurately rated the trustworthiness of the online content. To account for problems nested in students and students nested in classes, we included random intercepts at the class and student levels. Our fixed effects were time (pretest vs. posttest), condition (CTRL-F vs. control), and the time × condition interaction.
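For readers who prefer notation, the model described above can be written as a three-level random-intercept logistic regression. The symbols below are introduced here for illustration and do not appear in the original analysis output: r indexes problem responses, i students, and j classes.

```latex
\operatorname{logit}\,\Pr(Y_{rij} = 1)
  = \beta_0
  + \beta_1\,\mathrm{Time}_{rij}
  + \beta_2\,\mathrm{Condition}_{j}
  + \beta_3\,(\mathrm{Time}_{rij} \times \mathrm{Condition}_{j})
  + u_{j} + v_{ij},
\qquad
u_{j} \sim \mathcal{N}(0, \sigma_u^2),\quad
v_{ij} \sim \mathcal{N}(0, \sigma_v^2)
```

Here Time is coded 0 for pretest and 1 for posttest, Condition is coded 0 for control and 1 for CTRL-F (the coding direction is an assumption), u is the class-level random intercept, and v is the student-level random intercept. The coefficient on the interaction term carries the test of whether CTRL-F students changed more than controls.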

Mixed-effects Logistic Regression Models Predicting Whether Students Read Laterally on Each Open-response Problem (Panel 1) and Whether They Read Laterally and Accurately Rated Each Problem (Panel 2) (N CTRL-F = 316; N Controls = 287)

*p < .05, **p < .01, ***p < .001.

Note. Models include random intercepts for classes and students nested in classes. In panel 1, random-intercept-only null model ICCs were .21 [.13, .32] and .35 [.27, .44] for class-level and student-level random intercepts, respectively. Adding the interaction of time × condition improved model fit significantly as compared to the main effects-only model (χ²(1) = 216.20, p < .001). In panel 2, random-intercept-only null model ICCs were .25 [.15, .37] and .39 [.30, .49] for class-level and student-level random intercepts, respectively. Adding the interaction of time × condition improved model fit significantly as compared to the main effects-only model (χ²(1) = 130.39, p < .001).
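The ICCs in the note can be read through the latent-variable formulation that is common for multilevel logistic models, in which the level-1 residual variance is fixed at π²/3. The sketch below assumes that formulation and uses made-up variance components. The text does not report the variance estimates here or state which ICC formula was used, so the numbers are purely illustrative.

```python
import math

# Level-1 residual variance of the standard logistic distribution.
LOGISTIC_RESIDUAL_VAR = math.pi ** 2 / 3


def three_level_iccs(var_class, var_student):
    """ICCs for a three-level random-intercept logistic model.

    Returns (class_icc, student_within_class_icc), where the second value
    is the correlation between responses from the same student and
    therefore includes the class-level variance as well.
    """
    total = var_class + var_student + LOGISTIC_RESIDUAL_VAR
    class_icc = var_class / total
    student_icc = (var_class + var_student) / total
    return class_icc, student_icc


# Hypothetical variance components, chosen only to show the arithmetic.
class_icc, student_icc = three_level_iccs(var_class=1.0, var_student=1.0)
print(f"class ICC = {class_icc:.2f}, student-in-class ICC = {student_icc:.2f}")
```

Under this formulation the student-level ICC can never be smaller than the class-level ICC, which matches the ordering of the values in the note.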

For use of lateral reading (panel 1), the significant main effect of time was qualified by a significant time × condition interaction. At pretest, students rarely read laterally: out of four open-response problems, M = 0.41 (SD = 0.80) for CTRL-F students and M = 0.39 (SD = 0.74) for controls. Post-hoc analyses indicated that CTRL-F students and controls exhibited comparable probabilities of reading laterally (p = .861) at pretest. In contrast, at posttest, CTRL-F students were more likely to read laterally than controls (p < .001): M = 2.42 problems (SD = 1.39) for CTRL-F students and M = 0.55 (SD = 0.93) for controls. Controls were more likely to read laterally at posttest than at pretest (p = .001) but to a lesser extent than CTRL-F students (p < .001): 81.3% of CTRL-F students read laterally on at least one more problem at posttest than at pretest vs. 25.1% of controls.

Findings were similar for the more conservative measure of reading laterally and accurately rating trustworthiness (panel 2). At pretest, CTRL-F students read laterally and accurately rated M = 0.30 problems (SD = 0.67) compared to M = 0.26 (SD = 0.62) for controls. At posttest, rates increased to M = 1.88 problems (SD = 1.38) for CTRL-F students compared to M = 0.36 (SD = 0.74) for controls. Additionally, 72.8% of CTRL-F students read laterally and accurately rated at least one more problem at posttest than at pretest vs. 16.7% of controls.

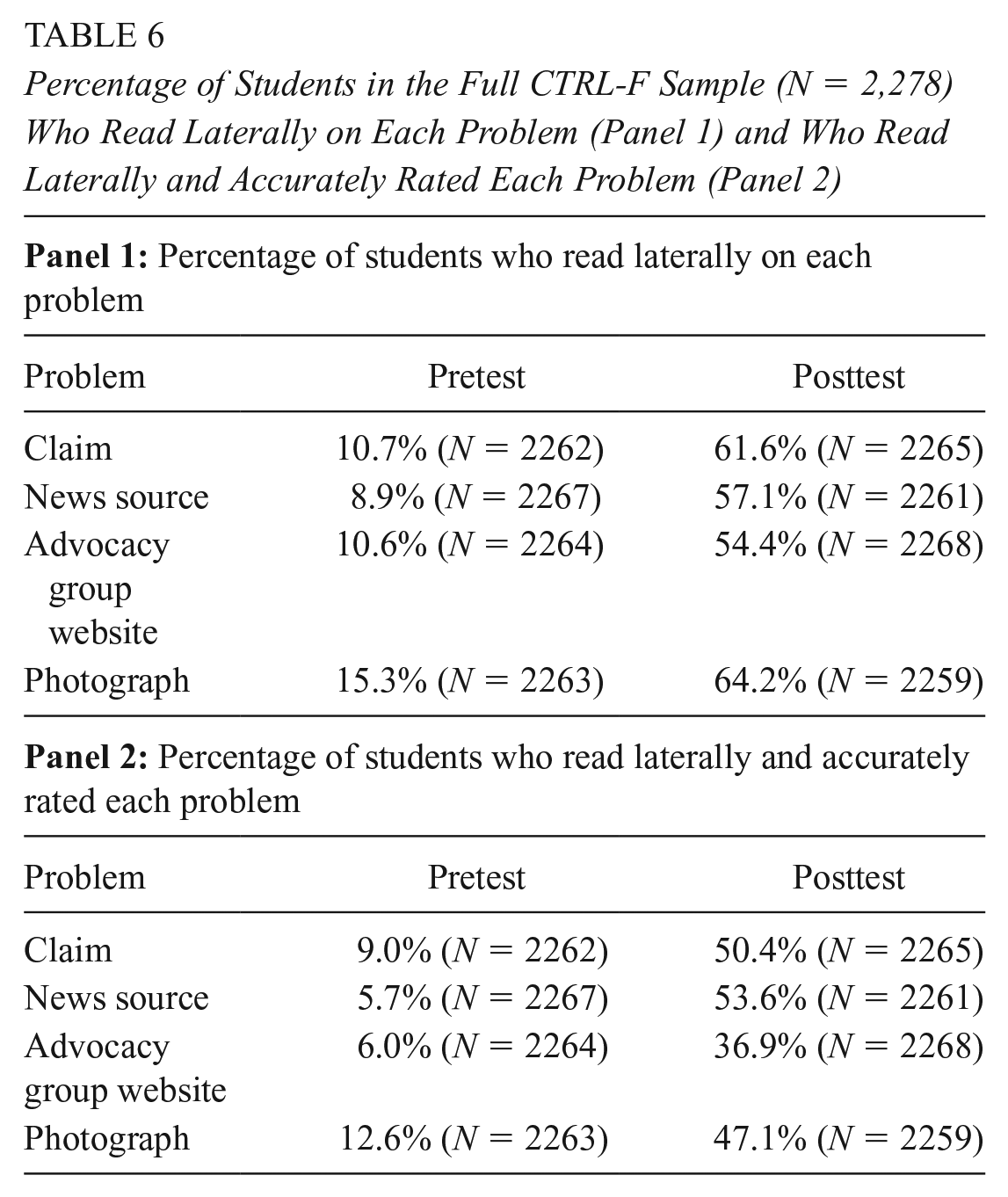

Factors Associated With Posttest Use in the Full Sample

We next used the full sample of CTRL-F students (N = 2,278) to examine how students’ use of lateral reading at posttest varied based on demographic characteristics and factors related to implementation of the curriculum. Table 6 presents the percentage of students in the full CTRL-F sample who read laterally on each problem (panel 1) and who read laterally and accurately rated each problem (panel 2). In the full sample, 77.0% of students read at least one more problem laterally at posttest than at pretest, with the average number of problems read laterally increasing from 0.45 (SD = 0.85) at pretest to 2.36 (SD = 1.46) at posttest. Similarly, 71.6% of students made gains in the number of problems read laterally and accurately assessed, with the average number of problems increasing from 0.33 (SD = 0.70) to 1.87 (SD = 1.39).

Percentage of Students in the Full CTRL-F Sample (N = 2,278) Who Read Laterally on Each Problem (Panel 1) and Who Read Laterally and Accurately Rated Each Problem (Panel 2)

We ran two mixed-effects logistic regression models predicting whether students read laterally on each open-response problem at posttest. The first model included only demographic factors as fixed effects: age, gender (1 = Male), English spoken most often at home (1 = Yes), French spoken most often at home (1 = Yes), and another language spoken most often at home (1 = Yes). The second model added fixed effects for implementation-related factors: class size, number of days between pretest and posttest, teacher-reported hours spent on CTRL-F instruction, and teacher-reported format of instruction (classroom, blended, or remote); see Table 1 for a summary of student-level and class-level characteristics. All continuous variables were standardized. To account for problems nested in students and students nested in classes, each model included random intercepts at the class and student levels.

In the demographics-only model, male students had lower odds of reading laterally than their peers (OR = 0.67, SE = 0.07, p < .001). Students who spoke a language other than English or French most often at home had higher odds of reading laterally (OR = 1.53, SE = 0.21, p = .002). Age was not a significant predictor (OR = 0.91, SE = 0.07, p = .255). Adding implementation-related factors did not improve model fit; none of the implementation-related factors were significant. Full regression models are in Supplemental Table 1, panel 1. We then re-ran the models to predict the more conservative measure of reading laterally and accurately rating trustworthiness. Findings were similar to those of the previous models (see Supplemental Table 1, panel 2).
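To get a feel for the size of these odds ratios, it can help to apply them to a baseline probability. The sketch below does this for a hypothetical baseline of 0.60; that baseline is an assumption chosen for illustration, not a value from the study. Because the reported ORs come from mixed-effects models, they are conditional on the random intercepts, so this conversion is only an approximate reading aid.

```python
def apply_odds_ratio(baseline_prob, odds_ratio):
    """Probability implied by multiplying the baseline odds by an odds ratio."""
    if not 0.0 < baseline_prob < 1.0:
        raise ValueError("baseline_prob must be strictly between 0 and 1")
    baseline_odds = baseline_prob / (1.0 - baseline_prob)
    new_odds = baseline_odds * odds_ratio
    return new_odds / (1.0 + new_odds)


# Hypothetical baseline probability of reading laterally on a problem.
BASELINE = 0.60

prob_male = apply_odds_ratio(BASELINE, 0.67)        # OR reported for male students
prob_other_lang = apply_odds_ratio(BASELINE, 1.53)  # OR for another home language

print(f"male: {prob_male:.3f}, other language: {prob_other_lang:.3f}")
```

With this baseline, an OR of 0.67 corresponds to a drop of roughly ten percentage points; a different baseline would give a different gap, which is why odds ratios should not be read directly as differences in probability.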

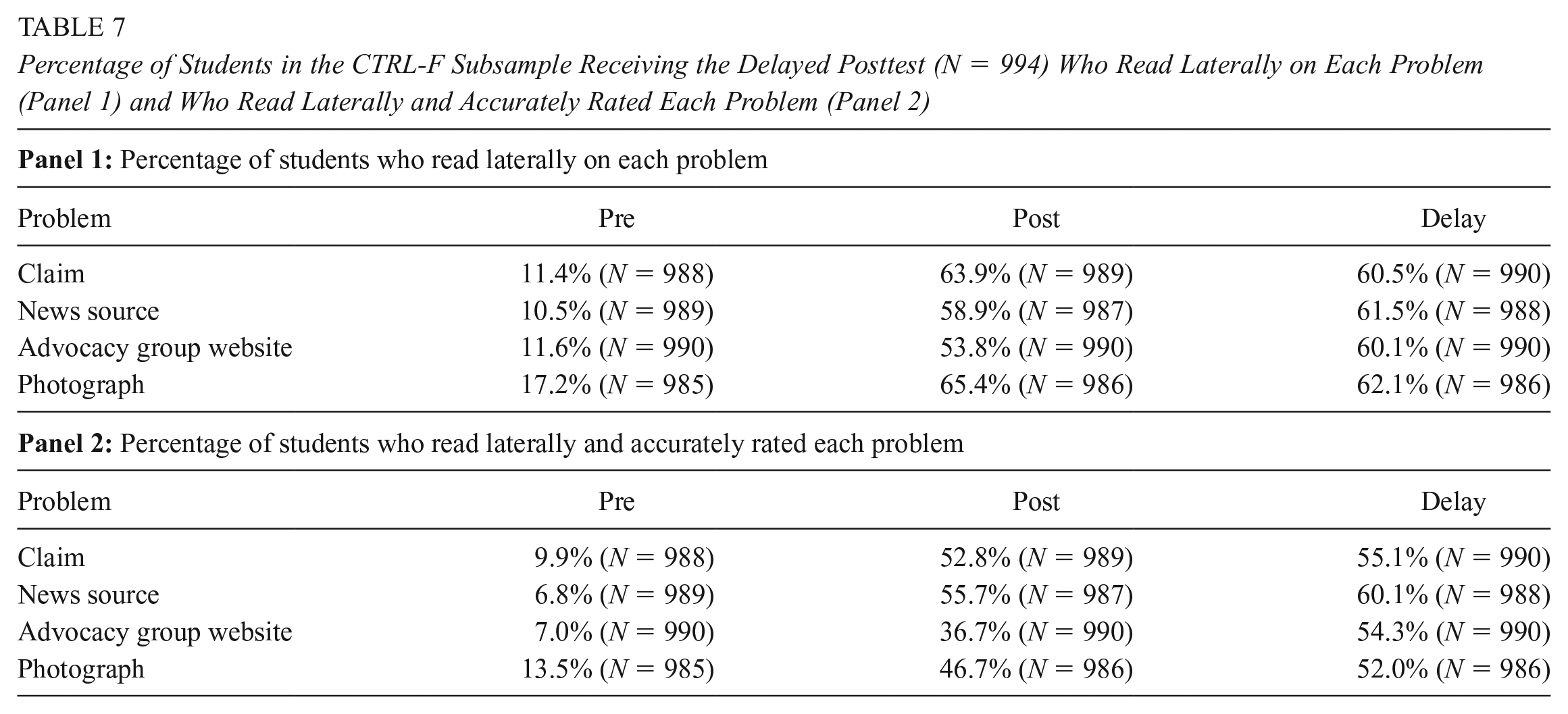

Changes in Use Over Time in the CTRL-F Subsample Receiving the Delayed Posttest

Lastly, we examined whether the use of lateral reading changed over time for the subsample of students who received the CTRL-F curriculum and completed the delayed posttest (N = 994). Table 7 presents the percentages of the delayed CTRL-F subsample that read laterally on each open-response lateral reading problem at pretest, posttest, and delayed posttest.

Percentage of Students in the CTRL-F Subsample Receiving the Delayed Posttest (N = 994) Who Read Laterally on Each Problem (Panel 1) and Who Read Laterally and Accurately Rated Each Problem (Panel 2)

We ran a mixed-effects logistic regression model predicting whether students read laterally on each open-response problem with a fixed effect of time (pretest, posttest, and delayed posttest). The reference level was set as the posttest to see if students’ performance worsened from posttest to delayed posttest. To account for problems nested in students and students nested in classes, we included random intercepts at the class and student levels. Students performed significantly worse at pretest than at posttest (OR = 0.04, SE = 0.003, p < .001) and comparably from posttest to delayed posttest (OR = 1.03, SE = 0.06, p = .537): out of four problems, M = 0.50 (SD = 0.89) at pretest, M = 2.41 (SD = 1.40) at posttest, and M = 2.43 (SD = 1.47) at delayed posttest. The full regression model is presented in Supplemental Table 2, panel 1.

We re-ran the mixed-effects logistic regression model to predict the more conservative measure of reading laterally and accurately rating trustworthiness. Students performed significantly worse at pretest than at posttest (OR = 0.06, SE = 0.004, p < .001); performance improved further from posttest to delayed posttest (OR = 1.51, SE = 0.08, p < .001): M = 0.37 (SD = 0.72) at pretest, M = 1.91 (SD = 1.33) at posttest, and M = 2.20 (SD = 1.47) at delayed posttest. The full regression model is presented in Supplemental Table 2, panel 2.

Preference for Lateral Reading

Changes in Preference After Instruction in the Matched Sample

We next examined students’ preference for reading laterally when evaluating online content based on which strategies they selected as the “best” approach on the multiple-choice problems. Table 8 presents the percentage of students in the matched classes who selected each response option on the multiple-choice problems. At pretest, the most popular strategy for evaluating a news source was examining the source’s appearance. Less than a third of students selected the lateral reading strategy of learning more about the source’s reputation by consulting other sources, including Wikipedia. However, for evaluating a claim and photograph, students were already most likely to select lateral reading strategies at pretest, possibly reflecting their prior instruction to use keywords and conduct searches.

Percentage of Students in the Matched Classes (N CTRL-F = 316; N Controls = 287) Who Selected Each Response Option on Each Multiple-choice Problem on the Pre/Posttest Problem Sets

Lateral reading strategy taught in the CTRL-F curriculum.

We ran a mixed-effects logistic regression model predicting whether students selected the lateral reading strategy (0 vs. 1) on each multiple-choice problem for the matched classes. To account for problems nested in students and students nested in classes, we initially included random intercepts at the class and student levels. Our fixed effects were time (pretest vs. posttest), condition (CTRL-F vs. control), and the time × condition interaction. However, the model with both random intercepts did not converge. Therefore, we kept only the random intercept for students.

The final model is reported in Table 9. Only the time × condition interaction was significant. Post-hoc analyses indicated that, at pretest, CTRL-F students and controls had comparable probabilities of selecting the lateral reading strategy (p = .238): out of three multiple-choice problems, M = 1.36 (SD = 0.91) for CTRL-F students and M = 1.45 (SD = 0.88) for controls. At posttest, CTRL-F students were more likely to select lateral reading strategies than controls (p < .001): M = 2.61 (SD = 0.69) for CTRL-F students vs. M = 1.44 (SD = 0.92) for controls. CTRL-F students showed significant gains in selecting lateral reading strategies from pretest to posttest (p < .001), while controls did not (p = .911): 77.2% of CTRL-F students selected at least one more lateral reading strategy at posttest than at pretest vs. 28.6% of controls.

Mixed-effects Logistic Regression Model Predicting Whether Students Selected the Lateral Reading Strategy on Each of Three Multiple-choice Problems (N CTRL-F = 316; N Controls = 287)

*p < .05, **p < .01, ***p < .001.

Note. Model includes a random intercept for students. Random-intercept-only null model ICC was .13 [.10, .17] for the student-level random intercept. Adding the interaction of time × condition improved model fit significantly as compared to the main effects-only model (χ²(1) = 223.05, p < .001).

Factors Associated With Posttest Preference in the Full Sample

We next used the full sample of CTRL-F students (N = 2,278) to examine how students’ preference for lateral reading at posttest varied based on demographic characteristics and factors related to the curriculum’s implementation. Table 10 presents the percentages of the full sample that selected each response option on the three multiple-choice problems. From pretest to posttest, the number of lateral reading strategies selected increased from M = 1.40 (SD = 0.96) to M = 2.54 (SD = 0.77). Most students (72.8%) selected at least one more lateral reading strategy at posttest than at pretest.

Percentage of Students in the Full CTRL-F Sample (N = 2,278) Who Selected Each Response Option on Each Multiple-choice Problem

Lateral reading strategy taught in the CTRL-F curriculum.

We ran mixed-effects logistic regression models to predict whether students selected the lateral reading strategy on each of three multiple-choice problems at posttest. The models included fixed effects for the same demographic and implementation-related factors used to predict lateral reading on the open-response problems. All continuous variables were standardized. To account for problems nested in students and students nested in classes, models included random intercepts at the class and student levels. In the demographics-only model, male students and students who spoke French most often at home had lower odds of selecting the lateral reading strategy than their peers: OR = 0.68, SE = 0.08, p < .001 and OR = 0.61, SE = 0.13, p = .022, respectively. Age was not a significant predictor (OR = 1.04, SE = 0.08, p = .626). Adding implementation-related factors did not improve model fit; none of the implementation-related factors were significant. Full regression models are in Supplemental Table 3.

Changes in Preference Over Time in the CTRL-F Subsample Receiving the Delayed Posttest

Lastly, we examined whether the subsample of students who received the CTRL-F curriculum and completed the delayed posttest (N = 994) maintained their gains in preference for lateral reading over time. Table 11 presents the percentages of students selecting each response option on the three multiple-choice problems at pretest, posttest, and delayed posttest.

Percentage of Students in the CTRL-F Subsample Receiving the Delayed Posttest (N = 994) Who Selected Each Response Option on Each Multiple-choice Problem

Lateral reading strategy taught in the CTRL-F curriculum.

We ran a mixed-effects logistic regression model predicting whether students selected the lateral reading strategy on each of three multiple-choice problems with a fixed effect of time (pretest, posttest, and delayed posttest). The reference level was set as the posttest to see if students’ performance worsened from posttest to delayed posttest. To account for problems nested in students and students nested in classes, we included random intercepts at the class and student levels. Students performed significantly worse at pretest than at posttest (OR = 0.09, SE = 0.01, p < .001) and comparably at posttest and delayed posttest (OR = 0.94, SE = 0.08, p = .484): out of three multiple-choice problems, M = 1.40 (SD = 0.99) at pretest, M = 2.57 (SD = 0.76) at posttest, and M = 2.55 (SD = 0.78) at delayed posttest. The full regression model is presented in Supplemental Table 4.

Relations Between Lateral Reading Preference and Use

Using the matched classes, we ran a series of analyses to examine relations between students’ preference to select lateral reading strategies as the “best” approach to evaluate online information and their use of lateral reading on the open-response problems at pretest and posttest. Students’ preference and use aligned if they selected the lateral reading strategy on the multiple-choice problem and read laterally on the open-response problem with the same online content. Table 12, panel 1 presents the percentage of students in the matched classes whose preference and use of lateral reading aligned by problem; panel 2 presents percentages for the more conservative measure of selecting the lateral reading strategy on the multiple-choice problem and reading laterally and accurately rating trustworthiness of the content on the open-response problem.

Percentage of Students in Matched Classes Whose Preferences on Multiple-choice Problems Aligned With Their Use of Lateral Reading on Corresponding Open-response Problems (Panel 1) and With Their Use of Lateral Reading and Accurate Rating of Trustworthiness (Panel 2)

Panel 1 of Table 13 presents the mixed-effects logistic regression model predicting whether students’ lateral reading preference and use (0 vs. 1) aligned on each of the three problems. Panel 2 presents the same model predicting whether their preference was aligned with their use of lateral reading and accurate rating of each problem’s content. To account for problems nested in students and students nested in classes, we included random intercepts at the class and student levels. Our fixed effects were time (pretest vs. posttest), condition (CTRL-F vs. control), and the time × condition interaction.

Mixed-effects Logistic Regression Models Predicting Alignment of Preference and Use of Lateral Reading on Each Problem (Panel 1) and Whether Preferences Aligned with Use of Lateral Reading and Accurately Rating Each Problem (Panel 2) (N CTRL-F = 316; N Controls = 287)

*p < .05, **p < .01, ***p < .001.

Note. Models include random intercepts for classes and students nested in classes. In panel 1, random-intercept-only null model ICCs were .24 [.15, .36] and .33 [.23, .43] for class-level and student-level random intercepts, respectively. Adding the interaction of time × condition improved model fit significantly as compared to the main effects-only model (χ²(1) = 143.18, p < .001). In panel 2, random-intercept-only null model ICCs were .28 [.17, .41] and .38 [.28, .50] for class-level and student-level random intercepts, respectively. Adding the interaction of time × condition improved model fit significantly as compared to the main effects-only model (χ²(1) = 93.06, p < .001).

For alignment of lateral reading preference and use (panel 1), the significant main effect of time was qualified by a significant interaction with condition. At pretest, students rarely exhibited alignment between their preference and use of lateral reading: out of three problems, M = 0.18 (SD = 0.47) for CTRL-F students vs. M = 0.18 (SD = 0.45) for controls. Post-hoc analyses indicated that CTRL-F students and controls had comparable probabilities of preferring the lateral reading strategy and using lateral reading to evaluate online content at pretest (p = .933). At posttest, CTRL-F students were more likely to exhibit alignment between preference and use of lateral reading than controls (p < .001): M = 1.75 (SD = 1.11) for CTRL-F students vs. M = 0.31 (SD = 0.66) for controls. Though controls were more likely to show alignment at posttest than pretest (p = .001), they made smaller gains than CTRL-F students (p < .001). Most CTRL-F students (78.5%) aligned on at least one more problem at posttest than at pretest as compared to only 18.1% of controls.

Findings were similar when predicting alignment between students’ preference for lateral reading strategies and their reading laterally and accurately rating the trustworthiness of the content (panel 2): at pretest, M = 0.16 (SD = 0.45) for CTRL-F students vs. M = 0.14 (SD = 0.41) for controls; at posttest, M = 1.44 (SD = 1.11) for CTRL-F students vs. M = 0.22 (SD = 0.55) for controls. Most CTRL-F students (67.6%) made gains by aligning on at least one more problem at posttest than at pretest in comparison to only 13.2% of controls.

Discussion

In fall 2020, Canadian middle and high school students learned how to evaluate online information using the CTRL-F curriculum, which taught them to read laterally to investigate sources, check claims, and trace information back to its original context. The current study evaluated the impact of the curriculum based on students’ use of lateral reading and their preference for lateral reading as the “best” strategy for evaluating online information. For each outcome, we used a subset of students from “matched” classes (i.e., classes where the teacher taught at least one CTRL-F and one control class) to examine whether changes in outcomes could be attributed to the curriculum. We then used the full sample of students who received the curriculum to examine variation in posttest performance by demographics and implementation-related factors. We also assessed whether gains were maintained over time for the subset of classes that had sufficient time to administer a delayed posttest. Finally, we used the matched classes to see if the curriculum strengthened the connection between students’ preference for and use of lateral reading strategies.

Like students in the United States (McGrew et al., 2018), Canadian middle and high school students rarely read laterally prior to instruction. However, following direct instruction and practice with lateral reading via the CTRL-F curriculum, students were more likely to read laterally. These gains are in keeping with prior studies of the Stanford History Education Group’s civic online reasoning curriculum that taught lateral reading strategies to high school students (e.g., McGrew, 2020; McGrew & Byrne, 2021; Wineburg et al., 2022), as well as studies of information problem-solving interventions (Brand-Gruwel & van Strien, 2018). It is worth noting that students in the “matched” control classes also showed some improvement from pretest to posttest, which might be attributed to inadvertent communication between students in CTRL-F and control classes or slight differences in problem difficulty across problem sets. To increase the internal validity of the findings, future studies should counterbalance problems used at pretest, posttest, and delayed posttest, though this may be difficult to achieve considering the changing links and availability of information online.

Students also made gains on the more conservative measure of reading laterally and making accurate judgments of the trustworthiness of the online content. However, like middle and high school students in the United States (Kohnen et al., 2020; McGrew, 2020), Canadian students did not always draw the correct conclusions, suggesting that they may lack requisite background knowledge to guide their behavior and evaluation strategies once they have left the original content and opened a new tab. Future studies of lateral reading interventions should consider assessing students’ prior knowledge about the online information environment to see if the accuracy of this knowledge is associated with their use of lateral reading and accurately rating trustworthiness. One implication of the findings is that lateral reading instruction should be used to complement, but not supplant, instruction in online information problem-solving, which may include teaching students how to select relevant search results and how to process, evaluate, and synthesize information from multiple sources (Brand-Gruwel et al., 2009).

Although students rarely demonstrated use of lateral reading on open-response problems at pretest, on the multiple-choice problems, over half identified two of the lateral reading strategies (i.e., searching keywords to check a claim, conducting searches to verify a photograph) as “best” methods prior to CTRL-F instruction. Students’ preference to corroborate claims with other sources is in keeping with previous findings that “verifying claims” was one of the most common evaluation strategies reported by Canadian students (Steeves, 2014). Students’ preferred strategies at pretest may also reflect their prior instruction in integrating information from multiple texts in classes such as history or language arts (Barzilai et al., 2018).

In contrast, students at pretest preferred to learn more about a news source’s reputation by examining its website for signs of professionalism. This preference for close examination may be the product of prior instruction in popular, though inappropriate, checklist approaches to information literacy (Breakstone et al., 2018; Caulfield, 2018; Meola, 2004; Scholz-Crane, 1998). Additionally, the lateral reading strategy for the news source explicitly mentioned using Wikipedia to learn more about the reputation of the source. Given both negative perceptions and stigma surrounding Wikipedia use, students may have been reluctant to select that option as their preferred strategy (Becker, 2015; Konieczny, 2016; Polk et al., 2015). Encouragingly, students who received the CTRL-F curriculum made gains in their attitudes toward lateral reading, especially in their preference for consulting other sources when evaluating a news source. This is in keeping with findings from behavior change interventions that increasing skill in a behavior is associated with more positive attitudes toward that behavior (Steinmetz et al., 2016).

Students’ posttest outcomes differed by gender and language spoken most often at home. However, posttest outcomes did not vary by students’ age, suggesting that the curriculum was developmentally appropriate for both middle and high school students. At posttest, male students were less likely than their peers to engage in lateral reading, read laterally to reach an accurate conclusion about the trustworthiness of content, and indicate preference for lateral reading as the “best” strategy to evaluate online content. We suspect that the gender difference might be attributable, at least in part, to differences in students’ reading skills, as Canadian male students exhibited worse performance on the 2018 PISA reading assessment than female students (O’Grady et al., 2019). Reading skills are critical for successful online information problem-solving (Brand-Gruwel et al., 2009) but rarely measured as part of lateral reading intervention studies (see Brodsky et al., 2021a, for an exception).

We also found that students’ posttest performance varied by language background. Students who spoke another language besides English or French at home were more likely than their peers to read laterally. We speculate that this may be linked to students’ immigrant status, as prior research suggests that immigrant and nonimmigrant students in Canada have comparable levels of academic achievement (Cheng & Yan, 2018), and children of immigrants may even have higher educational attainment than their peers (Rothon et al., 2009). However, considering immigrant status alone does not take into account the heterogeneity of students’ achievements and experiences, including disparities between racial/ethnic groups (Kamanzi & Collins, 2021). Future studies should include additional demographic measures and measures of academic ability (e.g., reading skills) to better parse out associations with these different factors.

After accounting for influences of demographic factors, we found that posttest outcomes did not vary by implementation-related factors. Given the wide range of class sizes, days between pretest and posttest, hours of instruction, and implementation formats in the full sample of students, these findings suggest that the CTRL-F curriculum can be implemented successfully under diverse circumstances, as was necessitated by the COVID-19 pandemic. However, future studies should include observational data to better document how teachers implement the curriculum and identify other implementation-related factors that may impact students’ responsiveness to the curriculum. These observational data may also help identify areas of the curriculum where students are likely to struggle and where teachers should be provided with additional support (McGrew & Byrne, 2022).

Prior studies of lateral reading interventions, such as those conducted by the Stanford History Education Group, have not examined whether students’ gains remain stable over time. In the current study, students appeared to retain their lateral reading skills one month after the immediate posttest, though not all students who received the curriculum completed the delayed posttest and we did not have data for the control classes. While additional research is clearly needed, these findings are encouraging, and future studies of lateral reading interventions should seek to include a delayed posttest as part of their evaluation design.

Lastly, we found that, following instruction, students who received the CTRL-F curriculum were more likely than controls to select the lateral reading strategy as the “best” approach and act on their preference by reading laterally on the corresponding open-response problem. This finding aligns with Brodsky et al.’s (2022) study involving college students who received the Digital Polarization Initiative’s SIFT curriculum and offers additional evidence that direct instruction and practice with lateral reading serve to strengthen the association between students’ attitudes and behavior (Glasman & Albarracín, 2006; Sheeran et al., 2017). Greater alignment between students’ preference and use of lateral reading following instruction is also in keeping with research on the development of expertise, which highlights that deliberate practice with feedback is necessary for acquiring, retaining, and transferring knowledge and skills (Healy et al., 2014).

Conclusion

The current study assessed the impact of a fact-checking curriculum teaching lateral reading skills to a diverse sample of Canadian middle and high school students. At pretest, students selected some lateral reading strategies as the preferred strategy for evaluating online information yet rarely read laterally when given the opportunity to do so. Compared to controls, students who received the curriculum showed gains in their preference and use of lateral reading and developed a stronger association between their preferences and observed strategy use. Gains in both preference and use of lateral reading were maintained over time. Students’ posttest performance varied by demographic factors but not by implementation-related factors. Teaching students to read laterally offers a promising means of helping students identify trustworthy online content in today’s complex information landscape.

Footnotes

Acknowledgements

The authors would like to thank Michael Caulfield (Center for an Informed Public, University of Washington), whose SIFT lateral reading methodology provided the basis for CTRL-F; Joel Breakstone and Mark Smith (Stanford History Education Group, Stanford University) for consulting on the CTRL-F approach; Jane Lytvynenko for contributing her expertise and for being a public face of CTRL-F; and the teachers and their students across Canada who participated in the CTRL-F: Find the Facts curriculum evaluation.

Author Note

The CTRL-F curriculum materials (i.e., lesson plans, activities, and resources) used in this study are available as Supplemental Materials in the openICPSR repository at https://doi.org/10.3886/E184645V1. A preliminary version of findings was presented in a November 2021 report produced by CIVIX on the digital media literacy gap in Canada. Jessica E. Brodsky is now at the American Association of Veterinary Medical Colleges.

Declaration of Conflicting Interest

Jessica E. Brodsky and Patricia J. Brooks were paid as consultants by CIVIX.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article. The research reported here was supported by a grant awarded to CIVIX by the Government of Canada: Canadian Heritage’s Digital Citizen Contribution Program.

Open Practices

1. This was determined after removing 780 CTRL-F and 78 control students who only completed the pretest, posttest, or delayed posttest. An additional 65 students (58 CTRL-F and 7 controls) were removed due to errors in their identifiers, which made it impossible to link data across time points. Lastly, 41 CTRL-F students were removed based on teachers reporting that these students completed less than 50% of the curriculum, and 5 CTRL-F students were removed because they completed the posttest less than a week after the pretest.

2. English and French are the two official languages of Canada.

3. This lesson also included an optional extension activity focused on evaluating expertise. Students could watch a video introducing the concept of “domain knowledge,” explaining the role journalists play in consolidating expert opinion for public consumption and providing tips for assessing the authority of individuals who publish content online. Students could then combine this knowledge with their source evaluation skills to assess the credibility of a number of purported experts on health, history, and science-related topics.

Authors

JESSICA E. BRODSKY holds a degree in educational psychology from The Graduate Center, City University of New York.

PATRICIA J. BROOKS is a professor of psychology at The College of Staten Island and The Graduate Center, City University of New York.

DIMITRI PAVLOUNIS is research director at CIVIX, a Canadian education charity dedicated to building the habits and skills of citizenship among youth.

JESSICA LEIGH JOHNSTON is director of communications at CIVIX, a Canadian education charity dedicated to building the habits and skills of citizenship among youth.