Abstract

College students, and adults in general, may find it hard to identify trustworthy information amid the proliferation of false news and misinformation about the COVID-19 pandemic. In Fall 2020, college students (N = 221) in an online general education civics course were taught through asynchronous assignments how to use lateral reading strategies to fact-check online information. Students improved from pretest to posttest in the use of lateral reading to fact-check information; lateral reading was predicted by the number of assignments completed and students’ reading comprehension test scores. Students reported greater use, endorsement, and knowledge of Wikipedia at posttest, aligning with the curriculum’s emphasis on using Wikipedia to investigate information sources. Students also reported increased confidence in their ability to fact-check COVID-19 news. While confidence was related to perceived helpfulness of the assignments, it was only weakly associated with lateral reading. Findings support the effectiveness of the online curriculum for improving fact-checking.

While online platforms allow for fast and widespread sharing of information about the coronavirus pandemic (COVID-19), these technologies also allow for the proliferation of false news and deliberate misinformation, often intentionally designed to mislead or push an agenda (World Health Organization [WHO], 2020a). In Fall 2020, we used an online curriculum to teach “SIFT” fact-checking strategies (Stop, Investigate the source, Find better coverage, and Trace claims, quotes, and media to the original context; Caulfield, n.d.; 2019) to college students in a general education civics course. SIFT strategies rely on lateral reading, that is, leaving the initial content to investigate sources and verify claims using trusted sources (Wineburg & McGrew, 2017). We examined whether the curriculum improved students’ use of lateral reading when fact-checking online content, and how students perceived its effect on their fact-checking of online news about the COVID-19 pandemic.

The COVID-19 “Infodemic”

In a February 2020 report on the COVID-19 outbreak, the WHO noted the presence of a “massive ‘infodemic’- an overabundance of information—some accurate and some not—that makes it hard for people to find trustworthy sources and reliable guidance when they need it” (WHO, 2020b, p. 2). COVID-19 misinformation includes blatantly false information, but it more often takes the form of information that is “reconfigured” (i.e., online content that is mislabeled or miscaptioned, or manipulated and repurposed in various ways; Brennen et al., 2020). Misinformation related to the COVID-19 pandemic has covered a wide range of topics (e.g., the actions of public authorities, virus spread and transmission, conspiracy theories) with potentially dire consequences for individual and public health (WHO, 2020a). For example, exposure to misinformation about the COVID-19 vaccine has increased vaccine hesitancy among adults in the United Kingdom and the United States (Loomba et al., 2021).

While misinformation has accompanied previous disease outbreaks, the rapid spread of information via social media platforms has posed an unprecedented challenge in the context of COVID-19 news (Zarocostas, 2020). Misinformation about COVID-19 has circulated faster on social media platforms (e.g., Facebook, Twitter, and YouTube) than the companies owning these platforms can flag or remove it (Frenkel et al., 2020). Fact-checking organizations have struggled to keep up with the onslaught of questions about the accuracy of COVID-19-related information (Izadi, 2020). Understanding the COVID-19 infodemic remains an area of active investigation (see Tsao et al., 2021, for a brief review). Areas of inquiry include characterizing COVID-19-related false news and misinformation (e.g., Brennen et al., 2020), how it spreads via different social media platforms (e.g., Cinelli et al., 2020; Pulido et al., 2020), and strategies for reducing its spread (e.g., Pennycook et al., 2020).

Surveys of American adults conducted by the Pew Research Center provide some insight into how different age-groups navigated news and information about COVID-19 at the height of the pandemic in 2020. Younger adults (ages 18–29 years) were consistently less likely than older adults to report following COVID-19 news very closely (e.g., 24% of younger adults vs. 37% of the total adults surveyed in November 2020; Pew Research Center, 2020a), while also relying more heavily on social media for news about COVID-19 (30% of younger adults vs. 19% of all the adults surveyed in April 2020; Pew Research Center, 2020b). In April 2020, only 26% of younger adults felt very confident that they knew how to find out if news about COVID-19 was accurate (Pew Research Center, 2020c). Most (52%) were only somewhat confident, and 21% were not too confident or not at all confident. These rates were comparable with those of all the U.S. adults surveyed. Given younger adults’ reliance on social media as a source of news and information about COVID-19, this age-group may benefit from direct instruction in strategies for fact-checking online information. Teaching these strategies as part of general education college courses is one means of reaching individuals in this age-group.

Reading Laterally to Fact-Check Online Information

In response to concerns about the spread of inaccurate information related to the COVID-19 pandemic, the current study sought to teach college students how to fact-check online information using strategies observed in expert fact-checkers. When Wineburg and McGrew (2017) asked a small sample of historians, college students, and expert fact-checkers to evaluate the trustworthiness of online social and political information, they found that fact-checkers tended to read laterally by “[leaving] a website and [opening] new tabs along a horizontal axis in order to use the resources of the Internet to learn more about a site and its claims” (p. 38). Expert fact-checkers were more effective and efficient at judging credibility than either historians or college students, who mostly read vertically by closely examining the features of the original content or website.

One of the sources that expert fact-checkers quickly turn to is Wikipedia. For example, Wineburg and McGrew (2017) observed one fact-checker “taking bearings” when evaluating an organization’s article about children’s bullying by using Wikipedia to learn more about the organization’s agenda. Similarly, in the context of using lateral reading to fact-check information about the COVID-19 pandemic, one could use Wikipedia to find out whether an organization posting vaccine-related information on social media had a hidden agenda. Although both teachers and students may be concerned about the reliability of information on Wikipedia (Garrison, 2018; Konieczny, 2016), Wikipedia articles are as accurate as those in the Encyclopaedia Britannica (Giles, 2005). Wikipedia articles are expected to be written from a neutral point of view, with information accompanied by citations to reliable sources (Wikipedia, n.d.-a). These citations can point students to other sources that they can use to verify claims. Furthermore, vetted Wikipedia editors with administrative privileges are able to protect controversial Wikipedia articles from public editing (Wikipedia, n.d.-b), making it less likely that the information is intentionally manipulated.

However, without direct instruction in lateral reading strategies and opportunities to practice the skills, college students are unlikely to read laterally. Additionally, students’ self-reported information verification strategies do not align with their observed behaviors (Brodsky et al., 2020; Hargittai et al., 2010; List & Alexander, 2018). Multiple recent studies have used performance-based tasks to assess students’ use of lateral reading. McGrew et al. (2018) asked middle school, high school, and college students to evaluate the credibility of online social and political content across media formats. Across tasks, college students rarely used lateral reading to research sources and verify claims. A follow-up study again found that, rather than reading laterally, college students examined features of the online information, like the domain name, About Us page, links, and aesthetics (i.e., vertical reading strategies; Wineburg et al., 2020).

Small-scale efforts to teach college students to read laterally have been moderately successful. College students who received a two-session in-class intervention were more likely to read laterally at posttest than students who did not receive the curriculum (McGrew et al., 2019). Similarly, students in an asynchronous online course who completed online modules teaching lateral reading strategies were more likely to use lateral reading at posttest than at pretest (Breakstone et al., 2021).

In 2018, the American Association of State Colleges and Universities launched the Digital Polarization Initiative (DPI; American Democracy Project, n.d.), which oversaw the piloting of an in-person fact-checking curriculum in colleges and universities across the United States. The curriculum taught college students four “moves” (i.e., strategies) for fact-checking online information, three of which relied heavily on lateral reading (Caulfield, 2017b). Each of these three moves involved reading laterally by leaving the original information to learn more about the information or its source. For the move “look for trusted work,” students were taught to research a claim by using independent sources like Google News and fact-checking sites. To “find the original,” students learned to use a reverse-image search to find out whether an image had been reconfigured. Last, for the move “investigate the source,” students used Wikipedia and Google News to learn about a source and whether it had a hidden agenda or biases that could influence the trustworthiness of the information.

Our institution was one of 11 colleges selected to pilot the DPI curriculum using performance-based assessments similar to those employed by McGrew et al. (2018). In Fall 2018, multiple sections of a general education civics course received the in-person curriculum and were compared with “business-as-usual” control sections at pre- and posttest (see Brodsky, Brooks, Scimeca, Todorova et al., 2021). At posttest, students in the DPI sections were more likely to read laterally and correctly assess trustworthiness than students in the control sections, with 61.0% of students in the DPI sections doing so on at least one of four problems. However, students did not consistently engage in lateral reading across posttest problems. Rather, they read laterally and accurately assessed the online content on just one out of four problems, on average, and some did not read laterally at all. Moreover, despite the curriculum’s encouragement of Wikipedia use, students in the DPI sections made only small gains in their self-reported use of Wikipedia and no gains in their trust of Wikipedia at posttest. Students’ inconsistent use of lateral reading and Wikipedia for fact-checking information may reflect a variety of factors, including attendance and class participation, motivation, and time constraints. These initial results indicate that additional practice with feedback is needed for students to demonstrate the metacognitive awareness, strategy selection, and automaticity of experts (Chi, 2006; Healy et al., 2014).

Research Objectives

In light of the rapid spread of COVID-19 misinformation and challenges associated with determining what information to trust about the pandemic, the current study examined the efficacy of a fully online curriculum for teaching college students how to read laterally to evaluate online information. The curriculum presented four fact-checking moves using the acronym SIFT: Stop, Investigate the source, Find better coverage, and Trace claims, quotes, and media to the original context (Caulfield, n.d.; 2019). While students learned about all four SIFT strategies, we focused our assessment on students’ use of the three SIFT strategies that rely on lateral reading (investigating the source, finding better coverage, and tracing information to the original context).

The study had several goals. First, we examined the extent to which students improved on the skills and content knowledge emphasized in the online SIFT curriculum. Specifically, we were interested in students’ reported use of lateral reading to fact-check online content and their use, endorsement, and knowledge of Wikipedia. Given the ongoing COVID-19 infodemic, we were also interested in how students’ use of lateral reading related to their self-reported confidence in their ability to fact-check COVID-19-related online news and the perceived helpfulness of the SIFT curriculum for fact-checking COVID-19-related online news.

Throughout the study, we chose to take into account individual differences in students’ reading comprehension. Prior studies of lateral reading for fact-checking online information have not considered this factor, even though reading laterally inherently relies on students’ ability to comprehend and integrate text-based information from a variety of sources. Furthermore, the current study was conducted at an open-admissions public college serving a diverse student body. As students’ reading comprehension skills were expected to vary widely (Perin, 2013), we included a measure of reading comprehension as a potential predictor of individual differences in responses to the online curriculum.

Method

Participants

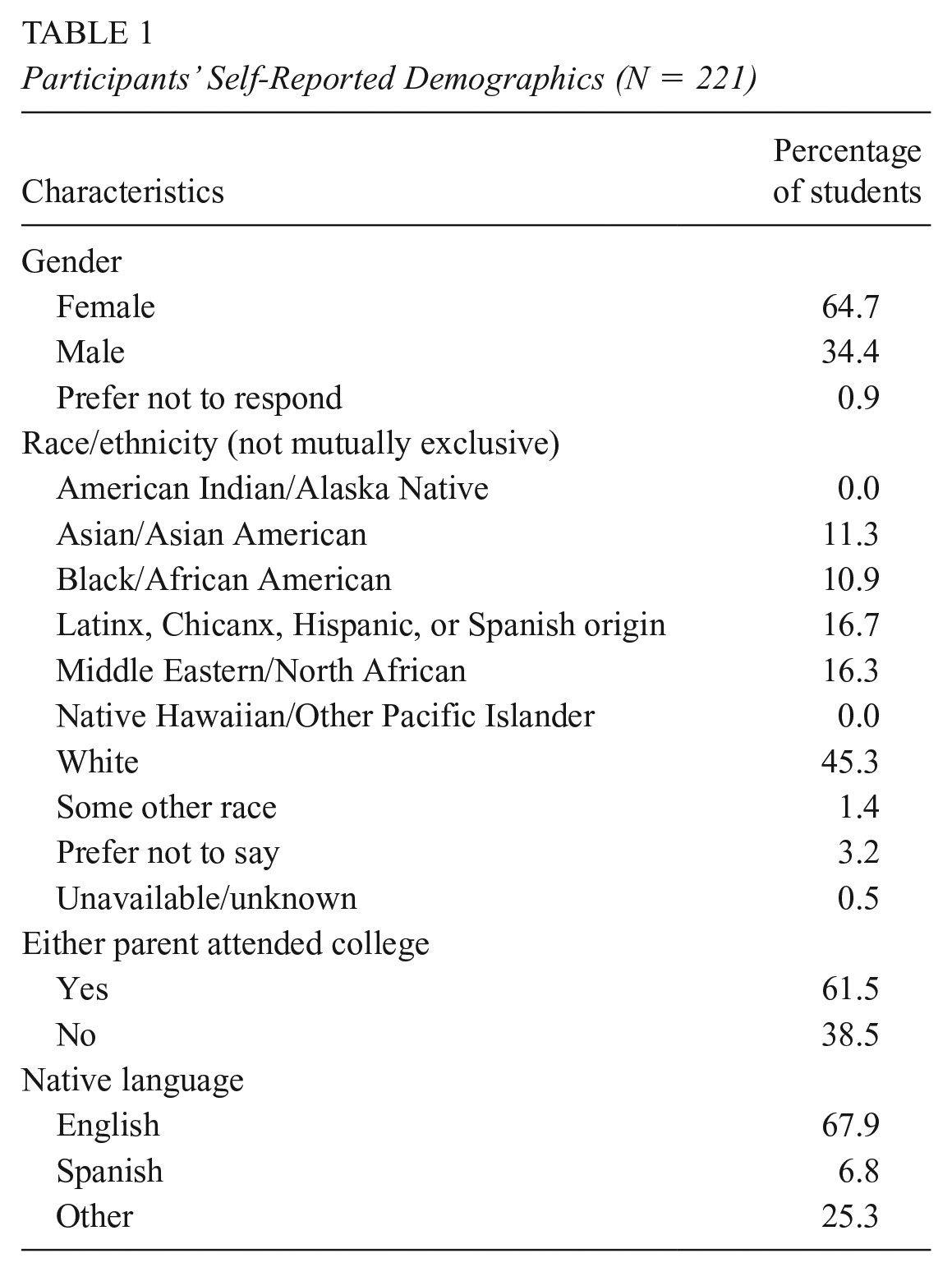

The participants were college students (N = 221; M_age = 19.6 years, SD = 4.5, range = 16–45) enrolled in 10 online sections of a general education civics course taught by seven instructors. The course was offered at a Hispanic-serving public institution located in the northeastern United States. The college has an open-enrollment policy, operates two campuses, and offers classes during the day, in the evening, and on weekends. Table 1 shows the self-reported demographics of the sample. About 40% of participants were first-generation students (i.e., neither parent attended college). Data were collected for purposes of course outcomes assessment, with the research protocol classified as exempt by the institutional review board. All participants completed pretest and posttest assessments.

Table 1. Participants’ Self-Reported Demographics (N = 221)

The Online SIFT Curriculum

Over the course of the semester, the students completed five online homework assignments, which were graded based on completion. The pretest (Assignment 1) was administered in Week 2 of a 15-week semester and served to assess the students’ baseline knowledge and skills in using lateral reading to evaluate online news items spanning a broad range of topics. The posttest (Assignment 5) was administered in Week 15 to assess gains. Materials were presented in the following order on the pretest and posttest: lateral reading problem set; demographic questions (pretest only); online news consumption questions; use of online news sources to find information about the COVID-19 pandemic; confidence in fact-checking COVID-19 news; questions about Wikipedia use, endorsement, instruction, and quality of information; and a reading comprehension test (pretest only). On the posttest, students were also asked about their familiarity with the sources of the online content used in the pretest and posttest and about the perceived helpfulness of the online assignments for fact-checking online news about COVID-19. All the materials are described below or are available in our openICPSR repository (Brodsky, Brooks, Scimeca, Galati et al., 2021).

Assignments 2, 3, and 4 were administered in Weeks 4, 9, and 13 of the semester, respectively. All three assignments provided SIFT instruction via videos and practice problems. Across problems, the students were asked to assess a variety of types of online content (i.e., social media posts, online articles, and images) and received feedback on how to use SIFT strategies to fact-check the information. In Assignment 2, the students watched a series of videos about the SIFT moves and answered questions about information from the videos. They were then guided through using the SIFT moves to investigate the trustworthiness of online content about COVID-19-related public health policy issues overseen by state governments. In Assignment 3, the students reviewed the SIFT moves and practiced using them to assess content related to controversial aspects of the 2020 U.S. Census. In Assignment 4, the students again reviewed the SIFT moves and evaluated online content about the effects of COVID-19 lockdowns on the economy and the effects of announcements about the COVID-19 vaccine on global and U.S. markets.

At the end of each assignment, the students were asked to locate three new articles related to the topic of the assignment and use the SIFT moves to evaluate their trustworthiness. For 6 of the 10 course sections, the online assignments included an additional, brief video and four true/false questions about using Wikipedia to fact-check online content. The five assignments are available in our openICPSR repository (Brodsky, Brooks, Scimeca, Galati et al., 2021). The assignments were administered via Qualtrics software. The instructors posted a link to each assignment to the learning management system.

Lateral Reading Problems

Two sets of lateral reading problems (Problem Sets A and B) were used to assess students’ use of lateral reading at pretest and posttest. The assignment of problem sets to pre- and posttest was counterbalanced across instructors; that is, three instructors’ sections completed Set A at pretest and Set B at posttest, while four instructors’ sections completed Set B at pretest and Set A at posttest. In each problem set, problems were presented in random order.

Each problem set comprised four problems exemplifying different types of online content: an image, a social media post, a website with medical news, and a news article. The online content used in Set A was identical to one of the problem sets in the original DPI curriculum (Brodsky, Brooks, Scimeca, Todorova et al., 2021). The online content used in Set B was updated with content from the Four Moves blog (Caulfield, 2017a), a repository of lateral reading prompts maintained by the author of the original DPI curriculum. The content of Set B was selected to represent the diversity of information sources that students may encounter online, while also matching the formats and content types in Set A. The trustworthiness of the content varied across problems within each set.

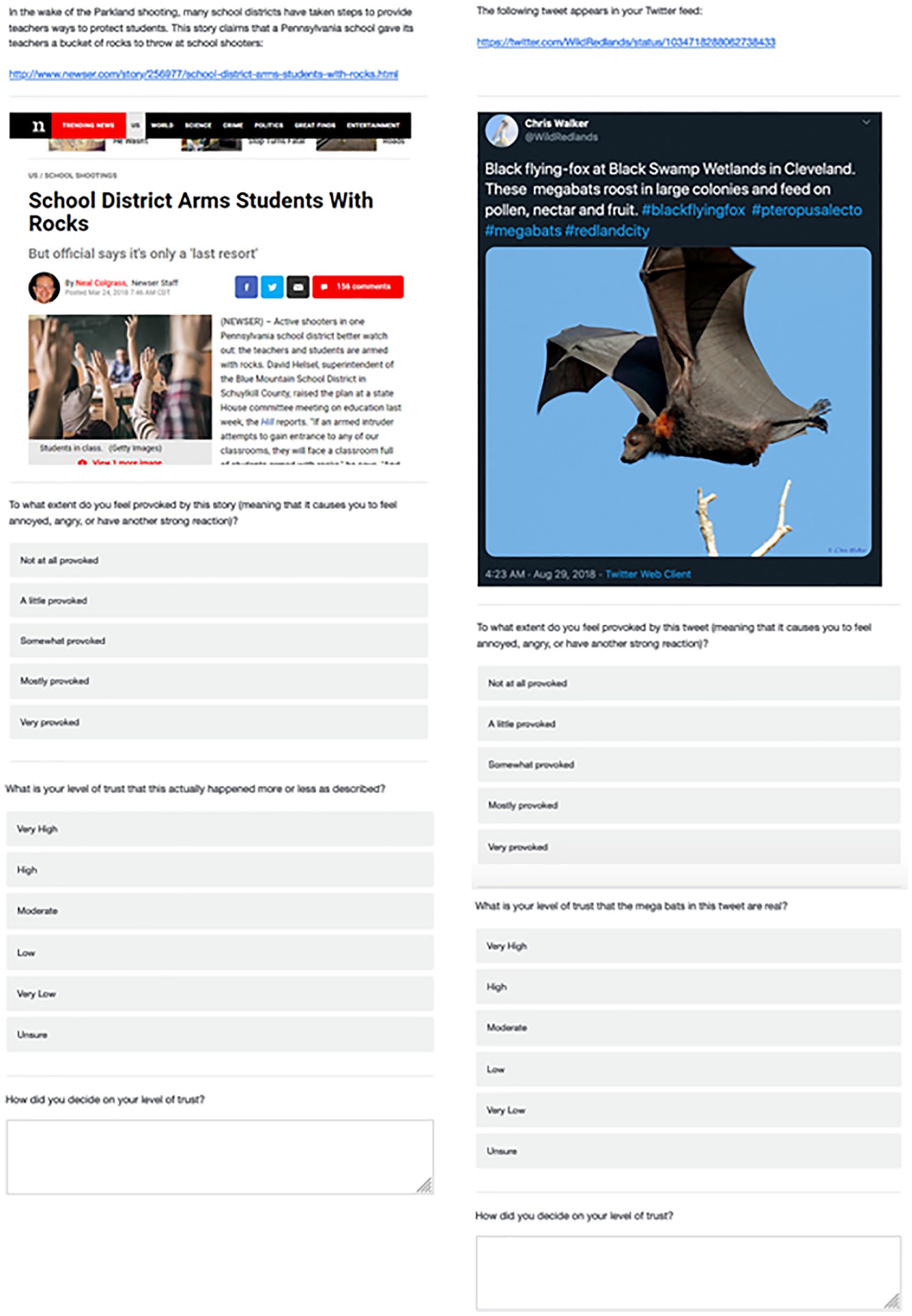

Students could use multiple SIFT moves to fact-check each problem. For example, for the Set A problem featuring an article published on newser.com titled “School District Arms Students With Rocks” (http://www.newser.com/story/256977/school-district-arms-students-with-rocks.html; left panel of Figure 1), students could use Wikipedia to investigate the source (Newser) or look for better coverage by searching keywords from the article’s headline (e.g., “school arms students rocks”) in Google or Google News. For the Set B problem consisting of a tweet claiming to show a photograph of megabats (right panel of Figure 1), students could choose to trace the photograph to get additional context by reverse searching the image using Google. Alternatively, they might try to find better coverage of the topic by typing keywords (e.g., “black flying-fox” or “megabat”) in Google or Google News.

Figure 1. Screenshots of two of the lateral reading problems.

For each problem, the students first rated how provoked they were by the content (1 = not at all provoked to 5 = very provoked) and then indicated their level of trust in the content using a Likert-type scale ranging from 1 = very low to 5 = very high or −9 = unsure. They were then prompted to explain their process for determining their level of trust by responding to the prompt “How did you decide on your level of trust?” using an open-response textbox. At the end of the posttest, the students were asked to indicate their familiarity with each of the sources used in the two problem sets (1 = not at all familiar, 2 = a little familiar, 3 = somewhat familiar, 4 = moderately familiar, and 5 = very familiar). Table 2 presents information about each content type, problem set, and online content used.

Table 2. Content Type, Online Content, and Correct Trust Assessment for Problem Sets A and B

Scoring of Lateral Reading Problems

We scored each open response for the presence or absence of lateral reading using an automated approach validated in our previous work (Brodsky, Brooks, Scimeca, Todorova et al., 2021). Each response was searched for keywords that indicated use of lateral reading. We sometimes used truncated versions of keywords to increase the likelihood of capturing different forms of a word (e.g., “googl” captured “Google,” “Googling,” and “Googled”). We used the same keywords as Brodsky, Brooks, Scimeca, Todorova et al. (2021). We also added a keyword for SIFT since the original list of keywords was developed prior to the use of the “SIFT” acronym in the fact-checking curriculum. A response was scored as “1” to indicate that the student reported reading laterally if it contained one or more of the target keywords; it was scored as “0” to indicate no lateral reading if the student did not use any of the keywords in the response.
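The keyword search described above can be sketched in a few lines of Python. This is only an illustration, not the authors' scoring script: the function name is hypothetical, and the stem list contains just the two keywords named in the text (“googl” and “sift”), whereas the full list is in the authors' repository.

```python
# Only "googl" and "sift" are named in the text; the complete keyword list
# is in the authors' repository and is NOT reproduced here.
KEYWORD_STEMS = ["googl", "sift"]


def score_lateral_reading(response, stems=KEYWORD_STEMS):
    """Return 1 if the response contains any keyword stem, else 0.

    Matching is case-insensitive and substring-based, so the truncated
    stem "googl" matches "Google", "Googling", and "Googled".
    """
    text = response.lower()
    return int(any(stem in text for stem in stems))


# Hypothetical open responses, written for illustration only.
responses = [
    "I Googled the author and checked other news sites.",
    "The page looked professional, so I trusted it.",
    "Used SIFT to investigate the source.",
]
scores = [score_lateral_reading(r) for r in responses]
print(scores)
```

Substring matching keeps the sketch simple but can produce false positives (for example, “sifted through the article”), which is one reason a manual check of the automated scores is useful.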

To assess the reliability of the automated scoring procedure, we first used the keywords to score problem sets from a previous semester that had been manually scored for the presence or absence of lateral reading. The only difference in the content of the problem sets between semesters was that the tweet about megabats in Problem Set B in the current study came from a different Twitter account. There was 93.5% agreement between the automated and manual scoring; Cohen’s kappa = .72, which is considered substantial (Landis & Koch, 1977). Having established adequate reliability for the automated scoring procedure, we used the keywords to score the responses for the current study and also manually scored all responses. In the current study, there was 94.1% agreement between the automated and manual scoring (Cohen’s kappa = .80), which is comparable with our previous work (Brodsky, Brooks, Scimeca, Todorova et al., 2021). All disagreements were resolved in favor of the manual coding.
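To make the two reliability statistics concrete, the sketch below computes percent agreement and Cohen's kappa for two sets of binary scores. The data are invented for illustration and do not reproduce the study's values; the function names are hypothetical.

```python
def percent_agreement(a, b):
    """Proportion of items on which two raters assign the same score."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a, b):
    """Cohen's kappa: observed agreement corrected for chance agreement.

    Undefined (division by zero) when chance agreement equals 1,
    for example when both raters give every item the same score.
    """
    n = len(a)
    p_observed = percent_agreement(a, b)
    labels = set(a) | set(b)
    # Chance agreement: product of each rater's marginal proportion per label.
    p_chance = sum((a.count(k) / n) * (b.count(k) / n) for k in labels)
    return (p_observed - p_chance) / (1 - p_chance)


# Made-up scores (1 = lateral reading, 0 = none) for ten responses.
automated = [1, 0, 0, 1, 0, 0, 1, 0, 0, 0]
manual = [1, 0, 0, 1, 0, 0, 0, 0, 0, 0]

agreement = percent_agreement(automated, manual)
kappa = cohens_kappa(automated, manual)
print(round(agreement, 3), round(kappa, 3))
```

Because most responses are scored “0”, raw agreement is high even by chance, which is why kappa is reported alongside it.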

In addition to scoring the responses for evidence that the students used the skill of lateral reading, we also used a more conservative scoring scheme to determine whether or not the students used lateral reading and accurately assessed the trustworthiness of the content (see also Brodsky, Brooks, Scimeca, Todorova et al., 2021). To do this, we scored responses on the Likert-type scale asking about the trustworthiness of the online content as “0” for incorrect trust assessment and “1” for correct trust assessment (see Table 2). We then combined the keyword and trust assessment scores such that “0” indicated no use of lateral reading or use of lateral reading but with an inaccurate trust assessment and “1” indicated use of lateral reading with an accurate trust assessment.
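The conservative score can be sketched as a logical AND of the two binary scores. The set of ratings counted as a correct trust assessment for each problem is defined in Table 2 and is not reproduced here, so the `acceptable` sets below are placeholders chosen for illustration, as are the function names.

```python
UNSURE = -9  # code used for the "unsure" option on the trust scale


def trust_correct(rating, acceptable):
    """Return 1 if the trust rating is in the problem's set of correct ratings.

    `acceptable` is a placeholder for the correct assessment given in Table 2.
    An "unsure" response is never in that set, so it scores 0.
    """
    return int(rating in acceptable)


def combined_score(lateral, rating, acceptable):
    """Return 1 only if the student read laterally AND rated trust correctly."""
    return int(lateral == 1 and trust_correct(rating, acceptable) == 1)


# Assumed example: for an untrustworthy item, suppose ratings 1-2 count as correct.
low_trust_ok = {1, 2}
print(combined_score(1, 2, low_trust_ok))       # lateral reading, correct trust
print(combined_score(1, 5, low_trust_ok))       # lateral reading, wrong trust
print(combined_score(0, 1, low_trust_ok))       # correct trust, no lateral reading
print(combined_score(1, UNSURE, low_trust_ok))  # lateral reading, unsure
```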

Confidence in Fact-Checking COVID-19 Pandemic News

The students responded to the question “If you wanted to check the accuracy of news that you saw online about the COVID-19 pandemic, how confident are you that you would know what steps to take?” on a Likert-type scale of 1 = not at all confident, 2 = a little confident, 3 = somewhat confident, 4 = mostly confident, and 5 = very confident. This item was adapted from a similar item asked by the Pew Research Center as part of a survey conducted in April 2020 (Gottfried, 2020).

Wikipedia Use

The students responded to the following questions about their use of Wikipedia on a Likert-type scale of 1 = very unlikely to 5 = very likely: “How likely are you to use Wikipedia to look up information for academic purposes (e.g., for a school assignment)?” and “How likely are you to use Wikipedia to look up information for nonacademic purposes (e.g., for personal research)?”

Wikipedia Endorsement

The students responded to the question “How likely are you to recommend Wikipedia as a source of information to one of your classmates?” on a Likert-type scale of 1 = very unlikely to 5 = very likely.

Wikipedia Instruction

The students indicated the extent to which they agreed with the statement “My teachers have discouraged me from using Wikipedia as an information source” on a Likert-type scale of 1 = strongly disagree to 5 = strongly agree.

Wikipedia Quality of Information

The students indicated the extent to which they agreed with nine statements (three reverse scored) about the quality of information on Wikipedia on a Likert-type scale of 1 = Strongly disagree to 5 = Strongly agree. Scale items and reliability analyses are presented in the Results section.
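For readers unfamiliar with reverse scoring, the sketch below shows the usual transformation on a 1–5 scale (a rating x becomes 6 − x) before items are combined. Which three items were reversed, and whether the authors averaged or summed the items, is not stated in this section; the item positions and the use of a mean are assumptions made for illustration.

```python
def reverse_score(rating, low=1, high=5):
    """Flip a rating on a low..high scale (on 1-5: 1<->5, 2<->4, 3 stays 3)."""
    return low + high - rating


def scale_mean(responses, reversed_items):
    """Mean of item ratings after flipping the reverse-scored items.

    `reversed_items` holds the zero-based positions of reverse-scored items.
    """
    adjusted = [
        reverse_score(r) if i in reversed_items else r
        for i, r in enumerate(responses)
    ]
    return sum(adjusted) / len(adjusted)


# Made-up ratings for nine items; positions 2, 4, and 8 are ASSUMED reversed.
ratings = [4, 5, 2, 4, 1, 3, 4, 5, 2]
composite = scale_mean(ratings, reversed_items={2, 4, 8})
print(round(composite, 2))
```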

Reading Comprehension

The students read Passage C from the June 2018 New York State Regents High School Examination in English language arts and answered six multiple-choice comprehension questions from the exam (New York State Education Department, 2018). This passage was excerpted from a 2012 article in The Wall Street Journal about fostering socioeconomic antifragility in response to unexpected events (Taleb, 2012, as cited in New York State Education Department, 2018).

Perceived Helpfulness of the Curriculum

The students indicated their agreement with five statements (two reverse scored) about the perceived helpfulness of the online SIFT curriculum for fact-checking online news about the COVID-19 pandemic. The response scale ranged from 1 = Strongly disagree to 5 = Strongly agree. Scale items and reliability analyses are presented in the Results.

Results

The results are organized by research questions. All the analyses were run in R (Version 3.6.2; R Core Team, 2018; RStudio Team, 2016).

Preliminary Analyses for Changes in Lateral Reading

As a first step in evaluating whether the curriculum increased the use of lateral reading to fact-check online information from pretest to posttest, we conducted preliminary analyses to determine the factors associated with the use of lateral reading at pretest and posttest. The preliminary analyses examined the possible effects of the instructor, curricular emphasis on the use of Wikipedia (i.e., inclusion of the additional video and four multiple-choice questions), problem set used at pre- and posttest (i.e., Set A or B), and problem content type (i.e., image, tweet, medical news, or news article). Within each problem set, we also looked at whether lateral reading was associated with student ratings of provocative content and familiarity with the source of the online content used in each problem. We conducted all the preliminary analyses twice, once with reported use of lateral reading as the dependent variable and once with reported use of lateral reading and accurate assessment of the trustworthiness of the content as the dependent variable. All these preliminary analyses are available in our openICPSR repository (Brodsky, Brooks, Scimeca, Galati et al., 2021).

Table 3 presents a breakdown of students who reported reading laterally (top panel) and students who read laterally with an accurate trust assessment (bottom panel) on each problem at pretest and posttest. The score for using lateral reading and accurately assessing trustworthiness is more conservative than the lateral reading score because it takes into account the accuracy of a student’s response on the Likert-type scale asking about the trustworthiness of the online content. Only 12.2% of students reported reading laterally on one or more problems at pretest, and only 9.5% read laterally and made an accurate trust assessment on at least one pretest problem. None of the preliminary analyses were significant in accounting for the variation in lateral reading at pretest.

Table 3. Percentage of Students Who Read Laterally for Each Problem and Percentage Who Read Laterally and Accurately Assessed the Trustworthiness of the Information for Each Problem by Problem Set and Time (N_AB = 126, N_BA = 95, N = 221)

In contrast, 52.5% of students reported reading laterally on at least 1 problem at posttest, with 48.9% reporting lateral reading on at least one more problem at posttest than at pretest. On average, the students reported reading laterally on 1.30 (SD = 1.53) of the 4 posttest problems. Lateral reading at posttest varied by problem set (see Table 3, top panel) and instructor. Additionally, students’ familiarity with the sources of problems in Set B was related to their use of lateral reading, though this finding should be interpreted with caution since the image problem in Set B did not include a source and all the students were lacking familiarity ratings for this problem. No other preliminary analyses were significant.

Posttest results were similar with lateral reading and accurate trust assessment as the dependent variable: 46.6% of students reported reading laterally and accurately assessing the trustworthiness of at least 1 problem, and 44.3% made gains of at least 1 problem from pretest to posttest. On average, students reported reading laterally and accurately assessing the trustworthiness of the information on 0.91 (SD = 1.18) of the 4 problems at posttest. Matching the previous set of analyses, lateral reading with an accurate trust assessment at posttest varied by problem set (see Table 3, bottom panel), instructor, and familiarity (again, only for Set B). Additionally, use of lateral reading with an accurate trust assessment varied by content type, such that use of lateral reading with an accurate trust assessment was more likely to occur for the medical news than for the image. No other preliminary analyses were significant.

Since problem set was completely confounded with instructor (i.e., Instructor 2’s students received the easier Set B at posttest, while Instructor 3’s and Instructor 6’s students received the harder Set A at posttest), we could not retain both variables as predictors in our multivariate analyses. We chose to retain the problem set as the predictor since the online implementation of the curriculum was designed to reduce instructor effects. We also did not include familiarity with a problem’s source as a predictor since the data were incomplete for the problems in Set B. All of the nonsignificant factors in the preliminary analyses were excluded from further analysis.

Online Assignment Completion

Most of the students (72.0%) completed all three instructional homework assignments (Assignments 2–4). Of the remaining students, 3.6% completed between two and three assignments (i.e., partial completion of an assignment), 14.9% completed two assignments, 1.4% completed between one and two assignments, 5.4% completed one assignment, and 2.7% completed no assignments. On average, the students completed 2.62 assignments (SD = 0.71). Given the different levels of engagement with online homework, we included the number of completed assignments (range 0–3) as a predictor in the models.

Students’ Reading Comprehension

The students’ performance on the pretest measure of reading comprehension varied widely. On average, they answered 3.75 (SD = 1.58, Mdn = 4) out of 6 questions correctly, with one student’s score imputed using the mean. Given the diversity of the students’ reading comprehension skills, we included the number of reading comprehension questions answered correctly (range 0–6) as a predictor in the models.

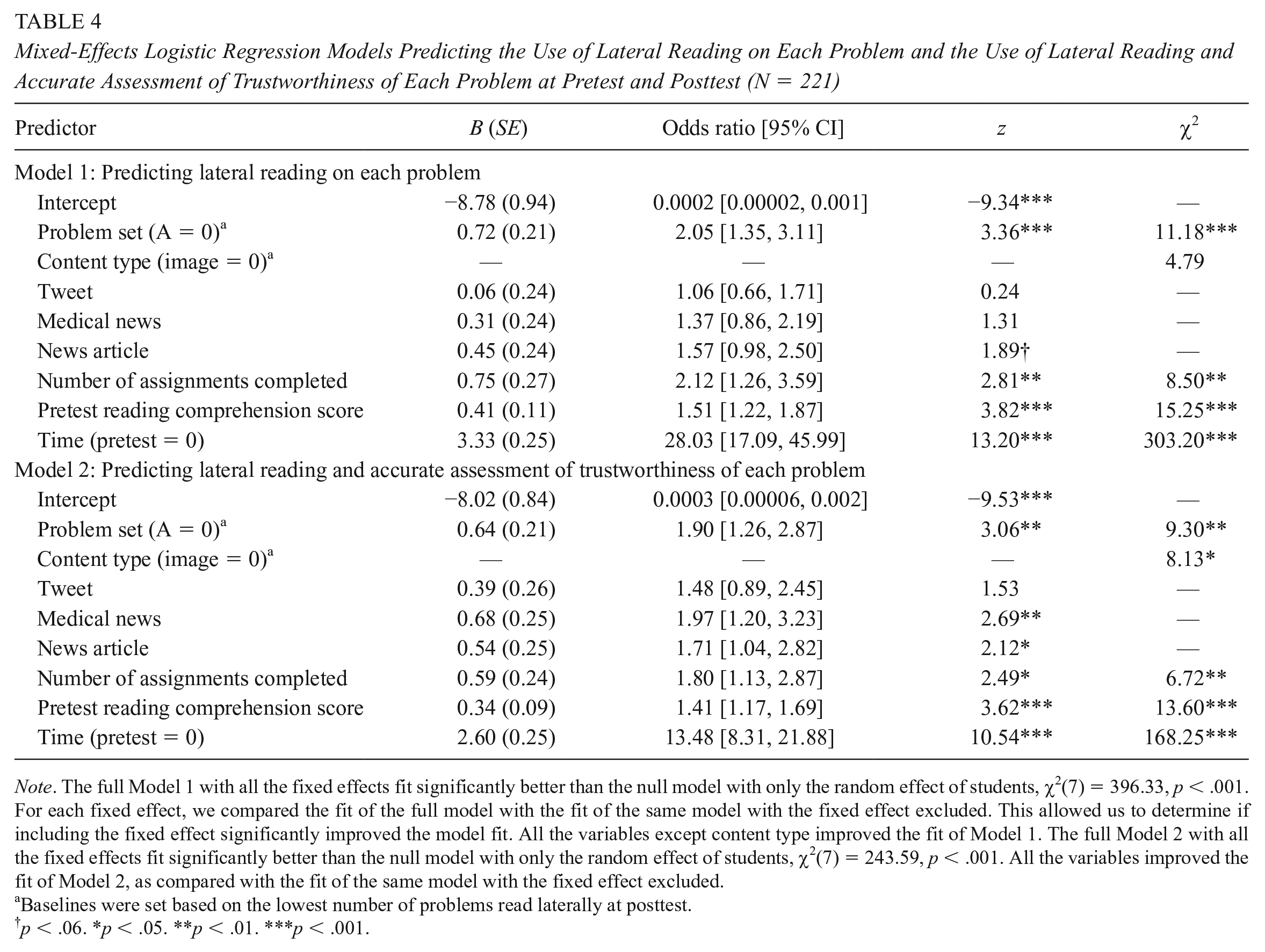

Changes in Lateral Reading

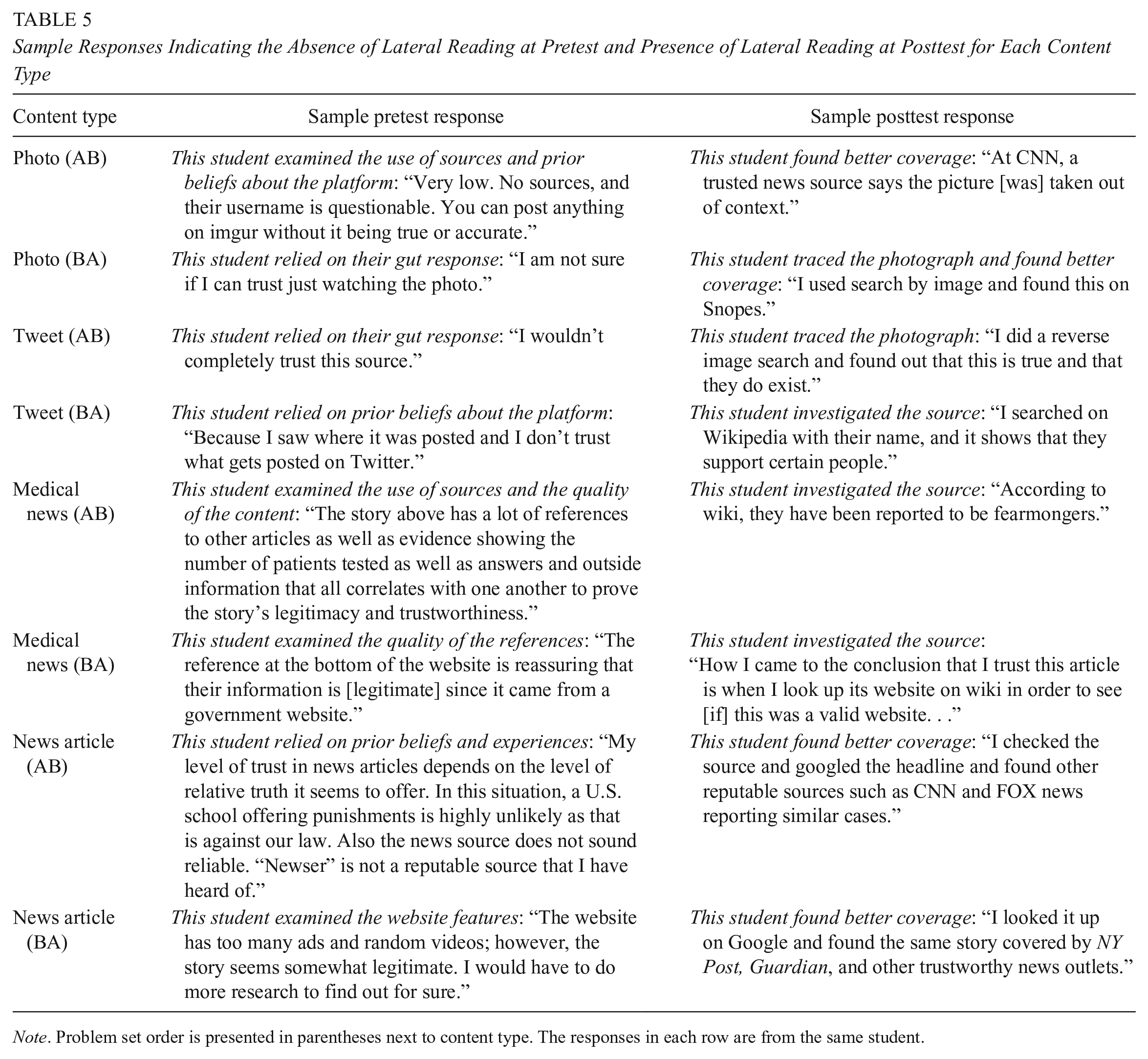

To determine whether the students improved from pretest to posttest in their reported use of lateral reading to fact-check online information, we ran mixed-effects logistic regression models. The models were fit with a binomial family and logit link using the glmer function of the lme4 package (Bates et al., 2014) in R (R Core Team, 2018; RStudio Team, 2016). In Model 1, the dependent variable (coded as “1” or “0” for each problem) indicated whether or not the student reported reading laterally to determine the level of trustworthiness (see Table 4, top panel). The model included an intercept-only random effect for students. The fixed effects were the problem set (Set A vs. Set B), content type (image, tweet, medical news, or news article), time (pretest vs. posttest), number of online assignments completed (range 0–3), and pretest reading comprehension score (range 0–6). Controlling for the problem set and content type, the students were more likely to read laterally on the posttest than on the pretest. Students who completed more assignments and those with higher reading comprehension scores were also more likely to have read laterally. Table 5 presents sample responses for the problems to help illustrate the dearth of lateral reading at pretest and the diversity of SIFT moves used by the students at posttest.
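One way to write out the model just described is given below, as a sketch under stated assumptions. Here i indexes students and j indexes problems; the variable names are ours, and content type is assumed to enter as three dummy-coded indicators against a baseline category.

```latex
\operatorname{logit} \Pr(y_{ij} = 1)
  = \beta_0
  + \beta_1\,\mathrm{Set}_{ij}
  + \sum_{k=1}^{3} \beta_{2k}\,\mathrm{Type}_{ijk}
  + \beta_3\,\mathrm{Time}_{ij}
  + \beta_4\,\mathrm{Assignments}_{i}
  + \beta_5\,\mathrm{Reading}_{i}
  + u_i,
\qquad u_i \sim \mathcal{N}(0, \sigma_u^2)
```

The random intercept u_i accounts for the fact that each student contributes several problems at both time points. The seven fixed-effect slopes (one for problem set, three for content type, and one each for time, assignments, and reading comprehension) match the 7 degrees of freedom reported for the comparison with the null model.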

Table 4. Mixed-Effects Logistic Regression Models Predicting the Use of Lateral Reading on Each Problem and the Use of Lateral Reading and Accurate Assessment of Trustworthiness of Each Problem at Pretest and Posttest (N = 221)

Note. The full Model 1 with all the fixed effects fit significantly better than the null model with only the random effect of students, χ²(7) = 396.33, p < .001. For each fixed effect, we compared the fit of the full model with the fit of the same model with the fixed effect excluded. This allowed us to determine if including the fixed effect significantly improved the model fit. All the variables except content type improved the fit of Model 1. The full Model 2 with all the fixed effects fit significantly better than the null model with only the random effect of students, χ²(7) = 243.59, p < .001. All the variables improved the fit of Model 2, as compared with the fit of the same model with the fixed effect excluded.

Baselines were set based on the lowest number of problems read laterally at posttest.

p < .06. *p < .05. **p < .01. ***p < .001.
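The model comparisons described in the note are presumably likelihood-ratio tests between nested models. As a sketch in notation introduced here ($\ell$ for a maximized log-likelihood, $p$ for the number of estimated parameters), the test statistic and its degrees of freedom are:

$$
\chi^2 = -2\,\bigl[\ell_{\text{null}} - \ell_{\text{full}}\bigr], \qquad df = p_{\text{full}} - p_{\text{null}} = 1 + 3 + 1 + 1 + 1 = 7
$$

The count of 7 matches the reported χ²(7) if content type, with four levels, is assumed to contribute three dummy-coded parameters, alongside one parameter each for problem set, time, assignments completed, and reading comprehension.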

Table 5. Sample Responses Indicating the Absence of Lateral Reading at Pretest and Presence of Lateral Reading at Posttest for Each Content Type

Note. Problem set order is presented in parentheses next to content type. The responses in each row are from the same student.

In Model 2, the dependent variable (coded as “1” or “0”) indicated whether the student read laterally and accurately assessed trustworthiness on each problem (see Table 4, bottom panel). Controlling for the problem set and content type, students were more likely to have read laterally and reached an accurate trust assessment on the posttest than on the pretest. Additionally, the number of assignments completed and reading comprehension scores were associated with using lateral reading and accurately assessing trust.

Changes in Wikipedia Use, Endorsement, and Knowledge

On the pretest, 77.8% of students Agreed or Strongly agreed with the statement “My teachers have discouraged me from using Wikipedia as an information source.” Given the curriculum’s emphasis on using Wikipedia to learn more about a source, we examined whether the students made gains in their self-reported Wikipedia use and endorsement, and knowledge of Wikipedia quality from pretest to posttest. Table 6 presents the frequencies of responses at pretest and posttest to the two items assessing likelihood of Wikipedia use and the one item assessing Wikipedia endorsement. Wilcoxon signed rank tests indicated that the students self-reported a greater likelihood of using Wikipedia to look up information for academic and nonacademic purposes at posttest than at pretest. They were also more likely to recommend Wikipedia as an information source to one of their classmates at posttest (see Table 6).

Table 6. Percentage of Students Who Indicated Each Response for Wikipedia Use and Endorsement by Time (N = 221)

Note. For each question, each row sums to 100%, with slight deviations due to rounding.

p < .001.

To further examine these changes, we ran a series of mixed-effects ordinal logistic regression models with a logit link using the clmm function of the ordinal package (Christensen, 2019) in R (R Core Team, 2018; RStudio Team, 2016).⁴ The models included an intercept-only random effect for students. Our fixed effects were time (pretest vs. posttest), number of online assignments completed (range 0–3), and pretest reading comprehension score (range 0–6).
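A cumulative link mixed model with a logit link, in the parameterization documented for the ordinal package, can be sketched as below. The symbols are notation introduced here: $Y_{ij}$ is the ordinal response of student $j$ at time point $i$, $\theta_k$ are the thresholds between adjacent response categories, and $u_j$ is the student-level random intercept.

$$
\operatorname{logit}\Pr(Y_{ij} \le k) = \theta_k - \bigl(\beta_1\,\mathrm{Time}_{ij} + \beta_2\,\mathrm{Assignments}_{j} + \beta_3\,\mathrm{ReadComp}_{j} + u_j\bigr), \qquad u_j \sim \mathcal{N}(0, \sigma_u^2)
$$

With this sign convention, $\exp(\beta) > 1$ corresponds to higher odds of responding in a higher category, which is how the odds ratios for these models can be read.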

Model 1 used students’ self-reported likelihood of using Wikipedia to look up information for academic purposes as the ordinal dependent variable. The students reported a higher likelihood of using Wikipedia for academic purposes at posttest than at pretest (odds ratio [95% CI] = 8.50 [5.45, 13.27], z = 9.42, p < .001). Students who completed more of the online assignments also reported a higher likelihood of using Wikipedia for academic purposes (odds ratio [95% CI] = 1.53 [1.03, 2.27], z = 2.09, p = .036). Model 2 used students’ self-reported likelihood of using Wikipedia to look up information for nonacademic purposes as the ordinal dependent variable. The students reported a higher likelihood of using Wikipedia for nonacademic purposes at posttest than at pretest (odds ratio [95% CI] = 3.32 [2.26, 4.87], z = 6.14, p < .001). Students with higher reading comprehension at pretest also reported a higher likelihood of using Wikipedia for nonacademic purposes (odds ratio [95% CI] = 1.31 [1.10, 1.57], z = 2.96, p = .003). Model 3 used students’ self-reported likelihood of recommending Wikipedia as a source of information to one of their classmates as the ordinal dependent variable. The students reported a higher likelihood of recommending Wikipedia at posttest than at pretest (odds ratio [95% CI] = 8.49 [5.40, 13.36], z = 9.26, p < .001). The other variables in the model were not significant.
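The reported odds ratios, confidence intervals, and z statistics are linked by simple arithmetic if the intervals are Wald intervals of the form exp(b ± 1.96 × SE). That form is an assumption here, not something the text states. The sketch below recovers the log-odds coefficient and its standard error from the reported values for the effect of time and recomputes z. The function name `wald_from_odds_ratio` is hypothetical and used only for illustration.

```python
import math


def wald_from_odds_ratio(odds_ratio, ci_low, ci_high, z_crit=1.96):
    """Recover (b, SE, z) from an odds ratio and its 95% confidence interval.

    Assumes a Wald interval, exp(b +/- z_crit * SE), so the interval is
    symmetric around b on the log-odds scale.
    """
    b = math.log(odds_ratio)
    se = (math.log(ci_high) - math.log(ci_low)) / (2 * z_crit)
    return b, se, b / se


# Effect of time (posttest vs. pretest) as reported in the text:
# (odds ratio, CI lower bound, CI upper bound, reported z)
reported = {
    "academic use": (8.50, 5.45, 13.27, 9.42),
    "nonacademic use": (3.32, 2.26, 4.87, 6.14),
    "recommend to classmate": (8.49, 5.40, 13.36, 9.26),
}

for label, (or_value, low, high, z_reported) in reported.items():
    b, se, z = wald_from_odds_ratio(or_value, low, high)
    print(f"{label}: b = {b:.2f}, SE = {se:.2f}, z = {z:.2f} (reported {z_reported})")
```

The recomputed z values land within a few hundredths of the reported ones, so the reported quantities are mutually consistent under the Wald assumption. The small gaps are what rounding of the published odds ratios and interval bounds would produce.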

Table 7 presents descriptives for the nine-item scale assessing knowledge of Wikipedia quality at pretest and posttest. The nine-item scale showed adequate internal consistency (Cronbach’s α = .78). However, two items (“Wikipedia is a biased source of information” and “Wikipedia articles express the writer’s point of view and are not neutral”) had low item-rest correlations (.26 and .10, respectively). After removing these two items, reliability increased to α = .82, and all item-rest correlations were greater than .30 (Field et al., 2012). We averaged the students’ responses to the seven-item scale to create a single Wikipedia knowledge score.
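The item screening described above rests on two quantities: Cronbach's α for the scale, and the item-rest correlation of each item with the sum of the remaining items. The following is a minimal sketch of both. The data are hypothetical (three items, five respondents) and the function names are illustrative. It is not the authors' analysis, which was presumably run in R.

```python
from math import sqrt
from statistics import mean, variance


def cronbach_alpha(items):
    """items: list of k lists, each holding one item's scores for the same respondents."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(variance(scores) for scores in items)
    return (k / (k - 1)) * (1 - item_variance_sum / variance(totals))


def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


def item_rest_correlation(items, index):
    """Correlation between one item and the sum of all other items."""
    rest = [sum(scores) - scores[index] for scores in zip(*items)]
    return pearson(items[index], rest)


# Hypothetical responses: the third item does not track the other two.
items = [
    [1, 2, 3, 4, 5],
    [2, 2, 3, 5, 5],
    [5, 1, 4, 2, 3],
]

print("alpha, all items:", round(cronbach_alpha(items), 2))
print("item-rest r, item 3:", round(item_rest_correlation(items, 2), 2))
print("alpha, item 3 dropped:", round(cronbach_alpha(items[:2]), 2))
```

In this toy example the third item has an item-rest correlation below the .30 threshold, and removing it raises α. This mirrors the logic of dropping the two low-correlating items from the nine-item scale.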

Table 7. Percentage of Students Who Indicated Each Response for Knowledge of Wikipedia Quality by Time (N = 221)

Note. For each item, each row sums to 100%, with slight deviations due to rounding.

Items dropped due to low item-rest correlation.

We ran a mixed-effects linear regression model using the lmer function of the lme4 package (Bates et al., 2014) in R (R Core Team, 2018; RStudio Team, 2016).⁵ Our dependent variable was students’ mean score on the scale assessing their knowledge of Wikipedia quality. We included an intercept-only random effect for students. Our fixed effects were time (pretest vs. posttest), number of online assignments completed (range 0–3), and pretest reading comprehension score (range 0–6). The students’ knowledge of Wikipedia quality was more accurate at posttest than at pretest, B (SE) = 0.70 (0.05), t = 13.48, p < .001. Both the number of online assignments completed, B (SE) = 0.12 (0.05), t = 2.45, p = .015, and pretest reading comprehension scores, B (SE) = 0.06 (0.02), t = 2.79, p = .006, were positively associated with accurate knowledge about Wikipedia quality.

Changes in Confidence in Fact-Checking Online COVID-19 News

Table 8 shows students’ self-reported confidence in their ability to fact-check online news about the COVID-19 pandemic. At the beginning of the semester, only 27.6% of students were mostly or very confident in their ability to fact-check online news about the COVID-19 pandemic. However, at the end of the semester, 55.2% of students reported being mostly or very confident in their fact-checking ability.

Table 8. Percentage of Students Who Indicated Each Response for Confidence in Knowing the Steps to Take to Check the Accuracy of Online News About the COVID-19 Pandemic at Pretest and Posttest (N = 221)

Note. For each question, each column sums to 100%, with slight deviations due to rounding.

p < .001.

To examine the differences in self-reported confidence in fact-checking online news about the COVID-19 pandemic, we ran a mixed-effects ordinal logistic regression model with a logit link using the clmm function of the ordinal package (Christensen, 2019) in R (R Core Team, 2018; RStudio Team, 2016).⁶ The model included an intercept-only random effect for students and fixed effects of time (pretest vs. posttest), number of online assignments completed (range 0–3), and pretest reading comprehension score (range 0–6). The students’ confidence was higher at posttest than at pretest (odds ratio [95% CI] = 4.92 [3.29, 7.36], z = 7.75, p < .001). Pretest reading comprehension score was a significant positive predictor of confidence (odds ratio [95% CI] = 1.26 [1.07, 1.48], z = 2.79, p = .005), whereas the number of online assignments completed was unrelated to confidence (odds ratio [95% CI] = 1.06 [0.75, 1.51], z = 0.35, p = .729).

Perceived Helpfulness of the Curriculum for Fact-Checking COVID-19 News

On the posttest, we asked the students to report on the helpfulness of the SIFT curriculum for fact-checking online news about the COVID-19 pandemic. Table 9 presents posttest descriptives for items assessing perceived helpfulness of the curriculum. As the scale showed adequate internal consistency (Cronbach’s α = .78), the students’ responses to the five items were averaged to create a single perceived helpfulness score. On average, the students reported that they agreed with statements about the curriculum’s helpfulness (M = 3.76, SD = 0.64 on a scale of 1 = strongly disagree to 5 = strongly agree).

Table 9. Percentage of Students Who Indicated Each Response for Perceived Helpfulness of the Online SIFT Curriculum for Fact-Checking Online News About the COVID-19 Pandemic at Posttest (N = 221)

Note. For each item, each row sums to 100%, with slight deviations due to rounding. SIFT = Stop, Investigate the source, Find better coverage, and Trace claims, quotes, and media to the original context.

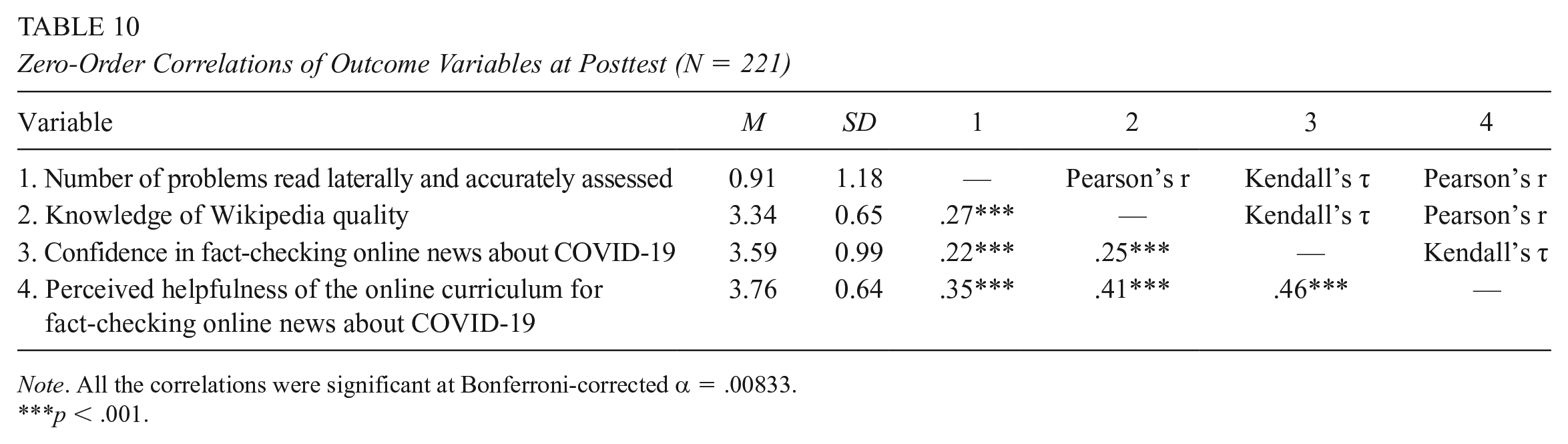

Table 10 presents zero-order correlations between the outcome variables assessed at posttest: number of problems read laterally and accurately assessed, knowledge of Wikipedia quality, confidence in fact-checking COVID-19 online news, and ratings of the perceived helpfulness of the online curriculum for fact-checking COVID-19 online news. All of the variables were significantly associated, with the magnitude of the correlations in the small-to-moderate range.

Table 10. Zero-Order Correlations of Outcome Variables at Posttest (N = 221)

Note. All the correlations were significant at Bonferroni-corrected α = .00833.

p < .001.
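The corrected threshold of .00833 in the table note can be reproduced with a short calculation. The sketch assumes a conventional family-wise α of .05, which the note does not state but which is consistent with the reported value, and counts one test per pair of the four posttest outcomes.

```python
from math import comb

n_variables = 4       # posttest outcomes listed in the text
family_alpha = 0.05   # assumption: conventional family-wise alpha

# One correlation per unordered pair of variables.
n_tests = comb(n_variables, 2)

# Bonferroni correction: divide the family-wise alpha by the number of tests.
bonferroni_alpha = family_alpha / n_tests

print(n_tests, round(bonferroni_alpha, 5))  # prints: 6 0.00833
```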

Discussion

Over the course of the Fall 2020 semester, college students in an online general education civics course completed asynchronous homework assignments to improve their information fact-checking skills. The instruction introduced the lateral reading strategies used by expert fact-checkers using the SIFT mnemonic: Stop, Investigate the source, Find better coverage, and Trace claims, quotes, and media to the original context (Caulfield, n.d; 2019). We examined whether the online SIFT curriculum increased the students’ reported use of lateral reading to fact-check online content and their use, endorsement, and knowledge of Wikipedia. In light of the COVID-19 infodemic (WHO, 2020b), we also assessed the curriculum’s impact on the students’ confidence in fact-checking online news about the COVID-19 pandemic and the perceived helpfulness of the curriculum.

In keeping with previous assessments of college students’ lateral reading (Brodsky, Brooks, Scimeca, Todorova et al., 2021; McGrew et al., 2018; Wineburg et al., 2020), we found that students rarely read laterally before receiving direct instruction (i.e., only 12.2% of students read laterally on at least one of the four pretest problems). However, as in previous in-person and online intervention studies (Breakstone et al., 2021; Brodsky, Brooks, Scimeca, Todorova et al., 2021; McGrew et al., 2019), the students made gains after receiving direct instruction in this skill, with more than half (52.5%) reading laterally on at least one problem at posttest. Similar gains were found on the more conservative measure of reading laterally and reaching an accurate trust assessment (i.e., from 9.5% of students at pretest to 46.6% at posttest). It is possible that the lower rate of reading laterally and reaching an accurate trust assessment, as compared with lateral reading alone, may be due to students reporting their initial trust assessment on the Likert-type scale and then failing to update their assessment after reading laterally. Additionally, psychological factors, such as confirmation bias (Nickerson, 1998), may have led students to draw conclusions that reinforce their existing beliefs even if they were successful in finding alternative perspectives. In the context of scientific issues, Sinatra and Lombardi (2020) argued that students need to be taught both how to evaluate the credibility of online information sources and how to reexamine their own judgments about the plausibility of the claims and evidence presented by those sources. They wrote, “We teach students to question the motivations and intentions of the information source, but what about their own motivations and intentions as they search?” (p. 128).

Overall, the current results are encouraging for the use of online, asynchronous assignments to teach college students how to read laterally. At the same time, the percentage of students who read laterally and made accurate trust assessments was lower in this study than in the in-person implementation of the DPI curriculum (46.6% vs. 61.0% of students at posttest; Brodsky, Brooks, Scimeca, Todorova et al., 2021). Additionally, as in the previous study, even after completing the online curriculum, the students did not use the lateral reading strategies consistently across the posttest problems. We interpret this finding in the context of skill acquisition and development of expertise. Acquiring a skill is initially an effortful, conscious process, which may eventually become automated but only through prolonged training and practice (Ericsson, 2006; Healy et al., 2014). For lateral reading strategies to become habitual, students need to apply what they have learned in the homework assignments to fact-check the online information they encounter in their daily lives. Unfortunately, the only information we have on the students’ application of the curriculum was the number of assignments completed, which was associated with lateral reading. It is also possible that we underestimated the extent of the students’ lateral reading, as our assessments relied on their written descriptions of how they determined the trustworthiness of the online information, and these descriptions varied in detail and clarity. Future studies should screen-record students as they engage in fact-checking information to gain insight into their use of strategies (see Brodsky et al., 2020; Wineburg & McGrew, 2017), though such an approach may not yet be viable at scale. Future iterations should also consider how best to leverage the synchronous portion of courses (whether in-person or online) to further reinforce and extend the use of lateral reading skills.

Given that the online curriculum was largely text-based, we included a brief reading comprehension test in the pretest and found that the measure predicted the use of lateral reading strategies at posttest. In light of this finding, we encourage researchers to include measures of reading ability and associated factors—for example, vocabulary knowledge, frequency and types of reading, domain-specific knowledge, and general reasoning (Freed et al., 2017)—in future fact-checking assessments and curricular interventions for college students. Including such measures will help explain the variability in students’ responses to the curriculum and identify students in need of further support.

Notably, while all SIFT instruction took place online and asynchronously to ensure fidelity of implementation, posttest performance still appeared to vary significantly by instructor. It is possible that some instructors engaged students with key concepts from the assignments during their synchronous class meetings to a greater extent than others. Alternatively, as most of the instructors taught only one class, the effect of instructor may reflect differences in the students enrolled (full-time vs. part-time, associate vs. baccalaureate) and other related factors (campus affiliation, day/evening/weekend classes). Instructor effect was also confounded with counterbalancing of problem sets, which may not have been of equivalent difficulty despite our efforts to create balanced sets of problems at pretest and posttest.

The SIFT curriculum appears to have increased students’ self-reported use and endorsement of Wikipedia, as well as the accuracy of their knowledge about Wikipedia quality. These gains suggest that college students, like high school students (McGrew & Byrne, 2020), benefit from direct instruction and repeated practice in using Wikipedia as a starting point for their lateral reading (McGrew et al., 2017). Future iterations of the curriculum should include additional explicit instruction on Wikipedia’s standards and policies for ensuring and maintaining the quality of articles. As in previous studies (e.g., Garrison, 2018), the students in this study reported being discouraged from using Wikipedia by their teachers. Therefore, it is also possible that they underreported their use of Wikipedia at pretest due to social desirability.

Similar to the younger adults surveyed by the Pew Research Center (2020c), most of the students at pretest were not very confident in their ability to fact-check news related to the COVID-19 pandemic. Their confidence in their fact-checking ability for COVID-19-related news improved from pretest to posttest and was positively associated with the perceived helpfulness of the curriculum. However, their confidence at posttest was only weakly associated with the number of problems read laterally and accurately assessed, highlighting the need for performance-based assessment of students’ information verification strategies (Hargittai et al., 2010; List & Alexander, 2018). Overall, these findings are in keeping with the disproportionately large gains in confidence relative to actual ability observed in novices after just a brief period of instruction (Sanchez & Dunning, 2018).

Conclusion

Previous research indicates that college students benefit from direct instruction on how to use lateral reading strategies to fact-check online information. The current study demonstrated that a lateral reading curriculum can be effective when delivered fully online via asynchronous assignments. From pretest to posttest, students made gains in their use of lateral reading and accurate assessment of the trustworthiness of online content and in their knowledge, use, and endorsement of Wikipedia for fact-checking purposes. The lateral reading curriculum increased students’ confidence in their ability to fact-check news about the COVID-19 pandemic. Students’ confidence was positively associated with perceived helpfulness of the online homework assignments for fact-checking COVID-19-related news but only weakly associated with their reported use of lateral reading and correct trust assessments at posttest. Teaching students to read laterally to fact-check online information is a promising first step in helping them discern trustworthy information online amidst the proliferation of false news and misinformation about the COVID-19 pandemic and other issues.

Footnotes

1. Items assessing online news consumption and an analysis comparing responses at pretest and posttest are available in our openICPSR repository (Brodsky, Brooks, Scimeca, Galati et al., 2021).

2. Items assessing the use of online news sources to find information about the COVID-19 pandemic and an analysis comparing responses at pretest and posttest are available in our openICPSR repository (Brodsky, Brooks, Scimeca, Galati et al., 2021).

3. The keyword search terms were “wiki,” “googl,” “snope,” “politifact,” “cnn,” “national geographic,” “revers,” “search,” “researched,” “researching,” “look up,” “looked up,” “looking up,” “look for,” “looked for,” “looking for,” “look into,” “looked into,” “looking into,” “look it up,” “looked it up,” “looking it up,” “investigat,” “original,” “other websites,” “other sites,” “four moves,” “four factors,” “fact check,” “hoax,” “debunk,” and “SIFT.”

4. The full models are available in our openICPSR repository (Brodsky, Brooks, Scimeca, Galati et al., 2021).

5. The full model is available in our openICPSR repository (Brodsky, Brooks, Scimeca, Galati et al., 2021).

6. The full model is available in our openICPSR repository (Brodsky, Brooks, Scimeca, Galati et al., 2021).

Authors

JESSICA E. BRODSKY is a doctoral student at the Graduate Center and the College of Staten Island, City University of New York. Her research interests include assessing and fostering media literacy, algorithm literacy, and fact-checking skills in college students and adolescents.

PATRICIA J. BROOKS is a professor of psychology at the College of Staten Island, City University of New York. Her research interests are in two broad areas: (1) individual differences in language learning and development and (2) effective teaching and learning.

DONNA SCIMECA is the core program coordinator at the College of Staten Island, City University of New York and teaches in the college’s Core 100 Program.

PETER GALATI is the assistant to the core program coordinator at the College of Staten Island, City University of New York and teaches in the college’s Core 100 Program. His research interests include civics and law, and historical understandings of human engagement with sharks.

RALITSA TODOROVA is a lecturer at Lehman College, City University of New York. Her research focuses on adolescent development, primarily in educational settings.

MICHAEL CAULFIELD is the director of Blended and Networked Learning at Washington State University Vancouver. His work focuses on civic digital literacies.