Abstract

The current study investigated the effectiveness of three distinct educational technologies on Grade 7 students’ algebraic knowledge: two game-based applications (From Here to There and DragonBox 12+) and two modes of online problem sets in ASSISTments (an Immediate Feedback condition and an Active Control condition with no immediate feedback). More than 3,600 Grade 7 students across nine in-person schools and one virtual school within the same district were randomly assigned to one of the four conditions. Students received nine 30-minute intervention sessions from September 2020 to March 2021. Hierarchical linear modeling analyses of the final analytic sample (N = 1,850) showed significantly higher posttest scores for students who used From Here to There and DragonBox 12+ compared to the Active Control condition. No significant difference was found between the Immediate Feedback and Active Control conditions. The findings have implications for understanding how game-based applications can affect algebraic understanding, even within pandemic pressures on learning.

Algebra Learning and Educational Technologies

Algebraic principles, such as notation and transformations, can be challenging for students to learn. Many middle and high school students struggle to acquire basic algebraic principles, such as which transformations are mathematically valid (Marquis, 1988; National Math Advisory Panel, 2008) and how to convert between different algebraic representations (Koedinger & Nathan, 2004). These struggles have significant implications for students’ further mathematics achievement because advanced concepts are often presented in algebraic notation.

To improve mathematical understanding and performance among middle school students, researchers and developers have designed educational technology tools to support learning in different ways. Some tools, such as FH2T and DragonBox, are designed to engage students in game-based learning, allowing students to interact with algebraic notations and solve puzzlelike problems in a playful environment. Such game-based approaches have been found to increase students’ engagement and motivation as well as problem-solving and learning (Connolly et al., 2012; Foster, 2008; Gee, 2003; Ke, 2008; Samur & Evans, 2012). Other tools, such as ASSISTments, are designed to provide timely support and feedback on homework, and thus the problems resemble those in traditional mathematics textbooks. Below is an overview of the four conditions, the theories behind the design of the tools, and prior evidence of their efficacy in improving mathematical learning.

From Here to There! (FH2T)

FH2T (https://graspablemath.com/projects/fh2t) is a dynamic research-based game that implements theories of (a) perceptual learning and (b) embodied cognition to address cognitive and affective factors that lead to low proficiency in mathematics. Perceptual learning theory suggests that reasoning and learning about mathematics are inherently perceptual (Goldstone et al., 2017; Jacob & Hochstein, 2008; Kellman et al., 2010; Kirshner & Awtry, 2004; Patsenko & Altmann, 2010), and the visual presentation of notation affects how students reason about, process, understand, and learn mathematics (Braithwaite et al., 2016; Harrison et al., 2020; Landy & Goldstone, 2010). For example, perceptual features, such as spacing and color of algebraic notations, can direct students’ attention to relevant information (e.g., highlighting the equal sign with a different font color in 4 + 7 = 13 − __ to support reasoning of equivalence; Alibali et al., 2018), and, over time, might help students develop an automatic routine for algebraic reasoning (e.g., balancing two sides of the equation when seeing an equal sign). Perceptual features also affect students’ actions, which reflect and further influence their learning. Embodied cognition theories contend that students’ physical experiences in the world influence their cognitive processes, including thinking and reasoning in mathematics (Abrahamson et al., 2020; Alibali & Nathan, 2012; Foglia & Wilson, 2013; Nathan et al., 2014; Shapiro, 2010; Wilson, 2002). Given that the two theories together suggest that learning is based in perception and action (Alibali & Nathan, 2012), environments within FH2T influence how students perceive and interact with instructional materials, which, in turn, is meant to inform their cognitive processes and problem-solving.

In FH2T, algebraic notations are turned into interactive objects that enforce mathematical rules through their physical movements. Students can dynamically manipulate and transform expressions by using various gestures, such as dragging and tapping, to perform operations on the screen. The system provides a fluid visualization that allows students to see how their actions transform algebraic expressions and equations. In each problem in FH2T, students are presented with two expressions: a starting expression, which is an active and transformable expression, and a target goal state, which is mathematically equivalent but perceptually different to the starting expression. Students transform the starting expression (e.g., “9b + 27c”) into the target goal state (e.g., “(3b + 9c) ∙ 3”) by using algebraically permissible actions and learned gestures. For example, students can drag 9 on top of 27 to factor 27 into 9 ∙ 3, and the system transforms the starting expression into “9b + 9 ∙ 3c.” As students encounter, discover, and practice mathematical principles in action, fluid visualizations may help direct students’ attention toward structural patterns in algebraic notation and develop their perceptual-motor routines of algebraic equation-solving (Nathan et al., 2016; Nathan & Walkington, 2017). (See online supplementary material, Appendix A, for more information.)

The effectiveness of FH2T has been examined across several studies over the past decade. For instance, a preliminary study with 130 middle school students showed that, after four 30-minute intervention sessions, students in the fluid visualizations condition, which is an early version of FH2T, improved on their procedural fluency, whereas students in the manual calculations condition did not (Ottmar et al., 2015). Similarly, a recent randomized controlled trial (RCT) with 475 middle school students showed that, after four 30-minute intervention sessions, students in the FH2T condition scored higher on mathematical equivalence compared to their peers in an online problem set condition (Chan et al., 2022). A study with an elementary version of FH2T further showed that, among 185 Grade 2 students, completing more problems in FH2T was associated with higher posttest scores (testing decomposition, operational strategies, and basic notation), and this effect was significant above and beyond students’ prior knowledge, as measured by the pretest (Hulse et al., 2019). Together, these findings suggest that FH2T may be effective at improving students’ algebraic understanding and mathematics performance, warranting a large-scale RCT to evaluate its effectiveness compared to other educational technologies.

DragonBox 12+ (DragonBox)

DragonBox (https://dragonbox.com/products/algebra-12) is an educational game that introduces advanced algebraic concepts to students ages 12–17. For each problem, students are asked to isolate a box containing a dragon—equivalent to solving an equation for x. The popularity of DragonBox is reflected in the public realm, where it is lauded as one of the best educational games for teaching algebra (Kahoot!, 2019). Its design has also been praised for using several research-backed design principles, including discovery-based learning, embedded gestures, multiple representations, immediate feedback, and adaptive difficulty (Cayton-Hodges et al., 2015; Torres et al., 2016). One of DragonBox’s major design principles is that students should not perceive DragonBox as a math game. Thus, at the start of the game, there are no numbers or variables; instead, students move cards with pictures of monsters, following the rules of algebra. Throughout the game, monster cards are gradually replaced by algebraic symbols; however, cards are never explicitly connected to mathematical properties.

Despite the popularity of DragonBox, research findings on its efficacy are mixed. In terms of supportive evidence, the DragonBox developers and the University of Washington Center for Game Science conducted an Algebra Challenge with K–12 students from 70 schools across 15 districts. They found that after 1.5 hours of playing a combined version of DragonBox 5+ and 12+, 93% of students were able to successfully answer three algebra problems (e.g., d ∙ x + m = 8) in a row (Liu et al., 2015). Similarly, Grade 8 students have shown significant gains in algebra problem-solving performance after using DragonBox for 8 hours across 4 weeks (Dolonen & Kluge, 2015), and its positive impacts may extend to students’ confidence and attitudes toward mathematics (Siew et al., 2016). However, Long and Aleven (2014, 2017) found that students in Grades 7–8 who played DragonBox for 3.5 hours across five sessions showed no improvements in problem-solving performance or confidence, even on problems included in the game. Further, despite the students’ higher enjoyment with DragonBox, their learning gains were significantly lower than those of students using an intelligent tutoring system. Dolonen and Kluge (2015) also found that Grade 8 students using DragonBox showed higher engagement but lower learning gains compared to students who practiced problems similar to those found in standard algebra textbooks.

Contrasting DragonBox and FH2T

It is appropriate to compare DragonBox to FH2T because both use a game-based interface and are grounded in intuitive perceptual-motor routines, but they also vary in important ways. First, whereas DragonBox progressively transitions from pictures of monsters to abstract algebraic notation as the game proceeds, FH2T aims to promote fluency in algebraic notation by grounding perceptual-motor routines directly in notation. Second, all of DragonBox’s problems involve the same goal (i.e., isolate the dragon to solve for x); in contrast, each problem in FH2T has a unique goal state, encouraging flexibility in notational transformations. Third, DragonBox’s operations are introduced as in-game rules independent from mathematics (e.g., positive- and negative-color versions of the same picture can be combined to cancel them), whereas FH2T directly connects actions and operations to mathematical principles. These similarities and differences allowed the study team to begin exploring relative advantages and disadvantages of hiding mathematical materials, having flexible goals, and explicitly grounding actions in mathematical rationales.

Problem Sets With Immediate Feedback and Hints in ASSISTments (Immediate Feedback)

ASSISTments (https://new.assistments.org/; Heffernan & Heffernan, 2014) is an online homework system that provides feedback to students as they solve traditional textbook problems. The problem sets in ASSISTments are adapted from open-source curricula, thus resembling problems students would encounter in their textbooks and homework assignments. In ASSISTments, students are presented with problems one at a time on their screen; they can request hints if they need additional support on problem-solving and receive immediate correctness feedback on their responses. Teachers receive class reports on problem sets and students’ performance so they can identify struggling students and challenging problems, and then they use these data to inform instruction in their classroom. (See online supplementary material, Appendix A, for more information.)

The design of ASSISTments is informed by research on formative assessment and timely feedback. Teachers use formative assessments to gather data from students and guide their instruction to meet students’ needs (Black & Wiliam, 1998; Boston, 2002). When teachers adjust instruction based on formative assessments, students show significant improvement on their achievement (Bergan et al., 1991; Speece et al., 2003). Further, timely feedback and support during learning are beneficial to students (Butler & Woodward, 2018; Shute, 2008). Studies suggest that receiving feedback immediately after giving responses or completing problem sets might be effective for improving students’ procedural and conceptual knowledge (Azevedo & Bernard, 1995; Corbett & Anderson, 2001; Dihoff et al., 2003; Phye & Andre, 1989).

ASSISTments has had positive impacts on student learning. A preliminary study with 28 Grade 5 students found that they learned significantly more when provided with immediate feedback and on-demand hints in ASSISTments compared to completing traditional paper-and-pencil homework (Mendicino et al., 2009). Similarly, a study with 63 Grade 7 students conducted within ASSISTments showed that students who received immediate correctness feedback and opportunities to reattempt problems outperformed students who completed problems without feedback or reattempts (Kelly et al., 2013). A large-scale RCT with 2,850 students in Grade 7 further showed that combining ASSISTments with teacher training on adaptive teaching significantly improved students’ end-of-year performance on standardized mathematics scores compared to those of a business-as-usual control group (Roschelle et al., 2016). Together, these findings suggest that offering online problem sets with hints and immediate feedback plus teacher training is effective at improving students’ mathematics performance. In the current study, problem sets with immediate hints and feedback in ASSISTments were used as a high-quality, nongamified intervention treatment. Unlike the work of Roschelle et al. (2016), this study did not provide teacher training on adaptive instruction because FH2T and DragonBox only involve student components and do not include teacher training. Therefore, the current study provides insights into independent effects of the student components without teacher components in ASSISTments.

Active Control Problem Sets With Post-Assignment Feedback (Active Control)

The fourth condition was a version of ASSISTments that provides post-assignment, rather than immediate, hints and feedback. In this condition, problem sets were administered in “test mode,” so students did not receive any feedback or hints during problem-solving. Instead, students received a report with correctness feedback at the end of the problem set, and they could review their responses, revisit problems, and request hints. This condition served as the active control in the study, as it mimicked traditional homework assignments and allowed all students involved in the study to participate via technology.

The Current Study

The goal of the current study was to independently examine the efficacy of three widely used treatment conditions (i.e., FH2T, DragonBox, and ASSISTments with immediate feedback) on students’ algebraic understanding compared to the Active Control. The study was conducted between September 2020 and April 2021, which was during the peak of the COVID-19 pandemic in the United States. Given the restrictions of physical distancing, the school district offered students and their families a choice of classroom format (100% in person or 100% asynchronous virtual academy) for the 2020–2021 school year prior to the start of the fall semester. Random assignment to study condition occurred across formats, so the classroom format was not aligned to any one study condition. Although their choice was intended to be for the full school year, families were allowed to change their selection throughout the year. Regardless of students’ classroom format, all study sessions (i.e., assessments and interventions) were administered online, and students worked individually at their own pace, using a device.

Methods

Participants

A total of 52 Grade 7 mathematics teachers and their students from 11 middle schools (10 in-person schools and one virtual academy) were recruited from a large, suburban district in the southeastern United States in the summer of 2020. Together, teachers taught 190 mathematics classes and 4,092 students. Prior to random assignment, one school declined to participate. This school included four teachers and 377 students. Students enrolled in resource settings were not included in this study. This resulted in 10 schools (nine in person and one virtual), 37 teachers, 156 classes, and 3,612 students. The study sample was 49.1% White, 14.1% Hispanic, and 27.9% Asian. Additionally, 42.5% began the school year in the Virtual Academy. Student-level socioeconomic data (e.g., free or reduced-price lunch status) were not available. (All baseline and posttest sample sizes are presented in Figure 1.)

Figure 1. CONSORT diagram representing attrition.

Design

This study randomly assigned students to study conditions within classrooms, ensuring that study conditions were equivalent with respect to teacher characteristics and classroom curricula. This approach was possible because teachers were able to use all four technology-based interventions within the classroom. Students were ranked within classrooms based on their prior state mathematics assessment scores, blocked into sets of five (i.e., quintets), and then randomly assigned to the FH2T (40%), DragonBox (20%), Immediate Feedback (20%), or Active Control (20%) condition. Because a primary goal of the RCT was to test the efficacy of FH2T compared to other educational technologies, a larger proportion of students was assigned to FH2T. When classroom size did not allow for a quintet to be formed (e.g., 18 students, resulting in three excess students not in a complete quintet), remaining students in each classroom were placed in quintets drawn from all excess Grade 7 students within each school. Cross-school quintets were formed, as needed, to complete overall random assignment.
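The blocking procedure described above can be illustrated with a minimal sketch. It assumes that each quintet receives a random permutation of two FH2T slots and one slot for each other condition, which is one way to obtain the stated 40/20/20/20 split; the source does not describe the exact mechanism. The function name, student identifiers, scores, and seed are all hypothetical, and the pooling of leftover students into school-level or cross-school quintets is not implemented here.

```python
import random
from collections import Counter

# Two FH2T slots per quintet give FH2T 40% of students (an assumption
# about the mechanism; the source only reports the target proportions).
QUINTET_SLOTS = ["FH2T", "FH2T", "DragonBox", "Immediate Feedback", "Active Control"]


def assign_within_classroom(prior_scores, rng):
    """Rank students by prior score, block into quintets, randomize within each.

    prior_scores: dict mapping a (hypothetical) student id to a prior score.
    Returns (assignments, leftovers); leftovers are the students who do not
    fill a complete quintet and would be pooled at the school level.
    """
    ranked = sorted(prior_scores, key=prior_scores.get, reverse=True)
    n_complete = (len(ranked) // 5) * 5
    assignments = {}
    for start in range(0, n_complete, 5):
        block = ranked[start:start + 5]
        slots = QUINTET_SLOTS[:]          # fresh copy for every quintet
        rng.shuffle(slots)                # random order within the block
        assignments.update(zip(block, slots))
    return assignments, ranked[n_complete:]


rng = random.Random(2020)  # fixed seed only so the sketch is reproducible
classroom = {f"s{i:02d}": rng.randint(400, 600) for i in range(18)}
assignments, leftovers = assign_within_classroom(classroom, rng)
counts = Counter(assignments.values())
print(counts, leftovers)
```

With 18 students, as in the example in the text, three complete quintets are formed and three students remain to be pooled with other excess students.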

Attrition Analysis

One school dropped out following pretest assessment, resulting in a final pool of nine schools (eight in person and one virtual), 34 teachers, 143 classes, and 3,271 students participating at the start of the interventions. From this pool of 3,271 students, 1,850 had pretest and posttest assessments and constituted the analytic sample for this study. These students were enrolled in 127 classes across 34 teachers. Relative to the 3,612 students who were randomly assigned, the overall attrition rate was 48.8%, which should be read in the larger context of the COVID-19 pandemic.
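As a check on the arithmetic, the reported 48.8% is consistent with attrition computed against the 3,612 randomized students rather than the 3,271 students who began the interventions. The choice of denominator is an inference from the reported counts, not something the text states explicitly.

```latex
\[
\text{attrition}_{\text{randomized}}
  = \frac{3{,}612 - 1{,}850}{3{,}612}
  = \frac{1{,}762}{3{,}612} \approx 0.488,
\qquad
\text{attrition}_{\text{intervention start}}
  = \frac{3{,}271 - 1{,}850}{3{,}271}
  = \frac{1{,}421}{3{,}271} \approx 0.434 .
\]
```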

Our approach to causal inference under attrition, following the guidelines of What Works Clearinghouse (WWC; 2020) and others, was to estimate treatment effects for the subset of students in our analysis sample with complete pretest and posttest data. Under this approach, missing data methods, such as full-information maximum likelihood or multiple imputation, are not necessary because no claims are made about larger populations that include potential attritors and non-attritors.

The threat of attrition bias was assessed in two ways: first, by comparing attrition rates across conditions, and second, by checking whether non-attriting students assigned to different conditions were equivalent at baseline. As to the first strategy, Figure 1 shows attrition for all key study contrasts. Importantly, no contrast exceeds attrition percentage thresholds established by WWC. In the vernacular of WWC, this study can be characterized as a low-attrition RCT, and all key contrasts are eligible to “Meet WWC Group Design Standards Without Reservations” (WWC, 2020, p. 5). This characterization assumes that WWC would use the “Optimistic” attrition threshold, which entails assuming that sample loss is exogenous (i.e., unrelated) to intervention conditions in the study.

Anecdotal evidence and exploratory data analysis (online supplementary material, Appendix B) suggest that the relatively high attrition rate for DragonBox was largely driven by students in the Virtual Academy, due to difficulty installing the extra required software. In any event, differential attrition was not statistically significant (χ2(3, N = 1,849) = 3.978, p = .264), and differential as well as overall attrition rates for active control comparisons fell within tolerable threats of bias under optimistic assumptions (WWC, 2020).

Out of an abundance of caution, baseline equivalence of the analysis sample was examined by using student demographics and pretest scores reported in Table 1. Differences in covariate means for Active Control comparisons ranged in magnitude from below 0.001 to roughly 0.22 standard deviations. Therefore, the study met the WWC (2020) baseline equivalence requirement after statistical adjustments for covariates were made in Models 2–4, below. Baseline equivalence for Active Control comparisons was tested by using the methods of Hansen and Bowers (2008); differences were significant for the DragonBox comparison (χ2(8) = 15.6, p = .049), but not for the other two comparisons (Immediate Feedback: χ2(8) = 6.7, p = .569; FH2T: χ2(8) = 11.6, p = .17). There was evidence of higher pretest scores in each of the experimental conditions compared to the Active Control (Immediate Feedback: Z = 2.13, p = .033; DragonBox: Z = 3.05, p = .002; FH2T: Z = 2.13, p = .033), suggesting that the association between pretest and attrition was weaker in the Active Control and increasing the importance of pretest adjustments in Models 2–4, below.

Table 1. Student Demographic Information by Condition and Pretest Scores (N = 1,850)

Note. EIP = Early Intervention Program; IEP = Individualized Education Program; SD = standard deviation.

Procedure

This research was approved by the Institutional Review Board at a university in the northeastern United States. This research involved typical educational practices and thus did not require parental consent. Instead, parents were informed about the research and the data collected from the educational technologies through a letter and could opt their child out of this study.

Students received nine 30-minute intervention sessions across the school year, with a 2-week window to complete each session. Four intervention sessions were administered in the fall semester and five sessions in the spring semester. Before and after the intervention, students received a 40- to 45-minute assessment on algebraic knowledge, mathematics anxiety, and self-efficacy. All interventions and assessments were administered online, and students worked individually at their own pace, using a device. For students receiving instruction in person, teachers dedicated instructional periods for the study assignments in mathematics classrooms. For students receiving virtual instruction, teachers included the study assignments as a part of students’ learning activities.

The mathematical content (arithmetic and algebraic equation solving) was aligned between the four conditions, and all students solved algebraic problems during their intervention sessions by using their assigned technology (including the Active Control condition). Within each condition, a countdown timer was embedded in the system to help ensure that students used each technology for a similar amount of time. Students could pause and resume the timer, so they could stop for breaks and continue when they were ready. A timer was not embedded in the assessments (i.e., pre- and posttest), so students could take as long as needed to complete the assessments.

In addition to serving as an intervention technology (i.e., in the Immediate Feedback and Active Control conditions), ASSISTments was the platform for the RCT: assessments were administered, assigned intervention conditions were maintained within students over time, and fidelity data were recorded for all conditions within it (see details in Chan et al., 2022). All students, regardless of their intervention condition, logged in to their ASSISTments account and opened the same assignment link at the beginning of each study session. The assignment link then directed students to their technology intervention, and students spent 30 minutes with their assigned technology.

Measures

Algebraic Knowledge Assessment

The algebraic knowledge assessment consisted of 10 multiple-choice items from a previously validated measure of algebraic understanding (Star et al., 2015; Cronbach’s α = .89; see the 10 items on

Assessments were administered within the ASSISTments platform, in which students saw questions one at a time and selected a response option by using a mouse. No feedback was given to students about the correctness of their response. The pretest assessment was administered in September 2020, approximately 1 week prior to the intervention sessions. The posttest assessment was administered between the end of March and the beginning of April 2021, approximately 2 weeks following completion of the intervention.

Intervention Condition

Intervention condition was a four-level dummy-coded categorical variable indicating participation in FH2T, DragonBox, and the Immediate Feedback condition. The Active Control condition served as the referent group.
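A minimal sketch of this coding scheme follows, assuming the usual convention that a four-level factor with one referent group yields three 0/1 indicators. The column names (`is_FH2T`, and so on) and the helper function are hypothetical and are not taken from the study's analysis code.

```python
CONDITIONS = ["Active Control", "FH2T", "DragonBox", "Immediate Feedback"]
REFERENT = "Active Control"  # referent group named in the text


def dummy_code(condition):
    """Return three 0/1 indicators; the referent group is all zeros."""
    if condition not in CONDITIONS:
        raise ValueError(f"unknown condition: {condition!r}")
    return {
        f"is_{name.replace(' ', '_')}": int(condition == name)
        for name in CONDITIONS
        if name != REFERENT
    }


coded_control = dummy_code("Active Control")
coded_fh2t = dummy_code("FH2T")
print(coded_control)
print(coded_fh2t)
```

Under this coding, each indicator's coefficient in a regression model is interpreted as the difference between that condition and the Active Control condition.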

Race/Ethnicity

Students’ race/ethnicity was a three-level dummy-coded categorical variable indicating whether a student identified as non-Hispanic White, Asian, or some other ethnic/racial group. Non-Hispanic White was the referent group in the analyses.

Students’ Biological Sex

Students’ biological sex was a dummy-coded variable indicating whether a student identified as being male. Female was the reference group (0 = female, 1 = male).

Gifted Status

Students’ identification as gifted was a dummy-coded variable in the analysis (0 = not identified as gifted, 1 = identified as gifted).

Accelerated Mathematics

All students in our study were placed in one of two levels of mathematics classrooms by the district: accelerated, in which the teachers implemented more challenging course materials, or regular mathematics classes. Student enrollment in an accelerated mathematics class was a dummy-coded variable (0 = not in an accelerated mathematics class, 1 = enrolled in an accelerated mathematics class).

Early Intervention Program Status

Student participation in an early intervention program (EIP) in elementary school when identified as needing extra support was a dummy-coded variable (0 = not enrolled in EIP, 1 = enrolled in EIP).

Individualized Education Program Status

Students’ individualized education program (IEP) status was a dummy-coded variable (0 = did not receive an IEP, 1 = received an IEP).

Classroom Format

Students’ enrollment in an in-person versus virtual classroom was a dummy-coded variable (0 = in-person classroom, 1 = virtual classroom).

Completed Assignments

The total number of the nine intervention sessions a student completed was a continuous variable.

Intervention Conditions

In all four conditions, students accessed their learning technology through the ASSISTments platform. Students logged in to the platform with a username and a password and then clicked the assignment link that directed them to their assigned technology.

FH2T

FH2T consists of 14 worlds that focus on different mathematical concepts, and each world contains 18 problems (a total of 252 problems). In this study, all students in the FH2T condition started at World 1: Addition and worked their way through the remaining worlds: World 2: Multiplication, World 3: Order of Operations + and ×, World 4: Subtraction and Negative Numbers, World 5: Mixed Practice of + and −, World 6: Division, World 7: Order of Operations, World 8: Equation + and −, World 9: Inverse Operations + and −, World 10: Distribution, World 11: Factoring, World 12: Equation +, −, ×, and ÷, World 13: Inverse Operations, and World 14: Final Review. All students were given 30 minutes to play FH2T for each session, after which the system would log students out of the game. The system also saved students’ progress, so students could start where they left off in each subsequent session.

DragonBox

DragonBox has 10 chapters, and each chapter contains 20 problems (a total of 200 problems). The problems together tap the Grade 7 mathematics standards in Common Core (Common Core State Standards for Mathematics, 2010) and cover the following content: addition, multiplication, division, parentheses, negative signs, fraction addition, combining like terms, variables, factoring, and substitution. Similar to FH2T, DragonBox students worked through problems at their own pace and started from where they left off in each subsequent session.

Because DragonBox is a phone- or tablet-based application that cannot be opened on a web browser, the study team provided tablets on which students played DragonBox in in-person classrooms. For students in virtual classrooms, the research team provided instructions and licenses for students to download the game on their own device. Regardless of the classroom format, students would log in to ASSISTments on their laptop or Chromebook at the beginning of each session, follow the instructions to start the timer for the session, and then play DragonBox on a tablet or personal device.

Because DragonBox is a commercial application, access to students’ progress and action-level data within the game was not possible. Therefore, at the end of each intervention session, students self-reported their progress—the chapter and problem at which they stopped that day—in ASSISTments. In case students did not report their progress at the end of the session, they were also asked to report their previous progress at the beginning of each intervention session before they started playing (after the first intervention session). Given that students in the DragonBox condition had to report their progress before and after gameplay, the timer for playing DragonBox was set to 25 minutes. This way, students could complete the sessions in 30 minutes without disrupting teachers’ lesson plans and instruction.

Immediate Feedback

Students in the Immediate Feedback condition solved traditional mathematics problems in ASSISTments (Heffernan & Heffernan, 2014). To ensure that the problems aligned with traditional instruction, the study team selected and adapted problems from three popular open-source middle-school mathematics curricula—Engage NY (2014), Utah Math Project (2016), and Illustrative Mathematics (2017)—that already existed in ASSISTments. The topics covered across the nine intervention sessions were (a) addition and multiplication, (b) subtraction and negative numbers, (c) division and fractions, (d) order of operations, (e) addition and subtraction in equation-solving, (f) distribution, (g) factoring, (h) multiplication and division in equation-solving, and (i) review.

For each intervention session, students received a problem set consisting of 35–45 problems. They started on the first problem of a problem set at the beginning of each session and worked through the problems in the same order. After 25 minutes, or after students had completed the problem set, the system directed students to their session report, which showed their performance on each problem. Students were directed to review their session report, revisit problems, and review the hints and correct answers within the 30-minute time frame.

Active Control

In the Active Control condition, the mathematical problems and the study procedure were identical to the Immediate Feedback condition. The only difference between the two conditions was that the hints and correctness feedback were available during problem-solving in the Immediate Feedback condition, whereas the hints and correctness feedback were available only after problem-solving (i.e., after 25 minutes or completing the problem set) in the Active Control condition. At this point, students could revisit any question, review their session report, and review the hints and correct answers within the 30-minute time frame.

Analytic Approach

Intervention effects were tested through a series of three-level hierarchical linear models, with students (N = 1,850), nested within classrooms (n = 127), nested within teachers (n = 34). Classroom format (in person versus virtual) was a Level 2 predictor, and all other predictors were Level 1 variables. The number of assignments completed and pretest scores were centered on their respective grand means. All other predictors were dummy-coded. The outcome measure was student performance on the posttest assessment. The primary analyses were registered on the Open Science Framework (
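The predictor coding described above can be illustrated with a minimal sketch. It assumes the Active Control condition serves as the reference category for the condition dummies, which is consistent with the comparisons reported but is not stated explicitly here. The function names and the example scores are hypothetical.

```python
from statistics import mean

CONDITIONS = ["Active Control", "FH2T", "DragonBox", "Immediate Feedback"]


def grand_mean_center(values):
    """Subtract the overall (grand) mean from every value."""
    grand_mean = mean(values)
    return [v - grand_mean for v in values]


def dummy_code(condition, reference="Active Control"):
    """Return 0/1 indicators for each non-reference condition.

    Assumption: Active Control is the reference category.
    """
    return {c: int(condition == c) for c in CONDITIONS if c != reference}


# Made-up pretest scores, for illustration only.
pretest = [3.0, 5.0, 7.0]
centered = grand_mean_center(pretest)
dummies = dummy_code("DragonBox")
```

With centered covariates, the model intercept corresponds to a student at the grand mean of pretest score and assignment completion in the reference condition.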

Results

Descriptive Statistics

Means and standard deviations for all variables were previously reported in Table 1. A correlation matrix is included in the online supplementary material to this article (Appendix C, Table C1); however, three sets of results within the correlation matrix are worth mentioning. As noted previously, intervention condition was related to pretest scores (χ²(3, N = 1,850) = 13.303, p = .004). In addition, condition was related to the number of assignments a student completed (χ²(3, N = 1,850) = 28.657, p < .001), with students in the DragonBox condition completing fewer assignments (M = 5.526, SD = 3.382) than those in the FH2T (M = 6.603, SD = 2.857), Immediate Feedback (M = 6.811, SD = 2.679), and Active Control conditions (M = 6.858, SD = 2.770). Intervention condition was unrelated to all other predictor variables, including child race/ethnicity (χ²(6, N = 1,850) = 11.316, p = .079), sex (χ²(3, N = 1,850) = 2.322, p = .508), gifted status (χ²(3, N = 1,850) = 4.408, p = .221), accelerated program (χ²(3, N = 1,850) = 0.248, p = .969), EIP status (χ²(3, N = 1,850) = 0.881, p = .830), IEP status (χ²(3, N = 1,850) = 6.462, p = .091), and enrollment in a virtual classroom (χ²(3, N = 1,850) = 2.540, p = .468).

Second, regarding the composition of virtual versus in-person classrooms, 67.3% of students in in-person classrooms were White, with 6.8% Asian and 25.9% identified with some other racial/ethnic group. In contrast, 61.7% of students enrolled in a virtual classroom were Asian, with 21.7% White and 16.7% identified with some other racial/ethnic group. Reflecting this pattern, enrollment in a virtual classroom was also related to most other predictors (see Table 2). Specifically, virtual classrooms had more gifted and accelerated students, but fewer males and fewer students enrolled in EIP. In addition, students in virtual classrooms completed more assignments (M = 7.321, SD = 2.766) than those in in-person classrooms (M = 6.090, SD = 2.952, t(92) = 2.489, p = .015) and had higher pretest scores (virtual: M = 6.729, SD = 2.622, and in-person: M = 3.924, SD = 2.172, respectively, t(92) = 6.180, p < .001).

Table 2. Percentage of Students Within Each Classroom Format Who Were Male, Gifted, in an Accelerated Program, Enrolled in EIP, or Had an IEP

Note. *** p < .001, * p < .05.

Primary Analyses

An initial model tested intervention effects with no other covariates. This summarized the overall intervention effects, controlling only for the inherent nested design. As shown in the first panel (i.e., Model 1) in Table 3, a significant intervention effect was observed (χ²(3, N = 1,850) = 17.325, p = 0.001). All three intervention conditions had higher performance on the posttest than did the Active Control condition, with the largest effect seen for DragonBox (γ = .641, Hedges’ g = .240), followed by FH2T (γ = .370, Hedges’ g = .138) and the Immediate Feedback condition (γ = .325, Hedges’ g = .122).
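For readers unfamiliar with Hedges’ g, the following is a minimal sketch of the textbook two-group formula with the small-sample correction. It is not necessarily how the effect sizes above were derived from the HLM coefficients, which the text does not specify. The function name and the input values are hypothetical.

```python
import math


def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Standardized mean difference with Hedges' small-sample correction."""
    # Pooled variance across the treatment and comparison groups.
    pooled_var = ((n_t - 1) * sd_t**2 + (n_c - 1) * sd_c**2) / (n_t + n_c - 2)
    d = (mean_t - mean_c) / math.sqrt(pooled_var)
    # Approximate correction factor J = 1 - 3 / (4 * df - 1), df = n_t + n_c - 2.
    correction = 1 - 3 / (4 * (n_t + n_c) - 9)
    return correction * d


# Made-up summary statistics, for illustration only.
example_g = hedges_g(10.0, 2.0, 10, 8.0, 2.0, 10)
```

The correction shrinks the estimate slightly in small samples and becomes negligible at sample sizes like those in the current study.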

Table 3. Summary of HLM Models Predicting Posttest Scores (N = 1,850)

Note. All three coefficients also remained significant at p < 0.05 after Holm correction (Holm, 1979).

Model 1: Condition: χ²(3, N = 1,850) = 17.325, p = 0.001

Model 2: Condition: χ²(3, N = 1,850) = 12.226, p = 0.007; Race Effect: χ²(3, N = 1,850) = 38.567, p < 0.001

Model 3: Condition: χ²(3, N = 1,850) = 20.863, p < 0.001; Race Effect: χ²(3, N = 1,850) = 19.962, p < 0.001

Model 4: Condition: χ²(3, N = 1,850) = 22.911, p < 0.001; Race Effect: χ²(3, N = 1,850) = 20.769, p < 0.001; Cond × Pretest: χ²(3, N = 1,850) = 11.192, p = 0.011

Accelerated = Student in an accelerated mathematics program; Assign = Number of assignments completed; Dragon = DragonBox; Dragon × Pre = Dragon × Pretest; EIP = Enrolled in Early Intervention Program; FH2T = From Here to There; FH2T × Pre = FH2T × Pretest; Gifted = Identified as gifted; IEP = Has an Individual Education Program; Immediate = Immediate Feedback; Immediate Feedback × Pre = Immediate Feedback × Pretest; Other race = Race/ethnicity other than Asian or White/non-Hispanic; Pretest = Pretest score (centered); Virtual = Enrolled in a virtual class.
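The Holm (1979) correction referenced in the table note is a step-down procedure for multiple comparisons. A minimal sketch follows; the function name and the example p-values are hypothetical and are not taken from the study.

```python
def holm_adjust(p_values):
    """Return Holm step-down adjusted p-values in the original order."""
    m = len(p_values)
    # Indices of the p-values from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        # The smallest p-value is multiplied by m, the next by m - 1, and so on.
        candidate = min(1.0, (m - rank) * p_values[idx])
        # Enforce monotonicity so adjusted values never decrease with rank.
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted


# Made-up p-values, for illustration only.
example = holm_adjust([0.01, 0.04, 0.03])
```

A coefficient remains significant at the .05 level after correction when its adjusted p-value is below .05.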

Model 2 controlled for various student demographic and academic covariates existing prior to the randomization. All were significantly related to posttest performance, with the exception of IEP status. Intervention condition continued to be statistically significant (χ²(3, N = 1,850) = 12.226, p = 0.007), with students in the FH2T (γ = .338) and DragonBox (γ = .555) conditions scoring significantly higher than those in the Active Control condition. To produce Model 3, two predictors were added: an indicator for enrollment in a virtual (versus in-person) classroom and a post-randomization variable, the number of assignments completed by the student (a marker of dosage). Both were statistically significant predictors of the posttest score, with higher posttest scores observed for students in virtual classrooms (γ = .415) and those with a higher number of completed assignments (γ = .120). In this model, coefficients on intervention conditions were estimates of “natural direct effects” (VanderWeele, 2015, p. 22), that is, the effects of the intervention with the number of completed assignments held fixed (assuming no unadjusted confounding between the number of completed assignments and posttest scores). Direct intervention effects were statistically significant (χ²(3, N = 1,850) = 20.863, p < 0.001), with students in FH2T (γ = .361, Hedges’ g = .135) and DragonBox conditions (γ = .719, Hedges’ g = .269) significantly outperforming students in the Active Control condition.

As our approach to attrition was conservative (excluding students without pretest data), we also fit the models including students with posttests but with missing pretests. These analyses were conducted by imputing the global mean for missing pretest scores, with an indicator variable for missing pretest scores. This approach yielded results similar to those presented here.
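The imputation strategy described above, mean imputation paired with a missing-data indicator, can be sketched as follows. This is a minimal illustration under the assumption that the global mean is taken over observed pretest scores; the function name and data are hypothetical.

```python
from statistics import mean


def impute_with_indicator(scores):
    """Fill missing scores with the observed mean and flag which were missing."""
    observed = [s for s in scores if s is not None]
    fill = mean(observed)  # assumption: global mean of the observed scores
    imputed = [fill if s is None else s for s in scores]
    missing = [1 if s is None else 0 for s in scores]
    return imputed, missing


# Made-up pretest scores; None marks a missing pretest.
imputed, missing = impute_with_indicator([2.0, None, 4.0])
```

Entering both the imputed score and the indicator as predictors lets the model estimate a separate intercept shift for students whose pretest was missing.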

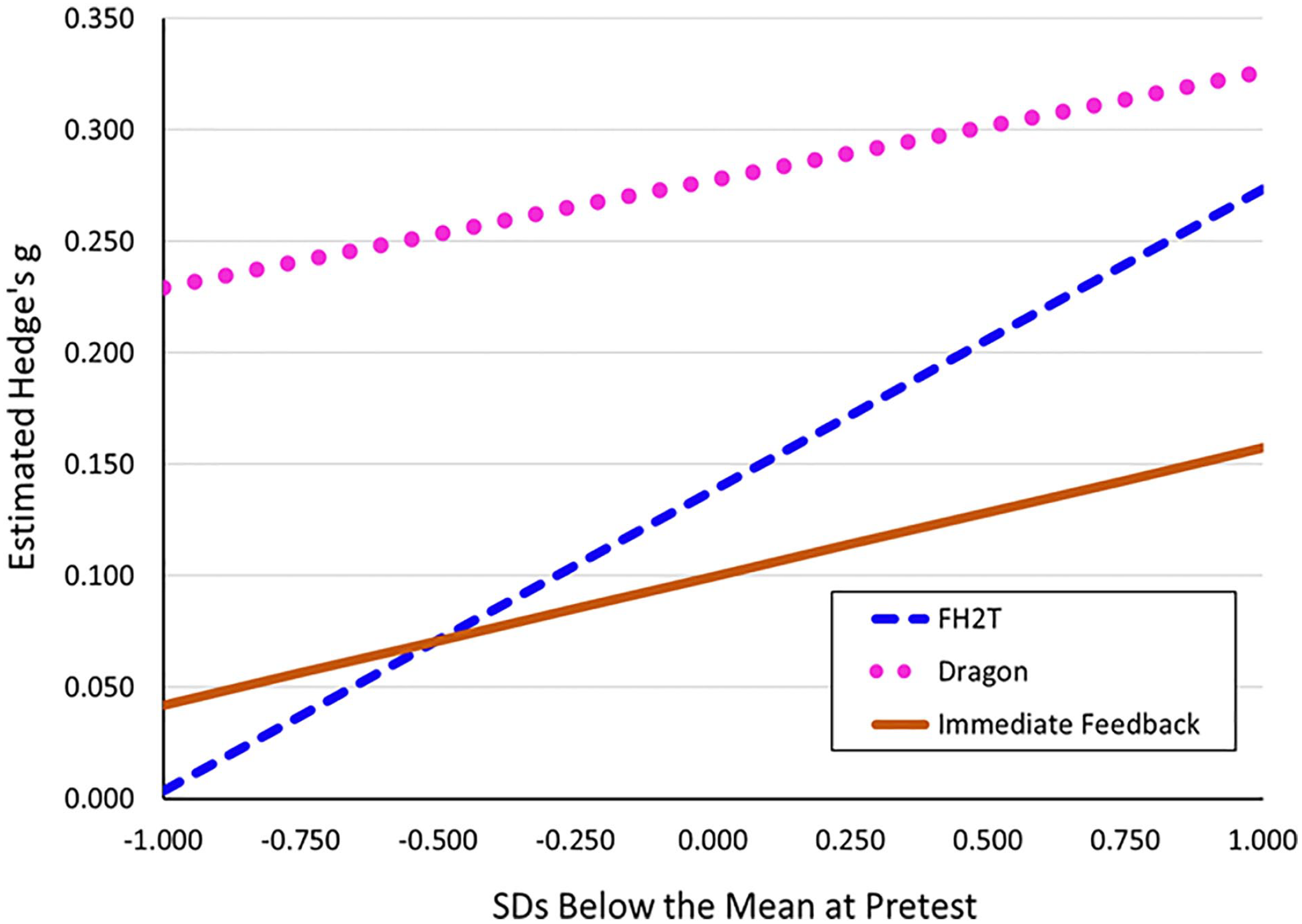

Follow-Up Interaction Analyses

A series of follow-up analyses tested for interactions between intervention condition and the various predictor variables. As reported in Model 4 (see Table 3), a significant interaction was observed between intervention condition and pretest scores (χ²(3, N = 1,850) = 11.192, p = 0.011). This reflected larger FH2T effects among students with higher versus lower pretest performance (see Figure 2). No other interactions with intervention condition were found to be statistically significant: Race/Ethnicity: Asian: χ²(6, N = 1,850) = 5.212, p = 0.517; Male: χ²(3, N = 1,850) = 1.193, p = 0.755; Gifted: χ²(3, N = 1,850) = 2.785, p = 0.426; Accelerated program: χ²(3, N = 1,850) = 1.799, p = 0.615; EIP status: χ²(3, N = 1,850) = 0.196, p = 0.978; IEP status: χ²(3, N = 1,850) = 1.204, p = 0.752; Virtual classroom: χ²(3, N = 1,850) = 3.870, p = 0.276; Number of assignments completed: χ²(3, N = 1,850) = 1.757, p = 0.624.
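To make the meaning of a condition-by-pretest interaction concrete, the sketch below computes a condition effect at a given pretest score from a main-effect coefficient and an interaction coefficient. The coefficient values, grand mean, and function name are hypothetical and are not the estimates from Table 3.

```python
def conditional_effect(gamma_condition, gamma_interaction, pretest, pretest_grand_mean):
    """Condition effect at a given pretest score in a model with a
    condition x centered-pretest interaction term."""
    centered = pretest - pretest_grand_mean
    return gamma_condition + gamma_interaction * centered


# Hypothetical coefficients for illustration only; not values from Table 3.
low = conditional_effect(0.4, 0.1, 2.0, 4.0)   # two points below the grand mean
high = conditional_effect(0.4, 0.1, 6.0, 4.0)  # two points above the grand mean
```

With a positive interaction coefficient, the estimated condition effect grows as pretest scores increase, which is the pattern described for FH2T.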

Figure 2. Interaction between pretest performance and intervention condition.

Discussion

In summary, after a 4.5-hour intervention, students in FH2T and DragonBox conditions were found to have significantly higher posttest scores, reflecting students’ higher algebraic understanding, compared to students in the Active Control condition. These effects remained after controlling for students’ prior knowledge and demographic variables. Although students in the Immediate Feedback condition also displayed significantly higher posttest scores compared to students in the Active Control condition, this effect was no longer significant after controlling for covariates. Together, the findings suggest that FH2T and DragonBox are effective digital games to support Grade 7 students’ algebraic understanding compared to an active control condition using online problem sets with post-assignment feedback. Below, findings are discussed in detail.

Learning With Games: FH2T and DragonBox

In the current study, students in the two game-based learning interventions outperformed students in the Active Control condition on the posttest algebraic knowledge assessment. Further, the effect size for FH2T was similar to that reported in a prior study comparing the efficacy of FH2T to the Immediate Feedback condition (Hedges’ g = 0.16; Chan et al., 2022), providing additional support for the consistent positive effects of FH2T. Although effects were found for both games, they were designed based on different theoretical perspectives. Both FH2T and DragonBox are grounded in intuitive perceptual-motor routines and a game-based interface; however, the two games differ in important ways. For instance, FH2T introduces algebraic notations from the start, whereas DragonBox gradually transitions students from pictures of monsters to abstract notations. Further, DragonBox asks students to isolate variables across notational contexts, whereas FH2T asks students to transform between mathematically equivalent but perceptually different expressions or equations (e.g., 9 ∙ 12 + 9 ∙ 23 and 9 ∙ 7 ∙ 5). It is possible that the progression from concrete pictures to abstract symbols (Fyfe et al., 2014) and the focus on isolating variables are elements within DragonBox that effectively support students’ algebra performance. However, qualitative investigations of teacher and student interactions suggest that the design of DragonBox can make it difficult for teachers and students to speak precisely about concepts introduced by the game and to connect these concepts back to mathematics (Dolonen & Kluge, 2015). FH2T provides students with opportunities to explore and learn which gesture-actions with algebraic notations are appropriate and allowed across mathematical contexts (Dörfler, 2003; Landy & Goldstone, 2009; Nogueira de Lima & Tall, 2008).
However, because game-based learning has other potential benefits, such as increasing motivation (Hidi & Renninger, 2006; Rotgans & Schmidt, 2011) and enhancing engagement (Mora et al., 2015), it may be the case that DragonBox and FH2T support algebraic learning through engaging students with mathematical content and fostering positive attitudes toward mathematics. Although the current study does not tease apart these potential mechanisms, it does provide a foundation for future inquiries that could explore how various game elements differentially affect student learning from educational technology games. For example, future analyses could use the clickstream data within FH2T to explore the relations between students’ in-game behaviors and their learning outcomes.

Alternatively, the positive impacts of FH2T and DragonBox may be a result of their game-based context, which may increase students’ interest and engagement with mathematics and, in turn, improve their performance. Substantial research has highlighted the benefits of integrating gamification elements, such as rewards, leveling, challenges, supporting player skills, player controls, and feedback, into instruction to promote learning (Garris et al., 2002; Gee, 2003; Kalloo & Mohan, 2015). Technology-based educational games have been found to increase engagement and motivation, as well as student problem-solving and learning (Connolly et al., 2012; Foster, 2008; Ke, 2008; Samur & Evans, 2012). Therefore, the motivational factors related to game-based learning may be another potential mechanism through which FH2T and DragonBox may support students’ algebraic understanding.

Further, anecdotes from participating teachers revealed that some students in the two problem-set conditions lacked the motivation to solve textbook problems, knowing that other students in the same classroom were playing games. Although the student-level randomization maximizes the statistical power to detect intervention effects, administering four different conditions within each classroom may inevitably highlight the differences between the interventions and ultimately contribute to the impacts of the game-based interventions compared to the problem-set conditions. Because of the COVID context, 32.7% of students received the intervention through their virtual classroom and worked on their study assignments at home, without awareness of the other intervention conditions. Additional data were also collected on students’ attitudes toward mathematics as well as their engagement with the technologies. Future analyses will use these additional data to explore whether game-based interventions supported algebraic understanding by increasing students’ motivation or engagement with their intervention and how the classroom context of the current study may interact with the intervention condition to influence student learning.

Interaction Between FH2T and Pretest Scores

Study effects for FH2T and DragonBox were largely unchanged when investigating interactions with multiple demographic and program variables related to students. However, results did indicate a significantly larger effect for FH2T among students who scored higher on the pretest. This finding suggests additional benefits of FH2T for students who already possess foundational or basic knowledge of algebraic concepts. In particular, the design features of FH2T, such as introducing algebraic notations from the start and having flexible goals, may require students to have some foundational algebraic knowledge to further benefit from the game. Past research on FH2T has been mixed in finding this relation. For example, in an investigation of Grades 6–7 students, Chan et al. (2022) do not find a significant interaction between level of pretest and student understanding of mathematical equivalence. However, in a sample of Grade 2 students, a significant interaction between pretest and progress within FH2T is found (Hulse et al., 2019). Specifically, among students with lower, as opposed to higher, prior knowledge, solving more problems in FH2T is associated with higher posttest scores on an arithmetic assessment. The moderating effects of prior knowledge may vary, depending on students’ grade level (i.e., second, sixth, seventh), the particular FH2T variable used (i.e., intervention assignment vs. progress within FH2T), and the outcome of interest (i.e., algebraic knowledge, mathematical equivalence, arithmetic). Future research should continue to explore the extent to which prior knowledge moderates the relationship between using FH2T and algebraic knowledge gain. Doing so will advance the understanding of when and for whom such interventions as FH2T are beneficial for learning mathematics.

Online Problem Sets

Although students in the Immediate Feedback condition also displayed significantly higher posttest scores compared to students in the Active Control condition, this effect was no longer significant after controlling for several covariates. Different from the prior findings within ASSISTments (Kelly et al., 2013; Mendicino et al., 2009), this study did not find consistent evidence that students in the Immediate Feedback condition outperformed the students in the Active Control condition with post-assignment feedback. It is important to note that the two problem-set conditions only involved the student aspects of ASSISTments (i.e., hints, feedback, performance report), whereas the prior efficacy study on ASSISTments also involved teacher training that provided support on monitoring student progress within the system and using student performance to inform instructional practices in the classroom (Roschelle et al., 2016). The current findings, therefore, extend prior research and suggest that implementing only student aspects of ASSISTments in a homework-style intervention may not consistently support students’ algebra learning. Teacher training may be a critical component of the ASSISTments intervention that effectively supports students’ mathematics achievement.

Study Context

It is important to note that the study was conducted within the larger context of the COVID-19 pandemic. This context affected many aspects of the current study. For instance, although significant effects were found for posttest scores of students in the FH2T and DragonBox conditions, overall, scores were lower at posttest than at pretest. This is likely due to interrupted learning and the overarching lack of typical gains seen across the country in mathematics due to the pandemic (Dorn et al., 2020). For example, Kuhfeld and Lewis (2022) find that the pandemic has had larger negative impacts on mathematics compared to reading and that those effects have not significantly diminished as of the 2021–2022 school year. Additionally, the most recent long-term trend National Assessment of Educational Progress results in mathematics achievement for 13-year-olds indicate a significant drop since the last data collection in 2012 (U.S. Department of Education, n.d.). Within this context, however, the current findings seem to suggest that FH2T and DragonBox were able to serve as supports to reduce the effects of interrupted learning for students in those conditions.

Additionally, the school district offered the students and their families options for synchronous in-person instruction in the school buildings or asynchronous virtual instruction at home (with the addition of synchronous help sessions). Regardless of the instructional format, all students in the current study received their assigned intervention online and worked individually at their own pace, using a device. Although the study procedure was not affected by the classroom format, the differences in students’ classroom format inadvertently introduced systematic variations in the study contexts. Specifically, students in in-person classrooms were aware of other intervention conditions, whereas students in virtual classrooms were not. Although no significant interactions were identified between study condition and instructional format, the presence versus absence of this awareness may have influenced students’ motivation to complete their intervention in unmeasured ways and, in turn, may have amplified the effects of game-based learning compared to traditional problem-solving.

Further, whether students enrolled in in-person or virtual classrooms was not random. A higher proportion of Asian students and high-performing students opted for virtual instruction, whereas a higher proportion of White students and low-performing students selected in-person instruction. These systematic differences between in-person and virtual classrooms may have been related to the high proportion of multigenerational households among Asian students, their anxiety about being bullied due to political and racial tensions, or satisfaction with virtual learning (Balingit et al., 2021). Regardless of the reasons behind the families’ decision for enrolling students in in-person or virtual classrooms, their choices had implications on the student compositions within the classrooms and school buildings. Specifically, as a higher proportion of Asian students and high-performing students opted for virtual instruction, the heterogeneity of the in-person classrooms was reduced through this process. Given the important differences between in-person and virtual classrooms, analyses controlled for the classroom format and found that intervention effects remained significant. Future work will further explore how the pandemic context affected student learning.

Limitations and Future Directions

The current study was limited in several ways. First, the attrition in the current study was nontrivial. Among the 3,612 students who participated at the start of the intervention, only 1,850 students completed pretest and posttest and thus were included in the current analyses. Although almost half of the students had dropped out of the study by the end of the intervention, the dropout rate was not related to the intervention condition, and there was minimal differential attrition between conditions. Second, as each technology condition functioned differently, time within the 30-minute sessions was used differently (all practice in FH2T, while ASSISTments included practice and review). Students in the DragonBox condition needed to stop a few minutes early to log their progress, which meant that those students spent fewer minutes engaging with the technology. Although this step was necessary to maintain equal time within the classroom across conditions, future studies may explore differences in timing and overall effectiveness. Third, the current study focused on comparing each of the three treatment conditions to an active control condition. Future studies will examine differences across conditions to further inform the ways in which these technologies relate to the conceptual, procedural, and flexibility aspects of algebraic thinking. Fourth, the current study provided evidence that Grade 7 students in the FH2T and DragonBox conditions did significantly better on the posttest reflecting algebraic understanding, but it did not offer insights into their areas of improvement or potential mechanisms. Preliminary follow-up analyses have revealed that FH2T and DragonBox improved conceptual knowledge, but not procedural knowledge or procedural flexibility (Chan et al., 2023).
Future studies using the log data will explore how these intervention conditions affect aspects of students’ conceptual knowledge, whether students’ understanding was moderated by their prior knowledge or other demographic factors, and how the fidelity of implementation at the student level influenced student learning from each intervention. Addressing these questions is important, as they provide crucial information on the ways in which the interventions support algebra learning, for whom the interventions were effective, and the amount of intervention needed to result in learning gains. Finally, given the unique context of the COVID-19 pandemic, the data set may offer insights into how student learning was affected by the pandemic and inform future practices across instructional format and during stressful emergency situations.

Conclusion

Overall, the current study contributes evidence for the efficacy of FH2T and DragonBox as interventions for supporting algebraic performance. Both games are promising interventions that focus on training students’ perceptual-motor routines in algebraic reasoning, yet they differ in several important ways. The findings have implications for future research in delineating the effective elements within each game. Further, they also have implications for educational practices, as they provide evidence that gamified learning platforms are effective ways for students to explore mathematical ideas and support their understanding.

Supplemental Material

sj-docx-1-ero-10.1177_23328584231165919 – Supplemental material for The Impacts of Three Educational Technologies on Algebraic Understanding in the Context of COVID-19

Supplemental material, sj-docx-1-ero-10.1177_23328584231165919 for The Impacts of Three Educational Technologies on Algebraic Understanding in the Context of COVID-19 by Lauren E. Decker-Woodrow, Craig A. Mason, Ji-Eun Lee, Jenny Yun-Chen Chan, Adam Sales, Allison Liu and Shihfen Tu in AERA Open

Footnotes

Funding

The research reported here was supported by the Institute of Education Sciences, U.S. Department of Education, through an Efficacy and Replication Grant (R305A180401) to Worcester Polytechnic Institute. The opinions expressed are those of the authors and do not represent views of the institute or the U.S. Department of Education. The authors would like to especially thank Kathleen, Barbara, and the teachers in the district who gave their time to participate, without whom the study would not have been possible. Also, thank you to the teams at Graspable Math, DragonBox, and ASSISTments for providing access to the technologies, as well as to Dr. Erin Ottmar for her vision of the study.

Supplemental Material

Supplemental material for this article is available online.

Authors

LAUREN E. DECKER-WOODROW is a principal research associate in the Education Studies practice at Westat; email:

CRAIG A. MASON is a professor of education and applied quantitative methods at the University of Maine; email:

JI-EUN LEE is a postdoctoral research scientist at Worcester Polytechnic Institute; email:

JENNY YUN-CHEN CHAN is an assistant professor of Early Childhood Education at the Education University of Hong Kong; email:

ADAM SALES is an assistant professor at Worcester Polytechnic Institute; email:

ALLISON LIU is a postdoctoral scholar at Worcester Polytechnic Institute; email:

SHIHFEN TU is a professor of education and applied quantitative methods and director of the School of Learning and Teaching at the University of Maine; email:

References
