Abstract

Teachers’ beliefs can have powerful consequences on instructional decisions and student learning. However, little research focuses on how teachers’ beliefs about the role of race and gender in mathematics teaching and learning influence educational equity within classrooms. This gap is partly due to the lack of studies focused on variation within classrooms, which in turn is hampered by the lack of instruments designed to measure mathematics-specific equity beliefs. In this study of 313 preservice and practicing elementary teachers, we report evidence of construct validity for the Attributions of Mathematical Excellence Scale. Factor analyses provide support for a four-factor structure, including genetic, social, personal, and educational attributions. The findings suggest that the same system of attribution beliefs underlies both racial and gender prejudice among elementary mathematics teachers. The Attributions of Mathematical Excellence Scale has the potential to provide a useful outcome measure for equity-focused interventions in teacher education and professional development.

Teachers’ beliefs influence their instructional decisions (Pajares, 1992), including decisions that shape the mathematical learning opportunities of girls, Native American and Indigenous people, and Students of Color (SoC). However, the field lacks a validated, mathematics-specific instrument to measure teachers’ racialized and gendered beliefs about mathematics learning. To date, instrument development in this area has focused on learning more broadly. In the present study, we report our work developing the Attributions of Mathematical Excellence Scale (AMES), which we posit is an important step toward advancing research on the role of teachers’ equity beliefs in mathematics education. We consider evidence of substantive validity, structural validity, and external validity to build an initial argument for the construct validity (Flake et al., 2017) of the AMES as a measure of elementary teachers’ systems of racialized and gendered attribution beliefs about students’ struggles and success in mathematics. This instrument responds to calls for research on racial attitudes in mathematics education (Battey & Leyva, 2018) and comes at a time when public cries for racial justice and equity—and the valuing of Black, Latinx, and Asian American lives—have reached a new pinnacle. Such an instrument is critical for addressing the opportunity gap in school mathematics, a persistent challenge with wide implications for broadening participation in science, technology, engineering, and mathematics.

The validity evidence we report also has the potential to advance theory because the AMES design operationalizes several transformative theoretical claims about mathematics teachers’ equity beliefs. First, we claim that individuals hold attribution beliefs about mathematics learning that differ from their more general attribution beliefs. Second, we claim that a common belief system, attributions of mathematical excellence, underlies racialized and gendered inequity in mathematics, although the effects are likely amplified for students with intersecting marginalized identities (Collins, 2000; Crenshaw, 1991; Leyva, 2017). Third, we claim that race-neutral and gender-neutral attribution beliefs are aligned with (rather than opposed to) racial stereotypes that make analogous attributions. For example, teachers who agree with ostensibly race-neutral statements (e.g., “Students who struggle to understand mathematics do not study enough”) may be more likely than others to endorse analogous attributions of success to effort, even when these statements echo racial stereotypes (e.g., “Black students struggle in mathematics because they are lazy” is an analogous attribution that echoes the racist stereotype “Black people are lazy”). Building on analyses of “color-blindness,” in which individuals claim not to see race (e.g., Delgado & Stefancic, 2013), and on the related concepts of “color-evasiveness” (Annamma et al., 2017) and “race-evasiveness” (Chang-Bacon, 2021), in which individuals actively avoid discussing or acknowledging race, we conjecture that both attribution statements reflect the same underlying belief (i.e., the attribution of mathematical excellence to personal characteristics associated with race; cf. the discussion of ideology below). If this conjecture holds, equity in mathematics teaching and learning is likely shaped by teachers’ attribution beliefs.

Teachers’ Attribution Beliefs

Attribution beliefs are individuals’ thoughts about the causes of actions or behaviors. More broadly, attribution theory assumes that people try to determine why others do what they do by attributing behavior to causes (Fiske & Taylor, 1991; Graham, 2020). People make two kinds of attributions: internal (dispositional) attributions and external (situational) attributions (Jones & Nisbett, 1971; Weiner, 1985). Internal attributions assign the cause of behavior to some internal characteristic of a person (e.g., personality, motives, race, or gender). External attributions assign the cause of behavior to a situation or event outside a person’s control (e.g., social pressures or luck). Researchers have found a tendency for individuals to explain their own behavior in ways that are favorable to them or their in-group, referred to as attribution bias (Ross, 1977). The overemphasis on dispositional, or internal, explanations for people’s behavior, even in cases with salient contextual factors, is referred to as correspondence bias, or the over-attribution effect (Ross, 1977). In other words, people tend to assume that what a person says or does depends on the “kind” of person they are rather than on situational or contextual factors. This preference for internal explanations appears to be particularly powerful in achievement domains, including education (Reyna, 2008).

Existing studies suggest that teachers often fall prey to these forms of attributional bias (Bar-Tal & Guttmann, 1981; Bertrand & Marsh, 2015; Hall et al., 1989; Rolison & Medway, 1985). Teachers tend to attribute student failure to factors internal to the students (e.g., lack of effort; Matteucci & Gosling, 2004) and external to themselves; however, students’ successes are generally attributed to teachers (Georgiou, 2008; Guskey, 1982; Yehudah, 2002), for example, by virtue of instructional strategies (Gosling, 1994; Kulinna, 2007). How a teacher chooses to respond to a student’s low achievement is determined by the teacher’s attributions of the student’s low performance (Reyna & Weiner, 2001), and teachers’ attributions of students’ success inform their expectations of student performance (Jussim et al., 2009). These expectations tend to be self-fulfilling (Rejeski & McCook, 1980; Reyna, 2000, 2008) because new information tends to be filtered through existing beliefs (Cooper & Burger, 1980; Fennema et al., 1990; Fives & Buehl, 2012; Pirrone, 2012; Rejeski & McCook, 1980). Thus, once established, teachers’ attributions of students’ achievement are unlikely to change without conscious awareness and deliberate effort.

Teachers’ attributions are related to student variables, with gender and race/ethnicity being the most prominent (Espinoza et al., 2014; Tenenbaum & Ruck, 2007). Although more recent research (Quinn, 2017) suggests that this is changing, teachers tend to perceive boys as being more mathematically capable than girls (Espinoza et al., 2014; Tiedemann, 2002; Tindall & Hamil, 2004), thus attributing boys’ success to ability and girls’ to effort. Conversely, low performance among girls is attributed to a lack of ability and among boys to insufficient effort (Espinoza et al., 2014; Fennema et al., 1990). Researchers have found that individuals’ tendency to favor internal explanations is foregrounded in relation to race/ethnicity because cultural stereotypes serve as a fruitful source of attribution information (Reyna, 2008). Teachers make judgments about students’ achievement and motivation based on race (Anderson-Clark et al., 2008). For example, White teachers provide more positive and less critical feedback to Black and Hispanic students than to White students (Harber et al., 2012) and view White students as more mathematically capable than Black students with similar performance (Battey et al., 2021). Although these studies do not explicitly capture teachers’ attributions, they do show bias and differential actions toward students by virtue of their race.

We assume that most, if not all, K–8 mathematics teachers strive to provide all their students with the best instruction possible. However, research linking teacher expectations and student outcomes suggests that even well-intentioned teachers are influenced by biases that undermine Black and Brown students’ mathematics success. Inspired by initial research on the role of colorblind racism beliefs in preservice teachers’ emotion regulation during race-salient experiences (DeCuir-Gunby et al., 2020), we conjecture that teachers’ attribution beliefs about the ultimate source of student differences in mathematics may explain how well they are able to follow through on their equity intentions.

Defining the Attributions of Mathematical Excellence Construct

The AMES measures teachers’ beliefs about why students excel (or struggle) in mathematics. It includes four subscales describing attributions of mathematical excellence that are genetic (AME-G), social (AME-S), personal (AME-P), and educational (AME-E). From the perspective of social cognition research, these scales are designed to reflect specific lay psychological theories (e.g., Rangel & Keller, 2011) that help individuals make sense of why others act the way they do. One such lay theory is psychological essentialism (Medin, 1989), the deterministic belief that individuals’ behavior is explained by their underlying nature or essence. From the perspective of equity scholars within mathematics education research, these scales reflect specific ideologies (Battey & Leyva, 2016; Martin, 2012), which function to justify practices and policies in mathematics education. The AMES is designed to help researchers investigate teachers’ systems of attribution beliefs about students’ mathematical excellence by identifying the extent to which these beliefs are influenced by students’ race and gender.

The genetic and social AME scales (AME-G and AME-S, respectively) characterize mathematical excellence as a fixed trait. Our conceptualization of these two scales relies on a theoretical synthesis of research on social cognition and of scholarship on equity in mathematics education. Researchers have developed instruments to measure two different forms of psychological essentialism: genetic determinism, which is aligned with AME-G, and social determinism, which is aligned with AME-S. Genetic determinism refers to individuals’ beliefs attributing personal characteristics (including academic ability and performance) to biology (Keller, 2005). As Jamieson and Radick (2017) posit, “Twenty-first century biology rejects genetic determinism, yet an exaggerated view of the power of genes in the making of body and minds remains [common]” (p. 1260). By contrast, belief in social determinism (BSD) implies “that a person’s essential features . . . are shaped permanently and profoundly by social factors (e.g., upbringing, socialization, and social background)” (Rangel & Keller, 2011, p. 1056). Unlike genetic determinism, which focuses on an internal cause, BSD attributes the mathematical excellence of students to external social circumstances. A teacher who invokes BSD to explain academic achievement might point to parents’ level of educational attainment or to parents’ inability or unwillingness to help their children in school.

Although psychological essentialism explains attributions about general traits and behaviors, scholarship in mathematics education has identified ideologies that are specific to mathematical traits and behaviors. Racial hierarchy in mathematics (Martin, 2009) is an ideology that rests on the belief that race is a genetic trait, and it informs AME-G: “[B]elief in innate mathematics ability serves as a colorblind way of unconsciously believing in the racial hierarchy of ability” (Battey & Leyva, 2016, p. 64). The ideology of colorblindness (Bonilla-Silva, 2003; Bonilla-Silva & Forman, 2000; Neville et al., 2000) shifts discourse from internal genetic factors to external cultural and social proxies (e.g., parenting or values) and thereby makes discursive space for racist claims in putatively nonracial terms. Scholarship on this ideology informs AME-S. For AME-G and AME-S, students’ struggle or success in mathematics is attributed to factors that are ultimately outside teachers’ influence; thus, these attribution beliefs may undermine teachers’ motivation to support SoC.

Whereas AME-G and AME-S attribute mathematical excellence to immutable causes, the personal and educational AMES subscales (AME-P and AME-E, respectively) reflect the view that mathematical excellence is malleable. These subscales differ in whether mathematical excellence is a result of internal or external factors. The AME-P scale is informed by a long history in mathematics education of teachers’ attribution of girls’ (but not boys’) mathematics achievement to effort (e.g., Fennema et al., 1990; Tiedemann, 2000a, 2000b, 2002). It also builds on stereotypes that Black and Latinx students are lazy and do not try at school (Nasir & Shah, 2011; Oppland-Cordell, 2014). Some may be surprised by the apparent overlap between AME-P and the growth mindset (e.g., Dweck, 1986, 2006, 2008), which more recent work indicates is an asset for students. However, we distinguish between students’ views on the efficacy of their own effort and the way a teacher’s focus on student effort can absolve the teacher’s duty of care by holding students wholly responsible for their learning. In this way, AME-P builds on the long-standing critique of the racist function of meritocracy in mathematics education (e.g., Battey & Franke, 2015; Martin, 2009). Meritocracy claims that success is based on effort, implies that lack of effort explains lack of success, and therefore compounds—and provides justification to ignore—the historical, systemic, and institutional ways that opportunities and rewards are (and have been) distributed by race and gender instead of merit (Rubel, 2017).

The AME-E scale captures teachers’ beliefs that mathematical excellence is a consequence of the schools, teachers, and educational opportunities a student has experienced. Our distinction between AME-E and the other AMES subscales draws on the contributions of Jackson et al. (2017) in describing teachers’ views of students’ mathematical capabilities. Wilhelm et al. (2017) take up this work and distinguish teachers’ productive explanations (“ones that attribute student difficulty to instructional and/or schooling opportunities”; p. 349) from unproductive explanations (“ones that attribute student difficulty to inherent traits of the student, or their family or community”; p. 349). The AME-E measures the productive beliefs that SoC and girls struggle in mathematics because of a lack of educational access and that mathematical excellence often involves extraordinary access to educational resources. These beliefs are productive in the sense that teachers recognize their own role as a consequential aspect of mathematical excellence.

Validation Argument Overview, Research Questions, and Analytic Plan

For the current study, we investigated the validity of the AMES by drawing on a sample of preservice and in-service teachers. Our work was guided by the construct validation framework discussed in Flake et al. (2017) and more recently applied to the validation of a novel instrument for mathematics teacher anxiety (Ganley et al., 2019). In this framework, evidence for substantive validity, structural validity, and external validity is integrated to make an argument for the construct validity of an instrument. We framed our research questions in relation to a specific kind of validity and in the context of open theoretical questions to clarify how our results advance knowledge, even as they provide warrants for the use and further development of the AMES. This section also describes the statistical and psychometric analyses we used as warrants for the validation argument and to answer the research questions.

To establish initial evidence for substantive validity, we report our process of item design, item review and revision, and the innovation of using race-/gender-neutral statements to measure race and gender bias. We do not report a research question for substantive validity because this aspect of our work is not an empirical study in the traditional sense. Instead, we draw on our synthesis of research in social cognition and equity in mathematics education (see above) to operationalize the four distinct factors within the AMES: genetic, social, personal, and educational attribution beliefs.

To assess structural validity at the item level, we used the survey response data and considered item-level descriptive statistics and relationships between items. Building on our experience piloting two previous versions of the AMES, which suffered from skew and threshold effects, we applied two strategies to elicit a wider range of teachers’ beliefs: writing negatively worded items and writing items using identity-neutral language. Research Question 1 (RQ1) guided this phase of our study: How are ratings of negatively worded (reverse-coded) items related to teachers’ ratings of corresponding positively worded items? To what extent do negatively worded items increase the range of rating responses across the AMES items? The main hypothesis was that the negatively worded items would be correlated with corresponding positively worded items but have lower means, thereby increasing the range of AMES ratings. In addition, we asked Research Question 2 (RQ2): How are ratings of identity-neutral items related to teachers’ ratings of corresponding identity-specific items? To what extent do identity-neutral items increase the range of rating responses across the AMES items? The main hypothesis we investigated was that identity-neutral items would be positively correlated with corresponding identity-specific items but have lower means. To answer these questions, we compare the response patterns of negatively worded and positively worded items as well as the overall and within-factor item-total correlations.

To assess structural validity at the factor level, we used the survey response data and considered item-total correlations for each hypothesized factor, reliability estimates, and the results of confirmatory factor analysis (CFA). We found item dependencies between the identity-specific and identity-neutral versions of items that precluded modeling them as independent items with uncorrelated errors, a standard assumption of latent trait modeling. Ultimately, we combined the identity-specific and identity-neutral versions of items into testlets to account for between-item dependencies. Research Question 3 (RQ3) guided our work: Are the AMES testlets better modeled as a single trait, as two factors (race versus gender prejudice), or as four factors corresponding to distinct attribution beliefs? The main hypothesis for structural validity was that the hypothesized four-factor structure would fit the data better than plausible alternatives. We report testlet statistics as well as the CFA results with the testlet data to answer this question.

To assess external validity, we investigated how scores on the AMES were correlated with other psychological constructs. In this study, we were interested in whether social desirability played a role in teachers’ responses, because if responses on the AMES were biased by social desirability, then AMES scores would have less utility for teacher education or research. We also examined social determinism and genetic determinism because these constructs heavily informed the development of the AMES. Research Question 4 (RQ4) guided this part of the study: To what extent are scores on the AMES factors uncorrelated with social desirability and correlated with belief in social and genetic determinism in the ways theory predicts? We hypothesized that socially desirable responding would have a nonsignificant correlation with the four factors of the AMES, that social determinism would be correlated most with the AME-S factor and least with the AME-E and AME-P factors, and that genetic determinism would be correlated most with the AME-G factor and least correlated with the AME-E and AME-P factors. To answer this question, we use a CFA model with covariates to examine these correlations.

Method

Participants

We conducted our research in June 2020. The 313 participants included practicing teachers (n = 223) and preservice teachers (n = 90) from Indiana. All participants had previously participated in survey research for a larger project studying teachers’ knowledge and beliefs and had indicated that they were interested in follow-up research. The initial pool of teachers was a statewide representative sample of public school teachers in Grades 2–5, which was stratified based on school urbanicity, school percentage of SoC, and percentage of students eligible for free and reduced-price lunch. The sample of preservice teachers was constructed in two stages. First, volunteers were recruited from elementary teacher education programs in Indiana, and then participants were sampled from among volunteers in proportion to the size of each program.
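The preservice subsample was drawn from volunteers in proportion to the size of each program. The text does not say how fractional quotas were rounded, so the sketch below assumes a largest-remainder (Hamilton) rule; the function name and the program sizes in the example are hypothetical and are not study data.

```python
from __future__ import annotations

from math import floor


def proportional_allocation(sizes: dict[str, int], total: int) -> dict[str, int]:
    """Split `total` participants across programs in proportion to program size.

    Hypothetical helper: each program first receives the integer part of its
    exact quota; the remaining slots go to the largest fractional remainders
    (largest-remainder / Hamilton rounding).
    """
    population = sum(sizes.values())
    if population <= 0 or total < 0:
        raise ValueError("need positive program sizes and a non-negative total")
    quotas = {name: total * size / population for name, size in sizes.items()}
    allocation = {name: floor(q) for name, q in quotas.items()}
    leftover = total - sum(allocation.values())
    # Programs with the largest fractional parts receive one extra slot each.
    by_remainder = sorted(quotas, key=lambda name: quotas[name] - allocation[name], reverse=True)
    for name in by_remainder[:leftover]:
        allocation[name] += 1
    return allocation


if __name__ == "__main__":
    # Made-up program sizes, for illustration only.
    print(proportional_allocation({"A": 7, "B": 5, "C": 3}, 10))
```

With these toy sizes the exact quotas are about 4.67, 3.33, and 2.00, so the single leftover slot goes to program A. A real draw would also need to cap each allocation at the number of volunteers a program supplied.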

Participants in the sample overwhelmingly identified as White (96%) and female (89%), reflecting the regional demographics of elementary teachers in public schools (89% of elementary teachers in the Midwest identified as White, and 88% as female; National Center for Education Statistics, 2021). All but one teacher reported English as their first language. Further details about the background and characteristics of the participants are available in the Supplemental Materials.

Instruments

The social desirability scale (SDS-17; α = 0.75; Stöber, 2001) is an instrument for measuring socially desirable responding, designed to update and replace the Marlowe-Crowne Scale (Crowne & Marlowe, 1960) as a reliable and valid measure of social desirability for adults. The BSD and belief in genetic determinism (BGD) scales measure two components of psychological essentialism: the tendency of individuals to explain others’ characteristics and behaviors by way of their underlying essence (Keller, 2005; Rangel & Keller, 2011). Both instruments have high reliability (α = 0.84 for BSD; α = 0.87 for BGD) and are supported by several validation studies. More information about the instruments is available in the Instrumentation section of the Supplemental Materials.
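Assuming the α values above are Cronbach's alpha (the usual reading for these scales), each coefficient summarizes how strongly a scale's items covary relative to the variance of the total score. A minimal sketch of the standard formula, with a hypothetical function name:

```python
from __future__ import annotations

from statistics import pvariance


def cronbach_alpha(items: list[list[float]]) -> float:
    """Cronbach's alpha: k/(k-1) * (1 - sum of item variances / variance of totals).

    items[j][i] is respondent i's rating on item j. Population variances are
    used for both terms, so the n vs. n-1 choice cancels in the ratio.
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    n = len(items[0])
    if any(len(item) != n for item in items):
        raise ValueError("every item needs one rating per respondent")
    totals = [sum(item[i] for item in items) for i in range(n)]
    total_var = pvariance(totals)
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    item_var = sum(pvariance(item) for item in items)
    return k / (k - 1) * (1 - item_var / total_var)
```

Two identical items give α = 1, and two items that are uncorrelated give α = 0, which bounds the interpretation of values such as 0.84 and 0.87.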

Procedure

Participants were invited by email to take an online survey administered via Qualtrics. Two follow-up email reminders were sent, and data collection concluded 2 weeks after the first invitation email. The AMES items were included in a longer survey that also asked about demographic and background characteristics and about mathematics teaching; those questions were not germane to the present study. The whole survey took approximately 45 minutes to complete, and teachers were given a gift card as an incentive to participate. All participants provided informed consent before beginning the survey; all research instruments and procedures were approved by the Institutional Review Board at Indiana University before the study was conducted.

Results

Establishing Substantive Validity Through Operationalization

The AMES items are the product of three cycles of collaborative and cross-disciplinary item writing based on a literature review coupled with field testing and item revision. We wrote four pilot items tapping AME-G and AME-S in 2017 and administered them to 78 preservice teachers. Based on this pilot, we developed eight entirely new items tapping the same constructs and field-tested them with 245 preservice teachers in 2018. The 64 AMES items used in the present study built on what we learned from these field tests and expanded the instrument to include the AME-P and AME-E constructs, to include items with negative wording, and to include identity-neutral items in addition to the identity-specific items that were developed in previous cycles of item writing.

The AMES item design draws on the U.S. General Social Survey items that have been used for decades on nationally representative surveys of the U.S. population (Quinn, 2017). The AMES items differ from these items in two ways. First, the General Social Survey questions require a yes or no response; following recent work in sociology (Quinn, 2020; Valant & Newark, 2016), the AMES items instead allow a range of responses, which increases sensitivity to a broader range of beliefs. Second, the AMES items are mathematics specific.

The AMES items used in this study asked respondents to rate the truth (from 1 [Completely true] to 7 [Not at all true]) of statements that attribute stereotype-aligned indicators of mathematical excellence (e.g., “< White students / Boys > score higher on standardized math tests”) to one of the four sources stereotypically associated with race or gender: genetic (e.g., “because of basic genetic differences” or “. . . biological factors”), social (e.g., “because of cultural and religious expectations” or “. . . upbringing”), personal (e.g., “because they put in more effort” or “. . . spend more time studying”), and educational (e.g., “because they go to better schools” or “. . . have more educational opportunities”). A subset of items for each indicator (one genetic, two social, four personal, and two educational) were revised to contradict the relevant stereotype (i.e., negative wording). An example negatively worded genetic statement is “In my view, genes do not determine which students excel in mathematics.” An example negatively worded personal statement is “The students who excel in mathematics rarely have to try very hard.” These items were designed to be reverse-scored.
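The text states that the negatively worded items were designed to be reverse-scored but does not give the formula. On a 1 to 7 scale the conventional reversal is 8 minus the rating; the sketch below assumes that convention, and the helper name is hypothetical.

```python
SCALE_MIN, SCALE_MAX = 1, 7  # 1 = Completely true, 7 = Not at all true


def reverse_score(rating: int, lo: int = SCALE_MIN, hi: int = SCALE_MAX) -> int:
    """Map rating r to lo + hi - r (8 - r on a 1-7 scale): 1 <-> 7, 2 <-> 6, 4 stays 4."""
    if not lo <= rating <= hi:
        raise ValueError(f"rating {rating} is outside {lo}-{hi}")
    return lo + hi - rating


if __name__ == "__main__":
    # Rating "genes do not determine which students excel" as Completely true (1)
    # becomes 7, the same direction as rating a positively worded genetic
    # attribution Not at all true.
    print([reverse_score(r) for r in range(1, 8)])
```

Reversal is its own inverse, so applying it twice returns the original rating, which makes accidental double-reversal easy to detect in a scoring script.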

For each identity-specific item, we wrote an identity-neutral version without specific race or gender identifiers (e.g., “Students struggle to learn . . .” versus “Black students struggle to learn mathematics because they do not put in the required time and hard work.”). This design encodes the theoretical claim that statements that make attributions of mathematical excellence without specifying race or gender (i.e., identity-neutral items) are different in degree but not in kind from statements that echo racial and gender stereotypes (in the case of AME-G, AME-S, and AME-P) or that acknowledge a racial and gendered opportunity gap (AME-E). The items are provided in Tables 1–4. We use item labels in which the first character indicates the construct (g: AME-G, s: AME-S, and so forth), the numeral indicates a distinct attribution and mathematical excellence descriptor, and the letter following the numeral indicates whether race (“r”), gender (“g”), or identity-neutral wording (“n”) is used; item labels ending in “x” are negatively worded. Thus, items g8nx and g8r share a genetic attribution and mathematical excellence descriptor, but g8nx is identity-neutral and negatively worded (“In my view, genes do not determine which students excel in mathematics.”), whereas g8r is race-specific (“In my view, genetic factors explain why Hispanic and Latino students struggle to learn mathematics.”).
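The labeling convention can be checked mechanically. The sketch below is a hypothetical parser, not published AMES code; it assumes "p" is the construct letter for AME-P (the text names only "g" and "s" explicitly, and "e" appears in the label e8gx).

```python
from __future__ import annotations

import re
from typing import NamedTuple

CONSTRUCTS = {"g": "AME-G", "s": "AME-S", "p": "AME-P", "e": "AME-E"}  # "p" assumed
IDENTITIES = {"r": "race-specific", "g": "gender-specific", "n": "identity-neutral"}
# construct letter, numeral, identity letter, optional "x" for negative wording
LABEL_RE = re.compile(r"([gspe])(\d+)([rgn])(x?)")


class ItemLabel(NamedTuple):
    construct: str
    descriptor: int  # shared attribution + mathematical excellence descriptor
    identity: str
    negatively_worded: bool


def parse_label(label: str) -> ItemLabel:
    match = LABEL_RE.fullmatch(label)
    if match is None:
        raise ValueError(f"not a valid AMES item label: {label!r}")
    construct, number, identity, neg = match.groups()
    return ItemLabel(CONSTRUCTS[construct], int(number), IDENTITIES[identity], neg == "x")


def shares_descriptor(a: str, b: str) -> bool:
    """True when two labels share construct and descriptor (e.g., g8nx and g8r)."""
    la, lb = parse_label(a), parse_label(b)
    return (la.construct, la.descriptor) == (lb.construct, lb.descriptor)


if __name__ == "__main__":
    print(parse_label("g8nx"))
    print(shares_descriptor("g8nx", "g8r"))
```

Note that "g" is overloaded: in first position it marks AME-G, and after the numeral it marks gender-specific wording, so positional parsing (rather than a simple substring test) is needed.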

Item wording and descriptive statistics for the AME-G subscale

Note. Labels are coded with “g” to indicate gender-specific, “r” for race-specific, and “n” for identity-neutral wording and with “x” to indicate negatively worded (i.e., counter-stereotype) items. M = mean; SD = standard deviation.

The AMES items are copyright © 2020 The Trustees of Indiana University.

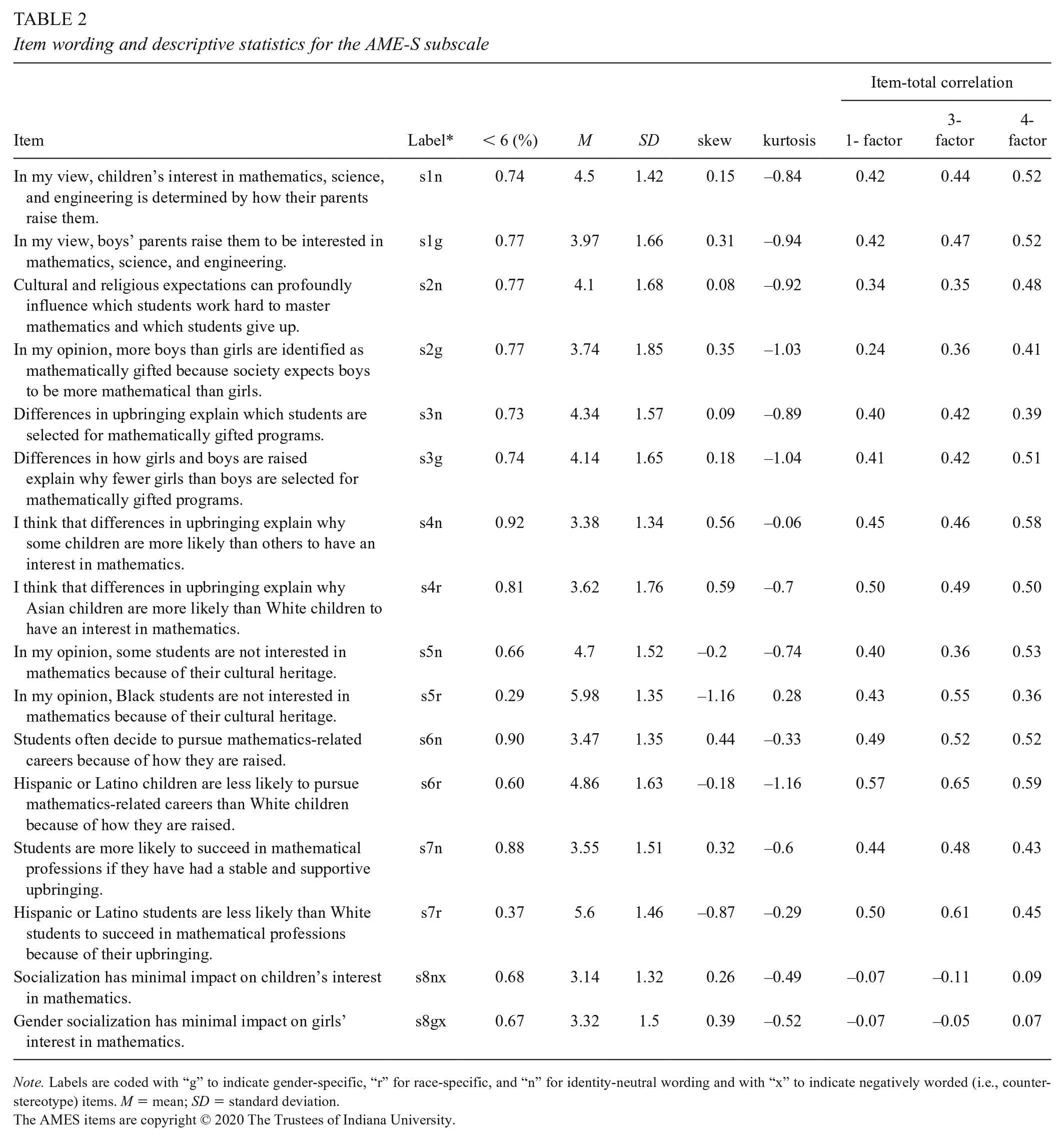

Item wording and descriptive statistics for the AME-S subscale

Note. Labels are coded with “g” to indicate gender-specific, “r” for race-specific, and “n” for identity-neutral wording and with “x” to indicate negatively worded (i.e., counter-stereotype) items. M = mean; SD = standard deviation.

The AMES items are copyright © 2020 The Trustees of Indiana University.

Item wording and descriptive statistics for the AME-E subscale

Note. Labels are coded with “g” to indicate gender-specific, “r” for race-specific, and “n” for identity-neutral wording and with “x” to indicate negatively worded (i.e., counter-stereotype) items. M = mean; SD = standard deviation.

The AMES items are copyright © 2020 The Trustees of Indiana University.

Item wording and descriptive statistics for the AME-P subscale

Note. Labels are coded with “g” to indicate gender-specific, “r” for race-specific, and “n” for identity-neutral wording and with “x” to indicate negatively worded (i.e., counter-stereotype) items. M = mean; SD = standard deviation.

The AMES items are copyright © 2020 The Trustees of Indiana University.

Structural Validity

The new set of 64 AMES items was designed to increase the range of responses on AMES items because the first two field tests revealed skewed item distributions and possible range restriction. We found that negatively worded items increased the range of responses but were not highly correlated with the positively worded items. Because of this and other evidence that these items did not tap the intended constructs (see the Supplemental Materials), we removed the negatively worded items from the subsequent stages of analysis. The identity-neutral items also increased the range of responses and were correlated with the identity-specific items. However, the identity-neutral and identity-specific versions of items did not satisfy the assumption of local independence (see the Supplemental Materials). To address this psychometric issue, we adopted a testlet approach (Wainer & Kiely, 1987; Wainer & Lewis, 1990) and scored each pair of items together as a single indicator. We used CFA to evaluate how well the testlet scores could be modeled under the hypothesized factor structure.

In this section, we report on the structural validity evidence for items and then for testlets. We report the results that address specific research questions about items (RQ1 and RQ2) and testlets (RQ3) as well as the results that contribute to the validity argument for the AMES more generally in each category.

Items

We began our investigation of structural validity by evaluating the descriptive statistics for the 64 AMES items (Tables 1–4). Items that have skew exceeding an absolute value of 2 (Tabachnick & Fidell, 2013) or kurtosis exceeding an absolute value of 7 (Hair et al., 2010) are considered problematic because they may violate the normality assumptions of CFA. Only two items (g3g; e8gx) exceeded the skew threshold, and only one item (e8gx) exceeded the kurtosis threshold. We flagged these items and next considered the item means.
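The screening rule above can be sketched as follows. The source does not say which estimators were used, and software packages differ; this sketch assumes the simple moment estimators g1 (skewness) and g2 (excess kurtosis), and the function names are hypothetical.

```python
from __future__ import annotations

from typing import Sequence

SKEW_LIMIT = 2.0      # |skew| > 2 is flagged (Tabachnick & Fidell, 2013)
KURTOSIS_LIMIT = 7.0  # |kurtosis| > 7 is flagged (Hair et al., 2010)


def skew_and_kurtosis(x: Sequence[float]) -> tuple[float, float]:
    """Moment estimators: g1 (skewness) and g2 (excess kurtosis, normal = 0)."""
    n = len(x)
    mean = sum(x) / n
    m2 = sum((v - mean) ** 2 for v in x) / n
    if m2 == 0:
        raise ValueError("constant item: skew and kurtosis are undefined")
    m3 = sum((v - mean) ** 3 for v in x) / n
    m4 = sum((v - mean) ** 4 for v in x) / n
    return m3 / m2 ** 1.5, m4 / m2 ** 2 - 3.0


def screen_item(x: Sequence[float]) -> dict[str, bool]:
    """Flag an item whose distribution may violate CFA normality assumptions."""
    skew, kurt = skew_and_kurtosis(x)
    return {"skew_flag": abs(skew) > SKEW_LIMIT, "kurtosis_flag": abs(kurt) > KURTOSIS_LIMIT}
```

For example, a uniform spread of ratings 1 through 7 has skew 0 and excess kurtosis -1.25 and is not flagged, whereas a ceiling item on which 40 of 41 respondents chose 7 has skew of about -6.2 and excess kurtosis of about 36 and is flagged on both criteria.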

The item means varied systematically by attribution. The range of item means and the grand mean tended to be lower for social and educational attributions and higher for genetic and personal attributions (see Table 5). To address RQ1, we compared the range of item means and grand means of the negatively worded items with the corresponding statistics for identity-specific items. The negatively worded (reverse-scored) items had a substantially smaller grand mean and a lower range of item means, confirming our hypothesis that teachers would rate negatively worded attribution statements as more true than analogous positively worded items. The results in Table 5 also address RQ2, which concerned the distribution of responses to identity-specific versus identity-neutral items. We found that identity-neutral items had a smaller grand mean and a lower range of means than the identity-specific items.
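The comparisons summarized in Table 5 group item means by wording type and source of attribution. A minimal sketch of that grouping, assuming the grand mean is the unweighted mean of item means and reporting the range as its minimum and maximum; the function names are hypothetical, and the construct letter "p" for personal is assumed.

```python
from __future__ import annotations

from statistics import mean

SOURCES = {"g": "genetic", "s": "social", "p": "personal", "e": "educational"}


def wording_type(label: str) -> str:
    """Classify an item label by the wording conventions used for the AMES."""
    if label.endswith("x"):
        return "negatively worded"
    return "identity-neutral" if label[-1] == "n" else "identity-specific"


def summarize(item_means: dict[str, float]) -> dict[tuple[str, str], tuple[float, float, float]]:
    """Return (grand mean, min item mean, max item mean) per (wording, source) cell."""
    groups: dict[tuple[str, str], list[float]] = {}
    for label, m in item_means.items():
        key = (wording_type(label), SOURCES[label[0]])
        groups.setdefault(key, []).append(m)
    return {key: (mean(v), min(v), max(v)) for key, v in groups.items()}
```

Because each cell reports both a grand mean and the spread of item means, the same table supports the RQ1 comparison (negatively worded versus identity-specific) and the RQ2 comparison (identity-neutral versus identity-specific).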

Grand mean and range of item means by wording type and source of attribution

Note. There was only a single negatively worded item with a genetic attribution, two with social and educational attributions, and four with personal attributions.

Next, we examined item-total correlations. We calculated these statistics in three ways. The first corresponded to the hypothesized four-factor structure distinguishing genetic, social, educational, and personal attribution beliefs. The other two corresponded to alternatives: a unidimensional structure and a three-factor structure comprising identity-neutral, gender-specific, and race-specific items. These three sets of item-total correlations are presented in the last three columns of Tables 1–4. Except for one item (g8n), the reverse-scored negatively worded items had low or negative item-total correlations, regardless of the factor structure used. This suggests that these items did not tap the same constructs as the other items (RQ1). On the basis of these item analysis findings, we excluded the negatively worded items from subsequent analyses (see Item Analysis in the Supplemental Materials).
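Item-total correlations can be computed separately for each hypothesized structure by passing in only the columns that belong to one factor. The sketch below uses the corrected (item-rest) form, which excludes the item from its own total. Whether the authors used corrected or uncorrected correlations is an assumption here, and the data are hypothetical.

```python
import numpy as np


def corrected_item_total(scores):
    """Correlation of each item with the sum of the remaining items.

    scores: (respondents x items) array, with reverse-scored items already
    recoded so that all items point in the same direction.
    """
    X = np.asarray(scores, dtype=float)
    total = X.sum(axis=1)
    r = np.empty(X.shape[1])
    for j in range(X.shape[1]):
        rest = total - X[:, j]  # total score without item j
        r[j] = np.corrcoef(X[:, j], rest)[0, 1]
    return r


# Hypothetical factor with four items; the last runs opposite to the others,
# like an item that does not tap the same construct.
scores = np.column_stack([
    [1, 2, 3, 4, 5],
    [1, 3, 2, 5, 4],
    [2, 1, 3, 5, 4],
    [5, 4, 3, 2, 1],
])
print(corrected_item_total(scores).round(2))
```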

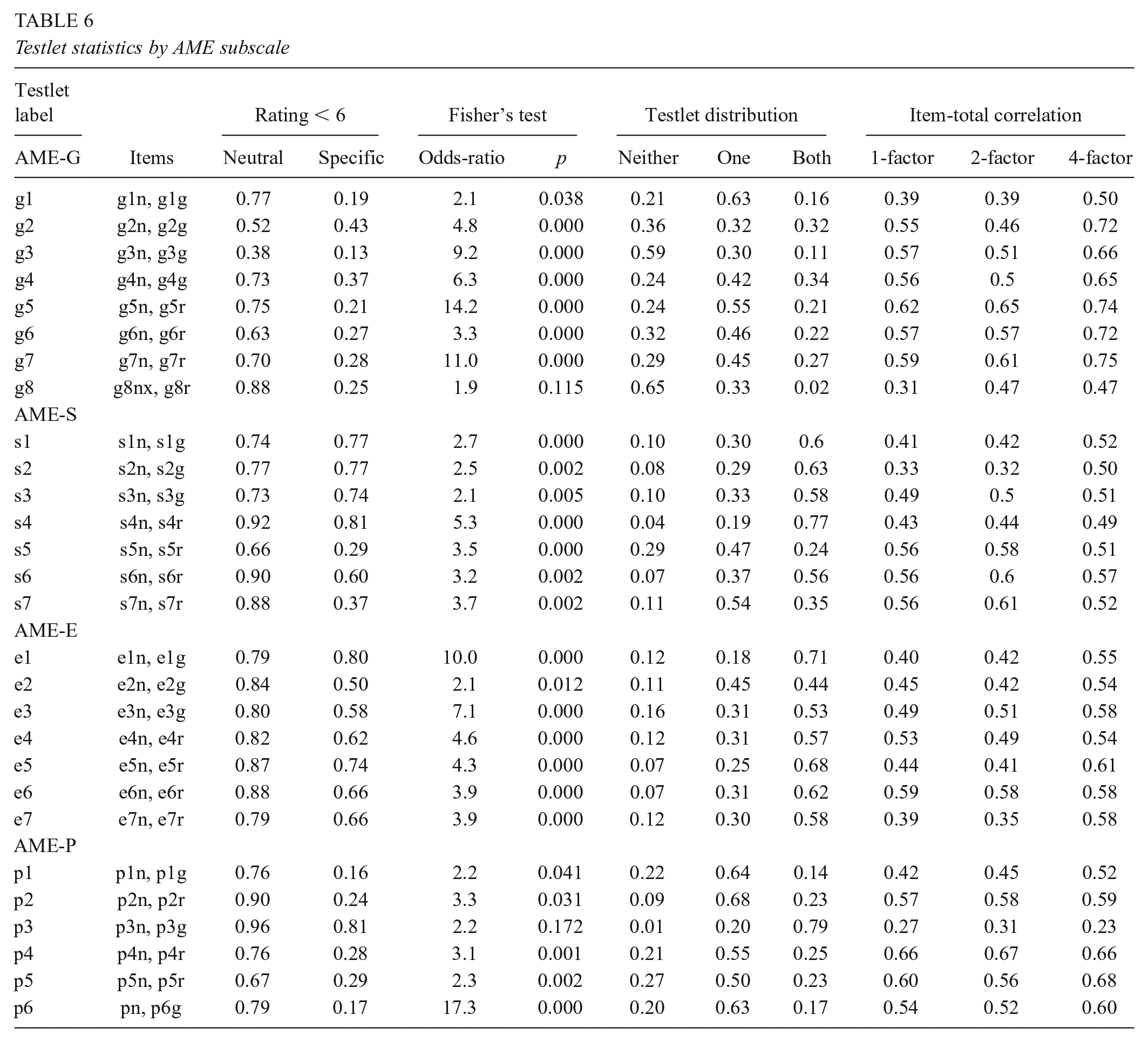

Testlets

The AMES testlets comprised pairs of positively worded items that shared the same or very similar wording for two elements: the indicator of mathematical excellence (e.g., “high achievement scores in mathematics”) and the source of the attribution (e.g., “genetic factors”). One item in each testlet was identity-neutral, and the other was either race- or gender-specific. One challenge was to score the testlets in a way that preserved the items’ meaning and thus maintained interpretability. For example, it did not make sense to add or average the ratings on the two items, because these operations require an interval interpretation of item scores, whereas rating-scale responses are ordinal. Instead, we dichotomized each item rating at a meaningful cut point and used a simple rule to score each testlet on a 3-point ordinal scale. A testlet scored 1 if both items were rated 5 or below (partially to completely true), 2 if exactly one item was rated 5 or below, and 3 if neither item was rated 5 or below.
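The scoring rule can be written as a small function. This is a minimal sketch that assumes a 7-point rating scale on which lower ratings mean "more true". The text describes ratings of 5 or below as partially to completely true and ratings of 6 or 7 as not at all true or nearly so, but the scale endpoints are our inference. The function name and parameters are illustrative.

```python
def score_testlet(neutral_rating, specific_rating, cut=5, scale_max=7):
    """Score one identity-neutral/identity-specific pair on a 1-3 ordinal scale.

    Assumes a 1-to-scale_max rating scale on which lower ratings mean
    "more true", so a rating at or below `cut` counts as endorsing the item.
    """
    for rating in (neutral_rating, specific_rating):
        if not 1 <= rating <= scale_max:
            raise ValueError(f"rating out of range: {rating}")
    # Count endorsements instead of summing raw ratings, so the result stays ordinal.
    endorsed = int(neutral_rating <= cut) + int(specific_rating <= cut)
    # both endorsed -> 1, exactly one endorsed -> 2, neither endorsed -> 3
    return 3 - endorsed


print(score_testlet(3, 4))  # 1
print(score_testlet(6, 2))  # 2
print(score_testlet(6, 7))  # 3
```

Counting endorsements avoids treating the two ordinal ratings as interval quantities, which is the concern raised above about adding or averaging them.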

We chose a cut point of 5 to dichotomize items based on our examination of the empirical distribution of the identity-specific item ratings. Many of these items evidenced a bimodal distribution with local minima near 5 (see Figure 1). Very few participants rated these statements as completely true, but many rated them 5 or less, meaning partially true. Another group of participants tended to rate these statements 6 or 7, meaning not at all true or nearly so. The cut point of 5 enabled us to distinguish between these groups and maintain meaningful scoring for the testlets. Significantly, we found that individuals who rated the identity-neutral item as completely or partially true had higher odds of rating the identity-specific item as completely or partially true (RQ2). These results are presented in the fifth and sixth columns of Table 6. Further analyses supporting the testlet scoring and factor structure are provided in the Testlet Analysis section of the Supplemental Materials.
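An odds ratio from a 2 × 2 table of dichotomized responses summarizes the association between endorsing an identity-neutral item and endorsing its identity-specific partner. The sketch below uses hypothetical counts and a Woolf (log-scale) 95% confidence interval. The statistics reported in Table 6 may have been computed differently.

```python
import math


def odds_ratio(a, b, c, d, z=1.96):
    """Odds ratio with a Woolf 95% CI for a 2 x 2 table.

                            specific endorsed   specific not endorsed
    neutral endorsed                a                     b
    neutral not endorsed            c                     d
    """
    if min(a, b, c, d) == 0:
        # Haldane-Anscombe correction so the log odds ratio is defined
        a, b, c, d = (v + 0.5 for v in (a, b, c, d))
    ratio = (a * d) / (b * c)
    se_log = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = math.exp(math.log(ratio) - z * se_log)
    upper = math.exp(math.log(ratio) + z * se_log)
    return ratio, (lower, upper)


# Hypothetical counts for one testlet
ratio, (lower, upper) = odds_ratio(40, 10, 20, 30)
print(round(ratio, 2), round(lower, 2), round(upper, 2))
```

An odds ratio above 1 whose interval excludes 1 would indicate that endorsing the identity-neutral item goes with higher odds of endorsing the identity-specific item.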

Items from all factors evidenced a bimodal distribution with local minima near 5

Testlet statistics by AME subscale

Factor Analysis

We used CFA in Mplus 8.4 (Muthén & Muthén, 2017) to examine the factor structure of the AMES testlets, treating them as categorical items with three levels and using weighted least squares estimation. We considered three models:

- a unidimensional model with all items loading on a single factor;
- a two-factor model with race-specific items loading on one factor and gender-specific items loading on the other;
- a four-factor model with factors corresponding to the four kinds of attributions (genetic, social, educational, and personal).

Following Kline (2016), we report the model chi-square, root mean square error of approximation (RMSEA), comparative fit index (CFI), Tucker-Lewis index (TLI), and standardized root mean square residual (SRMR). Under these guidelines, good model fit is indicated by a nonsignificant chi-square test of model fit (p > .05), a high CFI (≥ .90), a high TLI (≥ .90), a low RMSEA (< .08), and a low SRMR (< .08). Table 7 presents these fit statistics for Models 1–6.
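For readers less familiar with these indices, the sketch below shows the conventional formulas for RMSEA, CFI, and TLI and checks them against the cutoffs listed above. The formulas take a model chi-square and a baseline (independence) model chi-square as inputs. These are textbook maximum-likelihood-style definitions, so they are illustrative only. Robust weighted least squares estimators, such as the mean- and variance-adjusted WLSMV commonly used for categorical indicators, adjust the chi-square and the indices derived from it, so Mplus output need not match these formulas. Some programs also use N rather than N − 1 in RMSEA. SRMR requires the residual correlation matrix and is taken as an input. All values in the example are hypothetical.

```python
import math


def rmsea(chi2, df, n):
    """Root mean square error of approximation (N - 1 convention)."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def cfi(chi2_m, df_m, chi2_b, df_b):
    """Comparative fit index relative to a baseline (independence) model."""
    d_model = max(chi2_m - df_m, 0.0)
    d_base = max(chi2_b - df_b, d_model)
    return 1.0 if d_base == 0 else 1.0 - d_model / d_base


def tli(chi2_m, df_m, chi2_b, df_b):
    """Tucker-Lewis index (non-normed fit index)."""
    ratio_b = chi2_b / df_b
    return (ratio_b - chi2_m / df_m) / (ratio_b - 1.0)


def check_fit(p_chi2, cfi_value, tli_value, rmsea_value, srmr_value):
    """Compare each index with the cutoffs listed in the text."""
    return {
        "chi-square p > .05": p_chi2 > 0.05,
        "CFI >= .90": cfi_value >= 0.90,
        "TLI >= .90": tli_value >= 0.90,
        "RMSEA < .08": rmsea_value < 0.08,
        "SRMR < .08": srmr_value < 0.08,
    }


# Hypothetical model and baseline statistics
print(rmsea(200.0, 100, 101))
print(cfi(150.0, 100, 1100.0, 120), tli(150.0, 100, 1100.0, 120))
```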

Fit statistics for the CFA models of the AMES

Note. AME = Attributions of Mathematical Excellence; AMES = Attributions of Mathematical Excellence Scale; CFI = comparative fit index; CI = confidence interval; RMSEA = root mean square error of approximation; SRMR = standardized root mean square residual; TLI = Tucker-Lewis index.

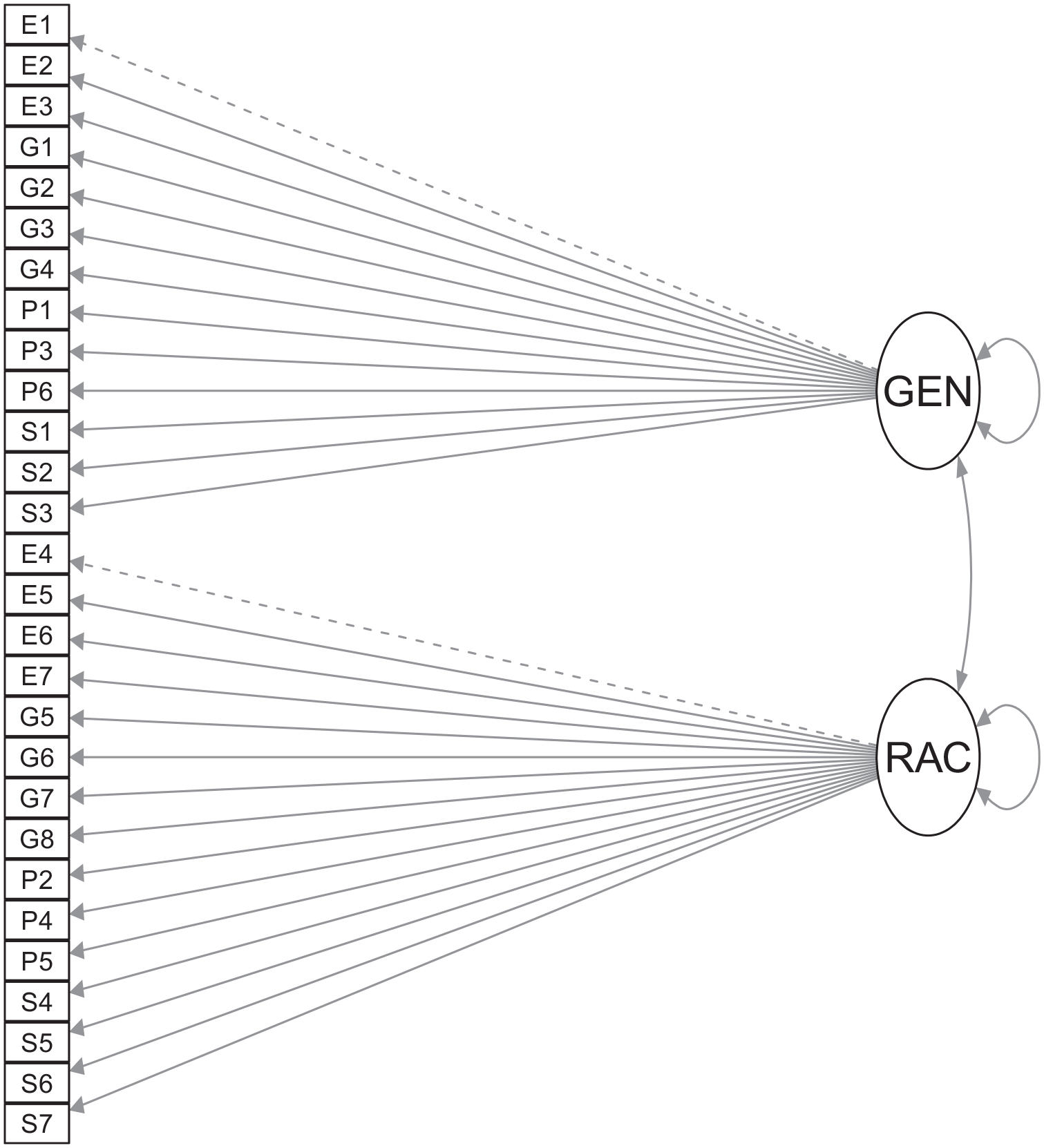

The unidimensional model (Model 1 in Tables 7–8; also see Figure 2) and the two-factor model (Model 2 in Tables 7–8; also see Figure 3) evidenced poor fit, with all fit indices falling on the wrong side of the recommended thresholds. The correlation between the race and gender factors in the second model was high (r = .865, p < .001), suggesting that these putative factors were not empirically distinct. The four-factor attribution structure exhibited much better fit. Every index indicated good fit except the chi-square test, χ2(1, N = 344) = 816.27, p < .001, and the SRMR (0.086 > 0.08). In the four-factor model, the lowest standardized factor loadings were 0.42, 0.51, and 0.56, and all factor loadings were statistically significant at p < .001 (see Model 3 in Tables 7–8; also see Figure 4). The correlations among the factors were moderately high: between genetic and social, r = .60; between educational and personal, r = .68; between genetic and personal, r = .62; between genetic and educational, r = .38; between social and personal, r = .71; and between social and educational, r = .70. All correlations were statistically significant at p < .001. These results suggest that the underlying latent constructs were clearly differentiated yet strongly related, with the exception of the moderately low correlation between genetic and educational attribution beliefs.

Standardized item loadings for the CFA models of the AMES

Note. AMES = Attributions of Mathematical Excellence Scale; CFA = confirmatory factor analysis.

Path diagram for Model 1

Path diagram for Model 2

Path diagram for Model 3

To create a more parsimonious scale, we examined empirical item misfit and removed four items (see Removing Problematic Testlets in the Supplemental Materials). Removing these four items did not appreciably change the reliability estimates for any factor. Cronbach’s alpha improved slightly for AME-P and worsened slightly for the other factors (24-item vs. 28-item version: AME-G, α = .89 vs. .90; AME-S, α = .76 vs. .79; AME-E, α = .80 vs. .82; AME-P, α = .82 vs. .80). We fit analogous versions of Models 1–3 with the 24 retained items and found similar results (Models 4–6; see Tables 7–8). The 24-item unidimensional model (Model 4) and two-factor model (Model 5) did not fit the data. In contrast, the four-factor model (Model 6) fit the data very well, with all fit indices indicating good fit. This included SRMR, which had been slightly above the cutoff for the 28-item model. These results suggest that the 24-item version of the scale performs as well as, if not better than, the 28-item version, and we used this version of the model in subsequent analyses to address external validity.
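The reliability comparison above can be illustrated with the standard Cronbach's alpha formula, applied with and without selected columns. This sketch treats the 3-level testlet scores as interval values. That is an assumption on our part, and an ordinal alpha based on polychoric correlations is a common alternative. The `alpha_without` helper and the scores are hypothetical.

```python
import numpy as np


def cronbach_alpha(scores):
    """Cronbach's alpha for a (respondents x items) score matrix."""
    X = np.asarray(scores, dtype=float)
    k = X.shape[1]
    sum_item_var = X.var(axis=0, ddof=1).sum()  # sum of item variances
    total_var = X.sum(axis=1).var(ddof=1)       # variance of total scores
    return (k / (k - 1)) * (1.0 - sum_item_var / total_var)


def alpha_without(scores, drop):
    """Alpha after removing the columns listed in `drop` (hypothetical helper)."""
    X = np.asarray(scores, dtype=float)
    drop_set = set(drop)
    keep = [j for j in range(X.shape[1]) if j not in drop_set]
    return cronbach_alpha(X[:, keep])


# Hypothetical 3-level testlet scores (rows = teachers, columns = testlets);
# the last column is unrelated to the first two.
scores = [[1, 1, 3], [2, 2, 1], [3, 3, 2]]
print(cronbach_alpha(scores), alpha_without(scores, drop=[2]))
```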

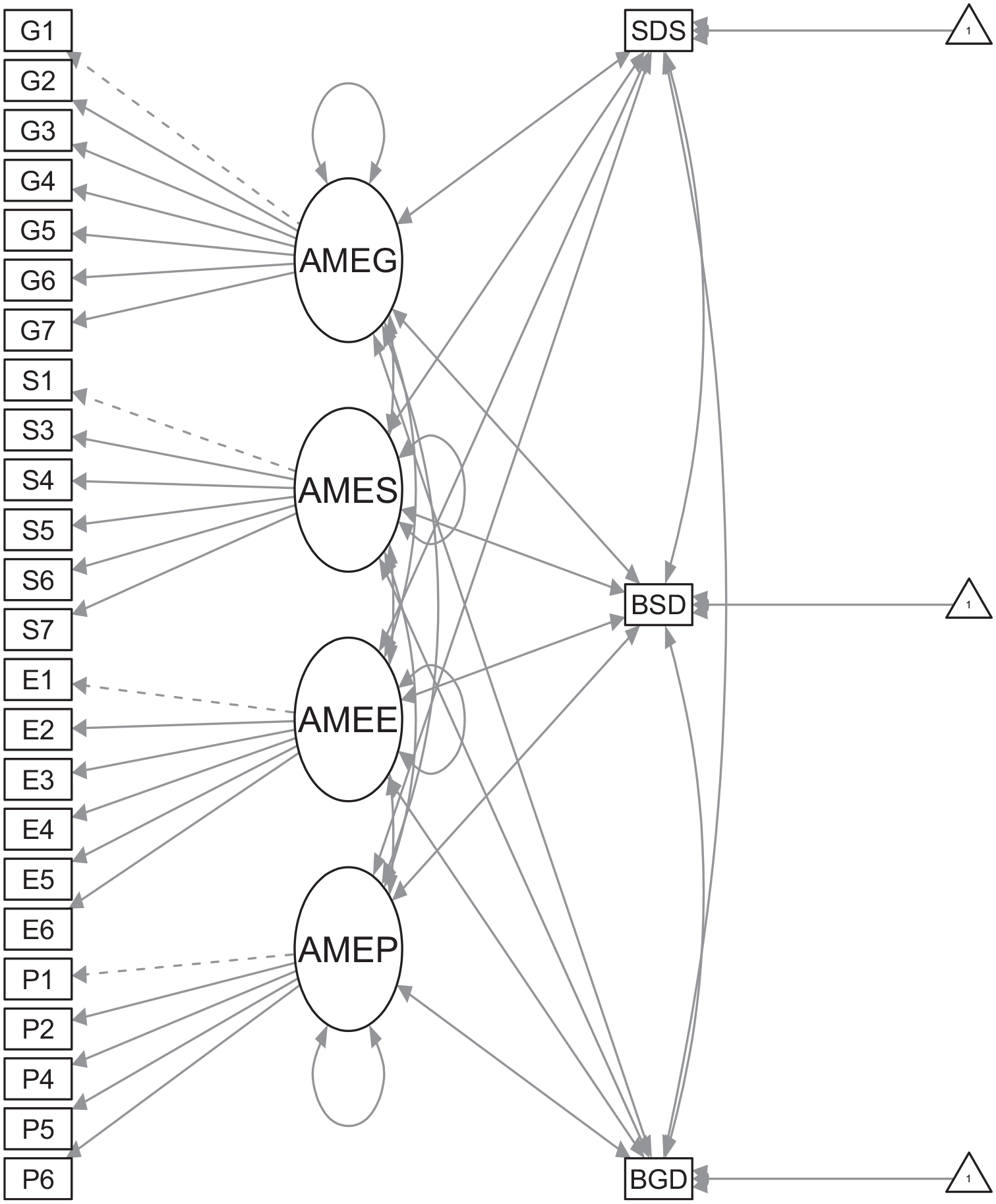

External Validity

All analyses examining the relationship between scores on the AMES factors and the other instruments were conducted in Mplus. We used a CFA model with covariates (Model 7; see Figure 5) that extended Model 6, the parsimonious four-factor model. Raw scores for each external scale (SDS-17, BGD, and BSD) were included in the model, and we report the correlations between each latent AMES factor and these raw scores.

Path diagram for Model 7

AMES Factors and the SDS-17

To determine whether social desirability bias played a substantial role in teachers’ responses on the AMES, we examined the correlation between the SDS-17 and each AMES factor. The correlations between the SDS-17 and three of the factors were not statistically significant at the .05 level (rGenetic = –.020, p = .73; rEducational = .121, p = .06; rPersonal = .036, p = .56). The SDS-17 and AME-S were significantly correlated at the .05 level (rSocial = .150, p = .02). This very low correlation suggests that socially desirable responding explains about 2%–3% of the variance in the AME-S factor. Interestingly, when we examined item-level correlations with the SDS-17, all correlations were between –.1 and .1. This indicates that the relationship may not be due to a single poorly performing item but may be more widespread across multiple items. This finding confirms that the relationship is weak but also implies that further research is warranted to understand how social desirability might influence responses on the AME-S items.
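The variance figure follows from squaring the reported correlation between the SDS-17 and the AME-S factor:

$$
r_{\text{Social}}^{2} = (0.150)^{2} = 0.0225 = 2.25\%
$$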

Relations Among AMES Factors and Belief in Genetic and Social Determinism

We were interested in examining how well the pattern of correlations between the AMES factors and BGD and BSD accorded with theoretical expectations. The AME-G and AME-S factors were designed to reflect mathematics-specific and learning-specific versions of these more general beliefs. We therefore expected these AMES factors to be more highly correlated with BGD and BSD, respectively, than were the other AMES factors. Because BGD and BSD are both deterministic beliefs, we expected that the AMES factors framing mathematical excellence as a malleable trait would have the lowest correlations with BGD and BSD. BGD had the highest correlation with the AME-G factor (r = .524, p < .001), followed by AME-S (r = .333, p < .001) and AME-P (r = .245, p < .001), and a nonsignificant correlation with AME-E (r = .062, p = .311). Similarly, BSD had the highest correlation with the AME-S factor (r = .392, p < .001), followed by AME-G (r = .296, p < .001) and AME-P (r = .265, p < .001), and the lowest correlation with AME-E (r = .183, p = .005). The magnitudes of these correlations are ordered in line with expectations based on the social cognitive theory of psychological essentialism, which adds credibility to our interpretation of the AMES factors.
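The ordering argument can be made explicit by ranking the reported correlations. The correlation values below are copied from the text. Which factors frame excellence as malleable is our assumption: we take them to be AME-P and AME-E, inferred from the internal/external by malleable/nonmalleable design described in the Discussion. The function names are illustrative.

```python
# Correlations reported in the text between each latent AMES factor and the
# raw BGD and BSD scores.
BGD = {"AME-G": 0.524, "AME-S": 0.333, "AME-P": 0.245, "AME-E": 0.062}
BSD = {"AME-S": 0.392, "AME-G": 0.296, "AME-P": 0.265, "AME-E": 0.183}

# Assumption: AME-P and AME-E are the factors framing excellence as malleable.
MALLEABLE = {"AME-P", "AME-E"}


def ordered_by_magnitude(correlations):
    """Factor names sorted from strongest to weakest correlation."""
    return sorted(correlations, key=lambda name: abs(correlations[name]), reverse=True)


def matches_expectation(correlations, matched_factor, malleable=MALLEABLE):
    """True if the matched factor ranks first and the malleable ones rank last."""
    order = ordered_by_magnitude(correlations)
    return order[0] == matched_factor and set(order[-len(malleable):]) == set(malleable)


print(ordered_by_magnitude(BGD))  # ['AME-G', 'AME-S', 'AME-P', 'AME-E']
print(matches_expectation(BGD, "AME-G"), matches_expectation(BSD, "AME-S"))  # True True
```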

Discussion

Attribution beliefs help teachers make sense of their students’ struggle and success in mathematics, but these attributions also shape how—and to whom—teachers respond. As is typical of beliefs in general, attribution beliefs about specific individuals tend to be stable (Green, 1971; Nespor, 1987), and this stability provides a window to the problem and promise that these beliefs pose for equity in mathematics education. On the one hand, theory suggests that once a teacher has attributed a student’s mathematical success or struggle to a specific cause, the student can do little to change the attribution because it becomes a self-reinforcing filter (Wang & Hall, 2018). On the other hand, if teachers—through professional development, for example—become conscious of their attribution belief system, they may be able to reflect on the attributions they make and use this awareness to improve their teaching practice. As a first step in investigating these potential mechanisms of attribution beliefs for educational equity, it is necessary to have a well-validated measure of the construct for use with preservice and in-service teachers. In this study, our goal was to report initial validity evidence for a novel instrument, the AMES.

We addressed the substantive validity of the AMES with a literature review to identify the construct and an iterative process to write and refine a wide range of items. The empirical factor analysis results evidenced structural validity by conforming to the theoretical structure we used to design the AMES: There were four moderately correlated factors related to genetic, social, educational, and personal attribution beliefs. Based on the item analysis, we dropped the negatively worded items to preserve scale coherence; we combined the identity-neutral and identity-specific versions of the remaining items into testlets to satisfy local independence; and we ultimately identified a 24-testlet scale for future use after removing redundant items. We evaluated external validity by correlating AMES scores with a measure of social desirability, which indicated that three of the factors are uncorrelated with social desirability and that the social factor is weakly correlated with it. All four AMES factors were related in the hypothesized ways with belief in social and genetic determinism.

Substantive Validity

The AMES was developed with several characteristics that address substantive validity. First, to anchor the items in classroom practice and increase the potential utility of the resulting instrument, we began with interview-based descriptions of teachers’ productive and unproductive beliefs about students’ struggle in mathematics (Jackson et al., 2017; Wilhelm et al., 2017). We expanded this construct to include a focus on mathematical excellence as well as struggle. Then, we drew on our novel synthesis of the research literature to identify four attributions of mathematical excellence that crossed the internal versus external and the malleable versus nonmalleable sources of attribution. At all stages, AMES items were developed through an iterative process of writing and revising that leveraged the varied expertise of our interdisciplinary team.

Structural Validity

Our investigation of structural validity evidence for the AMES advanced knowledge by generating a new hypothesis and supporting two theoretical claims. In response to skewed item ratings in initial pilots, we undertook two strategies to increase the range of responses and more completely capture the constructs. We answered RQ1 by evaluating the use of negative wording. Our hypothesis was not confirmed: These items were ultimately eliminated from the instrument because of negative or low item-total correlations. These findings suggest the new hypothesis that attribution beliefs form a loosely related system such that those who disagree with one attribution may agree not with its opposite but instead with an entirely different attribution.

We answered RQ2 by evaluating identity-neutral items. First, the identity-neutral items were successful in increasing the assessed range of the AMES constructs. The grand means of identity-neutral items were lower than those of identity-specific items for all four kinds of attribution statements. Second, item-total correlation evidence and CFA results reveal that the identity-neutral items loaded on the same constructs as the identity-specific items. These findings provide robust evidence aligned with prior theoretical claims about the foundational meaning of teachers’ “colorblind” or race-evasive statements (e.g., Battey & Leyva, 2016) and thereby make an important empirical contribution to the field. Specifically, our survey data and psychometric methods suggest that stereotype-aligned, race-neutral attribution statements do not reflect beliefs different from race- and gender-based stereotypes. Rather, they are milder, more common, and more socially acceptable versions of the same underlying beliefs.

The AMES was designed to include four distinct kinds of attribution beliefs, including genetic, social, personal, and educational attributions. We answered RQ3 by evaluating this structure. We compared the hypothesized four-factor structure with two other plausible alternatives, a unidimensional model and a two-factor model distinguishing race-specific and gender-specific items. Item-total correlations and factor analysis fit indices strongly supported the four-factor model. The shortened instrument with the four-factor attribution structure also fit the data very well and provided additional evidence supporting the structural validity of the AMES. These results—and the comparison between the two- and four-factor models in particular—support another theoretical claim: Attribution beliefs may be a common source of gender and racial bias in mathematics education, something that has been largely overlooked because even among the rare studies that attend to race and gender bias (e.g., Riegle-Crumb & Humphries, 2012), researchers tend to frame each category of bias independently.

External Validity

We answered RQ4 by examining the relationship between AMES scores and several related constructs. We found that social desirability was not correlated with three of the AMES factors and only weakly correlated with the social factor, partially confirming our hypothesis. The findings of no (or low) correlations are remarkable in that many of the items reiterated racial and gender stereotypes, and a substantial portion of the teachers participating endorsed them to some degree. We found that the pattern of correlations between the AMES factors and BGD and BSD was consistent with social cognitive theory, which defines these constructs as closely related yet distinct components of psychological essentialism. These findings increase our confidence that the AMES is measuring what it purports to measure.

Implications for Practice

The moderate size of the correlations also shows that the AMES is measuring constructs that, although related to BGD and BSD, are clearly distinct. This finding is in line with our theoretical claim that mathematics-specific attribution beliefs are distinct from more general ones. Students perceive math as a difficult subject (Haag & Goetz, 2012) and are more anxious about it than about other subjects (Pekrun et al., 2007); these differences may allow distinct attribution beliefs about mathematics to form. Teachers reported stronger beliefs in the role of innate ability for math than for German language arts (Heyder et al., 2020). Similarly, scholars in certain academic fields, including mathematics, perceive their fields as requiring more innate ability than other fields (e.g., Leslie et al., 2015). This common view of mathematics as different from other subjects may contribute to the persistence of teachers’ prejudice in this area. For example, Battey and colleagues (2021) found that preservice teachers interpreted White students as more mathematically capable than Black students who produced similar work. This held regardless of the teachers’ general racial attitudes or the time they had spent in African American communities.

These observations raise the question: What else, beyond genetic and social determinism, could contribute to mathematical attribution beliefs? Work in mathematics education (e.g., Battey & Leyva, 2016; Martin, 2009, 2012) suggests that teachers maintain discourses specific to mathematics. The discursive practices within schools, districts, and teacher communities may play a larger role in shaping and maintaining attribution beliefs than variation in teachers’ more general beliefs in social or genetic determinism. Such discourses might also be influenced by professional development. The contexts in which teachers work likely reinforce these beliefs, as deficit discourses are reaffirmed through teachers’ interactions with colleagues and administrators (Horn, 2007).

Professional development focused on shifting teachers’ attribution beliefs about mathematical excellence should include opportunities for teachers to understand how different forms of mathematical instruction support students in demonstrating different levels of competence (Jackson et al., 2017). Professional development and teacher education should explicitly acknowledge prevailing negative master narratives about SoC and then support teachers in finding and retelling counter stories of mathematical competence (e.g., Stinson, 2008). Work should also explore how to adapt and adopt techniques used for disrupting other kinds of unproductive teacher beliefs. For example, Gill et al. (2020) find that preparing teachers to notice conflicts between their own beliefs and refutational texts produced more conceptual change in their beliefs about mathematics teaching and learning than did refutational texts alone.

Limitations and Future Directions

In this study, we reported preliminary evidence for the use of the AMES to measure mathematics attribution beliefs among preservice and in-service elementary teachers, but limitations in our work to date suggest important directions for future research. First, the AMES items only reflect a small portion of the possible attribution statements that could be used in such items. Future work should include open-ended interviews with preservice and in-service teachers to contribute further evidence of substantive validity by illustrating how teachers reason about students’ struggle and success in mathematics and whether all of these ways of reasoning are adequately represented by the AMES items.

Second, further evidence should be collected to support the interpretation of AMES scores as a reflection of teachers’ race- and gender-related biases. For example, correlations between AMES scores and other instruments that measure teachers’ racial or gender prejudice, including measures of implicit bias, would provide evidence about how well AMES taps race or gender bias. Third, the fit and reliability estimates for AMES should be confirmed with an independent sample. Fourth, the findings that we report do not speak in any way to the level of attribution beliefs that are consequential for students. Future research that is sensitive to within-classroom opportunity gaps either through test data or classroom observation would go a long way toward establishing how attribution beliefs are associated with educational equity.

Finally, this study was conducted with a large sample of preservice and in-service elementary teachers, but the sample has limitations. The extent to which some groups of teachers were under- or overrepresented in the achieved sample might have introduced systematic bias in the implied distribution of mathematics attribution beliefs among elementary teachers. Available data suggest that the study sample is demographically similar to teachers in the same state. Even so, the results that we report should be tested further with a nationally representative sample that includes Black, Indigenous, and other teachers of color, whose voices are not well represented in this work to date.

Conclusions

In this study, we present the AMES and preliminary evidence of its construct validity by discussing evidence of substantive validity, structural validity, and external validity for its use with preservice and in-service elementary teachers. Our analyses show that the hypothesized structure fits the data much better than two alternatives, teasing apart four theoretical components related to distinct attribution beliefs. Scores on the instrument are correlated in expected ways with two constructs that inform the design of the measure, bolstering the theoretical grounding of the instrument design. By contrast, AME-G, AME-P, and AME-E scores are not correlated—and AME-S only weakly correlated—with social desirability, suggesting that the design has avoided a major potential threat to validity.

Together, these findings support the use of AMES as a pre-assessment to inform the design of professional development that accounts for elementary teachers’ beliefs about who excels in mathematics and why; to provide institutional feedback by tracking changes in these beliefs over time (for example, in a teacher education program with a focus on equitable instruction); as a pre- and post-test for equity-focused interventions that aim to shift teachers’ attribution beliefs; and as a research tool to enable commensurate analysis of the relationships between teachers’ beliefs, knowledge, and practice in a wide variety of educational contexts that exist in U.S. schools. The AMES instrument may have wider applicability, such as in other countries or with other populations of teachers (e.g., high school teachers), but we caution potential users to pilot the instrument before such use.

These research findings come at a time when the recent public reckoning with racial injustice in policing and the justice system has increased awareness of race- and gender-based inequities in other social and institutional systems of American society, including education. We believe that these conditions will continue to lead to increased interest in research efforts to understand inequity in education as well as new educational interventions to address it. Measurement is the cornerstone of all science, and in these endeavors, trustworthy instruments are of critical importance. Instruments that support valid and reliable interpretations of teachers’ attribution beliefs at scale are necessary to better understand inequity in classroom instruction and to understand how interventions influence—or are moderated by—teachers’ attribution beliefs. We hope that researchers will use the AMES to understand the role of attribution beliefs in classroom instruction and to evaluate interventions designed to address racial and gender equity in mathematics teaching and learning.

Supplemental Material

sj-docx-1-ero-10.1177_23328584221130999 – Supplemental material for Race, Gender, and Teacher Equity Beliefs: Construct Validation of the Attributions of Mathematical Excellence Scale

Supplemental material, sj-docx-1-ero-10.1177_23328584221130999 for Race, Gender, and Teacher Equity Beliefs: Construct Validation of the Attributions of Mathematical Excellence Scale by Erik Jacobson, Dionne Cross Francis, Craig Willey and Kerrie Wilkins-Yel in AERA Open

Footnotes

Acknowledgements

This work was funded in part by the National Science Foundation under Award #1561453 and Award #2200990. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of the NSF.

Authors

ERIK JACOBSON is an associate professor of mathematics education in the department of curriculum and instruction at Indiana University, Bloomington. He develops instruments to measure mathematics teachers’ knowledge and beliefs and studies how these constructs develop over teachers’ careers and are related to instructional practice and student learning.

DIONNE CROSS FRANCIS is an associate professor of mathematics education at the University of North Carolina, Chapel Hill. She focuses on understanding the contextual and teacher-specific factors that motivate teacher actions as they plan and instruct, with the goal of determining the optimal design features of professional development that will allow teachers to thrive.

KERRIE WILKINS-YEL is an assistant professor of counseling psychology at the University of Massachusetts, Boston. Her research interests lie at the nexus of vocational psychology, social-justice advocacy, and inequity in the world of work, and she uses an intersectional approach to understand the influence of oppression and marginalization on academic achievement and career development among women and girls from diverse racial/ethnic backgrounds.

CRAIG WILLEY is an associate professor of mathematics education at Indiana University–Purdue University at Indianapolis. He examines the interplay between oppressive systems (e.g., racism) and opportunities to learn mathematics among children of color and seeks to extend theoretical frameworks in mathematics education addressing equity and inclusion.

References
