Abstract

In this case study, we investigated the effectiveness of growth mindset and social belonging interventions in a college setting with large numbers of traditionally underrepresented groups (n = 1,091). In doing so, we highlight the characteristics of the students in our study that are important for determining whether we should expect such interventions to be effective for diverse higher education populations. Correlational analyses revealed no evidence that growth mindset or social belonging were barriers to academic success among targeted subgroups in our sample. Additionally, we found no evidence that underrepresented minority, first-generation, or low-income students substantially endorsed fixed mindset or belonging uncertainty measures at baseline. We discuss benefits of testing basic assumptions for interpreting null results, including choosing the most appropriate interventions, accurately identifying subgroups who face psychological barriers to academic success, and establishing “redundancy thresholds” at which messages do not need to be reinforced by interventions.

Seminal theories of college persistence suggest that academic success and social integration are twin pillars that support students’ persistence in the college environment (Astin, 1984; Tinto, 1993). However, traditionally underrepresented students on average begin college with less academic preparation and a lower sense of belonging (Hausmann et al., 2009; Maestas et al., 2007; Murphy & Zirkel, 2015; Ostrove & Long, 2007). College administrators must therefore develop a means of offering both academic and social support for students who face these challenges. Although programs that offer comprehensive support to students, such as learning communities (Carter et al., 2009; Hoffman et al., 2003), have yielded promising results in meeting both goals (Xu et al., 2018), such programs can be expensive to implement and difficult to scale up.

Light-touch interventions that draw on social-psychological theories and target students’ thoughts and beliefs are a low-cost solution that may produce powerful effects. Yet attempts to implement these interventions in new contexts have often suffered, with educational stakeholders unaware of the important ways in which their own students may differ from those in successful, publicized intervention studies. Despite the need to understand for whom and under what conditions an intervention would work, many of the factors that can produce variation in the treatment effects can be easily overlooked, such as the extent to which students or local contexts may be different from those where interventions have been successful. In this case study, we present an example of how testing basic assumptions about the students under study can help produce useful insights into why interventions may not be effective in some settings.

Psychological Interventions: Low-Cost Solutions

Unlike more intensive programs such as learning communities, light-touch psychological interventions can be short and less expensive to implement, narrowly targeting specific beliefs that have the potential to affect attitudes, behaviors, and academic success (Harackiewicz & Priniski, 2018; Walton, 2014). These interventions are rooted in specific psychological theories, allowing researchers to create short, yet impactful experiences. As Harackiewicz and Priniski (2018) detail in their review of psychological interventions in higher education, framing interventions target challenges that students often face during the transition to postsecondary institutions, helping them cope with adversity by framing those challenges as common and surmountable. These interventions attempt to affect how students make sense of their experiences in college, encouraging students to see challenges as normal obstacles that can be overcome with effort.

Among the most popular framing interventions in higher education are growth mindset interventions and social belonging interventions. Research suggests that students who believe intelligence is fixed and cannot be fundamentally improved are less likely to persist in the face of challenges and have less desire to seek challenge (Dweck & Leggett, 1988). Drawing on this theory, growth mindset interventions have proliferated, with many studies reporting that student performance improves when students are encouraged to accept the idea that intelligence is malleable and can be strengthened much like a muscle (Aronson et al., 2002; Blackwell et al., 2007; Broda et al., 2018; Good et al., 2003; Paunesku et al., 2015; Yeager, Walton et al., 2016; Yeager, Hanselman et al., 2019). Likewise, students who feel that they do not belong are more prone to underperform and drop out of college (Hurtado & Carter, 1997; Strayhorn, 2012; Walton & Cohen, 2011). Building on this idea, social belonging interventions have raised performance and persistence by reassuring new students that many others before them have overcome the same belonging uncertainty that they now face (Broda et al., 2018; Walton & Cohen, 2011; Walton et al., 2015; Yeager et al., 2016).

Heterogeneous Outcomes in “Light-Touch” Interventions

Despite the success of these cost-effective psychological interventions, a key question is how well their findings generalize. 1 This question is certainly relevant to the varying success of attempts to “nudge” students toward helpful resources and behaviors (Bettinger et al., 2012; Oreopoulos & Petronijevic, 2019), but is especially pressing as psychological interventions continue to scale up. Two recent studies have highlighted the need to reevaluate the implementation of such interventions due to heterogeneity in effects. Yeager et al.’s (2019) landmark study among secondary students showed that growth mindset interventions were effective, on average, across 65 secondary schools. Importantly, though, their study also emphasized heterogeneity in these effects, investigating for whom and under what conditions the interventions worked. Similarly, debate around Sisk et al.’s (2018) meta-analysis has focused on whether d = 0.08 is a relatively strong average effect of growth mindset interventions on academic achievement (Dweck, 2018). But what is perhaps more important is the heterogeneity underlying that meta-analytic average, and the fact that much of it could not be explained. Both works reaffirm the idea that these interventions are context dependent and that understanding the participant and context characteristics is as essential a consideration for success as the intervention procedure itself (Tipton, 2019; Yeager & Walton, 2011).

Two of the most rigorous recent studies, both involving growth mindset and social belonging interventions in higher education, further illustrate the struggle to identify conditions to which successful interventions generalize. Although Yeager et al. (2016) and Broda et al. (2018) both found that the interventions were effective, this held only for particular subgroups, and each set of authors speculated as to why. Yeager et al. (2016) suggested that unaffected students may have had prior exposure to growth mindset messages, and that the intervention messages may not have aligned with campus messaging. Broda et al. (2018) reasoned that although African American students did not benefit from the intervention, Latino students may have had success due to a unique combination of lower baseline levels of growth mindset and higher levels of achievement. In both cases, the authors hint at participant and context characteristics that could influence the intervention’s effectiveness regardless of procedural similarities to previous studies.

Making Sense of Unexpected Effects

Researchers, instructors, and administrators alike who consider attempting these interventions in new educational contexts must therefore anticipate and be prepared to make sense of why their effects differ from those of previous studies. For many, this will mean making sense of null findings, and providing a more useful conclusion than “this didn’t work” (Jacob et al., 2019). Intervention leaders should aspire to determine, for example, whether the intervention failed to provide a treatment sufficient to produce significant effects, or whether their local context was not conducive to the success of a particular intervention.

Fortunately, frameworks for understanding variations in program effects outline several key considerations that can guide this pursuit. For example, Weiss et al. (2014) specify three key considerations in their conceptual framework: treatment contrast, client characteristics, and program context. The treatment contrast may help answer whether the intervention was conducted in a manner likely to produce a significantly different experience for those receiving the treatment. Defined as the difference between receiving regular services and receiving regular services plus a treatment, the treatment contrast essentially represents what the intervention itself is. For framing interventions, identifying the treatment contrast requires appreciating subtle details of their content that are likely to affect the quality of psychological change, such as whether stories about belonging are matched to students’ characteristics or whether “saying is believing” writing assignments ask students to format responses as a letter to others. These can affect the extent to which the desired treatment (e.g., mindset, belonging) is endorsed. However, even very well-designed treatments may not produce large treatment contrasts if the regular services to which they are compared already offer similar elements of the treatment. For instance, first-year undergraduates receiving a growth mindset or social belonging intervention should not be expected to gain as much from it when elements of their campus’ orientation program and welcome week espouse similar messages. Thus, the treatment contrast is essential for determining whether such “light-touch” framing interventions should be expected to work in a given context.

Similarly, client characteristics and program context are important moderators of a treatment’s effectiveness (Weiss et al., 2014). As researchers push to generalize framing interventions and stakeholders attempt to implement these interventions at their local institutions, the characteristics of the student population and the specific local context are critical for answering the foundational question: Is it plausible to expect positive effects from the intervention? (Jacob et al., 2019). As previous studies have suggested, framing interventions may not be effective if students’ beliefs do not serve as barriers to achievement (Paunesku et al., 2015), if students do not possess attributes needed to make use of the intervention (Broda et al., 2018), or if the context (e.g., peers and instructors) exhibits norms that do not support the intervention’s message (Walton & Yeager, 2020; Yeager et al., 2016; Yeager et al., 2019). Of these, the least studied is the assumption that students’ beliefs are barriers to achievement, a key assumption of framing interventions. Despite an average positive association, Sisk et al.’s (2018) meta-analysis identified many studies where growth mindset is not positively associated with academic achievement. 2 Thus, testing basic assumptions like this in each new context can help us gauge how realistic it is to expect framing interventions to work.

The Present Study

In this case study, we present a Biological Science program’s attempt to improve the academic achievement of its first-year students using growth mindset and social belonging interventions. Below, we outline how the program was developed, and the assumptions about our students this implied for the program’s success. Drawing on frameworks for understanding variation in program effects (Jacob et al., 2019; Weiss et al., 2014), we then detail the treatment contrast, client characteristics, and program context that characterize our intervention.

Program Development

As many intervention studies do, this project began by identifying a valued goal and a group of students faced with barriers to achieving that goal. Specifically, the intervention was conducted at the University of California, Irvine (UCI) in Fall 2016, where roughly 45% of incoming freshmen who enrolled as Biological Sciences (Bio Sci) majors over the past decade did not graduate with this major in 4 years. After the first year of college, underrepresented students disproportionately leave the major. Over the past decade, 49% of those who left the major were students from traditionally underrepresented ethnic minorities (URM), including Hispanic and African American students, even though these students made up less than one third of the Bio Sci majors. Similarly, 75% of those who left the major were the first generation in their family to attend college (FG), even though only 46% of incoming Bio Sci majors were FG. The research team’s goals were therefore to increase the achievement and retention of first-year Bio Sci students within the Bio Sci major overall, and particularly for students from URM, FG, and low-income (LI) backgrounds.

Growth mindset and social belonging interventions emerged as appealing and low-cost interventions to achieve both goals. These two framing interventions reflect the twin pillars of college persistence theories; whereas growth mindset interventions are built to provide support in the face of academic challenges, social belonging interventions are built to provide support in the face of social challenges (Yeager et al., 2016). Both are also expected to reduce psychological barriers that studies have shown are especially likely to be experienced by college students from URM, FG, and LI backgrounds (Aronson et al., 2002; Stephens et al., 2014; Walton & Cohen, 2011; Yeager et al., 2016).

Assumptions About Client Characteristics Underlying Program Success

Importantly, the program development outlined above implies three simple, but critical, assumptions about the vulnerability of our sample’s URM, FG, and LI students. If unmet, these assumptions are likely to quietly undermine our attempt to use psychological interventions to close achievement gaps. We use the term vulnerability in light of Walton and Yeager’s (2020) framework of vulnerability and opportunity, which outlines client characteristic and program context considerations important for understanding where the effects of psychological interventions hold and where they do not. 3 Vulnerability, which is conceptualized as a tendency to endorse psychological beliefs that can undermine academic outcomes (e.g., “I cannot do much to change my basic intelligence”), must be present in order for psychological interventions to support students’ achievement. To further this notion, we specify three assumptions that must be met to claim that vulnerability is actually present.

First is the assumption that in our sample, growth mindset and social belonging are positively associated with achievement, establishing these psychological beliefs as metrics of “vulnerability” that can explain achievement gaps. Second is the assumption that URM, FG, and LI students (our target population) actually exhibit vulnerability, beginning college without a growth mindset and with a lack of social belonging. Only then could a psychological intervention be expected to help students develop significantly more of a growth mindset and social belonging. These are foundational assumptions of any psychological intervention. The third assumption is specific to programs designed to close achievement gaps between subgroups: that URM, FG, and LI students begin college with less of a growth mindset and less social belonging relative to their respective peers. We return to these three assumptions after presenting additional study details below.

Treatment Contrast

Leading up to the fall term of 2016, the research team designed an intervention based on several resources that were available as of Summer 2016. These included the procedures of growth mindset and sense of belonging interventions that had been recently published (Walton & Cohen, 2011; Walton et al., 2015; Yeager et al., 2016). The intervention was designed to be implemented during students’ first term on campus, reducing the amount of time during which students would have received the messages implied by framing interventions through existing services. Nevertheless, there was uncertainty about the extent to which orientation and welcome week would have provided experiences that promote either a growth mindset or a sense of belonging. For example, special events held for newly admitted African American students and information provided in Spanish for newly admitted Hispanic students may have fostered perceptions of belonging before students arrived on campus.

Client Characteristics

We recognized UCI students as an especially compelling population for generalization. As a Hispanic-serving and Asian American Native American Pacific Islander institution (AANAPISI), UCI is home to an incredibly diverse student body: More than 50% of UCI’s entire undergraduate population are FG college students. Additionally, more than 40% are from LI backgrounds, and 26% are URM students. It would seem, then, that UCI could be an ideal context for implementing framing interventions, as it has a large proportion of students for whom growth mindset and social belonging interventions have been especially effective in the existing literature. Data from California school districts that send significant numbers of students to UCI also show growth mindset measures are positively associated with academic outcomes (Claro & Loeb, 2019), presenting historical evidence supporting the expectation that mindset should be associated with academic success. However, because of the university’s diversity and the high quality of admitted students, 4 there was also a question of whether the URM, LI, and FG Bio Sci majors attracted to enroll at the university would already be endorsing growth mindset beliefs and showing little belonging uncertainty.

Program Context

Similarly, there was also uncertainty surrounding how the UCI context may moderate the impacts of the framing intervention. On the one hand, the relatively large number of FG and underrepresented students on this campus may itself have reduced barriers to belonging often felt by students from underrepresented groups. The greater presence of similar peers, especially, could naturally assuage doubts about belonging. As we have seen among “advantaged” students for whom framing interventions have little to no effect, conditions that foster belonging organically can render a “light-touch” intervention redundant (Paunesku et al., 2015; Yeager et al., 2016). On the other hand, other contextual factors beyond peer representation may still have created barriers to belonging among URM students, including the fact that less than 10% of all faculty at UCI were from URM backgrounds or that, at a school with relatively large numbers of Hispanic students, cultural differences between subgroups under that racial umbrella may have been more salient (Nuñez, 2009).

Research Questions

In the context of a diverse, 4-year undergraduate institution, we investigate client characteristics to first test whether we should expect growth mindset or social belonging interventions to work. Although additional questions about the treatment contrast (“Was the treatment delivered as intended?”) and the program context (“Do the school’s teachers model growth mindset norms?”) must also be answered to fully understand why an intervention may be more or less effective, we focus on three often overlooked assumptions about client characteristics that must first be tested to establish the vulnerability of the sample under study.

Finally, we test whether the implemented treatment contrast actually affected academic achievement.

Method

Participants

The study was conducted among all first-year Bio Sci majors at UCI (N = 1,091). Students in our study were 52% Asian, 27% Hispanic, 15% White, 4% Black, and 3% other races; 68% of the students were female, 32% were male, and <1% (n = 3) declined to state gender. Additionally, 40% of the students were from LI backgrounds, and 46% were FG students.

Procedure

We implemented two interventions in a first-year fall course taken by all Bio Sci majors, “Freshman Seminar” (Bio Sci 2A). Prior to the start of the term, researchers randomized the Bio Sci students enrolled in Bio Sci 2A into four treatment groups, with separate blocks according to the students’ FG status: Mindset (n = 274); Belonging (n = 269); Double (n = 273); Control (n = 275). Bookending the interventions, students were required to complete pre- and postsurveys for course credit, yielding a response rate of more than 94% for both surveys.
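The blocked randomization described above can be illustrated with a minimal sketch. It assumes a simple shuffle-and-rotate scheme within each FG block; the function name `block_randomize`, the `first_gen` field, the seed, and the roster are all hypothetical, and the study's actual procedure (which produced slightly unequal group sizes) is not specified in the text.

```python
import random
from collections import Counter, defaultdict

CONDITIONS = ["Mindset", "Belonging", "Double", "Control"]


def block_randomize(students, block_key, conditions=CONDITIONS, seed=0):
    """Assign each student to a condition, balancing within blocks.

    students: list of dicts, each with an "id" and the blocking variable.
    Returns a dict mapping student id -> condition.
    """
    rng = random.Random(seed)
    blocks = defaultdict(list)
    for s in students:
        blocks[s[block_key]].append(s["id"])

    assignment = {}
    for block in sorted(blocks):
        ids = blocks[block]
        rng.shuffle(ids)
        # Rotate the starting condition per block so that leftover students
        # do not always land in the first-listed condition.
        offset = rng.randrange(len(conditions))
        for i, sid in enumerate(ids):
            assignment[sid] = conditions[(i + offset) % len(conditions)]
    return assignment


# Hypothetical roster: 1,091 students, roughly 46% first generation.
roster = [{"id": i, "first_gen": i % 100 < 46} for i in range(1091)]
assigned = block_randomize(roster, "first_gen", seed=2016)
counts = Counter(assigned.values())
```

Blocking on FG status in this way keeps the share of FG students nearly identical across the four conditions, which is the property a later randomization check would look for.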

Social Belonging Intervention

The social belonging intervention was administered during Week 2 of a 10-week term. All students enrolled in Bio Sci 2A received an assignment through the university’s course management system. Students in the treatment condition all watched the same 5-minute video clip and were then asked to respond to a writing prompt. Similar to recent social belonging interventions, the video centered on senior Bio Sci students sharing their stories of adjustment to college life (e.g., Broda et al., 2018). We intentionally recruited senior students who were diverse in terms of race/ethnicity and gender. The core messages were consistent with the manipulations used in recent belonging interventions. These included the ideas that (a) all students worry about whether they belong, (b) these worries go away in time if you take active steps to adjust, and (c) different backgrounds can be beneficial to a strong college education (Stephens et al., 2014; Walton et al., 2015; Yeager et al., 2016).

After watching the video, students were asked to respond to the following writing prompt: “What is one challenge you have faced so far since coming to college and how is it connected to something you saw in the video?” Theoretically, a writing component has the potential to increase treatment effects because it helps students internalize messages through a “saying is believing” effect (Aronson et al., 2002; Higgins & Rholes, 1978). Advocating for a message by writing about it is conducive to internalizing an attitude change. Students in the control group were asked to review a set of slides covering the major requirements in Bio Sci. As a control assignment, students were asked to answer the question “What is one question you have about the major?” and to “Write a brief letter to a future student summarizing the major requirements to inform them of things to keep in mind as a Biology major.”

Growth Mindset Intervention

The growth mindset intervention was administered during Week 8 of a 10-week term. Again, all students received the assignment through the university’s course management system. Treatment participants were asked to review a set of slides fostering the belief that intelligence is not a fixed entity, but a malleable quality that can improve, and respond to a writing prompt. These slides resembled those used in prior growth mindset interventions (e.g., original materials from Yeager et al., 2016), describing research studies on both the malleability of brain functioning and the benefits of having a “growth mindset” as a student. The writing prompt asked students to “List three reasons why students may underperform during their first year in college.” Students in the control condition were asked to review a set of slides highlighting the key areas of the brain and respond to the writing prompts “Why do you think the brain is such a mystery to students like you?” and “Why is it helpful for you to know about the brain?”

Measures

Survey measures reflected the psychological mechanisms hypothesized to mediate the relationships between the interventions and academic outcomes such as grades and persistence.

Growth Mindset

This was assessed using an established scale of three items (Dweck & Leggett, 1988). All statements were negatively worded, including items such as “You have a certain amount of intelligence, and you really can’t do much to change it.” On a scale from 1 = completely disagree to 6 = completely agree, higher scores therefore indicated endorsement of a fixed mindset. This measure, with a Cronbach’s α of .90, was reverse-coded for analyses.
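As a rough illustration of the scoring described above, the sketch below reverse-codes three negatively worded items on a 1-to-6 scale and computes Cronbach's α from the standard formula. The response data are invented for illustration, and averaging the three items into one score is an assumption; the text does not state how the items were combined.

```python
from statistics import mean, variance


def reverse_code(score, low=1, high=6):
    """Flip a Likert response so that high values mean growth mindset."""
    return low + high - score


def cronbach_alpha(item_scores):
    """Cronbach's alpha for a list of respondents, each a list of k items."""
    k = len(item_scores[0])
    columns = list(zip(*item_scores))
    item_vars = [variance(col) for col in columns]
    total_var = variance([sum(row) for row in item_scores])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical fixed-mindset responses (1 = completely disagree,
# 6 = completely agree); one row per respondent, one column per item.
responses = [
    [1, 2, 1],
    [2, 2, 3],
    [3, 3, 3],
    [5, 4, 5],
    [6, 6, 5],
    [2, 1, 2],
]

reversed_items = [[reverse_code(x) for x in row] for row in responses]
growth_scores = [mean(row) for row in reversed_items]  # assumed: item average
alpha = cronbach_alpha(responses)
```

Reverse-coding every item shifts and flips the scale but leaves the variances unchanged, so α is the same whether it is computed before or after reverse-coding.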

Belonging Uncertainty

This was measured using a single item “When something bad happens, I feel that maybe I don’t belong at UCI.” Although this item is typically accompanied by two to three others to create a small scale of belonging uncertainty (e.g., Yeager et al., 2016), additional relevant items were not included in our data. Nevertheless, we believe it was the best single item to specifically capture students’ tendency to attribute negative experiences to a lack of belonging. It was measured on a scale from 1 = not at all true to 7 = very true.

Performance and Enrollment Measures

These were collected from university records at various points throughout the year, including grades in introductory biology courses from fall and winter terms, whether students enrolled in the winter course, and cumulative GPA at the end of students’ first quarter and first year. 5

Demographics

Variables such as racial/ethnic group, FG, and LI status were gathered from the university records based on self-reported data from students’ parents. FG was defined as any student whose parents did not attend college. LI students were those who qualified as Pell-grant eligible.

Analysis

A randomization check confirmed that no race, FG, LI, or gender group was overrepresented in any treatment condition. Key baseline variables were also examined using two-tailed t tests, which showed that no intervention condition differed from the control condition on growth mindset, belonging uncertainty, or SAT scores (see online Supplemental Material).

To assess whether we should expect the intervention to work, we first used t tests to determine whether URM, FG, and LI students showed an association between measures of growth mindset and achievement, and social belonging and achievement. To avoid confounding this association with the treatment, we only analyzed students in the control condition. We did not conduct these analyses before implementing the interventions due to the low cost of the interventions and the desire to contribute to the field’s understanding of their effectiveness with distinct student populations.

Second, we investigated the assumption that URM, FG, and LI students endorsed sufficient levels of fixed mindsets or belonging uncertainty to warrant an intervention attempting to change those beliefs. We reasoned students’ mean levels of self-reported mindsets would need to signal sufficient amounts of belonging uncertainty or fixed mindset beliefs. An additional assumption we tested was that mindset beliefs would not organically change over time among URM, FG, and LI students in the control condition. We reasoned that if no differences in mindsets are present at baseline, they could still be a barrier to success if the context produces significant declines in mindset beliefs among URM, FG, and LI students relative to their peers.

Third, we analyzed baseline data to test basic assumptions about whether the mindsets of URM, FG, and LI students in this context differed from those of their peers. Despite data from other studies suggesting this was likely to be true, it is important to test this assumption within our own target sample.

Finally, to test whether or not the interventions affected students’ outcomes, multiple regression analyses were conducted on the three performance measures. Due to the timing of the growth mindset intervention, we hypothesized any effects on academic performance it produced would appear in the following term (winter). Each regression controlled for race, gender, LI status, FG status, and prior achievement (i.e., SAT scores). Two additional regressions predicting each of the psychological measures (growth mindset and belonging uncertainty) served as manipulation checks for the interventions.
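One way to write down the regressions described above is sketched here. This is an assumed specification, not one reported in the text: it treats the control condition as the omitted reference category and enters the three treatment conditions as indicator variables.

```latex
Y_i = \beta_0
    + \beta_1\,\mathrm{Mindset}_i
    + \beta_2\,\mathrm{Belonging}_i
    + \beta_3\,\mathrm{Double}_i
    + \boldsymbol{\gamma}^{\top}\mathbf{X}_i
    + \varepsilon_i
```

Here $Y_i$ is a performance measure (or, for the manipulation checks, a psychological measure) for student $i$; $\mathrm{Mindset}_i$, $\mathrm{Belonging}_i$, and $\mathrm{Double}_i$ are 0/1 indicators of assigned condition; and $\mathbf{X}_i$ collects the controls named above (race, gender, LI status, FG status, and SAT scores). Under this assumed form, each $\beta$ is the adjusted mean difference between that condition and the control group.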

Missing Data

Data for our analyses came from three sources: self-report surveys, instructor gradebooks, and university records. Students who did not take the presurvey (4%) or postsurvey (5%) were no more likely to have a specific background characteristic associated with race, FG status, or LI status. Additionally, t tests showed SAT scores were unassociated with having taken the survey. Instructor gradebook data showed that 1.5% of students did not complete the fall biology course, whereas 7.5% of students did not complete the winter biology course. Finally, university records showed that 3% of students did not have end-of-year grades for the first year of courses. Analyses available in the online Supplemental Material show that the majority of student demographic characteristics are not correlated with missing data.

Results

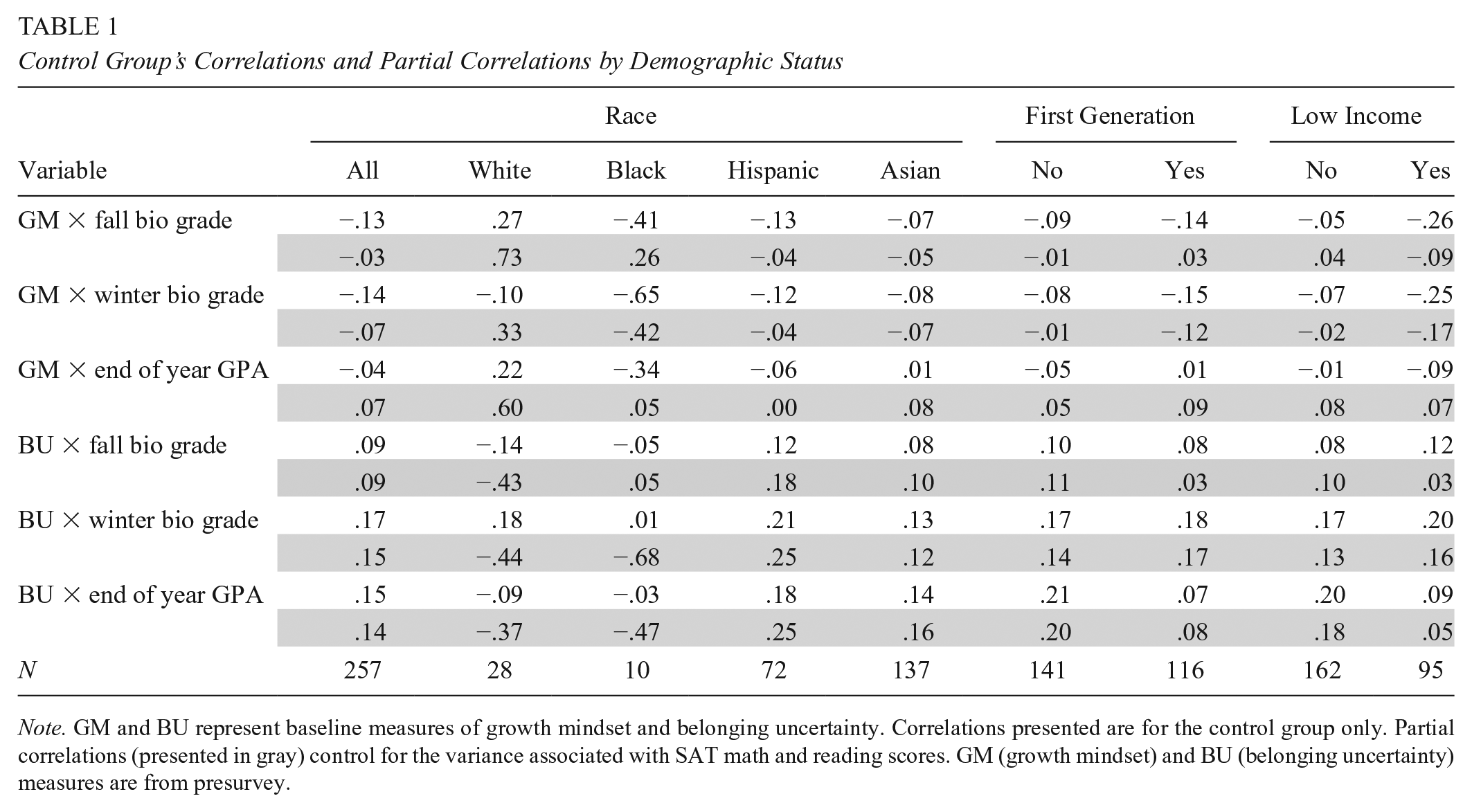

RQ 1: Are Belonging and Mindset Beliefs Barriers to Academic Success for URM, FG, or LI Students?

We did not meet the first assumption that either increasing growth mindset or decreasing belonging uncertainty should result in higher academic achievement for URM, FG, or LI students. Zero-order correlations from our sample’s control group show that higher achievement is consistently associated with lower growth mindset and greater belonging uncertainty (Table 1). Both are the opposite of the assumed relationships that justify these framing interventions as a means of raising academic achievement. Partial correlations show that after controlling for SAT math and reading scores, high growth mindset and low belonging uncertainty are still not positively associated with academic achievement. The one exception is that higher growth mindset is consistently associated with higher end-of-year grades (after controlling for prior achievement), though these correlations vary in a small range between .00 and .09 for URM, FG, and LI students.

Control Group’s Correlations and Partial Correlations by Demographic Status

Note. GM = growth mindset; BU = belonging uncertainty; both are baseline measures from the presurvey. Correlations presented are for the control group only. Partial correlations (presented in gray) control for the variance associated with SAT math and reading scores.
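For readers unfamiliar with partial correlations of the kind reported in Table 1, the following minimal sketch computes a correlation between two variables after removing the linear association each has with a set of covariates, using the standard recursive formula. All data values and variable names are hypothetical, and the text does not say which software or method produced the published estimates.

```python
from math import sqrt


def pearson(x, y):
    """Zero-order Pearson correlation between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def partial_corr(x, y, covariates):
    """Correlation of x and y controlling for each list in covariates."""
    if not covariates:
        return pearson(x, y)
    # Peel off the last covariate and condition on the remaining ones.
    *rest, z = covariates
    r_xy = partial_corr(x, y, rest)
    r_xz = partial_corr(x, z, rest)
    r_yz = partial_corr(y, z, rest)
    return (r_xy - r_xz * r_yz) / sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


# Hypothetical control-group data for eight students.
gm = [4.0, 5.3, 4.7, 3.3, 5.0, 4.3, 5.7, 3.7]          # growth mindset, 1-6
grade = [3.1, 2.8, 3.4, 2.9, 3.0, 3.6, 2.7, 3.3]       # course grade points
sat_math = [620, 580, 690, 600, 610, 720, 560, 650]
sat_read = [600, 590, 640, 570, 630, 680, 550, 610]

r_zero_order = pearson(gm, grade)
r_partial = partial_corr(gm, grade, [sat_math, sat_read])
```

Comparing `r_zero_order` with `r_partial` shows how much of an apparent association between mindset and grades is shared with prior achievement.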

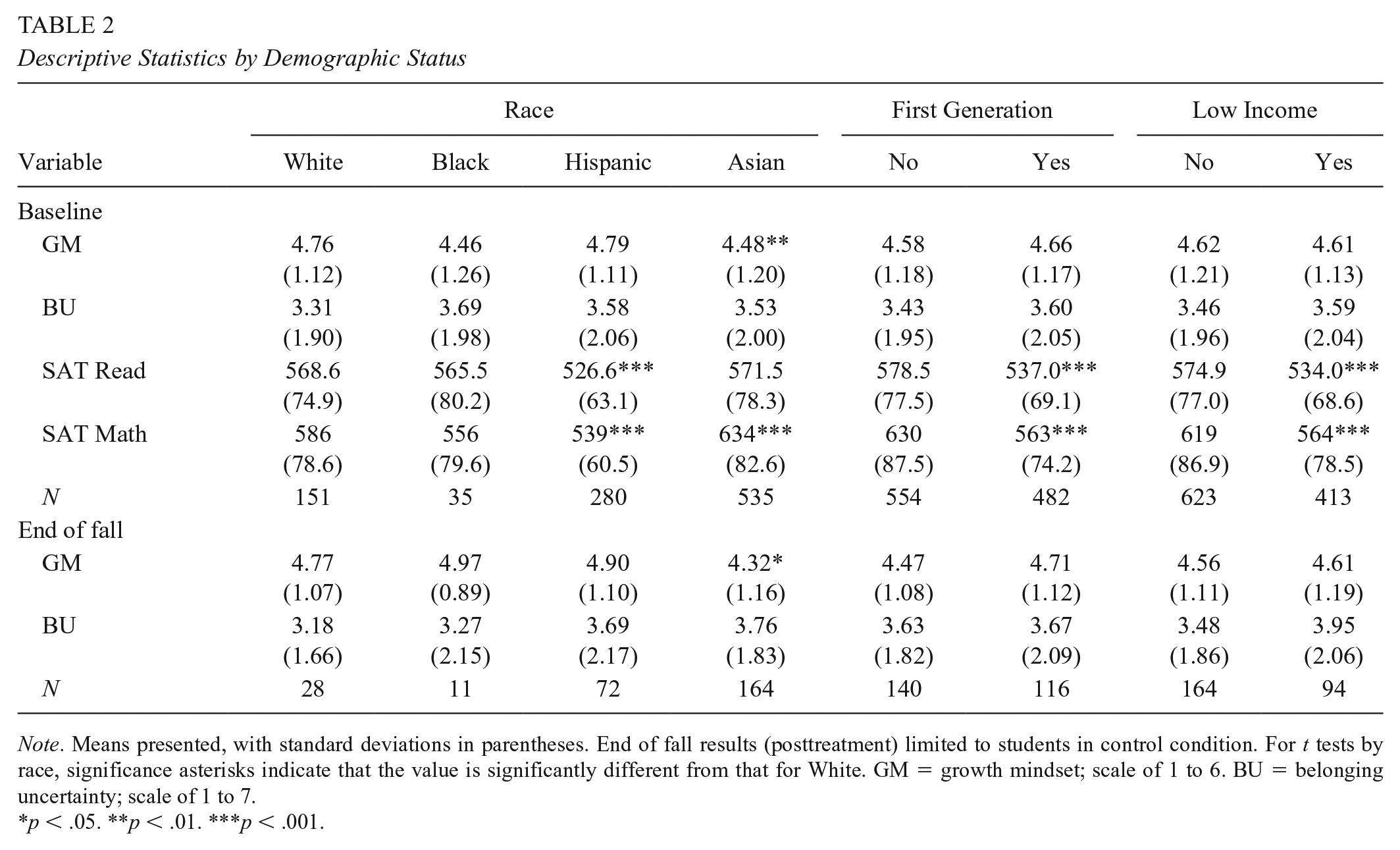

RQ 2: Do URM, FG, or LI Students Suggest that they Perceive a Lack of Belonging or a Fixed Mindset on Entering College?

At baseline, URM, FG, and LI students did not signal much room for improvement in growth mindset or belonging uncertainty (Table 2). On a scale of 1 to 6 measuring growth mindset, URM, FG, and LI students all showed means of 4.4 to 4.8, signaling high growth mindsets. On a 1 to 7 scale of belonging uncertainty, URM, FG, and LI students reported means of 3.5 to 3.6, signaling somewhat low belonging uncertainty. This suggests we may not have met the assumption that mindset beliefs presented a barrier to success for the targeted students in this context.

Descriptive Statistics by Demographic Status

Note. Means presented, with standard deviations in parentheses. End of fall results (posttreatment) limited to students in control condition. For t tests by race, significance asterisks indicate that the value is significantly different from that for White. GM = growth mindset; scale of 1 to 6. BU = belonging uncertainty; scale of 1 to 7.

*p < .05. **p < .01. ***p < .001.

RQ 3: Do URM, FG, or LI Students have Belonging and Growth Mindset Beliefs that Significantly Differ from those of their Peers at Baseline?

Students from URM, FG, or LI backgrounds did not have significantly less growth mindset or significantly greater belonging uncertainty relative to their respective peers. Results in Table 2 suggested this was not simply due to a lack of power, as traditionally underrepresented groups including Hispanic students and FG students actually endorsed growth mindset beliefs slightly more than their peers. In addition, Hispanic and Black students each reported less belonging uncertainty than their Asian peers. Furthermore, at the end of fall, analysis of control group students suggested that differences in growth mindset and belonging uncertainty do not naturally appear over time. Where URM, FG, and LI students did consistently differ at baseline was academic preparation, showing lower SAT math and reading scores than their peers. This suggests that although URM, FG, and LI students may have needed more academic support than their peers, we did not meet the assumption that URM, FG, and LI students were especially likely to experience barriers to belonging or mindset beliefs, either on an absolute scale or relative to their peers.
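
A baseline comparison of this kind can be sketched as a two-group test of means plus a standardized difference. The example below assumes Welch's unequal-variance t test, which is one common choice; the text does not say which t test variant the authors used, and the scores shown are hypothetical.

```python
from math import sqrt
from statistics import mean, variance

def welch_t(a, b):
    """Return (t, df) for Welch's unequal-variance t test of mean(a) - mean(b)."""
    va, vb = variance(a) / len(a), variance(b) / len(b)
    t = (mean(a) - mean(b)) / sqrt(va + vb)
    # Welch-Satterthwaite approximation to the degrees of freedom.
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df

def cohens_d(a, b):
    """Standardized mean difference using the pooled standard deviation."""
    na, nb = len(a), len(b)
    pooled = sqrt(((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2))
    return (mean(a) - mean(b)) / pooled

# Hypothetical baseline growth mindset scores (1-6 scale), illustration only.
fg_students = [4.8, 4.5, 5.0, 4.2, 4.9, 4.6]
cg_students = [4.4, 4.7, 4.3, 4.6, 4.1, 4.5]

t, df = welch_t(fg_students, cg_students)
d = cohens_d(fg_students, cg_students)
print(f"t = {t:.2f}, df = {df:.1f}, d = {d:.2f}")
```

Reporting the effect size alongside the test statistic helps distinguish "no detectable difference because of low power" from "a difference in the opposite direction," which is the distinction the paragraph above draws.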

RQ 4: Does Participation in Either a Growth Mindset or a Social Belonging Intervention Cause Students from URM, FG, or LI Backgrounds to Earn Higher Course Grades or GPAs?

First, manipulation checks (Table 3) showed that the growth mindset condition reported significantly higher growth mindset (β = 0.18, SE = 0.07). Although this main effect did not achieve statistical significance in the double intervention condition, it was similarly positive, with an effect size of β = 0.10. It is important to note that we did not hypothesize the effect of the double intervention on growth mindset would be weaker due to the potential cognitive burden of receiving both intervention messages, mainly because we intentionally separated the interventions by 6 weeks to lessen the likelihood of this happening. Interaction terms suggested that the intervention did not improve growth mindset beliefs significantly more for students from URM, FG, or LI backgrounds. The social belonging intervention had no main effect on belonging uncertainty. The model including interaction terms showed the social belonging intervention’s effects were not moderated by FG or LI status but suggested that it might be moderated by race. However, the valence of the moderation was inconsistent between the belonging and the double conditions, with the belonging-only condition more effective at reducing uncertainty among Black and Hispanic students compared with White students but the double condition less effective at reducing uncertainty among these underrepresented groups. For Asian students, calculating marginal effects (see online Supplemental Material) suggested that although the belonging intervention may have been more effective for them than for White students, the intervention did not ultimately reduce Asian students’ belonging uncertainty overall.

Manipulation Check: Effects of Intervention on Growth Mindset and Belonging Uncertainty

Note. Coefficients are standardized. Robust standard errors in parentheses. Positive values indicate increases in growth mindset or increases in belonging uncertainty. All demographic characteristics are dummy coded 1 = present, relative to the omitted group, 0 = not present. Models additionally control for gender and presurvey growth mindset. Interaction variables are listed in italics. See online Supplementary Material for regressions that test interaction terms in separate models. SB = sense of belonging; GM = growth mindset; int. = intervention; Gen = generation.

*p < .05. **p < .01. ***p < .001.

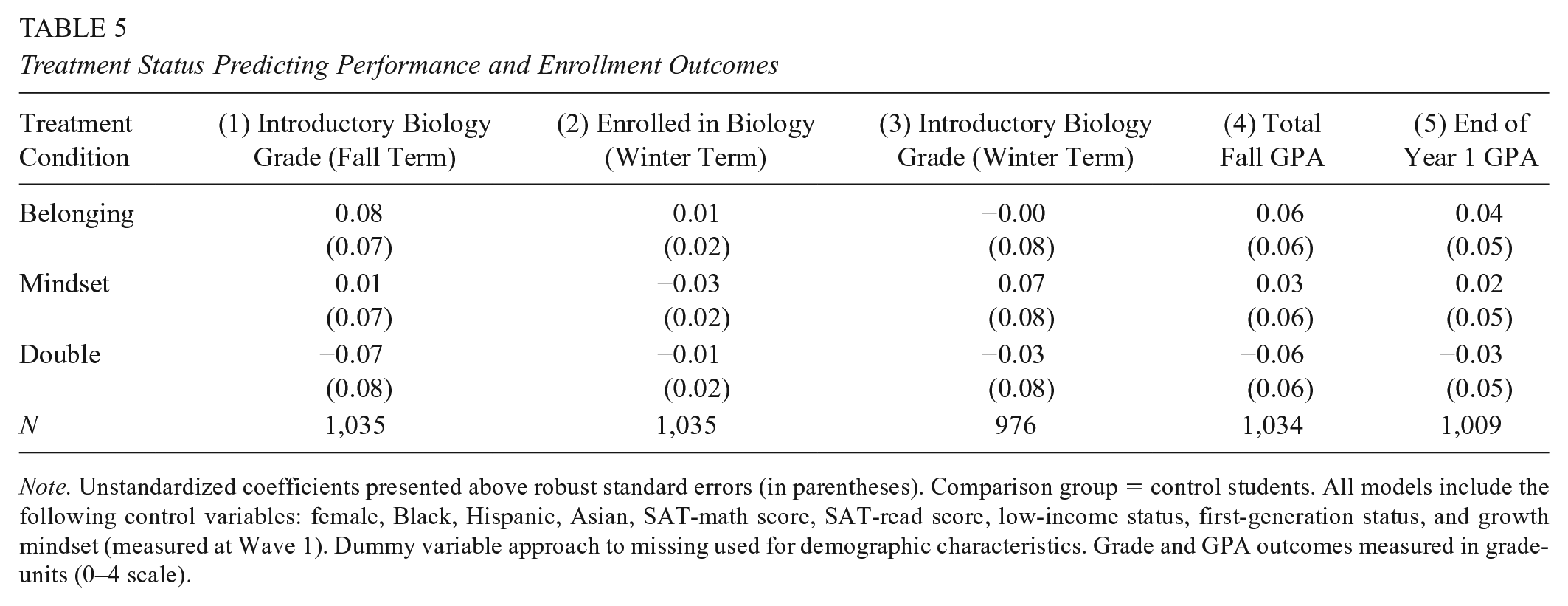
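
To make the marginal-effects logic above concrete: in a model with a treatment dummy and a treatment × subgroup interaction, the estimated effect for the subgroup is the sum of the two coefficients, and its standard error also depends on their covariance. The sketch below shows that arithmetic only; every coefficient, variance, and covariance in it is a hypothetical placeholder, not a value from Table 3 or the Supplemental Material.

```python
from math import sqrt

def subgroup_effect(b_treat, b_inter, var_treat, var_inter, cov_treat_inter):
    """Treatment effect for the group coded 1 on the moderator, with its SE.

    effect      = b_treat + b_inter
    Var(effect) = Var(b_treat) + Var(b_inter) + 2 * Cov(b_treat, b_inter)
    """
    effect = b_treat + b_inter
    se = sqrt(var_treat + var_inter + 2 * cov_treat_inter)
    return effect, se

# Hypothetical estimates for belonging uncertainty (positive = more uncertainty).
b_treat = 0.20    # effect for the omitted reference group (assumed value)
b_inter = -0.25   # treatment x subgroup interaction (assumed value)
effect, se = subgroup_effect(b_treat, b_inter,
                             var_treat=0.010, var_inter=0.020,
                             cov_treat_inter=-0.008)
print(f"subgroup effect = {effect:.2f} (SE = {se:.2f})")
```

With placeholder numbers like these, a negative interaction can coexist with a subgroup effect that is close to zero, which is the pattern the paragraph describes: more effective relative to the reference group, yet no overall reduction.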

Analyses suggest that neither the growth mindset, the social belonging, nor the combined interventions showed consistent positive effects among URM, FG, or LI students’ introductory biology course grades, enrollment in the winter biology course, or cumulative GPA (Tables 4 and 5). A minimum detectable effects analysis (see online Supplemental Material) suggested that our study may have lacked the power to detect statistical significance for practically significant subgroup effect sizes. This made the consistency of the interventions’ direction and strength across multiple performance outcomes important for our interpretation of positive estimated effects (Jacob et al., 2019). Although estimated effects around this size occasionally appeared for some underrepresented groups (e.g., FG students in the belonging intervention), these effects often did not reappear in the double intervention. In other cases, such as LI students in the double condition, positive effects on performance above b = 0.10 grade points were juxtaposed with several negative effects. Finally, one of the strongest effects was observed on fall grades among Hispanic students who received the mindset treatment, despite the fact that the intervention occurred after 75% of the term had been completed. Therefore, despite an estimated boost of b = 0.24 grade points suggesting a practically meaningful effect, we maintained skepticism that this effect was actually caused by the interventions. This was especially true after seeing much lower estimated effects among Hispanic students in the double condition and lower estimated effects on winter biology grades, which was the term in which we expected to see the strongest effects of the mindset intervention.
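
For readers unfamiliar with minimum detectable effects, the following sketch uses the common normal-approximation formula, in which the MDE is the standard error of the estimate multiplied by the sum of two critical values (about 2.8 for a two-tailed test at α = .05 with 80% power). The authors' actual calculation is in their Supplemental Material and may differ; the standard errors below are hypothetical.

```python
from statistics import NormalDist

def minimum_detectable_effect(se, alpha=0.05, power=0.80):
    """Normal-approximation MDE for a two-tailed test.

    MDE = (z_{1 - alpha/2} + z_{power}) * SE
    """
    z = NormalDist()
    multiplier = z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)
    return multiplier * se

# Hypothetical standard errors (in grade points) for a full-sample estimate
# and for a smaller subgroup estimate; these are assumed values.
for label, se in [("full sample", 0.04), ("subgroup", 0.09)]:
    print(f"{label}: MDE = {minimum_detectable_effect(se):.2f} grade points")
```

Because subgroup estimates have larger standard errors, their MDE can exceed effect sizes that would still matter in practice, which is why the consistency of direction across outcomes carries interpretive weight in the paragraph above.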

Treatment Effects by Subgroup Population of Students

Note. Unstandardized coefficients presented above robust standard errors (in parentheses). The term n indicates sample size in the relevant treatment × subgroup. Comparison group = control students. All models include the following control variables: female, Black, Hispanic, Asian, SAT-math score, SAT-read score, low-income status, first-generation status, and growth mindset (measured at Wave 1). Dummy variable approach to missing used for demographic characteristics. Grade and GPA outcomes measured in grade-units (0–4 scale). FG = first generation; CG = continuing generation.

*p < .05.

Treatment Status Predicting Performance and Enrollment Outcomes

Note. Unstandardized coefficients presented above robust standard errors (in parentheses). Comparison group = control students. All models include the following control variables: female, Black, Hispanic, Asian, SAT-math score, SAT-read score, low-income status, first-generation status, and growth mindset (measured at Wave 1). Dummy variable approach to missing used for demographic characteristics. Grade and GPA outcomes measured in grade-units (0–4 scale).

Discussion

In this study, we sought to understand whether two popular, low-cost framing interventions would be effective in a college context with relatively large proportions of URM, FG, and LI students. Although we found that the interventions did not affect students’ academic outcomes, we foreground how the process of testing basic, but often overlooked, assumptions can provide critical answers for why the interventions did not produce the intended results. One reason we conducted the interventions without testing these basic assumptions was that social psychological interventions are a low-cost way of tapping unexplored sources of motivation. This also serves as a case study for researchers and educators who may find that these short interventions do not produce the expected results, as simple analyses can provide data-driven explanations for why an intervention may not have worked. Specifically, testing basic assumptions can shed light on whether the intervention was done incorrectly (i.e., had low fidelity), or whether it should not have been expected to work in the first place.

Our study highlights several assumptions about participant characteristics and the program context that should be tested and reported in work on these interventions. These include (a) testing that there is an association between the psychological mechanism and the targeted outcome within their own sample (e.g., Yeager et al., 2016), (b) assessing whether the target population already report high levels of growth mindset or low levels of belonging uncertainty (or anticipated belonging) on an absolute scale (e.g., Broda et al., 2018), and (c) identifying whether the target population’s beliefs are significantly different from those of their peers (e.g., Walton et al., 2015). Below, we discuss how making this process standard practice can help both researchers and practitioners meet the challenges of choosing the most appropriate intervention, identifying students for whom psychological beliefs are barriers to success, and identifying “redundancy thresholds.”

Choosing the Most Appropriate Intervention

The most prominent reason we should not have expected the intervention to succeed was that our context did not meet the cornerstone assumption of intervention research: the presence of an association between the psychological mechanism and the targeted outcome. By engaging in these interventions, we assumed that there was an association between growth mindset, belonging uncertainty, and academic outcomes among our students. But as Sisk et al. (2018) show in the first of their recent meta-analyses, the average association between growth mindset and academic achievement was only .02 when limited to students in postsecondary education and above. Underlying these averages was an exceptionally large amount of heterogeneity, suggesting that the association between mindset beliefs and academic achievement can vary significantly between contexts, including zero or even negative correlations. At UCI, many students come from CORE districts, in which data have shown that higher growth mindset is associated with academic outcomes (Claro & Loeb, 2019). The fact that we do not observe this at UCI suggests the potential for collider bias; that is, UCI may be admitting and attracting students with strong achievement but especially high growth mindset, which may be limiting the variation in growth mindset within its sample and reducing the association between these two factors within UCI itself. When selecting an intervention, practitioners should recognize that the selection process for their schools means their own students may not be like those in the most popular, successful interventions.
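
The selection mechanism suggested above can be illustrated with a small simulation. It is a sketch under strong, stated assumptions: mindset and achievement are standard normal with a modest positive correlation in the applicant population, and admission depends on the sum of the two. Neither assumption comes from the study, and the function and parameter names are hypothetical.

```python
import random
from math import sqrt

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def simulate_selection(n=20000, rho=0.3, admit_share=0.2, seed=1):
    """Compare the mindset-achievement correlation before and after selection."""
    rng = random.Random(seed)
    mindset, achievement = [], []
    for _ in range(n):
        m = rng.gauss(0, 1)
        # Achievement correlates rho with mindset in the full population.
        a = rho * m + sqrt(1 - rho ** 2) * rng.gauss(0, 1)
        mindset.append(m)
        achievement.append(a)
    # Assumed admission rule: top share of applicants on mindset + achievement.
    score = [m + a for m, a in zip(mindset, achievement)]
    cutoff = sorted(score)[int(n * (1 - admit_share))]
    admitted = [(m, a) for m, a, s in zip(mindset, achievement, score) if s >= cutoff]
    r_population = pearson(mindset, achievement)
    r_admitted = pearson([m for m, _ in admitted], [a for _, a in admitted])
    return r_population, r_admitted

r_population, r_admitted = simulate_selection()
print(f"population r = {r_population:.2f}, admitted-only r = {r_admitted:.2f}")
```

Under these assumptions, conditioning on admission weakens and can even reverse the association observed among admitted students, which is one way a positive relationship in feeder districts could coexist with null or negative correlations within a selective campus.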

This is especially important when interpreting null findings. Yeager et al. (2016) conducted a correlational analysis before their first experiment, finding that growth mindset had very low variability and was not associated with full-time enrollment in one of their two experiments. They were then able to center their discussion on the program context and client characteristics that likely moderated the effect of their intervention, rather than speculate unnecessarily about procedural concerns and the treatment contrast. In the field of growth mindset, ample reports of these associations have revealed the heterogeneity of associations between growth mindset and achievement, encouraging us to entertain the possibility that null findings are simply due to the absence of that foundational association. However, much less information supporting this possibility is available for social belonging or other psychological beliefs, and reporting these associations as standard practice would help remedy this issue.

Identifying Students for Whom Psychological Beliefs Are Barriers to Success

A second assumption that often drives intervention work is that interventions will especially benefit certain groups of students facing the greatest barriers to academic success. In our context, URM, FG, and LI students are more likely to experience academic challenges relative to their peers. As growth mindset and social belonging interventions have been especially effective among these at-risk populations in many previous studies, we assumed that our URM, FG, and LI students would have lower levels of growth mindset and higher levels of belonging uncertainty relative to their peers. Checking for these assumed differences at baseline revealed that with no difference in these psychological beliefs to begin with, these interventions were unlikely to close achievement gaps.

Although demographic characteristics are often used to classify students into groups in need of academic support, more care should be taken before assuming certain demographic groups are more likely to face psychological barriers to success. One example is the tendency to group together all Asian students, considering them all as an advantaged group. However, the ethnic backgrounds of Filipino, Hmong, and Vietnamese students may lead them to face barriers to success more similar to those of their Hispanic and African American peers than to those of their Chinese, Japanese, and Korean peers (Teranishi, 2010). Although previous research has shown that interventions can be more effective among underrepresented students, it does not suggest that this is because social belonging or growth mindset affects academic outcomes fundamentally differently for people from underrepresented backgrounds. Rather, it is implied that these interventions are more effective for those who have lower levels of growth mindset or higher levels of belonging uncertainty. In several studies that found positive effects, URM, FG, and LI students have been more likely to fit that profile. But that assumption must be checked in different contexts. When it is not met, researchers should be careful not to conflate differences in academic experiences with differences in psychological beliefs and may avoid hypothesizing that the intervention will be moderated by demographic characteristics.

Identifying Redundancy Thresholds

Even among students who have low growth mindset or high belonging uncertainty relative to their peers, a final necessary condition is that there must be room for improvement in these beliefs on an absolute scale. Both baseline and postsurvey measures of URM, FG, and LI students showed relatively high levels of growth mindset and low belonging uncertainty, making it possible that the messages espoused by the interventions were redundant for students in this context (Paunesku et al., 2015).

Certain characteristics of this context may help explain why URM, FG, and LI students would not have benefitted from a light-touch intervention with these messages. The university may be successful at attracting students with especially high growth mindset, or at reducing belonging uncertainty before students begin their first courses. Our context lacked a racial/ethnic majority at this university and had relatively large numbers of FG and LI students. This speaks to the university’s ability to attract underrepresented students, which may be an indicator of its ability to make these students feel welcome before beginning classes. Once students arrive on campus, its overall diversity may also be reducing the salience of using URM, FG, and LI status as social identifiers. Similarly, in a school where Hispanic students outnumber White students, and where LI and FG students roughly equal their respective counterparts, belonging uncertainty is less likely to be reinforced by numeric underrepresentation. If these accumulating influences are supporting students’ transition to university life, short framing interventions may not provide a practically significant difference (i.e., treatment contrast) compared with what students regularly experience.

Although gauging the possibility that the messages will be redundant is important, at present it does not actually seem to be strictly testable. Devoting attention to descriptive statistics of baseline data on these psychological measures may be eschewed in favor of evaluating the intervention itself, limiting researchers’ ability to identify a threshold at which the intervention message becomes redundant. Researchers striving to understand how contextual differences may affect psychological interventions should undoubtedly count baseline psychological beliefs as important client characteristics relevant to understanding their program’s effectiveness. Reporting mean values of mindset beliefs from different subgroups of interest would improve researchers’ ability to home in on a “redundancy threshold” at which the intervention should not be expected to produce detectable changes.
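
One simple way to make baseline means comparable across instruments with different response scales is to express each mean as a proportion of the scale range. The sketch below applies that rescaling to the ranges of subgroup means reported in this study; the function name is hypothetical, and no particular cutoff for redundancy is implied, since no such threshold has been established.

```python
def proportion_of_range(mean_score, scale_min, scale_max):
    """Rescale a mean to 0-1, where 0 is the scale floor and 1 the ceiling."""
    return (mean_score - scale_min) / (scale_max - scale_min)

# Ranges of URM, FG, and LI subgroup means reported at baseline in this study.
growth_mindset_means = (4.4, 4.8)         # 1-6 scale, higher = more growth mindset
belonging_uncertainty_means = (3.5, 3.6)  # 1-7 scale, higher = more uncertainty

for m in growth_mindset_means:
    print(f"growth mindset {m}: {proportion_of_range(m, 1, 6):.2f} of range")
for m in belonging_uncertainty_means:
    print(f"belonging uncertainty {m}: {proportion_of_range(m, 1, 7):.2f} of range")
```

If studies routinely reported baseline means in a common metric like this, it would become easier to compare contexts in which interventions did and did not produce effects.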

After testing each of these assumptions, there are a couple of reasons that stakeholders interested in these framing interventions may still consider trying them even when the assumptions are not met. As we demonstrate through our own experience, the fact that these interventions are low cost is very appealing. Little university money or student time is wasted even when determining that the intervention should not have been expected to work to begin with. Additionally, the potential for collider bias to be misrepresenting true mindset-achievement correlations and the ambiguity of redundancy thresholds underscore the fact that these tests only provide guidelines for setting expectations about how effective interventions might be, not infallible diagnoses. Therefore, it is certainly understandable to proceed with interventions even when one or more of the above assumptions do not seem to be met. However, we implore researchers who proceed with low-cost framing interventions and ultimately do encounter null findings to report on the assumptions outlined in this article so that the field can benefit from conclusions more helpful than “this did not work.”

Intervention Design Considerations

Although the purpose of this case study was to foreground the client characteristics and program context details that may be overlooked, issues with design decisions in our study are worth noting for researchers who meet the above assumptions and move forward confidently with designing these interventions. As leaders in the field of framing interventions have pointed out, the characterization of these interventions as “low cost” should not be confused with low effort. Although these interventions work best when adapted to the specific needs of one’s local context, the fidelity of the intervention’s ability to affect mediating psychological processes (i.e., growth mindset, belonging uncertainty) can also be compromised when small details are altered. To illustrate the attention different procedural elements should be given when designing interventions for one’s own context, Table 6 lists just some of the ways our study’s delivery format, intervention manipulation, and postintervention activity differed from those of other intervention studies.

Panel A. Comparison Across Studies: Sense of Belonging Intervention

This is technically a difference-education intervention, not a belonging intervention. Because it falls under the umbrella of “framing interventions” (Harackiewicz & Priniski, 2018), we include it here because we believed when designing our study that the procedural details were similar and relevant. NA = not applicable.

Panel B. Comparison Across Studies: Growth Mindset Intervention

Although many features of the present study’s design were deliberately chosen, they may have had important, overlooked consequences. Completing this assignment was required for a grade within a specific class only for students within the Bio Sci major, instead of as a part of all students’ orientation. This may have reduced students’ perceptions that the growth mindset messages in the intervention reflected institutional beliefs. Our materials also departed from Yeager et al.’s (2016) finding that explicitly presenting growth mindset as something that can help may be less effective than simply implying this message, which may mean the materials were not as effective as possible. As for the writing prompt, the question it posed and the fact that it did not need to be written to a future student may have been too broad to encourage students to reflect specifically on mindset principles and connect them to strategy use. In the belonging intervention, the choice to use a single video could have reduced students’ receptiveness to its message. Although the senior students providing testimonials were diverse, the video may not have been as effective as matching individual stories to students on the basis of their ethnicity and FG status.

Conclusion

With the rising costs of higher education, psychological interventions stand to offer university administrators the most cost-effective ways to provide academic and social support among underrepresented students. However, it behooves intervention researchers to think carefully about the conditions under which these interventions may not be effective. Several assumptions are made about the importance of psychological beliefs and which students struggle with those beliefs. Not recognizing important ways our students may differ from those in the most successful intervention studies can divert practitioners’ resources away from more appropriate interventions and can lead researchers to prioritize discussion of methodological errors when the characteristics of our sample are just as important as the intervention itself. Consequently, we implore researchers and practitioners to support the growth of framing interventions by devoting more attention to testing the assumptions that underlie targeted psychological interventions before concluding that they do not work, and ideally before conducting the interventions at all.

Supplemental Material

Supplemental material, Testing_Assumptions_of_Mindset_Interventions_-_Supplemental_Material for Testing Basic Assumptions Reveals When (Not) to Expect Mindset and Belonging Interventions to Succeed by Peter McPartlan, Sabrina Solanki, Di Xu and Brian Sato in AERA Open

Footnotes

Acknowledgements

We have no known conflict of interest to disclose. The research reported here was supported by the Institute of Education Sciences, through Grant R305B170015.

Notes

Authors

PETER M

SABRINA SOLANKI is a postdoctoral researcher in the School of Public Policy at the University of Michigan. Her research focuses on higher education policy, teacher effectiveness, STEM education, and the evaluation of education interventions.

DI XU is an assistant professor of educational policy and social context at the University of California, Irvine. Her research examines the impacts of educational programs and policies on student academic performance, persistence, and degree completion at the postsecondary education level, with a particular focus on students from disadvantaged backgrounds.

BRIAN SATO is an associate teaching professor in molecular biology and biochemistry at the University of California, Irvine. His research focuses on improving STEM student outcomes at the classroom, program, and institutional levels.

References
