Abstract

Deep reinforcement learning using convolutional neural networks is the technology behind autonomous vehicles. Could this same technology facilitate the road to college? During the summer between high school and college, college-related tasks that students must navigate can hinder successful matriculation. We employ conversational artificial intelligence (AI) to efficiently support thousands of would-be college freshmen by providing personalized, text message–based outreach and guidance for each task where they needed support. We implemented and tested this system through a field experiment with Georgia State University (GSU). GSU-committed students assigned to treatment exhibited greater success with pre-enrollment requirements and were 3.3 percentage points more likely to enroll on time. Enrollment impacts are comparable to those in prior interventions but with substantially reduced burden on university staff. Given the capacity for AI to learn over time, this intervention has promise for scaling personalized college transition guidance.

Introduction

In September 2016, self-driving cars arrived in Pittsburgh, Pennsylvania, under the watchful eyes of Uber’s Advanced Technologies Center. 1 Currently, the cars operate within a limited geographic area. The artificial intelligence (AI) that enables the cars to function autonomously requires painstakingly mapped roadways and intensive human supervision. For each road on which an autonomous vehicle can function, human operators have driven it multiple times while technology captures important traffic features. Human engineers have then meticulously processed the resulting data to create the artificial intelligence on which the autonomous vehicles rely. 2 This process of machine learning, deep reinforcement learning using convolutional neural networks, is the driving force behind autonomous vehicles as well as algorithmic medical diagnostics, Facebook automated photo tagging, email spam filters, and programs that defeat world champions in Jeopardy, chess, and Go. 3 Although AI often performs technical tasks faster and better than people, it is less clear whether AI could replace human judgment in addressing individuals’ personal needs.

We investigate this possible use of AI in the context of a different kind of journey—students’ transition from high school to college and the many twists and turns where they can veer off course over the summer. The college transition context provides a particularly intriguing challenge for this type of AI: To be successful, the system now has to cope with individual idiosyncrasies and variation in needs. Even after acceptance into college, students must navigate a host of well-defined but challenging tasks, such as completing their Free Application for Federal Student Aid (FAFSA) forms for financial aid, 4 submitting their final high school transcripts, obtaining immunizations, accepting student loans, and paying tuition, among others. Without support on those tasks that students find challenging, many stumble and succumb to “summer melt,” the phenomenon where college-intending high school graduates fail to matriculate. Summer melt affects an estimated 10% to 20% of college-intending students each year, with higher rates among low-income and first-generation college students (Castleman & Page, 2014a, 2014b). This differential attrition along the road to college can exacerbate socioeconomic gaps in college access and degree attainment that exist even among students with similar academic profiles (Kena et al., 2015). Solving the summer melt problem thus has important educational and societal consequences.

Previous efforts to address summer melt have supported students with additional individual counselor outreach (Arnold, Chewning, Castleman, & Page, 2015; Castleman, Owen, & Page, 2015) or through automated, customized text message–based outreach (Castleman & Page, 2015, 2017). Under both strategies, students could communicate with advisors one-on-one. Contacting students individually, counselors could manage caseloads of approximately 40 to 60 students per summer; automating outreach via text messaging enabled caseloads of approximately 200. Both approaches significantly improved on-time college enrollment. However, scaling these strategies would require significant resources because of the time needed for a human (counselor) to address the specific questions and personal needs of each student.

Artificial intelligence could dramatically change the viability of providing students with personal assistance. We tested whether a conversational AI system could efficiently support would-be college freshmen with the transition to college through personalized text message–based outreach over the summer. Like self-driving vehicles, conversational AI requires human supervision to adequately support students, particularly at the outset. Over time, the AI “learns” to handle an increasing array of circumstances and questions without human input. Unlike autonomous vehicles, which drive down the road in the same way regardless of the particular passengers they carry, an AI system helping aspiring college students needs to personalize its support by helping students on only those tasks where they need assistance. To facilitate this personalization, the conversational AI system can integrate with a university’s student information system and customize outreach according to students’ actual progress on each required transition task.

Compared to prior summer melt interventions, this integration of university student information system data is itself an innovation. In prior efforts, the locus of outreach was the secondary school environment, where counselors could identify college-intending students but could not observe student progress on specific transition tasks. In this study, the communication technology integrates with regularly updated university data and customizes outreach to students according to their actual progress on each required task. Through this integration, the system nudges and supports students only on requirements that are incomplete based on verifiable student-level data. In this way, the outreach is personalized to provide reminders, help, and guidance only when and where students falter in making progress or when they ask the AI system for additional assistance or resources.

We report on the use of this system in collaboration with Georgia State University (GSU), a large, public postsecondary institution located in Atlanta. Between April and August 2016, Pounce, the virtual assistant designed and implemented by AdmitHub (and named for the GSU mascot), sent text-based outreach to students admitted to join the incoming first-year class of 2016. 5 To test the efficacy of the system to help students complete the required pre-enrollment tasks and matriculate at GSU by the fall, we implemented Pounce via a field experiment. At the outset of our study, some admitted students had already committed to GSU while others were still choosing among their options (or had committed elsewhere). Consequently, we hypothesized that Pounce would function differently for these two groups. We stratified our sample and randomization accordingly. As hypothesized, the intervention had significant positive impacts on GSU-committed students but essentially no effect for admits who had not reported intentions to enroll at GSU. GSU-committed treatment students were 3.3 percentage points more likely to enroll than their control group counterparts, which translates to a 21% reduction in summer melt. These impacts mirror previous summer melt interventions that have demanded a higher burden on participating staff members.

In addition to demonstrating the impacts of this AI-enabled system to improve timely enrollment, a second key contribution of this paper relates to the university-level data to which we have access. These data provide us with an unusually rich window into how college transition interventions can impact students’ success in navigating the college transition and matriculation process. In prior studies, researchers examined students’ postsecondary intentions at the time of high school completion and whether students successfully matriculated to their intended (or any) postsecondary institution the following fall. Given our partnership with GSU, we additionally observe students’ success or failure in completing each required pre-matriculation task and the intervention’s impact on these process measures. With these data, we provide evidence for the theory of action underlying summer melt interventions more broadly as we observe the impact of the outreach not only on enrollment but also on students’ improved success with navigating the process of accessing financial aid, submitting required paperwork, and attending orientation, among other requirements.

Intervention Description

Designed by AdmitHub, the artificially intelligent system was customized for Georgia State University for the express purpose of reducing rates of summer melt among its students. To personalize each student’s support to only those tasks where they were not making timely progress, AdmitHub needed to coordinate four components: (a) the pre-enrollment tasks required at GSU; (b) reliable, regularly updated data on which tasks the students had accomplished; (c) a series of initial responses to questions students were likely to ask about these tasks; and (d) a process for the AI system to learn answers to queries for which it lacked answers. AdmitHub therefore developed an infrastructure to assemble and coordinate these components in its GSU-specific virtual assistant, Pounce.

During summer 2016, Pounce sent text-based outreach to selected students admitted to GSU’s class of 2016. Outreach began in April 2016, with the following introductory message:

Hi {Student Name}! Congrats on being admitted to Georgia State! I’m Pounce—your official guide. I’m here to answer your questions and keep you on track for college. (Standard text messaging rates may apply.) Would you like my help?

After this introduction, Pounce offered to assist students with each GSU enrollment task, as applicable, through the end of August. Students continued to receive outreach until they reported intentions to enroll elsewhere, actively opted out of the communication, or the study period concluded. Students not selected for outreach experienced GSU’s standard enrollment processes.
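The task-level targeting described above can be illustrated with a minimal sketch. This is not AdmitHub's implementation: the task names, the `StudentRecord` type, and the `tasks_needing_outreach` function are all hypothetical, and the real GSU task list and data schema are not given in the text. The sketch shows only the stated logic, namely that a student is contacted about incomplete tasks alone and that outreach stops after an opt-out or a reported intention to enroll elsewhere.

```python
from dataclasses import dataclass, field

# Hypothetical task names chosen for illustration only.
REQUIRED_TASKS = ("fafsa", "final_transcript", "immunization", "orientation_rsvp")


@dataclass
class StudentRecord:
    """Hypothetical snapshot of one student's status in the university data."""
    name: str
    completed: set = field(default_factory=set)
    opted_out: bool = False
    enrolling_elsewhere: bool = False


def tasks_needing_outreach(student, required=REQUIRED_TASKS):
    """Return the required tasks the student has not yet completed, in order.

    Students who opted out or reported plans to enroll elsewhere get no outreach.
    """
    if student.opted_out or student.enrolling_elsewhere:
        return []
    return [task for task in required if task not in student.completed]


student = StudentRecord("Example Student", completed={"fafsa", "immunization"})
print(tasks_needing_outreach(student))
```

Recomputing the list each time the university data refresh would keep reminders aligned with students' actual progress, which is the property the intervention relies on.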

Research Design

Site

Georgia State University is a public, four-year university located in Atlanta. Each year, GSU enrolls a freshman class of approximately 3,500 students, the majority of whom are Pell eligible and many of whom are first in their family to attend college. GSU has received significant attention for implementing innovative strategies aimed at improving its degree attainment outcomes. 8 In recent years, the university’s experience with summer melt signaled that prospective students struggled to navigate required pre-enrollment processes, particularly those related to financial aid. GSU observed rates of summer melt as high as 18% among admitted students who filed a commitment to enroll form (Personal communication with Scott Burke, associate vice president and director of admissions at GSU, summer 2016). Therefore, GSU was an ideal setting to test the impact of the AI-enabled outreach strategy.

Data and Analysis

We focused our implementation and analyses specifically on students who were accepted to join the GSU fall 2016 entering freshman class. Because the intervention required text-based communication, we restricted our sample to admitted students with an active U.S. cell phone number who provided consent for text message communication in their GSU application (N = 7,489). For the purpose of implementation and analysis, we received data only on those students eligible for the intervention based on these criteria. Nevertheless, GSU-reported data provided on the National Center for Education Statistics College Navigator indicate that 16,348 students applied to GSU for fall 2016 admission, and of these, 59% (approximately 9,645 students) were admitted. 9 Thus, we estimate that our sample makes up approximately 78% of students admitted for fall 2016 enrollment. This is substantial coverage, although it is worth considering how to increase this rate in the future.
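The coverage estimate above follows from simple arithmetic on the reported figures. The short check below reproduces it; the variable names are ours, and the admitted count is itself an approximation because the admission rate is reported only as a rounded 59%.

```python
applicants = 16_348   # fall 2016 applicants reported on College Navigator
admit_rate = 0.59     # reported admission rate (rounded)
sample_n = 7_489      # admitted students eligible for text outreach

admitted = round(applicants * admit_rate)   # approximate number admitted
coverage = sample_n / admitted              # share of admits in the sample

print(admitted)            # 9645
print(f"{coverage:.0%}")   # 78%
```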

At the time of randomization, just over one-quarter of students in the sample had already documented their intention to enroll in GSU. The remaining three-quarters had been admitted but had not yet committed to GSU. We hypothesized that the text outreach would impact these two groups differentially. First, many of those who had not committed to GSU might have had little intention of enrolling (e.g., having already committed to another college or university). Second, once a student actively reported intentions to enroll elsewhere, Pounce outreach to that student concluded. Therefore, we first stratified the sample according to GSU commitment status at the time of randomization and then randomized students by subgroup to the treatment or control condition. In total, 3,745 students were assigned to receive Pounce outreach, and 3,744 students were assigned to the control condition, the business-as-usual GSU pre-matriculation process. In Table 1, we present sample descriptive statistics overall and by commitment status. Student characteristics do not differ markedly by GSU commitment status. The sample is majority non-White, approximately three in five students are female, and one out of every three is a prospective first-generation college student. On average, students’ SAT scores are at about the 65th percentile nationally, and they have an average high school GPA of 3.51. 10 The majority of students had completed the FAFSA at the time the intervention began.
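For readers who want a concrete picture of the stratified randomization described above, the following sketch assigns students to conditions separately within each commitment-status stratum. It is an illustration under our own assumptions, not the procedure actually run for the study: the function name, the seed, and the rule that an odd-sized stratum gives its extra student to treatment are all hypothetical.

```python
import random


def stratified_assign(students, stratum_of, seed=0):
    """Split each stratum as evenly as possible between treatment and control.

    students: hashable student identifiers.
    stratum_of: function mapping an identifier to its stratum (e.g., committed or not).
    """
    rng = random.Random(seed)
    strata = {}
    for student in students:
        strata.setdefault(stratum_of(student), []).append(student)

    assignment = {}
    for key in sorted(strata):
        members = strata[key][:]
        rng.shuffle(members)
        # Assumption: an odd-sized stratum gives its extra student to treatment.
        half = (len(members) + 1) // 2
        for position, student in enumerate(members):
            assignment[student] = "treatment" if position < half else "control"
    return assignment


# Toy sample: 100 students, the first 25 of whom are "committed".
ids = list(range(100))
groups = stratified_assign(ids, lambda i: i < 25, seed=1)
```

Randomizing within strata guarantees that treatment and control groups have nearly identical shares of committed students, which is what allows the subgroup comparisons reported later.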

Descriptive Statistics of the Sample: Overall and by Commitment Status

Source. Georgia State University (GSU) administrative records.

Note. Standard deviations (in parentheses) reported for continuous variables only. Sample sizes reported to indicate minor levels of missingness on certain variables. DACA = Deferred Action for Childhood Arrivals; FAFSA = Free Application for Federal Student Aid.

In Table 2, we present coefficients from regressions of each of the baseline characteristics on an indicator for treatment assignment as a test of baseline equivalence of experimental groups. The stratified randomization produced well-balanced experimental groups both overall (Column 1) and within each subgroup (Columns 2 and 3). 11

Assessing Balance of Baseline Covariates in Randomization

Source. Georgia State University (GSU) administrative records.

Note. Each cell presents parameter estimate associated with regression of baseline covariate on indicator for treatment. Column 1 presents results from regressions including fixed effects for strata defined by GSU commitment status. Column 2 presents results only for those who were committed at the time of randomization, and Column 3 presents results only for those who were not committed at the time of randomization. Robust standard errors in parentheses. DACA = Deferred Action for Childhood Arrivals; FAFSA = Free Application for Federal Student Aid.

The data to which we have access for assessing outcomes represent an important contribution of this paper, as they provide an unusually rich look into how this type of intervention can impact students’ success in navigating the college transition and matriculation process. In prior studies, researchers examined students’ postsecondary intentions at the time of high school completion and whether students successfully matriculated to their intended (or any) postsecondary institution the following fall, with little understanding of whether additional support led students to complete required tasks at a greater rate. Given the partnership with GSU, we observe not only students’ intentions and postsecondary enrollment outcomes but also their success or failure in completing required pre-matriculation tasks as well as the intervention’s impact on each of these process measures. In short, these data provide a window into the processes that can derail planned college matriculation. As noted previously, these data also informed Pounce’s targeting of outreach to students according to those processes where they were not making adequate progress (e.g., with tasks like submission of a final high school transcript) or where their progress was being hindered (e.g., if they were flagged for income verification after filing their FAFSA).

We organize the pre-matriculation process outcomes to which we have access into two broad categories. The first category pertains to the process of financing postsecondary education and includes the following measures: submitting FAFSA, having a FAFSA verification hold on financial aid, accepting any student loan, accepting a Stafford loan, completing loan counseling, and setting up a tuition payment plan. The second category pertains to all other enrollment tasks, including submitting a final high school transcript, submitting a housing deposit, RSVPing for orientation, attending orientation, and having an immunization hold on registration.

We present impacts on each of these 11 process outcomes separately. In addition, we apply a method utilized by Casey, Glennerster, and Miguel (2012) and Kling, Liebman, and Katz (2007) to handle issues related to multiple hypothesis testing. Specifically, we collapse these 11 outcomes into two index measures: pre-matriculation tasks not related to financial aid and financial aid–related pre-matriculation tasks. To create our index measures, we simply average across the component variables, after recoding those variables where negative values signal improvement, so that the directionality of all components is the same. 12
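The index construction described above (recode, then average) can be sketched as follows. This is a minimal illustration for binary 0/1 outcomes; the function name and outcome labels are ours, and the sketch does not address details the text leaves unstated, such as how missing components are handled.

```python
def index_measure(outcomes, negative_is_better=()):
    """Average binary (0/1) outcomes after flipping those where 0 signals success.

    outcomes: mapping from outcome name to 0 or 1 for one student.
    negative_is_better: names of outcomes, such as holds, where 0 is the good state.
    """
    recoded = [
        1 - value if name in negative_is_better else value
        for name, value in outcomes.items()
    ]
    return sum(recoded) / len(recoded)


# Hypothetical student: the five nonfinancial tasks named in the text.
nonfinancial = {
    "final_transcript": 1,
    "housing_deposit": 0,
    "orientation_rsvp": 1,
    "attended_orientation": 1,
    "immunization_hold": 0,  # no hold, which counts as success after recoding
}
print(index_measure(nonfinancial, negative_is_better={"immunization_hold"}))  # 0.8
```

Because every component points in the same direction after recoding, a higher index value always means more tasks successfully navigated.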

We also examine whether and how the outreach influenced students’ timely enrollment at GSU specifically as well as at other postsecondary institutions. We hypothesize that the outreach will improve enrollment at GSU, although it is possible that the outreach might help students to complete these key tasks for other institutions. If this were the case, we might observe increased enrollment at postsecondary institutions besides GSU. Another possibility is that outreach would reduce enrollment at other institutions as GSU-intending students were able to maintain and follow through on their stated postsecondary plans. For example, the outreach may simultaneously increase matriculation at GSU while decreasing the likelihood that students will revert to “back-up” postsecondary plans, like enrolling in a two-year institution. To explore these possibilities, we additionally examine on-time college enrollment beyond GSU using data from the National Student Clearinghouse (NSC). 13

To assess the impact of Pounce on student-level outcomes, we use a linear probability model specification. We focus on intent-to-treat estimates given that the Pounce outreach may have led to improvements in student outcomes even for those who never responded to the text-based outreach or who opted out of the outreach during the course of the intervention. The models that we fit are of the following general form:

$$Y_{ij} = \alpha_j + \beta_1 \mathrm{TREATMENT}_{ij} + X_{ij}\gamma + \varepsilon_{ij}$$

where $Y_{ij}$ is the outcome for student $i$ in commitment-status group $j$, $\alpha_j$ represents a fixed effect for GSU commitment status at the time of randomization (including in the analyses that pool data across commitment status groups), $\mathrm{TREATMENT}_{ij}$ is an indicator for assignment to the treatment group, $X_{ij}$ represents a vector of student-level covariates with coefficient vector $\gamma$, and $\varepsilon_{ij}$ is a residual error term. Our estimates of the $\beta_1$ coefficient indicate whether targeting students for Pounce outreach serves to improve student success on the outcome measure considered. On both of our index measures, a positive coefficient indicates improvement in non–financial aid– and financial aid–related tasks overall. Regarding the component processes, in some instances, a positive coefficient indicates improvement, for example when the outcome is enrollment. For other outcomes, a negative coefficient indicates improvement, such as when the outcome is having an immunization hold on registration. To improve the precision of our impact estimates, our models include the baseline covariates presented in Table 1. We report these covariate-controlled results; however, our results are robust to the inclusion or exclusion of these baseline measures in both magnitude and statistical significance.
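As a rough sketch of how an intent-to-treat estimate of this kind can be computed, the function below fits a linear probability model by ordinary least squares with one dummy per commitment-status stratum and returns the treatment coefficient with a heteroskedasticity-robust standard error. The function name is ours, and the HC1 variance estimator is an assumption: the text reports robust standard errors without naming a variant or the software used.

```python
import numpy as np


def lpm_itt(y, treat, stratum, covariates=None):
    """Return (treatment coefficient, HC1 robust standard error) from an OLS fit.

    y: binary outcomes; treat: 0/1 treatment assignment; stratum: stratum labels.
    covariates: optional array of baseline covariates, one row per student.
    """
    y = np.asarray(y, dtype=float)
    treat = np.asarray(treat, dtype=float)
    stratum = np.asarray(stratum)

    # One dummy per stratum acts as the fixed effect, so no separate intercept.
    dummies = (stratum[:, None] == np.unique(stratum)[None, :]).astype(float)
    columns = [treat[:, None], dummies]
    if covariates is not None:
        columns.append(np.asarray(covariates, dtype=float).reshape(len(y), -1))
    design = np.hstack(columns)

    bread = np.linalg.inv(design.T @ design)
    beta = bread @ design.T @ y
    resid = y - design @ beta

    # Sandwich estimator with the HC1 small-sample correction n / (n - k).
    n, k = design.shape
    meat = design.T @ (design * (resid ** 2)[:, None])
    cov = bread @ meat @ bread * n / (n - k)
    return float(beta[0]), float(np.sqrt(cov[0, 0]))


# Toy data: two strata, each with a 0.5 treatment-control difference in means.
y = [1, 1, 1, 0, 1, 0, 0, 0]
treat = [1, 1, 0, 0, 1, 1, 0, 0]
stratum = [0, 0, 0, 0, 1, 1, 1, 1]
effect, se = lpm_itt(y, treat, stratum)
```

With a binary outcome, the treatment coefficient reads directly as a difference in probabilities, which is why the results are reported in percentage points.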

Results

To contextualize our results, in Table 3 we first report on treatment students’ engagement with Pounce. Overall, we observe a high level of system usage. Nearly all students targeted received outreach from Pounce (Column 1), and only a small share of students (6.6%) opted out (Column 9). The typical treatment group student received 43 unique messages from Pounce, with those committed to GSU at the time of randomization receiving more, as we would expect. Approximately 85% of treatment students responded to the Pounce system at least once (Column 3). This high level of engagement potentially reflects the value of messaging coming from an institution in which the student has indicated an interest. On average, students sent approximately 14 messages to Pounce, with student engagement again higher among those committed to GSU (who sent an average of 23 messages). Congruent with GSU’s perceptions regarding the causes of summer melt among their intending students, many of the workflows sent to the most students were those pertaining to financial aid and college financing.

Student Engagement With the Pounce System, Overall and by Whether Students Had Committed to Georgia State University (GSU)

Source. AdmitHub administrative records.

Despite the high rate of engagement, only a small share of students (13.5%) sent messages that the system could not handle automatically and that it therefore routed, via email, to a GSU staff member. Staff responses were routed back to students via text, and these responses additionally fed into Pounce’s supervised machine learning process. Approximately one-third of students asked Pounce questions that triggered automatic or artificially intelligent responses. Among those committed to GSU, the average student received three AI answers automatically sent by Pounce, although the most engaged student received 40 AI responses. On average, treatment group students sent fewer than one message that required staff intervention (Column 6).

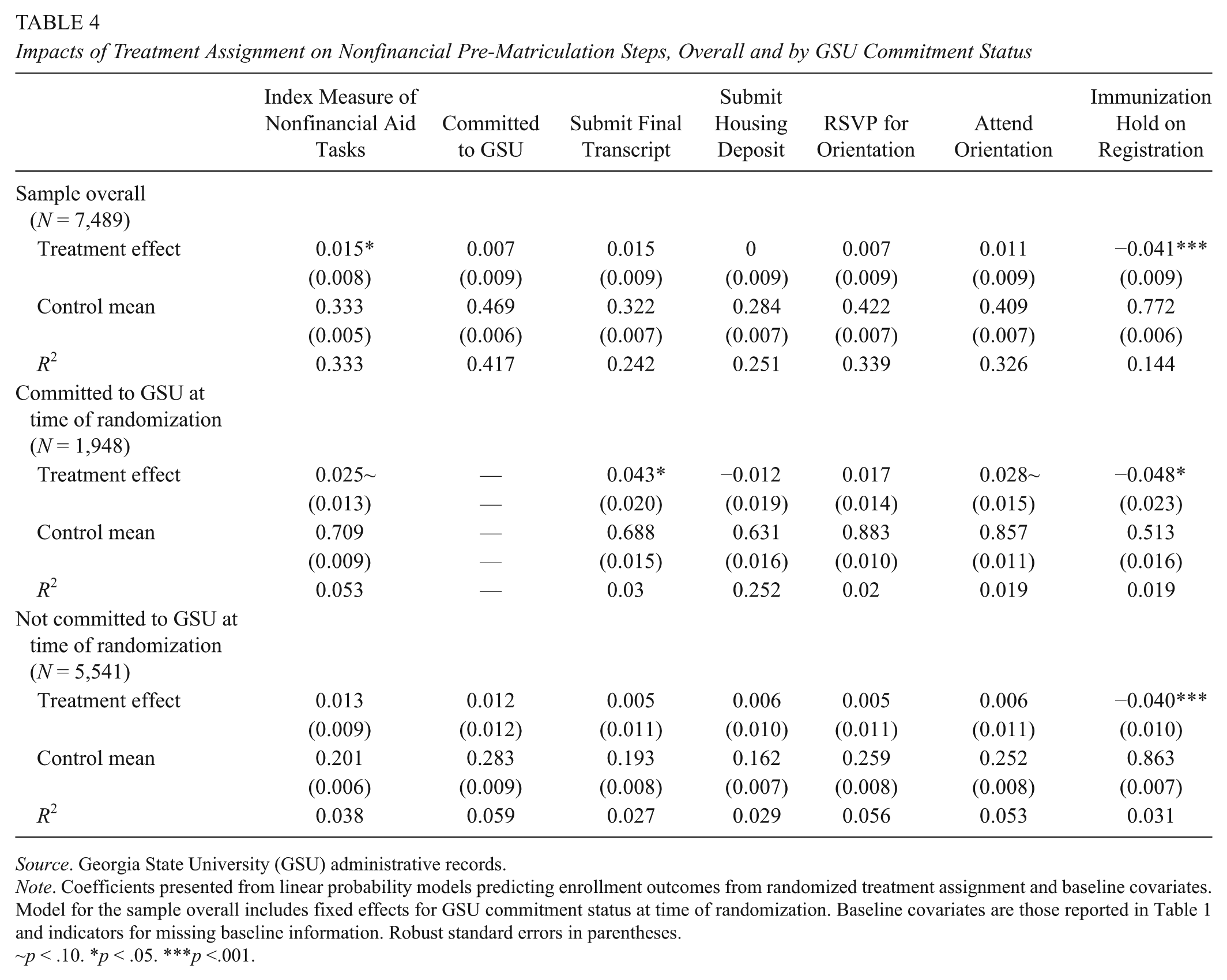

We present impacts on pre-matriculation process outcomes in Tables 4 and 5. In Table 4, we present impacts on the non–financial index measure and associated components, and in Table 5, we present impacts on the financial index measure and associated components. Across these tables, for students committed to GSU at the start of the study, we observe a robust pattern of positive and statistically significant impacts of Pounce outreach on student success with pre-enrollment tasks and timely enrollment at GSU. For those not committed to GSU, the impacts are essentially zero.

Impacts of Treatment Assignment on Nonfinancial Pre-Matriculation Steps, Overall and by GSU Commitment Status

Source. Georgia State University (GSU) administrative records.

Note. Coefficients presented from linear probability models predicting enrollment outcomes from randomized treatment assignment and baseline covariates. Model for the sample overall includes fixed effects for GSU commitment status at time of randomization. Baseline covariates are those reported in Table 1 and indicators for missing baseline information. Robust standard errors in parentheses.

~p < .10. *p < .05. ***p < .001.

Impacts of Treatment Assignment on Financial Pre-Matriculation Steps, Overall and by GSU Commitment Status

Source. Georgia State University (GSU) administrative records.

Note. Coefficients presented from linear probability models predicting enrollment outcomes from randomized treatment assignment and baseline covariates. Model for the sample overall includes fixed effects for GSU commitment status at time of randomization. Baseline covariates are those reported in Table 1 and indicators for missing baseline information. Robust standard errors in parentheses. FAFSA = Free Application for Federal Student Aid.

*p < .05. **p < .01.

In Table 4, we report a statistically significant overall impact on nonfinancial pre-enrollment tasks that is realized primarily by those committed to GSU at the outset of the intervention (Column 1). Committed GSU students assigned to the treatment group were 4 percentage points more likely to submit a final transcript, nearly 3 percentage points more likely to attend orientation, and nearly 5 percentage points less likely to have an immunization hold on their registration. In Table 5, we observe a somewhat stronger overall impact on tasks related to financial aid and college financing, with committed GSU students in the treatment group 3 percentage points less likely to have a FAFSA verification hold on their financial aid, 6 to 7 percentage points more likely to accept a college loan or a Stafford loan, specifically, and 6 percentage points more likely to complete college loan counseling.

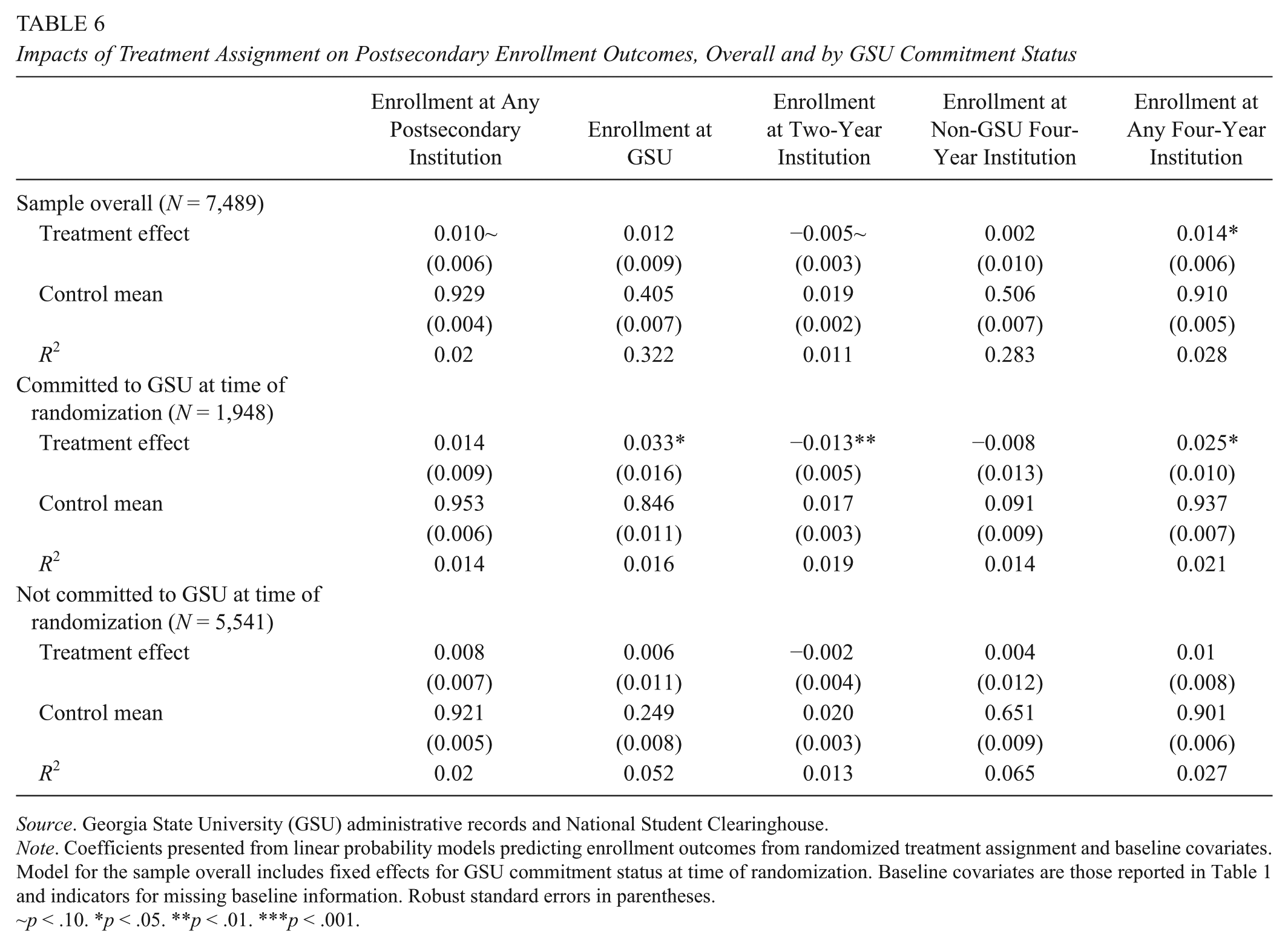

Ultimately, improvement in the completion rates of these pre-enrollment tasks culminated in GSU-committed treatment group students being 3.3 percentage points more likely to enroll successfully in GSU in the fall of 2016 (Table 6). If implemented for an entire incoming cohort of approximately 3,500 students, this impact equates to helping approximately 116 accepted students—who would have otherwise succumbed to summer melt—to matriculate at GSU.

Impacts of Treatment Assignment on Postsecondary Enrollment Outcomes, Overall and by GSU Commitment Status

Source. Georgia State University (GSU) administrative records and National Student Clearinghouse.

Note. Coefficients presented from linear probability models predicting enrollment outcomes from randomized treatment assignment and baseline covariates. Model for the sample overall includes fixed effects for GSU commitment status at time of randomization. Baseline covariates are those reported in Table 1 and indicators for missing baseline information. Robust standard errors in parentheses.

~p < .10. *p < .05. **p < .01. ***p < .001.

Finally, in addition to the GSU-specific impacts, in Table 6 we examine the impacts of the intervention on postsecondary enrollment overall—not just at GSU—using college enrollment records from the National Student Clearinghouse. Based on these data, we find that enrollment at other, non-GSU four-year institutions was virtually unaffected by the outreach, while enrollment in other two-year institutions was reduced by a statistically significant 1.3 percentage points. In other words, of the aforementioned 116 students, approximately 46 would have enrolled in a two-year institution, and 70 would not have enrolled anywhere at all.
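The student counts quoted above come from applying the estimated effects to a cohort of approximately 3,500. The check below reproduces that arithmetic; the helper name is ours, and integer arithmetic is used so that the half-way cases (115.5 and 45.5) round up unambiguously. Applying the committed-student estimates to a whole cohort follows the extrapolation made in the text.

```python
def students_affected(cohort, effect_per_thousand):
    """Round-half-up number of students implied by an effect per 1,000 students.

    An effect of 3.3 percentage points corresponds to 33 per 1,000.
    """
    return (cohort * effect_per_thousand + 500) // 1000


cohort = 3_500
additional_gsu = students_affected(cohort, 33)           # 3.3-point gain at GSU
diverted_from_two_year = students_affected(cohort, 13)   # 1.3-point drop at two-year colleges
otherwise_not_enrolled = additional_gsu - diverted_from_two_year

print(additional_gsu, diverted_from_two_year, otherwise_not_enrolled)  # 116 46 70
```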

Taken together, we conclude that the Pounce outreach impacted overall enrollment by supporting GSU-intending students to follow through on their stated intentions at higher rates and rely less on other postsecondary options, such as enrolling in a two-year institution instead. Reducing reliance on two-year institutions may have important downstream impacts on four-year degree completion rates (e.g., Goodman, Hurwitz, & Smith, 2017), although we are unfortunately not able to consider persistence effects in the data to which we have access.

A final question relates to whether the impacts vary according to salient student characteristics. We specifically consider heterogeneity by gender, race/ethnicity, first-generation status, and whether or not students are eligible for the Georgia HOPE Scholarship. 14 On most of these dimensions, we observe no evidence of significantly different impacts. The one potential exception is first-generation status. Specifically, our results suggest that the outreach led to larger improvements for first-generation students in navigating the financial aid process, although impacts on nonfinancial tasks and GSU enrollment were similar for first-generation and non–first-generation college goers. 15

Discussion

Interventions to address summer melt shine a light on the challenges that students face in transitioning from high school to college. One implication of this body of work is that the transition process may be unnecessarily complex and that simplifying the processes and procedures themselves would benefit students. For example, many have proposed steps for simplifying the process by which students apply for and access financial aid (e.g., Bill & Melinda Gates Foundation, 2015; Dynarski & Scott-Clayton, 2006), and this work should serve as a call to institutions of higher education to simplify wherever possible. Where complexity cannot be alleviated, our findings suggest that this conversational AI system represents an effective, scalable approach to provide college-intending students with outreach and support to navigate the winding road of pre-matriculation tasks that they face in the summer prior to the start of college. The key impact that we observe, an improvement in timely enrollment of 3.3 percentage points, mirrors results from prior intervention studies implemented in a variety of contexts. Critically, the Pounce system implemented at GSU achieved similar impacts with a fraction of the staff resources of prior studies. Moreover, given the system’s ability to learn over time, future implementations should require less staff time to respond to student questions.

An important question relates to the cost of this intervention in comparison to previous summer melt interventions. The cost of the AdmitHub platform ranges between $7 and $15 per student per year, in addition to per-student costs associated with staff involvement in establishing the messaging system and monitoring student communication not handled automatically. 16 These costs are lower than those of prior summer melt interventions involving individual counselor outreach, which ranged from $100 to $200 per student (Castleman, Page, & Schooley, 2014), and on par with those involving non-AI text message–based communication (e.g., Castleman & Page, 2015). A cost of $15 per student served would translate to GSU spending approximately $53,000 on the technology annually to mitigate summer melt. This cost is easily exceeded by the increase in tuition revenue the institution would realize through increasing enrollment by margins such as those found in our investigation. Furthermore, it seems plausible that the system would get even smarter over time, leading to additional reductions in staff involvement (allowing them to invest their time elsewhere), which could reduce costs further.
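The annual platform cost quoted above is the per-student price multiplied by an incoming cohort of approximately 3,500 students. The short calculation below shows both ends of the reported price range; the variable names are ours, and the figures exclude the staff costs that the text mentions but does not quantify.

```python
cohort = 3_500                  # approximate size of a GSU freshman class
price_low, price_high = 7, 15   # platform cost per student per year, in dollars

annual_cost_low = cohort * price_low
annual_cost_high = cohort * price_high

print(f"${annual_cost_low:,} to ${annual_cost_high:,}")  # $24,500 to $52,500
```

The upper figure, $52,500, is the basis for the approximately $53,000 cited in the text.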

Pounce features two key innovations. First, the system integrates university data on students’ progress with required pre-matriculation tasks, thereby tailoring the outreach that students receive to support them only on tasks where the data suggest they may need help. In this way, the system ensures that messages sent are relevant to the individual recipients and reduces the likelihood of students paying less attention to outreach that they regard as unrelated to their personal needs. Moreover, messaging students about tasks already completed could inadvertently confuse them and complicate rather than facilitate the transition process.

Second, the Pounce system leverages artificial intelligence to handle an ever-growing set of student issues, challenges, and questions. This system provides a strategy for universities to address summer melt that is personalized and improves over time, can be accessed by students on their own schedule 24 hours a day, and efficiently scales to reach large numbers of students.

Artificial intelligence and virtual assistants, such as Pounce, hold promise for increases in efficiency, especially for industries like education that rely heavily on communication. Of students who completed high school in 2014, for example, 68%—some 2 million individuals—transitioned directly to postsecondary education. 17 The matriculation process and its corresponding challenges remain reasonably consistent over time. Thus, artificially intelligent systems such as Pounce hold promise to provide these transitioning students with personalized support to stay on track while not burdening universities with excessive costs or staff time demands. Rather, this system can alleviate the need for staff to respond to common questions and instead free their time for those issues that only humans can solve. Just as self-driving cars perform poorly in bad weather, AI-enabled advising technology cannot handle all of the challenges that students face in navigating the complex terrain of accessing postsecondary education.

A final point is that matriculation to college is merely the first step in students’ postsecondary pathway. Although examining college persistence is beyond the scope of this paper, we recognize that initial matriculation is far from a guarantee that students will earn a degree. In addition, the types of challenges that students confront in the transition process can similarly hinder postsecondary success once enrolled. For this reason, in future work, we aim to examine the impact on persistence and degree completion of AI-enabled nudge technology implemented throughout the course of students’ undergraduate careers.

Footnotes

Acknowledgements

We are grateful to AdmitHub and especially to Andrew Magliozzi, Kirk Daulerio, and Cristina Herndon for their partnership in this research collaboration. Elliott Ribner provided excellent assistance with data management and assembly. At Georgia State University, we thank Tim Renick, Scott Burke, Darlene Lozano, and the admissions staff for their collaboration. Any errors or omissions are our own.

4.

The FAFSA is the Free Application for Federal Student Aid. Research points to the FAFSA in particular as being an arduous task in the process of accessing higher education (Bettinger, Long, Oreopoulos, & Sanbonmatsu, 2012; Dynarski & Scott-Clayton, 2006; Dynarski, Scott-Clayton, & Wiederspan, 2013; Kofoed, 2017; Page, Castleman, & Meyer, 2017; Page & Scott-Clayton, 2016).

5.

AdmitHub is an education technology startup that builds conversational artificial intelligence (AI) to guide students on the path to and through college. AdmitHub develops chatbots for colleges and universities to personify their mascots and augment their counseling staffs. As of fall 2017, AdmitHub has 15 college partners and has reached more than 75,000 students. For more information on AdmitHub, see ![]() .

6.

These workflows paralleled existing university email communication flows in both language and tone.

7.

Through a process of supervised machine learning, AdmitHub trained the Pounce system by observing all student conversation logs. The practical process of training conversational AI requires humans to review all chat logs and manually identify instances of error or confusion. That human feedback is then incorporated into the ongoing training process. This process is referred to as deep learning with convolutional neural networks (e.g., Johnson & Zhang, 2015; Kalchbrenner, Grefenstette, & Blunsom, 2014; Kim, 2014; Kim, Jernite, Sontag, & Rush, 2015).

11.

Only one variable suggests a difference, indicating that treatment group students were marginally more likely to be White (significant at the p < .10 level). Given the number of tests that we run, we could easily obtain this marginally significant result simply by chance. As an additional check, we ran tests to assess balance on the covariates jointly (Hansen & Bowers, 2008). With this methodology, we tested differences between treatment and control groups overall and within the commitment status subgroups. The p values associated with these omnibus tests (reported at the bottom of ![]() ) far exceed the threshold value of .05 and provide further evidence that the treatment and control groups are well balanced in terms of baseline covariates.

12.

Kling, Liebman, and Katz (2007), for example, create an index of economic self-sufficiency that averages together five measures of employment, earnings, and public assistance receipt. The authors note that “the aggregation improves statistical power to detect effects that go in the same direction within a domain.” Kling and colleagues build their index by averaging z-score transformations of each of the component variables. The z-score transformation allows variables to contribute equal weight, even when measured on different scales. Because all of our outcomes are measured on a 0–1 binary scale, we reason that the z-score transformation is not necessary. We find this to be the case, although we omit associated results for parsimony.
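
As a minimal sketch of the two index constructions described in this note, the Python below contrasts a simple average of binary outcomes with an average of z-scored outcomes. The function names and the example data are hypothetical and are not drawn from the study. Standardizing each outcome by its full-sample mean and standard deviation is a simplifying assumption made here for illustration; the note does not specify the reference group used for standardization.

```python
from statistics import mean, stdev


def simple_index(rows):
    """Average each student's binary (0/1) outcomes directly."""
    return [mean(row) for row in rows]


def zscore_index(rows):
    """Average each student's outcomes after z-scoring every outcome column.

    Assumption for illustration: each column is standardized by its
    full-sample mean and sample standard deviation.
    """
    columns = list(zip(*rows))
    mus = [mean(col) for col in columns]
    sds = [stdev(col) for col in columns]
    return [
        mean((x - mu) / sd for x, mu, sd in zip(row, mus, sds))
        for row in rows
    ]


# Hypothetical data: four students, three binary pre-enrollment outcomes each.
rows = [(1, 1, 0), (1, 0, 0), (0, 1, 1), (0, 0, 0)]
print(simple_index(rows))
print(zscore_index(rows))
```

When every component is binary, both versions combine the same information; the z-scored version simply reweights each outcome by the inverse of its standard deviation.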

13.

The National Student Clearinghouse is a nonprofit organization that maintains student-level postsecondary enrollment records at approximately 96% of colleges and universities in the United States.

14.

We identify students as HOPE eligible if they are residents of Georgia and have a high school GPA of at least 3.0.

15.

This statement is based on the fact that the impact estimate for first-generation students was approximately twice as large as that for non-first-generation students. Nevertheless, we lack the precision to differentiate these two effects statistically. For this reason, we consider this result highly suggestive and do not include it in our tabular presentation of results.

16.

AdmitHub estimates that outreach to 50,000 students can be reasonably managed by 20% of one full-time equivalent staff member together with the support of approximately two student workers.

Authors

LINDSAY C. PAGE is an assistant professor of research methodology and a research scientist in the Learning Research and Development Center at the University of Pittsburgh. Her research focuses on quantitative methods and their application to questions regarding the effectiveness of educational policies and programs across the preschool to postsecondary spectrum.

HUNTER GEHLBACH is an associate professor in the University of California, Santa Barbara Gevirtz Graduate School of Education. Working at the intersection of educational and social psychology, his primary interests are in the social and motivational aspects of education, survey design, and environmental education.