Abstract

This study examines the psychometric properties of two assessments of children’s approaches to learning: the Devereux Early Childhood Assessment (DECA) and a 13-item approaches to learning rating scale (AtL) derived from the Arizona Early Learning Standards (AELS). First, we administered questionnaires to 1,145 randomly selected parents/guardians of first-time kindergarteners. Second, we employed confirmatory factor analysis (CFA) with parceling for DECA to reduce errors due to item specificity and prevent convergence difficulties when simultaneously estimating DECA and AtL models. Results indicated an overlap of 55% to 72% variance between the domains of the two instruments and suggested that the new AtL instrument is an easily administered alternative to the DECA for measuring children’s approaches to learning. This is one of the first studies that investigated DECA’s approaches to learning dimension and explored the measurement properties of an instrument purposely derived from a state’s early learning guidelines.

A substantial body of recent research suggests that the level of

Development of State Standards for Early Childhood

Despite definitional differences regarding the nature of school readiness (Graue, 1993, 2006; Kagan, 1990; Scott-Little, Kagan, & Frelow, 2006), an effort to develop early learning standards for pre-kindergarten to third-grade children has arisen among regional and national policy makers (Brito, 2012; Neuman & Roskos, 2005; Scott-Little et al., 2006). In the United States, outcomes from the National Education Goals Panel (NEGP) in 1995 and the Goals 2000 legislation passed in 1994 (C. E. Snow & Van Hemel, 2008) led most states to create standards for assisting preschool professionals to guide the development of infants, toddlers, and preschoolers (K. Snow, 2011). These standards included the following domains: (a) physical well-being and motor development, (b) social and emotional development, (c)

In an analysis of 46 early learning standards documents published after 1999, Scott-Little et al. (2006) noted the limited amount of attention given in many standards, in particular, to socioemotional development and approaches to learning (see also Chen, Masur, & McNamee, 2010; Niemeyer & Scott-Little, 2001; Thigpen, 2014, for similar statements), two components identified as key to early success in school (NEGP, 1995; C. E. Snow & Van Hemel, 2008). In addition to the uneven discussion among the standards themselves, to our knowledge, no state has developed assessments tailored to any of their standards. Rather, extant measures are used whose norming samples may have different characteristics than their state’s population of children, for whom the standards are targeted. Similar limitations exist in the measurement of school readiness internationally (Brito & Limlingan, 2012).

Moreover, concerns with instruments’ measurement accuracy with young children (e.g., Isquith, Gioia, & Espy, 2004; Meisels & Fenichel, 1996; K. Snow, 2006, 2011), as well as their ease of use and interpretation (Diamond, Justice, Siegler, & Snyder, 2013; National Association for the Education of Young Children, 2002), raise issues in school readiness assessments. As these assessments were meant to support instruction, identify children at risk and in need of special services, and facilitate program evaluation and accountability, accuracy of measurement is paramount. In program evaluation and accountability in particular, important and politically sensitive evaluations are often conducted at the request of funders, such as federal agencies, state and local governments, nongovernment organizations, and private philanthropies, that require reliable and valid data for meaningful investment decisions (Center on the Developing Child at Harvard University, 2011). An additional concern is that the high-stakes testing movement (Books, 2004; Kim & Sunderman, 2005; Woodside-Jiron & Gehsmann, 2009) coupled with oversimplified and one-time-used assessments has produced deleterious effects on vulnerable populations (Gehsmann & Templeton, 2011–2012). This concern was also reflected by UNICEF research (Brito & Limlingan, 2012).

In light of these concerns (e.g., limited, reliable instrumentation based on specific learning criteria), we describe in this article an instrument designed to measure the domain of approaches to learning (AtL) based on one state’s early learning standards that is both a valid indication of the construct and an easily administered instrument. In the sections below, we provide additional information on the approaches to learning domain, a related literature on children’s executive functioning (EF), and the context of the large-scale study where the new AtL assessment was administered.

Assessing Approaches to Learning

School Readiness and Components of Approaches to Learning

The approaches to learning construct, introduced by Kagan et al. (1995) as a component of school readiness, has been identified as an important domain related to children’s positive early achievement outcomes in math, reading, and socioemotional development (Fantuzzo, Bulotsky-Shearer, Fusco, & McWayne, 2005; Ziv, 2013). According to Kagan et al. (1995), this construct comprises a combination of traits such as gender and temperament, predispositions and attitudes conditioned by culture, and learning styles. However, in contrast to predispositions, Kagan et al. suggested that learning styles are malleable and include variables that affect how children attitudinally address the learning process: their openness to and curiosity about new tasks and challenges; their initiative, task persistence, and attentiveness; their approach to reflection and interpretation; their capacity for invention and imagination; and their cognitive approaches to tasks (p. 23).

Given that certain styles of learning are favored over others in the U.S. educational system, Kagan et al. (1995) urged that further research is needed to understand approaches to learning so that all children, despite their diversity of learning styles, could have equal opportunities to learn with appropriate pedagogical adjustments. Two decades after Kagan et al.’s assertion that approaches to learning was an underresearched area, their claim is echoed in more recent surveys of the research literature (e.g., Scott-Little et al., 2006; C. E. Snow & Van Hemel, 2008; K. Snow, 2011), although efforts have been made to develop instruments to measure, in particular, the malleable aspects of this domain.

Using varied definitions of approaches to learning, researchers have studied characteristics such as initiative, curiosity, persistence, engagement, and problem solving (e.g., Bulotsky-Shearer, Fernandez, Dominguez, & Rouse, 2011; Li-Grining, Votruba-Drzal, Maldonado-Carreño, & Hass, 2010). In addition, researchers also consistently find connections to children’s early cognitive and developmental growth with characteristics defined as attentiveness, flexibility, and organization (Ziv, 2013), as well as resourcefulness, goal orientation, and planfulness (Chen et al., 2010; Chen & McNamee, 2007). For the most part, studies consistently demonstrate that variables associated with the approaches to learning domain have unique contributions to children’s achievement beyond other important cognitive and demographic variables such as intelligence, receptive and expressive vocabulary, parental income, and education.

Related Definitions of Executive Functioning, Self-Control, and “Everyday Behaviors”

The approaches to learning construct described above shares a great deal with the research and literature on executive functions, the latter defined as involving such metacognitive processes as working memory, attention shifting, inhibitory control, planning, error correction, resistance to interference, and memory updating, to name a few (see Blair, Zelazo, & Greenberg, 2005; Carlson, 2005; Schmeichel & Tang, 2015, for additional terminology). As with the definitions of approaches to learning, various terms are associated with executive functioning processes, which include such designations as “impulsivity, conscientiousness, self-regulation, delay of gratification, inattention-hyperactivity, executive function, willpower, and intertemporal choice” (Moffitt et al., 2011, p. 2693), descriptions that primarily reflect their disciplinary origin in the medically oriented fields.

Attempting to bring some “ecological validity” or real-life applications to these constructs and behaviors, which have been researched regularly in neuropsychological fields, Galinsky (2010) has focused on the

Thus, in comparing the executive function and approaches to learning literatures (with few overlapping citations), the latter body of research, although complementary to the executive function work, aims to focus on the malleability, learning, and practical manifestations of behaviors related to executive functioning rather than its actual nature, as related to particular neurological and brain structures (see the special issue of

However, common to both areas (EF and approaches to learning) is the limited research on standardized, reliable, easily administered assessments that practitioners can use to gain information about very young children, in particular, in this important area. Although there are a number of clinical instruments or protocols to measure various aspects of EF (Carlson, 2005), most of these are not designed for normal classroom use, usually requiring one-on-one administration, needing some props, and having limited psychometric stability. Thus, a consensus across all researchers examining EF, approaches to learning, or other self-regulatory behavior (e.g., McClelland, Acock, Piccinin, Rhea, & Stallings, 2013; Poropat, 2014) is that there are few easily administered, psychometrically robust instruments available for teachers or parents to use.

Approaches to Learning Instrumentation

Studies measuring the approaches to learning domain have followed one of two approaches: (a) using items initially developed for other instruments and purposes or (b) developing dedicated instruments focused on approaches to learning itself. Examples of the first are secondary analyses (e.g., Li-Grining et al., 2010) of the Early Childhood Longitudinal Study–Kindergarten Cohort (1998–1999). This study used a small subset of items taken originally from the Social Skills Rating System (Gresham & Elliott, 1990) in which parents and teachers rated a child’s behavior on persistence in a task, curiosity, creativity, ability to concentrate, ability to work independently, and paying attention (National Center for Education Statistics, 2010).

The second approach includes instruments developed to measure aspects of approaches to learning directly (e.g., Chen & McNamee, 2007; McDermott, Leigh, & Perry, 2002) and comprise rating scales of children’s behaviors in a preschool classroom based on the frequency of various activities (e.g., individual engagement with books or peer interaction during play), as occurring

In contrast, McDermott et al.’s (2002) Preschool Learning Behavior Scale (PLBS) is a 29-item instrument designed to capture children’s overall behaviors across various activities according to their “Competence/Motivation,” “Attention/Persistence,” and “Attitude Toward Learning.” Developers of both instruments designed them for early childhood and kindergarten teachers to administer to children, but McDermott et al.’s AtL instrument is the sole one that is normed (see Fantuzzo, Perry, & McDermott, 2004).

Each of these instruments represents one of two major philosophical views on how children’s learning may be assessed. Bridging (Chen & McNamee, 2007) is a portfolio-type assessment, assessing a range of performances across many activities over time and requiring an integrative, qualitative judgment related to the child’s development. The Preschool Learning Behavior Scale (McDermott et al., 2002), on the other hand, uses a quantitative rating scale to index degrees of performance relative to previously identified latent factor structures. The merits of each view have been debated extensively among early childhood researchers and educators (Meisels & Fenichel, 1996; Pianta, Barnett, Justice, & Sheridan, 2012). For example, Gilliam and Frede (2012) stated that early childhood development is too “variable and rapid,” and children are “not consistent” in demonstrating their abilities for any test to capture a child’s ability accurately in the brief assessment time. Nonetheless, the selection of either a qualitative portfolio assessment or rating scale measures seems largely based on the user’s philosophical preferences, with those claiming a sociocultural orientation leaning toward portfolio assessment and those with more of a cognitive or psychometric perspective leaning toward rating scale measures. Whatever the preference, it is clear in the research literature that the majority of studies examining approaches to learning in young children favor the use of rating scales that are examined via statistical analyses.

A standards-based measure of approaches to learning

Both the new AtL assessment we describe below and the Devereux Early Childhood Assessment (DECA) fall into the latter category and share the general advantages of teacher and guardian rating scales in terms of amount of information obtained, ease of administration, scoring, and prior reliability and validity testing (see Campbell & James, 2007). Although the DECA was designed primarily for early identification of social and emotional problems in young children, the Devereux Foundation (2003) has reported that the instrument measures an approaches-to-learning construct. However, we could not find published literature or research studies examining the psychometric properties of this aspect of the DECA instrument or any descriptions from the Devereux Foundation of items dedicated to assessing approaches to learning behaviors.

Related to the development of the shorter AtL instrument described here, a recent review of research on early intervention and education (Diamond et al., 2013), funded by the Institute of Education Sciences (IES), highlighted the need for efficient, easily administered assessments with strong evidence of reliability and validity for use in research and educational applications. Diamond et al. (2013) suggested that research on early assessment of important characteristics, such as approaches to learning, is inadequate and that additional research is needed to add to the knowledge base in this arena.

The Current Study

In this article, we describe research regarding the psychometric properties of two assessments of approaches to learning, an important domain of school readiness. The context for this study was an Arizona early childhood initiative, First Things First (FTF), a comprehensive, statewide early childhood program intended to increase access to health and educational services for families and children, birth to age 5 years, and to improve preschoolers’ readiness for school. The project was designed to include a researcher-developed instrument created to assess approaches to learning as defined by the AELS (Arizona Department of Education, 2005) and the widely used and standardized DECA (LeBuffe & Naglieri, 1999a, 1999b).

The AtL scale was part of a demographic questionnaire in a larger battery of readiness assessments that included direct measures of language, literacy, math, and demographics (e.g., height and weight). The researcher-designed items rating kindergarteners’ AtL were derived from the state’s early learning standards, which specified that children should be developing attitudes and behaviors characterizing initiative, curiosity, engagement, persistence, reasoning, and problem-solving skills. The AtL instrument also was designed to reduce participant response burden by decreasing time to complete the assessment portfolio for each child and to reduce overall cost in the next stage of the project.

The purpose of this study is to examine the psychometric properties of the DECA and AtL assessments and to ascertain whether these instruments measure the same or different approaches to learning domains based on parent/guardian perceptions of their children, as defined by the AELS (Arizona Department of Education, 2005) and the DECA (LeBuffe & Naglieri, 1999a, 1999b). We accomplish these goals by (a) providing evidence for validity and reliability of the DECA and AtL instruments and presenting recent results based on contemporary statistical techniques concerning DECA’s validity, (b) testing whether the parent/guardian data from the DECA rating scale fit the hypothesized DECA measurement model to determine the degree to which the DECA’s model as a whole is consistent with the empirical data, (c) developing confirmatory factor analysis (CFA) models for DECA data by using the

Method

Sample

We used a proportional, stratified random sampling approach that included 1,145 kindergarten children attending 82 schools drawn from 48 districts across the state of Arizona. We used this sampling design to ensure that the sample was randomly selected and representative of the population. We randomly selected the sample at the child and school levels; it included three types of schools (public, private, and charter) from three regions (northern, central, and southern). The sample distribution across school types and regions was in close agreement with the state proportions (see Barbu, Levine-Donnerstein, Marx, & Yaden, 2012; Barbu, Marx, Yaden, & Levine-Donnerstein, 2015; Yaden et al., 2011).

The average age of the children in the sample was 5 years, 8 months with an age range from 5 to 6 years, 7 months. Fifty-one percent of the children were male, with 49.1% of all children identified as Hispanic. In the present sample, 5.5% of the children had an individualized educational plan, which is required for children with identified special needs, 51.3% were eligible for free or reduced-price lunch, and 73.2% had attended an out-of-home child care, nursery school, or pre-kindergarten program, including Head Start (Barbu et al., 2012). For the analyses, the rating scales from 1,025 families with 1,145 children were examined (some families had multiple children in the sample).

Procedures

For each participating school, a directory of first-time kindergarten students was obtained. From these directories, 14 first-time kindergarten children were randomly selected per school, all from one class if only one class existed. From schools with two kindergarten classrooms, seven children were randomly selected from each classroom; from schools with three classrooms, five children per classroom were randomly drawn; and from schools with more than three classrooms, three classes were first randomly selected, and then the targeted number of children (five per classroom) was randomly drawn from each. After children were identified, the teacher sent each child home with a packet containing a letter explaining the purpose of the study, a parent consent form, and a child assent form. The research staff then called parents to confirm they had received the packet, further explained the study, responded to any questions, and invited them to participate.
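The selection rules in this paragraph can be sketched in a few lines of Python. This is an illustration only, not the authors' actual procedure or software: the function name `select_children`, the dictionary layout of a school directory, and the handling of classrooms smaller than the target are all assumptions. Note that the three-classroom rule as written yields 15 children rather than 14; the sketch follows the per-classroom counts as stated.

```python
import random


def select_children(classrooms, rng):
    """Draw children from one school's directory following the stated rules.

    classrooms: dict mapping a classroom label to a list of child IDs
                (hypothetical structure, not from the study).
    rng:        a random.Random instance, so draws can be reproduced.
    """
    labels = sorted(classrooms)
    if not labels:
        raise ValueError("school has no kindergarten classrooms")

    if len(labels) == 1:
        per_class, chosen = 14, labels          # one class: 14 children
    elif len(labels) == 2:
        per_class, chosen = 7, labels           # two classes: 7 each
    elif len(labels) == 3:
        per_class, chosen = 5, labels           # three classes: 5 each
    else:
        # more than three: first sample 3 classes, then 5 children each
        per_class, chosen = 5, rng.sample(labels, 3)

    selected = {}
    for label in chosen:
        roster = classrooms[label]
        # Assumption: a class smaller than the target contributes everyone.
        k = min(per_class, len(roster))
        selected[label] = rng.sample(roster, k)
    return selected


if __name__ == "__main__":
    rng = random.Random(2009)
    school = {f"K{i}": [f"K{i}-{j:02d}" for j in range(22)] for i in range(1, 5)}
    draw = select_children(school, rng)
    for label, children in draw.items():
        print(label, len(children))
```

Passing an explicit `random.Random` instance keeps each school's draw reproducible, which is convenient when a selection has to be audited later.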

Instrumentation

The parents/guardians of participating students completed two questionnaires: the newly developed AtL instrument and the DECA.

AtL rating scale

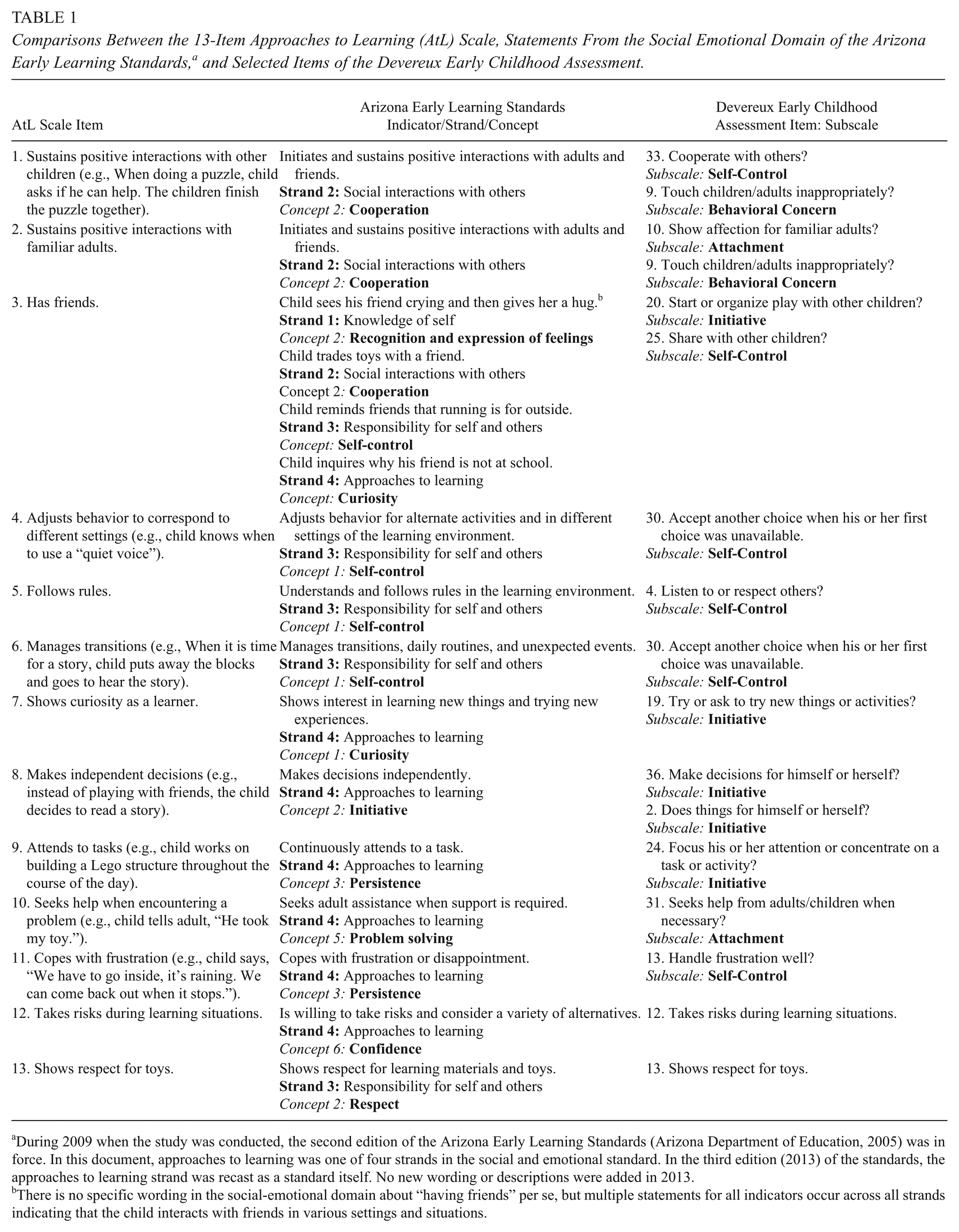

The newly developed AtL rating scale included 13 items derived from the state’s early learning guidelines. To illustrate its alignment with state standards and the DECA, Table 1 provides a comparison of the AtL items, the learning indicators from the AELS (Arizona Department of Education, 2005), and the selected items from the DECA with similarly worded items. The AtL instrument employs a 4-point scale: (a)

Comparisons Between the 13-Item Approaches to Learning (AtL) Scale, Statements From the Social Emotional Domain of the Arizona Early Learning Standards,ᵃ and Selected Items of the Devereux Early Childhood Assessment.

ᵃ During 2009 when the study was conducted, the second edition of the Arizona Early Learning Standards (Arizona Department of Education, 2005) was in force. In this document, approaches to learning was one of four strands in the social and emotional standard. In the third edition (2013) of the standards, the approaches to learning strand was recast as a standard itself. No new wording or descriptions were added in 2013.

There is no specific wording in the social-emotional domain about “having friends” per se, but multiple statements for all indicators occur across all strands indicating that the child interacts with friends in various settings and situations.

In a previous analysis, we investigated the psychometric properties of the AtL instrument (Barbu et al., 2015) and found a one-factor structure via exploratory factor analysis (EFA) and CFA. Moreover, early childhood educators and researchers from a tri-university research consortium (University of Arizona, Arizona State University, and Northern Arizona University) assessed the face validity and content validity of this rating scale. These results, combined with evidence of reliability (0.83 for latent variables and ranging between 0.52 and 0.79 for manifest variables) and structural validity of the instrument (Barbu et al., 2015), supported the educational utility of the AtL as a tool for measuring school readiness among kindergarteners with a population similar to children in Arizona.

DECA

For comparison purposes with the researcher-developed AtL instrument and to index broader socioemotional characteristics in the larger study, we administered the DECA, a standardized, norm-referenced assessment of within-child protective factors, designed originally to identify resilience in children ages 2 to 6 (LeBuffe & Naglieri, 1999a, 1999b). The DECA’s 37 items are measured on a 5-point Likert scale indexing behaviors as occurring

The DECA is considered a reliable instrument for assessing children’s protective factors (LeBuffe & Naglieri, 1999b), based on research findings from infants to preschoolers (Barbu et al., 2012; Brinkman, Wigent, Tomac, Pham, & Carlson, 2007; Buhs, 2003; Bulotsky-Shearer, Fernandez, & Rainelli, 2013; Chittooran, 2003; Jaberg, Dixon, & Weis, 2009; Lien & Carlson, 2009; Meyer, 2008; Ogg, Brinkman, Dedrick, & Carlson, 2010).

Although the DECA measures a range of important constructs, recent research using structural equation modeling techniques has found issues related to DECA’s validity that may limit its use; therefore, researchers have recommended further investigation of DECA’s psychometric properties. For example, Ogg et al. (2010) found 10 problematic item pairs as a source of poor model fit of the DECA instrument in their sample of 1,344 participants recruited from 25 Head Start centers across a four-county region in the Midwest. In addition, Barbu et al. (2012) found insufficient discriminant validity of the DECA instrument based on samples of parents’ and teachers’ ratings of 1,145 entering kindergartners in the Southwest. More recently, Bulotsky-Shearer et al. (2013) found that DECA’s factor structure was not adequate using a large sample of culturally and linguistically diverse Head Start children (

A possible explanation for the departure of these recent conclusions from the results reported in DECA’s technical manual (LeBuffe & Naglieri, 1999a) and obtained with classical statistical analyses is that the traditional multivariate procedures are incapable of assessing and correcting for measurement errors (Byrne, 2009). Therefore, correlations between two latent variables in structural equation modeling (SEM) could be more than twice that for the individual observed variables analyzed with classical statistics, and hidden effects among latent variables (i.e., multicollinearity) could remain undetected (Bollen, 1989).
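The effect of measurement error on an observed correlation can be illustrated with the classical correction for attenuation, in which the observed correlation is divided by the square root of the product of the two reliabilities. This is a textbook formula offered only as an illustration; it is not the SEM estimation used in this study, and the numeric values below are hypothetical.

```python
import math


def disattenuated_correlation(r_observed, reliability_x, reliability_y):
    """Classical correction for attenuation.

    Estimates the correlation between two error-free scores from the
    observed correlation and the reliability of each measure.
    """
    if not (0 < reliability_x <= 1 and 0 < reliability_y <= 1):
        raise ValueError("reliabilities must lie in (0, 1]")
    return r_observed / math.sqrt(reliability_x * reliability_y)


if __name__ == "__main__":
    # Hypothetical values: two single items with low reliability.
    r_obs = 0.30
    corrected = disattenuated_correlation(r_obs, 0.45, 0.50)
    print(f"observed r = {r_obs:.2f}, corrected r = {corrected:.2f}")
```

With reliabilities near .5 for both measures, the corrected value is roughly double the observed one, which is the kind of gap between observed and latent correlations that the paragraph describes.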

Data Analysis

We approached the data analysis in three phases. First, we developed CFA models for our sample DECA data based on the theoretical structure explained above. The purpose of assessing a model’s overall fit is to determine the degree to which the model as a whole is consistent with the empirical data. The null hypothesis states that the model fits the population data perfectly, and the aim is not to reject this null hypothesis. Although a wide range of goodness-of-fit indices have been developed to provide measures of a model’s overall fit, one is not superior to the others in all situations (Brown, 2006). In addressing the ordinal nature of the observed variables, Byrne (2009) suggested five goodness-of-fit indices to test and respecify the hypothesized model: chi-square (χ2), root mean square error of approximation (RMSEA), goodness-of-fit index (GFI), comparative fit index (CFI), and expected cross-validation index (ECVI). The following cutoff criteria (Hu & Bentler, 1999) were used as guidelines for goodness-of-fit indices between the target model and the observed data: (a) GFI values close to 0.95 or greater, (b) RMSEA values close to 0.06 or below, and (c) CFI values close to 0.95 or greater. In addition, the model with the smallest ECVI value suggests that the hypothesized model is well fitted and represents a reasonable approximation to the population (Browne & Cudeck, 1993).
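As a small illustration of how the three cutoff guidelines can be applied, the sketch below checks a set of fit indices against them. The function name `check_fit` is hypothetical, and treating "close to" as a strict threshold is a simplifying assumption; in practice the guidelines are applied with judgment rather than as hard rules.

```python
def check_fit(gfi, rmsea, cfi):
    """Compare fit indices with the Hu and Bentler (1999) guidelines.

    Returns a dict of booleans. Strict thresholds are an assumption made
    for this sketch; the guidelines themselves say "close to".
    """
    return {
        "GFI": gfi >= 0.95,      # goodness-of-fit index
        "RMSEA": rmsea <= 0.06,  # root mean square error of approximation
        "CFI": cfi >= 0.95,      # comparative fit index
    }


if __name__ == "__main__":
    # Values reported later in the article for the parceled DECA models.
    result = check_fit(gfi=0.98, rmsea=0.078, cfi=0.97)
    for index, ok in result.items():
        print(index, "meets guideline" if ok else "outside guideline")
```

Reporting each index separately, rather than a single pass or fail verdict, reflects the point made above that no one index is superior in all situations.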

Second, we developed CFA models for our sample DECA data by constructing

In the present analysis, a parceling approach for the DECA instrument was appropriate for two reasons. First, parceling reduces the errors in the final variance-covariance matrix that stem from each item’s specificity and from the randomness of each item’s errors. Therefore, the value of GFI was expected to improve with a correct selection of items forming each parcel (T. D. Little et al., 2002). Second, factor structures can be difficult to determine when analyzing individual items from a lengthy questionnaire. Practical convergence difficulties could arise in LISREL 8.80 when analyzing a larger number of manifest variables. In our case, this occurred when we added 13 more items from AtL to our sample DECA data, which already contained 37 measured variables.
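Parceling by averaging items, together with an internal consistency check, can be sketched as follows. This is a minimal illustration using only the Python standard library: the item names, the item-to-parcel assignment, and the ratings are hypothetical and do not reproduce the parcel selection used in the study.

```python
from statistics import mean, variance


def build_parcels(responses, parcel_map):
    """Average the items assigned to each parcel, respondent by respondent.

    responses:  list of dicts mapping item name -> rating
    parcel_map: dict mapping parcel name -> list of item names (hypothetical)
    """
    return [
        {parcel: mean(row[item] for item in items)
         for parcel, items in parcel_map.items()}
        for row in responses
    ]


def cronbach_alpha(responses, items):
    """Cronbach's alpha for a set of items (needs at least two of each)."""
    k = len(items)
    item_variances = [variance([row[i] for row in responses]) for i in items]
    total_variance = variance([sum(row[i] for i in items) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


if __name__ == "__main__":
    # Hypothetical ratings on a 0-4 scale for four items.
    data = [
        {"i1": 3, "i2": 4, "i3": 2, "i4": 3},
        {"i1": 1, "i2": 2, "i3": 1, "i4": 0},
        {"i1": 4, "i2": 4, "i3": 3, "i4": 4},
        {"i1": 2, "i2": 1, "i3": 2, "i4": 2},
    ]
    parcels = build_parcels(data, {"P1": ["i1", "i2"], "P2": ["i3", "i4"]})
    print(parcels[0])
    print(round(cronbach_alpha(data, ["i1", "i2"]), 2))
```

Each parcel then replaces its constituent items as a single indicator in the CFA, which is what shrinks the input matrix and the number of estimated parameters.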

The potential advantages of using parcels include the following: (a) Parcels better approximate normality than individual items (Brown, 2006), (b) they improve reliability and relationships with other variables (Kishton & Widaman, 1994), and (c) models based on parcels may be less complex than models based on individual items (i.e., fewer parameters, smaller input matrix) (Brown, 2006). However, a variety of disadvantages could exist in the following cases: (a) The assumption of unidimensionality is not met (i.e., each indicator loads on a single factor and the error terms are independent), (b) the likelihood of improper or nonconvergent solutions increases as the number of parcels decreases (Nasser & Wisenbaker, 2003), and (c) the use of parcels may not be feasible in situations in which too few items form a sufficient number of parcels (Brown, 2006). In our case, the

Third, we examined the relationship between DECA and AtL questionnaires to ascertain the extent to which these instruments measured the same or different approach-to-learning domains. In this phase, the parameters for both DECA and AtL models were estimated simultaneously, and the hypothesized structure was analyzed as a function of the overall goodness of fit for the combined model, followed by an examination of standardized residuals. We investigated the correlations among latent variables for the completely standardized solution, because the two tests (i.e., DECA and AtL) were measured using two different Likert scales.

Results

Data Missingness

For the current study, less than 3% of the data for guardians were missing, and we concluded that these results raised no concerns regarding data missingness. Data in this study were ordinal based on DECA’s five rating categories and AtL’s three rating categories for measurement. As a result, we generated polychoric correlation matrices using PRELIS 2.0. Two possible approaches are implemented in PRELIS 2.0 when data are missing: (a) the expectation maximization algorithm (EM; Dempster, Laird, & Rubin, 1977) and (b) Markov chain Monte Carlo method (MCMC; Gilks, Richardson, & Spiegelhalter, 1995). Collectively known as multiple imputation (MI), these procedures replace the missing values across multiple variables under the assumptions of either data missing at random (MAR) or data missing completely at random (MCAR) and multivariate normality. The assumption of multivariate normality of observed data is usually violated when the outcomes are rated on Likert-type scales (Lubke & Muthén, 2004), but the weighted least squares (WLS) estimator adjusts for this violation in large samples with categorical outcomes (Brown, 2006).

We used MCMC imputation technique and full-information maximum likelihood (FIML) estimation to correct for missing data and evaluate the consistency between the missing data treatment methods. The MCMC imputations were based on generation of five imputed data sets. The parameter estimates (i.e., factor loadings, available model fit statistics, etc.) for MCMC imputation and FIML were nearly identical across both missing data treatment methods; therefore, we reported the MCMC imputation results in agreement with data analyses in the larger project from which these data were drawn (Yaden et al., 2011). In our case, Little’s MCAR test, χ2(535, 984) = 951.11,

The original sample of 2,290 DECA and AtL questionnaires completed by the parents/guardians of 1,145 participating students was reduced to 2,075 (1,053 for DECA and 1,022 for AtL) by eliminating the participants without records. Consequently, data from participants with at least one response were imputed to complete the missing data, using the MI procedure (Rubin, 1987) under the MAR assumption, with a WLS estimator to correct the violation of multivariate normality. Finally, we identified 984 parent/guardian questionnaires for both DECA and AtL after eliminating the participants with only one questionnaire (i.e., one DECA or one AtL questionnaire).

DECA Model Analysis

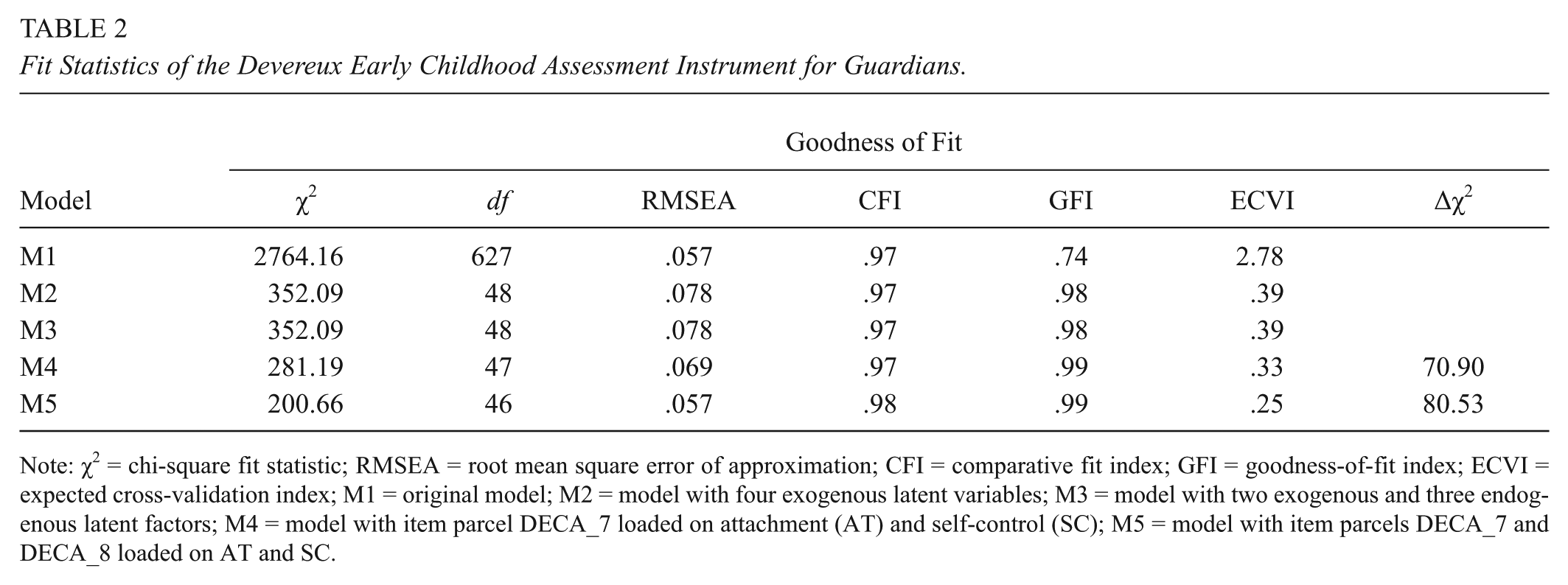

In the first model (M1) for the DECA (see Table 2), we followed the official prescription in assigning the indicators for each of the four latent variables considered. The variance of each latent variable was fixed to 1. The minimum fit function value was χ2(627,

Fit Statistics of the Devereux Early Childhood Assessment Instrument for Guardians.

Note: χ2 = chi-square fit statistic; RMSEA = root mean square error of approximation; CFI = comparative fit index; GFI = goodness-of-fit index; ECVI = expected cross-validation index; M1 = original model; M2 = model with four exogenous latent variables; M3 = model with two exogenous and three endogenous latent factors; M4 = model with item parcel DECA_7 loaded on attachment (AT) and self-control (SC); M5 = model with item parcels DECA_7 and DECA_8 loaded on AT and SC.

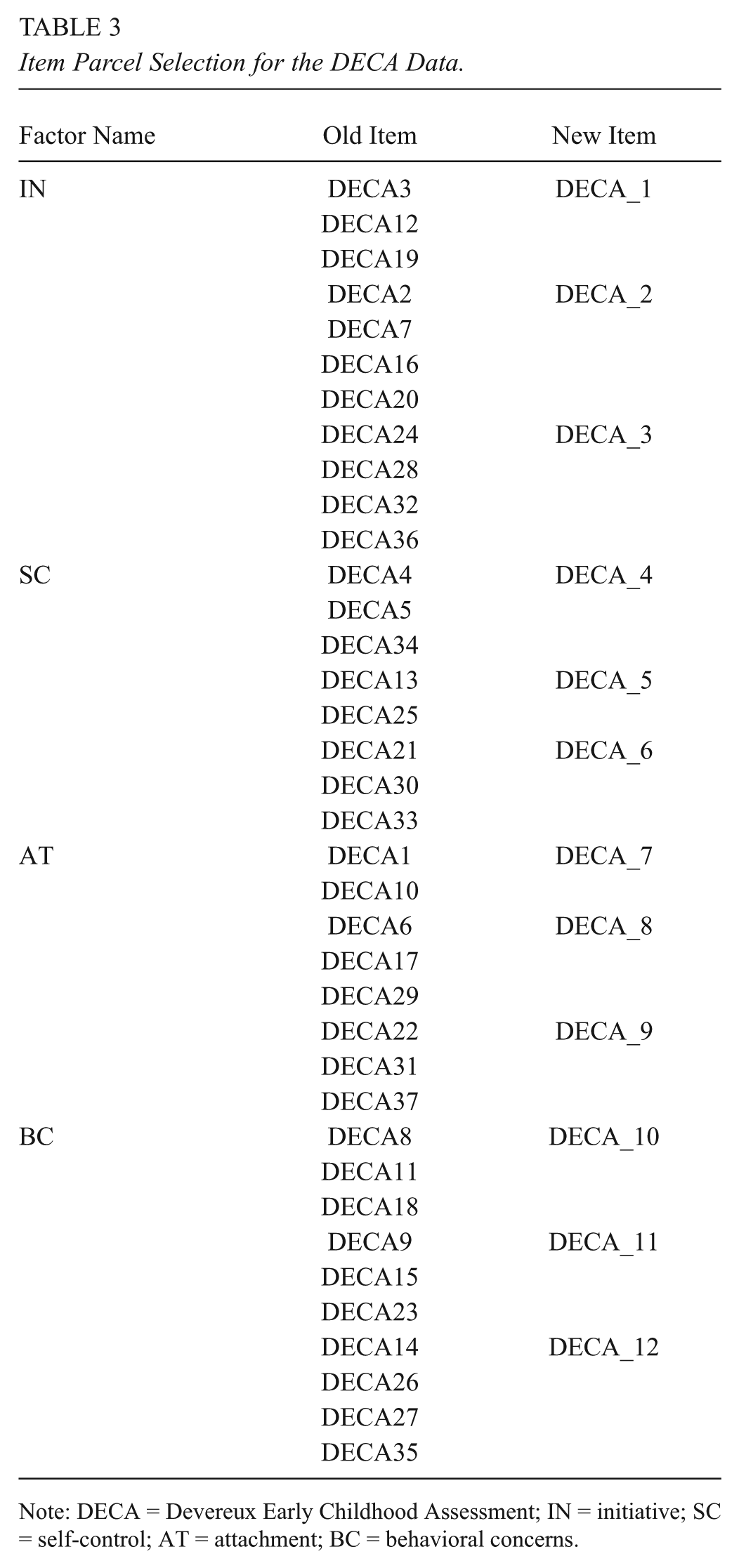

In parceling the DECA model, we averaged two, three, or four original indicators together to generate a new indicator (Bollen, 1989) and reduced the number of indicators for each of the four factors to three. Table 3 shows a detailed diagram of this item parceling selection process. Internal consistency reliability of the parcels was acceptable, ranging from .64 for the DECA 2 parcel to .87 for the DECA 12 parcel. In addition, the normality of the parcels indicated that the values for skewness were less than an absolute value of

Item Parcel Selection for the DECA Data.

Note: DECA = Devereux Early Childhood Assessment; IN = initiative; SC = self-control; AT = attachment; BC = behavioral concerns.

We formed and investigated two parcel models. The first model represented a parceled model consisting of four exogenous latent variables with three indicators per variable (M2). The second model included a structural model with two exogenous and three endogenous latent factors (M3). The fitting parameters for both models were similar: RMSEA (.078), ECVI (.39), CFI (.97), GFI (.98), and χ

Standardized factor intercorrelations, factor loadings, and residuals of the Devereux Early Childhood Assessment (DECA) model after parceling (M5) for parent/guardian samples. Overall fit of the M5 model: χ2(46,

Therefore, on the basis of the fit statistics, in conjunction with the following evidence—(a) a decrease of the ECVI value, (b) the distribution of the standardized residual values, and (c) a significant reduction in χ2 between models M4 and M5, Δχ

Combined DECA-AtL Model Analysis

Figure 2 presents the standardized factor intercorrelations, factor loadings, and residuals for the combined AtL-DECA model for parent/guardian data. The overall goodness-of-fit indices for this model, χ2(261,

Standardized factor intercorrelations, factor loadings, and residuals of the Devereux Early Childhood Assessment (DECA)–approaches to learning (AtL) combined model for parent/guardian samples. Overall fit of the DECA and AtL combined model: χ2(261,

First, the BC measure correlated negatively with all the other factors from both tests (i.e., IN, SC, AT, and AtL). This result was consistent with the way in which the instruments were constructed. The items measuring the BC factor were designed to address developmental factors that are contraindicative of the factors in the other scales. Therefore, items indicating large behavioral concerns were designed as indicators of a child’s low socioemotional development.

Second, correlations between the latent factor of the AtL instrument and latent factors of the DECA instrument ranged between .74 and .85 in absolute value,

Discussion

This is one of the first studies that examined an assessment developed specifically to reflect children’s performance on a particular state standard related to approaches to learning. Thus, aligned with the need for valid and reliable instruments that are easy for parents/guardians to complete (Diamond et al., 2013), we developed a 13-item instrument based on the AELS (Arizona Department of Education, 2005) that examined approaches-to-learning behaviors among kindergarteners. Additionally, to our knowledge, this is one of the first research efforts to investigate the DECA’s approaches-to-learning dimension. Although the DECA is a widely used tool that measures the socioemotional resilience of young children, we have found no studies in the literature that explore the approaches-to-learning component of the instrument.

In previous analyses (Barbu et al., 2015), we found that the 13-item AtL rating scale has a valid and reliable one-factor structure and can be used reliably to assess children’s approaches-to-learning behaviors. In this study, our purpose was to ascertain the extent to which the AtL and DECA instruments measured similar approaches-to-learning domains (Arizona Department of Education, 2005; LeBuffe & Naglieri, 1999a, 1999b) based on parents’/guardians’ perceptions of their children. Through an analysis of a combined DECA-AtL model, we found that although the two instruments use different Likert rating scales, they measured a similar approaches-to-learning domain; used simultaneously, they are therefore redundant. Our findings also supported the content validity of the AtL instrument, based on a comparison of the AtL items with those of the AELS and DECA (see Table 1) and on a construct-level analysis of the DECA and AtL instruments.
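The degree of redundancy can be made concrete by converting the latent correlations reported in the Results (.74 to .85 in absolute value) into shared variance. The sketch below assumes that the overlap is the squared correlation between two latent factors; the function name is ours.

```python
def shared_variance(r):
    """Proportion of variance shared by two factors with correlation r."""
    return r ** 2

# Smallest and largest absolute AtL-DECA factor correlations reported in the Results.
for r in (0.74, 0.85):
    print(f"|r| = {r:.2f} -> shared variance = {shared_variance(r):.0%}")
```

Under this assumption, the two bounds correspond to the overlap of 55% to 72% reported in the abstract.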

Unfortunately, an analysis at the item level between these two instruments was not possible because the DECA’s technical manual (LeBuffe & Naglieri, 1999a, 1999b) does not identify which of its items are included in the approaches-to-learning domain. Moreover, our finding that the DECA subscales (i.e., self-control, initiative, attachment, and behavioral concerns) correlated strongly with the AtL calls into question their uniqueness in measuring broader aspects of social and emotional development, in addition to the narrower approaches-to-learning construct that the DECA states it measures (Devereux Foundation, 2003). In a previous analysis (Barbu et al., 2012), we found that the DECA subscales of self-control, initiative, and attachment lacked discriminant validity; therefore, scores on these subscales should be viewed with great caution when making decisions about children’s performance or resilience. Thus, we invite the DECA’s authors to test and reevaluate the content and structural validity of their instrument, and we encourage researchers to explore the approaches-to-learning dimension of the DECA instrument in behavior and psychoeducational studies.

The statistical procedures used in this study provided an improved set of techniques: (a) to appropriately mitigate missing parent/guardian data and increase sample size; (b) to obtain the most accurate estimated model fit between DECA’s parent/guardian empirical data and its hypothesized measurement model, which indicated that DECA’s model as a whole was consistent with these data; and (c) to demonstrate evidence that AtL and DECA questionnaires correlate and both measure the approaches-to-learning domain. Moreover, using CFA with the

Limitations

This study has three potential limitations. First, the item parceling procedure could affect the structural parameter estimates and might bias the estimates of model parameters in some situations, while allowing a slight improvement in model fit. However, researchers have demonstrated that parcels do not outperform models based on individual items (Hau & Marsh, 2004). Therefore, we concluded that this technique was appropriate for our study.

A second limitation is that the data consist of ordinal measurement scales. Although Likert scales are widely used to capture ratings from respondents in the social and behavioral sciences, they produce imprecise response measures from a restricted number of categories (e.g., 5-point rating scales); thus, information might be lost due to the limited resolution of the categories (i.e., less precision) (Neibecker, 1984). In our case, considering that the DECA had five measurement categories and the AtL had four, the presence or absence of any additional categories in the Likert scales could lead to different results.

Finally, the

Conclusion

Researchers and program evaluators charged with assessing and evaluating early childhood programs have little guidance in choosing assessments appropriate for measuring early learning standards for particular populations of children in different geographical areas. Also, while standardized instruments designed to measure various abilities in the socioemotional domain exist (Denham, 2006; Denham, Wyatt, Bassett, Echeverria, & Knox, 2009), the purpose of these instruments is primarily clinical. They have not been designed for large-scale administration or to address children’s readiness for kindergarten, as indicated by their progression on specific early learning standards.

In a recent review of research, funded by the Institute of Education Sciences from 2002 to 2006, Diamond et al. (2013) strongly recommended that “research identifying valid and reliable ways to measure children’s skills and capture their learning over time is greatly needed,” and furthermore, “there is a need to develop tools that can be readily used within everyday educational settings by teachers and other practitioners” (p. 29). Similarly, early childhood researchers associated with the Center on the Developing Child at Harvard University (2011) in their report,

Our goal was to create a statistically robust, easily administered assessment that effectively measures the approaches-to-learning domain based on Arizona’s early learning standards, which are similar to the standards adopted by many other states. The question of generalizability to populations in other states and regions, and to assessments derived from other state standards, remains open. Answering this question requires further research to ascertain the AtL’s external validity beyond our sample, based on teacher and parent ratings. Moreover, our intent was to determine whether the DECA and AtL instruments measured similar approaches-to-learning domains based on parent/guardian perceptions of children. In our next analysis, data from teachers’ responses to both instruments will be examined to ascertain whether the relationship between the DECA and AtL instruments is retained.

We suggest that the AtL questionnaire, with its 13 items, serves as a more efficient and accurate tool for data collection of the approaches-to-learning domain than the DECA. However, we need to test this instrument among populations in other states with learning standards that similarly align with those from Arizona.

In addition, future research should evaluate the AtL’s efficacy against other instruments (i.e., Bridging assessment and PLBS) that assess children’s learning behavior and explore its generalizability in measuring the approaches-to-learning domain as an important component of school readiness. At the same time, we invite researchers and practitioners to test the AtL instrument with populations different from or similar to children in Arizona and to evaluate the psychometric properties of this promising tool in behavior and psychoeducational studies.

Authors

OTILIA C. BARBU, PhD, is scientist at SPH Analytics and research associate at the University of Arizona, College of Education. Her research interests include mathematical and statistical modeling, measurement, assessment, and evaluation in education and healthcare.

DAVID B. YADEN, Jr., PhD, is professor of language, reading, and culture in the Department of Teaching, Learning and Sociocultural Studies at the University of Arizona, College of Education. His research interests include early childhood development and assessment, the early acquisition of reading and writing in multilingual environments, and the application of complex adaptive systems theory to the emergence and development of literacy knowledge and ability.

DEBORAH LEVINE-DONNERSTEIN, PhD, is senior lecturer and senior researcher in the Department of Educational Psychology, College of Education, at the University of Arizona. Her research encompasses first-generation undergraduates, witnessed race-ethnic prejudice models, effects of sleep among youth, and early childhood education.

RONALD W. MARX, PhD, is dean and professor of educational psychology at the College of Education at the University of Arizona, where he holds the Lindsey/Alexander Chair in Education. His work in learning and instruction has focused on science education in urban school reform, and more recently, measurement issues in early childhood education.