Abstract

This study investigated whether sustained listening effort (sLE) contributes to measurable changes in fatigue, defined here as a decline in performance and/or motivation to sustain effort over time, and in fatigue-related sleepiness, defined as a physiological state indexed by spontaneous pupil fluctuations. We further evaluated whether the Pupil Unrest Index (PUI) can serve as an objective marker of these processes. Twenty young adults with normal hearing completed a sustained listening task under speech-on-speech masking while simultaneously performing a secondary memory task, tested across four acoustic processing conditions. PUI increased from pre- to posttest in most participants, with the largest rise in the most difficult (unprocessed colocated) condition. Subjective fatigue ratings also increased across sessions, though without systematic differences between conditions. Behavioral results showed sequence effects: performance declined in intermediate conditions, whereas it remained stable in the easiest and hardest conditions, suggesting that both fatigue and motivational regulation shaped outcomes. Together, these findings indicate that sLE is associated with increased subjective fatigue and fatigue-related sleepiness. They further support the PUI as a promising objective physiological marker of fatigue-related sleepiness associated with sLE, complementing subjective and behavioral assessments.

Introduction

Listening in adverse acoustic environments is often effortful and can become exhausting, particularly for individuals with hearing impairment. In recent years, researchers have increasingly examined not only the momentary listening effort required to understand speech in challenging situations (McGarrigle et al., 2014; Pichora-Fuller et al., 2016), but also the sustained listening effort (sLE) needed to maintain performance over extended periods of time (Fiedler et al., 2021). Understanding how sLE impacts listeners is important because it may contribute to downstream consequences such as fatigue and reduced motivation.

Defining Fatigue and Sleepiness

Fatigue is a multidimensional construct that can be broadly described as a subjective, motivational, or performance-related decline in the capacity to sustain effort over time (Hornsby, 2013; McGarrigle et al., 2014). In the context of listening, fatigue has been operationalized as declining speech understanding (accuracy) or slower responses across the duration of a task, as well as increasing subjective ratings of tiredness or reduced motivation. By contrast, sleepiness refers to a physiological state characterized by an increased propensity to fall asleep. A well-established marker of sleepiness is spontaneous pupil fluctuation, also known as pupil unrest or hippus (Lowenstein et al., 1963; Schumann et al., 2017). This phenomenon has been formalized by Lüdtke et al. (1998) in the Pupil Unrest Index (PUI), which quantifies spontaneous oscillations in pupil diameter over extended recordings in darkness. The PUI has been widely used in sleep and occupational research as an objective indicator of sleepiness and fatigue, but it has rarely been applied in the context of hearing research. Importantly, tonic pupil activity is also influenced by circadian processes and time-of-day effects. Previous research has shown that spontaneous pupil oscillations vary across the day, reflecting fluctuations in arousal and vigilance (e.g., Lowenstein et al., 1963; Morad et al., 2000). When interpreting PUI changes, potential diurnal influences must therefore be considered alongside task-induced fatigue effects.

In the present study, sleepiness indexed by the PUI is interpreted as one physiological manifestation associated with sustained effort–related fatigue rather than fatigue per se.

Measuring Listening Effort

A wide variety of methods have been proposed to quantify listening effort, ranging from subjective rating scales to behavioral dual-task paradigms and physiological indices such as pupillometry and EEG (Gagné et al., 2017; Ohlenforst et al., 2017). Behavioral measures, such as reduced performance on a secondary task, are commonly interpreted as indicators of effort during a primary task. However, the relationship between these measures and longer-term states such as fatigue is complex and often inconsistent (Alhanbali et al., 2017; Holman et al., 2021). Subjective ratings capture listeners’ internal experience but are prone to biases (Moore & Picou, 2018) and may not align with physiological measures (Alhanbali et al., 2019).

Pupil Unrest as a Novel Measure

Applying the PUI to sLE research may provide a bridge between cognitive hearing science and fatigue research. Importantly, different research communities have referred to spontaneous pupil oscillations using varying terms, including hippus and fatigue waves (Turnbull et al., 2017; Winn et al., 2018). Better understanding how these physiological markers relate to sLE is necessary to validate the PUI as a robust, objective tool in hearing research.

Experimental Design Considerations

To examine the impact of sLE under varying acoustic demands, we employed a speech recognition task with a concurrent memory task to ensure sustained cognitive engagement throughout the experiment. The dual-task structure was selected to create a sustained and cognitively demanding listening context over time, rather than to derive a strict dual-task cost measure of listening effort in isolation. We further manipulated acoustic difficulty using four signal-processing conditions: (a) unprocessed colocated target and maskers, a baseline with very high difficulty and no spatial segregation cues; (b) unprocessed spatially separated target and maskers, a less effortful configuration due to spatial cues; (c) stimuli processed using an ideal binary mask (IBM), an oracle algorithm known to greatly reduce listening effort (Brungart et al., 2006) but not feasible in hearing aids; and (d) stimuli processed by a binaural minimum-variance distortionless response (BMVDR) beamformer, a realistic algorithm providing spatial enhancement while preserving binaural cues (Marquardt & Doclo, 2018). These conditions were included because they represent distinct levels of acoustic task demands (Rennies et al., 2019). In line with prior research, we assumed that higher acoustic demands would elicit greater listening effort, which we operationalized through subjective, behavioral, and physiological indices. This allowed us to test whether spatial and algorithmic cues differentially influence the development of fatigue (Marquardt & Doclo, 2018; Rennies et al., 2019). Each processing approach constituted a distinct experimental condition, and the algorithms were not combined with the unprocessed spatial configurations. An overview of the experimental procedure is provided in Figure 1.

Overview of study design. Trials (right column) consisted of the presentation of the secondary (memory) task, the presentation of the primary (reproduction) task, the reproduction of the primary task, and the reproduction of the secondary task. Each block (middle column) consisted of 20 trials and was followed by a categorical listening-effort rating (CALES), which was taken from Krueger et al. (2017) and modified for our purpose. The signal-to-noise ratio (SNR) was fixed for each block, and the different SNR conditions were randomly assigned to the blocks. During each session (left column), the main part of the testing session was preceded and followed by the Pupil Unrest Index (PUI) and the Multidimensional Fatigue Inventory (MFI) measurement. The MFI was also measured in the middle of the session.

Aims and Hypotheses

The present study investigated whether sLE leads to changes in fatigue and sleepiness and whether the PUI can serve as an objective physiological marker of these changes. Specifically, we hypothesized that:

By testing these hypotheses, our study aims to clarify how sLE contributes to fatigue and sleepiness, and to evaluate whether the PUI offers a practical and objective marker for use in hearing research.

Method

Participants

Twenty young adults (18 women, two men; age range 19–29 years) with self-reported normal hearing completed the study. Two additional participants began but did not complete the sessions due to scheduling conflicts and were not included in the final sample. All participants were native German speakers, received financial compensation (€12/hr), and provided written informed consent. They were instructed to abstain from caffeine for 6 hr prior to testing. This interval is consistent with prior psychophysiological research on pupillometry and vigilance (e.g., Lüdtke et al., 1998; McLellan et al., 2016), where shorter abstinence periods have been shown to leave residual stimulant effects on pupil size and arousal. This precaution ensured that our PUI measurements were not confounded by acute caffeine intake.

Stimuli and Acoustic Conditions

Stimuli were sentences from the German Oldenburg Matrix test (OlSa; Wagener et al., 1999). These sentences are spoken by a male speaker and have the fixed structure of name word, verb, numeral, adjective, object. For each word, 10 alternatives are available, and the words can be randomly combined to produce syntactically correct but semantically unpredictable sentences which cannot be easily memorized by the subjects. Target sentences were presented together with two different interfering male talkers. Interferers were generated by concatenating three random matrix sentences with randomly chosen starting points to ensure unpredictability. Spatialization was implemented using head-related impulse responses (HRIR; Kayser et al., 2009). The target was always presented from 0°, while the two interferers were either colocated (both at 0°) or spatially separated to the left (90°) and the right (−90°) of the listener.

Four processing conditions were used in the present study: two unprocessed conditions without algorithmic modifications (spatially separated and colocated, as described above) and two with speech enhancement algorithms. The IBM algorithm operated under the assumption of perfect a priori knowledge of the target and masker signals (“oracle knowledge”). It compared target and masker signals in time–frequency bins and discarded bins in which the target energy was lower than that of the combined maskers (Brungart et al., 2006). The BMVDR algorithm with partial noise estimation (Marquardt & Doclo, 2018) employed a binaural beamformer. This algorithm mixes part of the original signal back into the processed output to preserve binaural cues. The unprocessed colocated condition served as a difficult baseline lacking spatial cues, whereas the unprocessed spatially separated condition was expected to impose lower acoustic task demands due to spatial release from masking. Previous research has shown that spatial separation improves speech intelligibility (e.g., Brungart et al., 2006) and reduces subjective listening effort (Rennies et al., 2019). The IBM condition represented an upper-performance benchmark that strongly reduces listening effort but cannot be implemented in real hearing aids, whereas the BMVDR condition provided a realistic algorithmic enhancement aiming to improve spatial listening while preserving binaural cues. The main motivation for including these four conditions was to examine whether the presence of spatial cues or algorithmic enhancement influences the degree to which sLE leads to fatigue.
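The IBM selection rule described above can be illustrated with a minimal sketch. It assumes that the target and the summed maskers are already available as time–frequency energy matrices (rows are time frames, columns are frequency bins). The function names and the toy values are hypothetical and do not reproduce the implementation used in the study.

```python
def ideal_binary_mask(target_energy, masker_energy):
    """Return a 0/1 mask: 1 keeps a time-frequency bin, 0 discards it.

    A bin is discarded when the target energy is lower than the energy
    of the combined maskers, as described in the text.
    """
    mask = []
    for t_row, m_row in zip(target_energy, masker_energy):
        mask.append([0 if t < m else 1 for t, m in zip(t_row, m_row)])
    return mask


def apply_mask(mixture, mask):
    """Multiply each bin of the mixture by the corresponding mask value."""
    return [[x * k for x, k in zip(x_row, k_row)]
            for x_row, k_row in zip(mixture, mask)]


# Toy example with two time frames and two frequency bins (arbitrary units).
target = [[4.0, 0.5], [1.0, 2.0]]
masker = [[1.0, 2.0], [1.0, 3.0]]
mask = ideal_binary_mask(target, masker)
masked_mixture = apply_mask([[5.0, 2.5], [2.0, 5.0]], mask)
```

Because the mask requires separate access to target and masker energies, it can only be computed in the laboratory, which is why the IBM serves as a benchmark rather than a deployable algorithm.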

Procedure

Pretest: Individual speech recognition thresholds (SRT50), that is, signal-to-noise ratios (SNRs) required to obtain 50% word intelligibility, were determined adaptively in the unprocessed colocated condition. Relative to this individual baseline, six SNRs (−3, 0, +5, +10, +15, +25 dB) were employed in the main study. SNRs were computed as the differences in RMS levels of the target relative to the summed interferers after the HRIR convolution (before signal processing). The inclusion of higher SNRs (+15, +25 dB) was intentional to ensure ceiling-level intelligibility while still probing listening effort. This choice follows prior work (Rennies et al., 2019), which showed that listening effort remains sensitive even in positive SNR ranges where intelligibility is already at ceiling. Our study therefore deliberately emphasized the easier end of the SNR spectrum—compared with many speech intelligibility studies—so as to capture effort-related effects of the tested algorithms.
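The SNR definition above (difference in RMS levels between the target and the summed interferers) and the individualized SNR grid can be sketched as follows. This is an illustration under stated assumptions: signals are plain lists of samples taken after HRIR convolution, and the function names and the example SRT50 of −8 dB are hypothetical.

```python
import math


def rms_level_db(signal):
    """RMS level of a signal in dB (relative to an amplitude of 1)."""
    mean_square = sum(x * x for x in signal) / len(signal)
    return 10.0 * math.log10(mean_square)


def snr_db(target, summed_interferers):
    """SNR as the difference between target and interferer RMS levels."""
    return rms_level_db(target) - rms_level_db(summed_interferers)


def presentation_snrs(srt50_db, offsets_db=(-3, 0, 5, 10, 15, 25)):
    """Shift the six SNR offsets by the listener's individual SRT50."""
    return [srt50_db + offset for offset in offsets_db]


# Hypothetical listener whose adaptive pretest yielded an SRT50 of -8 dB.
snr_grid = presentation_snrs(-8.0)
```

Anchoring the grid to each listener's SRT50 means that a nominal offset of 0 dB corresponds to roughly 50% word intelligibility in the unprocessed colocated condition for every participant.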

Main sessions: Each participant completed four sessions (one per processing condition), separated by at least 24 hr. The order of conditions was counterbalanced. To achieve this, all 24 possible permutations of the four algorithmic conditions were generated. Four permutations, each starting with a different condition, were removed to ensure that every condition was represented equally often in the first session across participants. Because the first session was expected to exert the strongest influence on subsequent performance and the sample was limited to 20 participants, this balancing was necessary. One of the remaining permutations was then randomly assigned to each participant.
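The counterbalancing scheme described above can be sketched in a few lines. Two details are assumptions, because the text does not specify them: which permutation is dropped for each starting condition (chosen at random here) and whether orders were assigned without replacement (assumed here, so that each of the 20 remaining orders is used exactly once). The condition labels and function names are illustrative.

```python
import itertools
import random

CONDITIONS = ("colocated", "separated", "IBM", "BMVDR")


def balanced_orders(rng):
    """Return 20 of the 24 permutations, 5 per starting condition."""
    perms = list(itertools.permutations(CONDITIONS))  # all 24 orders
    kept = []
    for first in CONDITIONS:
        group = [p for p in perms if p[0] == first]  # 6 orders per start
        group.remove(rng.choice(group))  # drop one (assumed: at random)
        kept.extend(group)
    return kept


def assign_orders(participants, rng):
    """Randomly assign one remaining order to each participant."""
    orders = balanced_orders(rng)
    rng.shuffle(orders)  # assumed: assignment without replacement
    return dict(zip(participants, orders))


assignment = assign_orders(range(1, 21), random.Random(0))
```

With 20 participants and 20 remaining orders, each condition appears in the first session exactly five times, which is the balance the procedure was designed to achieve.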

The secondary memory task was deliberately designed to be demanding in order to prevent monotony and maintain cognitive engagement over the extended sessions. Unlike simpler vigilance-type tasks, this choice ensured that participants continuously allocated resources, which was essential to capture fatigue-related changes. Most importantly, the challenging task provided a sensitive way to examine how sLE contributes to fatigue, which was the primary aim of the present study. While this demanding design may limit generalizability to other populations, it was appropriate for our specific research question.

Each trial consisted of two target sentences from the Oldenburg Matrix corpus. The first sentence served as the secondary (memory) task and was presented without any maskers at 65 dB SPL. Participants were instructed to remember only the first, third, and fifth words (name, numeral, and object) to keep the memory load manageable. The second sentence constituted the primary (speech recognition) task and was presented with two interfering talkers under one of the four acoustic processing conditions. After both sentences had been played, participants reproduced them in reverse order, first the masked (primary) sentence in full and then the three words of the unmasked (secondary) one, so that both tasks required sustained attention and sequential memory. Trials were separated by auditory beeps that signaled when to respond.

Trials were grouped into blocks of 20 sentences, each presented at a constant SNR. The six SNR levels were randomly assigned to the blocks within each session. Each of the four signal-processing conditions was tested in a separate session lasting about 75 min.

Responses were transcribed by an automatic speech recognition (ASR) system previously validated for the OlSa corpus, where it showed robust and reliable performance across a wide range of speech-in-noise conditions (Ooster et al., 2018). This prior validation supports the use of its output as a reference in the present study. Recognized words and timestamps were used to compute accuracy and response times.

Subjective Measures

After each block (see Figure 1), participants rated listening effort using a categorical listening effort scale, adapted from the ACALES (Adaptive Categorical Listening Effort Scaling) procedure (Krueger et al., 2017). Subjective fatigue was assessed 3 times per session (start, midpoint, end) with a shortened six-item version of the Multidimensional Fatigue Inventory (MFI; Smets et al., 1995; German version: Kuhnt, 2020). The six items were selected to capture state-related aspects of fatigue that are sensitive to short-term fluctuations within a session. Given the within-session design of the present study, items reflecting more stable, trait-like dimensions of fatigue were excluded to ensure that the measure was appropriate for detecting transient changes over time. The full item set is provided in the Supplemental Materials. To reduce the influence of between-participant differences in initial fatigue, MFI state scores were baseline-corrected (post minus pre) within each session, yielding a within-person change measure. Random intercepts for subjects in the mixed-effects models additionally accounted for residual interindividual variability.

Physiological Measures

Fatigue-related sleepiness was indexed by the PUI (Lüdtke et al., 1998). Pupillograms were recorded for 11 min in complete darkness before and after each session with a GP3 HD eye tracker (Gazepoint, 2021). Participants fixated a dim central cross throughout. Time-of-day was not experimentally controlled. Because PUI was analyzed as a within-session pre–post change, each participant served as their own baseline, reducing the influence of slow diurnal fluctuations on condition comparisons.

We deliberately restricted PUI measurements to before and after each session, because repeated recordings during the experiment could have introduced unpredictable influences on vigilance and fatigue levels.

Data quality was inspected first: datasets with more than 15% missing samples or with periods longer than 5 s of identical values were excluded. Artifact rejection followed Lüdtke et al. (1998): abrupt changes exceeding 0.1 mm within a 400 ms window were marked as missing. The remaining data were smoothed with a moving average of 39 samples. To quantify pupil unrest, absolute changes in pupil diameter were computed within 82-s segments. For each segment, the cumulative absolute changes in pupil diameter were then scaled by a factor of 60/82 to yield a standardized value per minute. The final PUI was defined as the mean of all valid segments. Higher PUI values indicate stronger spontaneous oscillations of pupil size and thus greater sleepiness.
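The smoothing and segment-wise computation described above can be sketched as follows. Several points are assumptions rather than details from the study: artifact rejection is taken as already done, with rejected samples stored as `None`; the sampling rate `fs_hz` must be supplied by the caller because it is not stated here; absolute changes are taken between successive smoothed samples; and a segment counts as valid only if it contains no missing values. The function names are hypothetical.

```python
def moving_average(values, window=39):
    """Moving average over complete windows; None where a window has gaps."""
    smoothed = []
    for i in range(len(values) - window + 1):
        chunk = values[i:i + window]
        smoothed.append(None if None in chunk else sum(chunk) / window)
    return smoothed


def pupil_unrest_index(diameter_mm, fs_hz, segment_s=82.0, window=39):
    """Mean cumulative absolute diameter change per minute (mm/min)."""
    smoothed = moving_average(diameter_mm, window)
    seg_len = int(round(segment_s * fs_hz))  # samples per 82-s segment
    per_minute = []
    for start in range(0, len(smoothed) - seg_len + 1, seg_len):
        segment = smoothed[start:start + seg_len]
        if None in segment:
            continue  # assumed validity rule: skip segments with gaps
        cumulative = sum(abs(b - a) for a, b in zip(segment, segment[1:]))
        per_minute.append(cumulative * 60.0 / segment_s)  # scale by 60/82
    if not per_minute:
        return None  # no valid segment available
    return sum(per_minute) / len(per_minute)
```

Called on the pre- and postsession recordings of one session, the difference between the two returned values gives the within-session change analyzed in the Results. Absolute values depend on the sampling rate and smoothing window, so numbers from this sketch are not directly comparable with published PUI norms.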

Reaction Time Preprocessing

Stimulus presentation and response recordings were synchronized offline using an external marker. Each response phase began with a 500-ms beep that served both as a participant cue and as a time stamp for later alignment. Speech responses were continuously recorded and subsequently processed. Reaction times were defined as the interval between the end of stimulus presentation (as marked by the beep) and the onset of the first spoken word; word-by-word timings were extracted for the remainder of each response. The ASR system (Ooster et al., 2018) used these externally marked onsets to align stimulus and response times with high reliability.

Response times shorter than 200 ms or exceeding three standard deviations above a participant's mean were excluded (Loenneker et al., 2024). Analyses were also repeated with log-transformed RTs, which confirmed the same conclusions.
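The exclusion rule above can be expressed compactly. The sketch assumes that the mean and the sample standard deviation are computed once per participant over all of that participant's response times before any exclusion; the text does not state this explicitly. The function name and the example values are hypothetical.

```python
import statistics


def filter_response_times(rts_ms, min_ms=200.0, n_sd=3.0):
    """Keep RTs that are >= min_ms and <= mean + n_sd * SD (per participant)."""
    mean = statistics.mean(rts_ms)
    sd = statistics.stdev(rts_ms)  # sample SD, computed before exclusion
    upper = mean + n_sd * sd
    return [rt for rt in rts_ms if min_ms <= rt <= upper]


# Hypothetical participant: 20 typical RTs, one anticipation, one lapse.
raw = [500.0] * 20 + [150.0, 5000.0]
clean = filter_response_times(raw)
```

Note that the upper bound is one-sided: only slow outliers are removed relative to the mean, whereas fast responses are removed by the fixed 200-ms criterion.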

Statistical Analysis

All analyses were conducted in R 4.3.1 (R Core Team, 2023). Mixed-effects models were fitted using lme4 (Bates et al., 2015) and summarized with easystats (Lüdecke et al., 2020). Multivariate effects were assessed with PERMANOVA from the vegan package (Dixon, 2003). Model assumptions were checked by visual inspection of residuals (Q–Q and scale–location plots).

We used PERMANOVA to evaluate multivariate effects across correlated dependent variables (e.g., accuracy and response times) without assuming multivariate normality, providing a robust complement to the univariate mixed-effects models. For PERMANOVA, R2 values represent the proportion of variance in the distance matrix explained by the tested factor. These are typically small in absolute terms but can still be significant when the observed grouping explains more variation than expected under permutation.

All mixed-effects models included a random intercept for participant to capture between-subject variability. Although random-slope structures were considered, they proved unstable given the modest sample size; we therefore adopted an intercept-only model, consistent with recommendations to avoid overparameterization (Bates et al., 2015; Matuschek et al., 2017). Model diagnostics showed no collinearity or convergence issues, and including or excluding the SRT50 term did not change the fixed-effect estimates. The model structure was specified a priori based on theoretical considerations rather than optimized via AIC or −2LL, as our aim was to test hypothesized effects rather than identify the best-fitting model.

The original a priori sample size calculation was conducted in G*Power 3.1 (Faul et al., 2007) for the within-subject design focusing on the PUI. This yielded a required sample size of 19 participants; accordingly, we measured 20 complete data sets. At the time of planning, power analysis specifically tailored to linear mixed-effects models was not available to us. To address this limitation and in response to reviewer feedback, we conducted follow-up simulations using the R package mixedpower. For each of the linear mixed models reported in this study, we ran 1,000 simulation replicates to estimate statistical power across sample sizes (n = 10, 20, 30, 50, 70, 90, 120, 150) for predefined effect sizes derived from comparable studies. Because our primary interest lay in detecting sequence (time-on-task) effects, we summarize power for the sequence effect here. Using effect sizes approximated from Hornsby (2013), a ∼25% change in response time across the session yields an estimated power of 82.4% with n = 20 for the primary task and 99.7% for the secondary task. For intelligibility, using a ∼4% decline over time estimated from Moore et al. (2017), simulations indicated 63.3% power with n = 150 for the primary task and 79.7% power with n = 30 for the secondary task. For categorical listening effort, we assumed an increase of ∼2 scale steps across the session; simulations indicated 93.3% power with n = 20. Taken together, under the assumed effect sizes and variance structure of our data, a sample size of 20 participants was considered adequate for the principal outcomes (PUI, response times, categorical listening effort). As expected, detecting small declines in intelligibility over time would require larger samples. This post-hoc analysis corroborates our original G*Power-based planning for PUI and clarifies the operating characteristics for the other measures.

Results

Overview

A global analysis confirmed that sLE collectively influenced behavioral, subjective, and physiological measures (PERMANOVA, R2 = .057, p = .028). We therefore examined each outcome domain separately. Complete statistical tables are available in the Supplemental Material. In the main text, we summarize the key findings for each domain. The following sections report the results in relation to the hypotheses outlined in the Introduction (H1–H4). Specifically, H1 and H2 address physiological and subjective fatigue (PUI, MFI), whereas H3 and H4 concern behavioral and condition-specific effects.

Subjective Fatigue (MFI)

MFI ratings increased significantly from baseline to the end of each session (β = −0.34, 95% CI [−0.59, −0.09], p = .008), reflecting a general rise in fatigue. The negative sign of the coefficient is due to coding of the time factor, but in absolute values participants reported more fatigue at the end of each session. Importantly, MFI increases were consistent across all processing conditions, without condition-specific differences. The model explained variance with marginal R2 = .08 and conditional R2 = .70, where the marginal R2 represents variance explained by fixed effects alone and the conditional R2 includes both fixed and random effects (Nakagawa & Schielzeth, 2013). This pattern confirms H2, indicating that sLE was accompanied by increasing subjective fatigue across sessions, although without the expected differentiation between acoustic conditions.
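For a random-intercept model of the kind used here, the two coefficients can be written as shown below, following the general form given by Nakagawa and Schielzeth (2013). The symbols are introduced here for illustration only: \sigma_f^2 denotes the variance of the fixed-effect predictions, \sigma_\alpha^2 the variance of the participant intercepts, and \sigma_\varepsilon^2 the residual variance.

```latex
R^2_{\text{marginal}}
  = \frac{\sigma_f^2}{\sigma_f^2 + \sigma_\alpha^2 + \sigma_\varepsilon^2},
\qquad
R^2_{\text{conditional}}
  = \frac{\sigma_f^2 + \sigma_\alpha^2}{\sigma_f^2 + \sigma_\alpha^2 + \sigma_\varepsilon^2}
```

Under this definition, a large gap between the two values, as in the MFI model (.08 vs. .70), indicates that most of the explained variance is attributable to stable differences between participants rather than to the fixed effects.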

Fatigue-Related Sleepiness (PUI)

The PUI increased from pre- to postsession in most participants (69/80 sessions, see histogram in Supplemental Figure S1). The strongest rise was observed in the unprocessed colocated condition (intercept = 0.214, 95% CI [0.163, 0.265], p < .001; see Figure 2). Compared to this baseline, all other conditions showed significantly smaller increases (e.g., IBM: β = −0.121, 95% CI [−0.191, −0.051], p < .001). The model explained variance with marginal R2 = .13 and conditional R2 = .19.

Fatigue-related sleepiness (Pupil Unrest Index [PUI]). Mean PUI across listening conditions. Values represent low-frequency (0.05–0.3 Hz) pupil oscillations recorded in millimeters (mm); error bars show ± 1 SE. Statistical details are reported in Supplemental Table S3.

These results support H1, showing that the PUI increased over time and was most pronounced in the most difficult (unprocessed colocated) condition, consistent with elevated physiological sleepiness predicted under sustained effort.

Behavioral Performance

Performance patterns across primary and secondary tasks are summarized in Figure 3, which visualizes accuracy across the two task domains. For the primary speech recognition task, accuracy improved with SNR (β = 0.13, 95% CI [0.11, 0.14], p < .001) as expected. Sequence effects indicated condition-specific declines: performance significantly decreased over time in the unprocessed separated (β = −0.16, p = .004) and BMVDR (β = −0.14, p = .011) conditions, but not in the easiest (IBM) or hardest conditions (unprocessed colocated). The model explained variance with marginal R2 = .36 and conditional R2 = .70.

Behavioral accuracy (primary and secondary tasks). Accuracy in the primary (top, a and b) and secondary (bottom, c and d) tasks. Panels on the left (a and c) illustrate effects of sequence, and panels on the right (b and d) illustrate effects of SNR. Full model results are provided in Supplemental Table S3.

For the secondary memory task, accuracy declined significantly over time (Sequence: β = −0.04, 95% CI [−0.07, −0.02], p = .002), indicating a general fatigue effect. Performance in the secondary task also benefited from higher SNRs in the primary task (β = 0.01, 95% CI [0.01, 0.02], p < .001). Interactions with algorithmic condition were inconsistent: while some comparisons (e.g., IBM) showed small advantages, most condition-specific effects were weak or nonsignificant. The model explained variance with marginal R2 = .04 and conditional R2 = .79.

This partially confirms H3, as performance declined across blocks, although response times shortened rather than increased. The condition-specific declines further partially align with H4, showing the strongest effects in intermediate difficulty conditions instead of a monotonic ranking with increasing condition difficulty.

Response Times

Across both tasks, response times shortened over the course of the session (primary task: β = −0.008, 95% CI [−0.013, −0.002], p = .001; secondary task: β = −0.014, 95% CI [−0.020, −0.008], p < .001). Detailed results by condition are provided in the Supplemental Material.

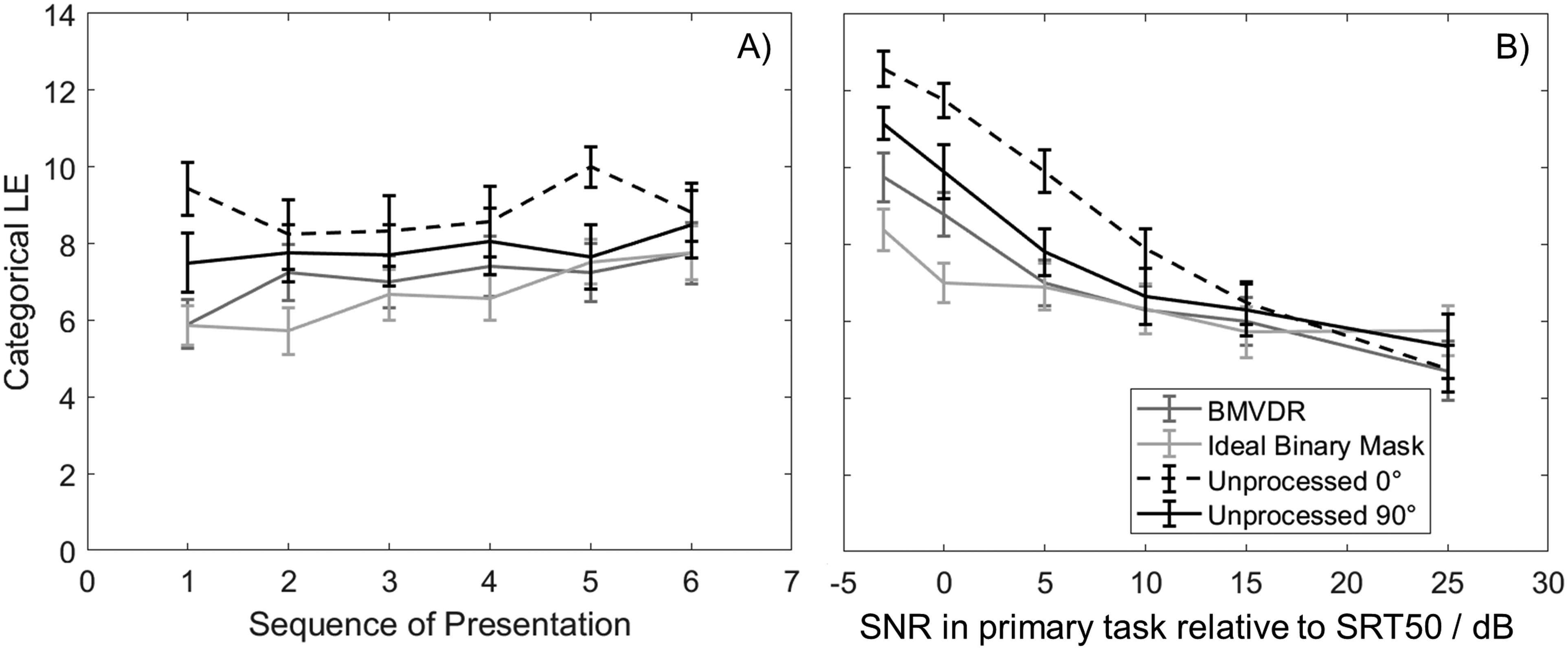

Perceived Listening Effort (CALES)

Figure 4 shows how categorical listening-effort ratings depended on SNR (i.e., task difficulty) and sequence. Categorical listening-effort ratings (CALES) provided fine-grained insights into how the experimental conditions modulated subjective listening effort across both factors. As expected, SNR strongly influenced perceived effort (β = −0.29, 95% CI [−0.33, −0.26], p < .001), with poorer SNRs yielding higher ratings. Over time, CALES ratings also increased significantly in several conditions, most prominently for the IBM (β = 0.52, 95% CI [0.26, 0.78], p < .001) and BMVDR (β = 0.36, 95% CI [0.10, 0.62], p = .007). In other words, listeners reported escalating effort as the session progressed, particularly under intermediate demands. By contrast, the unprocessed colocated condition elicited consistently high ratings without further increase, indicating a ceiling effect. The model explained variance with marginal R2 = .35 and conditional R2 = .76.

Categorical listening-effort ratings (CALES). Mean categorical ratings of perceived listening effort across acoustic processing conditions and SNR levels. Ratings reflect perceived task difficulty on a 13-point categorical scale (1 = no effort, 13 = extreme effort). Full model results are provided in Supplemental Table S2.

These findings also support H4, as the interaction of condition and sequence revealed the predicted gradient from high-effort (unprocessed colocated) to low-effort (IBM) conditions, confirming that perceived listening effort and fatigue-related measures varied systematically with acoustic difficulty.

Discussion

The aim of this study was twofold: first, to examine how sustained listening under varying acoustic task demands relates to changes in behavioral, subjective, and physiological indicators within a structured dual-task listening context; and second, to investigate whether the PUI, a physiological measure traditionally used to index sleepiness, could serve as an objective marker of sLE-induced fatigue in auditory research.

Our results provide evidence that sustained listening under challenging speech-on-speech masking conditions leads to measurable increases in both subjective fatigue and physiological signs of sleepiness.

Importantly, subjective listening effort ratings varied systematically across acoustic conditions and mirrored the pattern observed in fatigue-related measures. Conditions associated with higher perceived effort were also associated with greater increases in subjective fatigue and fatigue-related sleepiness. Although our design does not allow a strict causal dissociation between acoustic task demands and effort investment, this converging multimodal pattern supports an association between sLE and fatigue-related processes.

Notably, the observed effects were not uniform across measures: while subjective fatigue (MFI) rose consistently, it did not differentiate between processing conditions, whereas PUI values showed condition-specific increases. This highlights the potential of the PUI as a promising tool for tracking listening-related fatigue in future studies.

Most participants exhibited an increase in PUI over time, with the strongest effect in the most demanding condition (unprocessed colocated). This supports the interpretation that PUI is sensitive not only to circadian or general fatigue, but also to cognitive load imposed by challenging listening. At the same time, the dissociation between PUI and behavioral measures indicates that PUI provides complementary information. For example, while performance in the hardest condition remained consistently poor, PUI values rose substantially—indicating accumulating fatigue-related sleepiness even without further behavioral decline.

One useful way to consider these differences across conditions could be provided by the framework of motivational fatigue (Hockey, 2013), which assumes that listeners continuously balance expected rewards against the subjective cost of sustained effort. When success remains achievable, effort is maintained; when the likelihood of improvement diminishes, effort is gradually withdrawn. Our data align with this dynamic adjustment model.

A more detailed interpretation of these condition-specific patterns helps to clarify the motivational mechanisms at play. In the most difficult condition (unprocessed colocated), participants faced a comparatively difficult task with low intelligibility. Nevertheless, PUI values increased strongly while behavioral performance remained stable, suggesting that participants maintained high effort investment despite limited success. This persistent effort may reflect an internally maintained performance standard to avoid failure or to maintain an above-average performance level (Alicke, 1985; Zell et al., 2020). Such “overinvestment” is a characteristic feature of motivational fatigue, in which individuals sustain effort to uphold self-efficacy even when the likelihood of success is low.

By contrast, in the intermediate conditions (unprocessed separated and BMVDR), performance declined and response times shortened, indicating a strategic reduction of effort once listeners recognized diminishing returns. This pattern exemplifies adaptive effort withdrawal, a shift toward faster but less precise responses when the cost of continued exertion outweighs expected gains. In the easiest condition (IBM), performance and effort ratings remained stable, implying that the task required minimal motivational regulation. Together, these gradients support a nonlinear relationship between task difficulty and fatigue: fatigue and motivation interact dynamically, rather than fatigue simply increasing monotonically with load. Such findings align with theoretical accounts proposing that sLE reflects a continuous re-evaluation of effort investment under changing perceived utility (Hockey, 2013; Pachella, 1973).

Subjective measures offered mixed evidence: the MFI captured general increases in fatigue but was insensitive to condition-specific effects, while the categorical listening effort ratings tracked both SNR and sequence effects more closely.

This divergence emphasizes the importance of a multimodal approach, as recommended in recent reviews (e.g., Alhanbali et al., 2018; McGarrigle et al., 2014).

Interestingly, response times decreased across sessions in both the primary and secondary tasks. Although such speeding could partly reflect task familiarization, accuracy did not improve across conditions and tended to decline under higher acoustic demands. This pattern is consistent with a shift in response strategy over time, potentially reflecting a speed–accuracy tradeoff (Pachella, 1973) under sustained task demands. Thus, faster responses should not be interpreted uncritically as improved efficiency, as they may also indicate reduced effort investment. However, the present design does not allow us to fully disentangle practice effects from strategic adjustment.

Taken together, these findings underscore that no single measure is sufficient to capture the complexity of listening-related fatigue. Instead, combining subjective (MFI, CALES), behavioral (accuracy, RT), and physiological (PUI) measures provides a more robust understanding.

From a theoretical standpoint, these findings extend motivational fatigue theory to sustained listening contexts. They show that fatigue manifests differently depending on whether listeners perceive continued effort as worthwhile. Such condition-dependent effort regulation bridges subjective and physiological domains and provides insights into why fatigue-related declines are not always linear with task difficulty. In future work, formal modeling of motivation and effort investment could help quantify these adaptive processes and link them more directly to hearing-aid benefit and individual differences in listening goals.

Some limitations of the present study must be acknowledged. Not all participants exhibited increases in PUI; in some cases, levels remained stable or even decreased. This variability may be explained by individual differences in circadian rhythm, motivational state, or pupil responsivity. Future studies should examine baseline pupil responsiveness to better interpret such cases. Time-of-day was not experimentally controlled. Diurnal fluctuations in tonic pupil activity may therefore have contributed to variability. However, because PUI was analyzed as a within-session change, slow circadian drifts are unlikely to account for the condition-specific effects observed here.
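The cancellation argument for within-session change scores can be illustrated with a minimal sketch. It assumes, hypothetically, that time of day adds the same constant offset to the pre- and posttest PUI of a session. The function name, the offset, and the PUI values are invented for illustration and are not code or data from this study.

```python
def within_session_change(pre_pui, post_pui):
    """Posttest minus pretest PUI for a single session."""
    return post_pui - pre_pui

# Hypothetical pre/post PUI values (arbitrary units), not study data.
pre_pui, post_pui = 4.0, 7.0

# Assumed diurnal offset that shifts both measurements of a session equally.
diurnal_offset = 1.5

change_morning = within_session_change(pre_pui, post_pui)
change_afternoon = within_session_change(pre_pui + diurnal_offset,
                                         post_pui + diurnal_offset)

# The constant offset cancels in the difference score.
assert change_morning == change_afternoon
print(change_morning, change_afternoon)
```

A drift that changes appreciably between pre- and posttest would not cancel in this way, which is why the argument covers only slow drifts.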

Moreover, the sample was relatively small and homogeneous, consisting of young, normal-hearing adults, which limits generalizability.

Another limitation concerns the use of a shortened version of the MFI. Using only selected items is acceptable for research purposes as long as the resulting scores are not interpreted against normative reference data. This approach allowed us to reduce participant burden while maintaining sensitivity to fatigue-related changes.

We also acknowledge that the secondary memory task may represent another limitation. Our design required participants to reproduce two target sentences in reversed presentation order (i.e., first repeat the second, then the first sentence). This procedure ensured continuous cognitive engagement and helped prevent monotony across the long sessions, which was essential to capture fatigue-related changes. While effective for our specific research aims in a young normal-hearing sample, such a demanding task may be less suitable for older or hearing-impaired listeners, limiting the generalizability of the current paradigm. Future studies may adapt the secondary task difficulty to target populations while preserving sensitivity to motivational fatigue.

Finally, although PUI proved sensitive to task difficulty, its specificity as a marker of listening-related fatigue remains to be validated in more diverse populations and settings.

In conclusion, this study demonstrates that sLE contributes to both subjective fatigue and physiological sleepiness, with PUI emerging as a sensitive indicator of listening-related fatigue. The results highlight the need to consider motivational factors in interpreting fatigue effects, and they provide initial evidence that PUI may complement behavioral and self-report measures in capturing hidden costs of listening. These insights carry implications for hearing research and technology development, particularly in evaluating whether hearing devices can reduce cognitive load and protect against listening-related fatigue.

Supplemental Material

Supplemental material, sj-docx-1-tia-10.1177_23312165261441694 for The Relation Between Sustained Listening Under Difficult Conditions and Behavioral, Subjective, and Physiological Indicators of Fatigue by Ewald Strasser, Thomas Brand and Jan Rennies in Trends in Hearing

Footnotes

Authors' Note

All authors approved the final manuscript as submitted and agree to be accountable for all aspects of the work.

Acknowledgments

This project was funded by the Deutsche Forschungsgemeinschaft (German Research Foundation), Projektnummer 352015383, SFB 1330 A1.

Ethics Approval Statements

The Ethics Committee of the University of Oldenburg waived the need for ethics approval for the collection, analysis and publication of the obtained and anonymized data for this noninterventional study based on the initial application (Drs.-Nr. 04/2018) for the Sonderforschungsbereich SFB TRR 31 “Das aktive Gehör,” Teilprojekt B1: “Modelling signal processing in auditory scene analysis.”

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded by the Deutsche Forschungsgemeinschaft (German Research Foundation)—(Project No. 352015383-SFB 1330 A1).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Data sharing not applicable to this article as no datasets were generated or analyzed during the current study.

References
