Abstract

Within the field of hearing science, pupillometry is a widely used method for quantifying listening effort. Its use in research is growing exponentially, and many labs are (considering) applying pupillometry for the first time. Hence, there is a growing need for a methods paper on pupillometry covering topics ranging from experiment logistics and timing to data cleaning and the choice of parameters to analyze. This article contains the basic information and considerations needed to plan, set up, and interpret a pupillometry experiment, as well as commentary on how to interpret the pupil response. Included are practicalities such as the minimal system requirements for recording a pupil response, specifications for peripheral equipment, experiment logistics and constraints, and different kinds of data processing. Additional details include participant inclusion and exclusion criteria and some methodological considerations that might not be necessary in other auditory experiments. We discuss what data should be recorded and how to monitor data quality during recording in order to minimize artifacts. Data processing and analysis are considered as well. Finally, we share insights from the collective experience of the authors and discuss some of the challenges that still lie ahead.

Introduction

Goal and Overview of This Article

In this introductory article, we offer advice on how to understand and incorporate pupillometry (the measurement of pupil size) as a measure of listening effort. The target audience includes researchers who have considered using pupillometry but might not be familiar with the technical or logistical challenges involved. To provide a standard set of recommendations, the authors have collected their shared experiences in this article, including both good practices and pitfalls. Original hypothesis-driven research can be found in numerous other publications and elsewhere in this special issue. But the

The attraction of pupillometry is that changes in pupil dilation appear to distinguish more effortful from less effortful cognitive tasks across a wide variety of domains (Beatty, 1982), including domains that do not involve speech intelligibility. Pupil dilation scales with mathematical ability (Ahern & Beatty, 1979), short-term memory capacity (Klingner, Tversky, & Hanrahan, 2011; Zekveld, Kramer, & Festen, 2011), Stroop task interference (Laeng, Ørbo, Holmlund, & Miozzo, 2011), and resolving ambiguity in language (Vogelzang, Hendriks, & van Rijn, 2016). Pupillometry therefore has the potential to add value to assessments of speech perception, especially where tasks might impose different amounts of cognitive load that are not clearly distinguished by task accuracy.

One of the most important things to note about pupillometry is that pupil size is not a monotonic, direct index of effort but rather a complicated mixture that reflects the combined contributions of the autonomic nervous system (ANS; Zekveld, Koelewijn, & Kramer, 2018). For cognitively evoked dilations, the response is nonlinear (Ohlenforst et al., 2017; Wendt, Koelewijn, Książek, Kramer, & Lunner, 2018); dilations are small for easy tasks but also small for

In the following sections, we begin by introducing the complicated term

What Do We Mean by Effort?

The Framework for Understanding Effortful Listening (FUEL; Pichora-Fuller et al., 2016) defines listening effort as the “deliberate allocation of mental resources to overcome obstacles in goal pursuit when carrying out a [listening] task” (p. 10S). This definition highlights that effort arises not only as a result of the difficulty of the task itself (i.e., the intelligibility of the stimuli) but also as a result of the active application of the individual’s mental capacities to overcome an obstacle. Another essential component is the participant’s engagement or motivation to succeed in a task, which may vary widely across individuals. The FUEL has its roots in the classical capacity model described by Kahneman (1973), who emphasized the role of attention (and arguably used

Why Measure Listening Effort and Not Just Intelligibility?

Listening effort is increasingly recognized as an important aspect of hearing loss. Hearing difficulties and increased listening effort are reportedly connected with numerous medical, financial, and occupational challenges (Kramer, Kapteyn, & Houtgast, 2006; Nachtegaal et al., 2009) as well as with feelings of social connectedness (Hughes, Hutchings, Rapport, McMahon, & Boisvert, 2018). Hétu, Riverin, Lalande, Getty, and St-Cyr (1988) reported interviews with individuals with hearing impairment who mentioned that fatigue related to their hearing difficulties and coping mechanisms was severe enough that they would be “… too tired for normal activities” after finishing work.

Two listeners might achieve the same intelligibility score but exert different amounts of effort to do so; pupil dilation appears consistent with subjective notions of relative difficulty even in these equally intelligible cases (Koelewijn, Zekveld, Festen, & Kramer, 2012). Because speech perception can involve a variety of cognitive and linguistic skills in addition to auditory processing (Bronkhorst, 2015; Mattys, Davis, Bradlow, & Scott, 2012), the same intelligibility score can be obtained by a listener with moderate hearing loss exerting great focus or by a person with typical hearing who is listening with less effort (Ohlenforst et al., 2017). Where audibility fails, cognitive strategies (suppressing irrelevant information, relying on context, etc.) can compensate (Peelle, 2017; Rönnberg, Lunner, & Zekveld, 2013), as is often seen in individuals with hearing impairment who rely on context in speech perception (Pichora-Fuller, Schneider, & Daneman, 1995). This greater reliance on top-down mechanisms appears to come at the cost of poorer performance on other cognitive and physical tasks, or poorer memory for words heard (e.g., Koeritzer, Rogers, Van Engen, & Peelle, 2018; McCoy et al., 2005). Listeners with normal hearing also engage in potentially effortful cognitive processes when listening to acoustically challenging speech, even when the speech is highly intelligible and supported by linguistic context (Koeritzer et al., 2018). Thus, an experimenter or clinician might be interested not only in ultimate task accuracy but also in the mechanisms used to accomplish the task and in how effortful the task was.

In addition to the reasons stated earlier, it is useful to remember that not all aspects of speech perception are gauged by whether the words are correctly identified. Other aspects include analyzing and updating a talker’s intention (Snedeker & Trueswell, 2004; Tanenhaus, Spivey, Eberhard, & Sedivy, 1995), predicting upcoming information (Altmann & Kamide, 1999; Tavano & Scharinger, 2015), identifying a talker (Best et al., 2018), perceiving prosodic emphasis (Dahan, Tanenhaus, & Chambers, 2001), translating speech into a different language (Hyönä, Tommola, & Alaja, 1995), and judging whether an utterance makes sense (Best, Streeter, Roverud, Mason, & Kidd, 2016). These are all essential components of speech communication that are not adequately quantified by a score of whether words were correctly repeated. Apart from pupillometry, other classic experimental measures such as eye tracking and brain imaging show that there is value in granular responses that scale with task demands even when intelligibility is not the outcome measure of primary interest.

From the perspective of the audiologist, listening effort is arguably a worthwhile measurement

Apart from examining the relationship between effort measures and performance accuracy measures, it is also worthwhile to consider any sign of effort as an indication of task engagement, which could be a useful outcome measure in itself. For example, Teubner-Rhodes, Vaden, Dubno, and Eckert (2017) proposed an assessment of executive function that they call “cognitive persistence.” Individuals who face listening difficulties might avoid challenging auditory environments (cf. Wu et al., 2018); tasks that evoke consistent signs of effort could indicate that the individual is at least willing to attempt the task.

Although this article showcases pupillometry, we remind the reader that it has not been conclusively established that laboratory-based pupillometric measures of effort are directly related to symptoms such as everyday listening difficulties, susceptibility to fatigue, and poor recognition memory. Hornsby and Kipp (2016) highlight the need for systematic investigations into this connection and also distinguish the concept of fatigue from that of episodic effort. However, pupillometry and other measures of effort will likely play a partial role in establishing those connections through converging sets of evidence and associations.

The Unique Value of Pupillometry

Considering the success of other measures of effort, such as dual-task paradigms (Gagné, Besser, & Lemke, 2017) and reaction times, which might not need such a complicated set of guidelines, what value does pupillometry add? There are multiple benefits that we highlight here, which expand on the commentary on methodology by McGarrigle et al. (2014). First, pupillometry is a

Measures of effort each have their own advantages and limitations. Reaction times are subject to variations in manual dexterity and speech production, which might change with age or physical abilities not related to the experimental task. Pupil size and range of dilation are also affected by age (Bitsios, Prettyman, & Szabadi, 1996; Kim, Beversdorf, & Heilman, 2000; Winn, Whitaker, Elliott, & Phillips, 1994), although there are published normalization methods (discussed later) that capitalize on the reliable pupillary light reflex as a standard of dynamic range (Piquado, Isaacowitz, & Wingfield, 2010). Pupillometry is arguably a more sensitive measure than dual-task cost, which does not provide temporal information. Compare, for example, measures of spectrally degraded speech perception by Winn, Edwards, and Litovsky (2015) using pupillometry and by Pals, Sarampalis, and Baskent (2013) using dual-task cost. We note, however, that dual-task measures can be logistically more feasible to conduct and are less affected by the methodological constraints outlined in this article.

Functional magnetic resonance imaging studies have aimed to reveal the mechanisms that underlie listening effort by linking pupil dilation to the engagement of both domain-general attention and sensory-specific brain regions during speech comprehension (e.g., Kuchinsky et al., 2016; Zekveld, Heslenfeld, Johnsrude, Versfeld, & Kramer, 2014), during other cognitive tasks (e.g., Siegle, Steinhauer, Stenger, Konecky, & Carter, 2003), and during spontaneous fluctuations in alertness (e.g., Murphy, O’Connell, O’Sullivan, Robertson, & Balsters, 2014; Schneider et al., 2016).

Other neuroimaging methods with faster temporal resolution, such as magnetoencephalography and electroencephalography (EEG), have similarly been used to seek a neural basis for pupillary indices of listening effort. In fact, pupil dilation and EEG have been recorded simultaneously in multiple studies. McMahon et al. (2016) showed that EEG alpha power was comodulated with pupil dilation for 16-channel vocoded speech, but for the more difficult six-channel vocoded speech, the relationship was much less clear. Miles et al. (2017) followed up with a related study aimed at discerning effects of intelligibility and found that, unlike the EEG results, pupil dilation was related to intelligibility scores. Interestingly, the two measures were not correlated with each other, suggesting that they tap into potentially different cognitive mechanisms. Further investigations are needed to better understand the potential connection between these measures.

Pupillometry offers a particular benefit when testing participants who use assistive devices such as hearing aids and cochlear implants (CIs): the experimenter can avoid problematic interference of the device with electrical or magnetic imaging techniques (Friesen & Picton, 2010; Gilley et al., 2006; L. Wagner, Maurits, Maat, Baskent, & Wagner, 2018). Functional magnetic resonance imaging can provide precise spatial information about the neural systems engaged during effortful speech processing (Lee, Min, Wingfield, Grossman, & Peelle, 2016; Obleser, Wise, Dresner, & Scott, 2007) but is not well suited to testing individuals with electronic implants or vascular disorders, or to presenting speech in relative quiet (because of machine noise). Functional near-infrared spectroscopy is unaffected by such interference and compatible with the use of implants (McKay et al., 2016), but it is slower than pupillometry (i.e., it cannot capture rapid changes) and, at the time of this writing, considerably more expensive than EEG or pupillometry. In all, pupillometry is not free from limitations, but it is relatively easy and fast to set up, has sufficient temporal resolution, is free from electrical artifact, and is inexpensive compared with some other imaging techniques.

Experimental Design and Planning

What Does Pupil Dilation Reflect?

Ranging in diameter from roughly 3 mm to 7 mm (Laeng, Sirous, & Gredebäck, 2012), the pupil dilates and contracts for multiple reasons (see Zekveld et al., 2018). In normal circumstances, the largest changes in pupil size occur in response to changes in luminance. When changing from light to dark environments, pupil diameter can increase by as much as 3 to 4 mm, or roughly 120% (Laeng et al., 2012). By comparison, the cognitive task-evoked pupil dilations that are central to this article are much smaller, on the order of 0.1 to 0.5 mm, depending on testing conditions and task. Because of this disparity, one must control sources of dilation and constriction other than the experimental task so that the evoked response can be a reliable indicator of the effort exerted during the task. In addition, the amount of pupil dilation evoked by a task can be modulated by the participant’s motivation and arousal state (Stanners et al., 1979), as will be discussed in detail in this article.
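To make the difference in scale concrete, the sketch below expresses a change in pupil diameter as a percentage of the baseline diameter. The helper `percent_change` and the diameters are hypothetical, chosen only to fall within the ranges quoted above (a 3.6-mm luminance-driven change from a 3-mm baseline, and a 0.3-mm task-evoked dilation from a 4-mm baseline); they are not data from any cited study.

```python
def percent_change(baseline_mm: float, new_mm: float) -> float:
    """Change in pupil diameter expressed as a percentage of the baseline diameter."""
    if baseline_mm <= 0:
        raise ValueError("baseline diameter must be positive")
    return 100.0 * (new_mm - baseline_mm) / baseline_mm


# Hypothetical diameters (mm) chosen to fall within the ranges quoted in the text.
luminance_change = percent_change(3.0, 6.6)  # light-to-dark adaptation
task_change = percent_change(4.0, 4.3)       # cognitive, task-evoked dilation

print(f"luminance-driven change: {luminance_change:.0f}%")
print(f"task-evoked change: {task_change:.1f}%")
print(f"ratio: about {luminance_change / task_change:.0f} to 1")
```

Because the same absolute dilation is a larger percentage of a small baseline than of a large one, whether to report changes in millimetres or as a percentage of baseline is itself a methodological choice.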

It is reasonable to consider task-evoked pupil dilation to reflect not a simple, unitary concept of effort but rather some amalgamation of attention, engagement, arousal, anxiety, and effort (Nunnally, Knott, Duchnowski, & Parker, 1967; Pichora-Fuller et al., 2016). While it is not within the scope of this article to clarify the distinctions between these interrelated concepts, they have all been invoked in numerous explanations of the pupillary response over the years. We use the term “listening effort” as a useful shorthand for the union of these concepts as they relate to hearing (difficulties), but there could be valid reasons to unpack each of these concepts individually.

In agreement with the frameworks described by Kahneman (1973) and Pichora-Fuller et al. (2016), we highlight the critical role of

Since Kahneman’s (1973) influential monograph, examination of effort has historically been tied to the concepts of attention and arousal. Bruya and Tang (2018) are critical of Kahneman’s binding of attention and effort, suggesting that instead of characterizing attention as the use of cognitive or metabolic resources, we ought to consider it as the “readying” of metabolic resources in the form of adaptive gain modulation. Considering the physiological evidence in studies by Reimer et al. (2016) and McGinley, David, and McCormick (2015) and some of the speech perception work described later in this article and elsewhere in this issue, Bruya and Tang’s suggestion cannot be dismissed. It should be noted, however, that even in Kahneman’s original book, the concept of effort

The persistent tradition is to consider larger pupil dilation to be a sign of increased listening effort, and therefore a negative outcome, compared with smaller pupil dilation. However, we should not assume that more effort (or larger pupil response) is always a negative thing. Engagement in speech communication can be a very productive and satisfying process, but only with sufficient effort or attention devoted to the input. Increased pupil dilation is a signal that a listener is at least

Task Selection—What Task Properties Will Evoke Pupil Dilation?

The experimental task should ideally demand that a listener exert intentional effort beyond passive awareness of sounds in the environment. Ideally, there would be multiple experimental conditions where the participant is motivated to exert more effort in at least one condition because it will produce better results. In the following sections, we review some relevant considerations for guiding task selection.

Stimulus difficulty and listener interest

For reliable and interpretable pupillometry results, the stimuli should be balanced: not so easy that they demand too little cognitive effort, and not so difficult that cognitive effort becomes futile (see the previous section; see also Wendt et al., 2018, and Eckert, Teubner-Rhodes, & Vaden, 2016, for supporting data and discussion). In addition to stimulus difficulty, the experimenter should also consider stimulus

Basic psychoacoustics

Some basic tasks of auditory detection or discrimination might not demand enough cognitive resources to evoke a strong or consistent pupil response, although some reports do exist. For example, pitch discrimination elicits smaller pupil dilation in musicians than in nonmusicians (Bianchi, Santurette, Wendt, & Dau, 2016), despite comparable peripheral sensitivity. Although no consistent pattern of pupil dilation would be expected if a participant simply hears different sounds coming from different locations, task-evoked changes do emerge in a task of explicit sound localization (Bala, Spitzer, & Takahashi, 2007). Beatty (1982) showed data from a study of selective attention to individual pure tones, revealing a dilation pattern that was detectable (and detectably different when tones were targets or distractors), but the dilations were on the order of 0.01 mm, one tenth the size of those normally reported in the easiest conditions in many other articles. Without sufficiently powered experiments with a large number of trials, it is unlikely that such small effects would emerge consistently. Beatty (1982) also notes that experimenters should exercise caution to distinguish between tasks of signal
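Why such small dilations need many trials can be shown with a back-of-the-envelope calculation. Assuming independent trial-to-trial noise, the standard error of an averaged response falls with the square root of the number of trials, so the number of trials required for a given signal-to-noise ratio grows with the inverse square of the effect size. The helper `trials_needed`, the trial-to-trial standard deviation of 0.1 mm, and the criterion of three standard errors are hypothetical choices for illustration.

```python
def trials_needed(effect_mm: float, trial_sd_mm: float, n_standard_errors: float = 3.0) -> float:
    """Trials needed for the averaged effect to equal `n_standard_errors` standard
    errors, assuming independent noise so that SE = sd / sqrt(N). Round up in practice."""
    return (n_standard_errors * trial_sd_mm / effect_mm) ** 2


TRIAL_SD_MM = 0.1  # hypothetical trial-to-trial variability

typical = trials_needed(0.1, TRIAL_SD_MM)  # dilation of about 0.1 mm
tiny = trials_needed(0.01, TRIAL_SD_MM)    # dilation of about 0.01 mm

print(f"0.1 mm effect: ~{typical:.0f} trials; 0.01 mm effect: ~{tiny:.0f} trials")
```

Under these assumptions, a tenfold smaller effect needs roughly a hundredfold more trials, which is consistent with the caution above about effects on the scale reported by Beatty (1982).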

Speech perception in quiet

For listeners with normal hearing, speech perception in quiet can be automatic or effortless if it is not coupled with a particular challenge (e.g., syntactic structure, auditory distortion, etc.). It therefore might not demand substantial cognitive resources, producing pupil dilations that do not always emerge reliably from the noise of random pupillary oscillations. Data from Zekveld and Kramer (2014) show pupil dilations to quiet speech that hover around baseline levels, although their data were clean enough to show a clear, interpretable morphology. In a number of published studies, speech in quiet is presented with some kind of extra cognitive demand, such as memory load (Johnson, Singley, Peckham, Johnson, & Bunge, 2014), spectral degradation (Winn et al., 2015), anomalous semantic content (Beatty, 1982), lexical competition (A. Wagner, Toffanin, & Baskent, 2016), competition from a second language (Schmidtke, 2014), conflict of prosody and syntactic structure (Engelhardt, Ferreira, & Patsenko, 2010), object-focused syntactic structure (Wendt, Dau, & Hjortkjær, 2016), and pronoun ambiguity (Vogelzang et al., 2016). In these cases, it is crucial to emphasize that the evoked dilations likely reflect language processing and not simply auditory encoding.

Linguistic aspects of effort

In each of the aforementioned examples of speech perception studies, some aspect of language processing was the focal point of investigation. These studies establish conclusively that the cognitive activity indexed by pupil dilation does not follow merely from audition alone, but also from language processing. In another example, Hyönä et al. (1995) found increased pupil dilation in a task of sentence

Not all speech stimuli demand the same kinds of language or cognitive processing, and therefore experimenters should guard against the notion of a unitary category of “speech perception.” In other words, just because stimuli are speech sounds does not mean that they will elicit typical patterns of pupil dilation, because they do not necessarily entail the cognitive processes involved in processing natural speech. For example, it is possible that the popular “matrix” sentences, in which each word is drawn from a closed set of choices, elicit less effort: most digits and colors can be distinguished by their vowels alone (in English), so these materials might not reflect the effort needed to understand normal speech. Other sentence materials might be preferable for examining speech perception with a richer set of linguistic processes in play. Several studies have successfully applied the pupillometry method with traditional speech-in-noise tests (such as the Dutch Versfeld sentences, the Danish HINT, the English R-SPIN test, the IEEE sentences, and others).

Some linguistic stimuli might demand so little cognitive processing that they do not elicit the expected effects on pupil dilation. For example, auditory spectral degradation affects the pupillary response to sentence-length materials (Winn et al., 2015) but not to individual spoken letters (McCloy et al., 2017). It is therefore worthwhile for the experimenter to consider whether the speech perception task involves some kind of linguistic computation or only minimal auditory detection.

Increasing Motivation and Avoiding Boredom

Motivation affects the pupillary response (Kahneman & Peavler, 1969). Without a goal-directed task, a person’s pupil will change size as the mind wanders (Franklin, Broadway, Mrazek, Smallwood, & Schooler, 2013), in a way that is not aligned with stimulus presentation. If the task does not give the participant enough reason to engage, pupil size will likely not yield useful results. Heitz, Schrock, Payne, and Engle (2008) showed that monetary incentives increase the magnitude of pupillary responses. When people are curious about the answers to trivia questions, their pupils dilate more (Kang et al., 2009), by a small (8% vs. 4%) but detectable amount.

Although boredom should be avoided in order to elicit pupil dilation reliably, experimenters should also consider avoiding emotional stimuli that evoke pleasure, disgust, or an otherwise strong physiological response unrelated to the planned task. For example, sentence materials can be chosen to avoid references to violence, sexuality, or trauma. Hess and Polt (1960) reported pupillary responses to emotionally toned or arousing stimuli in an early influential paper. More recently, Partala and Surakka (2003) showed that, compared with neutral stimuli, negative-valence stimuli evoked larger pupil responses, and positive-valence stimuli evoked the largest dilations. If emotional response is not the target of investigation, then these kinds of stimuli could be avoided to reduce unwanted variability in the data.

Behavioral Considerations

In most pupillometry studies of listening effort, there is a behavioral component such as a spoken response or a button press, which can increase the measured pupil response by as much as 400% (Privitera, Renninger, Carney, Klein, & Aguilar, 2010); this amplified response can persist for several seconds. When the behavioral contribution is removed through deconvolution, the task-evoked pupil response is still present but is more modest and short lasting (cf. Hoeks & Levelt, 1993; McCloy, Larson, Lau, & Lee, 2016). Similar behavioral contributions to pupil size can be seen in studies of sentence recognition involving verbal responses. Winn et al. (2015) and Winn (2016) showed that pupil dilations from the verbal response were typically larger than those elicited by the listening task itself. Papesh and Goldinger (2012) carefully illustrated the effect of motor speech planning (as well as lexical frequency) on pupil dilations in a study involving cued response options that alternated between verbatim repetition and substituting “blah” in place of syllables. In numerous pupillometry studies of sentence perception, the behavioral response occurs so long after the stimulus that the auditory-evoked pupil dilation recovers almost completely to baseline before the response, and the response-induced dilations are therefore often not shown in published figures (Koelewijn, de Kluiver, Shinn-Cunningham, Zekveld, & Kramer, 2015; Koelewijn et al., 2012; Wendt et al., 2018; Zekveld, Kramer, & Festen, 2010).
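The idea behind deconvolution can be sketched as a linear model: each event (here, a listening event and a verbal response) is treated as an impulse convolved with a fixed pupil response function, and the size of each event's contribution is estimated by ordinary least squares. The sketch assumes the Erlang-gamma response function h(t) = t^n e^(-nt/t_max) with the parameter values commonly cited from Hoeks and Levelt (1993), n = 10.1 and t_max = 0.93 s; the sampling rate, event times, amplitudes, noise level, and helper names are hypothetical. It is a generic illustration, not a reproduction of the procedure used in any cited study.

```python
import numpy as np

FS = 50.0  # sampling rate in Hz (hypothetical)


def pupil_response_function(fs=FS, n=10.1, t_max=0.93, duration_s=4.0):
    """Erlang-gamma kernel h(t) = t**n * exp(-n * t / t_max), scaled to peak at 1.
    The peak occurs at t = t_max."""
    t = np.arange(0.0, duration_s, 1.0 / fs)
    h = t ** n * np.exp(-n * t / t_max)
    return h / h.max()


def design_matrix(event_times_s, n_samples, fs=FS):
    """One regressor per event: a unit impulse at the event time convolved with the kernel."""
    kernel = pupil_response_function(fs)
    X = np.zeros((n_samples, len(event_times_s)))
    for j, onset in enumerate(event_times_s):
        impulse = np.zeros(n_samples)
        impulse[int(round(onset * fs))] = 1.0
        X[:, j] = np.convolve(impulse, kernel)[:n_samples]
    return X


# Hypothetical trial: listening event at 1.0 s, verbal response at 2.5 s.
n_samples = int(8.0 * FS)
X = design_matrix([1.0, 2.5], n_samples)
true_amplitudes = np.array([0.2, 0.6])  # mm; response-related dilation is larger

rng = np.random.default_rng(0)
pupil = X @ true_amplitudes + rng.normal(0.0, 0.01, n_samples)  # overlapping responses + noise

# Least-squares estimate of each event's separate contribution to the summed trace.
estimated, *_ = np.linalg.lstsq(X, pupil, rcond=None)
print("estimated amplitudes (mm):", np.round(estimated, 2))
```

In real recordings, the kernel shape and latency vary across people and tasks, and blinks and baseline drift must be handled first; the sketch only shows why two overlapping responses can still be separated when their timing is known.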

We recommend letting pupil size return to baseline levels following a trial (though see studies that have employed deconvolution to tease apart pupillary effects arising from closely spaced visual stimuli, e.g., Wierda, Van Rijn, Taatgen, & Martens, 2012). In typical speech-in-noise testing, it is normally sufficient to wait 4 to 6 s after the completion of the participant’s verbal response, but for other experiments without extensive precedent in the literature, we recommend pilot testing with extended recording time (e.g., 10 s beyond the stimulus or response) and inspecting when the averaged data return to baseline levels.
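For pilot data of this kind, one simple way to read off when the averaged trace returns to baseline is to find the first sample after the peak that lies within a chosen tolerance of the pre-stimulus baseline mean. The helper `time_to_baseline`, the 0.01-mm tolerance, the window boundaries, and the smooth synthetic trace are all assumptions made for illustration; real traces would normally be cleaned and averaged across trials (and possibly smoothed) before this step.

```python
import numpy as np


def time_to_baseline(mean_trace, fs, baseline_window_s, search_from_s, tolerance_mm):
    """First time (s) at or after `search_from_s` when the trial-averaged trace lies
    within `tolerance_mm` of the mean over `baseline_window_s`; None if it never does."""
    start, stop = (int(round(t * fs)) for t in baseline_window_s)
    baseline = np.mean(mean_trace[start:stop])
    first = int(round(search_from_s * fs))
    within = np.abs(mean_trace[first:] - baseline) <= tolerance_mm
    hits = np.flatnonzero(within)
    return None if hits.size == 0 else (first + hits[0]) / fs


# Synthetic averaged trace: 4.0 mm baseline and a 0.3 mm dilation peaking at 4 s.
FS = 50
t = np.arange(0.0, 15.0, 1.0 / FS)
trace = 4.0 + 0.3 * np.exp(-((t - 4.0) / 1.0) ** 2)

recovery_s = time_to_baseline(trace, FS, baseline_window_s=(0.0, 1.0),
                              search_from_s=4.0, tolerance_mm=0.01)
print(f"trace returns to within 0.01 mm of baseline at about {recovery_s:.2f} s")
```

The result depends strongly on the tolerance, so in practice it is worth checking it against a visual inspection of the averaged pilot data.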

Experimenters should be aware of all task events that would invoke intentional attention, including physical motion. McGinley et al. (2015) found that locomotion explained 20% of the variance in pupil size in mice; pupil dilations during motion were substantial and long lasting. In addition, locomotion has been found to suppress sensory-evoked responses (Williamson, Hancock, Shinn-Cunningham, & Polley, 2015).

Experiment Logistics and Constraints

Task selection for pupillometry is somewhat constrained by the measurement technique, specifically because of the timing of the response and the challenge of avoiding changes in pupil size that are unrelated to the target task. It is therefore not advisable to simply add pupil dilation measures to an existing behavioral procedure that was not designed for pupillometry. Instead, testing methods should be deliberately designed to suit the measurement technique. The cost and effort of possibly altering the experimental procedure should be justified by a compelling reason to measure pupil size, namely, the desire to obtain information not available from behavioral methods. Because the pupil dilation response has complicated innervation and is affected by a wide range of experiences and stimuli, there is an unfortunate amount of noise inherent in any pupil measurement. However, this noise can be addressed if the experimenter is careful with the experimental setup and judicious in monitoring factors that would affect physiological measures in any unique testing condition. Neglecting these considerations will undoubtedly weaken the measurement and could cause distrust of the method altogether, undermining the field’s confidence in the carefully produced studies that do exist.

Number of Trials

In the end, the number of trials (and participants) needed in any experiment will depend on the effect size of interest and the power of the analytical approach. Generally, the experimenter will want at least 16 to 18 good recordings of pupil size for each condition. In any pupillometry experiment, some data will be missing because trials are dropped due to mistracking, contamination, or other reasons (e.g., scratching an itch or moving a sore muscle can produce surprisingly dramatic changes in pupil size that are unrelated to the listening task itself). Hence, it is wise to record enough trials for the estimate of the task-evoked response to stabilize. For sentence-perception tasks, 20 to 25 trials are normally a safe starting number. Fewer trials might be sufficient for listeners who are highly engaged in demanding tasks, where the effect is expected to be very large.
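The advice above can be turned into a quick planning calculation: divide the number of usable trials needed per condition by the proportion of trials expected to survive data cleaning. The helper `trials_to_record` and the rejection rates below are hypothetical and should be replaced by rates observed in one's own pilot data; integer ceiling division is used to avoid floating-point round-off.

```python
def trials_to_record(target_valid: int, rejection_percent: int) -> int:
    """Trials to present per condition so that, on average, `target_valid` trials
    remain after `rejection_percent` percent are discarded (rounded up)."""
    if not 0 <= rejection_percent < 100:
        raise ValueError("rejection_percent must be in [0, 100)")
    # Integer ceiling of target_valid * 100 / (100 - rejection_percent).
    return -(-target_valid * 100 // (100 - rejection_percent))


for target in (16, 18):
    for rejection in (10, 20):
        print(f"{target} usable trials, {rejection}% rejected -> "
              f"record {trials_to_record(target, rejection)}")
```

With these assumed rejection rates, the results fall between 18 and 23 trials, broadly in line with the starting numbers suggested above for sentence-perception tasks.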

The number of trials needed can be considered inversely related to the difficulty of the experimental task. For a very difficult task, a reliable, large pupil dilation response (i.e., one with a large effect size) can be achieved with as few as 10 trials. For distinguishing more subtle differences between similar conditions (e.g., vocoders with different numbers of channels, small changes in signal-to-noise ratio, or linguistic content such as semantic context), a larger number of trials is advisable. This consideration highlights the importance of authors publishing measures of effect size along with their statistical tests.
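For a within-participant comparison of two conditions, one common effect-size measure is Cohen's d_z: the mean of the per-participant differences divided by the standard deviation of those differences. The sketch below computes it with the Python standard library; the helper name `cohens_dz` and the per-participant peak dilations are invented for illustration, and other effect-size measures may suit other designs better.

```python
import statistics


def cohens_dz(condition_a, condition_b):
    """Cohen's d_z for a within-participant contrast: mean of the paired
    differences divided by their sample standard deviation."""
    if len(condition_a) != len(condition_b) or len(condition_a) < 2:
        raise ValueError("need two equal-length sequences with at least 2 pairs")
    diffs = [a - b for a, b in zip(condition_a, condition_b)]
    return statistics.mean(diffs) / statistics.stdev(diffs)


# Hypothetical per-participant mean peak dilations (mm) in two conditions.
hard = [0.32, 0.28, 0.41, 0.25, 0.36, 0.30]
easy = [0.22, 0.25, 0.30, 0.21, 0.27, 0.24]

dz = cohens_dz(hard, easy)
print(f"Cohen's d_z = {dz:.2f}")
```

Reporting a value like this alongside the statistical test lets later studies plan their number of trials and participants from a concrete estimate rather than a guess.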

Trial Events and Timing

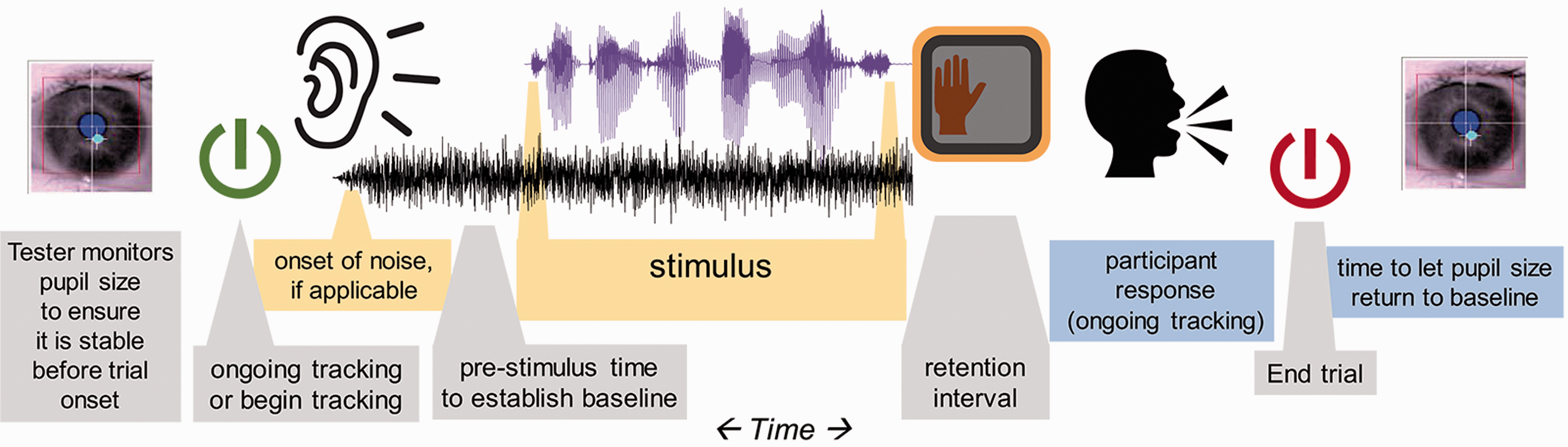

Trials should have consistent timing of events, for example, the onset of noise, an alerting sound, the stimulus itself, any cue to prompt a behavioral response, or any other relevant trial landmark. An illustration of an example trial timeline is given in Figure 1. Ideally, each trial should start with a drift-correction phase, in which the participant is required to look at a central fixation symbol before moving on (though this is not always possible when combining pupillometry with certain imaging modalities). Timing of each event should be planned carefully and intentionally. It is advisable to consider separating these events in time, because the pupillary responses to two events could sum together, obscuring dilations that arise from listening as opposed to those that arise from behavioral responses. Specifically, the Events in a basic pupillometry experiment for measuring listening effort. There are other experimental paradigms that are possible, this illustrates a commonly used sequence of events.

It has become more common for experimenters to introduce a cue that indicates the timing of an upcoming stimulus. For example, in tests of speech recognition in noise, there could be leading noise that lasts for 2 to 3 s before the onset of the speech (cf. Koelewijn et al., 2012, 2015, 2017; Wendt, Hietkamp, & Lunner, 2017; Wendt et al., 2018; Zekveld et al., 2010, 2013). This practice has at least two benefits. First, it alleviates the problem of target-masker separation, whereby simultaneous onset of speech and noise increases the difficulty of hearing the target signal. Second, although the onset of the noise could itself elicit a brief pupillary response, it can orient the listener so that the target signal does not come as a surprise. However, the presence (or continuation) of noise after a signal, though common in published studies, could interfere with language processing, as shown by Winn and Moore (2018). As the pupillary response can be slow and long lasting, it is worthwhile to consider that the analysis window can be
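One way to make the planned trial timing explicit, and to check it before data collection, is to write the timeline down as data and test the minimum gaps between events whose pupillary responses should not overlap. The event names, onsets, required gaps, and the helper `timing_violations` below are hypothetical, loosely modelled on the leading-noise design described above and on the 4 to 6 s recovery period recommended earlier; they are not a prescribed protocol.

```python
# Hypothetical trial timeline: event name -> onset in seconds from trial start.
TIMELINE = {
    "fixation_and_drift_check": 0.0,
    "noise_onset": 0.5,
    "speech_onset": 3.5,  # 3 s of leading noise
    "speech_offset": 6.0,
    "response_prompt": 9.0,
    "trial_end": 15.0,
}

# Hypothetical minimum separations (s) between pairs of events.
REQUIRED_GAPS = {
    ("noise_onset", "speech_onset"): 2.0,       # let the listener orient to the noise
    ("speech_offset", "response_prompt"): 2.0,  # keep listening and response dilations apart
    ("response_prompt", "trial_end"): 4.0,      # allow recovery toward baseline
}


def timing_violations(timeline, required_gaps):
    """Return (first, second, actual_gap, required_gap) for every pair that is too close."""
    problems = []
    for (first, second), required in required_gaps.items():
        actual = timeline[second] - timeline[first]
        if actual < required:
            problems.append((first, second, actual, required))
    return problems


print(timing_violations(TIMELINE, REQUIRED_GAPS))  # an empty list means every gap is met
```

Keeping the timeline as data also makes it easy to log the same event landmarks alongside the pupil recording for later time-locking.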

Stimulus Duration

Most of the literature reviewed in this article features multiple trials of relatively short duration (2–6 s). For pupillometry, as for other evoked measurements such as EEG, magnetoencephalography, or the auditory brainstem response, multiple stimuli of the same (or similar) type and duration are played in a testing block, and the responses are averaged across trials at each time point. As long as the stimulus-driven portion of the response is time-aligned across trials, activity unrelated to the stimulus should average out, leaving behind only the relevant task-evoked response. These time-constrained stimuli will therefore likely yield cleaner data patterns than untimed stimuli, longer passages, or entire conversations, where one cannot assume the same progression of cognitive processing landmarks from trial to trial. Longer passages might not produce consistent patterns of dilation across stimuli (because of varying landmarks for parsing, resolution, or chunking), so relevant phasic peaks might be washed out by cross-trial averaging. The current article focuses on phasic responses to short stimuli.
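The logic of time-locked averaging can be checked with a small simulation: each synthetic trial contains the same stimulus-locked response plus a slow oscillation with random phase and white measurement noise, neither of which is aligned to the stimulus. As more trials are averaged, the average converges on the stimulus-locked component. All signal shapes, amplitudes, the sampling rate, and the helper names are hypothetical.

```python
import numpy as np

FS = 50  # samples per second (hypothetical)
t = np.arange(0.0, 6.0, 1.0 / FS)
evoked = 0.2 * np.exp(-((t - 2.0) / 0.7) ** 2)  # stimulus-locked dilation peaking at 2 s

rng = np.random.default_rng(1)


def simulate_trial():
    """One trial: the evoked response plus activity that is not time-locked to the stimulus."""
    phase = rng.uniform(0.0, 2.0 * np.pi)
    slow_fluctuation = 0.15 * np.sin(2.0 * np.pi * 0.3 * t + phase)
    measurement_noise = rng.normal(0.0, 0.05, t.size)
    return evoked + slow_fluctuation + measurement_noise


def time_locked_average(trials):
    """Average trials sample by sample (all trials share the same stimulus onset)."""
    return np.mean(np.vstack(trials), axis=0)


errors = {}
for n_trials in (1, 5, 40):
    average = time_locked_average([simulate_trial() for _ in range(n_trials)])
    errors[n_trials] = float(np.sqrt(np.mean((average - evoked) ** 2)))
    print(f"{n_trials:>2} trials: RMS deviation from true response = {errors[n_trials]:.3f} mm")
```

This sketch omits the baseline correction that is usually applied before averaging, and it assumes that the stimulus-locked response is identical on every trial, which is the very assumption that longer, less structured stimuli tend to violate.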

Controlling the Visual Field

The amount of pupil dilation or constriction seen in response to changes in luminance far surpasses the amount of pupil dilation measured for cognitive tasks. Therefore, it is of critical importance to control the visual field when measuring task-evoked pupil dilation. Typically, the participant is stationary and visually fixated on an image that is either completely blank or minimally stimulating. This is not to say that other protocols are impossible, but they would be subject to a higher amount of potentially confounding noise from movement, luminance effects on pupil size, and so on.

The setup in most labs includes a uniform, solid-color visual field that is neither too bright nor too dark. The visual field could be a plain wall or a computer screen. Bright colors, especially white backgrounds on a computer screen, are problematic for multiple reasons. First, they could cause excessive pupil constriction, so that the cognitive response might not be strong enough to emerge. Second, they might cause discomfort for the participant, which we have noticed could result in more blinks and a need for additional breaks during testing. Task-evoked pupil dilations have been observed reliably in dark-adapted conditions (McCloy et al., 2017; Steinhauer & Zubin, 1982). However, there are at least two cautions against testing in dark conditions. First, the pupils will dilate to accommodate low light, leaving less headroom for task-evoked dilation. Second, building on previous work by Steinhauer, Siegle, Condray, and Pless (2004), Wang et al. (2018) have recently shown that testing with brighter luminance elicits more reliable dilation because the parasympathetic nervous system releases its “grip” on the sympathetic nervous system’s dilation-inducing projections to the pupil dilator muscles.

Combining Pupillometry With Eye Tracking

Despite the risk of contamination by changes in luminance and gaze position, there are published studies in which pupillometry has been used in studies of visual search or other visual recognition tasks (described in the next paragraph). These experiments offer the value of bringing in the well-documented effects of lexical competition and sentence processing that have been studied with the “visual-world” paradigm, which is notable for providing precise timing information and insight into perceptual competition. Cavanagh, Wiecki, Kochar, and Frank (2014) used a drift-diffusion model to suggest that eye tracking and pupillometry shed light on dissociable factors relating to decision-making. They found that gaze fixation time corresponds to the rate of evidence accumulation, while increasing pupil size corresponds to an increasing decision threshold (i.e., the amount of evidence required before committing to a decision).

Visual aspects of stimuli in a gaze-tracking experiment could affect pupil size and therefore deserve extra scrutiny in the context of pupillometry. Engelhardt et al. (2010) used images in conjunction with pupillometry in a sentence comprehension task but did not publish examples of the images used. Wagner et al. (2016) used black-and-white line drawings in a picture-gazing task where lexical disambiguation led to changes in pupil dilation. It should be noted that in that study, concurrent gaze changes during pupillometry might have had unknown effects on pupil size due to changes in gaze location and changes in the local luminance of the image on the retina. Using a variation of this method, Wendt et al. (2016) also used picture stimuli that were controlled to have equal luminance, and perhaps more importantly were presented

Although the aforementioned studies demonstrate that pupillometry could be combined with “visual-world”-style testing paradigms, there are special considerations to be made, in light of the influence of gaze position and luminance on pupil size. Kun, Palinko, and Razumenić (2012) reported that even for small targets (angular radius of 2.5°) changes in luminance can result in changes in pupil size that can obscure cognitive load-related pupil dilations. However, Palinko and Kun (2011) have also demonstrated that when the experimenter has rigorous control over the placement and luminance of objects in a visual scene, it is possible to disentangle luminance and task-evoked changes in pupil size. In realistic everyday conditions, it might not be possible to exert such control. Kuchinsky et al. (2013) identified systematic changes in pupil size relating to gaze position, which were ultimately modeled and regressed out of the data.

Minimizing eye movement will likely lead to cleaner estimation of cognitively evoked pupil size when using remote eye trackers, because gaze away from a remote stationary camera can cause a distorted estimation of pupil size, depending on the algorithm used. Systems that use the long axis of the ellipse fit to the pupil or that dynamically take into account the rotation of the eye away from the camera are unaffected by this issue (although one should always check the data to be sure). Methods for estimating and regressing out the degree to which a dataset is impacted by gaze position, including the proper design of a control viewing-only condition, have been described in detail by Gagl, Hawelka, and Hutzler (2011) and others (e.g., Brisson et al., 2013; Hayes & Petrov, 2016; Kuchinsky et al., 2013).
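As an illustration of the regression idea only (not the specific procedure of Gagl et al., 2011, or of the other cited papers), the Python sketch below fits a linear model of pupil size on horizontal and vertical gaze position from a hypothetical viewing-only control condition, then removes the gaze-predicted component. The linear form, the function names, and the synthetic numbers are assumptions; real gaze-related distortions may be nonlinear and tracker specific.

```python
def solve3(a, b):
    """Solve a 3x3 linear system a @ x = b by Gaussian elimination with partial pivoting."""
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, 3):
            f = m[r][col] / m[col][col]
            for c in range(col, 4):
                m[r][c] -= f * m[col][c]
    x = [0.0] * 3
    for r in (2, 1, 0):
        x[r] = (m[r][3] - sum(m[r][c] * x[c] for c in range(r + 1, 3))) / m[r][r]
    return x


def fit_gaze_model(gx, gy, pupil):
    """Least-squares fit of pupil ~ b0 + b1*gx + b2*gy on control (viewing-only) data."""
    rows = [(1.0, x, y) for x, y in zip(gx, gy)]
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    atb = [sum(r[i] * p for r, p in zip(rows, pupil)) for i in range(3)]
    return solve3(ata, atb)


def correct_for_gaze(gx, gy, pupil, coefs):
    """Subtract the gaze-predicted component, keeping the intercept (overall level)."""
    _, b1, b2 = coefs
    return [p - (b1 * x + b2 * y) for x, y, p in zip(gx, gy, pupil)]


# Hypothetical control data: gaze in pixels re: screen center, pupil in arbitrary units.
gx = [-200.0, -100.0, 0.0, 100.0, 200.0, -150.0, 50.0, 150.0]
gy = [-100.0, 50.0, 0.0, -50.0, 100.0, 80.0, -120.0, 30.0]
pupil = [4.0 + 0.002 * x - 0.001 * y for x, y in zip(gx, gy)]
coefs = fit_gaze_model(gx, gy, pupil)          # approximately [4.0, 0.002, -0.001]
flat = correct_for_gaze(gx, gy, pupil, coefs)  # approximately 4.0 at every sample
```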

Another reason to be cautious of eye movements in pupillometry tasks is that the luminance of the visual field will change depending on what the participant is looking at, at any moment. If they shift gaze from a location with higher to lower luminance, pupil dilation might increase because of luminance instead of cognitive activity. Pupil size for a person shifting gaze around a room (or even around different areas of a screen) would be intractably confounded with luminance-driven changes in pupil size (and perhaps also with locomotion). Even if the visual scenes used are counterbalanced across the conditions of interest, one could not ensure a priori that participants would look at the displays in a consistent fashion across trials. Even in the best-case scenario, in which viewing patterns were relatively consistent, the added noise from unpredictable changes in local luminance with fixations may reduce one’s ability to detect differences across conditions.

Data Collection

Data Quality Monitoring

Data contamination should be detected as soon as possible—during testing. Real-time monitoring of the eye or the recorded pupil diameter shows blinks or other dropouts of data. If real-time monitoring is not an option, the estimation of pupil size could be displayed for the experimenter at the end of every trial, to see if something is amiss. Even in the absence of clear problems like head movement and shuffling posture, the pupil response can fatigue after several trials or can show a pattern of fluctuation—called Pupillary

Data quality will likely change over the course of a long testing session. It is common to observe a general decrease in pupil dilation over time, both in terms of baseline level and magnitude of the dilation response. For experiments up to 1 to 1.5 h, these effects do not show up as significant. However, McGarrigle, Dawes, Stewart, Kuchinsky, and Munro (2017a) have shown an effect of task-related fatigue on the pupil response during a longer sustained listening task. We have found that in typical sentence-perception experiments (with noise, or some other auditory distortion), fatigue is avoidable for most listeners if testing blocks are 2 h or shorter. Participants vary in how long they can stay engaged and in their willingness to communicate their need for a break. Experimenters should remain vigilant for changes in participant alertness so that they can initiate breaks and avoid unwanted fatigue. Monitoring the data can reveal whether the test has become long enough that the participant is changing physiological state or alertness. After some reasonable number of trials (e.g., 25 trials in a sentence-recognition task), a break of a few minutes can refresh the listener.

Longer experiments could be split into different testing sessions, although experimenters should be careful about splitting the conditions being compared across different days, in case there are sizeable differences in pupil size or dynamic range for an individual across days. Be mindful that performance in a task can be situationally dependent and can vary by the day (Veneman, Gordon-Salant, Matthews, & Dubno, 2013). When possible, trials for different conditions could be interspersed or presented in alternating short blocks in the same testing period. The experimenter wants to ensure that the participant is in the same physiological state for each tested condition, so that any differences in pupil dilation are due to the task and not other unintended differences.

Pupillometry experiment setup and delivery improve with tester experience (just as for other methods such as EEG, where one learns to detect when data are too noisy and develops criteria for removing an electrode, applying gel, etc.). One becomes more familiar with troubleshooting calibration and other unique situations over time, so early struggles with the method should not necessarily be taken as a sign that it will not be fruitful. Based on prior experiments and the guidelines collected in this article, one can identify aspects of the testing procedure that deviate from traditional psychoacoustics, such as the longer interstimulus interval and extra attention to test difficulty and the likelihood of participant disengagement.

The quality of pupil dilation measurements improves with attention to participant fatigue, comfort, readiness, and head movement. Although these factors might be noticeable in other types of behavioral psychoacoustic experiments, their effects might be even more damaging to a physiological measure like pupillometry. Examining raw data (rather than aggregated, smoothed averages) gives the experimenter a chance to identify situations that call for corrective action in the test protocol. For example, although blinks are normally not a problem (because they can be removed and interpolated over in postprocessing), an unusually large number of blinks might indicate fatigue or a too-bright screen. Participants might also give long, tense blinks just at the moment of response, potentially erasing an important piece of the data. Consistently high variability in baseline pupil size before each stimulus might indicate that not enough time has passed since the last stimulus or response, as the pupil might still be recovering from an evoked dilation.
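A simple per-trial summary can support this kind of raw-data inspection. The Python sketch below assumes that missing samples are coded as None or NaN (coding differs across trackers); it reports the proportion of missing samples and the number of separate gaps (a rough proxy for blink count) in each trial, and the spread of baselines across a block. The function names are illustrative, and the flagging threshold of 0.25 is borrowed from the 15%–25% range discussed under De-blinking; it is an assumption, not a fixed rule.

```python
import math
import statistics


def is_missing(v):
    """True for samples a tracker reported as missing (assumed None or NaN)."""
    return v is None or (isinstance(v, float) and math.isnan(v))


def trial_quality(samples):
    """Return (proportion of missing samples, number of separate gaps) for one trial."""
    flags = [is_missing(v) for v in samples]
    # A gap starts wherever a missing sample follows a valid one (or opens the trial).
    n_gaps = sum(1 for i, f in enumerate(flags) if f and (i == 0 or not flags[i - 1]))
    return sum(flags) / len(flags), n_gaps


def flag_trials(trials, max_missing=0.25):
    """Indices of trials whose missing proportion exceeds max_missing (assumed threshold)."""
    return [i for i, t in enumerate(trials) if trial_quality(t)[0] > max_missing]


def baseline_spread(baselines):
    """Sample standard deviation of per-trial baseline values within a block."""
    return statistics.stdev(baselines)


# Three missing samples (about 0.43 of the trial) spread over two gaps.
prop, gaps = trial_quality([4.0, 4.1, None, None, 4.2, float("nan"), 4.1])
```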

Because attention to the aforementioned factors will likely improve with experience, we recommend that the testing procedure be at least as consistent and regimented as one would be in any other scientific procedure. It is also advisable to have testing be performed by those who have at least some practical experience with the method, perhaps by repeated practice and shadowing of more experienced lab members first.

Participant Inclusion and Exclusion Criteria and Other Considerations

Eye color

Most eye trackers are robust to differences in iris color, but there are occasional difficulties with very dark irises (for light-detecting systems) and light irises (for dark-detecting systems).

Makeup

Participants should be encouraged to avoid the use of mascara and eyeliner, as these can be erroneously detected as the pupil.

Age

Older listeners show generally weaker pupil dilation responses to light (Winn et al., 1994). Following Piquado et al. (2010), a control task that measures dynamic range is recommended when comparing younger and older adults.
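To make the idea of a dynamic-range control concrete, the Python sketch below rescales pupil size as a percentage of each person's range. It assumes, for illustration, that the lower bound is the pupil size measured under bright light and the upper bound the size measured in darkness; the exact control task and scaling used by Piquado et al. (2010) may differ, and the function name and all numbers are hypothetical.

```python
def percent_of_dynamic_range(pupil_mm, min_mm, max_mm):
    """Express pupil sizes as a percentage of an individual's dynamic range.

    min_mm: pupil size under bright light (assumed control measurement).
    max_mm: pupil size in darkness (assumed control measurement).
    """
    if max_mm <= min_mm:
        raise ValueError("max_mm must exceed min_mm")
    span = max_mm - min_mm
    return [100.0 * (p - min_mm) / span for p in pupil_mm]


# Hypothetical listeners: a 0.2-mm dilation in a younger adult (range 3-7 mm)
# and a 0.1-mm dilation in an older adult (range 2.5-4.5 mm) both correspond
# to 5 percentage points of their respective dynamic ranges.
younger = percent_of_dynamic_range([5.0, 5.2], min_mm=3.0, max_mm=7.0)
older = percent_of_dynamic_range([3.5, 3.6], min_mm=2.5, max_mm=4.5)
```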

Hearing status

Smaller amounts of pupil dilation are routinely observed in listeners with hearing loss and older listeners compared with young control groups with typical hearing (Koelewijn, Shinn-Cunningham, Zekveld, & Kramer, 2014). There is likely more than one reason for this, including listening fatigue draining a listener’s cognitive resources, age-related atrophy of the pupillary dilator muscles, or other factors. It does not necessarily mean that older or hearing-impaired listeners find the tasks less effortful. It could instead mean that they are deliberately engaging less attention because they are conserving energy in a continuously exhausting task.

Pharmacological effects

Drugs can impact the ANS, which will affect the pupil dilation response. Steinhauer et al. (2004) report that blocking the sympathetically mediated alpha-adrenergic receptor of the dilator enables targeted measurement of the parasympathetic branch, while blocking the muscarinic receptor of the sphincter muscles allows only contributions of the sympathetic branch. They showed that tropicamide (a parasympathetic ANS activity blocker) eliminated differences in the task-evoked response, while dapiprazole (a sympathetic ANS blocker) merely decreased pupil size while maintaining the phasic task-evoked response. It could therefore be especially important to guard against drugs that affect the parasympathetic nervous system. Common muscarinic antagonists that are used to treat Parkinson’s disease, peptic ulcers, incontinence, and motion sickness are all likely to inhibit the pupillary response.

Caffeine

Pupil dilations are larger after ingestion of caffeine (Abokyi, Owusu-Mensah, & Osei, 2017). Caffeine has been shown to affect the pupil response for up to about 6 h, particularly in people who do not routinely consume it (Wilhelm, Stuiber, Lüdtke, & Wilhelm, 2014).

Eye diseases

Some conditions might affect the biological function or appearance of the eye, such as cataracts (which lower the contrast between iris and pupil), nystagmus, amblyopia (“lazy eye”), and macular degeneration.

Anything that affects visual fixation and tracking ability

Tracking can be compromised by attention deficits or severe fatigue. Tracking quality is sometimes affected by hard contact lenses and glasses (especially bifocals, where refraction will change depending on eye position with respect to the lenses), although glasses do not always pose a problem and can usually be removed in situations where there are no visual stimuli.

Head injury or any history of neurological problems

These issues can affect gaze stability, congruence of eye movements (Samadani et al., 2015), and pupil dilation (Marmarou et al., 2007).

General hearing ability (avoiding floor-level intelligibility)

Participants who are unable to complete a task successfully will likely show reduced pupil dilation, because they might be more likely to abandon effort on the task.

Native language

When completing a task in a nonnative language, greater pupil dilation is observed, and some effects of language processing will deviate from those observed in native listeners (Schmidtke, 2014).

Fatigue

Although fatigue is obviously related to the study of effort, it can actually be a barrier to measurement of short-term task-evoked pupil dilation. Fatigued listeners will show a weakened pupillary response. McGinley et al. (2015) provide a clear and physiologically grounded explanation for the preference to test participants in a quiet and alert state, avoiding both fatigued and hyper-aroused states. Task-induced fatigue might be reflected in the baseline pupil size over the course of the experiment (i.e., a lower baseline toward the end of the experiment). Chronic fatigue (need for recovery) affects the pupillary response as well (see Wang et al., 2018).

Measuring Pupil Dilation in Children

Relatively few published studies have used pupillometry to measure listening effort in children. Of those that have (e.g., Johnson et al., 2014; McGarrigle, Dawes, Stewart, Kuchinsky, & Munro, 2017b; Steel, Papsin, & Gordon, 2015), the age range appears to begin at 7 or 8 years. It is possible that the intentional attention mechanisms employed by adults and older school-aged children reflect cognitive activity that would simply not be invoked reliably by younger children. Furthermore, logistical constraints such as stabilized-head position, sustained attention, and patience for a very plain unstimulating visual field would certainly make pupil measurements in young children very difficult, even if their cognition and language skills were mature. It is therefore possible that pupillometry is not the ideal effort measurement to use with very young children. Later, we describe some studies of school-aged children and some related work on pupillometry in other young populations.

McGarrigle et al. (2017b) tested school-aged children (aged 8–11 years) and successfully measured differences in pupil dilation related to SNR. Notably, these SNRs did not produce differences in intelligibility, suggesting that children, like adults, can achieve the same score using different amounts of effort. Furthermore, behavioral response time did not distinguish the two listening conditions. McGarrigle et al.’s data demonstrate that it is feasible to use pupillometry for children of an age where attention and engagement are dependable, for at least 40 minutes. Incidentally, measurements in school-aged children with hearing loss might be

Johnson et al. (2014) measured pupil dilation in children aged 7.5 to 14 years and obtained results that indicated reliable differences between children and adults on a short-term memory overload task. Specifically, dilation magnitude grew as memory demands increased, up to a plateau; adults’ dilations continued to grow up to a higher plateau (eight items), while children showed a reversal of dilation patterns after a smaller number of items (six) had been reached.

Steel et al. (2015) measured pupil dilation in 11- to 15-year-old children, but the experimental design was better suited to tests of binaural fusion than to pupillometry. They measured peak pupil diameter in a 2-s window following stimulus onset, in an experiment where average reaction times ranged from 2 to 3.5 s, possibly excluding the true peak dilation, which likely occurred after the end of that 2-s measurement window. Correlations between binaural hearing and pupil dilation in that study were reported but appear to be driven by overall group differences rather than within-group binaural hearing ability, and they were also affected by ceiling effects and general effects of age.

Pupillometry in children younger than 8 years is rare and is typically used for purposes other than listening effort tasks. Recovery latency of pupil dilations has been used as a biomarker for children at risk for autism spectrum disorder (ASD; Martineau et al., 2011; Lynch, James, & VanDam, 2017). Pupil size was also reported to be a biomarker for ASD by Anderson and Colombo (2009), although that study included a small number of participants, and, despite statistically detectable differences, data for the ASD group fell within the range of the control group.

Measuring pupil diameter in young children during listening tasks is a substantial challenge, for both theoretical and logistical reasons. Changes in pupil size can be measured in 8-month-old infants in reaction to surprising physical events (Jackson & Sirois, 2009), and both 6- and 12-month-old infants show increased pupil dilation to odd social behaviors (Gredebäck & Melinder, 2010). Thus, the pupil response can be measured; for the purposes of this article, the question is whether the assumptions that we make about the nature of language processing and goal-directed task engagement by adults in speech recognition tasks could generalize to very young listeners.

Hardware

Trackers

It is not within the scope of this article to recommend a particular product, especially because products continue to improve each year. Most pupillometry articles in the area of listening effort have used traditional eye trackers that are more commonly used to track eye-gaze direction. They come in many varieties, including remote cameras (that sit on a desk beneath a monitor display), tower stands (which record a reflection of the eyes, akin to a teleprompter in reverse), and eyeglasses outfitted with cameras. Many of these instruments also report an estimate of pupil size, with some degree of error. The quality of the camera and of the software algorithms for calculating pupil size are of extreme importance, for three main reasons. First, the pupil is small, so the amount of noise in the pupil size estimation must be limited. Second, the time it takes for the system to recover from losing track of the pupils (in the case of a blink, or a look off-screen) can result in the loss of valuable data. Finally, a change in pupil size can be indistinguishable from a change in distance to the camera unless head position is stabilized or the distance itself is measured. Trackers that report absolute pupil size (in millimeters) must necessarily complete such a calculation, albeit sometimes without transparency in how it is done. Some trackers instead report pupil size in arbitrary units, akin to the number of pixels that the pupil occupies on a camera image. In addition, while some eye trackers model the rotation of the eye away from center or correct pupil size for gaze position in other ways, other trackers do not, so extra caution (such as applying correction factors; see Brisson et al., 2013; Gagl et al., 2011; Hayes & Petrov, 2016) must be taken when measuring pupil size in experiments that also feature eye movements.
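The distance problem can be illustrated with a pinhole-camera approximation, in which the apparent size of the pupil in the camera image is proportional to its true size divided by its distance from the camera. The Python sketch below rescales apparent size to a common reference distance. It assumes a per-sample distance estimate is available and ignores corneal magnification, viewing angle, and lens distortion, so it is not how any particular tracker performs its calculation; the function name and numbers are hypothetical.

```python
def correct_for_distance(apparent_size, distance_mm, reference_distance_mm):
    """Rescale apparent pupil size to a common reference distance.

    Under a pinhole approximation, apparent size ~ true size / distance, so
    multiplying by distance / reference_distance removes the distance effect.
    Output keeps the input's units (e.g., pixels at the reference distance).
    """
    if reference_distance_mm <= 0:
        raise ValueError("reference distance must be positive")
    return [s * d / reference_distance_mm for s, d in zip(apparent_size, distance_mm)]


# Hypothetical: the same true pupil appears larger when the participant leans
# from 600 mm to 540 mm; after correction, both samples agree.
raw = [30.0, 30.0 * 600.0 / 540.0]
corrected = correct_for_distance(raw, [600.0, 540.0], reference_distance_mm=600.0)
```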

Clinical pupillometers

Hand-held clinical pupillometers—as used for neurology, ophthalmology, and emergency medicine—have the advantage of being user friendly (via automated routines), less expensive than some full-fledged video-based eye trackers, and designed specifically for accuracy in measuring pupil size. However, they might not have been designed for research, which could result in limitations on recording time, lack of connectivity with popular experiment delivery software, or lack of synchronized event tagging.

Chin rests or other head stabilizers

Pupil size can be estimated more reliably if the distance from the eyes to the camera remains constant (particularly for trackers that do not automatically attempt to correct for distance). It is customary to use a stabilizer such as a chin rest, akin to what could be used at an optometrist’s office. However, chin rests are not always comfortable for participants, especially when they are giving verbal responses or if the chin rest requires them to lean forward unnaturally. An alternative solution is to have the participant lean back so that her or his head is stabilized against the top of a sturdy, stationary chair.

Seating

Sturdy stationary (not rolling) chairs will make measurement easier. The participant’s comfort should be taken into consideration even more than for a traditional psychoacoustic experiment, because the act of shifting posture or tensing muscles will show up as changes in pupil dilation. A height-adjustable chair akin to a hairdresser’s chair (or height-adjustable table for the camera) is advisable to maintain a constant viewing angle and comfort for all participants. There are also chairs used for EEG recordings that have adjustable headrests to avoid muscle tension in the neck.

Room lighting

Light should be homogeneous throughout the room so that if a participant looks around, it will not cause a reflexive change in pupil size in response to changing luminance on the retina. Soft lighting is best, especially if it is adjustable for individuals (Zekveld et al., 2010). A range of 10 to 200 lux can be used, with a median of around 30 lux for older adults and around 110 lux for younger adults, depending on the dynamic range of their pupils. As a reference, typical office lighting is around 300 to 500 lux.

Brighter luminance produces more reliable dilations than dark settings (Steinhauer et al., 2004; Wang et al., 2018), but caution is warranted because overly bright lighting (especially projected directly at a participant from a computer screen) might also cause discomfort and a high number of blinks. A moderate, mid-range gray background on a computer monitor or a plainly lit wall target avoids these issues of discomfort.

Handling of Raw Data

Sampling rate

The pupil changes size slowly, so a sampling frequency of 30 Hz or higher is sufficient. Very high sampling frequencies (e.g., above 120 Hz) of some trackers would be beneficial for studies of precise saccade timing but are not necessary for most pupillometry studies.

Data transfer

To ensure that stimulus timing landmarks are recorded and synchronized with corresponding timestamps in the eye-tracking or pupillometry data, the experimenter should confirm that timing will not be compromised when a single computer handles all of the processing. Two-computer solutions use physically separate computers for experiment delivery and tracker data collection, with Ethernet or USB links for data transfer. However, timing is not as delicate an issue as it is for other methods such as EEG, so single-computer pupillometry solutions can be sufficient: pupillary responses are slow enough that a drift of 30 ms (less than the duration of one sample at 30 Hz, about 33 ms) should not affect the quality of the data.

Monocular and binocular tracking

The pupils should show congruent dilation patterns (Purves et al., 2004), so binocular tracking might not offer any substantial advantage over monocular tracking, apart from the opportunity to pick the eye that produces the fewest missing data samples.
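If both eyes are recorded, choosing the eye with fewer missing samples is straightforward. A minimal Python sketch, assuming missing samples are coded as None or NaN; the function names are illustrative, and making the choice once per participant (rather than per trial) is an assumption here, intended to avoid mixing eyes within one person's data.

```python
import math


def count_missing(samples):
    """Number of samples coded as missing (assumed None or NaN)."""
    return sum(1 for v in samples if v is None or (isinstance(v, float) and math.isnan(v)))


def pick_eye(left, right):
    """Return "left" or "right", whichever recording has fewer missing samples.

    Ties go to the left eye. `left` and `right` are the concatenated samples
    from all of a participant's trials.
    """
    return "left" if count_missing(left) <= count_missing(right) else "right"


choice = pick_eye([4.0, None, 4.1, float("nan")], [4.2, 4.3, None, 4.4])  # "right"
```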

Stimulus Timing

Of critical importance is waiting for the pupil to return to baseline size before the next trial. The duration of this interval will depend on the experimental task. Heitz et al. (2008) found that larger dilations on difficult test trials affected baseline levels for subsequent trials, even with interstimulus intervals of 3.5 s. Sentence repetition tasks might require nearly 4 to 6 s following the end of the participant’s verbal response (discussed in further detail later).

Response Timing

The pupillary response takes roughly 1 s to emerge, with estimates ranging from roughly 500 ms to 1.5 s (Hoeks & Levelt, 1993; Verney, Granholm, & Marshall, 2004). McGinley et al. (2015) found that the derivative of the pupil was correlated with the pupil diameter 1.3 ± 0.7 s after corresponding cortical oscillations. Peak timing in sentence-recognition experiments appears to follow the same time course, with the peak typically emerging 0.7 to 1.2 s after stimulus offset (Winn, 2016; Winn et al., 2015). Systematically longer stimuli elicit longer latency to peak in situations where duration differences are known by the participant before the trials begin (Borghini, 2017; Winn & Moore, 2018).
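Because the peak tends to arrive after stimulus offset, the window used to search for it should extend well past the end of the stimulus. A minimal Python sketch of peak-and-latency extraction, assuming a baseline-corrected trace, a known sampling rate, and a known onset index; the function name is illustrative, and the 2-s post-offset margin is a hypothetical default chosen to cover the 0.7 to 1.2 s latencies cited above, not a published standard.

```python
def peak_after_offset(trace, fs_hz, onset_index, offset_s, extra_s=2.0):
    """Return (peak value, peak latency re: stimulus offset in seconds).

    trace: baseline-corrected pupil samples for one trial (or a trial average).
    onset_index: sample index of stimulus onset within `trace`.
    offset_s: stimulus duration, i.e., offset time re: onset in seconds.
    extra_s: how far past offset the search window extends (assumed default).
    """
    stop = min(len(trace), onset_index + round((offset_s + extra_s) * fs_hz))
    if stop <= onset_index:
        raise ValueError("empty search window")
    window = trace[onset_index:stop]
    i_peak = max(range(len(window)), key=window.__getitem__)
    return window[i_peak], i_peak / fs_hz - offset_s


# Hypothetical 10-Hz trace: 2-s stimulus, dilation peaking 1.1 s after offset.
trace = [0.0] * 50
trace[31] = 0.5
peak, latency = peak_after_offset(trace, fs_hz=10, onset_index=0, offset_s=2.0)
```

Setting `extra_s=0.0` in this example stops the search at stimulus offset and misses the peak entirely, which mirrors the concern raised above about analysis windows that end too early.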

What Data to Record

In addition to pupil dilation, the experimenter should record accurate timestamps of the onset and offset of a stimulus, the timing of behavioral response (if any), and the horizontal and vertical gaze positions of the eye. Timestamps will be used to aggregate and align data, and the gaze coordinates can be used to ensure fixation at a target, as well as to covary gaze position with pupil size estimation.
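Aligning the pupil record to those timestamps amounts to cutting a window around each event. A minimal Python sketch, assuming that the tracker's sample timestamps and the event timestamps are on the same clock (in ms) and sorted; the function name and the window lengths in the example are placeholders.

```python
import bisect


def epoch(timestamps_ms, pupil, event_ms, pre_ms, post_ms):
    """Extract samples from event - pre_ms (inclusive) to event + post_ms (exclusive).

    Returns (times re: event in ms, pupil samples). Assumes timestamps_ms is
    sorted ascending and shares a clock with event_ms.
    """
    start = bisect.bisect_left(timestamps_ms, event_ms - pre_ms)
    stop = bisect.bisect_left(timestamps_ms, event_ms + post_ms)
    times = [t - event_ms for t in timestamps_ms[start:stop]]
    return times, pupil[start:stop]


# Hypothetical 100-Hz recording (10-ms samples) with a stimulus onset at 50 ms.
ts = list(range(0, 110, 10))
pupil = [4.0 + 0.01 * k for k in range(len(ts))]
times, seg = epoch(ts, pupil, event_ms=50, pre_ms=20, post_ms=30)  # times: [-20, -10, 0, 10, 20]
```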

Data Processing

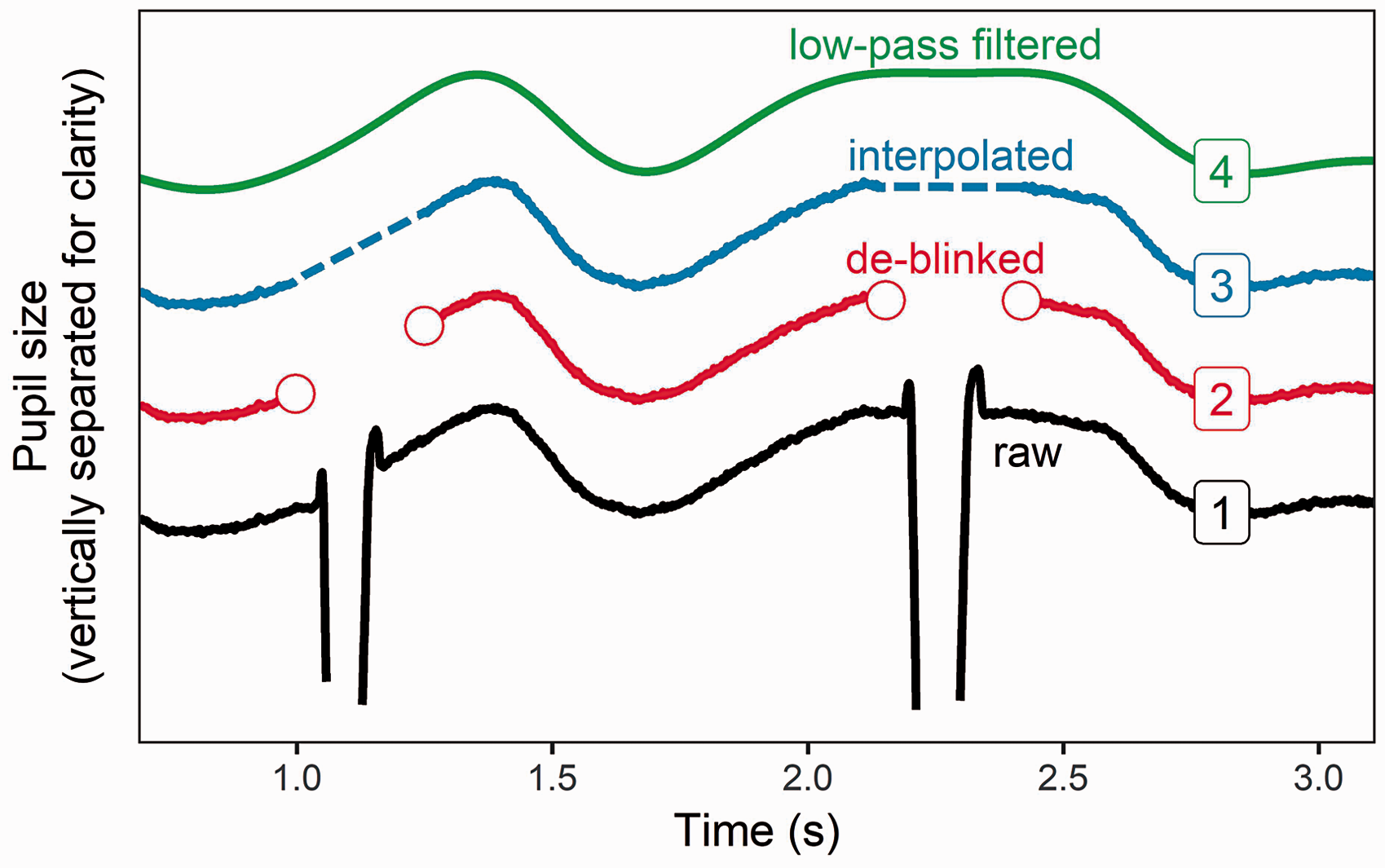

Raw pupil data must be processed in several steps before analysis and visualization. Figure 3 illustrates common steps in treating pupil data, described later.

Sequential steps of data processing. Raw data (black, marked no. 1) contain blinks that appear as transient changes in pupil dilation separated by a blank stretch of missing data. De-blinked data (no. 2, in red) expand the gap of missing data to remove the transient excursions. The gaps are then interpolated (no. 3, in blue; interpolations in dashed lines). Finally, the data are low-pass filtered (no. 4, green).

De-blinking

Klingner et al. (2011) performed a thorough analysis of 20,000 blinks to estimate the expected perturbation of pupil size. They report that the pupil undergoes a very brief dilation of about 0.04 mm just before the blink, followed by a contraction of about 0.1 mm and then a gradual recovery to preblink diameter over the next 2 s. They also reported that statistical results did not change when a blink correction algorithm was incorporated. It is therefore likely that interpolating across blinks is a safe practice that will not affect results in any meaningful way. However, we recommend that interpolation begin roughly 50 ms before the blink and end at least 150 ms after it, in order to avoid task-uncorrelated high-frequency changes in pupil size. Note that when blinks make up a large percentage of a pupil trace (>15%–25% of the relevant recorded time), interpolation might result in a flat trace that no longer shows a pupil dilation response. Such traces should not be used for further analysis.
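The recommended padding and interpolation can be sketched in Python as follows. The 50-ms and 150-ms margins come from the text; coding missing samples as None or NaN, using linear interpolation, holding the nearest valid value at trial edges, applying the rejection threshold (0.25) to the padded gaps, and the function name are assumptions of this sketch.

```python
import bisect
import math


def deblink(samples, fs_hz, pre_ms=50, post_ms=150, max_missing=0.25):
    """Pad missing stretches, then fill them by linear interpolation.

    Returns the cleaned trace, or None when the padded gaps cover more than
    max_missing of the trial (such traces should be excluded).
    """
    n = len(samples)
    missing = [v is None or (isinstance(v, float) and math.isnan(v)) for v in samples]
    pre = round(pre_ms * fs_hz / 1000)
    post = round(post_ms * fs_hz / 1000)
    padded = missing[:]
    for i, is_gap in enumerate(missing):
        if is_gap:  # widen each gap by `pre` samples before and `post` samples after
            for j in range(max(0, i - pre), min(n, i + post + 1)):
                padded[j] = True
    valid = [i for i in range(n) if not padded[i]]
    if sum(padded) / n > max_missing or not valid:
        return None
    out = []
    for i in range(n):
        if not padded[i]:
            out.append(samples[i])
            continue
        k = bisect.bisect_left(valid, i)
        left = valid[k - 1] if k > 0 else None
        right = valid[k] if k < len(valid) else None
        if left is None:     # gap at trial start: hold the first valid value
            out.append(samples[right])
        elif right is None:  # gap at trial end: hold the last valid value
            out.append(samples[left])
        else:
            frac = (i - left) / (right - left)
            out.append(samples[left] + frac * (samples[right] - samples[left]))
    return out


# Hypothetical 100-Hz trace: a slow drift with a 50-ms blink at samples 100-104.
trace = [4.0 + 0.001 * i for i in range(200)]
for i in range(100, 105):
    trace[i] = None
clean = deblink(trace, fs_hz=100)  # samples 95-119 are refilled along the drift
```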

Low-Pass Filtering

Klingner et al. (2011) analyzed binocular pupil measurements and found that changes faster than 10 Hz are uncorrelated across the eyes, thus justifying low-pass filtering at 10 Hz. High sampling rates are therefore not essential for pupillometry. However, it would be advisable to maintain a sampling rate high enough to distinguish between fast and slow pupil responses (in terms of derivative or rate), as they have been shown to be driven by different neural systems (Reimer et al., 2016). Numerous studies report using an n-point moving-average (smoothing) filter in place of an explicit low-pass filter.
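An n-point moving average of the kind mentioned above can be sketched in Python as follows. One way to relate n to the 10-Hz criterion (an assumption of this sketch, not a rule from the cited work) is that an n-point boxcar completely removes sinusoids at fs/n and its multiples below the sampling rate, so n = fs/10 places its first null at 10 Hz. A boxcar is a gentle filter with sidelobes above that frequency, not a sharp low-pass cutoff; the function names are illustrative.

```python
import math


def moving_average(samples, n):
    """Centered n-point moving average; near the edges, fewer samples are averaged."""
    half = n // 2
    out = []
    for i in range(len(samples)):
        lo = max(0, i - half)
        hi = min(len(samples), i - half + n)
        window = samples[lo:hi]
        out.append(sum(window) / len(window))
    return out


def points_for_null(fs_hz, null_hz=10.0):
    """Window length whose first spectral null falls at (about) null_hz."""
    return max(1, round(fs_hz / null_hz))


# A 10-Hz sinusoid sampled at 50 Hz is removed by a 5-point average (away from
# the edges), while a constant level passes through unchanged.
fs = 50
n = points_for_null(fs)  # 5
wave = [math.sin(2 * math.pi * 10 * k / fs) for k in range(50)]
smoothed = moving_average(wave, n)
```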

Baseline Correction

The most common method of quantifying pupil dilation is not to report absolute pupil size but instead to report

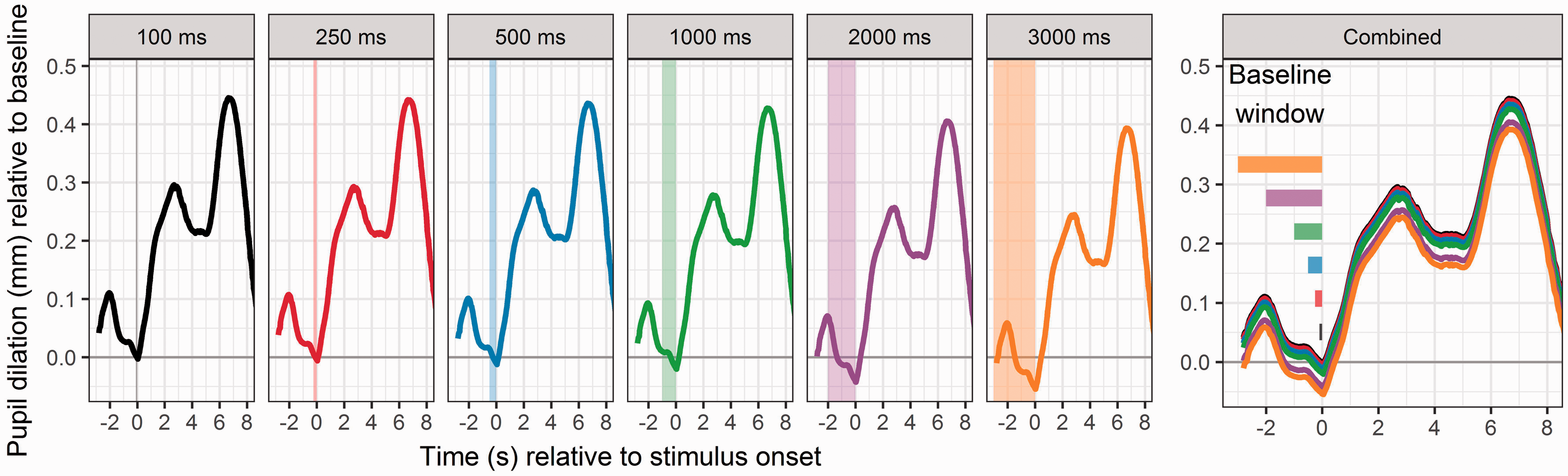

Duration of baseline intervals ranges from 100 ms (Karatekin et al., 2004) to 2 s (Ayasse, Lash, & Wingfield, 2017). Figure 4 illustrates how variation in the absolute baseline duration should play no substantial role in reporting pupil dilation. For baseline durations extending from 100 to 3000 ms, the baseline-corrected data are virtually identical. However, some factors probably contribute to the common practice of using 1 s. First, it is long enough that a single blink would not eliminate all data from the baseline window. Second, it is short enough to minimize the influence of pupil dilations from a previous trial, as long as the intertrial interval is sufficient.

Different baseline intervals end at the onset of the stimulus and extend backwards by variable durations (highlighted in each panel by a shaded vertical area). Comparison of the resulting baseline-corrected data is shown on the far-right panel, revealing negligible differences across baseline durations.

Baseline is typically computed for each trial, rather than for a whole test session, since the baseline level typically will drift downward over the course of a session (which, when using a single baseline average, would result in underestimation of dilations for early trials, and overestimation of dilation for late trials). To avoid single-trial erratic deviations in baseline that are unrelated to the stimulus (e.g., random large excursions in baseline size, or a long blink), one could also compute each trial’s baseline as a rolling average over a number of adjacent trials.
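Per-trial baselines and the rolling alternative can be sketched in Python as follows. The sketch assumes missing samples are coded as None, uses the common 1-s window ending at stimulus onset, and centers the rolling average on each trial; the function names and window sizes are illustrative choices.

```python
def trial_baseline(trace, onset_index, fs_hz, duration_s=1.0):
    """Mean of valid samples in the window that ends at stimulus onset."""
    n = max(1, round(duration_s * fs_hz))
    window = [v for v in trace[max(0, onset_index - n):onset_index] if v is not None]
    if not window:
        raise ValueError("no valid samples in the baseline window")
    return sum(window) / len(window)


def rolling_baselines(baselines, k=5):
    """Replace each trial's baseline with the mean over up to k neighboring trials.

    The window is centered on the trial (k should be odd) and shrinks at the
    start and end of the session.
    """
    half = k // 2
    out = []
    for i in range(len(baselines)):
        neighbors = baselines[max(0, i - half):i + half + 1]
        out.append(sum(neighbors) / len(neighbors))
    return out


# Hypothetical 4-Hz trial with onset at sample 6: the 1-s baseline uses samples 2-5.
b = trial_baseline([1.0, 1.0, 2.0, 2.0, None, 2.0, 5.0, 5.0], onset_index=6, fs_hz=4)  # 2.0
rolled = rolling_baselines([1.0, 2.0, 3.0, 10.0, 5.0], k=3)  # [1.5, 2.0, 5.0, 6.0, 7.5]
```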

To elicit a more consistent baseline level across trials within a participant, one could use consistent timing landmarks and alerting stimuli to signal for trial onsets. For experiments of speech in noise, this could mean having a consistent duration of noise before stimulus onset so that the participant knows when to listen. For speech in quiet, Winn and Moore (2018) have used an alerting beep denoting trial onset, with the intention of avoiding any

Baseline pupil size, although not reported in many published studies of listening effort, has been a subject of investigation. Some authors have suggested that tonic pupil size is a measure of global arousal levels (Granholm & Steinhauer, 2004), with alternative viewpoints framing tonic pupil size as an indicator of attentional capacity (Kahneman, 1973). Based on a review of various physiological studies done with animals, Laeng et al. (2012) suggest that tonic activity (i.e., not stimulus-time-locked) of the locus coeruleus (LC; indexed by pupil dilation, to be discussed further later) indicates the likelihood of abandoning a current task for another, while phasic activity signals the processing of attended task-relevant events. Expanding and updating this idea, physiological work with mice (McGinley et al., 2015) suggests that tonic pupil size is related to moment-to-moment readiness for sensory detection, with intermediate sizes measured during trials with better task performance and reduced response variability. Conversely, very small tonic size was interpreted as a sign of drowsiness, and very large tonic size was also found to be suboptimal, consistent with hyperactivity that would cause asynchronous cortical activity.

Figure 5 illustrates two baseline correction methods that have been used in the literature: absolute subtraction and proportional transformation (which can be considered an additional step following baseline subtraction). The top panels show changes in raw pupil size for two hypothetical participants. Participant 1 shows larger pupil size and a greater apparent difference between dilations in response to two different stimulus conditions (indicated by line color). For Participant 2, the condition effect appears to be absent, but it is simply hidden by baseline differences. When baseline size is subtracted to yield an absolute change in pupil size, Participant 1 retains a larger overall change in dilation, but the difference between conditions now becomes apparent for Participant 2 as well. When proportional differences relative to baseline are analyzed, the two participants’ responses are transformed to look virtually identical. The consequences of these and other methods should be considered in situations where participants in different comparison groups have different overall dynamic ranges of pupil reactivity or substantial changes in baseline (as a result of, e.g., age differences or testing at different times). It is worthwhile to consider Beatty’s (1982) aforementioned assertion that absolute dilation is independent of baseline size (which would undermine the proportional method), but also to consider data from Piquado et al. (2010), who normalized dynamic range to overcome substantial differences in pupil reactivity across younger and older participants. Systematic study and replication will hopefully identify the optimal way to treat data with variable baseline size.

Illustration of baseline correction and proportionalization of data for two hypothetical individuals, each participating in two testing conditions indicated by line color. Raw pupil size is shown in the upper panels, absolute change (mm) in pupil size in the middle panels, and proportional change in the lower panels. Baseline intervals consisted of the 1 s of data prior to stimulus onset, indicated at time 0.
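The contrast between absolute subtraction and proportional transformation shown in Figure 5 can be reproduced numerically. In this sketch the two participants are invented: Participant 1 has twice the baseline size and twice the dilation of Participant 2, so their absolute changes differ while their proportional changes coincide. Function names are illustrative only.

```python
import numpy as np

def absolute_change(trace, baseline):
    """Baseline-subtracted pupil size, in the input units (e.g., mm)."""
    return np.asarray(trace, dtype=float) - baseline

def proportional_change(trace, baseline):
    """Baseline-subtracted change divided by baseline size (unitless)."""
    return absolute_change(trace, baseline) / baseline

# Hypothetical traces: participant 1 has twice the baseline and twice the dilation.
p1 = np.array([6.0, 6.0, 6.6, 6.9])   # baseline 6 mm
p2 = np.array([3.0, 3.0, 3.3, 3.45])  # baseline 3 mm

abs1, abs2 = absolute_change(p1, 6.0), absolute_change(p2, 3.0)            # final values about 0.9 vs 0.45 mm
prop1, prop2 = proportional_change(p1, 6.0), proportional_change(p2, 3.0)  # both end near 0.15
```

Whether this equivalence is desirable depends on whether one accepts Beatty's (1982) view that absolute dilation is independent of baseline size; the code only makes the arithmetic of each choice explicit.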

Normalization

An equivalent amount of pupil dilation in two participants could be more meaningful for the one whose pupil has a smaller dynamic range. One known contributor to overall dynamic range is aging: older individuals tend to have smaller pupils with a more restricted range of dilation, and their pupils take longer to reach maximum dilation or constriction (Bitsios et al., 1996). In light of such interindividual differences in pupil dynamic range, normalization methods are sometimes applied. Following baseline correction (e.g., subtraction of baseline level), local deviation from baseline can then be expressed in numerous ways, including percent or proportional change from baseline (Hess & Polt, 1964; Johnson et al., 2014), percent change of average experimental trial values versus average control trial values (Payne, Parry, & Harasymiw, 1968), within-trial mean scaling (Kuchinsky et al., 2013), grand-mean scaling (Engelhardt et al., 2010),

Each of these methods has advantages that might suit some experimenters’ needs. For example, the percent-of-range and

Figure 6 illustrates a hypothetical application of Piquado et al.’s (2010) method, in which change in pupil size is expressed as a proportion of the full dynamic range elicited by the pupillary light reflex. The apparently larger evoked pupil dilation in “Participant 3” is rendered equivalent to that obtained in “Participant 4”; twice the change was observed for a pupil with twice the dynamic range. An alternative method has been used by Winn (2016) and Winn and Moore (2018), who compared the pupil dilation in one condition to the peak dilation obtained in a reference condition. The proportional change between the two conditions was considered to be normalized within individuals and therefore free from individual differences in pupillary reactivity. An intriguing area for future research could be to create a control task that determines the cognitive dynamic range of the pupil (e.g., a working memory task ranging from one item up to cognitive overload). The pupil response could then be reported as a percentage of this

Different amounts of change in pupil dilation for two hypothetical individuals who have different dynamic ranges of pupil size. In each panel, the difference in peak pupil dilation occupies the same proportion of the overall dynamic range.
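Both normalization ideas above reduce to simple ratios. The sketch below assumes that each participant's dynamic range is summarized by a smallest pupil size (under bright light) and a largest (in darkness); how those extremes are measured is an assumption here and may differ from the protocol of Piquado et al. (2010). The reference-condition ratio is a simplified reading of the Winn (2016) approach. All names and numbers are hypothetical.

```python
import numpy as np

def percent_of_range(change, min_size, max_size):
    """Express baseline-corrected change as % of the light-reflex dynamic range.

    min_size / max_size: smallest (bright light) and largest (darkness) pupil
    sizes for this participant; the measurement protocol is an assumption.
    """
    return 100.0 * np.asarray(change, dtype=float) / (max_size - min_size)

def relative_to_reference(peak_condition, peak_reference):
    """Peak dilation in a test condition as a proportion of a reference condition's peak."""
    return peak_condition / peak_reference

# Hypothetical "Participant 3": 0.4 mm change, range 2-6 mm (4 mm wide).
# Hypothetical "Participant 4": 0.2 mm change, range 3-5 mm (2 mm wide).
p3 = percent_of_range(0.4, 2.0, 6.0)   # about 10 % of range
p4 = percent_of_range(0.2, 3.0, 5.0)   # also about 10 % of range
rel = relative_to_reference(0.3, 0.6)  # test-condition peak is half the reference peak
```

As in Figure 6, twice the change in a pupil with twice the range yields the same normalized value.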

Although there is no consensus gold standard method of normalization, this problem has been commonly addressed (or rather

Contrary to the aforementioned attempts to normalize differences in pupil reactivity, these differences might themselves be a meaningful outcome measure. For example, Koelewijn, van Haastrecht, and Kramer (2018) found that participants with a history of traumatic brain injury showed reduced phasic pupil dilations, despite reporting generally higher subjective effort ratings. In addition, peak pupil dilation correlated negatively with participants’ speech reception threshold, suggesting a decrease in phasic activity with a decrease in hearing acuity. Another example is given by Jensen et al. (2018), who examined the impact of tinnitus and a noise-reduction scheme on the pupil response. They found that participants with tinnitus generally reported a greater need for recovery and showed smaller task-evoked pupil dilations (in contrast to the hypothesis that greater effort produces greater dilation). The results of these studies suggest that there is a possibility of interpreting reduced pupil dilation not merely as reduced effort, but potentially as lower

Baseline Analysis

Numerous studies propose that the baseline (or

Data Alignment

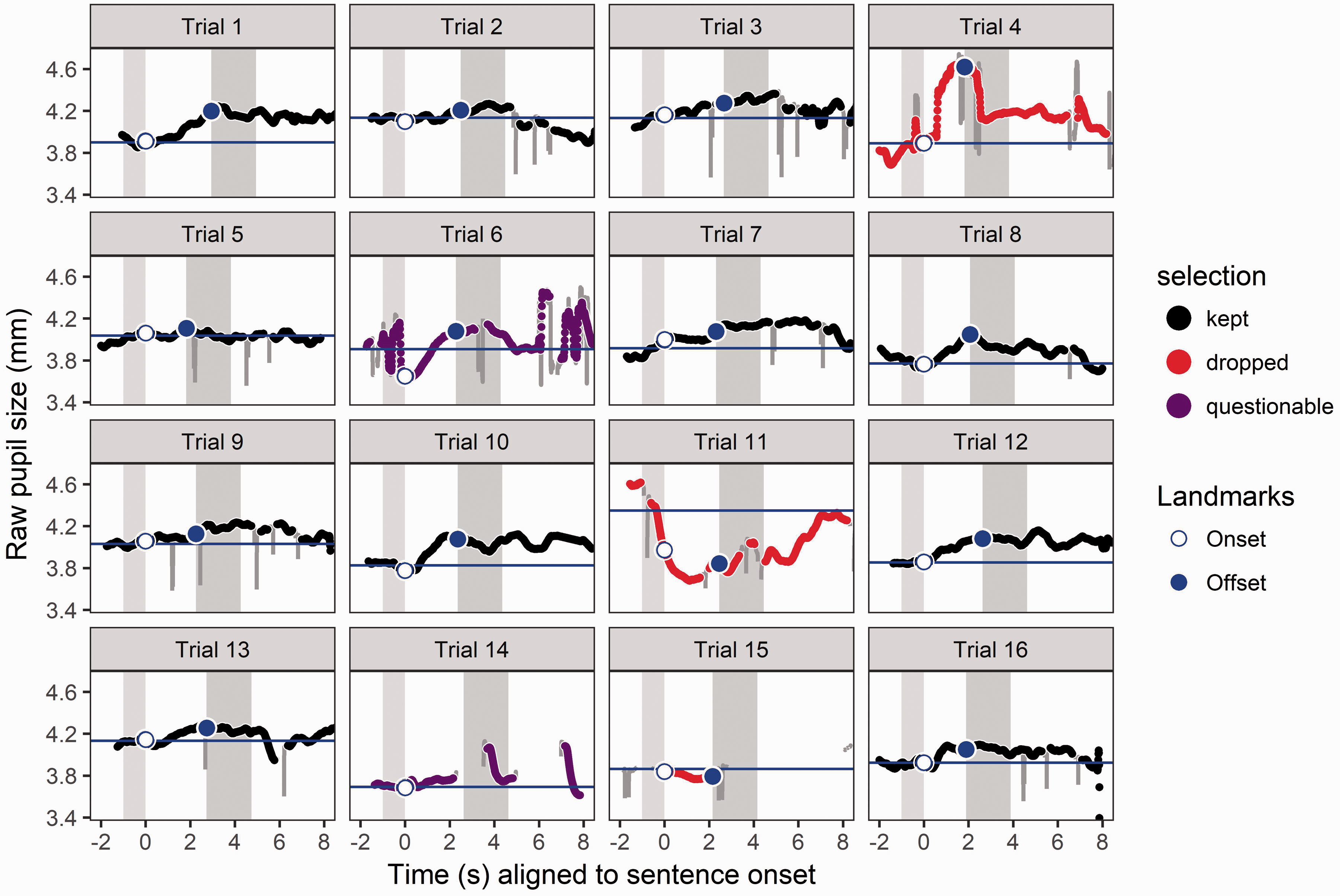

Time alignment is standard in pupillometric analysis, because task-irrelevant changes in pupil size are likely to average out across multiple trials, leaving only the evoked changes relevant to the experiment. In cases where stimuli are of variable duration (like sentences in a standard corpus), alignment can be done by onset or offset. Alignment by stimulus onset has been used to examine the effect of intelligibility (Zekveld et al., 2010) and masker type (Koelewijn et al., 2012) on pupil size. Klingner et al. (2011) aligned their data to stimulus offset in order to examine peak pupil dilation resulting from stimuli of systematically longer durations. Winn et al. (2015) and Winn (2016) aligned data to stimulus offset to separate listening responses from speaking responses, and serendipitously found temporally specific effects of prolonged effort following challenging stimuli, which would otherwise have been partially obscured by onset alignment. Figure 7 shows a series of trials with stimulus onset and offset marked, and Figure 8 shows the aggregate of these trials, aligned by both onset and offset. There is arguably a more distinct onset slope for the onset-aligned data, and a more distinct shape of dilation at offset for the offset-aligned data, but the data take the same general shape regardless of alignment position.
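Onset and offset alignment differ only in which event index is used to cut each trial. A minimal sketch, assuming each trial is a 1-D array sampled at a known rate and that the sample index of stimulus onset and offset is known for every trial; samples falling outside a trial's recording are padded with NaN so that `np.nanmean` can average trials of unequal length. The function name and toy values are hypothetical.

```python
import numpy as np

def align_trials(trials, event_samples, fs, pre_s, post_s):
    """Epoch variable-length trials around one event per trial.

    trials:        list of 1-D arrays (one per trial), sampled at fs Hz.
    event_samples: index of the alignment event (stimulus onset or offset).
    pre_s, post_s: seconds of data to keep before/after the event.
    Samples outside a trial's recording are filled with NaN.
    Returns (times, epochs) with epochs shaped (n_trials, n_window).
    """
    pre, post = int(round(pre_s * fs)), int(round(post_s * fs))
    epochs = np.full((len(trials), pre + post), np.nan)
    for i, (x, ev) in enumerate(zip(trials, event_samples)):
        start = ev - pre                       # trial index at epoch position 0
        lo, hi = max(0, start), min(len(x), ev + post)
        epochs[i, lo - start:hi - start] = x[lo:hi]
    times = np.arange(-pre, post) / fs
    return times, epochs

# Toy example: two traces of unequal length, both with onset at 1 s,
# but with stimuli lasting 1.5 s and 2.5 s (so offsets differ).
fs = 10
trials = [np.arange(40.0), np.arange(50.0)]
onsets, offsets = [10, 10], [25, 35]
t_on, by_onset = align_trials(trials, onsets, fs, pre_s=1.0, post_s=3.0)
t_off, by_offset = align_trials(trials, offsets, fs, pre_s=2.0, post_s=1.0)
mean_onset = np.nanmean(by_onset, axis=0)    # onset-aligned grand average
mean_offset = np.nanmean(by_offset, axis=0)  # offset-aligned grand average
```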

Sixteen individual trials of pupil data, with the baseline period marked as a thin gray vertical bar and the retention interval marked as a thick gray vertical bar. The stimulus is played between these two bars. The baseline level for each trial is marked as a horizontal blue line. Lines plotted in color are low-pass filtered data overlaid on gray raw data, which include transient vertical displacements that indicate blinks. Data in red are marked to be dropped from the data set because of excessive data loss or contamination (excessive non-task-evoked dilation) during the baseline interval.

Aggregation of trials displayed in Figure 7, excluding “dropped” trials. The left and right panels display data aligned to stimulus onset and offset, respectively. The thin gray bar (to the left in each panel) corresponds to the baseline interval, and the thicker gray bar (to the right) corresponds to the retention interval. Because stimuli were of variable duration, average values were used for the offset time in onset-aligned data, and for the onset time in offset-aligned data.

On a technical note, timestamps can be subject to timing drift or numerical rounding. For example, with 60-Hz data collection the nominal sampling interval is 16.67 ms, so rounded timestamps advance in steps of either 16 or 17 ms, and the same ordered sample (i.e., 1st, 2nd, 3rd, etc.) can carry different timestamp values in different trials. To ensure that such samples are numerically aligned when aggregating across trials, the experimenter might need to transform the timing data so that timestamps are consistent across trials.
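One way to make timestamps consistent is to snap each one to the nearest sample index on an ideal grid and then recompute the time from that index. This sketch assumes timestamps in milliseconds, a known nominal sampling rate, and negligible cumulative clock drift within a trial; if the tracker's clock drifts appreciably, rounding to the nominal grid could misassign samples. The function name is hypothetical.

```python
import numpy as np

def regularize_timestamps(timestamps_ms, fs=60.0):
    """Map rounded/jittery tracker timestamps onto an exact sampling grid.

    Each timestamp is converted to the nearest sample index relative to the
    first sample of the trial, then back to time, so that the k-th sample has
    the same time value in every trial. Assumes timestamps are in ms.
    """
    t = np.asarray(timestamps_ms, dtype=float)
    idx = np.rint((t - t[0]) * fs / 1000.0).astype(int)
    return idx, idx * 1000.0 / fs

# Two trials whose rounded 60-Hz timestamps differ by 1 ms at some samples.
idx_a, t_a = regularize_timestamps([0, 16, 33, 50, 66])
idx_b, t_b = regularize_timestamps([0, 17, 33, 50, 67])
```

Because the sample index is derived from elapsed time rather than from position in the array, a dropped sample shows up as a skipped index instead of silently shifting later samples.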

Identifying and Dropping Contaminated Trials

Trial-level data that are contaminated (e.g., by gross excursions during baseline) should be removed from analysis using automatic rules that are consistent and motivated by realistic constraints of task-evoked pupil responses. There is no substitute for visualizing and becoming familiar with the

Often, the first few trials are excluded from analysis because the pupillary responses look considerably different from those in the rest of the block (Wendt et al., 2018). When pupil dilation slopes steeply downward during stimulus playback, it is worth considering dropping the trial, because the “peak” dilation will likely be more than three standard deviations from the mean. Figure 7 illustrates an example of this pattern, as well as some other examples of contaminated or mis-tracked trials that could be dropped from a data set. We recommend being very conservative with dropping trials, especially if there is no obvious artifact, such as an eye movement or some other identifiable event, that explains a deviating response. Any remaining unexplained noise should be addressed by event-related averaging, as for other evoked responses.

Just as for other evoked-response methods, outliers should be detected and removed from the data set. In Figure 7, data for Trial 11 are marked to be dropped because a brief, excessive, event-unrelated dilation during the baseline drives all subsequent data downward after baseline correction. This contamination can be detected by identifying the average or peak dilation as an outlier, or by detecting deviation of the baseline value relative to adjacent baselines. Trial 15 is dropped because it contains a large amount of missing data due to blinks or tracker error. Trial 4 is marked to be dropped because of excessive distortion precisely during the period that would carry valuable dilation information. The range and rate of dilation observed in this trial are uncharacteristic of task-evoked changes and would likely distort the aggregated data. Trial 6 is marked as “questionable” because it contains excessive distortion during the baseline (and later), which propagates forward to affect the calculation of subsequent data samples in the trial (just as for Trial 11). Standard criteria (such as the absolute value of peak dilation or baseline level lying more than 3 standard deviations from the mean) could help to identify such situations. Measures of dissimilarity between individual trials might be applied in the future to characterize such deviant trials and evaluate them for contamination.
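Two of the automatic rules mentioned above (excessive missing data, and baseline or peak dilation more than 3 standard deviations from the mean) can be combined into a single flagging step. The thresholds below are illustrative defaults, not recommended values, and the toy data are invented rather than taken from Figure 7. Note that with few trials a single outlier cannot exceed 3 SD (the maximum attainable z-score is (n − 1)/√n), so such rules need enough trials per condition to be usable.

```python
import numpy as np

def flag_trials(trials, baselines, peaks, max_missing=0.5, z_crit=3.0):
    """Return a boolean array marking trials to drop.

    A trial is flagged if (a) its proportion of missing (NaN) samples exceeds
    max_missing, or (b) its baseline or peak dilation lies more than z_crit
    standard deviations from the mean across trials.
    Thresholds are illustrative assumptions, not recommended values.
    """
    trials = np.asarray(trials, dtype=float)
    too_missing = np.mean(np.isnan(trials), axis=1) > max_missing

    def is_outlier(values):
        v = np.asarray(values, dtype=float)
        sd = np.nanstd(v)
        if sd == 0:                      # identical values: nothing is an outlier
            return np.zeros(v.shape, dtype=bool)
        return np.abs(v - np.nanmean(v)) / sd > z_crit

    return too_missing | is_outlier(baselines) | is_outlier(peaks)

# Toy example: 16 trials of 10 samples; trial 14 is mostly missing and
# trial 15 has an unusually large baseline (0-based indices).
trials = np.ones((16, 10))
trials[14, :6] = np.nan
baselines = np.array([4.0] * 15 + [6.0])
peaks = np.linspace(0.30, 0.45, 16)
dropped = np.flatnonzero(flag_trials(trials, baselines, peaks))
```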

Although most of the group-averaged data from publications cited in this article take the general form illustrated in the earlier figures, it is important to note that the morphology of responses will vary substantially across individuals. Lõo, van Rij, Järvikivi, and Baayen (2016) describe distinctive group patterns, including differences in the number of peaks, the timing of peaks relative to trial landmarks, as well as the tendency for pupil size to either rise or

Analysis Techniques and Time Windows

As mentioned earlier, the pupil will start to dilate roughly 0.5 to 1.3 s after stimulus onset, and peak dilation typically occurs roughly 700 ms to 1 s after the end of the stimulus (at least for sentence-recognition experiments, in which stimulus duration was relatively constant and therefore easy for the listener to predict). It is customary to measure peak pupil dilation, peak pupil latency, and mean pupil dilation in a fixed time window around stimulus presentation. The popularity of these measures (and of peak dilation in particular) should not limit experimenters’ creativity in developing measures that relate to specific hypotheses about the timing of the response. Some investigators have also modeled the shape of the pupillary response over time (Kuchinsky et al., 2013; Wendt et al., 2018; Winn, 2016; Winn et al., 2015) using growth-curve analysis (Mirman, 2014) or generalized additive models (van Rij, 2012; van Rij, Hendriks, van Rijn, Baayen, & Wood, 2018). Alternative approaches include principal components analysis, used in pupillometry experiments by Schluroff et al. (1986) with seven factors and Verney et al. (2004) with three factors.
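The three customary window-based measures can be computed from a single baseline-corrected trace or a condition average. This is a sketch under stated assumptions: times are in seconds relative to the chosen landmark (onset or offset), missing samples are NaN and are ignored, and the window bounds, which should follow from the hypothesis rather than from convention, are supplied by the caller. The function name and toy trace are hypothetical, and the function assumes the window contains at least one valid sample.

```python
import numpy as np

def summarize_window(times, trace, window):
    """Peak dilation, peak latency, and mean dilation within a time window.

    times:  sample times in s (relative to stimulus onset or offset).
    trace:  baseline-corrected pupil trace; NaN marks missing samples.
    window: (start, end) in s, inclusive; must contain at least one valid sample.
    """
    times = np.asarray(times, dtype=float)
    trace = np.asarray(trace, dtype=float)
    mask = (times >= window[0]) & (times <= window[1]) & ~np.isnan(trace)
    seg_t, seg_x = times[mask], trace[mask]
    i = int(np.argmax(seg_x))                 # index of the largest dilation
    return {"peak": float(seg_x[i]),          # peak pupil dilation
            "latency": float(seg_t[i]),       # time of that peak
            "mean": float(np.mean(seg_x))}    # mean dilation over the window

# Toy baseline-corrected trace sampled every 0.5 s.
times = np.arange(0.0, 5.0, 0.5)
trace = [0.0, 0.1, 0.2, 0.4, 0.5, 0.45, 0.3, 0.2, 0.1, 0.0]
summary = summarize_window(times, trace, window=(1.0, 3.0))
```

Peak measures are sensitive to single noisy samples, so they are usually computed on low-pass filtered or trial-averaged data rather than raw single trials.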