Abstract

Spatial hearing in children with bilateral cochlear implants (BCIs) was assessed by: (a) comparing localization of stationary and moving sound, (b) investigating the relationship between sound localization and sensitivity to interaural level and timing differences (ILDs/ITDs), (c) evaluating effects of aural preference on sound localization, and (d) exploring head and eye (gaze) movements during sound localization. Children with BCIs (n = 42, MAge = 12.3 years) with limited duration of auditory deprivation and peers with typical hearing (controls; n = 37, MAge = 12.9 years) localized stationary and moving sound with unrestricted head and eye movements. Sensitivity to binaural cues was measured by a lateralization task to ILDs and ITDs. Spatial separation effects were measured by spondee-word recognition thresholds (SNR thresholds) when noise was presented in front (colocated/0°) or with 90° of left/right separation. BCI users had good speech reception thresholds (SRTs) in quiet but higher SRTs in noise than controls. Spatial separation of noise from speech revealed a greater advantage for the right ear across groups. BCI users showed increased errors localizing stationary sound and detecting moving sound direction compared to controls. Decreased ITD sensitivity occurred with poorer localization of stationary sound in BCI users. Gaze movements in BCI users were more random than in controls for stationary and moving sounds. BCIs support symmetric hearing in children with limited duration of auditory deprivation and promote spatial hearing, albeit impaired. Spatial hearing was thus considered to be “emerging.” Remaining challenges may reflect disruptions in ITD sensitivity and ineffective gaze movements.

Introduction

The overall aim of the study was to assess spatial hearing in children using bilateral cochlear implants (BCIs). To do this, the study: (a) compared localization of sound that is moving rather than stationary, (b) investigated how sensitivity to interaural level and timing differences relates to localization of stationary and moving sound, (c) assessed effects of aural preference on sound localization, and (d) explored whether compensatory head and eye (gaze) movements are used during localization of stationary and moving sound.

Approximately 1–2/1000 infants per year are born with bilateral severe-to-profound sensorineural hearing loss (Butcher et al., 2019). Cochlear implants (CIs) have been used as a treatment option to help these children gain access to sound and spoken communication. BCIs have been provided in some countries to promote bilateral hearing which is intended to support spatial hearing (Balkany et al., 2008; Litovsky & Gordon, 2016; Peters et al., 2010; Polonenko et al., 2018). Although there are clear benefits of BCIs over unilateral CIs in children with bilateral hearing loss for speech perception in both quiet and noise, horizontal sound-source localization for stationary sound is poorer in children with BCIs compared to peers with typical hearing (Dorman et al., 2016; Grieco-Calub & Litovsky, 2010; Van Deun et al., 2009a; Zheng et al., 2015) as measured by localization error (root-mean-square error [RMSE] and mean absolute error [MAE]), and spatial discrimination acuity (minimum audible angle). The localization error measures of RMSE and MAE are correlated in children with BCIs (Fischer et al., 2021; Grieco-Calub & Litovsky, 2010; Killan et al., 2019; Van Deun et al., 2009a; Zheng et al., 2015).

One major area of concern is that interaural cues, which provide the basis for sound localization in the horizontal plane, can be distorted in BCI users due to mismatches between the two devices in stimulation level (Fitzgerald et al., 2015; Goupell et al., 2013; Simon et al., 1994; Smith et al., 2004), cochlear place-of-stimulation (Kan et al., 2013), and timing (Angermeier et al., 2021). Interaural timing differences (ITDs) are particularly affected as fine temporal cues are not provided through CIs (van Hoesel et al., 2008). The ITDs that come from the amplitude envelope of incoming sound to BCI processors may be more readily available (Laback et al., 2004; Seeber & Fastl, 2008; van Hoesel, 2004; Warnecke et al., 2020). In support, adults with postlingual onset of bilateral deafness who use BCIs are sensitive to envelope ITDs (Dorman et al., 2014; van Hoesel et al., 2008; van Hoesel & Tyler, 2003). Both children and adults with BCIs have better access to interaural level differences (ILDs; Dorman et al., 2014; Ehlers et al., 2017; Grantham et al., 2008; Salloum et al., 2010) which support sound localization abilities (Klingel & Laback, 2022). In children with immature auditory pathways, mismatched input from each CI could disrupt the potential for integration of this bilateral stimulation thereby compromising binaural processing. This might explain, in part, why children with BCIs show poorer sensitivity to ITD cues than adults with BCIs (Gordon et al., 2008; Grantham et al., 2008; Litovsky et al., 2010; Salloum et al., 2010), poor cortical processing of binaural cues (Easwar et al., 2017a), and poor spatial hearing (Anderson et al., 2022; Grieco-Calub & Litovsky, 2010; Killan et al., 2019; van Hoesel & Tyler, 2003; Zheng et al., 2015).

Poor spatial hearing in children with BCIs could also relate to hearing experience which is associated with age at onset of deafness in each ear, duration of daily BCI use and time since initial activation, as well as self-reported hearing challenges (Asp et al., 2011; Killan et al., 2019; Van Deun et al., 2009a). Age at testing may also be a factor as children younger than 6 years old with typical hearing less accurately localize stationary sound compared to older peers (Grieco-Calub & Litovsky, 2010; Lovett et al., 2012; Van Deun et al., 2009b). Binaural processing could also be altered by an aural preference for the better hearing ear which reflects strengthening of pathways from that side in children with asymmetric hearing loss (Gordon et al., 2013, 2015; Polonenko et al., 2018). These asymmetries have been shown in behavioral measures by better speech perception accuracy when using one CI than the other alone (Finke et al., 2017; Polonenko et al., 2018) and by asymmetric spatial release from masking (SRM; Chadha et al., 2011; Polonenko et al., 2018). Present clinical practice attempts to limit the potential for aural preference to develop by providing hearing in both ears with minimal delay. This means providing BCIs simultaneously at young ages for children with bilateral prelingual deafness, in two sequential surgeries with short interimplant delay or through the use of residual hearing with hearing aids or bimodal devices prior to bilateral cochlear implantation (Gordon et al., 2015). However, self-reports of hearing, such as through the Speech, Spatial, and Qualities of Hearing Scale (SSQ; Galvin & Noble, 2013; Gatehouse & Noble, 2004), confirm that children using BCIs or bimodal devices continue to find spatial listening challenging (Beijen et al., 2007; Lovett et al., 2010; Sparreboom, 2012).

The present study aims to expand on past findings of stationary sound localization in children with BCIs by using a visually hidden speaker which can move anywhere along a horizontal arc of 120° in front of the child and by allowing the child to move their head and eyes without restriction. Previous studies have often used a set number of loudspeakers that are visible to the child (Coudert et al., 2022; Grieco-Calub & Litovsky, 2010; Van Deun et al., 2009a) which could yield lower errors than a more “open-set” paradigm (Killan et al., 2019). In addition, children with BCIs might use strategies of head and eye movements to help sound localization accuracy and spatial acuity (Pastore et al., 2018, 2020). Use of head movements has been shown to support spatial awareness in dynamic listening situations (Brimijoin & Akeroyd, 2014; Pollack & Rose, 1967; Wallach, 1940; Wightman & Kistler, 1999). Children and adults with typical hearing use head movement to expose the better hearing ear upon an acute temporary change in binaural hearing through monaural ear plugging to aid in sound localization (Alemu et al., 2024). How children with BCIs use their head and eyes to localize sound is not known. With poor spatial hearing, they likely rely heavily on visual input to compensate as they do for speech perception (Bergeson et al., 2005; Lachs et al., 2001). Yet, adults with BCIs do not accurately move their heads toward sound sources reflecting impaired (Coudert et al., 2022; Gifford et al., 2018; Grange et al., 2018; Mueller et al., 2014) and/or effortful (Valzolgher et al., 2023) sound localization abilities. Use of head movements has also been shown to reduce errors in localizing moving sound in adults with symmetrical or asymmetrical age-related hearing loss (Gessa et al., 2022).

Whereas deficits in localization of stationary sound in children with BCIs have been examined, their ability to localize moving sound has not been explored. Moving sound is common in typical listening settings but localization of moving sound might use different auditory processes than localization of stationary sound. If so, localizing moving sound could be easier or more challenging than localizing stationary sound. Moving sound might occur over longer presentation periods than stationary sound and include ongoing changes in interaural cues that could increase spatial saliency of the stimuli (Perrott & Marlborough, 1989). If so, there may be greater sensitivity to moving sound. On the other hand, perception of moving sound requires additional processing to interpret its position as it changes with velocity and acceleration and auditory scene analysis to maintain perception of the sound as a single object as it moves (Bednar & Lalor, 2018; Locke et al., 2016; Perrott et al., 1993). Indeed, spatial acuity in adults with typical hearing is lower for moving sounds than stationary sounds (Harris, 1972; Middlebrooks & Green, 1991) and improves when velocity is reduced as measured by perception of the minimum audible movement angle (Chandler & Grantham, 1992; Perrott & Musicant, 1977). If localization of moving sound is more challenging than localization of stationary sound for adults with typical hearing it might be particularly poor in children with BCIs and worse than their localization of stationary sounds. Perception of moving sound is highly disrupted by hearing loss in adulthood (Klishova et al., 2021) and by monaural earplugging in both children and adults with typical hearing (Alemu et al., 2024; Slattery & Middlebrooks, 1994). A recent study also demonstrated that adult BCI users struggled to distinguish sound movement from stationary sound (Moua et al., 2019). Children with BCIs are even more likely to have difficulties localizing moving sound given poor spatial/binaural hearing development (reviewed above).

The following hypotheses were tested in the present study: children provided with BCIs have poorer spatial hearing than peers with normal hearing but gain abilities to localize stationary and moving sound with: (a) increased sensitivity to ILDs and ITDs; (b) reduced aural preference; and (c) more effective use of head and eye (gaze) movements.

Method

Participants

This study was conducted with approval from the Research Ethics Board at the Hospital for Sick Children and included 42 children provided with BCIs (MAge = 12.3 years) with minimal hearing deprivation and symmetric speech perception scores (see Table 1) and 37 peers with typical hearing (controls; MAge = 12.9 years). Data from 33/37 participants in the control cohort were previously published in Alemu et al. (2024). Inclusion criteria were children with BCIs (at least 6 months of CI use) and age-matched peers with typical hearing who could complete all experimental tasks. The control cohort underwent audiometric testing in response to a pulsed tone (through ER-3A insert earphones) to ensure hearing thresholds fell within typical range (≤20 dB HL).

Table 1. Demographic Measures and Hearing Outcomes for Children Provided With Bilateral Cochlear Implants.

Note. Mean values and standard deviation values are provided individually and by ear where applicable.

Demographic and hearing-related measures for children with BCIs (n = 42) are summarized in Table 1, including age at test, age at cochlear implantation, delay between first and second implant (where applicable), duration of deafness (period of deprivation preimplant during which the ear does not have access to sound softer than 30 dB HL), preimplant unaided pure tone audiometry, preimplant speech perception scores in quiet, and internal devices. Speech perception scores were measured using age-appropriate speech tests including the Phonetically Balanced Kindergarten test, Glendonald Auditory Screening Procedure, Multisyllabic Lexical Neighborhood Test, Early Speech Perception Test, and the Word Intelligibility by Picture Identification test (Erber, 1982; Haskins, 1949; Kirk et al., 2022; Moog et al., 1990; Ross & Lerman, 1968). The pediatric BCI cohort comprised children who received their CIs in the same surgery (simultaneous bilateral) and children who received their CI devices at different times (sequential bilateral).

Sound Localization Paradigm

Auditory localization was measured in the free-field in the frontal azimuthal (horizontal) plane for BCI users (n = 42) and peers with typical hearing (n = 37). Testing took place in a cubic (2-m) soundbooth in which a loudspeaker was moved anywhere along a horizontal arc of 120° (between 60° from the left of center, and 60° to the right of center) by fixing it on a moving L-shaped “speaker arm” set-up as described previously (Alemu et al., 2024; Gordon et al., 2023). The arm was moved using a 57BYGH420-2 Wantai silent-stepper motor (10 dB SPL). All equipment was visually hidden from the participant using an acoustically transparent black curtain. This setup allows presentation of stationary and moving sound without the need for simulated movement or a speaker array. The main test application, sound presentation apparatus, and head-tracking were operated from a Zalman desktop PC (PC1) with 8-GB RAM and an Intel Core i5-4670 CPU at 3.40-GHz. Eye-tracking software and collection were operated from a Lenovo ThinkPad laptop (PC2) with 4-GB RAM and an Intel Core i5-4200M CPU at 2.50-GHz. Head- and eye-tracking positions were synchronized using timestamps from the main desktop.

Sound localization for all participants was measured in response to band-pass filtered white noise (125 Hz to 8 kHz). In a subset of participants, 26 children with BCIs (MAge [SD] = 13.4 [3.3] years) and 10 peers with typical hearing (MAge [SD] = 13.1 [2.2] years), sound localization was also tested in response to a 1 kHz pure tone with 100% amplitude-modulation at 40 Hz and this stimulus was also used to assess sensitivity to ILDs and ITDs (details below). Auditory stimuli were presented pseudorandomly anywhere in the 120° horizontal arc and participants were asked “where is the sound?” (Location 1, L1). Following their response, the sound was played while the speaker moved to a secondary position (Location 2, L2) in one of five movement conditions (0°, ±20°, or ±40°) and the participant was asked “where did the sound move to?” Stimulus presentation was 3 s in duration for stationary presentations (L1) and varied for moving presentations (L1 to L2) depending on the magnitude of movement. Stimulus duration was smallest for nonmovement (0°) conditions (MDuration [SD] = 3.2 [1.5] s), then increased for small movements of 20° (MDuration [SD] = 6.7 [0.4] s) and was greatest for large movements of 40° (MDuration [SD] = 9.0 [0.2] s). Average velocities were 3.0 °/s for ±20° and 4.4 °/s for ±40°. Differences between these velocities are lower than the auditory velocity discrimination thresholds identified by Carlile and Best (2002) when azimuth and duration are varied. Participants recorded their responses to L1 (the location of the stationary sound) and to L2 (the endpoint of sound movement) by moving a red laser dot operated by a smaller QSH4218-51-10-049 Trinamic silent-stepper motor and controlled by a Logitech Gamepad F310 videogame controller as previously described (Alemu et al., 2024). The user response was recorded as the absolute azimuthal position (responses between ±90° permitted) at button press. Reaction time was recorded as the time elapsed between stimulus offset and the beginning of the user response (movement of the controller).

Head- and Eye-Tracking

Real-time head movements were collected using the EDTracker Pro wireless head tracker which tracked movement along three degrees of freedom (pitch, yaw and roll) with a sample rate of 125 samples per second. The MbientLab Metasensor was used for head tracking in the first three participants (all in the control group) but displacement drifts were found which had to be corrected manually in postprocessing (Alemu, 2020). Such drifts did not occur in subsequent data measured by the EDTracker Pro.

Head tracking data were collected continuously throughout the experiment beginning prior to the start of the first trial until after the final user response to the final trial and sent via radio frequency to the computer (PC1) controlling the stimulus and response so they were on the same clock. Sample rates were assessed in a subset of trials to confirm stable transfer of data. Head tracking data were epoched relative to stimulus presentation (stimulus onset at 0 s up to offset depending on stimulus duration as described above) and baselined to 0° at stimulus onset.

Real-time eye movements were collected concurrently using Pupil Labs Pupil Core eye tracking glasses (Kassner et al., 2014) on a second computer (PC2). The onset of eye movement recording was initiated and thus clocked for each trial by the stimulus program on PC1. These temporally synchronized eye movements were collected for each trial beginning at onset of speaker movement and ending once the participant response was recorded at a sample rate of ∼120 Hz. Calibration occurred both prior to sound localization testing and afterward as previously described (Alemu et al., 2024) and validated both displacement and timing of the measurements. During calibration, participants visually tracked colored dots that were presented sequentially from PC1 on a 33.5 cm × 51.3 cm monitor positioned in front of them while their heads were stabilized using a chinrest fixture positioned 64.2 cm from the monitor display (at eye-level). The dots were presented on a grid at vertical and horizontal angles of 13.2° and 22.4°, respectively. Postprocessing confirmed alignment between time and place of dot presentation (on PC1) and eye movements (on PC2). Eye movement data were assessed using the pupil detection confidence score provided by the Pupil Labs software (increasing confidence from 0 to 1).

Head- and eye-tracking displacements were calculated in the horizontal (azimuthal) plane and were binned relative to speaker location (or movement condition), stimulus presentation onset, and stimulus offset. In postcollection analyses, head and eye displacements over time were averaged to the nearest 10 ms (∼0.01 s) and converted to relative displacement (change in position relative to stimulus onset). Smoothing was applied using a simple moving average filter as described in Alemu (2020). The smoothing provided interpolation between eye tracking datapoints that were removed by the Pupil Labs “confidence” criterion (threshold <0.7) due to eye blinks and slippage of the glasses.

For tracking in response to stationary presentation, speaker positions were binned in 12 increments of 10° from the range of 50° to 60° on the right hemifield to the range of −50° to −60° on the left hemifield. For tracking in response to moving presentation, binning was done in accordance with all five speaker movement conditions, 0°, ±20°, and ±40°. Gaze displacement was calculated as the summation of head- and eye-tracking displacement.
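The processing chain described above (confidence filtering, conversion to relative displacement, smoothing, and summation of head and eye traces) can be illustrated with a minimal Python sketch. This is not the authors' pipeline, which followed Alemu (2020); the function names, the window length, the centered form of the moving average, and the choice to baseline on the first valid sample are all assumptions made for illustration. Only the 0.7 confidence threshold and the head-plus-eye definition of gaze come from the text.

```python
from statistics import mean

CONFIDENCE_THRESHOLD = 0.7  # from the text: eye samples below this are discarded


def moving_average(values, window=5):
    """Centered simple moving average; missing (None) samples are skipped,
    so short gaps are filled from valid neighbours (window size is assumed)."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        neighbours = [v for v in values[max(0, i - half): i + half + 1] if v is not None]
        smoothed.append(mean(neighbours) if neighbours else None)
    return smoothed


def relative_displacement(samples):
    """Re-reference a trace to 0 degrees at its first valid sample (assumed onset)."""
    baseline = next(v for v in samples if v is not None)
    return [None if v is None else v - baseline for v in samples]


def gaze_trace(head_deg, eye_deg, eye_confidence, window=5):
    """Return gaze displacement (degrees) as smoothed head plus smoothed eye."""
    # Drop low-confidence eye samples (e.g., blinks) before smoothing.
    eye_clean = [e if c >= CONFIDENCE_THRESHOLD else None
                 for e, c in zip(eye_deg, eye_confidence)]
    head = moving_average(relative_displacement(head_deg), window)
    eye = moving_average(relative_displacement(eye_clean), window)
    # Gaze is the sum of head and eye displacement at each time point.
    return [None if h is None or e is None else h + e for h, e in zip(head, eye)]


# Hypothetical azimuth samples (degrees); the third eye sample is a blink.
gaze = gaze_trace(
    head_deg=[0.0, 1.0, 2.0, 3.0, 4.0],
    eye_deg=[10.0, 11.0, 99.0, 13.0, 14.0],
    eye_confidence=[0.9, 0.9, 0.2, 0.9, 0.9],
    window=3,
)
```

In this hypothetical trial the low-confidence eye sample is replaced by the mean of its valid neighbours before the head and eye traces are summed.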

Concurrent head and eye responses were both of sufficient quality in 37/42 children with BCIs and 28/37 children with typical hearing. Gaze measures were calculated when both head and eye responses were available. Head responses but not eye responses were collected in four children with BCIs. Eye responses with no head response data (due to technical problems) were collected in nine children with typical hearing. Neither head nor eye responses were collected in one child with BCIs.

Sensitivity to Interaural Level and Timing Cues

A behavioral lateralization task was used to measure sensitivity to binaural cues using a two-alternative forced choice paradigm (Gordon et al., 2014; Gorodensky et al., 2019; Litovsky & Gordon, 2016; Polonenko et al., 2015; Salloum et al., 2010). Sensitivity to ILDs and ITDs was measured in response to a 1 kHz pure tone with 100% amplitude-modulation at 40 Hz presented bilaterally at 60 dB SPL for 2 s. This testing was completed in the subset of 21 children with BCIs and 10 peers with typical hearing who completed sound localization testing to the same sound. Stimuli for ILD and ITD lateralization testing were delivered through insert earphones in children with normal hearing and through the Cochlear NIC-4 system in children with BCIs. The NIC4 used custom sequence files specific to each child to instruct the research processors using the ACE coding strategy through the child's typically used MAP settings as clinically determined. Microphone volume and sensitivity settings were set to 10 and 12, respectively, for all participants. The 1 kHz carrier resulted in stimulation of apical electrodes #14–18 in all the children with BCIs. Bilateral CI stimuli were presented in six blocks, each of which contained 14 stimuli in random order: seven ILDs (0, ±4, ±10, ±20 dB), five ITDs (0, ±0.5, ±1 ms), and two unilateral stimuli (left side only and right side only). A coding error was discovered in the acoustic insert earphone presentation system which removed the ITD from stimuli meant to present ±1 ms ITD to children with normal hearing. Consistent with this, the proportion of responses to these stimuli was not significantly different from chance (50% right responses; t(19) = 0.77, p = .45) and not significantly different from responses to the 0 ms ITD condition. Thus, in effect, each block presented to the normal hearing group contained seven ILD stimuli (0, ±4, ±10, ±20 dB), three presentations of 0 ms ITD, one presentation of −0.5 ms ITD, one presentation of +0.5 ms ITD, and two unilateral conditions (left side only and right side only).

The task followed a two-alternative forced choice paradigm in which children indicated whether they heard the sound on their left or right side. Data trials were preceded by a training block consisting solely of unilateral stimulus presentation conditions in order to ensure the child understood task instructions. Performance accuracy of 100% in the training block was expected before proceeding to the task. An exclusion threshold of <80% accuracy was set for performance on unilateral presentation conditions across task blocks. Lateralization responses were coded as a binary value (“right” responses coded as “1” and “left” responses coded as “0”) and were analyzed by logistic regression analysis for each child for ILD and ITD conditions separately.
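As an illustration of the per-child analysis described above, the following Python sketch fits a logistic function to binary left/right responses across ILD values. It is a sketch under stated assumptions, not the authors' code: the study analyses were run in R, the Newton-Raphson fitting routine and the function name `fit_logistic` are hypothetical, and the response counts are invented for demonstration (six blocks, seven ILD values, as in the task design).

```python
import math


def fit_logistic(cue_values, right_responses, iterations=25):
    """Fit P(right) = 1 / (1 + exp(-(b0 + b1 * cue))) by Newton-Raphson.

    cue_values: interaural cue per trial (e.g., ILD in dB; positive = right-leading).
    right_responses: 1 for a "right" response, 0 for a "left" response.
    Returns (intercept b0, slope b1).
    """
    b0, b1 = 0.0, 0.0
    for _ in range(iterations):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for x, y in zip(cue_values, right_responses):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))
            w = p * (1.0 - p)
            g0 += y - p          # gradient with respect to the intercept
            g1 += (y - p) * x    # gradient with respect to the slope
            h00 += w             # entries of the (negative) Hessian
            h01 += w * x
            h11 += w * x * x
        det = h00 * h11 - h01 * h01
        if det == 0.0:
            break  # degenerate data; keep the current estimates
        b0 += (h11 * g0 - h01 * g1) / det
        b1 += (h00 * g1 - h01 * g0) / det
    return b0, b1


# Hypothetical data for one child: six presentations of each ILD value.
ild_levels = [-20, -10, -4, 0, 4, 10, 20]
right_counts = [0, 1, 2, 3, 4, 5, 6]  # invented counts of "right" responses
cues, responses = [], []
for ild, n_right in zip(ild_levels, right_counts):
    cues += [ild] * 6
    responses += [1] * n_right + [0] * (6 - n_right)

intercept, slope = fit_logistic(cues, responses)
```

A steeper positive slope indicates that small changes in the cue shift responses more reliably from left to right, and an intercept near zero indicates no left/right response bias when the cue is 0. The same routine could be applied to ITD values.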

Spatial Release From Masking

An SRM paradigm assessed use of spatial separation for speech detection in noise and, as shown in previous studies, is sensitive to aural preference (Arras et al., 2022; Gordon et al., 2015, 2023). Target speech consisted of spondee words presented in competing noise (speech-weighted spectrum) delivered at a constant level of 45 dB HL. Testing was completed in 31 of the 42 children with BCIs (MAge [SD] = 12.5 [3.3] years) and 26 of the 37 peers with typical hearing (MAge [SD] = 13.7 [2.2] years). Spondee-word recognition thresholds (SRTs) were measured in three testing conditions: (a) speech in front, noise in front (S0N0); (b) speech in front, noise 90° left (S0N−90); and (c) speech in front, noise 90° right (S0N+90). SRTs were measured using a stepwise thresholding procedure in which the presentation level of target speech was decreased by 4 dB after correct repetition of the spondee and increased by 2 dB after an incorrect response. This was performed twice per testing condition, and measured SRTs (dB) were averaged across the two repetitions for each condition. SRM was calculated as $\mathrm{SRM}_{-90} = \mathrm{SNR}_{\mathrm{S0N0}} - \mathrm{SNR}_{\mathrm{S0N{-}90}}$ and $\mathrm{SRM}_{+90} = \mathrm{SNR}_{\mathrm{S0N0}} - \mathrm{SNR}_{\mathrm{S0N{+}90}}$. Missing data occurred because children/caregivers were either unable or unwilling to complete this testing (e.g., due to time constraints).
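The SRM calculation can be made concrete with a short Python sketch. The function name, dictionary keys, and threshold values below are hypothetical and chosen only for illustration; the averaging over two runs and the subtraction of separated from colocated thresholds follow the description above. The asymmetry term (SRM−90 minus SRM+90) mirrors the contrast reported in the Results, where a positive value corresponds to a right ear advantage.

```python
from statistics import mean


def spatial_release(srt_runs):
    """Compute SRM (dB) from repeated threshold measurements.

    srt_runs maps a condition label to the thresholds (dB) from its two runs.
    """
    srt = {condition: mean(runs) for condition, runs in srt_runs.items()}
    srm_left = srt["S0N0"] - srt["S0N-90"]    # benefit when noise moves to the left
    srm_right = srt["S0N0"] - srt["S0N+90"]   # benefit when noise moves to the right
    return {
        "SRM-90": srm_left,
        "SRM+90": srm_right,
        "asymmetry": srm_left - srm_right,    # > 0 suggests a right ear advantage
    }


# Invented thresholds for one listener (two runs per condition).
result = spatial_release({
    "S0N0": [-2.0, -4.0],
    "S0N-90": [-8.0, -10.0],
    "S0N+90": [-6.0, -6.0],
})
```

For these invented thresholds, the colocated mean is −3 dB, giving 6 dB of release with noise on the left and 3 dB with noise on the right, an asymmetry of 3 dB favoring the right ear.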

Self-Reported Hearing

The modified version of the SSQ (Galvin & Noble, 2013; Gatehouse & Noble, 2004) was administered to characterize self-reported hearing challenges (including spatial hearing) in the children in both groups. The SSQ was administered in paper and digital (REDCap) versions in 27 of the total 42 BCI users (MAge [SD] = 13.0 [3.4] years) and 11 of the 37 peers with typical hearing (MAge [SD] = 13.5 [2.0] years). The adolescent variant of the SSQ was completed by children who were able to comprehend and respond to the questions following instructions from the tester, whereas the parental variant of the SSQ was administered to parents/caregivers of children who were unable to independently comprehend and/or respond to the questions. Missing data occurred because children/caregivers were either unable or unwilling to complete this testing.

Statistical Analyses

All statistical analyses were undertaken using the R programming language (Version 4.0.3) for statistical computing and graphics and the RStudio integrated development environment (Version 1.3.1093; R Core Team, 2021; RStudio Team, 2020). Statistical tests performed included linear mixed effects regression (accounting for random intercept by participant), simple linear regression, analysis of variance (e.g., two-way), and t-tests (Welch's/two-sample and paired). Linear mixed effects regression analysis was performed using functions from the lme4 package for R. These tests were used to assess effects of factors such as group membership, demographics, and hearing measures on test outcome measures.

Perception of direction of movement was calculated during moving sound localization by coding the response error as a binary variable and fitting a logistic regression. Response error (Response error [°] = response location [°] – stimulus location [°]) was coded as a binary variable (i.e., 0 or 1) for each stimulus presentation (moving component of trial) where error in the rightward direction was coded as “1” and error in the leftward direction was coded as “0” to represent perceived direction of sound movement. Thereafter, logistic regression analyses were used to model proportion of responses judged to be moving in the “right” direction by stimulus location change condition.
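The error measures described above can be sketched in Python as follows. This is an illustrative sketch rather than the authors' R code: the function names and trial values are hypothetical, the reference location passed for each trial is whatever stimulus location the analysis subtracts from the response, and treating an error of exactly 0° as “left” (0) is an assumption because the text does not specify this case. The per-condition proportions produced here are the quantities that the logistic regression then models.

```python
import math
from collections import defaultdict


def rmse(responses_deg, stimuli_deg):
    """Root-mean-square error (degrees) between responses and stimulus locations."""
    errors = [r - s for r, s in zip(responses_deg, stimuli_deg)]
    return math.sqrt(sum(e * e for e in errors) / len(errors))


def proportion_rightward(trials):
    """Proportion of trials coded as rightward for each movement condition.

    trials: iterable of (movement_condition_deg, stimulus_deg, response_deg).
    Response error = response - stimulus; rightward error -> 1, otherwise 0.
    """
    coded = defaultdict(list)
    for condition, stimulus, response in trials:
        error = response - stimulus
        coded[condition].append(1 if error > 0 else 0)  # zero error coded 0 (assumed)
    return {c: sum(v) / len(v) for c, v in sorted(coded.items())}


# Invented example values (degrees azimuth; positive = right).
stationary_rmse = rmse([10, -5, 30], [0, 0, 25])
proportions = proportion_rightward([
    (-40, 10, -20), (-40, 0, 5),
    (0, 0, 3), (0, 0, -2),
    (40, -10, 25), (40, 0, 30),
])
```

With real data, the proportions would be expected to rise from near 0 for leftward movement conditions to near 1 for rightward conditions when direction is perceived reliably.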

Results

Children With BCI Have Good Speech Perception Bilaterally

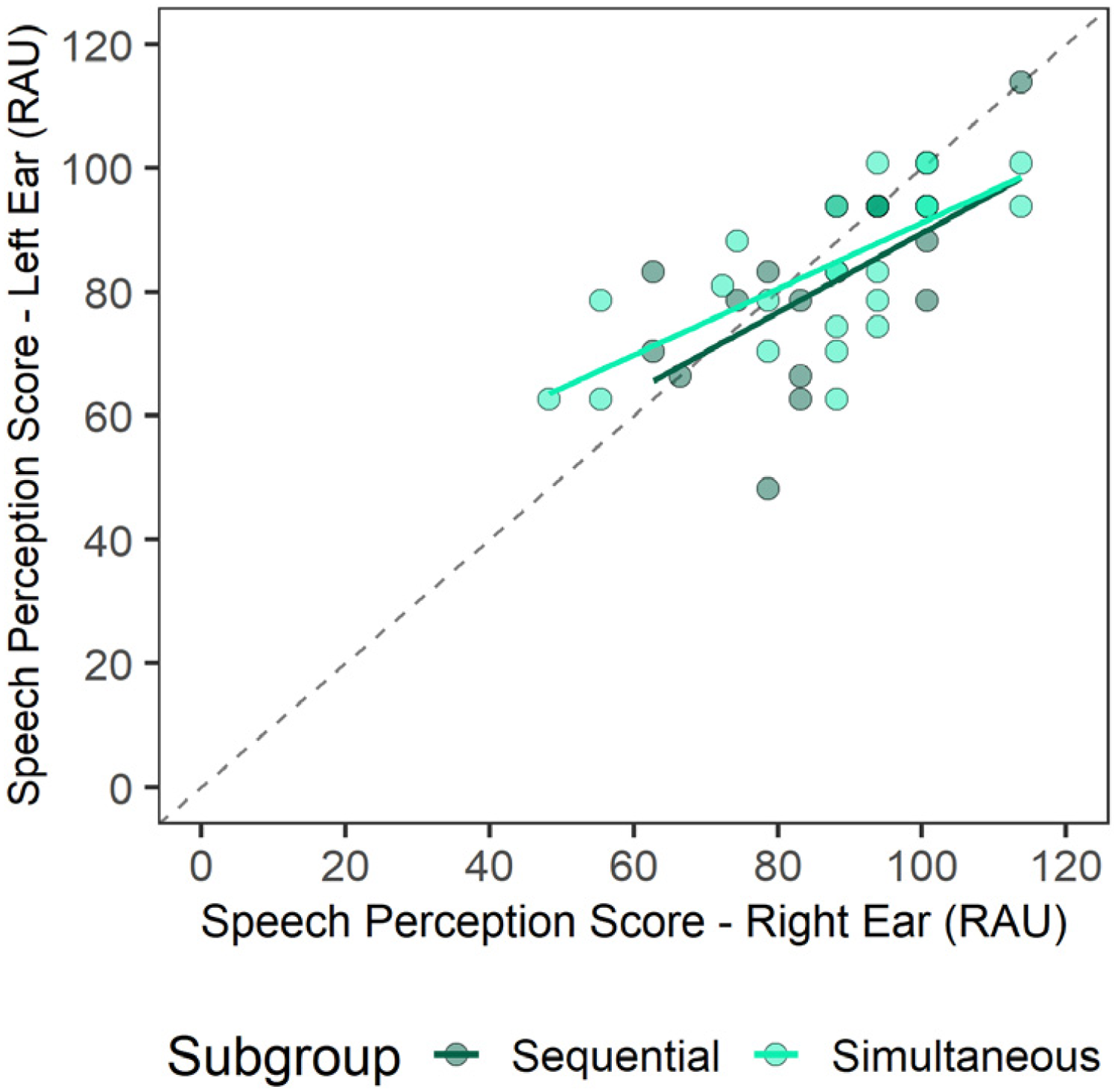

As shown in Figure 1, children who received their right or left CI at older ages had better residual hearing preimplant (lower preimplant unaided PTA [dB HL] thresholds). This is consistent with the short durations of deprivation in each ear detailed for both groups in Table 1. As shown in Figure 2, the most recent postimplant speech perception scores in quiet (RAU) were high (better than 50 RAU in each ear) and were symmetric; there was no significant difference between the two sides for the simultaneously implanted group (t(27) = −1.56, p = .13) or the sequentially implanted group (t(13) = −1.23, p = .24).

Figure 1. Preimplant unaided PTA (dB HL) is negatively associated with age at implantation, with higher hearing thresholds being associated with earlier implantation (p < .001), for children with bilateral cochlear implants provided simultaneously and sequentially.

Figure 2. Postimplant speech perception scores were collected from age-appropriate word tests in children with BCIs implanted sequentially or simultaneously. Postimplant speech perception scores (RAU) collected in unilateral presentations in quiet were comparable between left and right ear presentations with no significant difference between the two sides (p > .05).

Right Ear Preference for Recognizing Speech in Noise

Figure 3A plots SRTs when noise is presented from the front (colocated), left, and right positions in children with BCIs and peers with typical hearing (controls). Children with BCIs had significantly higher SRTs than controls (Control – BCI: Estimate [SE] = −10.0 [0.88], p < .001). SRM values, obtained when noise moved from the colocated position to a spatially separated position on either the left or right side, are plotted in Figure 3B. There was no significant difference in SRM between children with BCIs and controls (F(1,55) = 0.42, p = .52) but there was a significant effect of side of noise presentation (F(1,55) = 23.2, p < .001). In both groups, a hearing advantage was identified when noise was presented to the left rather than the right side (SRM−90 – SRM+90: Estimate [SE] = 2.76 [0.57], p < .001), indicating a right ear advantage (see Figure 3C).

Figure 3. Spondee-word recognition thresholds (SRTs; dB) of target words presented at 0° azimuth amid speech-weighted noise presented at 45 dB HL in children with typical hearing (control, n = 26) and children with bilateral cochlear implants (BCIs, n = 31). (A) SRTs (dB) were higher in the BCI group (p < .001) and highest when speech and noise were colocated rather than spatially separated (p < .001). (B) Spatial release from masking (SRM; colocated − separated conditions) was larger for left than right positions of noise (p < .001) with no significant differences between groups (p = .52), indicating a right ear bias across groups. (C) Ear bias (asymmetry) in SRM is plotted for each group with a dashed line demarcating side of ear advantage. Most children had a right ear bias (n = 24 [77.4%] BCI and n = 23 [88%] control) and this asymmetry was not significantly different between groups (p > .05).

Children With BCIs Report Spatial and Other Hearing Challenges

Self-reported hearing as measured by the SSQ across subtests is plotted in Figure 4. The majority (59.3%) of the BCI cohort completed the parental variant of the SSQ and the other 40.7% completed the adolescent variant of the SSQ. All children in the control cohort completed the adolescent version of the SSQ. There was no significant difference in self-reported hearing between the two variants of the SSQ in the BCI group (t(23.1) = −0.24, p = .81). Children with BCIs responded with significantly lower self-reported hearing scores overall compared to controls (F(1,90) = 72.4, p < .001). Significant group differences were observed across subtests including spatial hearing (BCI-Control: Difference = −3.14, p < .001), speech perception (BCI-Control: Difference = −2.64, p < .001) and qualities of hearing (BCI-Control: Difference = −1.80, p < .05).

Figure 4. Reported hearing challenges in 27 children with bilateral cochlear implants (BCI) (MSSQ [SD] = 7.0 [1.6]) measured by the Speech, Spatial and Qualities of Hearing Scale (SSQ) and 11 age-matched peers with typical hearing (controls; MSSQ [SD] = 9.5 [0.4]). BCI users had significantly lower self-reported hearing scores compared to controls across subtests (p < .001).

Children With BCI Have Emerging Spatial Hearing Abilities for Stationary Sound

Auditory localization of stationary and moving stimuli was measured in children with BCIs (n = 42) and controls (n = 37). Visualizations for auditory localization to stationary white noise are provided in Figure 5. In Figure 5A, linear regression fits are displayed by child to graphically represent slope and intercept values at a group level. The coefficient of determination (R²) by child was extracted based on linear regression analyses for each child. R² values were significantly better than nil in BCI users (t(41) = 12.2, p < .001) but were significantly reduced compared to controls (t(41) = 3.7, p < .001). Thus, localization of stationary sound was considered to be “emerging.” Response errors, calculated as RMSE (°), are plotted by hemifield of sound presentation in Figure 5B. Linear mixed effects regression revealed that BCI users had significantly reduced localization accuracy, as measured by higher RMSE scores, compared to controls (F(1,77) = 102.5, p < .001; Control-BCI: Estimate [SE] = −20.2 [2.0], p < .001); however, there was no significant effect of hemifield (F(1,77) = 2.8, p = .10; left – right: Estimate [SE] = 2.1 [1.2], p = .10). As shown in Figure 5C, RMSE scores were positively correlated with mean reaction time (s) in controls (p < .01) and in peers with BCIs (p < .001). Correlation slopes were significantly steeper in the BCI group than controls (βBCI [SE] = 14.1 [3.5]°/s, p < .001; βControls [SE] = 4.11 [1.4]°/s, p < .01).

Responses to presentation of stationary white noise. (A) Response position plotted against stationary stimulus location (regression lines for each child) reveals greater variability in the BCI than control group. Coefficient of determination (R2) values of responses from each child were significantly lower in the BCI than control group (p < .001). (B) Root-mean-square error (RMSE; °) by side (hemifield) of stationary sound presentation (bars are mean [±1 SE] and circles are individual data) shows higher RMSE values in BCI than controls (p < .001). There was no significant effect of hemifield (p = .10). (C) Response times increased with RMSE at a faster rate in BCI users than controls (p < .001).

Children With BCIs Have Emerging Abilities to Perceive Moving Sound

Visualizations for responses to localization of moving white noise are provided in Figure 6. Perception of direction of movement was modeled using logistic regression curves, shown in Figure 6A. In Figure 6B, Gaussian slopes from the logistic regression analyses were extracted by child and compared between groups to assess the shape of the psychometric function (log(10) transformation applied). Slopes in children with BCIs indicated responses were significantly better than chance (t(41) = 2.1, p < .05) but were significantly reduced compared to controls (t(63.4) = −14.4, p < .001), suggesting children with BCIs had “emerging” perception of moving sound but still had greater challenges detecting direction of sound movement than peers with typical hearing. Errors (RMSE) of the perceived change in stimulus location per hemifield of stimulus presentation are plotted by stimulus change condition in Figure 6C. A linear mixed effects regression revealed children with BCIs had significantly higher RMSE to response change compared to controls (F(1,77) = 125.2, p < .001; BCI-Control: Estimate [SE] = 28.3 [2.5], p < .001) indicating poorer accuracy in localizing moving sound in children with BCIs. As shown in Figure 6D, longer response latencies were significantly associated with higher RMSE of perceived change in moving sound location.

Responses to presentation of moving white noise. (A) Perception of direction of sound movement modeled by logistic regression curves for each child shows reduced sensitivity in the BCI group compared to the control group. (B) Logistic regression slope values were significantly lower in BCI than controls (p < .001). (C) RMSE of the response change (°) relative to the stimulus change condition (L1 − L2) shows higher RMSE in BCI than controls (p < .001) and largest RMSE for larger sound movement conditions (p < .001). (D) Response times (s) to moving sound increase with RMSE at a faster rate in BCI than controls (p < .05) and for conditions with more sound movement (p < .001).

Effects of Stimulus on Localization Errors for Stationary or Moving Sound

Effects of auditory stimulus selection (1-kHz pure tone with amplitude modulation [AM] at 40-Hz vs. bandpass-filtered white noise [WN]) were assessed for the auditory localization task (both stationary and moving presentations) in the subset of 26 children with BCIs and 10 controls who completed sound localization to both stimuli. Task performance for stationary auditory localization was compared for the AM and WN stimuli in Figure 7A. A linear mixed effects regression found no significant effect of stimulus on RMSE in stationary sound localization (F(1,42.1) = 1.7, p = .19). Figure 7B plots perception of movement direction (measured by Gaussian slopes) between the AM and WN stimuli. A linear mixed effects regression found that direction perception slope was significantly better for the AM compared to the WN stimulus (F(1,41.4) = 11.4, p < .01).

Auditory localization of a 1-kHz pure tone with 40-Hz amplitude modulation (AM) compared to bandpass-filtered white noise (WN) during stationary (A) and moving presentation (B) in 26 children with BCIs and 10 with typical hearing. There was no significant effect of auditory stimulus for stationary auditory localization accuracy (p = .2). However, perception of direction of sound movement was better for the AM stimulus than for the WN stimulus (p < .01).

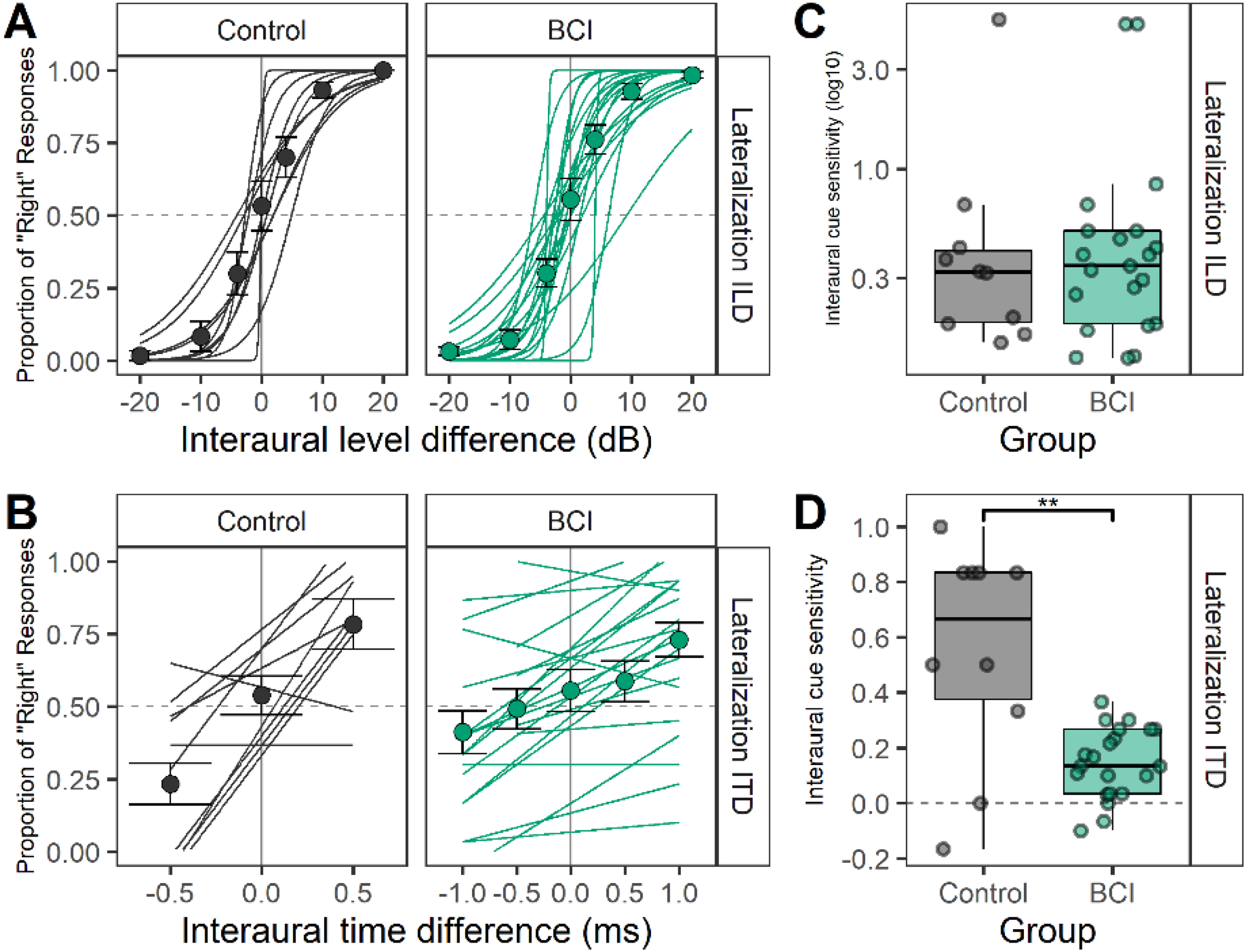

Children With BCIs Have Better Access to ILD Than ITD Cues

Responses to the behavioral lateralization task were coded as “0” or “1,” indicating responses were heard either on the “left” or “right” side, respectively. Thereafter, the proportion of “right” responses was modeled using logistic regression analyses to measure interaural cue sensitivity for ILD (see Figure 8A) and ITD (see Figure 8B) cues. Gaussian slopes derived from logistic regression are plotted per child for ILD (see Figure 8C) and ITD (see Figure 8D) cues. Slopes for ILD lateralization were similar between groups (t(16.2) = 0.02, p = .98) but children with BCIs had significantly reduced slopes compared to controls for ITD lateralization (t(9.9) = 3.2, p < .05). The ITD lateralization slopes were significantly greater than nil in the BCI group (t(20) = 5.2, p < .001).
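To illustrate how a slope can be obtained from left/right responses coded as 0/1, the following is a minimal sketch of a two-parameter logistic fit by Newton-Raphson. It is not the authors' analysis code: the function name, the trial counts, and the ILD values are hypothetical, and the sketch returns the raw logistic slope rather than the Gaussian slope reported in the study.

```python
import math

def fit_logistic(x, y, iters=50):
    """Fit P(right) = 1 / (1 + exp(-(b0 + b1 * x))) by Newton-Raphson.

    x: cue values (e.g., ILD in dB); y: responses coded 0 = left, 1 = right.
    Returns (b0, b1); b1 serves here as an index of cue sensitivity.
    Assumes the data are not perfectly separable (otherwise b1 diverges).
    """
    b0, b1 = 0.0, 0.0
    for _ in range(iters):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for xi, yi in zip(x, y):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * xi)))
            w = p * (1.0 - p)
            g0 += yi - p              # gradient w.r.t. intercept
            g1 += (yi - p) * xi       # gradient w.r.t. slope
            h00 += w                  # entries of X'WX
            h01 += w * xi
            h11 += w * xi * xi
        det = h00 * h11 - h01 * h01
        if det < 1e-12:
            break
        d0 = (h11 * g0 - h01 * g1) / det
        d1 = (h00 * g1 - h01 * g0) / det
        b0 += d0
        b1 += d1
        if abs(d0) + abs(d1) < 1e-10:
            break
    return b0, b1

# Hypothetical data: 10 trials per ILD, with counts of "right" responses.
right_counts = {-10: 1, -5: 2, 0: 5, 5: 8, 10: 9}
x, y = [], []
for ild, n_right in right_counts.items():
    x += [ild] * 10
    y += [1] * n_right + [0] * (10 - n_right)

b0, b1 = fit_logistic(x, y)
print(b0, b1)
```

A steeper slope in this sketch means that a smaller change in the interaural cue shifts responses from "left" to "right", which is the sense in which slope is used as a sensitivity measure.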

Responses to lateralization of binaural cues with interaural level differences (ILDs) and interaural timing differences (ITDs). (A) Individual logistic regression curves of “right” responses to ILD conditions reveal similar sensitivity to ILDs between BCI (n = 21) and controls (n = 10). (B) Individual logistic regression curves of “right” responses to ITD conditions show reduced sensitivity to ITDs in BCI compared to controls. (C) Extracted regression slopes for the ILD lateralization task (bars = mean [±1 SE], symbols = individual data) are not significantly different between groups (p = .98). (D) Extracted regression slopes for the ITD lateralization task (bars = mean [±1 SE], symbols = individual data) are significantly different between groups (p < .05).

Localization of Stationary Sound and Direction Perception Acuity Improve With Better ITD Sensitivity

Effects of interaural cue sensitivity, measured by Gaussian slopes extracted from logistic regression analyses of ILD and ITD lateralization, are plotted in Figure 9 against measures of sound localization and aural preference measured by SRM asymmetries in both groups. Regression analyses revealed no significant effects (p > .05) of ILD sensitivity or group on RMSE in stationary sound localization (see Figure 9A), slopes of sound movement perception (see Figure 9B), or SRM asymmetry (see Figure 9C). By contrast, as shown in Figure 9D, increased ITD sensitivity was significantly associated with reduced RMSE (F(1, 27) = 8.3, p < .01) with significant effects of group (F(1, 27) = 8.4, p < .01) but no interaction (F(1, 27) = 1.4, p = .25). Thus, the group differences drove this relationship. Perception of movement direction was also significantly associated with ITD sensitivity (F(1, 26) = 22.4, p < .001) and group (F(1, 26) = 29.3, p < .001) with no interaction effects (F(1, 26) = 0.47, p = .50) (see Figure 9E), again reflecting better access to ITDs and better motion perception in the control group. Increasing ITD sensitivity was associated with reduced absolute SRM asymmetry (F(1, 27) = 8.8, p < .01) and a significant interaction between ITD slope and group (F(1, 27) = 8.1, p < .01), revealing that this association was particular to BCI users (Estimate [SE] = −13.2 [4.6], p < .01; see Figure 9F).

Top row: Slopes of ILD lateralization did not significantly affect localization of a 1-kHz pure tone with 40-Hz amplitude modulation (AM) stimuli in either stationary (A) or moving (B) conditions, nor were they associated with aural preference measured by asymmetric SRM benefits (dB) (C). Bottom row: ITD lateralization slopes were significantly associated with reduced stationary localization error (D) and improved direction perception acuity (E). Poorer ITD sensitivity was significantly associated with increased preference for one ear measured by SRM asymmetries (F).

Children With BCIs Show Reduced and Less Accurate Gaze Changes During Auditory Localization of Stationary and Moving Sound

Concurrent head and eye responses to the auditory localization task were both collected using wearable technology and were both of sufficient quality in 37/42 children with BCIs and 28/37 children with typical hearing. Head and eye responses were analyzed as displacement waveforms or as degree of movement in the horizontal/azimuthal plane relative to stimulus onset and analyzed up to stimulus offset. Head and eye responses were then summated as gaze responses and are plotted across binned stimulus location (°) for stationary sound in Figure 10A. As plotted in Figure 10B, BCI users had significantly reduced gaze area under the curve (AUC) compared to controls in response to stationary auditory localization (F(1,57.6) = 16.0, p < .001). Significant differences were found for all locations 20° and greater (−60° to −50°: Estimate [SE] = 5.3 [3.5]; −50° to −40°: Estimate [SE] = 13.8 [3.5]; −40° to −30°: Estimate [SE] = 7.8 [3.6]; −30° to −20°: Estimate [SE] = 6.7 [3.7]; −20° to −10°: Estimate [SE] = 5.2 [3.6]; +10° to +20°: Estimate [SE] = 7.2 [3.5]; +20° to +30°: Estimate [SE] = 6.7 [3.6]; +30° to +40°: Estimate [SE] = 6.6 [3.6]; +40° to +50°: Estimate [SE] = 6.2 [3.5]; +50° to +60°: Estimate [SE] = 9.1 [3.5]). The percentage of time spent moving toward the target stationary sound, plotted by binned stimulus location in Figure 10C, reveals clear gaze movement to the correct place for peripheral positions with equal movements left to right (no greater than 50% chance) at a narrower range of central positions (−10° to +20°, highlighted by gray rectangles) in the children with typical hearing compared to the children with BCIs (−20° to +30°, highlighted by the teal green rectangles). Gaze responses are plotted against stimulus location change (°) for moving sound in Figure 10D. As shown in Figure 10E, there was significantly less gaze (measured by AUC) in response to moving sound for BCIs compared to controls for large speaker movements of 40° (F(4, 556.7) = 15.7, p < .001). As shown in Figure 10F, gaze followed the direction of the moving sound more accurately in controls than in BCI users (F(1,63.2) = 11.9, p < .01). Although the BCI users moved their gaze in the correct direction less often for smaller movements of 20° than larger movements of 40°, these gaze movements were nonetheless more accurate than chance (50%) (−20°: t(36) = 4.4, p < .001; +20°: t(36) = 4.4, p < .001).
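The gaze measures described above (summated head and eye displacement, area under the displacement curve, and proportion of movement toward the sound) can be sketched as follows. This is an illustration under stated assumptions rather than the study's processing pipeline: the function names and sample values are hypothetical, AUC is taken over absolute displacement with the trapezoidal rule, and samples are assumed to be evenly spaced in time.

```python
def gaze_trace(head_deg, eye_deg):
    """Sum head and eye azimuthal displacement (degrees) sample by sample."""
    return [h + e for h, e in zip(head_deg, eye_deg)]

def auc_trapezoid(values, dt):
    """Area under the absolute displacement curve (deg * s), trapezoidal rule.

    Using absolute displacement is an assumption of this sketch.
    """
    mags = [abs(v) for v in values]
    return sum((mags[i] + mags[i + 1]) / 2.0 * dt for i in range(len(mags) - 1))

def proportion_toward(gaze, target_deg):
    """Fraction of sample-to-sample gaze steps that move toward the target side.

    Steps with no movement are ignored; 0.5 (chance) is returned if gaze never moves.
    Assumes a nonzero target azimuth (negative = left, positive = right).
    """
    direction = 1 if target_deg > 0 else -1
    steps = [b - a for a, b in zip(gaze, gaze[1:]) if b != a]
    if not steps:
        return 0.5
    toward = sum(1 for s in steps if s * direction > 0)
    return toward / len(steps)

# Hypothetical head and eye samples (degrees) at 1-s intervals, target at +30 degrees.
head = [0, 5, 10, 8]
eye = [0, 5, 10, 7]
gaze = gaze_trace(head, eye)
print(gaze, auc_trapezoid(gaze, dt=1.0), proportion_toward(gaze, 30))
```

Under these assumptions, a proportion near 0.5 corresponds to gaze that moves left and right equally often, which is the chance level referred to in the text.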

(A) Gaze displacement (summated head and eye movements) is shown from onset of stationary sound presentation to 5 s (stationary sound offset occurs at 3 s) in bins of 10°. Horizontal blue bands indicate sound source location (L1). (B) Gaze displacement to stationary sounds measured as area under the waveform curve (AUC) reveals less movement in children with BCIs than controls (p < .001). (C) The proportion of gaze that was in the stationary stimulus hemifield was greater than 50% chance (shown by blue line) for positions outside of the shaded ranges. (D) Gaze displacement from onset of moving sound presentation to 10 s from onset. Blue lines indicate terminal sound location (L2). (E) Gaze displacement during moving sound presentation measured as AUC reveals less movement in children with BCIs than controls during large movements of 40° (p < .001). (F) Proportion of time gaze was in the same direction as sound movement was significantly better than chance (50% shown by blue line) for all movement conditions >0° in both groups. The gaze proportions were significantly lower in the BCI group compared to controls (p < .01).

Discussion

The present study included children with BCIs who achieved generally symmetric speech perception in both ears but who still required higher SNR to recognize words and had more self-reported listening challenges than peers with typical hearing. A right-ear advantage for speech recognition in noise was found in both groups. Localization of stationary and moving sound was impaired in children with BCIs. The hypothesis that spatial hearing for moving sounds is better than that for stationary sound in children with BCIs was not supported. Localization of stationary sounds improved with better sensitivity to ITDs whereas good sensitivity to ILDs did not affect localization of either stationary or moving sound. As hypothesized, children with BCIs demonstrated less effective head and eye movements (gaze) than peers with typical hearing during stationary or moving sound localization tasks, particularly when sound was presented in more peripheral areas or moving over larger angles. These data are consistent with previous findings of poor binaural hearing in children with early-onset deafness despite provision of BCIs with limited delay but suggest that they use alternate strategies to gain some awareness of auditory space. Use of head and eye movements and good access to ILD cues could be utilized through therapy to further encourage spatial hearing. Moreover, efforts to improve access to ITDs through BCIs will be important to improve spatial hearing in children.

Children With Limited Duration of Deafness in Either Ear Gain Symmetric Hearing Bilaterally

As shown in Figure 3, children with BCIs had higher speech recognition thresholds in noise than their peers with typical hearing. Although thresholds for speech-in-noise are known to decrease with age (Jacobi et al., 2017), this does not explain these data given the similarity of ages between the groups (see Table 1) and the minimal duration of deafness in either ear in the BCI group (indicating little difference between chronological and “hearing” age). Rather, poor thresholds for speech-in-noise are consistent with hearing loss and CI use (McSweeny et al., 2021) and consistent with previous studies (Chadha et al., 2011; Ching et al., 2011; Gordon et al., 2023; Hess et al., 2018; Misurelli & Litovsky, 2015). Children with BCIs also reported having more hearing difficulties than controls in all areas of listening (speech, spatial hearing, and qualities of hearing) as measured by the SSQ scale (see Figure 4).

Despite their hearing challenges, children with BCIs showed similar benefits of spatial separation between speech and noise compared to controls. The SRM paradigm can be done for children at a wide range of ages with different speech materials (e.g., sentences, words, spondees; Ching et al., 2011; Corbin et al., 2017); different noises (e.g., speech-weighted, multitalker babble; Litovsky, 2005); different positions of separated noise (Srinivasan et al., 2016; Van Deun et al., 2010); different starting levels of noise and speech, and different methods to assess thresholds. The paradigm used here was most similar to that reported by Arras et al. (2022) and has been used in prior studies from our group with similar findings (Chadha et al., 2011; Gordon et al., 2023). It should be noted that the SRT benefits of spatial separation reported here are slightly higher than those in previous studies (Bronkhorst & Plomp, 1992; Grossmann et al., 2016; Schleich et al., 2004; Vermeire & Van de Heyning, 2009). This might reflect the levels of stimuli presented at dB HL in clinical soundbooths because they are calibrated with ANSI standards that take the speaker position into account (Jiang, 1998).

Increased benefit when noise was presented to the left ear compared to the right was found in both groups, indicating a comparative right ear advantage (SRM−90 – SRM+90: Estimate [SE] = 2.76 [0.57], p < .001). This was true despite generally symmetric hearing thresholds in both groups and symmetric speech perception results in the BCI group (see Figure 2). The right-ear advantage shown here is consistent with findings in adults and children during dichotic listening (Bryden, 1963; Kimura, 1961; Reiß & Reiß, 1998) and has previously been suggested to develop in children receiving BCIs simultaneously (Henkin et al., 2014). Whereas the right ear advantage was evident in this SRM measure in which children recognized and repeated spondee words in noise, it was not observed in the speech perception measures in quiet conditions (see Figures 2 and 3) or previous findings in which the SRM was completed using a speech detection threshold task that did not require language processing (Chadha et al., 2011). Together, these results suggest that a normally expected right ear advantage for speech recognition in noise involving specialized language processing mechanisms of the left auditory cortex for high-rate temporal processing (Luo & Poeppel, 2012; Tervaniemi & Hugdahl, 2003) may also be developing in children with hearing loss who use BCIs.

Children With BCIs With Minimal Duration of Deafness Have Emerging Abilities to Localize Both Stationary and Moving Sound

Data in Figure 5 showed that stationary sound localization was present but less precise in the BCI group than controls (as measured by coefficient of determination [R2] values of individual linear regressions between target and response angles). Similarly, average RMSE for stationary sound localization was low (MRMSE = 5.5°) for children with typical hearing and severalfold higher (MRMSE = 25.8°) for children with BCIs (see Figure 5B). There is a wide range of reported errors by children using BCIs during stationary sound localization (between 13° and 63°) with lowest errors when head movement is unrestricted and speakers are visible (Coudert et al., 2022; Grieco-Calub & Litovsky, 2010; Gulli et al., 2024; Müller et al., 2022; Van Deun et al., 2009a; Zheng et al., 2015). Errors fell within the lower part of this range in the present cohort which may reflect their relatively strong and symmetric auditory speech perception abilities (see Figure 2), their older age at testing (MAge [SD] = 12.3 [3.3] years) than children in previous studies and longer duration of CI experience (MCI Experience [SD] = 8.4 [4.8] years). Localization of stationary sound improves with age in children with typical hearing (Van Deun et al., 2009b) and with hearing experience in children with BCIs (Zheng et al., 2015). The present cohort also had minimal periods of deprivation in either ear. Previous cohorts who received BCIs sequentially often had little access to hearing in the nonimplanted side during the period of unilateral CI use. Effects of interimplant delay on sound localization in such groups of children have been shown in several studies (Asp et al., 2011; Killan et al., 2019) and localization errors in those studies were typically higher than the errors reported in the present study despite the lack of visual cues and target positions occurring anywhere over the 120° horizontal arc.

In the present study, error in perceived change in location for moving sound was significantly greater for children with BCIs (MRMSE = 33.9°) than for peers with typical hearing (MRMSE = 5.6°) and these errors increased with larger sound movements (see Figure 6C). This was not supportive of the hypothesis that increased stimulus cues available for moving rather than stationary sound would assist spatial hearing in children with BCIs. Yet, there was some indication of moving sound perception in children with BCIs. Logistic regression analyses of response direction (left or right) revealed effects of stimulus movement direction and magnitude (see Figure 6A). The slopes of these responses were significantly lower for the BCI group than controls (see Figure 6B), indicating impaired perception of moving sound direction. Most children with BCIs showed positive slope values which suggest “emerging” abilities. Together, the findings related to perception of moving sound are consistent with data from adults with BCIs who demonstrated high errors tracking moving sound and even had difficulties discriminating moving from stationary sound (Moua et al., 2019). Although the duration and velocity of the presented sound increased with larger angular movements, there were no significant differences in these measures between the two stimuli. Moua et al. (2019) also reported that longer stimulus durations did not support localization of moving sound in adult BCI users and suggested that poor perception of moving sound reflected poor binaural hearing due to BCI device limitations and bilateral auditory deprivation. The present data are in keeping with their interpretations. Perception of moving sound was also found to deteriorate significantly through acute monaural ear plugging in adults and children with typical hearing (Alemu et al., 2024). 
Similar to findings in human BCI users, deafened ferrets using BCIs after late-onset hearing loss localized sounds better than ferrets with early-onset hearing loss or ferrets using unilateral CIs (Isaiah et al., 2014). This highlights the importance of normal binaural hearing for perception of moving sound which is compromised in children with BCIs by early auditory deprivation (Anderson et al., 2022; Ehlers et al., 2017; Gordon et al., 2014) and by poor access to interaural cues through their BCIs (Aronoff et al., 2010; Grantham et al., 2008; Salloum et al., 2010).

Better Spatial Hearing When Envelope Cues Are Available

Auditory localization was assessed in response to two stimuli including bandpass-filtered white noise (125 Hz to 8 kHz) and a 1-kHz pure tone with 100% amplitude-modulation at 40 Hz (see Figure 7). There were no significant effects of auditory stimulus condition during stationary localization (see Figure 7A); however, comparison of auditory stimuli for perception of movement direction (see Figure 7B) revealed higher slopes for localization of the amplitude-modulated pure tone compared to white noise, indicating higher perception acuity to direction of movement for the amplitude-modulated pure tone. Modulations in amplitude of the pure tone likely offer more salient envelope ITD cues than the flat wideband white noise. Past work has shown that fluctuating envelope cues resulted in lower response biases compared to flat envelope cues in auditory motion detection (Warnecke et al., 2020). These cues are likely important for BCI users whose access to fine structure ITDs requires coordination through research interfaces (Laback et al., 2011).

Better Use of ITDs Than ILDs for Spatial Hearing Performance

Access to interaural cues in a 1-kHz tone with 100% amplitude-modulation at 40-Hz presented bilaterally was measured in a lateralization task in a subgroup of children. As shown in Figure 8, children with BCIs demonstrated sensitivity to ILD cues on par with peers with typical hearing. On the other hand, children with BCIs demonstrated impaired lateralization of the envelope ITD cues. These findings are consistent with many previous studies of both adults and children using BCIs (Dorman et al., 2014; Grantham et al., 2008; Salloum et al., 2010; van Hoesel et al., 2008). In general, sensitivity to temporal fine structure ITDs and envelope ITDs can vary widely in BCI users, ranging from very large ITDs required for perception beyond the physiological limit of ∼600 μs to smaller and more functionally usable values (∼150 μs; review by Laback et al., 2015). In children, hearing experience could play a role as sensitivity to onset ITDs was poorer in children with more limited BCI experience and in children receiving BCIs sequentially rather than simultaneously (Gordon et al., 2014). Children using bilateral hearing aids also demonstrate poorer onset ITD sensitivity but the degree of impairment decreases with better residual hearing (Gorodensky et al., 2019).

Comparisons between ILD sensitivity and sound localization revealed no association for either stationary or moving sound (see Figure 9A–B). These findings indicate that availability of ILD cues was insufficient for accurate spatial hearing performance in children with BCIs. ILD cues are required for discrimination of velocity of sound movement in individuals with typical hearing (Altman et al., 1988; Altman & Viskov, 1977). The lack of relationship between good ILD sensitivity in the children with BCIs and their direction perception or errors for locating moving sound position could reflect abnormal ILD processing. Despite good ILD sensitivity in behavioral tasks, children with BCIs show abnormal cortical representation of bilateral input (Smieja et al., 2020) and impaired cortical processing of ILDs (Easwar et al., 2018) and ITDs (Easwar et al., 2017a). By contrast, localization accuracy for stationary and moving sound was related to the group differences in ITD cue sensitivity which confirms that ITDs provide an important salient cue for spatial hearing of stimuli with sufficient low-frequency content (Laback et al., 2015). Moreover, some children with BCIs have better envelope ITD sensitivity than others as shown in Figure 8D and by other groups (Kan et al., 2018). Differences in ITD sensitivity could be attributed to a number of factors including interaural place matching and possibly lack of binaural auditory input in early life as found in deaf white cats (Hancock et al., 2010; Tillein et al., 2016; Yusuf et al., 2024), though age at onset of profound binaural hearing loss has previously been shown not to be related to ITD sensitivity (Laback et al., 2015).

Reduced ITD sensitivity was also associated with increased absolute SRM asymmetry in children with BCIs (see Figure 9F). SRM was calculated from SNR thresholds of target spondees with and without spatial separation to speech-weighted noise. ITDs serve as the dominant cue in horizontal localization of low-frequency stimuli such as speech and also in low-frequency attention-driven SRM (Laback et al., 2015). In past work by Smith et al. (2002), chimeric sound experiments showed consistent results, with envelope temporal differences associated with “what” sentence is heard and fine structure temporal differences associated with “where” sound is heard. Poor access to interaural cues particularly in the fine temporal structure, which serves as the dominant cue in attention-driven SRM, may thus drive and/or be driven by asymmetries in SRM benefit. The asymmetries in the SRM measure might also reflect an abnormal aural preference for one ear in children using BCIs. This has been reported in children with asymmetric access to sound between the ears such as children receiving BCIs sequentially (Sparreboom et al., 2021). Speech perception asymmetries were not found in the present cohort (as shown in Figure 2); however, asymmetries were found in the SRM measure. A normal ear advantage for recognizing words in noise was found for the right ear as in children with typical hearing (as shown in Figure 3C). Many children in the BCI group also showed a right ear SRM advantage but these ear asymmetries were often larger and sometimes weighted to the left ear. It is thus possible that these asymmetries reflected an abnormal aural preference (Easwar et al., 2017b; Gordon et al., 2013, 2015) rather than the normal ear advantage. If so, this could also explain why large SRM asymmetries were associated with poorer ITD sensitivity.
Overall, poor ITD sensitivity, perhaps worsened by asymmetric hearing in some children with BCIs, was related to impaired sound localization.
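As a worked illustration of the SRM asymmetry measure discussed above, the sketch below computes SRM for noise on each side and their difference. The sign convention (SRM as the colocated threshold minus the separated threshold, so that positive values indicate benefit), the function names, and the threshold values are assumptions for illustration and are not taken from the study data.

```python
def srm_db(srt_colocated_db, srt_separated_db):
    """Spatial release from masking (dB): improvement in SNR threshold
    when noise is moved away from the speech (positive = benefit)."""
    return srt_colocated_db - srt_separated_db

def srm_asymmetry(srt_colocated_db, srt_noise_left_db, srt_noise_right_db):
    """Return SRM with noise at -90 degrees (left), SRM with noise at
    +90 degrees (right), their signed difference, and its absolute value."""
    srm_left = srm_db(srt_colocated_db, srt_noise_left_db)
    srm_right = srm_db(srt_colocated_db, srt_noise_right_db)
    signed = srm_left - srm_right  # positive = larger benefit with noise on the left
    return srm_left, srm_right, signed, abs(signed)

# Hypothetical thresholds (dB SNR) for one child.
print(srm_asymmetry(-2.0, -8.0, -5.0))
```

With these hypothetical thresholds, the benefit is larger when noise is on the left, which in the terms used above would correspond to a comparative right ear advantage.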

Children Using BCIs Show Reduced and Random Gaze Movements During Spatial Hearing

Gaze displacement for children with typical hearing showed that they accurately followed the sound source with their head and eyes as shown in Figure 10. Gaze displacement, measured by AUC, was proportional to the angular distance of the stimulus from center (0°) in either direction and was consistent with previous findings using the same setup (Alemu et al., 2024). The unrestrained or free movement of head and eyes in the present study was allowed as head movements have been shown to facilitate spatial awareness when a sound is of sufficient duration or presented in repetition (Brimijoin & Akeroyd, 2014; Wallach, 1940; Wightman & Kistler, 1999) and to improve horizontal auditory localization compared to fixing the head in place (Pollack & Rose, 1967). Head movement might facilitate auditory access to interaural cues for sound localization; Beitel (1999) demonstrated that cats with no visual input (due to sectioned optic nerves) tracked moving sound by an initial orienting head movement followed by stepwise scanning movements.

In children with BCIs, gaze AUC displacement was significantly reduced, indicating head and eye movements were not being used effectively to compensate for poor binaural cues during localization of stationary sound (see Figure 10A–C) and to a lesser extent in response to moving sound (see Figure 10D–F). Previously published gaze displacement (nonsummated) data in adolescents and young adults with typical hearing who wore unilateral ear plugs showed that they employed compensatory gaze movements through head turns toward the side of the plugged ear to increase the signal-to-noise ratio at the nonplugged ear (Alemu et al., 2024). In the present cohort of children with BCIs, proportion of time spent moving in the correct direction demonstrated better awareness of peripheral than centrally presented stationary sounds but no difference between small and larger angular movements of sound. Lingering errors and uncertainty for a wider range of frontal stationary positions in BCI users than the typical hearing group (see Figure 10C) may be mitigated by utilization of visual cues.

Study Limitations

The present study explored localization of stationary and moving sound while tracking concurrent head and eye responses in pediatric BCI users. The presentation of auditory stimuli focused on the frontal-horizontal (azimuthal) plane and did not introduce variations in elevation or positions beyond the azimuthal range of ±60°. This effectively limited study of monaural cues and front-back confusions which are affected by head movements (Pastore et al., 2018).

Summary and Conclusions

BCIs support symmetric hearing and access to ILDs when duration of deafness is minimal in children with bilateral sensorineural hearing loss and these devices facilitate some emerging spatial hearing abilities. However, impaired ITD perception and reduced and ineffective gaze movements in children with BCIs limit their perception of stationary and moving sound. Future work should explore improvements in CI technology and specifically methods of improving access to ITD cues to facilitate development of normal spatial hearing as well as spatial perception training potentially with visual cues.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.