Abstract

In this review, we attempt to integrate the empirical evidence regarding stimulus-specific adaptation (SSA) and mismatch negativity (MMN) under a predictive coding perspective (also known as the Bayesian or hierarchical-inference model). We propose a renewed methodology for the study of SSA that enables a further decomposition of deviance detection into repetition suppression and prediction error, thanks to the use of two controls previously introduced in MMN research: the many-standards and the cascade sequences. Focusing on data obtained with cellular recordings, we explain how deviance detection and prediction error are generated throughout hierarchical levels of processing, following two vectors of increasing computational complexity and abstraction along the auditory neuraxis: from subcortical toward cortical stations and from lemniscal toward nonlemniscal divisions. Then, we delve into the particular characteristics and contributions of subcortical and cortical structures to this generative mechanism of hierarchical inference, analyzing what is known about the role of neuromodulation and local microcircuitry in the emergence of mismatch signals. Finally, we describe how SSA and MMN occur within similar time frames and cortical locations, and how both are affected by the manipulation of

Introduction: SSA and MMN, Two Faces of Deviance Detection

Throughout their lives, at every moment, humans and animals live immersed in an overwhelming flow of acoustic information continuously coming from all kinds of sources in their nearby environment. A major task of the auditory system is to organize that acoustic jumble into perceptual constructs of biological relevance. Most of the sounds incessantly hitting the eardrum are repetitive and predictable and carry little functional significance. The capacity of the auditory system to preattentively filter out irrelevant, foreseeable stimulation and to provide perceptual saliency to those sounds that are unique, unpredictable, and therefore highly informative is generally referred to as deviance detection (also referred to as change, surprise, or novelty detection, with varying usage across the literature). In other words, deviance detection is the response to a stimulus that diverges from a regularity in the stimulation previously identified by the processing system (Winkler & Schröger, 2015).

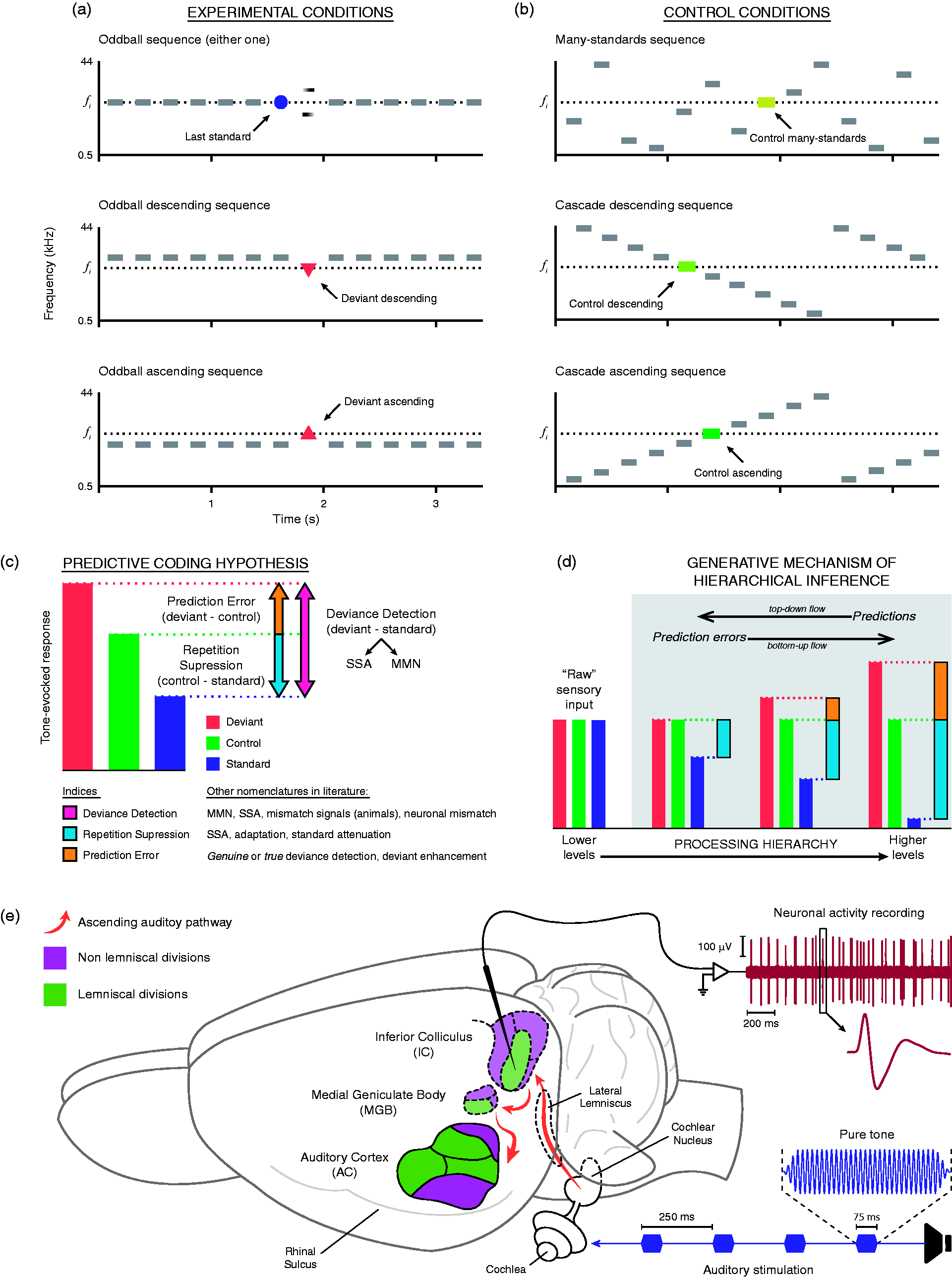

Deviance detection in the auditory system finds one of its best-studied manifestations in the mismatch negativity (MMN), an event-related potential (ERP) recorded from the human scalp. An MMN can be elicited by any discriminable change in the auditory stimulation and peaks 150 to 250 ms after change onset. Such discriminable changes have been widely reproduced experimentally using the classic oddball paradigm (Figure 1(a)), in which rare acoustic events (deviants) are randomly interspersed among frequent repetitions of a common sound (standard).

(a) Classical oddball paradigm, displaying three possible experimental conditions for a given

Auditory-evoked potentials (ERPs) recorded from the human scalp to standard and frequency deviant stimuli presented in an oddball sequence. (a) Middle-latency response (MLR) with its typical morphology (Na, Pa, and Nb) waveforms disclosing larger amplitude for deviant (red) compared with standard (blue) stimuli. The bottom plots correspond to the scalp distribution of the Nb latency range for deviant and standard stimuli. (b) Long-latency auditory-evoked potential for standard (blue) and deviant (red) stimuli, and the corresponding difference waveform (black) disclosing the mismatch negativity (MMN). The bottom plots correspond to the scalp distribution of the MMN latency range for deviant and standard stimuli, as well as the scalp distribution of the MMN (right).
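The oddball paradigm can be sketched in a few lines of Python. This is a minimal illustration, not the protocol of any study cited here: the 10% deviant probability, the rule that deviants never occur back to back, the initial run of standards, and the function name `oddball_sequence` are all assumptions chosen for the example.

```python
import random


def oddball_sequence(n_trials=400, p_deviant=0.1, standard="f1", deviant="f2",
                     n_initial_standards=10, seed=0):
    """Return tone labels for one hypothetical oddball block.

    Exactly round(n_trials * p_deviant) deviants are scattered at random after
    an initial run of standards, and no two deviants are adjacent.
    """
    rng = random.Random(seed)
    n_deviants = round(n_trials * p_deviant)
    candidates = range(n_initial_standards, n_trials)
    while True:
        # Draw deviant positions; redraw if any two deviants would be adjacent.
        positions = sorted(rng.sample(candidates, n_deviants))
        if all(b - a > 1 for a, b in zip(positions, positions[1:])):
            break
    sequence = [standard] * n_trials
    for i in positions:
        sequence[i] = deviant
    return sequence


# Swapping the roles of the two tones in a second block lets the same tone be
# compared when it is the deviant and when it is the standard.
block_a = oddball_sequence(standard="f1", deviant="f2", seed=1)
block_b = oddball_sequence(standard="f2", deviant="f1", seed=2)
```

Running both blocks is what makes the comparison stimulus-specific: the response to, say, f2 as a deviant in `block_a` is contrasted with the response to f2 as a standard in `block_b`, so physical differences between the tones cancel out.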

Using the same oddball sequences that elicit the MMN in human ERP studies, an analogous deviance-detection process has been characterized in the responses of some neurons distributed along the auditory pathways of several animal species. These neurons show a progressively reduced response to a repetitive standard sound, which is restored when they are stimulated by an unpredictable deviant sound. This special type of adaptation, considered a form of short-term plasticity, is known as stimulus-specific adaptation (SSA). SSA is quantified as an index of the change in a neuron's firing rate in response to a deviant stimulus relative to its response to that same stimulus played as a standard. Neurons exhibiting SSA are located subcortically within the nonlemniscal divisions of the auditory midbrain (Ayala et al., 2015; Ayala & Malmierca, 2015, 2018; Duque & Malmierca, 2015; Duque, Perez-Gonzalez, Ayala, Palmer, & Malmierca, 2012; Duque, Wang, Nieto-Diego, Krumbholz, & Malmierca, 2016; Malmierca, Cristaudo, Perez-Gonzalez, & Covey, 2009; Parras et al., 2017; Patel, Redhead, Cervi, & Zhang, 2012; Pérez-González, Hernández, Covey, & Malmierca, 2012; Pérez-González & Malmierca, 2012; Pérez-González, Malmierca, & Covey, 2005; Valdés-Baizabal, Parras, Ayala, & Malmierca, 2017; Zhao, Liu, Shen, Feng, & Hong, 2011) and thalamus (Anderson, Christianson, & Linden, 2009; Anderson & Malmierca, 2013; Antunes & Malmierca, 2014; Antunes, Nelken, Covey, & Malmierca, 2010; Bauerle, von der Behrens, Kossl, & Gaese, 2011; Duque, Malmierca, & Caspary, 2014; Parras et al., 2017) and are widely spread over the primary (Chen, Helmchen, & Lutcke, 2015; Farley, Quirk, Doherty, & Christian, 2010; Hershenhoren, Taaseh, Antunes, & Nelken, 2014; Klein, von der Behrens, & Gaese, 2014; Natan et al., 2015; Natan, Rao, & Geffen, 2017; Nieto-Diego & Malmierca, 2016; Parras et al., 2017; Szymanski, Garcia-Lazaro, & Schnupp, 2009; Taaseh, Yaron, & Nelken, 2011; Ulanovsky, Las, Farkas, & Nelken, 2004; Ulanovsky, Las, & Nelken, 2003; von der Behrens, Bauerle, Kossl, & Gaese, 2009) and secondary (Nieto-Diego & Malmierca, 2016; Parras et al., 2017) areas of the auditory cortex (AC).
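A widely used formulation of this index, introduced by Ulanovsky et al. (2003), computes a frequency-specific index SI(f) = (d(f) - s(f)) / (d(f) + s(f)) for each tone of the pair, where d and s are the responses to the tone as deviant and as standard, and a common SSA index (CSI) that pools both tones. The sketch below assumes that mean firing rates are already available; the function names and example rates are hypothetical.

```python
def ssa_index(deviant_rate, standard_rate):
    """Frequency-specific SSA index: (d - s) / (d + s), bounded in [-1, 1]."""
    total = deviant_rate + standard_rate
    if total == 0:
        raise ValueError("no response to either condition; index undefined")
    return (deviant_rate - standard_rate) / total


def common_ssa_index(deviant_rates, standard_rates):
    """Common SSA index (CSI) pooling the responses to both tones of the pair."""
    d, s = sum(deviant_rates), sum(standard_rates)
    if d + s == 0:
        raise ValueError("no response to either condition; index undefined")
    return (d - s) / (d + s)


# Hypothetical mean firing rates (spikes per stimulus) for tones f1 and f2.
deviant = [10.0, 8.0]
standard = [4.0, 2.0]
si_f1 = ssa_index(deviant[0], standard[0])   # 6 / 14
si_f2 = ssa_index(deviant[1], standard[1])   # 6 / 10
csi = common_ssa_index(deviant, standard)    # 12 / 24 = 0.5
```

Positive values mean a stronger response to the deviant; 0 means no stimulus-specific effect, and values near 1 indicate that the standard response is almost abolished.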

SSA was proposed to be the neuronal-level correlate of the deviance-detection mechanism (Ulanovsky et al., 2003), whose summed population activity would build up until it becomes detectable on the scalp as an MMN (Nelken & Ulanovsky, 2007). Given the clinical potential of the MMN, the need to know more about its neuronal substrate has encouraged numerous studies to delve into SSA dynamics, neurochemical mechanisms, and anatomical distribution and connectivity, in order to overcome the initial difficulties of linking the microscopic (SSA) and macroscopic (MMN) manifestations of the allegedly same physiological mechanism of deviance detection (Escera & Malmierca, 2014; Khouri & Nelken, 2015; Malmierca, Sanchez-Vives, Escera, & Bendixen, 2014; Nelken & Ulanovsky, 2007).

Adaptation or Modeling? Different Ways of Understanding Deviance Detection

In the context of an oddball paradigm, both MMN and SSA can be understood as indices of automatic deviance detection, reflecting the overall difference between the responses to a given tone when it is presented as a deviant and when it is presented as a standard. But this contrast between deviant and standard responses could be accounted for in at least two different ways. On the one hand, it could be due to an enhanced response to the deviant sound, as its appearance represents a violation of a previously established regularity. According to the

On the other hand, the contrast between deviant and standard could simply be due to attenuation of the response to the repetitive sound, as an effect of mere neuronal adaptation. The deviant sound, being physically different from the standard stimuli, would recruit other, fresh afferents. The deviant sound would thus not produce an enhanced response, but just a nonadapted one (May & Tiitinen, 2010). This much simpler interpretation conforms to the
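The adaptation account can be made concrete with a toy model in which each tone drives its own independent channel, whose responsiveness is depleted by use and recovers exponentially between stimuli. This is a hypothetical illustration, not the model of May and Tiitinen (2010): the non-overlapping channels, the depletion fraction, the interstimulus interval, and the recovery time constant are all assumed values.

```python
import math


def adaptation_responses(sequence, use_fraction=0.3, isi_s=0.5, tau_s=2.0):
    """Responses of a toy adaptation-only model with one channel per tone."""
    recovery = 1.0 - math.exp(-isi_s / tau_s)  # fraction of depletion restored per ISI
    resources = {}  # tone label -> available resource, 1.0 = fully recovered
    responses = []
    for tone in sequence:
        available = resources.get(tone, 1.0)  # a never-stimulated channel is fresh
        responses.append(available)           # response scales with the resource
        resources[tone] = available * (1.0 - use_fraction)  # depletion by this tone
        for label in resources:               # every channel recovers during the ISI
            resources[label] += (1.0 - resources[label]) * recovery
    return responses


# Nine standards (f1) followed by one deviant (f2), repeated 20 times.
sequence = (["f1"] * 9 + ["f2"]) * 20
responses = adaptation_responses(sequence)
std = [r for r, t in zip(responses, sequence) if t == "f1"]
dev = [r for r, t in zip(responses, sequence) if t == "f2"]
standard_mean, deviant_mean = sum(std) / len(std), sum(dev) / len(dev)
```

The deviant's channel has time to recover between deviants, so its response stays close to 1 while the standard settles at a lower steady state. Nothing in the model represents an expectation, and the deviant response never exceeds the unadapted one, which is exactly the "nonadapted, not enhanced" claim.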

Beyond the classical oddball paradigm, adaptation also seems insufficient to explain how an MMN can be generated by the violation of regularities established by patterns more complex than sheer one-stimulus repetition (Heilbron & Chait, 2017). For example, in a two-tone pattern or alternation sequence (ABABABAB…), the repetition of one of the tones (ABABAB
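A short calculation shows why stimulus-specific adaptation alone struggles with the alternation sequence: both tones are equally frequent overall, so neither is a rare stimulus, yet repetitions are rare transitions. The sketch below compares the probability of each tone with the surprise, in bits, of each type of transition. The function names and the rate of one forced repetition every 10 tones are assumptions for illustration.

```python
import math
from collections import Counter


def alternation_with_repetitions(n_tones=100, repeat_every=10):
    """ABAB... sequence in which every `repeat_every`-th tone repeats its predecessor."""
    sequence = []
    for i in range(n_tones):
        if sequence and i % repeat_every == 0:
            sequence.append(sequence[-1])  # repetition: violates the alternation rule
        else:
            sequence.append("B" if sequence and sequence[-1] == "A" else "A")
    return sequence


def tone_and_transition_statistics(sequence):
    """Return P(tone) and the surprise (-log2 P) of repeat vs. alternate transitions."""
    tone_p = {t: c / len(sequence) for t, c in Counter(sequence).items()}
    transitions = Counter(
        "repeat" if a == b else "alternate" for a, b in zip(sequence, sequence[1:])
    )
    n = sum(transitions.values())
    surprise_bits = {k: -math.log2(c / n) for k, c in transitions.items()}
    return tone_p, surprise_bits


tone_p, surprise_bits = tone_and_transition_statistics(alternation_with_repetitions())
```

Both tones occur with probability 0.5, so a per-tone adaptation account has no rare stimulus to work with. A repetition, by contrast, carries about 3.5 bits of surprise against roughly 0.14 bits for an alternation. Only a system that encodes the transition rule can register that difference.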

In addition, the theoretical inference of a

Predictive Coding: Moving to a Common Framework

Predictive coding is one of the most influential and comprehensive theories of neural function addressing how the brain makes sense of the world (Heilbron & Chait, 2017). It has become very popular in the past decade, although some of the insights encompassed by this theoretical framework have a long tradition in the literature. Early in the history of cognitive psychology, Neisser (1976) introduced the concept of the perceptual cycle, which might be considered an ancestor of predictive coding. As the biological basis for Bayesian theories of perception and cognition, predictive coding offers compelling explanations for multiple phenomena, from neuroanatomy (Friston, 2005) and electrophysiology (Rao & Ballard, 1999) to psychology (Knill & Pouget, 2004). In the neuroscience of perception, predictive coding was initially adopted in the study of visual processing (Lee & Mumford, 2003; Rao & Ballard, 1999), and the application of its principles to research on the auditory system has recently been gaining momentum (Denham & Winkler, 2018; Heilbron & Chait, 2017; Schröger et al., 2014; Schröger, Marzecová, & SanMiguel, 2015; Winkler & Schröger, 2015).

According to the predictive coding theory, perception emerges from integrating sensory information from the environment and our predictions based on an internal representation of that information (Auksztulewicz & Friston, 2016; Bastos et al., 2012; Friston, 2005). As in the
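For Gaussian beliefs, this integration has a closed form that makes the role of the prediction error explicit: the posterior estimate equals the prediction plus the prediction error, weighted by the relative precision (inverse variance) of the sensory evidence, that is, posterior = prior + pi_s / (pi_prior + pi_s) * (x - prior). The following is a generic textbook sketch under that Gaussian assumption, not a model taken from the cited studies; the tone frequencies, precisions, and function name are hypothetical.

```python
def integrate_prediction(prior_mean, prior_precision, sensory_value, sensory_precision):
    """Combine a Gaussian prediction with a Gaussian sensory sample.

    Precision is the inverse of variance. Returns the posterior mean, the
    posterior precision, and the (unweighted) prediction error.
    """
    prediction_error = sensory_value - prior_mean
    gain = sensory_precision / (prior_precision + sensory_precision)
    posterior_mean = prior_mean + gain * prediction_error
    posterior_precision = prior_precision + sensory_precision
    return posterior_mean, posterior_precision, prediction_error


# A confident prediction of 1000 Hz meets a noisier sensory sample at 1200 Hz.
mean, precision, error = integrate_prediction(1000.0, 4.0, 1200.0, 1.0)
```

Here the prediction error is 200 Hz, but because the prior is four times as precise as the input, only a fifth of it shifts the percept (to 1040 Hz). The more precise the top-down prediction, the less the bottom-up error counts.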

Optimization of the perceptual representation throughout this hierarchical chain of processing levels of increasing complexity and abstraction requires managing the relative influence of top-down prior expectations and bottom-up prediction errors. This process would require short-term synaptic plasticity. Mechanisms operating at the input of the neuron, such as synaptic depression and facilitation or inhibition, would differentially affect diverse parts of its dendritic tree to optimize the postsynaptic sensitivity of neurons acting as deviance-detection units, that is, neurons showing SSA (Garrido, Kilner, Stephan, et al., 2009). Thus, when repetitive stimuli can be predicted precisely by top-down afferents, bottom-up influences are reduced by decreasing the postsynaptic responsiveness of the neurons to the redundant sensory inputs, like the
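The consequence for repeated stimulation can be illustrated with an even simpler update, a delta rule in which the prediction moves toward each input by a fixed fraction of the prediction error. This is a deliberately minimal stand-in for the hierarchical scheme described here, not an implementation of it; the learning rate, the input values, and the function name are assumptions.

```python
def prediction_errors(inputs, learning_rate=0.3, initial_prediction=0.0):
    """Delta-rule sketch: return the prediction error evoked by each input."""
    prediction = initial_prediction
    errors = []
    for x in inputs:
        error = x - prediction               # bottom-up prediction error
        errors.append(error)
        prediction += learning_rate * error  # top-down prediction is updated
    return errors


# Ten standards (feature value 1.0), one deviant (2.0), then three standards.
inputs = [1.0] * 10 + [2.0] + [1.0] * 3
errors = prediction_errors(inputs)
```

The error evoked by the standard shrinks geometrically with each repetition (0.7 to the power of n with these settings) and jumps above 1 when the deviant arrives. The standard that follows the deviant then produces a small error of opposite sign, because the prediction has been pulled toward the deviant.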

Predictive coding provides a much more extensive explanation, as it postulates that any regularity, simple or complex, can be encoded at some level of processing and thereby become subject to suppressive effects at that level. That is, regularity encoding may lead to automatic

Deviance can only be defined in relation to something regular (Winkler & Schröger, 2015). Hence, the term

While an MMN evoked by a simple deviant, such as an infrequent decrement in tone intensity or an omission, is difficult to explain in terms of adaptation alone (Duque et al., 2016), predictive coding accounts not only for that but also for how an MMN can be generated by violations of abstract rules based on complex interstimulus relationships or transitional probabilities (Dehaene et al., 2015; Mittag, Takegata, & Winkler, 2016). The omission of an expected tone implies a violation of the established perceptual representation in the system, so the perceptual model requires an update. In other words, even if no sound has occurred, the auditory system must encode the no-tone event as a prediction error. Thus, the auditory response to silence is in truth a pure prediction error, signaling the unexpected gap in the sequence. Regarding the alternation and the local/global sequences, the rationale is similar, but instead involves an unpredictable repetition of a tone. Note that, as that repeated tone is indeed a local standard (e.g., AAA
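A hypothetical sketch, using a delta rule with assumed input levels and learning rate, shows why an omission is informative only under a predictive account. A purely input-driven, adaptation-based response has nothing to respond to when the tone is missing, whereas the prediction error is large precisely because the input is absent.

```python
def omission_response(n_standards=20, learning_rate=0.3, tone_level=1.0):
    """Return (input-driven response, prediction error) at an omitted tone."""
    prediction = 0.0
    for _ in range(n_standards):  # learn the regular tone with a delta rule
        prediction += learning_rate * (tone_level - prediction)
    omitted_input = 0.0                    # silence where the tone was expected
    input_driven_response = omitted_input  # no input, so nothing to drive a response
    prediction_error = omitted_input - prediction
    return input_driven_response, prediction_error


driven, error = omission_response()
```

The negative sign only marks that less arrived than was predicted; its magnitude, close to the full tone level after 20 standards, is what signals the unexpected gap.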

Understanding the brain essentially as a prediction machine has delivered great integrative potential to the scientific literature on perception and cognitive neuroscience (Clark, 2013; Hohwy, 2012). Several authors have explained previous ERP evidence on auditory processes such as deviance detection, stream segregation, auditory scene analysis, and attention to sound, all under the common theoretical framework of predictive coding (Garrido, Kilner, Kiebel, et al., 2009; Schröger et al., 2014, 2015; Wacongne, Changeux, & Dehaene, 2012; Winkler et al., 2009; Winkler & Schröger, 2015). As discussed earlier, predictive coding is also capable of reconciling the two classic MMN-based and SSA-based interpretations of deviance detection, postulating the adjustment of a generative model of the current stimulus train (as in the model-adjustment hypothesis) founded on plastic changes in synaptic connectivity (as in the adaptation hypothesis; Garrido, Kilner, Stephan, et al., 2009). In this review, we intend to thoroughly expand that integrative endeavor into SSA research. Using a predictive coding perspective, we reinterpret the evidence obtained from neuronal-level recordings, such as extracellular single-unit and multiunit activity (Figure 1(e)) or patch clamp recordings (for reviews more focused on macroscopic recording techniques, see, e.g., Escera & Malmierca, 2014; Fishman, 2014; Garrido, Kilner, Stephan, et al., 2009; Grimm, Escera, & Nelken, 2016; May & Tiitinen, 2010), in an attempt to reconcile SSA data with MMN observations within a common framework of Bayesian hierarchical inference.

Control Sequences for a Renewed Methodology in SSA Research

The oddball paradigm faces a major methodological limitation from the predictive coding perspective: It confounds the effects of adaptation and expectation (Ruhnau, Herrmann, & Schröger, 2012). In other words, it does not allow the distinction between repetition suppression and more complex forms of predictive activity (Fishman & Steinschneider, 2012; Nelken & Ulanovsky, 2007; Taaseh et al., 2011). Repetition suppression results from abstracting the least computationally demanding interstimulus relationship (repetition) and establishing the simplest corresponding expectation: “The next input is going to be similar to the previous one encoded.” Since the input information is already represented in the system, there is no need to mobilize processing resources to represent it again. This type of prediction should require few encoding capabilities, so it could be resolved already at the lower levels of the processing hierarchy (Figure 1(d)), as suggested by the presence of SSA as early as the auditory midbrain (Bibikov, 1977; Malone & Semple, 2001; Pérez-González et al., 2005).

In consequence, during the oddball sequence, the unchanging pattern formed by the repeated presentation of standards quickly generates top-down predictions that efficiently explain away the sensory input and suppress prediction error, which leads to a reduction of the standard-evoked response by means of short-term synaptic plasticity (adaptation). But when the deviant stimulus interrupts the train of standards, two distinguishable processes take place, yielding two distinct components in the difference signal we call deviance detection (also called neuronal mismatch when recorded from single units; Parras et al., 2017). On the one hand, the repetition rule has been violated, freeing the neuronal response from repetition suppression. Strictly speaking, this activity could be considered an emerging prediction error, but for the sake of clarity, we refer to this particular component as

Thus,

From a predictive coding standpoint, a sequence must meet at least two theoretical requirements to be considered an apt control for the oddball paradigm. First, the control sequence cannot feature the recurrent repetition of an individual stimulus, making it possible to assess the effect of

Deviance detection and prediction error in representative neurons of the anesthetized rat. (a) Examples of lemniscal single-unit responses in each recorded auditory station (columns). The first row shows schematics of the lemniscal subdivisions (green) within each nucleus. The second row shows the frequency-response area (representation of neuronal sensitivity to different frequency-intensity combinations) of representative lemniscal neurons from each nucleus. Ten gray dots within each frequency-response area represent the 10 tones (

Nevertheless, the many-standards control might be somewhat conservative in the identification of
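The two control sequences can be generated as follows. This sketch assumes a set of 10 tones spaced at hypothetical half-octave steps. In the many-standards sequence every tone appears with the same 10% probability but in random order, so no tone is repeated often enough to be adapted and no regularity can be predicted. In the cascade sequence the same tones follow a perfectly regular ascending or descending order, so no tone is repeated but each one is predictable. The frequencies, number of cycles, and function names are assumptions.

```python
import random


def many_standards(tones, n_cycles, seed=0):
    """Random order; each tone appears n_cycles times, i.e., with p = 1 / len(tones)."""
    sequence = list(tones) * n_cycles
    random.Random(seed).shuffle(sequence)
    return sequence


def cascade(tones, n_cycles, ascending=True):
    """Regular ascending (or descending) frequency order, repeated n_cycles times."""
    ordered = sorted(tones, reverse=not ascending)
    return ordered * n_cycles


# Ten hypothetical tones spaced half an octave apart, starting at 1 kHz.
tones = [1000.0 * 2 ** (k / 2) for k in range(10)]
ms_sequence = many_standards(tones, n_cycles=40)
cascade_up = cascade(tones, n_cycles=40)
cascade_down = cascade(tones, n_cycles=40, ascending=False)
```

In both controls the tone of interest is as rare as a deviant presented with 10% probability. The difference is whether the surrounding context is random (many-standards) or a predictable regularity without repetition (cascade).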

The Two Axes of Predictive Coding Hierarchy in the Auditory System

Using both many-standards and cascade controls, a recent study has provided evidence of a generative system of prediction error distributed hierarchically along the auditory pathway of anesthetized rats and confirmed it in awake mice (Parras et al., 2017), supporting predictive coding as a plausible interpretation of the organization and functioning of auditory neurons. Two vectors of increasing prediction error were identified in the auditory hierarchy: from lemniscal to nonlemniscal subdivisions, and from subcortical toward cortical structures (Parras et al., 2017). In the following, we adopt this view of hierarchical disposition to revisit the evidence of deviance detection at neuronal levels along the auditory pathway, to trace the roots of predictive activity in the auditory system and provide a robust neurophysiological basis for the MMN.

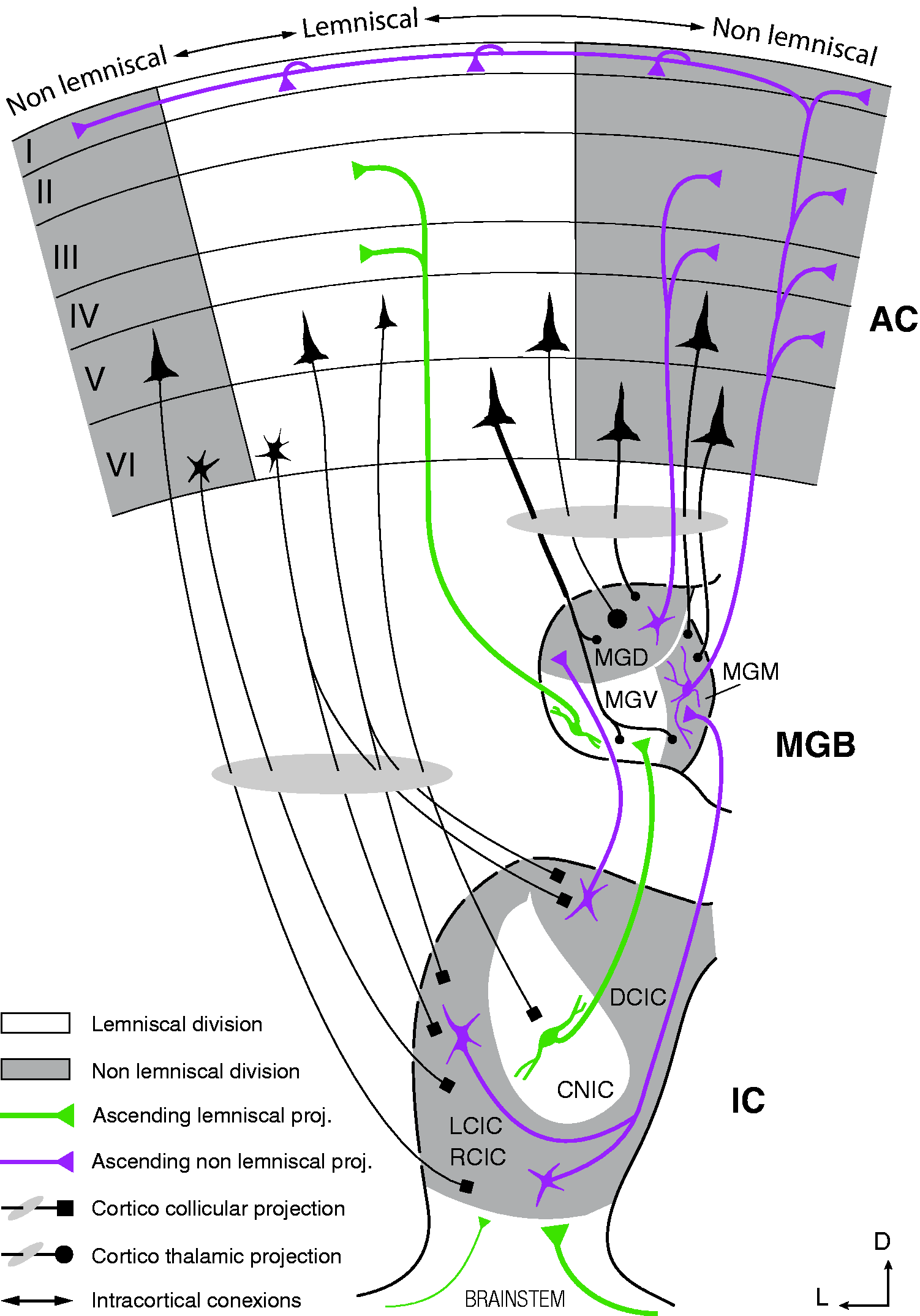

Lemniscal Versus Nonlemniscal Processing: Two Parallel Pathways of Auditory Information

Auditory information is transmitted along a series of nuclei arranged in a hierarchical manner, where different acoustic and contextual features are progressively extracted at each level of processing. Originating at the midbrain level of the auditory neuraxis, two parallel pathways can be distinguished, each imprinting characteristic structural and functional features on the stations it crosses. Almost half a century ago, Graybiel (1973) first coined and defined the so-called lemniscal line system and lemniscal adjunct system as a general categorization of sensory conduction routes with reference to the lemniscus. Since then, the distinction between

Schematic diagram of the auditory pathway, showing the major stations and projections that constitute the lemniscal and nonlemniscal pathways. Note that divisions in subcortical nuclei are well preserved across species, while AC fields vary markedly (Malmierca & Hackett, 2010). As a rule of thumb, lemniscal tonotopic laminae tend to project to their analogous laminae in the next lemniscal division and receive few cortical projections, shaping a sort of straightforward pathway to the cortex. Conversely, nonlemniscal divisions tend to project mostly to other nonlemniscal divisions and receive dense cortical projections, shaping a loop-like connectivity network ideal for hosting a generative mechanism of hierarchical inference (Figure 1(d)).

The lemniscal pathway represents a core of neurons in every auditory nucleus that tend to be sharply tuned and organized in a rather clear tonotopic fashion, in anatomical laminae or bands. The majority of the neurons in each frequency lamina project to their corresponding homologous lamina in the next station of the lemniscal pathway (Malmierca, 2015), shaping a sort of straightforward pathway that relays sensory information mainly in a bottom-up fashion (Figure 4). In addition to the sharp tuning of their frequency-response areas (Figure 3(a)), lemniscal neurons also generally respond to sound more robustly and consistently than their nonlemniscal counterparts, with shorter latencies, higher firing rates, and more spikes fired per stimulus, as well as higher spontaneous activity (Malmierca, 2015). In other words, the response of these highly tonotopically organized neurons is fundamentally driven by the physical features of the sound, as they receive mostly (albeit not exclusively) ascending inputs from lower lemniscal stations in the auditory neuraxis. Hence, lemniscal divisions are thought to be in charge of accurately relaying sensory input about the stimulus characteristics, essentially disregarding its context or other abstract relations between sounds. As expected, recent experiments have demonstrated that subcortical neurons within lemniscal divisions do not generate prediction errors (Parras et al., 2017), so they are likely to be the prime providers of sensory input to their nonlemniscal analogues, distributing and feeding “raw” auditory information to the generative mechanism of hierarchical inference without being an active part of it. It is not until auditory information reaches the cortex that reliable deviance-detection activity can be found in the lemniscal pathway.
As a matter of fact, SSA in the auditory system was first discovered and characterized in the lemniscal AC (Ulanovsky et al., 2003, 2004), and some authors have proposed it as the neural structure where the mechanism of hierarchical inference most probably initiates (Chen et al., 2015; Taaseh et al., 2011). The rat lemniscal pathway consists of the central nucleus of the inferior colliculus (IC), the ventral division of the medial geniculate body (MGB), and the primary AC, which includes the A1 field, the anterior auditory field, and the ventral auditory field (Figure 3).

Parallel to the lemniscal pathway lies another system, the nonlemniscal pathway, in which any trace of tonotopic organization is diffuse at best. The nonlemniscal pathway consists of a belt of broadly tuned neurons that receive inputs from the lemniscal core they wrap around and from other nonlemniscal stations: Subcortical nonlemniscal neurons send ascending projections to the next nonlemniscal station, while cortical neurons send descending projections mostly to the nonlemniscal divisions of the MGB and the IC (Figure 4; Malmierca & Ryugo, 2011; Saldaña, Feliciano, & Mugnaini, 1996). The fact that nonlemniscal neurons form this loop-like connectivity with heavy cortical modulation, combined with their comparatively longer response latencies, the broadness of their frequency-response areas (Figure 3(b)), and their adjunct anatomical position relative to the lemniscal stream, strongly suggests that they exert an integrative function in the auditory system. In fact, this system of backward and forward connections between stations looks like an ideal network to host the top-down flow of predictions and the bottom-up transmission of prediction errors. Consequently, nonlemniscal divisions seem to form a higher order processing pathway, a secondary system capable of encoding more complex aspects of the auditory scene and tracking the history of stimulation, as required to account for the emergence of deviance-detection activity in the form of SSA or MMN and for the generation of prediction error signals. The rat nonlemniscal pathway includes the rostral, lateral, and dorsal cortices of the IC; the dorsal (MGD) and medial (MGM) divisions of the MGB; and the suprarhinal auditory field and the posterior auditory field of the AC (Figure 3(b)).

Multilevel Hierarchical Auditory Processing: From the Midbrain to the Cortex Through the Thalamus

Anatomically speaking, the earliest generative units of prediction error are found in the cortices of the IC (Parras et al., 2017), at the beginning of the nonlemniscal pathway. Participation of subcortical nuclei in auditory deviance detection has been hinted at by several studies in humans (Althen et al., 2011; Cacciaglia et al., 2015; Cornella et al., 2012; Grimm, Recasens, Althen, & Escera, 2012; Shiga et al., 2015; Skoe, Chandrasekaran, Spitzer, Wong, & Kraus, 2014; Skoe & Kraus, 2010; Skoe, Krizman, Spitzer, & Kraus, 2013; Sonnadara, Alain, & Trainor, 2006; Tervaniemi et al., 2006), despite the technical challenge of noninvasively recording, and correctly locating the source of, a signal originating from such deep regions of the human brain (Bidelman, 2018; Coffey, Herholz, Chepesiuk, Baillet, & Zatorre, 2016; Coffey, Musacchia, & Zatorre, 2017). This evidence of early deviance detection is particularly interesting, considering that the hierarchical-inference hypothesis of predictive coding was initially formulated in terms of backward and forward connections between layers and areas of the cortex (Bastos et al., 2012; Friston, 2005). Subcortical involvement is thus somewhat unexpected, even though participation of subcortical structures was never explicitly ruled out and was even foreseen by some authors (Auksztulewicz & Friston, 2016); indeed, the complex computational machinery of the subcortical auditory system has led to speculation that the IC plays a role comparable to that of the primary visual cortex (King & Nelken, 2009). The IC is the auditory center in the midbrain where nearly all ascending pathways converge before information is sent to the AC via the thalamus. Excitatory, inhibitory, and neuromodulatory projections originating in the auditory brainstem and cortical regions, as well as in nonauditory centers, converge in the IC (Malmierca, 2015). This could provide IC neurons with the inputs necessary to integrate information over time through changes in the efficiency of their synaptic connections based on their history of activation. Finding prediction error signaling as early as the auditory midbrain implies that this generative mechanism of hierarchical inference could be evolutionarily old and deeply rooted in the architecture of the auditory system.

We have seen that most of the deviance-detection activity elicited by the oddball paradigm in the IC cortices can be accounted for by repetition suppression. As auditory information flows up the auditory pathway, the prediction error component keeps growing in proportion (Parras et al., 2017). Starting off in the auditory midbrain with the smallest index of prediction error at the IC cortices, it increases at the level of the nonlemniscal auditory thalamus. Another enlargement ensues when the signal reaches the lemniscal fields of the AC, or primary areas. Finally, it grows again until it is able to explain 50% or more of the overall deviance-detection activity recorded in the nonlemniscal fields of the AC, or belt areas (Figure 5; Parras et al., 2017).
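The decomposition behind these proportions can be written as a simple identity. We follow the normalization we understand Parras et al. (2017) to have used (treat the exact normalization as an assumption). The responses to a tone as deviant (DEV), as standard (STD), and in a control sequence (CTR) are divided by their Euclidean norm N = sqrt(DEV^2 + STD^2 + CTR^2). The index of neuronal mismatch iMM = DEV - STD then splits exactly into repetition suppression, iRS = CTR - STD, and prediction error, iPE = DEV - CTR. The spike counts and the function name below are hypothetical.

```python
import math


def mismatch_decomposition(dev, std, ctr):
    """Split normalized neuronal mismatch into repetition suppression and prediction error."""
    norm = math.sqrt(dev ** 2 + std ** 2 + ctr ** 2)
    if norm == 0:
        raise ValueError("no response in any condition")
    dev_n, std_n, ctr_n = dev / norm, std / norm, ctr / norm
    i_rs = ctr_n - std_n  # repetition suppression
    i_pe = dev_n - ctr_n  # prediction error
    i_mm = dev_n - std_n  # neuronal mismatch, equal to i_rs + i_pe
    return i_mm, i_rs, i_pe


# Hypothetical mean spike counts for one tone in the three conditions.
i_mm, i_rs, i_pe = mismatch_decomposition(dev=10.0, std=4.0, ctr=6.0)
pe_share = i_pe / i_mm  # fraction of the mismatch explained by prediction error
```

In this made-up example prediction error accounts for two thirds of the mismatch. A share of 0.5 or more is the situation described above for the nonlemniscal AC, whereas a share near 0 would indicate mismatch driven almost entirely by repetition suppression.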

Emergence of prediction error along the auditory hierarchy. (a) Median normalized tone-evoked responses (lines indicate

The proportion of prediction error grows even larger when the animals are awake (Figure 5(c); Cai, Richardson, & Caspary, 2016; Parras et al., 2017) and aged (Cai et al., 2016), as well as when the stimulation has low intensity (Figure 5(d); Parras et al., 2017). When awake mice were presented with tone sequences at a low intensity, prediction error reached up to 80% of the overall deviance-detection activity recorded in the nonlemniscal AC (Parras et al., 2017). In accordance with predictive coding principles, insofar as anesthesia did not efface the trace of prediction error from the neuronal activity of any station, it can be assumed that the generative mechanism of hierarchical inference is automatic and preattentive. Nevertheless, the larger prediction error proportions detected in awake rodents suggest that the state of consciousness, alertness, and attention may play an important role in its modulation. Interestingly, prediction error was unexpectedly enhanced when the intensity of the stimulation was low. This suggests that the generative mechanism of hierarchical inference may play a crucial role in facilitating perceptual saliency: When perception must be accomplished under challenging sensory conditions, the increased gain of prediction error throughout the auditory system plausibly aids stimulus discrimination (Parras et al., 2017). This saliency facilitation may become increasingly important with aging, as top-down influences could compensate for the degradation and impairment of ascending acoustic information in older individuals (Cai et al., 2016).

The Subcortical Contribution to Deviance Detection

As mentioned previously, auditory SSA was first discovered in A1, and it was initially thought to be absent in subcortical auditory nuclei (Ulanovsky et al., 2003). Together with the cortical origin of the MMN and the cortical formulations of predictive coding, this led authors to interpret deviance detection originally as a purely cortical activity. This cortical view had to be revisited and reconceptualized after the discovery of SSA in the IC (Ayala et al., 2015; Ayala & Malmierca, 2015, 2018; Duque et al., 2012, 2016; Duque & Malmierca, 2015; Malmierca et al., 2009; Parras et al., 2017; Patel et al., 2012; Pérez-González et al., 2005, 2012; Pérez-González & Malmierca, 2012; Valdés-Baizabal et al., 2017; Zhao et al., 2011) and MGB (Anderson et al., 2009; Anderson & Malmierca, 2013; Antunes et al., 2010; Antunes & Malmierca, 2014; Bauerle et al., 2011; Duque et al., 2014; Parras et al., 2017). Significant and strong deviance-detection activity in the form of SSA appeared prominently in the IC cortices (Malmierca et al., 2009), the MGD, and even more intensely in the MGM (Antunes et al., 2010). The nonlemniscal divisions of subcortical nuclei encompassed most of the neurons showing complete SSA, while lemniscal neurons tended to display only partial and rather weak SSA (Antunes et al., 2010; Malmierca et al., 2009). As a consequence, population levels of subcortical deviance detection were substantially higher in the nonlemniscal divisions. Furthermore, positive prediction error in the subcortical auditory system was found only in the nonlemniscal divisions (Parras et al., 2017).

The alleged cortical generation of deviance detection was not completely dismissed after the existence of SSA was demonstrated in subcortical stations. It was suggested that subcortical traces of deviance detection could be imposed by the cortex (Nelken & Ulanovsky, 2007), given the massive corticocollicular projections that the IC cortices receive and the impressively dense corticothalamic projections (Figure 3) that outnumber the thalamocortical output by a factor of 10 (Malmierca, Anderson, & Antunes, 2015). Descending projections must necessarily exert at least a considerable modulatory function (Ayala et al., 2015), but the prime source of deviance detection cannot be pinned down just by investigating connectivity. To address this question, studies of reversible deactivation of the AC using a cooling technique were conducted while recording from the MGB (Antunes & Malmierca, 2011) and the IC (Anderson & Malmierca, 2013). The general results demonstrated that the AC clearly modulated the firing rate of the nonlemniscal neurons in a gain-control manner (Malmierca et al., 2015; Pérez-González et al., 2012), helping to increase the contrast between standard and deviant stimuli by affecting the discharge rate to both proportionally (Ayala, Pérez-González, & Malmierca, 2016; Duque, Ayala, & Malmierca, 2015; Pérez-González & Malmierca, 2012). However, the overall subcortical SSA levels and dynamics remained mostly unaffected by cortical deactivation, with only about half of the adapting IC neurons (Anderson & Malmierca, 2013) and almost none in the MGB (Antunes & Malmierca, 2011) showing a significant change in their SSA sensitivity. It would be very interesting to test in future studies how cortical deactivation specifically affects the prediction error component of subcortical SSA.
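Proportional gain control can change the absolute contrast between deviant and standard while leaving SSA levels intact, and the reason lies in how the index is normalized. Multiplying both responses by the same factor scales their difference but cancels out in a ratio such as (d - s) / (d + s). The sketch below illustrates this with hypothetical firing rates, gain values, and function names. It is an idealization, since real cortical modulation need not be purely multiplicative.

```python
def ssa_index(deviant_rate, standard_rate):
    """Normalized SSA index (d - s) / (d + s); positive when the deviant wins."""
    return (deviant_rate - standard_rate) / (deviant_rate + standard_rate)


def apply_gain(deviant_rate, standard_rate, gain):
    """Scale both responses by the same factor (idealized multiplicative gain)."""
    d, s = gain * deviant_rate, gain * standard_rate
    return d - s, ssa_index(d, s)


# Hypothetical rates (spikes per stimulus) under three levels of gain.
results = {g: apply_gain(12.0, 4.0, g) for g in (0.5, 1.0, 2.0)}
# results[g] = (absolute deviant-standard difference, normalized index)
```

Halving or doubling the gain halves or doubles the absolute difference (4, 8, 16 spikes), yet the index stays at 0.5 in every case. A purely proportional effect of cortical deactivation would therefore be invisible in SSA indices even though firing rates change markedly.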

In light of these results, it is more plausible that deviance detection could be generated de novo at the intrinsic microcircuitry of each auditory station (Ayala & Malmierca, 2013). The great functional diversity of individual neurons suggests they perform as differentiated processing units. Some responses show partial or even absolute repetition suppression but no prediction error at all. Other responses contain partial proportions of repetition suppression and prediction error. Some neurons do not exhibit significant deviance detection despite being in a nonlemniscal division (Parras et al., 2017). Functionally distinct neurons undergo dissimilar effects when subjected to pharmacological manipulation, for example, by cholinergic modulation (Ayala & Malmierca, 2015). These data dovetail with an intrinsic de novo generation of deviance detection. The interaction of neurons with specialized computing roles, arranged in a local hierarchical network, could suffice to extract features and hold representations. Deviance detection in response to unpredictable events would build up as information flows throughout successive neuronal networks and processing stations along the auditory pathway.

Hence, predictive activity emerges from the interaction of neuronal networks hosted in different brain regions, a coordination that presumably requires a delicate balance of neurotransmitters and neuromodulatory influences. Human studies on deviance detection have identified the influence of several neurotransmitter systems on MMN (Garrido, Kilner, Stephan, et al., 2009). In animal models, the microiontophoresis technique makes it possible to apply, in the vicinity of a recorded neuron, neurotransmitters and modulators that activate (agonists) or block (antagonists) specific membrane receptors, yielding measurable synaptic effects. Using this precise neuropharmacological manipulation, many SSA studies have characterized the contribution of some of those receptors to the generation of subcortical deviance detection. In the following subsections, we discuss some insights on this matter that might be of interest for the predictive coding interpretation, despite the limitation that none of these studies has used the many-standards or cascade controls thus far. Future research may use the proposed methodology to address the influence of neuromodulation on prediction error specifically.

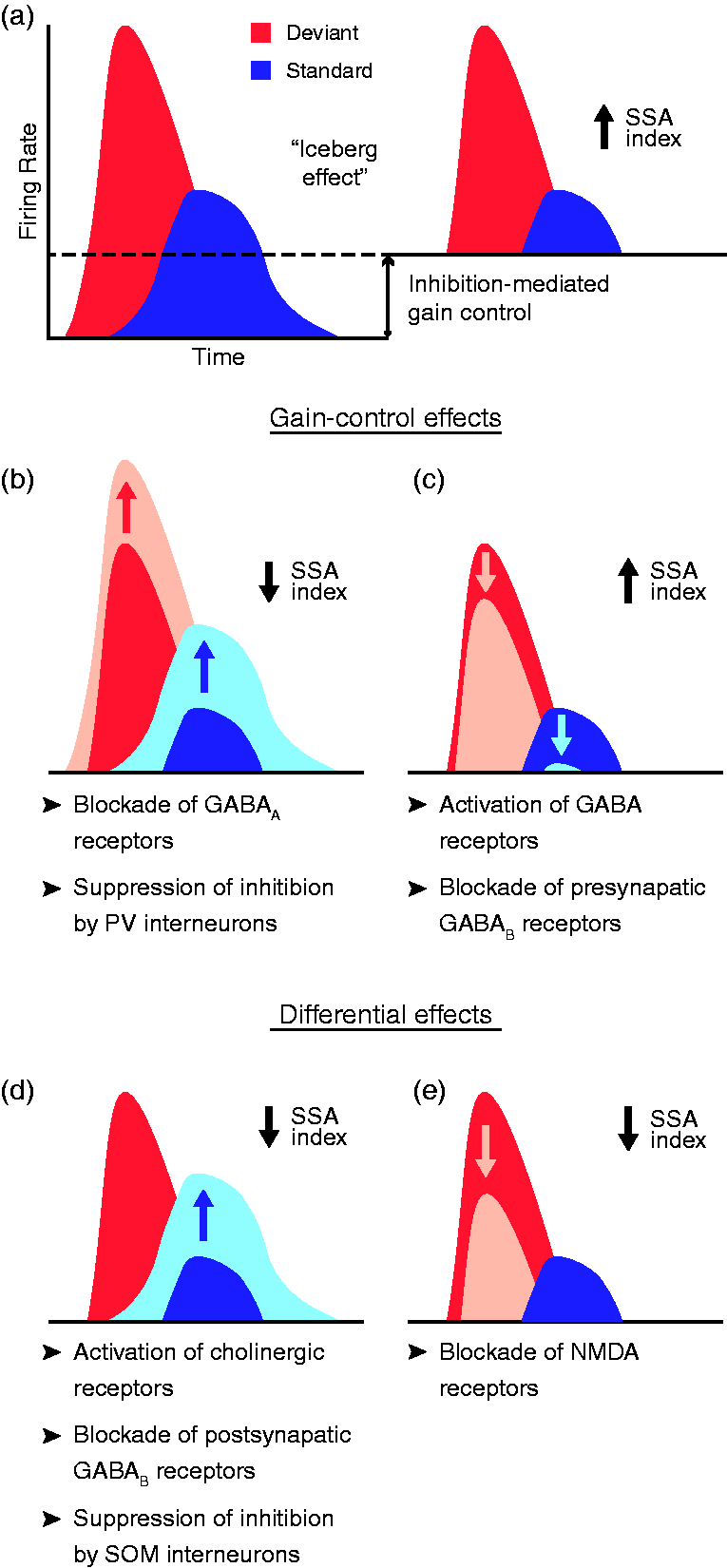

GABAergic Neuromodulation: A Gain Control of Deviance Detection

Synaptic inhibition is presumably essential for auditory deviance detection, inasmuch as it is responsible for “sculpting” the excitatory activity of the brain (Capano, Herrmann, & De Arcangelis, 2015). γ-Aminobutyric acid (GABA) is the chief inhibitory neurotransmitter in the mammalian central nervous system and binds to two main classes of receptors. GABAA receptors are ionotropic: the receptor itself forms a ligand-gated ion channel. GABAB receptors, on the other hand, are metabotropic receptors that regulate the opening or closing of ion channels via intermediate G proteins. In addition to GABA, glycine is another inhibitory neurotransmitter found in the mammalian brainstem.

Several studies have characterized in detail the role of GABAergic inhibition in subcortical deviance detection using the microiontophoresis technique. The application of the antagonist gabazine in the rat IC (Pérez-González et al., 2012) and MGB (Duque et al., 2014) has demonstrated the strong effect of inhibition exerted through GABAA receptors, which regulates the postsynaptic membrane potential (Sivaramakrishnan et al., 2004). When GABAA receptors were blocked, the general responsiveness of the neuron increased, reducing the proportional difference between deviant and standard responses and thus dampening deviance detection (Figure 6(b)). Conversely and consistently, the injection of the endogenous agonist GABA or the selective GABAA-receptor superagonist gaboxadol increased SSA levels (Duque et al., 2014). This suggests that subcortical deviance detection is modulated by a gain-control mechanism mediated by GABAA receptors that enhances the relative saliency of unpredicted auditory events over redundant ones, as in the so-called iceberg effect (Figure 6(a)).

Effect of pharmacological and inhibitory manipulation on SSA index. (a) Schematic representation of the iceberg effect, with a dashed line representing the amount of activity reduced by inhibition. In the absence of inhibition (left), the higher excitability of the neuron yields larger tone-evoked firing rates, thus reducing the relative difference between the responses to deviant and standard stimuli. Inhibition reduces both tone-evoked responses (right), increasing the deviant-to-standard ratio and thus enhancing SSA. (b, c) Gain-control effects, that is, effects on the overall excitability of neurons that produce a change in SSA index, identified to date via the indicated manipulations. (d, e) Manipulations that yield a decrease in SSA index because of a differential effect on the standard- or deviant-evoked responses. Although simplified for clarity in the figure, this does not mean that a manipulation acts exclusively on deviant or standard responses. Rather, a significantly larger effect is observed on one of them that does not generalize to the other, even if the latter is not completely unaffected.
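The iceberg effect in panel (a) can be illustrated with a short worked example. The sketch below is a minimal illustration, not an analysis from the cited studies: it assumes the per-frequency SSA index commonly used in the literature, SI = (d − s)/(d + s), where d and s are the responses to the same tone as deviant and as standard; it models inhibition as a subtractive threshold (the hypothetical helper `apply_threshold`) and uses made-up firing rates.

```python
def ssa_index(deviant: float, standard: float) -> float:
    """Per-frequency SSA index, SI = (d - s) / (d + s) (assumed definition)."""
    total = deviant + standard
    if total <= 0:
        raise ValueError("responses must sum to a positive value")
    return (deviant - standard) / total


def apply_threshold(rate: float, threshold: float) -> float:
    """Hypothetical subtractive inhibition: activity below `threshold` is removed (the 'iceberg')."""
    return max(rate - threshold, 0.0)


def apply_gain(rate: float, gain: float) -> float:
    """Hypothetical purely multiplicative scaling of a response, for comparison."""
    return rate * gain


# Made-up tone-evoked firing rates (spikes per stimulus) with no inhibition.
DEVIANT, STANDARD = 10.0, 6.0

for threshold in (0.0, 2.0, 4.0):
    d = apply_threshold(DEVIANT, threshold)
    s = apply_threshold(STANDARD, threshold)
    print(f"threshold={threshold}: SI={ssa_index(d, s):.3f}")
# threshold=0.0: SI=0.250, threshold=2.0: SI=0.333, threshold=4.0: SI=0.500

halved = ssa_index(apply_gain(DEVIANT, 0.5), apply_gain(STANDARD, 0.5))
print(f"gain=0.5: SI={halved:.3f}")  # SI=0.250, unchanged
```

In this toy formulation, raising the threshold leaves d − s unchanged (as long as both responses stay above threshold) but shrinks d + s, so the index grows. A purely multiplicative scaling of both responses leaves this particular index unchanged, which is why the sketch models inhibition as a subtraction.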

Some significant modulatory effects on deviance detection have also been observed through pharmacological manipulation of GABAB receptors, although they are less evident and profound than those mediated by GABAA receptors. In the rat IC, the blockade of presynaptic GABAB receptors prompted a decrease in overall neuronal excitability, yielding a subtle increase in SSA (Figure 6(c)). Conversely, the blockade of postsynaptic GABAB receptors reduced repetition suppression (Figure 6(d)), thereby reducing overall SSA (Ayala & Malmierca, 2018). Because GABAA receptors are ionotropic, blocking them affected deviance detection rapidly, whereas the effect of blocking metabotropic GABAB receptors was slower (Ayala & Malmierca, 2018), as these receptors are coupled to ion channels through second messengers (Mott, 2015). In the rat MGB, applications of GABA and gaboxadol did not yield significantly different effects, even though gaboxadol does not bind to GABAB receptors (Bowery, Hill, & Hudson, 1983) while GABA activates both types (Duque et al., 2014). Taken together with the fact that the MGM, the MGB division in which the highest SSA levels are observed (Antunes et al., 2010), lacks GABAB receptors (Smith, Bartlett, & Kowalkowski, 2007), these results suggest that GABAA receptors may play the pivotal role in inhibition-mediated modulation of deviance detection, while GABAB receptors might carry out auxiliary fine adjustments (Duque et al., 2014).

Glycinergic inhibition, on the other hand, is unlikely to play a prominent role in modulating deviance detection. Pharmacological manipulation of glycine-mediated inhibition in the rat IC produced paradoxical effects on SSA (Ayala & Malmierca, 2018), which is not surprising considering that glycinergic receptors are mainly expressed in the lemniscal IC (Choy, Bishop, & Oliver, 2015; Merchán, Aguilar, López-Poveda, & Malmierca, 2005). Furthermore, the rat MGB does not even express glycinergic receptors (Aoki, Semba, Keino, Kato, & Kashiwamata, 1988; Friauf, Hammerschmidt, & Kirsch, 1997), so the involvement of glycine in deviance detection is likely secondary, if any.

Finally, several combinations of GABAA, GABAB, and glycinergic antagonists were applied to test the effects of blocking multiple inhibitory receptors simultaneously. Coapplication of inhibitory antagonists produced clearly stronger effects, generating a gradual increase in neuronal responsiveness that significantly affected only the standard-evoked response. The pronounced decline in repetition suppression caused by the combined application of inhibitory antagonists revealed the importance of a finely balanced and coordinated interplay of inhibitory receptors in deviance detection. Local inhibition could account for about half of the relative difference between the responses to standard and deviant stimuli, although it could not fully account for the generation of deviance detection (Ayala & Malmierca, 2018). This arrangement suggests a progression of consecutive inhibitory filters that remove redundant auditory information before it reaches the AC, emphasizing once again the fundamental contribution of subcortical processing to auditory predictive coding.

Cholinergic Modulation: Tuning Repetition-Sensitivity in Neurons

Cholinergic projections are known to play an important role in arousal, attention, and memory. Human MMN literature has suggested that cholinergic modulation favors the encoding of ongoing stimulation (Hasselmo & McGaughy, 2004; Jääskeläinen, Ahveninen, Belliveau, Raij, & Sams, 2007; Moran et al., 2013; Sarter, Hasselmo, Bruno, & Givens, 2005) and that it enhances responses to afferent sensory input in the AC (Hsieh, Cruikshank, & Metherate, 2000; Metherate & Ashe, 1993), implying that acetylcholine (ACh) could play an important role in deviance detection (Ranganath & Rainer, 2003).

This hypothesis was tested in a microiontophoresis study in the rat IC (Ayala & Malmierca, 2015) using ACh chloride to activate the two main kinds of cholinergic receptors: nicotinic (ionotropic) and muscarinic (metabotropic). The application of the cholinergic agonist dampened repetition suppression (Figure 6(d)), but only in neurons exhibiting intermediate SSA levels (Ayala & Malmierca, 2015). In other words, ACh application prompted an increase in standard-evoked activity that did not generalize to the deviant-evoked responses. A decrease in deviance detection induced by cholinergic input would be consistent with the lower levels of SSA observed in awake animals (Duque & Malmierca, 2015; von der Behrens et al., 2009), in which ACh levels are higher (Kametani & Kawamura, 1990; Marrosu et al., 1995). At the same time, that reduced deviance detection may come with a relatively larger prediction error component (Parras et al., 2017). The precise relationship between the components could be measured in future studies by including the aforementioned control sequences during the pharmacological manipulation. In any case, cholinergic modulation in the IC appears to sustain the encoding of regular acoustic stimulation by decreasing repetition suppression (Ayala & Malmierca, 2015).

Interestingly, the excitability of neurons lacking significant SSA or displaying extreme SSA was mostly unaffected by cholinergic modulation, implying the existence of at least two types of deviance-detection units in the cortices of the IC. Neurons exhibiting complete SSA could act as hard, static filters of redundant information, insensitive to ACh modulation. In contrast, neurons showing partial SSA could act as finer, dynamic filters of auditory information, influenced by contextual and global brain states such as deep sleep, wakefulness, attention, or arousal (Ayala & Malmierca, 2015). Such diversity in context-driven neuronal behavior further speaks in favor of a rich microcircuitry hosting functionally heterogeneous populations of neurons which, if hierarchically interconnected, could carry out predictive coding already at the level of the nonlemniscal IC.

In addition, antagonists of the two types of cholinergic receptors were also examined: scopolamine to block muscarinic receptors and mecamylamine to block nicotinic receptors. As expected, affected neurons tended to increase their SSA levels with either antagonist, but only scopolamine produced a significant increase at the population level. Thus, muscarinic receptors appear to play the prominent role in this delicate cholinergic modulation, most likely via the M1-receptor subtype (Ayala & Malmierca, 2015). Activation of M1 receptors induces changes in potassium conductance that could act as an activity-dependent adaptation mechanism (Abolafia, Vergara, Arnold, Reig, & Sanchez-Vives, 2011; Sánchez-Vives, Nowak, & McCormick, 2000a, 2000b), making K+-mediated adaptation a potential mechanism underlying repetition suppression (Abolafia et al., 2011; Ayala & Malmierca, 2015; Malmierca et al., 2014). However, these results cannot be extrapolated to other structures of the auditory pathway, because their cholinergic projections arise from different sources. The cholinergic input to the IC comes from the pontomesencephalic tegmentum (Motts & Schofield, 2009; Schofield, Motts, & Mellott, 2011), whereas the main source of ACh in the AC is the basal forebrain (Bajo, Leach, Cordery, Nodal, & King, 2014; Edeline, Hars, Maho, & Hennevin, 1994; Zaborszky, van den Pol, & Gyengesi, 2012), so cortical testing is required.
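The idea that an activity-dependent K+ conductance could underlie repetition suppression can be made concrete with a toy model. The sketch below is a hypothetical illustration, not a model proposed by the cited studies: it assumes two frequency channels that each adapt in proportion to their own output (a stand-in for an M1-regulated K+ conductance), with made-up parameters (`overlap`, `k`, `retention`). Because adaptation is channel-specific, the same tone evokes a weaker response when it is the standard than when it is the deviant, which is the signature of SSA.

```python
import random
from statistics import mean


def channel_adaptation_responses(sequence: list[int], overlap: float = 0.2,
                                 k: float = 0.5, retention: float = 0.7) -> list[float]:
    """Toy two-channel model of stimulus-specific adaptation (hypothetical parameters).

    Each tone (0 or 1) drives its own frequency channel fully and the other channel
    by `overlap`. Each channel has an adaptation variable in [0, 1) that grows with
    that channel's own output (stand-in for an activity-dependent K+ conductance);
    a fraction `retention` of it persists until the next tone.
    Returns the neuron's summed response to every tone in the sequence.
    """
    adaptation = [0.0, 0.0]
    responses = []
    for tone in sequence:
        drive = [overlap, overlap]
        drive[tone] = 1.0
        outputs = [drive[c] * (1.0 - adaptation[c]) for c in (0, 1)]
        responses.append(sum(outputs))
        for c in (0, 1):
            adaptation[c] += k * outputs[c] * (1.0 - adaptation[c])  # activity-dependent build-up
            adaptation[c] *= retention  # partial recovery before the next tone
    return responses


def mean_response(tone: int, sequence: list[int], responses: list[float]) -> float:
    """Average response to `tone` across all its presentations in the sequence."""
    return mean(r for t, r in zip(sequence, responses) if t == tone)


rng = random.Random(1)
# Block A: tone 0 is the standard (90%) and tone 1 the deviant (10%).
block_a = [1 if rng.random() < 0.1 else 0 for _ in range(1000)]
# Block B: roles swapped, so tone 0 is now the deviant.
block_b = [0 if rng.random() < 0.1 else 1 for _ in range(1000)]

tone0_as_standard = mean_response(0, block_a, channel_adaptation_responses(block_a))
tone0_as_deviant = mean_response(0, block_b, channel_adaptation_responses(block_b))
print(f"tone 0 as standard: {tone0_as_standard:.3f}, as deviant: {tone0_as_deviant:.3f}")
```

In this toy, a manipulation that weakened the adaptation increment `k` (loosely analogous to the cholinergic attenuation described above) would raise standard-evoked responses more than deviant-evoked ones; this is an illustrative assumption, not a fitted result.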

Endocannabinoids: Modulating the Modulators

Endocannabinoids have been shown to play a role in short-term neural plasticity (Castillo, Younts, Chávez, & Hashimotodani, 2012), so their retrograde signaling could be involved in modulating deviance detection at the neuronal level. This possibility was tested by applying two different agonists of CB1 cannabinoid receptors, anandamide (intravenously) and O-2545 (microiontophoretically). Both systemic and local applications prompted a decrease in repetition suppression in a subset of neurons in the rat IC (Figure 6(d); Valdés-Baizabal et al., 2017). The blockade of CB1 receptors via microiontophoretic application of the antagonist AM251 led to nonsignificant population effects. Nevertheless, some neurons showed a consistent tendency to strengthen their repetition suppression (Valdés-Baizabal et al., 2017).

Those effects could be due to the retrograde modulation of inhibitory and excitatory inputs by cannabinoids, well described along the auditory pathway for both glutamatergic and GABAergic synapses (Zhao, Rubio, & Tzounopoulos, 2008). It is likely that IC neurons showing cannabinoid-mediated modulation received inhibitory input from GABAergic neurons expressing CB1 receptors in their presynaptic terminals (Merchán et al., 2005). The application of CB1 agonists would decrease GABA release of presynaptic inhibitory neurons, thereby increasing the activity and reducing SSA of the recorded postsynaptic neurons (Valdés-Baizabal et al., 2017). A synergistic activity of the endocannabinoid system with other neuromodulators is also a possibility. In any case, the degree of cannabinoid-mediated modulation would depend on the strength and nature of the inputs each neuron receives (Valdés-Baizabal et al., 2017).

The Cortical Contribution to Deviance Detection

Deviance detection and prediction error are widespread in the AC. Yet, great differences can be observed between the neuronal responses of lemniscal (primary or core) and nonlemniscal (secondary or belt) fields of the AC. On one hand, the primary AC is the first station in the lemniscal pathway to reliably exhibit deviance detection. SSA in lemniscal fields manifests more robustly in the late component of the neuronal response, as repetition suppression tends to almost abolish the tail of the standard-evoked responses while sparing much of the onset component (Figure 7(a); Chen et al., 2015; Hershenhoren et al., 2014; Nieto-Diego & Malmierca, 2016; Taaseh et al., 2011; Ulanovsky et al., 2003, 2004). Prediction error, also evident in the late component (Chen et al., 2015), seems to account for about 25% of the overall deviance-detection activity recorded in the lemniscal AC (Figure 5; Parras et al., 2017). That is a larger proportion than that found in the nonlemniscal divisions of subcortical nuclei, but only half of the proportion observed in the nonlemniscal AC (Figure 5; Parras et al., 2017), emphasizing the hierarchical arrangement between lemniscal (core) and nonlemniscal (belt) auditory fields.
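The decomposition behind statements such as "prediction error accounts for about 25% of deviance detection" can be written down explicitly. The sketch below assumes three responses to the same tone: as deviant in the oddball (DEV), as standard (STD), and within a control sequence such as the many-standards (CTR). Deviance detection then splits into repetition suppression (CTR − STD) and prediction error (DEV − CTR). Dividing all three differences by the Euclidean norm of the responses is one normalization that preserves this additivity; it should be read as an illustrative choice, and the firing rates are hypothetical, picked only so that prediction error accounts for 25% of the total.

```python
import math
from typing import NamedTuple


class DevianceIndices(NamedTuple):
    mismatch: float                # overall deviance detection, DEV - STD
    repetition_suppression: float  # CTR - STD
    prediction_error: float        # DEV - CTR


def decompose(dev: float, std: float, ctr: float) -> DevianceIndices:
    """Split deviance detection into repetition suppression and prediction error.

    All three differences share the same (assumed) normalization, so
    mismatch == repetition_suppression + prediction_error.
    """
    norm = math.sqrt(dev**2 + std**2 + ctr**2)
    if norm == 0:
        raise ValueError("at least one response must be non-zero")
    return DevianceIndices(
        mismatch=(dev - std) / norm,
        repetition_suppression=(ctr - std) / norm,
        prediction_error=(dev - ctr) / norm,
    )


def prediction_error_fraction(idx: DevianceIndices) -> float:
    """Share of deviance detection explained by prediction error."""
    if idx.mismatch <= 0:
        raise ValueError("fraction is only meaningful when DEV > STD")
    return idx.prediction_error / idx.mismatch


# Hypothetical mean responses (spikes per stimulus) of one neuron.
indices = decompose(dev=10.0, std=4.0, ctr=8.5)
print(indices)
print(f"prediction error fraction: {prediction_error_fraction(indices):.2f}")  # 0.25
```

The fraction itself does not depend on the normalization, since the common denominator cancels; the normalization only matters when indices are compared or averaged across neurons with different firing rates.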

Variation of SSA index throughout time and cortical fields. (a) Grand-average multiunit responses (baseline-corrected firing rate, mean ±

On the other hand, the secondary AC is at the top of the auditory nonlemniscal pathway (Figure 4), and it is where deviance detection and prediction error reach their highest expression. The belt fields of the AC show the greatest population indices of deviance detection (Figure 5), hosting a rich variety of context-driven responses, as is characteristic of nonlemniscal neurons. SSA in the belt fields is not only the highest but also the fastest in the AC, insofar as repetition suppression obliterates the standard-evoked response almost completely from its onset (Figure 7(a), (b); Nieto-Diego & Malmierca, 2016). The nonlemniscal AC is also the only part of the auditory system where prediction error equals or exceeds repetition suppression as the main component of deviance detection, with population ratios of 50% in anesthetized preparations (Figure 5(a), (b)) that rise to 80% when stimulation intensity is near threshold (Figure 5(d)) or when animals are awake (Figure 5(c); Parras et al., 2017). This suggests that the nonlemniscal AC hosts the highest order populations of neurons within the auditory system. However, the generative mechanism of hierarchical inference of auditory information is probably not constrained to the auditory system. Indeed, areas beyond the auditory system are expected to be involved, continuing the neural processing hierarchy in charge of extracting increasingly abstract relationships between stimuli, as well as assembling multisensory percepts by integrating information with other neural systems. In fact, local field potential (LFP) and ERP data from animal models have already pointed to the involvement of prefrontal cortices in this auditory deviance-detection mechanism (Imada, Morris, & Wiest, 2013), as previously indicated by the prefrontal sources of the human MMN and the P3 (Dürschmid et al., 2016).

Participation of Cortical Microcircuitry: The Role of Interneurons

Cortical inhibitory interneurons are thought to play a crucial role in information processing and to shape how information is represented and transmitted within and between cortical neuronal populations (Yuste, 2015). The activity of excitatory neurons is shaped by feedback and recurrent networks that interweave complex excitatory-inhibitory interactions. Inhibitory neurons are remarkably diverse in their morphology and physiological properties, as well as in their complex connectivity patterns, targeting not only excitatory neurons but also other interneurons (Blackwell & Geffen, 2017; Isaacson & Scanziani, 2011). The two most common classes of GABAergic neurons are the parvalbumin- (PV) and somatostatin- (SOM) positive interneurons. PVs predominantly target the cell bodies of excitatory pyramidal neurons (Wang, Gupta, Toledo-Rodriguez, Wu, & Markram, 2002), whereas the majority of SOMs target the distal dendrites of excitatory pyramidal neurons (Figure 8(a); Ma, Hu, Berrebi, Mathers, & Agmon, 2006). Because PV and SOM interneurons have been shown to contribute to tone frequency representation and behavioral selectivity in the AC (Blackwell & Geffen, 2017), and because cortical SSA is thought to emerge from a combination of thalamocortical depression and intracortical inhibitory–excitatory circuit effects (Nelken, 2014), the participation of interneurons in deviance detection has recently attracted attention (Chen et al., 2015; Natan et al., 2015, 2017).

Interneurons exert inhibitory-mediated amplification effects on excitatory pyramidal neurons. (a) Schematic diagram showing only three common elements of the otherwise intricate cortical microcircuitry. PV-positive interneurons mostly inhibit perisomatic regions of pyramidal neurons, whereas SOM-positive interneurons mainly target the distal dendrites. In addition, both types of interneurons inhibit each other while receiving recurrent excitation from pyramidal neurons. Inhibition exerted by both types of interneurons contributes to the amplification of the deviance-detection signal, but in different manners. (b) Optogenetic photosuppression of PV-mediated inhibition leads to a nonspecific increase of the neuronal response, unveiling its gain-control action. (c) Optogenetic photosuppression of SOM-mediated inhibition increases the standard-evoked response, revealing a differential effect on repetition suppression.

In an optogenetic preparation in which a multichannel probe crossed all cortical layers of mouse A1 perpendicularly to record neuronal responses (Natan et al., 2015, 2017), selective photosuppression of either PVs or SOMs revealed that both interneuron types amplify deviance detection, but in distinct manners. On one hand, when PV interneurons were deactivated, putative excitatory responses to both deviant and standard sounds increased equally. Because PV interneurons inhibit perisomatic regions of pyramidal neurons, this general suppression of excitatory responses, regardless of stimulus history, amplifies SSA levels in a gain-control manner (Natan et al., 2015), which resembles the gain control of subcortical SSA exerted mainly through GABAergic receptors (Figure 8(b); Ayala & Malmierca, 2018; Duque et al., 2014; Pérez-González et al., 2012). Furthermore, although it was also present in the supragranular and infragranular layers, the strongest effect of PVs on SSA was recorded in the granular layer (layer IV), where thalamocortical inputs arrive (Figure 4; Natan et al., 2015). This is consistent with the relative distribution of PV interneurons in the AC and suggests a continuity of the gain-control mechanism of deviance detection across all levels of the auditory hierarchy.

On the other hand, when SOM interneurons were deactivated, differential effects were observed. Only the excitatory response to repetitive stimuli increased, while deviant-evoked responses remained unaffected, resulting in a decrease in SSA levels (Figure 8(c)). Whereas PV-mediated inhibition was constant throughout the tone train, SOM-mediated inhibition increased with repeated presentations of the standard (Natan et al., 2015, 2017). Furthermore, deactivating SOM interneurons after several repetitions of the standard generated disinhibitory effects, which did not occur when PV interneurons were photosuppressed (Natan et al., 2017). Therefore, these data suggest that SOM interneurons modulate repetition suppression in excitatory neurons of A1 by exerting inhibition and short-term plasticity at their distal dendrites (Figure 8(c)). In fact, the strength of SOM-mediated inhibition on excitatory responses correlates with the magnitude of neuronal deviance detection (Natan et al., 2017). Despite the great differences between the auditory and visual systems, an analogous preparation in the mouse primary visual cortex is worth mentioning (Hamm & Yuste, 2016). In that study, pharmacogenetic silencing of SOM interneurons yielded similar reductions in deviance detection, suggesting that their role is preserved across sensory modalities. However, the use of the many-standards control in that study revealed that the reduction mainly affected the prediction error component, leaving repetition suppression largely intact (Hamm & Yuste, 2016). This apparent discrepancy could be addressed by including many-standards or cascade controls in future SSA studies in the AC.
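The contrast between PV-mediated gain control and SOM-mediated, history-dependent inhibition can be captured in a deliberately simple toy model. The sketch below is a hypothetical illustration loosely following the description above, not the model of the cited studies: PV inhibition is a constant subtraction applied to every tone, SOM inhibition grows with the number of preceding repetitions of the same tone (`som_tau` sets how fast), and a weaker feedforward drive to the standard stands in for thalamocortical adaptation. All parameter values are made up.

```python
import math


def ssa_index(deviant: float, standard: float) -> float:
    """Per-frequency SSA index, (d - s) / (d + s)."""
    return (deviant - standard) / (deviant + standard)


def excitatory_response(drive: float, repetitions: int, pv_on: bool = True,
                        som_on: bool = True, pv_strength: float = 2.0,
                        som_max: float = 2.0, som_tau: float = 3.0) -> float:
    """Toy pyramidal-cell response after PV and SOM inhibition (hypothetical parameters).

    PV inhibition is constant, regardless of stimulus history.
    SOM inhibition builds up with the number of preceding repetitions of the tone.
    """
    pv = pv_strength if pv_on else 0.0
    som = som_max * (1.0 - math.exp(-repetitions / som_tau)) if som_on else 0.0
    return max(drive - pv - som, 0.0)


def oddball_pair(pv_on: bool = True, som_on: bool = True) -> tuple[float, float]:
    """Responses to a deviant (no prior repetitions) and a late standard (10 repetitions)."""
    deviant = excitatory_response(10.0, repetitions=0, pv_on=pv_on, som_on=som_on)
    # Weaker drive to the standard stands in for feedforward (thalamocortical) adaptation.
    standard = excitatory_response(7.0, repetitions=10, pv_on=pv_on, som_on=som_on)
    return deviant, standard


for label, pv_on, som_on in [("control", True, True),
                             ("PV silenced", False, True),
                             ("SOM silenced", True, False)]:
    d, s = oddball_pair(pv_on, som_on)
    print(f"{label:12s} deviant={d:.2f} standard={s:.2f} SI={ssa_index(d, s):.3f}")
```

In this toy, silencing PV raises both responses by the same amount (a gain-control effect on the index), whereas silencing SOM raises only the standard response; both lower the SSA index. The model says nothing about whether the SOM effect acts on repetition suppression or on prediction error, which is precisely the question the control sequences would address.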

Moreover, both PV and SOM interneurons exhibited SSA themselves (Natan et al., 2015), a finding corroborated by precise whole-cell recordings in layer 2/3 of mouse A1 (Chen et al., 2015). SSA was especially rapid and pronounced in SOM interneurons, whereas in PV interneurons the response decreased more gradually (Chen et al., 2015; Natan et al., 2015). It is somewhat surprising that circuit elements such as PV or SOM interneurons are themselves repetition-sensitive while still capable of modulating deviance detection in excitatory neurons. Nevertheless, given that excitatory and inhibitory neurons form tight recurrent networks in the AC, it is possible to hypothesize that adapting interneurons amplify deviance detection in excitatory neurons through differential postsynaptic integration by those excitatory neurons (Natan et al., 2015). Consequently, GABAergic interneurons in the AC seem to play a prominent role in regulating cortical deviance detection, adding yet another layer to the consecutive GABA-mediated gain-control mechanisms already present in subcortical deviance detection (Ayala & Malmierca, 2018; Duque et al., 2014; Pérez-González et al., 2012).

Linking Cortical SSA and MMN: In the Right Place at the Right Time

Deviance-detection activity and prediction error signals are generated throughout the AC, as consistently confirmed by copious evidence from ERP studies analyzing middle-latency responses (MLRs) and long-latency responses (MMN) in human participants, as well as from cellular recordings (SSA) in animal models (Grimm et al., 2016). When SSA was first discovered in A1 (Ulanovsky et al., 2003), there were two initial concerns regarding its link with MMN: its temporal development and its anatomical location. Cortical SSA emerged too early compared with the long latencies observed in MMN, and A1 did not fit well with the topography of MMN (Figure 2(b)), whose sources are usually located within the secondary AC in humans (Alho, 1995), cats (Pincze, Lakatos, Rajkai, Ulbert, & Karmos, 2001), and rats (Shiramatsu, Kanzaki, & Takahashi, 2013).

However, the recent confirmation of more robust and enduring SSA (Nieto-Diego & Malmierca, 2016), as well as larger prediction error (Parras et al., 2017), in cortical fields beyond A1 better accounts for the generation of MMN. Although some deviance detection has been identified in the late component of the response of A1 neurons (Chen et al., 2015; Nieto-Diego & Malmierca, 2016), this late component is stronger overall and lasts longer (over 200 ms after stimulus onset) in nonlemniscal AC fields (Figure 7(a), (b)), which also fit better anatomically with the sources of MMN (Nieto-Diego & Malmierca, 2016). To put it simply, nonlemniscal cortical SSA and MMN happen at the same time and place, further indicating that both are manifestations of the same physiological event.

Furthermore, LFPs were recorded simultaneously with multiunit activity to provide an intermediate measure between cellular SSA and scalp-recorded MMN (Nieto-Diego & Malmierca, 2016; Parras et al., 2017). The difference wave extracted from the LFPs correlated in time and strength with the deviance detection (Nieto-Diego & Malmierca, 2016) and prediction error (Parras et al., 2017) observed in the multiunit recordings, confirming greater levels and longer persistence of both in nonlemniscal fields. These difference waves showed the same morphology in all cortical fields, with a fast negative deflection (Nd) followed by a positive one (Pd; Figure 7(c)). On one hand, the Nd occurred earlier and tended to be larger in lemniscal fields than in nonlemniscal ones, suggesting a lemniscal origin. The time course of this early deflection suggests that SSA in the lemniscal AC could be related to the modulations of the scalp-recorded MLRs (Figure 2(a)), which correspond to the first response of the primary AC to a deviant event and occur before the MMN (Grimm et al., 2016). On the other hand, the Pd peaked homogeneously across the AC, so its generation likely hinges on intracortical processing and reciprocal interaction between lemniscal and nonlemniscal fields, further suggesting a hierarchical processing of deviance detection and a bottom-up propagation of prediction error. Most importantly, the Pd tended to peak between 60 and 80 ms (Figure 7(c)), well within the range of MMN-like potentials in the rat (50–100 ms; Harms et al., 2014; Harms, Michie, & Näätänen, 2016; Jung et al., 2013; Shiramatsu et al., 2013). This synchronicity overcomes the discrepancies in the time course and anatomical source of SSA and MMN, strengthening the case for cortical SSA as the neuronal substrate of MMN (Nieto-Diego & Malmierca, 2016).
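The extraction of Nd and Pd latencies from a difference wave can be sketched in a few lines. The example below uses synthetic, hypothetical LFP traces built from Gaussian deflections (the latencies of 30 ms and 70 ms are arbitrary choices for illustration, not data), subtracts the standard-evoked from the deviant-evoked trace, and searches for the most negative and most positive points in two assumed analysis windows. Real recordings would additionally need baseline correction and averaging across trials.

```python
import math


def gaussian(t_ms: float, peak_ms: float, width_ms: float, amplitude: float) -> float:
    """Gaussian-shaped deflection used to build synthetic waveforms."""
    return amplitude * math.exp(-0.5 * ((t_ms - peak_ms) / width_ms) ** 2)


def difference_wave(deviant: list[float], standard: list[float]) -> list[float]:
    """Deviant-evoked minus standard-evoked trace, sample by sample."""
    if len(deviant) != len(standard):
        raise ValueError("traces must have the same length")
    return [d - s for d, s in zip(deviant, standard)]


def peak_latency(times: list[float], wave: list[float], start_ms: float,
                 end_ms: float, polarity: int) -> float:
    """Latency of the most negative (polarity=-1) or most positive (+1) sample in a window."""
    window = [(t, w) for t, w in zip(times, wave) if start_ms <= t <= end_ms]
    if not window:
        raise ValueError("no samples inside the analysis window")
    t_peak, _ = max(window, key=lambda tw: polarity * tw[1])
    return t_peak


# Synthetic traces sampled every 1 ms from 0 to 200 ms (arbitrary units).
times = [float(t) for t in range(201)]
standard = [gaussian(t, 20, 8, -30) + gaussian(t, 45, 15, 10) for t in times]
# The deviant trace adds an early negativity and a later positivity.
deviant = [s + gaussian(t, 30, 6, -8) + gaussian(t, 70, 12, 5)
           for t, s in zip(times, standard)]

diff = difference_wave(deviant, standard)
nd_latency = peak_latency(times, diff, 0, 50, polarity=-1)
pd_latency = peak_latency(times, diff, 50, 150, polarity=+1)
print(f"Nd at {nd_latency:.0f} ms, Pd at {pd_latency:.0f} ms")  # Nd at 30 ms, Pd at 70 ms
```

A Pd latency obtained this way could then be checked against the 50–100 ms range reported for MMN-like potentials in the rat.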

Further Linking SSA and MMN: The Key Role of N-Methyl-D-Aspartate Receptors

An additional connection between SSA and MMN could be established by analyzing whether neuropharmacological manipulations of the different neurotransmitter systems yield analogous effects in both, as would be the case if they are manifestations of the same physiological mechanism of deviance detection. However, the clearest and most robust neuropharmacological effect identified to date in MMN is exerted through N-methyl-D-aspartate (NMDA) receptors, whose blockade impairs MMN generation.

A first attempt using systemic subcutaneous injections of the noncompetitive NMDA-receptor antagonist MK-801 failed to find the expected impairment in awake rats (Farley et al., 2010). In multiunit recordings from A1, the drug evoked a strong suppressive effect proportionally across the whole response to both standard and deviant sounds, without significantly affecting the magnitude and dynamics of SSA. The suppression of neuronal excitability increased with the dose of MK-801, but SSA remained intact. The insensitivity of A1 to the systemic blockade was also confirmed by LFPs, leading the authors to argue that SSA in A1 was independent of NMDA receptors (Farley et al., 2010). Accordingly, SSA in A1 might account for the generation of the early mismatch signals detected within the MLRs (Grimm et al., 2016), but not for the MMN (Farley et al., 2010), which would then have to originate in the nonlemniscal AC (Maess, Jacobsen, Schröger, & Friederici, 2007; Nieto-Diego & Malmierca, 2016; Opitz, Schröger, & Von Cramon, 2005).

Recently, however, an elegant study using local applications of NMDA-receptor antagonists showed different effects. In layer 2/3 of the mouse A1 (Chen et al., 2015), intracellular injections of MK-801 did not change the SSA of PV interneurons but drastically reduced the responses to deviant stimuli in excitatory pyramidal neurons. Rare sounds still evoked a larger response than standard stimuli, but unlike with systemic applications, overall SSA in pyramidal neurons was significantly reduced (Figure 6(e)), especially in the late component (Chen et al., 2015). Moreover, the SSA reduction entailed the disappearance of the prediction error component (Chen et al., 2015). The discrepancies between the two experimental approaches could arise because systemic injections (Farley et al., 2010) block NMDA receptors in all brain regions, rather than in a single neuron at a time (Chen et al., 2015). Intracortical processing might be essential to the emergence of the late component of the deviant-evoked response, as well as to the generation of prediction error (Chen et al., 2015; Nieto-Diego & Malmierca, 2016; Parras et al., 2017). Therefore, intracortical processing via NMDA-mediated dendritic integration is likely to trigger the long-lasting late component of deviance detection, as well as prediction error, in pyramidal neurons of A1. Given its demonstrated dependence on NMDA receptors, SSA shares yet another feature with MMN, strengthening the evidence that SSA and MMN are two different scales for measuring the same physiological mechanism of deviance detection.

Closing Remarks: Some Insights Attained Through a Predictive Coding Perspective

SSA is the quantified index of change in the firing rate of a neuron in response to a rare tone (deviant) randomly embedded in a sequence of another, common tone (standard), at varying presentation probabilities (usually 10%–30% for the deviant vs. 90%–70% for the standard). This definition of SSA in terms of probabilities is rather similar to the classic conceptualization of MMN recorded from the human scalp (Näätänen et al., 1978). After four decades of research, however, the concept of MMN has expanded far beyond this narrow definition to comprise any discriminable change in the organization of ongoing stimulation, somewhat blurring its theoretical relation with the much simpler SSA. Throughout this review, we have tried to strengthen this connection by reinterpreting the available evidence on SSA from a predictive coding standpoint. That integrative endeavor has provided several interesting insights that may also help enrich the understanding of MMN generation.
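The probability-based definition above translates into simple indices. The sketch below assumes the formulation most often used in the SSA literature: two tones, f1 and f2, are presented in two complementary oddball blocks so that each serves once as standard and once as deviant; a frequency-specific index SI(f) = (d − s)/(d + s) is computed per tone, and a common index (CSI) pools both tones. The firing rates are hypothetical.

```python
def frequency_ssa_index(deviant: float, standard: float) -> float:
    """SI(f) = (d(f) - s(f)) / (d(f) + s(f)) for a single tone."""
    total = deviant + standard
    if total <= 0:
        raise ValueError("responses must sum to a positive value")
    return (deviant - standard) / total


def common_ssa_index(d1: float, d2: float, s1: float, s2: float) -> float:
    """CSI pooling both tones of the oddball pair; ranges from -1 to 1 for non-negative rates."""
    total = d1 + d2 + s1 + s2
    if total <= 0:
        raise ValueError("responses must sum to a positive value")
    return (d1 + d2 - s1 - s2) / total


# Hypothetical mean responses (spikes per stimulus) from two complementary blocks:
# block 1: f1 standard (90%), f2 deviant (10%); block 2: roles reversed.
d_f1, s_f1 = 8.0, 4.0
d_f2, s_f2 = 6.0, 5.0

print(f"SI(f1) = {frequency_ssa_index(d_f1, s_f1):.3f}")  # 0.333
print(f"SI(f2) = {frequency_ssa_index(d_f2, s_f2):.3f}")  # 0.091
print(f"CSI    = {common_ssa_index(d_f1, d_f2, s_f1, s_f2):.3f}")  # 0.217
```

Comparing each tone with itself across blocks is what makes the index stimulus-specific: a neuron that simply prefers one frequency would otherwise appear to respond more to the "deviant".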

Definition of Main Terms in the Predictive Coding Framework.

Nevertheless, SSA and MMN are only theoretically comparable in the context of an oddball paradigm, as the definition of SSA (Ulanovsky et al., 2003) describes the effects of representing a stimulus in the system and establishing the simple prediction that the following stimulus will be similar to the one previously encoded, thereby suppressing the response to it. Thus, SSA by itself cannot account for MMN signals generated in contexts where this repetition rule is not at play. SSA is an index of the violation of that particular representation, not a physiological mechanism that can be extrapolated to other stimulation contexts. The physiological mechanism is deviance detection as defined by predictive coding.

Prediction error and repetition suppression effects are confounded in the deviance-detection signal elicited by the oddball sequence. These components can be disentangled by using a control sequence that does not feature the standard-repetition rule while generating a state of refractoriness in the system similar to that of the oddball. The proposed many-standards and cascade controls are applicable in SSA research, making it possible to explain SSA empirically in terms of predictive coding.
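The decomposition can be written as a simple identity. Let DEV, STD, and CTR be a neuron's responses to the same tone presented as deviant, as standard, and in the control sequence. Assuming, for illustration, that each response is normalized by the Euclidean norm of the vector (DEV, STD, CTR), an index of overall mismatch, iMM = DEV - STD, splits exactly into a prediction error index, iPE = DEV - CTR, and a repetition suppression index, iRS = CTR - STD. The index names, the normalization, and the helper functions are assumptions of this sketch rather than a prescribed procedure.

```python
import math
from dataclasses import dataclass


@dataclass
class MismatchIndices:
    imm: float  # overall deviance detection (mismatch): DEV - STD
    ipe: float  # prediction error component: DEV - CTR
    irs: float  # repetition suppression component: CTR - STD

    def pe_share(self) -> float:
        """Proportion of the mismatch accounted for by prediction error."""
        if self.imm == 0:
            raise ValueError("share undefined when there is no mismatch")
        return self.ipe / self.imm


def decompose(dev: float, std: float, ctr: float) -> MismatchIndices:
    """Normalize the three responses by their Euclidean norm, then take differences."""
    norm = math.sqrt(dev**2 + std**2 + ctr**2)
    if norm == 0:
        raise ValueError("all responses are zero")
    d, s, c = dev / norm, std / norm, ctr / norm
    return MismatchIndices(imm=d - s, ipe=d - c, irs=c - s)


# Hypothetical mean spike counts, chosen so that the norm is exactly 7
r = decompose(dev=6.0, std=2.0, ctr=3.0)
print(r.imm, r.ipe, r.irs)  # approximately 4/7, 3/7, 1/7 (iMM = iPE + iRS)
print(r.pe_share())         # about 0.75
```

Under this identity, a larger pe_share means that more of a neuron's deviance detection survives the control for refractoriness, which corresponds to the proportion that the studies reviewed here report as increasing from lemniscal to nonlemniscal divisions and from the midbrain to the AC.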

The application of control sequences has unveiled a predictive processing hierarchy in the auditory system whose roots reach as deep as the cortices of the IC. The proportion of deviance detection accounted for by prediction error increases along two axes of the auditory system: from lemniscal to nonlemniscal divisions, and from the midbrain to the AC. Therefore, predictive activity is not exclusively cortical.

Within each processing station along the auditory pathway, the high heterogeneity in how single neurons respond to deviant stimulation hints at the great importance of local microcircuitry in predictive processing. Together with the fact that deactivating the AC does not eliminate deviance detection, it follows that predictive activity must emerge, to some extent, de novo at every level of the auditory pathway, instead of merely being passed down from the cortex.

Neuromodulation exerts a tangible influence on the reciprocal interaction between processing levels that leads to deviance detection. The attenuation of repetition suppression that ACh exerts mainly via M1 muscarinic receptors is one example among several.

Inhibitory activity is key to efficient deviance detection: it implements gain-control mechanisms on predictive activity throughout the auditory pathway and facilitates repetition suppression.

Summary

After 15 years of thorough research since SSA was first discovered in the auditory cortex, a growing body of evidence indicates that SSA is the neuronal correlate of MMN. Both cortical SSA and MMN have been shown to occur over a similar time course and in similar cortical locations, and both are affected by the manipulation of NMDA receptors. Therefore, SSA and MMN must be, respectively, the microscopic and macroscopic manifestations of the same physiological mechanism of deviance detection. Consequently, adopting a common conceptual framework and shared nomenclature would be advisable for explaining both dimensions of the same perceptual phenomenon. The predictive coding framework provides this needed integration by postulating a physiological generative mechanism of hierarchical inference founded on plastic changes in synaptic connectivity. That generative mechanism is not exclusively cortical, as originally thought, inasmuch as new experimental approaches to SSA inspired by MMN research have unveiled the progressive emergence of prediction error signals starting in the IC and auditory thalamus. Many recent studies have highlighted the contribution of subcortical nuclei and the nonlemniscal pathway to the generation of SSA and prediction error. The emergence of prediction errors in a hierarchically organized network rooted in the IC extends the notion of predictive coding to subcortical brain structures and challenges the prevailing corticocentric view of complex auditory processing and perceptual organization.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work has been funded by Spanish MINECO (SAF2016-75803-P). GVC held a fellowship from the Spanish MICINN (BES-2017-080030).