Abstract

There is limited information on efficacy and durability of second-line antiretroviral therapy (2NL) beyond 12 months in resource-limited settings. A total of 73 patients were enrolled into a prospective 2NL observational cohort in Nigeria. Second-line antiretroviral therapy consisted of lopinavir/ritonavir plus nucleoside reverse transcriptase inhibitors. Time on 2NL ranged from 15 to 31 months. Genotypes were retrospectively done and not available to guide second-line regimen choice. At enrollment, median CD4 count was 121 cells/mm3, and median time on first-line antiretroviral therapy (1SL) was 24 months. At 6 to 9 months on 2NL, 72.6% (intention to treat [ITT]) and 88.3% (on treatment [OT]) had an undetectable viral load (UDVL). At 12 months, 65.8% (ITT) and 90.57% (OT) had UDVL. At >12 to 24 months and at >24 months, 57.5% (ITT) and 91.3% (OT) had UDVL. No statistically significant association was observed between CD4 at 2NL start, sex, genotypic sensitivity score of 2NL, or tenofovir (TDF) use in 1SL and viral suppression. Two patients developed major protease inhibitor mutations while on 2NL. We observed a high degree of viral suppression at 12 months and little loss of viral suppression thereafter.

Introduction

There are multiple antiretroviral (ARV) treatment programs in Nigeria, with more than 300 000 patients on therapy. Nigerian national treatment guidelines 1 are largely based on World Health Organization (WHO) guidelines, 2 with the choice of drugs consisting of those made available by donor agencies and implementing partners.

Antiretroviral treatment programs in resource-limited settings do not routinely monitor viral load (VL) 3 but rather use VL to confirm a strong suspicion of clinical or immunologic failure. Immunological and clinical treatment failure criteria as defined in the WHO guidelines are poorly predictive of virological failure of first-line regimens. In the absence of VL testing, the use of these criteria may result in delayed detection of treatment failure. 4–7 Given that immunological and clinical decline occur later than viral rebound, there can be considerable delay in identifying a failing regimen. 5,8 Consequently, most patients failing a first-line regimen start second-line regimens after accumulating drug resistance mutations (DRMs). 8,9 There are growing data on the efficacy of guideline-recommended second-line therapy once multiple resistance mutations have occurred, 10–12 but there remain limited data on the ability of these regimens to maintain long-term virologic suppression. 11

The objective of the study was to describe the proportion of patients on the current recommended and accessible second-line regimens that were able to achieve and maintain viral suppression at and beyond 12 months of therapy. We also described the protease inhibitor (PI) resistance mutations that developed in patients who went on to fail their second regimen. Further defining the efficacy and safety of the current recommended second-line regimens may help to qualify the existing needs for expanded ARV drug armamentarium. These studies may also allow for a better understanding of the likelihood for effective salvage therapy for those patients failing second-line therapy in resource-limited settings.

Methods

This study recruited patients older than 18 years into a prospective observational cohort between January 2008 and May 2009. Patients were followed prospectively every 3 months for 12 months from the time of enrollment. The study was conducted in Nigeria at 2 sites supported by the University of Maryland Institute of Human Virology’s ARV treatment programs: the University of Abuja Teaching Hospital, Gwagwalada, and the Ecumenical Community of West Africa (ECWA) Evangel Hospital, Jos. The University of Abuja Teaching Hospital is a tertiary referral center and the ECWA Evangel Hospital is a secondary private, not-for-profit medical center. Both house PEPFAR-funded HIV treatment programs that provide care and antiretroviral therapy at no cost to patients. Institutional review board approval was obtained from the University of Maryland School of Medicine as well as the University of Abuja Teaching Hospital and the ECWA Evangel Hospital in Jos. The study was supported through a National Institutes of Health grant #5 U01 AI038858.

Patients who had been assessed by their clinic providers to have clinical, immunological, or virologic failure on their first-line ARV treatment regimen and who were to be switched to a second-line regimen were screened for the study. Based on WHO guidelines, clinical and/or immunologic failure was confirmed as true viral failure by a detectable VL >1000 copies/mL. Patients additionally had to have documented ART adherence >95% in the 3 months prior to screening. Informed consent was obtained from all participants either in English or in the local language per the patient’s preference. Basic demographic data, ARV treatment history, and blood samples for baseline genotypes were collected. In keeping with WHO 2006 ARV treatment recommendations, 2 second-line regimens contained lopinavir/ritonavir (LPV/r) in addition to emtricitabine (FTC) or lamivudine (3TC) as well as tenofovir (TDF) and/or zidovudine (ZDV). Patients failing a first-line thymidine analogue containing regimen were placed on a TDF second-line regimen, whereas patients failing a first-line TDF containing regimen were placed on a ZDV containing second-line regimen at the time of enrollment. At each 3-month interval visit, a history and standard of care blood tests including complete blood count, alanine aminotransferase, serum creatinine, and CD4 count were obtained as clinically indicated. At the 6th and 12th month visit, blood was also obtained for VL analysis and stored for future genotype assay. If a patient failed to receive a VL at the 6-month time period, this was obtained at the 9-month time period. Fifteen months after the study closed to enrollment (August 2010), a cross-sectional, end-of-study visit was conducted. Plasma samples for VL and genotypic resistance testing analyses were obtained, and an adherence questionnaire was administered to those patients still alive and in care.

Plasma was stored at −80°C until the time of VL and genotypic resistance testing assays. All the VL assays were processed at a single referral laboratory. The Amplicor HIV-1 Monitor test kit version 1.5 (Roche, Indianapolis, Indiana) using the standard method (lower limit of detection: 400 copies/mL) was used initially, with a later switch to the automated COBAS AmpliPrep/COBAS TaqMan HIV-1 method, version 2.0 (limit of detection: 20 copies/mL). Genotyping of the pol gene (codons 1-99 of protease and codons 1-257 of reverse transcriptase) was performed on all samples with a VL > 1000 copies/mL using an in-house ABI3130xl-4 capillary array platform. Analysis for resistance mutations was done using the IAS-USA 2010 DRM list.

Data were first investigated using univariate analysis to describe the frequency and distribution of the outcome, primary exposure, covariates, and missing data. The crude association between CD4 count at baseline and viral suppression at 12 and 24 months was preliminarily explored using random effects models to account for clustered data. Variables with a crude association with the outcome or exposure at α = .20 were investigated in multivariable regression models. Variables were retained if they were significantly associated with the odds of viral suppression or if they changed the primary exposure β estimate by more than 20%. Additionally, variables known from the literature to be confounders were included in the adjusted models even if they did not demonstrate a confounding effect in this data set. Models were created by adding 1 variable at a time and assessing the changes in the β estimates. Goodness of fit was assessed using the Akaike information criterion to compare nested models. Time-to-event analysis was performed using the Kaplan-Meier method, and Wilcoxon rank-sum tests were used to assess significance. Kaplan-Meier curves were generated to estimate the mean time of VL failure at each time point. Viral failure was defined as detectable viral RNA above the lower limit of detection of the assay used. Intention-to-treat (ITT) analysis was conducted with all missing VL outcomes treated as failures. On-treatment (OT) analysis utilized only available VL outcomes. All analyses were conducted using SAS 9.2 (Cary, North Carolina).
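The distinction between the ITT and OT analyses described above can be illustrated with a minimal Python sketch. It is not the SAS code used in the study. The assay labels, function names, and toy visit data are hypothetical, and treating a value below the assay's limit of detection as suppressed is an assumption about how "detectable" was operationalized.

```python
from typing import Optional, Sequence

# Lower limits of detection (copies/mL) for the two assays named in the Methods.
# The dictionary keys are hypothetical labels chosen for this sketch.
ASSAY_LLD = {"amplicor_1.5": 400, "cobas_taqman_2.0": 20}


def is_suppressed(viral_load: Optional[float], assay: str) -> Optional[bool]:
    """Return None if no viral load was obtained, else True when below the assay limit."""
    if viral_load is None:
        return None
    return viral_load < ASSAY_LLD[assay]


def itt_rate(outcomes: Sequence[Optional[bool]]) -> float:
    """Intention to treat: missing outcomes count as failures; denominator is everyone."""
    return sum(1 for o in outcomes if o is True) / len(outcomes)


def ot_rate(outcomes: Sequence[Optional[bool]]) -> float:
    """On treatment: only patients with an available viral load are counted."""
    observed = [o for o in outcomes if o is not None]
    return sum(1 for o in observed if o) / len(observed)


# Hypothetical toy visit data (NOT study data): (viral load, assay) per patient.
toy_visits = [
    (50, "amplicor_1.5"),      # below 400 copies/mL, counted as suppressed
    (1200, "amplicor_1.5"),    # detectable, counted as failure
    (None, "amplicor_1.5"),    # missing: failure under ITT, excluded under OT
    (15, "cobas_taqman_2.0"),  # below 20 copies/mL, counted as suppressed
    (50, "cobas_taqman_2.0"),  # detectable on the more sensitive assay, failure
]
toy_outcomes = [is_suppressed(vl, assay) for vl, assay in toy_visits]
print(f"ITT: {itt_rate(toy_outcomes):.1%}, OT: {ot_rate(toy_outcomes):.1%}")
```

In this toy example the same viral load of 50 copies/mL is classified differently depending on the assay, which is one reason an assay switch during follow-up can shift apparent suppression rates.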

Results

Between January 2008 and May 2009, a total of 82 patients were screened and 73 were enrolled. Of the 9 screen failures, 6 had undetectable VLs (UDVLs) at screening and 3 opted not to enroll for personal reasons. In all, 67% of patients were female, and the median age was 35 years (interquartile range [IQR] 30-41). The median CD4 count at the start of second-line therapy was 121 cells/mm3 (Table 1). In all, 26 patients used TDF as part of their first-line regimen, while 45 patients had any of the following: ZDV only, stavudine (d4T) only, d4T then ZDV (or vice versa), and either ZDV or d4T then TDF. Previous ART regimens could not be fully confirmed for 2 patients. Median time on first-line regimen was 24 months (IQR 16-32 months). All patients were on a 3- or 4-drug second-line regimen; of which, all contained LPV/r and FTC or 3TC with the addition of either TDF or ZDV or both. No patients received d4T as part of their second-line regimen. Genotypic sensitivity scores (GSSs) of the second-line regimens are listed in Table 1.

Baseline Characteristics.

Abbreviations: ART, antiretroviral therapy; d4T, stavudine; IQR, interquartile range; TDF, tenofovir; ZDV, zidovudine.

There were 5 confirmed deaths, 6 patients were lost to follow-up per study criteria, and 5 additional patients prematurely discontinued from the study (Table 2). No participants reached a dose-limiting adverse drug effect or stopped therapy because of adverse drug effects. At 6 months on second-line therapy, there were 6 failures: 72.6% (ITT; n = 68; note 1) and 88.3% (OT; n = 45) of patients had a UDVL. At 12 months, there was 1 additional failure; 65.8% (ITT; n = 68; note 1) and 90.57% (OT; n = 36) had a UDVL. At both the >12- to 24-month period and the >24-month period, there were 4 failures; 57.5% (ITT; n = 73) and 91.3% (OT; n = 46) had UDVLs (Table 2). Patients suppressed at 6 months had 1.96 times the odds of being suppressed at 24 months compared to those who were not suppressed at 6 months (95% confidence interval 1.66-2.33).

Outcomes.

Abbreviation: LTFU, loss to follow-up.

Plasma samples were collected from patients at the time of enrollment and were stored. Genotypic resistance testing was obtained for 31 of these samples. These baseline genotypic resistance testing results were not available to guide second-line regimen choice. Of the 31 participants with baseline genotypic resistance testing, 2 had no detectable nucleoside reverse transcriptase inhibitor (NRTI), nonnucleoside reverse transcriptase inhibitor (NNRTI), or PI mutations. The remainder had 1 to 6 NRTI mutations. The median number of NRTI mutations was 3. Excluding the 2 aforementioned patients with wild-type virus, all had NNRTI mutations at baseline. There were no major PI resistance mutations at baseline. The genotypic sensitivity score of the second-line regimen was calculated using the Stanford HIV database methodology. 13 A GSS of 1 corresponds to 1 active drug in the second-line regimen; a score of 2 corresponds to 2 active drugs in the regimen and so on. In all, 22 (71%) patients had a GSS of 1, while 5 (16.1%) had a GSS of 2 and 4 (12.9%) had a GSS of 3. To be included in our study, all patients regardless of GSS had to be virologically failing (confirmed by VL testing). Patients with a GSS of 3 could represent failing patients with limited cross-resistance or completely nonadherent patients. Of the 31 patients with available baseline genotypic resistance testing data, 7 had a TDF-based (no thymidine analogue exposure) first-line regimen. Of these 7 patients, 5 had a GSS of 2 or higher, compared with only 4 of the 24 patients with a thymidine analogue containing first-line regimen.
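The GSS definition above (1 point per active drug in the regimen) and the reported GSS distribution can be reproduced with a short Python sketch. This is a simplified whole-drug count as described in the text, not the Stanford HIV database algorithm itself; the function name and the example susceptibility flags are hypothetical.

```python
from typing import Dict, List


def gss(regimen: List[str], active: Dict[str, bool]) -> int:
    """Count the drugs in the regimen that are flagged as active (simplified GSS)."""
    return sum(1 for drug in regimen if active.get(drug, False))


# Hypothetical example (NOT a study patient): only the PI is predicted active.
example_regimen = ["LPV/r", "3TC", "TDF"]
example_active = {"LPV/r": True, "3TC": False, "TDF": False}
example_gss = gss(example_regimen, example_active)

# GSS distribution reported in the Results for the 31 genotyped patients.
gss_counts = {1: 22, 2: 5, 3: 4}
total_genotyped = sum(gss_counts.values())
gss_percent = {
    score: round(100 * n / total_genotyped, 1) for score, n in gss_counts.items()
}
print(example_gss, total_genotyped, gss_percent)
```

The percentages computed this way agree with the 71%, 16.1%, and 12.9% quoted in the text.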

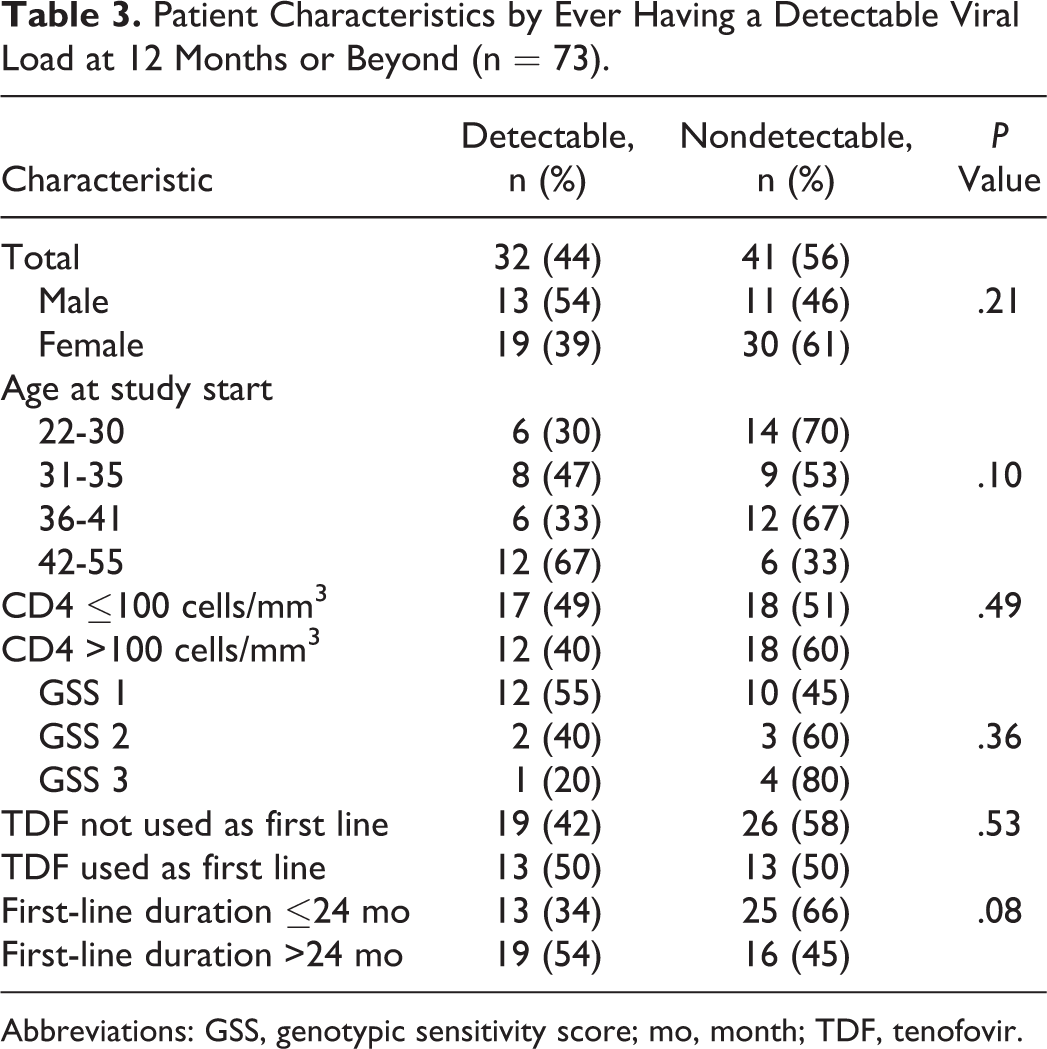

The association between time on first-line therapy and virologic suppression at or beyond 12 months approached but did not achieve statistical significance (Table 3). In addition, no statistically significant association was found between GSS at the start of second-line regimen, CD4 count at start of second-line treatment, or the use of a TDF first-line regimen and virologic suppression at 12 months or thereafter. Due to the small sample size, we additionally combined patients with a GSS of 2 or 3 into 1 group and compared them to patients with a GSS of 1 and found no statistically significant difference in time to failure (P = .52, data not shown).

Patient Characteristics by Ever Having a Detectable Viral Load at 12 Months or Beyond (n = 73).

Abbreviations: GSS, genotypic sensitivity score; mo, month; TDF, tenofovir.

Ten genotypic resistance patterns from 9 patients with VL > 1000 on second-line therapy were analyzed. Seven of those samples (including 2 from 1 patient) could be genotyped successfully. Two patients developed major protease mutations while on second-line therapy: 1 had an M46I and L76V at 6 months, and the other had an M46I and I54V at 6 months with the addition of I47V at 30 months. The latter patient had already developed multiple NRTI mutations, including a T215F and K219Q, and no additional NRTI mutations developed at 30 months.

At the cross-sectional end-of-study visit, additional adherence data were obtained from 30 patients. Adherence was assessed by pill count and patient interview and categorized as >95% or ≤95%. Twenty-six (86.7%) patients with available adherence data were documented as having >95% adherence. Of the 4 patients with lower adherence, 1 had a detectable VL at the end-of-study visit.

Of note, the 30-month ITT survival curve in Figure 1 shows that a large proportion of patients on second-line therapy may experience failure at some point in time but will go on to become suppressed thereafter as reflected by our ITT and OT suppression rates in Table 2.

The 30-month intention-to-treat (ITT) survival curve.

Discussion

We consider failure to be the inability to attain viral suppression below the level of assay detection at and beyond 12 months on second-line therapy. Although no patient stopped therapy due to an adverse event, the ITT outcomes showed less than 60% of patients to be alive, in care, and suppressed beyond 12 months. Overall, 32 patients were deemed to have failed at or beyond 12 months (16 virologic failures, 6 lost to follow-up, 5 deaths, and 5 prematurely discontinued), representing a 56% (ITT) rate of suppression at or beyond 12 months. Our findings are consistent with other reports. A Thai study describing longer term outcomes (up to 144 weeks/3 years) of patients on second-line therapy demonstrated ITT and OT viral suppression rates (<400 copies) of 65% and 93%, respectively. 14 In a meta-analysis of retrospective second-line outcomes in low- and middle-income countries, 15 the cumulative pooled proportion of adult patients failing therapy was 23.1%, 26.7%, and 38.0% at 12, 24, and 36 months, respectively. In a study conducted in South Africa, Fox et al also describe a 77% viral suppression rate at 1 year on second-line therapy. 16
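The composite ITT figure above, together with the mortality and loss-to-follow-up percentages quoted in the Discussion, follows directly from the reported counts. A minimal arithmetic check in Python is sketched below; the variable and function names are illustrative only.

```python
ENROLLED = 73  # patients enrolled in the cohort

# Failure categories at or beyond 12 months, as reported in the text.
failures = {
    "virologic_failure": 16,
    "lost_to_follow_up": 6,
    "death": 5,
    "premature_discontinuation": 5,
}


def pct(numerator: int, denominator: int) -> float:
    """Percentage rounded to 1 decimal place."""
    return round(100 * numerator / denominator, 1)


total_failures = sum(failures.values())
suppressed = ENROLLED - total_failures

itt_suppression_pct = pct(suppressed, ENROLLED)
mortality_pct = pct(failures["death"], ENROLLED)
ltfu_pct = pct(failures["lost_to_follow_up"], ENROLLED)

print(total_failures, suppressed, itt_suppression_pct, mortality_pct, ltfu_pct)
```

Under these counts, 41 of 73 patients remain alive, in care, and suppressed, which corresponds to the approximately 56% ITT suppression rate stated in the text.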

CD4 count at the time of switch to second-line regimen was not a predictor of failure in our study; however, early death and early loss to follow-up were significant causes of second-line treatment failure in our study patients. We observed 6.8% mortality and an 8.2% loss to follow-up. Of the 5 confirmed deaths within the cohort, 3 patients died prior to their 12-week visit, and all 5 deaths occurred prior to 28 weeks of treatment. The cause of death could be determined for only 1 patient, who died of cryptococcal meningitis. Of those patients who died, the median CD4 count was less than 50 cells/mm3. A South African study reported a comparable 6% mortality and a 9% loss to follow-up at 24 weeks with a median CD4 count of 70 cells/mm3 at the start of second-line therapy for those patients who died. 17 The association between a low CD4 count at start of second-line therapy and early mortality was also reported in a Médecins Sans Frontières study. 18 Regarding the 6 patients who were lost to follow-up per study protocol, we were able to confirm that 5 completely dropped out of care at the study sites. The sixth patient who was lost to follow-up was later found to still be in care at the same facility. These 5 patients were lost to follow-up by their week 12 visit. The mean CD4 count at enrollment for these 5 lost patients was 106 cells/mm3. We suspect these early losses could also represent early patient mortality. 19 As described by other researchers, these results clearly demonstrate the enormous impact that delayed recognition of first-line treatment failure has on subsequent second-line treatment outcomes. 9,17,18,20 Switching late to second-line therapy and only after a patient experiences advanced immune suppression or an AIDS-defining illness will possibly determine second-line therapy outcome regardless of the potency or GSS of the second-line regimen.

Encouragingly, there was a high degree of viral suppression for those who survived beyond 6 months. At >12 to 24 months and at >24 months of therapy, ∼90% of patients who remained on treatment were virologically suppressed. Similar findings were seen in a study of shorter duration. 11 This high OT viral suppression rate occurred despite 69% of patients (for whom genotype data were available) having a GSS of 1. This observation potentially points to the potency of PI monotherapy that has been demonstrated in other studies 17,21,22 or possibly retained activity of TDF despite multiple thymidine analogue mutations (TAMs).

Although 70% of patients who did not show viral suppression at 6 to 9 months had a GSS of 1, we did not observe a statistically significant difference by GSS in viral suppression rate at or beyond 12 months of second-line therapy. Although not statistically significant, in those individuals on second-line therapy at 12 months or greater, a higher GSS was associated with higher rates of virologic suppression, suggesting decreased long-term durability in patients with a GSS of 1. Of the patients who ever had a detectable VL and GSS data at 12 months or beyond, 56% with a GSS of 1 failed compared with 40% with a GSS of 2 and 20% with a GSS of 3. The study may have lacked the power to detect a true difference between GSS groups.

At our study sites, VL monitoring was not used routinely to determine treatment response or failure. Only patients suspected of clinical or immunologic failure according to WHO criteria were screened with VL testing to confirm true virologic failure, possibly excluding virologically failing patients who did not meet these criteria. As more routine use of VL monitoring is adopted and viral failure can be detected well prior to clinical or immunologic failure, it is probable that a higher proportion of patients will start second-line therapy prior to the onset of advanced immune suppression and prior to the accumulation of multiple DRMs. Recent guidelines also recommend the phase-out of d4T and ZDV from first-line therapy. Although our study is not powered to show a difference in second-line treatment failure in patients receiving TDF versus those receiving d4T (or other thymidine analogues), it can be surmised that there would be higher GSS in patients failing a TDF containing regimen than those failing a d4T or ZDV containing regimen, thus enhancing the efficacy of currently available second-line drug regimens. With the increasing adoption of these guidelines, it would be expected that future analyses similar to ours will reveal less second-line treatment failure resulting in mortality in the short term and accumulated drug mutations in the long term.

For those patients who fail to achieve viral suppression on second-line therapy, the evolution of LPV/r-resistant mutations remains an important clinical issue, particularly with regard to future attempts at suppressive treatment. The development of major PI resistance mutations was rare in our study population and was likely the result of the high rate of viral suppression in those patients who remained on treatment as well as the high mutation threshold of LPV/r despite the lack of viral suppression. 23 Of the 8 patients who remained on ART at 6 months and beyond, but failed to achieve viral suppression of <1000 copies/mL at whatever point in time they were sampled, 2 developed major PI mutations. One had an M46I and L76V at 6 months and the other an M46I and I54V at 6 months with the addition of I47V at 30 months. Taken together with observations that suppression at 6 to 12 months is a strong predictor of maintaining suppression beyond 12 months, these findings could help clinicians determine the optimal timing of VL measurements to evaluate efficacy of second-line therapy. It may be prudent to consider patients who did not show viral suppression at 6 to 9 months more likely to experience second-line treatment failure in the longer term and to offer them additional individually directed adherence interventions and intensification of their regimen as ARV drug access allows. A follow-up evaluation at 12 months that reveals unsuppressed virus may be considered a true virologic failure, and continuing second-line therapy in these instances could potentially lead to the development of major protease mutations.

There are limitations to our study. As an observational cohort, patients were not randomized to their initial treatment regimen and confounding by indication cannot be ruled out. As a noninterventional study, while providers’ management is guided by standard operating procedures and National ART guidelines, certain variations exist according to provider preference and style. Also importantly, we preselected patients who documented good adherence, thus results would not be expected to be achieved in patients experiencing ongoing adherence concerns at the time of initiation of second-line therapy. Our small sample size reflects the difficulty of clinically and immunologically identifying failing patients in large HIV clinics without routine use of VL monitoring. Because of the prolonged enrollment time and dwindling resources, a smaller than expected sample size was realized. As a result, the minimum difference the study was able to detect at 80% power was 30% for the analysis by CD4 and 40% for the analysis by GSS and lack of associations should be taken with caution. In a bigger study, GSS might indeed be more significantly predictive of suppression with possibly only a marginal difference between GSS of 2 or 3. Finally, because of the high rate of OT viral suppression, we were not able to determine the rate and timing of PI mutations as planned.

In conclusion, for most patients achieving viral suppression by 6 months on second-line therapy, LPV/r-based regimens are able to maintain durable virologic suppression well beyond 12 months, even in patients with low GSSs. Drug-related toxicities had no discernible impact on long-term durability of the regimen in our study. There was limited accumulation of protease resistance mutations in patients failing therapy, but the first major protease mutations were seen as early as 6 months on a failing second-line regimen.

The long-term efficacy of second-line ART was strongly affected by early mortality occurring within the first 3 months of treatment. This may well be due to advanced HIV disease at the time of switching to second-line therapy and is less likely the result of poor efficacy or toxicity of the regimen. These observations stress the importance of switching a patient to second-line therapy early, prior to immunological deterioration. Without access to routine individual VL monitoring in resource-limited settings, this raises the clinical dilemma of how to recognize early viral failure prior to the onset of immunological or clinical deterioration. Leaving patients on a failing first-line regimen too long prior to switching to second-line therapy, and not the potency of the second-line regimen, may be the determining factor for second-line treatment success. If our observations hold up in larger prospective trials, large investments into expanding drug options for second-line treatment regimens, such as the inclusion of integrase inhibitors, may not result in a significant improvement in second-line outcomes 24 in clinical practice.

Footnotes

Authors’ Note

Underlying research materials (data) are all stored at the Institute of Human Virology, University of Maryland, Baltimore, Maryland, and can be accessed by making a request by e-mail to the corresponding author.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Dr Amoroso had been a consultant to BMS and Gilead and a speaker to MSD. In the past 5 years, Dr Gilliam has received honoraria for speaking or advisory boards from Abbott, Bristol Myers Squibb, Gilead, Tibotec, and Viiv. He currently has no relationships with any pharmaceutical companies.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The study was supported by the National Institutes of Health (grant number 5 U01 AI038858). Amoroso has received research support from Abbott. He currently has none.