Abstract

Introduction

Hand injuries can lead to lasting impairments that limit one's ability to perform activities of daily living.1,2 Individuals with hand injuries may experience a myriad of impairments that affect the range of motion, strength, and motor control of their fingers and/or wrist.3 Treatment may require surgery followed by a structured, individualized hand rehabilitation program.4,5

Rehabilitation typically involves working with clinicians through guided exercises aimed at improving range of motion, strength, and motor control.6 Patients receive feedback from clinicians that they use to improve their movement patterns, increase hand strength, and reduce pain.7,8 Patients are also advised to continue these exercises at home to promote recovery. While current rehabilitation practices benefit many individuals, a sizable proportion do not fully recover and live with chronic functional impairments.9 Evidence suggests that geographic and socioeconomic barriers may hinder progress in rehabilitation and limit recovery after surgery.10 Poor adherence to home-based rehabilitation programming may also be associated with poor outcomes.11 Finally, some patients have been shown to lack motivation to complete their home-based exercises because they do not find them engaging or enjoyable,12 which may stem from a lack of guidance when completing these exercises.13,14 Taken together, several factors appear to impede progress in post-operative hand rehabilitation.

Telemedicine and augmented reality (AR) may be useful tools for addressing issues of accessibility and adherence in rehabilitation. Previous reports have shown that telemedicine and AR approaches can help enhance rehabilitation for pain disorders,15,16 musculoskeletal disorders,17,18 cancer,19–21 stroke,22–24 and other neurological conditions.25,26 A novel AR approach that has shown promise is movement sonification, in which sounds are linked to movement parameters such as displacement, velocity, and acceleration.27,28 Evidence from stroke rehabilitation has shown that movement sonification may be an effective therapy for improving motor control and restoring hand function.29 Importantly, many web-based AR applications are low-cost or free to use. Thus, AR technology that employs movement sonification may offer an engaging and enjoyable means of delivering therapy to patients facing geographic or socioeconomic barriers.30,31 Movement sonification can also provide objective feedback on movement patterns that guides patients through their home-based rehabilitation exercises, potentially improving adherence.28

We recently developed a web-based AR interface built on Google's MediaPipe Hands and have submitted it for publication.32 Briefly, specified finger landmarks are detected from a live webcam feed, enabling real-time calculation of the angles of each digit's metacarpophalangeal (MCPJ), proximal interphalangeal (PIPJ), and distal interphalangeal (DIPJ) joints. These angles are then mapped to specific acoustic parameters, producing auditory feedback that dynamically represents joint positions. The purpose of this study was to validate this movement sonification application for single-digit movements in healthy adults.
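To make the landmark-to-angle step concrete, the sketch below computes ring-finger joint flexion from the 21 hand landmarks using the vector angle at each joint. It is a minimal illustration under stated assumptions, not the implementation of our interface. It assumes the landmarks have already been extracted from MediaPipe Hands as (x, y, z) tuples in MediaPipe's standard 21-point order (0 = wrist; 13 to 16 = ring finger MCP, PIP, DIP, and tip). It also uses the wrist as the proximal reference point for the MCPJ, and it reports flexion as 180° minus the interior angle, so that a straight joint reads approximately 0°. The function names are hypothetical.

```python
import math
from typing import Dict, Sequence, Tuple

Point = Tuple[float, float, float]

# Standard MediaPipe Hands landmark indices (21-point hand model).
WRIST = 0
RING_MCP, RING_PIP, RING_DIP, RING_TIP = 13, 14, 15, 16


def angle_at(a: Point, b: Point, c: Point) -> float:
    """Interior angle in degrees at vertex b, between segments b->a and b->c."""
    v1 = [a[i] - b[i] for i in range(3)]
    v2 = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(v1, v2))
    n1 = math.sqrt(sum(x * x for x in v1))
    n2 = math.sqrt(sum(x * x for x in v2))
    if n1 == 0.0 or n2 == 0.0:
        raise ValueError("coincident landmarks: angle is undefined")
    # Clamp to [-1, 1] to guard acos against floating-point overshoot.
    cos_theta = max(-1.0, min(1.0, dot / (n1 * n2)))
    return math.degrees(math.acos(cos_theta))


def ring_flexion(landmarks: Sequence[Point]) -> Dict[str, float]:
    """Flexion (degrees) of the ring-finger MCPJ, PIPJ, and DIPJ.

    Flexion = 180 - interior angle, so a fully straight joint is ~0 degrees.
    Assumption: the wrist is used as the proximal point for the MCPJ.
    """
    triplets = {
        "MCPJ": (WRIST, RING_MCP, RING_PIP),
        "PIPJ": (RING_MCP, RING_PIP, RING_DIP),
        "DIPJ": (RING_PIP, RING_DIP, RING_TIP),
    }
    return {
        joint: 180.0 - angle_at(landmarks[p], landmarks[v], landmarks[d])
        for joint, (p, v, d) in triplets.items()
    }
```

Take a ring finger whose landmarks lie along a straight line: all three flexion values come out at approximately 0°. Now move the DIP and tip landmarks so that they form a right angle at the PIP landmark: this gives a PIPJ flexion of approximately 90°, while the DIPJ and MCPJ stay near 0°.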

Methods

Subjects

Healthy individuals aged 18 to 80 years were invited to participate. No participant had prior experience with the movement sonification software. Participants were excluded if they had a previously diagnosed neurological disorder (eg, stroke, Parkinson's disease, cerebellar ataxia), learning disability (eg, ADHD, dyslexia), or upper extremity injury that limited motor control of the hand (eg, fracture, tendonitis, arthritis, carpal tunnel syndrome). All participants gave written informed consent to participate in the study, which was approved by the local scientific and ethics committee.

Experimental Setup

Google's open-source MediaPipe hand landmark detection was used to track single-digit flexion and extension of the fourth (ring) finger (Image 1). Joint position was mapped to musical notes ranging from low pitch (joint in extension) to high pitch (joint in flexion); movements of the other digits did not produce sound. Participants used a personal computer, or one provided by the investigational team, to interact with the web-based program. They then completed a Qualtrics survey designed to assess the construct, face, content, and predictive criterion validity of the movement sonification program.

MediaPipe Hand Tracking.
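The pitch mapping can be sketched as follows. The sketch is illustrative and does not reproduce the program's actual acoustic parameters. The MIDI note range (C4 to C5), the 90° flexion ceiling, semitone quantization, and the digit labels are all hypothetical choices. Only the ring finger is sonified; other digits return no tone (`None`). Pitch rises as flexion increases, following the low-pitch-in-extension, high-pitch-in-flexion mapping described above.

```python
from typing import Dict, Optional

SOUNDING_DIGIT = "ring"       # hypothetical label: only the fourth digit is sonified
LOW_NOTE, HIGH_NOTE = 60, 72  # hypothetical MIDI range: C4 (extension) to C5 (flexion)
MAX_FLEXION_DEG = 90.0        # hypothetical flexion treated as "fully flexed"


def midi_to_hz(note: int) -> float:
    """Equal-tempered frequency of a MIDI note (A4 = note 69 = 440 Hz)."""
    return 440.0 * 2 ** ((note - 69) / 12)


def pitch_for_digit(digit: str, flexion_deg: float) -> Optional[float]:
    """Tone frequency (Hz) for the sounding digit, or None (silence) otherwise."""
    if digit != SOUNDING_DIGIT:
        return None
    # Clamp flexion to [0, MAX_FLEXION_DEG] and normalize to [0, 1].
    t = min(max(flexion_deg, 0.0), MAX_FLEXION_DEG) / MAX_FLEXION_DEG
    # Quantize to the nearest semitone so the output is a discrete musical note.
    note = round(LOW_NOTE + t * (HIGH_NOTE - LOW_NOTE))
    return midi_to_hz(note)


def sonify(flexion_by_digit: Dict[str, float]) -> Dict[str, Optional[float]]:
    """Map each digit's flexion angle to a frequency (None means no sound)."""
    return {d: pitch_for_digit(d, a) for d, a in flexion_by_digit.items()}
```

Quantizing to semitones yields discrete musical notes. Omitting `round` would give a continuous mapping that glides smoothly between pitches instead.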

Construct Validity

A recorded video of an investigator using the program was played, and participants were asked to describe in their own words how the program worked. Construct validity was established based on participants' ability to identify that movement of a single digit was linked to musical parameters and to distinguish between finger positions (flexion and extension) based on the sounds produced. Two reviewers (AP and MG) independently reviewed each description to determine whether the participant had understood how the program worked, and a third reviewer resolved any disagreements.

Face Validity

Using the Qualtrics survey, we determined whether participants found that the program reliably converted finger movement to sound and whether the sounds helped them understand different finger positions during movement.

Content Validity

Participants were asked whether the program produced sound only in response to movement of the fourth (ring) digit. This allowed us to determine whether the program produced sound for single-digit movements without being affected by other digits or other video content.

Predictive Criterion Validity

After participants had explored the video tutorial, they were played a sound recording (flexion or extension) from the video and asked to select, from several finger configurations, the one that would produce that sound. Options included distractor images as well as “none of the above” and “all of the above.” This was repeated eight times. Next, participants were shown a muted video of a finger movement and asked to predict the change in pitch that the program would produce. This was also repeated eight times, with distractor options describing sounds the program does not produce. Predictive criterion validity was assessed based on participants' ability to correctly match sounds to finger configurations and vice versa.

Results

Participants (n = 19) had a mean age of 32.21 years (range 25-60). The sample included 10 male and 9 female participants. Recruitment was conducted by convenience sampling of healthy adults from the University of Calgary community.

Construct Validity

We assessed construct validity based on the ability of naïve participants to identify that movements of the fourth digit were linked to the pitch of the sound produced by the online program. After watching the demonstration video, 17 of 19 participants (89.47%) accurately identified that the sound produced by the online sonification program corresponded to flexion and extension of a single finger (the ring finger). Representative comments included: “Pitch goes up when the ring finger is flexed, pitch goes down when straightened,” and “Flexion of the fourth digit increased the note pitch while movement of other fingers had no effect.” Only two participants provided descriptions unrelated to the intended function.

After participants explored the program themselves, they responded to the question “After exploring the program for yourself, how do you think this program works?” Seventeen of 19 participants (89.47%) accurately identified that the sound produced by the online sonification program corresponded to flexion and extension of a single finger. The remaining two participants did not answer correctly or provided a response that was not relevant to the question. Representative comments included “It is able to identify the fourth digit and respond with an increased frequency as the digit is flexed” and “The pitch of the sound changes to be higher when you flex the fourth digit but not the other fingers.”

Face Validity

We assessed face validity by asking participants “Was there a relationship between sound and movement in the previous video?” after they watched the demonstration video. This question assessed whether participants recognized that the program converted movement to sound and whether the sounds helped them understand the different finger positions during movement. All 19 participants (100%) responded “yes,” suggesting high face validity of the online movement sonification program.

Content Validity

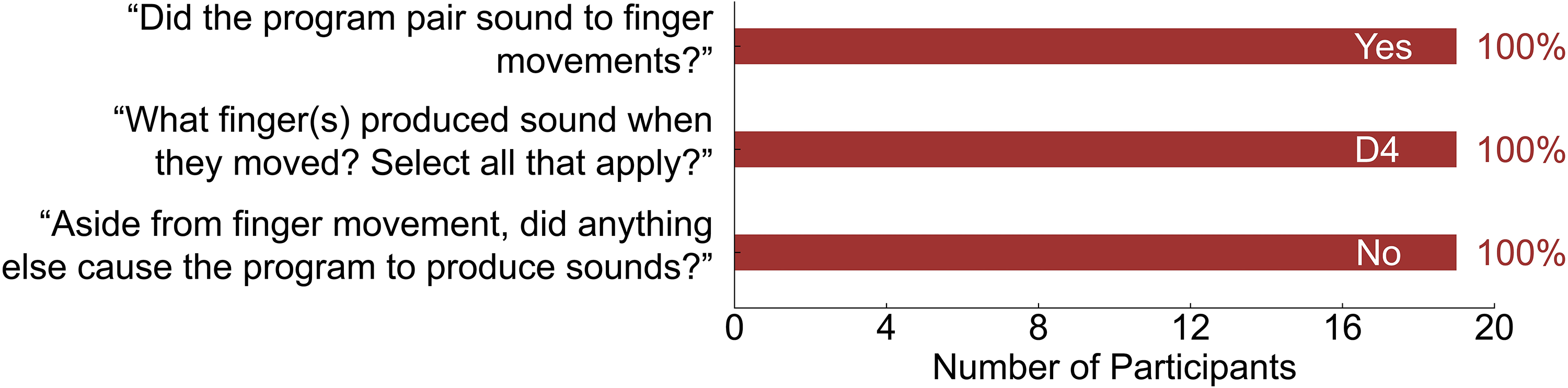

Content validity was assessed with a series of questions administered after participants had watched the demonstration video and had a chance to explore the online program. First, we asked participants “Did the program pair sound to finger movements?” All 19 participants answered “yes” (100% correct; Figure 1). Next, we asked participants “What finger(s) produced sound when they moved? Select all that apply.” and provided them with the options of digits 1-5. All participants selected the fourth digit (100% correct; Figure 1). Finally, we asked participants “Aside from finger movement, did anything else cause the program to produce sounds?” to assess whether the program's output was specific to the fourth digit. All 19 participants selected “no” (100% correct; Figure 1).

Content Validity.

Predictive Criterion Validity

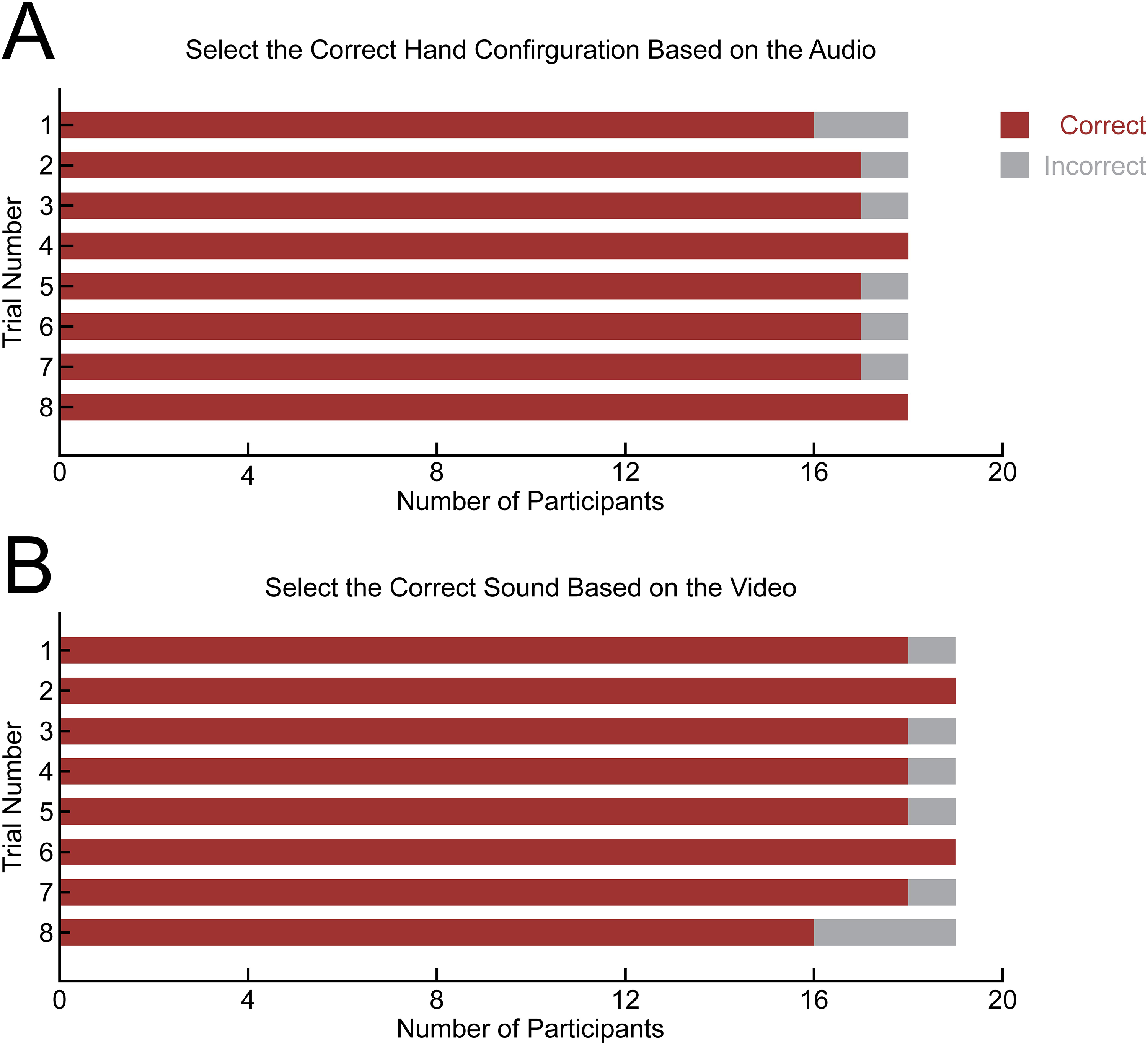

We tested predictive criterion validity by playing participants a sound and asking them to “Listen to the following sound and select from the options below.” Participants were then presented with several images of hand configurations and asked to select the configuration that best corresponded to the sound. This was repeated eight times. Data were missing from one participant. Overall, the mean percentage of correct responses across the eight trials was 95.14% (range: 88.89-100.00%; Figure 2A).

Predictive Criterion Validity. A) Participant Response Accuracy Across Eight Trials to Select the Correct Hand Configuration Based on Audio. B) Participant Response Accuracy Across Eight Trials to Select the Correct Sound Based on the Hand Configuration Seen in Video.

We tested predictive criterion validity a second way by presenting participants with a short demonstration video of a study investigator moving their finger (ie, flexion or extension) in the absence of sound. Participants were asked “Watch the following video and select the corresponding sound below.” Participants were presented with several options describing the pitch and any expected changes in pitch based on the movements observed in the video. This was repeated eight times. Overall, the average percentage of correct responses across the eight trials was 94.74% (Range: 84.21-100.00%; Figure 2B). Taken together, the results suggest that the online program has a high level of predictive criterion validity.
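The accuracy percentages reported above can be computed with simple proportions. The exact scoring procedure is not specified, so the sketch below rests on an assumption. Each trial's accuracy is taken as the percentage of correct responses among participants who answered, with missing responses excluded. The overall figure is taken as the unweighted mean across trials. Under these assumptions, the reported values are consistent with simple counts: for example, 17/19 = 89.47%, 16/18 = 88.89% (one participant missing), and 16/19 = 84.21%. The function names are hypothetical.

```python
from typing import Optional, Sequence


def trial_accuracy(responses: Sequence[Optional[bool]]) -> float:
    """Percent correct for one trial; None marks a missing response and is excluded."""
    answered = [r for r in responses if r is not None]
    if not answered:
        raise ValueError("no responses recorded for this trial")
    return 100.0 * sum(answered) / len(answered)


def mean_accuracy(trials: Sequence[Sequence[Optional[bool]]]) -> float:
    """Unweighted mean of the per-trial percent-correct values."""
    per_trial = [trial_accuracy(t) for t in trials]
    return sum(per_trial) / len(per_trial)
```

Excluding missing responses keeps one absent participant from being counted as an incorrect answer.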

Discussion

This study validates the use of a novel movement sonification interface that uses Google's MediaPipe hand-tracking technology to map single-digit PIP joint motion to real-time auditory feedback. Our validation study was designed to assess construct, face, content, and predictive criterion validity.33,34 In essence, validity is the extent to which an instrument measures what it is intended to measure. Overall, our results demonstrate excellent construct, face, content, and predictive criterion validity. This supports the feasibility of using music sonification as an intuitive and accessible tool for augmented hand rehabilitation.

In our study, 89.47% of participants were able to explain in their own words that the pitch changes corresponded to flexion and extension of the fourth digit, confirming strong construct validity. In addition, 100% of participants correctly identified that there was a relationship between sound and movement, confirming the face validity of the program. This supports the intuitive design of the interface and is consistent with findings from other AR rehabilitation systems showing that humans naturally integrate auditory cues with motor actions when the sonification mapping is intuitive.29,35

All participants in our study correctly identified that the fourth digit produced sound and that other digits did not trigger audio feedback, demonstrating excellent content validity. This addresses a major limitation of earlier sonification systems, which used inertial sensor units that cannot capture finger or grasping movements.36 Our findings are consistent with those of a previous study by Dong et al, which demonstrated that MediaPipe's hand-tracking software enables precise digit and joint isolation.32

In addition, participants in the current study correctly matched hand configurations to sounds with a mean accuracy of 95.14% and correctly predicted sounds from muted videos with a mean accuracy of 94.74%, both of which demonstrate strong predictive criterion validity. Predictive interpretation is central to motor learning because it enables users to anticipate correct movement patterns. Previous studies in neurologic rehabilitation have shown that predictive sonification improves movement timing, movement smoothness, and proprioception.29,30,37

In the context of neurologic rehabilitation, particularly after stroke, prior work has shown that music-based sonification can meaningfully enhance upper-limb recovery. One study demonstrated improvements in manual dexterity and motor function, as well as decreased perceived pain, during rehabilitation.29 Similarly, Scholz et al reported that music sonification in post-stroke patients undergoing upper extremity rehabilitation improved movement smoothness and reduced joint pain.30 Of note, these studies focused on large-joint or multi-segment movements. Our study adds to the literature by demonstrating that single-digit PIP joint motion can be accurately captured and sonified using a web-based interface. Participants intuitively understood the sound-movement mapping, highlighting the accessibility and usability of this software. Our work also expands on existing AR tools such as the DIGITS app, which demonstrated that finger range of motion can be reliably measured using a smartphone camera.32 Here, we demonstrated that with a webcam-based method requiring no additional hardware, users can reliably perceive and predict sound mappings at the level of a single interphalangeal joint.

Hand rehabilitation is important after many common hand surgeries, including flexor and extensor tendon repair, and after hand fractures, and adherence to rehabilitation protocols remains a major determinant of full functional recovery.5,14,38,39 Several barriers to adherence are recognized, including limited access to therapy, lack of guidance during home exercises, and reduced motivation due to pain or monotony.10,14 A prior study found that tailored information and recognition of gradual improvements play a large role in patient motivation and commitment to rehabilitation.14 In addition, AR and virtual reality (VR) technologies have been shown to enhance hand rehabilitation by providing real-time feedback and gamifying therapy, which increases patient engagement and adherence.8 The current findings suggest that our sonification tool may help address barriers to hand therapy adherence by (1) providing a browser-based platform that lowers geographic barriers to post-operative care, (2) providing real-time, joint-specific movement feedback, and (3) potentially increasing enjoyment, similar to gamified or AR-based rehabilitation tools.

Future work will evaluate the clinical efficacy of digit-level sonification in post-operative hand-injury populations. Randomized trials comparing sonification-based programs with standard written or video-based protocols could assess functional outcomes, adherence, patient satisfaction, and barriers to care.

This study has several limitations. First, it was conducted in healthy adults and excluded individuals with learning disabilities or neurologic deficits; therefore, results may differ in patients with hand injuries and in the general population. Second, our sample of 19 participants was small; further studies will recruit larger samples with fewer exclusions. Third, survey-based studies depend on participant compliance, and one participant did not provide a representative description of the app, which affected our construct validity results. Finally, this validation study used only a single digit in two configurations (flexion and extension). Other parameters relevant to hand rehabilitation, such as multi-digit movements, movement velocity, and joint angles, were not assessed; future studies will target these areas.

Conclusion

This study validates the use of a novel movement sonification interface that uses Google's MediaPipe hand-tracking technology to map single-digit PIP joint motion to real-time auditory feedback. Overall, our results demonstrate excellent construct, face, content, and predictive criterion validity. This supports the feasibility of using music sonification as an intuitive and accessible tool for augmented hand rehabilitation.

Footnotes

Contributory Roles

Supervision: JY, MR, AK. Conceptualization: AP, MJG, RTM, RH, MR, JY. Investigation: AP, MJG, RTM. Methodology: AP, MJG, RTM, RH, MR, JY. Validation: AP, MJG, RTM, RH, MR, JY. Formal Analysis: AP, MJG, RTM. Writing—Original Draft: AP, MJG, EY. Writing—review & editing: AP, MJG, RTM, EY, RH, MR, AK, JY.

Financial Disclosure Statement

The authors have no affiliation (financial or otherwise) with a pharmaceutical, medical device, or communications organization. A research grant was provided by the Department of Surgery, Division of Plastic Surgery, University of Calgary, Calgary, AB, Canada.

Participant Consent Statement

Informed, written consent was obtained from all participants included in the study regarding recruitment, participation, and the publication of their information.

Data Availability

Participant data and all findings of this study are available from the corresponding author upon reasonable request. All data are de-identified and stored in accordance with institutional ethics requirements.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethics Statement

Ethical approval was provided by the Conjoint Health Research Ethics Board (CHREB), University of Calgary, Alberta, Canada (REB25-0645).

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.