Abstract

Study Design

Modified Delphi study.

Objectives

Telementoring, the practice of providing remote guidance and teaching from a distance using telecommunication technology, has demonstrated feasibility and value in assisting surgeons in remote locations. However, limited evidence exists regarding its effectiveness in improving surgeon performance. This study aimed to develop metrics to assess spine surgeon performance in scoliosis surgery, potentially within the context of a telementoring training program.

Methods

Ten expert spine surgeons participated in a four-round modified Delphi process including both online and in-person meetings. The resulting metrics were validated for objective assessment by eleven surgeons, who reviewed and rated a video recording using the established rubric.

Results

The final set of metrics, comprising 50 procedural steps and 28 error categories, was unanimously approved by the panel. Additionally, the panel agreed that utilizing cameras, smart lenses, and output from intraoperative imaging monitors would provide sufficient visibility for assessing both steps and errors.

Conclusions

A set of assessment metrics for adolescent idiopathic scoliosis surgery was successfully defined and validated by reviewing example videos. The longer-term research objective is to employ this rubric to assess surgeons’ performance throughout a telementoring program and thus evaluate its educational impact. The rubric could also be used in other contexts, such as live surgical observation.

Keywords

Introduction

Telementoring is defined as the remote assistance and evaluation of surgical procedures by a mentor or proctor who is located at least one kilometer from the operating surgeon and the patient. 1 The literature often uses this term interchangeably with ‘teleproctoring’; here, only ‘telementoring’ is used, covering both terms. Both differ from ‘telesurgery’, which describes remote surgery performed by a surgeon through a surgical robot. Compared with telesurgery, telementoring is considered easier to establish, with lower associated costs and reduced sensitivity to network latency. 2 Telementoring has been demonstrated to be both feasible and valuable, particularly in assisting surgeons in remote or under-resourced areas of the world3,4 and in cases where proctor mobility was constrained, such as during the COVID-19 pandemic.5,6 Numerous studies have demonstrated its safety and effectiveness as a surgical adjunct.3-7

From an educational perspective, the integration of emerging technologies such as video conferencing, instant messaging, and cloud-based applications has significantly transformed the landscape of distance education, making it more interactive, engaging, and accessible. Telementoring has proven particularly effective in supporting the professional development of in-service teachers, enhancing their technology integration skills and enabling them to adapt teaching practices to the evolving digital landscape. Moreover, telementoring has a positive impact on student outcomes: by providing personalized guidance and support, it fosters deeper student engagement, improves academic achievement, and cultivates stronger motivation and self-efficacy. 8 In health care, telementoring has been shown to be effective in supporting the development of healthcare professionals.9-12

The potential of telementoring in spine surgery is substantial, as it offers a pragmatic and cost-effective solution to the shortage of specialized spine surgeons, particularly in remote or underserved areas. By enabling experienced surgeons to guide and mentor their colleagues virtually in real time, telementoring has the potential to enhance the quality of care, improve patient outcomes, and expand access to advanced surgical techniques. 13 A key advantage of telementoring in spine surgery is its ability to provide robust educational opportunities for the next generation of surgeons. Through virtual observation and interaction with seasoned experts, medical students, residents, and early-career surgeons can accelerate the acquisition of crucial skills and knowledge, ultimately fostering a more proficient surgical workforce. 14 Furthermore, the integration of virtual reality and augmented reality technologies into the telementoring process has the potential to create even more immersive and effective learning environments for aspiring spine surgeons. 15

Although there is evidence that telementoring is perceived as user-friendly, there is limited evidence of its impact on surgeon performance. Addressing this gap first requires assessment tools that measure proficiency in the skill sets required to perform a given surgical procedure. Therefore, this study aimed to develop and validate a set of metrics (a rubric) to assess improvements in surgeon performance in the surgical treatment of adolescent idiopathic scoliosis (AIS).

Materials and methods

A modified Delphi process in accordance with Zeliff and Heldenbrand,16,17 consisting of four rounds, was used to develop an assessment rubric for posterior adolescent idiopathic scoliosis (AIS) surgery.

Ethical Approval

The study had no direct involvement of patients and was deemed exempt from ethical approval by the Palestine Polytechnic University Institutional Review Board (approval ID KA/41/2023). Informed consent was obtained for the use of the surgical videos.

Panelists

For the Delphi process, 10 international experts in idiopathic scoliosis surgery were selected as panelists from nine countries: Argentina, Brazil, Egypt, Germany, India, Palestine, Turkey, the UK, and the USA.

For the rubric validation, 11 surgeons were involved (7 from the Delphi panel and 4 not involved in the development of the rubric).

Modified Delphi Process

The process comprised four consecutive rounds.

In addition to the Delphi rounds, one online and two face-to-face consensus meetings were held with the panelists to discuss and finalize the marking rubric.

The final results were analyzed by calculating the median, first quartile, third quartile, and interquartile range (IQR) for each item. An IQR of 1.2 or less was classified as “consensus” (C), and an IQR greater than 1.2 as “no consensus” (NC). All competencies (and errors) that reached consensus with a median score of 5 or greater were included in the final evaluation rubric.
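The consensus and inclusion rule above can be sketched in Python. This is a minimal illustration, not the authors' analysis code: the function names are hypothetical, and because the paper does not state how quartiles were computed, the sketch assumes the "inclusive" method of Python's `statistics.quantiles`. Other quartile conventions can give slightly different IQRs for items near the 1.2 threshold. The IQR threshold of 1.2 and the median cutoff of 5 are taken from the text.

```python
from statistics import median, quantiles

IQR_THRESHOLD = 1.2  # from the Methods: IQR <= 1.2 is classified as consensus (C)
MEDIAN_CUTOFF = 5    # from the Methods: consensus items need a median >= 5 to be kept


def summarize(scores: list[float]) -> dict[str, float]:
    """Median, first and third quartiles, and IQR of one item's panel scores."""
    # Assumption: 'inclusive' quartiles; the paper does not name a quartile method
    q1, _, q3 = quantiles(scores, n=4, method="inclusive")
    return {"median": median(scores), "q1": q1, "q3": q3, "iqr": q3 - q1}


def classify_item(scores: list[float]) -> tuple[str, bool]:
    """Return ('C' or 'NC', whether the item enters the final rubric)."""
    stats = summarize(scores)
    label = "C" if stats["iqr"] <= IQR_THRESHOLD else "NC"
    include = label == "C" and stats["median"] >= MEDIAN_CUTOFF
    return label, include
```

For example, ten hypothetical panel scores of 7, 8, 8, 8, 9, 8, 7, 8, 9, 8 give a median of 8 and an IQR of 0, so the item would reach consensus and be included; a tightly clustered item with a median of 3 would reach consensus but still be excluded.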

The final step in the development of the rubric was a grammatical check performed by two native English-speaking surgeons.

Rubric Validation, Stress Testing, and Reliability of Identification

This step assessed how reliably the rubric could be independently scored by 11 surgeons. This required independent reviewing and scoring of video recordings of the procedure. Panelists were asked to assess the competencies of a surgeon (AA, the designated telementor) performing scoliosis surgery from a recorded surgical video.

Adolescent Idiopathic Scoliosis Video Acquisition.

A video recording of scoliosis surgery performed at Kenyatta National Hospital, Nairobi, Kenya, was captured using technology developed by Proximie Inc (Proximie SAL, Bachoura, Beirut, Lebanon), with multiple cameras installed in the operating room and the video feeds streamed into a central, HIPAA-compliant software platform. Although this tool is specifically designed for remote telementoring, it was used here only to record the case for the purposes of this study. The output included recordings from three cameras in the operating room (one overhead at the center of the pilot surgical light, one on the cranial side of the operating table, and one covering the entire operating room, including the fluoroscopy screen), compiled as a tile to be viewed simultaneously (Figure 1).

Figure 1. A sample video frame from the output of the three cameras compiled as a tile: (a) pilot lamp, (b) cranial side of the table, (c) room view.

Rubric Stress Testing and Reliability of Identification.

The video was marked by 11 surgeons (5 expert surgeons from the Delphi panel, 4 spine surgeons who were not involved in the development of the rubric, and 2 surgeons who were involved in its development). Each surgeon rated the performance level for completing each specified task (competency) on a Likert scale from 1 to 9 and recorded the presence and number of errors at each step. The panel also evaluated the quality of the video, specifically whether performance level and errors could be accurately assessed from it. Any difficulties encountered in viewing or assessing the video were documented on the evaluation sheet. For each step and error, the panel could choose the marking option ‘cannot assess’ and was asked to specify the reason in the open-text comments. A group of five surgeons (2 Delphi panel members, 2 other surgeons, and 1 developer) repeated the same viewing and marking procedure after a lag of 3 to 5 weeks to allow calculation of intraobserver and interobserver reliability. Only these evaluations were used for the Kappa statistics, whereas the results from the entire panel were used to evaluate whether each item could be assessed.
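The paper does not state which Kappa variant was used. As an assumption, the sketch below computes unweighted Cohen's kappa, a common choice for comparing one surgeon's first and second ratings of the same steps (intraobserver agreement); interobserver agreement among more than two raters would typically use a multi-rater variant such as Fleiss' kappa instead. It also assumes that items marked ‘cannot assess’ in either session were removed beforehand. The function name is hypothetical.

```python
from collections import Counter
from collections.abc import Hashable, Sequence


def cohen_kappa(first: Sequence[Hashable], second: Sequence[Hashable]) -> float:
    """Unweighted Cohen's kappa between two ratings of the same items."""
    if len(first) != len(second) or not first:
        raise ValueError("need two non-empty rating sequences of equal length")
    n = len(first)
    # Observed agreement: share of items given the same category in both sessions
    p_observed = sum(a == b for a, b in zip(first, second)) / n
    # Chance agreement expected from each session's marginal category frequencies
    counts_first, counts_second = Counter(first), Counter(second)
    p_chance = sum(counts_first[c] * counts_second[c] for c in counts_first) / n**2
    if p_chance == 1:
        # Both sessions used one identical category; kappa is undefined, report 1.0
        return 1.0
    return (p_observed - p_chance) / (1 - p_chance)
```

With hypothetical sessions [1, 2, 3, 4, 5, 5] and [1, 2, 3, 4, 5, 4], observed agreement is 5/6 and chance agreement is 7/36, giving a kappa of 23/29, about 0.79. Unweighted kappa treats every disagreement equally; a weighted kappa would give partial credit to near-misses on an ordinal scale.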

Statistical methods for the Delphi step included descriptive statistics of the panel's scores. In the validation step, in addition to descriptive statistics, the internal consistency of the rubric was evaluated with Cronbach's alpha, and Kappa statistics were used to evaluate the intra- and interobserver reliability of the marking system. For this analysis, the 9-point Likert scale was collapsed into a 5-point scale rating how well the surgeon performed each step: 1 (mark of 1, very poor), 2 (marks of 2 and 3), 3 (marks of 4 and 5), 4 (marks of 6 and 7), and 5 (marks of 8 and 9, excellent).
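The rescaling described above, and a Cronbach's alpha calculation on the rescaled marks, can be sketched as follows. This is a minimal illustration under stated assumptions: it expects a complete rectangular matrix with one row per rater and one column per rubric step (the paper does not describe its data layout or how ‘cannot assess’ answers entered the calculation), and the function names are hypothetical. Because the same variance estimator appears in the numerator and denominator, using sample or population variance gives the same alpha.

```python
from statistics import variance


def collapse_to_five(mark: int) -> int:
    """Map a 1-9 Likert mark onto the 5-point scale: 1->1, 2-3->2, 4-5->3, 6-7->4, 8-9->5."""
    if not 1 <= mark <= 9:
        raise ValueError("marks must be on the 1-9 Likert scale")
    return mark // 2 + 1


def cronbach_alpha(matrix: list[list[float]]) -> float:
    """Cronbach's alpha for matrix[r][i] = score from rater r on item (step) i."""
    n_raters, k = len(matrix), len(matrix[0])
    if n_raters < 2 or k < 2:
        raise ValueError("need at least two raters and two items")
    # Sum of each item's (column's) variance across raters
    item_variance_sum = sum(variance(column) for column in zip(*matrix))
    # Variance of each rater's total score across all items
    total_variance = variance([sum(row) for row in matrix])
    if total_variance == 0:
        raise ValueError("total scores have zero variance; alpha is undefined")
    return k / (k - 1) * (1 - item_variance_sum / total_variance)
```

For instance, four hypothetical raters' raw marks [[8, 9, 7], [6, 7, 6], [3, 4, 5], [9, 8, 8]] collapse to [[5, 5, 4], [4, 4, 4], [2, 3, 3], [5, 5, 5]], for which the sketch returns an alpha of 108/115, about 0.94.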

Results

Development of the Rubric

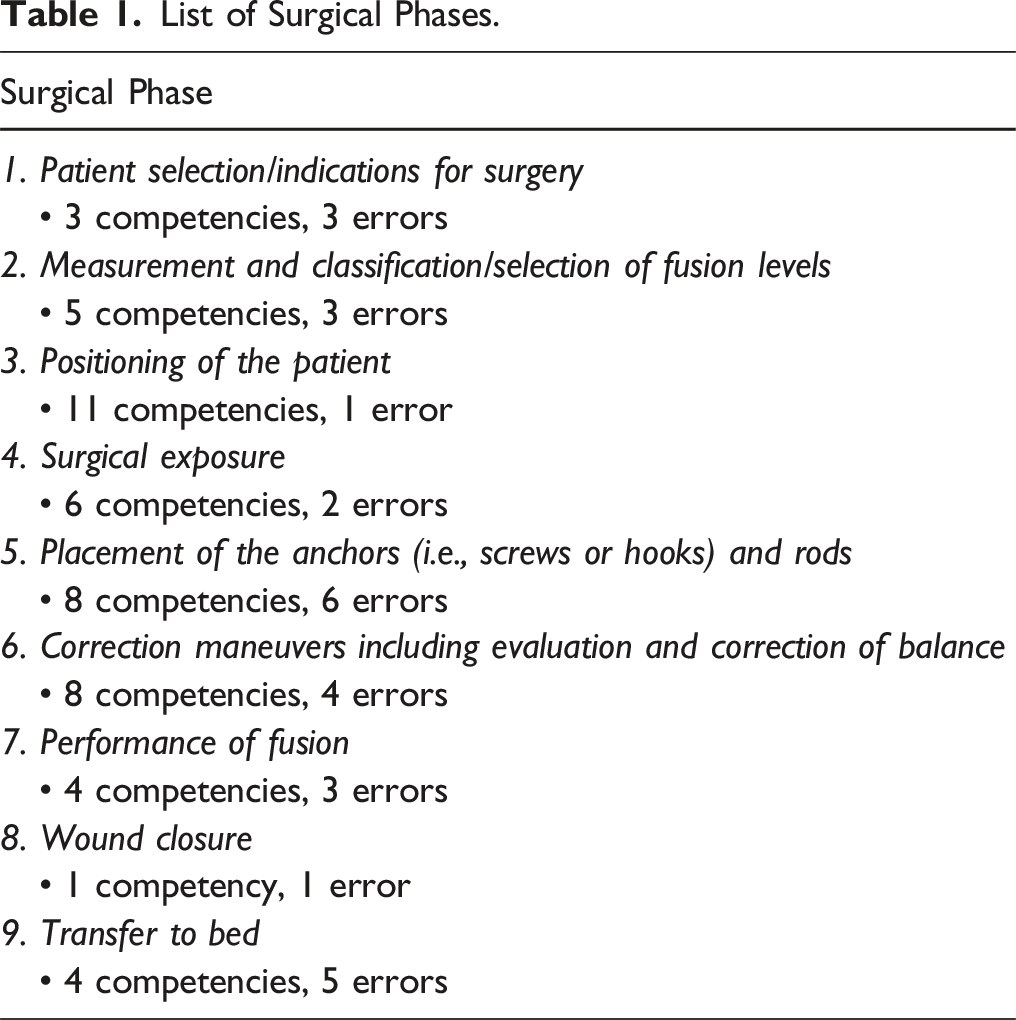

List of Surgical Phases.

A final rubric consisting of 9 surgical phases, 50 tasks/competencies and 28 potential errors was developed (Supplemental Material) and analyzed for internal consistency. The panel also decided that phases 1, 2, and 9 (ie, Patient selection/indications for surgery, Measurement and classification/selection of fusion levels, and Transfer to bed) may not be possible to evaluate on a video recording. Consequently, although these phases were included in the overall rubric, they were excluded from the following validation step.

Rubric Stress Testing and Reliability of Identification

The video recording used for this stage started at the time of the induction of anesthesia and ended when the patient was transferred to the bed, a total time of 4 h, 35 min and 38 sec with all three cameras recording the entire procedure simultaneously.

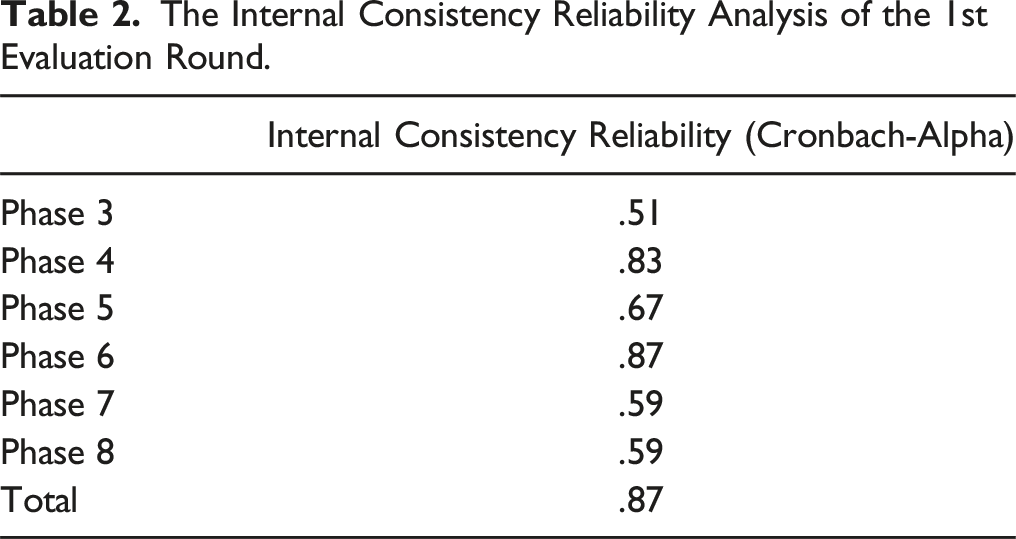

The Internal Consistency Reliability Analysis of the 1st Evaluation Round.

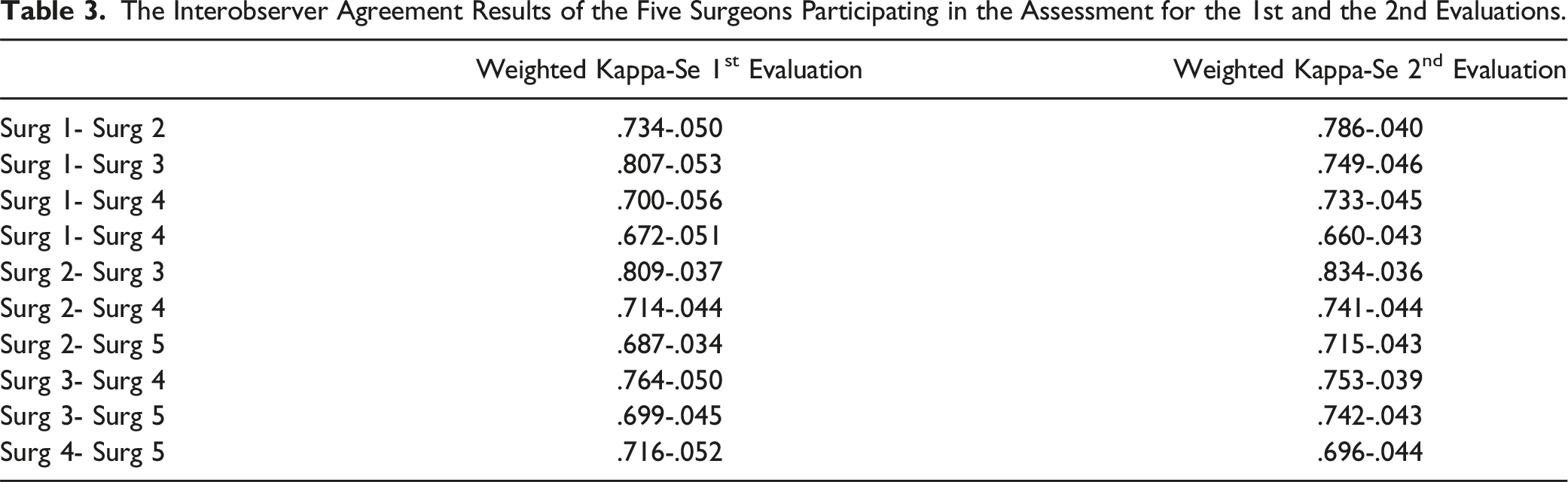

The Interobserver Agreement Results of the Five Surgeons Participating in the Assessment for the 1st and the 2nd Evaluations.

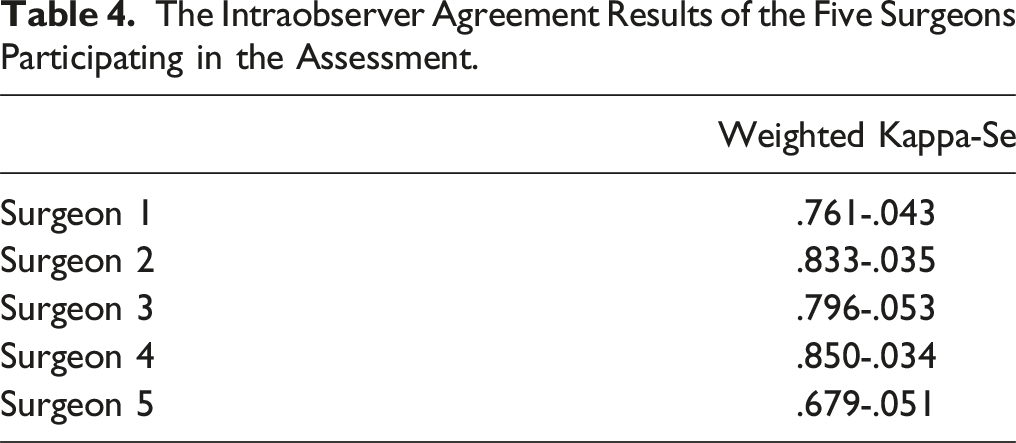

The Intraobserver Agreement Results of the Five Surgeons Participating in the Assessment.

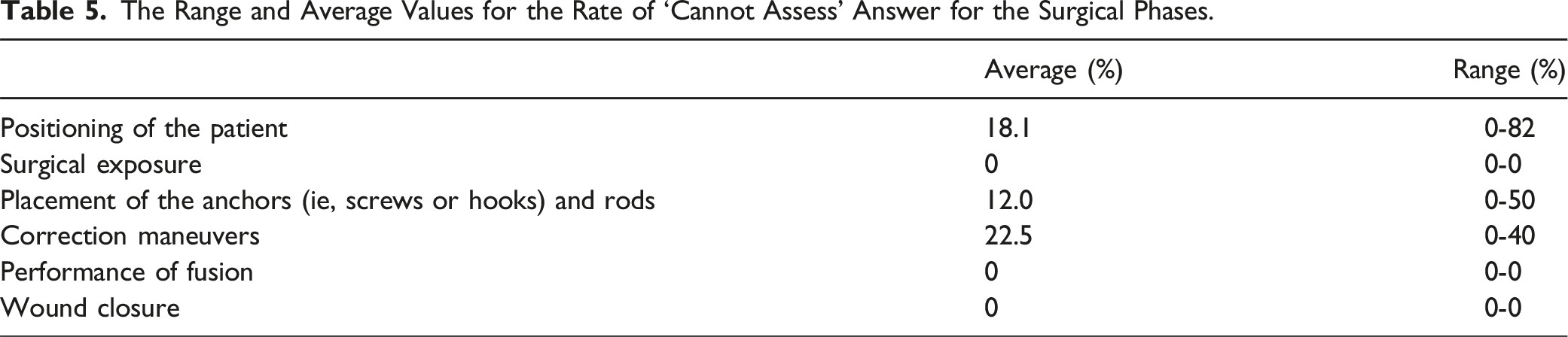

The Range and Average Values for the Rate of ‘Cannot Assess’ Answer for the Surgical Phases.

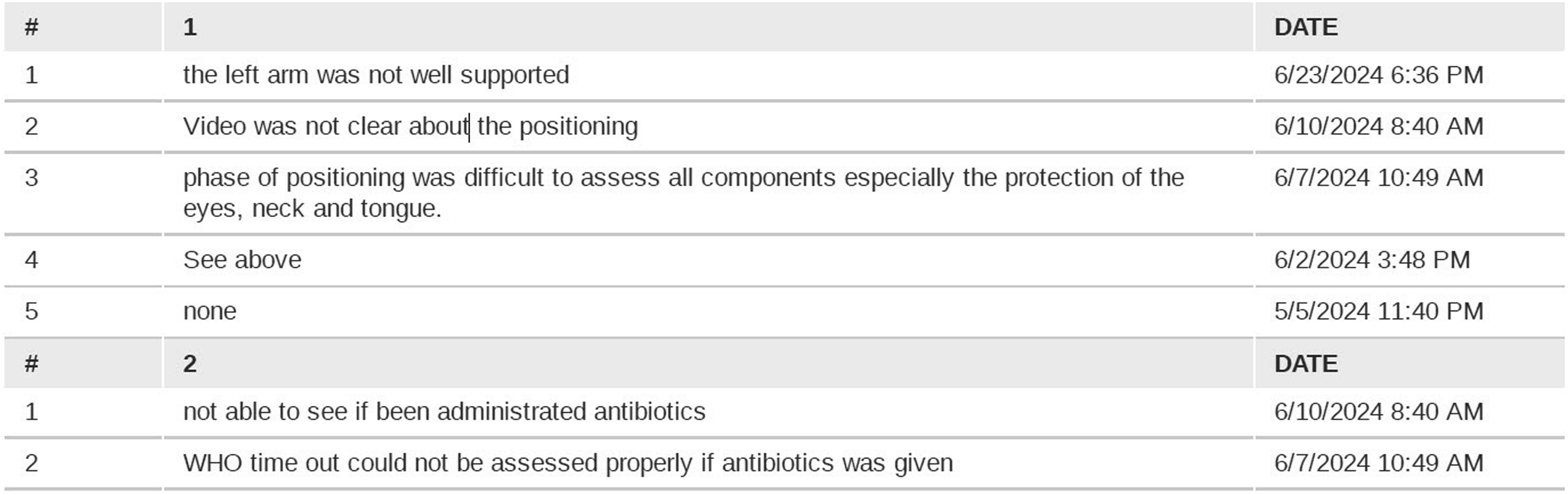

A sample sheet from the evaluation form demonstrating the reasons for the answer of ‘cannot assess’ during the positioning phase.

Discussion

The main result of this study was the development of a rubric to assess surgeon performance and competence in posterior surgery for adolescent idiopathic scoliosis (AIS). The rubric showed good internal consistency and good inter- and intraobserver reliability.

The need for this rubric was originally identified for assessing learner improvement within a telementoring program, as remote methods are gaining popularity in surgical training. However, the rubric would also be applicable to the assessment of AIS surgery performance in other contexts, such as live observation of surgery in the operating room. A live setting would even allow assessment of additional steps and errors that were removed from the current rubric because they could not be assessed from video alone.

Surgical education is undergoing a significant paradigm shift from time-based training models to competency-based medical education (CBME). 19 This evolution requires well-defined objectives and reliable methods for assessing learners’ skills and competencies at various stages of their training. In this framework, valid assessment tools are essential not only for achieving surgical competencies but also for promoting effective and meaningful learning.20,21 The rubric created in this study aims to fill the gap in assessing the specific skills of posterior surgery for AIS and can complement more general assessment methods such as the Objective Structured Assessment of Technical Skills (OSATS). 22

The use of the Delphi method or similar consensus-building approaches for developing assessment rubrics is well established in the literature. St-Louis and colleagues, for instance, reported the development of the Open Surgery Competency Assessment for Residents (OSCAR) tool. Following a comprehensive literature review to identify key competencies, they applied a Delphi process to determine the 12 most relevant surgical competencies for resident assessment. 23 Comparable methodologies have been employed to define entrustable professional activities (EPAs) and core competencies in other health professions, including dentistry24,25 and nursing.26,27 Burke et al. adopted an accelerated Delphi process to develop a core curriculum for robotic surgery. 28

To date, however, the results of such rubric-based assessments in actual assessment scenarios across consecutive recorded procedures have been reported in only a limited number of studies. Cheon and colleagues, for example, used the Council of Ophthalmology’s Ophthalmology Competency Assessment Rubric to evaluate 10 simulated cataract surgery videos generated by residents. Two faculty experts, along with 10 residents (through peer and self-assessment), participated in the evaluation. While expert and peer assessments demonstrated high inter-rater reliability, a systematic discrepancy was observed between these and the residents’ self-evaluations. 29 It remains unclear whether this divergence is specific to the rubric employed or reflects a more general phenomenon of self-assessment. The present study cannot address this question because it did not collect self-assessment data.

Finally, beyond assessment, the Delphi methodology has also been widely applied in the development of guidelines and curricular frameworks, particularly for competencies related to emergency procedures (eg, Refs. 30,31). In summary, the Delphi method is a validated and reliable approach for developing assessment rubrics and educational content. Our study further supports this by demonstrating the feasibility of the process and its potential use in distance education and assessment, and by providing validation data.

This study has several potential limitations related to the methodology and to the quality of the video used for the validation step. First, although three cameras recorded the procedure from three different angles, the quality of the recording was less than ideal, resulting in several ‘cannot assess’ answers. This problem might be less relevant when assessing live surgeries; however, a better recording system may be needed for the purposes of telementoring. One possible improvement is the use of smart glasses, which provide a view of the surgery from the principal surgeon’s perspective and enhance the view of the operating field. 32 Second, the surgeon who performed the recorded surgery was the originator of the telementoring project and a member of the Delphi panel. This may have introduced bias into the assessment process: because he performed the surgery according to the rubric, more steps may have been visible on the recording, decreasing the number of ‘cannot assess’ answers from the assessors. This surgeon was excluded from the validation process to eliminate potential bias in the scoring of his own surgery.

In conclusion, this study developed and validated an assessment rubric for posterior surgery for AIS. Future work will focus on further validating this rubric and using it to monitor the progression of surgical skills during a telementoring training program.

Supplemental Material

Supplemental Material - Defining Metrics for Assessing Surgeon Performance During a Telementoring Program for Adolescent Idiopathic Scoliosis Surgery

Supplemental Material for Defining Metrics for Assessing Surgeon Performance During a Telementoring Program for Adolescent Idiopathic Scoliosis Surgery by Alaaeldin Ahmad, Engin Çetin, Steven Theiss, Selcen Pehlivan, Michael Cunningham, Monica Ghidinelli, and Emre Acaroğlu in Global Spine Journal.

Footnotes

Acknowledgments

The authors would like to extend their gratitude to our other Delphi panelists: Colin Nnadi (Oxford, UK), Ruben Alejandro Morales Ciancio (Mendoza, Argentina), Alpaslan Şenköylü (Ankara, Turkey), Youssry Elhawary (Cairo, Egypt), Bhavuk Garg (New Delhi, India), Cristiano Menezes (Nova Lima, Brazil), and Carolin Melcher (Neustadt in Holstein, Germany); our independent video evaluators: Luca Erdmann Bini Cordeiro (Belo Horizonte, Brazil), Francisco Moura Neto (Belo Horizonte, Brazil), and Mehmet Çetinkaya (Istanbul, Turkey); Moyo Kurt (Utrecht, Holland) for the invaluable contributions to this project; and George Museivi, head of the spine department at Kenyatta National Hospital. We would also like to thank Proximie Inc, which donated the hardware and software used for the videos.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Proximie Inc provided cameras with associated hardware and software to anonymize the recordings and provide short-term access to the Delphi panelists. Support for data collection and manuscript preparation was provided by the AO Education Institute through AO Spine curriculum design and educational research projects.

Ethical Statement


References
