Abstract

Study Design

Prospective Observational Propensity Score.

Objectives

Randomization may lead to bias when the treatment is unblinded and there is a strong patient preference for treatment arms (such as in spinal device trials). This report describes the rationale and methods utilized to develop a propensity score (PS) model for an investigational device exemption (IDE) trial (NCT03115983) to evaluate decompression and stabilization with an investigational dynamic sagittal tether (DST) vs decompression and Transforaminal Lumbar Interbody Fusion (TLIF) for patients with symptomatic grade I lumbar degenerative spondylolisthesis with spinal stenosis.

Methods

Twenty-five baseline covariates were selected for their expected relationship to patient outcomes or enrollment bias. Subclassification by PS quintiles was used to design a sample of investigational DST patients and TLIF controls with excellent covariate balance in which to estimate causal treatment effects. Additionally, balance in PS covariates was compared to available matching covariates from seven randomized spine IDE trials.

Results

The PS subclassification design resulted in excellent balance across baseline covariates, as evidenced by small standardized mean differences (SMDs) and no significant between-group differences after accounting for the PS design (all P ≥ 0.768). Differences in SMDs among covariates of randomized spine IDE trials were not significant (P = 0.396).

Conclusion

The PS subclassification design achieved excellent covariate balance between DST investigational and TLIF control participants. This PS designed sample shows covariate balance similar to that observed in published studies in which patients were randomized to investigational or control arms.

Clinical trial registered with https://www.clinicaltrials.gov (NCT03115983).

Introduction

The randomized controlled trial (RCT) is considered the gold standard for the evaluation of investigational medical therapies. Randomly allocating participants to experimental and control groups is believed to balance confounding from known and unknown predictors of the outcome to produce an unbiased estimate of the treatment effect. 1 However, a randomized study design may not sufficiently address confounding from risks such as selection and preference bias when blinding is impractical and participants experience a strong aversion to their randomly assigned treatment (i.e., “resentful demoralization”). 2

Investigational trials of surgical spine procedures are uniquely challenging to design and to conduct, which is reflected in a paucity of surgical spine RCTs reported in the literature.3-6 A recent review of the Web of Science Core Collection Database by Muthu et al 4 found just 263 spinal surgery RCTs from the United States published over 30 years from 1990 to 2019, or an average of 9 RCTs per year. Although Muthu et al 4 highlight that spinal surgery RCTs had increased over the last decade of the study period, they and others3,7,8 describe effective blinding and strong participant treatment preference as ongoing challenges.

The addition of an investigational device further increases the complexity of spinal surgery trial designs. Surgeons and clinical care teams are aware of the device implanted, and placebo-controlled procedures raise ethical concerns given the risks of surgery and desire to treat a patient’s pain and dysfunction in a timely manner.7,9 Patients may have strong preferences for a treatment arm and thus be more challenging to recruit to a randomized trial, posing a risk for selection bias and limited generalizability of results.3,10,11 Additionally, outcomes for spinal device studies often include patient-reported outcome measures (PROMs) such as the Oswestry Disability Index.12,13 In the presence of resentful demoralization, biased responses to PROMs are another potential threat to internal validity.2,14 Similarly, purportedly objective outcomes such as reoperations are ultimately influenced by patient reports of pain and dysfunction, and thus may be biased by negative preoperative impressions. When an RCT is impractical, a rigorously designed prospective observational study utilizing propensity score (PS) balancing 15 may be considered. In recent years, PS modeling has become more common in spinal investigational device exemption (IDE) trials for approval by the US Food and Drug Administration (FDA).16-18

As an alternative to randomization, well-designed PS matching studies can permit causal interpretations from comparisons of non-randomized device and control groups. 19 Briefly, the propensity score (PS) is the probability of receiving one treatment vs the other conditional on a set of evidence-based baseline covariates known or suspected to be associated with the clinical outcome of interest (e.g., demographics and disease state). 15 Confounding is reduced by matching treated and control subjects based on their PS, using one of several approaches. 20 This matching often results in good balance in baseline covariates between treatment groups, similar to the results of randomization.21,22 As such, comparisons between treatment groups within the context of the PS design have valid causal interpretations.

The purpose of this report is twofold. Firstly, we describe the approach to leveraging a PS designed comparison to address potential selection bias in a non-randomized IDE trial (NCT03115983 23 ) to evaluate decompression and stabilization with an investigational dynamic sagittal tether (DST) vs decompression with Transforaminal Lumbar Interbody Fusion (TLIF), a common treatment for symptomatic grade I degenerative lumbar spondylolisthesis with spinal stenosis. Secondly, we compare the covariate balance in the PS designed sample to that observed in randomized IDE trials of lumbar spinal devices.

Methods

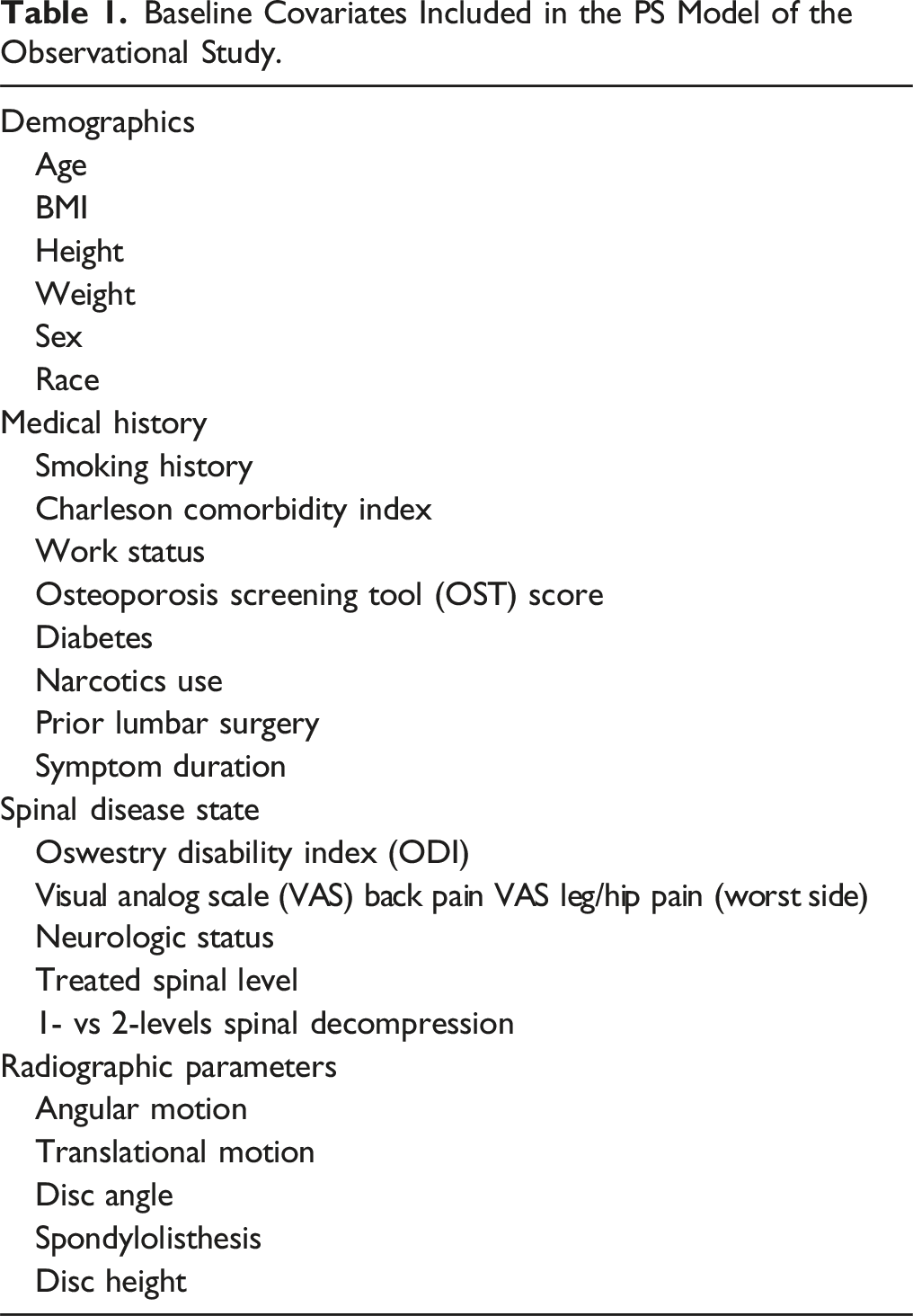

Baseline Covariates Included in the PS Model of the Observational Study.

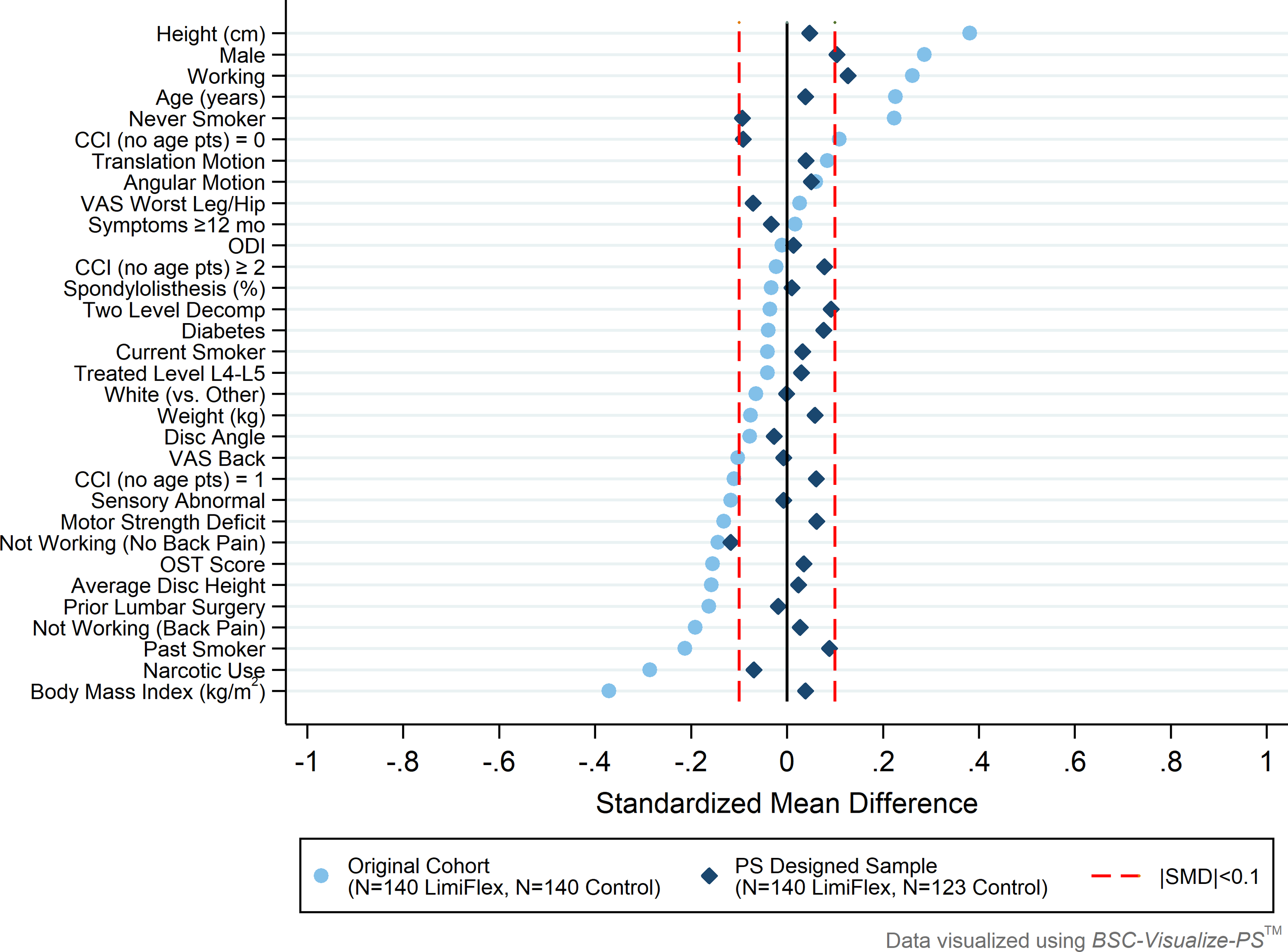

Subclassification based on PS quintiles 29 was used to create a sample of DST investigational participants and TLIF controls well-balanced with respect to the selected covariates within each of the 5 subclasses. The design was implemented using BSC-Design-PS™, which follows a published heuristic from Maislin and Rubin, 30 keeping all investigational participants and trimming (excluding) control patients with PS values least like those in the investigational group. Within each subclass, subjects in either treatment group have similar likelihoods of receiving the study treatment as a function of baseline covariates. Therefore, analyses proceed as if there had been stratified randomization. Group comparisons are made within each of these balanced subclasses and then statistically combined to determine a valid estimate of the average treatment effect on the treated (ATT). 20 The effectiveness of the PS design in improving covariate balance is illustrated in a “Love Plot”, as described by Ahmed et al, 31 generated with BSC-Visualize-PS™.
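
For readers unfamiliar with subclassification, the general idea can be sketched in a few lines of Python. This is a minimal illustration only and is not the BSC-Design-PS™ implementation or the Maislin and Rubin heuristic, whose details are not reproduced here. It assumes, for illustration, that subclass cut points are taken from the quintiles of the treated group's PS distribution, that controls with PS values outside the treated range are trimmed, and that the ATT is estimated by weighting each within-subclass difference in mean outcome by the share of treated subjects in that subclass. The function names `assign_subclasses` and `att_estimate` are hypothetical.

```python
from bisect import bisect_right
from statistics import mean, quantiles


def assign_subclasses(ps_treated, ps_control, n_subclasses=5):
    """Assign each subject to a PS subclass (0 .. n_subclasses - 1).

    Assumption (illustrative): cut points are quantiles of the treated
    group's PS values, and controls outside the treated PS range are
    trimmed (marked None). All treated subjects are kept.
    """
    # n_subclasses - 1 interior cut points from the treated PS distribution
    cuts = quantiles(ps_treated, n=n_subclasses, method="inclusive")
    lo, hi = min(ps_treated), max(ps_treated)
    treated = [bisect_right(cuts, p) for p in ps_treated]
    control = [bisect_right(cuts, p) if lo <= p <= hi else None
               for p in ps_control]
    return treated, control


def att_estimate(y_treated, y_control, sub_treated, sub_control,
                 n_subclasses=5):
    """Combine within-subclass mean differences into an ATT estimate.

    Each subclass difference is weighted by the proportion of treated
    subjects falling in that subclass.
    """
    n_treated = len(y_treated)
    att = 0.0
    for k in range(n_subclasses):
        yt = [y for y, s in zip(y_treated, sub_treated) if s == k]
        yc = [y for y, s in zip(y_control, sub_control) if s == k]
        if not yt:
            continue  # no treated subjects here, so zero ATT weight
        if not yc:
            raise ValueError(f"subclass {k} has treated subjects but no controls")
        att += (len(yt) / n_treated) * (mean(yt) - mean(yc))
    return att
```

Weighting by the treated share of each subclass is what makes the combined estimate an ATT rather than an average effect over the whole population; a production analysis would also need variance estimation, which this sketch omits.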

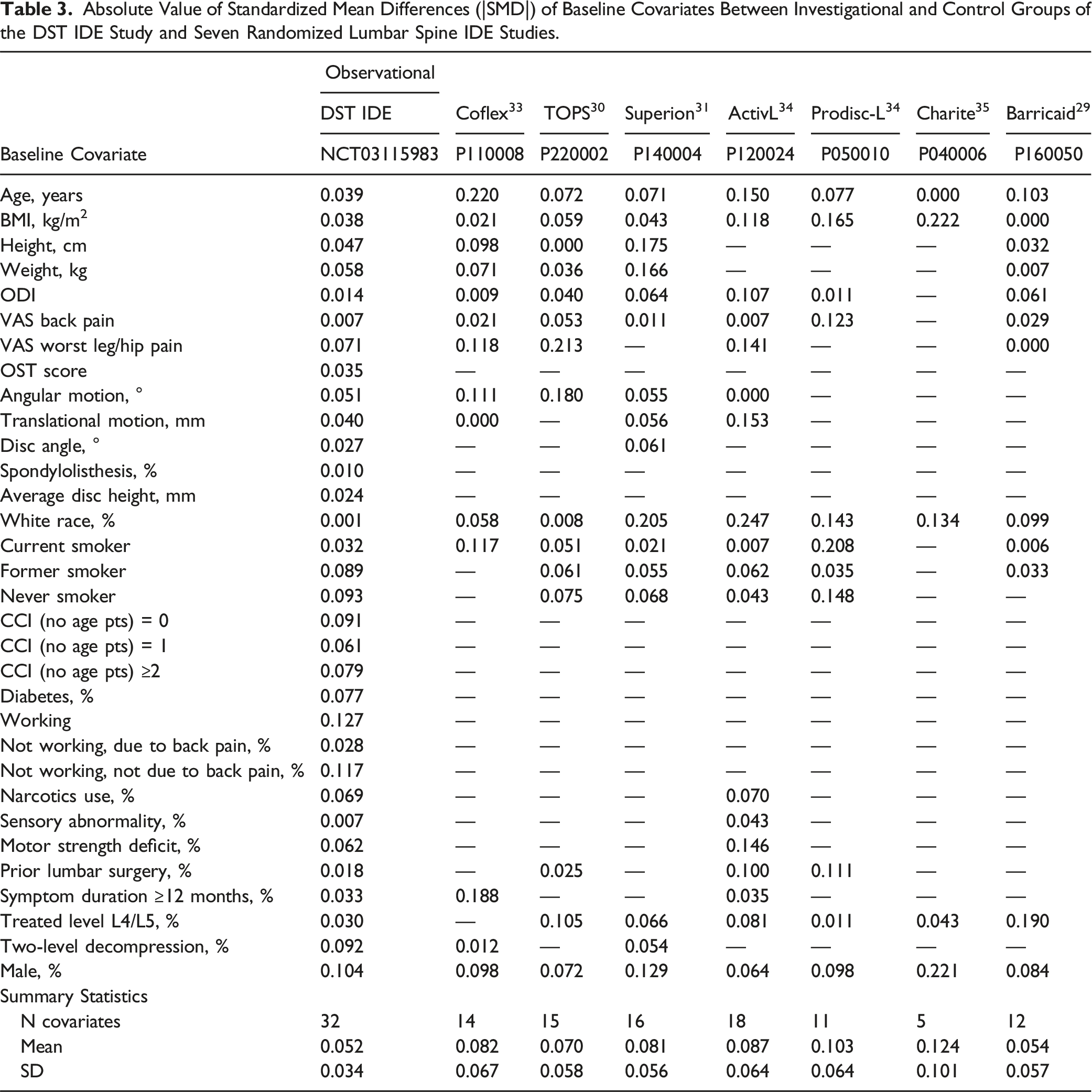

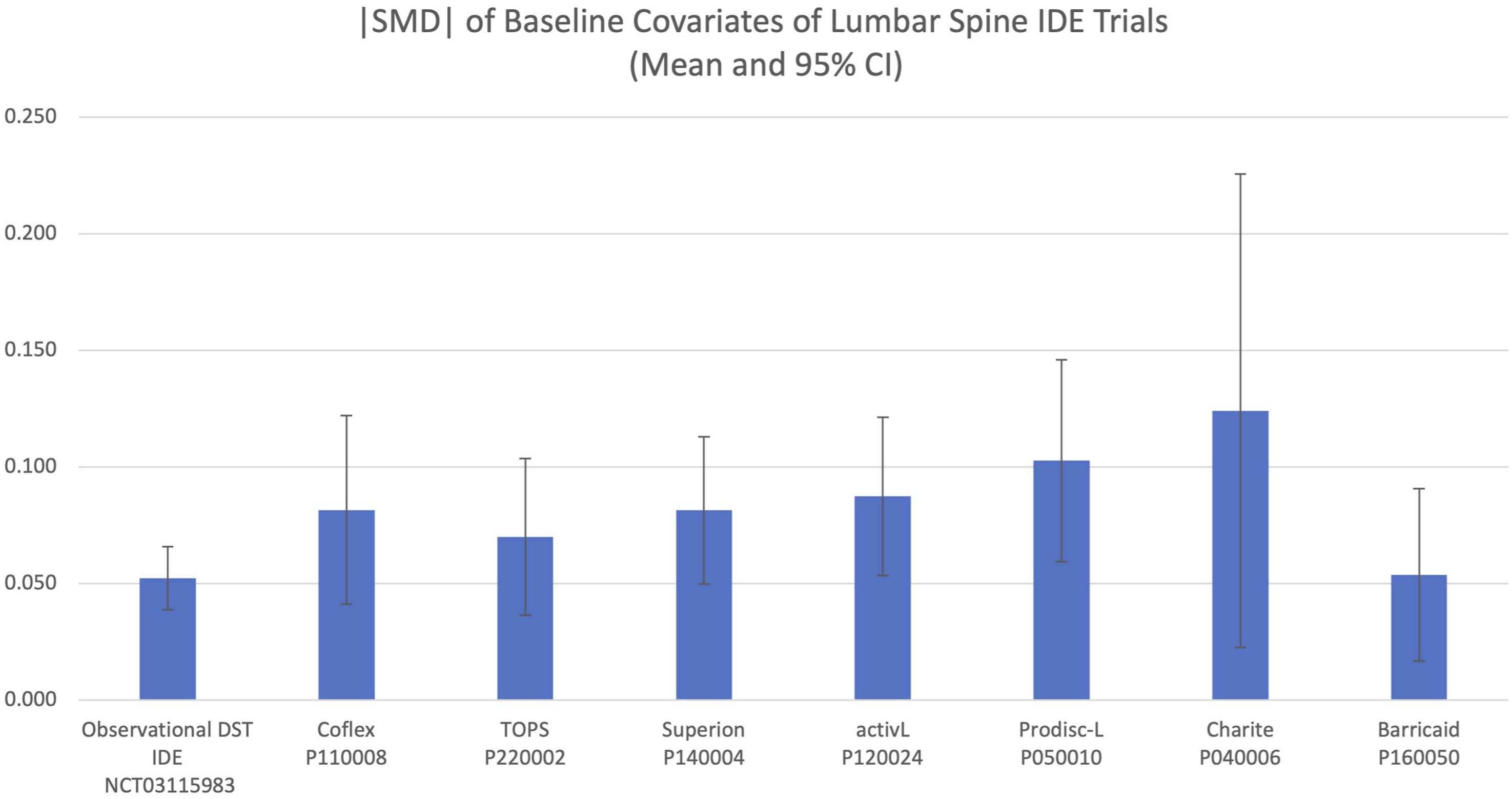

Additionally, the covariate balance achieved through the PS design was compared to the covariate balance reported in seven randomized spine IDE trials.32-38 Covariate data from the randomized IDE trials were obtained from the published summary of safety and effectiveness data (SSED) for each device. The absolute value of the standardized mean difference (|SMD|) was used to quantify the between-group covariate differences of the observational PS design and the seven comparable randomized spinal IDE trials. Comparison of the |SMD| of covariates among the RCTs and PS-balanced trial was performed with 1-way ANOVA as well as Student’s t test.
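
The |SMD| calculation from published summary statistics can be sketched as follows. This is an illustrative sketch under stated assumptions, not the authors' code: it assumes the commonly used definitions in which the difference in means (or proportions) is divided by the square root of the average of the two group variances, and it assumes an unweighted mean when pooling within-subclass SMDs. The function names are hypothetical.

```python
from math import sqrt
from statistics import mean


def smd_continuous(mean_t, sd_t, mean_c, sd_c):
    """Signed SMD for a continuous covariate from group means and SDs."""
    pooled_sd = sqrt((sd_t ** 2 + sd_c ** 2) / 2)
    return (mean_t - mean_c) / pooled_sd


def smd_binary(p_t, p_c):
    """Signed SMD for a binary covariate from group proportions."""
    pooled_sd = sqrt((p_t * (1 - p_t) + p_c * (1 - p_c)) / 2)
    return (p_t - p_c) / pooled_sd


def pooled_smd(within_subclass_smds):
    """Pooled SMD as the (unweighted, by assumption) mean of subclass SMDs."""
    return mean(within_subclass_smds)


# Example with made-up numbers: investigational mean age 52 (SD 10) vs
# control mean age 50 (SD 10).
abs_smd_age = abs(smd_continuous(52, 10, 50, 10))
```

Because only group-level means, SDs, and proportions are needed, the same functions can be applied to covariates reported in an SSED without access to patient-level data.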

Results

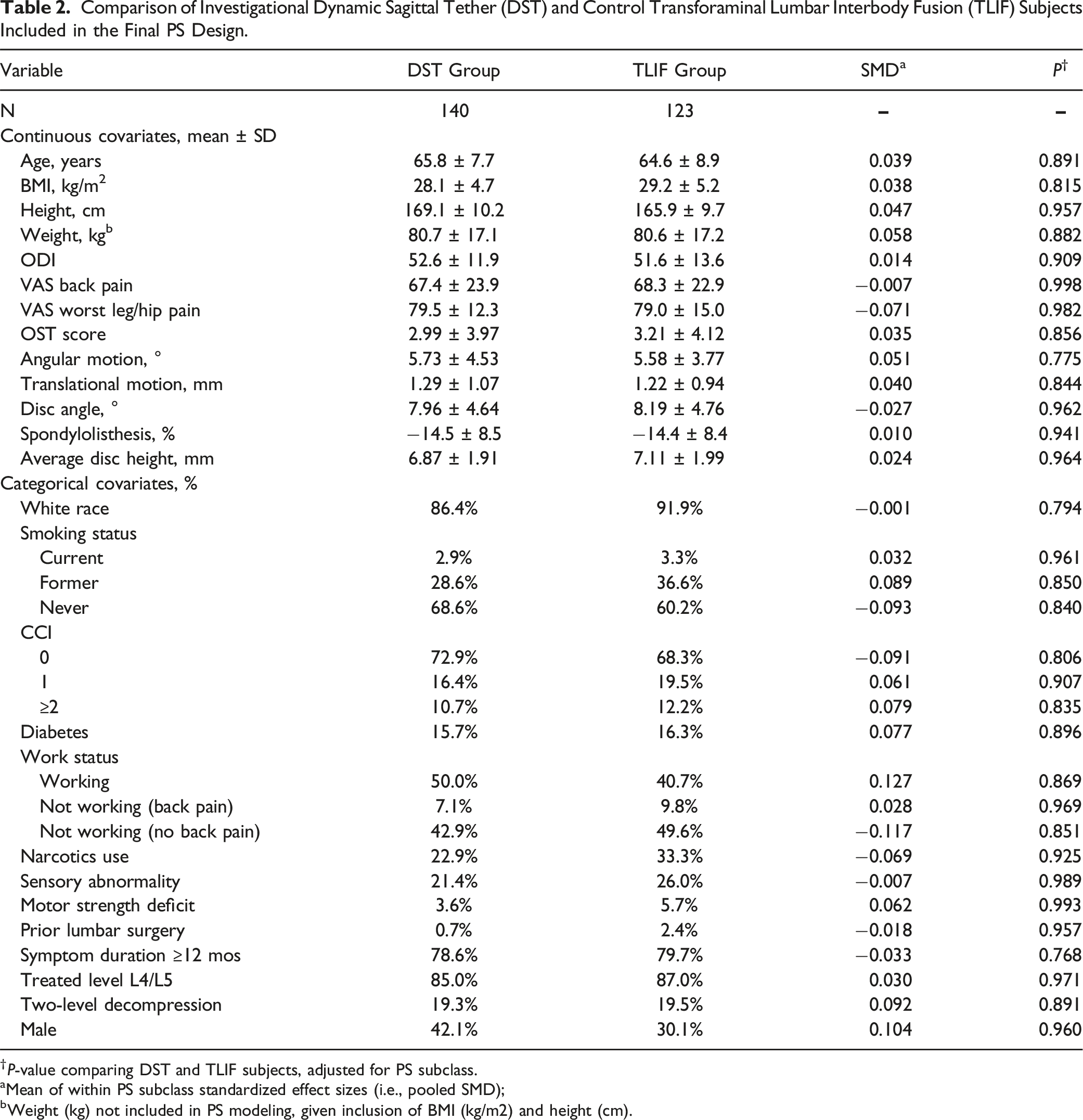

The PS subclassification heuristic resulted in a final PS design including all 140 eligible DST participants and 123 of 140 (88%) eligible TLIF control patients. As illustrated in Figure 1 and detailed in Table 2, excellent balance in included baseline covariates was achieved through the application of the PS design. After controlling for PS subclass, there were no significant covariate differences between investigational and control subjects (all P ≥ 0.768) and pooled |SMD| values across subclasses were close to zero (all |SMD| ≤ 0.127), representing near optimal balance as defined by Austin et al. 21

The balance in baseline covariates achieved in the PS observational design and the covariate balance achieved in seven lumbar spine IDE device trials is presented in Table 3 and summarized in Figure 2. The |SMD| across covariates in the PS designed sample were significantly lower than the average across recent RCTs (P < 0.01), with the PS design showing similar or smaller |SMD| than each of the individual RCTs. The |SMD| did not differ among the 7 RCTs alone (P = 0.396).

Figure 1. Love Plot of pooled standardized mean differences illustrating the improved balance in covariates achieved through the PS design.

Table 2. Comparison of Investigational Dynamic Sagittal Tether (DST) and Control Transforaminal Lumbar Interbody Fusion (TLIF) Subjects Included in the Final PS Design. † P-value comparing DST and TLIF subjects, adjusted for PS subclass. a Mean of within PS subclass standardized effect sizes (i.e., pooled SMD). b Weight (kg) not included in PS modeling, given inclusion of BMI (kg/m²) and height (cm).

Table 3. Absolute Value of Standardized Mean Differences (|SMD|) of Baseline Covariates Between Investigational and Control Groups of the DST IDE Study and Seven Randomized Lumbar Spine IDE Studies.

Figure 2. Mean and 95% CI of |SMD| of available baseline covariates between investigational and control groups from the propensity score-balanced observational DST IDE study and seven randomized lumbar spine IDE device studies.

Discussion

Applying a propensity score subclassification design in this prospective, observational cohort achieved excellent covariate balance between patients receiving DST and TLIF, permitting causal interpretations of future outcomes comparisons between the non-randomized groups. When compared to similar IDE randomized trials, the PS-designed sample shows similar or better balance based on the average |SMD| values among the selected baseline covariates.

While the RCT is often considered the gold-standard study design, there is a dearth of RCTs in the spinal surgery research literature, likely due to practical challenges to blinding, ethical placebo conditions, and participant treatment preference.4,7 Spinal device studies, in particular, may not benefit from an RCT design given the inability to effectively double-blind and the potential for strong patient preference for the treatment condition leading to selection bias or poor recruitment. 3 When an RCT is not practical, an observational trial design incorporating propensity scores presents an alternative approach to creating the covariate balance between control and experimental groups required for causal inference.

The primary weakness of a PS design is the potential for unmeasured confounding. 25 While a PS design creates excellent balance between non-randomized groups on measured covariates (i.e., those included in the PS model), as well as balance in unmeasured covariates to the extent that they are correlated with these measured covariates, there remains the potential for imbalance in covariates not included in the model. Randomization, on the other hand, is expected to achieve balance among both measured and unmeasured covariates across treatment and control groups. 1 To minimize the potential for such unmeasured confounding, following evidence-based practice, 25 the PS design described here included a rich set of covariates that are expected to be associated with study outcomes. These covariates were identified a priori through a collaborative process of statistical and clinical evaluation of current literature with subject matter experts and FDA. The chosen covariates captured a wide array of information, including subject demographics and lifestyle factors, clinical and radiographical characteristics, and surgery-specific characteristics. As shown by Brookhart et al, 25 including covariates associated with study outcome can improve precision without increasing bias, whereas including covariates related to exposure but unrelated to outcome will worsen precision without decreasing bias. To further understand the robustness to unmeasured confounding, metrics such as the E-value 39 could be reported as part of future outcomes analyses. In the seminal paper from VanderWeele and Ding, 39 the E-value is defined as “the minimum strength of association […] that an unmeasured confounder would need to have with both the treatment and outcome to fully explain away a specific treatment-outcome association, conditional on the measured covariates”. Thus, the larger the E-value, the more robust the results of the PS design are to possible unmeasured confounding.
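
As a brief illustration of the E-value, the sketch below uses the closed-form expression for an observed risk ratio RR, E = RR + sqrt(RR × (RR − 1)), with risk ratios below 1 inverted before applying the formula. It is offered as a minimal example rather than as part of the planned analysis; the function name `e_value` is hypothetical, and effect measures other than a risk ratio would first need to be converted to an approximate risk ratio, which is not shown here.

```python
from math import sqrt


def e_value(risk_ratio):
    """E-value for an observed risk ratio (point estimate).

    Risk ratios below 1 are inverted first, so protective and harmful
    associations of the same magnitude give the same E-value.
    """
    if risk_ratio <= 0:
        raise ValueError("risk ratio must be positive")
    rr = risk_ratio if risk_ratio >= 1 else 1 / risk_ratio
    return rr + sqrt(rr * (rr - 1))


# Example: an observed risk ratio of 2.0 gives an E-value of about 3.41.
example = e_value(2.0)
```

In this example, an unmeasured confounder would need to be associated with both treatment and outcome by a risk ratio of roughly 3.4 each, beyond the measured covariates, to fully explain away an observed risk ratio of 2.0.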

We acknowledge that alternative study designs exist that attempt to mitigate the risk for bias from participant treatment preference in unblinded RCTs. An example is the two-stage randomized preference trial, where one cohort is assigned to their preferred treatment while another cohort is randomly allocated to treatment arms. Through this design, the effect of treatment preference on the relationship between exposure and outcome can be estimated. 40 An important drawback to the two-stage trial design is the larger sample size required, suggested to be double a traditional RCT. 41 Additionally, there may be limited patients available who are willing to be randomized, delaying recruitment of the RCT cohort. Our trial design incorporated open label allocation, separate investigational and control sites, plus a PS design to efficiently mitigate the effects of patient preference or other selection bias on the relationship between exposure and outcome. Participants from control sites were never offered the investigational treatment; therefore, there was no other study treatment available to prefer. Those at investigational sites chose the IDE arm, reducing the risk of resentful demoralization affecting responses to subjective outcomes or trial retention. Through the PS design, we were able to achieve balance across numerous baseline covariates between the control and investigational groups, limiting the risk of selection bias from inherent differences in patients who preferred the investigational treatment and those who received the control.

Finally, we note that while the PS design was shown to have similar or better covariate balance than that achieved across 7 RCT studies available for comparison, the publicly available covariates were not the same across all studies as these data were obtained from the Summary of Safety and Effectiveness Data documents published by FDA. There may have been unreported or unmeasured covariates from the RCT studies that demonstrated significantly better balance than those of our study (i.e., smaller |SMD|). However, the very small |SMD| achieved across numerous relevant covariates through the PS design makes it unlikely that the existence of these covariates would result in meaningfully better balance within the RCTs.

In conclusion, the PS design is efficient and allows for causal interpretations from treatment group comparisons, while potentially avoiding patient preference bias and “resentful demoralization” sometimes seen in control groups of randomized trials. Propensity score designs should be considered a rigorous study design alternative when randomization is impractical, unethical, or may introduce bias.

Footnotes

Author Contributions

Greg Maislin: Study design, analysis, writing and editing. Brendan Keenan: Study design, analysis, writing and editing. Todd F. Alamin: Study design and editing. Louie Fielding: Study design, analysis, writing and editing. Ashley Scherman: Writing and editing. Robert Hachadoorian: Study design, analysis, editing. Clifford Pierre: Editing. Rick C Sasso: Study design and editing. William F Lavelle: Study design and editing. Jens Chapman: Editing.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Author Jens Chapman is the editor-in-chief of the Global Spine Journal. Author Todd Alamin received consulting fees, and grant and research support from Empirical Spine, Inc. Todd Alamin also holds stock in Empirical Spine, Inc. Author Louie Fielding is employed by Empirical Spine, Inc. Author Ashley Scherman received consulting fees from Empirical Spine, Inc for medical writing. Authors Rick Sasso, William Lavelle, and Jens Chapman received grant and research support from Empirical Spine, Inc. Biomedical Statistical Consulting® LLC provided paid biostatistical consulting services for this project and authors Greg Maislin, Brendan T Keenan, and Robert Hachadoorian received salary from BSC® for this work. Author Clifford Pierre has no conflicts to disclose. Authors did not receive compensation for authorship.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported in full by Empirical Spine, Inc.