Abstract

Study Design:

A quality-control Internet-based study using recognized quality scoring systems.

Objective:

The aim of the study is to evaluate the quality, content and readability of online information on kyphosis.

Methods:

The 3 most frequently used search engines were identified and a search for “Kyphosis” was made in each. Two reviewers categorized the websites by type, and the quality of each was assessed using well-known scoring systems, including the DISCERN score, the JAMA benchmark, the Global Quality Score (GQS), and the kyphosis-specific content (KSC) score. The Flesch-Kincaid grade level (FKGL) and Flesch-Kincaid reading ease score (FKRS) were used to assess readability. The quality of the information was also evaluated according to the presence or absence of the HONcode.

Results:

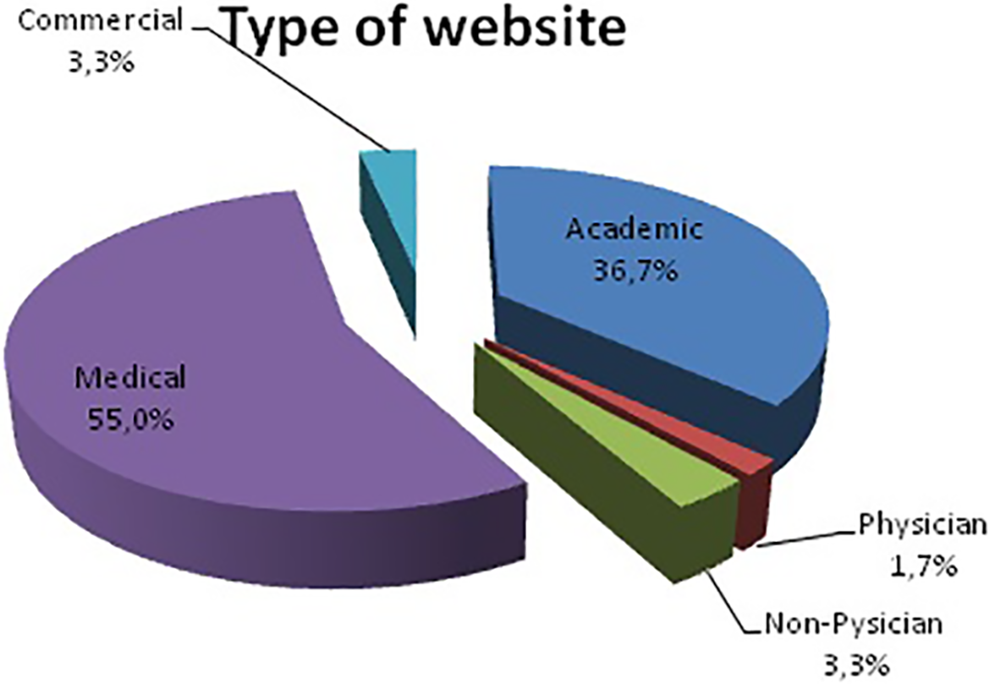

Sixty unique websites were identified and analyzed. The distribution of the categories was 33 (55%) medical, 22 (36.7%) academic, 2 (3.3%) non-physician, 2 (3.3%) commercial and 1 (1.7%) physician. There was no statistically significant difference between the sources in terms of DISCERN, JAMA, GQS and KSC scores (P > 0.05). However, the FKGL score of the academic-based websites was significantly higher than that of the medical-based websites (P = 0.007). There was also no statistically significant difference in the DISCERN, JAMA, GQS, KSC, FKRS and FKGL scores of the websites according to the presence of the HONcode (P > 0.05).

Conclusion:

Information about kyphosis on the Internet is of limited quality and low information value. The readability of the online information in our results showed a significantly higher reading level than the sixth grade level recommended by the AMA and NIH.

Introduction

In recent years, the Internet has become a widely used communication tool and a vital information source. The growth of the Internet has also increased access by patients to online healthcare information.1,2 This trend has led many medical practitioners to publish internet-based content or include it in patient interactions and applications.3,4 In many cases, information referral websites are a good source of support if they contain information that patients need, helping them learn about their illness and treatment options. 5

However, there are concerns about the accuracy and quality of the information available on the Internet due to a lack of professional peer review, regulation of content, or publication.6-8 The Internet’s increased role as an easily accessible medical reference resource for patients and families highlights the importance of ensuring access to accurate and understandable online health information. In some cases, online information may present misinformation and reflect biased perspectives, making outpatient counselling more difficult. 9 A 2018 study conducted by the Pew Research Center showed that 9 out of 10 American adults use the Internet and 72% research their health-related problems online. 10 Recent studies reported that 86% of those who use the Internet to evaluate health-related information believe that health information is reliable, and 64% said internet information influences their healthcare decisions. 11

Although a powerful research tool for both physicians and patients, the Internet has some limitations. The information it provides may be factually inaccurate, reflect industry bias, and lack medical professionals’ peer review. In addition, many sources are inappropriately written at reading levels higher than the eighth grade.12-17 Therefore, the Internet and its resources can have detrimental effects on patient psychology; because many people do not recognise the inaccuracies of some web-based resources, the physician-patient relationship can be negatively affected. 18

In internet-based studies of spinal diseases, the authors observed that the clinical information available on the Internet was of generally poor quality.9,19-27 To our knowledge, no published studies have evaluated internet resources on the spinal disease kyphosis. Therefore, this study aimed to assess the content, quality, and readability of information available online about kyphosis.

Materials and Methods

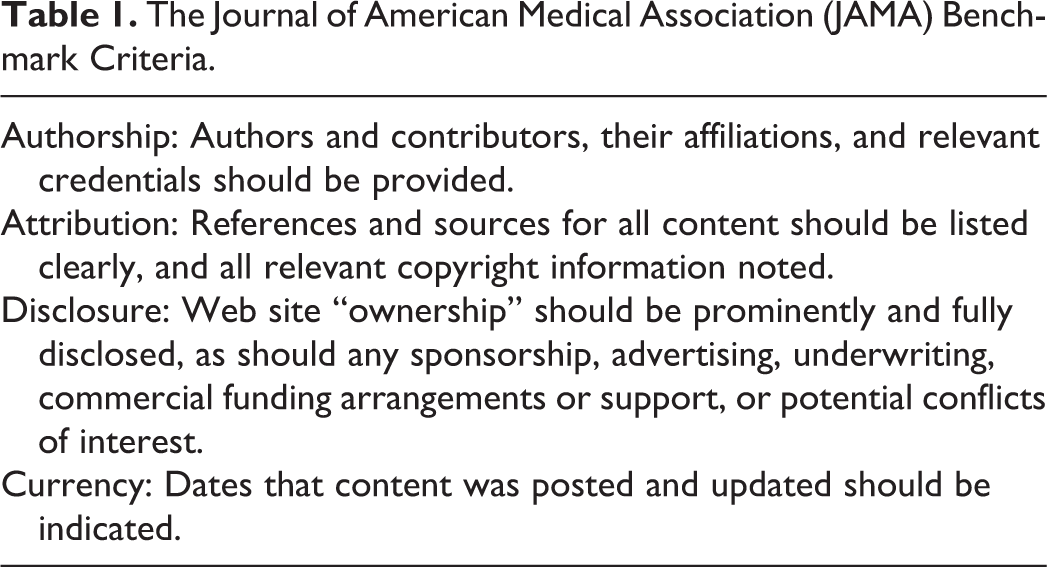

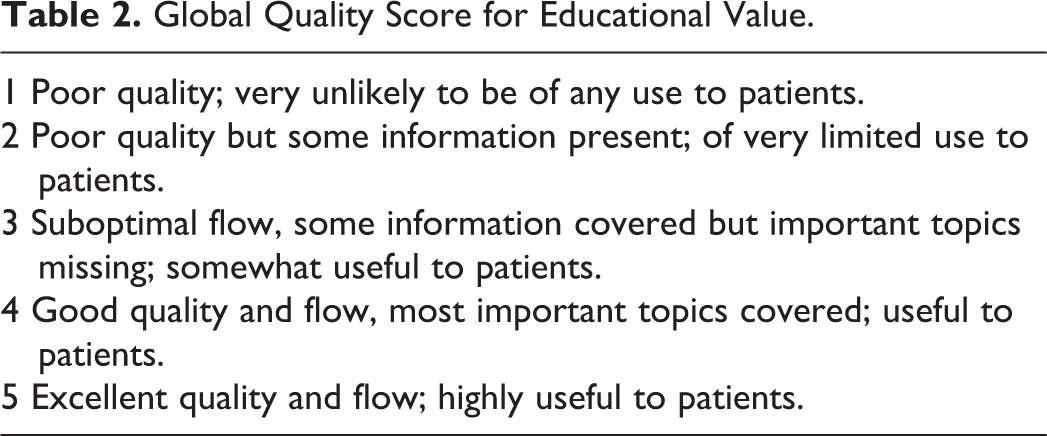

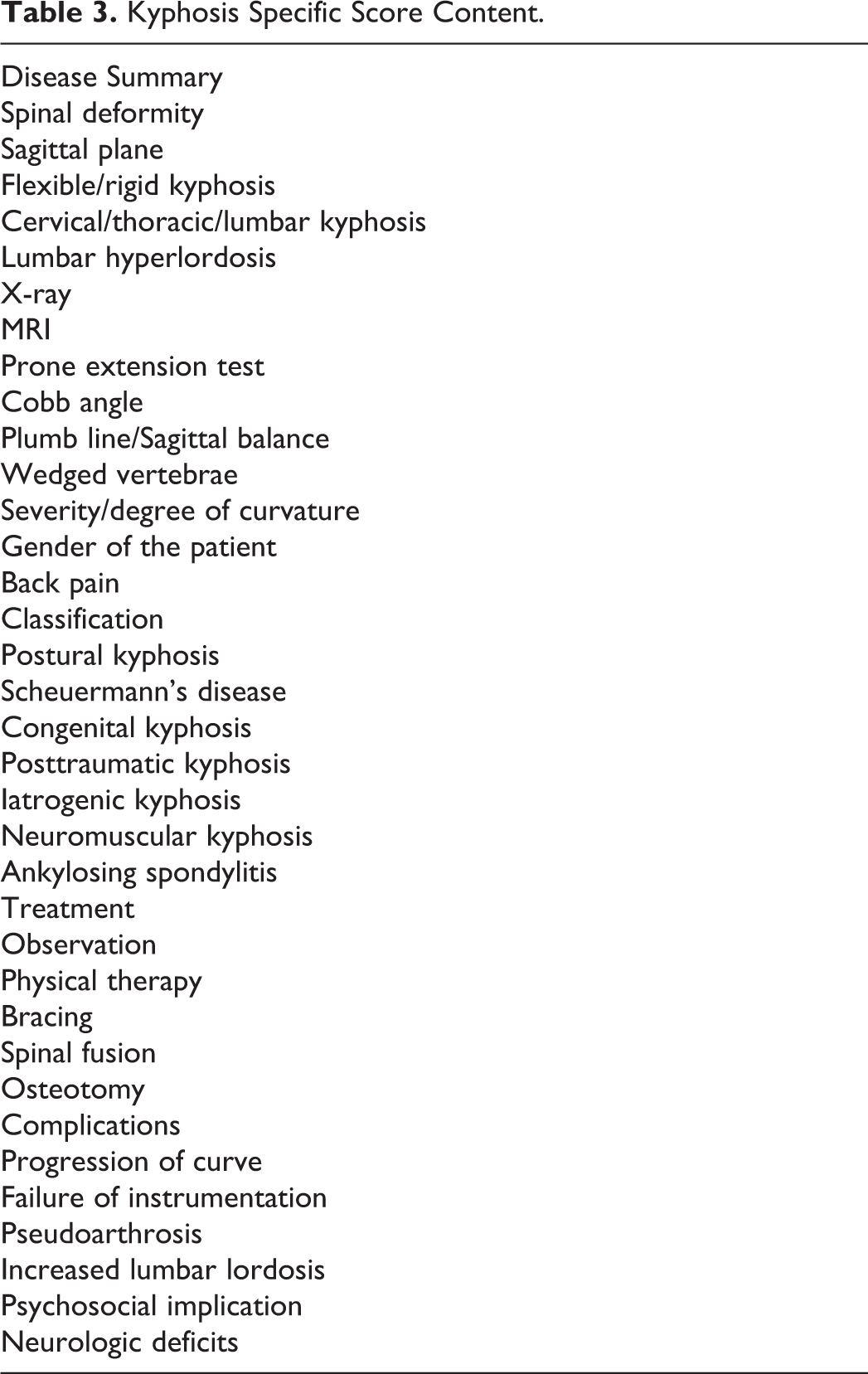

As in previous studies,22,28-30 screening for this study was conducted by typing “kyphosis” into the Google, Yahoo! and Bing search engines. As of December 2020, Google was the dominant search engine with a market share of 69.80%, followed by Bing (13.31%) and Yahoo! (2.11%). 31 All searches were conducted on the same day (December 13, 2020), and the cookies of all browsers were cleared before searching. The top 50 websites from each search engine were included in the study. Duplicate or inaccessible websites were excluded, and 60 unique websites were identified. The websites’ contents and evaluation scores were determined independently by 2 authors, who closely examined each website. After evaluation, the websites were categorised as 1) academic, 2) physician, 3) non-physician (allied health professionals: occupational therapists, physiotherapists, chiropractors, and alternative medical providers), 4) medical (health-related websites) and 5) commercial. All unique websites were evaluated using the DISCERN instrument for measuring the reliability of written health information, the Journal of the American Medical Association (JAMA) benchmark (Table 1), the Global Quality Score (GQS) (Table 2), the Flesch-Kincaid Readability Test Tool (FK), the Kyphosis-Specific Content (KSC) score (Table 3) and the presence or absence of the Health On the Net (HON) Foundation seal.
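The pooling and de-duplication step described above can be sketched as follows. This is only an illustration: the study does not state how duplicates were detected, so the `normalise` rule (compare host and path, ignoring scheme, a leading `www.` and a trailing slash) and both function names are assumptions, and checking whether a site is accessible is left out.

```python
from urllib.parse import urlsplit


def normalise(url: str) -> str:
    """Reduce a URL to host + path so trivially different links compare equal.

    ASSUMPTION: this matching rule is hypothetical, not the authors' procedure.
    """
    parts = urlsplit(url.strip())
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    return host + parts.path.rstrip("/")


def unique_sites(results_by_engine: dict, top_n: int = 50) -> list:
    """Pool the first `top_n` results of every engine and drop repeats."""
    seen = set()
    unique = []
    for urls in results_by_engine.values():
        for url in urls[:top_n]:
            key = normalise(url)
            if key not in seen:
                seen.add(key)
                unique.append(url)
    return unique


# Placeholder URLs, not sites from the study.
example = {
    "google": ["https://www.example.org/kyphosis/", "https://example.com/a"],
    "bing": ["http://example.org/kyphosis", "https://example.net/b"],
}
pooled = unique_sites(example)
```

With three engines and 50 results each, at most 150 links enter the pool; the 60 unique websites reported here are what remained after exclusions.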

The Journal of the American Medical Association (JAMA) Benchmark Criteria.

Global Quality Score for Educational Value.

Kyphosis-Specific Content Score.

The DISCERN instrument was created in Oxford, UK, to assess written health information. It consists of 16 questions. 32 The first 8 questions focus on the publication’s reliability, and the next 7 questions address specific details of treatment options. The last question asks the user for an overall rating of the website. Each question is scored from 1 to 5, so the minimum total score is 16 and the maximum total score is 80. Websites were classified according to their total scores as follows: “excellent” (63 to 80), “good” (52 to 61), “medium” (39 to 50), “poor” (28 to 38) or “very poor” (<27).
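A minimal sketch of the DISCERN totalling and banding is given below. The total is simply the sum of 16 item scores, each from 1 to 5, which bounds it between 16 and 80. The band cut-offs in `BANDS` are an assumption: they use contiguous lower bounds (63, 51, 39, 27, 16) so that every possible total maps to exactly one label, whereas the ranges as printed above do not assign 27, 51 or 62. The function names are illustrative.

```python
DISCERN_ITEMS = 16  # number of questions, each scored from 1 to 5

# (lower bound, label); ASSUMPTION: contiguous bands, see the note above.
BANDS = [
    (63, "excellent"),
    (51, "good"),
    (39, "medium"),
    (27, "poor"),
    (16, "very poor"),
]


def discern_total(item_scores):
    """Sum the 16 item scores after checking count and range."""
    if len(item_scores) != DISCERN_ITEMS:
        raise ValueError("DISCERN needs exactly 16 item scores")
    if any(not 1 <= s <= 5 for s in item_scores):
        raise ValueError("each item score must be between 1 and 5")
    return sum(item_scores)


def discern_band(total):
    """Map a total (or a mean of totals) to a quality label."""
    if not 16 <= total <= 80:
        raise ValueError("total must lie between 16 and 80")
    for lower, label in BANDS:
        if total >= lower:
            return label
```

For example, `discern_band(33.12)` returns `"poor"`, which is the same band under both the printed ranges and the assumed contiguous ones.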

The JAMA benchmark evaluates online information quality using 4 criteria (authorship, attribution, disclosure, and currency). 18 Authorship requires details about authors or contributors to be provided on the website, along with their credentials and links. Attribution requires that all content references and resources be identified, along with all relevant copyright information. Disclosure requires specific and complete disclosure of website ownership, including commercial and financial ties and potential conflicts of interest. Finally, currency requires that the website’s content be dated at the initial upload and at subsequent updates. One point was awarded for each criterion met, resulting in scores ranging from 0 to 4, with 4 indicating the highest quality.
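The JAMA benchmark reduces to counting how many of the four criteria a website meets. A minimal sketch, with a hypothetical `JamaBenchmark` class introduced only for illustration:

```python
from dataclasses import dataclass


@dataclass
class JamaBenchmark:
    """One reviewer's yes/no judgement on each JAMA criterion."""
    authorship: bool
    attribution: bool
    disclosure: bool
    currency: bool

    def score(self) -> int:
        # One point per criterion met, so the result is between 0 and 4.
        return sum([self.authorship, self.attribution,
                    self.disclosure, self.currency])


# A site that names its authors and dates its content but gives
# no references and no ownership disclosure scores 2.
site = JamaBenchmark(authorship=True, attribution=False,
                     disclosure=False, currency=True)
site_score = site.score()
```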

The websites were also rated using the GQS, which uses a 5-point scale to rate the website’s overall quality. The score reflects the quality of the website’s information and how useful the reviewer believes it will be to the patient. 33

The Flesch-Kincaid Grade Level (FKGL) test identifies the level of education a person needs to understand a particular text and ranges from fifth grade to college graduate level (5 was the lowest level and 12 was the highest). The Flesch-Kincaid Reading Ease (FKRS) test was used to rate the readability of the text. The score indicates the approximate level of education a person needs to read a given text easily. The ease of comprehension of a document is indicated by a number between 0 and 100. Scores at or near 100 mean the document is straightforward to read, while scores at or near 0 mean the document is highly complex and challenging to understand. To obtain FK scores, each website’s text was transferred to a Microsoft Word (Redmond, Washington) document, a technique used in previous studies.34-36
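For reference, both readability measures are functions of average sentence length and average syllables per word. The sketch below uses the standard published Flesch-Kincaid and Flesch Reading Ease formulas. It is not the Microsoft Word implementation used in this study, whose word, sentence and syllable counting rules may differ, so the counts are taken as inputs rather than derived from text.

```python
def fk_grade_level(words: int, sentences: int, syllables: int) -> float:
    """Flesch-Kincaid Grade Level from raw counts (standard formula)."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


def flesch_reading_ease(words: int, sentences: int, syllables: int) -> float:
    """Flesch Reading Ease from raw counts; higher means easier to read."""
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


# Illustrative counts only: 100 words, 5 sentences, 150 syllables,
# i.e. 20 words per sentence and 1.5 syllables per word.
grade = fk_grade_level(100, 5, 150)
ease = flesch_reading_ease(100, 5, 150)
```

Longer sentences and more syllables per word raise the grade level and lower the reading ease, which is why the two scores move in opposite directions.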

Each website was then evaluated for KSC, a predefined kyphosis-specific content score developed to assess YouTube videos about kyphosis by Erdem and Karaca in 2018. 37 Under the KSC scoring system, 32 terms or topics were given one point if they were written on the website. KSC scoring was done separately by the 2 authors of this study. Websites with different scores were re-evaluated until a consensus was reached.
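The KSC tally and the consensus check can be sketched as below. In this study the scoring was done by human reviewers, not by software, and the 32 KSC terms are not reproduced here, so the term list in the example is a placeholder and the simple substring match is an assumption that would miss synonyms and paraphrases.

```python
def ksc_score(page_text: str, terms) -> int:
    """Count how many distinct checklist terms occur in the page text.

    ASSUMPTION: case-insensitive substring matching stands in for a
    reviewer's judgement that a topic is covered.
    """
    text = page_text.lower()
    return sum(1 for term in {t.lower() for t in terms} if term in text)


def needs_consensus(scores_a, scores_b):
    """Indices of websites where the two reviewers' scores differ."""
    return [i for i, (a, b) in enumerate(zip(scores_a, scores_b)) if a != b]


# Placeholder terms, NOT the actual KSC checklist.
placeholder_terms = ["bracing", "surgery", "physiotherapy"]
example_score = ksc_score("Kyphosis may need bracing or surgery.",
                          placeholder_terms)
to_review = needs_consensus([14, 9, 20], [14, 11, 20])
```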

We also determined whether websites were certified under the Health On the Net code (HONcode). 38 The HONcode is the most widely used online credibility code for medical information. The HON Foundation, a Swiss-based non-profit organisation, was established in 1995 to improve the quality of internet-based health information. The foundation provides a code of conduct for websites that acknowledge its principles and adhere to its standards; the websites are also subject to random audits for compliance.

Statistics

The IBM SPSS Statistics 22 (SPSS IBM, Turkey) program was used to evaluate the study’s findings. The normality of the parameters’ distributions was assessed using the Kolmogorov-Smirnov and Shapiro-Wilk tests. Based on that evaluation, we found that the parameters were not normally distributed. In addition to descriptive statistical methods (mean, standard deviation, median and frequency), the Kruskal-Wallis test was used to compare the categories. Dunn’s test was used to identify the group that caused the difference. The Mann-Whitney U test was used to compare the scores based on the presence of HON certification. Spearman’s rho correlation analysis was used to examine the relationships between the scores. The intraclass correlation coefficient (ICC), with its lower and upper limits, was calculated to determine inter-observer agreement levels. Significance was evaluated at the P < 0.05 level.
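Because the scores were not normally distributed, the correlation analysis is rank-based. As an illustration of what Spearman’s rho measures, the sketch below computes it as the Pearson correlation of tie-averaged ranks. It is a plain-Python teaching example, not the SPSS procedure used in this study, and it does not compute a P value.

```python
from math import sqrt


def average_ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        rank = (i + j) / 2 + 1  # mean of positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = rank
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation computed on the ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equally long samples of at least 2 values")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sqrt(sum((a - mx) ** 2 for a in rx))
    sy = sqrt(sum((b - my) ** 2 for b in ry))
    if sx == 0 or sy == 0:
        raise ValueError("rho is undefined when one sample is constant")
    return cov / (sx * sy)
```

A rho near +1 means two scores rank the websites in almost the same order, a rho near -1 means the orders are reversed, and a rho near 0 means no monotonic relationship.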

Results

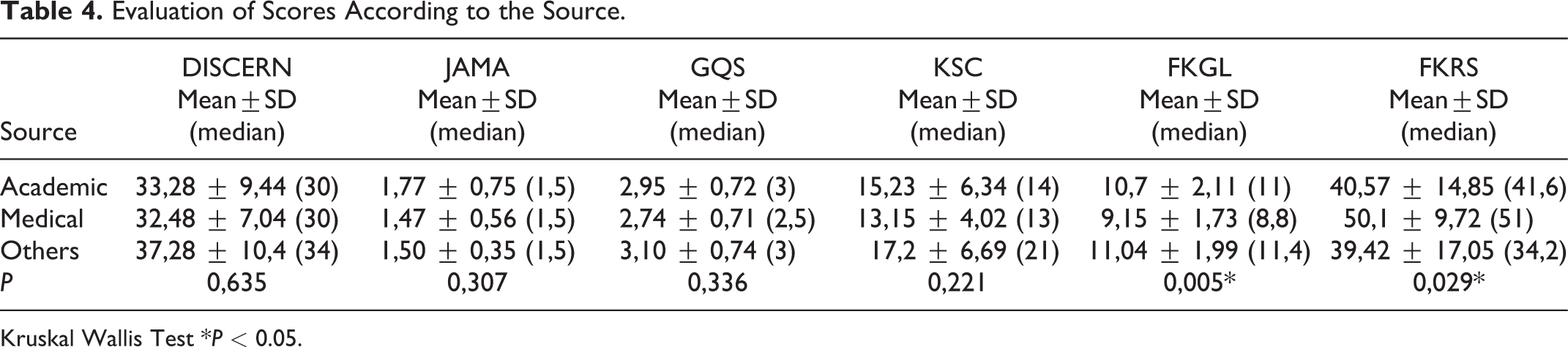

Websites were categorised according to their sources. The distribution of the categories was 33 (55%) medical, 22 (36.7%) academic, 2 (3.3%) non-physician, 2 (3.3%) commercial and 1 (1.7%) physician (Figure 1). The DISCERN, JAMA, GQS, FKGL, FKRS and KSC scores are summarised in Table 4.
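The reported percentages follow directly from the category counts out of 60 websites. A short check, with a hypothetical helper name:

```python
def category_shares(counts: dict) -> dict:
    """Percentage of the total for each category, to one decimal place."""
    total = sum(counts.values())
    return {name: round(100 * n / total, 1) for name, n in counts.items()}


# Counts as reported in the Results.
counts = {
    "medical": 33,
    "academic": 22,
    "non-physician": 2,
    "commercial": 2,
    "physician": 1,
}
shares = category_shares(counts)
```

The counts sum to 60, matching the number of unique websites analysed.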

Distribution of websites according to sources.

Evaluation of Scores According to the Source.

Kruskal Wallis Test *P < 0.05.

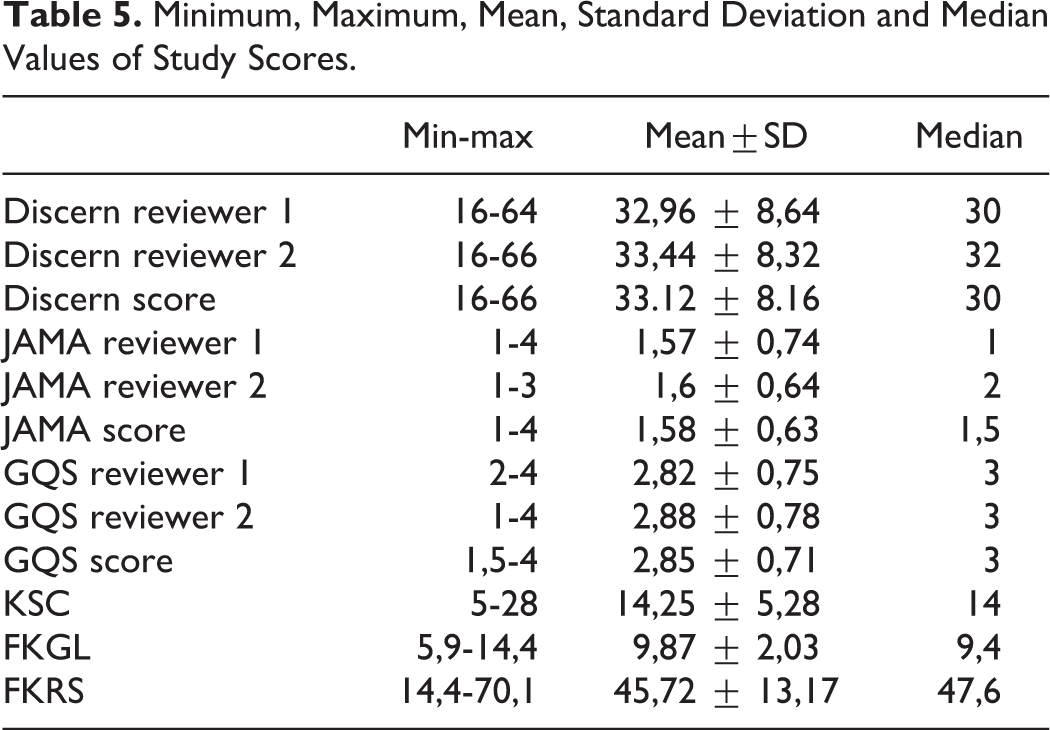

Observer 1’s DISCERN scores varied between 16 and 64 with a mean of 32.96 ± 8.64 and a median of 30. Observer 2’s DISCERN scores ranged from 16 to 66, with a mean of 33.44 ± 8.32 and a median of 32. The average DISCERN score was 33.12 ± 8.16, with a median of 30 (Table 5). For the DISCERN evaluation, the level of agreement between the 2 observers was 87.9%.

Minimum, Maximum, Mean, Standard Deviation and Median Values of Study Scores.

Observer 1’s JAMA scores varied between 1 and 4, with a mean of 1.57 ± 0.74 and a median of 1. Observer 2’s JAMA scores ranged from 1 to 3, with a mean of 1.6 ± 0.64 and a median of 2. The average JAMA score was 1.58 ± 0.63, and the median was 1.5 (Table 5). For the JAMA evaluation, the level of agreement between the 2 observers was 78.9%.

Observer 1’s GQS scores varied between 2 and 4, with a mean of 2.82 ± 0.75 and a median of 3. Observer 2’s GQS scores ranged from 1 to 4, with a mean of 2.88 ± 0.78 and a median of 3. The average GQS score was 2.85 ± 0.71, with a median of 3 (Table 5). For the GQS evaluation, the level of agreement between the 2 observers was 85.3%.

The FKGL scores ranged from 5.9 to 14.4, with an average of 9.87 ± 2.03 and a median of 9.4. The FKRS scores varied between 14.4 and 70.1, with an average of 45.72 ± 13.17 and a median of 47.6 (Table 5).

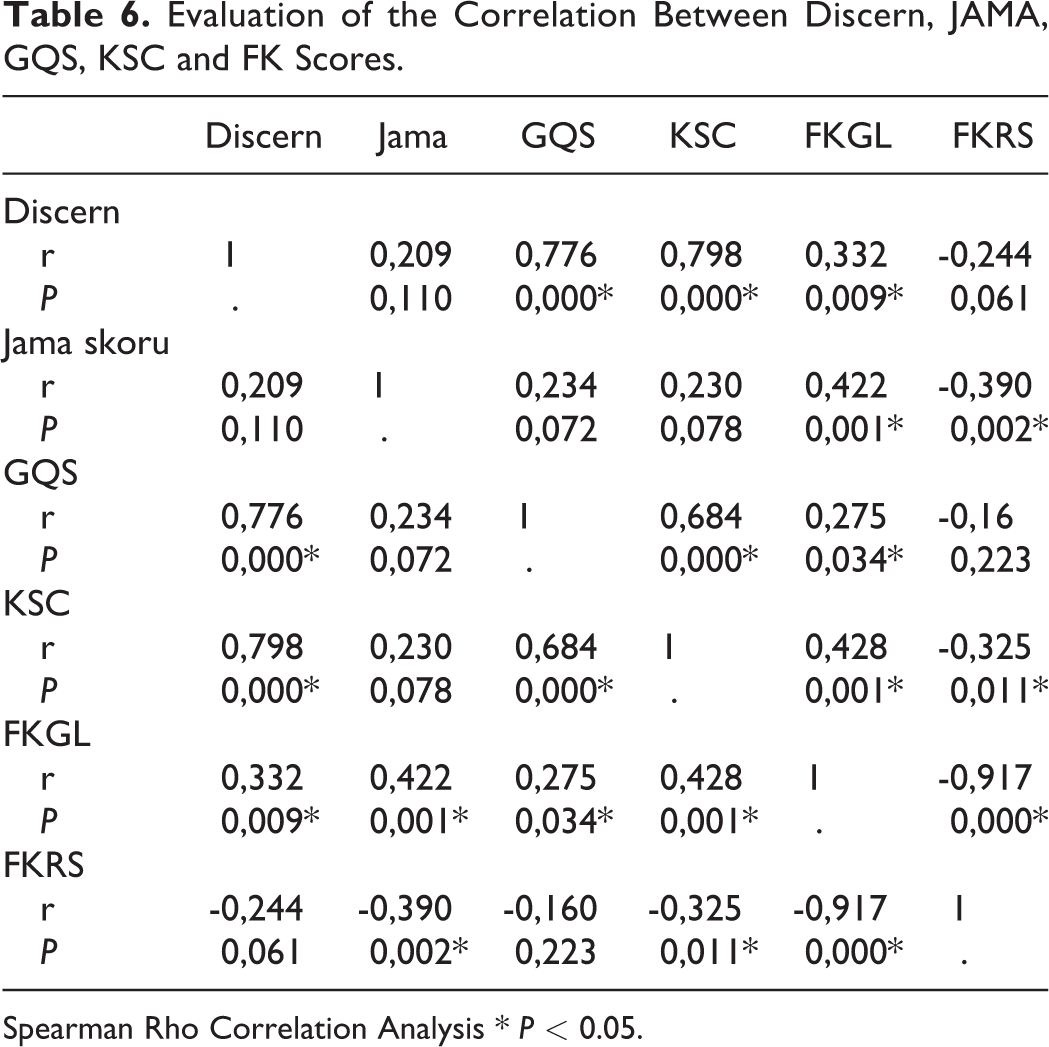

The KSC scores ranged from 5 to 28, with an average of 14.25 ± 5.28 and a median of 14 (Table 5). The correlation between the scores is shown in Table 6.

Evaluation of the Correlation Between Discern, JAMA, GQS, KSC and FK Scores.

Spearman Rho Correlation Analysis * P < 0.05.

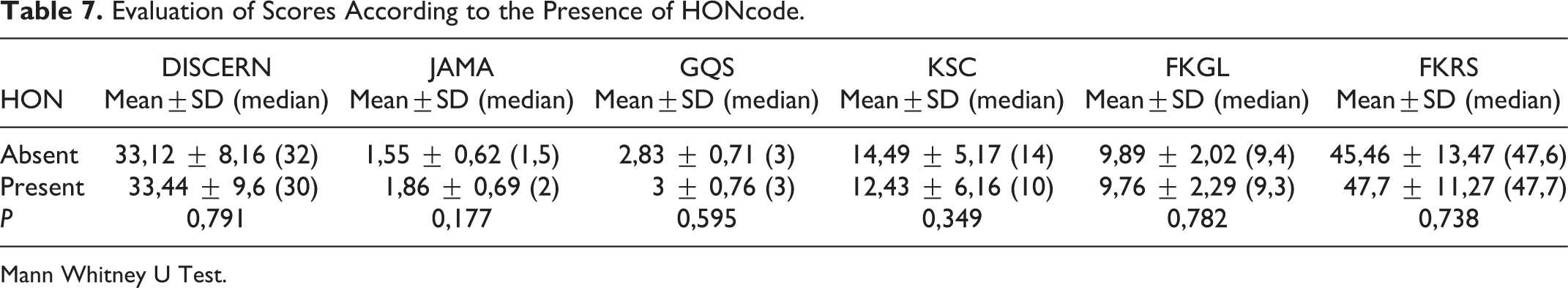

Seven (11.7%) of the websites were certified by the HONcode. There was no statistically significant difference in the DISCERN, JAMA, GQS, KSC, FKRS and FKGL scores of the websites according to the presence of the HONcode (P > 0.05) (Table 7).

Evaluation of Scores According to the Presence of HONcode.

Mann Whitney U Test.

There was no statistically significant difference between the sources in terms of DISCERN, JAMA, GQS and KSC scores (P > 0.05) (Table 4). However, a review of the FKGL and FKRS scores revealed that the FKGL score of the academic-based websites was significantly higher than that of the medical-based websites (P = 0.007), and their FKRS score was significantly lower than that of the medical-based websites (P = 0.038).

Discussion

Today, the Internet is the fastest growing and most popular source of information. Internet use grew steadily to 4.9 billion users by December 2020; 63.2% of the world population uses the Internet, and usage increased by approximately 1266% between 2000 and 2020. 39 Stellefson et al. noted that 8 out of 10 Internet users have searched online for health information at least once, making it the third most popular Web activity after using search engines and checking emails. 40 The Internet is a convenient information resource and a potential health education tool for disease management. Free access to such a large pool of information has changed the doctor-patient relationship. Self-informed patients are now more involved in medical decision-making. Despite the lack of professional and systematic review of internet content, patients and families often turn to online health information sources.

Sometimes, however, this information can be challenging to understand, inaccurate or misleading. Additionally, many patients cannot distinguish information designed to sell products and services from the objective information of more neutral sources. Internet access for patients can adversely affect the patient-physician relationship, and the collaborative decision-making process between physicians and self-trained patients, through the patient’s resistance to the doctor’s advice. Moreover, numerous studies have reported that the accuracy and quality of health-related information available on the Internet are low.24,28-30 Therefore, it is essential to evaluate online resources and help patients find high-quality, complete and readable websites, because low-quality information may adversely affect the relationship between patients and physicians. 30 Our findings in this study showed that websites easily found by someone searching for kyphosis information are of low quality when measured with standard assessment tools. These results are consistent with previous orthopaedic studies.18,32

Many studies have shown the low quality of internet-based information available for various medical conditions.18,41-44 Despite these deficiencies, patients still use the Internet. The primary problems with internet-based information are the lack of regulations and control mechanisms. Any person or authority can create a webpage, with no checks on the accuracy of its information. From the patient’s perspective, it is difficult to determine what information is reliable. One of the significant challenges facing the medical profession today is managing patients who read websites containing inaccurate or low-quality information. This can negatively affect doctor-patient dynamics. A physician needs to be aware of the information available to patients and learn what kind of online research patients are currently doing on a particular topic.

Moreover, many patients are interested in additional information. If a doctor can direct the patient to the right place, this can increase the patient’s knowledge and improve patient outcomes and satisfaction. The lack of quality information available to patients may encourage doctors and academic institutions to create their own websites.

The average DISCERN score was 33.12 ± 8.16. Consistent with other studies on the spine, it showed that website information quality is poor.9,21-23,27 We believe the reason for the low average score could be that websites do not adequately explain the purpose of the online content or the target audience and offer no reference materials to support their explanations.

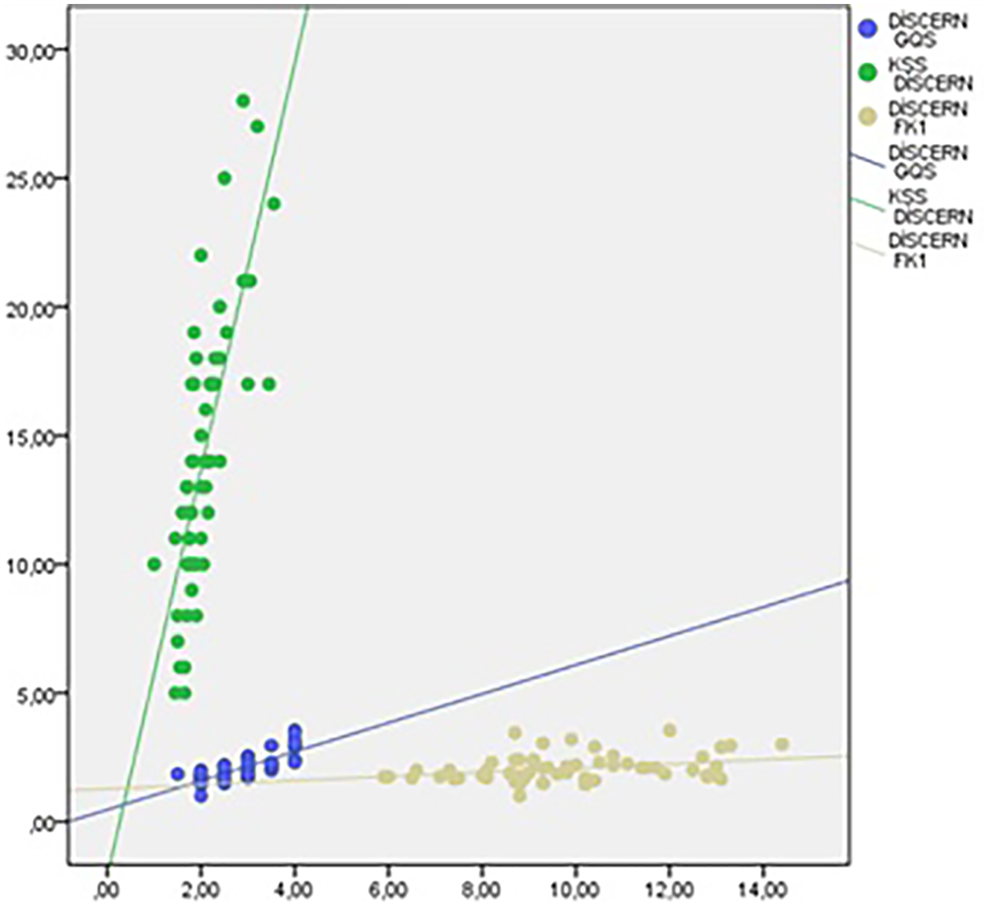

The average JAMA benchmark score was 1.58 ± 0.63 out of 4, similar to that reported in previous orthopaedic studies.21,22,29 In this study, only six (10%) websites had a JAMA score above 2. We believe the reason for such low JAMA scores was that most websites do not provide references or sources. The DISCERN evaluation’s last 2 questions relate to references and citations, and those topics are also reflected in 2 of the JAMA evaluation’s main components. Nevertheless, unlike previous studies on internet resources, 22 this study found no statistically significant relationship between these 2 scores (Figure 2). This is likely because the DISCERN evaluation is more detailed and includes more components for scoring an article’s accuracy.

Relationship of DISCERN score to other scores.

Our results showed that the mean FKGL score was 9.87 ± 2.03, and the mean FKRS score was 45.72 ± 13.17. Based on these results, the FKGL score was almost 4 grade levels higher than the sixth-grade reading level recommended by the American Medical Association (AMA) and National Institutes of Health (NIH), 17 while the FKRS score was at the “difficult to read” level. This means that a patient who wants to understand the content of the average kyphosis website needs to read English at about the high school level. This is troublesome because it puts many patients at a disadvantage and creates a barrier to the online information on kyphosis they desire. In our study, especially FKGL, DISCERN and JAMA, we found a positive and statistically significant relationship between GQS and KSC (Table 3). This demonstrates a linear relationship between the readability and quality of the article in kyphosis content.

In previous studies in the literature, the presence of the HONcode was shown to be an essential indicator of the quality of internet-based content.19,21,22 This study found that the DISCERN, JAMA, GQS, KSC and FK scores of websites with the HONcode were not significantly different from those of websites without it.

Our study has several limitations. First, search results and rankings change frequently because the Internet’s content is continually evolving. Therefore, the data in this study should be thought of as a mere snapshot of kyphosis-specific websites. Second, there may be selection bias because we selected only those websites written in English. Finally, we only selected the top 50 websites from the 3 most popular search engines. We did not evaluate the quality and completeness of information on websites outside these search results.

Conclusions

Like previous publications on spinal diseases, we found that the quality of websites providing information on kyphosis was variable and most were of low quality despite the exponential increase in the number of available sites. The websites, especially academic sources, were written at a reading level higher than the sixth grade recommended by the AMA and NIH. These websites should be patient-oriented, and the general population should be able to understand the information they provide. With advancing technology and the increasing use of the Internet for health information, clinicians should direct patients to appropriate websites and help develop content that is useful to patients and written in a language they can understand.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.