Abstract

Background

Parkinson’s disease is a progressive neurodegenerative disorder that contributes to the growing global health burden. YouTube has emerged as a ubiquitous source of health information among patients and their caregivers. Despite the increasing use of video-sharing platforms, the educational quality and reliability of Parkinson’s disease–related videos remain poorly characterized.

Methods

This cross-sectional study evaluated 147 Parkinson’s disease–related YouTube videos. The research team collected general video information, and three instruments (Global Quality Score, modified DISCERN tool, and Patient Education Materials Assessment Tool) were applied to assess overall quality, reliability, and content understandability and actionability. Descriptive analyses were conducted overall, followed by detailed comparisons across the videos. Finally, using the Spearman correlation coefficient, we explored potential correlations between general video information and video quality and reliability.

Results

In this study, we observed moderate overall quality and reliability when assessed using the Global Quality Score, modified DISCERN tool, and Patient Education Materials Assessment Tool instruments.

Conclusions

Our findings demonstrated that YouTube contains substantial publicly available Parkinson’s disease–related content; however, the quality and reliability of the content vary and are generally inadequate to facilitate patient education. To better serve patients with Parkinson’s disease and their caregivers, multifaceted actions from healthcare professionals, science communicators, and internet platforms are necessary to elevate the quality and visibility of credible content.

Introduction

Parkinson’s disease (PD) is a chronic, progressive neurodegenerative disorder characterized by the degeneration of dopaminergic neurons in the substantia nigra and the pathological accumulation of α-synuclein in the form of Lewy bodies. 1 Clinically, PD manifests with hallmark motor features such as resting tremor, bradykinesia, rigidity, and postural instability; however, it is now well recognized that nonmotor symptoms, including depression, anxiety, cognitive impairment, sleep disturbances, autonomic dysfunction, and sensory abnormalities, constitute a substantial component of the disease burden. 2 These diverse symptom domains contribute to significant functional impairment, loss of independence, and a progressive decline in health-related quality of life. 3

PD poses an increasingly substantial global health burden. The age-standardized incidence, prevalence, and disability-adjusted life years (DALYs) have increased considerably since 1990.4,5 Some epidemiologists suggest that this increase in incidence may be partly attributable to environmental factors, although this hypothesis remains debated and represents an important area of ongoing research. The global incidence is expected to continue rising, largely driven by population aging and demographic transitions, with projections suggesting a doubling of cases in some regions by 2050. 4 This rising burden has considerable individual and societal impacts.

Pharmacological therapy for PD remains the mainstay of treatment; however, its long-term use is associated with complications such as motor fluctuations and dyskinesias. 6 To mitigate disability and optimize functioning, multidisciplinary approaches (physical therapy, psychological therapy, and dietary interventions) are widely recommended.7,8 For selected patients with refractory motor complications, advanced interventions, including deep brain stimulation (DBS), focused ultrasound, and emerging gene- or cell-based therapies, have become important options.9–11 Due to the clinical heterogeneity of symptom profiles and the continuous emergence of therapeutic options, patients and their caregivers have an urgent need for accurate and accessible information resources.

Against this background, the ways in which patients and their families obtain medical knowledge have become increasingly important. Traditional consultations with healthcare professionals remain essential; however, the demand for timely, understandable, and easily accessible information cannot be ignored. Among digital platforms, YouTube stands out as a major channel for health communication. For patients with chronic diseases such as PD, YouTube videos may offer a convenient means of accessing information on symptom management or patient experiences. However, although social networking platforms offer considerable convenience, their use in health communication still has significant limitations. Incorrect information on social media can easily lead patients to make inappropriate healthcare decisions. 12 It is concerning that patients with PD and their caregivers may rely on such content to manage complex lifelong conditions without critical evaluation.

Given the limited research specifically addressing PD content on YouTube, this study was designed to systematically evaluate the quality, reliability, and comprehensibility of videos related to PD. Our aim was to provide an overview of the current state of video content on this widely used platform. By applying validated assessment instruments, we sought to identify both strengths and shortcomings in available video resources. The significance of this work lies in its potential to inform patients with PD, caregivers, and healthcare professionals about the reliability of PD information on YouTube. Furthermore, our findings may help guide future efforts by medical institutions and professional organizations to enhance the accuracy and accessibility of online educational materials for people living with PD.

Methods

Ethics

The videos used in this study were publicly accessible; no unlisted or private videos were included, and no human or animal participants were involved. As the study did not involve collection of personal or sensitive data or interaction with human or animal participants, ethical approval and written informed consent were not required in accordance with institutional and international research ethics guidelines.

The reporting of this study conforms to the Strengthening the Reporting of Observational Studies in Epidemiology (STROBE) guidelines. 13

Retrieval strategy

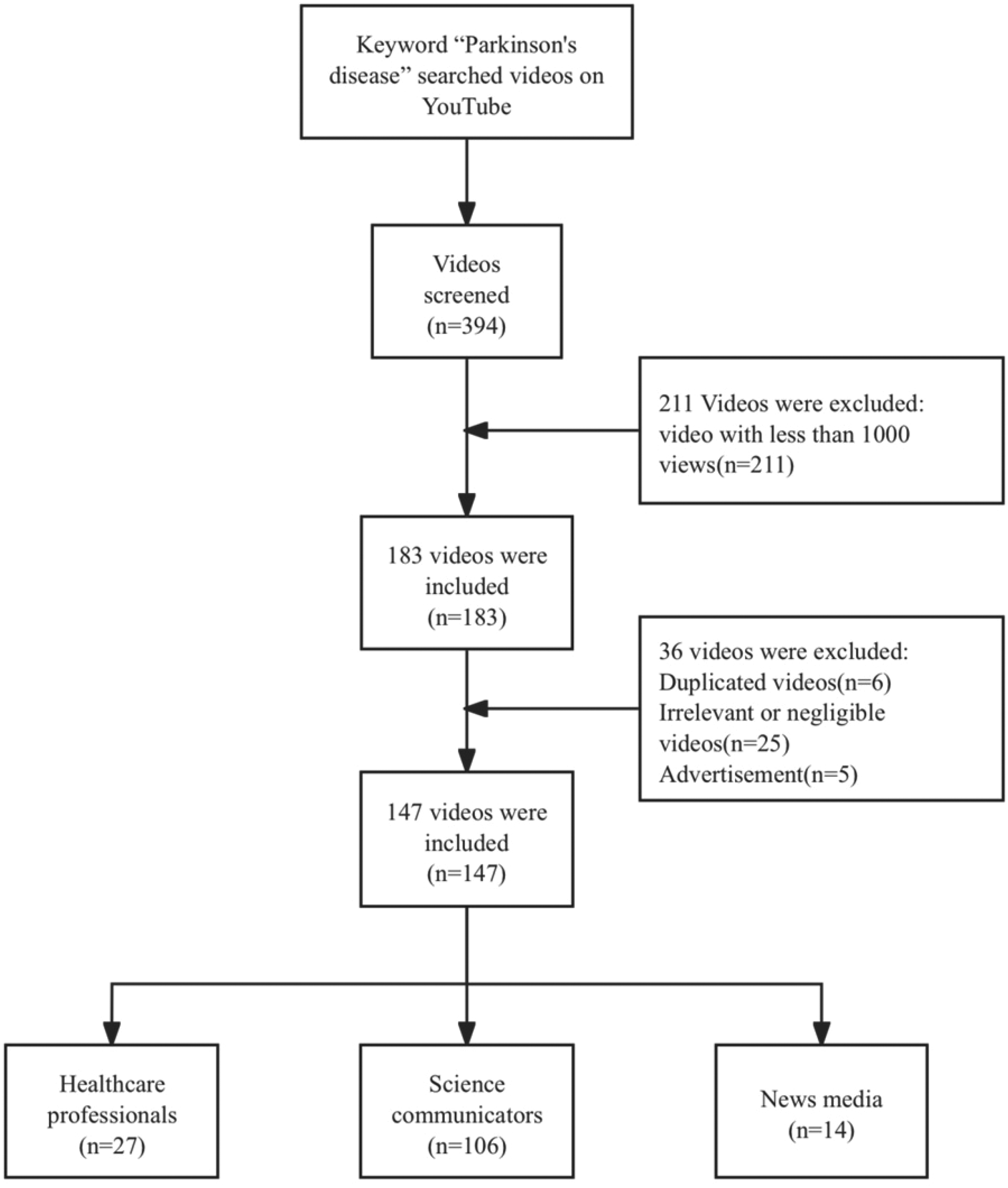

Videos were searched on YouTube using the keyword “Parkinson’s disease” on 12 September 2025, in China, yielding a total of 394 videos. To ensure sufficient visibility and relevance, only videos with at least 1000 views were included. This threshold was used as a pragmatic indicator of minimal audience exposure, focusing the analysis on videos that patients with PD and their caregivers are more likely to encounter and maintaining the feasibility of the rating procedure. Videos with fewer than 1000 views were excluded (n = 211). After removing duplicate entries (n = 6), irrelevant or negligible content (n = 25), and advertisements (n = 5), 147 videos were included in the final analysis. The retrieval and screening process is illustrated in Figure 1.

Video search strategy for Parkinson’s disease.
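The screening arithmetic described above can be checked with a short sketch. The counts are taken directly from the retrieval strategy; the function name `remaining_after_screening` and the dictionary labels are illustrative and are not part of the original study materials.

```python
# Screening counts as reported in the retrieval strategy.
identified = 394
exclusions = {
    "fewer than 1000 views": 211,
    "duplicate entries": 6,
    "irrelevant or negligible content": 25,
    "advertisements": 5,
}


def remaining_after_screening(total, excluded):
    """Return the number of records left after subtracting every exclusion."""
    return total - sum(excluded.values())


included = remaining_after_screening(identified, exclusions)
print(included)  # 147
```

The result matches the 147 videos reported as included in the final analysis.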

Data extraction

The variables collected included the following: video title, video identification code (ID), direct uniform resource locator (URL), number of days since upload, video duration (s), total number of views, number of likes, and number of comments. These parameters were selected to capture both the descriptive characteristics of the videos and indicators of audience engagement.

Data extraction was performed independently by two reviewers. Any discrepancies in the recorded information were resolved through discussion and consensus, with a third reviewer consulted when necessary. To ensure data accuracy, all entries were double-checked prior to analysis. All extracted data were recorded in an Excel spreadsheet.

Video classification

The included videos were classified according to two dimensions: source type and therapy type.

Six source categories were defined: doctor (n = 17), nurse (n = 6), therapist (n = 4), science communicator (n = 103), news media (n = 14), and others (n = 3). The doctors, nurses, and therapists categories specifically referred to healthcare professionals presenting content in a personal capacity rather than representing an institution. In contrast, science communicators included both individual creators who share medical knowledge and institutional accounts dedicated to health education.

Videos were also classified based on the therapy modalities they addressed: pharmacological therapy (n = 73), physical therapy (n = 35), DBS (n = 21), psychological therapy (n = 9), dietary intervention (n = 16), gene therapy (n = 2), and focused ultrasound (n = 1). Videos covering multiple therapy types were coded into all relevant categories.

Both classification steps were performed independently by two reviewers, with disagreements resolved through discussion and, when necessary, adjudication by a third reviewer.

Quality assessment

To evaluate the reliability and overall quality of the collected videos, we employed three validated instruments: the Global Quality Score (GQS), the modified DISCERN tool (mDISCERN), and the Patient Education Materials Assessment Tool (PEMAT).

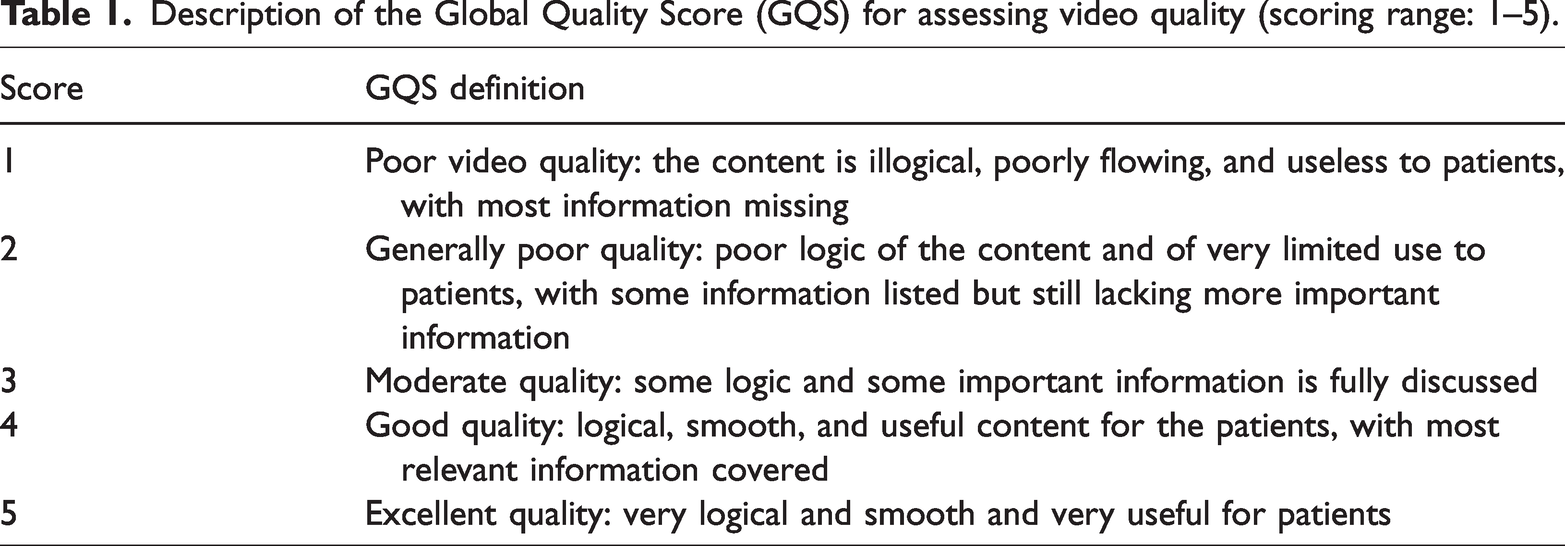

GQS used a 5-point Likert scale to evaluate the overall quality of each video, 14 with scores ranging from 1 (poor) to 5 (excellent) (Table 1). This scale considered the professionalism of the content, comprehensiveness of information, clarity of presentation, and the extent to which the content was understandable to the general viewer.

Description of the Global Quality Score (GQS) for assessing video quality (scoring range: 1–5).

For mDISCERN, 15 reviewers evaluated five predefined criteria: clarity, relevance, traceability, robustness, and mention of uncertainties (Table 2). Each criterion was scored as “yes” (1 point) or “no” (0 points), with a total score ranging from 0 to 5. Higher scores indicated greater reliability of the video content.

Description of the modified DISCERN (mDISCERN) tool for evaluating video reliability (Scoring range: 0–5).

In addition, PEMAT was used to evaluate the understandability (i.e. comprehensibility) (PEMAT-U) and actionability (PEMAT-A) of patient education materials. 16 It consists of 17 items divided into two domains: understandability and actionability (Table 3). Each item was rated as 0 (disagree) or 1 (agree), and the cumulative score was divided by the total possible points to yield a percentage score ranging from 0% to 100%. Higher scores indicated greater clarity, comprehensibility, and actionability of the material.

Patient Education Materials Assessment Tool for audiovisual materials (PEMAT-A/V).
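The two binary-item scoring rules described above (mDISCERN and PEMAT) can be illustrated with a minimal sketch. The function names and the example ratings are hypothetical. The split of the 17 PEMAT items into 13 understandability and 4 actionability items is an assumption based on the audiovisual version of the tool; it is consistent with the percentage values reported in this study but is not stated explicitly in the text.

```python
def mdiscern_total(answers):
    """Sum five yes/no criteria (True = yes = 1 point) into a 0-5 score."""
    if len(answers) != 5:
        raise ValueError("mDISCERN uses exactly five criteria")
    return sum(1 for answer in answers if answer)


def pemat_percentage(ratings):
    """Convert 0/1 item ratings into a percentage of the total possible points."""
    if not ratings or any(r not in (0, 1) for r in ratings):
        raise ValueError("ratings must be a non-empty list of 0/1 values")
    return round(100 * sum(ratings) / len(ratings), 2)


# Hypothetical video: 2 of 5 mDISCERN criteria met, 9 of 13 understandability
# items met, and 2 of 4 actionability items met (item counts are an assumption).
understandability = [1] * 9 + [0] * 4
actionability = [1, 1, 0, 0]

print(mdiscern_total([True, True, False, False, False]))  # 2
print(pemat_percentage(understandability))  # 69.23
print(pemat_percentage(actionability))  # 50.0
```

Under this assumption, a PEMAT-U value of 69.23 corresponds to 9 of 13 items being met, and a PEMAT-A value of 50 corresponds to 2 of 4 items.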

Statistical analysis

Descriptive statistics were summarized as medians, means, and SDs. The Kruskal–Wallis test was used to identify significant differences among independent groups. Spearman correlation analysis was used to evaluate correlations between variables. All statistical analyses were performed using the Statistical Package for the Social Sciences (SPSS) version 28.1. Statistical significance was set at p < 0.05.
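For readers who wish to see how the two nonparametric procedures named above operate, the following is a minimal pure-Python sketch of the Kruskal–Wallis H statistic (with the usual tie correction) and the Spearman coefficient (computed as the Pearson correlation of average ranks). It is not the authors' SPSS workflow; the function names and the toy data are illustrative assumptions.

```python
import math
from collections import Counter


def average_ranks(values):
    """Assign 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def kruskal_wallis(groups):
    """Return the tie-corrected Kruskal-Wallis H statistic for a list of groups."""
    pooled = [x for group in groups for x in group]
    n = len(pooled)
    ranks = average_ranks(pooled)
    weighted = 0.0
    start = 0
    for group in groups:
        rank_sum = sum(ranks[start:start + len(group)])
        weighted += rank_sum ** 2 / len(group)
        start += len(group)
    h = 12 / (n * (n + 1)) * weighted - 3 * (n + 1)
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    correction = 1 - ties / (n ** 3 - n)
    if correction == 0:
        raise ValueError("all observations are identical")
    return h / correction


def spearman_rho(x, y):
    """Return the Spearman coefficient as the Pearson correlation of the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)


# Toy data (not study data): three fully separated groups and a monotone pair.
print(kruskal_wallis([[1, 2, 3], [4, 5, 6], [7, 8, 9]]))
print(spearman_rho([1, 2, 3, 4, 5], [2, 4, 6, 8, 10]))
```

Because both procedures operate on ranks rather than raw values, they are suited to ordinal scores such as GQS and mDISCERN and to skewed counts such as views and likes.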

Results

Video characteristics

A total of 147 videos were included. Table 4 presents the general characteristics of the sample. Videos had been uploaded to YouTube for a median of 1159 days (612–2761), with a median duration of 234 s (65–513). The median number of views was 44,195 (12,908–172,023), accompanied by 389 likes (111–2600) and 15 comments (3–100).

General characteristics, quality, and reliability of the videos.

GQS: Global Quality Score; mDISCERN: modified DISCERN tool; PEMAT-U: Patient Education Materials Assessment Tool–comprehensibility; PEMAT-A: Patient Education Materials Assessment Tool–actionability; M: mean.

Regarding video source, most videos were uploaded by science communicators (106/147), followed by healthcare professionals (27/147), comprising doctors (17/147), nurses (6/147), and therapists (4/147), and by news media (14/147).

Regarding therapeutic modalities addressed, pharmacological therapy was mentioned most frequently (73/147), followed by nonpharmacological supportive therapies (60/147), including physical therapy (35/147), dietary interventions (16/147), and psychological therapy (9/147). Additionally, advanced therapies were mentioned (24/147), including DBS (21/147), gene therapy (2/147), and focused ultrasound (1/147). Furthermore, 40 videos did not refer to any specific treatment.

Regarding video quality, GQS exhibited a median of 3 (3–4), the mDISCERN median score was 2 (2–3), and the median scores for PEMAT-U and PEMAT-A were 69.23 (61.54–76.92) and 50 (25–50), respectively.

Video quality scores grouped by video source

Regarding GQS, the median scores for the science communicator and news media groups were both 3, whereas the healthcare professional group demonstrated a slightly higher median score of 3.5 (3–4). Overall, the informational quality of the videos was moderate, with content from healthcare professionals scoring numerically higher.

Regarding mDISCERN, the median scores for the science communicator, news media, and healthcare professional groups were 2.5, 3, and 2, respectively. Although the absolute differences were small, this finding indicates slight but discernible variations in perceived reliability.

Regarding PEMAT-U, all three groups had the same median score of 69.23, with interquartile ranges (IQRs) of 69.23–84.62, 61.54–76.92, and 61.54–69.23, respectively. These results reflect an overall moderate to high level of understandability among the evaluated videos. Although most videos were reasonably easy to comprehend, the differing IQRs indicate that the spread of understandability scores was not identical across sources.

In contrast, PEMAT-A demonstrated more pronounced differences across the groups. Median scores were 50, 50, and 25 for science communicator, news media, and healthcare professional groups, respectively, with corresponding IQRs of 50–75, 25–50, and 25–50. The extent to which videos provided clear and actionable guidance varied substantially depending on the video source.

The Kruskal–Wallis tests further supported these interpretations. No statistically significant group differences were observed for GQS (H = 3.813, p = 0.149), mDISCERN (H = 1.106, p = 0.575), or PEMAT-U (H = 3.623, p = 0.163). However, a significant difference was noted for PEMAT-A (H = 6.776, p = 0.034) (Table 5, Figure 2).

Video quality scores by different source.

*p < 0.05 indicates statistical significance.

GQS: Global Quality Score; mDISCERN: modified DISCERN tool; PEMAT-U: Patient Education Materials Assessment Tool–comprehensibility; PEMAT-A: Patient Education Materials Assessment Tool–actionability; H: Kruskal-Wallis H statistic.

Video quality scores from different video sources.
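The p values reported for the source comparison can be reproduced from the H statistics under one assumption: that the three source groups give 2 degrees of freedom and that the asymptotic chi-square approximation was used. For a chi-square distribution with 2 degrees of freedom, the upper-tail probability has the closed form exp(−H/2). The helper name `chi2_sf_df2` is illustrative.

```python
import math


def chi2_sf_df2(h):
    """Upper-tail probability of a chi-square variable with 2 degrees of freedom."""
    return math.exp(-h / 2)


# H statistics and p values as reported for the comparison by video source.
reported = {
    "GQS": (3.813, 0.149),
    "mDISCERN": (1.106, 0.575),
    "PEMAT-U": (3.623, 0.163),
    "PEMAT-A": (6.776, 0.034),
}

for name, (h, p_reported) in reported.items():
    p = chi2_sf_df2(h)
    print(f"{name}: H = {h}, p = {p:.3f} (reported {p_reported})")
```

Under this assumption, each recomputed value agrees with the reported p value to three decimal places, and only PEMAT-A falls below the 0.05 threshold.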

Video quality scores grouped by therapy type

For GQS, the median scores for the pharmacological therapy and nonpharmacological supportive therapy groups were both 3, whereas the advanced therapies group demonstrated a higher median score of 4 (3–4). Although all three therapy categories were typically rated as having moderate to good overall quality, videos related to advanced therapies were rated as having slightly higher quality and comprehensiveness compared with the other two categories.

For mDISCERN, the median scores were 2.5, 2, and 3 for the pharmacological therapy, nonpharmacological supportive therapy, and advanced therapies groups, respectively. This trend suggested notable differences in perceived reliability. Videos addressing advanced therapies were considered more dependable and evidence-based, whereas videos addressing nonpharmacological supportive therapies were viewed as relatively less reliable, possibly reflecting greater variability in the strength of supporting evidence for such interventions.

For PEMAT-U, all three therapy type groups reported an identical median score of 53.85, with IQRs of 46.15–65.38, 46.15–65.38, and 53.85–76.92 for the pharmacological therapy, nonpharmacological supportive therapy, and advanced therapies groups, respectively. Although the central tendency was identical across groups, videos concerning advanced therapies demonstrated slightly wider dispersion toward higher scores, indicating somewhat better clarity and comprehension for patients with PD and their caregivers within this category.

For PEMAT-A, all three groups had an identical median score of 50, with IQRs of 25–50, 50–75, and 50–75 for the pharmacological therapy, nonpharmacological supportive therapy, and advanced therapies groups, respectively. Despite identical medians, the IQRs for the nonpharmacological supportive therapy and advanced therapies groups were equally wide but shifted toward higher values, suggesting that content in these groups tended to offer somewhat more actionable information than pharmacological therapy content.

The Kruskal–Wallis tests indicated statistically significant differences across the three therapy groups for GQS (H = 7.292, p = 0.026) and mDISCERN (H = 9.912, p = 0.007), confirming that perceptions of quality and reliability varied meaningfully by therapy type. No statistically significant group differences were observed for PEMAT-U (H = 1.636, p = 0.441) or PEMAT-A (H = 4.243, p = 0.120) (Table 6, Figure 3).

Video quality scores by therapy type.

*p < 0.05 indicates statistical significance.

GQS: Global Quality Score; mDISCERN: modified DISCERN tool; PEMAT-U: Patient Education Materials Assessment Tool–comprehensibility; PEMAT-A: Patient Education Materials Assessment Tool–actionability; H: Kruskal-Wallis H statistic.

Video quality scores from different therapy types.

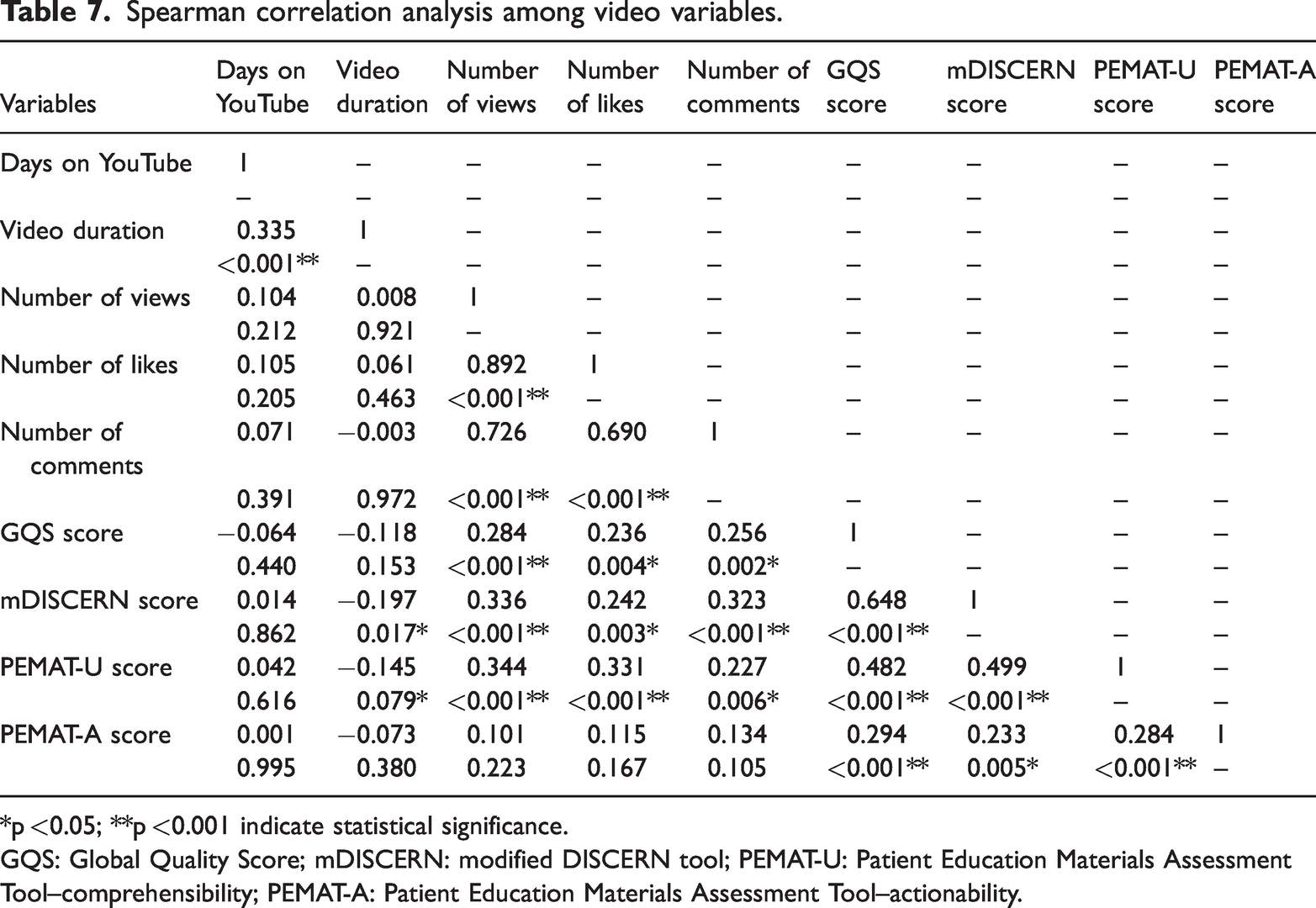

Spearman correlation analysis

As the data were not normally distributed, Spearman correlation analysis was used (Table 7). The Spearman correlation matrix revealed associations between video characteristics (days on YouTube, duration, views, likes, and comments) and quality scores (GQS, mDISCERN, PEMAT-U, and PEMAT-A). Days on YouTube and video duration were significantly and positively correlated (r = 0.335, p < 0.001), and strong positive correlations were observed among popularity indicators (views, likes, and comments; all p < 0.001). Viewer engagement metrics (number of views, likes, and comments) were significantly and positively associated with video quality and reliability measures. In particular, the number of views was positively correlated with GQS, mDISCERN, and PEMAT-U scores (all p < 0.001). Similarly, the numbers of likes and comments were significantly and positively associated with GQS, mDISCERN, and PEMAT-U scores (all p < 0.05). However, none of these engagement metrics demonstrated a significant correlation with PEMAT-A. Additionally, video duration was negatively correlated with mDISCERN (r = −0.197, p = 0.017) and showed a weaker negative association with PEMAT-U that did not reach statistical significance (r = −0.145, p = 0.079), suggesting that shorter videos may receive higher reliability ratings, whereas the evidence for greater understandability is inconclusive. Quality scores (GQS, mDISCERN, PEMAT-U, and PEMAT-A) were significantly and positively correlated with each other, indicating consistency across different instruments. Specifically, GQS was positively correlated with mDISCERN and with both PEMAT scores (all p < 0.001), and mDISCERN was correlated positively with PEMAT-U (r = 0.499, p < 0.001) and PEMAT-A (r = 0.233, p = 0.005). PEMAT-U and PEMAT-A were also positively correlated with each other (r = 0.284, p < 0.001). In contrast, days on YouTube and video duration showed no consistent significant associations with overall quality or engagement measures.

Spearman correlation analysis among video variables.

*p < 0.05; **p < 0.001 indicate statistical significance.

GQS: Global Quality Score; mDISCERN: modified DISCERN tool; PEMAT-U: Patient Education Materials Assessment Tool–comprehensibility; PEMAT-A: Patient Education Materials Assessment Tool–actionability.
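The difference between the two duration correlations reported above can be made concrete by converting each coefficient into the t statistic commonly used to test a correlation against zero, t = r·sqrt((n − 2)/(1 − r²)). The sketch below assumes that all 147 videos contributed to each correlation, which the text does not state explicitly; the function name is illustrative.

```python
import math


def t_statistic(r, n):
    """t statistic for testing a correlation coefficient r from n pairs against zero."""
    return r * math.sqrt((n - 2) / (1 - r ** 2))


n_videos = 147  # assumption: every included video entered each correlation
pairs = [
    ("duration vs mDISCERN", -0.197),
    ("duration vs PEMAT-U", -0.145),
]

for label, r in pairs:
    print(f"{label}: r = {r}, t = {t_statistic(r, n_videos):.2f}")
```

With 145 degrees of freedom, the two-sided 5% critical value is approximately 1.98, so the mDISCERN statistic (about −2.42) exceeds it in magnitude, whereas the PEMAT-U statistic (about −1.76) does not, in line with the reported p values of 0.017 and 0.079.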

Discussion

In this study, we observed moderate overall quality and reliability of videos on PD when assessed using the GQS, mDISCERN, and PEMAT instruments. In the evaluation and analysis of videos from different sources, only PEMAT-A, representing the actionability dimension, demonstrated a statistically significant difference, whereas overall quality (GQS), reliability (mDISCERN), and understandability (PEMAT-U) did not differ significantly between groups. Despite comparable levels of understandability, the extent to which viewers could apply the information to health-related behaviors varied substantially. This finding suggests that clarity alone is insufficient, and effective digital health communication must also provide specific and realistic guidance. The analysis of different therapy types revealed a different pattern. Both GQS and mDISCERN exhibited statistically significant differences, whereas PEMAT-U and PEMAT-A did not differ significantly. The higher quality and reliability of advanced therapy videos may reflect the stronger evidence base and regulatory oversight behind these treatments. By comparison, the variability observed in nonpharmacological supportive therapy content may arise from uneven evidence and less consistent messaging. These findings highlight the need for improved quality control and clearer standards in online health information. Significant positive correlations were also observed between engagement metrics (views, likes, and comments) and quality scores (GQS, mDISCERN, and PEMAT-U), although not with PEMAT-A. In other words, audience engagement aligns to some extent with educational clarity; however, popularity alone does not guarantee content that is clinically actionable for patients with PD and their caregivers. Overall, these findings highlight the imbalance between reach and rigor in digital health communication as well as the ongoing gap between the public’s demand for reliable information and the uneven quality of available content.

Our findings are consistent with those reported in the literature on health-related video quality. Many prior studies have documented that YouTube medical content is highly heterogeneous. User engagement (views and likes) is often associated with presentation style or popularity but not necessarily with scientific rigor. For example, analyses of YouTube content on dermatology, smoking cessation, and diabetes have found that videos uploaded by nonprofessional sources or general communicators often attract the most views, even when their accuracy is questionable.17–19 Similarly, in an evaluation of YouTube videos about cancer screening, accuracy did not always correspond with popularity. 20

Regarding PD specifically, prior assessments have identified substantial gaps in reliability and usefulness. Erdem and Karaca reported that many PD-related videos lacked proper referencing or failed to disclose potential risks. 21 Building on this work, our study focuses specifically on short-form video content and employs multiple quality/education metrics across different source types and therapy categories, thereby offering a more nuanced picture of the current PD video landscape.

The following limitations and potential biases should be taken into account when interpreting the findings. As the platform evolves daily (new uploads appear, algorithms shift, and engagement fluctuates), our analysis may not represent the long-term trajectory of PD content, nor capture the subsequent emergence of higher-quality videos.

Although GQS, mDISCERN, and PEMAT are widely used, they inevitably involve elements of subjective judgment. Even with independent double rating and consensus procedures, the scores may still be influenced by the reviewers’ professional backgrounds and individual thresholds. These tools were developed to assess overall educational quality, perceived reliability, and patient-oriented understandability and actionability of health information. However, they are not designed for a formal line-by-line comparison of every diagnostic or therapeutic recommendation with current evidence-based guidelines for PD. Consequently, our ratings reflect how the videos perform on these validated educational scales rather than a systematic audit of guideline adherence or a comprehensive verification of specific clinical details. Therefore, these findings should be interpreted as an assessment of the educational and informational quality of YouTube videos on PD from a patient-facing perspective, rather than as direct guidance for detailed clinical decision-making. Nonetheless, within this scope, the findings offer valuable insights for neurologists and educators into the information environment that patients with PD and their caregivers are likely to encounter online.

Additionally, the study excluded videos with fewer than 1000 views. As a result, some high-quality videos with low view counts, as well as very recent uploads, may have been missed. Future studies could examine such low-view videos as a separate subgroup to determine whether their quality systematically differs from that of more widely viewed material. Furthermore, the analysis was limited to English-language videos on a single platform, which reduces generalizability to non-English content or to other platforms such as TikTok or Vimeo. Although the median video duration of 234 s aligns with short-form content, the study did not explicitly stratify or analyze quality metrics by video length beyond correlation analyses, potentially overlooking how brevity influences information depth, comprehension, and viewer retention in patient education contexts.

Despite these limitations, our findings have practical implications. Clinicians and academic institutions should be encouraged to create evidence-based video content with clear references and practical recommendations for symptom management, care planning, and treatment discussions. Patient advocacy groups and science communicators can improve accuracy by collaborating directly with medical specialists and transparently reporting sources and publication dates. Platforms such as YouTube may also consider integrating a labeling system (ideally supported by professional societies or independent third parties) to help direct viewers toward content that meets basic quality standards. 22 For patients with PD and their caregivers, the key takeaway is that YouTube may serve as a useful supplement but not a replacement for professional consultations, particularly when considering high-risk or invasive options such as DBS or gene therapies.

Future studies should move beyond single time points. Longitudinal analyses can track how video quality shifts over time, whereas cross-language comparisons may reveal disparities in global access to accurate information. It will also be important to evaluate not only what patients with PD watch but also how this content shapes decisions, adherence, and health outcomes. Integrating behavioral data with content assessment may offer a more complete view of the role digital media plays in chronic disease management.

In summary, YouTube remains a powerful channel for disseminating knowledge about PD; however, the quality of its content is inconsistent and frequently fails to meet the standards for reliable patient education. To better meet the needs of patients with PD and their caregivers, collaborative efforts from healthcare providers, science communicators, and digital platforms are necessary to enhance the quality and visibility of trustworthy content.

Footnotes

Acknowledgments

We would like to thank the YouTube platform for providing access to publicly available educational videos, which formed the basis of this study. Additionally, we express our gratitude to the video creators for their contributions to raising awareness and educating the public on Parkinson’s disease.

Author contributions

Yuhang Wang, Longkui Jiang, and Xin Liu designed and supervised the study. Xin Liu wrote the manuscript. Xin Liu, Shuaiyan Wang, and Lizhen Gan contributed to its revision and editing. Xin Liu, Shuaiyan Wang, and Lizhen Gan checked and corrected the manuscript where required. Yuhang Wang and Longkui Jiang contributed to its translation and polishing. All authors approved the final version of the manuscript.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Data availability

The datasets generated or analyzed during this study are available from the corresponding author on reasonable request.

Declaration of conflicting interest

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical considerations

The videos used in this study were publicly accessible, and no unlisted or private videos or human or animal participants were involved. As no personal or sensitive data were collected and no interaction with human or animal participants occurred, ethical approval and informed consent were not required in accordance with institutional and international research ethics guidelines.

Funding

No funding.