Abstract

Perceptual biases surrounding the credibility of female sports reporters have been a robust area of research for decades, taking on many methodological forms. One vein of this research uses experimental designs in which reporter sex is systematically manipulated to examine impacts on perceived credibility. Despite remarkable similarities in overall study design, findings from these studies have been mixed, variably demonstrating biases against female reporters, in favor of female reporters, or no biases at all. This paper reports results of a systematic review of this literature, highlighting differences in stimuli (e.g., medium, visual prominence of reporters), theoretical mechanisms, and measures employed in order to illuminate possible reasons for these varied findings.

Exploration of the challenges and biases faced by female sports reporters has been a vibrant stream of scholarship in the field for decades. Researchers have probed potential biases against female reporters using multiple methodological approaches, including surveys of journalists (Hardin & Shain, 2006) and editors (Laucella et al., 2016), interviews with female sports journalists (Cramer, 1994), ethnographic (Genovese, 2015) and netnographic observation (Demir & Ayhan, 2022), content analysis of media coverage (Boczek et al., 2022; Eastman & Billings, 2000), and more.

In addition to these, one consistent vein of research in this area over the past three decades has been experimental designs that seek to establish theoretically supported cause-effect relationships in these biases (e.g., Charitat & Cianfrone, 2023; Ordman & Zillmann, 1994). In some respects, these experimental studies bear striking similarities. Quite often a between-subjects manipulation is effected such that nearly identical stimuli are presented to different groups of participants, with only the sex of the reporter/author/commentator varied. In some studies, these manipulations are somewhat subtle (e.g., a change in byline and reporter photograph; Mudrick & Lin, 2017), whereas in others the manipulations are more pronounced (e.g., female versus male reporter appearing onscreen; Greer & Jones, 2012). Participants are asked to read/watch/listen to the stimulus and then evaluate the reporter along some salient criteria, typically some assessment of credibility, knowledgeability, authoritativeness, or similar.

Despite this general uniformity in study design, outcomes from these studies are quite varied. For example, Etling and Young’s (2007) experiment found a bias against female reporters such that they were rated as less authoritative than male sports reporters. A few years later, Baiocchi-Wagner and Behm-Morawitz (2010) found no difference between female and male reporters in rated credibility. Later, Greer and Jones (2012) reported results indicating that female reporters were rated as more competent than males. Thus, the ability to generalize broadly about biases regarding female sports reporters from these seemingly similar experimental studies is limited, potentially inhibiting the accumulation of knowledge that is a hallmark of “normal science” (Kuhn, 1996, p. 10). With this in mind, the purpose of this paper is to systematically review this specific form of empirical research examining biases against female sports reporters in order to illuminate differences across study outcomes, and then identify major characteristics of these studies that might help explain the assortment of findings (i.e., differences in study stimuli, measures, theoretical foundations).

Literature Review

As previously noted, a wealth of scholarship has examined the challenges that women have faced in sports journalism and broadcasting, illuminating longstanding biases in both the volume and nature of sports coverage (Schoch, 2020) as well as challenges faced by female sports reporters both in the newsroom (Harris & Bowes, 2025) and with audiences (Johnson et al., 2023). The total body of literature on the topic is vast, and given the attention allocated to the subject, one might expect some consistency across the literature. Indeed, some consistency can be found. Consider the programmatic studies that examine newsroom practices and lived experiences of reporters (Hardin & Shain, 2005, 2006; Hardin & Whiteside, 2009), or over-time content analyses of sports media (Cooky et al., 2013, 2015, 2021), in order to explore these biases. However, studies employing experimental designs over the past 30 years in order to establish cause-effect relationships have yielded less consistency.

Normal Science

“Normal science…is a highly cumulative enterprise, eminently successful in its aim, the steady extension of the scope and precision of scientific knowledge.” (Kuhn, 1996, p. 52)

Systematic progression of the body of knowledge is a defining characteristic of science. In the opening pages of his landmark essay, The Structure of Scientific Revolutions, Kuhn (1996) repeatedly notes that scientific advancement is a “piecemeal process” (p. 1), an “incremental process” (p. 2) and a “cumulative process” (p. 3). In times of normal science, which Kuhn argues constitutes the bulk of scientific discovery, scholars operate on a shared foundation of previous work. Within this shared framework, science progresses through “miniscule” (p. 24) advancements, and the work of normal science is to advance the body of knowledge slowly but additively. In Kuhn’s view, in pursuing normal science, we add detail to further our understanding of a phenomenon. The goal is not so much to branch off into new directions, but instead to better map known terrain and gain a more granular understanding of current territory.

Kuhn’s conception and definition of paradigms, crisis, and scientific revolution are not adopted here in a strict sense. Instead, they are invoked as a useful framework for examining the research employing a specific methodological approach to a somewhat unified body of literature probing a generally singular question: what is the impact of a reporter’s biological sex on audience perceptions of his/her credibility? To the extent that experimental studies examining the effect of reporter sex on audience perceptions are executed remarkably uniformly, they reflect a shared paradigm of sorts. Similarities in general study design, measurement, and execution unite research in this area, and in this sense, they somewhat reflect Kuhn’s view of scientific inquiry operating within a shared paradigm during periods of normal science. Despite these surface-level consistencies in design, findings generated from these studies have been less uniform, inhibiting the forward progression of what is known about the relationship between reporter sex and audience perceptions. To illuminate this inconsistency, this study systematically examined the constellation of published articles that reflect the general properties described earlier in the introduction: (a) experimental studies that (b) manipulated reporter biological sex (c) in order to examine impacts on credibility perceptions.

Method

Sample

To identify all published studies that meet these criteria, an exhaustive search was conducted using the following parameters:

• An advanced search of multiple EBSCO databases (Academic Search Complete, Communication Source, and SPORTDiscus) was conducted for various permutations of “reporter,” “sex,” “gender,” “experiment,” and “sport.”
• Similar searches were conducted via Google Scholar.
• Once articles were identified, reference lists within each article were also consulted for possible works to include. Furthermore, subsequent articles citing each identified source were reviewed using the above search terms. This process was repeated as additional works were identified.
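The iterative step in the search procedure (consulting reference lists, reviewing citing articles, and repeating as new works are identified) can be sketched as a simple expansion over a citation graph. This is a minimal illustration only, not the procedure as actually executed: the function name `snowball`, the toy citation data, and the choice to stop expanding from ineligible articles are all assumptions made for the example.

```python
from collections import deque


def snowball(seeds, references, cited_by, is_eligible):
    """Expand a seed set by following reference lists (backward) and
    citing works (forward) until no new eligible articles turn up."""
    included = set()   # articles that meet the inclusion criteria
    screened = set()   # every article examined so far
    queue = deque(seeds)
    while queue:
        article = queue.popleft()
        if article in screened:
            continue
        screened.add(article)
        if not is_eligible(article):
            # Assumption: ineligible articles are not expanded further.
            continue
        included.add(article)
        queue.extend(references.get(article, ()))  # backward search
        queue.extend(cited_by.get(article, ()))    # forward search
    return included


# Hypothetical citation data: letters stand in for articles.
references = {"A": ["B", "X"], "B": ["C"]}
cited_by = {"A": ["D"], "C": ["E"]}
eligible = {"A", "B", "C", "D", "E"}  # "X" fails the inclusion criteria

sample = snowball(["A"], references, cited_by, lambda a: a in eligible)
print(sorted(sample))
```

The loop ends once every reachable article has been screened, which mirrors repeating the process until no additional works are identified.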

This process yielded a final sample of 20 articles.¹ Articles within the sample were published between 1994 and 2025 and appear in a variety of scholarly outlets. Four articles each appeared in Communication & Sport and the Journal of Sports Media. The International Journal of Sport Communication contained two of the sampled articles, as did Journalism & Mass Communication Quarterly. The remainder were from an assortment of journals.

Article Characteristics

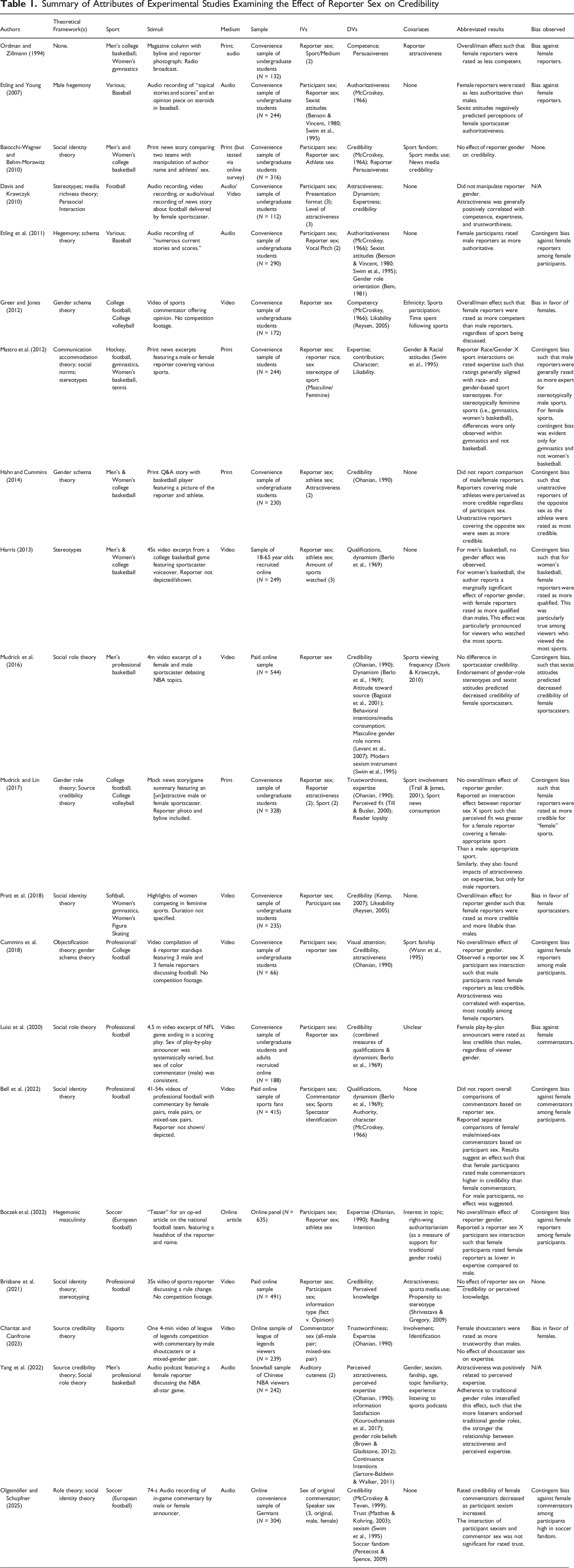

Summary of Attributes of Experimental Studies Examining the Effect of Reporter Sex on Credibility

Results

Bias Observed

Clearly, the most important attribute of these studies is the nature of the outcomes demonstrated therein with respect to potential biases against female sports reporters. Perhaps owing to the first of these studies, many have sought to test an assumed or predicted bias against female reporters, based on the stereotypical notion that sports is a masculine domain. As will soon be discussed in the review of theoretical frameworks, traditional gender-role stereotypes (e.g., Mudrick & Lin, 2017) and hegemonic masculinity (e.g., Etling & Young, 2007) undergird such assumptions. According to this stereotypical view, men are superior in terms of their knowledge and ability to offer insights and reportage on the topic, which would translate to enhanced credibility.

Bias Against Female Reporters

One of the earliest known studies exploring perceptions of female sports reporters arguably cast the mold for much of what followed. In their study, Ordman and Zillmann (1994) reported a bias against female reporters that cut across both male and female sports (i.e., men’s college basketball; women’s gymnastics). For both sports, female reporters were rated as less competent than male reporters. Just over a dozen years later, Etling and Young (2007) observed a similar bias, where a female sports reporter was rated as less authoritative than a male reporter delivering an identical audio recording of an opinion/editorial on steroid use in baseball.

More recently, Luisi et al. (2020) likewise found a bias against female reporters in the context of 4.5-min play-by-play commentary of a professional (American) football game. In their study, female commentators were rated as less credible than males, independent of participants’ sex. Moreover, these evaluations were also independent of participants’ sexist attitudes. Thus, select studies examining print (Ordman & Zillmann, 1994) and audio (Etling & Young, 2007) news stories, as well as play-by-play commentary of athletic competition (Luisi et al., 2020), have demonstrated a bias against female sports reporters/commentators across multiple sports.

Bias in Favor of Female Reporters

Other scholarship on the matter has been more mixed, sometimes reporting findings precisely in opposition to these studies. Again, it bears repeating that these studies follow the same general methodological approach with respect to study design.

First, consider Greer and Jones (2012) and their study examining commentary surrounding collegiate football or women’s collegiate volleyball. In that study, participants watched a short video of a female/male sports reporter discussing a female/male athlete’s recent injury and explicitly offering opinion on its impact on the team’s future performance. Contrary to theoretically derived predictions, participants in that study showed a bias in favor of female reporters such that they were rated as more competent than male reporters. The authors attributed this result to several possible factors, including changing social norms, greater rated attractiveness of the female reporter, or a heavily female sample that judged the female reporter more favorably.

A few years later, Pratt et al. (2018) likewise found a bias in favor of female reporters whereby they were rated as more credible than males delivering audio commentary within highlight videos. However, it bears noting that in Pratt et al.’s (2018) study, only female athletes competing in stereotypically feminine sports (i.e., softball, women’s gymnastics, women’s figure skating) were shown. The authors offer several interpretations for their findings, including salience of social identity among female participants leading to more favorable evaluations, and increased recognition of female participation in sports.

Most recently, a bias in favor of females was observed in the context of esports, where Charitat and Cianfrone (2023) adapted a similar experimental design within this relatively new athletic context. In their study, participants viewed a 4-min video of League of Legends competition, where either an all-male or mixed-sex pair of shoutcasters offered running commentary. Although no difference was found for rated expertise, findings showed that compared to their male counterpart, the female shoutcaster was rated as significantly more trustworthy. The authors speculated that participants recognized the professional nature of the shoutcasters, which would mitigate any traditional biases against female reporters. Thus, a select few studies have offered results suggesting that female reporters were rated as more credible than their male counterparts, but no consensus explanation for the findings emerged.

No Bias Observed

Just as studies have demonstrated biases both against and for female reporters, a number of studies have failed to find any differences in terms of perceived credibility. For example, Baiocchi-Wagner and Behm-Morawitz (2010) failed to observe any bias in their comparison of reported credibility of male/female authors of a print news story that “compares and contrasts two basketball teams’ strengths and weaknesses before concluding with his or her ‘projection’ of the regional championship winner” (p. 267). The stories attributed to female/male authors were identical except for a manipulation of the authors’ names/byline and athlete sex, so the subtlety of the manipulation could potentially be interpreted as an explanation for this lack of between-groups differentiation.

However, nearly a dozen years later, Brisbane et al. (2021) similarly observed no sex-based difference in rated credibility or perceived knowledge in a context where the manipulation of reporter sex was more overt. Whereas Baiocchi-Wagner and Behm-Morawitz (2010) employed a text stimulus, Brisbane et al. (2021) employed 35-s video stimuli that featured female/male journalists reporting either fact-based or opinion-based stories discussing a proposed rule change in professional (American) football. Their results showed no difference in the rated credibility or perceived knowledge of female/male reporters. In sum, studies over the decades have variably found biases against female reporters, in support of female reporters, or none at all.

Contingent Bias Observed

A more common observation across the literature is some contingent bias variably in support of or against female sports reporters, depending on multiple factors including reporter characteristics, sport being examined, participant sex, or other individual characteristics.

In terms of contingent biases against female reporters, these at times depend on the sex of the participant. For example, Etling et al. (2011) compared listener response to audio recordings of female/male reporters covering “numerous current stories and scores” (p. 9). They found that female study participants rated male reporters as more authoritative, although no such difference was evident among male participants. A nearly identical pattern was observed by Bell et al. (2022), who compared evaluations of all-male, all-female, or mixed-sex pairs of commentators in an NFL broadcast. Their results suggest that female study participants rated the all-female and mixed-sex pairs of commentators as less credible than the all-male pair. No similar pattern was observed among male participants, who rated all pairs of commentators similarly. Boczek et al. (2022) reported an identical bias in the context of soccer (European football). In their study, participants read a short teaser for an opinion-based column. Their results similarly indicated that female participants rated male reporters as significantly higher in perceived expertise than female reporters. Cummins et al. (2018) observed a similar contingent bias against female reporters; however, it was only among male study participants, who rated female reporters as less credible when delivering news and information on (American) football. Thus, these biased perceptions have variably been observed at times among female but not male participants, and vice versa. Furthermore, these variable contingent biases were observed across varied sports (i.e., professional American football, European football) or sports news.

In addition to participant sex, another relatively uniform contingent condition involves the sex of the athlete or the closely related concept of sport gender typing (i.e., stereotypically masculine v. feminine sports). Such studies often emphasize reporter-sport congruence or fit, with the assumption being that men are presumably better qualified to speak on men’s sports and vice versa. One such example is Harris’s (2013) study examining viewer response to female/male commentators offering voiceover commentary in a 45-s video excerpt of women’s/men’s college basketball. That study found that for women’s basketball, female reporters were rated as more qualified. This was particularly true among viewers who watched the most sports. However, for men’s basketball, no such effect emerged. Likewise, Mastro et al. (2012) observed contingent biases that fell along gender lines, with male reporters being evaluated more favorably when covering stereotypically masculine sports (i.e., hockey, football), and female reporters being evaluated more favorably when covering feminine sports (i.e., gymnastics; women’s basketball). Similarly, Mudrick and Lin (2017) examined evaluations of female/male reporters covering a women’s versus a men’s sport (i.e., college volleyball and college football, respectively). Their findings showed that rated fit was greater for a female reporter covering a female sport than a stereotypically male sport. Notably, this difference in perceived fit did not carry over to perceptions of reporter expertise or trustworthiness, which did not differ by reporter sex. Lastly, it also bears emphasizing that some studies employing variation in athlete sex have failed to find such contingent biases based on presumed fit or congruence (Baiocchi-Wagner & Behm-Morawitz, 2010; Ordman & Zillmann, 1994).

Other attributes of both the reporter and study participants have also been shown to govern credibility perceptions. For example, reporter attractiveness has been a topic of examination in multiple studies that have yielded mixed results. Hahn and Cummins (2014) manipulated reporter sex, athlete sex, and reporter attractiveness in their study of reader evaluations of a print Q&A story with female/male college basketball players. Although no overall bias was observed, they report a contingent bias as a function of reporter attractiveness and athlete sex, such that unattractive reporters covering the opposite sex were rated as most credible. Mudrick and Lin’s (2017) aforementioned study examining evaluations of female/male reporters likewise examined attractiveness as a categorical attribute of the reporter (i.e., high/low reporter attractiveness conditions). However, their findings were mixed and not in concert with Hahn and Cummins (2014). Specifically, Mudrick and Lin (2017) report that for male reporters, more attractive reporters were judged as more trustworthy. For female reporters, no impacts of attractiveness on expertise or trustworthiness were observed. In addition, a number of studies have treated attractiveness as a measured evaluation of female/male reporters rather than a manipulated variable. Such studies have reported that reporter attractiveness was correlated with perceived expertise (Cummins et al., 2018; Yang et al., 2022).

Lastly, a growing number of recent studies have demonstrated contingent effects as a function of an individual’s endorsement of sexist views or traditional gender roles. For example, Mudrick and Lin (2017) found that greater endorsement of gender-role stereotypes and sexist attitudes predicted decreased credibility of female sportscasters. Similarly, both Yang et al. (2022) and Olgemöller and Schupfner (2025) employed measures of sexist attitudes or support for traditional gender roles in their predictive models, both demonstrating causal relationships between those individual characteristics and credibility perceptions. Thus, inclusion of such individual-level characteristics illustrates the utility of examining not just biological sex but individual differences as an explanatory mechanism.

Having reviewed the varied outcomes across these studies, the question then turns to explanations for these inconsistencies. Differences across an assortment of study attributes may help illuminate this assortment of findings.

Differing Stimuli

Testing for effects across a robust and diverse array of message types, contexts, and repetitions is an important aspect of experimental designs in communication research (Jackson et al., 1989; Jackson & Jacobs, 1983). Doing so aids generalizability of study findings and lends weight to the argument that cause-effect relationships reflect broader concepts and not the idiosyncratic function of a singular message or unique stimulus. Thus, in this sense, the diversity of media content reflected across these studies could be viewed as a strength. However, differences in textual/audio/visual modalities reflected in the media employed, visual appearance of reporters, nature of the information (e.g., opinion vs. fact-based reportage), and more could clearly contribute to different study outcomes. Moreover, studies in this literature frequently fail to employ any type of message repetition, and many reflect single-stimulus designs where study participants see and evaluate only a single message or exemplar of a broader phenomenon (i.e., one reporter versus multiple sports reporters or stories).

Medium Effects

Among the studies reviewed, stimuli spanned print/text formats (n = 6), audio (n = 6), video only (n = 1), and audio-visual content (n = 10),² reflecting a variety of types of stories or messages. Only one study reviewed here directly compared media formats to specifically test for medium effects.³ Davis and Krawczyk (2010) drew upon media richness theory in order to examine how reporters’ visual attractiveness impacts credibility perception, but that study did not offer any specific predictions. Their stimuli were either audio recordings, a video recording without sound, or an audio-video recording of a female sports reporter reading a script about (American) football. However, group means were not reported, and differences in audience response between these formats were hard to discern.

Even within a single medium, differences in the nature of the content reflected by these experimental materials also make generalization difficult. For example, although video content reflects the most common form of experimental stimuli, these videos reflect a variety of sports-related content, including fact- and opinion-based sports reporting (e.g., Brisbane et al., 2021; Greer & Jones, 2012), or play-by-play or color commentary (e.g., Harris, 2013; Luisi et al., 2020). In some cases, the reporter was visibly depicted (e.g., Cummins et al., 2018), whereas in other stimuli the reporter was not shown (e.g., Bell et al., 2022). Thus, video stimuli varied in the nature of the information as well as visual salience or prominence of the reporter.

Visual Depiction of Reporter

Independent of medium, the visual depiction of female/male reporters is arguably another important (and inconsistent) property of stimuli employed across the literature. Most typically, studies testing for effects of reporter sex did not include visual depictions of reporters (n = 10). In these studies, manipulation was achieved via game voiceover from female/male commentators (e.g., Charitat & Cianfrone, 2023) or via female/male reporters delivering news reports (e.g., Etling & Young, 2007). A smaller number of studies (n = 7) included visual depiction of reporters, although this was manifested in different ways. For example, studies employing text/print stimuli manipulated reporter sex via a reporter photo and byline that accompanied a text passage (Boczek et al., 2022; Hahn & Cummins, 2014; Mastro et al., 2012; Mudrick & Lin, 2017), arguably a somewhat subtle manipulation. Other studies employed more overt manipulations where reporters appeared onscreen delivering news or editorial content (e.g., Brisbane et al., 2021; Greer & Jones, 2012). In a select few studies, reporters were visually depicted in some experimental conditions but not all (Davis & Krawczyk, 2010; Ordman & Zillmann, 1994). In sum, studies across the literature may have uniformly varied the biological sex of reporters, but the visual representation of those manipulations varied in prominence.

Sport Examined and Nature of Information

Although potentially more superficial, another possible reason for different findings across the body of literature is the various sports employed as the context for these studies. Again, the previous discussion of contingent effects due to sport gender typing and alignment or fit with the reporter employed may be more salient. Nonetheless, totaling across the individual sports, men’s sports (n = 19) were employed at nearly twice the rate of women’s sports (n = 10). Thus, even within the scholarly literature focusing on perceptions of female sports reporters, a bias toward men’s sports was found.

Within men’s sports, (American) football was the most common (n = 8), and stimuli focusing on this sport included depictions of actual competition (e.g., Bell et al., 2022), talking-head coverage (e.g., Cummins et al., 2018), or print news stories (e.g., Mudrick & Lin, 2017). The next most common men’s sport employed was basketball, and again, stimuli included athletic competition (e.g., Harris, 2013), sports talk (Mudrick et al., 2016), and print (e.g., Hahn & Cummins, 2014). Baseball was featured less frequently (n = 2), as were soccer/European football (n = 2) and hockey (n = 1).

With respect to women’s athletics, the most common sport employed within stimuli was basketball (n = 4). In those studies, three employed print stories on the topic and only one depicted actual athletic competition (Harris, 2013). Women’s gymnastics (n = 3) and volleyball (n = 2) also served as contexts for investigation, with stimuli reflecting actual athletic competition (e.g., Pratt et al., 2018) as well as print news coverage (Ordman & Zillmann, 1994). Lastly, softball (n = 1) and women’s figure skating (n = 1) were also employed, and one study included tennis as a gender-neutral sport.

Differing Theories

Despite the varied study outcomes, some greater consistency emerges with respect to the theoretical or conceptual frameworks employed as a vantage point for testing potential biases surrounding female sports reporters. Although precise articulations of and sources for these theories/perspectives vary, many of these studies are rooted in the notion of sports as a traditionally masculine domain. As such, women are systemically disadvantaged when discussing the topic. This sentiment cuts across a variety of related perspectives, including male hegemony/hegemonic masculinity (Boczek et al., 2022; Etling et al., 2011; Etling & Young, 2007); gender schema theory (Cummins et al., 2018; Etling et al., 2011; Greer & Jones, 2012; Hahn & Cummins, 2014); social/gender role theory (Luisi et al., 2020; Mudrick et al., 2016; Mudrick & Lin, 2017; Olgemöller & Schupfner, 2025; Yang et al., 2022); or merely stereotypes (Davis & Krawczyk, 2010; Harris, 2013; Mastro et al., 2012). Likewise, notions of “fit” also fall under this umbrella based on the stereotypical notion that female reporters are better suited for coverage of female athletes (Mudrick & Lin, 2017).

These studies have generally (although not consistently) yielded findings in support of this argument, demonstrating overall biases or contingent biases against female sports reporters as credible sources of sports information (Cummins et al., 2018; Etling et al., 2011; Etling & Young, 2007; Luisi et al., 2020). However, findings employing this perspective are not perfectly uniform and also provide evidence directly contradictory to schema/stereotype-oriented frameworks (e.g., Greer & Jones, 2012).

A second common theoretical framework employed across this literature is Social Identity Theory (SIT; Tajfel & Turner, 1979). That theory generally holds that individuals associate or identify with broader social categories or in-groups based on some salient criteria (e.g., race/ethnicity; sex/gender; team affiliation). Furthermore, individuals seek to maintain positive social status through association with positive or successful in-groups and derogation of salient out-groups. For example, SIT was employed in the broader context of sports to explain differences in the extent to which fans display in-group affiliation via “basking in reflected glory” after team victories (BIRGing; Cialdini et al., 1976), or “cutting off reflected failure” (CORFing; Snyder et al., 1986) or “cutting off future failure” (COFFing; Wann et al., 1995) after team losses.

In the context of sports reporting, studies have generally posited that these biases impact individual response such that membership in associated in-groups based on reporter/participant sex will impact perceptions of or responses to reporters (Baiocchi-Wagner & Behm-Morawitz, 2010; Bell et al., 2022; Brisbane et al., 2021; Pratt et al., 2018).⁴ However, among the studies employing this theoretical framework, findings have been inconsistent. For example, several studies employing SIT have failed to find predicted biases in perceived credibility based on reporter/participant sex (Baiocchi-Wagner & Behm-Morawitz, 2010; Bell et al., 2022; Brisbane et al., 2021). Furthermore, although Pratt et al. (2018) found support for their hypotheses, examination of the stated predictions suggests better fit with aforementioned schema/gender role/stereotype-based perspectives than Social Identity Theory. Thus, support for SIT as a mechanism explaining potential gender/sex biases remains in question.

Lastly, an assortment of additional theoretical or conceptual frameworks has been invoked in studies testing for sex-based biases in credibility perceptions. Although virtually all the studies reviewed here discussed source credibility extensively as a central concept, several studies have named source credibility as the theoretical basis for study predictions (Charitat & Cianfrone, 2023; Mudrick & Lin, 2017; Yang et al., 2022). Additional frameworks, including Media Richness Theory (Davis & Krawczyk, 2010), Objectification Theory (Cummins et al., 2018), and Communication Accommodation Theory (Mastro et al., 2012), have been used to support predictions surrounding differences in individual response to competing media forms or female/male sports reporters, respectively.

Differing Samples

Given the present focus on experimental designs, a common attribute of these studies is the use of human subjects as sources of data with respect to the impacts of the manipulated message attributes. However, the nature of these samples varies in terms of demographics, means of recruitment, as well as sample size.⁵ The total number of participants employed across these studies was 5,676, with an average of 283.80 (SD = 147.06) participants per study. The smallest sample reported was N = 66 (Cummins et al., 2018), and the largest was N = 635 (Boczek et al., 2022).

With respect to the demographic composition of samples, the majority of articles reviewed here (n = 12) generally relied on samples of undergraduate students, reflecting just under half the participants employed in this literature (undergraduate participant n = 2,369; 41.75%). At times, these were explicitly labeled as convenience samples (e.g., Pratt et al., 2018), whereas other articles simply referred to participants as undergraduate students (e.g., Baiocchi-Wagner & Behm-Morawitz, 2010). Furthermore, the nature of these student samples was at times acknowledged as a study limitation (e.g., Luisi et al., 2020) or possible explanation for study outcomes. For example, Greer and Jones (2012) noted that a majority of participants in their study were “women majoring in communication” (p. 76), and offered that as a possible explanation for their observation that the female reporter was rated as more competent than the male reporter employed in their stimuli.

The composition of the remainder of the samples varied, although most reflect the use of online survey-experiments with participants recruited through a variety of approaches. For example, several studies (Bell et al., 2022; Boczek et al., 2022; Brisbane et al., 2021; Mudrick et al., 2016) employed paid participants recruited through Amazon Mechanical Turk (MTurk), Qualtrics, or unspecified panel vendors, reflecting roughly 40% of the total body of participants across these studies (n = 2,389; 42.09%). Other online studies employed ostensibly purposive samples appropriate to the specific study, such as Chinese NBA viewers (Yang et al., 2022) or League of Legends players (Charitat & Cianfrone, 2023).

Finally, some studies also referenced some means of checking the quality of participant responses. Such efforts include removing participants who completed the study too quickly (Boczek et al., 2022) or applying other attention checks, such as requiring correct answers to questions regarding the study stimuli (Bell et al., 2022; Yang et al., 2022).

Differing Measures

One important property of empirical research that contributes to the accumulation of knowledge during normal science is uniformity in measurement. Unlike our peers in the STEM disciplines who may have the advantage of highly standardized measures endorsed by a governing body (e.g., the National Institute of Standards and Technology), those of us within the social sciences operate with greater latitude, particularly with respect to the central focus of this review, “credibility.”

On the one hand, studies reviewed here have generally drawn upon a few consistent and popular measures of credibility. On the other, actual application of these measures has varied in important ways, including use of only select subscales, abbreviated or adapted versions of the measures, or amalgamations of separate subscales into idiosyncratic composite measures for analysis. The result is at times an apples-to-oranges comparison across the literature, or at the very least, comparison of different varieties of apples.

McCroskey’s (1966) measure of credibility is a broadly cited multi-dimensional instrument that captures authoritativeness and character. The original measure consisted of 22 and 20 seven-point Likert-type statements, or abbreviated 12-item semantic differential versions, to capture authoritativeness and character, respectively. However, scholars examining credibility of sports reporters have employed that scale in differing ways. Baiocchi-Wagner and Behm-Morawitz (2010) acknowledged that the original instrument consisted of separate subscales measuring authoritativeness and character, but they then reported results suggesting that all items were combined into a single measure of credibility. Etling and Young (2007) also employed the measure from McCroskey to assess perceived authoritativeness, but they reported adapting a smaller subset of 15 of the 22 items from the original scale. Greer and Jones (2012) also report drawing upon McCroskey (1966), focusing on “competency” as their construct of interest. Lastly, Olgemöller and Schupfner (2025) report using the competence sub-scale from McCroskey and Teven (1999). Thus, all four studies drew upon or cited the same general measure, but in different ways, focusing on different components of credibility.

Another common measure of credibility is the work of Berlo et al. (1969). That scale was likewise a multi-dimensional scale designed to capture three aspects of source credibility (i.e., safety, qualification, and dynamism), each measured via five semantic differential items. A small number of studies reviewed here (n = 4) drew upon items from this measure. For example, Harris (2013) employed discrete measures of dynamism and qualifications. Similarly, Luisi et al. (2020) also employed measures of dynamism and qualifications from Berlo et al. (1969). However, they combined these subscales into a single measure of credibility for subsequent analysis. Thus, dependent measures from the two studies that employed the same credibility scale are again close but not perfectly uniform.

Permutations of Ohanian’s (1990) measure may be the most common across the literature, appearing in 7 of the studies reviewed here. Ohanian’s (1990) scale captures the dimensions of expertise, trustworthiness, and attractiveness, each via 5 adjectives. Again, however, applications of this measure vary. Some studies report employing discrete subscales, such as Boczek et al. (2022) and Yang et al. (2022), who employed the expertise subscale. Likewise, Mudrick and Lin (2017) and Charitat and Cianfrone (2023) employed separate measures of expertise and trust(worthiness) in their studies. However, other studies report using variable permutations. For example, Hahn and Cummins (2014) and Cummins et al. (2018) report combining the items from that scale based on factor analytic results that suggested only two dimensions, which they labeled “credibility” and “attractiveness.”

Furthermore, some studies report varied combinations of multiple measures. For example, Mudrick et al. (2016) report using Ohanian’s (1990) measure of trustworthiness, attractiveness, and expertise, along with Berlo et al.’s (1969) measure of dynamism, and then combining all into a single construct of credibility. Likewise, Bell et al. (2022) report using measures of dynamism and qualifications from Berlo et al. (1969) as well as authority and character from McCroskey (1966). Their method section reports separate reliability measures for each subscale. However, their results report findings regarding a single credibility measure, suggesting that all items were combined into a single dependent measure.

Lastly, a small number of studies do not explicitly cite a source for the credibility measures employed. For example, Ordman and Zillmann (1994) and Mastro et al. (2012) report the items used within their method, but a source for these measures was not offered. Similarly, Davis and Krawczyk (2010) fully report the items used in their study via an appendix. But again, a source was not offered, and review of those items suggests single-item measures of expertise, dynamism, etc. Brisbane et al. (2021) report four items used to capture perceived credibility, but a source is not offered, and results suggest that items were combined into a single measure. Thus, across these studies generally studying “credibility,” varied differences in operationalization contribute to measurement error (Loken & Gelman, 2017) and prohibit direct comparisons.

Beyond these measures of credibility, studies have employed an assortment of other tools to capture other variables of interest. As previously noted, adherence to or endorsement of traditional gender roles/norms has emerged as an important variable to help explain perceived credibility of female/male sports reporters (Mudrick & Lin, 2017; Yang et al., 2022). However, measurement of these concepts varied. For example, Etling and Young (2007) and Etling et al. (2011) used items from Benson and Vincent’s (1980) Sexist Attitudes Toward Women Scale and Swim et al.’s (1995) Old Fashioned Sexism Scale. Mastro et al. (2012) likewise cited the work of Swim et al. (1995), noting that items were modified from that study to measure gender-based attitudes as a covariate in their analysis. Mudrick et al. (2016) also cite the work of Swim et al. (1995) but instead refer to the measure as the modern sexism instrument, and they also used Levant et al.’s (2007) Male Role Norms Inventory-Revised to assess support for traditional male gender roles. Olgemöller and Schupfner (2025) report using items that “align closely” with the Swim et al. (1995) measure (p. 7).

Boczek et al. (2022) also assert the importance of individual endorsement of traditional gender roles, but they assessed this trait via a measure of right-wing authoritarianism (RWA; Manganelli Rattazzi et al., 2007). Lastly, Yang et al. (2022) employed support for traditional gender roles as a moderator in their study of the effect of auditory cuteness on perceived expertise, citing Brown and Gladstone’s (2012) measure of the construct. Thus, although multiple studies have asserted the importance of gender role beliefs, they have measured these beliefs via different scales.

Discussion

Despite growth in female participation in sports (McGuire, 2025) and sports viewing (Nielsen, 2023), biases surrounding women’s role in sports journalism persist in varied forms. This paper reviewed 30 years of scholarly research using experimental studies designed to test the impact of reporter sex on audience perceptions of reporter credibility in order to document consistencies and discrepancies in study findings, as well as various study attributes that may contribute to differing outcomes. Among the articles reviewed here, an equal number of studies alternately found biases against female reporters (e.g., Ordman & Zillmann, 1994), in favor of female reporters (e.g., Pratt et al., 2018), or no bias whatsoever (e.g., Baiocchi-Wagner & Behm-Morawitz, 2010). More commonly, these biases were dependent upon various study attributes, such as participant sex and/or athlete sex (e.g., Etling et al., 2011), reporter attractiveness (e.g., Mudrick & Lin, 2017), or endorsement of traditional gender roles (e.g., Yang et al., 2022). Thus, consistent accumulation of knowledge is hampered by inconsistency in findings and sometimes directly contradictory outcomes, leading to the question posed by this essay’s title: What do we know? Despite these multiple studies collectively employing more than 5,000 research participants, the answer remains somewhat inconclusive.

One possible and intuitive explanation for these varied study findings over the decades is broader social change regarding normative perceptions surrounding female participation in sport. For example, the National Collegiate Athletic Association (NCAA) reports double-digit growth in women’s participation and leadership in college sports over the past decade (McGuire, 2025). Ongoing coverage of Olympic games has documented increased representation of women’s sports both in newspapers (Dean & Somaini, 2024) and televised coverage of the games (Angelini & Arth, 2022). In professional sports, increased viewership of the WNBA has been widely celebrated, attributed to interest in star athletes such as Caitlin Clark (Bachman, 2024) and increased interest overall (Poole, 2025). With this growth, changes in normative perceptions could arguably follow, which would seemingly be reflected in diminished biases regarding credibility perceptions. However, the three studies reviewed here that documented overall biases against female sports reporters were published at roughly equal time intervals, each separated by more than a decade (i.e., Ordman & Zillmann, 1994; Etling & Young, 2007; Luisi et al., 2020). Thus, changes in normative perceptions would not seem to be reflected. Furthermore, scattered among these chronologically were other studies variably demonstrating opposite effects, no effects, or contingent effects. As such, changes in social norms may be plausible, but the scholarship reviewed here cannot reliably speak to such changes as a singular explanatory mechanism.

Accumulation of Knowledge during Times of Normal Science

Returning to Kuhn’s (1996) thoughts on the work that characterizes normal science, many of the studies reviewed here certainly reflect that type of research. Recall that during periods of normal science, researchers operate under a shared paradigmatic framework, employing similar approaches to study design and measurement to develop more granular knowledge of the area of inquiry. If one were to subscribe to this view, the early work of Ordman and Zillmann (1994) clearly establishes a blueprint for much of the research that followed: between-subjects manipulations of media messages in order to effect variation in reporter sex, random assignment of male/female research participants to experimental conditions, and measurement of some form of perceived or rated credibility. Much of what followed largely mimics this general design, incrementally adding other variables (e.g., athlete gender, audience, and reporter characteristics) to provide more nuanced detail to what’s known about this cause-effect relationship. However, the assortment of at-times contradictory findings does not reflect a consistent accumulation of knowledge, and the sometimes unsystematic way this research has collectively unfolded could possibly help explain differences in study findings.

Stimuli, Sport, and Replication

As noted here, research in this area reflects notable differences in media platforms and modalities, visual prominence of the manipulation of reporter sex, sport and sex of the athlete, theories used to undergird the predicted effects, and measures used to capture potential effects. The end result is arguably a failure to systematically replicate study findings in order to more confidently demonstrate robustness of effects over time and context. To be fair, this is hardly limited to research on this specific topic, and the “replication crisis” has been observed in other social scientific disciplines (Jensen et al., 2023; Maxwell et al., 2015). Although straightforward replication could help illuminate sometimes contrasting findings, careful consideration of various study and design attributes noted here could also aid systematic progression within the literature.

With respect to the messages tested in the studies reviewed here, their generally common feature is media coverage of sports. However, this reflects a remarkably large universe, and wide differences in study stimuli likely have some bearing on varied study outcomes. Moving forward, exploring these differences in a way that is explicitly grounded in the meaningful differences afforded by competing media platforms is needed in order to better speak to potential medium impacts. Questions surrounding the impact of competing media platforms on credibility perceptions are hardly novel or unique to sports communication (e.g., Lee, 1978; Newhagen & Nass, 1989), and impacts of media form have been broadly documented (e.g., Bracken, 2006; Kiousis, 2006). In short, what differences between media platforms and messages should make a difference, and why?

With respect to both the medium being tested as well as type of message, differences can variably magnify or minimize the role of the reporter, a central aspect of this research. For example, text-based media lend themselves to easy experimental manipulation in the form of alternate bylines/reporter photographs next to a story (e.g., Boczek et al., 2022; Hahn & Cummins, 2014; Mastro et al., 2012). However, this also may moderate potential impacts on credibility perceptions if readers selectively attend to or choose to ignore this message element. In audio-visual stimuli, reporter sex is more overt and persistent in the form of the reporter’s voice (e.g., Bell et al., 2022) or physical appearance (e.g., Greer & Jones, 2012), which arguably would yield greater impacts on viewer response.

With respect to the visual inclusion of the reporter within the message, depiction is important to the extent that it helps make reporter sex an overt cue that could influence perceptions. Too subtle a manipulation could serve as a possible explanatory mechanism for lack of effects/differences in perception (e.g., Baiocchi-Wagner & Behm-Morawitz, 2010). Moreover, studies employing Social Identity Theory rest upon salience of reporter sex to denote in-group/out-group status among study participants (e.g., Brisbane et al., 2021), and prominence of the reporter could play a key role in helping activate that salience. Lastly, visual depiction of the reporter is also important in study designs that have incorporated reporter attractiveness in some fashion (e.g., Yang et al., 2022). All these considerations may have direct bearing on reader/viewer response, depending on the proposed theoretical mechanism at work.

With respect to the sport being examined, a key consideration is obviously athlete sex, which has been a factor in some studies (e.g., Harris, 2013; Mudrick & Lin, 2017). Beyond this, broader normative perceptions surrounding stereotypically masculine and feminine sports also merit persistent consideration (e.g., Pratt et al., 2018) in order to more clearly and consistently map the terrain of extant knowledge.

Theory and Replication

With respect to theoretical frameworks, scholarship in this domain has relied on competing perspectives, and no single approach has emerged as dominant. Thus, a goal of future work is to identify theories that are supported by study findings and rule out those that fail to explain observed biases. To be certain, these competing perspectives are not necessarily a weakness or at odds; they’re just different. Furthermore, study findings have variably supported (Etling & Young, 2007) or failed to support (Baiocchi-Wagner & Behm-Morawitz, 2010) theoretically derived predictions. In the case of the former, future work should continue to explore these relationships in order to identify limiting conditions or meaningfully expand contexts and applications. For example, if future work continues to examine esports (e.g., Charitat & Cianfrone, 2023) or other new forms of competition, researchers should explicitly articulate precisely what attributes surrounding this novel context could/should present novel findings. In the case of theoretically driven studies where predictions are not supported, genuine consideration must be given to why the lack of support was discovered: Is the theory no longer valid (and why)? Was there a flaw in the research design that yielded the finding (e.g., lack of salience of reporter sex within the stimulus)?

In both scenarios (i.e., studies demonstrating support for predictions, as well as lack of support), it is paramount that researchers more directly test the theoretical mechanisms at work, as this allows greater confidence in support for or elimination of a given theory. Take the notion of stereotypes/schema as an explanatory framework. Brisbane et al.’s (2021) study predicting sex-based differences in perceptions of female/male journalists failed to find evidence supporting this hypothesis. In that study, they offered stereotyping as a theoretical mechanism, arguing that stereotyping is a two-stage process whereby stereotypes are automatically activated in the first stage and then applied to an encounter in the second stage. Thus, failure to support the predicted findings could alternately be the result of (a) broad, normative changes in these stereotypes, (b) individual-level differences in endorsement of these stereotypes, (c) failure within study participants to automatically activate the stereotype, or (d) failure to apply the stereotype. Careful, precise measurement is needed to verify both individual-level belief in and activation of these stereotypes, rather than merely presuming stereotypical views. In the case of the final possibility, failure to apply stereotypes when evaluating a target could be the result of sensitization to the study purpose (Leustek, 2017). Thus, it could be that the theory is incorrect, or it could be that it was not directly tested.

Similarly, studies relying on Social Identity Theory (SIT) as the explanatory mechanism would be well served to employ manipulation checks to affirm individual recognition of and membership in a social group. Furthermore, that group membership must be salient during viewing in order to impact subsequent evaluations, and failure to support SIT-derived predictions could be a function of lack of salience of the specific identity in question. Lastly, Social Identity Theory also holds that individuals may hold multiple identities that are not mutually exclusive (Campo et al., 2019; Dalai & Naraine, 2024). This invites questions of which identity is most salient when making subsequent judgements. In sum, careful and more direct measurement of concepts and theoretical mechanisms is needed to help explain and hopefully alleviate inconsistent findings across the literature.

Measurement (and Inclusion) of Key Concepts

This careful testing of theoretical mechanisms also invites careful attention to measurement of key concepts, as well as their integration into research designs. As noted at the outset of this essay, a common hallmark of these studies is factorial manipulation of reporter sex and oftentimes participant sex. However, observers have long noted the distinction between biological sex and the more sociologically oriented concept of gender, which invokes broader discussions of identity and social norms (Butler, 1990). Furthermore, gender identity has been robustly embraced by some exploring the nexus of sport and communication (Kane et al., 2013; Lenskyj, 2012). Despite this, most of the work reviewed here adheres to binary definitions of reporter/participant sex.

Thus, perhaps the greatest need in advancing this literature is to more strongly integrate gender, both as a function of an individual participant’s identity as well as endorsement of traditional gender-role norms as an explanatory mechanism. On the latter, a select few studies reflect this shift to varying degrees (Boczek et al., 2022; Brisbane et al., 2021; Mudrick et al., 2016; Yang et al., 2022). Future work exploring biased perceptions needs to more precisely capture the more nuanced concept of gender instead of, or in combination with, biological sex. Furthermore, use of these measures in more sophisticated analyses (e.g., mediation/moderation) has potential to advance our understanding of these biases beyond statistical procedures that more strongly rely on categorical measures. For example, Yang et al.’s (2022) recent work employing moderation analysis found that the more participants embraced traditional/stereotypical gender roles, the lower the perceived expertise of a female podcaster. Furthermore, gender role beliefs also moderated the relationship between expertise and intent to continue the podcast. Thus, future work should directly assess gender identity as well as support of stereotypical gender roles for inclusion in analyses, as recent studies demonstrate the potentially greater predictive ability of these concepts compared to simple biological sex or sport gender typing (Olgemöller & Schupfner, 2025; Yang et al., 2022).

In addition, as previously noted, the key outcome across this entire body of literature is the notion of credibility or a close analog. However, operational measurement of this outcome varies across the literature, inhibiting direct comparisons between studies. On the one hand, differing scales may be required due to differences in context. For example, returning to earlier concerns regarding stimuli selection, some media may emphasize select attributes of credibility that are less salient in other contexts (e.g., dynamism; Metzger et al., 2003). Furthermore, credibility could be variably tied to the reporter, news organization, or medium, further adding imprecision to measurement. Thus, a modicum of flexibility may be justified.

Nonetheless, recall that one attribute of a paradigm is shared approaches to measurement among scholars working within an area of research (Kuhn, 1996). Thus, future work exploring biased perceptions should not only identify the source of measurements employed (i.e., what measure is being used) but also embrace greater uniformity in use of these measures. Greater consistency in measurement represents one modest means of ruling out measurement differences as a potential explanation of differing study outcomes. For example, although a case could certainly be made for alternate measures depending on study context, Ohanian’s (1990) scale including expertise, trustworthiness, and attractiveness has been most commonly employed and also reflects important dimensions that encapsulate distinct aspects of reporter performance and characteristics.

Conclusion

Despite the differing outcomes reflected within the literature reviewed here, the wealth of research exploring biased perceptions of female reporters offers one silver lining: these biases have been broadly recognized by scholars who are committed to continued investigation using an array of tools, methods, or approaches. Fervent interest in this topic remains, and the hope is that this review illuminates some of the possible reasons for these differing study outcomes and encourages greater consistency and systematic accumulation of knowledge to help identify, explain, and overcome these biased perceptions. In closing, the following recommendations are offered:

• Fully and exhaustively consult the relevant literature in order to draw parallels with past studies. Review of the articles cited herein reveals that some fail to consult all the scholarship in this area, overlooking some contributions. Future work should fully connect to the literature in this area in order to compare and contrast findings. Consistencies within cited literature should be explicitly noted, and discrepancies must be carefully and thoroughly accounted for in future work.
• Design research scenarios and employ study stimuli that embrace the salient attributes that may have causal impact (e.g., visual prominence of the reporter). As noted above, differences in study stimuli can variably minimize or emphasize the role of the reporter, their visual and aural prominence, and the type of information presented. All these should be carefully considered and explicitly accounted for in research designs and, again, connected back to relevant literature to illuminate consistent and discrepant findings.
• Carefully and, when possible, directly measure theoretical processes and causal variables (e.g., identity salience; endorsement of traditional gender roles). Explicitly examining these can help better test theoretically derived predictions, explain study findings, and rule out unsupported theoretical approaches.
• Draw upon established measures appropriate to the study context, balancing the unique attributes of a given research design while also connecting with the literature broadly. Furthermore, use these measures in a manner consistent with their development and with past literature. Again, consistency in measurement is a relatively modest means of aiding the systematic advancement of the literature.

Lastly, it bears repeating that the present study has focused solely on experimental designs with categorical manipulation of independent variables, and a host of additional literature can offer valuable insights. Furthermore, recent studies have embraced more holistic predictive/causal models that can integrate a wider constellation of variables and characteristics, and such approaches hold great potential for illuminating important variables in addition to and in conjunction with reporter sex. Thus, greater use of such approaches can help further advance this decades-old line of inquiry.

Footnotes

Ethical Considerations

This study does not involve collection of data from human subjects but instead reflects the analysis of existing information/documents. No ethical approval or informed consent was sought for the conduct of this research.

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: The author whose name is listed immediately above certifies that he has NO affiliations with or involvement in any organization or entity with any financial interest, or non-financial interest, in the subject matter or materials discussed in this manuscript.