Abstract

Due to the rise of fraudulent responders, whether human or automated bots, researchers must carefully monitor the quality of online survey data. The literature on preventing and detecting fraudulent responders is growing, but how often researchers encounter fraudulent responders, and what those encounters are like, remains unknown. Through an online survey of researchers who have published studies using online surveys, this study explores researchers’ (

Introduction

Over the past two decades, online survey research has increased dramatically and has become a leading data collection approach among social scientists and other researchers (Chandler & Shapiro, 2016; Daikeler et al., 2020; van Quaquebeke et al., 2022). This growth is primarily due to the ease of distributing online surveys, their cost-effectiveness in recruiting larger samples, and their ability to reach populations that would otherwise be difficult to access (Bybee et al., 2022; Chandler & Shapiro, 2016; Coppock, 2019; Donegan & Gillan, 2022; Evans & Mathur, 2018; Hammond, 2018; Lawrence et al., 2023; Wright, 2005). Many researchers use online surveys because they can be easily distributed via email with anonymous or individualized links, through online panels (e.g., Qualtrics, Amazon Mechanical Turk), or through social media posts or advertisements (Vehovar et al., 2016).

While online surveys offer several benefits, it behooves researchers to monitor and maintain the quality of online survey data. Although the problem is not exclusive to online surveys, researchers need to be aware of the potential for careless responding (Ward & Meade, 2023), which occurs when participants do not read the entire survey question or are unable to understand it due to differences in comprehension capabilities (Lopez & Hillygus, 2018). There is also the possibility that a participant accidentally takes an online survey more than once (Lawlor et al., 2021). More recently, a critical aspect of data quality that researchers need to be vigilant about is the rise of fraudulent responders (also known as “

A fraudulent human responder is someone who is deliberately dishonest in an online survey, assuming a false identity or providing fake answers (Lopez & Hillygus, 2018) and/or trying to take the survey more than once using phony profiles (Zhang et al., 2022). An automated bot, also known as a survey bot, is a program written by a human to answer online surveys automatically, frequently more than once, while providing fake data (Kennedy et al., 2021; Storozuk et al., 2020). Importantly, both human and bot fraudulent responders act with malicious intent and deliberately misrepresent themselves, often to collect the monetary incentives offered for online survey participation (Bethlehem, 2010; Hulland & Miller, 2018; Kumarasamy et al., 2024; Lawlor et al., 2021; Wright, 2005). Beyond the added expense of wrongly paying incentives to fraudulent responders, their data can also undermine the reliability of research findings if included in the analyses (Agrawal et al., 2025).

While a growing number of researchers have published accounts of their experiences with fraudulent responses (e.g., Arevalo et al., 2022; A. M. Bell & Gift, 2023; C. J. Bell et al., 2020; Brainard et al., 2022; Bybee et al., 2022; Griffin et al., 2022; Kumarasamy et al., 2024; Pozzar et al., 2020; Salinas, 2022), it is still unknown how prevalent this issue is among online survey researchers. Awareness of a technology-related issue, such as fraudulent responders accessing online surveys, may strongly influence intentions to implement prevention strategies (Dinev & Hu, 2007). It is also unknown whether researchers who have and have not experienced fraudulent responders differ in their knowledge of them. This study contributes to the literature by addressing the following research questions:

(1) How many online survey researchers have experienced fraudulent responses affecting their surveys?

(2) Does researchers’ knowledge of fraudulent responders in online surveys differ between those who have experienced fraudulent responders (EFR) and those who have not experienced fraudulent responders (NEFR) in their surveys?

(3) How do researchers handle fraudulent responders affecting their online surveys?

Methods

Recruitment and Data Collection

We identified researchers who had published peer-reviewed journal articles based on online surveys between 2017 and 2022 by searching the Web of Science Core Collection database. The search terms included “online survey,” “web survey,” or “web-based survey.” The language was set to English and the region to the United States. The search yielded 15,204 publication results, with 12,258 unique email addresses. In this study, we kept only the email addresses from academic institutions (i.e., those ending with an “.edu” domain), resulting in 7,799 academic researchers. Initial recruitment emails were sent on May 16, 2023, with follow-up reminder emails sent on May 25 and June 1. One email failed to deliver, and 655 emails bounced back, resulting in 7,143 successfully delivered emails. To prevent fraudulent responses and ensure data quality, each researcher was invited to participate via an email containing a personal survey link. The survey link directed the participant to the informed consent, which provided information about the study, including the risk of harm and potential benefits of the research, and explained that they could stop participating in the study at any point. The study was designed to minimize the risk of harm to participants by using a non-invasive online survey, giving participants the choice to opt in or stop participating, and not asking sensitive questions. Participants were informed that the results of the study could lead to better-quality data and potential policy implications. Those who opted to participate clicked the reCAPTCHA box at the bottom of the informed consent webpage and were directed to the online survey, which took approximately 10 minutes to complete. After completing the survey, participants could enter their name and email address in a drawing for a $25 Amazon gift card (one gift card for every 100 participants).
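The recruitment funnel involves two simple steps that are easy to reproduce: keeping only addresses with an “.edu” domain, and subtracting undelivered invitations before computing a response rate. The Python sketch below re-derives the reported counts. The sample email addresses are hypothetical, and treating delivered invitations as the response-rate denominator is our assumption, although dividing 758 responses by the 7,143 delivered emails does reproduce the 10.6% response rate reported in the next paragraph.

```python
def is_academic(email: str) -> bool:
    """Inclusion rule described above: keep addresses whose domain ends in .edu."""
    domain = email.rsplit("@", 1)[-1].lower()
    return domain.endswith(".edu")


def delivered_count(sent: int, failed: int, bounced: int) -> int:
    """Invitations that actually reached an inbox."""
    return sent - failed - bounced


def response_rate(responses: int, delivered: int) -> float:
    """Response rate in percent, assuming delivered invitations as the denominator."""
    return 100 * responses / delivered


# Hypothetical addresses, used only to show how the domain filter behaves.
sample = ["a.smith@univ.edu", "j.doe@company.com", "r.lee@College.EDU"]
academic = [e for e in sample if is_academic(e)]

# Counts reported in the text.
delivered = delivered_count(sent=7799, failed=1, bounced=655)
rate = response_rate(responses=758, delivered=delivered)
print(academic, delivered, f"{rate:.1f}%")
```

Lower-casing the domain before the check means mixed-case addresses such as “College.EDU” are still treated as academic.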
The study procedure was approved by the university’s Institutional Review Board. The survey response rate was 10.6%, or 758 responses. Participants were excluded from the final sample due to completing less than 70% of the survey (

Measures

In this online survey, participants were asked about their experiences with online surveys, including questions related to fraudulent responders, or “scammers.” Throughout the survey, we used the term “scammer” for simplicity and ease of reading. Participants were first given the following definition: “Scammers refer to human or automated respondents (e.g., bots) who are deliberately fraudulent in their responses (e.g., for monetary incentive, to sway the results) and may complete an online survey multiple times.”

Knowledge of Fraudulent Responders in Online Surveys

Participants rated their level of agreement with five statements pertaining to their knowledge of fraudulent responders in online surveys. The five statements, adapted from Dinev and Hu (2007), included: (1) “I am aware of the online survey scammer problems and consequences,” (2) “I discuss with colleagues and/or people around me about scammers in online surveys,” (3) “I read about the problems of scammers infiltrating online surveys,” (4) “I am confident that I can remove scammers responses from my online survey data,” and (5) “I am confident I can prevent scammers from infiltrating my online surveys.” Responses were measured on a 5-point Likert scale ranging from 1 (

We asked participants, “How difficult do you think it is to protect online surveys from scammers?” Response was measured on a 5-point Likert scale ranging from 1 (

Online Survey Experience With Fraudulent Responders

Participants were asked, “Have you experienced scammers affecting your online survey research?” Response options included:

Next, we wanted to know what participants did once they determined their study had been infiltrated by fraudulent responders. We asked two questions. “What did you do when you determined that your study had been affected by scammers

Finally, we asked three questions about the quality of the data. “What percentage of the completed responses did you identify as scam responses?” Response options included: 1 (

Demographic Information

Participants' age, gender, race/ethnicity, and level of education were measured. “What is your age in years?” Response options ranged from 18 to more than 90 in increments of 1 year. “What is your gender?” Response options included:

Data Analysis

Given the exploratory nature of this study, data were primarily analyzed descriptively. The differences in knowledge of fraudulent responders between the two groups (i.e., experienced fraudulent responders vs. not experienced fraudulent responders) were compared using Welch’s
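Welch’s t test compares two group means without assuming equal variances, a reasonable default when groups such as EFR and NEFR participants differ in size and spread. As a minimal sketch (not the authors’ analysis code), the standard-library Python below computes the Welch t statistic, the Welch-Satterthwaite degrees of freedom, and Cohen’s d, the effect size reported with the results. Using the pooled standard deviation for d is an assumption, since several variants exist, and the ratings are hypothetical 5-point Likert responses.

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    se2_a = variance(a) / len(a)  # squared standard error of mean(a)
    se2_b = variance(b) / len(b)  # squared standard error of mean(b)
    t = (mean(a) - mean(b)) / math.sqrt(se2_a + se2_b)
    df = (se2_a + se2_b) ** 2 / (
        se2_a ** 2 / (len(a) - 1) + se2_b ** 2 / (len(b) - 1)
    )
    return t, df


def cohens_d(a, b):
    """Cohen's d using the pooled sample standard deviation (one common variant)."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / math.sqrt(pooled_var)


# Hypothetical agreement ratings (1-5) on a single knowledge item.
efr = [5, 4, 5, 4, 5, 3, 4]
nefr = [3, 4, 2, 3, 4, 3]

t, df = welch_t(efr, nefr)
print(f"t = {t:.2f}, df = {df:.1f}, d = {cohens_d(efr, nefr):.2f}")
```

In practice, `scipy.stats.ttest_ind(a, b, equal_var=False)` returns the same Welch statistic together with a p-value.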

Results

A little over one-third of the participants (

Experience with Fraudulent Responders.

Participant Characteristics

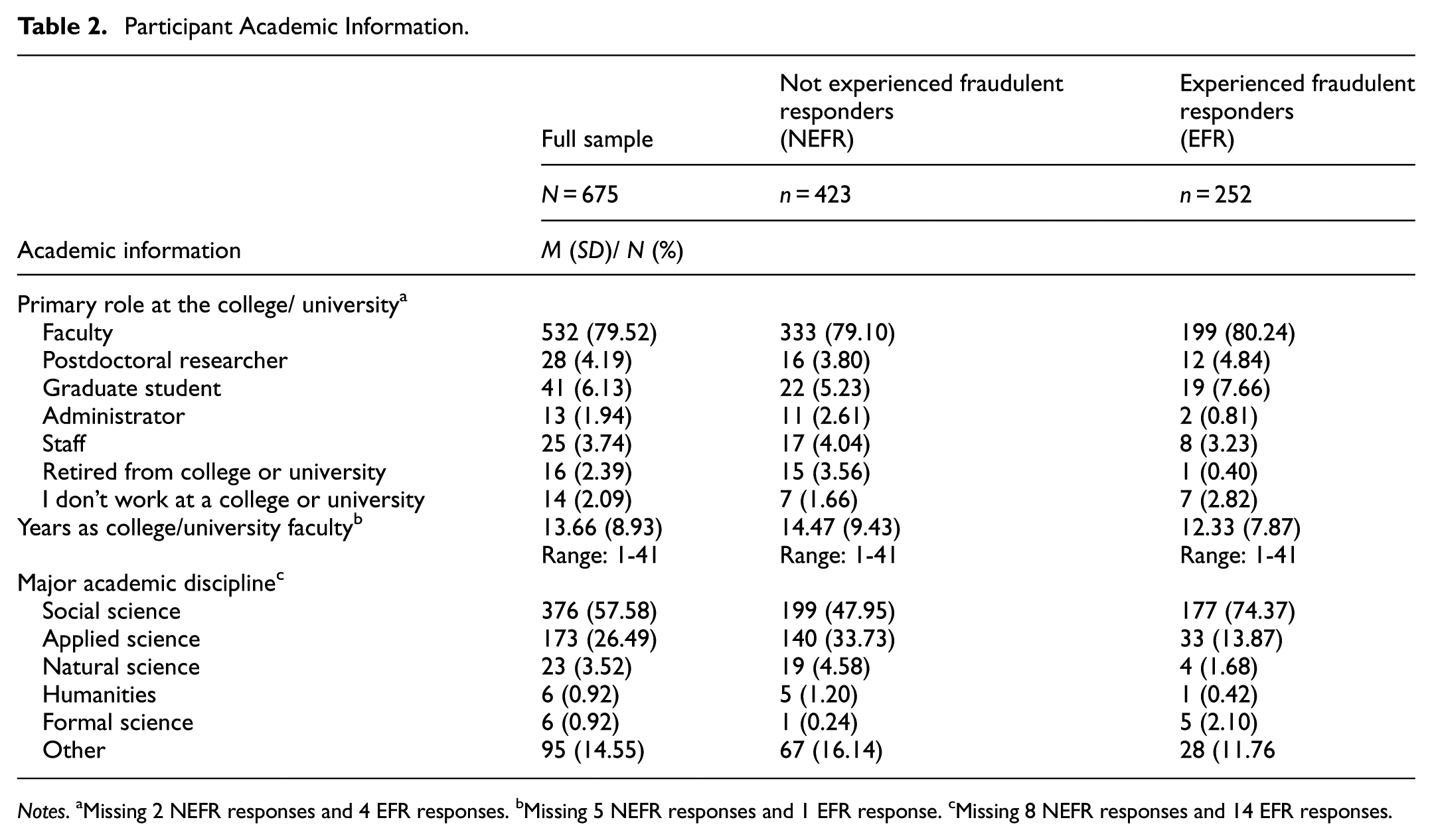

Participants in this study were, on average, aged 44.94 years (

Participant Characteristics.

Participant Academic Information.

Knowledge of Fraudulent Responders

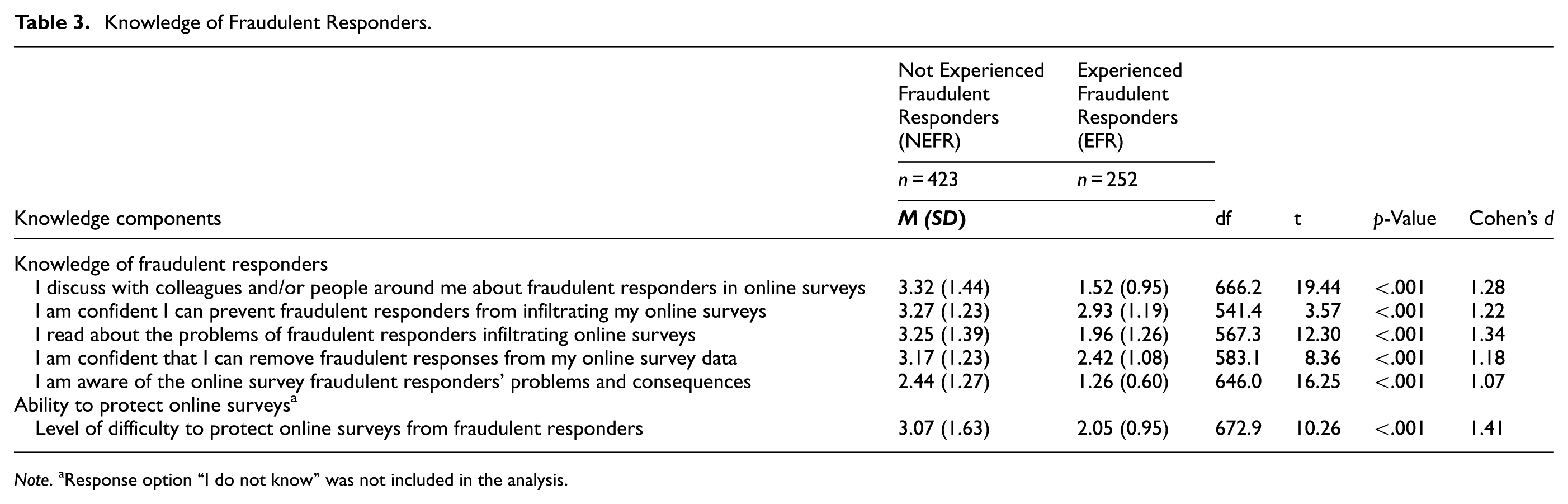

EFR participants reported significantly more agreement than NEFR participants that they were aware of online fraudulent responder problems and consequences (Cohen’s

Knowledge of Fraudulent Responders.

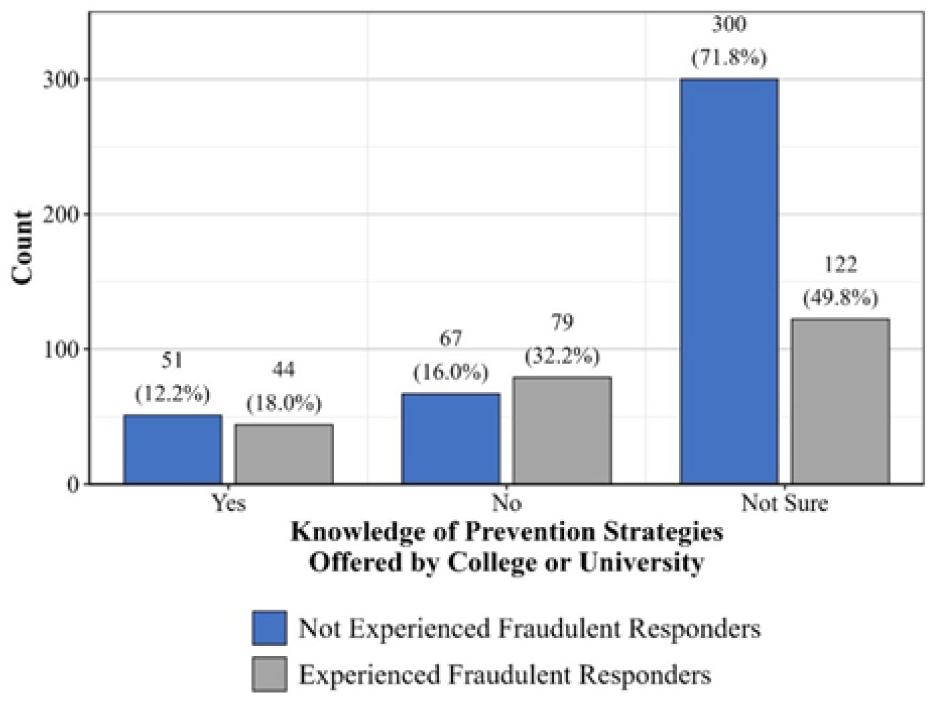

Knowledge of prevention strategies offered by college or university,

How Researchers Handled Fraudulent Responders

Of the EFR participants, about two-fifths (40.24%) reported noticing the issue of fraudulent responders in their online survey during the recruitment phase, while most (51.79%) noticed it during the data cleaning phase. Figure 3 shows the phase in which participants noticed that their online survey had been affected by fraudulent responders. Once participants determined their study had been affected, they took between one and six actions to address the issue, with an average of 2.69 actions (

Phase of the study when participants noticed that their online survey had been affected by fraudulent responders.

Reducing the impact of fraudulent responders required further action from participants. Participants tried between one and ten tactics to reduce the impact of fraudulent responders, with an average of 4.24 tactics (

How Researchers Handled Fraudulent Responders.

Discussion

This study examined researchers’ knowledge of and experience with fraudulent responders infiltrating online surveys. Most of our participants were social scientists, which was expected given the prevalence of survey research in social science disciplines (Cheung et al., 2017; Das et al., 2018; Newman et al., 2021). Over one-third (37.33%) of the researchers in this study experienced fraudulent responders affecting their online survey research; however, this number may be understated, as 19.26% of the participants did not know or did not check if they had experienced fraudulent responders. Given the increasing prevalence of fraudulent responders in online research, researchers have an ethical responsibility to be aware of and assess whether their surveys have been infiltrated by fraudulent responders. In addition, researchers who utilize online surveys must stay up to date with methods regarding data quality in order to reduce any risks associated with fraudulent responders (Yarrish et al., 2019).

EFR and NEFR participants differed significantly in their awareness of, and confidence in addressing, fraudulent responders. Awareness of the consequences of technological issues has been found to directly influence actions to implement prevention or protection strategies (Dinev & Hu, 2007). Most EFR participants implemented multiple strategies to reduce the impact of fraudulent responders on their surveys. However, the majority of them (51.79%) did not realize that their data had been infiltrated by fraudulent responders until after the recruitment phase, which could have resulted in a smaller sample size than planned (Bauermeister et al., 2012) and reduced the statistical power of their studies. Further, only about one-sixth of these participants were completely confident that they had addressed the fraudulent responder problem in their data. Given the evolving nature of fraud in online surveys (Griffin et al., 2022; Simone et al., 2024), it is possible that the set of fraud detection strategies participants implemented was inadequate to detect sophisticated fraudulent responses. Future research on fraudulent responders should explore psychosocial determinants, as well as the variation in types of fraudulent responders and the strategies they use. Qualitative research is also needed to better understand which strategies researchers use to identify and resolve fraudulent responder issues.
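The power cost of discarding fraudulent responses after recruitment can be illustrated with the usual normal approximation for a two-sided, two-sample comparison with n participants per group: power ≈ Φ(d·sqrt(n/2) - z), where Φ is the standard normal CDF and z is the critical value for the chosen α. The sketch below is illustrative only; the effect size (d = 0.4), the planned 100 participants per group, and the 30% removal rate are hypothetical values, not figures from this study.

```python
from statistics import NormalDist


def approx_power(d: float, n_per_group: int, alpha: float = 0.05) -> float:
    """Normal-approximation power of a two-sided, two-sample test.

    Ignores the negligible chance of rejecting in the wrong direction.
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = .05
    return std_normal.cdf(abs(d) * (n_per_group / 2) ** 0.5 - z_crit)


# Hypothetical scenario: 100 planned per group, 30% later removed as fraudulent.
planned_n = 100
retained_n = planned_n - planned_n * 30 // 100

print(f"planned:  n={planned_n}, power={approx_power(0.4, planned_n):.2f}")
print(f"retained: n={retained_n}, power={approx_power(0.4, retained_n):.2f}")
```

Under these assumed values, power falls from roughly 0.81 to roughly 0.66, which shows why detecting fraud during recruitment, while replacement participants can still be enrolled, matters.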

Half of the EFR participants (50%) reported that they did not know whether their college or university had prevention strategies or resources to help researchers who experienced fraudulent responders; among NEFR participants, this figure rose to almost three-fourths (72%). Given the rise of fraudulent responders, colleges and universities need to invest in prevention strategies and resources for researchers at their institutions who encounter this critical issue. Unfortunately, our data do not show whether colleges and universities, or specific disciplines, are aware of these risks or are providing resources, including training, for students, research staff, and faculty members. Without additional training in this area, this problem will likely continue to grow. Based on the responses from this sample of college and university researchers, even if their institutions have policies and/or resources to address online survey fraudulent responders, the information is not easily accessible. Future research should explore how the presence or absence of institutional policies, including whether current institutional review board (IRB) frameworks sufficiently guide researchers in this evolving area, influences researchers’ preparedness and response strategies. Additionally, research should focus on designing educational strategies to increase awareness of online survey fraud among researchers, creating training programs to help researchers use available institutional resources to combat fraud, and providing grounded recommendations to mitigate the impact of fraudulent responders. Research investigating the characteristics of fraud and developing reliable tools to prevent and detect fraudulent responses is also needed to safeguard the scientific integrity of survey research (Agrawal et al., 2025).
Research also needs to consider the ethical burden of excluding data due to fraudulent responders.

The adverse impact of fraudulent responders on scientific resources was reflected in the inability and/or unwillingness of about 9% of our EFR participants to publish their data. Fraudulent responses thus degrade data quality, which may in turn affect whether research can be published and, consequently, researchers’ career productivity (e.g., annual reviews, progress toward tenure).

Limitations

Although this study makes valuable contributions to the literature, we must acknowledge its limitations. The sample was limited to researchers who had published online survey research, had a United States college or university email address, and chose to participate in the study, which may limit the generalizability of the findings. The findings do not reflect the experiences of non-academic researchers or of academic researchers who may have encountered fraudulent responders in online surveys but have not published their research. In addition, the experiences of staff and students at United States colleges and universities may not be fully reflected, as the sample consisted predominantly of faculty members. Future research should explore knowledge of and experience with fraudulent responders in online surveys among researchers in different roles and organizations and outside of the United States. The response rate was also low (10.6%), a well-documented challenge in survey-based research, particularly for online surveys (Daikeler et al., 2020, 2022; Nulty, 2008). We sent two reminder emails and included personal survey links to encourage participation. While we have a relatively large sample of online survey researchers (

Conclusion

While this study does not provide tools to prevent or detect fraudulent responses, we offer some guidance to researchers, especially those who have not yet experienced survey fraud. First, awareness of fraudulent responders is the most critical aspect of protecting the integrity of the data. Second, it is crucial to put protocols for preventing and detecting fraud (e.g., adding honeypot questions, recording metadata) in place before starting participant recruitment. Third, and related to the previous point, become familiar with institutional resources and the survey platform before data collection; for example, some universities subscribe to advanced survey security features that researchers are unaware of. Fourth, remember that not all fraudulent responders are alike. If your survey is targeted by fraudulent responders, stay flexible as you plan how to detect and mitigate the effects of fraudulent responses, and document the steps clearly to support transparent reporting. Fifth, the fraud detection process can not only miss fraudulent responses (false negatives) but also flag some legitimate responses as fraudulent (false positives). Given this, researchers should work closely with their institution’s ethics board to balance the protection of human subjects (e.g., privacy concerns from collecting identifiable information to detect fraud) with the integrity of the data. If offering participation incentives, seek the ethics board’s guidance on handling fraudulent responders appropriately, for example, by including a statement in the informed consent that “responses deemed low-quality or fraudulent will not receive participation incentives.”
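The honeypot questions and metadata mentioned in this guidance, and the trade-off between false negatives and false positives, can be sketched as a simple rule-based screen. The Python below is a hypothetical illustration, not a validated detector: the field names, the 120-second speeding threshold, and the shared-IP rule are all assumptions. A shared IP address can also come from legitimate respondents on the same network, and storing IP addresses raises the privacy concerns noted above.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Response:
    rid: str             # response ID
    honeypot: str        # answer to a hidden item human respondents should not see
    duration_sec: float  # completion time recorded as survey metadata
    ip: str              # IP address recorded as survey metadata


def flag_responses(responses, min_duration=120.0):
    """Return IDs of responses that trip at least one (hypothetical) fraud rule."""
    ip_counts = Counter(r.ip for r in responses)
    flagged = set()
    for r in responses:
        answered_honeypot = bool(r.honeypot.strip())  # bots often fill hidden fields
        too_fast = r.duration_sec < min_duration      # implausibly quick completion
        shared_ip = ip_counts[r.ip] > 1               # possible repeat submissions
        if answered_honeypot or too_fast or shared_ip:
            flagged.add(r.rid)
    return flagged


def error_counts(flagged, confirmed_fraud):
    """Compare automated flags with a manual review of the same responses."""
    false_positives = len(flagged - confirmed_fraud)  # legitimate but flagged
    false_negatives = len(confirmed_fraud - flagged)  # fraudulent but missed
    return false_positives, false_negatives


# Hypothetical data: r3 is a fast but genuine reader, r4 shares a network with
# fraudulent r5, and r6 is a careful human scammer that no rule catches.
responses = [
    Response("r1", "", 600, "10.0.0.1"),
    Response("r2", "yes", 400, "10.0.0.2"),
    Response("r3", "", 45, "10.0.0.3"),
    Response("r4", "", 500, "10.0.0.4"),
    Response("r5", "", 520, "10.0.0.4"),
    Response("r6", "", 700, "10.0.0.6"),
]
flagged = flag_responses(responses)
fp, fn = error_counts(flagged, confirmed_fraud={"r2", "r5", "r6"})
print(sorted(flagged), fp, fn)
```

In this toy example the screen flags r2 through r5, producing two false positives (r3, r4) and one false negative (r6). Tightening or loosening any single rule shifts errors between those two kinds, which is why flagged responses merit manual review before data are excluded or incentives withheld.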

In conclusion, this study sheds light on how researchers who published peer-reviewed journal articles based on online surveys between 2017 and 2022 have experienced fraudulent responders infiltrating their online surveys. Researchers must be more proactive in preventing and removing fraudulent responses. Colleges and universities must also develop effective educational programs that train researchers to prevent online survey fraud and, if it occurs, to address it systematically with a set of robust tools and strategies.

Footnotes

Ethical Considerations

This study received ethical approval from the Clemson University IRB (approval # IRB2023-0283) on April 7, 2023.

Consent to Participate

Informed consent was reviewed prior to starting the online survey. Those who opted to participate in the online survey clicked the reCAPTCHA box at the bottom of the informed consent webpage and were directed to the online survey.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Data are available upon reasonable request.