Abstract

The integration of artificial intelligence (AI) in flipped classrooms is reshaping foreign language writing instruction, yet its impact on student engagement, self-efficacy, writing performance, and technology affinity remains underexplored. This study investigates the effectiveness of AI chatbots in enhancing flipped learning by examining their influence on engagement, self-efficacy, writing performance, and Affinity for Technology Interaction (ATI), while controlling for gender. A quasi-experimental design involving 252 Indonesian English majors was employed, with participants divided into an experimental group (flipped learning + AI chatbot) and a control group (flipped learning only). Structural Equation Modeling (SEM) and Analysis of Covariance (ANCOVA) revealed that AI-enhanced flipped learning significantly improved self-efficacy, writing performance and engagement, though ATI declined over time. Gender differences emerged, with males exhibiting stronger cognitive engagement and self-efficacy, while females benefited more in emotional engagement and writing performance. These findings underscore the need for balanced AI-human interaction to optimize learning outcomes. This study contributes to emerging pedagogical models by highlighting the nuanced role of AI in language education and emphasizing inclusive, adaptive instructional design. Future research should explore diverse learner populations, long-term effects, and AI instructor proficiency to maximize the efficacy of AI-integrated flipped learning.

Plain Language Summary

This study looks at how using AI chatbots in flipped classrooms affects students learning English as a foreign language. Flipped classrooms involve students reviewing materials before class and doing activities in class. The study focused on how chatbots affect students’ confidence in their abilities (self-efficacy), how engaged they are, their writing skills, and how comfortable they are with technology. The study found that using AI chatbots in flipped classrooms improved students’ self-efficacy, engagement, and writing performance. However, it also found that students’ comfort with technology decreased over time. The study also found that gender plays a role in how students interact with these tools. Male students showed greater confidence and cognitive engagement, while female students showed greater emotional engagement and greater improvement in their writing skills. Overall, the study suggests that AI chatbots can be helpful in flipped classrooms, but it is important to consider how they affect different students and to balance AI with human interaction. Future research should look at different groups of students and the long-term effects of using AI in education.

Keywords

Introduction

Integrating educational technology in higher education has transformed traditional language learning, offering innovative ways to engage learners and enhance their skills. However, language education, which relies on direct interaction and immersive methods, faces unique challenges in adapting to digital tools (Soegoto et al., 2025). One promising approach is flipped learning, in which students review writing-related materials before class and engage in hands-on activities such as drafting, editing, and peer review during class; this approach has been shown to promote deeper learning and student agency in the writing process (Bergmann & Aaron, 2012; Hung, 2015).

While flipped learning has proven beneficial in language education, research highlights that technology-based approaches further enhance language acquisition and efficiency (Ortikov & Ugli, 2024). The use of artificial intelligence (AI) in education is increasingly gaining global interest among researchers in the field of foreign language learning (Babanoğlu et al., 2025). AI chatbots, in particular, offer significant potential in flipped writing instruction by addressing common challenges such as the lack of guidance and support during pre-class learning, which affects student engagement (Diwanji et al., 2018; Jeon & Lee, 2024; Lo & Hew, 2023). By offering real-time assistance and personalized feedback, chatbots improve student preparedness and engagement in interactive in-class activities (Lo & Hew, 2022, 2023; Wiyaka et al., 2024). Additionally, they replicate aspects of face-to-face interaction by providing instant writing feedback on grammar, style, and organization, fostering learner autonomy and reducing writing anxiety (Belda-Medina & Calvo-Ferrer, 2022; Fryer & Bovee, 2016). Empirical findings further support this, as Duong and Chen (2025) found that AI chatbots significantly enhanced writing performance in terms of content, organization, vocabulary, language use, and mechanics.

Writing is a crucial aspect of learners’ development in English as a Foreign Language (EFL), serving as a fundamental productive skill (Duong & Chen, 2025). However, it is also the most challenging skill to master. Because of limited class time and large class sizes, many teachers find it difficult to offer individualized feedback, which further complicates the learning process (Zhang, 2025). Moreover, effective writing development requires continuous practice, detailed feedback, and sustained motivation. Flipped classrooms free up class time for in-class support of writing development (Silitonga et al., 2024). However, while research on flipped learning in language education is well established (Hung, 2015; Shi et al., 2020), studies on the combined impact of flipped instruction and AI chatbots on writing remain limited. Most focus on either flipped learning (Lee & Wallace, 2018) or AI-driven tools (Fryer & Bovee, 2016) in isolation. The integration of AI chatbots into flipped classrooms is an emerging and promising approach that warrants further exploration (Lo & Hew, 2023; Wollny et al., 2021).

While AI chatbots enhance learning, their design and implementation raise important equity concerns, particularly regarding gender bias. Previous studies indicate that many chatbots are designed with female identities, reinforcing gender stereotypes through avatars, language, and imagery and thereby perpetuating biases (Bastiansen et al., 2022). Research indicates that male and female learners interact with digital tools differently (Scherer & Siddiq, 2019; Venkatesh & Morris, 2000), making it essential to examine how gender influences technology affinity, engagement, self-efficacy, and writing outcomes in AI chatbot-supported flipped classrooms. Learner diversity, especially in gender and technology affinity, shapes how students experience and benefit from these innovations. In flipped language classrooms, content is delivered online, allowing in-class time to focus on interactive tasks such as peer review and targeted feedback (Bergmann & Aaron, 2012; Silitonga et al., 2024). Learners’ self-efficacy, a core concept in Bandura's (1977) social cognitive theory, significantly influences their willingness to adopt new tools and persist through challenges. In writing instruction, high self-efficacy correlates with improved writing performance (Woodrow, 2011). Furthermore, engagement, spanning behavioral, emotional, and cognitive dimensions, is a key driver of academic success (Reeve & Tseng, 2011). Research suggests that flipped learning, especially when supported by AI-driven scaffolding, enhances engagement by promoting active interaction with content (Abeysekera & Dawson, 2015).

However, students’ Affinity for Technology Interaction (ATI) shapes their responses to AI tools, with high-ATI learners adapting more easily, while low-ATI learners may struggle (Franke et al., 2019; Scherer & Siddiq, 2019). Gender may further moderate these effects, influencing engagement, self-efficacy, and writing proficiency in AI-supported flipped classrooms. Given the gendered nature of many AI chatbots (Bastiansen et al., 2022), it is crucial to examine how these biases affect student experiences and learning outcomes. Ensuring equitable and inclusive instructional design is essential for maximizing the benefits of AI-integrated flipped learning while minimizing unintended disparities in student engagement and performance.

Despite extensive research on flipped classrooms and AI-driven feedback tools, few studies have examined their combined impact on foreign language writing instruction. Additionally, the moderating roles of gender and Affinity for Technology Interaction (ATI) in this learning model remain largely unexplored. This study addresses these gaps by investigating whether integrating AI chatbots into flipped instruction enhances writing performance, self-efficacy, engagement, and technology affinity in foreign language classrooms. By considering gender as a control variable, this research provides new insights into how learner characteristics interact with emerging pedagogical models, contributing to more adaptive and inclusive instructional designs.

To achieve these objectives, the study explores the following research questions (RQs):

Does an AI chatbot-based flipped learning approach have a greater impact on students’ self-efficacy, engagement, ATI, and writing performance in foreign language education than a conventional flipped classroom?

How does Affinity for Technology Interaction (ATI) influence students’ self-efficacy, emotional engagement, cognitive engagement, behavioral engagement, and writing performance in foreign language education?

Does affinity for technology interaction (ATI) predict self-efficacy, emotional engagement, cognitive engagement, behavioral engagement, and writing performance, with robust findings when controlling for gender?

Literature Review

AI Chatbot-Based Flipped Approach in Writing Classroom

Flipped classrooms move lectures and resources outside of class and devote class time to collaborative work (Bergmann & Aaron, 2012). This technique is especially beneficial for language acquisition because face-to-face interaction is essential for communicative skills (Hung, 2015). By engaging more actively and communicatively in class, students often improve their motivation, critical thinking, and performance (Shi et al., 2020). Successful implementation requires appropriate technical resources and student accountability; students must prepare before class for maximum in-class involvement (Lo & Hew, 2017). In writing-focused instruction, flipped classrooms create more room for drafting, peer review, and instructor feedback, improving writing quality over time (Silitonga et al., 2024).

An AI-powered chatbot is a software application that mimics human conversation using natural language processing, allowing it to comprehend and respond to user inquiries in a way that resembles human interaction (Lo & Hew, 2023). AI chatbots provide real-time grammar, vocabulary, and content organization feedback (Belda-Medina & Calvo-Ferrer, 2022). Their immediacy supports timely feedback, which is essential to writing development. Chatbots simulate authentic communication, lowering learners’ concern over mistakes and promoting autonomy and participation (X. Jin et al., 2024). Chatbots can reinforce lessons and provide support outside of class when used with a flipped model (Fryer & Bovee, 2016). However, chatbot accuracy and pedagogical relevance should be considered because poorly built or malfunctioning systems may hinder learning (Zhai, 2023). Ethical concerns around privacy and data ownership emphasize the necessity for institutional rules.

This study uses ChatGPT, an AI-powered chatbot developed by OpenAI that generates authentic text in response to user prompts (Boudouaia et al., 2024), and incorporates it into flipped writing classroom activities to examine its impact on writing instruction. Students have valued ChatGPT’s speed and high-quality feedback (Duong & Chen, 2025). Similarly, Su et al. (2023) found ChatGPT effective in improving language, content, and structure in argumentative writing. Building on these findings, Tseng and Lin (2024) analyzed students’ writing and reflections, demonstrating that ChatGPT not only improved writing efficiency and cohesion but also served as an alternative to peer reviewers by providing critical, objective feedback.

Self-Efficacy and Engagement

Based on Bandura's (1977) social cognitive theory, self-efficacy is confidence in one’s ability to execute tasks. Numerous empirical studies have demonstrated that self-efficacy is a key factor in language learning (Bai & Wang, 2023; Graham, 2022). Technological tools can boost self-efficacy by providing scaffolding and timely feedback, while complex or unresponsive systems may hinder learners.

Modern research divides engagement into behavioral (participation, persistence), emotional (interest, enthusiasm), and cognitive (deep processing, self-regulation) components (Reeve & Tseng, 2011). Interactive activities in flipped classrooms boost behavioral engagement, whereas emotionally supportive environments improve learning (Abeysekera & Dawson, 2015). Writing tasks are more cognitively engaging when students reflect on drafts, respond to comments, and solve problems using well-designed digital platforms.

Gender and Affinity for Technology Interaction (ATI)

ATI encompasses an individual’s inclination to seek out or avoid digital interaction (Franke et al., 2019). Learners with high technology affinity embrace new digital tools swiftly, perceiving technological complexity as a manageable challenge. Conversely, low-affinity learners may exhibit technology-related apprehension, particularly in tasks requiring sustained usage (Scherer & Teo, 2019).

Gender often intersects with technology affinity, shaping how students adopt and utilize digital tools (Venkatesh & Morris, 2000). Male learners may report higher confidence in exploring new technologies, while female learners often place stronger emphasis on perceived usefulness and support (Scherer & Siddiq, 2019). Additionally, F. Jin and Divitini (2020) stated that technology is commonly regarded as a male-dominated field. Previous research also indicates that gender influences chatbot usage and academic outcomes, emphasizing the need for inclusive AI-based interventions (Deng & Lin, 2022). Understanding these dynamics is crucial for designing effective, gender-responsive technology-enhanced pedagogies (Wang et al., 2023).

Methodology

This study employs a quantitative approach to examine the impact of flipped classrooms and AI chatbots on students’ writing performance, self-efficacy, engagement, and affinity for technology interaction (ATI) using structural equation modeling (SEM) within a quasi-experimental design. Figure 1 illustrates the framework comparing experimental and control groups through pre- and post-tests.

Comparative study design.

SEM was used to analyze structural relationships among variables, treating ATI as the independent variable, while self-efficacy, emotional, cognitive, and behavioral engagement, and writing performance were dependent variables (Figure 2). A robustness test further validated these relationships, with gender controlled as a variable to account for its potential influence.

Design of the structural equation model of this study.

Participants

This study took place at Indonesian universities with 252 second- and third-year English majors (aged 19–21) and was approved by the Ethics Committee of the university. Participants were randomly assigned to an experimental group (n = 126; 54 males, 72 females) or a control group (n = 126; 62 males, 64 females). Informed verbal consent was obtained from all participants before data collection; participants were informed about the study’s purpose, procedures, potential risks, and their right to withdraw at any time. The experimental group received both flipped instruction and AI chatbot interventions, while the control group received flipped instruction alone. A single instructor with over 15 years of experience taught both groups and collaborated with the researchers beforehand to select suitable materials and chatbots to ensure consistency.

Experimental Procedure

As shown in Figure 3, participants completed a pretest questionnaire assessing ATI, self-efficacy, and engagement. To ensure consistency, English teachers attended preparatory meetings. The experiment consisted of nine 100-minute sessions; in the first, the teacher introduced essay writing and the flipped classroom, with the experimental group receiving additional AI chatbot training.

Experimental procedure.

In sessions 2 to 7, the control group followed the flipped approach, while the experimental group combined the flipped approach with chatbot-assisted writing. For the flipped activities, students received PowerPoint slides or videos covering English grammar and lesson content before class. In session 8, all students wrote a 350-word essay, followed by peer feedback on key writing elements using a rubric (Table 1). The rubric’s content was validated by consulting three experts in writing assessment, to ensure that its criteria accurately reflect writing proficiency, and by aligning it with relevant course objectives and national writing standards.

Students’ Rubric Peer Feedback.

Table 2 provides details of the teaching activities and materials used in both groups. During peer feedback, students exchanged drafts and used a provided rubric to review and revise their peers’ work. The final session featured an advanced writing task, reinforcing previously developed skills.

Instructional Activities and Course Materials for the Control and Experimental Groups.

After the intervention, students completed a post-test on ATI, self-efficacy, and engagement. Figure 4 shows students in the experimental group engaging with the AI chatbot during classroom writing activities, illustrating how the chatbot was used in practice to support students’ writing development and their engagement with the course content.

Students’ writing activities in the classroom.

Experimental Activities Involving an AI Chatbot

Before the experiment, researchers and the teacher collaborated to design teaching strategies and flipped learning activities that seamlessly integrated the AI chatbot into English writing instruction. This collaboration ensured alignment with course objectives and maximized AI’s benefits in the classroom. The teacher introduced the chatbot, explaining its features and role in the learning process, and helping students understand its function in writing development.

Throughout the course, students regularly engaged with the AI chatbot in learning activities, familiarizing themselves with its use as a problem-solving tool. Consistent interaction enabled students to leverage AI to refine their writing, receive real-time feedback, and improve their skills through practical exercises.

Writing assignments were designed to reinforce AI chatbot use, allowing students to apply feedback and progressively enhance their writing. This continuous engagement deepened their understanding of AI’s role in writing instruction and boosted their confidence in using technology for learning. Table 3 outlines the specific teaching activities implemented in the experimental group, ensuring structured and effective AI integration.

Experimental Group Activities.

Instruments

This research used established questionnaires from the literature to support the validity and reliability of the measurements. Measurement indicators for Self-Efficacy, Emotional Engagement, Cognitive Engagement, Behavioral Engagement, and Affinity for Technology were adapted from these established instruments, with items kept close to their original format to preserve their accuracy. Participants rated each item on a five-point Likert scale ranging from 1 (strongly disagree) to 5 (strongly agree).
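As a minimal sketch of how item ratings on the five-point scale might be combined into a construct score, the Python function below averages one respondent's ratings and can optionally reverse-code negatively worded items. It assumes each construct is scored as the mean of its items (the scoring rule is not stated in the source), and the `reverse_items` indices are hypothetical: the source does not say which items, if any, are reverse-coded.

```python
def score_scale(responses, reverse_items=frozenset(), scale_min=1, scale_max=5):
    """Mean score of one respondent's Likert ratings for a single construct.

    responses     -- ratings in questionnaire order (1..5 on a five-point scale)
    reverse_items -- zero-based indices of negatively worded items (hypothetical)
    """
    if not responses:
        raise ValueError("no responses given")
    scored = []
    for i, r in enumerate(responses):
        if not scale_min <= r <= scale_max:
            raise ValueError(f"item {i} is out of range: {r}")
        # Reverse-code so that a higher score always means more of the construct.
        scored.append(scale_min + scale_max - r if i in reverse_items else r)
    return sum(scored) / len(scored)


# Hypothetical respondent answering a four-item emotional engagement scale.
print(score_scale([4, 5, 3, 4]))  # 4.0
```

Averaging (rather than summing) keeps construct scores on the same 1 to 5 metric as the items, which matches how the pre- and post-test means are reported later in the paper.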

Affinity for Technology Interaction (ATI)

Affinity for Technology refers to the way individuals approach technology, particularly whether they actively seek interaction with technology (indicating high affinity) or tend to avoid it (indicating low affinity). This construct is crucial in understanding how people engage with technology in various contexts (Franke et al., 2019). In this study, affinity for technology is measured using nine items that assess individuals’ attitudes and behaviors toward technology. For example, one item states, “I enjoy using new technologies, even if I have to learn new skills to use them.”

Self-efficacy

In this study, self-efficacy is defined as individuals’ beliefs in their ability to plan and execute actions to achieve personally meaningful goals; it significantly influences decision-making, effort, and persistence. Specifically, this research focuses on students’ self-efficacy in using AI chatbots for learning. Self-efficacy is measured with six items covering dimensions such as learning-related knowledge (e.g., regarding digital media), technical knowledge, diagnosing with digital media, and teaching with digital media (Hülshoff & Jucks, 2024).

Engagement

Behavioral engagement is captured by five items reflecting observable behaviors such as attentiveness and effort in class (e.g., “I listen carefully in class”); these items assess how students engage with class activities, highlighting their persistence and focus. Emotional engagement is evaluated with four items on the affective connection students have with their learning experience, such as enjoyment and curiosity (e.g., “I enjoy learning new things in class”). Cognitive engagement consists of eight items assessing how deeply students process the material they are learning, including their efforts to connect new information with prior knowledge and to think critically during learning; for instance, one item begins “When doing schoolwork, …” (Reeve & Tseng, 2011).

Writing Performance

The writing performance was assessed during the experimental activities for both groups. The essay writing was conducted four times and assessed by the teacher using the rubric presented in Table 1.

The study ensured that each construct was measured accurately and consistently, aligning with validated research practices. This approach not only enhances the reliability and validity of the measurements but also allows for a comprehensive assessment of the various dimensions involved in the study (Table 4).

Validity and Reliability of the Constructs Used in This Study.

p < 0.05.
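The specific reliability indices in Table 4 are not reproduced in this section. As an illustration of one common internal-consistency index, Cronbach's alpha can be computed from item variances and the variance of respondents' total scores. The sketch below assumes complete responses (no missing data) and is not necessarily the procedure behind Table 4.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for one scale.

    items -- list of k item columns; each column holds the ratings of the
             same n respondents in the same order (no missing values).
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    n = len(items[0])
    if any(len(col) != n for col in items):
        raise ValueError("all items must have the same number of respondents")
    # Each respondent's total score across the k items.
    totals = [sum(col[j] for col in items) for j in range(n)]
    sum_item_var = sum(variance(col) for col in items)  # sample variances
    return k / (k - 1) * (1 - sum_item_var / variance(totals))


# Hypothetical ratings: three items answered by five respondents.
example = [[4, 5, 3, 4, 2], [4, 4, 3, 5, 2], [5, 5, 2, 4, 3]]
print(round(cronbach_alpha(example), 3))  # 0.886
```

Alpha rises as items covary more strongly relative to their individual variances; with perfectly parallel items it reaches 1.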

Data Collection and Analysis

In this study, ethical considerations of privacy, confidentiality, and data anonymization were strictly observed. Personal identifiers were anonymized, and data were securely stored to protect participants’ identities. The quantitative data were gathered through online pretest and posttest questionnaires and were first evaluated for normality and homogeneity of variance: the Shapiro–Wilk test was used to assess normality, and Levene’s test was used to examine homogeneity (Table 5).

Parallel Slopes Joint Significance Normality Test.

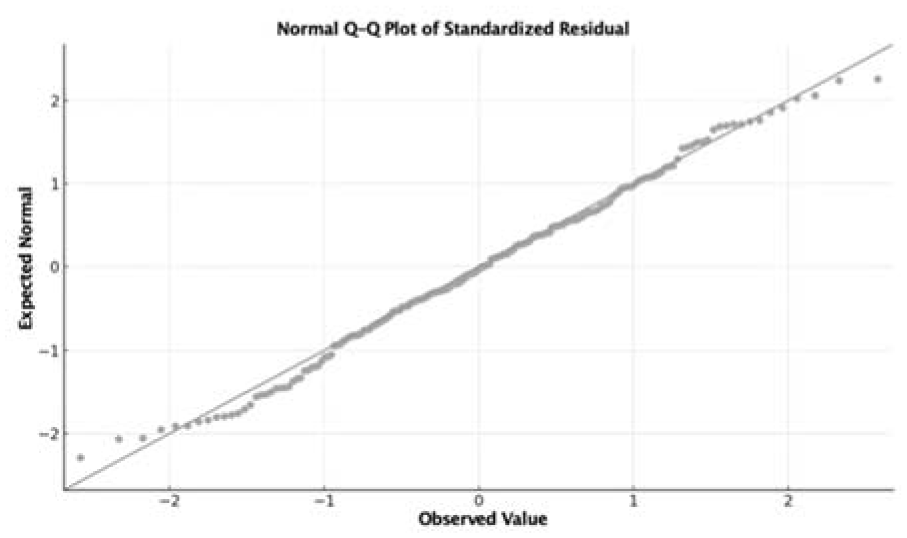
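The homogeneity check described above can be illustrated with a small sketch of Levene's statistic. It assumes the median-centred (Brown–Forsythe) variant, which is also the default (`center='median'`) of `scipy.stats.levene`; the source does not say which variant was used. The function returns only W and its degrees of freedom. In practice, `scipy.stats.levene` also returns the p-value, and `scipy.stats.shapiro` provides the Shapiro–Wilk test.

```python
from statistics import mean, median


def levene_w(*groups, center=median):
    """Levene's W statistic and its (df1, df2).

    center=median gives the Brown-Forsythe variant; center=mean gives
    Levene's original statistic. Raises ZeroDivisionError if every
    observation sits at the same distance from its group's center.
    """
    k = len(groups)
    if k < 2:
        raise ValueError("need at least two groups")
    n_total = sum(len(g) for g in groups)
    # Absolute deviation of every observation from its own group's center.
    z = [[abs(x - center(g)) for x in g] for g in groups]
    z_means = [mean(zi) for zi in z]
    grand_mean = sum(sum(zi) for zi in z) / n_total
    between = sum(len(zi) * (m - grand_mean) ** 2 for zi, m in zip(z, z_means))
    within = sum((v - m) ** 2 for zi, m in zip(z, z_means) for v in zi)
    w = (n_total - k) / (k - 1) * between / within
    return w, (k - 1, n_total - k)
```

W is compared against an F distribution with (df1, df2) degrees of freedom; values near 0 mean the groups have similar spreads, which is what the homogeneity assumption requires.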

We assessed the assumptions for the adjusted post-test analysis. Residual normality was evaluated with the Shapiro–Wilk test and inspected via Q–Q plots, and homogeneity of variances across groups was tested using Levene’s test. Because residual normality and homogeneity of variances were not met, we report heteroskedasticity-consistent (HC3) standard errors and bootstrap 95% CIs (5,000 resamples) for the covariate-adjusted model. We also provide an inverse probability of treatment weighting (IPTW) analysis to address baseline imbalance. Because the parallel-slopes assumption held, the adjusted post-test comparison remains appropriate. Conclusions were unchanged across the robust and weighted estimators.
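The parallel-slopes (homogeneity of regression slopes) check compares the ANCOVA model with a model that adds group × covariate interactions. A minimal NumPy sketch of that nested-model F test follows; it assumes a 0/1 group indicator and complete data, and it is an illustration rather than the authors' code.

```python
import numpy as np


def residual_ss(X, y):
    """Residual sum of squares of an OLS fit of y on X."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    return float(resid @ resid)


def parallel_slopes_test(y, group, covariates):
    """F test of H0: every group x covariate interaction is zero.

    y          -- (n,) post-test outcome
    group      -- (n,) 0/1 indicator (1 = experimental)
    covariates -- (n,) or (n, p) pre-test covariates
    """
    y = np.asarray(y, dtype=float)
    g = np.asarray(group, dtype=float).reshape(-1, 1)
    C = np.asarray(covariates, dtype=float).reshape(len(y), -1)
    X_common = np.hstack([np.ones_like(g), g, C])  # ANCOVA: one slope per covariate
    X_separate = np.hstack([X_common, g * C])      # adds group-specific slopes
    q = C.shape[1]                                 # number of interaction terms tested
    df_resid = len(y) - X_separate.shape[1]
    rss_common = residual_ss(X_common, y)
    rss_separate = residual_ss(X_separate, y)
    F = ((rss_common - rss_separate) / q) / (rss_separate / df_resid)
    return F, (q, df_resid)
```

A small F (large p) means the group-specific slopes add little, so a single common slope per covariate, as ANCOVA assumes, is defensible. With six pre-test covariates the test has q = 6 numerator degrees of freedom.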

The parallel-slopes assumption held (joint test of group × covariate interactions: F(6, 69) = 0.494, p = .81). Unadjusted baseline covariates were imbalanced (max |SMD| = 0.878), and IPTW reduced this imbalance to good balance (max |weighted SMD| = 0.081). Accordingly, we report the HC3-robust and IPTW estimates as primary. To supplement the formal normality test, we also inspected a Q–Q plot of the residuals (Figure 5).

Q-Q plot normality test.

The Q–Q plot shows points closely aligned with the 45° reference line, suggesting that the residuals are approximately normal (see also Shapiro–Wilk, p ≥ .05). This supports the use of parametric tests such as Analysis of Covariance (ANCOVA), complemented by the robust and weighted estimators described above.

ANCOVA was applied to compare the effects of the flipped learning and AI chatbot interventions between the experimental and control groups, with pretest scores as covariates and posttest scores as dependent variables. Additionally, paired-samples t-tests were conducted to assess changes in affinity for technology, self-efficacy, emotional engagement, cognitive engagement, and behavioral engagement within the experimental group from before to after the intervention. Effect sizes were estimated using partial eta-squared (partial η²).
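The ANCOVA described above can be written as a linear model in which the post-test score is regressed on the pre-test score and a group indicator. The sketch below assumes a single covariate, a 0/1 group indicator, and complete data. It obtains the group effect's sum of squares by comparing the model with and without the group term, then derives F and partial η² from it. It is an illustration, not the software used in the study.

```python
import numpy as np


def _residual_ss(X, y):
    """Residual sum of squares of an OLS fit of y on X."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    return float(resid @ resid)


def ancova_group_effect(post, pre, group):
    """One-covariate ANCOVA, post ~ 1 + pre + group.

    Returns F for the group effect, its (df1, df2), and partial eta-squared.
    """
    post = np.asarray(post, dtype=float)
    pre = np.asarray(pre, dtype=float)
    group = np.asarray(group, dtype=float)
    ones = np.ones_like(post)
    X_full = np.column_stack([ones, pre, group])
    X_no_group = np.column_stack([ones, pre])
    ss_error = _residual_ss(X_full, post)
    # Group SS adjusted for the pretest: the drop in RSS when group is added.
    ss_group = _residual_ss(X_no_group, post) - ss_error
    df_error = len(post) - X_full.shape[1]
    F = (ss_group / 1) / (ss_error / df_error)
    partial_eta2 = ss_group / (ss_group + ss_error)
    return F, (1, df_error), partial_eta2
```

Because the model has no interaction term, dropping the group column gives the same adjusted group sum of squares as Type II or Type III tests would.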

Furthermore, the study employed Partial Least Squares Structural Equation Modeling (PLS-SEM) to investigate the relationships among variables within the experimental group. A multi-group analysis (MGA) was conducted to assess the robustness of these relationships by comparing male and female groups. The PLS-SEM analysis involved two phases: evaluation of the measurement model and analysis of the structural model.

Results

Comparison Analysis

Table 6 shows that the experimental group (AI chatbot) improved more than the control group in self-efficacy, behavioral engagement, emotional engagement, and cognitive engagement.

Descriptive Statistics.

Concerning self-efficacy, the experimental group exhibited an increase of 0.458 points (pre-test mean = 3.729, post-test mean = 4.187), whereas the control group showed a smaller increase of 0.257 points (pre-test mean = 3.593, post-test mean = 3.850). Similarly, for behavioral engagement, the experimental group experienced a 0.767-point increase (pre-test mean = 3.695, post-test mean = 4.462), compared to the control group’s increase of 0.624 points (pre-test mean = 3.639, post-test mean = 4.263). The experimental group also demonstrated greater improvements in emotional engagement, with an increase of 0.745 points (pre-test mean = 3.690, post-test mean = 4.435), and in cognitive engagement, with an increase of 0.640 points (pre-test mean = 3.642, post-test mean = 4.282), compared to the control group’s respective increases of 0.659 and 0.533 points.

In contrast, affinity for technology (ATI) decreased in both groups, although the experimental group maintained a higher overall level. The control group exhibited a reduction of 0.439 points (pre-test mean = 2.932, post-test mean = 2.493), while the experimental group experienced a smaller decline of 0.389 points (pre-test mean = 3.577, post-test mean = 3.188).
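The descriptive comparison above reduces to simple pre/post arithmetic. The Python sketch below recomputes each group's gain and the difference in gains from the Table 6 means quoted in the text. Emotional and cognitive engagement are omitted because the text gives only the control group's gains, not its means. The calculation is purely descriptive: unlike ANCOVA, it does not adjust for baseline differences between groups.

```python
# Pre-test and post-test means quoted from Table 6, as (pre, post) pairs.
table6 = {
    "self_efficacy": {"experimental": (3.729, 4.187), "control": (3.593, 3.850)},
    "behavioral_engagement": {"experimental": (3.695, 4.462), "control": (3.639, 4.263)},
    "ATI": {"experimental": (3.577, 3.188), "control": (2.932, 2.493)},
}


def difference_in_gains(exp, ctl):
    """(post - pre) for the experimental group minus (post - pre) for control."""
    return round((exp[1] - exp[0]) - (ctl[1] - ctl[0]), 3)


for construct, groups in table6.items():
    diff = difference_in_gains(groups["experimental"], groups["control"])
    # Positive values favor the experimental group.
    print(f"{construct}: {diff:+.3f}")
```

For self-efficacy, for example, the gains are 0.458 and 0.257 points, a difference of 0.201. For ATI, both gains are negative, and the difference of 0.050 reflects the experimental group's smaller decline.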

Overall, the experimental group showed larger gains in engagement and self-efficacy, whereas affinity for technology declined in both groups. These descriptive results, however, do not establish whether the differences between groups are statistically significant. To test this, an Analysis of Covariance (ANCOVA) was conducted. ANCOVA assesses differences in post-test scores while adjusting for pre-test scores, providing a more precise evaluation of the intervention’s effectiveness.

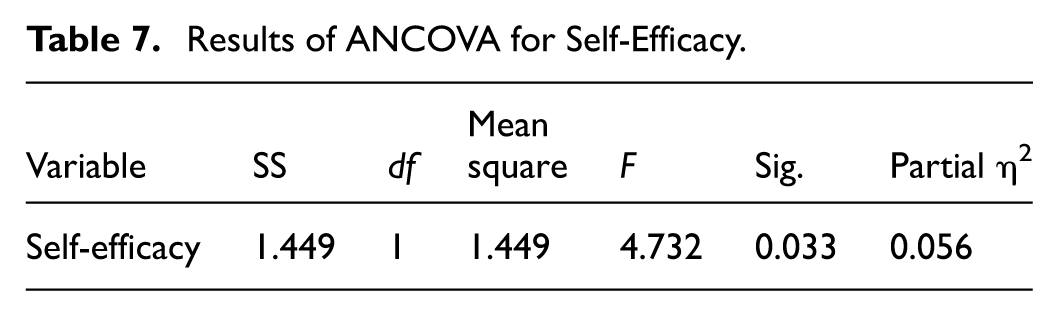

Table 7 presents the ANCOVA results for self-efficacy, adjusted for pre-test scores. There was a significant difference between the control and experimental groups (F = 4.732, p < .05), with a partial η² of 0.056, a small-to-medium effect size. Students in the experimental group therefore reported significantly higher self-efficacy than those in the control group after controlling for initial differences, although the magnitude of the effect was modest. These findings support the conclusion that the experimental intervention enhanced students’ self-efficacy.

Results of ANCOVA for Self-Efficacy.

The ANCOVA results in Table 8 indicate a significant difference in engagement between the control and experimental groups (F = 6.098, p < .05). The partial η² of 0.071 represents a medium effect size. Students in the experimental group showed significantly higher engagement than those in the control group, further underscoring the intervention’s effectiveness in promoting student engagement.

Results of ANCOVA for Engagement.

The ANCOVA results in Table 9 reveal a significant difference in affinity for technology between the control and experimental groups (F = 10.680, p < .05), with a partial η² of 0.118, a medium effect size. Descriptively, however, ATI declined in both groups from pre-test to post-test (Table 6). Thus, despite the intervention’s positive effects in other areas, it did not prevent a decrease in students’ affinity for technology.

Results of ANCOVA for Affinity for Technology Interaction (ATI).
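For reference, the partial η² values reported in Tables 7 to 9 can be written either in terms of sums of squares or in terms of the F statistic and its degrees of freedom. The two forms are algebraically equivalent given the definition of F on the right. Values are commonly read against rule-of-thumb benchmarks of about .01 (small), .06 (medium), and .14 (large).

```latex
\eta_p^2 = \frac{SS_{\text{effect}}}{SS_{\text{effect}} + SS_{\text{error}}}
         = \frac{F \cdot df_{\text{effect}}}{F \cdot df_{\text{effect}} + df_{\text{error}}},
\qquad
F = \frac{SS_{\text{effect}} / df_{\text{effect}}}{SS_{\text{error}} / df_{\text{error}}}
```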

Table 10 compares writing performance between the control and experimental groups. The mean score for the experimental group (M = 3.850, SD = 0.556) was higher than that of the control group (M = 3.593, SD = 0.393), and the difference was statistically significant (F = 6.712, p = .011). This unadjusted comparison suggests that AI chatbot use in the experimental group was associated with better writing performance, but it does not account for baseline differences between the groups.

Results of ANOVA for Writing Performance.

To mitigate potential bias in this comparison, and because no dedicated pre-test writing measure was available, we compared post-test writing across groups using a covariate-adjusted regression. The outcome (WP) was regressed on Group and pre-treatment covariates (gender; Pre_SE, Pre_BE, Pre_EM, Pre_CE, Pre_Engage, Pre_ATI), using HC3 robust standard errors. To further mitigate baseline imbalance, we implemented Inverse Probability of Treatment Weighting (IPTW) with stabilized weights estimated from the same covariates, checked balance via standardized mean differences (SMDs), and reported adjusted means with 95% CIs for both the OLS and IPTW models (Table 11).

Adjusted Post-Test Writing (No Pre-Test Available).

Note. Outcome = WP (post-test writing). Covariates (pre-treatment): gender, Pre_SE, Pre_BE, Pre_EM, Pre_CE, Pre_Engage, Pre_ATI. IPTW uses stabilized weights. OLS uses HC3 robust SEs.
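A minimal NumPy sketch of the covariate-adjusted comparison described above follows: OLS with HC3 heteroskedasticity-consistent standard errors, plus a percentile bootstrap CI for one coefficient obtained by resampling participants. It assumes the design matrix `X` already contains an intercept, a 0/1 group column, and the pre-treatment covariates; the function names are hypothetical. In practice, `statsmodels` provides HC3 directly via `OLS(y, X).fit(cov_type="HC3")`.

```python
import numpy as np


def ols_hc3(y, X):
    """OLS coefficients and HC3 robust standard errors (X includes an intercept)."""
    y = np.asarray(y, dtype=float)
    X = np.asarray(X, dtype=float)
    XtX_inv = np.linalg.inv(X.T @ X)
    beta = XtX_inv @ X.T @ y
    resid = y - X @ beta
    leverage = np.einsum("ij,jk,ik->i", X, XtX_inv, X)  # diagonal of the hat matrix
    omega = (resid / (1.0 - leverage)) ** 2            # HC3: e_i^2 / (1 - h_i)^2
    meat = X.T @ (X * omega[:, None])
    cov = XtX_inv @ meat @ XtX_inv                     # sandwich estimator
    return beta, np.sqrt(np.diag(cov))


def bootstrap_ci(y, X, coef_index, n_boot=5000, level=0.95, seed=0):
    """Percentile bootstrap CI for one OLS coefficient, resampling participants."""
    y = np.asarray(y, dtype=float)
    X = np.asarray(X, dtype=float)
    rng = np.random.default_rng(seed)
    n = len(y)
    draws = np.empty(n_boot)
    for b in range(n_boot):
        idx = rng.integers(0, n, size=n)  # sample rows with replacement
        beta, *_ = np.linalg.lstsq(X[idx], y[idx], rcond=None)
        draws[b] = beta[coef_index]
    alpha = 1.0 - level
    return np.quantile(draws, [alpha / 2, 1 - alpha / 2])
```

With `X = np.column_stack([np.ones(n), group, covariates])`, coefficient index 1 is the covariate-adjusted group difference (Δ). HC3 inflates the squared residuals of high-leverage observations, which makes it a conservative choice when variances differ across groups.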

Table 11 reports the adjusted post-test comparisons using covariate-adjusted OLS (HC3) and propensity-score weighting (IPTW). These analyses indicated a small, directionally positive treatment effect (OLS: Δ = 0.102, 95% CI [−0.033, 0.238], p = .132, partial η² = 0.029; IPTW: Δ = 0.099, 95% CI [−0.017, 0.215], p = .088, partial η² = 0.037). Thus, while the differences favor the treatment, the estimates are imprecise and should be interpreted cautiously.

Table 12 shows that baseline covariates were not fully balanced across groups (max |SMD| = 0.878; several SMDs > 0.20), underscoring the risk of bias in unadjusted post-test comparisons and motivating the use of covariate adjustment and IPTW. A one-way ANOVA on post-test writing indicated a significant difference favoring the treatment group (Δ = 0.146, F = 6.712, p = .011; Hedges’ g = 0.56). After covariate adjustment and IPTW, however, the estimated difference attenuated to approximately 0.10 and was imprecise (OLS: Δ = 0.102, 95% CI [−0.033, 0.238], p = .132; IPTW: Δ = 0.099, 95% CI [−0.017, 0.215], p = .088). This indicates a small, directionally positive but inconclusive effect once baseline differences are accounted for.
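Hedges' g is Cohen's d (the mean difference divided by the pooled SD) multiplied by the small-sample correction J = 1 − 3/(4·df − 1). The sketch below uses that common approximation for J. The function name is hypothetical, and group sizes must be supplied by the user.

```python
import math


def hedges_g(m1, sd1, n1, m2, sd2, n2):
    """Bias-corrected standardized mean difference (Hedges' g)."""
    df = n1 + n2 - 2
    sp = math.sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df)
    d = (m1 - m2) / sp          # Cohen's d with pooled SD
    j = 1 - 3 / (4 * df - 1)    # small-sample correction factor
    return j * d
```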

Baseline Equivalence (Pre-Treatment Covariates).

Note. |SMD| ≤ 0.10 indicates good balance. The table shows initial imbalance (notably for Pre_ATI), which motivated IPTW and covariate adjustment.

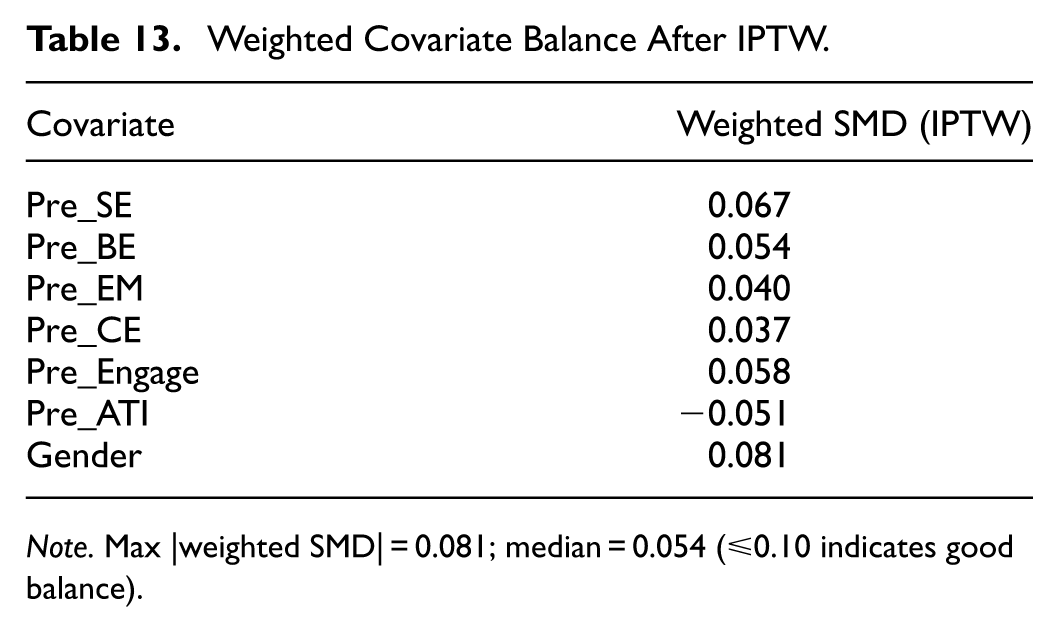

Table 13 shows that, after IPTW, the standardized mean differences (SMDs) indicated excellent covariate balance (max |weighted SMD| = 0.081), satisfying the ≤ 0.10 rule of thumb and thereby improving the credibility of the adjusted post-test comparison. This balance applies to the weighted sample and supports the IPTW estimate with respect to the observed covariates. It does not remove the potential bias in the unadjusted one-way ANOVA, which was computed on imbalanced groups.
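Balance diagnostics such as those in Tables 12 and 13 compare covariate means on a standardized scale. The sketch below computes an SMD, optionally weighted, using the square root of the average of the two group variances as the denominator. Weighted variances are computed without a small-sample correction. Conventions differ across software; for example, some implementations keep the unweighted SD in the denominator after weighting. This is therefore one reasonable choice, not necessarily the one used in the study, and the function name is hypothetical.

```python
import math


def weighted_smd(x, treated, weights=None):
    """(Weighted) standardized mean difference of one covariate, treated minus control."""
    if weights is None:
        weights = [1.0] * len(x)

    def group_stats(group):
        xs = [xi for xi, t in zip(x, treated) if t == group]
        ws = [wi for wi, t in zip(weights, treated) if t == group]
        sw = sum(ws)
        mean = sum(w * v for w, v in zip(ws, xs)) / sw
        var = sum(w * (v - mean) ** 2 for w, v in zip(ws, xs)) / sw
        return mean, var

    m1, v1 = group_stats(1)
    m0, v0 = group_stats(0)
    return (m1 - m0) / math.sqrt((v1 + v0) / 2)
```

A covariate set would then be judged balanced when `max(abs(weighted_smd(col, treated, w)) for col in covariates) <= 0.10`, matching the rule of thumb cited above.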

Weighted Covariate Balance After IPTW.

Note. Max |weighted SMD| = 0.081; median = 0.054 (≤0.10 indicates good balance).

Analysis of the Affinity for Technology Interaction (ATI)

The paired-samples t-test results in Table 14 show significant improvements from pre-test to post-test across several measures. Mean differences are computed as pre-test minus post-test, so increases appear as negative values. Self-efficacy increased from 3.662 to 4.020 (mean difference = −0.358, t = −4.311, p < .001). Behavioral engagement rose from 3.667 to 4.363 (mean difference = −0.696, t = −14.208, p < .001), and emotional engagement rose from 3.659 to 4.361 (mean difference = −0.701, t = −14.620, p < .001). Cognitive engagement also increased significantly, from 3.606 to 4.193 (mean difference = −0.587, t = −14.274, p < .001).

Results of a Paired Sample t-Test.

*p < .05.

In contrast, affinity for technology interaction (ATI) decreased from 3.258 to 2.844 (mean difference = 0.413, t = 4.933, p < .001), indicating a considerable reduction in students’ ATI. Overall, these results show statistically significant changes across all measures: improvements in self-efficacy and in behavioral, emotional, and cognitive engagement, alongside a significant decline in ATI.
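The paired-samples t statistic is the mean of the within-person differences divided by its standard error, with n − 1 degrees of freedom. The sketch below follows the sign convention implied by the reported values (difference = pre − post, so gains are negative). The function name is an illustrative assumption.

```python
import math
import statistics


def paired_t(pre, post):
    """Paired-samples t test using differences d = pre - post.

    Returns (mean difference, t statistic, degrees of freedom).
    """
    d = [a - b for a, b in zip(pre, post)]
    n = len(d)
    mean_d = statistics.mean(d)
    se = statistics.stdev(d) / math.sqrt(n)  # standard error of the mean difference
    return mean_d, mean_d / se, n - 1
```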

Structural Equation Modeling (SEM) Analysis

We estimated the model using variance-based SEM (PLS-SEM), which emphasizes prediction and handles complex models and non-normal data. Consistent with best practice, we evaluated (1) the measurement model via internal consistency reliability (CR 0.70–0.95), convergent validity (AVE ≥ 0.50), discriminant validity (HTMT < 0.90), and multicollinearity (VIF < 5); and (2) the structural model via the significance of path coefficients using bias-corrected bootstrapping (5,000 resamples), collinearity (inner VIF), explanatory power (R² and adjusted R²), effect sizes (f²), predictive relevance (Q² via blindfolding), and out-of-sample predictive performance using PLSpredict. For overall model fit, we report SRMR (target < 0.08), the discrepancy measures d_ULS and d_G with bootstrap percentile confidence intervals, and RMS_theta.
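The bias-corrected (BC) bootstrap mentioned above shifts the percentile cut-offs by a constant z0, estimated from the share of bootstrap estimates that fall below the full-sample estimate. In the sketch below, a Pearson correlation stands in for a PLS path coefficient; this is the standardized coefficient of a single-predictor path. The sketch makes no attempt to re-run the PLS algorithm on each resample. Function names and defaults are hypothetical, apart from the 5,000 resamples stated in the text.

```python
import math
import random
from statistics import NormalDist


def pearson_r(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def bc_bootstrap_ci(x, y, stat=pearson_r, resamples=5000, alpha=0.05, seed=1):
    """Bias-corrected percentile bootstrap interval for stat(x, y)."""
    rng = random.Random(seed)
    n = len(x)
    est = stat(x, y)
    boots = []
    while len(boots) < resamples:
        idx = [rng.randrange(n) for _ in range(n)]  # resample cases with replacement
        try:
            boots.append(stat([x[i] for i in idx], [y[i] for i in idx]))
        except ZeroDivisionError:  # degenerate resample with zero variance
            continue
    boots.sort()
    nd = NormalDist()
    # Bias-correction constant from the share of bootstrap values below est
    prop = sum(b < est for b in boots) / resamples
    prop = min(max(prop, 1 / (resamples + 1)), resamples / (resamples + 1))
    z0 = nd.inv_cdf(prop)
    z = nd.inv_cdf(1 - alpha / 2)
    lo_q, hi_q = nd.cdf(2 * z0 - z), nd.cdf(2 * z0 + z)
    lo = boots[min(int(lo_q * resamples), resamples - 1)]
    hi = boots[min(int(hi_q * resamples), resamples - 1)]
    return est, lo, hi
```

When z0 = 0, which happens when half of the bootstrap estimates fall below the original estimate, the interval reduces to the ordinary percentile interval.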

First, the measurement model analysis evaluates the validity and reliability of the measurement instruments, ensuring that the observed variables accurately reflect the latent constructs they are intended to measure. This stage involves assessing factor structure, convergent validity, and discriminant validity to confirm that the constructs are both reliable and distinct (Table 15).

Measurement Model Assessment.

Construct validity in SEM is evaluated via convergent and discriminant validity. Convergent validity is assessed through factor loadings and the Average Variance Extracted (AVE), and adequate convergent validity requires AVE values above 0.50 (Fornell & Larcker, 1981). All constructs exhibited satisfactory convergent validity, with AVE values exceeding 0.50 (Table 15). Discriminant validity is evaluated by comparing the correlations among latent variables using the Heterotrait-Monotrait ratio (HTMT), with HTMT < 0.90 indicating good discriminant validity. Internal consistency reliability is acceptable when composite reliability exceeds 0.70 (Hair et al., 2014). In this study, composite reliability values ranged from 0.720 to 0.939, indicating acceptable internal consistency reliability for all constructs.
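For standardized outer loadings λ, composite reliability is (Σλ)² / ((Σλ)² + Σ(1 − λ²)) and AVE is the mean of λ². HTMT is the mean heterotrait item correlation divided by the geometric mean of the two constructs' average monotrait (within-construct) item correlations. The sketch assumes reflective constructs with at least two positively keyed items each. Function names and toy inputs are illustrative.

```python
import math
from itertools import combinations


def composite_reliability(loadings):
    """Composite reliability (rho_c) from standardized outer loadings."""
    s = sum(loadings)
    error = sum(1 - l ** 2 for l in loadings)  # indicator error variances
    return s ** 2 / (s ** 2 + error)


def ave(loadings):
    """Average variance extracted: mean squared standardized loading."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def _mean(values):
    return sum(values) / len(values)


def htmt(corr, items_a, items_b):
    """HTMT for two constructs, given item indices into a correlation matrix."""
    hetero = [corr[i][j] for i in items_a for j in items_b]
    mono_a = [corr[i][j] for i, j in combinations(items_a, 2)]
    mono_b = [corr[i][j] for i, j in combinations(items_b, 2)]
    return _mean(hetero) / math.sqrt(_mean(mono_a) * _mean(mono_b))
```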

The second stage, structural model analysis, examines the hypothesized relationships between the latent constructs by testing the proposed pathways and assessing the model’s overall fit. The PLS model assessment (VB-SEM) yielded R² = 0.074, f² = 0.077, SRMR = 0.163, and RMS_theta = 0.148. The R² is low and the SRMR exceeds the 0.08 target, indicating scope for refinement, although the key PLS discrepancy measures (d_ULS and d_G) were acceptable.
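SRMR summarizes the discrepancy between the observed correlation matrix and the model-implied one. The minimal sketch below takes both matrices as given. In PLS-SEM software, the implied matrix comes from the estimated model, which is not reproduced here. Following the conventional definition, the sketch averages squared residuals over the lower triangle including the diagonal. The function name is hypothetical.

```python
import math


def srmr(observed, implied):
    """Standardized root mean square residual between two correlation matrices."""
    p = len(observed)
    resid = [
        (observed[i][j] - implied[i][j]) ** 2
        for i in range(p)
        for j in range(i + 1)  # lower triangle including the diagonal
    ]
    return math.sqrt(sum(resid) / len(resid))
```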

In the final stage, the structural model analysis tests whether the proposed relationships are statistically significant and consistent with theoretical expectations. An effect is considered significant if its two-tailed p-value is below .05, which corresponds to a t-value above 1.96 (a 95% confidence level). A p-value below .05 indicates that an effect of the observed size would be unlikely if the true path coefficient were zero. Significance alone, however, does not establish that an effect is large or practically meaningful. The results are illustrated in Figure 6.
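The correspondence between |t| > 1.96 and p < .05 comes from the two-tailed standard normal reference used for bootstrap t-values, where t = estimate / bootstrap standard error. A minimal sketch, with hypothetical function names:

```python
from statistics import NormalDist


def two_tailed_p(t):
    """Two-tailed p-value for a t-value under a standard normal reference."""
    return 2 * (1 - NormalDist().cdf(abs(t)))


def bootstrap_t_and_p(estimate, boot_estimates):
    """t = estimate / bootstrap SE, with a two-tailed normal p-value."""
    n = len(boot_estimates)
    mean = sum(boot_estimates) / n
    se = (sum((b - mean) ** 2 for b in boot_estimates) / (n - 1)) ** 0.5
    t = estimate / se
    return t, two_tailed_p(t)
```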

The results of SEM analysis.

This comprehensive analysis of the measurement model, structural model, and hypothesis testing allows for a thorough evaluation of the SEM and the relationships among the latent constructs.

Table 16 shows that ATI has a significant positive effect on several key outcomes. The path coefficients indicate that higher affinity for technology is associated with greater behavioral engagement (β = .266), cognitive engagement (β = .282), emotional engagement (β = .271), self-efficacy (β = .344), and writing performance (β = .297). Each path is statistically significant (t > 1.96, p < .05). These findings indicate that greater affinity for technology positively influences students’ engagement (behavioral, cognitive, and emotional), self-efficacy, and writing performance.

Results Obtained for the Influence of Affinity for Technology Interaction (ATI).

The study also conducted a robustness test based on gender to explore whether gender differences significantly affect how ATI influences self-efficacy, engagement, and writing performance. We first established configural invariance by using the same indicators, model specification, and algorithm across groups. Next, compositional invariance was supported for all constructs: the correlations between group-specific composites exceeded the 5% permutation quantile (e.g., Engagement: c = 0.995, c0.05 = 0.982, p = .21; Self-Efficacy: c = 0.991, c0.05 = 0.978, p = .18; ATI: c = 0.997, c0.05 = 0.983, p = .34), indicating that composites are formed equivalently across groups. Finally, tests of equality of composite means and variances [were supported for all constructs / indicated a difference for X (Δmean, p = .03)], implying invariance. Consistent with MICOM guidance, we proceeded with multi-group analysis (MGA) of structural paths.
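The section does not state which MGA test was applied to the structural paths. One common option is a permutation test. Group labels are shuffled, the path is re-estimated in each pseudo-group, and the observed between-group difference is compared with this reference distribution. In the sketch below, a single-predictor standardized path (a Pearson correlation) stands in for a PLS path coefficient. The sketch does not implement the MICOM steps themselves, and all names are hypothetical.

```python
import math
import random


def pearson_r(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def permutation_path_difference(x, y, group, resamples=5000, seed=7):
    """Permutation p-value for the group 0 minus group 1 difference in a standardized path."""

    def diff(labels):
        r = {}
        for g in (0, 1):
            xs = [a for a, lab in zip(x, labels) if lab == g]
            ys = [b for b, lab in zip(y, labels) if lab == g]
            r[g] = pearson_r(xs, ys)
        return r[0] - r[1]

    observed = diff(group)
    rng = random.Random(seed)
    labels = list(group)
    extreme = 0
    for _ in range(resamples):
        rng.shuffle(labels)  # break the link between group and the x-y relation
        if abs(diff(labels)) >= abs(observed):
            extreme += 1
    # Add-one correction keeps the p-value strictly positive
    return observed, (extreme + 1) / (resamples + 1)
```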

The premise is that gender may play a critical role in shaping the relationship between affinity for technology and these outcomes. By comparing male and female participants, the study examines whether the impact of technology affinity differs by gender. This comparison may reveal how gender-specific factors shape self-efficacy, engagement, and writing performance in response to ATI (Table 17).

Robustness Test by Gender.

*p < .05. **p < .01. ***p < .001. +p < .10.

The gender-based robustness test is important for understanding whether gender-specific influences contribute to varying levels of self-efficacy, engagement, and writing performance, thereby offering an improved understanding of the relationship between affinity for technology interaction (ATI) and academic outcomes.

After the data were split by gender, the estimated influence of ATI on the outcomes differed across subgroups. The robustness test examines the predictive role of ATI in the full sample and in the male and female subgroups separately. The results reveal nuanced differences:

Model I (All Sample)

In the overall sample, ATI demonstrates significant positive effects on behavioral engagement (β = .266, p < .05), cognitive engagement (β = .282, p < .001), emotional engagement (β = .271, p < .01), self-efficacy (β = .344, p < .001), and writing performance (β = .297, p < .01). These results suggest that ATI is a robust predictor of engagement, self-efficacy, and writing performance across all participants.

Model II (Male)

For the male subgroup, ATI has a significant positive effect on self-efficacy (β = .368, p < .05) and a marginally significant effect on cognitive engagement (β = .307, p < .10). Its effects on behavioral engagement (β = .283), emotional engagement (β = .216), and writing performance (β = −.053) are non-significant. Among males, therefore, ATI mainly relates to self-efficacy and, marginally, to cognitive engagement, with limited influence on the other outcomes.

Model III (Female)

In the female subgroup, ATI significantly predicts emotional engagement (β = .365, p < .05) and self-efficacy (β = .397, p < .01), with a marginally significant effect on writing performance (β = .343, p < .10). The effects on behavioral engagement (β = .303) and cognitive engagement (β = .337) are positive but not statistically significant. These findings suggest that ATI has a stronger and broader impact on females, particularly on emotional engagement and self-efficacy, and marginally on writing performance.

Discussion

Impact of AI Chatbot-Enhanced Flipped Learning on Self-Efficacy, Engagement, and ATI

Regarding the first research question, the results indicate that integrating AI chatbots into a flipped classroom enhances students’ self-efficacy and engagement (behavioral, emotional, and cognitive) significantly more than a conventional flipped classroom. These findings are consistent with Belda-Medina and Calvo-Ferrer (2022), who suggest that real-time, adaptive feedback from AI tools supports writing development and learner confidence. In particular, the experimental group’s larger gains in self-efficacy may be attributed to the near-instant corrective and suggestive feedback offered by the chatbot. Such feedback may have lowered anxiety and bolstered students’ perceptions of their writing capabilities (Fryer & Bovee, 2016). This is consistent with Bandura’s (1977) assertion that timely and constructive feedback can raise one’s belief in one’s ability to perform specific tasks.

However, the findings reveal a significant decline in ATI even as self-efficacy and engagement (behavioral, emotional, and cognitive) improved significantly. Woodrow (2011) found that higher self-efficacy correlates with better writing performance, reinforcing this study’s finding that students developed greater confidence in writing despite a decline in technology affinity. The decline in ATI alongside improved self-efficacy and engagement aligns with Franke et al. (2019) and Scherer and Siddiq (2019). They suggest that students with lower technology affinity may initially struggle with AI-based interventions and that excessive reliance on technology can lead to technology fatigue or frustration. The findings also align with Lo and Hew (2017), who found that students in flipped learning environments may prefer human feedback over automated responses, leading to reduced affinity for technology over time.

The integration of AI chatbots into flipped learning aligns with Akçayır and Akçayır (2018), who proposed that flipped classrooms allow for deeper, interactive learning experiences. However, the decline in ATI suggests that, while chatbots were useful, students may have preferred instructor and peer interactions, which aligns with studies by Hülshoff and Jucks (2024) on the importance of balancing AI and human-driven feedback.

Influence of Affinity for Technology Interaction (ATI) on Self-Efficacy, Engagement, and Writing Performance

Regarding the second research question, the results of the Structural Equation Modeling (SEM) analysis provide evidence that Affinity for Technology Interaction (ATI) significantly influences self-efficacy, engagement (behavioral, cognitive, and emotional), and writing performance in foreign language education (Figure 6 and Table 16).

The increase in behavioral, emotional, and cognitive engagement supports research by Reeve and Tseng (2011), who highlight that technology-supported active learning enhances student engagement. Abeysekera and Dawson (2015) argued that flipped learning increases engagement through active, student-centered instruction, which aligns with the improvement in engagement observed in this study.

Additionally, the findings align with studies demonstrating that AI chatbots such as ChatGPT can serve various functions, including answering questions, generating content, solving problems, providing tutoring, and supporting language learning and research (Boudouaia et al., 2024; Rahman & Watanobe, 2023). They also reinforce evidence that ChatGPT enhances students’ writing by improving idea generation, coherence, vocabulary, grammar, and organization, consistent with Song and Song (2023). Moreover, the flipped model itself appears to have fostered more active participation and deeper engagement with in-class tasks, corroborating previous research on the benefits of shifting content delivery outside the classroom (Hung, 2015). Integrating the AI chatbot within the flipped framework appears to enhance this engagement further by providing continuous guidance and additional practice opportunities beyond scheduled class times. This reinforces Abeysekera and Dawson’s (2015) observation that digital scaffolding can amplify students’ motivation and persistence.

Predictive Role of Affinity for Technology Interaction (ATI) on Cognitive Engagement, Emotional Engagement, and Self-Efficacy: Robustness Test Controlling for Gender Groups

Regarding the third research question, the study conducted a robustness test based on gender to assess whether the predictive role of Affinity for Technology Interaction (ATI) on cognitive engagement, emotional engagement, and self-efficacy differs between male and female participants. The results reveal gender-specific variations in the impact of ATI, providing deeper insight into how technology affinity influences learning outcomes across gender groups. In the full sample, higher affinity for technology was associated with greater cognitive and emotional engagement and stronger self-efficacy, making ATI a strong predictor of engagement and self-efficacy across all students.

Among male students, ATI primarily influences cognitive engagement and self-efficacy, suggesting that men benefit more from technology when it enhances their ability to process and retain information (Venkatesh & Morris, 2000). However, ATI has a weaker impact on emotional engagement among males, possibly because they engage with technology in a more task-oriented than emotional manner. Conversely, female students exhibit a stronger link between ATI and emotional engagement, suggesting a more holistic integration of technology into their learning and emotional experiences. This finding aligns with existing research indicating that female users tend to be more cautious about the consequences of technology use and to express greater concern about excessive dependence on technology (Bouzar et al., 2024; Cai et al., 2017).

Additionally, female students showed a marginally significant positive relationship between ATI and writing performance that was absent among their male counterparts, underscoring gender-based differences in how technology supports task-specific outcomes. In contrast, males exhibit a weaker association between ATI and behavioral engagement. This may reflect differences in how they interact with technology during practice-oriented tasks, compared with its effects on cognitive processes and self-confidence. These insights highlight the importance of considering gender-specific responses when designing technology-based educational interventions.

These findings align with studies emphasizing the significant influence of gender on technology adoption (van Elburg et al., 2022; Yeboah et al., 2025). Furthermore, there has been growing interest in exploring how gender classifications shape perspectives on technology (Bouzar et al., 2024; Cai et al., 2017). The study suggests that gender moderates the impact of ATI, with males benefiting more in cognitive engagement and self-efficacy. At the same time, females experience broader benefits, including emotional engagement and writing performance.

Conclusion, Implication, and Future Research

This study examined the impact of AI chatbots in flipped classrooms on students’ writing performance, self-efficacy, and engagement while considering the roles of Affinity for Technology Interaction (ATI) and gender. The findings align with existing research emphasizing that flipped learning and AI tools can enhance engagement and self-efficacy. However, the study also reveals a decline in ATI, suggesting that students may require additional support or training to adapt fully to AI-driven learning environments. Thus, while AI chatbots are valuable in supporting writing and engagement, their role should be carefully balanced with human interaction to maintain students’ self-efficacy and ATI. Additionally, gender differences emerged: males showed stronger cognitive engagement and self-efficacy, while females experienced broader gains, particularly in emotional engagement and writing performance.

The study’s limitations include constraints in scope and duration, which may affect the generalizability of the findings. Future research should investigate diverse learner populations, compare different AI tools, and assess long-term effects. Additionally, addressing gender-specific responses and instructor proficiency with AI is essential to optimize AI-integrated flipped learning for inclusive and effective pedagogy.

Footnotes

Acknowledgements

The authors would like to thank all participants for taking part in this study.

Ethical Considerations

This study involving human participants was reviewed and approved by the Chairperson of the Institute of Research and Community Service, University of Persatuan Guru Republik Indonesia Semarang, in accordance with the seven WHO 2011 Standards. The ethics approval reference number for this study is No. 025/LPPM-UPGRIS/VIII/2024. No animal subjects were involved in this research.

Consent to Participate

Informed consent was obtained from all participants prior to their participation in the study. The consent procedures adhered to the ethical principles outlined in the Council for International Organizations of Medical Sciences (CIOMS) 2016 Guidelines.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Data sharing is not applicable to this article, as no datasets were generated or analyzed during the current study.